Submitted: 03 April 2026

Posted: 07 April 2026


Abstract

Keywords:

1. Introduction

- A realistic adversarial stress test: We use DeepFool to generate minimal perturbations that push samples across the model's decision boundary, providing a realistic model of a deception (adversarial) scenario.

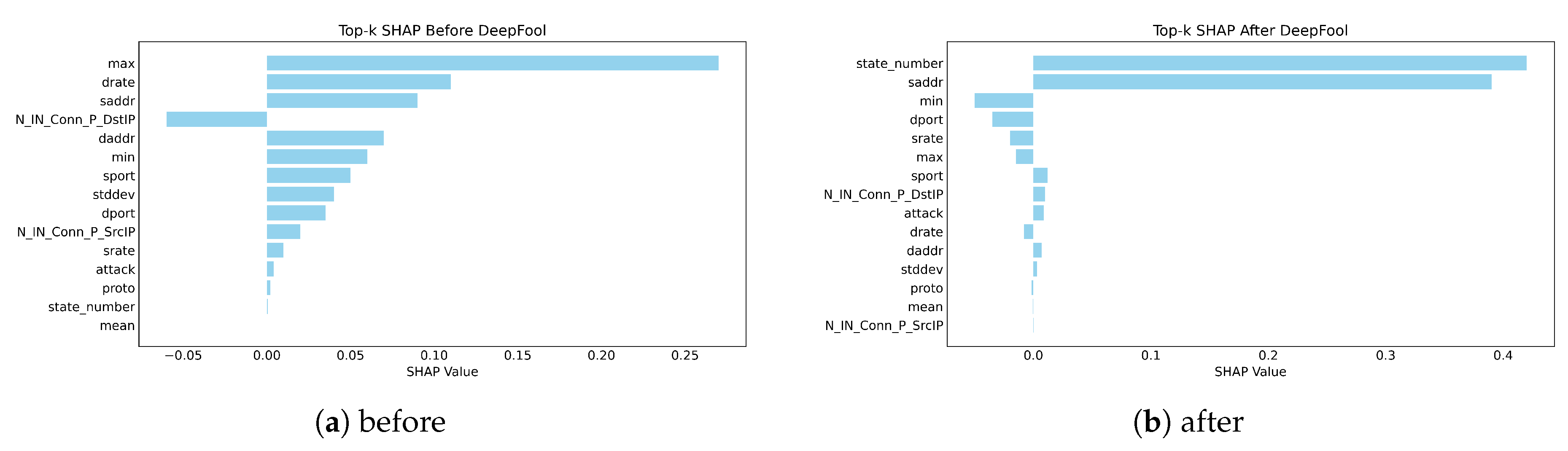

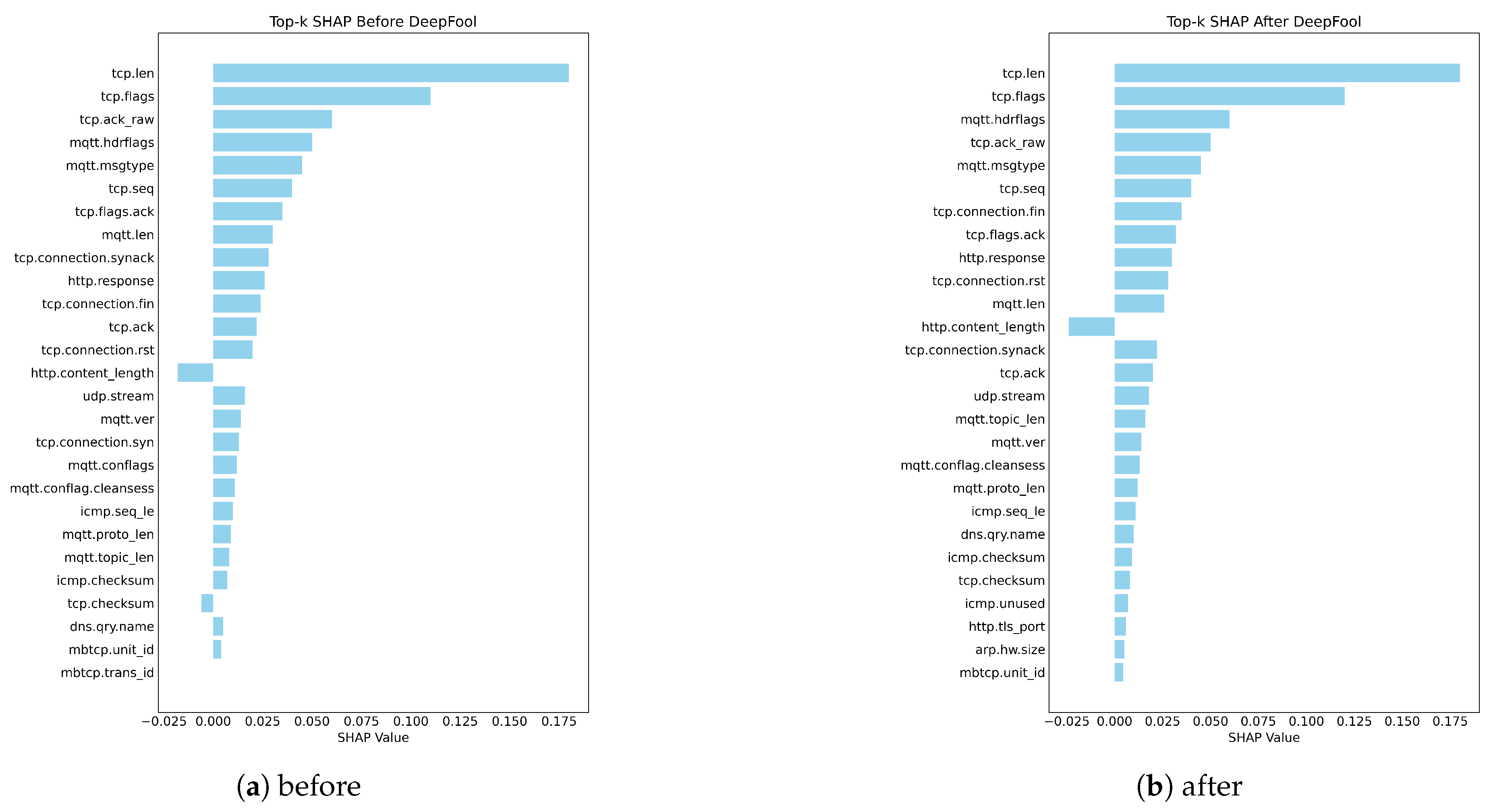

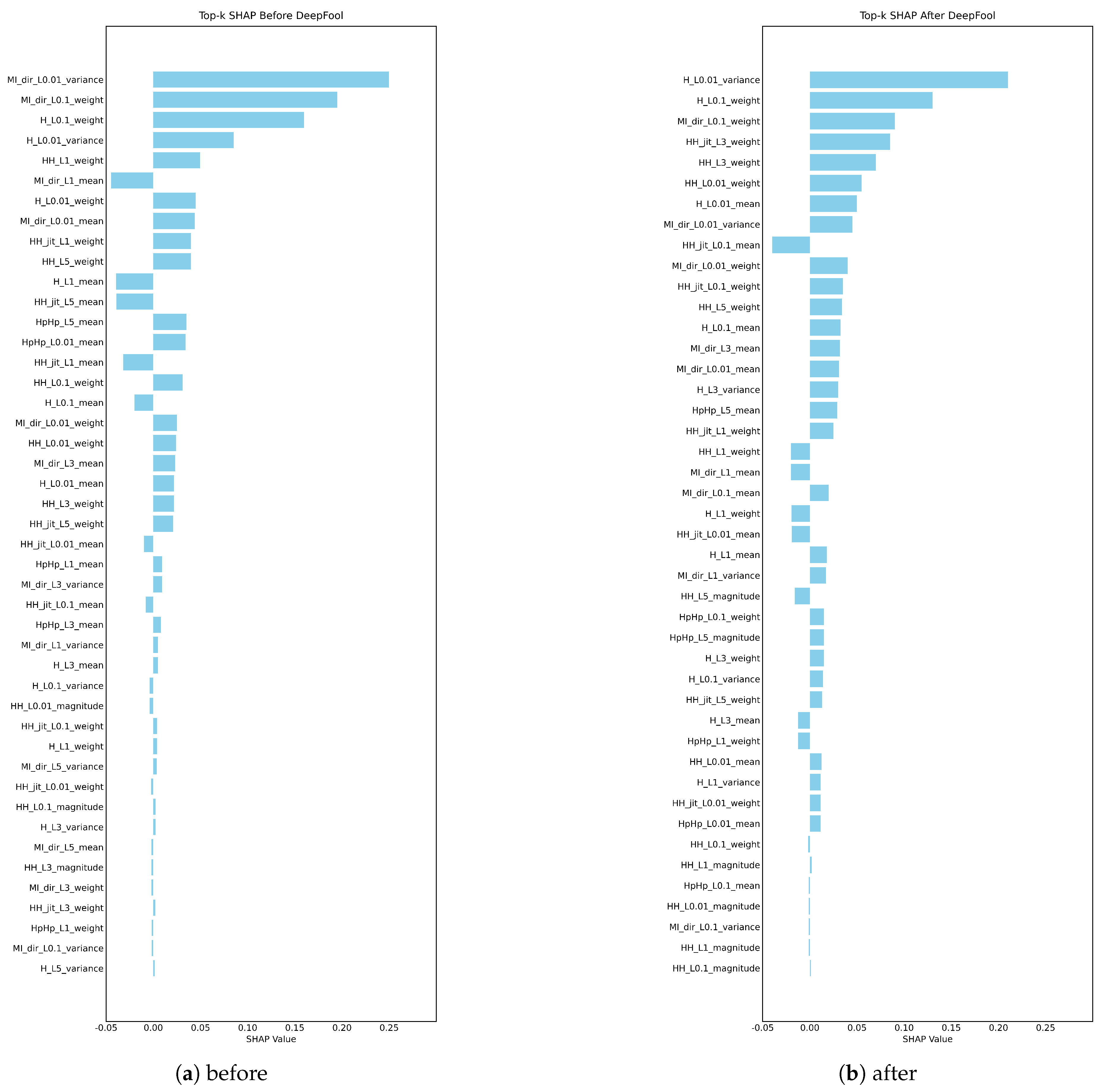

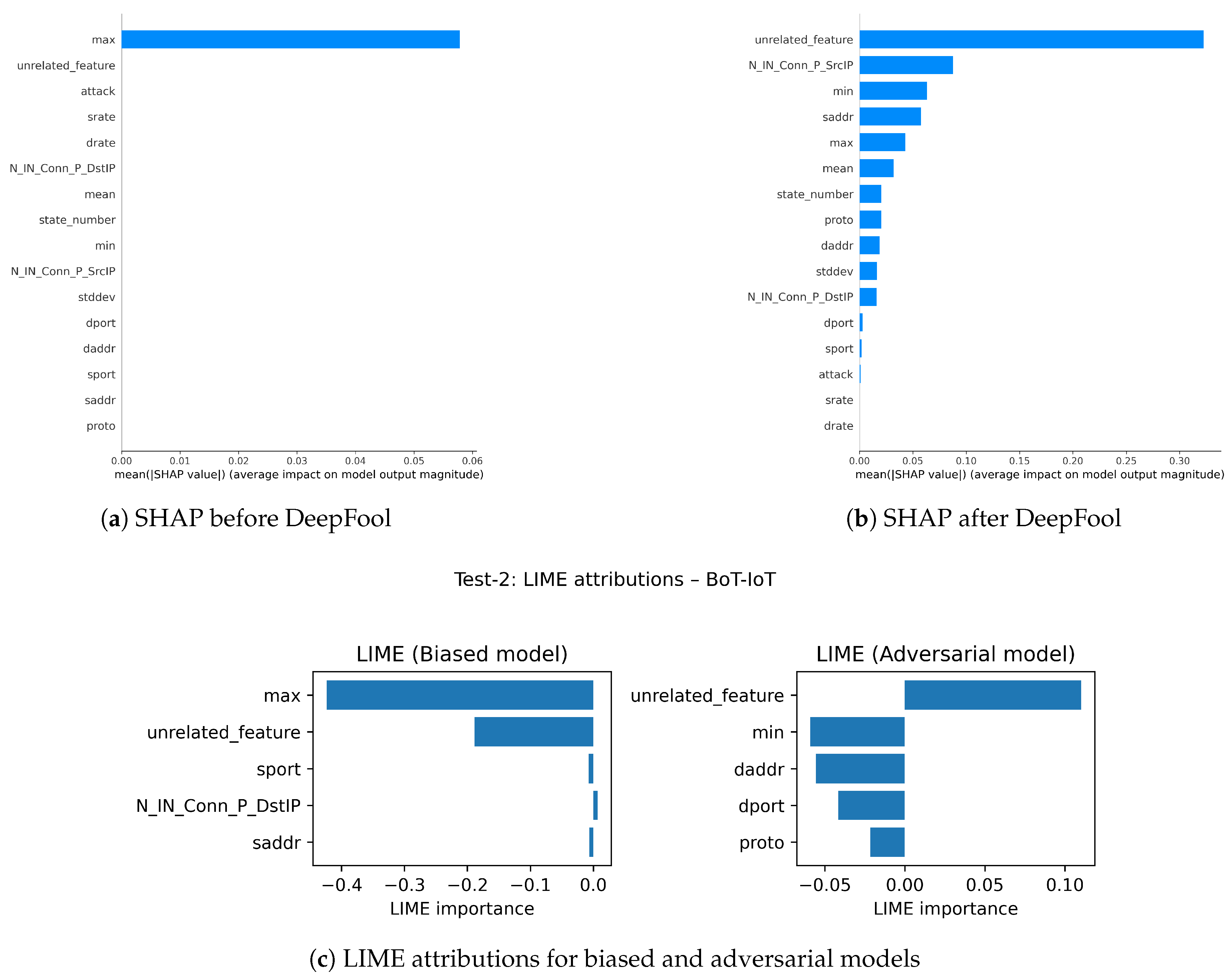

- Causally complete assessment: We evaluate the top-k features identified by SHAP and LIME against actual feature perturbations, using a DeepFool perturbation protocol at the decision boundary. This provides an empirical means of assessing explanatory validity under adversarial stress.

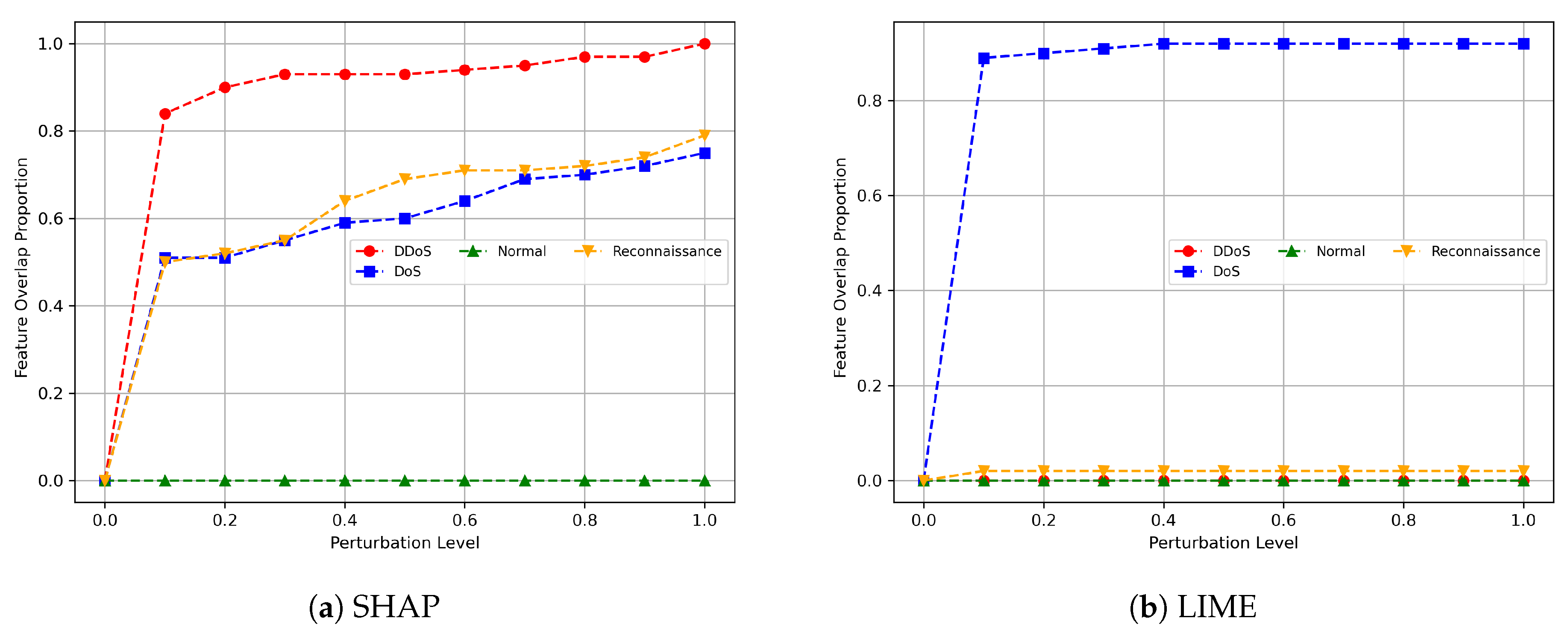

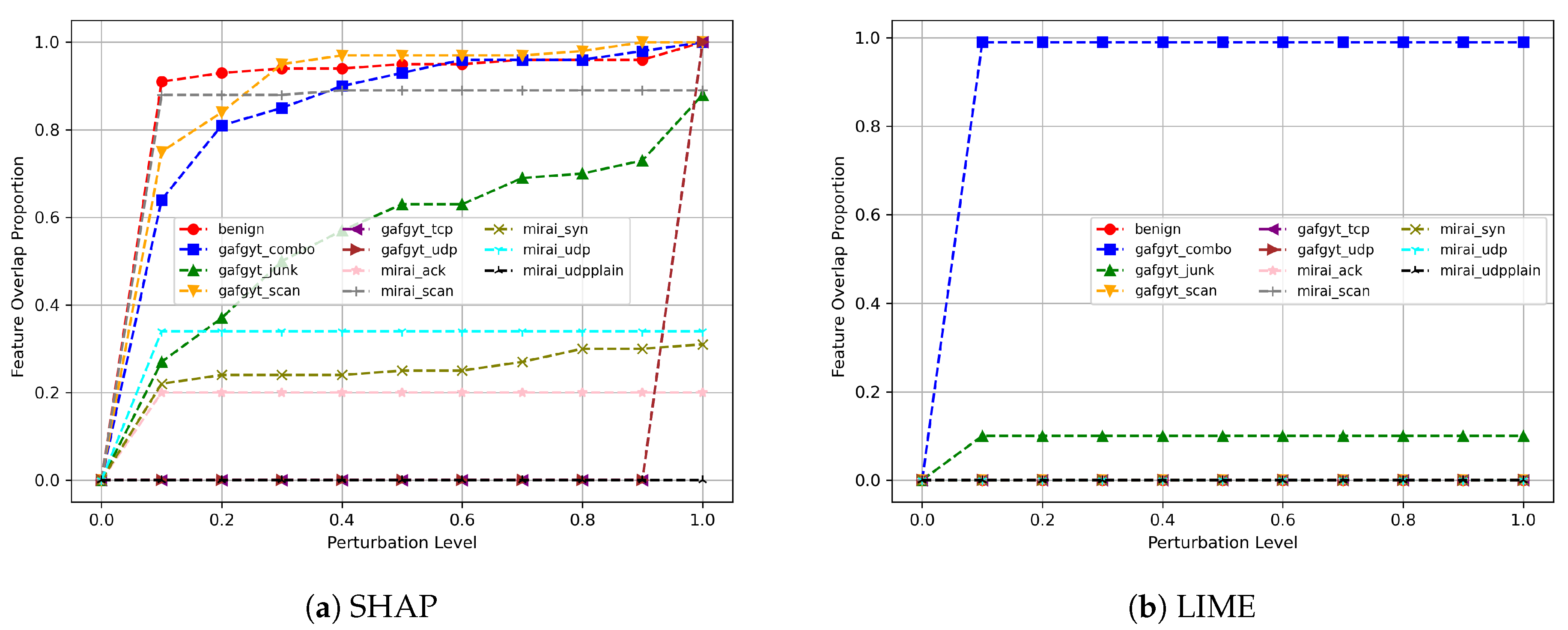

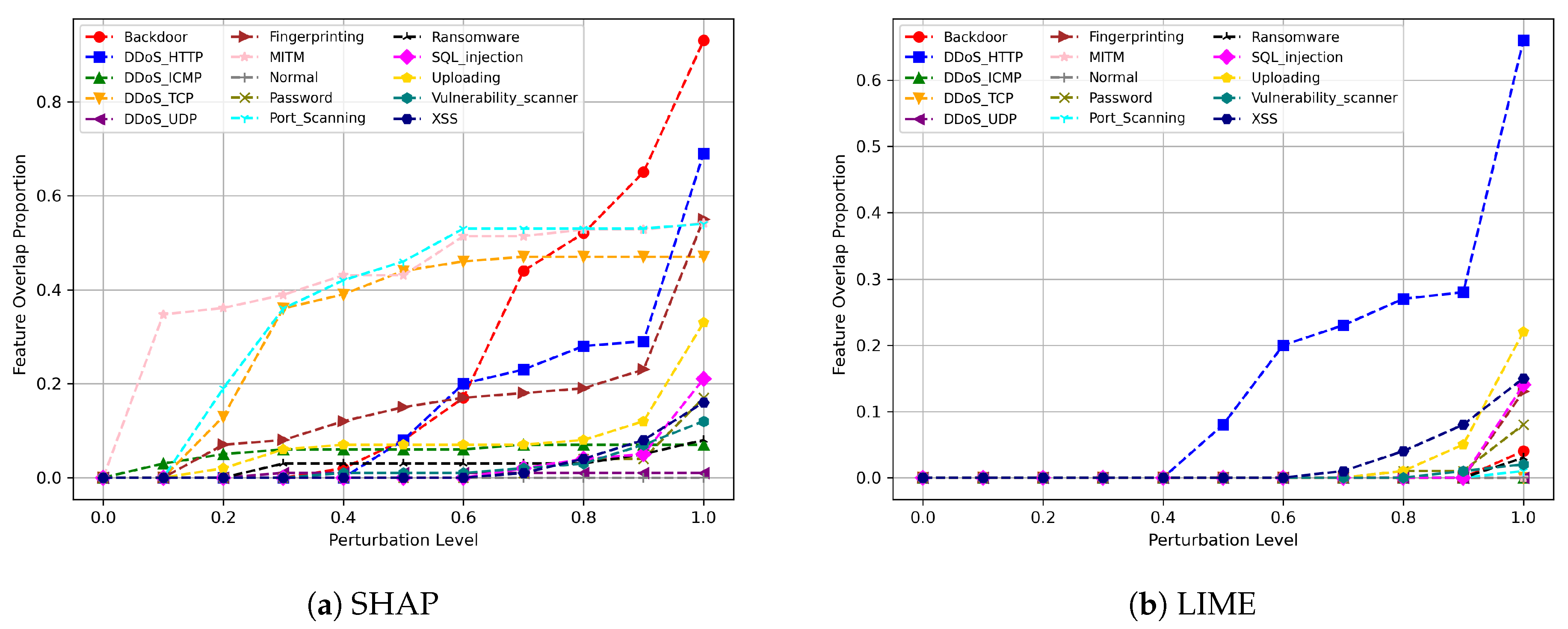

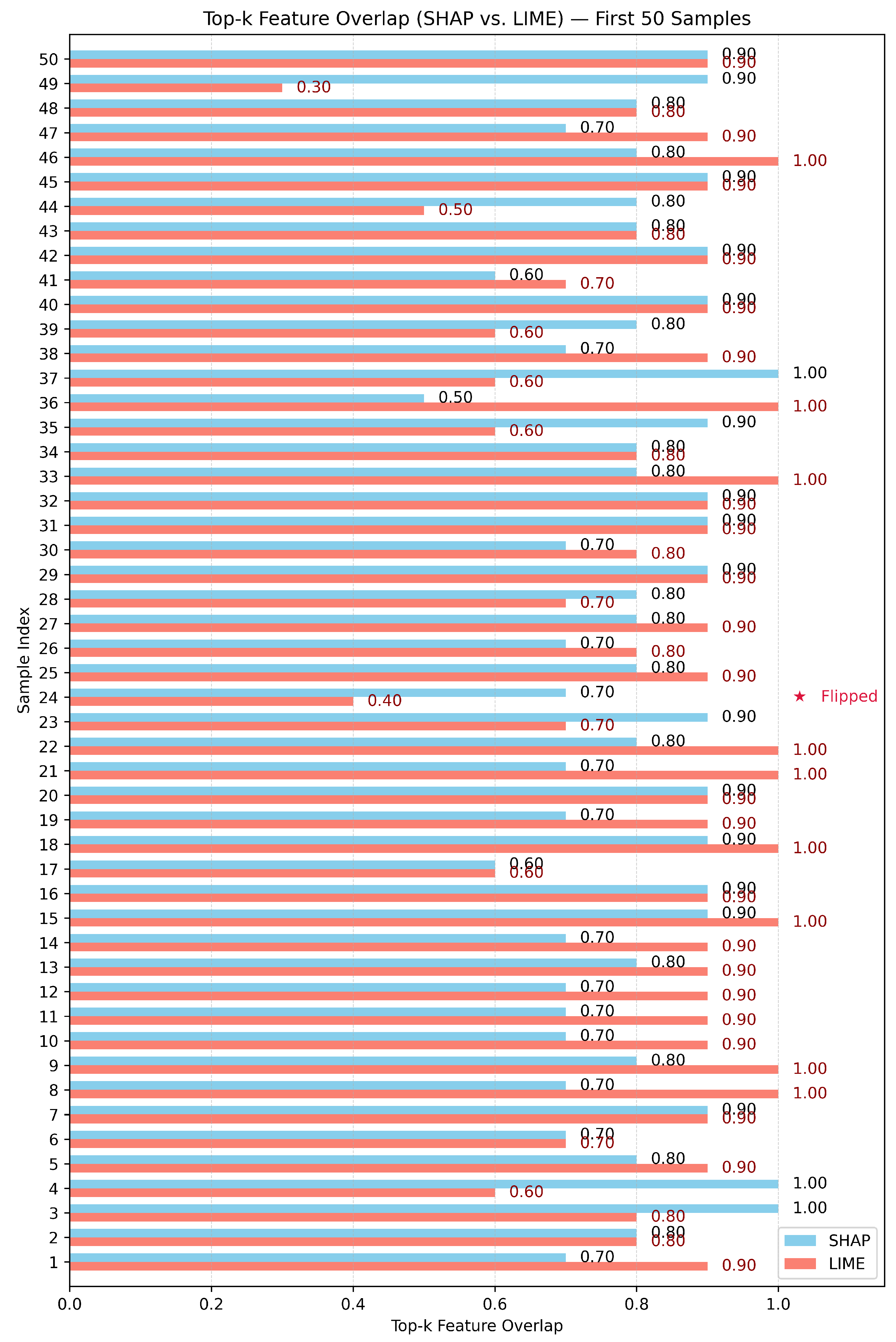

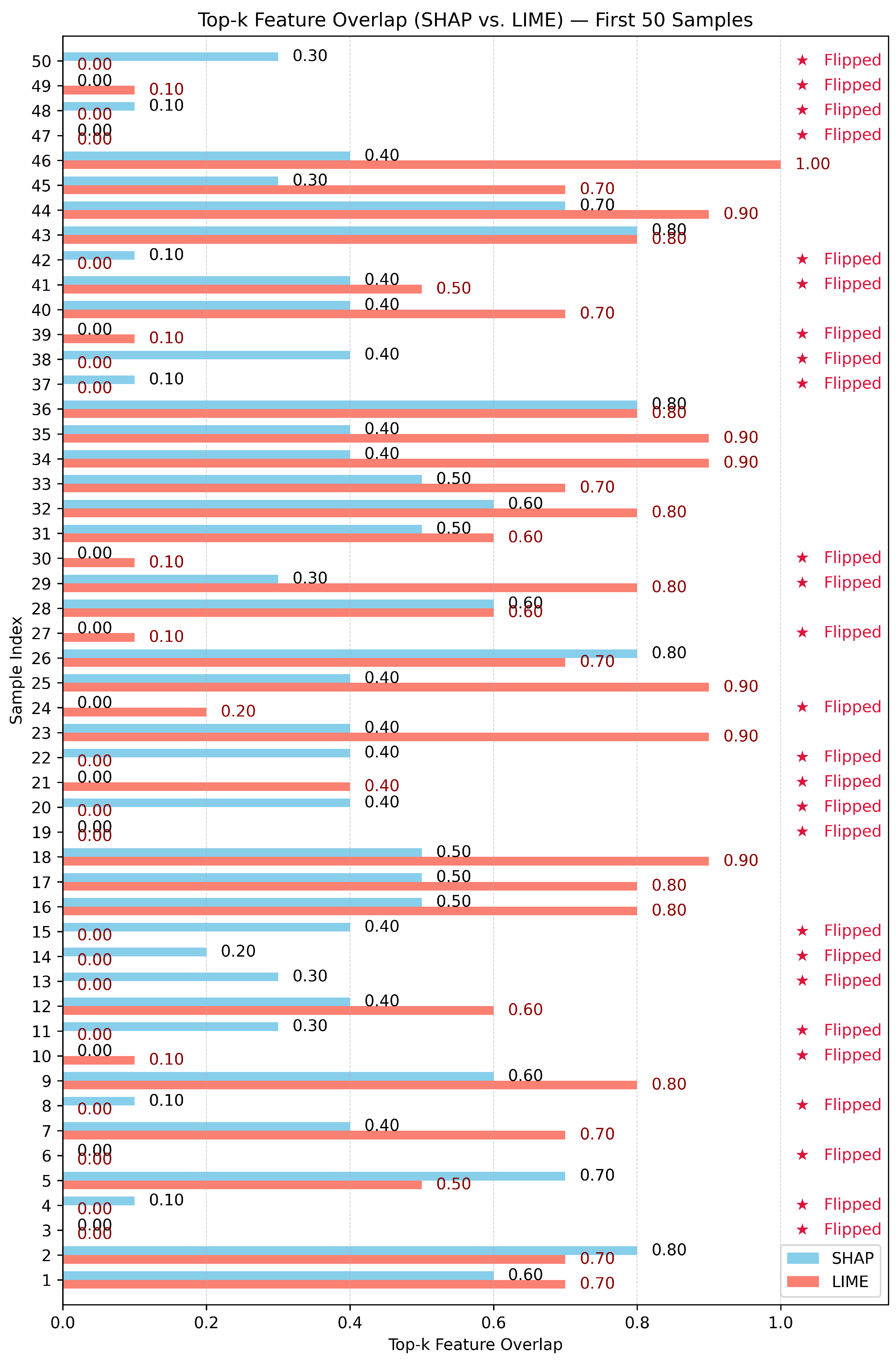

- Robustness assessment on the BoT-IoT, Edge-IIoT, and N-BaIoT datasets: We compare the stability of SHAP and LIME explanations under adversarial perturbation by measuring how consistently each method preserves its feature attributions before and after DeepFool-based attacks. This allows us to determine which explainer (SHAP or LIME) produces more robust explanations on each dataset.

- A new perspective on robustness evaluation based on the overlap of top-k features: Our approach diverges from existing robustness evaluation methods [19], which primarily rely on visual inspection or simple numerical differences between raw attribution values. Instead, we focus on the semantic integrity of explanations, that is, whether the most important features identified by an XAI method remain stable when the model's inputs are adversarially perturbed. For each case, we measure the overlap between the top-k features obtained at different DeepFool perturbation levels and the top-k features obtained on the original, unperturbed inputs. This overlap ratio serves as a quantitative measure of robustness.

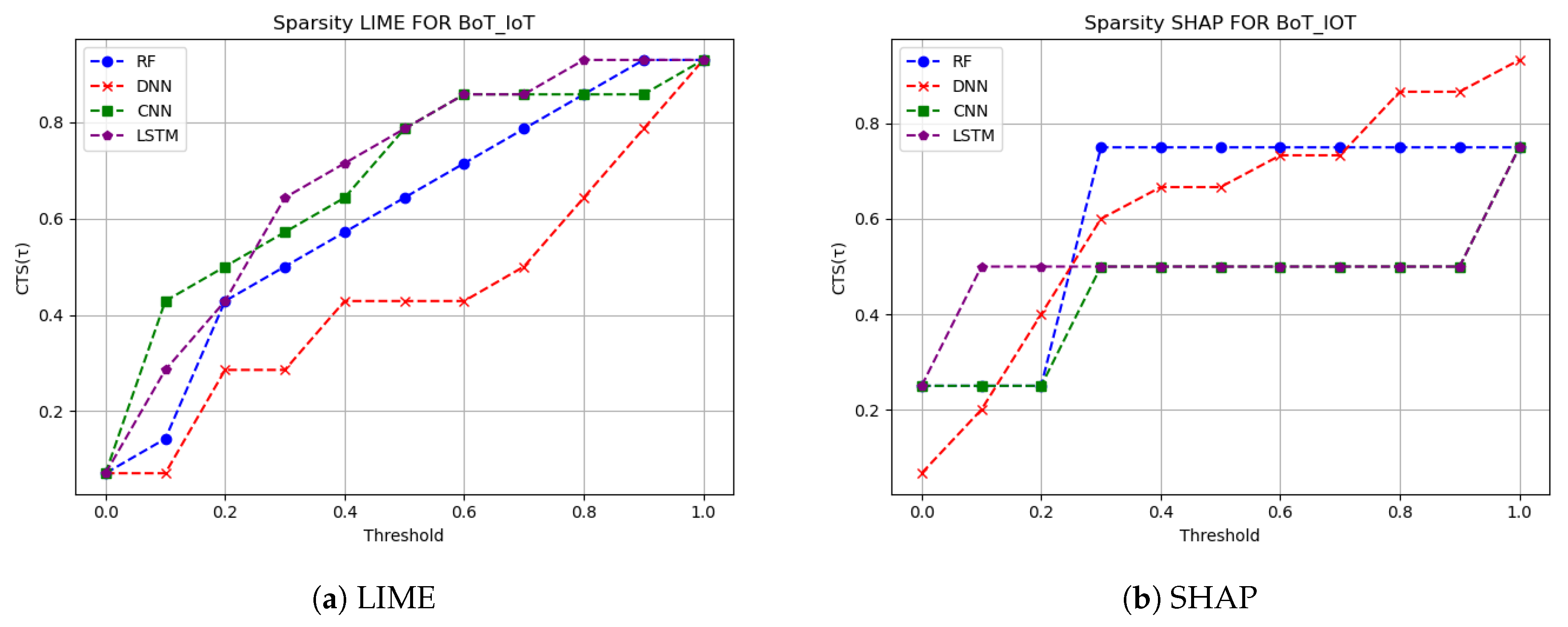

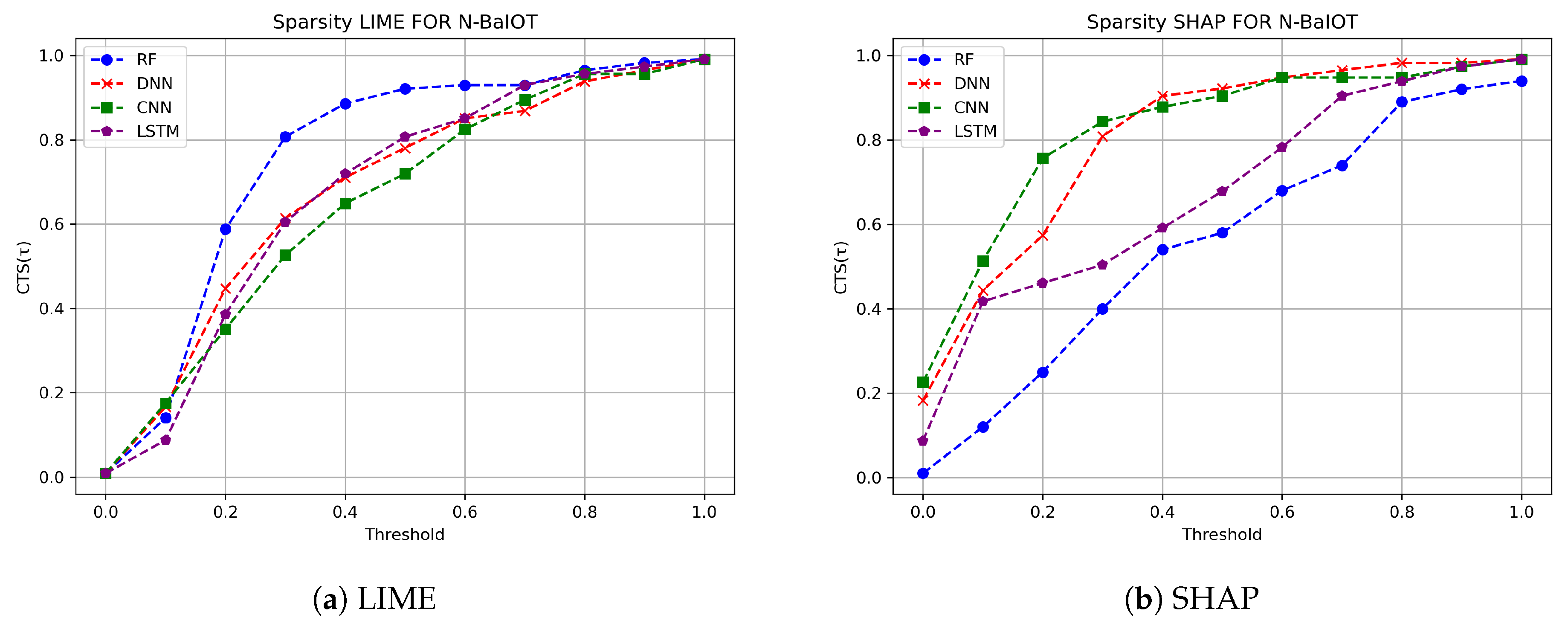

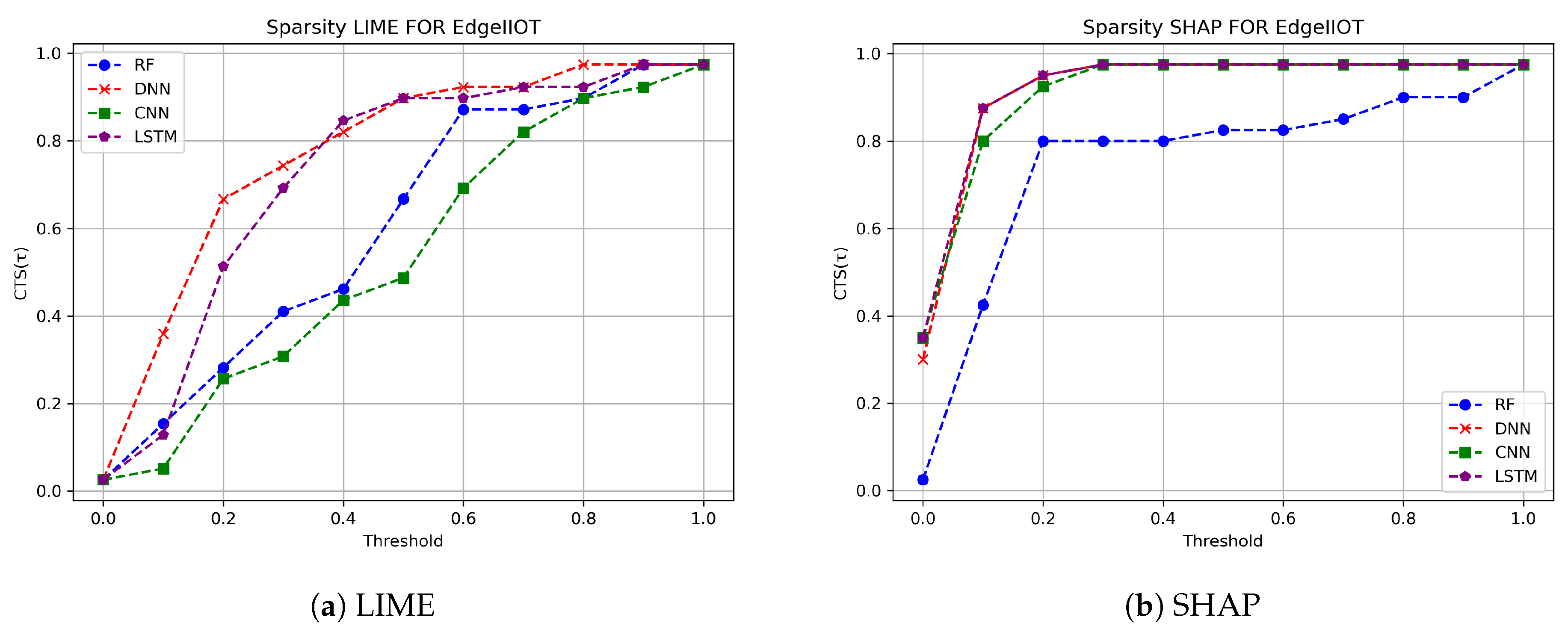

- Sparsity analysis: We analyze sparsity curves to show how each explanation method ranks features across threshold values.

- Realism and generalization: Our method provides a dataset-agnostic, attack-focused evaluation that can serve as a scalable framework for assessing XAI methods in IDS and other robustness evaluation tasks.
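The DeepFool perturbation used in the stress test can be sketched as follows. This is a minimal NumPy version of the multiclass DeepFool iteration of Moosavi-Dezfooli et al., written for an affine classifier f(x) = Wx + b so that the gradients are exact and the example stays self-contained. The function name `deepfool_affine`, the toy weights, and the overshoot of 0.02 (a commonly used default) are illustrative assumptions, not the authors' configuration. For the DNN, CNN, and LSTM models the logit gradients would come from automatic differentiation instead; how a non-differentiable model such as RF is perturbed is not covered by this sketch.

```python
import numpy as np


def deepfool_affine(x0, W, b, overshoot=0.02, max_iter=50):
    """Multiclass DeepFool for an affine classifier f(x) = W @ x + b (illustrative sketch).

    W: (n_classes, n_features) weights, b: (n_classes,) biases.
    Returns (x_adv, r) where r = x_adv - x0 is the total perturbation.
    """
    x0 = np.asarray(x0, dtype=float)
    k0 = int(np.argmax(W @ x0 + b))            # label predicted on the clean sample
    r_tot = np.zeros_like(x0)
    x_adv = x0.copy()
    for _ in range(max_iter):
        logits = W @ x_adv + b
        if int(np.argmax(logits)) != k0:       # decision boundary crossed: stop
            break
        best_dist, best_step = np.inf, None
        for k in range(W.shape[0]):
            if k == k0:
                continue
            w_diff = W[k] - W[k0]              # gradient of (f_k - f_k0)
            f_diff = logits[k] - logits[k0]    # margin, negative while k0 wins
            w_norm = np.linalg.norm(w_diff)
            if w_norm == 0.0:
                continue                        # moving x cannot separate k from k0
            dist = abs(f_diff) / w_norm         # distance to the k-vs-k0 boundary
            if dist < best_dist:
                best_dist = dist
                # minimal step onto the closest boundary (small epsilon to cross it)
                best_step = (abs(f_diff) + 1e-4) / w_norm**2 * w_diff
        if best_step is None:
            break
        r_tot += best_step
        x_adv = x0 + (1.0 + overshoot) * r_tot   # overshoot pushes past the boundary
    return x_adv, x_adv - x0


# Toy 3-class, 2-feature model (hypothetical weights)
W = np.array([[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]])
b = np.zeros(3)
x_adv, r = deepfool_affine([2.0, 1.0], W, b)
print("new label:", int(np.argmax(W @ x_adv + b)), "perturbation norm:", np.linalg.norm(r))
```

Because DeepFool always steps toward the nearest linearized boundary, the norm of `r` gives a per-sample measure of how close that sample lies to a misclassification, which is what makes it a realistic minimal-effort evasion model.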
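The top-k overlap ratio described in the contributions can be computed in a few lines of NumPy. This sketch assumes that features are ranked by the absolute value of their SHAP or LIME attribution and that the ratio is normalized by k. The function names and all attribution values, including the two perturbation levels, are hypothetical stand-ins for explainer outputs on a clean sample and on its DeepFool-perturbed versions. Averaging the per-sample ratio over a test set would give one robustness score per dataset, model, and explainer.

```python
import numpy as np


def top_k_features(attributions, k):
    """Return the set of indices of the k features with the largest |attribution|."""
    scores = np.abs(np.asarray(attributions, dtype=float))
    order = np.argsort(-scores, kind="stable")   # descending; ties keep feature order
    return set(order[:k].tolist())


def top_k_overlap(attr_clean, attr_perturbed, k):
    """Fraction of the clean top-k features still in the top-k after perturbation."""
    clean = top_k_features(attr_clean, k)
    perturbed = top_k_features(attr_perturbed, k)
    return len(clean & perturbed) / k


# Hypothetical attributions for one sample with 5 features
attr_clean = [0.9, -0.7, 0.1, 0.05, 0.4]
attr_by_level = {
    0.1: [0.85, -0.6, 0.1, 0.02, 0.45],   # small perturbation: ranking preserved
    0.5: [0.2, -0.1, 0.8, 0.05, 0.6],     # large perturbation: ranking shifts
}
for level, attr in attr_by_level.items():
    print(f"level={level}: overlap@3 = {top_k_overlap(attr_clean, attr, k=3):.3f}")
```

Comparing ranked feature sets rather than raw attribution values makes the score insensitive to rescaling of the attributions, which matches the focus on whether the same features stay most important.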
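The sparsity curves are not formally defined in this part of the paper, so the following is only a sketch under an assumed formulation: each explanation's absolute attributions are divided by their maximum, and for every threshold t the curve reports the fraction of features whose normalized score is at or below t. A curve that rises steeply at small t indicates a sparse explanation concentrated on a few features. The function name `sparsity_curve` and the example values are hypothetical.

```python
import numpy as np


def sparsity_curve(attributions, thresholds):
    """Fraction of features whose normalized |attribution| is <= each threshold.

    attributions: one explanation (n_features,) or a batch (n_samples, n_features).
    """
    scores = np.abs(np.atleast_2d(np.asarray(attributions, dtype=float)))
    peaks = scores.max(axis=1, keepdims=True)
    peaks[peaks == 0.0] = 1.0              # all-zero explanation: avoid division by zero
    scores = scores / peaks                # most important feature of each row -> 1.0
    return np.array([(scores <= t).mean() for t in thresholds])


thresholds = np.linspace(0.0, 1.0, 11)
curve = sparsity_curve([0.8, 0.05, -0.02, 0.4, 0.0], thresholds)
for t, value in zip(thresholds, curve):
    print(f"t={t:.1f}  fraction at or below threshold = {value:.2f}")
```

Normalizing each explanation by its own maximum lets curves from SHAP and LIME, whose raw attribution scales differ, be plotted on the same axes.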

2. Related Works

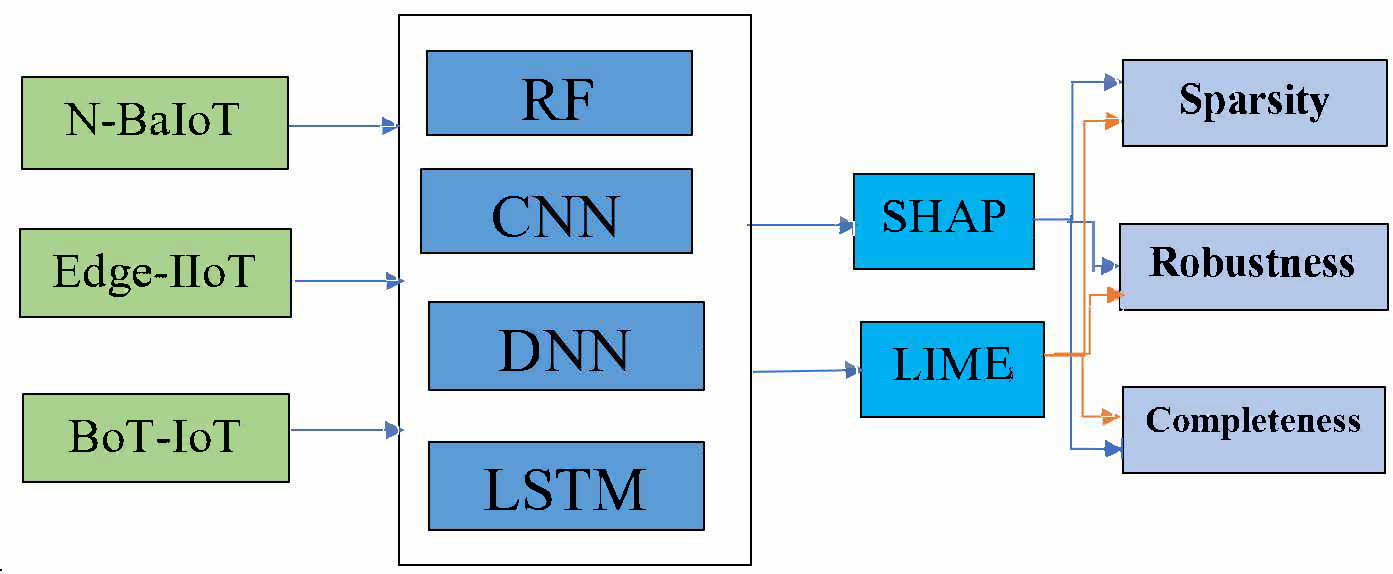

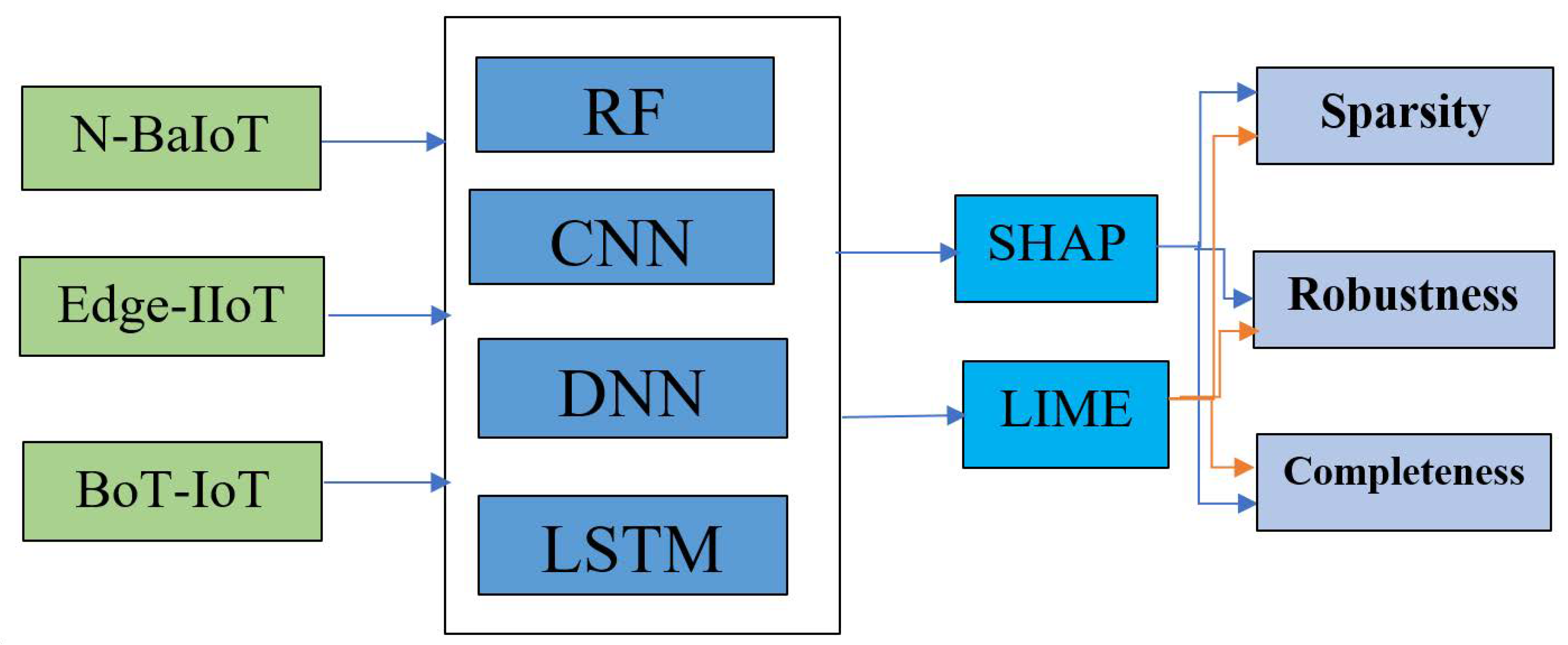

3. Methodology

3.1. Datasets Description

3.2. Machine Learning Model

3.3. XAI Methods

3.4. XAI Evaluation Metrics

4. Results and Discussion

4.1. Results of Sparsity Metric

4.2. Results of Completeness Metric

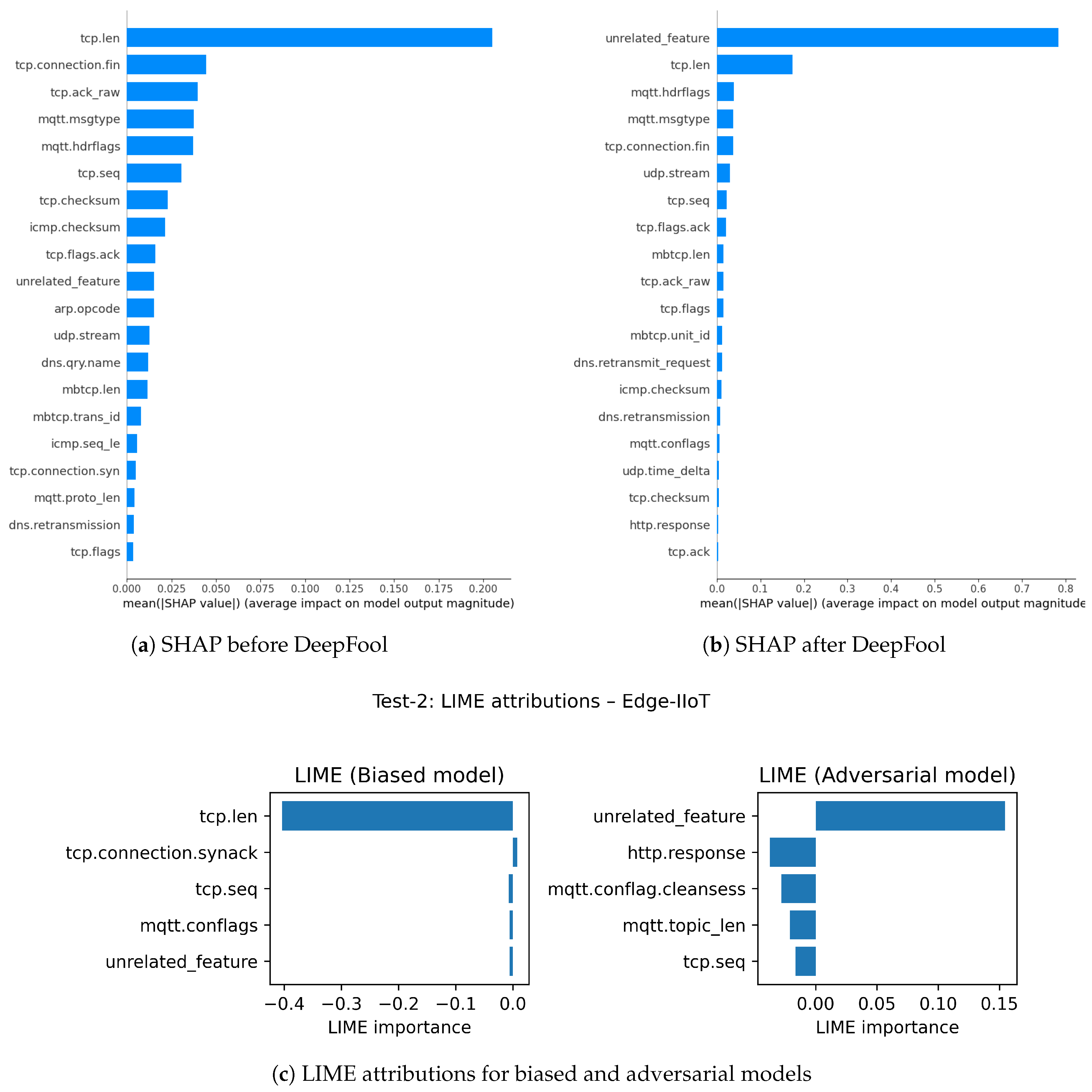

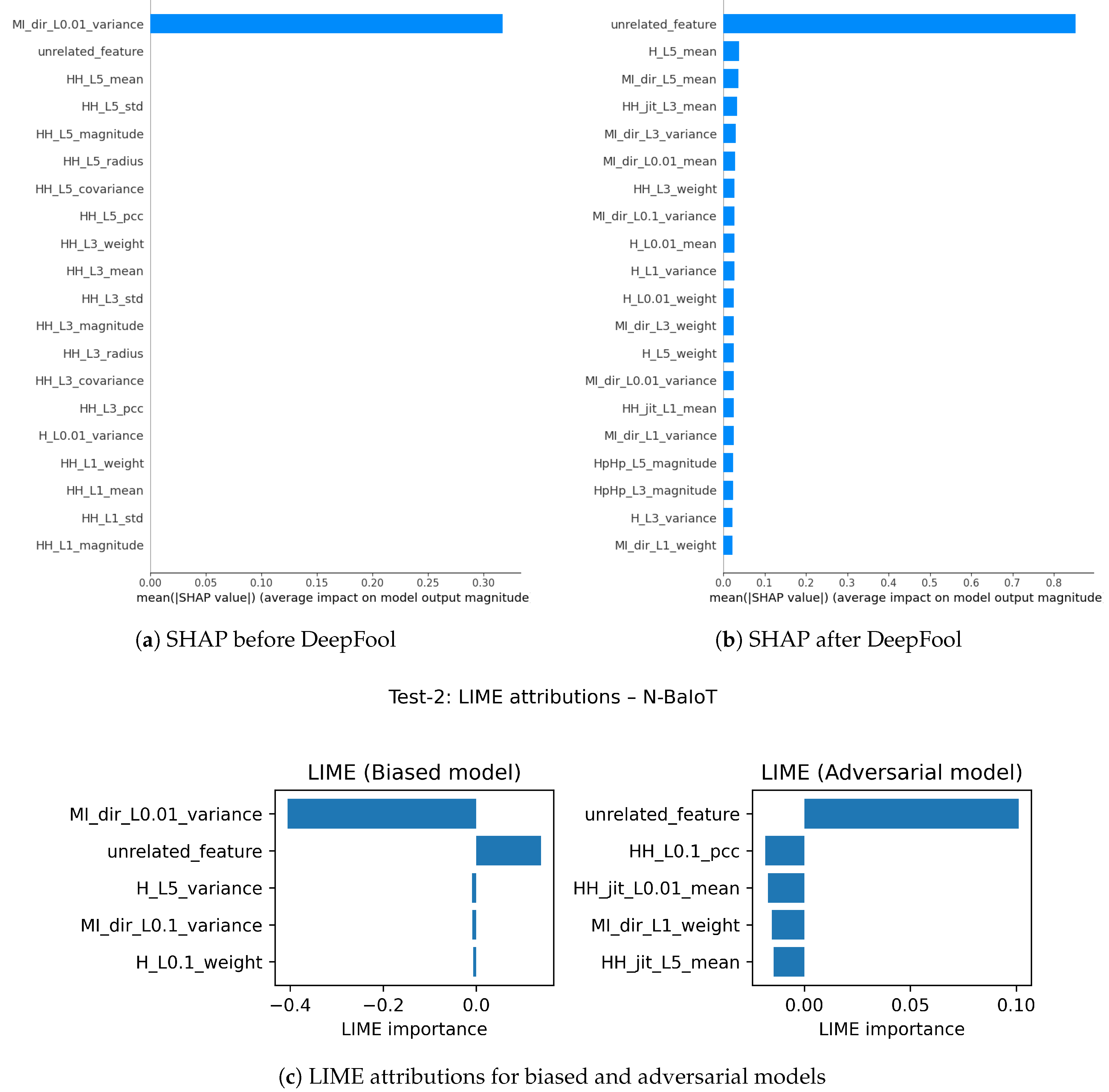

4.3. Results of Robustness Metrics

5. Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Marzano, A.; Alexander, D.; Fonseca, O.; Fazzion, E.; Hoepers, C.; Steding-Jessen, K.; Chaves, M.; Cunha, I.; Guedes, D.; Meira, W. The evolution of Bashlite and Mirai IoT botnets. In Proceedings of the 2018 IEEE Symposium on Computers and Communications (ISCC); IEEE, 2018; pp. 813–818.

- Isong, B.; Kgote, O.; Abu-Mahfouz, A. Insights into modern intrusion detection strategies for Internet of Things ecosystems. Electronics 2024, 13(12), 2370.

- Shafiq, M.; Tian, Z.; Sun, Y.; Du, X.; Guizani, M. Selection of effective machine learning algorithm and BoT-IoT attacks traffic identification for Internet of Things in smart city. Future Generation Computer Systems 2020, 107, 433–442.

- Saranya, T.; Sridevi, S.; Deisy, C.; Chung, T.D.; Khan, M.A. Performance analysis of machine learning algorithms in intrusion detection system: A review. Procedia Computer Science 2020, 171, 1251–1260.

- Musleh, D.; Alotaibi, M.; Alhaidari, F.; Rahman, A.; Mohammad, R.M. Intrusion detection system using feature extraction with machine learning algorithms in IoT. Journal of Sensor and Actuator Networks 2023, 12(2), 29.

- Ribeiro, M.T.; Singh, S.; Guestrin, C. Why should I trust you? Explaining the predictions of any classifier. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining; 2016; pp. 1135–1144.

- Capuano, N.; Fenza, G.; Loia, V.; Stanzione, C. Explainable artificial intelligence in cybersecurity: A survey. IEEE Access 2022, 10, 93575–93600.

- Almuqren, L.; Maashi, M.S.; Alamgeer, M.; Mohsen, H.; Hamza, M.A.; Abdelmageed, A.A. Explainable artificial intelligence enabled intrusion detection technique for secure cyber-physical systems. Applied Sciences 2023, 13(5), 3081.

- Abou El Houda, Z.; Brik, B.; Senouci, S.-M. A novel IoT-based explainable deep learning framework for intrusion detection systems. IEEE Internet of Things Magazine 2022, 5(2), 20–23.

- Alani, M.M.; Damiani, E. XRecon: An explainable IoT reconnaissance attack detection system based on ensemble learning. Sensors 2023, 23(11), 5298.

- Aysel, H.I.; Cai, X.; Prugel-Bennett, A. Explainable Artificial Intelligence: Advancements and Limitations. Applied Sciences 2025, 15(13), 7261.

- Kök, I.; Okay, F.Y.; Muyanlı, Ö.; Özdemir, S. Explainable artificial intelligence (XAI) for Internet of Things: A survey. IEEE Internet of Things Journal 2023, 10(16), 14764–14779.

- Moustafa, N.; Koroniotis, N.; Keshk, M.; Zomaya, A.Y.; Tari, Z. Explainable intrusion detection for cyber defences in the Internet of Things: Opportunities and solutions. IEEE Communications Surveys & Tutorials 2023, 25(3), 1775–1807.

- Neupane, S.; Ables, J.; Anderson, W.; Mittal, S.; Rahimi, S.; Banicescu, I.; Seale, M. Explainable intrusion detection systems (X-IDS): A survey of current methods, challenges, and opportunities. IEEE Access 2022, 10, 112392–112415.

- Arisdakessian, S.; Wahab, O.A.; Mourad, A.; Otrok, H.; Guizani, M. A survey on IoT intrusion detection: Federated learning, game theory, social psychology, and explainable AI as future directions. IEEE Internet of Things Journal 2022, 10(5), 4059–4092.

- Hassija, V.; Chamola, V.; Mahapatra, A.; Singal, A.; Goel, D.; Huang, K.; Scardapane, S.; Spinelli, I.; Mahmud, M.; Hussain, A. Interpreting black-box models: A review on explainable artificial intelligence. Cognitive Computation 2024, 16(1), 45–74.

- Lundberg, S.M.; Lee, S.-I. A unified approach to interpreting model predictions. Advances in Neural Information Processing Systems 2017, 30.

- Tiwari, R. Explainable AI (XAI) and its applications in building trust and understanding in AI decision making. International Journal of Scientific Research in Engineering and Management 2023, 7, 1–13.

- Slack, D.; Hilgard, S.; Jia, E.; Singh, S.; Lakkaraju, H. Fooling LIME and SHAP: Adversarial attacks on post hoc explanation methods. In Proceedings of the AAAI/ACM Conference on AI, Ethics, and Society; 2020; pp. 180–186.

- Warnecke, A.; Arp, D.; Wressnegger, C.; Rieck, K. Evaluating explanation methods for deep learning in security. In Proceedings of the IEEE European Symposium on Security and Privacy (EuroS&P); 2020; pp. 158–174.

- Arreche, O.; Guntur, T.R.; Roberts, J.W.; Abdallah, M. E-XAI: Evaluating black-box explainable AI frameworks for network intrusion detection. IEEE Access 2024, 12, 23954–23988.

- Nazat, S.; Arreche, O.; Abdallah, M. On Evaluating Black-Box Explainable AI Methods for Enhancing Anomaly Detection in Autonomous Driving Systems. Sensors 2024, 24(11), 3515.

- Moosavi-Dezfooli, S.-M.; Fawzi, A.; Frossard, P. DeepFool: A simple and accurate method to fool deep neural networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR); 2016.

- Le, T.-T.-H.; Kim, H.; Kang, H.; Kim, H. Classification and explanation for intrusion detection system based on ensemble trees and SHAP method. Sensors 2022, 22(3), 1154.

- Chen, X.; Liu, M.; Wang, Z.; Wang, Y. Explainable deep learning-based feature selection and intrusion detection method on the Internet of Things. Sensors 2024, 24(16), 5223.

- Hermosilla, P.; Díaz, M.; Berríos, S.; Allende-Cid, H. Use of explainable artificial intelligence for analyzing and explaining intrusion detection systems. Computers 2025, 14(5), 160.

- Roshinta, T.A.; Gábor, S. A comparative study of LIME and SHAP for enhancing trustworthiness and efficiency in explainable AI systems. In Proceedings of the IEEE International Conference on Computing (ICOCO); IEEE, 2024; pp. 134–139.

- Li, M.; Sun, H.; Huang, Y.; Chen, H. Shapley value: From cooperative game to explainable artificial intelligence. Autonomous Intelligent Systems 2024, 4(1), 2.

- Koroniotis, N.; Moustafa, N.; Sitnikova, E.; Turnbull, B. Towards the development of realistic botnet dataset in the Internet of Things for network forensic analytics: BoT-IoT dataset. Future Generation Computer Systems 2019, 100, 779–796.

- Meidan, Y.; Bohadana, M.; Mathov, Y.; Mirsky, Y.; Shabtai, A.; Breitenbacher, D. N-BaIoT—Network-based detection of IoT botnet attacks using deep autoencoders. IEEE Pervasive Computing 2018, 17(3), 12–22.

- Ferrag, M.A.; Friha, O.; Hamouda, D.; Maglaras, L.; Janicke, H. Edge-IIoTset: A new comprehensive realistic cyber security dataset of IoT and IIoT applications for centralized and federated learning. IEEE Access 2022, 10, 40281–40306.

- Khan, I.A.; Moustafa, N.; Pi, D.; Sallam, K.M.; Zomaya, A.Y.; Li, B. A new explainable deep learning framework for cyber threat discovery in industrial IoT networks. IEEE Internet of Things Journal 2021, 9(13), 11604–11613.

- Abou El Houda, Z.; Brik, B.; Khoukhi, L. Why should I trust your IDS? An explainable deep learning framework for intrusion detection systems in Internet of Things networks. IEEE Open Journal of the Communications Society 2022, 3, 1164–1176.

- Patil, S.; Varadarajan, V.; Mazhar, S.; Sahibzada, A.; Ahmed, N.; Sinha, O.; Kumar, S.; Shaw, K.; Kotecha, K. Explainable artificial intelligence for intrusion detection system. Electronics 2022, 11(19), 3079.

- Zhang, Y.; Gu, S.; Song, J.; Pan, B.; Bai, G.; Zhao, L. XAI benchmark for visual explanation. arXiv 2023, arXiv:2310.08537.

- Fazzolari, M.; Ducange, P.; Marcelloni, F. An explainable intrusion detection system for IoT networks. In Proceedings of the IEEE International Conference on Fuzzy Systems (FUZZ); IEEE, 2023; pp. 1–6.

- Larriva-Novo, X.; Sánchez-Zas, C.; Villagrá, V.A.; Marín-Lopez, A.; Berrocal, J. Leveraging explainable artificial intelligence in real-time cyberattack identification: Intrusion detection system approach. Applied Sciences 2023, 13(15), 8587.

- El-gezawy, A.M.M.; Abdel-Kader, H.; Ali, A.H. A new XAI evaluation metric for classification. International Journal of Computers and Information 2023, 10(3), 58–62.

- Duraz, R.; Espes, D.; Francq, J.; Vaton, S. Explainability-based metrics to help cyber operators find and correct misclassified cyberattacks. In Proceedings of the 2023 on Explainable and Safety Bounded, Fidelitous, Machine Learning for Networking; 2023; pp. 9–15.

- Yu, H.; Benois-Pineau, J.; Bourqui, R.; Giot, R.; Zhukov, A. Mean Opinion Score as a new metric for user-evaluation of XAI methods. In International Conference on Pattern Recognition; Springer, 2024; pp. 443–457. Available online: https://arxiv.org/abs/2407.20427.

- Hedström, A.; Weber, L.; Lapuschkin, S.; Höhne, M. Sanity checks revisited: An exploration to repair the model parameter randomisation test. arXiv 2024, arXiv:2401.06465. Available online: https://arxiv.org/abs/2401.06465.

- Rumelhart, D.E.; Hinton, G.E.; Williams, R.J. Learning internal representations by error propagation. In Parallel Distributed Processing: Explorations in the Microstructure of Cognition. Volume 1: Foundations; 1986; pp. 318–362.

- Anderssen, E.; Dyrstad, K.; Westad, F.; Martens, H. Reducing over-optimism in variable selection by cross-model validation. Chemometrics and Intelligent Laboratory Systems 2006, 84(1–2), 69–74.

- Goldstein, A.; Kapelner, A.; Bleich, J.; Pitkin, E. Peeking inside the black box: Visualizing statistical learning with plots of individual conditional expectation. Journal of Computational and Graphical Statistics 2015, 24(1), 44–65. Available online: https://arxiv.org/abs/1309.6392.

- Greenwell, B.M. pdp: An R package for constructing partial dependence plots. The R Journal 2017.

| Dataset | Number of Labels | Number of Features | Number of Samples |
|---|---|---|---|
| Edge-IIoT | 15 | 63 | 2,219,201 |
| N-BaIoT | 11 | 115 | 1,854,174 |
| BoT-IoT | 4 | 19 | 3,668,521 |

| Model | BoT-IoT (SHAP) | BoT-IoT (LIME) | N-BaIoT (SHAP) | N-BaIoT (LIME) | Edge-IIoT (SHAP) | Edge-IIoT (LIME) |
|---|---|---|---|---|---|---|
| RF | 0.62500 | 0.60714 | 0.55949 | 0.76491 | 0.76250 | 0.60897 |
| DNN | 0.62333 | 0.43571 | 0.81173 | 0.68421 | 0.92875 | 0.77820 |
| CNN | 0.45000 | 0.67142 | 0.83217 | 0.65526 | 0.92124 | 0.53717 |
| LSTM | 0.50000 | 0.69285 | 0.67913 | 0.68157 | 0.93124 | 0.72948 |

| Dataset | SHAP Mean (95% CI) | LIME Mean (95% CI) | Paired t-test (p) | Wilcoxon signed-rank (p) |
|---|---|---|---|---|
| Edge-IIoT | 0.799 (0.777, 0.821) | 0.851 (0.821, 0.881) | 0.007 | 0.006 |
| N-BaIoT | 0.319 (0.272, 0.366) | 0.431 (0.354, 0.508) | < 0.001 | < 0.001 |
| BoT-IoT | 0.450 (0.415, 0.485) | 0.851 (0.821, 0.881) | < 0.001 | < 0.001 |
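The statistical comparison reported in the table above (mean with a 95% confidence interval, paired t-test, and Wilcoxon signed-rank test) can be sketched with SciPy as follows. This assumes that SHAP and LIME are scored on the same paired units, for example the same test samples, and that the interval is t-based; the paired units used by the authors are not stated here. The function names and the ten scores are hypothetical.

```python
import numpy as np
from scipy import stats


def mean_ci(values, confidence=0.95):
    """Sample mean with a two-sided t-based confidence interval."""
    values = np.asarray(values, dtype=float)
    mean = values.mean()
    half_width = stats.t.ppf((1 + confidence) / 2, df=values.size - 1) * stats.sem(values)
    return mean, (mean - half_width, mean + half_width)


def compare_explainers(shap_scores, lime_scores, confidence=0.95):
    """Paired comparison of per-unit SHAP and LIME scores (assumed pairing)."""
    shap_scores = np.asarray(shap_scores, dtype=float)
    lime_scores = np.asarray(lime_scores, dtype=float)
    return {
        "shap": mean_ci(shap_scores, confidence),
        "lime": mean_ci(lime_scores, confidence),
        "paired_t_p": stats.ttest_rel(shap_scores, lime_scores).pvalue,
        "wilcoxon_p": stats.wilcoxon(shap_scores, lime_scores).pvalue,
    }


# Hypothetical paired scores: the same 10 units scored by both explainers
shap = np.array([0.78, 0.80, 0.79, 0.81, 0.77, 0.82, 0.80, 0.79, 0.78, 0.81])
lime = shap + np.array([0.03, 0.04, 0.05, 0.06, 0.07, 0.08, 0.09, 0.10, 0.11, 0.12])
result = compare_explainers(shap, lime)
for name in ("shap", "lime"):
    mean, (low, high) = result[name]
    print(f"{name}: {mean:.3f} ({low:.3f}, {high:.3f})")
print(f"paired t-test p = {result['paired_t_p']:.4g}, Wilcoxon p = {result['wilcoxon_p']:.4g}")
```

Reporting both tests is a reasonable design choice: the paired t-test assumes roughly normal score differences, while the Wilcoxon signed-rank test only uses their ranks and signs, so agreement between the two strengthens the conclusion.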

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).