Submitted: 02 April 2026

Posted: 03 April 2026


Abstract

Keywords:

1. Introduction

- The results demonstrate that Chain-of-Thought (CoT) prompting does not consistently enhance performance in Bengali text classification and, in several cases, leads to measurable degradation. This finding underscores the instability of prompt-based adaptation strategies in low-resource settings where pretraining exposure to the target language is limited.

- Reasoning-optimized large language models, such as DeepSeek-R1, exhibit significant performance deterioration across multiple tasks. This reveals a critical limitation of scaling reasoning capacity without sufficient linguistic grounding, particularly in low-resource language contexts.

- Among the evaluated models, Gemma-3-4B achieves the most stable and consistent performance across diverse tasks under both in-context learning and parameter-efficient fine-tuning. Its balanced cross-task generalization highlights its suitability as a backbone model for multitask Bengali text classification.

2. Related Work

2.1. Text Classification in High-Resource Languages (HRLs)

2.2. Text Classification in Low-Resource Languages (LRLs)

2.2.1. Text Classification in Bengali

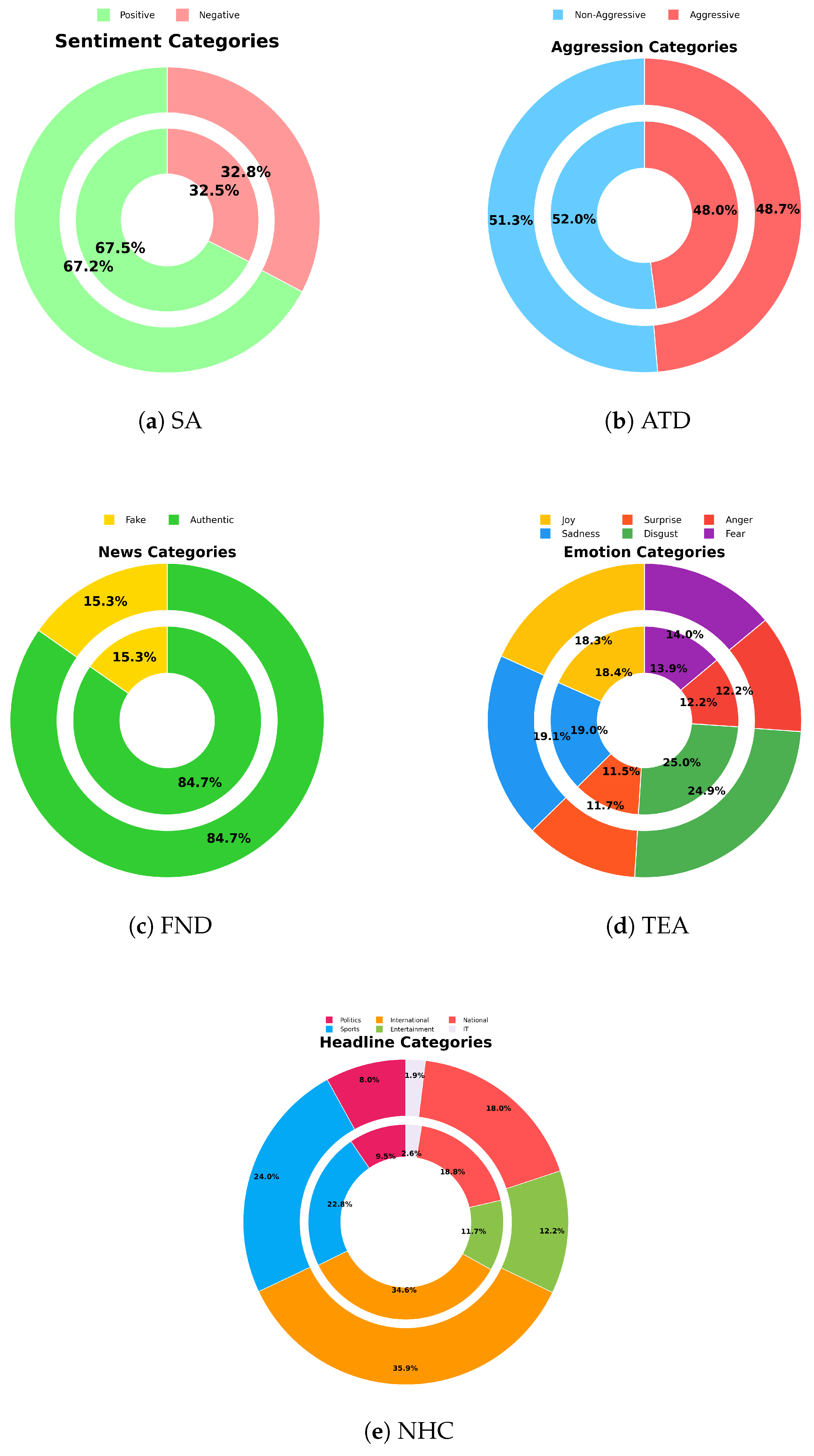

3. Datasets

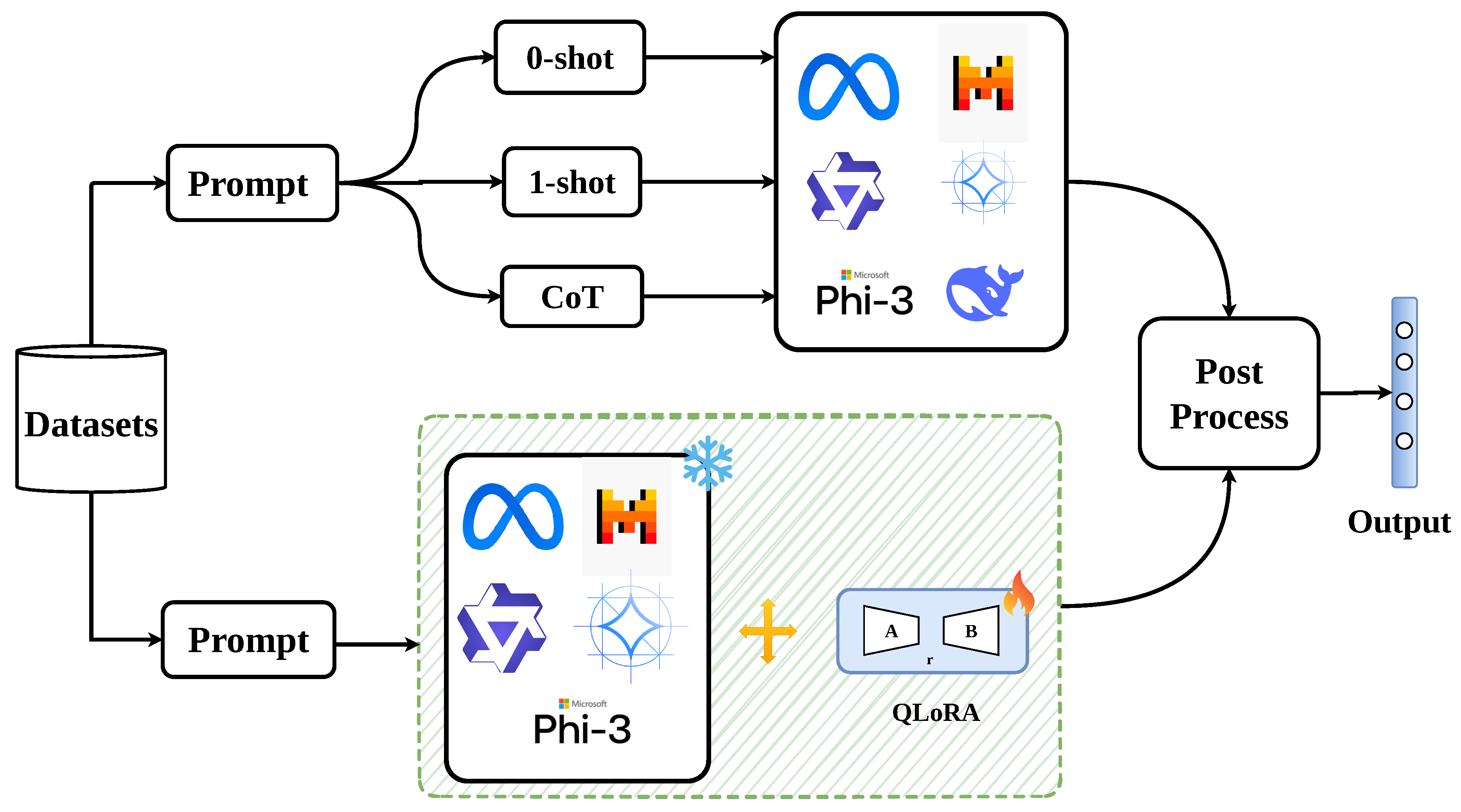

4. System Overview

4.1. Models

4.2. In-Context Learning (ICL)

- Zero-shot Prompt: The model is given only the task description without any examples.

- One-shot Prompt: The model is provided with a single example of the task along with its answer.

- Chain-of-Thought (CoT) Prompt: The model is first given the task context, and then guided to reason through the problem step by step, rather than producing a direct answer.
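The three prompting strategies differ only in what surrounds the input text. The following is a minimal sketch of that difference for the sentiment analysis (SA) task, not the authors' implementation: the function name `build_prompt`, the strategy keys, and the line breaks are assumptions, and the CoT template is an abbreviated form of the SA prompt listed in Appendix A.

```python
# Hypothetical helper: assembles the three prompt types for the SA task.
# Template wording follows the SA prompts in Appendix A; the CoT steps are abbreviated.

ZERO_SHOT = (
    "Please classify the sentiment of this review. "
    "The answer should be either 1 (positive) or 0 (negative), "
    "based on the sentiment expressed.\n"
    "# Review: {text}"
)

ONE_SHOT = (
    "Please classify the sentiment of this review. "
    "The answer should be either 1 (positive) or 0 (negative).\n"
    "Example:\n# Review: {example}\n# Answer: {example_label}\n"
    "Now classify:\n# Review: {text}"
)

COT = (
    "Task: Classify the sentiment of the following Bangla review "
    "as 1 (positive) or 0 (negative).\n"
    "Steps: 1. Analyze Content. 2. Identify Sentiment Indicators. "
    "3. Evaluate Context. 4. Determine Tone. "
    "5. Classify: Assign 1 for positive and 0 for negative.\n"
    "Now classify:\n# Review: {text}"
)


def build_prompt(strategy, text, example=None, example_label=None):
    """Return the prompt string for one input under the chosen strategy."""
    if strategy == "zero-shot":
        # Task description only, no demonstration.
        return ZERO_SHOT.format(text=text)
    if strategy == "one-shot":
        # Exactly one labelled demonstration precedes the query.
        if example is None or example_label is None:
            raise ValueError("one-shot prompting needs an example and its label")
        return ONE_SHOT.format(
            example=example, example_label=example_label, text=text
        )
    if strategy == "cot":
        # Step-by-step reasoning instructions precede the query.
        return COT.format(text=text)
    raise ValueError(f"unknown strategy: {strategy}")
```

Keeping the query line (`# Review: ...`) identical across the three templates means any performance difference comes from the surrounding instructions rather than from how the input is presented.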

4.3. Parameter Efficient Fine-Tuning (PEFT)

4.4. Experimental Setup

5. Results and Analysis

5.1. Ablation Study

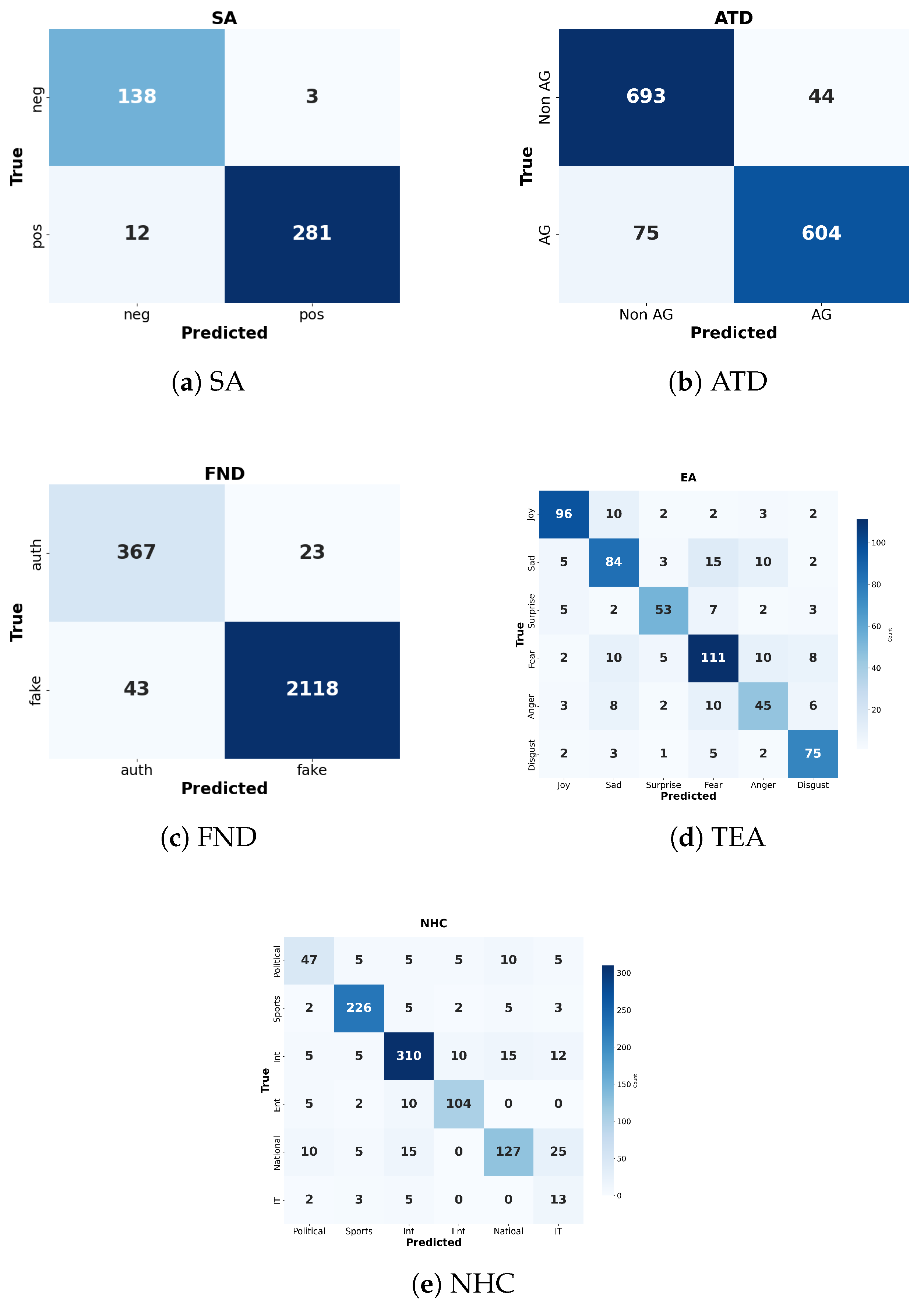

5.2. Error Analysis

5.2.1. Quantitative Error Analysis

5.2.2. Qualitative Error Analysis

6. Conclusions

Limitations

- The instruction-tuned Gemma-3-4B model achieves high accuracy on binary classification tasks; however, its performance declines on complex multiclass classification tasks.

- The model was adapted using Quantized Low-Rank Adaptation (QLoRA) rather than full fine-tuning, which may have restricted task alignment and overall classification accuracy.

- Prompting strategies, including zero-shot, one-shot, and chain-of-thought approaches, yield inconsistent results across different tasks and model architectures.

- Several instruction-tuned models lack explicit training on Bengali-language data, which limits their ability to capture Bengali-specific linguistic features.

- This study relies on monolingual Bengali datasets and excludes Bangla-English code-mixed data, which is common in real-world usage.

- The research scope is restricted to classification tasks and does not investigate additional natural language processing (NLP) tasks, including text generation, summarization, or question answering.

Institutional Review Board Statement

Data Availability Statement

Acknowledgments

Appendix A. Prompt Examples

| Task | Prompt |
|---|---|
| SA | Please classify the sentiment of this review. The answer should be either 1 (positive) or 0 (negative), based on the sentiment expressed. # Review: <review> |
| ATD | Please classify whether this Bengali sentence is Aggressive or non-aggressive. The answer should be either 1 (Aggressive) or 0 (Non-Aggressive), based on the sentence. # Sentence: <sentence> |
| FND | Please determine whether this Bengali news is genuine or not. The answer should be either 1 (Fake) or 0 (Authentic), based on the news. # News: <news> |
| NHC | Classify the following news headline into one of the predefined categories. Use the corresponding label number: politics: 0, sports: 1, international: 2, entertainment: 3, national: 4, IT: 5. # Headline: <headline> # Answer: [num] |
| TEA | Classify the emotion expressed in the following Bengali text into one of the predefined categories. Use the corresponding label number: 0: Joy, 1: Sadness, 2: Surprise, 3: Disgust, 4: Anger, 5: Fear. # Sentence: <sentence> # Answer: [label] |

| Task | Prompt |
|---|---|
| SA | Please classify the sentiment of this review. The answer should be either 1 (positive) or 0 (negative). Example: # Review: [Bengali Text] # Answer: 1 Now classify: # Review: <review> |
| ATD | Please classify whether this Bengali sentence is Aggressive or Non-Aggressive. The answer should be either 1 (Aggressive) or 0 (Non-Aggressive). Example: # Sentence: [Bengali Text] # Answer: 1 Now classify: # Sentence: <sentence> |
| FND | Please classify whether this Bengali news is Fake or Authentic. The answer should be either 1 (Fake) or 0 (Authentic). Example: # News: [Bengali Text] # Answer: 1 Now classify: # News: <news> |
| NHC | Classify the following news headline into one of the predefined categories. Use the corresponding label number: politics: 0, sports: 1, international: 2, entertainment: 3, national: 4, IT: 5. Example: # Headline: [Bengali Text] # Answer: 1 Now classify: # Headline: <headline> |
| TEA | Classify the emotion expressed in the following Bengali text into one of the predefined categories. Use the corresponding label number: 0: Joy, 1: Sadness, 2: Surprise, 3: Disgust, 4: Anger, 5: Fear. Example: # Sentence: [Bengali Text] # Answer: 0 Now classify: # Sentence: <sentence> |

| Task | Prompt |
|---|---|
| SA | Task: Classify the sentiment of the following Bangla review as 1 (positive) or 0 (negative). Steps: 1. Analyze Content: Break the review into phrases or sentences. 2. Identify Sentiment Indicators: Look for positive (e.g., “[Bengali Text]”) or negative (e.g., “[Bengali Text]”) words/phrases. 3. Evaluate Context: Consider how words are used, including sarcasm or mixed sentiment. 4. Determine Tone: Assess the overall tone based on key phrases. 5. Classify: Assign 1 for positive and 0 for negative. Now classify: # Review: <review> |
| ATD | Task: Classify whether the following Bangla sentence is Aggressive (1) or Non-Aggressive (0). Steps: 1. Analyze Content: Break the sentence into meaningful parts. 2. Identify Aggression Indicators: Look for threatening or harmful expressions (e.g., “[Bengali Text]”). 3. Evaluate Intensity: Check the severity of the words used and their target. 4. Determine Tone: Assess whether the tone is hostile or neutral. 5. Classify: Assign 1 for Aggressive and 0 for Non-Aggressive. Now classify: # Sentence: <sentence> |
| FND | Task: Classify whether the following Bangla news is Fake (1) or Authentic (0). Steps: 1. Analyze Content: Break the news statement into key claims. 2. Check Plausibility: Identify whether the claim sounds realistic or exaggerated. 3. Identify Unrealistic Elements: Look for impossible or illogical events (e.g., “[Bengali Text]”). 4. Evaluate Source-Like Tone: Assess if the sentence resembles factual reporting or satire. 5. Classify: Assign 1 for Fake and 0 for Authentic. Now classify: # News: <news> |
| NHC | Task: Classify the following Bangla news headline into one of the predefined categories: politics: 0, sports: 1, international: 2, entertainment: 3, national: 4, IT: 5. Steps: 1. Analyze Content: Break the headline into key subjects and actions. 2. Identify Keywords: Detect topic-related words (e.g., “[Bengali Text]” → sports, “[Bengali Text]” → politics). 3. Match with Categories: Compare keywords with the predefined category list. 4. Resolve Ambiguity: If multiple categories fit, select the most dominant one. 5. Classify: Assign the corresponding label number. Now classify: # Headline: <headline> |
| TEA | Task: Classify the emotion expressed in the following Bangla text into one of the predefined categories: 0: Joy, 1: Sadness, 2: Surprise, 3: Disgust, 4: Anger, 5: Fear. Steps: 1. Analyze Content: Break the sentence into emotion-bearing parts. 2. Identify Emotion Indicators: Look for explicit words or expressions (e.g., “[Bengali Text]” → Joy, “[Bengali Text]” → Anger). 3. Evaluate Context: Consider implied emotions or indirect expressions. 4. Determine Dominant Emotion: Choose the strongest emotion if multiple exist. 5. Classify: Assign the corresponding label number. Now classify: # Sentence: <sentence> |
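All prompts in this appendix ask for a numeric label, so free-form model output has to be mapped back to a class index before scoring. The paper does not describe that step; the sketch below shows one plausible way to do it. The function name `parse_label` and the fallback rule (take the last integer when no explicit `Answer:` field is present) are assumptions made for illustration.

```python
import re
from typing import Optional


def parse_label(output: str, num_classes: int) -> Optional[int]:
    """Map raw model output to a label index, or None if no valid label is found.

    Hypothetical post-processing rule, not taken from the paper.
    """
    # Prefer an explicit "Answer: <n>" field, as requested in some prompts.
    match = re.search(r"answer\s*:\s*(\d+)", output, flags=re.IGNORECASE)
    if match:
        digits = match.group(1)
    else:
        # Fallback: use the last integer in the text, since step-by-step
        # outputs usually state the final label after the reasoning.
        numbers = re.findall(r"\d+", output)
        if not numbers:
            return None
        digits = numbers[-1]
    label = int(digits)
    # Reject numbers outside the label range (e.g. 7 for a six-class task).
    return label if 0 <= label < num_classes else None
```

Returning `None` for unparseable or out-of-range output keeps such cases visible, so they can be counted as errors explicitly instead of being silently mapped to a default class.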

References

1. Bhowmick, A.; Jana, A. Sentiment analysis for Bengali using transformer based models. In Proceedings of the 18th International Conference on Natural Language Processing (ICON), 2021; pp. 481–486.

2. Farhad, F.I.J.; Imran, S.; Santo, M.M.H.; Khan, M.; Sakib, A.; Rahman, M.S.; Islam, M.A.; Haque, R.; Rahman, S. Addressing Misinformation in Bengali Media: A Hybrid Deep Learning Solution. In Proceedings of the 2024 27th International Conference on Computer and Information Technology (ICCIT), 2024; IEEE; pp. 774–779.

3. Das, A.; Hoque, M.M.; Sharif, O.; Dewan, M.A.A.; Siddique, N. Temox: Classification of textual emotion using ensemble of transformers. IEEE Access 2023, 11, 109803–109818.

4. Rosni, T.R.; Hasan, M.; Mittra, T.; Ali, M.S.; Ferdaus, M.H. Aggressive Bangla Text Detection Using Machine Learning and Deep Learning Algorithms. In Proceedings of the International Conference on Computation of Artificial Intelligence & Machine Learning, 2024; Springer; pp. 174–183.

5. Afroz, S.; Ahmed, K.; Hoque, M.M. Leveraging Multi-Task Learning for Detecting Aggression, Emotion, Violence, and Sentiment in Bengali Texts. In Proceedings of the 5th Muslims in ML Workshop co-located with NeurIPS, 2025.

6. Ding, N.; Qin, Y.; Yang, G.; Wei, F.; Yang, Z.; Su, Y.; Hu, S.; Chen, Y.; Chan, C.M.; Chen, W.; et al. Parameter-efficient fine-tuning of large-scale pre-trained language models. Nature Machine Intelligence 2023, 5, 220–235.

7. Wang, H.; Ren, C.; Yu, Z. Multimodal sentiment analysis based on multiple attention. Engineering Applications of Artificial Intelligence 2025, 140, 109731.

8. Alex, N.; Lifland, E.; Tunstall, L.; Thakur, A.; Maham, P.; Riedel, C.J.; Hine, E.; Ashurst, C.; Sedille, P.; Carlier, A.; et al. RAFT: A real-world few-shot text classification benchmark. arXiv 2021, arXiv:2109.14076.

9. Schick, T.; Schütze, H. True few-shot learning with Prompts—A real-world perspective. Transactions of the Association for Computational Linguistics 2022, 10, 716–731.

10. Loukas, L.; Stogiannidis, I.; Malakasiotis, P.; Vassos, S. Breaking the bank with ChatGPT: few-shot text classification for finance. arXiv 2023, arXiv:2308.14634.

11. Wang, Z.; Pang, Y.; Lin, Y. Smart Expert System: Large Language Models as Text Classifiers. arXiv 2024, arXiv:2405.10523.

12. Marreddy, M.; Oota, S.R.; Vakada, L.S.; Chinni, V.C.; Mamidi, R. Multi-task text classification using graph convolutional networks for large-scale low resource language. In Proceedings of the 2022 International Joint Conference on Neural Networks (IJCNN), 2022; IEEE; pp. 1–8.

13. Zhang, J.; Yan, K.; Mo, Y. Multi-task learning for sentiment analysis with hard-sharing and task recognition mechanisms. Information 2021, 12, 207.

14. Kapil, P.; Ekbal, A. A transformer based multi task learning approach to multimodal hate speech detection. Natural Language Processing Journal 2025, 11, 100133.

15. Singh, G.V.; Firdaus, M.; Chauhan, D.S.; Ekbal, A.; Bhattacharyya, P. Zero-shot multitask intent and emotion prediction from multimodal data: A benchmark study. Neurocomputing 2024, 569, 127128.

16. Kabir, M.; Laskar, M.T.R.; Nayeem, M.T.; Bari, M.S.; Hoque, E. Benllmeval: A comprehensive evaluation into the potentials and pitfalls of large language models on bengali nlp. arXiv 2023, arXiv:2309.13173.

17. Hasan, M.A.; Das, S.; Anjum, A.; Alam, F.; Anjum, A.; Sarker, A.; Noori, S.R.H. Zero-and few-shot prompting with llms: A comparative study with fine-tuned models for bangla sentiment analysis. arXiv 2023, arXiv:2308.10783.

18. Nazi, Z.A.; Hossain, M.R.; Mamun, F.A. Evaluation of open and closed-source LLMs for low-resource language with zero-shot, few-shot, and chain-of-thought prompting. Natural Language Processing Journal 2025, 10, 100124.

19. Barua, A.; Sharif, O.; Hoque, M.M. Multi-class sports news categorization using machine learning techniques: resource creation and evaluation. Procedia Computer Science 2021, 193, 112–121.

20. Sharif, O.; Hoque, M.M.; Kayes, A.S.M.; Nowrozy, R.; Sarker, I.H. Detecting Suspicious Texts Using Machine Learning Techniques. Applied Sciences 2020, 10.

21. Hossain, M.R.; Hoque, M.M.; Dewan, M.A.A.; Siddique, N.; Islam, M.N.; Sarker, I.H. Authorship Classification in a Resource Constraint Language Using Convolutional Neural Networks. IEEE Access 2021, 9, 100319–100338.

22. Hider, M.A.; Ahsan, S.; Hossain, J.; Hoque, M.M. Emotion Classification in Bengali-English Code-Mixed Data using Transformers. In Proceedings of the 2024 27th International Conference on Computer and Information Technology (ICCIT), 2024; pp. 3529–3535.

23. Ahsan, S.; Tasnia, F.; Tabassum, N.; Das, A.; Hoque, M.M.; Siddique, N. Classifying Textual Sentiment Using Bidirectional Encoder Representations from Transformers. In Proceedings of the 2023 26th International Conference on Computer and Information Technology (ICCIT), 2023; IEEE; pp. 1–6.

24. Aodhora, S.R.; Hoque, M.M. TeTeC: Technical Text Classification in Bengali using Ensemble of Transformers. In Proceedings of the 2024 International Conference on Recent Progresses in Science, Engineering and Technology (ICRPSET), 2024; pp. 1–6.

25. Akther, A.; Alam, K.M.; Debnath, R. Automatic detection of manipulated Bangla news: A new knowledge-driven approach. Natural Language Processing Journal 2025, 11, 100155.

26. Hossain, M.R.; Hoque, M.M.; Dewan, M.A.A.; Hoque, E.; Siddique, N. AuthorNet: Leveraging attention-based early fusion of transformers for low-resource authorship attribution. Expert Systems with Applications 2025, 262, 125643.

27. Sharif, O.; Hoque, M.M. Tackling cyber-aggression: Identification and fine-grained categorization of aggressive texts on social media using weighted ensemble of transformers. Neurocomputing 2022, 490, 462–481.

28. Hossain, E.; Sharif, O.; Moshiul Hoque, M. Sentiment polarity detection on bengali book reviews using multinomial naive bayes. In Progress in Advanced Computing and Intelligent Engineering: Proceedings of ICACIE 2020, 2021; Springer; pp. 281–292.

29. Sharif, O.; Hossain, E.; Hoque, M.M. M-bad: A multilabel dataset for detecting aggressive texts and their targets. In Proceedings of the Workshop on Combating Online Hostile Posts in Regional Languages during Emergency Situations, 2022; pp. 75–85.

30. Hossain, M.Z.; Rahman, M.A.; Islam, M.S.; Kar, S. Banfakenews: A dataset for detecting fake news in bangla. arXiv 2020, arXiv:2004.08789.

31. Hossain, E. Bangla News Headlines Categorization. GitHub repository, 2023. Accessed: Jun. 27, 2025.

32. Grattafiori, A.; Dubey, A.; Jauhri, A.; Pandey, A.; Kadian, A.; Al-Dahle, A.; Letman, A.; Mathur, A.; Schelten, A.; Vaughan, A.; et al. The llama 3 herd of models. arXiv 2024, arXiv:2407.21783.

33. Yang, A.; Li, A.; Yang, B.; Zhang, B.; Hui, B.; Zheng, B.; Yu, B.; Gao, C.; Huang, C.; Lv, C.; et al. Qwen3 technical report. arXiv 2025, arXiv:2505.09388.

34. Abdin, M.; Aneja, J.; Awadalla, H.; Awadallah, A.; Awan, A.A.; Bach, N.; Bahree, A.; Bakhtiari, A.; Bao, J.; Behl, H.; et al. Phi-3 technical report: A highly capable language model locally on your phone. arXiv 2024, arXiv:2404.14219.

35. Bi, X.; Chen, D.; Chen, G.; Chen, S.; Dai, D.; Deng, C.; Ding, H.; Dong, K.; Du, Q.; Fu, Z.; et al. Deepseek llm: Scaling open-source language models with longtermism. arXiv 2024, arXiv:2401.02954.

36. Team, G.; Kamath, A.; Ferret, J.; Pathak, S.; Vieillard, N.; Merhej, R.; Perrin, S.; Matejovicova, T.; Ramé, A.; Rivière, M.; et al. Gemma 3 technical report. arXiv 2025, arXiv:2503.19786.

37. Guo, D.; Yang, D.; Zhang, H.; Song, J.; Zhang, R.; Xu, R.; Zhu, Q.; Ma, S.; Wang, P.; Bi, X.; et al. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv 2025, arXiv:2501.12948.

38. Dettmers, T.; Pagnoni, A.; Holtzman, A.; Zettlemoyer, L. Qlora: Efficient finetuning of quantized llms. Advances in Neural Information Processing Systems 2023, 36, 10088–10115.

39. Wei, J.; Wang, X.; Schuurmans, D.; Bosma, M.; Xia, F.; Chi, E.; Le, Q.V.; Zhou, D.; et al. Chain-of-thought prompting elicits reasoning in large language models. Advances in Neural Information Processing Systems 2022, 35, 24824–24837.

40. Ahmed, K.; Osama, M.; Sharif, O.; Hossain, E.; Hoque, M.M. Bennumeval: A benchmark to assess llms’ numerical reasoning capabilities in bengali. In Findings of the Association for Computational Linguistics: ACL 2025, 2025; pp. 17782–17799.

| Task | Data | LR | | | Classes | Class Labels |
|---|---|---|---|---|---|---|
| SA [28] | 434 | 1-562 | 456 | 10,909 | 2 | Positive, Negative |
| ATD [29] | 1,416 | 3-595 | 1,897 | 31,189 | 2 | Aggressive, Non-Aggressive |
| FND [30] | 2,551 | 390-3,761 | 54,508 | 667,351 | 2 | Fake, Authentic |
| NHC [31] | 1,000 | 2-9 | 1,000 | 5,932 | 6 | Politics, Sports, National, Entertainment, International, IT |
| TEA [3] | 625 | 4-97 | 832 | 14,508 | 6 | Joy, Sadness, Fear, Disgust, Anger, Surprise |
| Total | 6,026 | - | 58,693 | 729,889 | - | - |

| Task | Samples | | |
|---|---|---|---|
| SA | 1,010 | 999 | 25,443 |
| ATD | 10,000 | 13,491 | 211,096 |
| FND | 2,551 | 126,004 | 1,554,914 |
| NHC | 15,000 | 15,000 | 88,512 |
| TEA | 4,000 | 6,543 | 114,674 |
| Total | 32,561 | 162,037 | 1,994,639 |

| Instruction | Input | Output |
|---|---|---|
| Find the sentiment of the following Bengali sentence. | [Bengali Text] (This movie was amazing.) | Positive |
| Detect whether the following Bengali sentence is aggressive or not. | [Bengali Text] (You’re a useless donkey!) | Aggressive |
| Classify whether the following Bengali news is fake or real. | [Bengali Text] (The Prime Minister said that 5,000 taka will be given to each person tomorrow.) | Fake |
| Categorize the topic of the following Bengali news headline. | [Bengali Text] (The country was not liberated for their meetings and rallies: Hanif.) | Politics |
| Detect the emotion expressed in the following Bengali sentence. | [Bengali Text] (I’m feeling very sad today.) | Sad |
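Each row above is an (instruction, input, output) triple. For fine-tuning, such triples are usually serialized into a single text sequence. The sketch below shows one common way to do that; the section markers (`### Instruction:` and so on) follow a widely used Alpaca-style layout and are an assumption here, as are the names `InstructionRecord` and `to_training_text`. The paper does not state which template was used.

```python
from dataclasses import dataclass


@dataclass
class InstructionRecord:
    """One row of the instruction table: instruction, input text, gold output."""
    instruction: str
    input_text: str
    output: str


# Assumed Alpaca-style layout; not confirmed by the paper.
TEMPLATE = (
    "### Instruction:\n{instruction}\n\n"
    "### Input:\n{input_text}\n\n"
    "### Response:\n"
)


def to_training_text(record: InstructionRecord, include_output: bool = True) -> str:
    """Serialize a record; omit the output to obtain an inference-time prompt."""
    prompt = TEMPLATE.format(
        instruction=record.instruction, input_text=record.input_text
    )
    return prompt + record.output if include_output else prompt


record = InstructionRecord(
    instruction="Find the sentiment of the following Bengali sentence.",
    input_text="[Bengali Text]",
    output="Positive",
)
print(to_training_text(record))
```

Using the same template with `include_output=False` at inference time keeps the training and evaluation prompts aligned, so the model only has to generate the label text.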

| Task | Model | Zero-shot Pr | Zero-shot Re | Zero-shot F-1 | Zero-shot Acc | One-shot Pr | One-shot Re | One-shot F-1 | One-shot Acc | CoT Pr | CoT Re | CoT F-1 | CoT Acc |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| SA | Llama | 0.93 | 0.92 | 0.93 | 0.94 | 0.97 | 0.95 | 0.96 | 0.97 | 0.97 | 0.95 | 0.94 | 0.94 |
| SA | Gemma | 0.93 | 0.84 | 0.88 | 0.89 | 0.91 | 0.89 | 0.90 | 0.88 | 0.87 | 0.89 | 0.88 | 0.86 |
| SA | Mistral | 0.70 | 0.68 | 0.69 | 0.71 | 0.65 | 0.67 | 0.66 | 0.64 | 0.64 | 0.67 | 0.66 | 0.68 |
| SA | Qwen | 0.96 | 0.94 | 0.95 | 0.95 | 0.97 | 0.95 | 0.96 | 0.94 | 0.97 | 0.95 | 0.96 | 0.94 |
| SA | Phi | 0.71 | 0.73 | 0.72 | 0.70 | 0.64 | 0.67 | 0.66 | 0.68 | 0.65 | 0.67 | 0.66 | 0.64 |
| SA | Dseek7B | 0.81 | 0.79 | 0.80 | 0.83 | 0.84 | 0.82 | 0.83 | 0.85 | 0.84 | 0.82 | 0.83 | 0.85 |
| SA | DseekR1 | 0.88 | 0.90 | 0.89 | 0.87 | 0.89 | 0.91 | 0.90 | 0.88 | 0.89 | 0.91 | 0.90 | 0.88 |
| ATD | Llama | 0.81 | 0.83 | 0.82 | 0.84 | 0.72 | 0.61 | 0.54 | 0.59 | 0.71 | 0.69 | 0.70 | 0.72 |
| ATD | Gemma | 0.81 | 0.79 | 0.80 | 0.82 | 0.78 | 0.76 | 0.77 | 0.79 | 0.77 | 0.75 | 0.76 | 0.78 |
| ATD | Mistral | 0.60 | 0.63 | 0.62 | 0.61 | 0.62 | 0.58 | 0.54 | 0.57 | 0.60 | 0.62 | 0.61 | 0.59 |
| ATD | Qwen | 0.70 | 0.68 | 0.69 | 0.71 | 0.66 | 0.64 | 0.65 | 0.67 | 0.71 | 0.69 | 0.70 | 0.72 |
| ATD | Phi | 0.50 | 0.52 | 0.51 | 0.49 | 0.51 | 0.49 | 0.50 | 0.52 | 0.50 | 0.48 | 0.49 | 0.51 |
| ATD | Dseek7B | 0.58 | 0.60 | 0.59 | 0.57 | 0.58 | 0.56 | 0.57 | 0.59 | 0.45 | 0.43 | 0.44 | 0.46 |
| ATD | DseekR1 | 0.80 | 0.78 | 0.77 | 0.77 | 0.63 | 0.61 | 0.62 | 0.64 | 0.43 | 0.41 | 0.42 | 0.44 |
| FND | Llama | 0.62 | 0.70 | 0.63 | 0.73 | 0.61 | 0.69 | 0.48 | 0.51 | 0.77 | 0.75 | 0.76 | 0.78 |
| FND | Gemma | 0.87 | 0.89 | 0.88 | 0.86 | 0.85 | 0.83 | 0.84 | 0.86 | 0.79 | 0.77 | 0.78 | 0.80 |
| FND | Mistral | 0.60 | 0.54 | 0.49 | 0.58 | 0.50 | 0.48 | 0.49 | 0.51 | 0.49 | 0.47 | 0.48 | 0.50 |
| FND | Qwen | 0.70 | 0.68 | 0.69 | 0.71 | 0.64 | 0.62 | 0.63 | 0.65 | 0.74 | 0.72 | 0.73 | 0.75 |
| FND | Phi | 0.56 | 0.54 | 0.55 | 0.57 | 0.43 | 0.41 | 0.42 | 0.44 | 0.64 | 0.62 | 0.63 | 0.65 |
| FND | Dseek7B | 0.45 | 0.43 | 0.44 | 0.46 | 0.45 | 0.43 | 0.44 | 0.46 | 0.42 | 0.40 | 0.41 | 0.43 |
| FND | DseekR1 | 0.92 | 0.93 | 0.87 | 0.83 | 0.67 | 0.65 | 0.66 | 0.68 | 0.69 | 0.67 | 0.68 | 0.70 |
| TEA | Llama | 0.61 | 0.42 | 0.32 | 0.33 | 0.63 | 0.58 | 0.52 | 0.53 | 0.48 | 0.47 | 0.45 | 0.46 |
| TEA | Gemma | 0.57 | 0.56 | 0.55 | 0.64 | 0.66 | 0.63 | 0.57 | 0.59 | 0.56 | 0.54 | 0.49 | 0.50 |
| TEA | Mistral | 0.51 | 0.31 | 0.25 | 0.29 | 0.55 | 0.39 | 0.29 | 0.36 | 0.34 | 0.30 | 0.24 | 0.30 |
| TEA | Qwen | 0.51 | 0.45 | 0.42 | 0.44 | 0.46 | 0.44 | 0.37 | 0.39 | 0.51 | 0.51 | 0.47 | 0.48 |
| TEA | Phi | 0.47 | 0.40 | 0.37 | 0.39 | 0.30 | 0.25 | 0.24 | 0.25 | 0.30 | 0.28 | 0.24 | 0.31 |
| TEA | Dseek7B | 0.30 | 0.28 | 0.23 | 0.29 | 0.32 | 0.30 | 0.27 | 0.29 | 0.35 | 0.34 | 0.32 | 0.25 |
| TEA | DseekR1 | 0.48 | 0.47 | 0.45 | 0.46 | 0.44 | 0.42 | 0.41 | 0.47 | 0.38 | 0.37 | 0.36 | 0.40 |
| NHC | Llama | 0.57 | 0.55 | 0.56 | 0.58 | 0.34 | 0.32 | 0.33 | 0.35 | 0.53 | 0.51 | 0.52 | 0.54 |
| NHC | Gemma | 0.71 | 0.76 | 0.71 | 0.71 | 0.69 | 0.67 | 0.68 | 0.70 | 0.72 | 0.70 | 0.71 | 0.73 |
| NHC | Mistral | 0.14 | 0.12 | 0.13 | 0.15 | 0.37 | 0.35 | 0.36 | 0.38 | 0.29 | 0.27 | 0.28 | 0.30 |
| NHC | Qwen | 0.23 | 0.21 | 0.22 | 0.24 | 0.43 | 0.41 | 0.42 | 0.44 | 0.43 | 0.41 | 0.42 | 0.44 |
| NHC | Phi | 0.67 | 0.65 | 0.66 | 0.67 | 0.21 | 0.19 | 0.20 | 0.22 | 0.18 | 0.16 | 0.17 | 0.26 |
| NHC | Dseek7B | 0.22 | 0.20 | 0.21 | 0.23 | 0.21 | 0.19 | 0.20 | 0.22 | 0.45 | 0.43 | 0.44 | 0.46 |
| NHC | DseekR1 | 0.65 | 0.63 | 0.64 | 0.66 | 0.67 | 0.65 | 0.66 | 0.68 | 0.68 | 0.66 | 0.67 | 0.69 |
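The table reports precision (Pr), recall (Re), F-1, and accuracy (Acc) for every task and model. The sketch below shows how these four quantities can be computed from gold and predicted labels. It assumes macro averaging over classes, which the paper does not state explicitly, and the function name `classification_metrics` is hypothetical.

```python
from collections import Counter


def classification_metrics(y_true, y_pred):
    """Return macro precision, macro recall, macro F1 and accuracy.

    Macro averaging is an assumption; the paper does not name its averaging scheme.
    """
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and equally long")

    tp, fp, fn = Counter(), Counter(), Counter()
    for gold, pred in zip(y_true, y_pred):
        if gold == pred:
            tp[gold] += 1
        else:
            fp[pred] += 1  # predicted class gains a false positive
            fn[gold] += 1  # gold class gains a false negative

    labels = sorted(set(y_true) | set(y_pred))
    precisions, recalls, f1s = [], [], []
    for label in labels:
        p_den = tp[label] + fp[label]
        r_den = tp[label] + fn[label]
        p = tp[label] / p_den if p_den else 0.0
        r = tp[label] / r_den if r_den else 0.0
        f = 2 * p * r / (p + r) if (p + r) else 0.0
        precisions.append(p)
        recalls.append(r)
        f1s.append(f)

    n = len(labels)
    return {
        "precision": sum(precisions) / n,
        "recall": sum(recalls) / n,
        "f1": sum(f1s) / n,  # mean of per-class F1, not F1 of the macro averages
        "accuracy": sum(tp.values()) / len(y_true),
    }
```

Under macro averaging the F-1 score is the mean of the per-class F-1 values, so it is not constrained to lie between the macro precision and macro recall; it can fall below both when classes are predicted unevenly.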

| Task | Model | Pr | Re | F1 | Acc |
|---|---|---|---|---|---|
| SA | Llama-3.2-4B | 0.83 | 0.85 | 0.84 | 0.85 |
| SA | Gemma-3-4B | 0.96 | 0.97 | 0.97 | 0.97 |
| SA | Mistral-7B-it | 0.89 | 0.92 | 0.90 | 0.91 |
| SA | Qwen-2.5-8B-it | 0.94 | 0.94 | 0.94 | 0.94 |
| SA | Phi-3 | 0.84 | 0.85 | 0.84 | 0.86 |
| ATD | Llama-3.2-4B | 0.89 | 0.89 | 0.89 | 0.89 |
| ATD | Gemma-3-4B | 0.91 | 0.91 | 0.91 | 0.91 |
| ATD | Mistral-7B-it | 0.93 | 0.93 | 0.93 | 0.93 |
| ATD | Qwen-2.5-8B-it | 0.89 | 0.89 | 0.89 | 0.89 |
| ATD | Phi-3 | 0.91 | 0.91 | 0.91 | 0.91 |
| FND | Llama-3.2-4B | 0.89 | 0.89 | 0.89 | 0.89 |
| FND | Gemma-3-4B | 0.97 | 0.97 | 0.97 | 0.97 |
| FND | Mistral-7B-it | 0.67 | 0.16 | 0.06 | 0.16 |
| FND | Qwen-2.5-8B-it | 0.83 | 0.84 | 0.83 | 0.85 |
| FND | Phi-3 | 0.93 | 0.73 | 0.81 | 0.73 |
| TEA | Llama-3.2-4B | 0.63 | 0.61 | 0.62 | 0.63 |
| TEA | Gemma-3-4B | 0.74 | 0.74 | 0.74 | 0.74 |
| TEA | Mistral-7B-it | 0.73 | 0.70 | 0.69 | 0.70 |
| TEA | Qwen-2.5-8B-it | 0.67 | 0.67 | 0.67 | 0.67 |
| TEA | Phi-3 | 0.65 | 0.66 | 0.65 | 0.66 |
| NHC | Llama-3.2-4B | 0.76 | 0.77 | 0.76 | 0.77 |
| NHC | Gemma-3-4B | 0.83 | 0.83 | 0.83 | 0.83 |
| NHC | Mistral-7B-it | 0.81 | 0.81 | 0.81 | 0.81 |
| NHC | Qwen-2.5-8B-it | 0.77 | 0.77 | 0.77 | 0.77 |
| NHC | Phi-3 | 0.27 | 0.40 | 0.30 | 0.40 |

| Set | LoRA r | LoRA α | Dropout | Pr | Acc | F1 |
|---|---|---|---|---|---|---|
| 1 | 32 | 64 | 0 | 0.92 | 0.91 | 0.91 |
| 2 | 8 | 8 | 0 | 0.91 | 0.90 | 0.90 |
| 3 | 8 | 32 | 0.03 | 0.89 | 0.88 | 0.89 |
| 4 | 16 | 128 | 0 | 0.91 | 0.91 | 0.91 |
| 5 | 8 | 8 | 0 | 0.87 | 0.84 | 0.84 |
| 6 | 16 | 64 | 0 | 0.91 | 0.91 | 0.91 |
| 7 | 32 | 32 | 0 | 0.91 | 0.91 | 0.91 |
| 8 | 16 | 32 | 0 | 0.91 | 0.91 | 0.91 |
| 9 | 16 | 16 | 0.01 | 0.91 | 0.90 | 0.90 |
| 10 | 64 | 64 | 0.05 | 0.91 | 0.87 | 0.87 |
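In standard LoRA the low-rank update is multiplied by the ratio alpha / r, so two settings with different ranks can still apply the same effective scaling. The sketch below computes that ratio for each ablation setting, assuming the column that follows LoRA r in the table holds the LoRA scaling hyperparameter alpha. The class name `LoraSetting` is hypothetical and the values are copied from the table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LoraSetting:
    """One ablation row; `alpha` is assumed to be the column after LoRA r."""
    set_id: int
    r: int
    alpha: int
    dropout: float
    f1: float

    @property
    def scaling(self) -> float:
        # Standard LoRA scales the low-rank update by alpha / r.
        return self.alpha / self.r


SETTINGS = [
    LoraSetting(1, 32, 64, 0.0, 0.91),
    LoraSetting(2, 8, 8, 0.0, 0.90),
    LoraSetting(3, 8, 32, 0.03, 0.89),
    LoraSetting(4, 16, 128, 0.0, 0.91),
    LoraSetting(5, 8, 8, 0.0, 0.84),
    LoraSetting(6, 16, 64, 0.0, 0.91),
    LoraSetting(7, 32, 32, 0.0, 0.91),
    LoraSetting(8, 16, 32, 0.0, 0.91),
    LoraSetting(9, 16, 16, 0.01, 0.90),
    LoraSetting(10, 64, 64, 0.05, 0.87),
]

for s in SETTINGS:
    print(f"set {s.set_id:>2}: r={s.r:<3} alpha={s.alpha:<4} "
          f"scaling={s.scaling:<4} dropout={s.dropout:<5} F1={s.f1}")
```

Listing the scaling next to F1 makes it easier to see whether differences between settings track the rank, the effective scaling, or the dropout rate.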

| Text Samples | Actual | Predicted |
|---|---|---|
| [Bengali Text] (How does a publisher publish such a book.) | Negative | Negative |
| [Bengali Text] (Oh human! You create it yourself, and then worship it yourself.) | Aggressive | Aggressive |
| [Bengali Text] (Even after adopting Islamic ideology and circumcision, the distinguished poet, Hakimi physician, and new Muslim Farhad Mazhar did not escape criticism.) | Fake | Fake |
| [Bengali Text] (The country wasn’t liberated for their rallies and protests: Hanif.) | Politics | National |
| [Bengali Text] (Riyad got angry at himself: what was the need to scare them? He could’ve just told the truth.) | Anger | Disgust |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).