Submitted: 01 April 2026

Posted: 02 April 2026

Abstract

Keywords:

1. Introduction

2. What RSTA is—and What It Is Not

Definition.

3. Formalization: Large Surfaces, Priority-Sensitive Sets, and Implications

Large-surface regime.

Priority-sensitive regime.

4. Worked Example: Recasting a WorkArena-Style Task as RSTA

5. Alternative Views

Counterargument 1: Stronger agents make recommendation obsolete.

Counterargument 2: RSTA is just offline RL or contextual bandits.

Counterargument 3: RSTA is simply Retrieval-Augmented Generation (RAG) for tools.

Counterargument 4: Infinite context windows will eliminate the need for filtering.

Counterargument 5: Agent-to-service interaction should be deterministic, not probabilistic.

6. Service-to-Agent Interfaces and Governance

7. A Focused Research Agenda

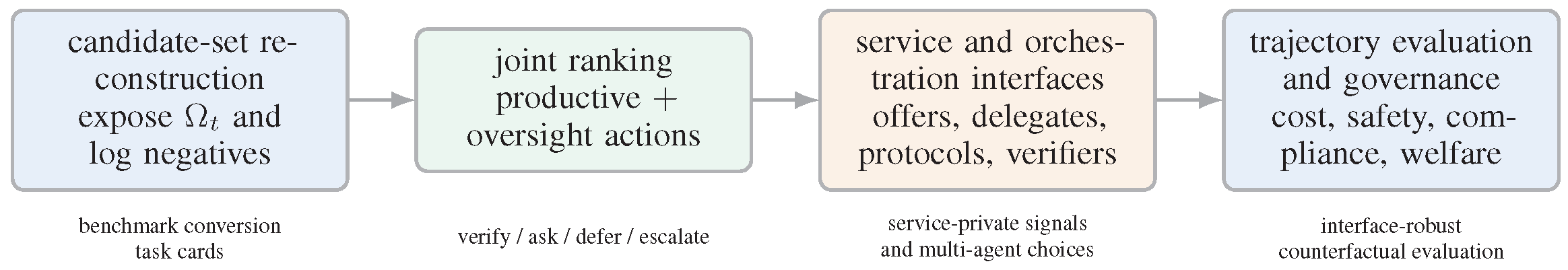

1. Candidate-set reconstruction.

2. Oversight as a recommendable.

3. Service-to-agent interface design.

4. Multi-agent orchestration as recommendation.

5. Interface-robust evaluation and governance.

8. Optimizing RSTA: From Clicks to Trajectories

9. Broader Implications: Interfaces, Data, and Market Structure

10. How the Claims Can Be Tested

Testing Claim 1.

Testing Claim 2.

Testing Claim 3.

11. Conclusions

Acknowledgments

Appendix A. Extended Related Work and Market Context

Recommendation and interaction paradigms.

Agent behavior, tool use, and evaluation.

Interoperability, runtimes, and public ecosystems.

Market and deployment context.

Appendix B. Boundaries Against Adjacent Literatures

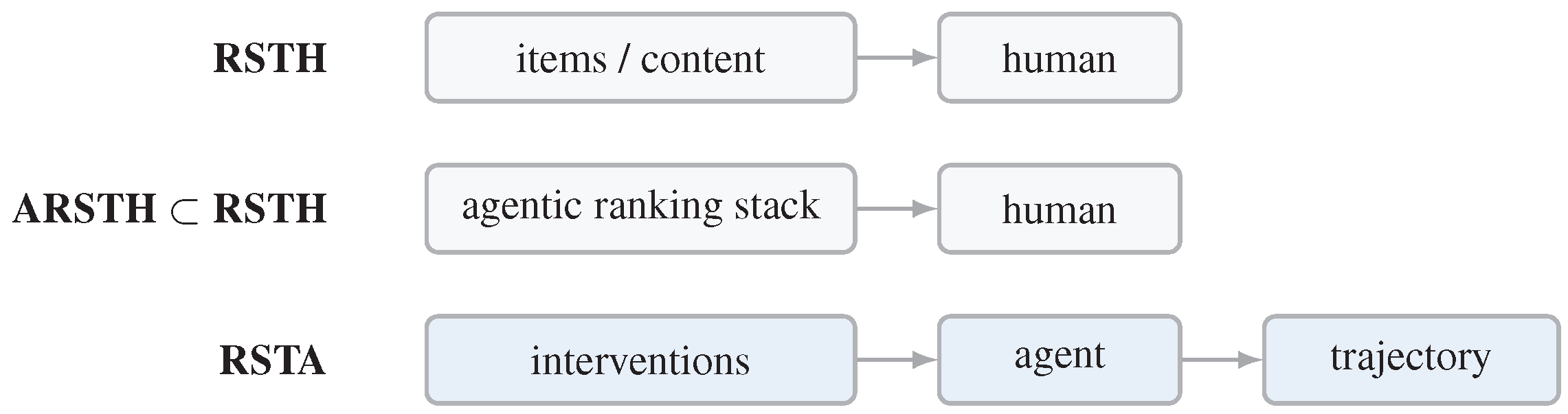

| Concept | Immediate recipient | Typical objects | Why it is not identical to RSTA |

|---|---|---|---|

| RSTH | human | items, content, ads | recipient and beneficiary typically coincide; ranking is exposed directly to a person |

| ARSTH | human | still human-facing recommendations | agentic modules improve a human-facing recommender system, but the final consumer remains human [14,15,16] |

| Planning or policy learning | agent | full action policies | broader umbrella; may omit explicit candidate-set semantics or ranking interfaces |

| Tool use or routing | agent | tools, models, documents | important RSTA subcases, but narrower than the full space of bundles, modes, and oversight choices [102,103,104,105,106] |

| Mixed initiative or shared autonomy | human and/or agent | control transfer | authority sharing matters, but the literature is not organized around ranking grounded intervention candidates for agent trajectories [37,75,76] |

| Learning to defer or delegation | model or human | ask, abstain, handoff | an important subset of recommendables inside RSTA, not the whole problem [39,107,108,109,110] |

Appendix C. Additional Formal Details and Derivation

Appendix D. Domain Case Studies for Service-to-Agent Recommendation

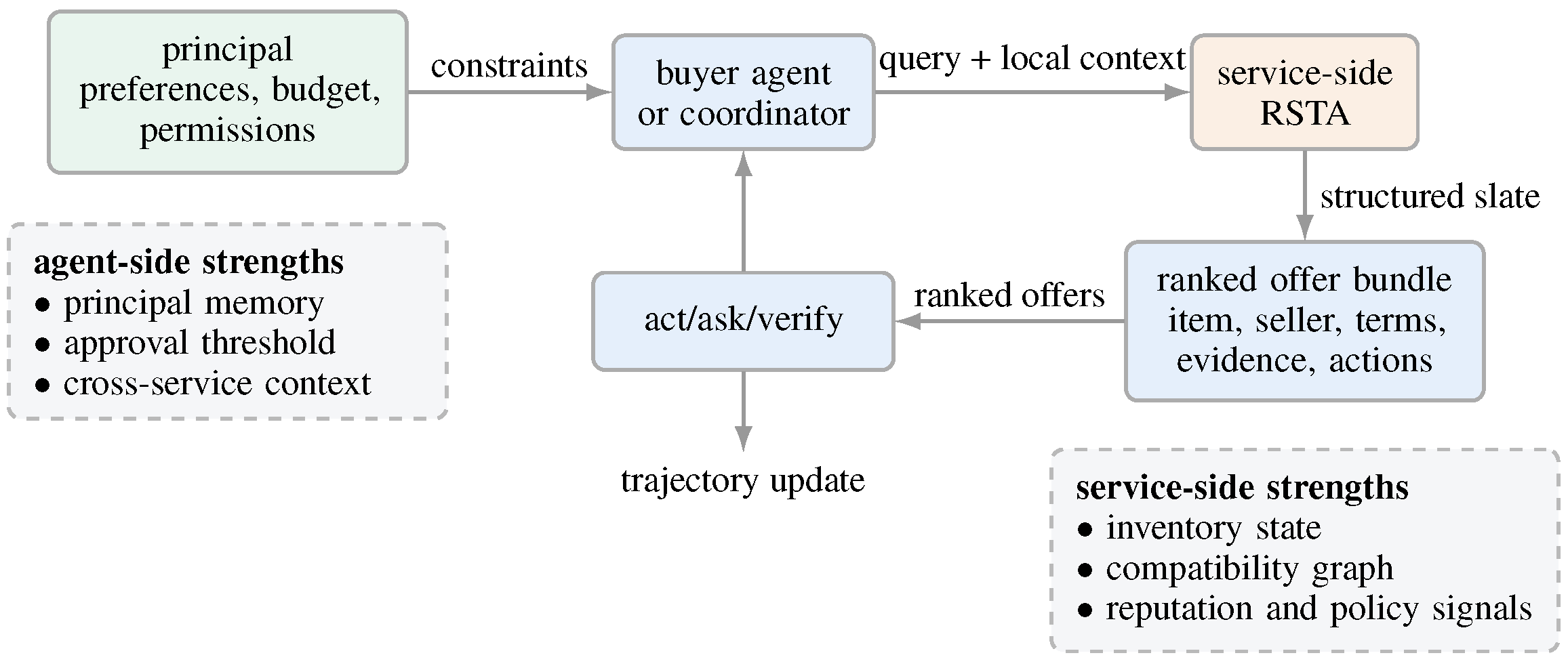

Appendix D.1. Shopping

| Layer | Typical contents | Why it matters | Where it is often known best |

|---|---|---|---|

| Object layer | products, sellers, substitutes, accessories, bundles | determines the feasible commercial options | service and cross-shop aggregators |

| Parameter layer | size, color, shipping, coupon, warranty, payment, return terms | changes utility even when the underlying product is fixed | service and principal jointly |

| Mode layer | shortlist, compare, ask, reserve, cart, buy, wait, verify | determines whether the agent should act or seek more evidence | principal and policy layer |

| Delegation layer | verifier, budget checker, comparison agent, human approval path | changes the reliability and governance of the trajectory | agent orchestration stack |

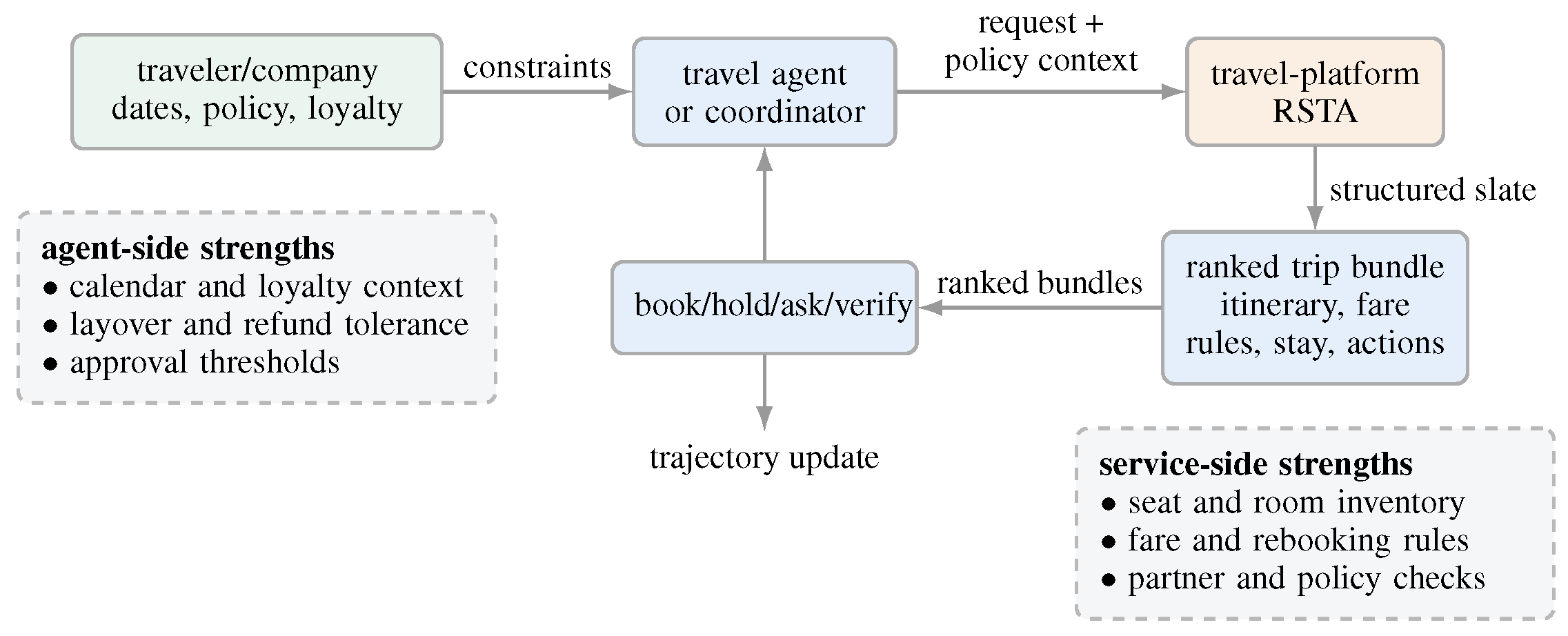

Appendix D.2. Travel

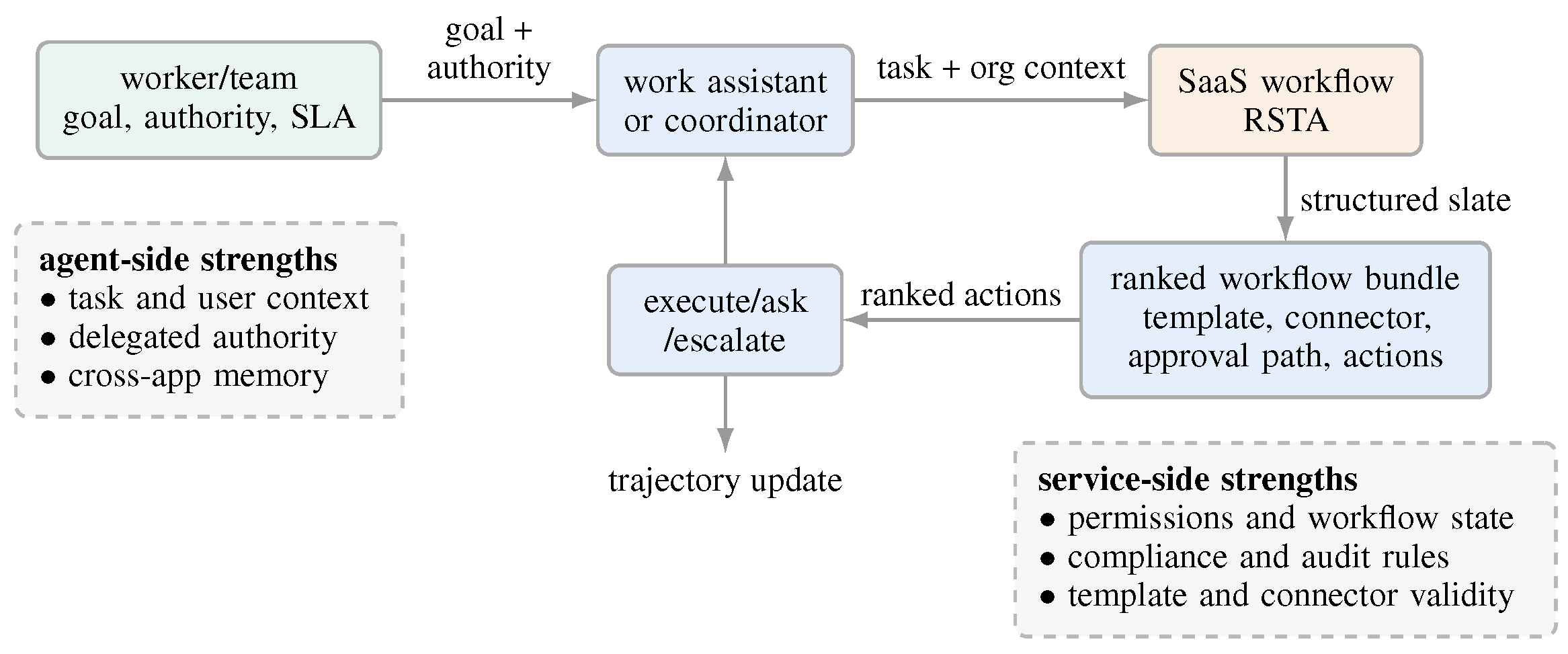

Appendix D.3. Enterprise SaaS

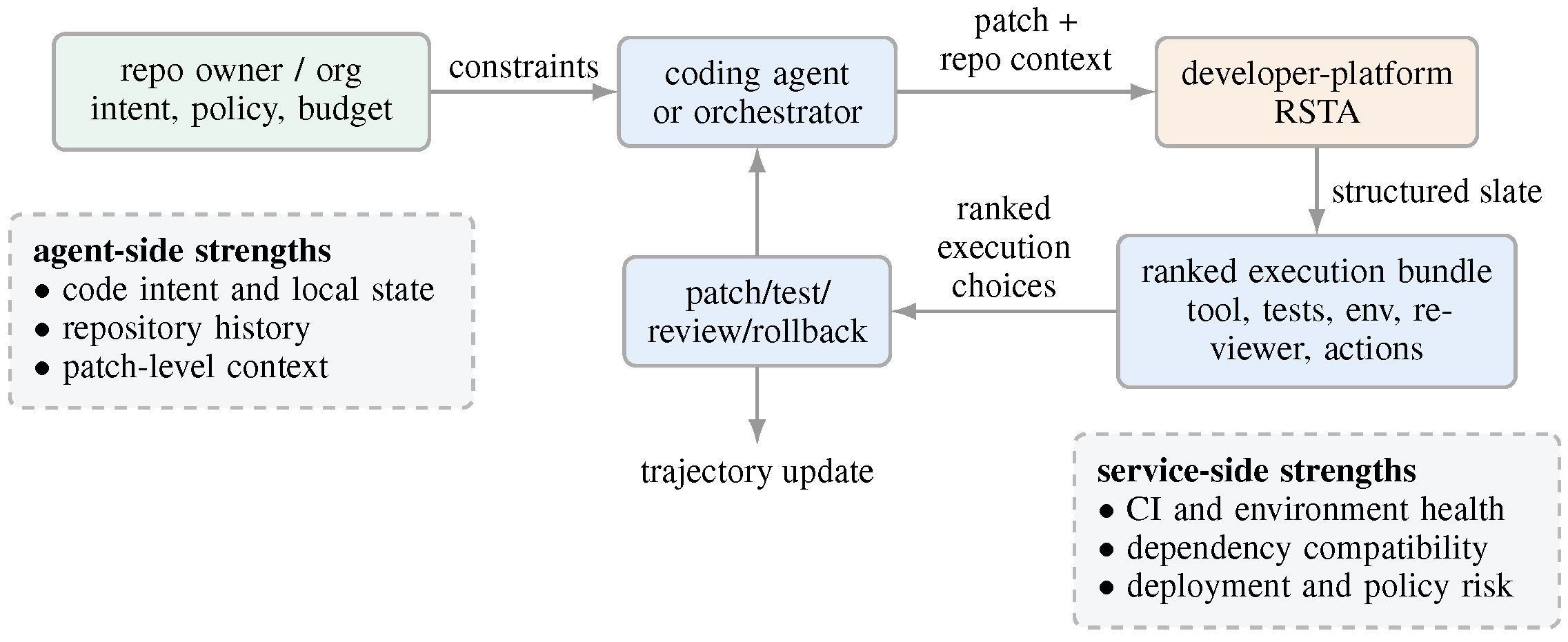

Appendix D.4. Developer platforms

| Domain | What the service can rank for the agent | Important service-private or platform signals | Principal-side constraints | Representative resources |

|---|---|---|---|---|

| Shopping | products, sellers, bundles, coupons, shipping windows, return terms, compatibility checks, buy or reserve actions | inventory volatility, seller reliability, counterfeit risk, compatibility graphs, fulfillment likelihood | budget, brand exclusions, urgency, risk tolerance, approval threshold | WebShop, DeepShop, WebMall, AgenticShop [21,115,116,117] |

| Travel | itineraries, fare families, baggage bundles, hotel combinations, refund options, booking actions | seat availability, fare rules, rebooking frictions, partner inventory, corporate policy checks | dates, layover tolerance, loyalty preferences, travel policy, refund sensitivity | general web-agent environments provide partial travel analogues, especially for booking and itinerary-style web interaction [22,118] |

| Enterprise SaaS | workflow fragments, approval paths, document templates, connectors, escalation options | permission structure, compliance rules, org workflow state, template validity, connector health | delegated authority, SLA targets, audit requirements, team policy | WorkArena, WorkArena++, AssistantBench, and AppWorld provide close enterprise-workflow analogues [23,24,25,119] |

| Developer platforms | tools, test bundles, deployment targets, rollback actions, reviewer assignments, verifier workflows | CI state, dependency compatibility, environment health, repo policy, deployment risk | reliability threshold, code ownership, review rules, budget, sandbox policy | SWE-bench, SWE-agent, and OSWorld are the closest direct resources; AppWorld is a useful workflow analogue [23,120,121,122] |

Appendix E. Evaluation Conversion Cookbook

| Family | Existing resources | Recommendables to expose | Good evaluation targets |

|---|---|---|---|

| Tool and function selection | ToolLLM, API-Bank, ToolTalk, ToolSandbox, BFCL, Gorilla [19,20,85,86,89,90] | tools, schemas, arguments, retries, verifiers, abstentions | call validity, task completion, cost, latency, safe abstention |

| Shopping and browsing | WebShop, Mind2Web, WebArena, DeepShop, WebMall, AgenticShop, BrowseComp [21,22,87,115,116,117,118] | products, sellers, sources, filters, page actions, ask/buy/stop options | success, attribute match, browsing efficiency, source fidelity |

| Enterprise web and apps | AppWorld, WorkArena, WorkArena++, AssistantBench, TheAgentCompany [23,24,25,93,119] | forms, pages, templates, APIs, workflow fragments, approvals, clarification actions | task completion, workflow correctness, approval efficiency, context grounding |

| Computer use and software engineering | OSWorld, SWE-bench, SWE-agent, GAIA [88,120,121,122] | files, commands, tests, patches, rollback paths, reviewers | execution correctness, test pass rate, collateral damage, cost |

| Protocolized AI-native systems | AI-NativeBench and similar white-box protocolized suites [49] | agentic spans, handoffs, protocol choices, exposed affordances, recovery paths | failure attribution, protocol adherence, latency, diagnosability |

| Multi-agent coordination | AgentBench, MultiAgentBench, Auto-SLURP, protocol-selection work [26,41,42,43,92,123,124,125,126] | delegatee, protocol, topology, verifier, aggregation strategy | team success, handoff quality, latency, message cost, robustness |

| Environment family | Concrete scale evidence | Primitive verbs may still look small | Why recommendation remains central |

|---|---|---|---|

| Tool use | ToolLLM built from 16,464 APIs [19] | call, stop, retry | choose tool, schema, arguments, order, verifier |

| Shopping | WebShop contains 1.18M products [21] | search, click, buy | choose product, seller, bundle, shipping, ask/buy/wait |

| Web navigation | Mind2Web spans 2,350 tasks and 137 websites [22] | click, type, stop | choose element, source, query, continuation path |

| App ecosystems | AppWorld exposes 457 APIs across 9 apps [23] | call app action, edit, stop | choose app, API, parameters, plan structure |

| Enterprise workflows | WorkArena++ grows to 682 tasks; OSWorld has 369 tasks [25,122] | browse, edit, run, stop | choose forms, files, tests, approvals, escalation steps |

Appendix F. Infrastructure and Quantitative Signals for Agent-Facing Services

| Signal | What the source says | Why it matters for RSTA |

|---|---|---|

| MCP (Anthropic) | an open standard for connecting AI systems with external tools and data sources [29] | services are increasingly being exposed as machine-consumable capability surfaces rather than only human UIs |

| A2A (Google) | an open protocol for agents to communicate, exchange information, and coordinate actions across enterprise platforms [30] | recommendation increasingly happens inside a multi-service, multi-agent ecosystem rather than inside one app |

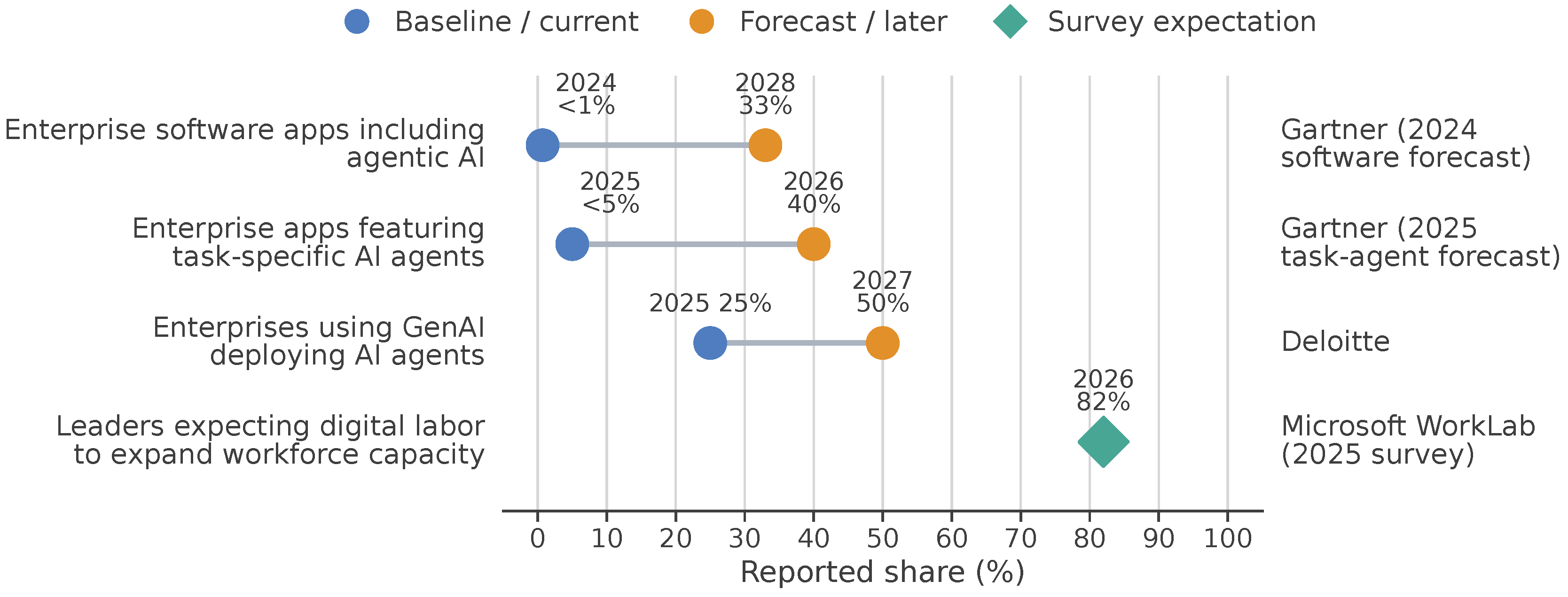

| Enterprise software forecasts (Gartner) | 33% of enterprise software applications will include agentic AI by 2028, up from less than 1% in 2024 [32] | agent-facing capability surfaces are likely to become part of ordinary software, not just experimental demos |

| Task-specific agents (Gartner) | 40% of enterprise applications will feature task-specific AI agents by the end of 2026, up from less than 5% in 2025 [33] | agent-targeted interaction is becoming a mainstream application-layer concern |

| Digital labor and agentic organizations | leaders expect digital labor to expand workforce capacity, and firms are moving toward humans and AI agents working together at scale [34,35,36] | once interaction is increasingly mediated by agents, services need ways to rank structured actions for machine consumers |

Appendix G. Expanded Research Agenda and Open Questions

| Subdirection | Core questions | Near-term tasks | Longer-term value |

|---|---|---|---|

| Candidate-set construction | How should typed intervention inventories be represented, exposed, and reconstructed? | expose the candidate set, log negatives, separate candidate generation from ranking | cumulative science and better off-policy evaluation |

| Composite ranking | How should recommender systems score bundles, workflow fragments, and structured offers? | adapt slate and bundle methods to agent traces | better control over long-horizon execution quality |

| Autonomy calibration | When should the agent act, ask, abstain, verify, or escalate? | joint ask/act/defer training and approval logging | safer delegated automation with higher usable autonomy |

| Preference and authority elicitation | Which missing preferences, permissions, or thresholds should be clarified before action? | active clarification policies and approval-threshold modeling | better alignment between delegated authority and actual user intent |

| Multi-agent orchestration | Which delegate, protocol, topology, or verifier should be chosen? | convert coordination traces from MultiAgentBench and Auto-SLURP into ranking problems | more reliable agent teams and lower communication waste |

| Service-to-agent interface design | What objects, evidence packets, and affordances should services expose? | evaluate agent-facing slates in shopping, travel, and enterprise apps | a new interface layer for the agent economy |

| Interface-robust evaluation | Do results survive benign rewrites of tool names, schemas, or protocol surfaces? | report invariance scores and run interface perturbations | environment-invariant science rather than interface-specific shortcutting |

| Tracing, provenance, and diagnostics | What should be logged so errors can be attributed to candidate construction, ranking, orchestration, or execution? | trace agentic spans, decision boundaries, evidence packets, and recovery events | debuggable, auditable, cumulative RSTA systems |

| Markets, incentives, and governance | How do exposure, manipulation, fairness, audit, and protocol gaming change in agent-mediated markets? | stress tests for service-side incentives and authority boundaries | principled market design for agent-facing ecosystems |
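The "invariance scores" named in the interface-robust evaluation row are not defined in this section. One possible reading, sketched here under stated assumptions, is the fraction of benign renamings of the tool surface under which a ranker's top-k set stays the same once names are mapped back. The function `invariance_score`, the `Ranker` type, and both toy rankers are hypothetical illustrations, not the authors' metric.

```python
from typing import Callable, Dict, List, Sequence

# A ranker maps the visible candidate names to an ordered slate (best first).
Ranker = Callable[[Sequence[str]], List[str]]


def invariance_score(
    ranker: Ranker,
    candidates: Sequence[str],
    renamings: Sequence[Dict[str, str]],
    k: int = 3,
) -> float:
    """Fraction of benign renamings under which the top-k set is unchanged.

    Hypothetical metric: each renaming maps an original name to a new
    surface name; ranked results are mapped back before comparison.
    """
    if not renamings:
        raise ValueError("need at least one renaming to test")
    baseline = set(ranker(candidates)[:k])
    preserved = 0
    for rename in renamings:
        inverse = {new: old for old, new in rename.items()}
        perturbed = [rename.get(name, name) for name in candidates]
        top_k = {inverse.get(name, name) for name in ranker(perturbed)[:k]}
        preserved += int(top_k == baseline)
    return preserved / len(renamings)


# Toy rankers (assumptions for illustration only).
def position_ranker(names: Sequence[str]) -> List[str]:
    """Ignores surface names entirely; keeps the given order."""
    return list(names)


def alphabetical_ranker(names: Sequence[str]) -> List[str]:
    """Depends only on surface names, so renaming can change the slate."""
    return sorted(names)


cands = ["search", "buy", "ask"]
renames = [{"search": "find"}, {"ask": "zz_clarify"}]
print(invariance_score(position_ranker, cands, renames, k=1))
print(invariance_score(alphabetical_ranker, cands, renames, k=1))
```

A ranker that shortcuts on tool names scores lower than one that does not, which is the kind of interface-specific behavior the row asks evaluations to surface. Comparing top-k sets rather than full orderings is a design choice of this sketch; a rank-correlation variant would be stricter.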

Appendix H. Failure Modes and Reporting Questions

| Failure mode | Description |

|---|---|

| Silent over-autonomy | the system ranks productive actions when it should have ranked ask, verify, or escalate first |

| Oversafe paralysis | abstention and escalation are over-ranked until delegation loses practical value |

| Preference hallucination | the agent acts as though a principal preference were known when it was not elicited or grounded |

| Candidate-set myopia | the recommender system performs well over a poor candidate inventory and repeatedly misses better trajectories |

| Authority inversion | technically feasible actions outrank normatively authorized ones |

| Verification neglect | productive actions systematically outrank tests, evidence checks, or policy review |

| Service-side manipulation | service objectives dominate principal utility when ranking directly to agents |

| Coordination collapse | multi-agent recommender systems over-decompose or under-verify, causing delay or failure |
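The first two failure modes in the table are mirror images, so they can be reported as a pair of error rates. The sketch below assumes each evaluated step carries a gold label saying whether oversight was actually needed; that label, the `LabeledEpisode` type, and the `OVERSIGHT` set (taken from the ask, abstain, verify, sandbox, and escalate modes listed in the reporting questions) are illustrative assumptions rather than definitions from the paper.

```python
from dataclasses import dataclass
from typing import Dict, Sequence

# Oversight-type recommendables; treating exactly these as oversight is an assumption.
OVERSIGHT = {"ask", "abstain", "verify", "sandbox", "escalate"}


@dataclass
class LabeledEpisode:
    top_action: str         # mode of the top-ranked recommendable
    oversight_needed: bool  # hypothetical gold label from annotation


def autonomy_error_rates(episodes: Sequence[LabeledEpisode]) -> Dict[str, float]:
    """Rates of silent over-autonomy and oversafe paralysis.

    Over-autonomy: oversight was needed but a productive action ranked first.
    Oversafe: no oversight was needed but an oversight action ranked first.
    """
    need = [e for e in episodes if e.oversight_needed]
    no_need = [e for e in episodes if not e.oversight_needed]
    over = sum(e.top_action not in OVERSIGHT for e in need)
    safe = sum(e.top_action in OVERSIGHT for e in no_need)
    return {
        "silent_over_autonomy": over / len(need) if need else 0.0,
        "oversafe_paralysis": safe / len(no_need) if no_need else 0.0,
    }


episodes = [
    LabeledEpisode("buy", True),      # should have asked first
    LabeledEpisode("ask", True),
    LabeledEpisode("verify", False),  # unnecessary check
    LabeledEpisode("buy", False),
    LabeledEpisode("cart", False),
    LabeledEpisode("buy", False),
]
print(autonomy_error_rates(episodes))
```

Reporting both rates together guards against a system that lowers one simply by raising the other.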

Appendix I. Minimal Artifact: An RSTA Evaluation Template

| Field | What to report |

|---|---|

| Immediate recipient | single acting agent, orchestration layer, or multi-agent system |

| Principal(s) and service stakeholder(s) | who benefits, who authorizes, who constrains, and who may have competing utility |

| State | task state, memory, permissions, budgets, latency bounds, and visible environment context |

| Candidate inventory | explicit or reconstructable interventions, including productive and oversight actions |

| Recommendable types | objects, parameters, modes, delegates, protocols, verifiers, approvals, abstentions |

| Service-private signals | compatibility, inventory, reliability, permissions, policy state, pricing, fraud or market signals |

| Ranking output | top-L slate, not only the final chosen action |

| Trajectory outcomes | task success, preference fit, cost, latency, safety, compliance, side effects, human cleanup |

| Failure attribution | whether errors arise from candidate generation, ranking, orchestration, or execution |

| Interface robustness | whether the result survives benign rewrites of schemas, tool names, or protocol surfaces |
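The template above can be carried as a structured record so that omitted fields are easy to detect. The following is a minimal sketch: the class name `RSTAEvaluationReport`, the field names, the field types, and the helper `missing_fields` are all assumptions made for illustration and mirror the table rows one-to-one.

```python
from dataclasses import dataclass, field, fields
from typing import Dict, List


@dataclass
class RSTAEvaluationReport:
    """One record per evaluated system; names and types are illustrative."""
    immediate_recipient: str = ""
    principals_and_stakeholders: List[str] = field(default_factory=list)
    state: Dict[str, str] = field(default_factory=dict)
    candidate_inventory: List[str] = field(default_factory=list)
    recommendable_types: List[str] = field(default_factory=list)
    service_private_signals: List[str] = field(default_factory=list)
    ranking_output: List[str] = field(default_factory=list)  # top-L slate
    trajectory_outcomes: Dict[str, float] = field(default_factory=dict)
    failure_attribution: str = ""
    interface_robustness: str = ""


def missing_fields(report: RSTAEvaluationReport) -> List[str]:
    """Names of template fields left empty, in template order."""
    return [f.name for f in fields(report) if not getattr(report, f.name)]


report = RSTAEvaluationReport(
    immediate_recipient="single acting agent",
    candidate_inventory=["buy", "ask", "verify"],
    ranking_output=["ask", "buy"],
    trajectory_outcomes={"task_success": 1.0},
)
print(missing_fields(report))
```

Free-text fields such as `failure_attribution` are kept as strings here; a real artifact might prefer enumerations so that reports are comparable across papers.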

- Who is the immediate recipient of the ranking?

- Who are the principal and service stakeholders, if any?

- What exactly are the recommendable objects, and how is the set of recommendables defined?

- Are ask, abstain, verify, sandbox, or escalate available as recommendables?

- Are candidate sets explicit or deterministically reconstructable?

- Are both task-centric and stakeholder-centric outcomes reported?

- If the system is multi-agent, what team state, protocol state, and handoff events are observed?

- Does the evaluation isolate ranking quality from candidate-generation quality?
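The last question, isolating ranking quality from candidate-generation quality, can be made concrete by reporting two numbers instead of one. The sketch below assumes each episode logs the exposed candidate set, the ranked slate, and a gold-best intervention; the `RankedEpisode` type, the gold label, and both metric functions are hypothetical and are not taken from the paper.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class RankedEpisode:
    candidates: List[str]  # candidate set exposed to the ranker
    slate: List[str]       # ranked output, best first
    best: str              # hypothetical gold-best intervention


def candidate_recall(episodes: Sequence[RankedEpisode]) -> float:
    """Share of episodes whose candidate set contains the gold-best item."""
    if not episodes:
        raise ValueError("no episodes to evaluate")
    return sum(e.best in e.candidates for e in episodes) / len(episodes)


def conditional_hit_at_k(episodes: Sequence[RankedEpisode], k: int) -> float:
    """Hit@k computed only on episodes where candidate generation succeeded."""
    covered = [e for e in episodes if e.best in e.candidates]
    if not covered:
        return 0.0
    return sum(e.best in e.slate[:k] for e in covered) / len(covered)


episodes = [
    RankedEpisode(["a", "b", "c"], ["a", "b", "c"], "a"),  # covered, ranked first
    RankedEpisode(["a", "b"], ["b", "a"], "a"),            # covered, ranked second
    RankedEpisode(["b", "c"], ["b", "c"], "a"),            # generation missed "a"
    RankedEpisode(["a", "c"], ["a", "c"], "a"),            # covered, ranked first
]
print(candidate_recall(episodes))
print(conditional_hit_at_k(episodes, k=1))
```

A low recall with a high conditional hit rate points to the candidate-set myopia failure mode, while the reverse points to the ranker itself.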

References

- Adomavicius, G.; Tuzhilin, A. Toward the next generation of recommender systems: A survey of the state-of-the-art and possible extensions. IEEE transactions on knowledge and data engineering 2005, 17, 734–749.

- Ricci, F.; Rokach, L.; Shapira, B. Recommender systems: Techniques, applications, and challenges. Recommender systems handbook 2021, 1–35.

- Koren, Y.; Bell, R.; Volinsky, C. Matrix factorization techniques for recommender systems. Computer 2009, 42, 30–37.

- Rendle, S.; Freudenthaler, C.; Gantner, Z.; Schmidt-Thieme, L. BPR: Bayesian personalized ranking from implicit feedback. arXiv 2012. arXiv:1205.2618.

- Hidasi, B.; Karatzoglou, A.; Baltrunas, L.; Tikk, D. Session-based recommendations with recurrent neural networks. arXiv 2015. arXiv:1511.06939.

- Quadrana, M.; Cremonesi, P.; Jannach, D. Sequence-aware recommender systems. ACM computing surveys (CSUR) 2018, 51, 1–36.

- Kang, W.C.; McAuley, J. Self-attentive sequential recommendation. In Proceedings of the 2018 IEEE international conference on data mining (ICDM), 2018; IEEE; pp. 197–206.

- Li, J.; Ren, P.; Chen, Z.; Ren, Z.; Lian, T.; Ma, J. Neural attentive session-based recommendation. In Proceedings of the 2017 ACM on Conference on Information and Knowledge Management, 2017; pp. 1419–1428.

- Li, L.; Chu, W.; Langford, J.; Schapire, R.E. A contextual-bandit approach to personalized news article recommendation. In Proceedings of the 19th international conference on World wide web, 2010; pp. 661–670.

- Christakopoulou, K.; Radlinski, F.; Hofmann, K. Towards conversational recommender systems. In Proceedings of the 22nd ACM SIGKDD international conference on knowledge discovery and data mining, 2016; pp. 815–824.

- Gao, C.; Lei, W.; He, X.; De Rijke, M.; Chua, T.S. Advances and challenges in conversational recommender systems: A survey. AI open 2021, 2, 100–126.

- Abdollahpouri, H.; Adomavicius, G.; Burke, R.; Guy, I.; Jannach, D.; Kamishima, T.; Krasnodebski, J.; Pizzato, L. Multistakeholder recommendation: Survey and research directions. User Modeling and User-Adapted Interaction 2020, 30, 127–158.

- Afsar, M.M.; Crump, T.; Far, B. Reinforcement learning based recommender systems: A survey. ACM Computing Surveys 2022, 55, 1–38.

- Huang, C.; Huang, H.; Yu, T.; Xie, K.; Wu, J.; Zhang, S.; Mcauley, J.; Jannach, D.; Yao, L. A survey of foundation model-powered recommender systems: From feature-based, generative to agentic paradigms. arXiv 2025. arXiv:2504.16420.

- Peng, Q.; Liu, H.; Huang, H.; Yang, Q.; Shao, M. A survey on llm-powered agents for recommender systems. arXiv 2025. arXiv:2502.10050.

- Zhang, Y.; Qiao, S.; Zhang, J.; Lin, T.H.; Gao, C.; Li, Y. A survey of large language model empowered agents for recommendation and search: Towards next-generation information retrieval. arXiv 2025. arXiv:2503.05659.

- Schick, T.; Dwivedi-Yu, J.; Dessì, R.; Raileanu, R.; Lomeli, M.; Hambro, E.; Zettlemoyer, L.; Cancedda, N.; Scialom, T. Toolformer: Language models can teach themselves to use tools. Advances in neural information processing systems 2023, 36, 68539–68551.

- Yao, S.; Zhao, J.; Yu, D.; Du, N.; Shafran, I.; Narasimhan, K.R.; Cao, Y. React: Synergizing reasoning and acting in language models. In Proceedings of the eleventh international conference on learning representations, 2022.

- Qin, Y.; Liang, S.; Ye, Y.; Zhu, K.; Yan, L.; Lu, Y.; Lin, Y.; Cong, X.; Tang, X.; Qian, B.; et al. Toolllm: Facilitating large language models to master 16000+ real-world apis. arXiv 2023. arXiv:2307.16789.

- Li, M.; Zhao, Y.; Yu, B.; Song, F.; Li, H.; Yu, H.; Li, Z.; Huang, F.; Li, Y. Api-bank: A comprehensive benchmark for tool-augmented llms. In Proceedings of the 2023 conference on empirical methods in natural language processing, 2023; pp. 3102–3116.

- Yao, S.; Chen, H.; Yang, J.; Narasimhan, K. Webshop: Towards scalable real-world web interaction with grounded language agents. Advances in Neural Information Processing Systems 2022, 35, 20744–20757.

- Deng, X.; Gu, Y.; Zheng, B.; Chen, S.; Stevens, S.; Wang, B.; Sun, H.; Su, Y. Mind2web: Towards a generalist agent for the web. Advances in Neural Information Processing Systems 2023, 36, 28091–28114.

- Trivedi, H.; Khot, T.; Hartmann, M.; Manku, R.; Dong, V.; Li, E.; Gupta, S.; Sabharwal, A.; Balasubramanian, N. Appworld: A controllable world of apps and people for benchmarking interactive coding agents. Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics 2024, Volume 1, 16022–16076.

- Drouin, A.; Gasse, M.; Caccia, M.; Laradji, I.H.; Del Verme, M.; Marty, T.; Boisvert, L.; Thakkar, M.; Cappart, Q.; Vazquez, D.; et al. Workarena: How capable are web agents at solving common knowledge work tasks? arXiv 2024. arXiv:2403.07718.

- Boisvert, L.; Thakkar, M.; Gasse, M.; Caccia, M.; De Chezelles, T.L.; Cappart, Q.; Chapados, N.; Lacoste, A.; Drouin, A. Workarena++: Towards compositional planning and reasoning-based common knowledge work tasks. Advances in Neural Information Processing Systems 2024, 37, 5996–6051.

- Zhu, K.; Du, H.; Hong, Z.; Yang, X.; Guo, S.; Wang, D.Z.; Wang, Z.; Qian, C.; Tang, R.; Ji, H.; et al. Multiagentbench: Evaluating the collaboration and competition of llm agents. Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics 2025, Volume 1, 8580–8622.

- OfficeChai Team. Will Need To Re-engineer Tools So That They Can Keep Up With The Speeds Of AI Agents: Google’s Jeff Dean. 29 March 2026. Available online: https://officechai.com/ai/will-need-to-re-engineer-tools-so-that-they-can-keep-up-with-the-speeds-of-ai-agents-googles-jeff-dean/ (accessed on 2026-03-31).

- Tan, A. Advancing to the next frontier of AI. 29 March 2026. Available online: https://www.computerweekly.com/news/366640859/Advancing-to-the-next-frontier-of-AI (accessed on 2026-03-31).

- Anthropic. Introducing the Model Context Protocol. 2024. Available online: https://www.anthropic.com/news/model-context-protocol (accessed on 2026-03-30).

- Google Developers Blog. Announcing the Agent2Agent Protocol (A2A). 2025. Published April 9, 2025. Available online: https://developers.googleblog.com/en/a2a-a-new-era-of-agent-interoperability/ (accessed on 2026-03-30).

- Ehtesham, A.; Singh, A.; Gupta, G.K.; Kumar, S. A survey of agent interoperability protocols: Model context protocol (mcp), agent communication protocol (acp), agent-to-agent protocol (a2a), and agent network protocol (anp). arXiv 2025. arXiv:2505.02279.

- Gartner. Gartner IT Symposium/Xpo 2024 Barcelona: Day 1 Highlights. 4 November 2024. Available online: https://www.gartner.com/en/newsroom/press-releases/2024-11-04-gartner-it-symposium-xpo-2024-barcelona-day-1-highlights (accessed on 2026-03-30).

- Gartner. Gartner Predicts 40% of Enterprise Apps Will Feature Task-Specific AI Agents by 2026, Up from Less Than 5% in 2025. August 26, 2025; updated September 5, 2025. Available online: https://www.gartner.com/en/newsroom/press-releases/2025-08-26-gartner-predicts-40-percent-of-enterprise-apps-will-feature-task-specific-ai-agents-by-2026-up-from-less-than-5-percent-in-2025.

- Deloitte. Deloitte Global’s 2025 Predictions Report: Generative AI: Paving the Way for a Transformative Future in Technology, Media, and Telecommunications. 2024. Available online: https://www.deloitte.com/global/en/about/press-room/deloitte-globals-2025-predictions-report.html (accessed on 2026-03-30).

- Microsoft WorkLab. 2025: The Year the Frontier Firm Is Born. 2025. Published April 23, 2025. Available online: https://www.microsoft.com/en-us/worklab/work-trend-index/2025-the-year-the-frontier-firm-is-born (accessed on 2026-03-30).

- Sukharevsky, A.; Krivkovich, A.; Gast, A.; Storozhev, A.; Maor, D.; Mahadevan, D.; Hämäläinen, L.; Durth, S. The Agentic Organization: Contours of the Next Paradigm for the AI Era. 2025. Available online: https://www.mckinsey.com/capabilities/people-and-organizational-performance/our-insights/the-agentic-organization-contours-of-the-next-paradigm-for-the-ai-era (accessed on 2026-03-30).

- Javdani, S.; Srinivasa, S.S.; Bagnell, J.A. Shared autonomy via hindsight optimization. Robotics science and systems: online proceedings 2015, 2015, 10–15607.

- Scerri, P.; Pynadath, D.V.; Tambe, M. Towards adjustable autonomy for the real world. Journal of Artificial Intelligence Research 2002, 17, 171–228.

- Tomašev, N.; Franklin, M.; Osindero, S. Intelligent AI delegation. arXiv 2026. arXiv:2602.11865.

- Levine, S.; Kumar, A.; Tucker, G.; Fu, J. Offline reinforcement learning: Tutorial, review, and perspectives on open problems. arXiv 2020. arXiv:2005.01643.

- Wu, Q.; Bansal, G.; Zhang, J.; Wu, Y.; Li, B.; Zhu, E.; Jiang, L.; Zhang, X.; Zhang, S.; Liu, J.; et al. Autogen: Enabling next-gen LLM applications via multi-agent conversations. In Proceedings of the First conference on language modeling, 2024.

- Li, G.; Hammoud, H.; Itani, H.; Khizbullin, D.; Ghanem, B. Camel: Communicative agents for "mind" exploration of large language model society. Advances in neural information processing systems 2023, 36, 51991–52008.

- Hong, S.; Zhuge, M.; Chen, J.; Zheng, X.; Cheng, Y.; Wang, J.; Zhang, C.; Wang, Z.; Yau, S.K.S.; Lin, Z.; et al. MetaGPT: Meta programming for a multi-agent collaborative framework. In Proceedings of the twelfth international conference on learning representations, 2023.

- Du, Y.; Li, S.; Torralba, A.; Tenenbaum, J.B.; Mordatch, I. Improving factuality and reasoning in language models through multiagent debate. In Proceedings of the Forty-first international conference on machine learning, 2024.

- Du, H.; Su, J.; Li, J.; Ding, L.; Yang, Y.; Han, P.; Tang, X.; Zhu, K.; You, J. Which LLM Multi-Agent Protocol to Choose? arXiv 2025. arXiv:2510.17149.

- Yao, S.; Shinn, N.; Razavi, P.; Narasimhan, K. τ-bench: A Benchmark for Tool-Agent-User Interaction in Real-World Domains. arXiv 2024. arXiv:2406.12045.

- Debenedetti, E.; Zhang, J.; Balunovic, M.; Beurer-Kellner, L.; Fischer, M.; Tramèr, F. Agentdojo: A dynamic environment to evaluate prompt injection attacks and defenses for llm agents. Advances in Neural Information Processing Systems 2024, 37, 82895–82920.

- Gu, W.; Li, C.; Yu, Z.; Sun, M.; Yang, Z.; Wang, W.; Jia, H.; Zhang, S.; Ye, W. What Do Agents Learn from Trajectory-SFT: Semantics or Interfaces? arXiv 2026. arXiv:2602.01611.

- Wang, Z.; Yu, G.; Lyu, M.R. AI-NativeBench: An Open-Source White-Box Agentic Benchmark Suite for AI-Native Systems. arXiv 2026. arXiv:2601.09393.

- He, C.; Zhou, X.; Wang, D.; Xu, H.; Liu, W.; Miao, C. Harness Engineering for Language Agents: The Harness Layer as Control, Agency, and Runtime. Preprints.org 2026.

- Rafailov, R.; Sharma, A.; Mitchell, E.; Manning, C.D.; Ermon, S.; Finn, C. Direct preference optimization: Your language model is secretly a reward model. Advances in neural information processing systems 2023, 36, 53728–53741.

- Wu, J.; Xie, Y.; Yang, Z.; Wu, J.; Gao, J.; Ding, B.; Wang, X.; He, X. β-DPO: Direct Preference Optimization with Dynamic β. Advances in Neural Information Processing Systems 2024, 37, 129944–129966.

- Meng, Y.; Xia, M.; Chen, D. Simpo: Simple preference optimization with a reference-free reward. Advances in Neural Information Processing Systems 2024, 37, 124198–124235.

- He, C.; Zhou, X.; Wang, D.; Xu, H.; Liu, W.; Miao, C. SP2DPO: An LLM-assisted Semantic Per-Pair DPO Generalization. arXiv 2026. arXiv:2601.22385.

- Liu, S.; Fang, W.; Hu, Z.; Zhang, J.; Zhou, Y.; Zhang, K.; Tu, R.; Lin, T.E.; Huang, F.; Song, M.; et al. A survey of direct preference optimization. arXiv 2025. arXiv:2503.11701.

- Schulman, J.; Wolski, F.; Dhariwal, P.; Radford, A.; Klimov, O. Proximal policy optimization algorithms. arXiv 2017. arXiv:1707.06347.

- Shao, Z.; Wang, P.; Zhu, Q.; Xu, R.; Song, J.; Bi, X.; Zhang, H.; Zhang, M.; Li, Y.; Wu, Y.; et al. Deepseekmath: Pushing the limits of mathematical reasoning in open language models. arXiv 2024. arXiv:2402.03300.

- Liu, Z.; Chen, C.; Li, W.; Qi, P.; Pang, T.; Du, C.; Lee, W.S.; Lin, M. Understanding r1-zero-like training: A critical perspective. arXiv 2025. arXiv:2503.20783.

- Zhang, S.; Yao, L.; Sun, A.; Tay, Y. Deep learning based recommender system: A survey and new perspectives. ACM computing surveys (CSUR) 2019, 52, 1–38.

- Burke, R. Hybrid recommender systems: Survey and experiments. User modeling and user-adapted interaction 2002, 12, 331–370.

- He, C.; Liu, Y.; Guo, Q.; Miao, C. Multi-scale quasi-RNN for next item recommendation. arXiv 2019. arXiv:1902.09849.

- Sun, F.; Liu, J.; Wu, J.; Pei, C.; Lin, X.; Ou, W.; Jiang, P. BERT4Rec: Sequential recommendation with bidirectional encoder representations from transformer. In Proceedings of the 28th ACM international conference on information and knowledge management, 2019; pp. 1441–1450.

- He, C. Neural inductive biases for sequential recommendation. PhD thesis, Nanyang Technological University, 2025.

- Jannach, D.; Manzoor, A.; Cai, W.; Chen, L. A survey on conversational recommender systems. ACM Computing Surveys (CSUR) 2021, 54, 1–36.

- Swaminathan, A.; Joachims, T. The self-normalized estimator for counterfactual learning. Advances in neural information processing systems 2015, 28.

- Schnabel, T.; Swaminathan, A.; Singh, A.; Chandak, N.; Joachims, T. Recommendations as treatments: Debiasing learning and evaluation. In Proceedings of the international conference on machine learning. PMLR, 2016; pp. 1670–1679.

- Joachims, T.; Swaminathan, A.; Schnabel, T. Unbiased learning-to-rank with biased feedback. In Proceedings of the tenth ACM international conference on web search and data mining, 2017; pp. 781–789.

- Ie, E.; Jain, V.; Wang, J.; Narvekar, S.; Agarwal, R.; Wu, R.; Cheng, H.T.; Chandra, T.; Boutilier, C. SlateQ: A Tractable Decomposition for Reinforcement Learning with Recommendation Sets. Proceedings of the IJCAI 2019, Vol. 19, 2592–2599.

- Jiang, N.; Li, L. Doubly robust off-policy value evaluation for reinforcement learning. In Proceedings of the International conference on machine learning. PMLR, 2016; pp. 652–661.

- Wang, W.; Zhang, Y.; Li, H.; Wu, P.; Feng, F.; He, X. Causal recommendation: Progresses and future directions. In Proceedings of the 46th international ACM SIGIR conference on research and development in information retrieval, 2023; pp. 3432–3435.

- Maes, P. Agents that reduce work and information overload. Communications of the ACM 1994, 37, 30–40.

- Lashkari, Y.; Metral, M.; Maes, P. Collaborative interface agents. Readings in agents 1997, 111–116.

- Schiaffino, S.; Amandi, A. User–interface agent interaction: personalization issues. International Journal of Human-Computer Studies 2004, 60, 129–148.

- Armentano, M.; Godoy, D.; Amandi, A. Personal assistants: Direct manipulation vs. mixed initiative interfaces. International Journal of Human-Computer Studies 2006, 64, 27–35.

- Horvitz, E. Principles of mixed-initiative user interfaces. In Proceedings of the SIGCHI conference on Human Factors in Computing Systems, 1999; pp. 159–166.

- Allen, J.E.; Guinn, C.I.; Horvitz, E. Mixed-initiative interaction. IEEE Intelligent Systems and their Applications 1999, 14, 14–23.

- Guttman, R.H.; Moukas, A.G.; Maes, P. Agent-mediated electronic commerce: A survey. The Knowledge Engineering Review 1998, 13, 147–159.

- Maes, P.; Guttman, R.H.; Moukas, A.G. Agents that buy and sell. Communications of the ACM 1999, 42, 81–ff.

- Kephart, J.O.; Hanson, J.E.; Greenwald, A.R. Dynamic pricing by software agents. Computer Networks 2000, 32, 731–752.

- Kephart, J.O.; Greenwald, A.R. Shopbot economics. Autonomous Agents and Multi-Agent Systems 2002, 5, 255–287.

- Laffont, J.J.; Martimort, D. The theory of incentives: the principal-agent model; Princeton university press, 2002. [Google Scholar]

- Shinn, N.; Cassano, F.; Gopinath, A.; Narasimhan, K.; Yao, S. Reflexion: Language agents with verbal reinforcement learning. Advances in Neural Information Processing Systems 2023, 36, 8634–8652.

- Shim, J.; Seo, G.; Lim, C.; Jo, Y. Tooldial: Multi-turn dialogue generation method for tool-augmented language models. arXiv:2503.00564.

- Gou, B.; Huang, Z.; Ning, Y.; Gu, Y.; Lin, M.; Qi, W.; Kopanev, A.; Yu, B.; Gutiérrez, B.J.; Shu, Y.; et al. Mind2web 2: Evaluating agentic search with agent-as-a-judge. arXiv:2506.21506.

- Patil, S.G.; Zhang, T.; Wang, X.; Gonzalez, J.E. Gorilla: Large language model connected with massive apis. Advances in Neural Information Processing Systems 2024, 37, 126544–126565.

- Farn, N.; Shin, R. Tooltalk: Evaluating tool-usage in a conversational setting. arXiv 2023, arXiv:2311.10775.

- Wei, J.; Sun, Z.; Papay, S.; McKinney, S.; Han, J.; Fulford, I.; Chung, H.W.; Passos, A.T.; Fedus, W.; Glaese, A. Browsecomp: A simple yet challenging benchmark for browsing agents. arXiv:2504.12516.

- Mialon, G.; Fourrier, C.; Wolf, T.; LeCun, Y.; Scialom, T. Gaia: a benchmark for general ai assistants. In Proceedings of the Twelfth International Conference on Learning Representations, 2023.

- Patil, S.G.; Mao, H.; Yan, F.; Ji, C.C.J.; Suresh, V.; Stoica, I.; Gonzalez, J.E. The berkeley function calling leaderboard (bfcl): From tool use to agentic evaluation of large language models. In Proceedings of the Forty-second International Conference on Machine Learning, 2025.

- Lu, J.; Holleis, T.; Zhang, Y.; Aumayer, B.; Nan, F.; Bai, H.; Ma, S.; Ma, S.; Li, M.; Yin, G.; et al. Toolsandbox: A stateful, conversational, interactive evaluation benchmark for llm tool use capabilities. In Findings of the Association for Computational Linguistics: NAACL 2025, 2025; pp. 1160–1183.

- Xu, Y.; Chen, Q.; Ma, Z.; Liu, D.; Wang, W.; Wang, X.; Xiong, L.; Wang, W. Toward Personalized LLM-Powered Agents: Foundations, Evaluation, and Future Directions. arXiv 2026, arXiv:2602.22680.

- Liu, X.; Yu, H.; Zhang, H.; Xu, Y.; Lei, X.; Lai, H.; Gu, Y.; Ding, H.; Men, K.; Yang, K.; et al. Agentbench: Evaluating llms as agents. arXiv 2023, arXiv:2308.03688.

- Xu, F.F.; Song, Y.; Li, B.; Tang, Y.; Jain, K.; Bao, M.; Wang, Z.Z.; Zhou, X.; Guo, Z.; Cao, M.; et al. Theagentcompany: benchmarking llm agents on consequential real world tasks. arXiv 2024, arXiv:2412.14161.

- Yehudai, A.; Eden, L.; Li, A.; Uziel, G.; Zhao, Y.; Bar-Haim, R.; Cohan, A.; Shmueli-Scheuer, M. Survey on evaluation of llm-based agents. arXiv:2503.16416.

- Mohammadi, M.; Li, Y.; Lo, J.; Yip, W. Evaluation and benchmarking of llm agents: A survey. In Proceedings of the 31st ACM SIGKDD Conference on Knowledge Discovery and Data Mining V.2, 2025; pp. 6129–6139.

- He, C.; Zhou, X.; Wang, D.; Xu, H.; Liu, W.; Miao, C. OpenClaw as Language Infrastructure: A Case-Centered Survey of a Public Agent Ecosystem in the Wild. Preprints.org 2026.

- He, C.; Zhou, X.; Wang, D.; Xu, H.; Liu, W.; Miao, C. The AutoResearch Moment: From Experimenter to Research Director. Preprints.org 2026.

- Singla, A.; Sukharevsky, A.; Hall, B.; Yee, L.; Chui, M. The State of AI in 2025: Agents, Innovation, and Transformation. 2025. Available online: https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai (accessed on 2026-03-30).

- Stein, M. How are AI agents used? Evidence from 177,000 MCP tools. arXiv 2026, arXiv:2603.23802.

- He, C.; Zhou, X.; Wang, D.; Xu, H.; Liu, W.; Miao, C. The Synthetic Media Exchange: When Lineage Becomes Currency. 2026.

- He, C.; Zhou, X.; Wang, D.; Xu, H.; Liu, W.; Miao, C. From Prompts to Portfolios: AI Agents as Agentic Multimedia Firms. 2026.

- Lewis, P.; Perez, E.; Piktus, A.; Petroni, F.; Karpukhin, V.; Goyal, N.; Küttler, H.; Lewis, M.; Yih, W.t.; Rocktäschel, T.; et al. Retrieval-augmented generation for knowledge-intensive nlp tasks. Advances in Neural Information Processing Systems 2020, 33, 9459–9474.

- Chen, L.; Zaharia, M.; Zou, J. Frugalgpt: How to use large language models while reducing cost and improving performance. arXiv 2023, arXiv:2305.05176.

- Ong, I.; Almahairi, A.; Wu, V.; Chiang, W.L.; Wu, T.; Gonzalez, J.E.; Kadous, M.W.; Stoica, I. Routellm: Learning to route llms with preference data. arXiv 2024, arXiv:2406.18665.

- Varangot-Reille, C.; Bouvard, C.; Gourru, A.; Ciancone, M.; Schaeffer, M.; Jacquenet, F. Doing More with Less: A Survey on Routing Strategies for Resource Optimisation in Large Language Model-Based Systems. arXiv:2502.00409.

- Zheng, H.; Xu, H.; Lin, Y.; Fan, S.; Chen, L.; Yu, K. DiSRouter: Distributed Self-Routing for LLM Selections. arXiv:2510.19208.

- Mozannar, H.; Sontag, D. Consistent estimators for learning to defer to an expert. In Proceedings of the International Conference on Machine Learning. PMLR, 2020; pp. 7076–7087.

- Leitão, D.; Saleiro, P.; Figueiredo, M.A.; Bizarro, P. Human-AI collaboration in decision-making: beyond learning to defer. arXiv 2022, arXiv:2206.13202.

- Spitzer, P.; Holstein, J.; Hemmer, P.; Vössing, M.; Kühl, N.; Martin, D.; Satzger, G. Human delegation behavior in human-AI collaboration: The effect of contextual information. Proceedings of the ACM on Human-Computer Interaction 2025, 9, 1–28.

- Gu, W.; Li, M.L.; Zhu, S. Who Should Do What? Adaptive Delegation in Human-AI Collaboration. In Proceedings of the NeurIPS 2025 Workshop MLxOR: Mathematical Foundations and Operational Integration of Machine Learning for Uncertainty-Aware Decision-Making, 2025.

- Biyik, E.; Yao, F.; Chow, Y.; Haig, A.; Hsu, C.w.; Ghavamzadeh, M.; Boutilier, C. Preference elicitation with soft attributes in interactive recommendation. arXiv 2023, arXiv:2311.02085.

- Muldrew, W.; Hayes, P.; Zhang, M.; Barber, D. Active preference learning for large language models. arXiv 2024, arXiv:2402.08114.

- Amershi, S.; Weld, D.; Vorvoreanu, M.; Fourney, A.; Nushi, B.; Collisson, P.; Suh, J.; Iqbal, S.; Bennett, P.N.; Inkpen, K.; et al. Guidelines for human-AI interaction. In Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems, 2019; pp. 1–13.

- Kraus, M.; Wagner, N.; Callejas, Z.; Minker, W. The role of trust in proactive conversational assistants. IEEE Access 2021, 9, 112821–112836.

- Lyu, Y.; Zhang, X.; Yan, L.; de Rijke, M.; Ren, Z.; Chen, X. Deepshop: A benchmark for deep research shopping agents. arXiv:2506.02839.

- Peeters, R.; Steiner, A.; Schwarz, L.; Yuya Caspary, J.; Bizer, C. WebMall–A Multi-Shop Benchmark for Evaluating Web Agents. arXiv e-prints 2025, pp.

- Kim, S.; Heo, R.; Seo, Y.; Yeo, J.; Lee, D. AgenticShop: Benchmarking Agentic Product Curation for Personalized Web Shopping. arXiv 2026, arXiv:2602.12315.

- Zhou, S.; Xu, F.F.; Zhu, H.; Zhou, X.; Lo, R.; Sridhar, A.; Cheng, X.; Ou, T.; Bisk, Y.; Fried, D.; et al. Webarena: A realistic web environment for building autonomous agents. arXiv 2023, arXiv:2307.13854.

- Yoran, O.; Amouyal, S.J.; Malaviya, C.; Bogin, B.; Press, O.; Berant, J. Assistantbench: Can web agents solve realistic and time-consuming tasks? In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, 2024; pp. 8938–8968.

- Jimenez, C.E.; Yang, J.; Wettig, A.; Yao, S.; Pei, K.; Press, O.; Narasimhan, K. Swe-bench: Can language models resolve real-world github issues? arXiv 2023, arXiv:2310.06770.

- Yang, J.; Jimenez, C.E.; Wettig, A.; Lieret, K.; Yao, S.; Narasimhan, K.; Press, O. Swe-agent: Agent-computer interfaces enable automated software engineering. Advances in Neural Information Processing Systems 2024, 37, 50528–50652.

- Xie, T.; Zhang, D.; Chen, J.; Li, X.; Zhao, S.; Cao, R.; Hua, T.J.; Cheng, Z.; Shin, D.; Lei, F.; et al. Osworld: Benchmarking multimodal agents for open-ended tasks in real computer environments. Advances in Neural Information Processing Systems 2024, 37, 52040–52094.

- Cemri, M.; Pan, M.Z.; Yang, S.; Agrawal, L.A.; Chopra, B.; Tiwari, R.; Keutzer, K.; Parameswaran, A.; Klein, D.; Ramchandran, K.; et al. Why do multi-agent llm systems fail? arXiv:2503.13657.

- Zhou, H.; Wan, X.; Sun, R.; Palangi, H.; Iqbal, S.; Vulić, I.; Korhonen, A.; Arık, S.Ö. Multi-agent design: Optimizing agents with better prompts and topologies. arXiv:2502.02533.

- Shen, L.; Shen, X. Auto-SLURP: A Benchmark Dataset for Evaluating Multi-Agent Frameworks in Smart Personal Assistant. arXiv:2504.18373.

- Zhang, H.; Feng, T.; You, J. Router-r1: Teaching llms multi-round routing and aggregation via reinforcement learning. In Proceedings of the Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.

- Allouah, A.; Besbes, O.; Figueroa, J.D.; Kanoria, Y.; Kumar, A. What Is Your AI Agent Buying? Evaluation, Biases, Model Dependence, & Emerging Implications for Agentic E-Commerce. arXiv:2508.02630.

- Xiu, Z.; Sun, D.Q.; Cheng, K.; Patel, M.; Zhang, Y.; Lu, J.; Attia, O.; Vemulapalli, R.; Tuzel, O.; Cao, M.; et al. ASTRA-bench: Evaluating Tool-Use Agent Reasoning and Action Planning with Personal User Context. arXiv 2026, arXiv:2603.01357.

- He, C.; Zhou, X.; Xu, H.; Liu, W.; Miao, C.; Wang, D. Human-AI productivity claims should be reported as time-to-acceptance under explicit acceptance tests. 2026.

| Negative example | Why it is not RSTA | What would make it RSTA |
|---|---|---|
| A movie or news recommender that uses an LLM to write better explanations for a human | The final recipient is still a person, and the ranking allocates human attention rather than authorized agency | The ranking would need to be consumed by an acting agent whose trajectory changes as a result |
| A planner over primitive UI actions with no explicit or reconstructable intervention inventory | This is just planning over low-level actions; there is no state-conditioned ranking interface over candidate interventions | Expose grounded candidates such as workflow fragments, approval paths, verifiers, or delegates and evaluate their trajectory effects |
| A backend model router that silently swaps models for cost reasons | This is only routing unless an acting agent explicitly consumes a ranked slate of alternatives tied to trajectory utility | Make the alternatives machine-actionable interventions for the agent, such as model + verifier + abstain choices |
| A post-hoc safety veto applied after the productive action has already been chosen | Oversight is treated as an external filter rather than as a ranked alternative inside the action slate | Include ask, verify, sandbox, defer, and escalate as first-class recommendables |
| A static API directory sorted alphabetically or by popularity | The ranking is not task-conditioned and does not target downstream trajectory utility for a live agent state | Condition on the task, current state, available authority, and execution consequences |

| Regime | Representative cases | Why an RSTA layer remains useful |
|---|---|---|
| Large-surface filtering | shopping catalogs, API hubs, dynamic DOMs, workflow fragments | the agent still needs filtering and re-ranking over grounded objects, parameters, bundles, and verifiers |
| Priority-sensitive small sets | ask/act/verify, choose among a few tools or models, pick a verifier or delegate | bounded deliberation, heterogeneous evaluation cost, and path dependence make order matter even when the menu is modest |

| ID | Candidate intervention | Type | Why it belongs in the slate |
|---|---|---|---|
|  | open the most promising approved charger SKU and inspect its configuration fields | act | this is the shortest high-information path when the top match is likely correct |
|  | run a compatibility check against the employee’s exact laptop model before purchase | verify | this reduces wrong-order risk even when the catalog title looks plausible |
|  | open a second near-match from the approved catalog for comparison | act | comparison is useful when several items share similar names but differ in wattage or connector type |
|  | ask the principal whether a travel charger is acceptable as a substitute if the exact SKU is unavailable | ask | this resolves a missing preference that changes which products are admissible |
|  | request manager approval for expedited shipping above the delegated threshold | escalate | this turns an otherwise unauthorized fast purchase into an authorized one |
|  | submit the first plausible item immediately without verification | act | this may save steps, but it is brittle when similar catalog items differ in important fields |
|  | purchase a third-party express-shipping charger from a non-approved seller | act | this can appear efficient, but it risks policy breach, reliability problems, and human cleanup |
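A minimal sketch of how the slate in the table above might be represented and ranked. The `Intervention` class, the `authorized` flag, the `rank_slate` helper, and every numeric score are illustrative assumptions rather than values or interfaces from the paper; the only point is that productive and oversight interventions share one ranked list, and that unauthorized candidates can be filtered before ranking.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Intervention:
    label: str
    kind: str            # one of: act, verify, ask, escalate
    est_utility: float   # hypothetical trajectory-utility estimate
    authorized: bool = True


def rank_slate(candidates):
    """Drop unauthorized candidates, then sort by descending estimated utility."""
    admissible = [c for c in candidates if c.authorized]
    return sorted(admissible, key=lambda c: c.est_utility, reverse=True)


# Scores below are made up for illustration only.
slate = [
    Intervention("open top approved charger SKU", "act", 0.70),
    Intervention("run compatibility check", "verify", 0.80),
    Intervention("ask about travel-charger substitute", "ask", 0.55),
    Intervention("request approval for expedited shipping", "escalate", 0.40),
    Intervention("submit first plausible item", "act", 0.20),
    Intervention("buy from non-approved seller", "act", 0.10, authorized=False),
]

ranked = rank_slate(slate)
for position, c in enumerate(ranked, start=1):
    print(position, c.kind, c.label)
```

Under these assumed scores the verify step ranks first and the non-approved purchase never reaches the slate; a real system would have to estimate the scores from trajectory outcomes rather than hard-code them.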

| Evaluation axis | Typical end-to-end agent view | RSTA view |
|---|---|---|
| Choice set | hidden inside prompts, tool specs, or environment code | explicit or reconstructable candidate inventory |
| Actions | productive actions dominate; oversight appears as a guardrail | productive and oversight interventions compete in the same slate |
| Metric | task success or exact-match proxy | trajectory utility with cost, safety, policy, and stakeholder outcomes |
| Failure attribution | one scalar failure label | separate diagnosis for candidate generation, ranking, inspection, and execution |

| RSTA failure | Concrete harm | Why the ranking layer matters |
|---|---|---|
| Act outranks verify or ask | wrong execution or overspending | the system allocates delegated authority too aggressively |
| Submit outranks policy or compatibility checks | compliance breach or misconfiguration | authorization and verification are treated as afterthoughts rather than ranked alternatives |
| Execution outranks sandbox or test | unsafe execution | the slate ignores asymmetry between productive and irreversible actions |
| Delegate or protocol misranking | coordination failure or protocol gaming | orchestration choices are themselves recommendables with real downstream cost |
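The first three failure rows above share a pattern: a productive, hard-to-undo intervention is ranked above the oversight options that could have caught the error. The sketch below shows one way such orderings might be flagged for audit. The `flag_risky_ordering` function and the `(kind, irreversible)` tuple encoding are assumptions made for illustration; the oversight kinds are the ones the text names (ask, verify, sandbox, defer, escalate).

```python
OVERSIGHT_KINDS = {"ask", "verify", "sandbox", "defer", "escalate"}


def flag_risky_ordering(ranked):
    """Return positions of irreversible productive interventions that are
    ranked above at least one oversight intervention.

    `ranked` is a list of (kind, irreversible) tuples, best first.
    """
    flags = []
    for i, (kind, irreversible) in enumerate(ranked):
        is_productive = kind not in OVERSIGHT_KINDS
        oversight_below = any(k in OVERSIGHT_KINDS for k, _ in ranked[i + 1:])
        if is_productive and irreversible and oversight_below:
            flags.append(i)
    return flags


# Hypothetical slate: an irreversible submit is ranked above verify and ask.
example = [("submit", True), ("verify", False), ("open", False), ("ask", False)]
flags = flag_risky_ordering(example)
print(flags)
```

A flag here is a prompt for diagnosis, not proof of failure: ranking an act above a verify can be correct when the act is cheap and reversible, which is why the check conditions on irreversibility.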

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).