Submitted: 02 April 2026

Posted: 02 April 2026


Abstract

Keywords:

1. Introduction

- (1) The number of feature points is crucial for image registration quality. To ensure an adequate number of feature points, we enhance the curvature scale space (CSS) corner detection algorithm. Because CSS extracts feature points from contours, and the number of contours depends on the number of detected edges, preserving a sufficient number of edges is vital. To this end, we propose a multi-scale Sobel edge detection algorithm.

- (2) Given the significant differences in intensity, resolution, and viewpoint between multi-modal remote sensing image pairs, we propose a new gradient definition and a method for determining the dominant direction of each feature point, which provides rotation invariance. This gradient definition is applied to SIFT descriptors, with segmented normalization to enhance the similarity between corresponding feature point descriptors.
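To make the idea in contribution (1) concrete, the following is a minimal pure-Python sketch of one possible multi-scale Sobel edge detector. It is not the authors' implementation: the Gaussian smoothing step, the scale values in `sigmas`, the `threshold` parameter, and the rule of taking the union of edges over all scales are all assumptions chosen for illustration, as are the function names.

```python
import math

# Standard 3x3 Sobel kernels for horizontal and vertical derivatives.
SOBEL_X = ((-1, 0, 1), (-2, 0, 2), (-1, 0, 1))
SOBEL_Y = ((-1, -2, -1), (0, 0, 0), (1, 2, 1))


def gaussian_kernel(sigma):
    """Return a normalized 2-D Gaussian kernel with radius ceil(2 * sigma)."""
    r = max(1, math.ceil(2 * sigma))
    k = [[math.exp(-(x * x + y * y) / (2 * sigma * sigma))
          for x in range(-r, r + 1)] for y in range(-r, r + 1)]
    total = sum(map(sum, k))
    return [[v / total for v in row] for row in k]


def correlate(img, kernel):
    """Correlate img with an odd-sized square kernel, replicating border pixels."""
    h, w, r = len(img), len(img[0]), len(kernel) // 2
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0.0
            for j, row in enumerate(kernel):
                yy = min(max(y + j - r, 0), h - 1)  # clamp row index
                for i, kv in enumerate(row):
                    xx = min(max(x + i - r, 0), w - 1)  # clamp column index
                    acc += kv * img[yy][xx]
            out[y][x] = acc
    return out


def sobel_magnitude(img):
    """Gradient magnitude from the two Sobel responses."""
    gx = correlate(img, SOBEL_X)
    gy = correlate(img, SOBEL_Y)
    return [[math.hypot(a, b) for a, b in zip(rx, ry)]
            for rx, ry in zip(gx, gy)]


def multiscale_sobel_edges(img, sigmas=(1.0, 2.0), threshold=1.0):
    """Assumed combination rule: a pixel is an edge if its Sobel magnitude
    reaches the threshold at ANY smoothing scale (union over scales)."""
    h, w = len(img), len(img[0])
    edges = [[False] * w for _ in range(h)]
    for sigma in sigmas:
        mag = sobel_magnitude(correlate(img, gaussian_kernel(sigma)))
        for y in range(h):
            for x in range(w):
                if mag[y][x] >= threshold:
                    edges[y][x] = True
    return edges
```

Calling `multiscale_sobel_edges(img, sigmas=(1.0, 2.0), threshold=8.0)` on a grayscale image stored as a list of rows of floats returns a Boolean edge map of the same size. Taking the union over scales keeps edges that are visible at only one scale, which is one plausible way to retain more edges, and therefore more contours, for the subsequent CSS corner detection.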

2. Proposed Image Registering Algorithm

2.1. Edge Detection

2.2. Contour Extraction

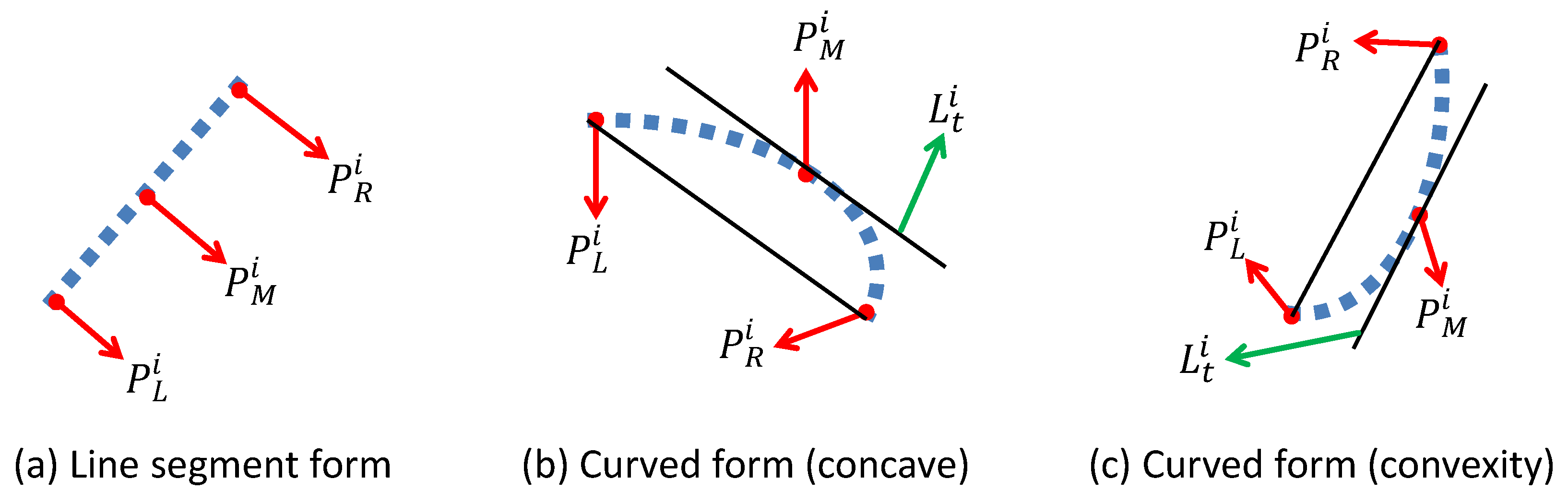

2.3. Feature Point Detection

2.4. Dominant Direction

2.4.1. Feature Descriptor Construction

2.4.2. Coarse-to-Fine Feature Matching

3. Experimental Results and Analysis

3.1. Data Set and Parameter Setting

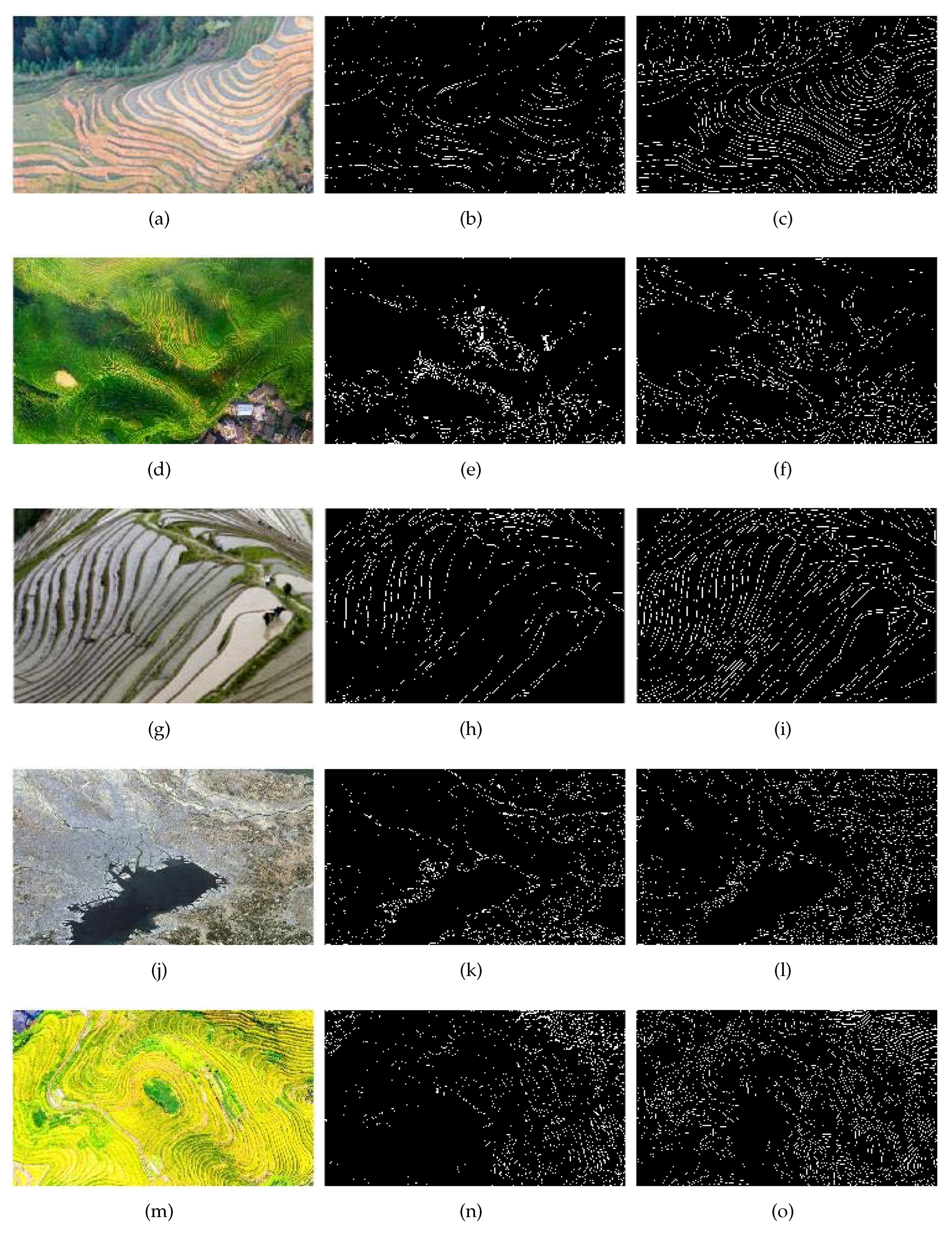

3.2. Experimental Results of Multi-scale Sobel Edge Detection

3.3. Subjective Evaluation of the Registration Results

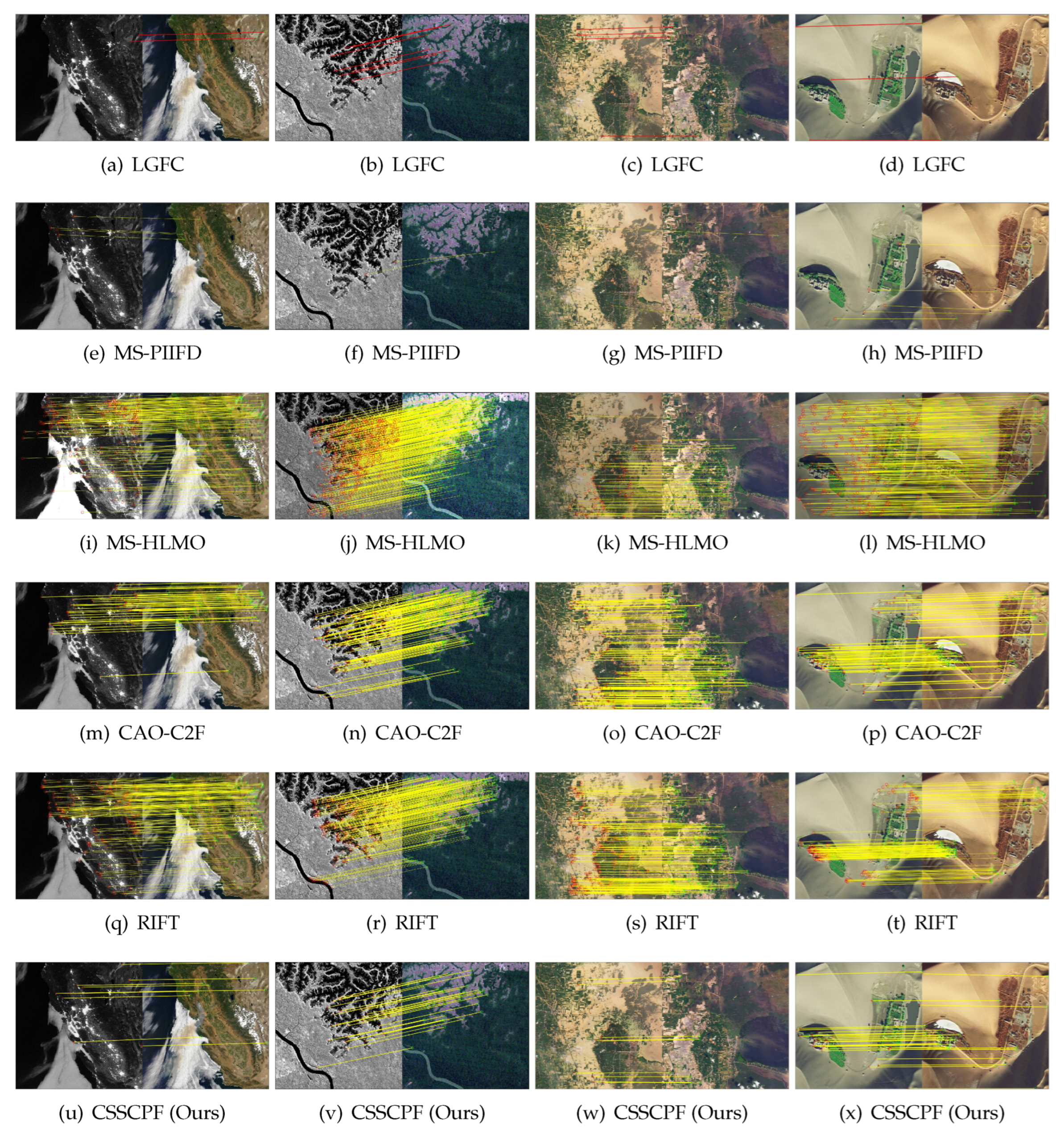

3.4. Objective Registering Results and Analysis

3.4.1. Running Time of Different Algorithms

3.4.2. NCM Point Pairs for Different Algorithms

3.4.3. RMSE of Different Algorithms

3.4.4. Registration Accuracy of Different Algorithms

4. Conclusion

Author Contributions

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Zhang, X.; Leng, C.; Hong, Y.; Pei, Z.; Cheng, I.; Basu, A. Multimodal remote sensing image registration methods and advancements: A survey. Remote Sensing 2021, 13, 1–31.

- Ye, Y.; Shan, J.; Bruzzone, L.; Shen, L. Robust registration of multimodal remote sensing images based on structural similarity. IEEE Transactions on Geoscience and Remote Sensing 2017, 55, 2941–2958.

- Huang, Q.; Guo, X.; Wang, Y.; Sun, H.; Yang, L. A survey of feature matching methods. IET Image Processing 2024, 18, 1385–141.

- Ma, J.; Jiang, X.; Fan, A.; Jiang, J.; Yan, J. Image matching from handcrafted to deep features: A survey. International Journal of Computer Vision 2021, 129, 23–79.

- Lowe, D.G. Distinctive image features from scale-invariant keypoints. International Journal of Computer Vision 2004, 60, 91–110.

- Gao, C.; Li, W. Multi-scale PIIFD for registration of multi-source remote sensing images. Journal of Beijing Institute of Technology 2021, 30, 113–124.

- Gao, C.; Li, W.; Tao, R.; Du, Q. MS-HLMO: Multiscale histogram of local main orientation for remote sensing image registration. IEEE Transactions on Geoscience and Remote Sensing 2022, 60, 1–14.

- Li, J.; Hu, Q.; Ai, M. RIFT: Multi-modal image matching based on radiation-variation insensitive feature transform. IEEE Transactions on Image Processing 2020, 29, 3296–3310.

- Zhu, J.; Liu, C.; Yang, Y. Robust image registration for power equipment using large gap fracture contours. IEEE MultiMedia 2024, 31, 53–64.

- Öfverstedt, J.; Lindblad, J.; Sladoje, N. Fast and robust symmetric image registration based on distances combining intensity and spatial information. IEEE Transactions on Image Processing 2019, 28, 3584–3597.

- Jiang, Q.; Liu, Y.; Yan, Y.; Deng, J.; Jiang, X. A contour angle orientation for power equipment infrared and visible image registration. IEEE Transactions on Power Delivery 2021, 36, 2559–2569.

- He, X.C.; Yung, N.H.C. Corner detector based on global and local curvature properties. Optical Engineering 2008, 47, 1–13.

- Beis, J.S.; Lowe, D.G. Shape indexing using approximate nearest-neighbour search in high-dimensional spaces. In Proceedings of the Conference on Computer Vision & Pattern Recognition, 1997.

- Fischler, M.A.; Bolles, R.C. Random sample consensus: A paradigm for model fitting with applications to image analysis and automated cartography. Communications of the ACM 1981, 24, 381–395.

- Jiang, X.; Ma, J.; Xiao, G.; Shao, Z.; Guo, X. A review of multimodal image matching: Methods and applications. Information Fusion 2021, 73, 22–71.

Short Biography of Authors

Jianhua Zhu received his B.S. degree in mathematics and applied mathematics from Xichang University and his M.A.Sc. degree in mathematics from Sichuan University of Science and Engineering, Zigong, 643000, China. His research interests include image processing and computer vision. This work was completed during his master’s studies. He is the first author of this article. Contact him at tostuhua@qq.com.

Changjiang Liu is an associate professor with the Key Laboratory of Higher Education of Sichuan Province for Enterprise Informationalization and Internet of Things, Sichuan University of Science and Engineering, Zigong, 643000, China. His research interests include image processing and computer vision. Liu received his Ph.D. degree in image segmentation and image registration from Sichuan University. He is the corresponding author of this article. Contact him at liuchangjiang@189.cn.

Danling Liang is currently working toward her M.A.Sc. degree, focused on image segmentation, with the School of Mathematics and Statistics, Sichuan University of Science and Engineering, Zigong, 643000, China. Her research interests include image processing. Liang received her B.S. degree in mathematics and applied mathematics from Sichuan University of Science and Engineering. She is a co-author of this article. Contact her at liangdanling@163.com.

| Algorithms | Average running time (s) | Average NCM | Average RMSE | Average registering accuracy (%) |
| --- | --- | --- | --- | --- |
| LGFC | 4.1215 | 3 | 8.4699 | 25.7 |
| MS-PIIFD | 18.3924 | 4 | 1.8334 | 29.08 |
| CAO-C2F | 25.2271 | 43 | 1.8796 | 20.84 |
| RIFT | 10.2207 | 147 | 1.9241 | 10.09 |
| MS-HLMO | 214.514 | 267 | 2.2151 | 18.75 |
| CSSCPF (Ours) | 5.5795 | 14 | 1.8542 | 46.74 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).