Submitted:

02 April 2026

Posted:

02 April 2026

Abstract

Keywords:

1. Introduction

- Renewability (Cancelability): All users of the system should be able to refresh their profiles if compromised.

- Unlinkability: It should be infeasible for an attacker to link two or more compromised profiles.

- Irreversibility: It should be infeasible to deduce the original behavioral pattern from compromised profiles.

- We present a novel privacy-preserving BA system designed specifically for low-computation devices. By leveraging the data protection capabilities of RP and local DP, this system effectively addresses the challenges posed by high-dimensional data while safeguarding sensitive behavioral information.

- Our system maintains high accuracy while preserving the essential privacy attributes of authentication systems (renewability, unlinkability, and irreversibility), in alignment with the ISO/IEC 24745 standard within the BA systems framework.

- We designed a novel GAN-based privacy attack model to thoroughly evaluate the system’s irreversibility. Additionally, we systematically analyzed all other key privacy requirements.

- Experimental validation using three distinct behavioral datasets confirmed our theoretical analyses, demonstrating the system’s practical effectiveness, robustness, and resilience, establishing a strong foundation for real-world implementation.

- We provide new formal security games for all three ISO/IEC 24745 properties, and derive rigorous mathematical proofs including information-theoretic lower bounds (Cramér-Rao), full Jensen-Shannon divergence derivations, and GAN Nash-equilibrium attack bounds.

Contribution and Novelty Highlights

- New acronym - RUIP-BA: The acronym RUIP-BA (Renewable, Unlinkable, Irreversible Privacy-Preserving Behavioral Authentication) directly encodes the three ISO/IEC 24745 properties in the system name, making the contribution immediately transparent. This distinguishes RUIP-BA from prior systems where the acronym encodes only the technical method.

- Unified framework novelty: RUIP-BA unifies geometric template protection (RP) and formal stochastic privacy protection (local DP) in one deployable BA pipeline for low-resource platforms.

- Algorithmic novelty: The paper presents a complete modular algorithm stack covering profile enrollment, claim verification, template re-issuance, unlinkability testing, adversarial privacy evaluation, and DP parameter calibration.

- Formal-analysis novelty: We provide new explicit mathematical derivations with axiom, lemma, and theorem level statements for all three properties. Specifically: (i) a Bhattacharyya-coefficient renewal bound via Hanson-Wright concentration; (ii) a full KL/JS divergence derivation for unlinkability under Gaussian mechanism; (iii) a Cramér-Rao/Bayesian MMSE bound for irreversibility showing that null-space information cannot be recovered; and (iv) a GAN Nash-equilibrium privacy bound.

- Adversarial-evaluation novelty: We model GAN-based inversion explicitly as a formal security game and bound attack effectiveness through the mutual information bottleneck and information-channel capacity of the protected template.

2. Related Work

2.1. Cryptographic Approach

2.2. Non-Cryptographic Approach

3. Background

3.1. Random Projection (RP)

3.2. Differential Privacy (DP)

3.3. Profile Similarity

4. Proposed RUIP-BA System

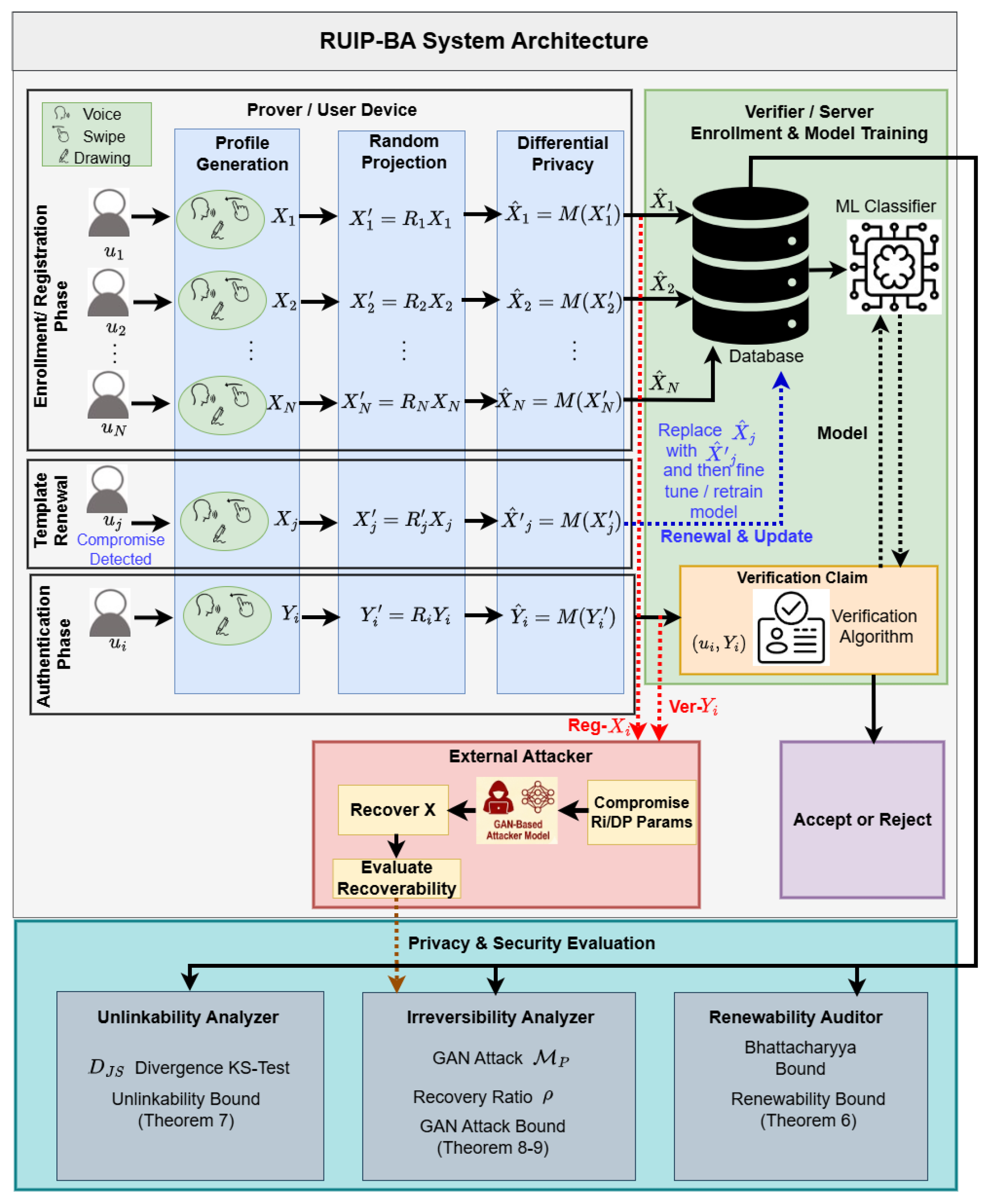

4.1. Development of a Privacy-Preserving BA System

4.1.1. Registration Phase

- Random matrix generation: To initiate the registration process, a user first generates a random matrix on their device using a private random seed. This matrix serves as a unique key for projecting the user’s BA profile.

- Profile transformation: For each profile of user , the device applies the RP transformation to produce a projected profile . The RP transformation follows a Lipschitz mapping , which projects the data from d dimensions to k dimensions, where . To enhance computational efficiency, we employ the discrete distribution discussed earlier for RP with .

- Additive noise: The user applies local DP to add noise to the projected profile , transforming it into a noisy projected profile before transmitting it to the verifier. The client chooses the values of and for DP, where smaller and are preferred for stronger privacy.

- Training a BA classifier: The verifier collects all N noisy projected profiles from N users and uses them to train a NN-based BA classifier . The verifier may act as a third party service provider, deploying through a Machine Learning as a Service (MLaaS).
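The client-side part of registration (seeded matrix generation, projection, and local DP noise) can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the sparse matrix uses Achlioptas-style entries with probabilities 1/6, 2/3, 1/6, the noise uses the classical Gaussian-mechanism calibration, and all function names, dimensions, and parameter values are hypothetical.

```python
import math

import numpy as np


def make_projection_matrix(seed: int, d: int, k: int) -> np.ndarray:
    """Derive a (d, k) sparse random matrix from a private seed.

    Assumption: entries are sqrt(3) * {-1, 0, +1} drawn with probabilities
    {1/6, 2/3, 1/6}; the paper's exact discrete distribution may differ.
    """
    rng = np.random.default_rng(seed)
    signs = rng.choice([-1.0, 0.0, 1.0], size=(d, k), p=[1 / 6, 2 / 3, 1 / 6])
    return math.sqrt(3.0) * signs


def project_profile(profile: np.ndarray, matrix: np.ndarray) -> np.ndarray:
    """Project an (n, d) profile to (n, k), scaling by 1/sqrt(k)."""
    k = matrix.shape[1]
    return (profile @ matrix) / math.sqrt(k)


def gaussian_sigma(epsilon: float, delta: float, sensitivity: float) -> float:
    """Classical Gaussian-mechanism noise scale (stated for 0 < epsilon < 1)."""
    return sensitivity * math.sqrt(2.0 * math.log(1.25 / delta)) / epsilon


def add_local_dp_noise(projected, epsilon, delta, sensitivity, rng):
    """Add i.i.d. Gaussian noise to every entry of the projected profile."""
    sigma = gaussian_sigma(epsilon, delta, sensitivity)
    return projected + rng.normal(0.0, sigma, size=projected.shape)


# Illustrative values only: 200 vectors with 40 features, projected to 10.
rng = np.random.default_rng(0)
profile = rng.normal(size=(200, 40))
matrix = make_projection_matrix(seed=1234, d=40, k=10)
noisy = add_local_dp_noise(project_profile(profile, matrix), 0.5, 1e-5, 1.0, rng)
```

Because the matrix is derived deterministically from the seed, the device only needs to store the seed, and re-enrolling with a new seed yields a new template.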

4.1.2. Verification Phase

- Profile transformation for verification: When a verification request is initiated, the user's device collects and transforms the verification profile into using , which is generated from the secret seed. DP noise is then added to the transformed profile through local DP, resulting in noisy projected verification data . The device subsequently transmits along with the user identity to the verifier as a verification claim .

- Verification process: The verification algorithm verifies the claim with the help of the trained BA classifier . For , the output will be the n prediction vectors . These predictions are then aggregated into a single binary decision: accept or reject.
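The text does not specify how the n prediction vectors are aggregated into one decision. The sketch below assumes a simple voting rule: accept when at least a given fraction of the vectors assign the highest score to the claimed identity. The function name, the voting rule, and the 0.5 threshold are illustrative assumptions.

```python
from typing import Sequence


def verify_claim(
    predictions: Sequence[Sequence[float]],
    claimed_index: int,
    threshold: float = 0.5,
) -> bool:
    """Accept if enough prediction vectors vote for the claimed user.

    Assumption: each prediction vector holds one score per enrolled user,
    and a vector votes for the user with the highest score.
    """
    if not predictions:
        return False
    votes = sum(
        1
        for p in predictions
        if max(range(len(p)), key=p.__getitem__) == claimed_index
    )
    return votes / len(predictions) >= threshold


# Three prediction vectors over two enrolled users (illustrative scores).
preds = [[0.1, 0.9], [0.2, 0.8], [0.7, 0.3]]
accept_user_1 = verify_claim(preds, claimed_index=1)
accept_user_0 = verify_claim(preds, claimed_index=0)
```

Raising the threshold trades a lower false acceptance rate for a higher false rejection rate.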

4.2. Complete Algorithmic Realization

Algorithm 1. RUIP-BA Main Workflow

Algorithm 2. Subalgorithm A: Registration (Enrollment and Model Training)

Algorithm 3. Subalgorithm B: Verification

Algorithm 4. Subalgorithm C: Template Renewal and Model Update

Algorithm 5. Subalgorithm D: Unlinkability Evaluation

Algorithm 6. Subalgorithm E: GAN-Based Privacy Attack and Evaluation

4.3. Privacy Analysis of the Proposed BA System

4.3.1. Formal Privacy Security Games

1. Setup. The challenger fixes RUIP-BA parameters .

2. Challenge generation. The challenger selects a profile and generates two independent key pairs and , then computes:

3. Adversary's turn. The adversary receives and must distinguish whether both templates come from the same source (with different keys) or from different sources.

1. Setup. The challenger fixes RUIP-BA parameters.

2. Challenge generation. The challenger samples a bit . If : select same source ; if : select different sources . In both cases, use distinct key pairs and and compute:

3. Adversary's turn. The adversary receives and must output a guess .
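The game above can be simulated to estimate an adversary's empirical advantage. The sketch below is illustrative only and rests on several assumptions not confirmed by the text: bit 0 means "same source", the random matrix is Gaussian, the noise is Gaussian with a fixed scale, and the advantage is measured as the distance of the win rate from 1/2. All names are hypothetical.

```python
import numpy as np


def protect(profile_vector, key_seed, k, sigma):
    """RP followed by additive Gaussian noise under a fresh key.

    Assumption: Gaussian projection matrix scaled by 1/sqrt(k).
    """
    rng = np.random.default_rng(key_seed)
    d = profile_vector.shape[0]
    matrix = rng.standard_normal((d, k)) / np.sqrt(k)
    return profile_vector @ matrix + rng.normal(0.0, sigma, size=k)


def estimate_advantage(adversary, sample_profile, trials, k, sigma, seed=0):
    """Play the game `trials` times and return |win rate - 1/2|."""
    rng = np.random.default_rng(seed)
    wins = 0
    for _ in range(trials):
        b = int(rng.integers(2))  # assumed: 0 = same source, 1 = different
        x0 = sample_profile(rng)
        x1 = x0 if b == 0 else sample_profile(rng)
        # Two independently drawn keys, one per template.
        seed0, seed1 = (int(s) for s in rng.integers(2**32, size=2))
        t0 = protect(x0, seed0, k, sigma)
        t1 = protect(x1, seed1, k, sigma)
        wins += int(adversary(t0, t1) == b)
    return abs(wins / trials - 0.5)


# A trivial adversary that always guesses "same source".
adv = estimate_advantage(
    adversary=lambda t0, t1: 0,
    sample_profile=lambda rng: rng.normal(size=30),
    trials=2000,
    k=8,
    sigma=1.0,
)
```

A constant-guess adversary should have an advantage near zero. A meaningful evaluation would replace it with a trained distinguisher.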

1. Setup. The challenger fixes RUIP-BA parameters.

2. Challenge. The challenger samples , generates key , and computes . Sends to .

3. Reconstruction. The adversary outputs a reconstructed profile .

4. Scoring. Feature recoverability .

5. The system provides -irreversibility if, for all PPT adversaries : .
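The exact definition of feature recoverability is not reproduced in the text above. As a hedged illustration, the sketch below assumes it is the fraction of features whose reconstruction falls within an absolute tolerance of the original value. The function name and the tolerance parameter are hypothetical.

```python
from typing import Sequence


def feature_recoverability(
    original: Sequence[float],
    reconstructed: Sequence[float],
    tolerance: float,
) -> float:
    """Fraction of features recovered within `tolerance` (assumed metric)."""
    if len(original) != len(reconstructed) or not original:
        raise ValueError("vectors must be non-empty and of equal length")
    hits = sum(
        1 for x, x_hat in zip(original, reconstructed)
        if abs(x - x_hat) <= tolerance
    )
    return hits / len(original)


# Two of four features are reconstructed within the tolerance.
score = feature_recoverability(
    [1.0, 2.0, 3.0, 4.0], [1.05, 2.5, 3.0, 10.0], tolerance=0.1
)
```

Under this reading, irreversibility holds when no efficient adversary achieves a score above the stated bound.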

4.3.2. Formal Assumptions and Mathematical Derivations

4.3.3. Renewability Analysis - Extended Formal Proof

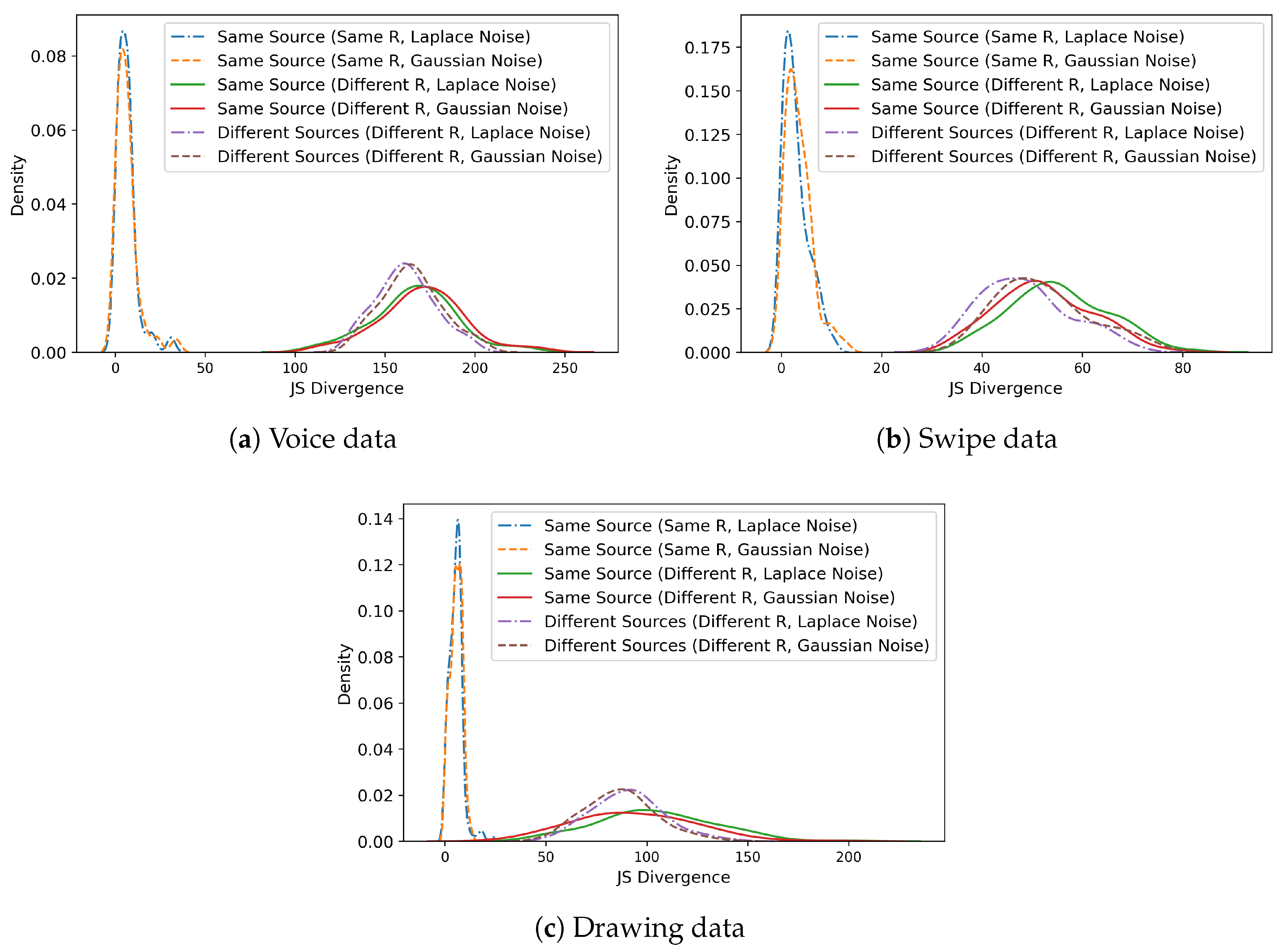

4.3.4. Unlinkability Analysis - Full Jensen-Shannon Divergence Derivation

- Case 1 (invalid claims, different source): , different keys.

- Case 2 (same source, different keys): , .

- Case 3 (valid claims, same source, same key): , .

- Case 1: , , . By JL (Theorem 1): .

- Case 2: , , same . By RP randomness: (cf. Step 1 of Theorem 5).

- Case 3: , same . Then and .
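To compare the distance distributions of the three cases empirically, one can bin the observed distances and compute the Jensen-Shannon divergence between the resulting histograms. The sketch below uses base-2 logarithms, so the divergence lies in [0, 1]. The helper names and the binning scheme are illustrative assumptions, not the estimator used in the paper.

```python
import math
from typing import List, Sequence


def histogram(samples: Sequence[float], bins: int, low: float, high: float) -> List[float]:
    """Normalized histogram of `samples` over [low, high] with equal-width bins."""
    counts = [0] * bins
    width = (high - low) / bins
    for s in samples:
        idx = min(int((s - low) / width), bins - 1)  # clamp the top edge
        counts[max(idx, 0)] += 1                      # clamp the bottom edge
    return [c / len(samples) for c in counts]


def kl_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """KL(p || q) in bits; terms with p_i = 0 contribute zero."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def js_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """JS(p, q) = 0.5 * KL(p || m) + 0.5 * KL(q || m) with m = (p + q) / 2."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)


identical = js_divergence([0.5, 0.5], [0.5, 0.5])
disjoint = js_divergence([1.0, 0.0], [0.0, 1.0])
```

A divergence near zero between two cases means the attacker cannot tell them apart from distances alone. A value near one means they are easily separated.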

4.3.5. Unlinkability Analysis - Experimental Validation

4.3.6. Irreversibility Analysis - Cramér-Rao Lower Bound

5. GAN-based Privacy Attack Analysis

5.1. Formal GAN Attack Security Game

1. Setup. A challenger fixes RUIP-BA system parameters and behavioral profile distribution .

2. Auxiliary data. The adversary obtains auxiliary plain profiles and their noisy projected counterparts using either known or estimated parameters.

3. Attack training. The adversary trains a GAN generator and discriminator by minimizing the adversarial objective:

4. Challenge phase. The challenger provides target . The adversary outputs .

5. Scoring. .

5.2. Attacker Knowledge and Capabilities

- The attacker has knowledge about the operation of the verification algorithm . The attacker is also aware of the architecture and input-output dimensions of the trained classifier .

- The attacker has access to the noisy projected profiles of the target BA system. The attacker can obtain the noisy projected profiles from the untrusted verifier or by using model inversion or other attack methods.

- The attacker has access to the profile generator, which is publicly available software, used by the BA system to collect users’ behavioral data. The attacker will use it to gather auxiliary profiles.

- The attacker is aware of the distribution and dimensions of , since this information is public. However, in the worst case, if the seed is compromised, the attacker can also derive the secret .

- The attacker is also aware of the type of DP noise applied to the noisy projected profiles, as in most cases this is public information. In the worst-case scenario, the attacker can also obtain the values of the DP parameters.

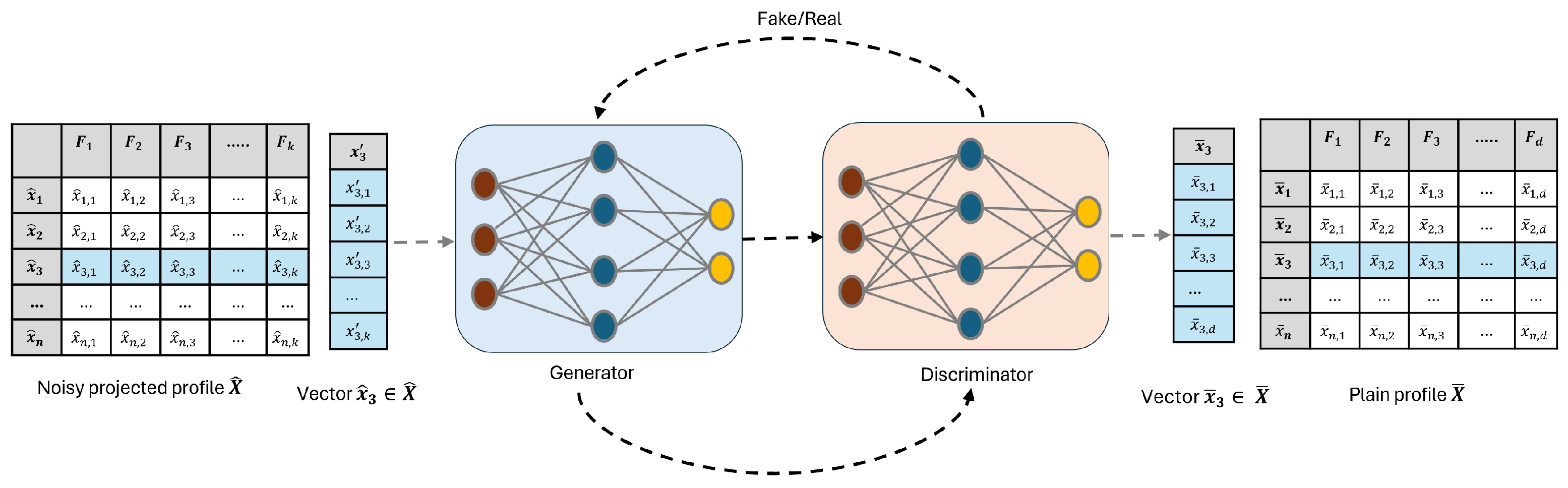

5.3. Train an Attack Model

- Collect auxiliary data. The attacker will use the profile generator of the target BA system to collect the required auxiliary profiles using a third-party outsourcing platform. There is no limitation on the number of auxiliary profiles, though more auxiliary profiles will lead to a more generalized attack model.

- RP and DP on auxiliary data. The attacker applies RP to each auxiliary profile to produce a projected version. The random matrix for RP is generated either using a compromised seed or by leveraging knowledge of the distribution of . The attacker will then generate DP noise, either by obtaining or guessing the DP parameters, and add this noise to the projected profiles. To increase the number of projected auxiliary profiles and improve the generalization of , the attacker can apply multiple instances of and different instances of DP noise to each auxiliary profile.

- Train the attack model . To train , the attacker will use noisy projected versions of auxiliary profiles as training data and original auxiliary profiles as the ground truth. Figure 2 illustrates the training process of GAN-based . Let denote an original auxiliary data vector, and represent its projected noisy version obtained through RP and DP. During training, the generator takes the projected vector as input and produces a reconstructed feature vector that aims to approximate the original data . The discriminator receives either a real auxiliary sample or a reconstructed sample , and attempts to distinguish between real and generated data. Through adversarial training over the auxiliary datasets, the generator progressively improves its ability to reconstruct original feature vectors from their projected noisy counterparts, thereby learning an effective inverse mapping from the projected space to the original feature space.
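The data-preparation steps above (several random matrices and several noise draws per auxiliary profile) can be sketched as follows. This is a minimal illustration assuming Gaussian projection matrices and Gaussian noise with a guessed scale. The function name and all parameter values are hypothetical, and GAN training itself is omitted.

```python
import numpy as np


def build_attack_training_set(aux, k, sigma, n_matrices, n_noise, seed=0):
    """Pair noisy projected vectors (inputs) with original vectors (targets).

    aux: (n, d) array of auxiliary feature vectors.
    Returns (inputs, targets) with n * n_matrices * n_noise rows each.
    """
    rng = np.random.default_rng(seed)
    d = aux.shape[1]
    inputs, targets = [], []
    for _ in range(n_matrices):
        # Assumption: the attacker samples R from a known Gaussian distribution.
        matrix = rng.standard_normal((d, k)) / np.sqrt(k)
        projected = aux @ matrix
        for _ in range(n_noise):
            # A fresh DP noise draw for every copy of the projected data.
            noise = rng.normal(0.0, sigma, size=projected.shape)
            inputs.append(projected + noise)
            targets.append(aux)
    return np.vstack(inputs), np.vstack(targets)


# 5 auxiliary vectors with 8 features, 2 matrices, 4 noise draws each.
aux_profiles = np.ones((5, 8))
y_train, x_train = build_attack_training_set(
    aux_profiles, k=3, sigma=0.1, n_matrices=2, n_noise=4
)
```

The generator would then be trained to map each row of `y_train` to the matching row of `x_train`.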

5.4. Privacy Evaluation

5.5. GAN Attack - Formal Privacy Proof

6. Experimental Results

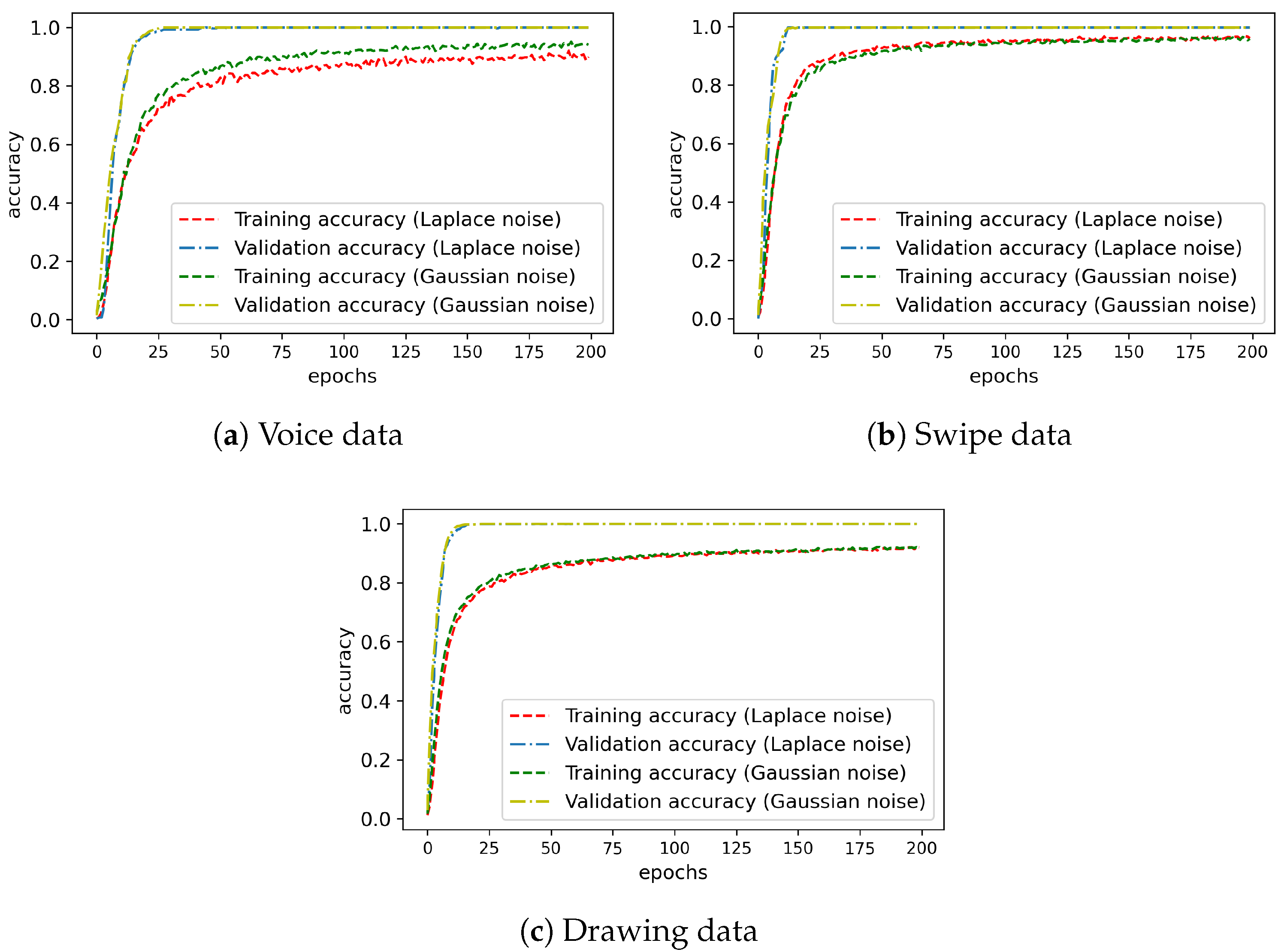

6.1. Performance of BA System

6.1.1. Performance of BA Classifier for Plain Profiles

6.1.2. Performance of BA Classifier for Projected Profiles

6.2. Privacy-Preserving Properties of BA System

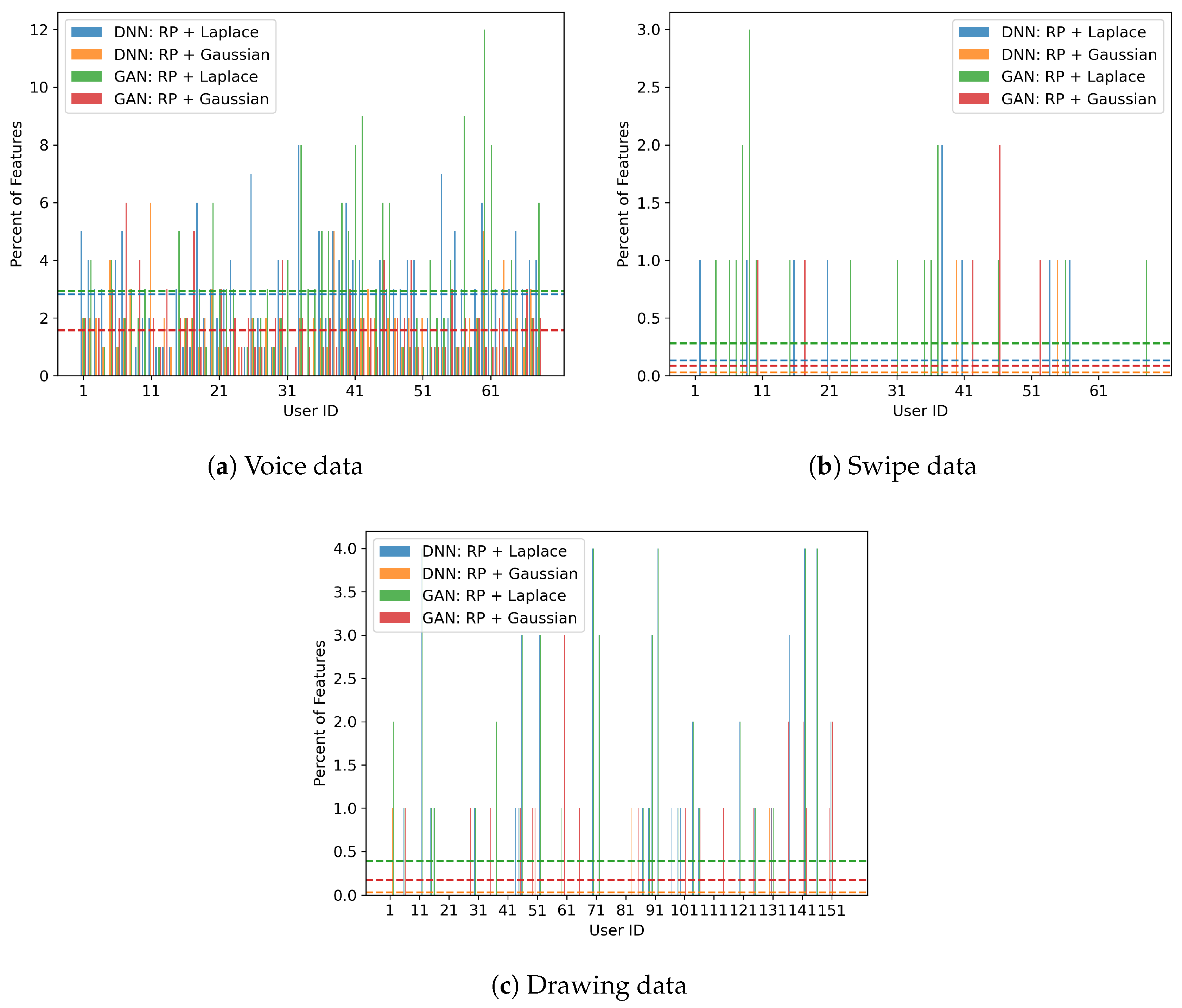

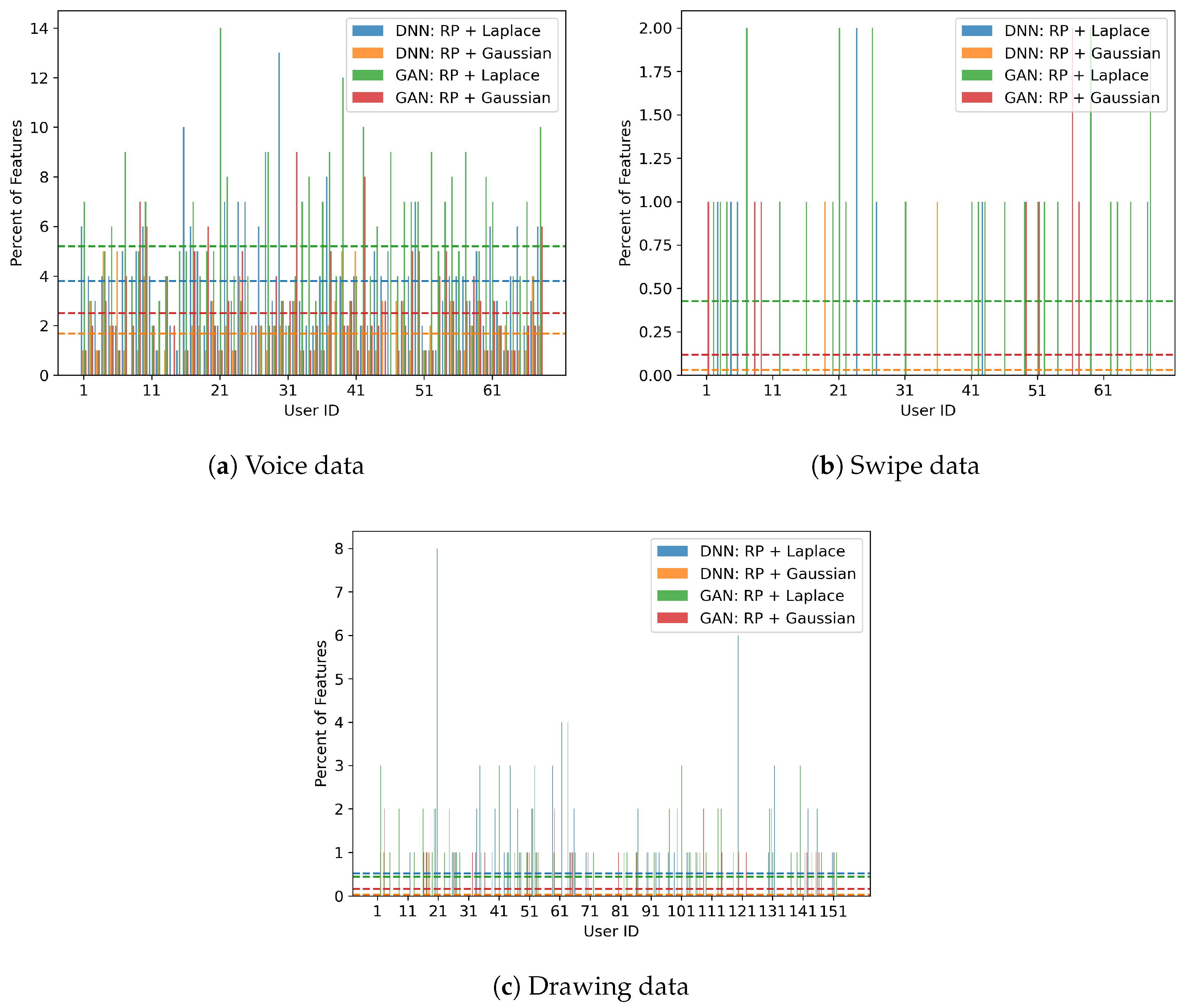

6.2.1. Renew Training Profile

6.2.2. Similarity of Divergence Distributions

6.3. Performance of ML-Based Attack

6.3.1. Train an Attack Model

6.3.2. Recover Feature

6.4. Results Comparison

- Some systems relied solely on an RP-based approach [23,24], while others combined RP with local binary pattern (LBP) [33], backpropagation neural network (BPNN) [35], or applied double random phase encoding (DRPE) with fractional Fourier transform (FFT) [34]. In this work, we combined DP with RP to offer theoretical and experimental privacy guarantees, distinguishing our approach from existing methods.

- Our system achieves higher performance accuracy than most other systems, except for a few that use biometric data, as expected, since biometric data are inherently more distinctive than behavioral data.

- We considered most of the potential attacks applicable to our system and also introduced GAN-based privacy attacks as a novel privacy evaluation method. Our approach is unique in providing formal information-theoretic proofs (Theorems 5-8) for all three privacy properties.

7. Conclusion

Appendix A

| Layer (type) | Output Shape | Param # |
|---|---|---|
| dense_1 (Dense) | (None, 64) | 3,648 |
| batch_normalization_1 (BatchNormalization) | (None, 64) | 256 |
| activation_1 (Activation) | (None, 64) | 0 |
| dropout_1 (Dropout) | (None, 64) | 0 |
| dense_2 (Dense) | (None, 128) | 8,320 |
| batch_normalization_2 (BatchNormalization) | (None, 128) | 512 |
| activation_2 (Activation) | (None, 128) | 0 |
| dropout_2 (Dropout) | (None, 128) | 0 |
| dense_3 (Dense) | (None, 64) | 8,256 |
| batch_normalization_3 (BatchNormalization) | (None, 64) | 256 |
| activation_3 (Activation) | (None, 64) | 0 |
| dropout_3 (Dropout) | (None, 64) | 0 |
| dense_4 (Dense) | (None, 155) | 10,075 |
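The parameter counts in the layer summary above can be checked with a few lines of arithmetic. The input width is not listed in the table. The sketch infers it from the first layer, since 3,648 = 64 × (input width + 1) gives 56, and this inference is an assumption rather than a value stated in the text. Batch normalization is counted with four parameters per feature (scale, shift, moving mean, moving variance), following the usual Keras accounting.

```python
def dense_params(n_in: int, n_out: int) -> int:
    """Weights plus biases of a fully connected layer."""
    return n_in * n_out + n_out


def batchnorm_params(n_features: int) -> int:
    """Scale, shift, moving mean, and moving variance per feature."""
    return 4 * n_features


# Inferred from the table: 3,648 = 64 * (input_dim + 1).
input_dim = 3648 // 64 - 1
hidden_widths = [64, 128, 64]
n_outputs = 155

counts = []
previous = input_dim
for width in hidden_widths:
    counts.append(dense_params(previous, width))
    counts.append(batchnorm_params(width))
    previous = width
counts.append(dense_params(previous, n_outputs))

total_params = sum(counts)
```

The computed counts match the table row by row, and the activation and dropout layers contribute no parameters.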

| Generator Architecture | | |
|---|---|---|
| Layer (type) | Output Shape | Activation |
| Input (y) | | - |
| Linear () | 128 | ReLU |
| Linear (128 → 128) | 128 | ReLU |
| Linear (128 ) | | None |

| Discriminator Architecture | | |
|---|---|---|
| Layer (type) | Output Shape | Activation |
| Input (Concatenated ) | | - |
| Linear () | 128 | LeakyReLU (0.2) |
| Linear (128 → 128) | 128 | LeakyReLU (0.2) |
| Linear (128 → 1) | 1 | Sigmoid |

References

1. Islam, M.M.; Safavi-Naini, R. POSTER: A behavioural authentication system for mobile users. In Proceedings of the 2016 ACM Conference on Computer and Communications Security (CCS ’16). ACM, 2016, pp. 1742–1744.

2. Chong, P.; Elovici, Y.; Binder, A. User authentication based on mouse dynamics using deep neural networks: A comprehensive study. IEEE Transactions on Information Forensics and Security 2019, 15, 1086–1101.

3. Jung, D.; Nguyen, M.D.; Han, J.; Park, M.; Lee, K.; Yoo, S.; Kim, J.; Mun, K.R. Deep neural network-based gait classification using wearable inertial sensor data. In Proceedings of the 2019 41st Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC). IEEE, 2019, pp. 3624–3628.

4. Deng, Y.; Zhong, Y. Keystroke dynamics advances for mobile devices using deep neural network. Recent Advances in User Authentication Using Keystroke Dynamics Biometrics 2015, 2, 59–70.

5. Gong, X.; Wang, Q.; Chen, Y.; Yang, W.; Jiang, X. Model extraction attacks and defenses on cloud-based machine learning models. IEEE Communications Magazine 2020, 58, 83–89.

6. Islam, M.M.; Safavi-Naini, R. Model Inversion for Impersonation in Behavioral Authentication Systems. In Proceedings of the SECRYPT, 2021, pp. 271–282.

7. ISO/IEC 24745:2011. Information technology – Security techniques – Biometric information protection. International Organization for Standardization, 2011.

8. Kelkboom, E.J.; Breebaart, J.; Kevenaar, T.A.; Buhan, I.; Veldhuis, R.N. Preventing the decodability attack based cross-matching in a fuzzy commitment scheme. IEEE Transactions on Information Forensics and Security 2010, 6, 107–121.

9. Islam, M.M.; Safavi-Naini, R. Fuzzy Vault for Behavioral Authentication System. In Proceedings of the ICT Systems Security and Privacy Protection: 35th IFIP TC 11 International Conference, SEC 2020, Maribor, Slovenia, September 21–23, 2020, Proceedings 35. Springer, 2020, pp. 295–310.

10. Chauhan, S.; Sharma, A. Improved fuzzy commitment scheme. International Journal of Information Technology 2022, 14, 1321–1331.

11. Wang, Y.; Li, B.; Zhang, Y.; Wu, J.; Ma, Q. A secure biometric key generation mechanism via deep learning and its application. Applied Sciences 2021, 11, 8497.

12. Mir, O.; Roland, M.; Mayrhofer, R. DAMFA: Decentralized anonymous multi-factor authentication. In Proceedings of the 2nd ACM International Symposium on Blockchain and Secure Critical Infrastructure, 2020, pp. 10–19.

13. Kim, S.; Mun, H.J.; Hong, S. Multi-factor authentication with randomly selected authentication methods with DID on a random terminal. Applied Sciences 2022, 12, 2301.

14. Al-Rubaie, M.; Chang, J.M. Privacy-preserving machine learning: Threats and solutions. IEEE Security & Privacy 2019, 17, 49–58.

15. Loya, J.; Bana, T. Privacy-Preserving Keystroke Analysis using Fully Homomorphic Encryption & Differential Privacy. In Proceedings of the 2021 International Conference on Cyberworlds (CW). IEEE, 2021, pp. 291–294.

16. Baig, A.F.; Eskeland, S.; Yang, B. Privacy-preserving continuous authentication using behavioral biometrics. International Journal of Information Security 2023, 22, 1833–1847.

17. Soutar, C.; Roberge, D.; Stoianov, A.; Gilroy, R.; Kumar, B.V. Biometric encryption using image processing. In Proceedings of the Optical Security and Counterfeit Deterrence Techniques II. SPIE, 1998, Vol. 3314, pp. 178–188.

18. Usman, M.; Jan, M.A.; Puthal, D. Paal: A framework based on authentication, aggregation, and local differential privacy for internet of multimedia things. IEEE Internet of Things Journal 2019, 7, 2501–2508.

19. Chamikara, M.A.P.; Bertok, P.; Khalil, I.; Liu, D.; Camtepe, S. Privacy preserving face recognition utilizing differential privacy. Computers & Security 2020, 97, 101951.

20. Wazzeh, M.; Ould-Slimane, H.; Talhi, C.; Mourad, A.; Guizani, M. Privacy-preserving continuous authentication for mobile and IoT systems using warmup-based federated learning. IEEE Network 2022.

21. Yu, F.X.; Rawat, A.S.; Menon, A.K.; et al. Federated learning with only positive labels. In Proceedings of the 37th International Conference on Machine Learning (ICML). PMLR, 2020, Vol. 119, pp. 10946–10956.

22. Yang, W.; Wang, S.; Kang, J.J.; Johnstone, M.N.; Bedari, A. A linear convolution-based cancelable fingerprint biometric authentication system. Computers & Security 2022, 114, 102583.

23. Taheri, S.; Islam, M.M.; Safavi-Naini, R. Privacy-Enhanced Profile-Based Authentication Using Sparse Random Projection. In Proceedings of the IFIP SEC’17. Springer, 2017, pp. 474–490.

24. Islam, M.M.; Rafiq, M.A.; Islam, M.A. A Privacy-Preserving Behavioral Authentication System. In Proceedings of the International Symposium on Foundations and Practice of Security. Springer, 2024, pp. 95–107.

25. Islam, M.M.; Safavi-Naini, R.; Kneppers, M. Scalable behavioral authentication. IEEE Access 2021, 9, 43458–43473.

26. Baig, A.F.; Eskeland, S.; Yang, B. Novel and Efficient Privacy-Preserving Continuous Authentication. Cryptography 2024, 8, 3.

27. Meng, W.; Wong, D.S.; Furnell, S.; Zhou, J. Surveying the development of biometric user authentication on mobile phones. IEEE Communications Surveys & Tutorials 2014, 17, 1268–1293.

28. Huixian, L.; et al. Key binding based on biometric shielding functions. In Proceedings of the Information Assurance and Security, 2009. IAS’09. Fifth International Conference on. IEEE, 2009, Vol. 1, pp. 19–22.

29. Dodis, Y.; Ostrovsky, R.; Reyzin, L.; Smith, A. Fuzzy extractors: How to generate strong keys from biometrics and other noisy data. SIAM Journal on Computing 2008, 38, 97–139.

30. Domingo-Ferrer, J.; Wu, Q.; Blanco-Justicia, A. Flexible and robust privacy-preserving implicit authentication. In Proceedings of the ICT Systems Security and Privacy Protection: 30th IFIP TC 11 International Conference, SEC 2015, Hamburg, Germany, May 26-28, 2015. Springer, 2015, pp. 18–34.

31. Wang, Y.; Plataniotis, K.N. An analysis of random projection for changeable and privacy-preserving biometric verification. IEEE Transactions on Systems, Man, and Cybernetics, Part B (Cybernetics) 2010, 40, 1280–1293.

32. Punithavathi, P.; Geetha, S. Dynamic sectored random projection for cancelable iris template. In Proceedings of the 2016 International Conference on Advances in Computing, Communications and Informatics (ICACCI). IEEE, 2016, pp. 711–715.

33. Deshmukh, M.; Balwant, M.K. Generating cancelable palmprint templates using local binary pattern and random projection. In Proceedings of the 2017 13th International Conference on Signal-Image Technology & Internet-Based Systems (SITIS). IEEE, 2017, pp. 203–209.

34. Rajasekar, V.; Premalatha, J.; Sathya, K. Cancelable Iris template for secure authentication based on random projection and double random phase encoding. Peer-to-Peer Networking and Applications 2021, 14, 747–762.

35. Peng, J.; Gupta, B.B.; Abd El-Latif, A.A. A biometric cryptosystem scheme based on random projection and neural network. Soft Computing 2021, 25, 7657–7670.

36. Kaski, S. Dimensionality reduction by random mapping: Fast similarity computation for clustering. In Proceedings of the 1998 IEEE International Joint Conference on Neural Networks Proceedings. IEEE World Congress on Computational Intelligence (Cat. No. 98CH36227). IEEE, 1998, Vol. 1, pp. 413–418.

37. Dasgupta, S.; Gupta, A. An elementary proof of a theorem of Johnson and Lindenstrauss. Random Structures & Algorithms 2003, 22, 60–65.

38. Achlioptas, D. Database-friendly random projections: Johnson-Lindenstrauss with binary coins. Journal of Computer and System Sciences 2003, 66, 671–687.

39. Dwork, C.; McSherry, F.; Nissim, K.; Smith, A. Calibrating noise to sensitivity in private data analysis. In Proceedings of the Theory of Cryptography: Third Theory of Cryptography Conference, TCC 2006, New York, NY, USA, March 4-7, 2006. Proceedings 3. Springer, 2006, pp. 265–284.

40. Dong, J.; Roth, A.; Su, W.J. Gaussian differential privacy. Journal of the Royal Statistical Society: Series B (Statistical Methodology) 2022, 84, 3–37.

41. Wang, R.; Fung, B.C.; Zhu, Y. Heterogeneous data release for cluster analysis with differential privacy. Knowledge-Based Systems 2020, 201, 106047.

42. Nielsen, F. On a variational definition for the Jensen-Shannon symmetrization of distances based on the information radius. Entropy 2021, 23, 464.

43. Wang, Q.; Kulkarni, S.R.; Verdú, S. Divergence estimation for multidimensional densities via k-nearest-neighbor distances. IEEE Transactions on Information Theory 2009, 55, 2392–2405.

44. Zheng, Z.; Li, Z.; Huang, C.; Long, S.; Li, M.; Shen, X. Data poisoning attacks and defenses to LDP-based privacy-preserving crowdsensing. IEEE Transactions on Dependable and Secure Computing 2024.

45. Demmel, J.W.; Higham, N.J. Improved error bounds for underdetermined system solvers. SIAM Journal on Matrix Analysis and Applications 1993, 14, 1–14.

46. Gupta, S.; Buriro, A.; Crispo, B. A chimerical dataset combining physiological and behavioral biometric traits for reliable user authentication on smart devices and ecosystems. Data in Brief 2020, 28, 104924.

47. Chawla, N.V.; Bowyer, K.W.; Hall, L.O.; Kegelmeyer, W.P. SMOTE: synthetic minority over-sampling technique. Journal of Artificial Intelligence Research 2002, 16, 321–357.

48. Gupta, S.; Buriro, A.; Crispo, B. DriverAuth: A risk-based multi-modal biometric-based driver authentication scheme for ride-sharing platforms. Computers & Security 2019, 83, 122–139.

| 1 | We replaced “NaN” and “Infinity” with zero and dropped duplicate rows. |

| 2 | We used more than one and different DP noise to generate multiple noisy projected profiles from a single auxiliary profile. |

| Notation | Meaning | Notation | Meaning |
|---|---|---|---|
| | Data sample (vector) | d | Vector dimension (total features) |
| | A behavioral profile | | Number of vectors |
| | Verification data (profile) | | Random matrix |
| | Projected vector | | Differential privacy algorithm |
| | Projected profile | | ML-based classifier |
| | Noisy projected profile | | Verification algorithm |
| | Prediction vector | | ML model for privacy attack |
| | Profile covariance matrix | | GAN generator, discriminator |
| | Feature recoverability fraction | | Minimum eigenvalue |
| | sensitivity of function f | | Total variation distance |

| Data Set | n | | | | |
|---|---|---|---|---|---|
| Voice data | 73 | 200 | 0.5 | 1 | 0.99 |
| Swipe data | 30 | 300 | 1.0 | 0.5 | 0.94 |
| Drawing data | 46 | 300 | 0.7 | 1 | 0.99 |

| Profile Type | Metric | Voice Data | Swipe Data | Drawing Data | Comments |
|---|---|---|---|---|---|
| Model Training | | | | | |
| Plain Profile | FAR | 0.94 | 0.90 | 0.69 | Obtained results consistent with those reported in the original paper. |
| | FRR | 1.90 | 3.07 | 1.07 | |
| RP Profile | FAR | 0.45 | 0.23 | 0.33 | Slightly improved performance due to the use of distinct per profile. |
| | FRR | 0.44 | 1.07 | 1.03 | |
| RP+DP Profile (Laplace Noise) | FAR | 0.13 | 0.18 | 0.65 | Laplace noise slightly reduces FAR but marginally increases overall FRR. |
| | FRR | 2.86 | 2.31 | 2.12 | |
| RP+DP Profile (Gaussian Noise) | FAR | 0.06 | 0.54 | 0.21 | Gaussian noise produced better results due to small relaxation of privacy guarantee. |
| | FRR | 2.63 | 1.87 | 1.63 | |
| Model Update | | | | | |
| RP+DP Profile (Laplace Noise) | FAR | 0.18 | 1.95 | 0.94 | The updated classifier keeps FAR and FRR near the original . |
| | FRR | 3.15 | 2.26 | 1.85 | |
| RP+DP Profile (Gaussian Noise) | FAR | 1.64 | 1.70 | 1.55 | In updated Gaussian noise still performs slightly better than Laplace noise. |
| | FRR | 2.42 | 1.97 | 2.68 | |

| Comparison | Laplace p-value | Gaussian p-value |
|---|---|---|
| Valid vs. Invalid | | |
| Valid vs. Inter-profile | | |
| Invalid vs. Inter-profile | | |

| Reference | Method | Data Type | FAR & FRR | Privacy Property | Attack Resilience |
|---|---|---|---|---|---|
| [31] | RP | Biometrics | 18.19% | Ensure 2 out of 3 | Correlation, Cross match, Known R |
| [32] | RP | Biometrics | Below 4.0% | Ensure 2 out of 3 | Limited attack analysis |
| [33] | LBP + RP | Biometrics | 7.81% | Ensure 2 out of 3 | Limited attack analysis |
| [23] | RP | Behavioral, Biometrics | Below 6.0% | Ensure 2 out of 3 | Minimum-norm solution based |
| [34] | DRPE + FFT | Biometrics | 0.46% | Ensure 1 out of 3 | Brute-force, Correlation, Known key |
| [35] | RP + BPNN | Biometrics | Below 1.0% | Ensure all 3 | Brute-force, Cross-match, Known R |
| RUIP-BA | RP + DP | Behavioral | Below 4.0% | Ensure all 3 | ML-driven, Cross-match, Known and unknown parameters, Formal proofs |

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).