Submitted: 31 March 2026

Posted: 02 April 2026

Abstract

Keywords:

1. Introduction

2. Previous Work

2.1. Shamir’s Trick

2.2. Straus’s Method

2.3. Bos-Coster Method

2.4. Fixed Window Method

2.5. Sliding Window Method

2.6. Pippenger’s Bucket Method

2.7. BGMW Method

3. Pippenger’s Bucket Method for Small MSMs

3.1. The Proposed Variant

4. Experiments

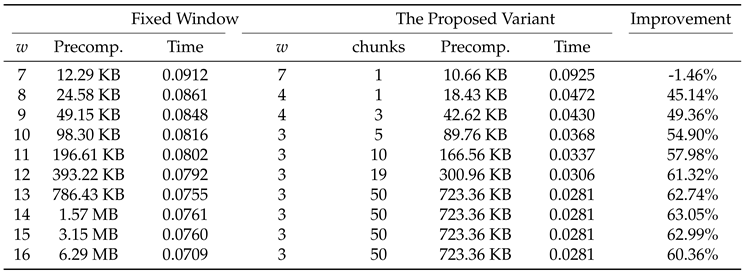

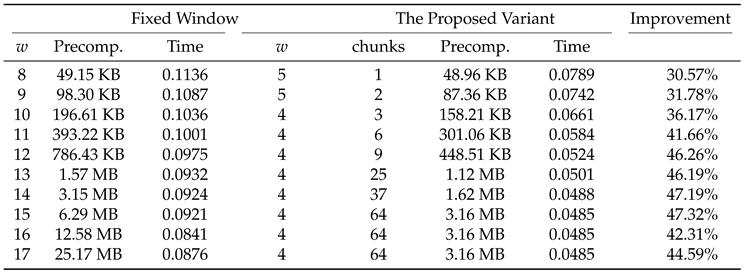

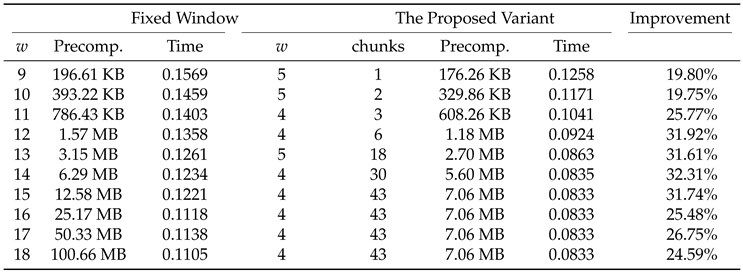

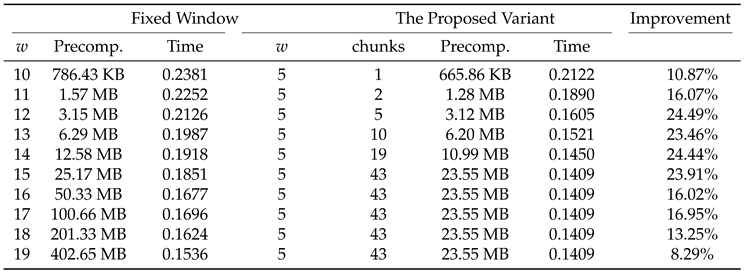

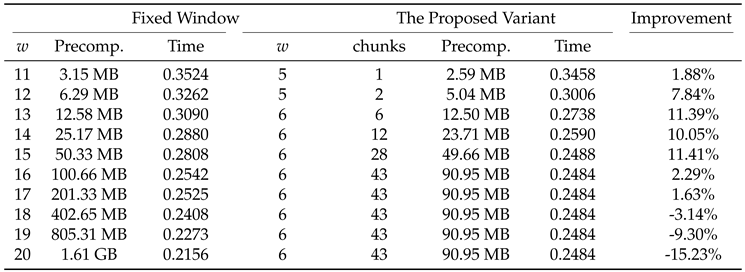

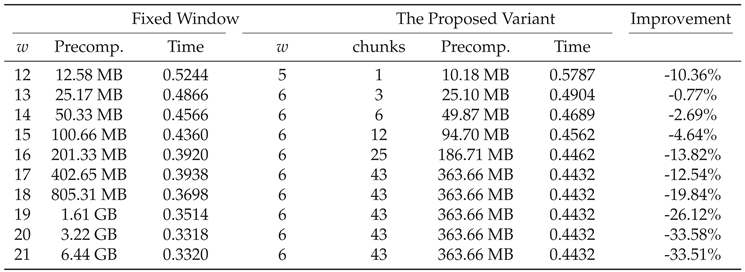

4.1. Experiment Results

5. Conclusions

6. Further Research

References

- Pippenger, N. On the Evaluation of Powers and Related Problems. SIAM Journal on Computing 1976, 9, 230–250.

- Möller, B. Algorithms for multi-exponentiation. In Proceedings of the International Workshop on Selected Areas in Cryptography; Springer, 2001; pp. 165–180.

- Straus, E.G. Addition chains of vectors (problem 5125). American Mathematical Monthly 1964, 70, 16.

- Bos, J.; Coster, M. Addition chain heuristics. In Proceedings of the Conference on the Theory and Application of Cryptology; Springer, 1989; pp. 400–407.

- Hankerson, D.; Menezes, A.; Vanstone, S. Guide to Elliptic Curve Cryptography; Springer, 2004.

- Luo, G.; Fu, S.; Gong, G. Speeding up multi-scalar multiplication over fixed points towards efficient zkSNARKs. IACR Transactions on Cryptographic Hardware and Embedded Systems 2023, 358–380.

- Brickell, E.F.; Gordon, D.M.; McCurley, K.S.; Wilson, D.B. Fast exponentiation with precomputation. In Proceedings of the Workshop on the Theory and Application of Cryptographic Techniques; Springer, 1992; pp. 200–207.

- Supranational. blst: Multilingual BLS12-381 signature library. https://github.com/supranational/blst, 2020–2026. Accessed: 2026-03-06.

- LuoGuiwen. MSM_blst: Multi Scalar Multiplication over the BLS12-381 curve utilizing blst. https://github.com/LuoGuiwen/MSM_blst, 2023–2026. Accessed: 2026-03-06.

- Grandine. rust-kzg: A Multi-Backend KZG and MSM Framework. https://github.com/grandinetech/rust-kzg, 2020–2025. Accessed: 2026.

- arkworks contributors. arkworks-rs: Rust ecosystem for zkSNARK programming. https://github.com/arkworks-rs, 2022–2026. Accessed: 2026-03-06.

- mratsim. Constantine: High-performance cryptography stack for verifiable computation and blockchain protocols. https://github.com/mratsim/constantine, 2024–2026. Accessed: 2026-03-06.

- Shigeo Mitsunari (herumi). MCL: A portable and fast pairing-based cryptography library. https://github.com/herumi/mcl, 2015–2026. Accessed: 2026-03-06.

- zkcrypto contributors. zkcrypto: Rust ecosystem for zero-knowledge cryptography. https://github.com/zkcrypto, 2018–2026. Accessed: 2026-03-06.

- Dziembowski, S.; Faust, S.; Kędzior, P.; Mielniczuk, M.; Mohanty, S.K.; Pietrzak, K. Beholder Signatures. Cryptology ePrint Archive; 2025.

- Boneh, D.; et al. BLS Signatures, 2023. IETF CFRG draft.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.