Submitted: 30 March 2026

Posted: 01 April 2026


Abstract

Keywords:

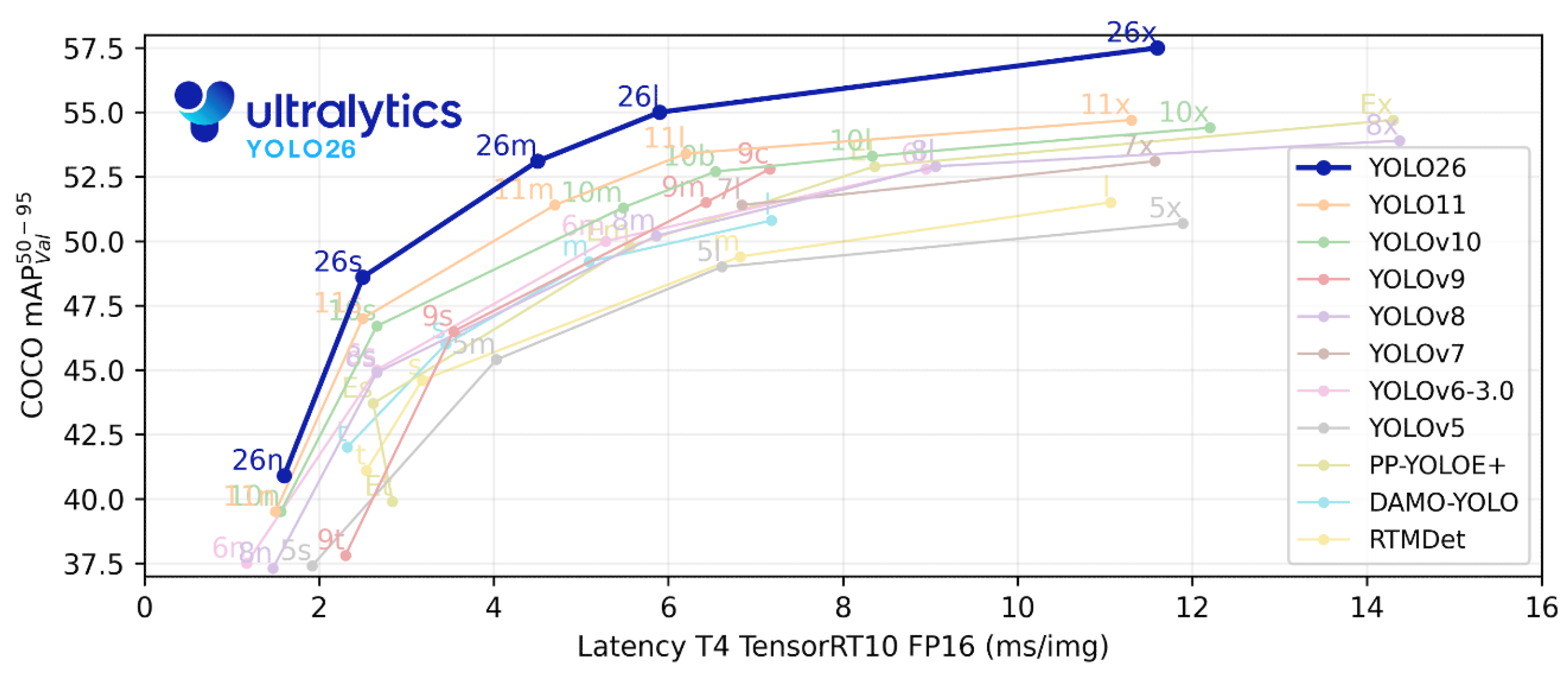

1. Introduction

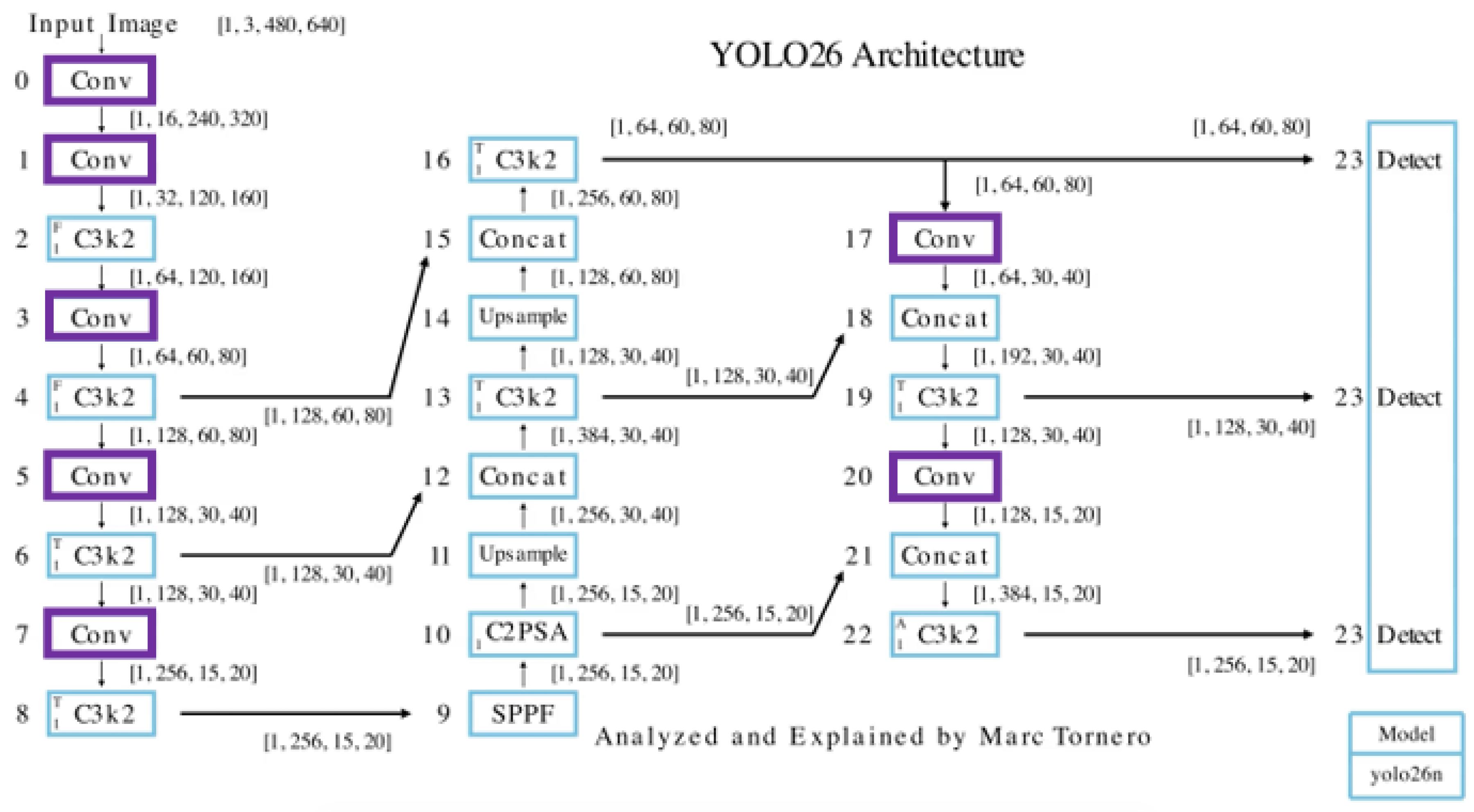

- Block-level architectural analysis: A module-by-module dissection of YOLO26n detailing operations, tensor dimensions, and transformations across the backbone and neck.

- Functional interpretation: Explanations of what each component does and why it is included.

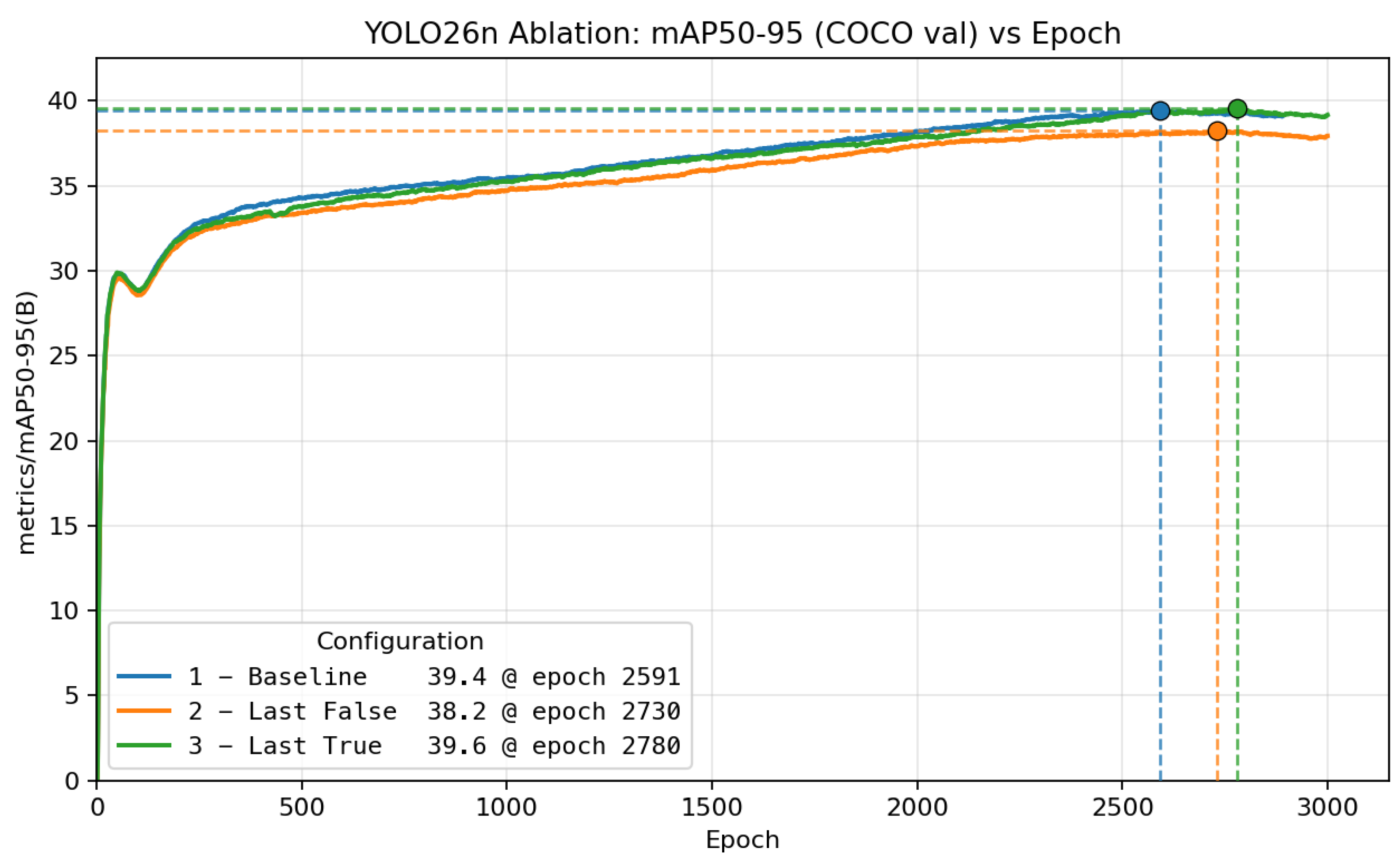

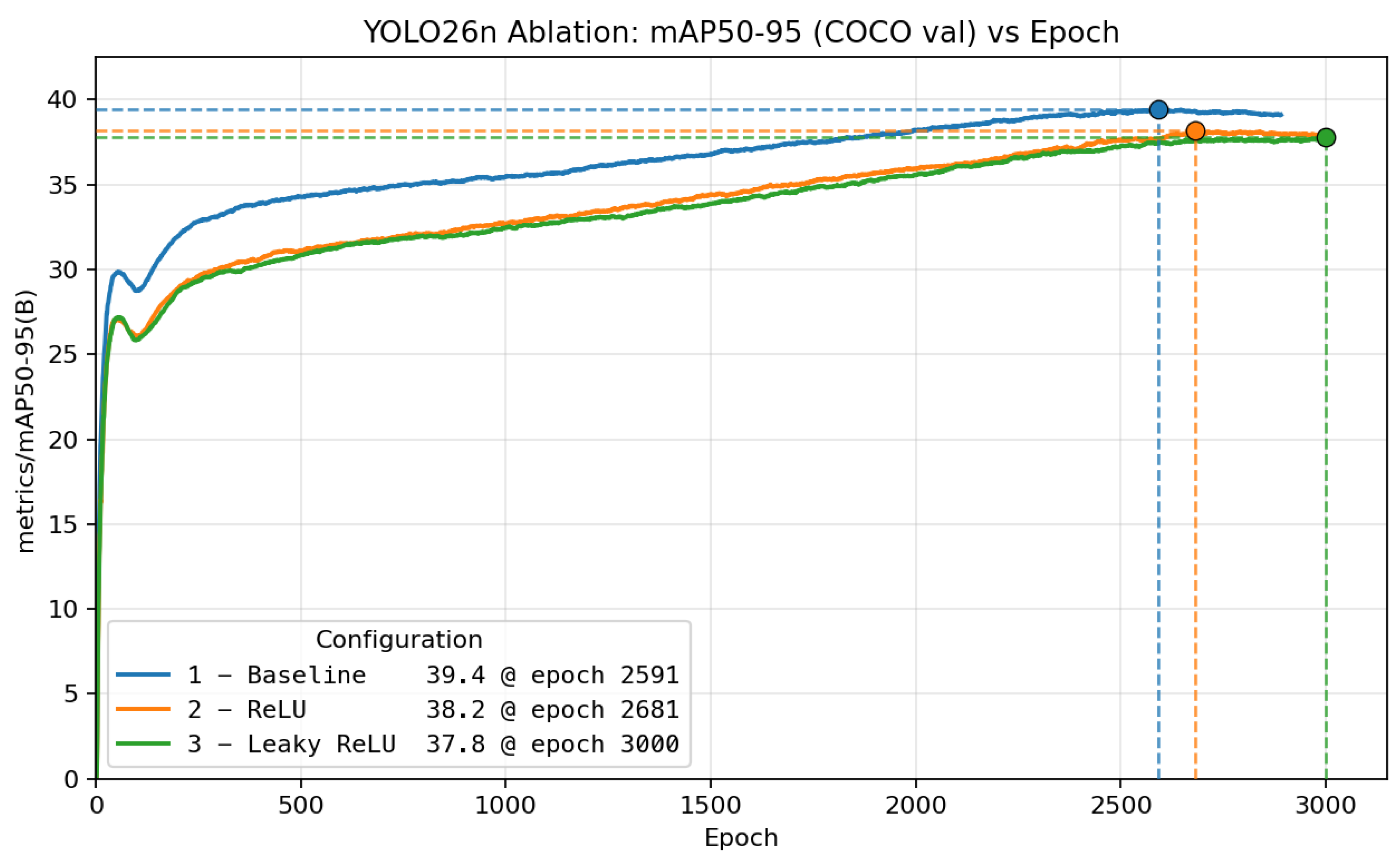

- Controlled ablation suite: A set of targeted ablations evaluated on MS COCO train2017/val2017 that quantifies the impact of individual design choices on mAP50–95 and latency under a fixed compute and training recipe.

- Reproducibility protocol: A fully specified configuration and benchmarking methodology to support replication and future extension.

2. Related Work

3. Approach

3.1. Architecture Analysis Methodology (Objective → Flow)

- Objective. We describe the goal of the block in the context of detection.

- Flow. We document the internal sequence of operations and how information is routed through the block.

3.2. Experimental Setup and Controlled Ablation Protocol

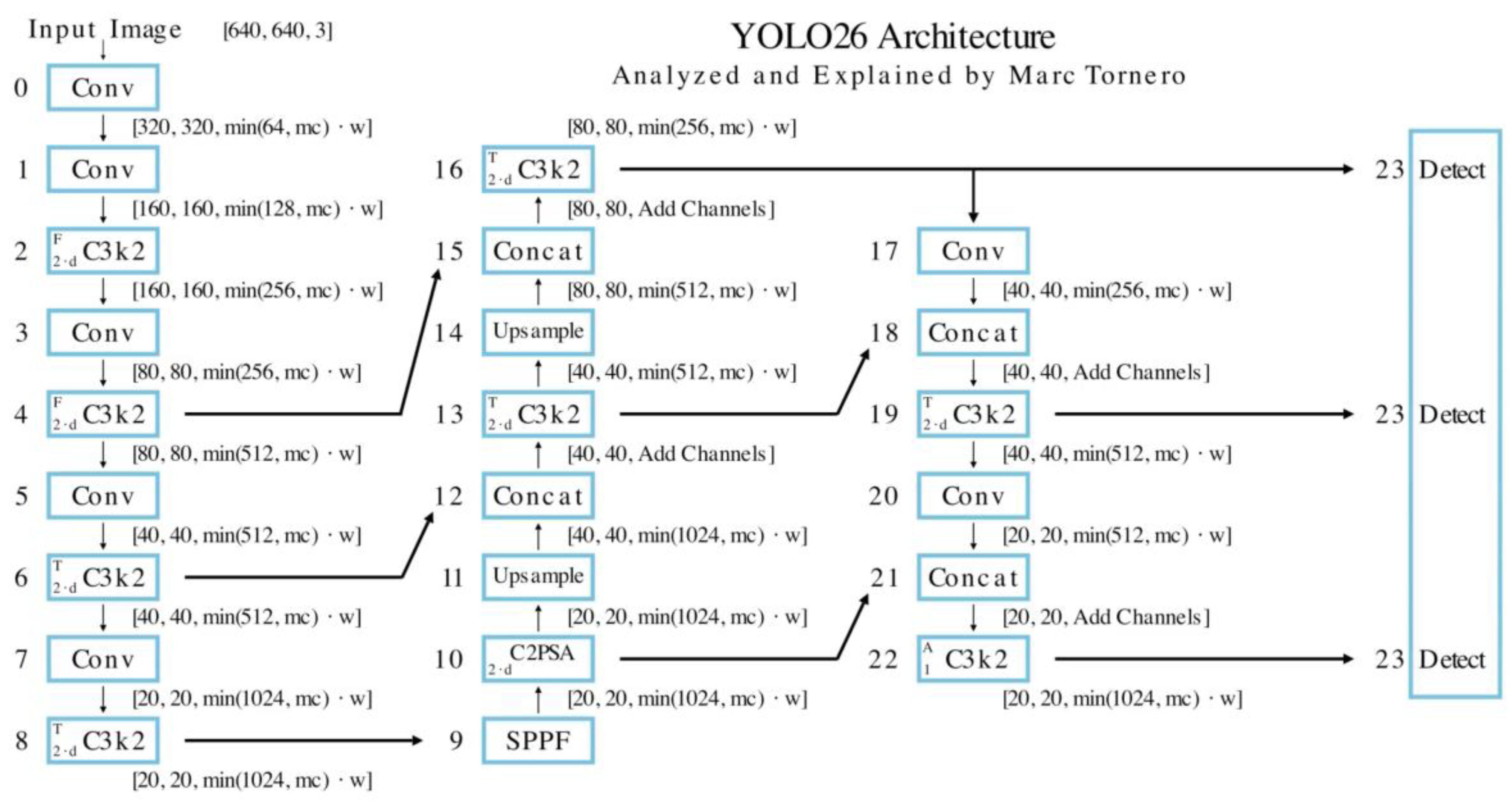

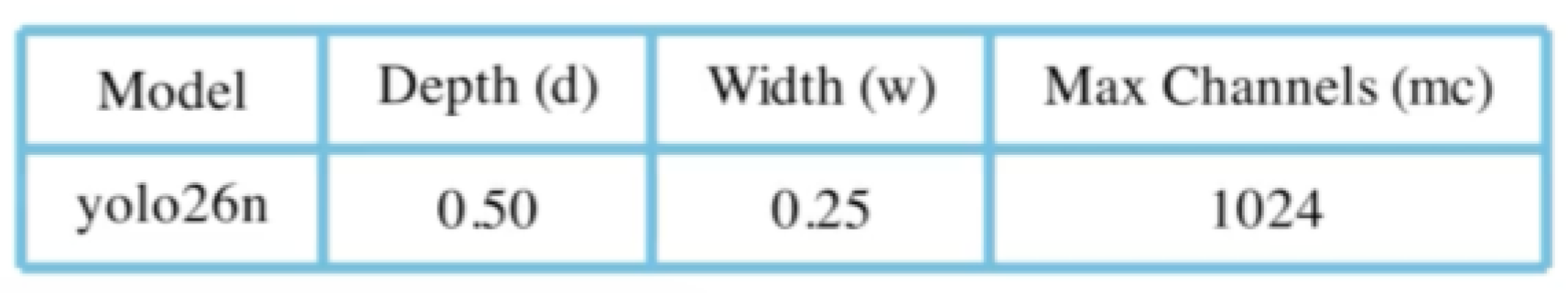

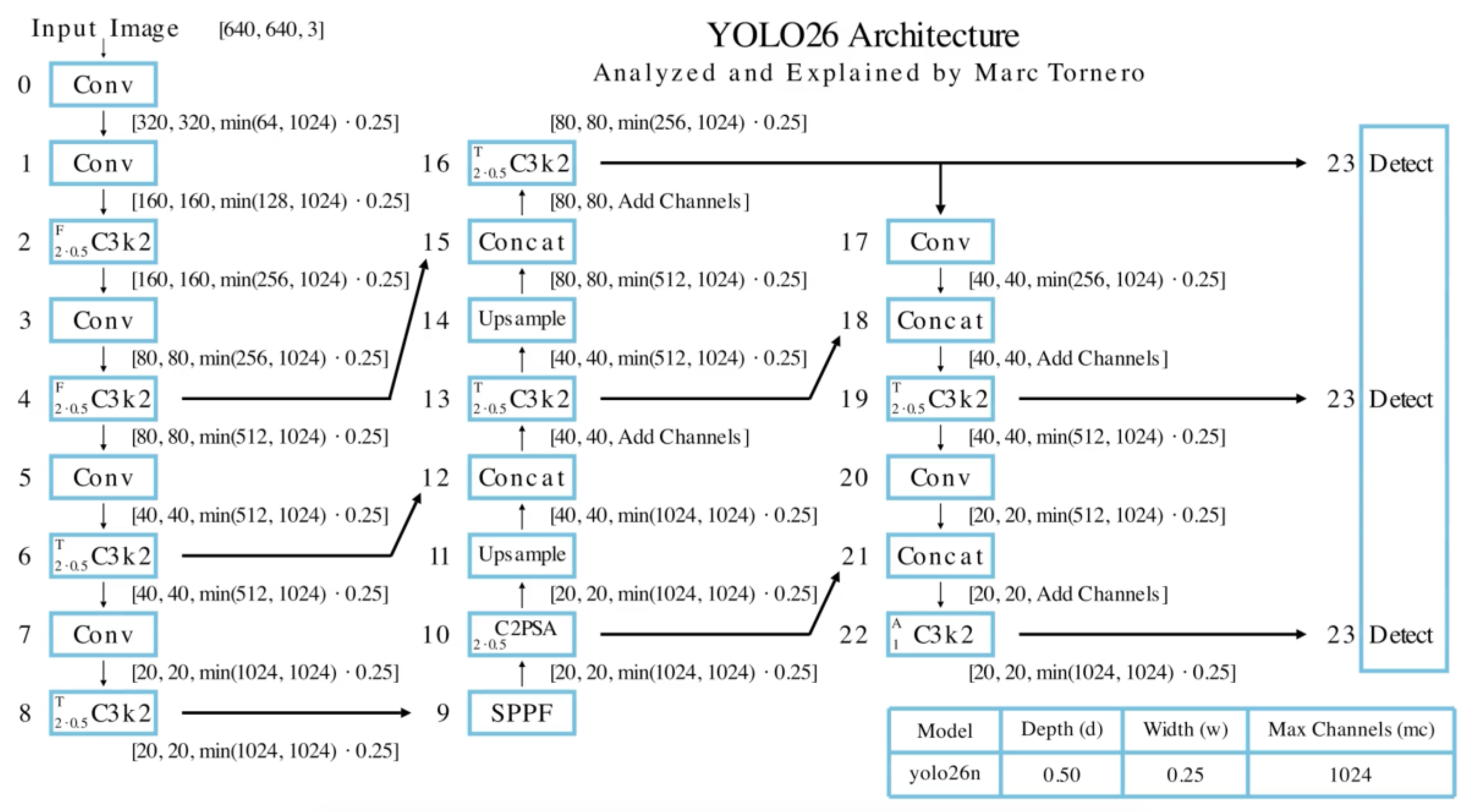

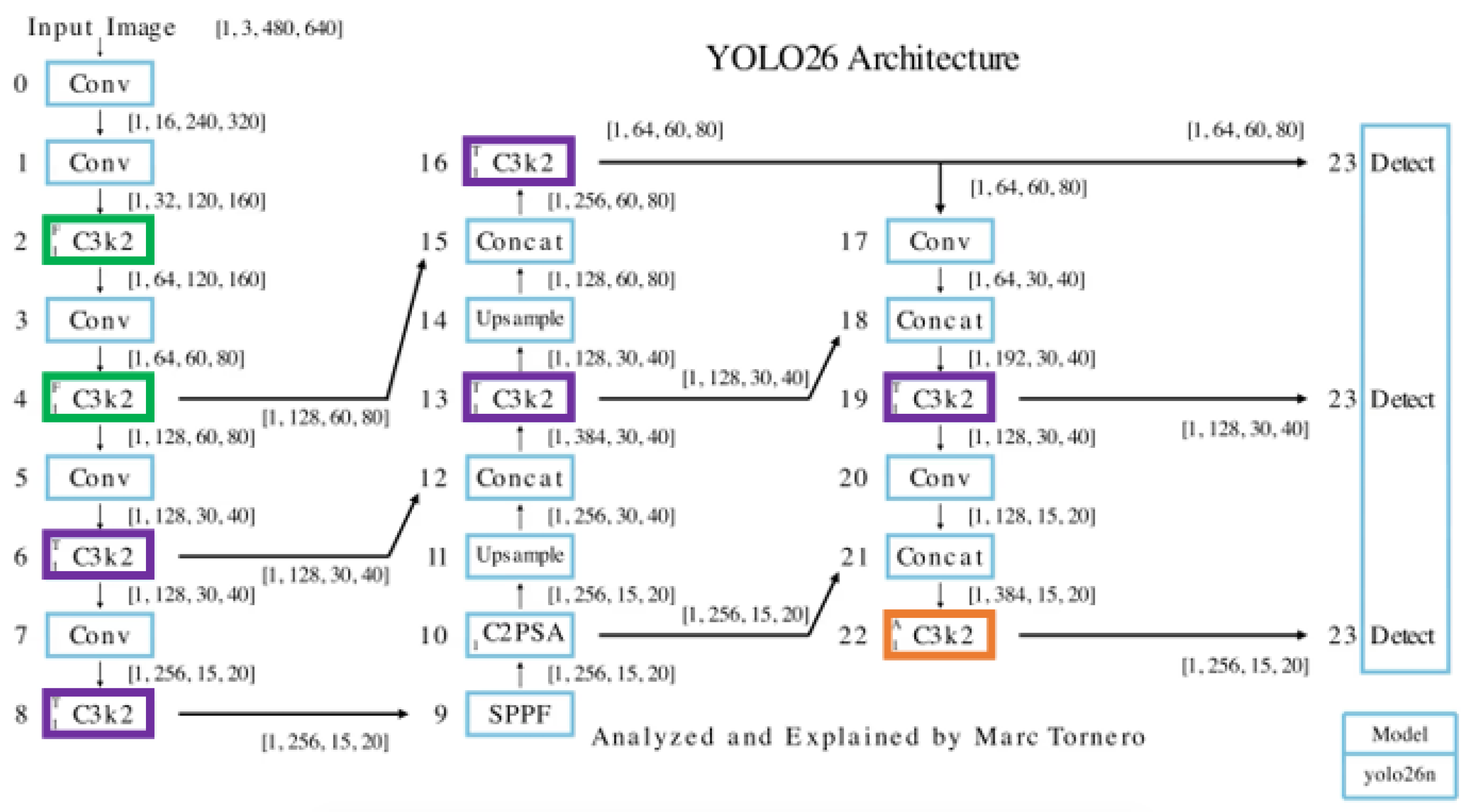

4. Network Architecture Overview

5. Convolutional Block (Conv)

5.1. Objective

- Feature extraction: By applying filters (kernels) to the input, convolutional layers learn spatial hierarchies of patterns, such as edges, textures, shapes, and objects.

- Hierarchical learning: Stacking multiple layers allows for capturing increasingly abstract and complex features, from low-level edges in early layers to high-level representations in deeper layers.

- Progressive downsampling: These layers reduce the spatial dimensions of the input image (e.g., P1 → P2 → P3) while increasing channel depth. This process enables efficient feature compression, reducing computational cost while preserving critical information. By maintaining spatial relationships, the network retains the structural arrangement of the data and preserves meaningful features.

- Parameter sharing: Convolutional filters are reused across the input, reducing the number of learnable parameters and making the response translation-equivariant (a pattern is detected wherever it appears in the image). Common variants include standard convolution (3×3), pointwise convolution (1×1), and depthwise convolution (DWConv) [28].
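The effect of parameter sharing and of the three variants named above can be made concrete by counting weights. The following is a minimal sketch; the channel counts (64 and 128) and the helper `conv_params` are illustrative assumptions and are not taken from YOLO26n.

```python
def conv_params(c_in: int, c_out: int, k: int, groups: int = 1) -> int:
    """Learnable weights of a bias-free 2D convolution with a k x k kernel."""
    if c_in % groups or c_out % groups:
        raise ValueError("channel counts must be divisible by groups")
    return c_out * (c_in // groups) * k * k


# Illustrative channel counts, not taken from YOLO26n.
C_IN, C_OUT = 64, 128

standard = conv_params(C_IN, C_OUT, k=3)               # 3x3 standard convolution
pointwise = conv_params(C_IN, C_OUT, k=1)              # 1x1 pointwise convolution
depthwise = conv_params(C_IN, C_IN, k=3, groups=C_IN)  # 3x3 depthwise: one filter per channel

print(f"standard 3x3 : {standard}")
print(f"pointwise 1x1: {pointwise}")
print(f"depthwise 3x3: {depthwise}")
```

Note that none of these counts depends on the height or width of the input, which is the practical consequence of reusing the same filter at every spatial position.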

5.2. Flow

- Convolution: Applies a learned kernel (technically implemented as cross-correlation) to extract local spatial features.

- Batch normalization (BN) [29]: Normalizes intermediate activations to stabilize training and accelerate convergence.

- Activation: Introduces non-linearity, enabling the network to model complex patterns. YOLO26 uses SiLU (also known as Swish) [30,31] as the default activation, which provides a smooth, non-linear transformation. With sufficient SiLU neurons, the network can approximate complex functions with high fidelity.
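The Conv → BN → SiLU sequence can be sketched on plain numbers. This is an illustrative sketch only: the pre-activation values, the 480 × 640 input size, and the kernel/stride/padding defaults (3, 2, 1) are assumptions, and the learnable scale and shift of batch normalization are left out.

```python
import math


def conv_out_size(size: int, kernel: int = 3, stride: int = 2, padding: int = 1) -> int:
    """Spatial size after a convolution (standard floor formula)."""
    return (size + 2 * padding - kernel) // stride + 1


def batch_norm(values, eps: float = 1e-5):
    """Normalize activations to zero mean and unit variance.

    The learnable scale and shift of a real BN layer are omitted here.
    """
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return [(v - mean) / math.sqrt(var + eps) for v in values]


def silu(x: float) -> float:
    """SiLU / Swish: x * sigmoid(x)."""
    return x / (1.0 + math.exp(-x))


# Hypothetical pre-activation values produced by one convolution filter.
conv_outputs = [-2.0, -0.5, 0.0, 1.5, 3.0]
activated = [silu(v) for v in batch_norm(conv_outputs)]
print([round(a, 4) for a in activated])

# Five stride-2 convolutions applied to an assumed 480 x 640 input.
h, w = 480, 640
for _ in range(5):
    h, w = conv_out_size(h), conv_out_size(w)
print(h, w)
```

Unlike ReLU, SiLU lets small negative values through in attenuated form, which is the smoothness property referred to above.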

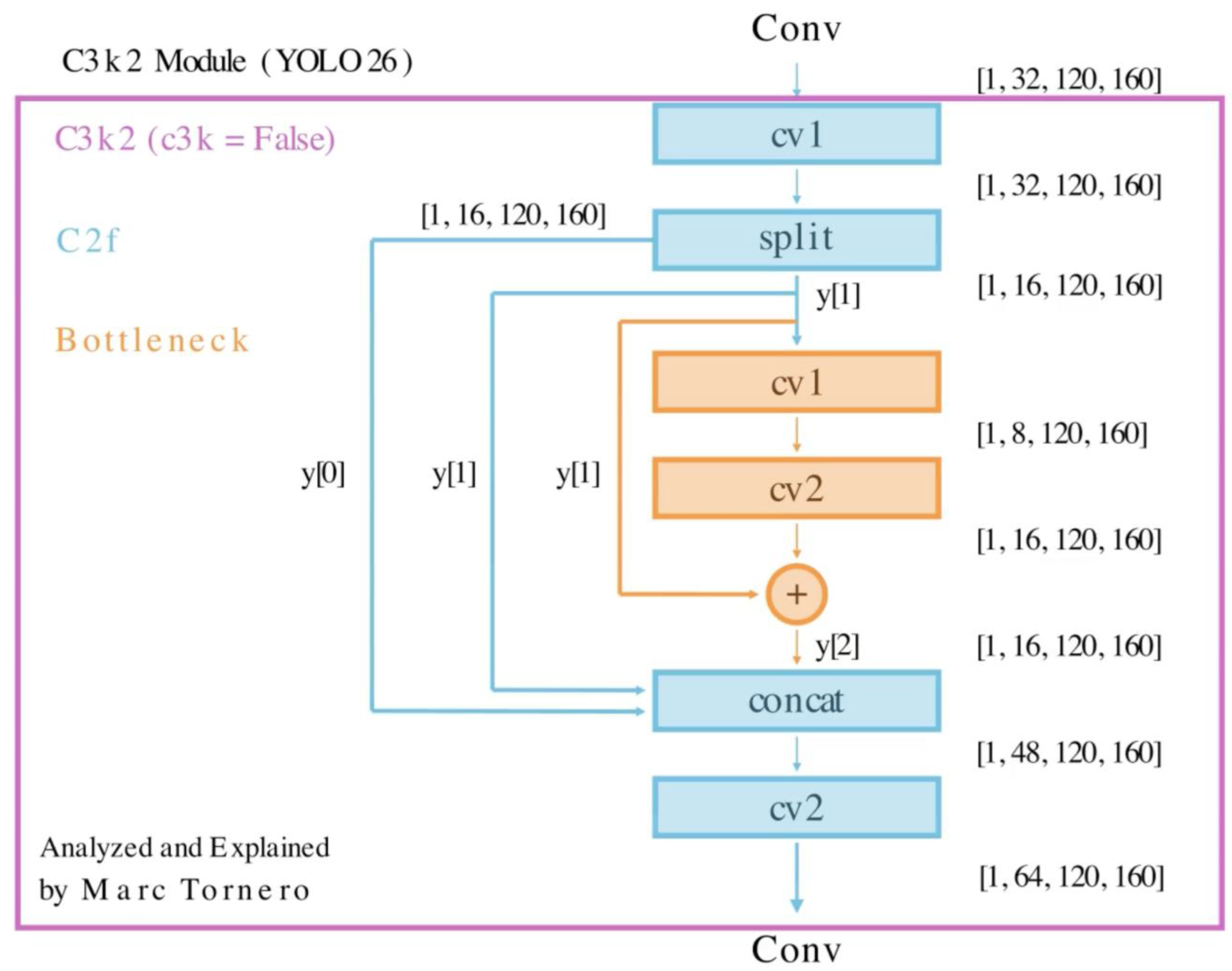

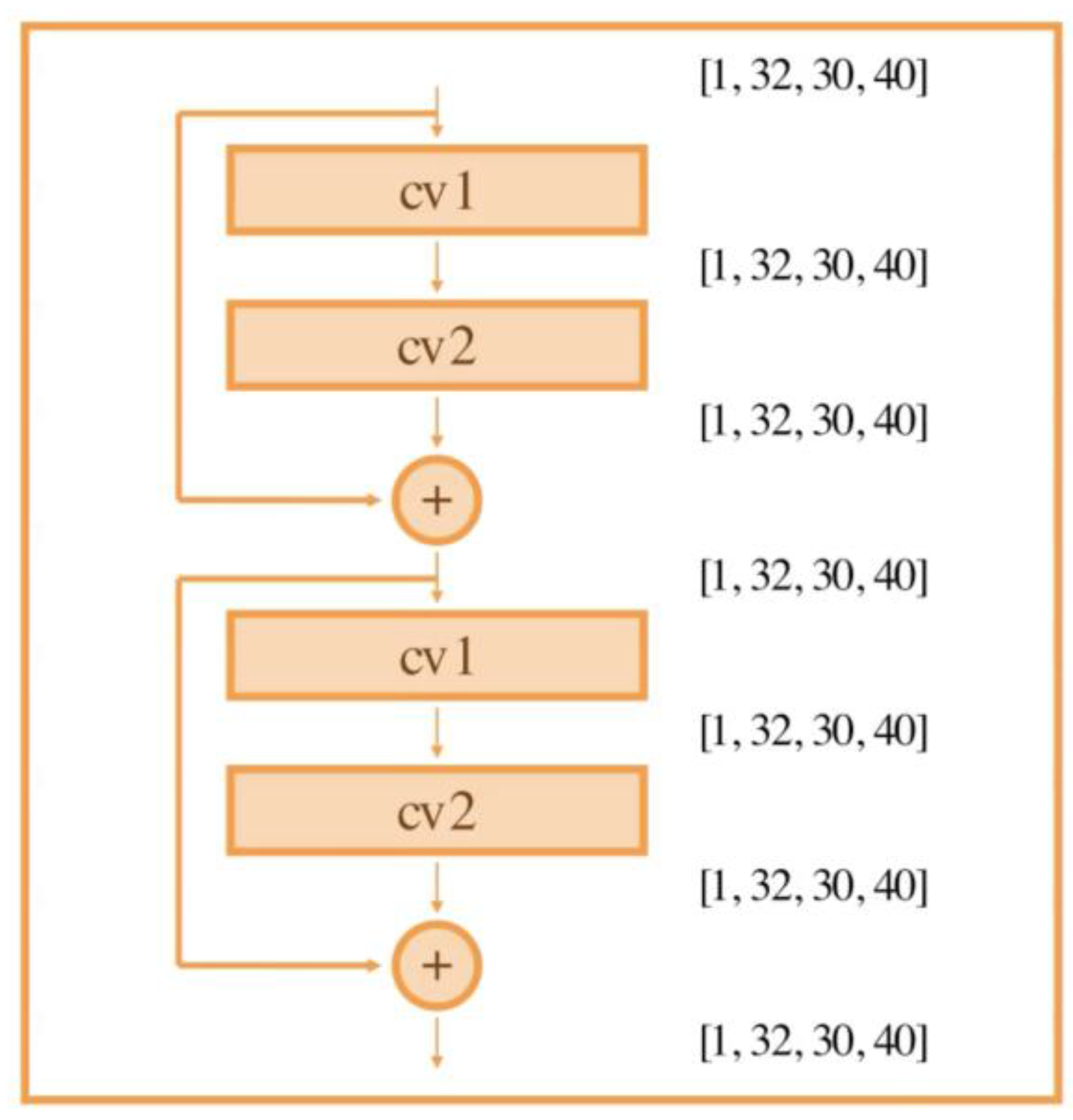

6. Feature Refinement Module (C3k2 with Argument False)

6.1. Objective

- Refinement path: one part passes through a compact bottleneck with residual connections [33] to extract refined features.

- Shortcut path: the other bypasses the bottleneck entirely.

- The two paths are then recombined through concatenation, followed by a projection into a richer, more discriminative feature space. This design improves representational capacity while preserving computational efficiency. Within the C3k2 (F) module, the bottleneck block contributes in several key ways:

- Compression phase (Squeezing features): The first convolution in the bottleneck block reduces the input channels. This dimensionality reduction focuses on distilling the most critical information while discarding less important features.

- Processing in the bottleneck: The reduced feature set undergoes transformations (convolutions and activations) to refine patterns efficiently. This step emphasizes core patterns while conserving computational resources.

- Expansion phase (Rebuilding features): The final convolution expands the channels back to ensure the network retains capacity for complex pattern modeling. This combines the critical features from compression with the structural richness needed for downstream tasks.

- Promoting a compact and informative representation: By alternating between high and low-dimensional spaces, the Bottleneck prioritizes relevant features, retaining only the most useful information.

- Scalability: In YOLO26, C3k2(F) scales its internal depth with model size: nano/small/medium use one Bottleneck, while large/extra-large use two Bottlenecks in series between split and concat.

6.2. Flow

- Initial convolution (cv1): A 1×1 convolution is applied to the input tensor, preserving spatial resolution while transforming features and mixing channels.

- Split: The output of cv1 is divided along the channel dimension into two groups (32 channels → 16 + 16). One half (y[0]) is preserved for an identity/skip connection, while the other half (y[1]) is passed through the bottleneck for transformation.

- Bottleneck: The bottleneck processes y[1] via two consecutive 3×3 convolutions. The first reduces channels (16 → 8), compressing information and enabling learning in a reduced space. The second restores channels to the original number (8 → 16), expanding the feature representation while incorporating local spatial context. This encourages the network to capture structured patterns (curves, corners, textures) that are critical for object boundaries and class distinctions.

- Concatenation: The outputs from the split step and the bottleneck result are concatenated along the channel axis (y = [y[0], y[1], bottleneck’s output]). This operation merges raw and transformed features, creating a multi-view representation that enhances the network’s ability to detect diverse patterns.

- Final convolution (cv2): A 1×1 convolution is applied to the concatenated tensor to learn inter-channel relationships and project the features to the desired number of channels (48 → 64), enriching the feature space.
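The channel bookkeeping of the flow above (32 → 16 + 16, 16 → 8 → 16, concatenation to 48, projection to 64) can be traced with a few lines of arithmetic. This is a sketch under stated assumptions: the function name and the `n_bottlenecks` parameter are hypothetical, and for more than one bottleneck it assumes that every bottleneck output is kept for the concatenation.

```python
def c3k2_false_channels(split_in: int = 32, c_out: int = 64, n_bottlenecks: int = 1):
    """Trace channel counts through the C3k2 (c3k=False) flow described above."""
    half = split_in // 2
    branches = [half, half]                      # y[0] (identity) and y[1] (processed)
    steps = [f"split       : {split_in} -> {half} + {half}"]
    for i in range(n_bottlenecks):
        squeezed = half // 2                     # first 3x3 conv halves the channels
        steps.append(f"bottleneck {i}: {half} -> {squeezed} -> {half}")
        branches.append(half)                    # assumption: every bottleneck output is kept
    concat = sum(branches)
    steps.append(f"concat      : {' + '.join(map(str, branches))} = {concat}")
    steps.append(f"cv2 1x1     : {concat} -> {c_out}")
    return steps, concat


steps, concat_width = c3k2_false_channels()
for line in steps:
    print(line)
```

With the default arguments the concatenated width is 16 + 16 + 16 = 48, matching the 48 → 64 projection performed by cv2.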

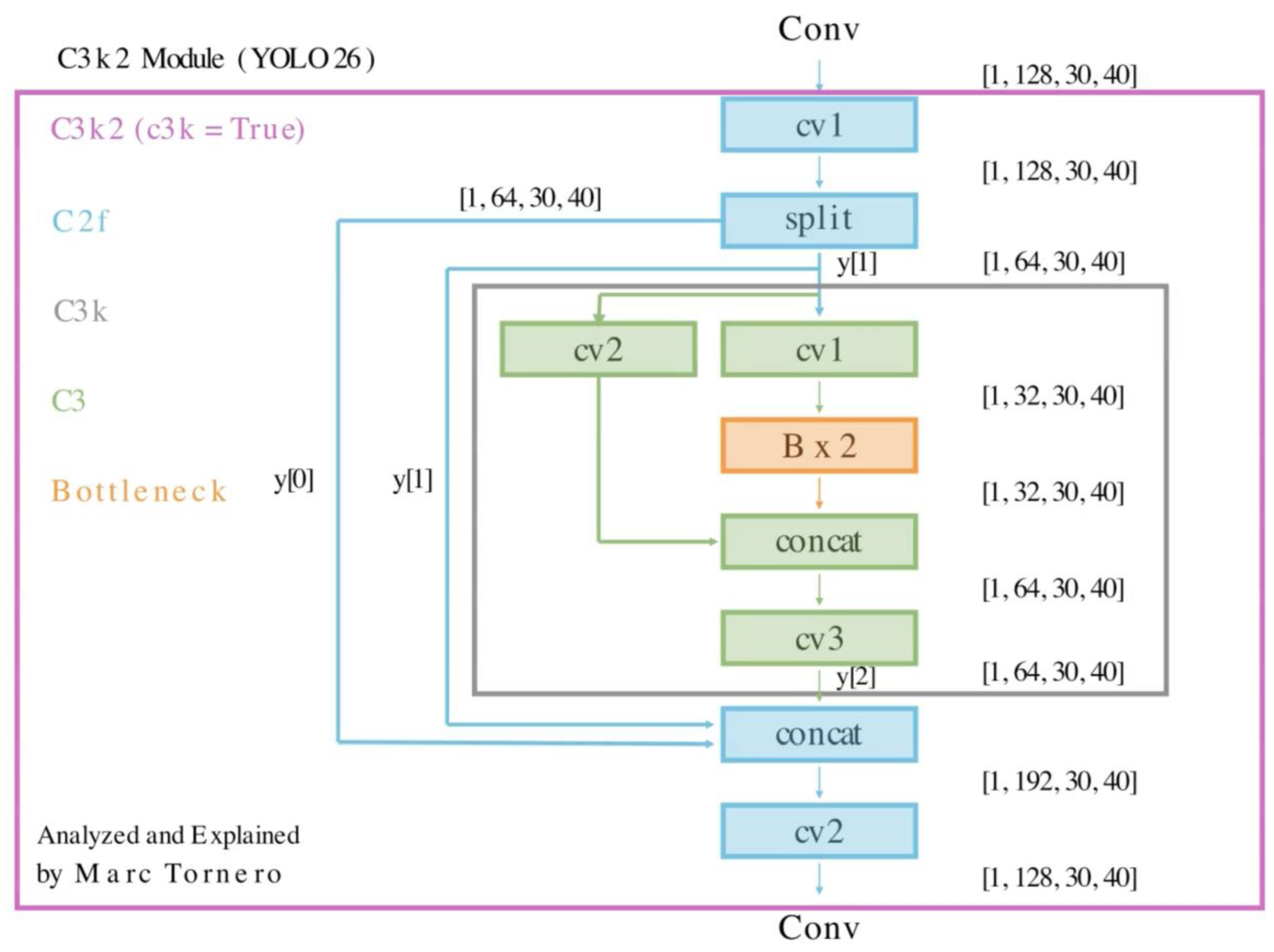

7. Feature Refinement Module (C3k2 with Argument True)

7.1. Objective

- Hierarchical compression (squeezing features): The initial convolutions reduce the input channels and partition features into smaller, more manageable subsets. This hierarchical compression retains the most critical information, optimizing for both efficiency and diversity of feature representation.

- Multi-stage processing within C3k: Each subset undergoes further refinement through a series of nested Bottleneck blocks. These blocks sequentially transform the compressed features to emphasize core patterns while discarding redundancies.

- Final expansion and aggregation: The outputs of the bottleneck blocks are recombined and expanded through concatenation and the final convolution. This phase balances dimensionality and feature richness, ensuring the network is prepared for subsequent stages.

- Promoting feature diversity and refinement: By incorporating multiple convolutional paths and iterative processing, the C3k block enhances the diversity of extracted patterns. This design ensures that both fine-grained and broader structural features are effectively captured.

- Scalability: In YOLO26, C3k2(T) scales its internal depth with model size: nano/small/medium use one C3k, while large/extra-large use two C3k blocks in series between split and concat (each C3k contains two Bottleneck blocks).

7.2. Flow

- Initial convolution (cv1): A 1×1 convolution projects the input tensor for partitioned processing.

- Partitioning: The tensor is split along the channel dimension into two partitions: an identity path and a processed path.

- C3k path (c3k=True): The processed partition is passed through a C3k block with an internal double-Bottleneck design that uses 3×3 kernels to enhance spatial feature extraction and refinement.

- Concatenation: The two split paths (y[0] and y[1]) and the C3k output are merged along the channel dimension.

- Final convolution (cv2): A 1×1 convolution projects the concatenated tensor to the desired number of channels for downstream modules.

8. C3k2 Module Variants: Architecture Placement and Design Trade-Offs

- Early backbone stages (Stage 2 and 4 in green): c3k=False is used since feature maps are large (high spatial resolution). Lightweight bottlenecks capture low-level features such as edges and textures while keeping computation low.

- Deeper backbone stages (Stage 6 and 8 in purple): c3k=True is employed as feature maps become smaller (lower spatial resolution, higher channel depth). Heavier processing enables deeper, nonlinear transformations that capture object parts and semantic patterns.

- Neck layers (Fusion Stage 13 and Final Stages 16 (P3) and 19 (P4)): The fusion stage and the final P3 and P4 stages use c3k=True to efficiently integrate multi-scale features.
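The cost difference between the two variants can be approximated by counting the 3×3 convolutions on the processed path. This rough sketch assumes two 3×3 convolutions per Bottleneck and two Bottlenecks per C3k, as described in the preceding sections; it ignores the 1×1 convolutions and any additional layers inside C3k, and the function name is hypothetical.

```python
CONVS_PER_BOTTLENECK = 2   # two 3x3 convolutions per Bottleneck (Section 6.2)
BOTTLENECKS_PER_C3K = 2    # each C3k contains two Bottlenecks (Section 7.1)


def processed_path_convs(c3k: bool, n_blocks: int = 1) -> int:
    """3x3 convolutions on the processed path of one C3k2 module."""
    per_block = CONVS_PER_BOTTLENECK * (BOTTLENECKS_PER_C3K if c3k else 1)
    return n_blocks * per_block


for c3k in (False, True):
    for n_blocks, sizes in ((1, "nano/small/medium"), (2, "large/extra-large")):
        count = processed_path_convs(c3k, n_blocks)
        print(f"c3k={c3k!s:5} {sizes:18} -> {count} 3x3 convs")
```

Under these assumptions the c3k=True variant doubles the number of 3×3 convolutions per module, which is consistent with placing it where feature maps are spatially small.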

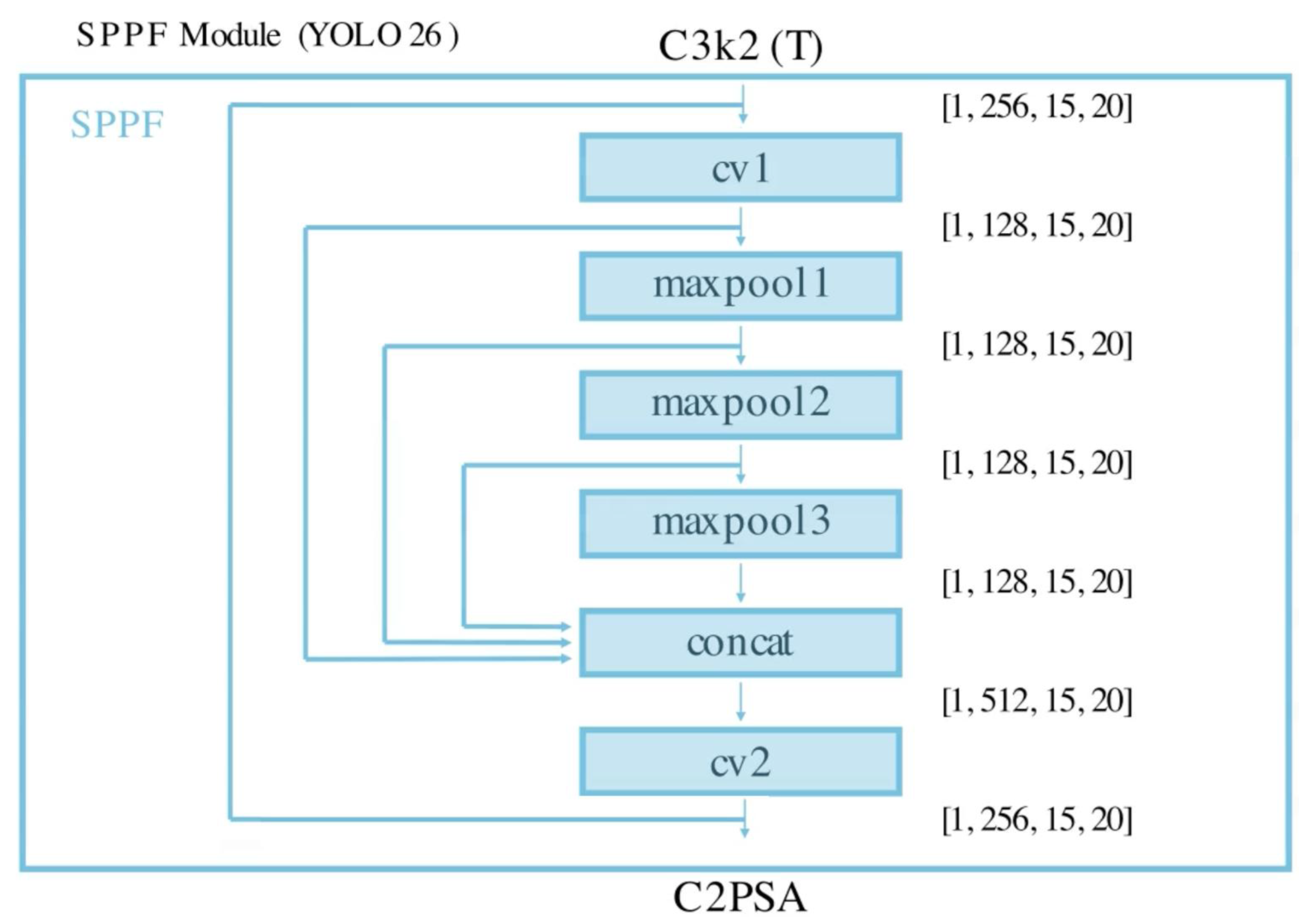

9. SPPF Module (Spatial Pyramid Pooling Fast)

9.1. Objective

- Multi-scale feature aggregation: Applies three max-pooling operations (kernel size=5) to capture spatial information at multiple scales, combining fine details and broad context.

- Feature fusion: Concatenates outputs from pooling operations to create a rich, multi-scale feature map, enhancing the network’s ability to detect objects of varying sizes.

- Efficient context aggregation: The pooling layers widen the receptive field while preserving spatial relationships, yielding a compact and meaningful feature representation.

- Optimized design: Streamlines traditional SPP, reducing computations while maintaining scalability for real-time applications.

9.2. Flow

- Initial convolution (cv1): A 1×1 convolution reduces the number of channels to prepare the input for multi-scale pooling.

- Multi-scale max-pooling: Three successive max-pooling operations (kernel size = 5), each applied to the output of the previous one, capture features at progressively larger receptive fields while preserving spatial relationships.

- Concatenation: Outputs of all pooling operations and the initial convolution are concatenated along the channel dimension, producing a multi-scale feature map.

- Final convolution (cv2): A 1×1 convolution projects the concatenated feature map back to the desired number of channels, creating a compact, enriched representation for downstream processing.
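One common way to realize the pooling stage, assumed here, is to chain the kernel-5 pools with stride 1, which reproduces the receptive fields of pools with kernels 5, 9, and 13. The sketch below checks this on a 1D signal (max-pooling is separable, so the 2D case behaves the same way per axis); the signal values and `c_hidden` are illustrative.

```python
def max_pool_1d(xs, k):
    """Stride-1 max pooling with 'same' padding (windows are clipped at the borders)."""
    r = k // 2
    n = len(xs)
    return [max(xs[max(0, i - r): min(n, i + r + 1)]) for i in range(n)]


signal = [3, 1, 4, 1, 5, 9, 2, 6, 5, 3, 5, 8, 9, 7, 9, 3]

p1 = max_pool_1d(signal, 5)   # receptive field 5
p2 = max_pool_1d(p1, 5)       # receptive field 9
p3 = max_pool_1d(p2, 5)       # receptive field 13

print(p2 == max_pool_1d(signal, 9))
print(p3 == max_pool_1d(signal, 13))

# Concatenating the cv1 output with the three pooled maps multiplies the
# channel count by four before cv2 projects it back.
c_hidden = 128                # illustrative value
print(4 * c_hidden)
```

Because stride is 1 and the borders are padded, every pooled map keeps the length of the input, so the four tensors can be concatenated along the channel axis without resizing.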

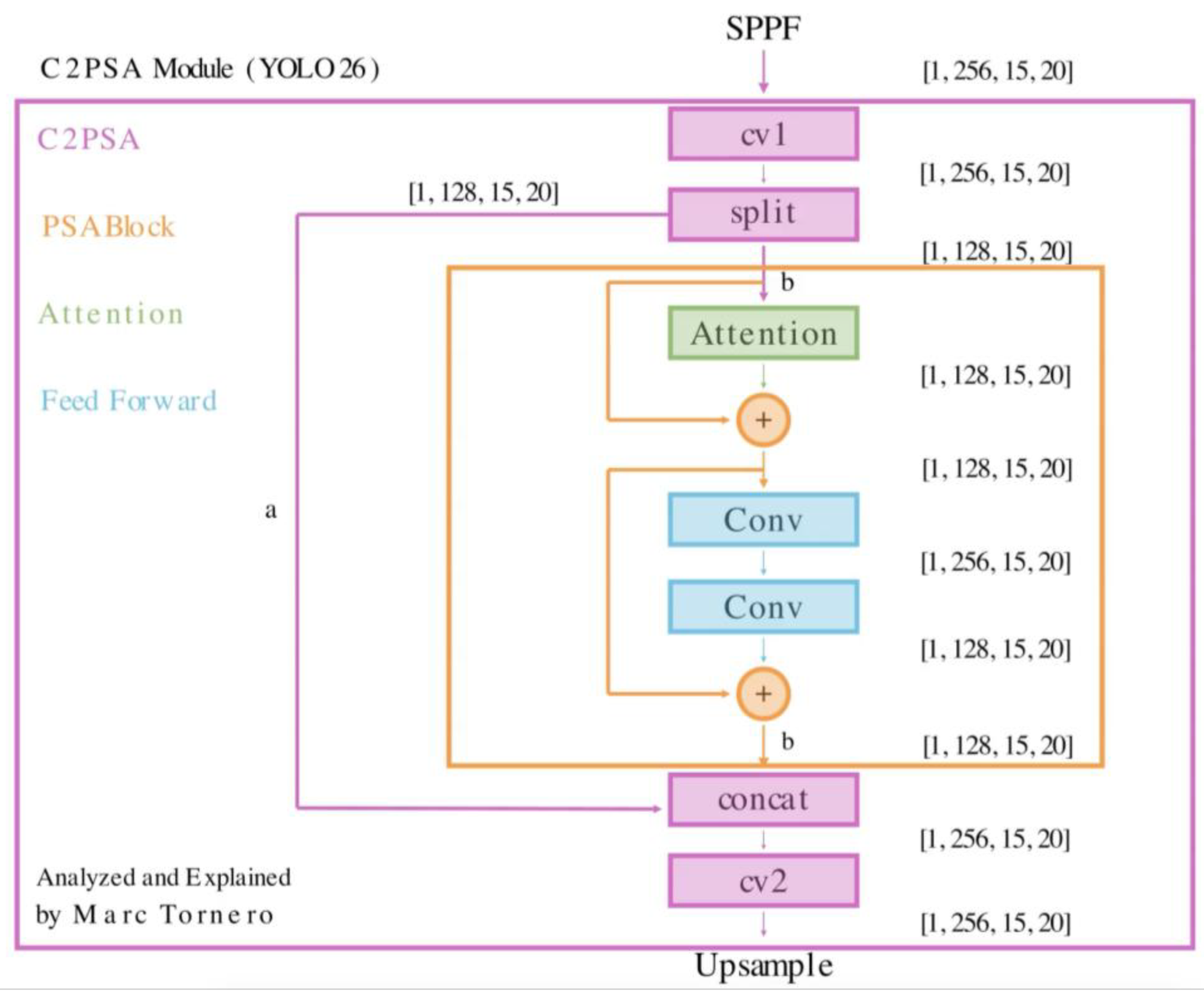

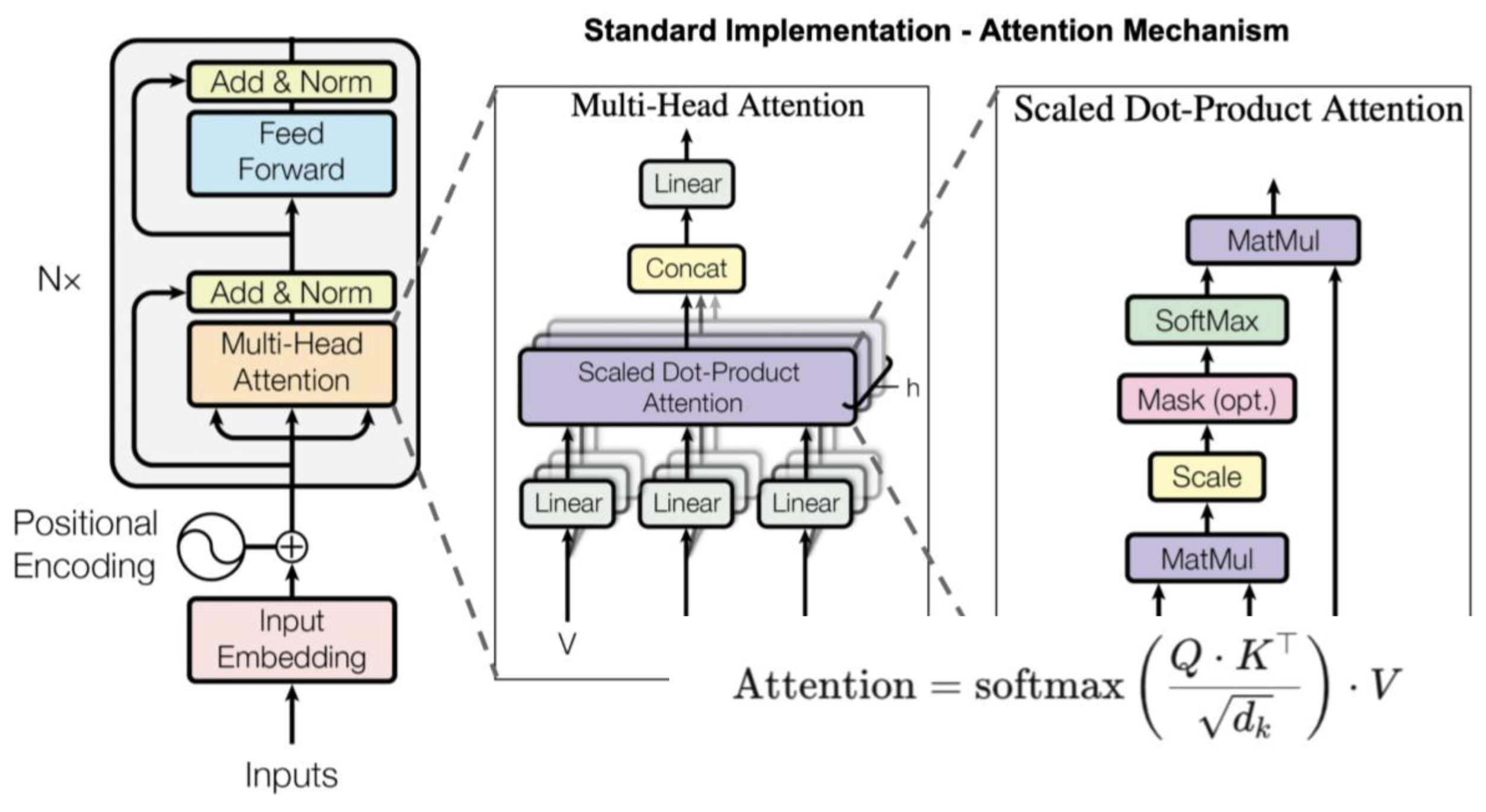

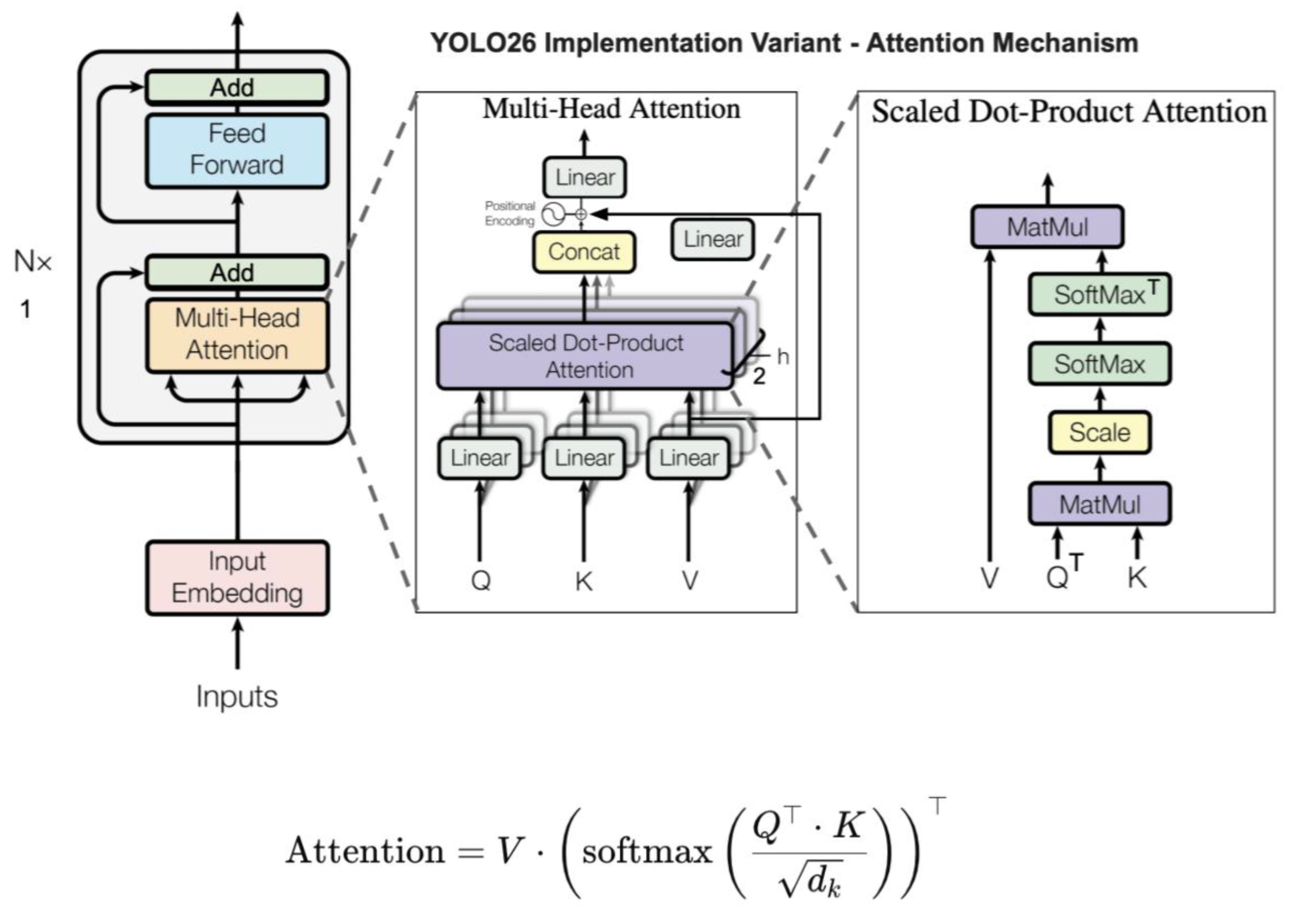

10. C2PSA Module (Cross Stage Partial with Position-Sensitive Attention)

10.1. Objective

- Dual-path processing: Input features are split into two pathways. One path undergoes direct convolutional processing to preserve local details, while the other applies attention-based transformations via PSABlock modules to capture long-range dependencies.

- Attention mechanisms: Each PSABlock leverages multi-head self-attention to model relationships between distant spatial locations, making the network more effective at handling complex and distributed object patterns.

- Spatial awareness: Position-sensitive encodings are incorporated to preserve relative spatial arrangements, strengthening localization accuracy.

- Feature refinement: Lightweight feed-forward layers within the PSABlock refine attended features, ensuring efficient propagation and richer semantic context.

- Feature fusion: Outputs from convolutional and attention pathways are merged, resulting in more expressive feature maps that balance local detail with global context.

- Scalability: Unlike YOLOv10 [10], where the PSA module was restricted to a single Attention + Feed Forward structure, YOLO26’s C2PSA allows multiple PSABlocks to be stacked. Smaller versions (nano, small, medium) contain one PSABlock, while larger models (large, extra-large) contain two in sequence.
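The attention step inside a PSABlock can be illustrated with single-head scaled dot-product self-attention over a handful of spatial positions. This is a simplified sketch, not the module's implementation: learned query/key/value projections, multiple heads, and positional encodings are omitted, and the token values are made up.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(q, k, v):
    """Scaled dot-product attention: softmax(q k^T / sqrt(d)) v."""
    d = len(q[0])
    out = []
    for qi in q:
        scores = [sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d) for kj in k]
        weights = softmax(scores)
        out.append([sum(wj * vj[c] for wj, vj in zip(weights, v))
                    for c in range(len(v[0]))])
    return out


def attention_with_residual(tokens):
    """Self-attention (q = k = v = tokens) followed by a residual addition."""
    attended = self_attention(tokens, tokens, tokens)
    return [[t + a for t, a in zip(tok, att)] for tok, att in zip(tokens, attended)]


# Each token stands for the channel vector at one spatial position.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in attention_with_residual(tokens):
    print([round(x, 4) for x in row])
```

Every output position is a weighted mix of all positions, which is how the attention path relates distant spatial locations that a 3×3 convolution cannot reach in a single step.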

10.2. Flow

- Initial convolution (cv1): A 1×1 convolution preprocesses the input tensor, decoupling the module’s internal operations from preceding feature representations while maintaining spatial resolution.

- Split: The tensor is partitioned along the channel dimension into two branches: (a) a skip path that preserves identity features for later concatenation, and (b) a processed path that passes through the PSA block(s) for attention-based refinement.

- PSA block(s) for multi-head attention:

- Feed-Forward Network (FFN):

- Concatenation and final convolution (cv2):

11. Upsample and Concatenation Layers

11.1. Upsample Layer

11.1.1. Objective

- Spatial Restoration: Doubles height and width using nearest-neighbor interpolation.

- Lightweight: No learnable parameters; efficient for real-time systems.
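Nearest-neighbor upsampling by a factor of two simply repeats every value along both axes, which is why it needs no learnable parameters. A minimal sketch on a single-channel map (the function name and input values are illustrative):

```python
def upsample_nearest_2x(fmap):
    """Double height and width by repeating each value in a 2 x 2 block."""
    out = []
    for row in fmap:
        wide = [v for v in row for _ in range(2)]  # repeat along width
        out.append(wide)
        out.append(list(wide))                     # repeat along height
    return out


small = [[1, 2],
         [3, 4]]
for row in upsample_nearest_2x(small):
    print(row)
```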

11.1.2. Flow

11.2. Concat Layer

11.2.1. Objective

- Feature Reuse: Combines low- and high-level features to enrich representations.

- Channel-Wise Fusion: Increases diversity of feature channels.

- Supports Skip Connections: Enables integration of features from earlier layers and multi-scale decoding.
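Channel-wise concatenation only works when batch size, height, and width agree, which is why the deeper map is upsampled first. The shape check below is a sketch; the tensor shapes and the helper name are hypothetical.

```python
def concat_channels(*shapes):
    """Resulting (N, C, H, W) shape of concatenating tensors along the channel axis."""
    n, _, h, w = shapes[0]
    for shape in shapes:
        if (shape[0], shape[2], shape[3]) != (n, h, w):
            raise ValueError(f"spatial/batch mismatch: {shape} vs {shapes[0]}")
    return (n, sum(shape[1] for shape in shapes), h, w)


# Hypothetical shapes: an upsampled deep map and a same-resolution backbone map.
upsampled = (1, 256, 30, 40)
skip = (1, 128, 30, 40)
print(concat_channels(upsampled, skip))
```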

11.2.2. Flow

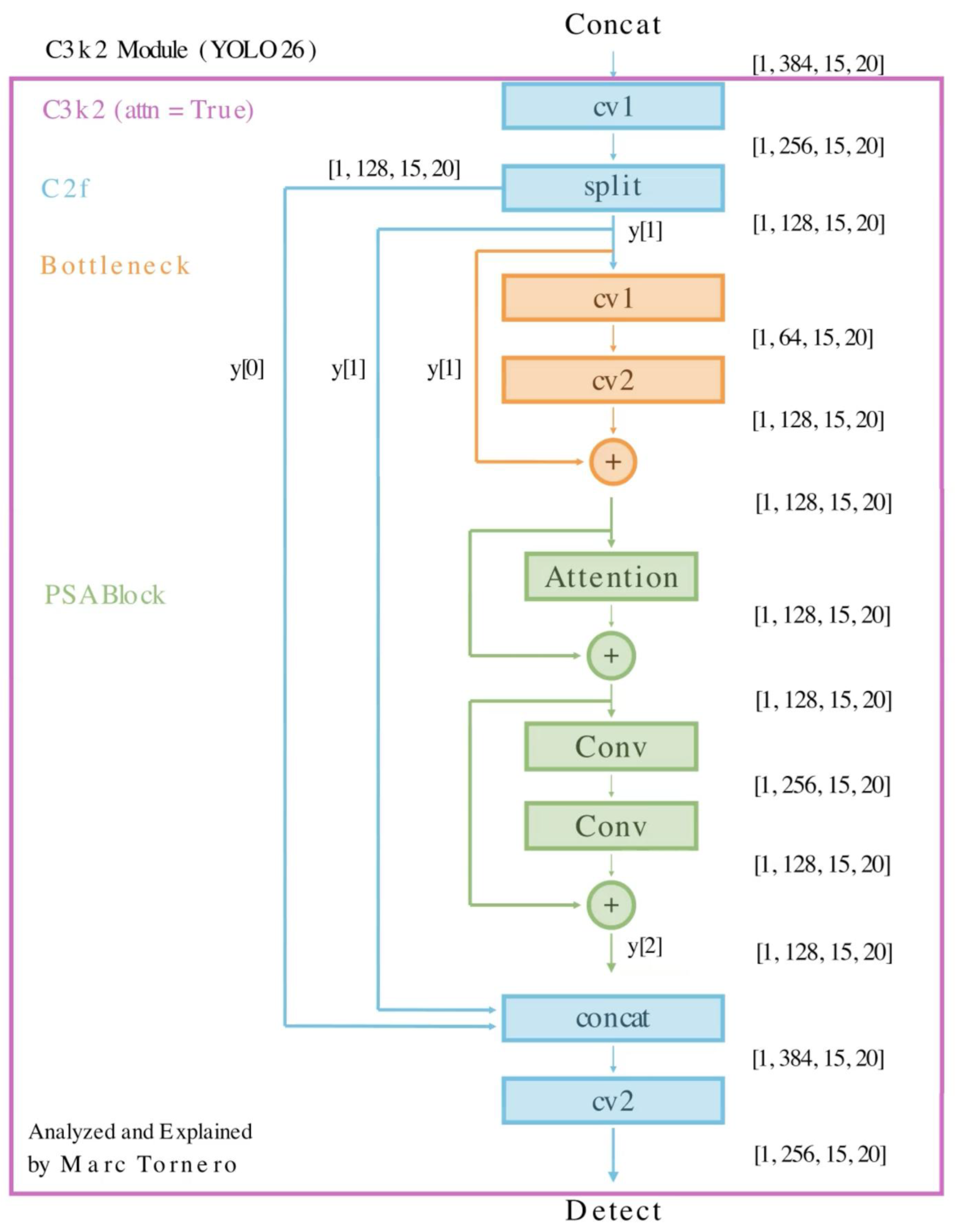

12. Feature Refinement Module (C3k2 with Attention)

12.1. Objective

- Local structural patterns through Bottleneck-based convolutional refinement

- Long-range spatial dependencies through the PSA Block

- Feature preservation through CSP-style split pathways

- Computational efficiency by applying the most expensive operations to only part of the channels.

- Partial feature processing: Only a subset of channels is heavily processed, reducing redundancy and improving efficiency.

- Local refinement through the Bottleneck: The Bottleneck compresses, processes, and restores channels, helping the network emphasize edges, corners, textures, and other localized patterns.

- Global context through attention: The PSA Block enriches the refined features with broader spatial relationships, making the representation more aware of distributed object structure.

- Multi-level feature retention: The module preserves an untouched split branch, the pre-attention processed branch, and the fully refined branch, enabling richer feature fusion.

- Improved optimization: Residual connections in both the Bottleneck and the PSA Block help maintain information flow and stabilize training.

12.2. Flow

- Initial convolution (cv1): A 1×1 convolution is first applied to the input tensor. As seen in Figure 22, the feature map remains at [1, 256, 15, 20], meaning spatial resolution is preserved while the channels are prepared for internal processing.

- Split: The output of the initial convolution is divided along the channel dimension into two equal parts.

- The y[0] branch serves as a preserved shortcut branch that bypasses the heavier transformations and is sent directly toward the final concatenation. The y[1] branch is used as the main refinement path.

- Bottleneck refinement: The y[1] branch first passes through a Bottleneck block composed of two convolutions. The first convolution reduces channels from 128 to 64, creating a compressed intermediate representation; the second restores channels from 64 back to 128.

- A residual connection then adds the original y[1] back to the transformed output. This Bottleneck stage allows the module to refine local patterns efficiently while preserving the original feature information.

- PSA Block refinement: The output of the Bottleneck is then passed into a PSA Block, which further enhances the features using attention-based processing.

- Inside this PSA Block, an Attention submodule captures broader spatial dependencies and contextual relationships, and its output is added residually to the incoming feature map. The result then passes through a lightweight two-layer convolutional feed-forward subnetwork. As seen in Figure 22, this subnetwork expands channels from 128 to 256 and then projects them back from 256 to 128. A second residual addition is applied after this feed-forward stage. The PSA Block therefore refines the Bottleneck output by incorporating global context while still preserving the original branch information through internal skip connections.

- Concatenation: Three tensors are concatenated along the channel axis: y[0], the preserved shortcut branch; y[1], the original split refinement branch; and y[2], the fully refined branch after Bottleneck + PSA processing. This concatenation is important because it preserves raw partial features, intermediate branch features, and deeply refined attention-enhanced features.

- Final convolution (cv2): A final 1×1 convolution fuses the concatenated tensor and projects it from 384 channels back to 256 channels, producing the final output of shape [1, 256, 15, 20]. This final step learns inter-channel relationships across all three branches and generates a richer feature representation suitable for the next stage of the network.
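The shapes quoted in the flow above can be checked end to end with a short trace. This is bookkeeping only, under the channel counts stated in the text; the function and step labels are hypothetical and no actual tensor computation is performed.

```python
def c3k2_attention_trace(shape=(1, 256, 15, 20)):
    """List (step, output shape) pairs for the C3k2-with-attention flow."""
    n, c, h, w = shape
    half = c // 2
    return [
        ("cv1 1x1", (n, c, h, w)),
        ("split y[0]", (n, half, h, w)),
        ("split y[1]", (n, half, h, w)),
        ("bottleneck conv 1", (n, half // 2, h, w)),           # 128 -> 64
        ("bottleneck conv 2 + residual", (n, half, h, w)),     # 64 -> 128
        ("PSA attention + residual", (n, half, h, w)),
        ("PSA FFN expand", (n, 2 * half, h, w)),               # 128 -> 256
        ("PSA FFN project + residual = y[2]", (n, half, h, w)),
        ("concat y[0], y[1], y[2]", (n, 3 * half, h, w)),      # 3 x 128 = 384
        ("cv2 1x1", (n, c, h, w)),                             # 384 -> 256
    ]


trace = c3k2_attention_trace()
for step, out_shape in trace:
    print(f"{step:36} {out_shape}")
```

The trace makes it easy to see that only half of the channels (128 of 256) ever reach the Bottleneck and the PSA Block, which is the source of the efficiency argument made in the objective.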

16. Conclusion

Appendix A. Training Parameters for the YOLO26n Ablation Studies

Appendix B. Benchmarking Parameters for the YOLO26n Ablation Studies

References

- Lin, T.-Y.; Maire, M.; Belongie, S.; Bourdev, L.; Girshick, R.; Hays, J.; Perona, P.; Ramanan, D.; Zitnick, C.L.; Dollár, P. Microsoft COCO: Common Objects in Context. 2015.

- Redmon, J.; Divvala, S.; Girshick, R.; Farhadi, A. You Only Look Once: Unified, Real-Time Object Detection. 2016.

- Redmon, J.; Farhadi, A. YOLOv3: An Incremental Improvement. 2018.

- Bochkovskiy, A.; Wang, C.-Y.; Liao, H.-Y.M. YOLOv4: Optimal Speed and Accuracy of Object Detection. 2020.

- Khanam, R.; Hussain, M. What Is YOLOv5: A Deep Look into the Internal Features of the Popular Object Detector. 2024.

- Li, C.; Li, L.; Jiang, H.; Weng, K.; Geng, Y.; Li, L.; Ke, Z.; Li, Q.; Cheng, M.; Nie, W.; et al. YOLOv6: A Single-Stage Object Detection Framework for Industrial Applications. 2022.

- Wang, C.-Y.; Bochkovskiy, A.; Liao, H.-Y.M. YOLOv7: Trainable Bag-of-Freebies Sets New State-of-the-Art for Real-Time Object Detectors. 2022.

- Yaseen, M. What Is YOLOv8: An In-Depth Exploration of the Internal Features of the Next-Generation Object Detector. 2024.

- Wang, C.-Y.; Yeh, I.-H.; Liao, H.-Y.M. YOLOv9: Learning What You Want to Learn Using Programmable Gradient Information. 2024.

- Wang, A.; Chen, H.; Liu, L.; Chen, K.; Lin, Z.; Han, J.; Ding, G. YOLOv10: Real-Time End-to-End Object Detection. 2024.

- Khanam, R.; Hussain, M. YOLOv11: An Overview of the Key Architectural Enhancements. 2024.

- Tian, Y.; Ye, Q.; Doermann, D. YOLOv12: Attention-Centric Real-Time Object Detectors.

- Lei, M.; Li, S.; Wu, Y.; Hu, H.; Zhou, Y.; Zheng, X.; Ding, G.; Du, S.; Wu, Z.; Gao, Y. YOLOv13: Real-Time Object Detection with Hypergraph-Enhanced Adaptive Visual Perception. 2025.

- Jiang, T.; Zhong, Y. ODverse33: Is the New YOLO Version Always Better? A Multi Domain Benchmark from YOLO v5 to V11. 2025.

- Terven, J.; Cordova-Esparza, D. A Comprehensive Review of YOLO Architectures in Computer Vision: From YOLOv1 to YOLOv8 and YOLO-NAS. 2024.

- Sapkota, R.; Meng, Z.; Churuvija, M.; Du, X.; Ma, Z.; Karkee, M. Comprehensive Performance Evaluation of YOLOv12, YOLO11, YOLOv10, YOLOv9 and YOLOv8 on Detecting and Counting Fruitlet in Complex Orchard Environments. 2026.

- Sapkota, R.; Cheppally, R.H.; Sharda, A.; Karkee, M. YOLO26: Key Architectural Enhancements and Performance Benchmarking for Real-Time Object Detection. 2026.

- Ramos, L.T.; Sappa, A.D. A Decade of You Only Look Once (YOLO) for Object Detection: A Review. IEEE Access 2025, 13, 192747–192794.

- Sapkota, R.; Flores-Calero, M.; Qureshi, R.; Badgujar, C.; Nepal, U.; Poulose, A.; Zeno, P.; Vaddevolu, U.B.P.; Khan, S.; Shoman, M.; et al. YOLO Advances to Its Genesis: A Decadal and Comprehensive Review of the You Only Look Once (YOLO) Series. Artif. Intell. Rev. 2025, 58.

- Jegham, N.; Koh, C.Y.; Abdelatti, M.; Hendawi, A. YOLO Evolution: A Comprehensive Benchmark and Architectural Review of YOLOv12, YOLO11, and Their Previous Versions. 2025.

- Hidayatullah, P.; Syakrani, N.; Sholahuddin, M.R.; Gelar, T.; Tubagus, R. YOLOv8 to YOLO11: A Comprehensive Architecture In-Depth Comparative Review. 2025.

- Li, X.; Wang, W.; Wu, L.; Chen, S.; Hu, X.; Li, J.; Tang, J.; Yang, J. Generalized Focal Loss: Learning Qualified and Distributed Bounding Boxes for Dense Object Detection. 2020.

- Chakrabarty, S. YOLO26: An Analysis of NMS-Free End to End Framework for Real-Time Object Detection. 2026.

- Hidayatullah, P.; Tubagus, R. YOLO26: A Comprehensive Architecture Overview and Key Improvements. Preprint, 2026.

- Lin, T.-Y.; Dollár, P.; Girshick, R.; He, K.; Hariharan, B.; Belongie, S. Feature Pyramid Networks for Object Detection. 2017.

- Liu, S.; Qi, L.; Qin, H.; Shi, J.; Jia, J. Path Aggregation Network for Instance Segmentation. 2018.

- Tan, M.; Pang, R.; Le, Q.V. EfficientDet: Scalable and Efficient Object Detection. 2020.

- Chollet, F. Xception: Deep Learning with Depthwise Separable Convolutions. 2017.

- Ioffe, S.; Szegedy, C. Batch Normalization: Accelerating Deep Network Training by Reducing Internal Covariate Shift. 2015.

- Hendrycks, D.; Gimpel, K. Gaussian Error Linear Units (GELUs). 2023.

- Ramachandran, P.; Zoph, B.; Le, Q.V. Searching for Activation Functions. 2017.

- Wang, C.-Y.; Liao, H.-Y.M.; Yeh, I.-H.; Wu, Y.-H.; Chen, P.-Y.; Hsieh, J.-W. CSPNet: A New Backbone That Can Enhance Learning Capability of CNN. 2019.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep Residual Learning for Image Recognition. 2015.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention Is All You Need. 2023.

| Architecture | mAP@0.5:0.95 | Latency (ms) |
|---|---|---|
| 1 – Baseline | 0.3933 ✅ | 0.99 |
| 2 – ReLU | 0.3808 | 0.93 ✅ |
| 3 – Leaky ReLU | 0.3761 | 1.02 |
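The rows of the table above are easier to compare when expressed as changes relative to the baseline. The following sketch only re-uses the numbers printed in the table; the helper name is hypothetical.

```python
# (mAP@0.5:0.95, latency in ms) copied from the table above.
rows = {
    "Baseline": (0.3933, 0.99),
    "ReLU": (0.3808, 0.93),
    "Leaky ReLU": (0.3761, 1.02),
}


def relative_change(name: str, baseline: str = "Baseline"):
    """Percentage change in mAP and latency with respect to the baseline row."""
    base_map, base_lat = rows[baseline]
    row_map, row_lat = rows[name]
    return ((row_map - base_map) / base_map * 100.0,
            (row_lat - base_lat) / base_lat * 100.0)


for name in ("ReLU", "Leaky ReLU"):
    d_map, d_lat = relative_change(name)
    print(f"{name:10} mAP {d_map:+.2f}%  latency {d_lat:+.2f}%")
```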

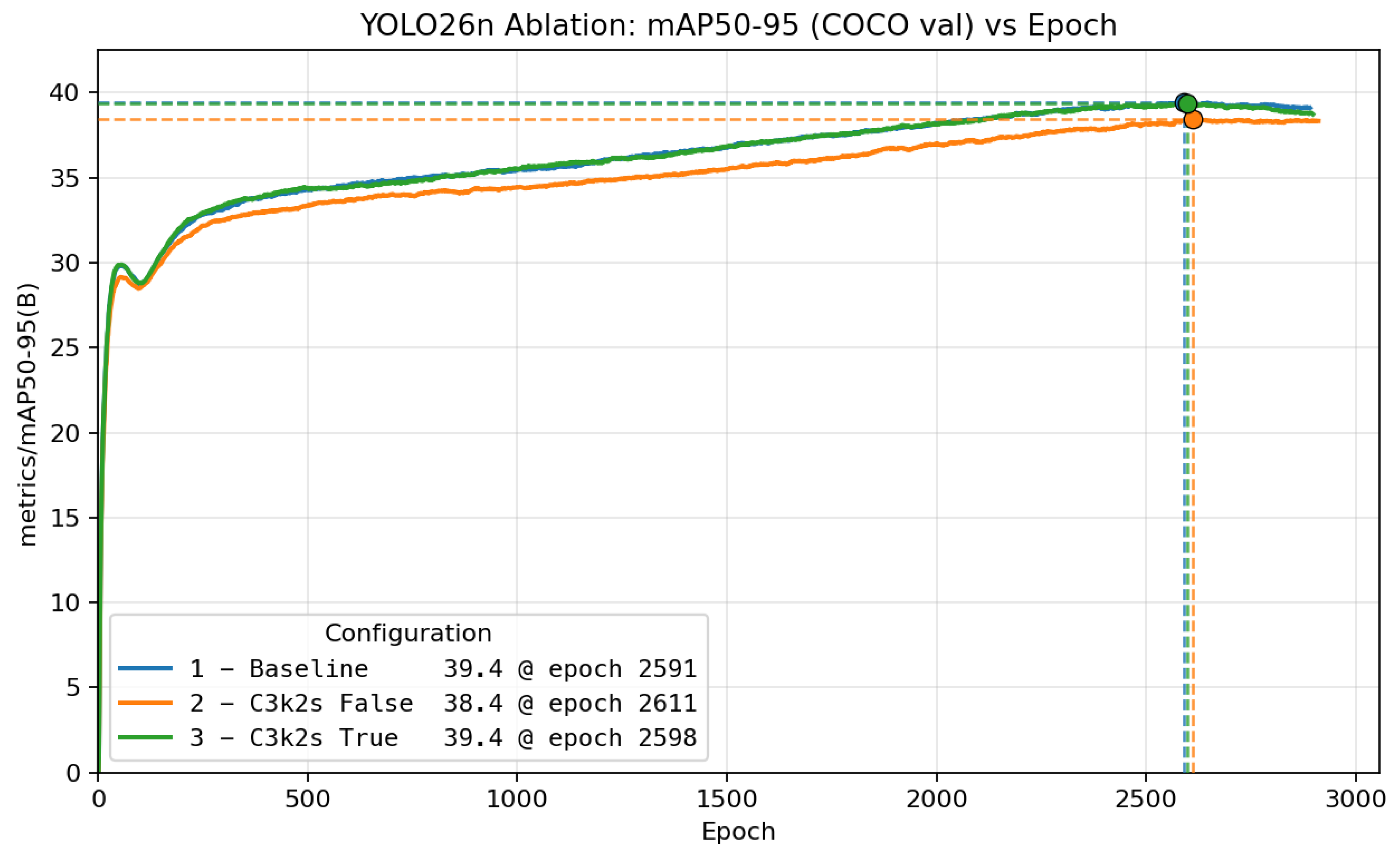

| Architecture | mAP@0.5:0.95 | Latency (ms) |
|---|---|---|
| 1 – Baseline | 0.3933 ✅ | 0.99 |
| 2 – C3k2s False | 0.3813 | 0.86 ✅ |
| 3 – C3k2s True | 0.3930 | 1.11 |

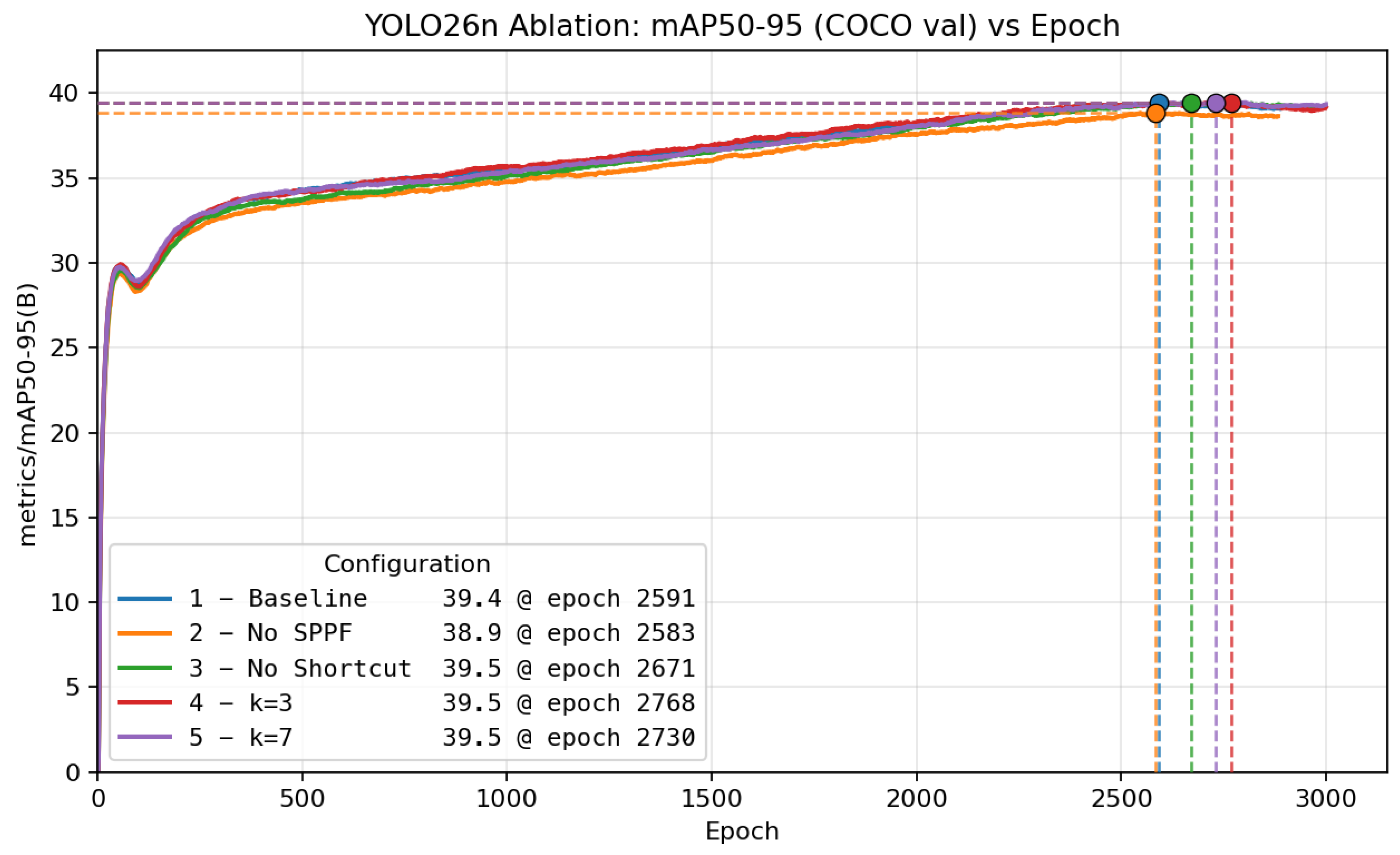

| Architecture | mAP@0.5:0.95 | Latency (ms) |
|---|---|---|
| 1 – Baseline | 0.3933 | 0.99 |
| 2 – No SPPF | 0.3866 | 0.96 |
| 3 – No Shortcut | 0.3941 ✅ | 1.01 |
| 4 – k=3 | 0.3922 | 1.00 |
| 5 – k=7 | 0.3935 | 0.98 |

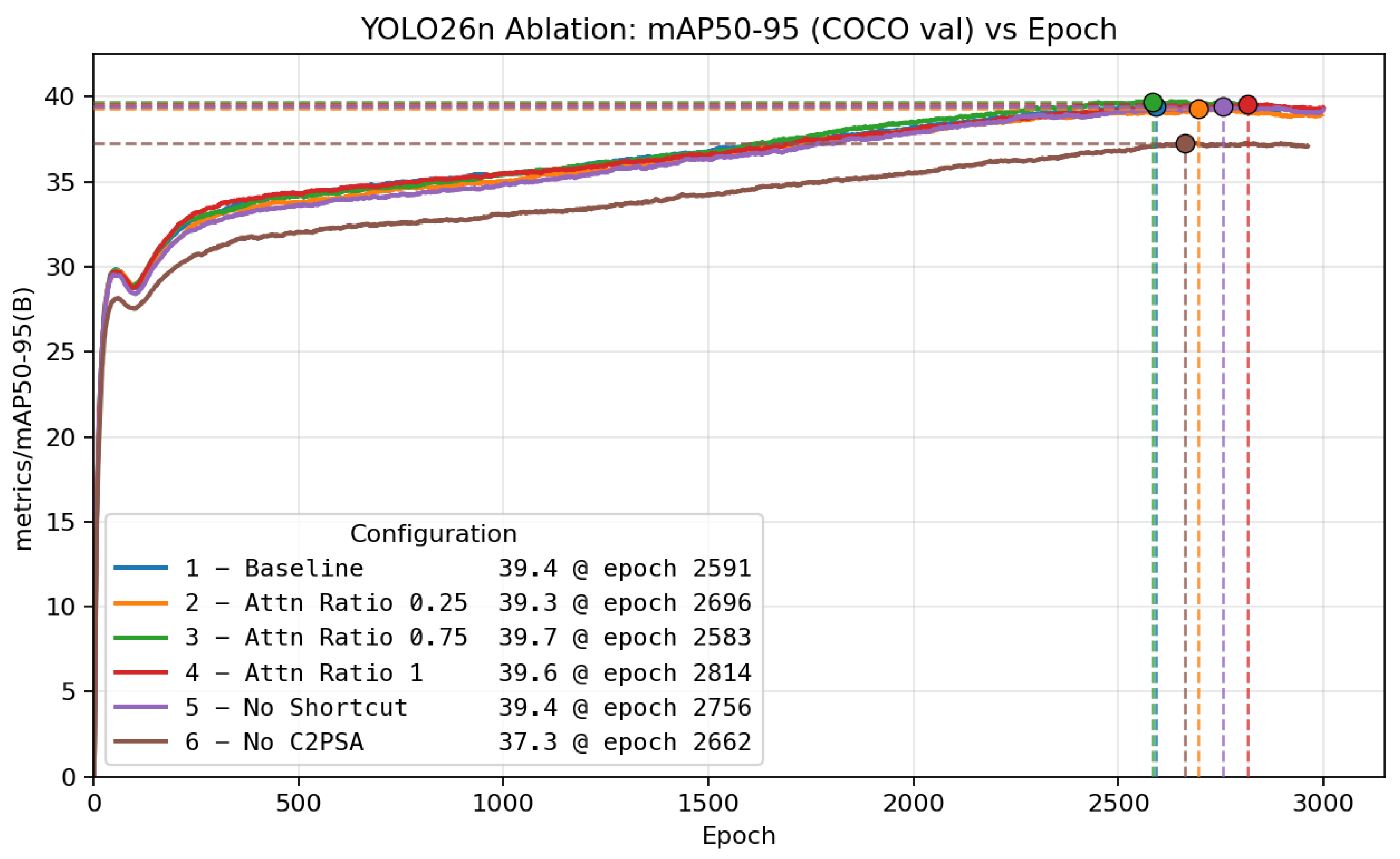

| Architecture | mAP@0.5:0.95 | Latency (ms) |
|---|---|---|
| 1 – Baseline | 0.3933 | 0.99 |
| 2 – Attn Ratio 0.25 | 0.3909 | 0.99 |
| 3 – Attn Ratio 0.75 | 0.3961 ✅ | 0.99 |
| 4 – Attn Ratio 1 | 0.3940 | 0.99 |
| 5 – No Shortcut | 0.3933 | 0.99 |
| 6 – No C2PSA | 0.3704 | 0.92 |

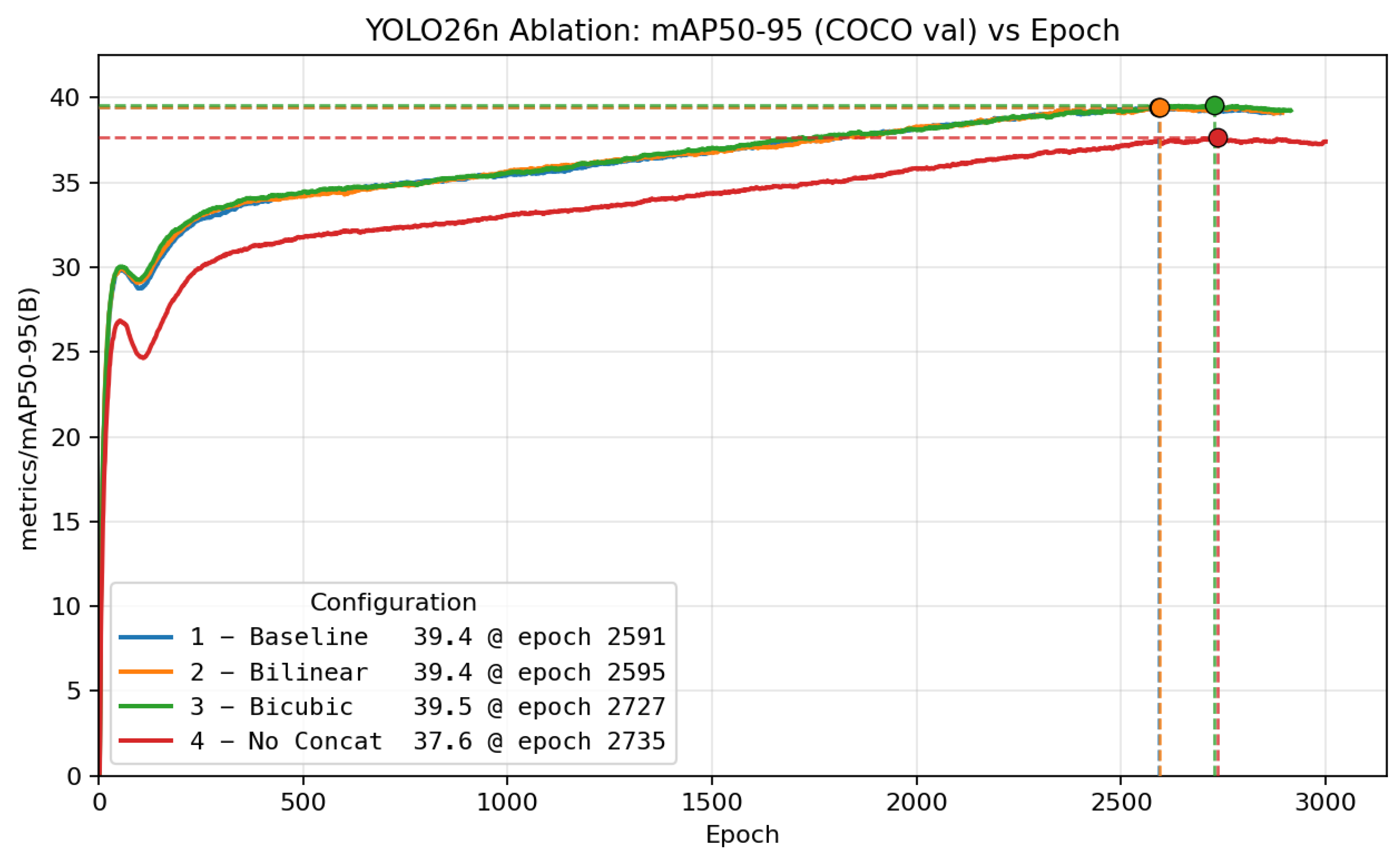

| Architecture | mAP@0.5:0.95 | Latency (ms) |
|---|---|---|
| 1 – Baseline | 0.3933 | 0.99 |
| 2 – Bilinear | 0.3954 ✅ | 0.98 ✅ |
| 3 – Bicubic | 0.3950 | 1.00 |
| 4 – No Concat | 0.3743 | 0.97 |

| Architecture | mAP@0.5:0.95 | Latency (ms) |
|---|---|---|
| 1 – Baseline | 0.3933 ✅ | 0.99 |
| 2 – Last False | 0.3819 | 0.94 ✅ |
| 3 – Last True | 0.3921 | 0.97 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).