1. Introduction

Natural rubber is a critical industrial input and a strategic agricultural commodity that plays a central role in global manufacturing supply chains. It is widely used in the production of automobile tires, medical gloves, engineering components, and a broad range of technical rubber products. Global natural rubber production is highly concentrated in Southeast Asia, particularly in Thailand, Indonesia, and Malaysia, which together account for the majority of world supply [1,2]. Among these producers, Thailand has consistently remained the world’s leading exporter of natural rubber. In 2025, Thailand produced approximately 4.79 million tonnes, accounting for 32.18% of global production, with about 85.79% of output exported to international markets [3]. Price volatility in natural rubber markets therefore carries direct consequences for the income of millions of smallholder farmers and the cost structure of downstream industries that rely heavily on rubber-based inputs.

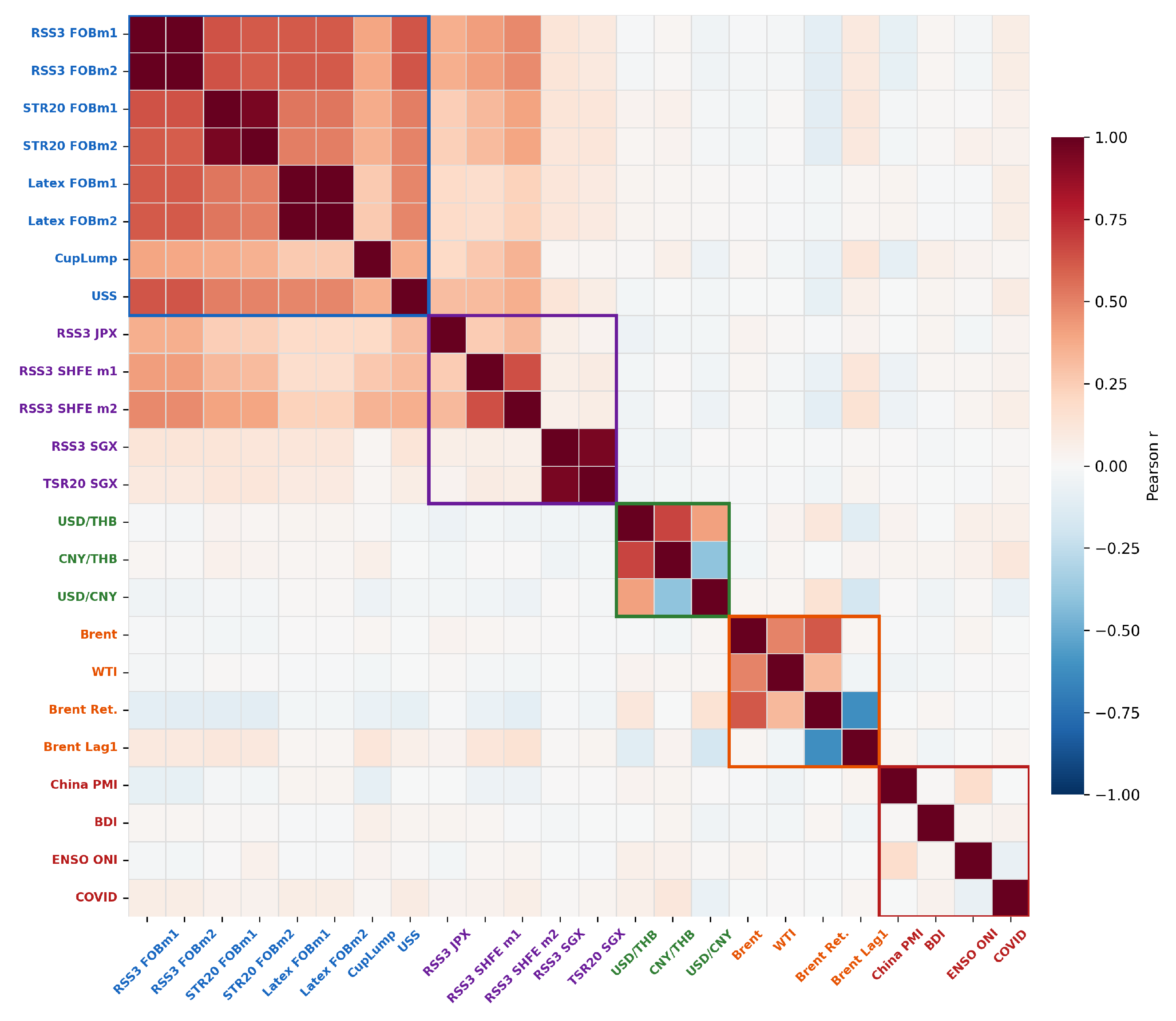

Natural rubber prices are jointly driven by agricultural seasonality, macroeconomic conditions, exchange rate dynamics, and speculative activity in futures markets, producing price dynamics that are simultaneously nonlinear, non-stationary, and subject to structural breaks [4,5,6]. These forces generate significant uncertainty for stakeholders across the rubber value chain. In international trade, Ribbed Smoked Sheet No. 3 (RSS3) serves as the benchmark grade for natural rubber transactions, with export prices typically quoted on a Free on Board (FOB) basis at major Thai ports. Price co-movement across SHFE, SGX, and JPX (TOCOM) futures markets creates cross-market information flows that provide additional predictive content beyond domestic spot prices alone, motivating the inclusion of exchange-traded settlement prices as model inputs [7]. This cross-market structure is not symmetric: [8] demonstrate that SHFE Granger-causes TOCOM but not vice versa, while Kepulaje et al. [9] [pp. 159–161] show that SGX RSS3 Rubber Futures (SRU) and SGX TSR20 Rubber Futures (STF) transmit more consistently into regional spot prices than TOCOM/JPX RSS3 Rubber Futures (JRU). Together, these findings imply that the futures exchanges operate as a layered rather than a flat information system, and that the informational weight of each venue depends on whether one is studying financial benchmarking or physical export pricing.

Accurate forecasting of commodity prices therefore remains a central issue in both economic research and industrial decision-making. Commodity price forecasting has long relied on econometric models such as ARIMA, VAR, and GARCH, which provide well-established frameworks for capturing temporal dependencies and volatility structures [10,11]. In the natural rubber literature specifically, this approach is exemplified by [12] (2018), who apply the Box–Jenkins procedure to Malaysian SMR20 and identify ARIMA(1,1,0) as the best-fitting model, and by [13], who show that a simultaneous supply–demand–price system outperforms univariate ARIMA across RMSE, MAE, and information criteria, demonstrating that purely univariate extrapolation is insufficient once commodity market structure is made explicit. However, these approaches rest on linearity assumptions that limit their capacity to represent the complex nonlinear dynamics observed in modern commodity markets, and their performance often deteriorates further when the underlying data exhibit structural breaks and strong non-stationarity, characteristics that are particularly pronounced in energy and agricultural commodity price series [14,15]. To address these shortcomings, subsequent studies introduced machine learning methods, including support vector machines (SVM), multi-layer perceptrons (MLP), random forests, extreme gradient boosting (XGBoost), and back-propagation neural networks (BPNN), which are better suited to capturing nonlinear relationships in complex datasets [16,17,18]. Hybrid frameworks combining statistical and machine learning components, such as ARIMA–SVM, have also been proposed to integrate linear structure with nonlinear residual dynamics [19,20]. Nevertheless, these approaches remain limited in their ability to capture long-range temporal dependencies alongside complex nonlinear dynamics, motivating the adoption of deep learning alternatives.
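For reference, the ARIMA(1,1,0) specification mentioned above can be written out explicitly. The form below is the generic textbook one, stated without a drift term as a simplifying assumption; the symbols $\phi_1$, $B$, and $\varepsilon_t$ are standard notation and are not taken from [12].

$$
(1 - \phi_1 B)(1 - B)\, y_t = \varepsilon_t
\quad\Longleftrightarrow\quad
\Delta y_t = \phi_1\, \Delta y_{t-1} + \varepsilon_t ,
$$

where $y_t$ is the price at time $t$, $B$ is the backshift operator ($B y_t = y_{t-1}$), $\Delta y_t = y_t - y_{t-1}$ is the first difference, and $\varepsilon_t$ is white noise. The first difference of the price therefore follows a first-order autoregression, so the forecast is linear in past price changes, which is the linearity restriction discussed above.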

Building on this shift, recurrent architectures, particularly Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU) networks, have been widely adopted for their ability to model long-term temporal dependencies more effectively than shallow learning algorithms, demonstrating superior performance over classical statistical benchmarks in energy demand prediction, financial market forecasting, and environmental time-series modeling [21,22]. For natural rubber specifically, [23] demonstrate that a multi-layer LSTM achieves 95.88% accuracy on Thai RSS3 prices, while [24] confirm that LSTM outperforms ARIMA on Malaysian SMR20 across MAE, RMSE, and MAPE; both studies note that predictive gains remain incomplete without explicit incorporation of exogenous drivers such as exchange rates, energy prices, and futures markets. However, recurrent models can struggle with highly volatile and non-stationary series, particularly when the data exhibit abrupt structural changes or mixed-frequency dynamics [25]. Bidirectional Long Short-Term Memory (BiLSTM) networks extend the conventional LSTM architecture by processing sequential data in both the forward and backward directions, enabling the model to integrate contextual information from both earlier and later time steps within the observation window. This bidirectional structure yields richer temporal representations than unidirectional recurrent networks and has demonstrated consistent advantages over conventional LSTM and classical statistical benchmarks in environments characterized by strong seasonality, nonlinear interactions, and abrupt fluctuations, including renewable energy forecasting and environmental time-series prediction [21,22,26].
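As a rough illustration of the bidirectional idea described above, the following NumPy sketch runs a recurrent cell over an input window once forward and once backward, then concatenates the two hidden states at each time step. It is a toy under stated assumptions: a plain tanh recurrent cell stands in for the gated LSTM cell, the weights are random rather than trained, the array sizes are arbitrary, and the function names `rnn_pass` and `bidirectional_states` are hypothetical.

```python
import numpy as np

def rnn_pass(x, w_in, w_rec):
    """Run a plain tanh recurrent cell over x (T, n_features); return (T, n_hidden) states."""
    h = np.zeros(w_rec.shape[0])
    states = []
    for x_t in x:
        h = np.tanh(w_in @ x_t + w_rec @ h)
        states.append(h)
    return np.stack(states)

def bidirectional_states(x, fwd, bwd):
    """Concatenate forward states with time-realigned backward states."""
    h_fwd = rnn_pass(x, *fwd)
    # Process the reversed window, then flip back so that row t lines up with time t.
    h_bwd = rnn_pass(x[::-1], *bwd)[::-1]
    return np.concatenate([h_fwd, h_bwd], axis=1)

rng = np.random.default_rng(0)
T, n_features, n_hidden = 6, 3, 4  # illustrative sizes, not the settings of this study
x = rng.normal(size=(T, n_features))
# Each direction has its own (input weights, recurrent weights) pair.
fwd = (rng.normal(size=(n_hidden, n_features)), rng.normal(size=(n_hidden, n_hidden)))
bwd = (rng.normal(size=(n_hidden, n_features)), rng.normal(size=(n_hidden, n_hidden)))

h = bidirectional_states(x, fwd, bwd)
print(h.shape)  # (6, 8)
```

Each row of `h` combines a summary of the window up to that step (first four columns) with a summary of the window from that step onward (last four columns). The backward pass only sees later observations inside the input window, not values beyond the forecast origin.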

To further extend the representational capacity of BiLSTM, several lines of research have proposed hybrid architectures. CNN–BiLSTM models combine convolution layers for local feature extraction with BiLSTM for bidirectional temporal learning, significantly improving prediction performance in energy and meteorological forecasting applications [27,28]. Attention mechanisms have also been integrated to allow the network to emphasize the most informative time steps, enhancing performance on non-stationary sequences and series with irregular fluctuations [29]. A separate strand of research applies meta-heuristic optimization to tune BiLSTM hyper-parameters, and combines BiLSTM with gradient boosting or ensemble methods to capture residual nonlinear patterns, yielding further gains over single-model recurrent baselines in energy and meteorological forecasting applications. Despite these advances, BiLSTM-based hybrids remain limited in their ability to separate and individually model components operating across distinctly different temporal scales, a constraint that becomes particularly consequential for commodity price series with coexisting trend, cyclical, and noise dynamics.

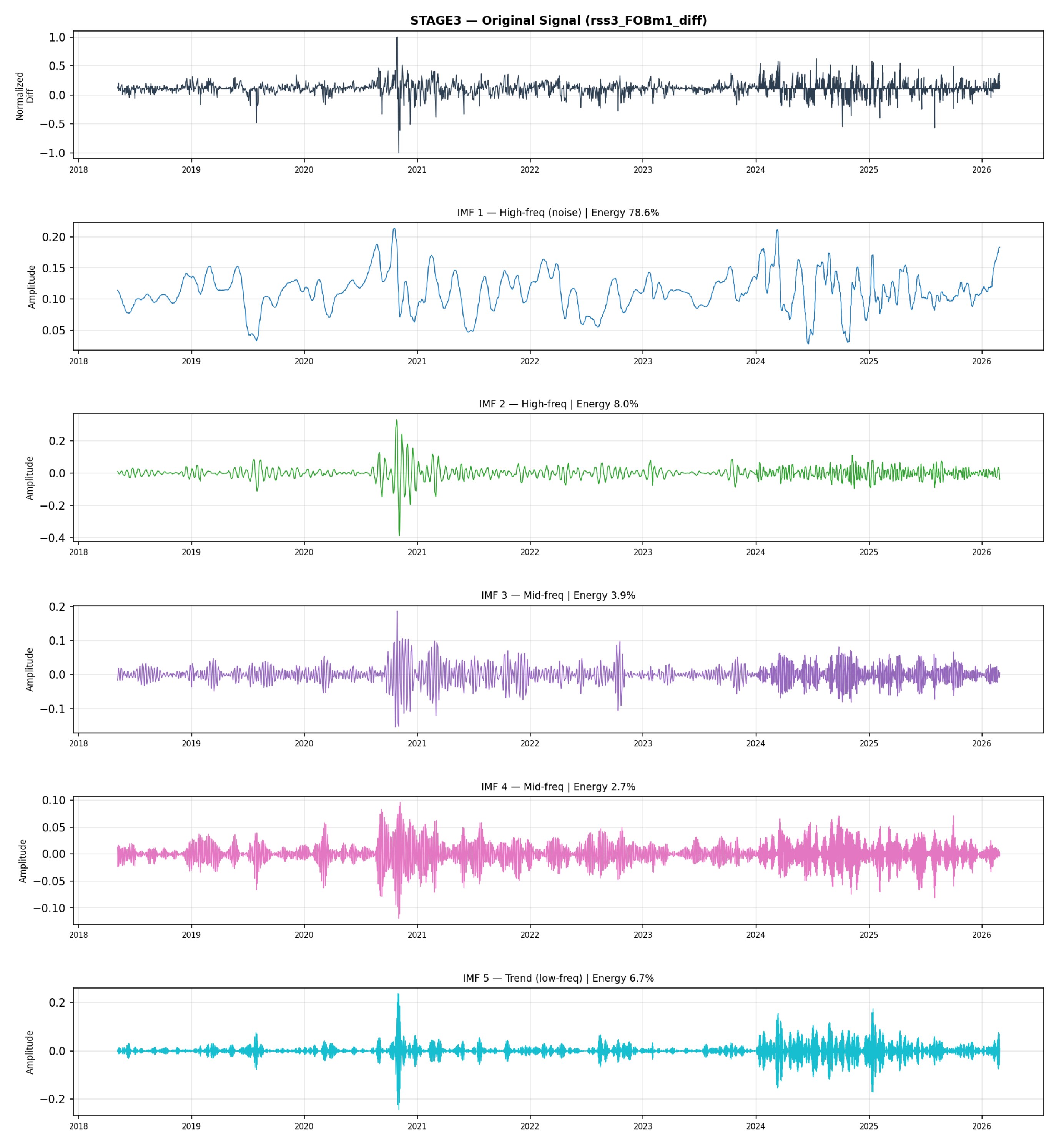

A parallel research direction addresses the recurrent model’s limited ability to disentangle components at different temporal scales by incorporating signal decomposition as a preprocessing stage. Methods including Empirical Mode Decomposition (EMD), Variational Mode Decomposition (VMD), Wavelet Transform (WT), and Seasonal-Trend Decomposition using LOESS (STL) separate the original series into components representing distinct frequency structures (trend, seasonal, and residual), thereby reducing non-stationarity and simplifying the learning task for downstream models [7,29,30]. A substantial body of empirical evidence confirms that decomposition-based hybrid frameworks, including EMD–LSTM, VMD–LSTM, VMD–GRU, VMD–TCN–LSTM, and STL–LSTM, significantly improve forecasting accuracy across coal markets, solar power generation, wind forecasting, and electricity load prediction [29]. Recent evidence from high-frequency financial forecasting likewise shows that VMD can separate trend, periodic, and disturbance components in non-stationary series and that decomposition-enhanced recurrent models can outperform standalone econometric and deep-learning benchmarks [25]. In commodity-price forecasting, VMD-based hybrid models have similarly been shown to outperform both single-model and earlier decomposition-based alternatives, with further gains when structurally relevant long-run drivers are incorporated into the forecasting system [31]. Recent evidence from environmental forecasting further confirms that coupling VMD with sequence models can substantially improve predictive accuracy by separating short- and long-run signal structures before learning, relative to models trained on the raw series alone [32]. Related evidence from drought forecasting likewise demonstrates that VMD-based preprocessing can improve short-lead predictive accuracy relative to standalone machine learning models, reinforcing the value of decomposition in nonlinear forecasting tasks [33]. In related commodity-market research, VMD-based recurrent models have also been shown to improve forecasting by decomposing volatile price series into simpler sub-components before learning, confirming the value of decomposition for nonlinear price dynamics [34].
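To make the decomposition step concrete, the constrained variational problem that VMD solves is usually stated in the following form. The notation ($f$, $u_k$, $\omega_k$, $K$) is the conventional one from the VMD literature and is an assumption here, not notation defined in this paper.

$$
\min_{\{u_k\},\{\omega_k\}} \; \sum_{k=1}^{K} \left\| \partial_t \!\left[ \left( \delta(t) + \frac{j}{\pi t} \right) * u_k(t) \right] e^{-j \omega_k t} \right\|_2^2
\quad \text{subject to} \quad \sum_{k=1}^{K} u_k(t) = f(t),
$$

where $f(t)$ is the original series, $u_k(t)$ is the $k$-th mode, $\omega_k$ is its center frequency, $\delta(t)$ is the Dirac distribution, $*$ denotes convolution, and $K$ is the number of modes chosen by the analyst. Each term penalizes the bandwidth of one mode around its center frequency, while the constraint requires the modes to sum back to the original series. This constraint is why summing per-mode forecasts is a natural, though not the only, way to recombine them.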

Building on these decomposition gains, a further line of research demonstrates that combining VMD with attention-based sequence architectures yields complementary improvements beyond what either component achieves alone. Related forecasting studies have shown that VMD can improve downstream deep-learning performance by decomposing complex time series into more learnable components, while attention-based LSTM encoder-decoder structures further enhance the selection of informative features and time steps [35] [pp. 922–923]. Evidence from short-term wind power forecasting further suggests that VMD is especially effective when embedded within a broader hybrid pipeline rather than used as an isolated preprocessing step, improving both stability and predictive accuracy in volatile series [36]. Recent FX forecasting evidence additionally indicates that VMD-enhanced bidirectional recurrent architectures, when paired with systematic hyper-parameter tuning and regularization, can materially improve out-of-sample performance in low-frequency financial time series [37].

Within the natural rubber literature specifically, evidence from rubber futures forecasting shows that VMD can extract economically meaningful multi-scale components, while bidirectional recurrent architectures improve both fitting performance and directional prediction; importantly, the predictive value of individual modes depends on their time-scale correspondence with the forecast target [7] [p. 6]. This interpretation is further supported by energy-price forecasting evidence showing that decomposed sub-sequences differ materially in regularity and information content, and that forecasting performance improves when model design reflects the heterogeneous nature of high- and low-frequency components [30].

More recently, Transformer-based architectures have emerged as a compelling complement to recurrent models, leveraging self-attention mechanisms to capture long-range temporal dependencies across entire sequences in parallel, a capability that recurrent networks approximate only sequentially [38]. Influential variants including Informer, Autoformer, FEDformer, and PatchTST, together with the RevIN normalization method, have progressively introduced sparse attention, decomposition-based encoders, frequency-enhanced representations, patch-level temporal embedding, and distribution-shift normalization, substantially improving performance on long-sequence forecasting tasks [35,39,40]. The complementary strengths of recurrent and attention-based models have motivated a further generation of hybrid architectures, including LSTM–Transformer hybrids, wavelet–Transformer–LightGBM frameworks, and dual-branch designs integrating short-term sequential learning with multi-scale decomposition, each seeking to capture both local temporal structure and global dependencies within a unified framework [41,42,43,44].
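The self-attention operation referred to above can be sketched in a few lines of NumPy. This is a minimal single-head version of scaled dot-product attention with random, untrained projection matrices; the sizes and the names `self_attention`, `w_q`, `w_k`, and `w_v` are illustrative assumptions and do not describe the configuration used in this study.

```python
import numpy as np

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention over a (T, d_model) window."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[-1])         # (T, T) pairwise similarities
    scores -= scores.max(axis=-1, keepdims=True)    # stabilise the softmax numerically
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)  # each row sums to 1
    return weights @ v, weights

rng = np.random.default_rng(0)
T, d_model, d_k = 5, 8, 4  # illustrative sizes only
x = rng.normal(size=(T, d_model))
w_q, w_k, w_v = (rng.normal(size=(d_model, d_k)) for _ in range(3))

out, weights = self_attention(x, w_q, w_k, w_v)
print(out.shape, weights.shape)  # (5, 4) (5, 5)
```

Every output row is a weighted average over all time steps in the window, computed with one matrix product rather than a step-by-step recurrence. This is the sense in which attention handles long-range dependencies in parallel.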

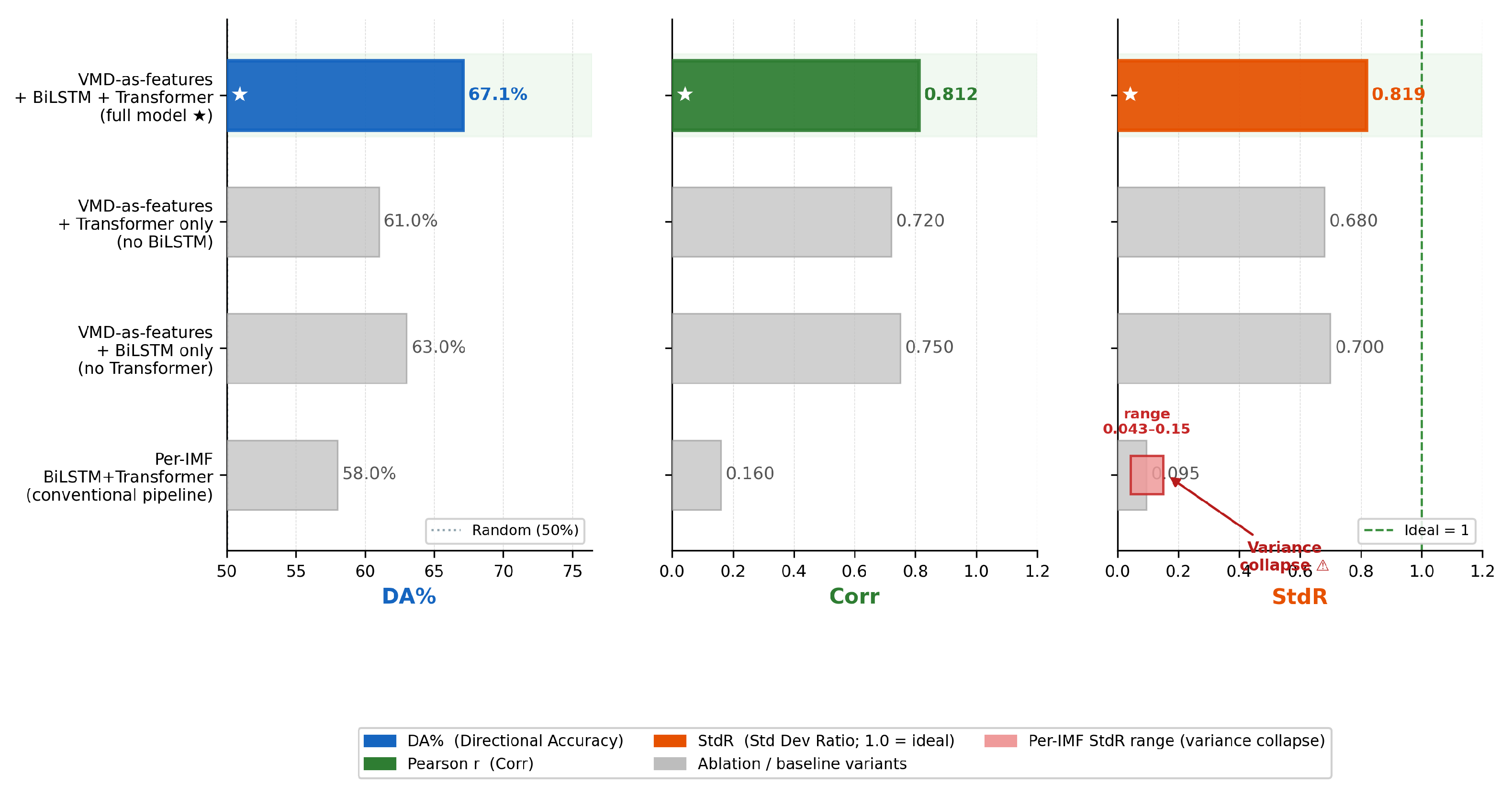

Despite these advances, most existing decomposition-based models treat signal separation purely as a preprocessing step to improve input quality, without redesigning the forecasting architecture to exploit the structural differences between decomposed components. Indeed, recent evidence from short-term wind power forecasting suggests that VMD is most effective when embedded within a broader hybrid pipeline rather than applied as an isolated preprocessing step, improving both stability and predictive accuracy in volatile series [

36]. Recent hybrid forecasting research similarly argues that direct end-to-end modeling is often inadequate for highly nonlinear and volatile series, and that decomposition-based feature extraction combined with bidirectional deep learning and attention improves the representation of multi-scale temporal structure [

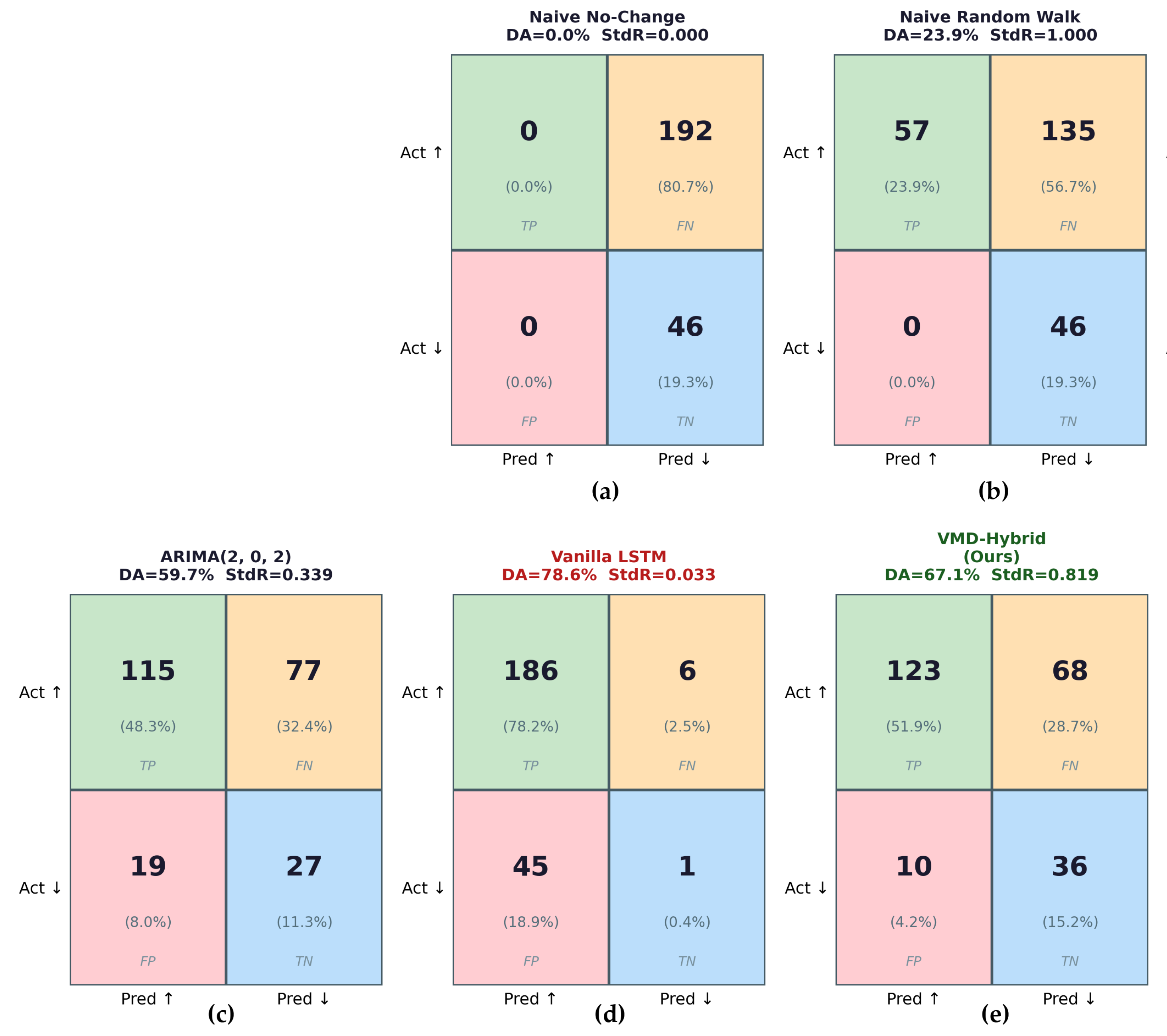

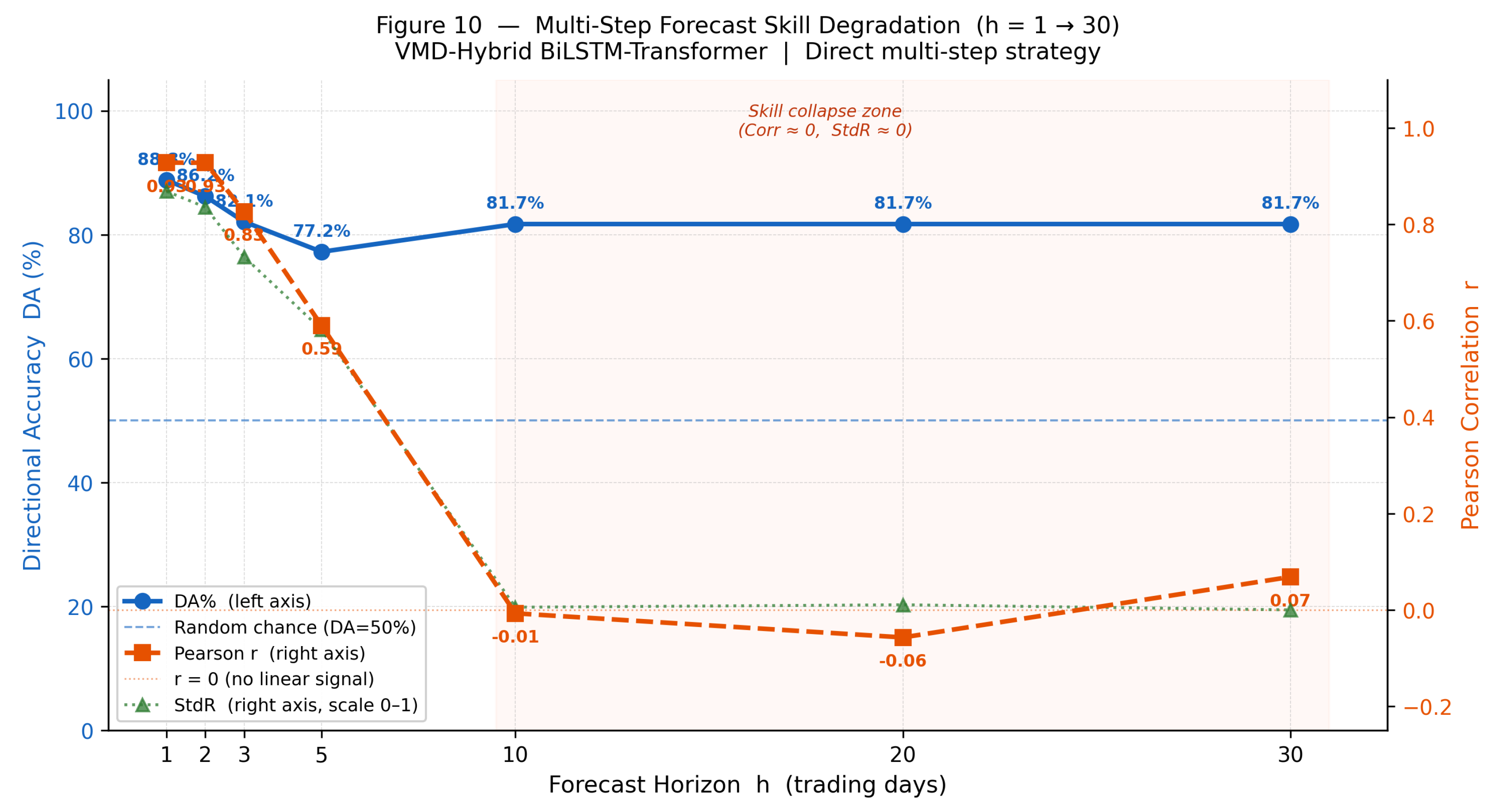

45]. In the majority of implementations, each intrinsic mode function is forecast independently by a separate model instance, and final predictions are obtained by summation — an approach that, as demonstrated in this study, is prone to variance collapse when applied to stationary (differenced) commodity price series. This failure mode is not incidental: Shumailov et al. [

46] [pp. 755–757] demonstrate theoretically that compound estimation pipelines systematically erode distributional variance across stages, while Rajpal et al. [

47] [pp. 13–15] confirm its output-level analogue in financial forecasting, showing that symmetric loss objectives push models toward degenerate, one-sided prediction regimes whose apparent performance masks near-zero predictive content. Recent oil-price forecasting studies have moved beyond single-stage decomposition toward secondary decomposition-reconstruction-ensemble frameworks, arguing that the information embedded in high-frequency components is too rich to be exhausted by a single decomposition pass [

48]. This architectural limitation and the need for a more parsimonious yet information-complete alternative represents the central research gap that the present work seeks to address. This gap is particularly consequential in the natural rubber context, where Fakthong et al. [

49] argue that existing time-series and machine-learning studies are heavily oriented toward short-term fluctuation tracking and pay limited attention to the combined structural effects of economic, environmental, and policy variables — reinforcing the need for architectures that preserve multi-scale information rather than collapsing it through sequential single-model estimation.
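The variance-collapse failure mode described above can be made tangible with three simple diagnostics. The sketch below uses only the Python standard library and a small hand-made series; the data are invented for illustration and are not results from this study. The standard deviation ratio is taken to be the forecast standard deviation divided by the observed standard deviation, which is an assumed definition, and the function names are hypothetical.

```python
import math
from statistics import fmean, pstdev

def directional_accuracy(actual, pred):
    """Share of steps where forecast and realised change are both positive or both non-positive."""
    hits = sum((a > 0) == (p > 0) for a, p in zip(actual, pred))
    return hits / len(actual)

def pearson(actual, pred):
    """Pearson correlation: co-movement in shape, insensitive to amplitude."""
    ma, mp = fmean(actual), fmean(pred)
    cov = sum((a - ma) * (p - mp) for a, p in zip(actual, pred))
    norm = math.sqrt(sum((a - ma) ** 2 for a in actual) * sum((p - mp) ** 2 for p in pred))
    return cov / norm

def std_ratio(actual, pred):
    """Assumed definition: forecast standard deviation over observed standard deviation."""
    return pstdev(pred) / pstdev(actual)

# Invented daily price changes: 7 of the 10 are positive (class imbalance).
actual = [0.8, -0.5, 1.2, 0.3, -0.9, 0.6, 0.4, -0.2, 1.0, 0.7]
# A collapsed forecast: always slightly positive, with almost no amplitude.
collapsed = [0.02, 0.01, 0.02, 0.01, 0.02, 0.01, 0.02, 0.01, 0.02, 0.01]

print("DA:     ", directional_accuracy(actual, collapsed))
print("Pearson:", round(pearson(actual, collapsed), 3))
print("StdR:   ", round(std_ratio(actual, collapsed), 4))
```

Because the collapsed forecast is always positive, its directional accuracy simply equals the share of positive changes in the sample (0.7 here), while the standard deviation ratio is far below one. Reading the three numbers together separates a forecast that tracks the series from one that merely sits on the majority class.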

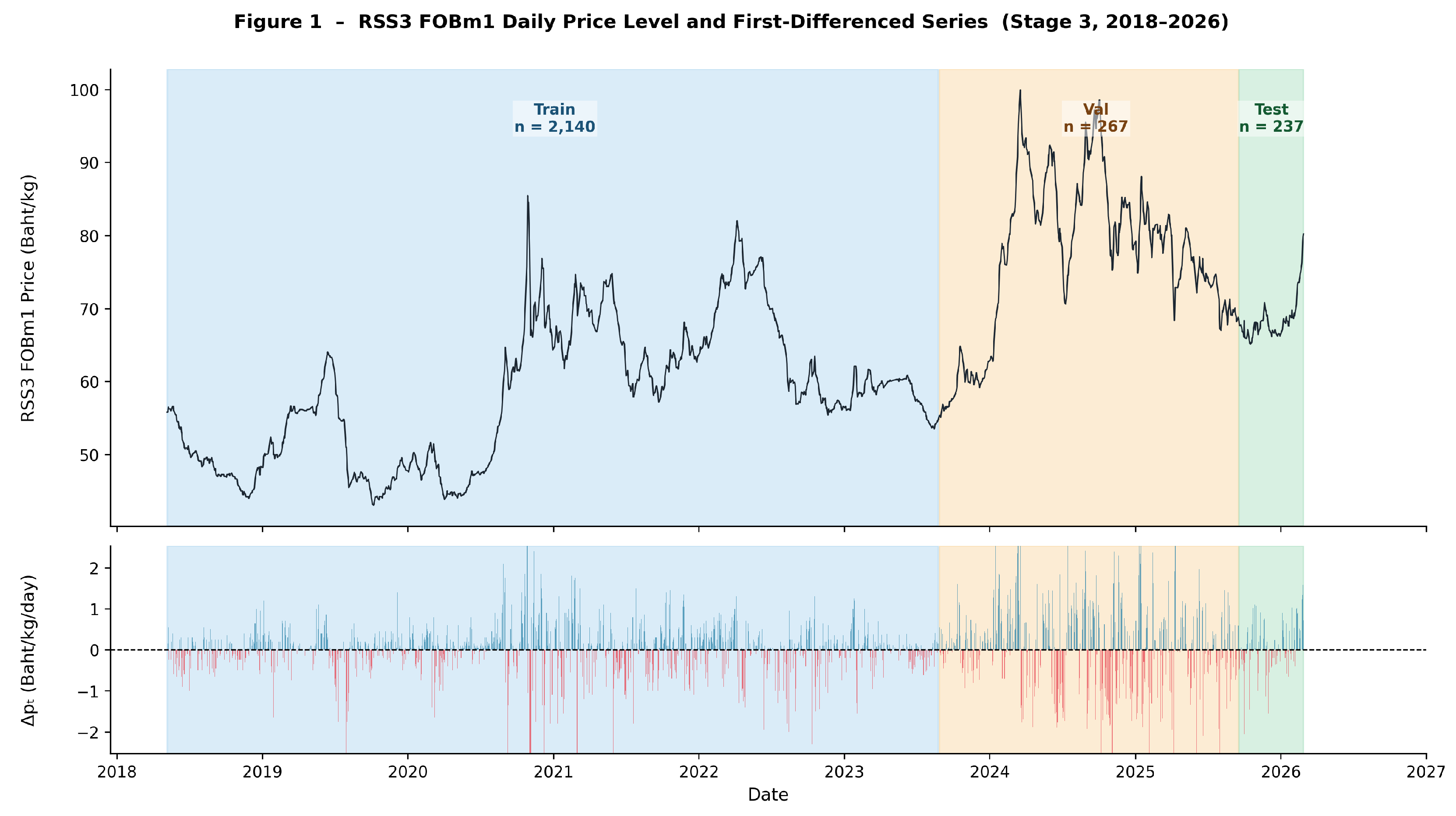

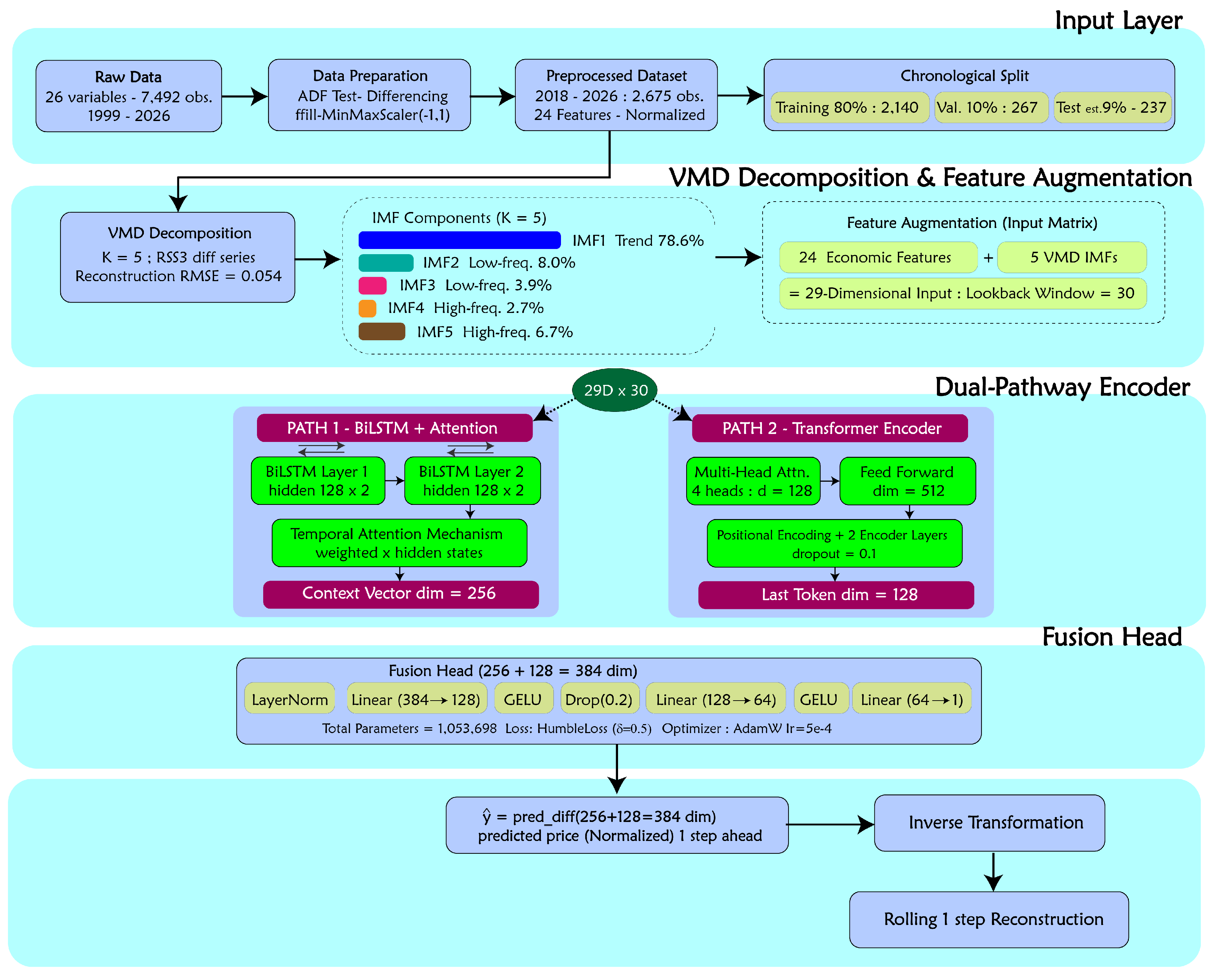

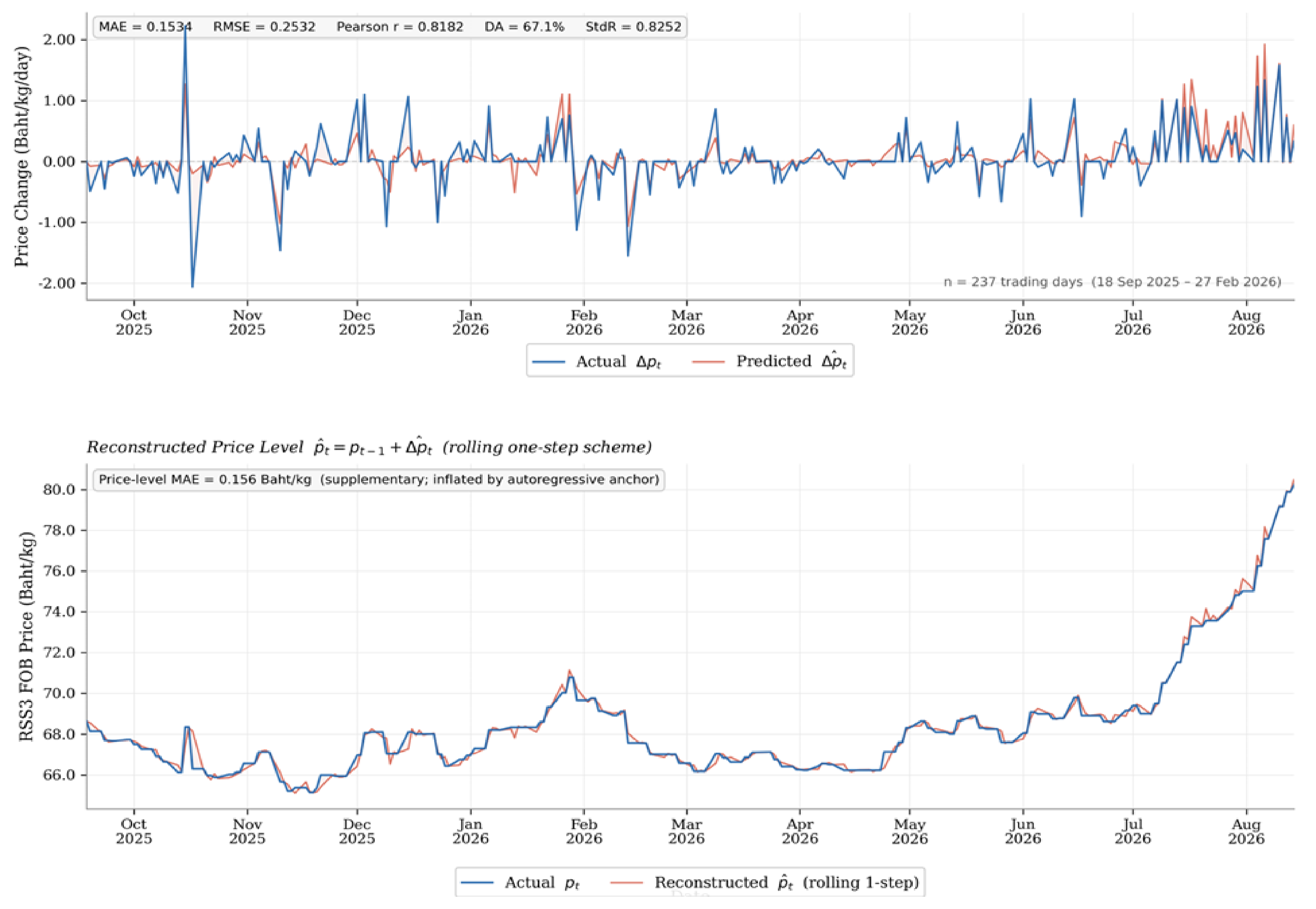

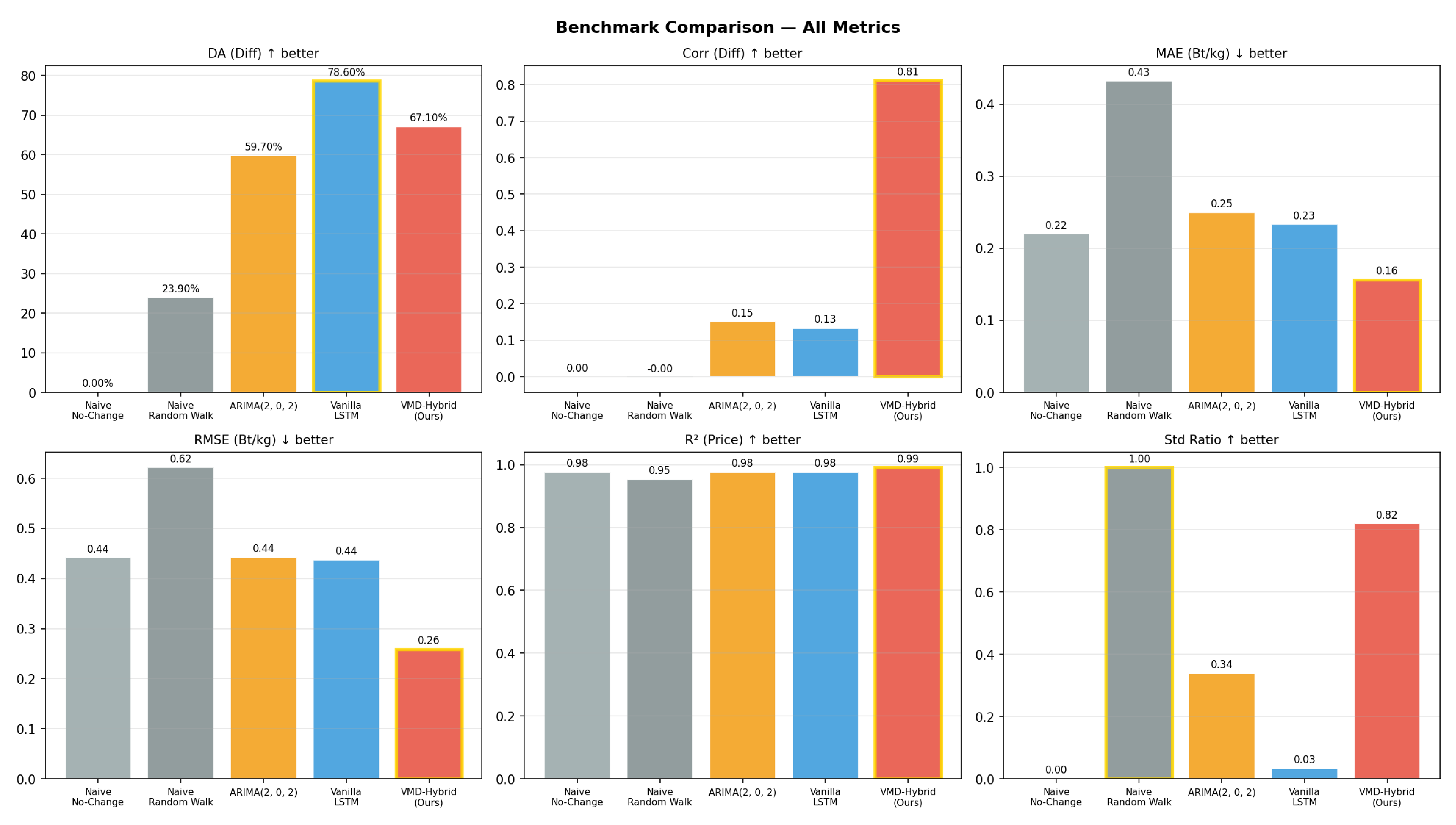

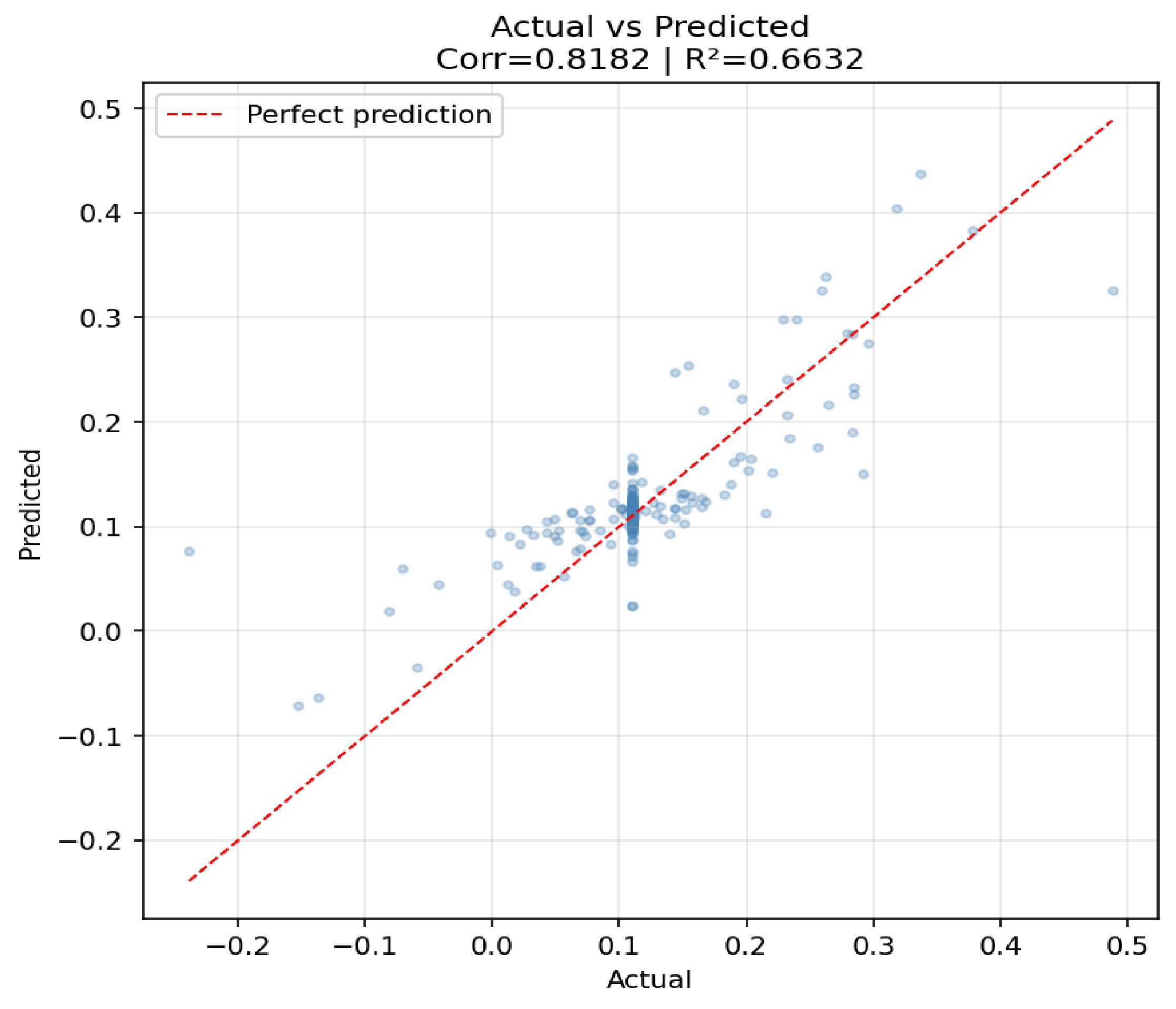

To address these gaps, this study proposes the VMD–Hybrid BiLSTM–Transformer, a dual-pathway forecasting framework for daily RSS3 FOB natural rubber price changes using a 24-feature economic input set over the period 2018–2026. The principal contributions of this study are threefold. First, rather than predicting each VMD intrinsic mode function (IMF) independently and aggregating forecasts, an approach demonstrated here to produce variance collapse on differenced commodity price series (StdR – ), all five IMF series are appended directly to the economic feature matrix, preserving multi-scale frequency information within a single forward pass. Second, a cross-stage feature availability analysis is conducted to establish the minimum sufficient input configuration for reliable forecasting, providing a reproducible validation framework for practitioners operating under data constraints. Third, the limitations of directional accuracy (DA) as a standalone evaluation metric are formally demonstrated through confusion matrix and variance ratio diagnostics, and a suite of complementary metrics, including Pearson correlation and the Standard Deviation Ratio (StdR), is proposed for evaluating differenced commodity price models.

The theoretical basis for this metric combination is established by Taylor [50] [pp. 7183–7184], who demonstrates that correlation and variance ratio are not substitutes but complementary descriptors: correlation measures co-movement in shape, while the standard deviation ratio measures amplitude fidelity, and a reduction in RMS error cannot be taken as evidence of improved skill if forecast variance has been suppressed in the process. This evaluation critique aligns with a broader methodological literature demonstrating that directional accuracy is structurally vulnerable to benchmark definition and class imbalance: Bürgi [51] shows that DA is not a self-sufficient concept without knowledge of magnitude sensitivity and user objective, and McCarthy and Snudden [52] [pp. 1–7] demonstrate that temporal aggregation can mechanically inflate success ratios beyond 0.5 even under a random walk, generating pseudo-skill where no genuine predictability exists. Beyond benchmark sensitivity, Costantini et al. [53] show that DA becomes materially informative only when paired with magnitude-sensitive directional value measures, and Costantini and Kunst [54] demonstrate that embedding DA directly into model-selection criteria seldom yields robust gains and can worsen MSE performance, implying that DA should function as a supplementary diagnostic rather than a primary evaluation objective.

The remainder of this paper is organized as follows:

Section 2 describes the data, preprocessing pipeline, decomposition procedure, model architecture, and evaluation protocol; Section 3 reports the empirical results; Section 4 discusses the economic interpretation and practical implications; and Section 5 concludes with limitations and directions for future research.