Submitted: 16 April 2026

Posted: 20 April 2026


Abstract

Keywords:

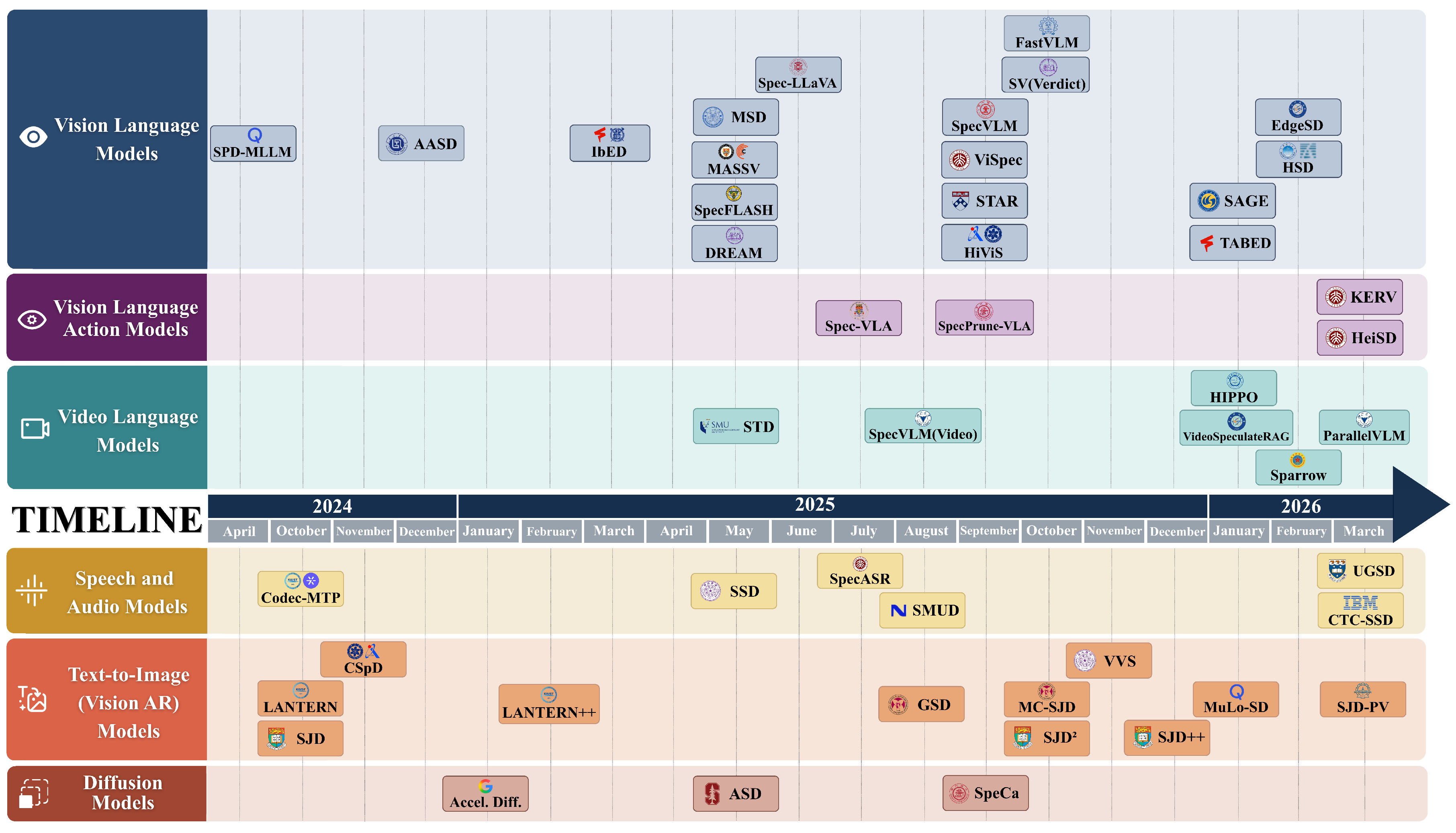

1. Introduction

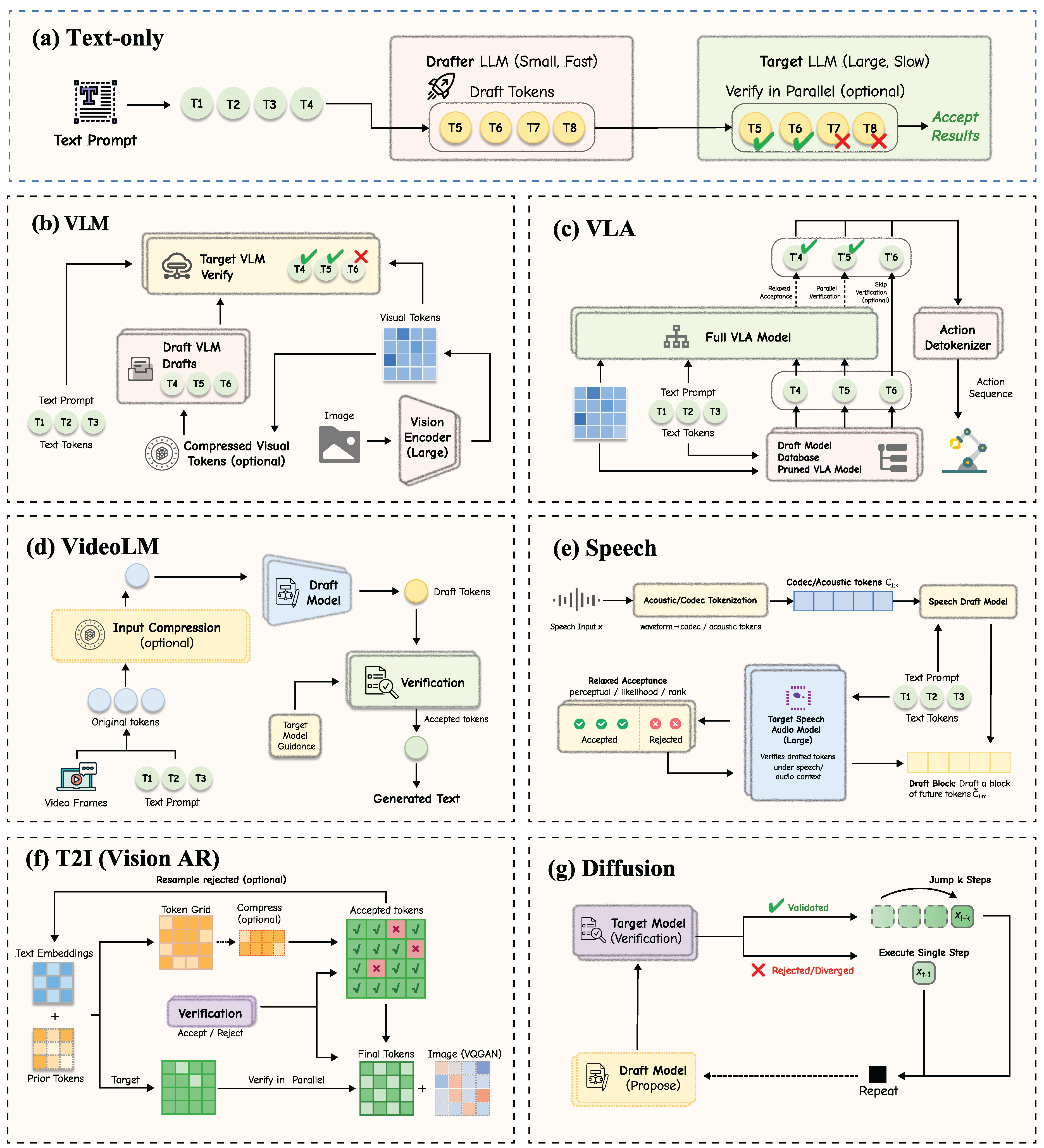

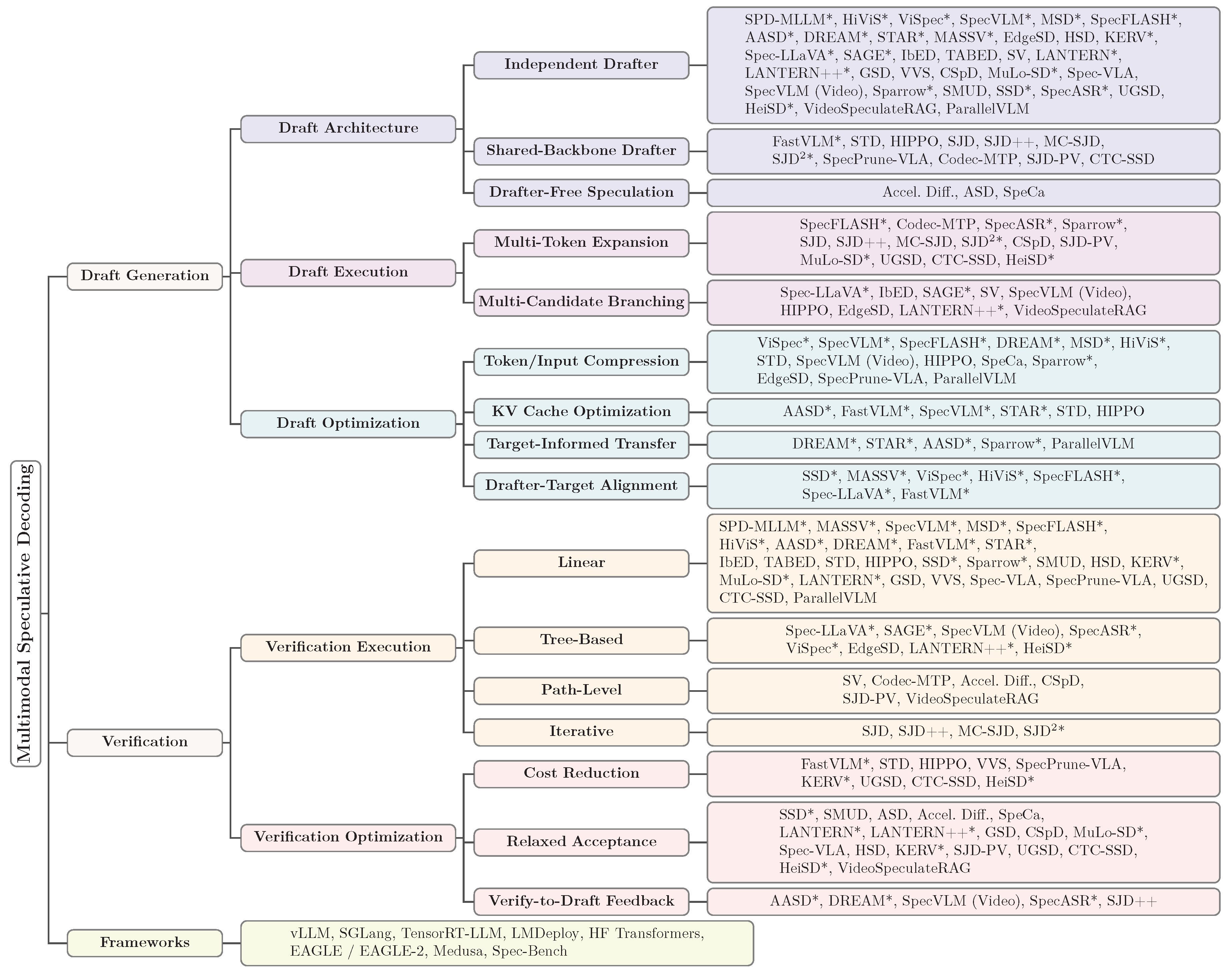

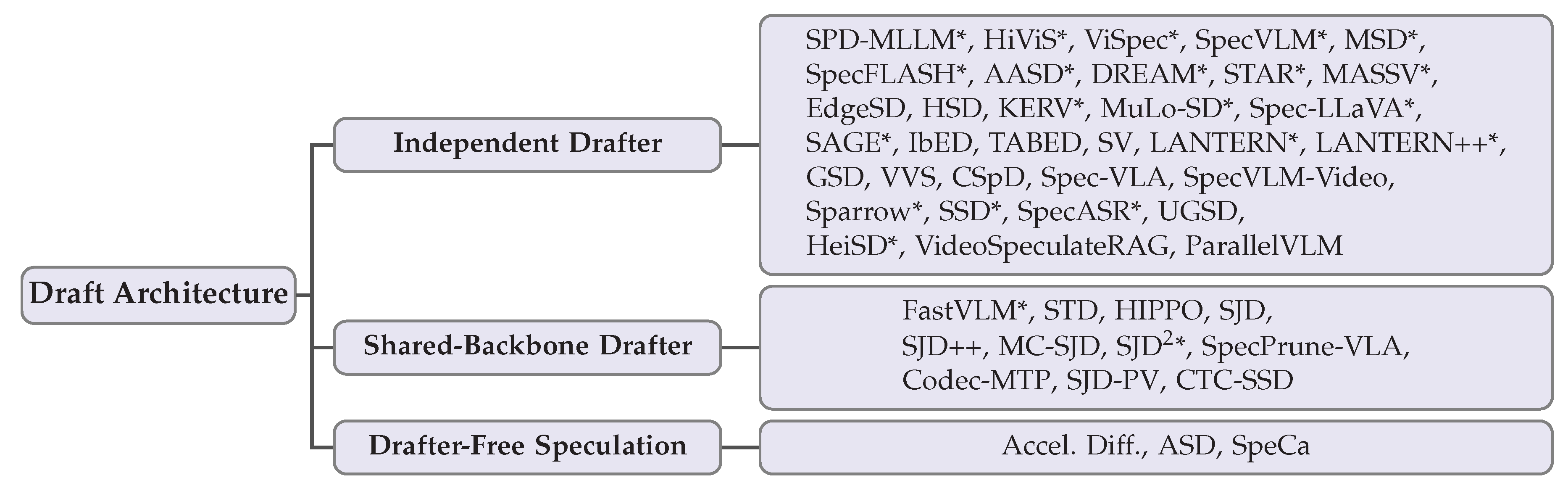

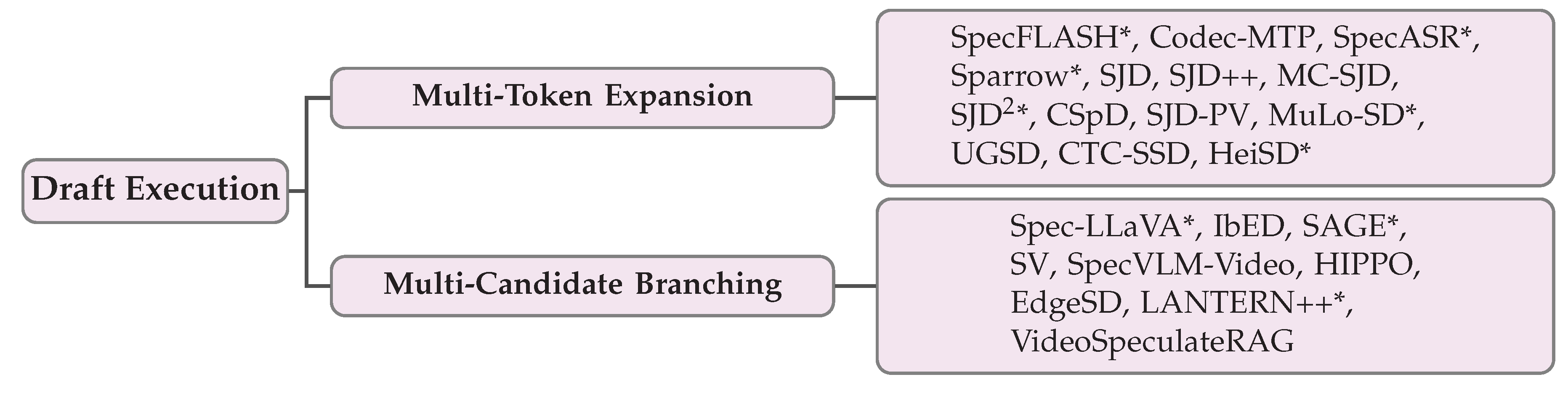

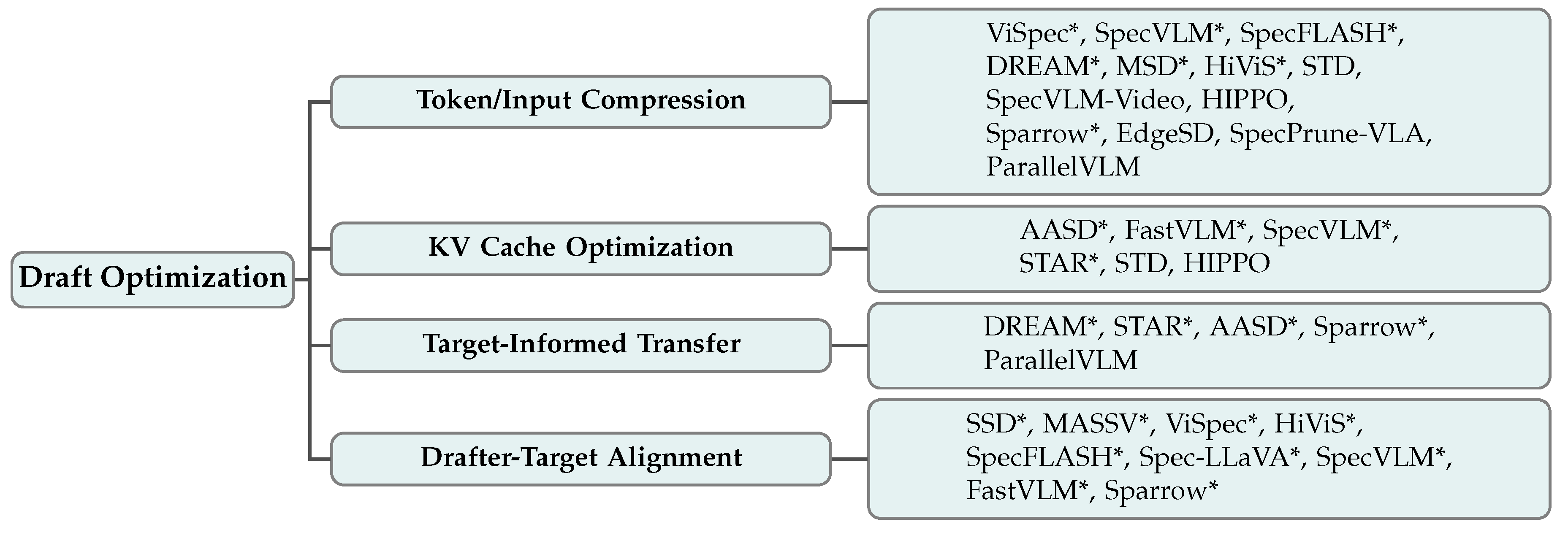

- Draft Generation (Section 3): The draft generation stage determines how candidate tokens are produced efficiently. We survey methods along three dimensions: draft architecture (independent drafters, shared-backbone drafters, and drafter-free speculation), draft execution strategies (multi-token expansion and multi-candidate branching), and draft optimization techniques related to token compression, KV cache optimization, target-informed transfer, and drafter-target alignment.
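
Of the draft optimizations listed above, attention-based token compression is the easiest to show in isolation. The sketch below keeps only the visual tokens that receive the most attention from text tokens before the sequence is handed to a drafter. It is a generic illustration, not the method of any specific surveyed paper. The attention matrix is assumed to be precomputed (for example, averaged over heads), and `prune_visual_tokens` and `keep_ratio` are hypothetical names.

```python
import numpy as np


def prune_visual_tokens(visual_tokens: np.ndarray,
                        text_to_visual_attn: np.ndarray,
                        keep_ratio: float = 0.25) -> tuple[np.ndarray, np.ndarray]:
    """Keep the visual tokens that text tokens attend to most (hypothetical helper).

    visual_tokens:       (num_visual, hidden) visual embeddings.
    text_to_visual_attn: (num_text, num_visual) attention weights from text
                         queries to visual keys, assumed precomputed.
    keep_ratio:          fraction of visual tokens to keep (assumed knob).
    Returns the kept embeddings in their original order and their indices.
    """
    num_visual = visual_tokens.shape[0]
    num_keep = max(1, int(round(keep_ratio * num_visual)))
    # Score each visual token by the total attention it receives from text.
    scores = text_to_visual_attn.sum(axis=0)
    # Take the top-scoring indices, then re-sort them to keep spatial order.
    keep_idx = np.sort(np.argsort(scores)[-num_keep:])
    return visual_tokens[keep_idx], keep_idx


# Toy example: 16 visual tokens, 4 text tokens that attend to only 4 of them.
rng = np.random.default_rng(0)
vis = rng.normal(size=(16, 8))
attn = np.zeros((4, 16))
attn[:, [2, 5, 11, 14]] = 1.0
kept, idx = prune_visual_tokens(vis, attn, keep_ratio=0.25)
print(idx)  # [ 2  5 11 14]
```

Re-sorting the kept indices preserves the original spatial order of the surviving tokens, which matters if the drafter relies on positional information.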

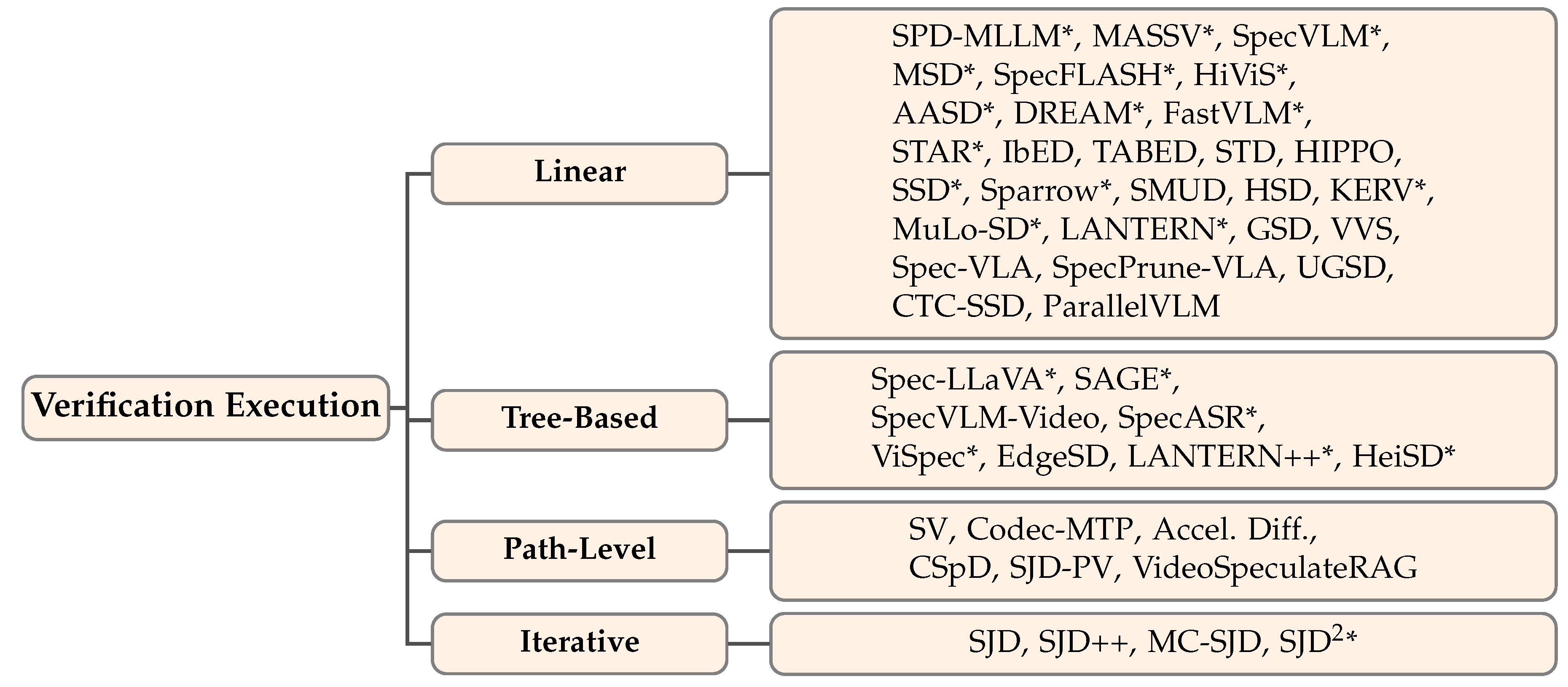

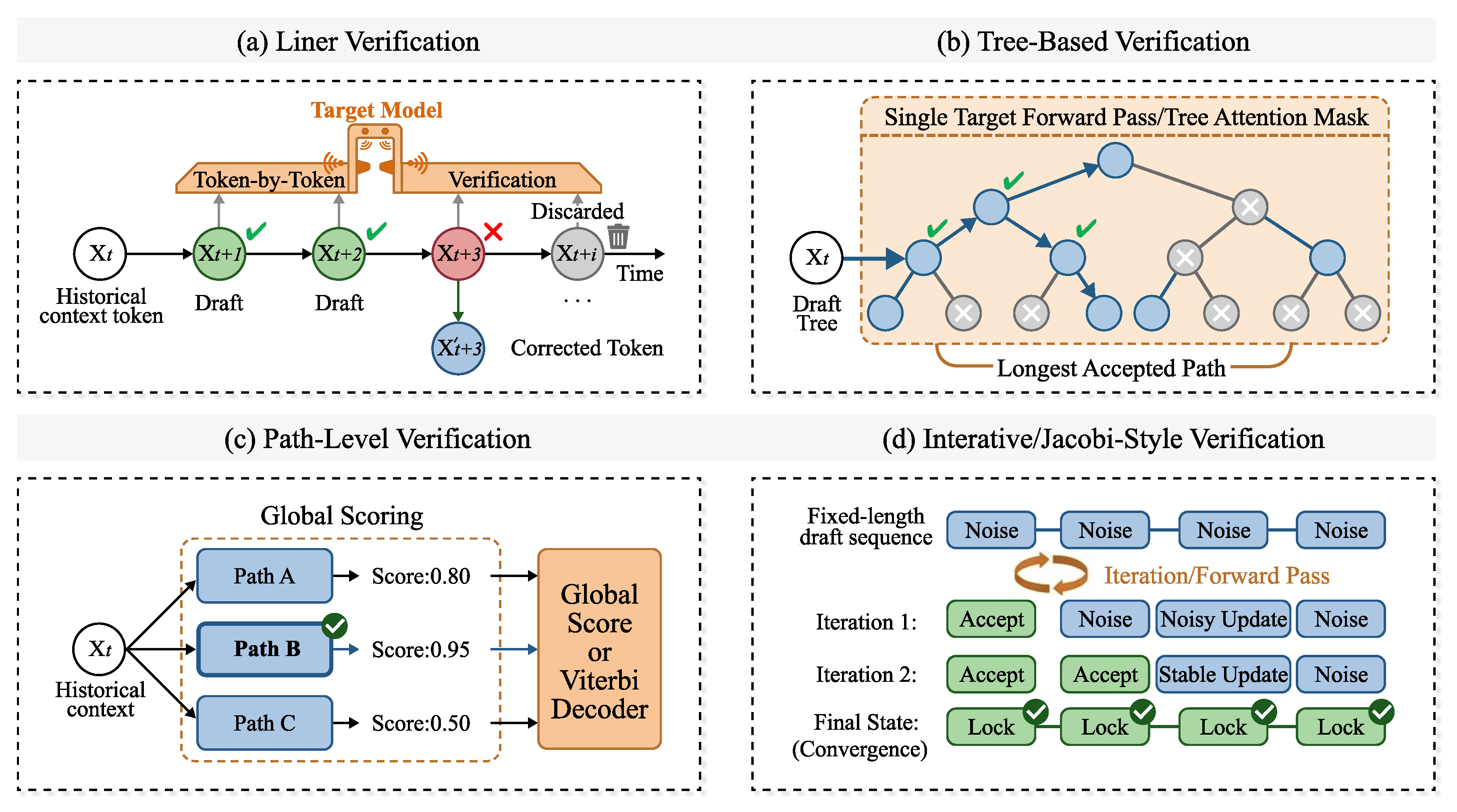

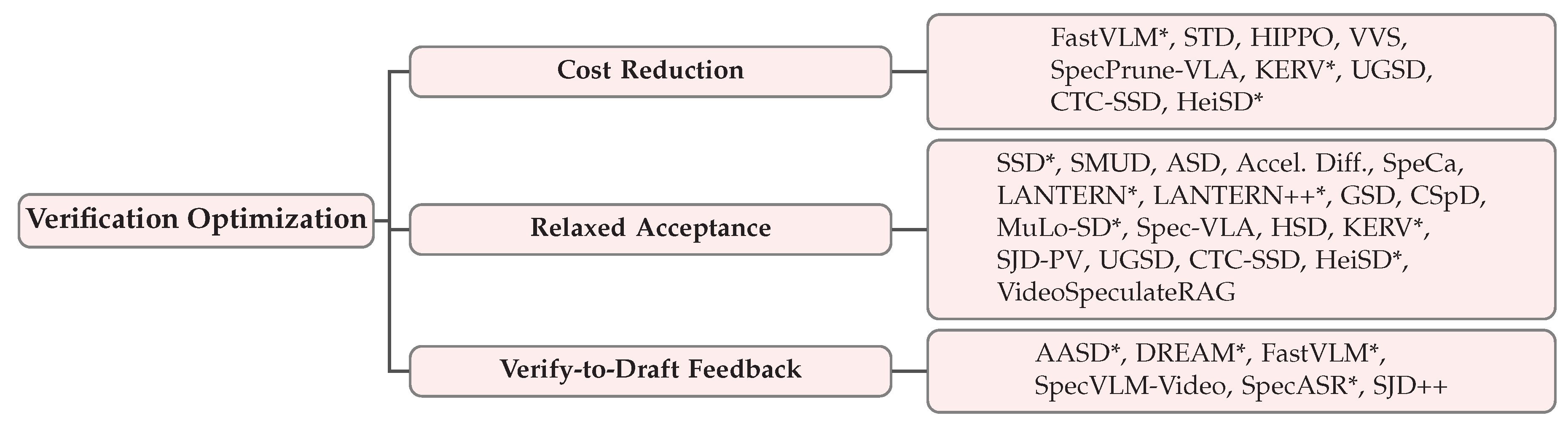

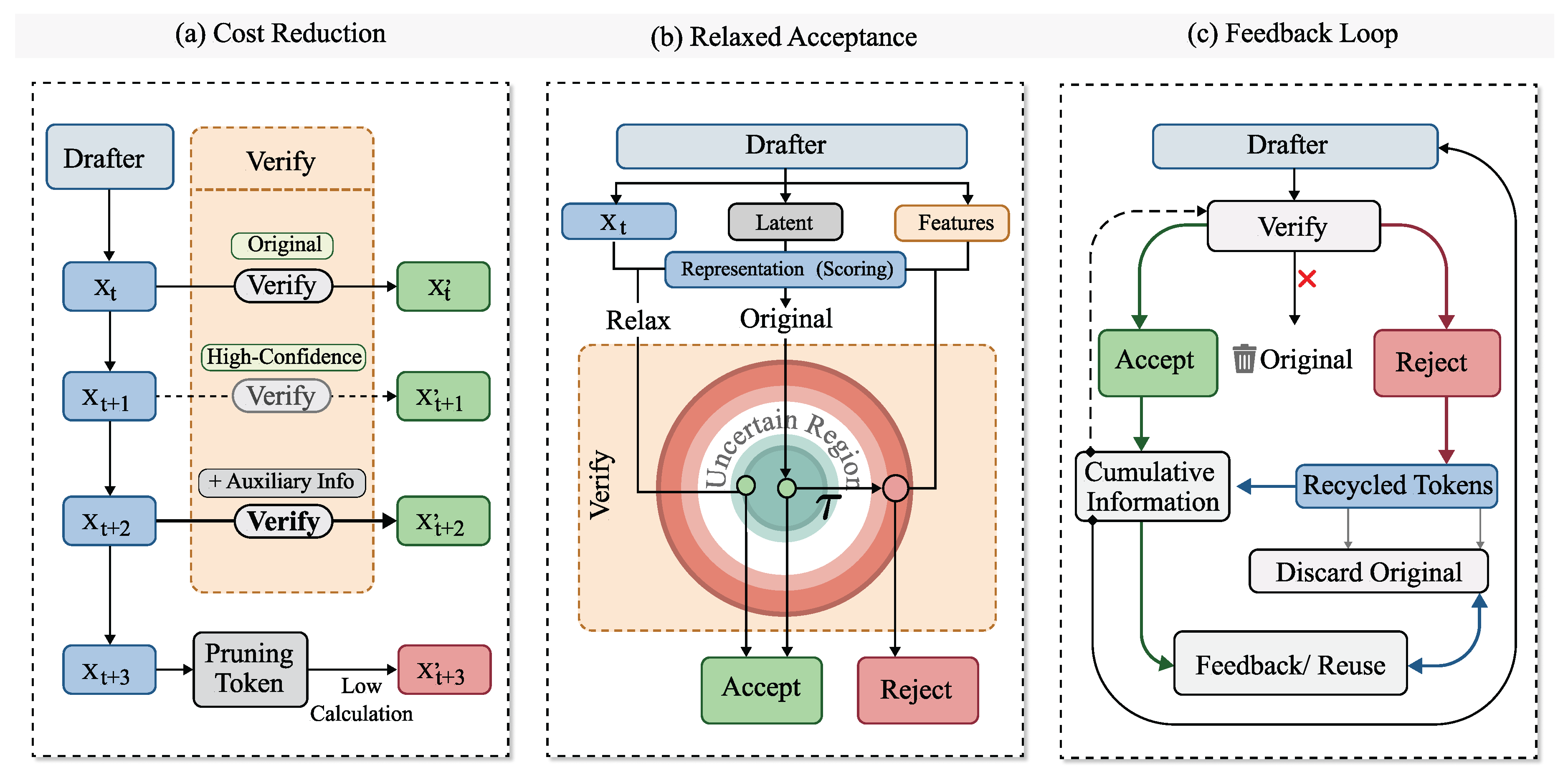

- Verification and Acceptance (Section 4): The verification stage determines how drafted tokens are validated against the target model. We survey verification execution strategies (linear, tree-based, path-level, and iterative verification) and optimization techniques including cost reduction, relaxed acceptance criteria, and verify-to-draft feedback loops.

- Inference Frameworks (Section 5): We survey existing frameworks that provide system-level support for speculative decoding, covering their unique features and optimizations for multimodal workloads.
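
As a reference point for the verification strategies above, the strict linear rule of standard speculative sampling [1,2] fits in a short function. The sketch assumes that the drafter's distributions q and the target's distributions p for every drafted position are already available as NumPy arrays (the function and argument names are illustrative). Each drafted token is accepted with probability min(1, p/q). The first rejected position is resampled from the normalized residual max(0, p - q), and a fully accepted block earns one extra token from the target.

```python
import numpy as np


def verify_linear(draft_tokens, draft_probs, target_probs, rng):
    """Strict speculative-sampling verification for one drafted block.

    draft_tokens: length-gamma sequence of token ids proposed by the drafter.
    draft_probs:  (gamma, vocab) drafter distributions q_i used to sample them.
    target_probs: (gamma + 1, vocab) target distributions p_i from one
                  parallel forward pass; the extra row yields a bonus token.
    Returns the emitted tokens: the accepted prefix plus one extra token.
    """
    emitted = []
    for i, tok in enumerate(draft_tokens):
        p, q = target_probs[i, tok], draft_probs[i, tok]
        # Accept with probability min(1, p/q); q > 0 because tok was sampled from q.
        if rng.random() < min(1.0, p / q):
            emitted.append(int(tok))
            continue
        # Rejected: resample from the residual distribution norm(max(0, p - q)).
        residual = np.maximum(target_probs[i] - draft_probs[i], 0.0)
        residual /= residual.sum()
        emitted.append(int(rng.choice(len(residual), p=residual)))
        return emitted
    # Every drafted token accepted: sample a bonus token from the next target row.
    emitted.append(int(rng.choice(target_probs.shape[1], p=target_probs[-1])))
    return emitted


rng = np.random.default_rng(0)
q = np.array([[0.6, 0.4]])              # drafter distribution at one position
p = np.array([[0.3, 0.7], [0.5, 0.5]])  # target rows for that position + bonus
draft = [int(rng.choice(2, p=q[0]))]
print(verify_linear(draft, q, p, rng))
```

The loop is sequential only over the acceptance tests. In practice, all target distributions come from one parallel forward pass, which is where the speedup originates.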

2. Background

2.1. Standard Speculative Decoding

2.2. Multimodal Generation Architectures

3. Draft Generation Stage

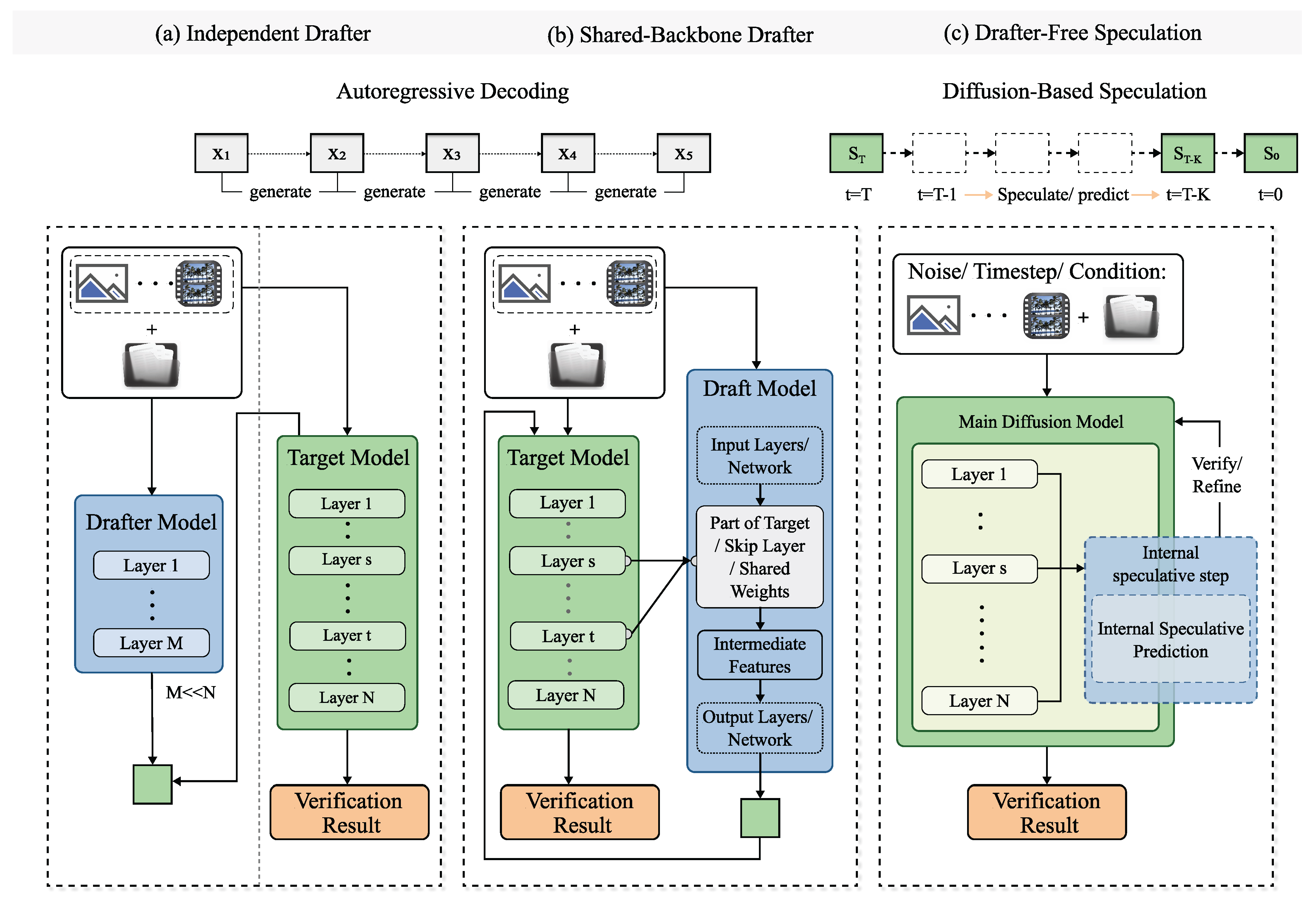

3.1. Draft Architecture

3.1.1. Independent Drafter

Text-Only Independent Drafters

Vision–Language Independent Drafters

Vision–Language–Action Independent Drafters

Video–Language Independent Drafters

Speech Independent Drafters

Text-to-Image (Vision AR) Independent Drafters

3.1.2. Shared-Backbone Drafter

3.1.3. Drafter-Free Speculation

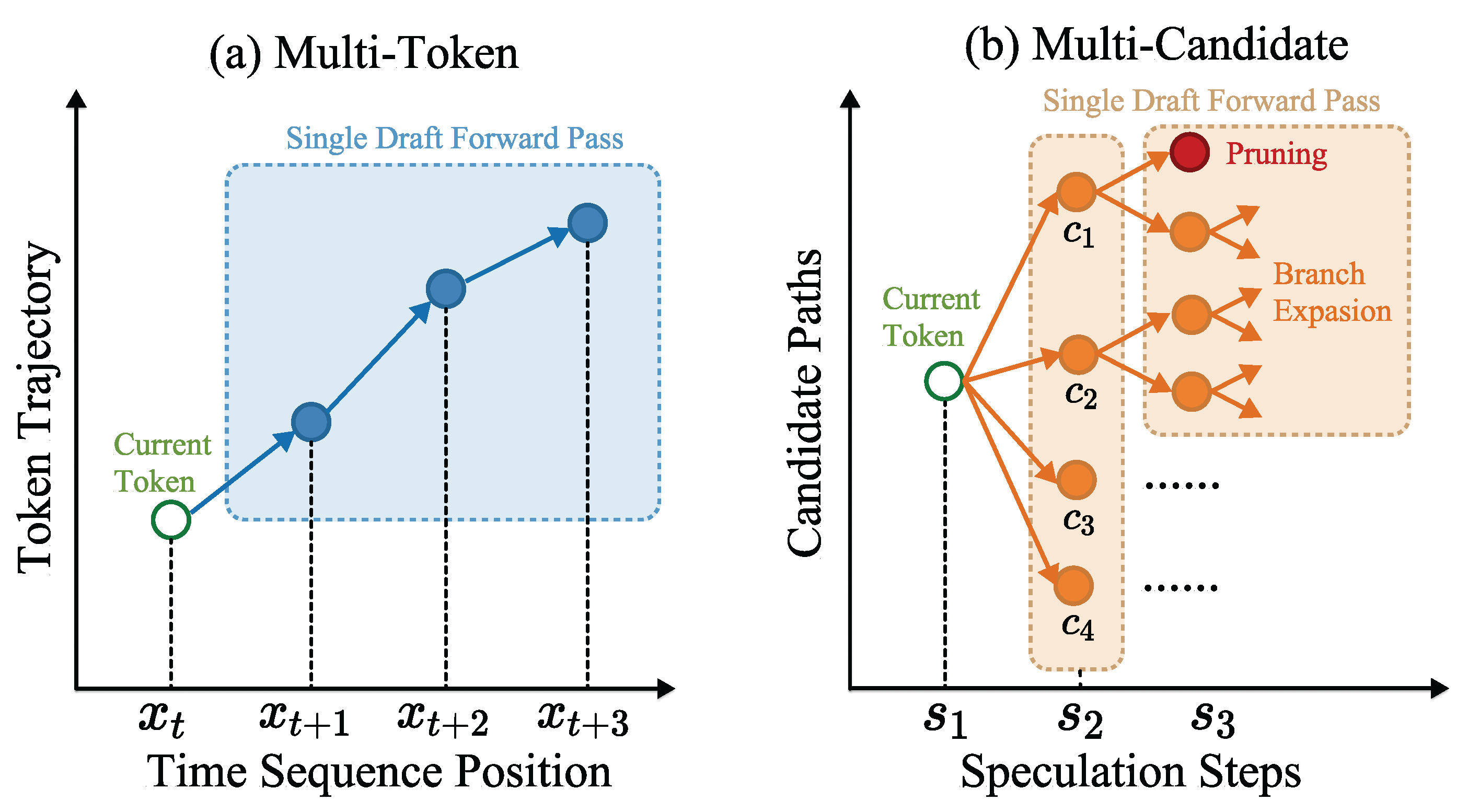

3.2. Draft Execution

3.2.1. Multi-Token Expansion

3.2.2. Multi-Candidate Branching

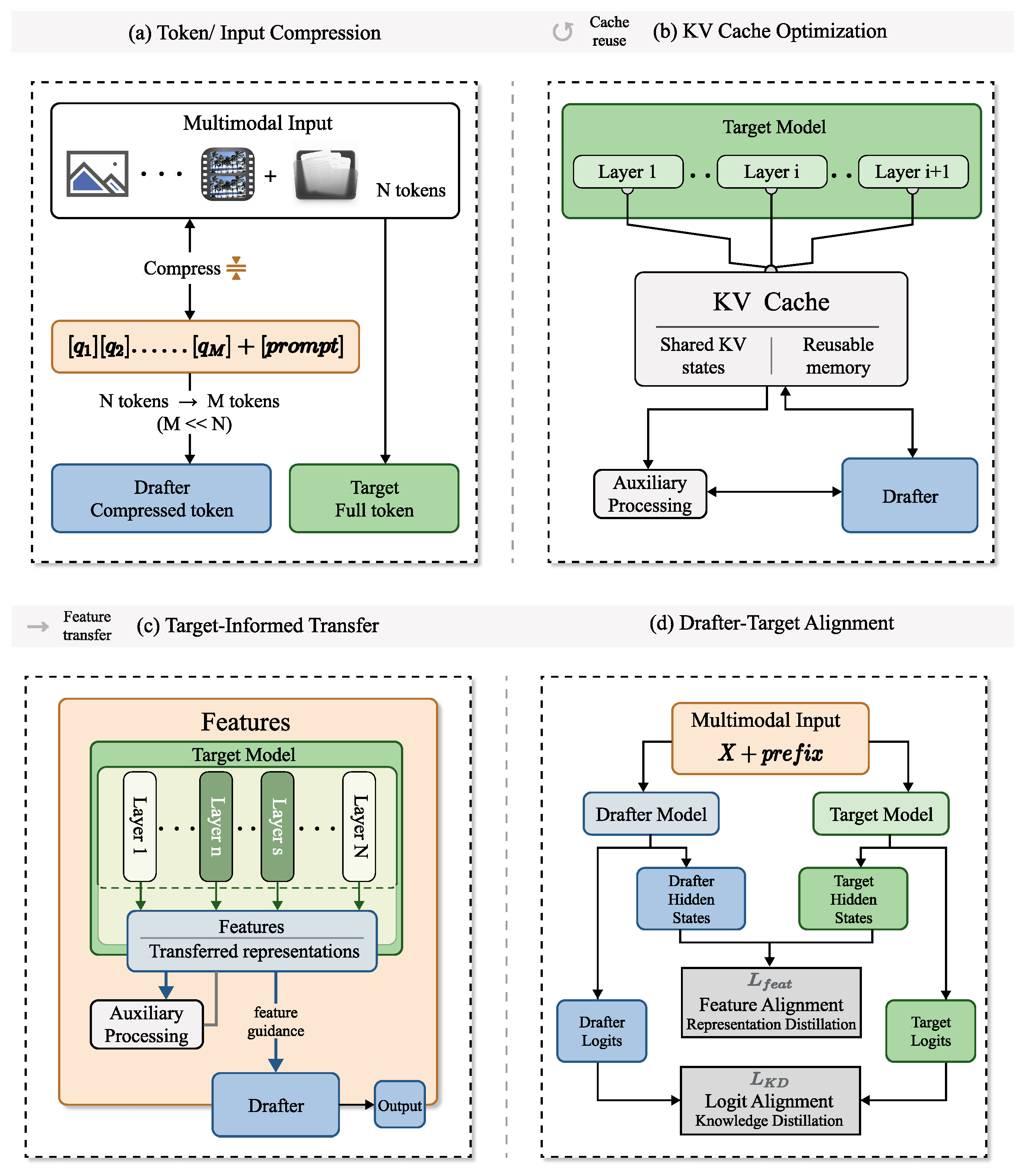

3.3. Draft Optimization

3.3.1. Token/Input Compression

Semantic Visual Compression

Adaptive and Dynamic Compression

Attention-Based Token Pruning

Visual Computation Elimination

Similarity-Based Token Selection

3.3.2. KV Cache Optimization

3.3.3. Target-Informed Transfer

3.3.4. Drafter-Target Alignment

Architectural Inheritance

Feature-Level Distillation

Representation Alignment

4. Verification and Acceptance Stage

4.1. Verification Execution

4.1.1. Linear Verification (Standard)

4.1.2. Tree-Based Verification

4.1.3. Path-Level Verification

4.1.4. Iterative / Jacobi-Style Verification

4.2. Verification Optimization

4.2.1. Cost Reduction

Confidence-Based Verification Skipping

Cross-Stage Computation Reuse

Input Pruning for Shared-Backbone Verification

4.2.2. Relaxed Acceptance

Perceptual and Functional Tolerance

Latent-Space and Continuous-Density Acceptance

Coupling-Based and Exchangeability-Based Acceptance

Forecast Gating

Dual-Hypothesis Boundary Detection

4.2.3. Verify-to-Draft Feedback

Training-Time Alignment

Verifier Attention as Pruning Signal

Draft Recycling

5. Frameworks and Systems

vLLM

SGLang

TensorRT

TensorRT-LLM

TensorRT Edge-LLM

LMDeploy

Hugging Face Transformers

Speculative Decoding Algorithm Libraries

Discussion

6. Comparison and Benchmarking

6.1. Cross-Modal Comparative Summary

6.2. Unified VLM Benchmarking

6.3. Other Modalities Benchmarking

7. Open Challenges and Future Directions

7.1. The Multimodal Drafting Bottleneck

7.2. Extended Sequence Lengths and Memory Wall

7.3. Rigorous Verification Theory for Continuous Spaces

7.4. Strict Real-Time Constraints: Audio Streaming and VLA Control

7.5. Cross-Pollinating Representation-Level Verification to VLMs

7.6. Algorithm–Hardware Co-Design

7.7. Evaluation Standardization and Reproducibility

8. Conclusions

References

- Leviathan, Y.; Kalman, M.; Matias, Y. Fast inference from transformers via speculative decoding. In Proceedings of the International Conference on Machine Learning; PMLR, 2023; pp. 19274–19286.

- Chen, C.; Borgeaud, S.; Irving, G.; Lespiau, J.B.; Sifre, L.; Jumper, J. Accelerating large language model decoding with speculative sampling. arXiv 2023, arXiv:2302.01318.

- Xia, H.; Yang, Z.; Dong, Q.; Wang, P.; Li, Y.; Ge, T.; Liu, T.; Li, W.; Sui, Z. Unlocking efficiency in large language model inference: A comprehensive survey of speculative decoding. In Findings of the Association for Computational Linguistics: ACL 2024, 2024; pp. 7655–7671.

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An image is worth 16x16 words: Transformers for image recognition at scale. arXiv 2020, arXiv:2010.11929.

- Liu, H.; Li, C.; Wu, Q.; Lee, Y.J. Visual instruction tuning. Advances in Neural Information Processing Systems 2023, 36, 34892–34916.

- Qwen Team. Qwen-VL: A Versatile Vision-Language Model for Understanding, Localization, Text Reading, and Beyond. arXiv 2023, arXiv:2308.12966.

- Zitkovich, B.; Yu, T.; Xu, S.; Xu, P.; Xiao, T.; Xia, F.; Wu, J.; Wohlhart, P.; Welker, S.; Wahid, A.; et al. RT-2: Vision-language-action models transfer web knowledge to robotic control. In Proceedings of the Conference on Robot Learning; PMLR, 2023; pp. 2165–2183.

- Lin, B.; Ye, Y.; Zhu, B.; Cui, J.; Ning, M.; Jin, P.; Yuan, L. Video-LLaVA: Learning united visual representation by alignment before projection. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, 2024; pp. 5971–5984.

- Défossez, A.; Copet, J.; Synnaeve, G.; Adi, Y. High fidelity neural audio compression. arXiv 2022, arXiv:2210.13438.

- Esser, P.; Rombach, R.; Ommer, B. Taming transformers for high-resolution image synthesis. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021; pp. 12873–12883.

- Ho, J.; Jain, A.; Abbeel, P. Denoising diffusion probabilistic models. Advances in Neural Information Processing Systems 2020, 33, 6840–6851.

- Song, Y.; Sohl-Dickstein, J.; Kingma, D.P.; Kumar, A.; Ermon, S.; Poole, B. Score-based generative modeling through stochastic differential equations. arXiv 2020, arXiv:2011.13456.

- Gagrani, M.; Goel, R.; Jeon, W.; Park, J.; Lee, M.; Lott, C. On speculative decoding for multimodal large language models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024; pp. 8285–8289.

- Kang, J.; Shu, H.; Li, W.s.; Zhai, Y.; Chen, X. ViSpec: Accelerating vision-language models with vision-aware speculative decoding. arXiv 2025, arXiv:2509.15235.

- Li, J.; Li, D.; Savarese, S.; Hoi, S. BLIP-2: Bootstrapping language-image pre-training with frozen image encoders and large language models. In Proceedings of the International Conference on Machine Learning; PMLR, 2023; pp. 19730–19742.

- Huang, H.; Yang, F.; Liu, Z.; Yin, X.; Li, D.; Ren, P.; Barsoum, E. SpecVLM: Fast Speculative Decoding in Vision-Language Models. arXiv 2025, arXiv:2509.11815.

- Xie, Z.; Wang, P.; Qiu, S.; Cheng, J. HiViS: Hiding Visual Tokens from the Drafter for Speculative Decoding in Vision-Language Models. arXiv 2025, arXiv:2509.23928.

- Wang, Z.; Li, R.; Du, H.; Zhou, J.T.; Zhang, Y.; Yang, X. FLASH: Latent-Aware Semi-Autoregressive Speculative Decoding for Multimodal Tasks. arXiv 2025, arXiv:2505.12728.

- Lin, L.; Lin, Z.; Zeng, Z.; Ji, R. Speculative decoding reimagined for multimodal large language models. arXiv 2025, arXiv:2505.14260.

- Ganesan, M.; Segal, S.; Aggarwal, A.; Sinnadurai, N.; Lie, S.; Thangarasa, V. MASSV: Multimodal adaptation and self-data distillation for speculative decoding of vision-language models. arXiv 2025, arXiv:2505.10526.

- Yang, C.; Chen, R.; Zhang, M.; Pang, W.; Chen, Y.; Xu, R.; Fu, K.; Wang, C.; Gao, L. AASD: Accelerate Inference by Aligning Speculative Decoding in Multimodal Large Language Models. In Proceedings of the 2025 62nd ACM/IEEE Design Automation Conference (DAC); IEEE, 2025; pp. 1–7.

- Hu, Y.; Xia, T.; Liu, Z.; Raman, R.; Liu, X.; Bao, B.; Sather, E.; Thangarasa, V.; Zhang, S.Q. DREAM: Drafting with refined target features and entropy-adaptive cross-attention fusion for multimodal speculative decoding. arXiv 2025, arXiv:2505.19201.

- Liu, Z.; Hu, Y.; Xia, T.; Bao, B.; Sather, E.; Thangarasa, V.; Zhang, S.Q. STAR: Speculative Decoding with Searchable Drafting and Target-Aware Refinement for Multimodal Generation. In OpenReview, 2025.

- Cai, H.; Gan, C.; Wang, T.; Zhang, Z.; Han, S. Once-for-all: Train one network and specialize it for efficient deployment. arXiv 2019, arXiv:1908.09791.

- Lee, M.; Kang, W.; Ahn, B.; Classen, C.; Yan, M.; Koo, H.I.; Lee, K. In-batch Ensemble Drafting: Robust Speculative Decoding for LVLMs. In Proceedings of the First Workshop on Scalable Optimization for Efficient and Adaptive Foundation Models, 2025.

- Lee, M.; Kang, W.; Ahn, B.; Classen, C.; Galim, K.; Oh, S.; Yan, M.; Koo, H.I.; Lee, K. TABED: Test-Time Adaptive Ensemble Drafting for Robust Speculative Decoding in LVLMs. arXiv 2026, arXiv:2601.20357.

- Huang, H.; Zhan, W.; Duan, H.; Peng, K.; Min, G.; Zhao, Z.; Zhao, Z.; Ye, Y. EdgeSD: Efficient Speculative Decoding with Vision-Decoding Disaggregation for MLLM Inference in Edge-Cloud Networks. IEEE Transactions on Mobile Computing 2026.

- Liao, W.; Li, H.; Xie, P.; Cai, X.; Shen, Y.; Xin, Y.; Qin, Q.; Ye, S.; Li, T.; Hu, M.; et al. Training-Free Acceleration for Document Parsing Vision-Language Model with Hierarchical Speculative Decoding. arXiv 2026, arXiv:2602.12957.

- Wang, S.; Yu, R.; Yuan, Z.; Yu, C.; Gao, F.; Wang, Y.; Wong, D.F. Spec-VLA: Speculative Decoding for Vision-Language-Action Models with Relaxed Acceptance. arXiv 2025, arXiv:2507.22424.

- Zheng, Z.; Mao, Z.; Li, M.; Chen, J.; Sun, X.; Zhang, Z.; Cao, D.; Mei, H.; Chen, X. KERV: Kinematic-Rectified Speculative Decoding for Embodied VLA Models. In Proceedings of the 63rd ACM/IEEE Design Automation Conference (DAC), 2026.

- Zheng, Z.; Mao, Z.; Tian, S.; Li, M.; Chen, J.; Sun, X.; Zhang, Z.; Liu, X.; Cao, D.; Mei, H.; et al. HeiSD: Hybrid Speculative Decoding for Embodied Vision-Language-Action Models with Kinematic Awareness. arXiv 2026, arXiv:2603.17573.

- Zhang, L.; Zhang, Z.; Hong, W.; Qiao, P.; Li, D. Sparrow: Text-Anchored Window Attention with Visual-Semantic Glimpsing for Speculative Decoding in Video LLMs. arXiv 2026, arXiv:2602.15318.

- Li, G.; Liu, P. FastV-RAG: Towards Fast and Fine-Grained Video QA with Retrieval-Augmented Generation. arXiv 2026, arXiv:2601.01513.

- Kong, Q.; Shen, Y.; Ji, Y.; Li, H.; Wang, C. ParallelVLM: Lossless Video-LLM Acceleration with Visual Alignment Aware Parallel Speculative Decoding. arXiv 2026, arXiv:2603.19610.

- Lin, Z.; Zhang, Y.; Yuan, Y.; Yan, Y.; Liu, J.; Wu, Z.; Hu, P.; Yu, Q. Accelerating Autoregressive Speech Synthesis Inference With Speech Speculative Decoding. arXiv 2025, arXiv:2505.15380.

- Wei, L.; Zhong, S.; Xu, S.; Wang, R.; Huang, R.; Li, M. SpecASR: Accelerating LLM-based Automatic Speech Recognition via Speculative Decoding. In Proceedings of the 2025 62nd ACM/IEEE Design Automation Conference (DAC); IEEE, 2025; pp. 1–7.

- Okabe, K.; Yamamoto, H. Simultaneous Masked and Unmasked Decoding with Speculative Decoding Masking for Fast ASR without Accuracy Loss. In Proceedings of Interspeech 2025, 2025; pp. 634–638.

- Xue, X.; Lu, J.; Gao, Y.; Huang, G.; Dang, T.; Jia, H. Edge–Cloud Collaborative Speech Emotion Captioning via Token-Level Speculative Decoding in Audio-Language Models. arXiv 2026, arXiv:2603.11397.

- Jang, D.; Park, S.; Yang, J.Y.; Jung, Y.; Yun, J.; Kundu, S.; Kim, S.Y.; Yang, E. LANTERN: Accelerating Visual Autoregressive Models with Relaxed Speculative Decoding. arXiv 2025, arXiv:2410.03355.

- Jang, D.; Park, S.; Yang, J.Y.; Jung, Y.; Yun, J.; Kundu, S.; Kim, S.Y.; Yang, E. LANTERN++: Enhanced Relaxed Speculative Decoding with Static Tree Drafting for Visual Auto-regressive Models. In ICLR 2025 SCOPE Workshop, 2025.

- So, J.; Shin, J.; Kook, H.; Park, E. Grouped Speculative Decoding for Autoregressive Image Generation. arXiv 2025, arXiv:2508.07747.

- Dong, H.; Li, Y.; Lu, R.; Tang, C.; Xia, S.T.; Wang, Z. VVS: Accelerating Speculative Decoding for Visual Autoregressive Generation via Partial Verification Skipping. arXiv 2025, arXiv:2511.13587.

- Wang, Z.; Zhang, R.; Ding, K.; Yang, Q.; Li, F.; Xiang, S. Continuous speculative decoding for autoregressive image generation. arXiv 2024, arXiv:2411.11925.

- Peruzzo, E.; Sautière, G.; Habibian, A. Multi-Scale Local Speculative Decoding for Image Generation. arXiv 2026, arXiv:2601.05149.

- Bajpai, D.J.; Hanawal, M.K. FastVLM: Self-Speculative Decoding for Fast Vision-Language Model Inference. In Proceedings of the 14th International Joint Conference on Natural Language Processing and the 4th Conference of the Asia-Pacific Chapter of the Association for Computational Linguistics, 2025; pp. 1166–1183.

- Wang, H.; Xu, J.; Pan, J.; Zhou, Y.; Dai, G. SpecPrune-VLA: Accelerating Vision-Language-Action Models via Action-Aware Self-Speculative Pruning. arXiv 2025, arXiv:2509.04043.

- Zhang, X.; Du, C.; Yu, S.; Wu, J.; Zhang, F.; Gao, W.; Liu, Q. Sparse-to-Dense: A Free Lunch for Lossless Acceleration of Video Understanding in LLMs. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (Volume 2), 2025; pp. 734–742.

- Lv, Q.; Liu, T.; Wu, W.; Xu, X.; Zhou, B.; Wu, F.; Zhang, C. HIPPO: Accelerating Video Large Language Models Inference via Holistic-aware Parallel Speculative Decoding. arXiv 2026, arXiv:2601.08273.

- Nguyen, T.D.; Kim, J.H.; Choi, J.; Choi, S.; Park, J.; Lee, Y.; Chung, J.S. Accelerating codec-based speech synthesis with multi-token prediction and speculative decoding. In Proceedings of the ICASSP 2025 - 2025 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP); IEEE, 2025; pp. 1–5.

- Saon, G.; Thomas, S.; Fukuda, T.; Nagano, T.; Dekel, A.; Lastras, L. Self-Speculative Decoding for LLM-based ASR with CTC Encoder Drafts. arXiv 2026, arXiv:2603.11243.

- Teng, Y.; Shi, H.; Liu, X.; Ning, X.; Dai, G.; Wang, Y.; Li, Z.; Liu, X. Accelerating Auto-regressive Text-to-Image Generation with Training-free Speculative Jacobi Decoding. arXiv 2025, arXiv:2410.01699.

- Teng, Y.; Jiang, Z.; Shi, H.; Liu, X.; Ning, X.; Dai, G.; Wang, Y.; Li, Z.; Liu, X. SJD++: Improved Speculative Jacobi Decoding for Training-free Acceleration of Discrete Auto-regressive Text-to-Image Generation. arXiv 2025, arXiv:2512.07503.

- So, J.; Kook, H.; Jang, C.; Park, E. MC-SJD: Maximal Coupling Speculative Jacobi Decoding for Autoregressive Visual Generation Acceleration. arXiv 2025, arXiv:2510.24211.

- Teng, Y.; Wang, F.; Liu, X.; Chen, Z.; Shi, H.; Wang, Y.; Li, Z.; Liu, W.; Zou, D.; Liu, X. Speculative Jacobi-Denoising Decoding for Accelerating Autoregressive Text-to-image Generation. arXiv 2025, arXiv:2510.08994.

- Yu, Z.; Zhang, B.; Shan, B.; Liu, X.; Zhou, D.; Liang, G.; Ye, G.; Ye, Y. SJD-PV: Speculative Jacobi Decoding with Phrase Verification for Autoregressive Image Generation. arXiv 2026, arXiv:2603.06666.

- De Bortoli, V.; Galashov, A.; Gretton, A.; Doucet, A. Accelerated diffusion models via speculative sampling. arXiv 2025, arXiv:2501.05370.

- Hu, H.; Das, A.; Sadigh, D.; Anari, N. Diffusion Models are Secretly Exchangeable: Parallelizing DDPMs via Autospeculation. arXiv 2025, arXiv:2505.03983.

- Liu, J.; Zou, C.; Lyu, Y.; Ren, F.; Wang, S.; Li, K.; Zhang, L. SpeCa: Accelerating diffusion transformers with speculative feature caching. In Proceedings of the 33rd ACM International Conference on Multimedia, 2025; pp. 10024–10033.

- Huo, M.; Zhang, J.; Wang, H.; Xu, J.; Chen, Z.; Tai, H.; Chen, Y. Spec-LLaVA: Accelerating Vision-Language Models with Dynamic Tree-Based Speculative Decoding. arXiv 2025, arXiv:2509.11961.

- Liu, Y.; Qin, L.; Wang, S. Small drafts, big verdict: Information-intensive visual reasoning via speculation. arXiv 2025, arXiv:2510.20812.

- Tong, Y.; Zhang, T.; Wan, Y.; Lin, K.; Yuan, J.; Hu, C. SAGE: Accelerating Vision-Language Models via Entropy-Guided Adaptive Speculative Decoding. arXiv 2026, arXiv:2602.00523.

- Ji, Y.; Zhang, J.; Xia, H.; Chen, J.; Shou, L.; Chen, G.; Li, H. SpecVLM: Enhancing speculative decoding of video LLMs via verifier-guided token pruning. In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing, 2025; pp. 7216–7230.

- Li, Y.; Wei, F.; Zhang, C.; Zhang, H. EAGLE: Speculative sampling requires rethinking feature uncertainty. arXiv 2024, arXiv:2401.15077.

- Kwon, W.; Li, Z.; Zhuang, S.; Sheng, Y.; Zheng, L.; Yu, C.H.; Gonzalez, J.; Zhang, H.; Stoica, I. Efficient memory management for large language model serving with PagedAttention. In Proceedings of the 29th Symposium on Operating Systems Principles, 2023; pp. 611–626.

- Zheng, L.; Yin, L.; Xie, Z.; Sun, C.; Huang, J.; Yu, C.H.; Cao, S.; Kozyrakis, C.; Stoica, I.; Gonzalez, J.E.; et al. SGLang: Efficient execution of structured language model programs. Advances in Neural Information Processing Systems 2024, 37, 62557–62583.

- NVIDIA. TensorRT-LLM. 2024. Available online: https://github.com/NVIDIA/TensorRT-LLM (accessed on 11 March 2026).

- NVIDIA. TensorRT-Edge-LLM: High-Performance Inference for LLMs and VLMs on Embedded Platforms. GitHub repository. 2026. Available online: https://github.com/NVIDIA/TensorRT-Edge-LLM (accessed on 11 March 2026).

- MMRazor and MMDeploy Teams. LMDeploy: A Toolkit for Compressing, Deploying, and Serving LLMs. Available online: https://github.com/InternLM/lmdeploy (accessed on 11 March 2026).

- Wolf, T.; Debut, L.; Sanh, V.; Chaumond, J.; Delangue, C.; Moi, A.; Cistac, P.; Rault, T.; Louf, R.; Funtowicz, M.; et al. HuggingFace's Transformers: State-of-the-art natural language processing. arXiv 2019, arXiv:1910.03771.

- Cai, T.; Li, Y.; Geng, Z.; Peng, H.; Lee, J.D.; Chen, D.; Dao, T. Medusa: Simple LLM inference acceleration framework with multiple decoding heads. arXiv 2024, arXiv:2401.10774.

- Shen, H.; Wang, X.; Zhang, P.; Hsieh, Y.; Han, Q.; Wan, Z.; Zhang, Z.; Zhang, J.; Xiong, J.; Liu, Z.; et al. MMSpec: Benchmarking Speculative Decoding for Vision-Language Models. arXiv 2026, arXiv:2603.14989.

| Methods | Formulation | Architecture |
|---|---|---|
| Independent Drafters | Separate model | Small VLM / LM, Vision Predictor, ConvNet Head, Retrieval-Based |
| Shared Backbone | Target sub-graph | Early Exiting, Layer Skipping, Sparse KV Routing, Jacobi Self-Drafting |
| Drafter-Free Speculation | No auxiliary model | Coupling Jumps, Exchangeability, Feature Forecasting |
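
The trade-off among these drafter families can be quantified with the analysis of Leviathan et al. [1]. That analysis assumes each drafted token is accepted independently with rate α, that γ tokens are drafted per step, and that one drafter step costs a fraction c of a target forward pass. Under these assumptions, one verification step yields (1 - α^(γ+1)) / (1 - α) tokens in expectation, and the expected wall-time improvement is that quantity divided by (γc + 1). The helper below only evaluates these two expressions. The inputs in the usage lines are illustrative and are not results reported for the surveyed systems.

```python
def expected_tokens_per_step(alpha: float, gamma: int) -> float:
    """Expected tokens emitted per target verification pass (i.i.d. acceptance)."""
    if alpha == 1.0:
        # Limit of the geometric series as alpha -> 1: every draft plus the bonus.
        return float(gamma + 1)
    return (1.0 - alpha ** (gamma + 1)) / (1.0 - alpha)


def expected_speedup(alpha: float, gamma: int, c: float) -> float:
    """Expected wall-time improvement; c = drafter step cost / target step cost."""
    return expected_tokens_per_step(alpha, gamma) / (gamma * c + 1.0)


# Illustrative inputs only: a well-aligned but costlier drafter vs. a cheaper one.
print(round(expected_speedup(alpha=0.8, gamma=4, c=0.05), 3))
print(round(expected_speedup(alpha=0.7, gamma=4, c=0.02), 3))
```

Because α and c enter the expression separately, a cheaper drafter can offset a lower acceptance rate, and vice versa.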

| Strategy | State (Q) & Pattern | Drafter Mechanism |
|---|---|---|
| Multi-Token Expansion | | Block Prediction, Jacobi Refinement, Semi-AR Heads |
| Multi-Candidate Branching | | Branch Expansion, Speculation Trees, Parallel Proposals |
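
Multi-candidate branching is normally paired with tree-based verification. All branches are scored in a single target pass (real systems do this with a tree-structured attention mask), and the longest root-to-node path that agrees with the target is committed. The sketch below shows only the acceptance logic under greedy decoding. The nested-dictionary tree and the `target_next` callback, which stands in for the target's argmax, are hypothetical simplifications. Sampling-based tree verification needs a different, probabilistic rule.

```python
from typing import Callable, Dict, List, Sequence

# Hypothetical tree encoding: each drafted token maps to the subtree continuing it.
DraftTree = Dict[int, "DraftTree"]


def verify_tree_greedy(prefix: Sequence[int],
                       tree: DraftTree,
                       target_next: Callable[[Sequence[int]], int]) -> List[int]:
    """Return the tokens committed after greedy tree verification.

    target_next(seq) is a stand-in for the target model's argmax after `seq`;
    in a real system every call here is served by one parallel forward pass.
    """
    accepted: List[int] = []
    node = tree
    while True:
        # The target's own choice at the current depth.
        choice = target_next(list(prefix) + accepted)
        accepted.append(choice)
        if choice not in node:
            # No drafted branch matches: keep the target token and stop.
            return accepted
        # A drafted branch matches: descend and verify the next depth.
        node = node[choice]


def toy_next(seq: Sequence[int]) -> int:
    """Toy 'target model' that always continues with the last token + 1."""
    return seq[-1] + 1


tree = {1: {2: {9: {}}, 7: {}}, 5: {}}
print(verify_tree_greedy([0], tree, toy_next))  # [1, 2, 3]
```

Here the drafted path 1 → 2 is accepted, the branch ending in 9 is rejected, and the target's own token 3 is appended, so one verification pass commits three tokens.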

| Criteria | Formulation | Motivation |
|---|---|---|
| Distributional Match (Section 4.1.1) | | Distribution-preserving decoding (lossless equivalence to the target model) |
| Representation Consistency (Section 4.2.2) | | Continuous-state validation (no discrete token identity) |
| Latent-Space Equivalence (Section 4.2.2) | | Perceptual / codebook invariance (multiple tokens map to the same meaning) |
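
To make the latent-space criterion concrete, the sketch below replaces the per-token ratio p(x)/q(x) of strict verification with a ratio of probability mass aggregated over a neighborhood of x in codebook embedding space. The neighborhood definition (the k nearest codebook entries by Euclidean distance) and the function names are assumptions chosen for illustration. This is not the exact acceptance rule of any surveyed method, and unlike the distributional-match criterion it does not preserve the target distribution.

```python
import numpy as np


def knn_neighborhood(codebook: np.ndarray, token: int, k: int) -> np.ndarray:
    """Indices of the k codebook entries closest to `token` (itself included)."""
    dists = np.linalg.norm(codebook - codebook[token], axis=1)
    return np.argsort(dists, kind="stable")[:k]


def relaxed_accept_prob(token: int, p: np.ndarray, q: np.ndarray,
                        codebook: np.ndarray, k: int) -> float:
    """Acceptance probability using neighborhood-aggregated probability mass.

    p, q:     target and drafter next-token distributions over the codebook.
    codebook: (vocab, dim) latent embeddings of the visual tokens.
    k:        neighborhood size (hypothetical knob); with distinct codebook
              entries, k = 1 recovers the strict ratio min(1, p[x] / q[x]).
    """
    nbrs = knn_neighborhood(codebook, token, k)
    p_mass, q_mass = p[nbrs].sum(), q[nbrs].sum()
    return float(min(1.0, p_mass / q_mass))


codebook = np.array([[0.0], [0.1], [5.0], [5.1]])  # two pairs of near-identical codes
p = np.array([0.05, 0.45, 0.25, 0.25])  # target prefers token 1
q = np.array([0.45, 0.05, 0.25, 0.25])  # drafter prefers its near-twin, token 0
print(relaxed_accept_prob(0, p, q, codebook, k=1))  # strict: 0.05 / 0.45
print(relaxed_accept_prob(0, p, q, codebook, k=2))  # relaxed: 0.5 / 0.5 = 1.0
```

With k = 1 the rule reduces to strict acceptance. Larger k accepts a drafted token whenever the target favors a similar code, trading exactness for acceptance rate.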

| Framework | SD Methods | MM | MM SD |
|---|---|---|---|
| vLLM [64] | EAGLE, EAGLE-3, MTP, Draft Model, n-gram, Suffix | | ▵ (a) |
| SGLang [65] | EAGLE, EAGLE-3, MTP, Standalone Draft, n-gram | | × |
| TensorRT-LLM [66] | EAGLE (1/2/3), Medusa, ReDrafter, MTP, Lookahead, Draft Model, n-gram | | × |
| TensorRT Edge-LLM [67] | EAGLE-3 | | (b) |
| LMDeploy [68] | Medusa (TreeMask) | | × |
| HF Transformers [69] | Draft Model (assisted_generation) | | × (c) |

(a) As of v0.12.0, EAGLE/EAGLE-3 multimodal SD is supported for Qwen3-VL (PR #29594); broader multimodal draft model support is under development (Issue #33458).

(b) Supports multi-batch EAGLE-3 for VLMs, including Qwen2/2.5/3-VL, InternVL3, and Phi-4-Multimodal, on embedded platforms.

(c) Serves as the de facto prototyping backend for multimodal SD research methods, although its assisted_generation API lacks multimodal-specific optimizations.

| Methods | Draft Mechanism | Architecture / Approach | Tuning-free | Verification Criterion | Verification Pattern | Representative Target | Speedup (rep.) |
|---|---|---|---|---|---|---|---|
| Vision–Language Models | | | | | | | |
| SPD-MLLM | Independent | Text-Only LM | × (Pretrain) | Strict | Linear | LLaVA (LLaMA) | |
| MASSV | Independent | Small VLM | × (Distillation) | Strict | Linear | Qwen2.5-VL, Gemma3 | |
| ViSpec | Independent | Visual Adaptor | × (Tuning) | Strict | Tree | LLaVA-1.6, Qwen2.5-VL | |
| SpecVLM | Independent | Visual Compressor | × (Tuning) | Strict | Linear | LLaVA, Qwen2-VL | |
| FastVLM | Shared Backbone | Early Exiting | × (Imitation) | Strict | Linear | LLaVA-1.5 | |
| MSD | Independent | Text-Vision Decouple | | Strict | Linear | LLaVA-1.5 | |
| SpecFLASH | Independent | Semi-AR Heads | × (Tuning) | Strict | Linear | LLaVA, Qwen-VL | |
| Spec-LLaVA | Independent | Token Tree | × (Tuning) | Strict | Tree | LLaVA-1.5 | |
| SAGE | Independent | Adaptive Tree | × (Tuning) | Strict | Tree | LLaVA-OV, Qwen2.5-VL | |
| HiViS | Independent | Visual Token Hiding | × (Tuning) | Strict | Linear | Qwen2.5-VL | |
| AASD | Independent | T-D Attention | × (T-D Attn) | Strict | Linear | LLaVA | |
| DREAM | Independent | Target-Informed | × (Tuning) | Strict | Linear | LLaVA-v1.6 | |
| STAR | Independent | NAS + Target Feat. | × (OFA Tuning) | Strict | Linear | LLaVA-v1.6 | |
| IbED | Independent | Multi-Prompt Ensemble | | Strict | Linear | LLaMA, LLaVA-1.5 | † |
| TABED | Independent | Test-Time Adaptive Weighting | | Strict | Linear | LLaVA-1.5, LLaVA-NeXT | |
| EdgeSD | Independent | VED + ITM + Tree | | Strict | Tree | LLaVA-OV, InternVL2.5 | |
| SV (Verdict)★ | Independent | Small VLM | | Path-Level (NLL) | Path | Qwen2.5-VL | N/A |
| HSD‡ | Independent | Pipeline Draft | | Relaxed (-Tol.) | Linear (Hier.) | Qwen3-VL | |
| Vision–Language–Action Models | | | | | | | |
| Spec-VLA | Independent | Small VLA | × (Training) | Relaxed (Action) | Linear | OpenVLA | |
| SpecPrune-VLA | Shared Backbone | Action-Aware Pruning | | Strict | Linear | OpenVLA-OFT, | |
| KERV | Independent | KF-Rectified Draft | × (Draft Train) | Relaxed (Kinematic) | Linear | OpenVLA (LIBERO) | |
| HeiSD | Independent | Hybrid Retrieval + Drafter | × (Draft Train) | Relaxed (Seq-Wise) | Tree | OpenVLA (LIBERO) | |
| Video–Language Models | | | | | | | |
| STD | Shared Backbone | Sparse KV Routing | | Strict | Linear | Qwen2-VL, LLaVA-OV | |
| SpecVLM (Video) | Independent | Token Tree | | Strict | Tree | LLaVA-OV | |
| HIPPO | Shared Backbone | Pipeline Overlap | | Strict | Linear | LLaVA-OV | |
| Sparrow | Independent | HSR + VATA | × (Training) | Strict | Linear | LLaVA-OV, Qwen2.5-VL | |
| VideoSpeculateRAG | Independent | Small VLM + Per-Doc Parallel | | Tolerance-Based () | Path | Qwen2.5-VL-32B | |
| ParallelVLM | Independent | UV-Prune + Pipeline Overlap | | Strict | Linear | LLaVA-OV, Qwen2.5-VL | |
| Speech and Audio Models | | | | | | | |
| SSD | Independent | Small Audio LM | × (Distillation) | Relaxed (-Tol.) | Linear | CosyVoice-2 | |
| SpecASR | Independent | Adaptive Len. + Tree | × (Tuning) | Strict (w/ Repair) | Tree | Llama, Vicuna | |
| SMUD | Independent | CTC Pseudo-Draft | | Dual-Hypothesis | Linear | E-Branchformer ASR | |
| Codec-MTP | Shared Backbone | Block Prediction | | Path-Level (Viterbi) | Path | VALL-E / USLM | |
| UGSD | Independent | Edge–Cloud Uncertainty Gate | | Relaxed (Top-R Rank) | Linear | Qwen2.5-Omni-3B / Qwen3-Omni | |
| CTC-SSD | Shared Backbone | CTC Encoder Draft | | Relaxed (Likelihood ) | Linear | Granite-Speech-1B | |
| Text-to-Image (Vision AR) Models | | | | | | | |
| LANTERN | Independent | Small AR Model | × (Tuning) | Relaxed (Latent) | Linear | LlamaGen | |
| LANTERN++ | Independent | Static Tree | × (Tuning) | Relaxed (Latent) | Tree | LlamaGen | |
| GSD | Independent | Dynamic Clustering | | Relaxed (Grouped) | Linear | AR Image Models | |
| SJD | Shared Backbone | Jacobi Iteration | | Convergence | Iterative | LlamaGen, Emu3 | |
| SJD2 | Shared Backbone | Denoise Trajectory | × (Fine-tune) | Convergence | Iterative | LlamaGen | |
| MC-SJD | Shared Backbone | Gumbel Coupling | | Convergence | Iterative | LlamaGen | |
| VVS | Independent | Conf. Skip | | Conf. Skip | Linear | LlamaGen | |
| SJD++ | Shared Backbone | Token Reuse | × (Fine-tune) | Convergence | Iterative | LlamaGen | |
| CSpD | Independent | Density-Ratio Sampling | | Path-Level (Density) | Path | MAR | |
| SJD-PV | Shared Backbone | Phrase Library + Jacobi | | Phrase-Level (Joint) | Path | Lumina-mGPT | |
| MuLo-SD | Independent | Multi-Scale Drafting | × (Training) | Relaxed Local (Neighborhood) | Linear | Tar-1.5B | |
| Diffusion Models | | | | | | | |
| SpeCa | Drafter-Free Speculation | Feature Caching | | Relaxed (Feat.) | Path | DiT, FLUX, HunyuanVideo | |
| Accel. Diff. | Drafter-Free Speculation | Coupling Jumps | | Path-Level (Coupling) | Path | DiT, EDM | |
| ASD | Drafter-Free Speculation | Stochastic Exchange | | Convergence | Path | DDPM | |
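
Several shared-backbone entries above (SJD and its variants) build on Jacobi-style iteration, in which a block of guessed tokens is refined in parallel until it reaches a fixed point. The sketch below shows the deterministic greedy form of that loop, with a hypothetical `next_token` callback standing in for the model's argmax. SJD itself adds a probabilistic acceptance criterion for sampled tokens, which this simplification omits.

```python
from typing import Callable, Sequence


def jacobi_decode_block(prefix: Sequence[int],
                        init_guess: Sequence[int],
                        next_token: Callable[[Sequence[int]], int],
                        max_iters: int = 100) -> tuple[list[int], int]:
    """Greedy Jacobi iteration over a block of len(init_guess) tokens.

    next_token(seq) is a hypothetical stand-in for the model's argmax after
    `seq`. Each iteration recomputes every position from the previous
    iterate, which a real model does in one parallel forward pass.
    Returns the converged block and the number of iterations used.
    """
    block = list(init_guess)
    for it in range(1, max_iters + 1):
        ctx = list(prefix)
        # Position i is predicted from the prefix plus the old guesses 0..i-1.
        new_block = [next_token(ctx + block[:i]) for i in range(len(block))]
        if new_block == block:
            # Fixed point reached: identical to greedy autoregressive output.
            return block, it
        block = new_block
    return block, max_iters


def toy_next(seq: Sequence[int]) -> int:
    """Toy 'model' that always continues with the last token + 1."""
    return seq[-1] + 1


print(jacobi_decode_block([0], [9, 9, 9, 9], toy_next))  # ([1, 2, 3, 4], 5)
print(jacobi_decode_block([0], [1, 2, 3, 4], toy_next))  # ([1, 2, 3, 4], 1)
```

In the greedy case at least one more position becomes correct per iteration, so a block of n tokens needs at most n + 1 parallel passes including the final convergence check. A good initial guess (for example, one supplied by a drafter) can converge in a single pass, as the second call shows.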

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).