Submitted: 28 March 2026

Posted: 31 March 2026


Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Machine Unlearning Methods

2.2. Membership Inference Attacks

2.3. Blockchain for GDPR Compliance

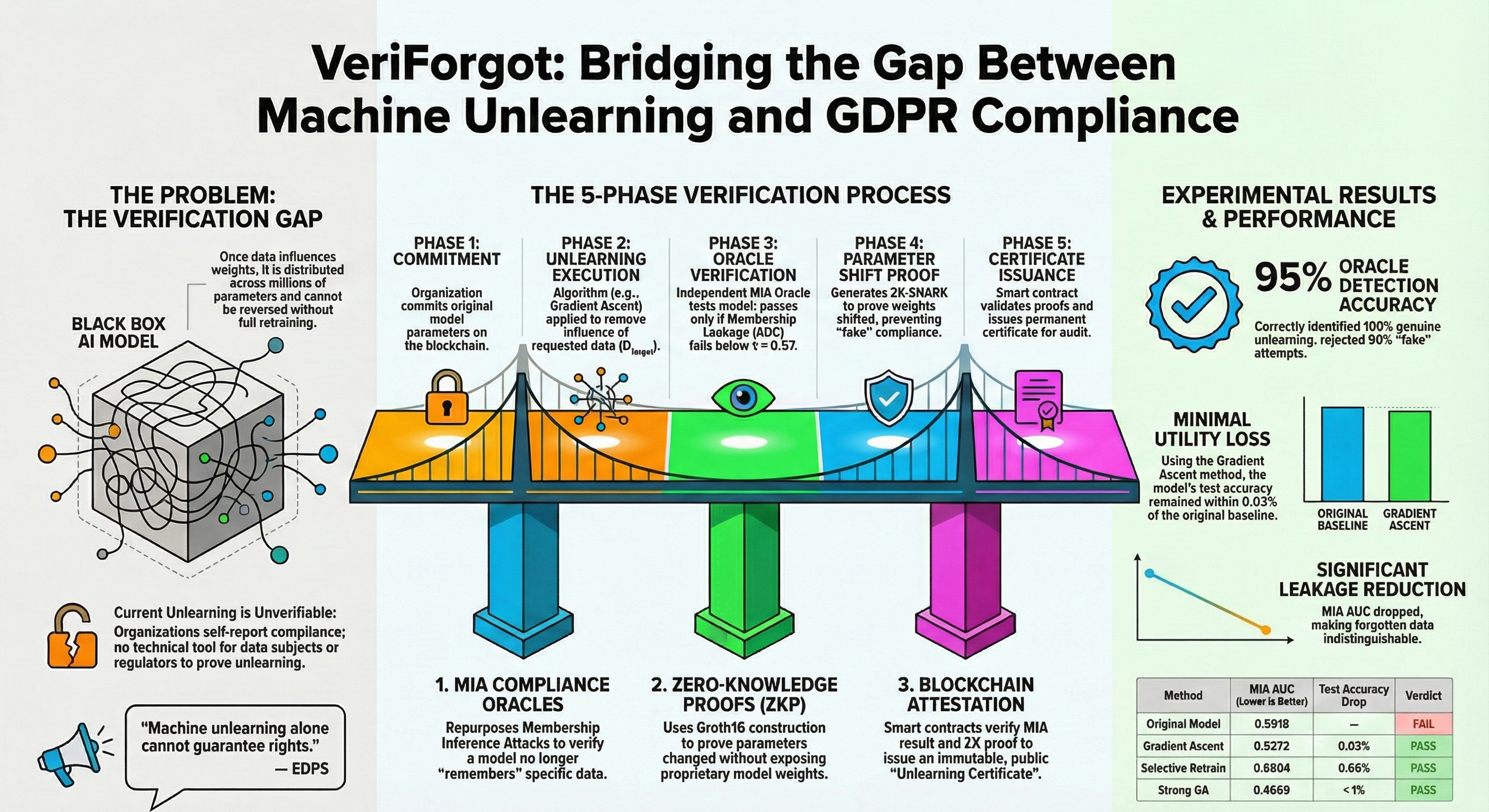

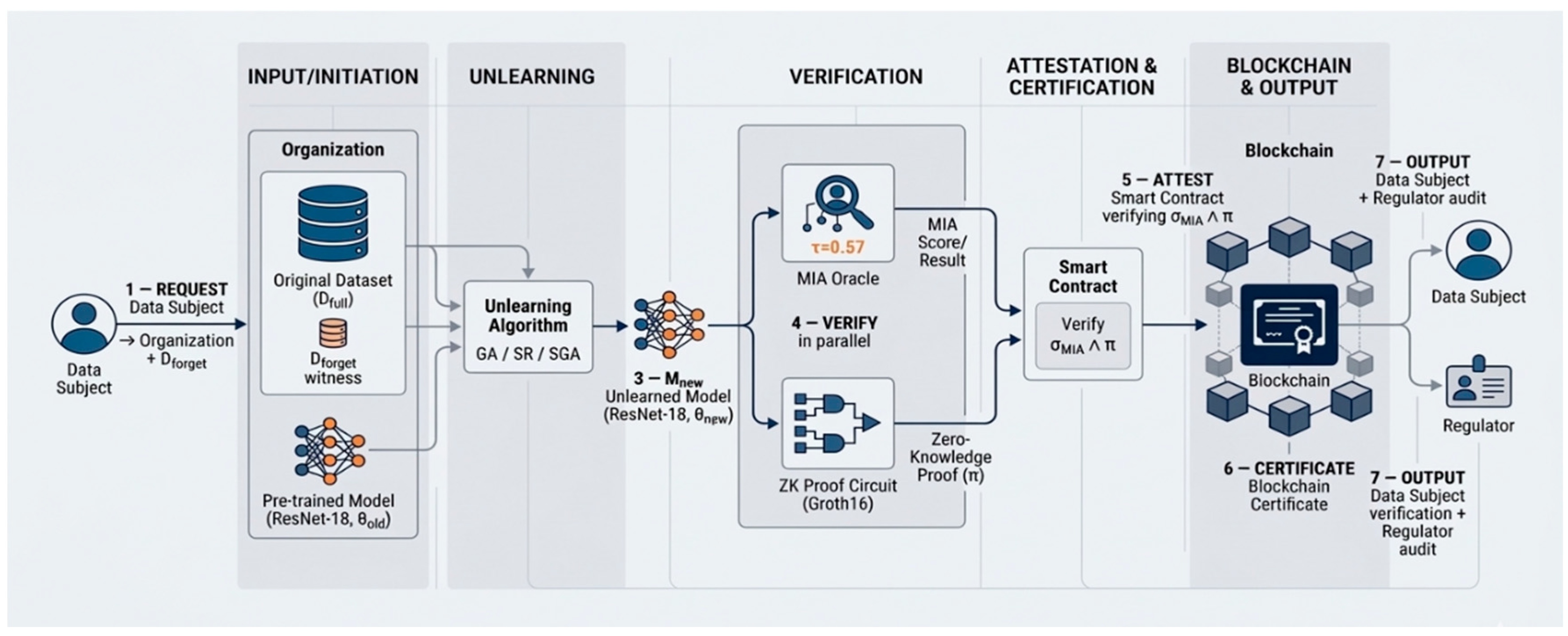

3. The VeriForgot Framework

3.1. Problem Formulation

3.2. MIA Verification Oracle

3.3. Blockchain Attestation Protocol

3.4. Zero-Knowledge Proof Protocol

4. Experimental Evaluation

4.1. Experimental Setup

4.2. Baseline Model Results

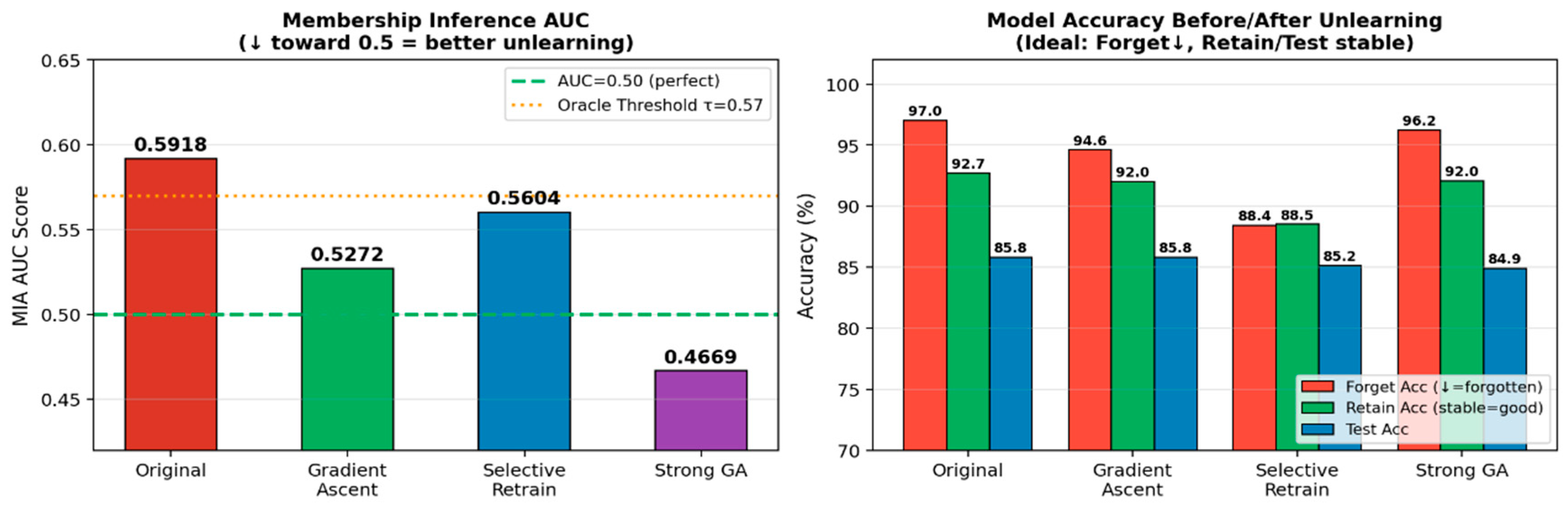

4.3. Unlearning Effectiveness

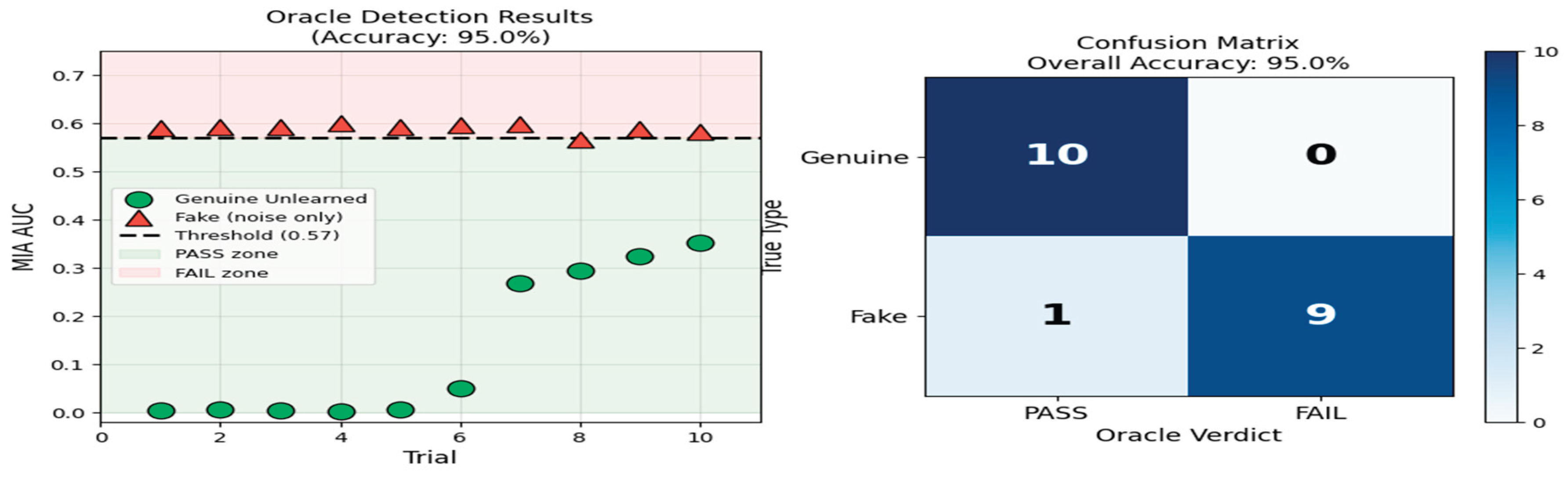

4.4. Oracle Accuracy: Genuine vs. Fake Unlearning Detection

5. Security Analysis

5.1. Soundness Against Fake Compliance

5.2. Privacy of Model and Data

5.3. Replay and Tampering Resistance

Conclusions

Acknowledgements

References

- European Parliament and Council of the European Union. Regulation (EU) 2016/679 of the European Parliament and of the Council: General Data Protection Regulation (GDPR), Article 17: Right to Erasure ("Right to be Forgotten"). 2016. Available online: https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX:32016R0679.

- Nguyen, T. T.; Huynh, T. T.; Le Nguyen, P.; Liew, A. W.-C.; Yin, H.; Nguyen, Q. V. H. A Survey of Machine Unlearning. arXiv preprint arXiv:2209.02299, 2022.

- Ginart, A.; Guan, M. Y.; Valiant, G.; Zou, J. Y. Making AI Forget You: Data Deletion in Machine Learning. In Advances in Neural Information Processing Systems (NeurIPS), 2019; pp. 3513–3526.

- Xu, J.; Wu, Z.; Wang, C.; Jia, X. Machine Unlearning: Solutions and Challenges. IEEE Trans. Emerg. Top. Comput. Intell. 2024, 8(3), 2150–2168.

- European Data Protection Supervisor. Machine Unlearning. 2022. Available online: https://www.edps.europa.eu/data-protection/technology-monitoring/techsonar/machine-unlearning.

- Marino, B.; Kurmanji, M.; Lane, N. D. Bridge the Gaps between Machine Unlearning and AI Regulation. 2025. Available online: https://arxiv.org/abs/2502.12430.

- Cao, Y.; Yang, J. Towards Making Systems Forget with Machine Unlearning. In Proceedings of the 2015 IEEE Symposium on Security and Privacy (S&P), 2015; IEEE; pp. 463–480.

- Bourtoule, L.; et al. Machine Unlearning. In Proceedings of the 2021 IEEE Symposium on Security and Privacy (S&P), 2021; IEEE; pp. 141–159.

- Guo, C.; Goldstein, T.; Hannun, A.; van der Maaten, L. Certified Data Removal from Machine Learning Models. In Proceedings of the 37th International Conference on Machine Learning (ICML), 2020; Proceedings of Machine Learning Research, vol. 119; PMLR; pp. 3832–3842.

- Golatkar, A.; Achille, A.; Soatto, S. Eternal Sunshine of the Spotless Net: Selective Forgetting in Deep Networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2020; IEEE; pp. 9304–9312.

- Shokri, R.; Stronati, M.; Song, C.; Shmatikov, V. Membership Inference Attacks Against Machine Learning Models. In Proceedings of the 2017 IEEE Symposium on Security and Privacy (S&P), 2017; IEEE; pp. 3–18.

- Carlini, N.; Chien, S.; Nasr, M.; Song, S.; Terzis, A.; Tramer, F. Membership Inference Attacks From First Principles. In Proceedings of the 2022 IEEE Symposium on Security and Privacy (S&P), 2022; IEEE.

- Swan, M. Blockchain: Blueprint for a New Economy; O’Reilly Media: Sebastopol, CA, 2015. Available online: https://www.oreilly.com/library/view/blockchain/9781491920480/.

- Ateniese, G.; Magri, B.; Venturi, D.; Andrade, E. Redactable Blockchain or Rewriting History in Bitcoin and Friends. In Proceedings of the 2017 IEEE European Symposium on Security and Privacy (EuroS&P), 2017; IEEE; pp. 111–126.

- Krizhevsky, A. Learning Multiple Layers of Features from Tiny Images. Technical Report, University of Toronto, 2009. Available online: https://www.cs.toronto.edu/~kriz/learning-features-2009-TR.pdf.

Authors

MD Hamid Borkot Tulla is a postgraduate researcher in the School of Cyber Security and Information Law at Chongqing University of Posts and Telecommunications (CQUPT), China. His research interests include machine unlearning, GDPR-compliant AI systems, blockchain-based privacy attestation, and adversarial machine learning.

Naem Azam Chowdhury is a postgraduate researcher in the School of Cyber Security and Information Law at Chongqing University of Posts and Telecommunications (CQUPT), China. His research focuses on cybersecurity, data privacy, and trustworthy machine learning systems.

| Metric | Value |
| --- | --- |
| Test Accuracy | 85.81% |
| Forget Set Accuracy | 97.00% |
| Retain Set Accuracy | 92.72% |
| MIA AUC (baseline) | 0.5918 |
| Member Avg. Confidence | 0.9398 |
| Confidence Gap (Member − Non-Member) | 0.1042 |
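The MIA AUC and confidence gap in the table above can be illustrated with a small sketch. It assumes the attack scores each sample by the model's top softmax confidence, which the table's "Member Avg. Confidence" row suggests but which is not confirmed here. The scores below are made-up illustrative values, not the paper's data, and `mia_auc` and `confidence_gap` are hypothetical helper names.

```python
from statistics import mean

def mia_auc(member_scores, nonmember_scores):
    """AUC as the probability that a random member outscores a random
    non-member (ties count as half), i.e. the Mann-Whitney statistic."""
    wins = 0.0
    for m in member_scores:
        for n in nonmember_scores:
            if m > n:
                wins += 1.0
            elif m == n:
                wins += 0.5
    return wins / (len(member_scores) * len(nonmember_scores))

def confidence_gap(member_scores, nonmember_scores):
    """Mean member confidence minus mean non-member confidence."""
    return mean(member_scores) - mean(nonmember_scores)

# Hypothetical top-class confidences (assumption: NOT taken from the paper).
members = [0.99, 0.97, 0.95, 0.90, 0.80]
nonmembers = [0.98, 0.92, 0.85, 0.75, 0.70]

auc = mia_auc(members, nonmembers)
gap = confidence_gap(members, nonmembers)
print(f"AUC = {auc:.2f}, confidence gap = {gap:.3f}")
```

An AUC of 0.5 means the attacker cannot separate members from non-members better than chance, which is why values closer to 0.5 indicate less residual memorization.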

| Method | AUC | Δ AUC | % → Random | Conf. Gap | Verdict |
| --- | --- | --- | --- | --- | --- |
| Original (baseline) | 0.5918 | n/a | n/a | 0.1042 | FAIL |
| Gradient Ascent | 0.5272 | −0.0646 | 70.4% | 0.0822 | ✓ PASS |
| Selective Retrain | 0.5604 | −0.0314 | 34.2% | 0.0617 | ✓ PASS |
| Strong GA | 0.4669 | −0.1249 | 136.1% | 0.0885 | ✓ PASS |
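The "% → Random" column is consistent with the share of the baseline's above-chance AUC that each method removes. The formula below is inferred from the table values rather than stated in the source, so treat it as an assumption; `pct_toward_random` is a hypothetical helper name.

```python
CHANCE_AUC = 0.5         # AUC of a random-guess attacker
BASELINE_AUC = 0.5918    # MIA AUC of the original model (from the table)

def pct_toward_random(auc, baseline=BASELINE_AUC, chance=CHANCE_AUC):
    """Percent of the baseline's excess AUC over chance that was removed.
    Assumed formula: 100 * (baseline - auc) / (baseline - chance)."""
    return 100.0 * (baseline - auc) / (baseline - chance)

# AUC after each unlearning method, as listed in the table.
methods = {
    "Gradient Ascent": 0.5272,
    "Selective Retrain": 0.5604,
    "Strong GA": 0.4669,
}

results = {name: round(pct_toward_random(auc), 1) for name, auc in methods.items()}
for name, pct in results.items():
    print(f"{name}: {pct}%")
```

Under this reading, a value above 100% (Strong GA) means the post-unlearning AUC fell below 0.5, so the method overshot chance level rather than stopping at it.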

| Method | Forget Acc. | Retain Acc. | Test Acc. | Test Drop (pp) |
| --- | --- | --- | --- | --- |
| Original | 97.00% | 92.72% | 85.81% | n/a |
| Gradient Ascent | 94.60% | 92.01% | 85.78% | 0.03 |
| Selective Retrain | 88.40% | 88.51% | 85.15% | 0.66 |
| Strong GA | 96.20% | 92.05% | ~84.9% | <1 |

| | Oracle: PASS | Oracle: FAIL |
| --- | --- | --- |
| Genuine Unlearned (10) | 10 (TP, 100%) | 0 (FN, 0%) |
| Fake / Noise Only (10) | 1 (FP, 10%) | 9 (TN, 90%) |
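The confusion matrix above can be summarized with standard classification metrics. The sketch below treats "genuine unlearning" as the positive class, following the TP/FN/FP/TN labels in the table. The derived figures are computed here from those four counts and are not reported in the table itself; `oracle_metrics` is a hypothetical helper name.

```python
def oracle_metrics(tp, fn, fp, tn):
    """Standard metrics from confusion-matrix counts
    (positive class = genuinely unlearned model)."""
    total = tp + fn + fp + tn
    return {
        "accuracy": (tp + tn) / total,
        "precision": tp / (tp + fp),
        "recall": tp / (tp + fn),
        "false_positive_rate": fp / (fp + tn),
    }

# Counts taken from the oracle table: 10 genuine and 10 fake submissions.
metrics = oracle_metrics(tp=10, fn=0, fp=1, tn=9)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

With only 20 trials, a single misclassification shifts accuracy by five percentage points, so these values are best read as indicative rather than precise.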

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).