Submitted: 26 March 2026

Posted: 27 March 2026

Abstract

Keywords:

1. Introduction

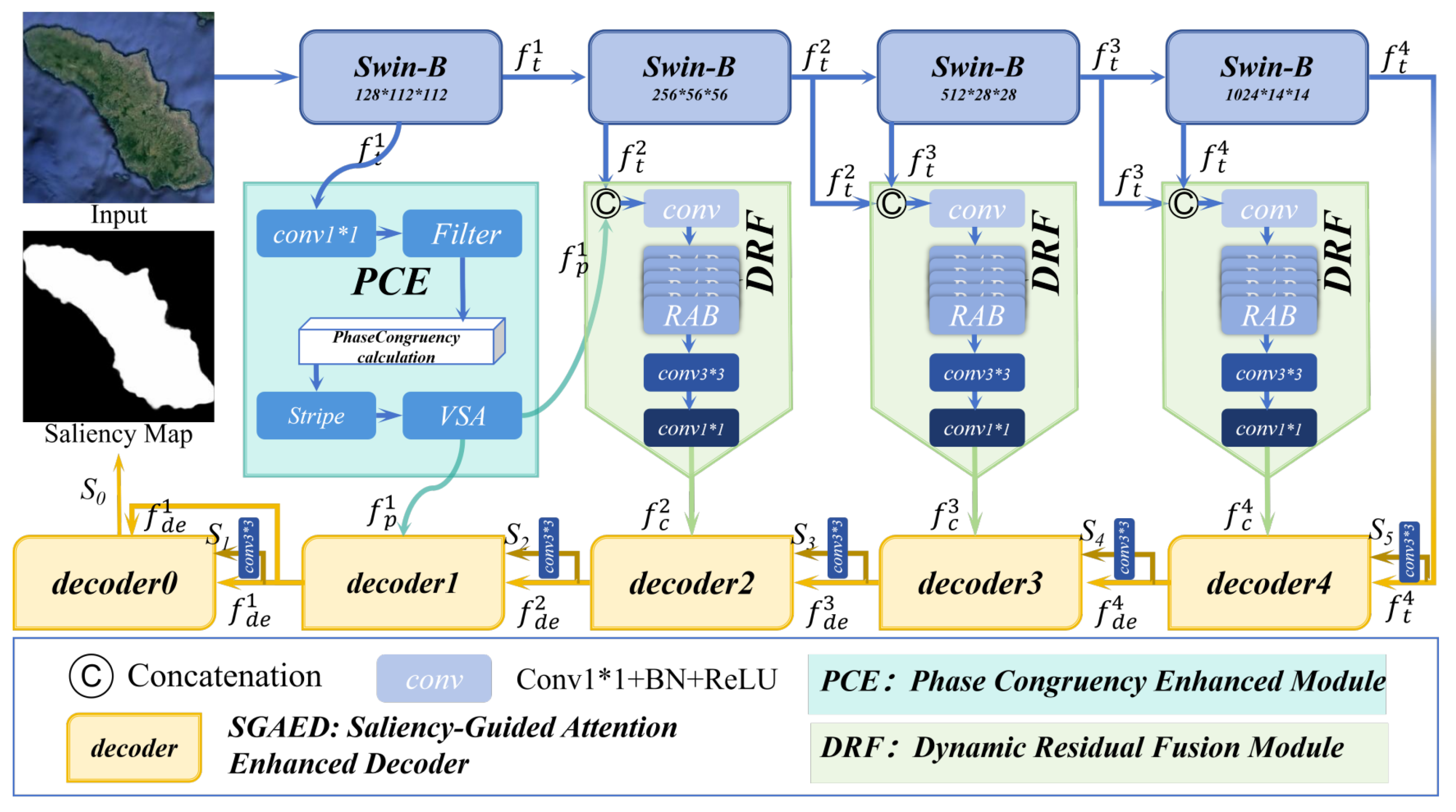

- We propose a novel end-to-end network, PCFNet. It integrates frequency-domain phase enhancement with dynamic cross-scale feature refinement to sharpen target boundaries that blur under low contrast and to improve robustness to complex background interference.

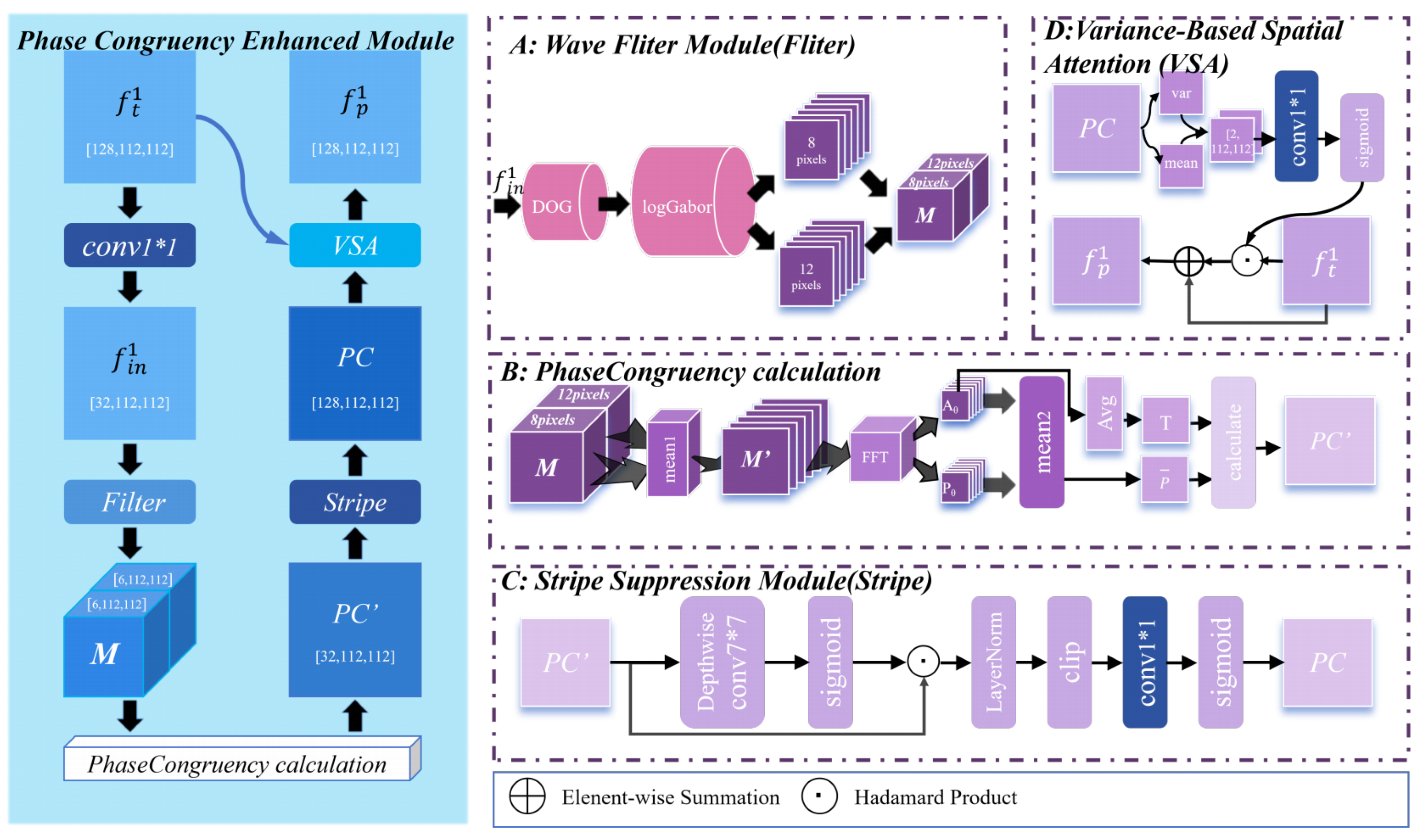

- We design a novel Phase Congruency Enhanced (PCE) module based on Fourier decomposition theory. It fuses multi-scale phase features with shallow Transformer features to address the blurred target perception caused by low contrast and to compensate for the loss of local detail.

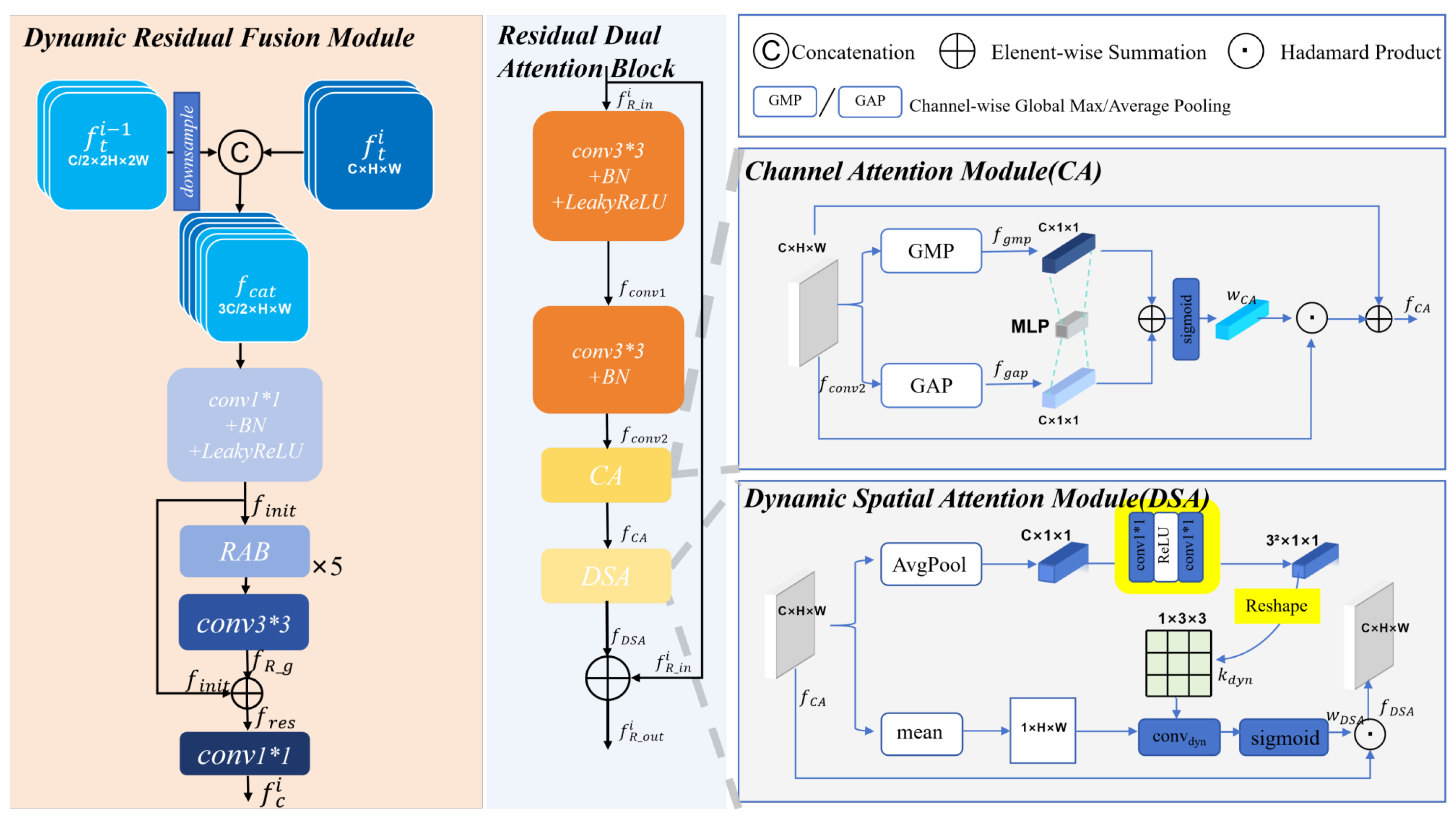

- We design a novel Dynamic Residual Fusion (DRF) module. It integrates dynamic spatial attention and residual connections to fuse multi-scale features complementarily and select among them, suppressing complex background interference while preserving salient structures.

- We conduct extensive experiments on three benchmark datasets (ORSSD, EORSSD, ORSI4199). Quantitative comparison with 23 SOTA methods, ablation experiments, and qualitative analysis of complex scenes demonstrate the effectiveness and robustness of the proposed network and its core modules.
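
The phase-based contributions above rest on the Fourier decomposition of an image into an amplitude spectrum and a phase spectrum. As a minimal NumPy sketch of that decomposition only (not the PCE module itself), the example below splits a toy low-contrast image into amplitude and phase, checks that the two together restore the image, and builds a phase-only reconstruction. The function names and the toy image are illustrative assumptions.

```python
import numpy as np


def split_amplitude_phase(image):
    """Return the Fourier amplitude and phase spectra of a 2-D array."""
    spectrum = np.fft.fft2(image)
    return np.abs(spectrum), np.angle(spectrum)


def phase_only_reconstruction(image):
    """Rebuild an image from its phase alone (every amplitude forced to 1)."""
    _, phase = split_amplitude_phase(image)
    return np.real(np.fft.ifft2(np.exp(1j * phase)))


# Toy low-contrast scene (assumption): a faint square on a flat background.
image = np.full((64, 64), 0.50)
image[24:40, 24:40] = 0.52

amplitude, phase = split_amplitude_phase(image)

# Amplitude and phase together carry all the information in the image.
restored = np.real(np.fft.ifft2(amplitude * np.exp(1j * phase)))

# Dropping the amplitude keeps only the structural (phase) information.
phase_only = phase_only_reconstruction(image)
```

Discarding the amplitude removes absolute contrast, while the phase still encodes where structures are located; this is the property commonly cited as the motivation for phase-based cues in low-contrast scenes.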

2. Related Work

2.1. Salient Object Detection for NSI

2.2. Salient Object Detection for ORSI

2.3. Frequency-Domain Analysis

3. Proposed Method

3.1. Framework Overview

3.2. Swin Transformer-based Feature Extractor

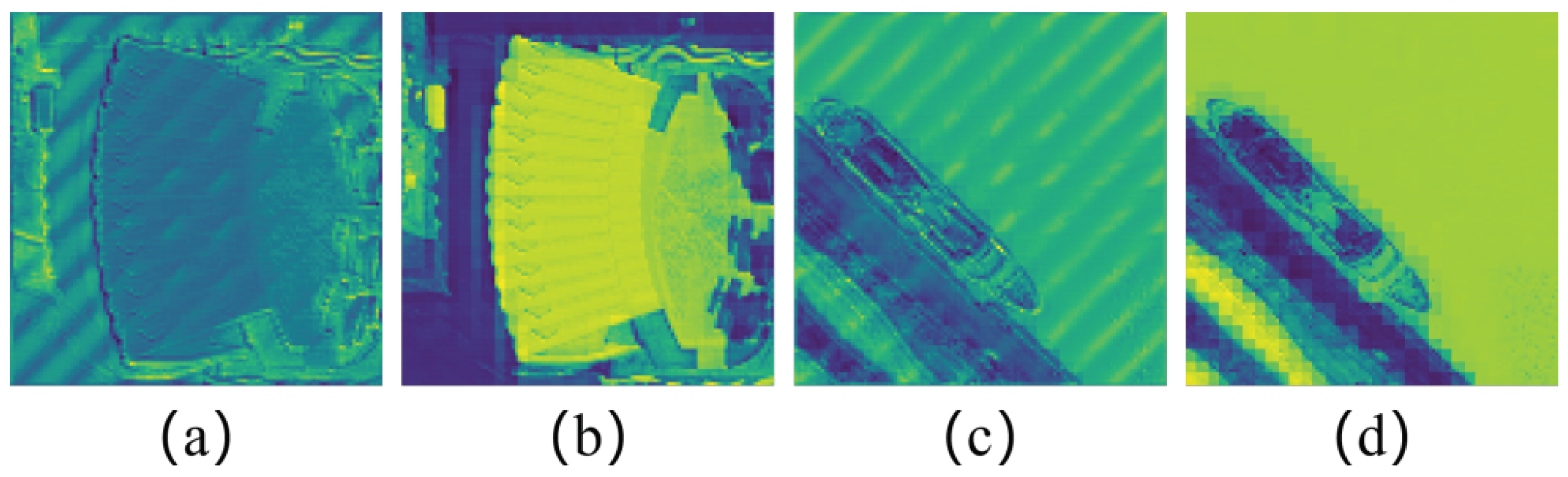

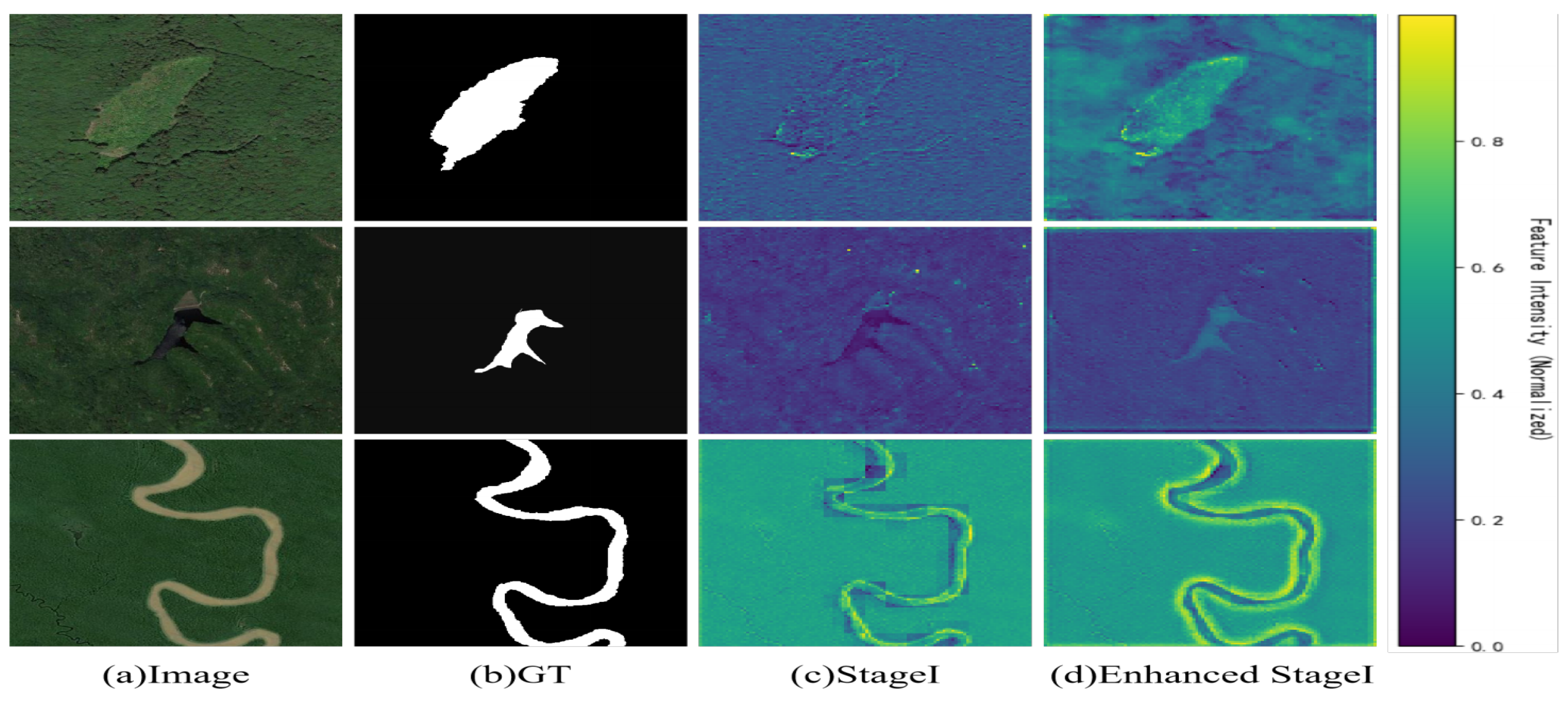

3.3. Phase Congruency Enhanced (PCE) Module

3.4. Dynamic Residual Fusion (DRF) Module
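
As a rough illustration of the idea named in this section's title, the NumPy sketch below fuses a fine and a coarse feature map with an input-dependent spatial gate and a residual skip path. It is a toy under stated assumptions: the gate is a fixed, parameter-free function of the features (a hypothetical stand-in for learned dynamic spatial attention), and `gated_residual_fusion`, `upsample_nearest`, and the tensor shapes are not taken from the paper.

```python
import numpy as np


def sigmoid(x):
    """Element-wise logistic function."""
    return 1.0 / (1.0 + np.exp(-x))


def upsample_nearest(feat, factor):
    """Nearest-neighbour upsampling of a (C, H, W) feature map."""
    return feat.repeat(factor, axis=1).repeat(factor, axis=2)


def gated_residual_fusion(fine, coarse):
    """Fuse two scales with a spatial gate plus a skip path.

    fine:   (C, H, W) high-resolution features
    coarse: (C, H/2, W/2) low-resolution features
    """
    coarse_up = upsample_nearest(coarse, 2)
    # Hypothetical spatial descriptor: channel-wise mean plus channel-wise max.
    summed = fine + coarse_up
    descriptor = summed.mean(axis=0) + summed.max(axis=0)
    gate = sigmoid(descriptor)[None, :, :]  # (1, H, W), values in (0, 1)
    # Per-pixel selection between the two scales.
    selected = gate * fine + (1.0 - gate) * coarse_up
    # Residual connection keeps the fine-scale features intact.
    return fine + selected


rng = np.random.default_rng(0)
fine = rng.standard_normal((4, 8, 8))
coarse = rng.standard_normal((4, 4, 4))
fused = gated_residual_fusion(fine, coarse)
```

Because the gate is computed from the inputs, the mix between scales changes from pixel to pixel, and the skip path means the fine-scale input is never suppressed entirely. A trained module would replace the fixed descriptor with learned layers.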

3.5. Decoder

3.6. Loss Function

4. Experiments

4.1. Experimental Settings

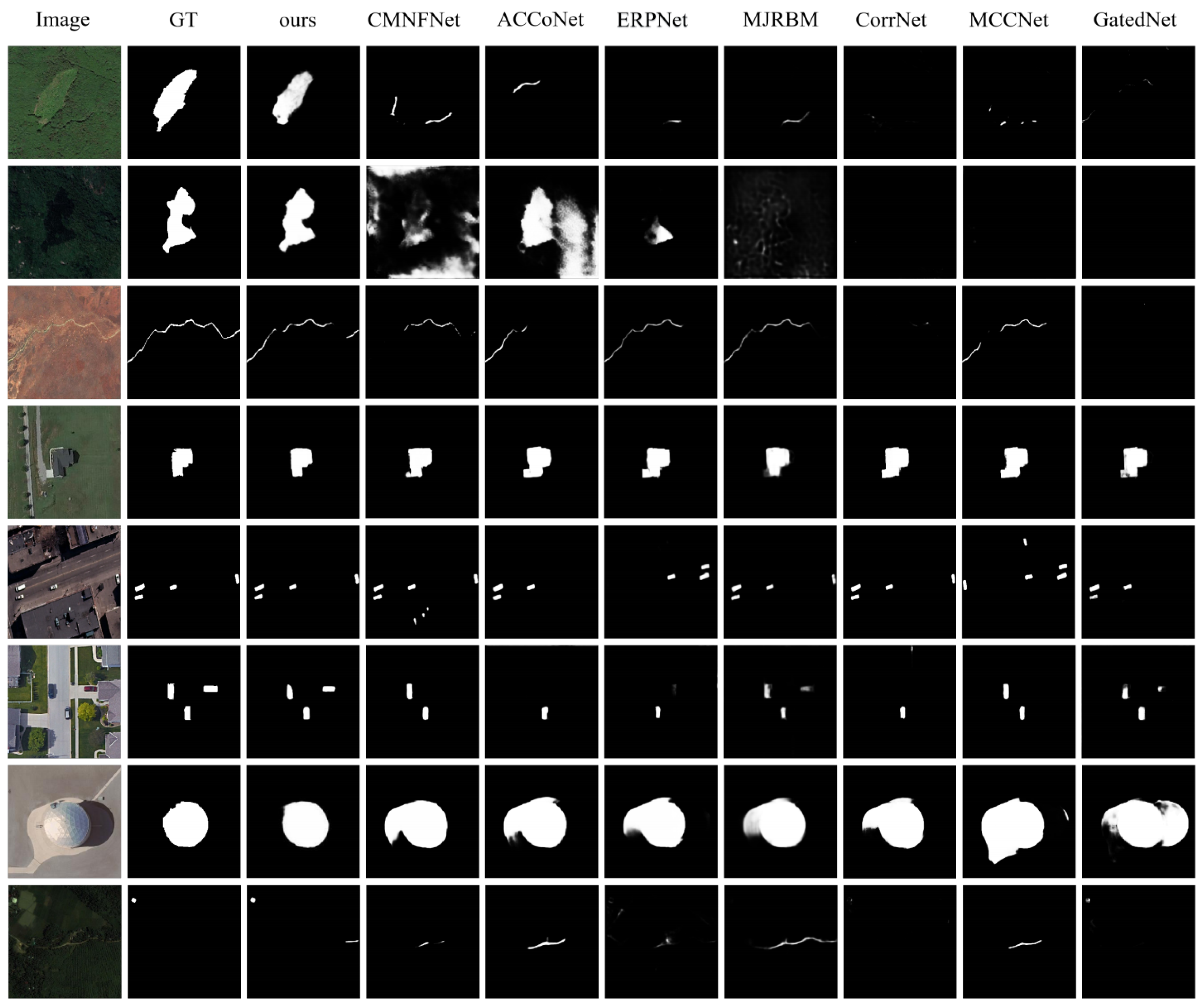

4.2. Comparison with SOTA Methods

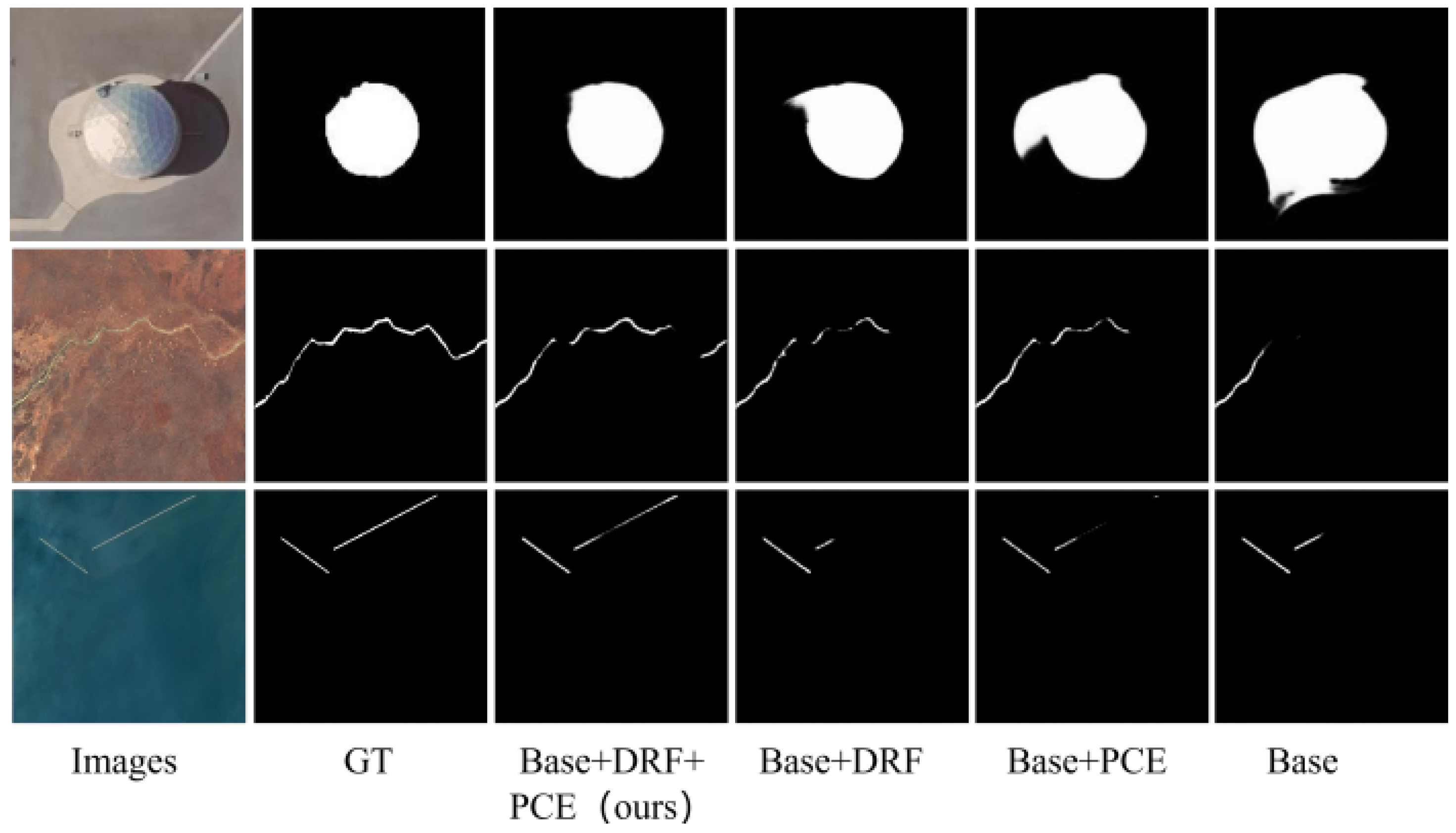

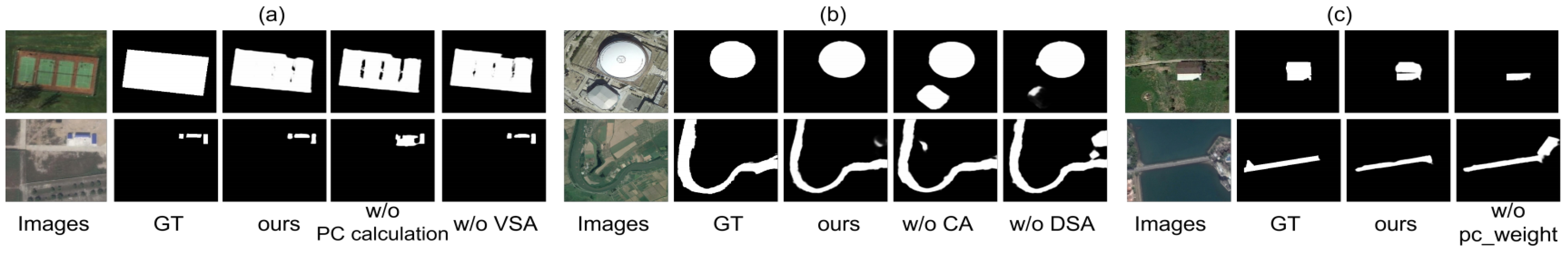

4.3. Ablation Study

| No. | Baseline | pc_weight | ORSSD | | | EORSSD | | |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 1 | ✓ | | 0.9484 | 0.9244 | 0.9844 | 0.9323 | 0.8854 | 0.9641 |
| 2 | ✓ | ✓ | 0.9540 | 0.9305 | 0.9888 | 0.9393 | 0.8943 | 0.9843 |

4.4. Computational Complexity Analysis

(a)

| Models | FLOPs | Params |
| --- | --- | --- |
| TF | 71.44 G | 86.64 M |
| TF+SGAED | 99.27 G | 103.76 M |
| TF+SGAED+PCE | 105.94 G (↑6.67) | 104.29 M (↑0.53) |
| TF+SGAED+PCE+DRF (Ours) | 126.94 G (↑21.00) | 117.29 M (↑13.00) |

(b)

| Models | FLOPs | Params |
| --- | --- | --- |
| MCCNet | 117.15 G | 67.65 M |
| EMFINet | 176.87 G | 95.09 M |
| ERPNet | 131.63 G | 77.19 M |
| ACCoNet | 184.50 G | 102.55 M |
| AESINet | 53.42 G | 41.05 M |
| ASTTNet | 43.12 G | 23.35 M |
| ADSTNet | 62.09 G | 27.72 M |
| HFCNet | 120.41 G | 140.75 M |
| ours | 126.94 G | 117.29 M |
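
The per-stage increments in table (a) can be re-derived directly from the listed totals. The short Python check below does so; the list layout and the helper name `stage_increments` are illustrative choices, and the numbers are copied from the table.

```python
# (model, FLOPs in G, parameters in M), copied from table (a).
stages = [
    ("TF", 71.44, 86.64),
    ("TF+SGAED", 99.27, 103.76),
    ("TF+SGAED+PCE", 105.94, 104.29),
    ("TF+SGAED+PCE+DRF", 126.94, 117.29),
]


def stage_increments(rows):
    """Return (model, added FLOPs, added params) for each successive stage."""
    increments = []
    for (_, flops_prev, params_prev), (name, flops, params) in zip(rows, rows[1:]):
        increments.append(
            (name, round(flops - flops_prev, 2), round(params - params_prev, 2))
        )
    return increments


increments = stage_increments(stages)
for name, d_flops, d_params in increments:
    print(f"{name}: +{d_flops:.2f} G FLOPs, +{d_params:.2f} M params")
```

On these figures, adding SGAED accounts for 27.83 G FLOPs and 17.12 M parameters, PCE for 6.67 G and 0.53 M, and DRF for 21.00 G and 13.00 M, which matches the arrows in the table.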

5. Conclusion

Data Availability Statement

Conflicts of Interest

| Method | Publication | Type | ORSSD | | | | EORSSD | | | | ORSI4199 | | | |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| | | | | | | MAE↓ | | | | MAE↓ | | | | MAE↓ |
| RRWR | 2015 CVPR | T-NSI | 0.6835 | 0.5590 | 0.7649 | 0.1324 | 0.5992 | 0.3993 | 0.6894 | 0.1677 | 0.6416 | 0.5407 | 0.7116 | 0.1717 |
| RCRR | 2018 TIP | T-NSI | 0.6849 | 0.5591 | 0.7651 | 0.1277 | 0.6007 | 0.3995 | 0.6882 | 0.1644 | 0.6491 | 0.5480 | 0.7192 | 0.1637 |
| ASTTNet | 2023 TGRS | T-ORSI | 0.9347 | 0.9060 | 0.9794 | 0.0094 | 0.9253 | 0.8741 | 0.9580 | 0.0060 | 0.8827 | 0.8788 | 0.9512 | 0.0273 |
| EGNet | 2019 ICCV | C-NSI | 0.8721 | 0.8332 | 0.9731 | 0.0216 | 0.8601 | 0.7880 | 0.9570 | 0.0110 | 0.8516 | 0.8371 | 0.9241 | 0.0385 |
| MINet | 2020 CVPR | C-NSI | 0.9040 | 0.8761 | 0.9545 | 0.0144 | 0.9040 | 0.8344 | 0.9442 | 0.0093 | 0.8116 | 0.7988 | 0.8961 | 0.0504 |
| GatedNet | 2020 ECCV | C-NSI | 0.9186 | 0.8871 | 0.9664 | 0.0137 | 0.9114 | 0.8566 | 0.9610 | 0.0095 | 0.8545 | 0.8450 | 0.9256 | 0.0393 |
| LVNet-V | 2019 TGRS | C-ORSI | 0.8815 | 0.8263 | 0.9456 | 0.0207 | 0.8630 | 0.7794 | 0.9254 | 0.0146 | - | - | - | - |
| DAFNet-V | 2021 TIP | C-ORSI | 0.9191 | 0.8928 | 0.9771 | 0.0113 | 0.9166 | 0.8614 | 0.9861 | 0.0060 | 0.8492 | 0.8348 | 0.9181 | 0.0422 |
| MCCNet-V | 2021 TGRS | C-ORSI | 0.9437 | 0.9155 | 0.9800 | 0.0087 | 0.9327 | 0.8904 | 0.9755 | 0.0066 | - | - | - | - |
| CorrNet-V | 2022 TGRS | C-ORSI | 0.9380 | 0.9129 | 0.9790 | 0.0098 | 0.9289 | 0.8778 | 0.9696 | 0.0083 | 0.8626 | 0.8560 | 0.9333 | 0.0366 |
| MJRBM-R | 2022 TGRS | C-ORSI | 0.9211 | 0.8885 | 0.9686 | 0.0145 | 0.9091 | 0.8555 | 0.9655 | 0.0099 | 0.8582 | 0.8511 | 0.9343 | 0.0372 |
| RRNet-R | 2022 TGRS | C-ORSI | 0.9339 | 0.9011 | 0.9722 | 0.0113 | 0.9266 | 0.8743 | 0.9665 | 0.0082 | 0.8585 | 0.8500 | 0.9286 | 0.0367 |
| EMFINet-R | 2022 TGRS | C-ORSI | 0.9432 | 0.9155 | 0.9813 | 0.0095 | 0.9319 | 0.8742 | 0.9712 | 0.0075 | 0.8712 | 0.8636 | 0.9403 | 0.0313 |
| ERPNet-R | 2023 TCYB | C-ORSI | 0.9352 | 0.9036 | 0.9738 | 0.0114 | 0.9252 | 0.8743 | 0.9665 | 0.0082 | 0.8636 | 0.8528 | 0.9292 | 0.0388 |
| ACCoNet-R | 2023 TCYB | C-ORSI | 0.9428 | 0.9149 | 0.9819 | 0.0087 | 0.9302 | 0.8821 | 0.9759 | 0.0067 | 0.8805 | 0.8688 | 0.9424 | 0.0320 |
| AESINet-R | 2023 TGRS | C-ORSI | 0.9455 | 0.9160 | 0.9814 | 0.0085 | 0.9347 | 0.8792 | 0.9757 | 0.0064 | 0.8755 | 0.8726 | 0.9459 | 0.0305 |
| DCCNet | 2024 LGRS | C-ORSI | 0.9417 | 0.9168 | 0.9805 | 0.0092 | 0.9345 | 0.8887 | 0.9761 | 0.0067 | 0.8705 | 0.8619 | 0.9348 | 0.0347 |
| LSHNet | 2024 TGRS | C-ORSI | 0.9491 | 0.9200 | 0.9824 | 0.0075 | 0.9370 | 0.8643 | 0.9761 | 0.0064 | 0.8759 | 0.8758 | 0.9462 | 0.0299 |
| MCPNet | 2024 TGRS | C-ORSI | 0.9433 | 0.9135 | 0.9807 | 0.0090 | 0.9373 | 0.8868 | 0.9765 | 0.0070 | 0.8736 | 0.8667 | 0.9402 | 0.0324 |
| HFANet-R | 2022 TGRS | H-ORSI | 0.9399 | 0.9117 | 0.9770 | 0.0092 | 0.9380 | 0.8876 | 0.9740 | 0.0071 | 0.8767 | 0.8700 | 0.9431 | 0.0314 |
| ADSTNet-R | 2024 JSTARS | H-ORSI | 0.9379 | 0.9124 | 0.9807 | 0.0086 | 0.9311 | 0.8804 | 0.9769 | 0.0065 | 0.8710 | 0.8698 | 0.9433 | 0.0318 |
| HFCNet-R | 2024 TGRS | H-ORSI | 0.9521 | 0.9247 | 0.9885 | 0.0073 | 0.9407 | 0.8864 | 0.9793 | 0.0054 | 0.8838 | 0.8833 | 0.9539 | 0.0277 |
| CMNFNet | 2025 TCYB | H-ORSI | 0.9475 | 0.9189 | 0.9832 | 0.0078 | 0.9377 | 0.8851 | 0.9774 | 0.0063 | 0.8774 | 0.8752 | 0.9885 | 0.0301 |
| ours | - | T-ORSI | 0.9540 | 0.9305 | 0.9888 | 0.0071 | 0.9393 | 0.8943 | 0.9843 | 0.0048 | 0.8858 | 0.8859 | 0.9531 | 0.0279 |
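
The MAE↓ columns report mean absolute error, for which lower is better. A minimal sketch of the standard definition, the per-pixel absolute difference between a predicted saliency map and the binary ground truth averaged over the image, is given below. It assumes both maps are already scaled to [0, 1]; the function name and the toy arrays are illustrative.

```python
import numpy as np


def mean_absolute_error(saliency, ground_truth):
    """Average per-pixel absolute difference between two same-sized maps."""
    saliency = np.asarray(saliency, dtype=np.float64)
    ground_truth = np.asarray(ground_truth, dtype=np.float64)
    if saliency.shape != ground_truth.shape:
        raise ValueError("saliency map and ground truth must have the same shape")
    return float(np.mean(np.abs(saliency - ground_truth)))


# Toy example (assumption): a 4x4 mask with a 2x2 salient block.
ground_truth = np.zeros((4, 4))
ground_truth[1:3, 1:3] = 1.0

# A prediction that is off by 0.1 at every pixel.
prediction = np.where(ground_truth == 1.0, 0.9, 0.1)

mae = mean_absolute_error(prediction, ground_truth)
```

A dataset-level score of the kind listed in the table would typically be the mean of this per-image value over all test images.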

| No. | Base | PCE | DRF | ORSSD | | | EORSSD | | |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| | | | | ↑ | ↑ | ↑ | ↑ | ↑ | ↑ |
| 1 | ✓ | | | 0.9441 | 0.9165 | 0.9666 | 0.9326 | 0.8670 | 0.9589 |
| 2 | ✓ | ✓ | | 0.9511 | 0.9215 | 0.9686 | 0.9361 | 0.8706 | 0.9582 |
| 3 | ✓ | | ✓ | 0.9505 | 0.9267 | 0.9868 | 0.9354 | 0.8901 | 0.9806 |
| 4 | ✓ | ✓ | ✓ | 0.9540 | 0.9305 | 0.9888 | 0.9393 | 0.8943 | 0.9843 |

| Model variants | ORSSD | | | EORSSD | | |
| --- | --- | --- | --- | --- | --- | --- |
| (a) Ablation study in PCE | | | | | | |
| ours | 0.9540 | 0.9305 | 0.9888 | 0.9393 | 0.8943 | 0.9843 |
| w/o PC calculation | 0.9491 | 0.9239 | 0.9834 | 0.9328 | 0.8875 | 0.9788 |
| w/o VSA | 0.9491 | 0.9246 | 0.9840 | 0.9381 | 0.8917 | 0.9819 |
| (b) Ablation study in DRF | | | | | | |
| ours | 0.9540 | 0.9305 | 0.9888 | 0.9393 | 0.8943 | 0.9843 |
| w/o CA | 0.9489 | 0.9294 | 0.9806 | 0.9330 | 0.8866 | 0.9797 |
| w/o DSA | 0.9509 | 0.9262 | 0.9874 | 0.9364 | 0.8892 | 0.9783 |

| Model variants | | | |
| --- | --- | --- | --- |
| 3 RAB | 0.9457 | 0.9196 | 0.9824 |
| 4 RAB | 0.9488 | 0.9219 | 0.9828 |
| 5 RAB | 0.9540 | 0.9305 | 0.9888 |
| 6 RAB | 0.9509 | 0.9276 | 0.9869 |
| 7 RAB | 0.9531 | 0.9274 | 0.9863 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).