Submitted: 24 March 2026

Posted: 25 March 2026


Abstract

Keywords:

1. Introduction

1.1. Healthcare AI as Critical Digital Health Infrastructure

1.2. The Governance Gap: From Model Oversight to Preparedness

1.3. Research Question, Analytical Sequence, and Contribution

2. Related Literature and Theoretical Foundation

2.1. Existing Governance Approaches in Healthcare AI

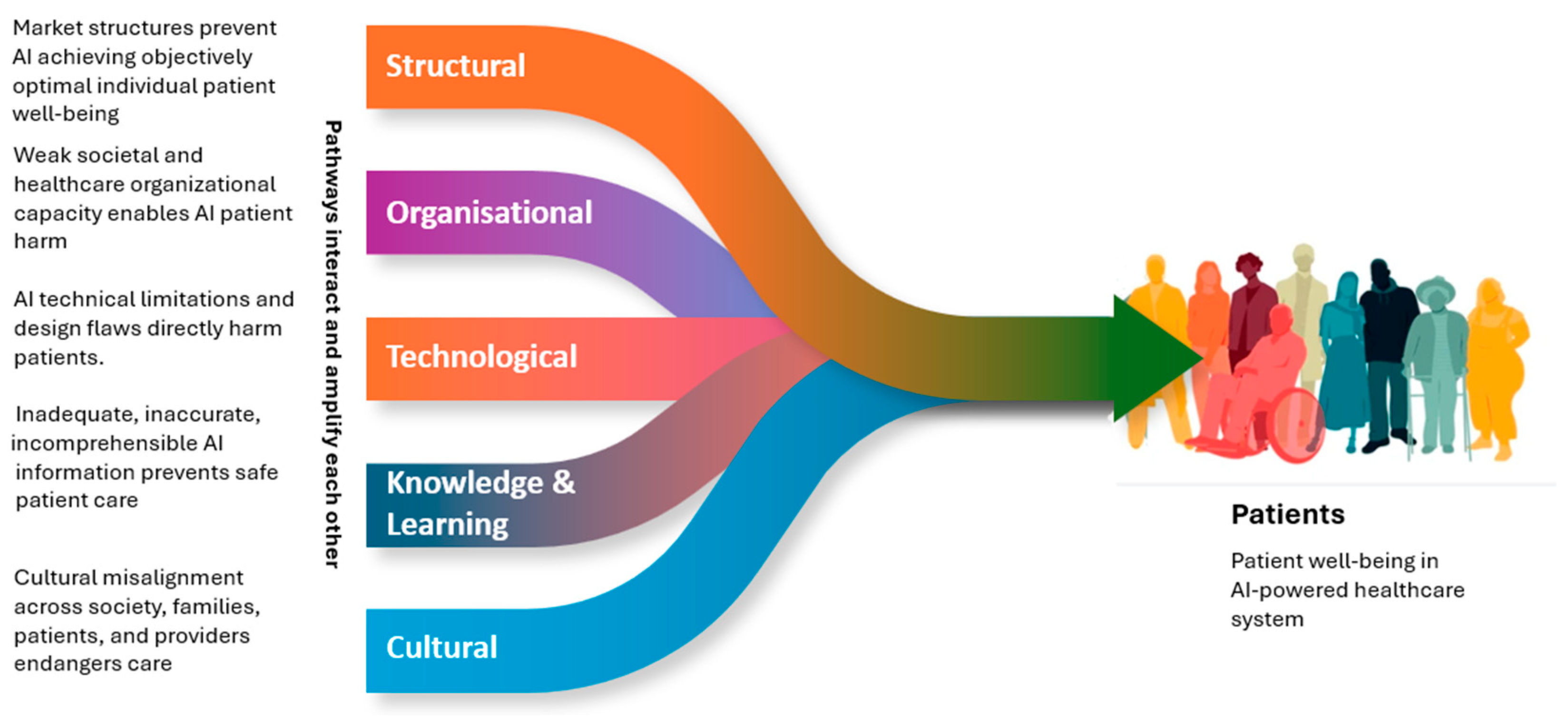

2.2. Systemic and Sociotechnical Risk

2.3. Critical Digital Infrastructure and Cyber-Physical Systems

2.4. Public Health Preparedness as Governance Analog

3. Methodology

3.1. Research Design

3.2. Source Domains

3.3. Case Selection

3.4. Analytical Procedure

3.5. Scope and Boundaries

4. Results

4.1. Terminological Foundation for Systemic Healthcare AI Risk Surveillance

4.2. Case Study 1: CNN-Based Pneumonia Screening and Hidden Institutional Confounding

4.3. Case Study 2: The Epic Sepsis Model and the Problem of Vendor-Scale Exposure

4.4. Burden-of-Disease Metrics as an Interpretive Lens, Not a Headline Estimate

| Element | What It Captures | Candidate Data Sources | Key Caveat |
| --- | --- | --- | --- |
| Exposed population | How many patients, encounters, or decisions were influenced by the AI system and which model version was active | Deployment registries, audit logs, alert logs, order logs, model-version records | Requires stable denominators and clearly defined versioned exposure windows |
| Attributable adverse outcome rate | Share of adverse events plausibly linked to the AI-mediated failure relative to ordinary care | Linked outcome data, chart review, causal-inference designs, comparative deployment studies | Often the hardest parameter to estimate because the counterfactual is missing |
| Mortality (YLL) | Premature deaths associated with attributable failure where relevant | EHR outcomes, mortality files, life-table assumptions | Requires credible causal attribution rather than simple overlap counts |
| Non-fatal morbidity (YLD/QALY) | Functional decline, treatment delay, quality-of-life loss, or burden from sustained workflow disruption | Longitudinal follow-up, PROMs, utilization data, patient-safety review | Effects may be delayed, diffuse, and undermeasured |
| Distributional burden | How harm is distributed across patient subgroups, institutions, or capacity levels | Stratified performance data, subgroup outcomes, institutional context indicators | Aggregate averages can obscure concentrated harm |
| Temporal deployment context | Time from release to detection, duration of exposure, updates, rollback, and remediation | Incident logs, vendor communications, change-management records | Required to interpret latency and cascade propagation dynamics |
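To show how the elements in the table above can combine without implying a headline figure, the following Python sketch computes an incidence-based, DALY-style burden (YLL + YLD) per patient subgroup. It uses the standard simplified forms: attributable events = exposed population × excess risk over ordinary care; YLL = attributable deaths × years of life lost per death; YLD = non-fatal attributable events × disability weight × duration. Every input value, and the names `SubgroupInputs`, `burden`, and `distribution`, are hypothetical. The sketch also assumes the counterfactual risk under ordinary care is known, which the table flags as the hardest parameter to estimate; a QALY-based variant would substitute utility losses for disability weights.

```python
from dataclasses import dataclass


@dataclass
class SubgroupInputs:
    """Hypothetical inputs for one patient subgroup; all numbers are illustrative."""
    name: str
    exposed: int                 # patients exposed to the active model version in the window
    risk_with_failure: float     # adverse-outcome risk under the AI-mediated failure
    risk_ordinary_care: float    # counterfactual risk under ordinary care (assumed known here)
    case_fatality: float         # share of attributable adverse events that are fatal
    years_lost_per_death: float  # remaining life expectancy at death, from a life table
    disability_weight: float     # 0 = full health, 1 = equivalent to death
    years_with_sequelae: float   # average duration of non-fatal sequelae


def burden(g: SubgroupInputs) -> dict:
    # Attributable events: excess risk relative to ordinary care, times exposure.
    attributable = g.exposed * max(g.risk_with_failure - g.risk_ordinary_care, 0.0)
    deaths = attributable * g.case_fatality
    yll = deaths * g.years_lost_per_death
    # Incidence-based YLD for the non-fatal attributable events.
    yld = (attributable - deaths) * g.disability_weight * g.years_with_sequelae
    return {"subgroup": g.name, "attributable": attributable,
            "yll": yll, "yld": yld, "daly": yll + yld}


def distribution(groups: list) -> list:
    """Per-subgroup burden plus each subgroup's share of the total DALYs."""
    rows = [burden(g) for g in groups]
    total = sum(r["daly"] for r in rows)
    for r in rows:
        r["share_of_daly"] = r["daly"] / total if total > 0 else 0.0
    return rows


# Positional fields: name, exposed, risk_with_failure, risk_ordinary_care,
# case_fatality, years_lost_per_death, disability_weight, years_with_sequelae.
groups = [
    SubgroupInputs("subgroup A (hypothetical)", 10_000, 0.012, 0.010, 0.10, 10.0, 0.2, 2.0),
    SubgroupInputs("subgroup B (hypothetical)", 2_000, 0.030, 0.015, 0.10, 10.0, 0.2, 2.0),
]
for row in distribution(groups):
    print(row["subgroup"], round(row["daly"], 1), round(row["share_of_daly"], 2))
```

With these made-up inputs, subgroup B accounts for one-sixth of the exposure but 60% of the DALYs. This is the kind of concentrated harm that, as the distributional-burden row warns, aggregate averages can hide.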

4.5. Five Governance-Relevant Properties of Systemic Healthcare AI Risk

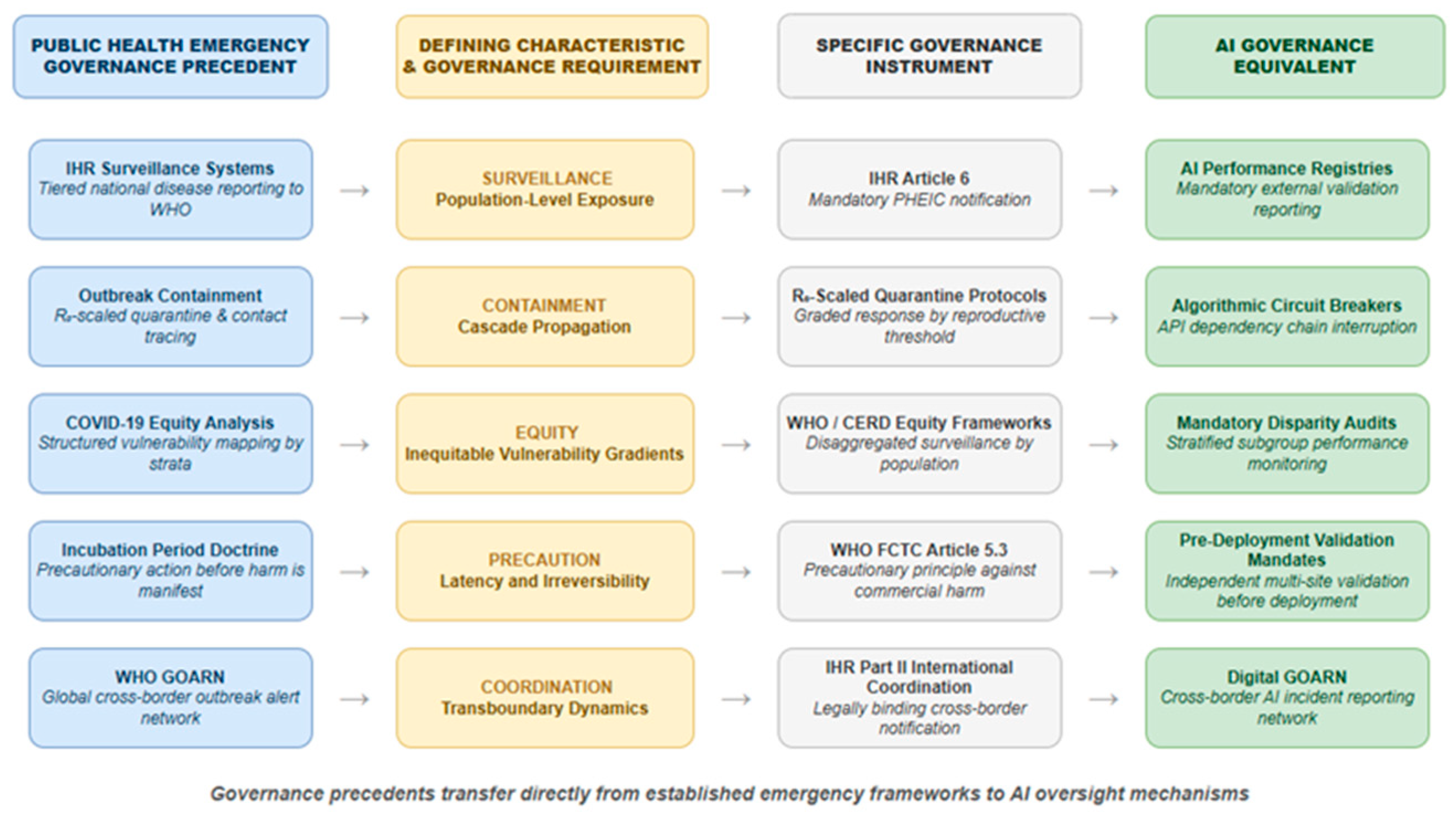

4.5.1. Population-Level Exposure and the Surveillance Requirement

4.5.2. Cascade Propagation Across Shared Infrastructures and the Containment Requirement

4.5.3. Unequal Vulnerability and the Equity Requirement

4.5.4. Latency, Irreversibility, and the Precautionary Requirement

4.5.5. Coordination Beyond a Single Institution and the Coordination Requirement

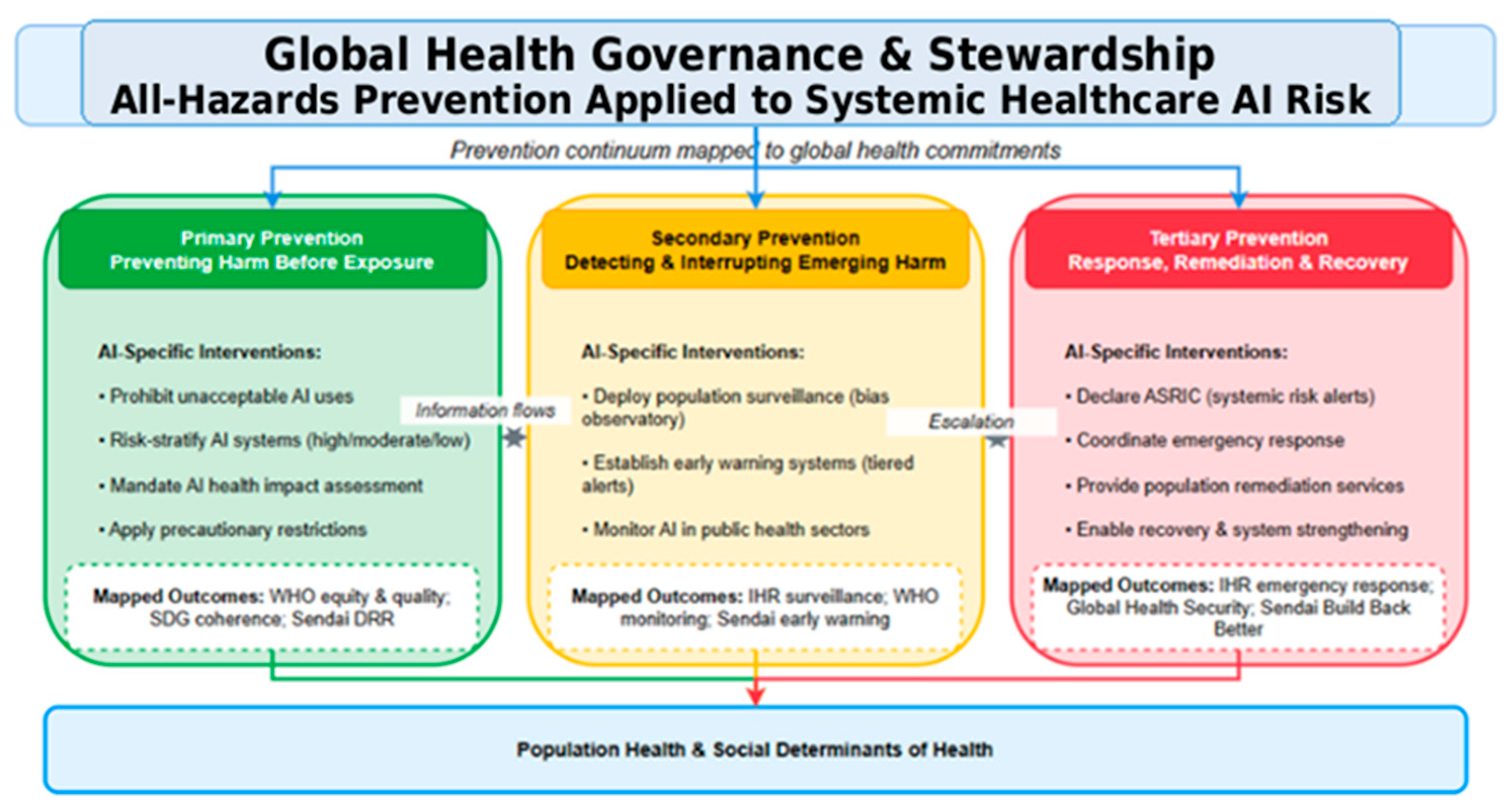

4.6. A Tripartite Preparedness Framework for Healthcare AI

4.6.1. Primary Prevention: Assurance Before Deployment

4.6.2. Secondary Prevention: Sentinel Surveillance and Early Intervention

4.6.3. Tertiary Prevention: Response, Remediation, and Learning

4.6.4. Framework Anchored to the Two Cases

| Preparedness Tier | Objective | Core Mechanisms | Illustrative Link to the Cases |
| --- | --- | --- | --- |
| Primary prevention (pre-deployment) | Reduce avoidable exposure before go-live | Risk classification; independent multi-site validation; environmental audit; subgroup review; human-factors assessment; procurement clauses on transparency and rollback | Would have exposed prevalence-driven fragility in the pneumonia model and prevented vendor claims from serving as the sole assurance gate for the ESM |
| Secondary prevention (surveillance and interruption) | Detect and interrupt emerging harm early | Algorithm vigilance; indicator-based dashboards; event-based reporting; sentinel observatories; periodic revalidation; incident categories; audit and pause thresholds | Would have made the ESM performance gap visible sooner and enabled cross-site detection of hidden deterioration |
| Tertiary prevention (response and learning) | Contain harm, remediate, and convert incidents into institutional learning | Incident investigation; rollback or safe-mode operation; patient-safety review; incident-command activation where warranted; vendor notification; stakeholder communication; cross-site learning; GOARN-inspired coordination for widespread failures | Addresses patients and institutions already exposed in both cases and converts isolated failure into shared governance learning |
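The secondary-prevention row lists audit and pause thresholds without fixing their form. A minimal Python sketch follows. It assumes a locally validated baseline AUROC, surveillance windows of scored encounters with adjudicated outcomes, and two hypothetical drop thresholds (0.05 for audit, 0.10 for pause) that are not taken from the source. The names `auroc`, `sentinel_action`, and `monitor` are illustrative only.

```python
from typing import List, Sequence, Tuple


def auroc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Mann-Whitney estimate of AUROC; tied scores count as half a concordant pair."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("each window needs at least one positive and one negative outcome")
    concordant = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                concordant += 1.0
            elif p == n:
                concordant += 0.5
    return concordant / (len(pos) * len(neg))


# Hypothetical thresholds: absolute AUROC drop below the locally validated baseline.
AUDIT_DROP = 0.05
PAUSE_DROP = 0.10


def sentinel_action(window_auroc: float, baseline_auroc: float) -> str:
    """Map a performance drop to a preparedness action: continue, audit, or pause."""
    drop = baseline_auroc - window_auroc
    if drop >= PAUSE_DROP:
        return "pause"  # e.g. safe-mode operation pending incident investigation
    if drop >= AUDIT_DROP:
        return "audit"  # e.g. chart review and vendor notification
    return "continue"


def monitor(windows, baseline_auroc: float) -> List[Tuple[float, str]]:
    """Evaluate each surveillance window, given as a (scores, outcome labels) pair."""
    results = []
    for scores, labels in windows:
        value = auroc(scores, labels)
        results.append((value, sentinel_action(value, baseline_auroc)))
    return results


# Toy windows of four encounters each: far too small for real surveillance.
windows = [
    ([0.9, 0.8, 0.3, 0.2], [1, 1, 0, 0]),
    ([0.9, 0.4, 0.5, 0.2], [1, 1, 0, 0]),
    ([0.6, 0.3, 0.5, 0.4], [1, 1, 0, 0]),
]
actions = monitor(windows, baseline_auroc=0.82)
for i, (value, action) in enumerate(actions, start=1):
    print(f"window {i}: AUROC={value:.2f} -> {action}")
```

A real dashboard would need windows with enough events to yield stable estimates and uncertainty intervals. It would also have to handle delayed outcome labels and track further indicators (calibration, alert rates, subgroup performance) alongside discrimination. A single AUROC threshold is only the simplest possible trigger.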

5. Discussion

5.1. What the Public Health Lens Adds

5.2. Relationship to Algorithm Vigilance, Safety Engineering, and Regulation

5.3. Practical Implementation Priorities

5.4. Theoretical, Methodological, and Practical Contributions

6. Limitations and Future Work

7. Conclusion

References

- Leveson, N.G. Engineering a Safer World: Systems Thinking Applied to Safety; MIT Press: Cambridge, MA, USA, 2012.

- Lee, E.A. Cyber Physical Systems: Design Challenges. In Proceedings of the 11th IEEE International Symposium on Object/Component/Service-Oriented Real-Time Distributed Computing (ISORC), Orlando, FL, USA, 5–7 May 2008; pp. 363–369.

- International Electrotechnical Commission. IEC 61508:2010. Functional Safety of Electrical/Electronic/Programmable Electronic Safety-Related Systems; International Electrotechnical Commission: Geneva, Switzerland, 2010.

- Zech, J.R.; Badgeley, M.A.; Liu, M.; Costa, A.B.; Titano, J.J.; Oermann, E.K. Variable generalization performance of a deep learning model to detect pneumonia in chest radiographs: A cross-sectional study. PLoS Med. 2018, 15, e1002683.

- Wong, A.; Otles, E.; Donnelly, J.P.; Krumm, A.; McCullough, J.; DeTroyer-Cooley, O.; Pestrue, J.; Phillips, M.; Konye, J.; Penoza, C.; et al. External validation of a widely implemented proprietary sepsis prediction model in hospitalized patients. JAMA Intern. Med. 2021, 181, 1065–1070.

- World Health Organization. Ethics and Governance of Artificial Intelligence for Health: WHO Guidance; World Health Organization: Geneva, Switzerland, 2021.

- World Health Organization. Global Strategy on Digital Health 2020–2025; World Health Organization: Geneva, Switzerland, 2021.

- European Parliament; Council of the European Union. Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 laying down harmonised rules on artificial intelligence (Artificial Intelligence Act). Off. J. Eur. Union 2024, 2024/1689.

- Tabassi, E. Artificial Intelligence Risk Management Framework (AI RMF 1.0); National Institute of Standards and Technology: Gaithersburg, MD, USA, 2023.

- Embi, P.J. Algorithmovigilance—Advancing Methods to Analyze and Monitor Artificial Intelligence-Driven Health Care for Effectiveness and Equity. JAMA Netw. Open 2021, 4, e214622.

- Balendran, A.; Benchoufi, M.; Evgeniou, T.; Ravaud, P. Algorithmovigilance, lessons from pharmacovigilance. npj Digit. Med. 2024, 7, 270.

- Macrae, C. Learning from the Failure of Autonomous and Intelligent Systems: Accidents, Safety, and Sociotechnical Sources of Risk. Risk Anal. 2022, 42, 1999–2025.

- World Health Organization. Health Emergency and Disaster Risk Management Framework; World Health Organization: Geneva, Switzerland, 2019.

- United Nations Office for Disaster Risk Reduction. Sendai Framework for Disaster Risk Reduction 2015–2030; United Nations Office for Disaster Risk Reduction: Geneva, Switzerland, 2015.

- World Health Organization. International Health Regulations (2005) as amended in 2014, 2022 and 2024; World Health Organization: Geneva, Switzerland, 2025.

- World Health Organization. Global Outbreak Alert and Response Network (GOARN). Available online: https://goarn.who.int/ (accessed on 20 March 2026).

- World Health Organization. Defining Collaborative Surveillance: A Core Concept for Strengthening the Global Architecture for Health Emergency Preparedness, Response, and Resilience (HEPR); World Health Organization: Geneva, Switzerland, 2023.

- World Health Organization. Impact of Using the Epidemic Intelligence from Open Sources (EIOS) System for Early Detection of Public Health Threats in the Americas. Wkly. Epidemiol. Rec. 2025, 100, 131–144.

- Centers for Disease Control and Prevention. Chapter 19: Enhancing Surveillance. In Manual for the Surveillance of Vaccine-Preventable Diseases; Available online: https://www.cdc.gov/surv-manual/php/table-of-contents/chapter-19-enhancing-surveillance.html (accessed on 20 March 2026).

- Centers for Disease Control and Prevention. Updated Guidelines for Evaluating Public Health Surveillance Systems: Recommendations from the Guidelines Working Group. MMWR Recomm. Rep. 2001, 50(RR-13), 1–35.

- Bryant, J.L.; Sosin, D.M.; Wiedrich, T.W.; Redd, S.C. Emergency Operations Centers and Incident Management Structure. In The CDC Field Epidemiology Manual; Available online: https://www.cdc.gov/field-epi-manual/php/chapters/eoc-incident-management.html (accessed on 20 March 2026).

- Salathé, M.; Bengtsson, L.; Bodnar, T.J.; Brewer, D.D.; Brownstein, J.S.; Buckee, C.; Campbell, E.M.; Cattuto, C.; Khandelwal, S.; Mabry, P.L.; et al. Digital epidemiology. PLoS Comput. Biol. 2012, 8, e1002616.

- GBD 2019 Diseases and Injuries Collaborators. Global burden of 369 diseases and injuries in 204 countries and territories, 1990–2019: A systematic analysis for the Global Burden of Disease Study 2019. Lancet 2020, 396, 1204–1222.

- Whitehead, S.J.; Ali, S. Health outcomes in economic evaluation: the QALY and utilities. Br. Med. Bull. 2010, 96, 5–21.

- Rhee, C.; Dantes, R.; Epstein, L.; Murphy, D.J.; Seymour, C.W.; Iwashyna, T.J.; Kadri, S.S.; Angus, D.C.; Danner, R.L.; Fiore, A.E.; et al. Incidence and trends of sepsis in US hospitals using clinical vs claims data, 2009–2014. JAMA 2017, 318, 1241–1249.

- Iwashyna, T.J.; Ely, E.W.; Smith, D.M.; Langa, K.M. Long-term cognitive impairment and functional disability among survivors of severe sepsis. JAMA 2010, 304, 1787–1794.

- Baker, M.G.; Fidler, D.P. Global public health surveillance under new international health regulations. Emerg. Infect. Dis. 2006, 12, 1058–1065.

- Obermeyer, Z.; Powers, B.; Vogeli, C.; Mullainathan, S. Dissecting racial bias in an algorithm used to manage the health of populations. Science 2019, 366, 447–453.

- World Health Organization. Handbook on Health Inequality Monitoring: With a Special Focus on Low- and Middle-Income Countries; World Health Organization: Geneva, Switzerland, 2013.

- Leavell, H.R.; Clark, E.G. Preventive Medicine for the Doctor in His Community: An Epidemiologic Approach, 3rd ed.; McGraw-Hill: New York, NY, USA, 1965.

- Youssef, A.; Pencina, M.J.; Thakur, A.; Zhu, T.; Clifton, D.; Shah, N.H. External validation of AI models in health should be replaced with recurring local validation. Nat. Med. 2023, 29, 2686–2687.

- Feng, J.; Xia, F.; Singh, K.; Pirracchio, R. Not all clinical AI monitoring systems are created equal: Review and recommendations. NEJM AI 2025, 2, AIra2400657.

- Wells, B.J.; Nguyen, H.M.; McWilliams, A.; Pallini, M.; Bovi, A.; Kuzma, A.; Kramer, J.; Chou, S.-H.; Hetherington, T.; Corn, P.; et al. A practical framework for appropriate implementation and review of artificial intelligence (FAIR-AI) in healthcare. npj Digit. Med. 2025, 8, 514.

- You, J.G.; Hernandez-Boussard, T.; Pfeffer, M.A.; Landman, A.; Mishuris, R.G. Clinical trials informed framework for real-world clinical implementation and deployment of artificial intelligence applications. npj Digit. Med. 2025, 8, 107.

- Wong, A.; Currey, D.; Schwinne, M.; Park-Egan, B.; Meyer, S.; Gutting, A.; Cao, J.; Khan, S.; Dantes, R.; Pan, T.; et al. Multicenter prospective validation of an updated proprietary sepsis prediction model. JAMA Netw. Open 2026, 9, e260181.

- Lekadir, K.; Frangi, A.F.; Porras, A.R.; Glocker, B.; Cintas, C.; Langlotz, C.P.; Weicken, E.; Asselbergs, F.W.; Prior, F.; Collins, G.S.; et al. FUTURE-AI: international consensus guideline for trustworthy and deployable artificial intelligence in healthcare. BMJ 2025, 388, e081554.

- Organisation for Economic Co-operation and Development. AI Principles. Available online: https://www.oecd.org/en/topics/sub-issues/ai-principles.html (accessed on 20 March 2026).

- HL7 International. FHIR Release 4 (v4.0.1): Base Specification. Available online: https://hl7.org/fhir/R4/ (accessed on 20 March 2026).

- Observational Health Data Sciences and Informatics. OMOP Common Data Model. Available online: https://ohdsi.github.io/CommonDataModel/ (accessed on 20 March 2026).

| Term | Source Concept (Abridged) | Healthcare AI Interpretation | Governance Relevance |
| --- | --- | --- | --- |
| Epidemiology | Distribution and determinants of health-related events in specified populations | Distribution and determinants of AI-related health hazards in patient populations and healthcare institutions | Supports population-based governance rather than isolated bug fixing |
| Etiology | Study of causes and origins of disease | Study of the causes of AI-related health hazards across structural, organizational, technological, epistemic, and cultural pathways | Shifts governance upstream toward root-cause mitigation |
| Agent | Pathogen or harmful exposure that contributes directly to disease | Biased model, brittle classifier, unsafe threshold, hallucinating component, or harmful feedback loop embedded in care | Names the actionable failure mechanism |
| Host | Susceptible organism or population | Exposed patient populations and the clinical settings in which decisions are shaped by the system | Highlights exposed populations, workflow locus, and differential susceptibility |
| Environment | Conditions enabling exposure and spread | Data pipelines, workflows, procurement terms, regulation, interfaces, infrastructure, and organizational culture | Treats the deployment setting as part of the hazard |
| Transmission | Route by which the agent reaches the host | Embedded clinical decision support, API dependency, copied workflow, replicated threshold logic, or vendor diffusion | Makes propagation pathways governable |
| Case Definition | Standardized criteria for classifying an event | Minimum evidence needed to classify an AI incident: performance deterioration, affected population, context, and outcome link | Enables cross-site comparison, escalation, and observatory reporting |
| Digital Epidemiology | Population-scale monitoring using digital data | Cross-site monitoring of AI performance, workflow effects, and patient outcomes using routine metrics, incident reports, and interoperable digital data | Supports indicator-based, event-based, and sentinel early warning |
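The Case Definition row can be made operational as a structured incident record. The Python sketch below assumes a hypothetical `AIIncidentReport` record carrying the four minimum-evidence elements named in the table. It borrows the suspected/probable/confirmed tiering used in infectious-disease case definitions. The tier rules, the 0.05 deterioration threshold, and all field names are illustrative assumptions, not part of the source framework, and the fields do not correspond to any FHIR or OMOP element.

```python
from dataclasses import dataclass
from typing import Optional


# Hypothetical record: field names are illustrative, not a FHIR or OMOP schema.
@dataclass
class AIIncidentReport:
    model_id: str
    site: str
    performance_deterioration: Optional[float] = None  # drop in a pre-agreed metric vs. baseline
    affected_population: Optional[int] = None          # patients or encounters exposed in the window
    context: Optional[str] = None                      # workflow, pipeline, or deployment notes
    outcome_link: Optional[str] = None                 # "none", "plausible", or "established"


REQUIRED = ("performance_deterioration", "affected_population", "context", "outcome_link")


def classify(report: AIIncidentReport, deterioration_threshold: float = 0.05) -> str:
    """Assign an illustrative case tier, loosely mirroring suspected/probable/confirmed."""
    signal = (report.performance_deterioration is not None
              and report.performance_deterioration >= deterioration_threshold)
    if not signal:
        return "not a case"
    complete = all(getattr(report, name) is not None for name in REQUIRED)
    if not complete:
        return "suspected"  # signal present, but minimum evidence is incomplete
    if report.outcome_link == "established":
        return "confirmed"
    if report.outcome_link == "plausible":
        return "probable"
    return "suspected"  # complete record, but no outcome link yet


report = AIIncidentReport(
    model_id="sepsis-model-v1 (hypothetical)",
    site="site-01",
    performance_deterioration=0.12,
    affected_population=850,
    context="inpatient wards; alert threshold unchanged since go-live",
    outcome_link="plausible",
)
print(classify(report))
```

A shared record of this kind is what allows reports from different sites to be compared and escalated consistently. In this sketch, an incomplete report with a clear signal is still counted as "suspected" rather than discarded.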

| Construct | Descriptor | Case-Specific Entry |
| --- | --- | --- |
| Epidemiology | Population under study | Patients undergoing pneumonia screening across three institutionally distinct hospital systems |
| Etiology | Primary causal factor | Training on institutionally siloed data with large prevalence differences and hidden site-specific cues |
| Etiology | Contributing factors | Scanner heterogeneity, storage-format differences, laterality tokens, transfer-learning architecture, and label-generation pipelines |
| Agent | Pathogenic mechanism | Confounder learning: hospital identity and prevalence patterns dominate over portable clinical signal |
| Host | Exposed population / workflow locus | Patients undergoing pneumonia screening and radiology teams whose decisions may be shaped by model output |
| Environment | Digital infrastructure | PACS, scanner hardware, preprocessing pipelines, NLP-derived labels, and heterogeneous reporting practices |
| Transmission | Route | Model outputs mediate clinical judgment through radiology workflow and downstream decision support |
| Case definition | Signal of incident | Internal–external performance gap plus evidence that the model predicts site identity and exploits contextual cues |
| Governance inference | Prevention need | Independent external validation, prevalence harmonization, environmental audit, and cross-site monitoring before routine deployment |
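The etiology and agent rows describe a model that leans on hospital identity and prevalence rather than portable clinical signal. The following toy Python illustration makes the mechanism concrete. It is not a reanalysis of the study data: the two hospitals, their sizes, and their prevalences (30% and 2%) are hypothetical. The hypothetical score assigns every patient their own hospital's prevalence, so it carries no patient-level information at all. Yet it looks discriminative once sites with very different prevalence are pooled.

```python
from typing import Dict, List, Sequence, Tuple


def auroc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Mann-Whitney estimate of AUROC; tied scores count as half a concordant pair."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    concordant = 0.0
    for p in pos:
        for n in neg:
            concordant += 1.0 if p > n else (0.5 if p == n else 0.0)
    return concordant / (len(pos) * len(neg))


def site_only_scores(sizes: Dict[str, int],
                     positives: Dict[str, int]) -> Tuple[List[float], List[int], List[str]]:
    """Score each patient with their site's prevalence: a pure site-identity signal."""
    scores, labels, sites = [], [], []
    for site, n in sizes.items():
        k = positives[site]
        for i in range(n):
            scores.append(k / n)              # identical score for everyone at this site
            labels.append(1 if i < k else 0)  # first k patients are pneumonia-positive
            sites.append(site)
    return scores, labels, sites


sizes = {"hospital_A": 100, "hospital_B": 100}    # hypothetical sites
positives = {"hospital_A": 30, "hospital_B": 2}   # hypothetical prevalence: 30% vs 2%
scores, labels, sites = site_only_scores(sizes, positives)

pooled = auroc(scores, labels)
within = {
    s: auroc([x for x, z in zip(scores, sites) if z == s],
             [y for y, z in zip(labels, sites) if z == s])
    for s in sizes
}
print(f"pooled AUROC: {pooled:.3f}")
for s, value in within.items():
    print(f"{s} within-site AUROC: {value:.3f}")
```

With these assumed inputs the pooled AUROC is about 0.76, while each within-site AUROC is exactly 0.5. This is why a credible incident signal pairs an internal–external gap with evidence of site-identity prediction, and why stratified, per-site evaluation belongs in pre-deployment validation.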

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).