Submitted: 24 March 2026

Posted: 26 March 2026

Abstract

Keywords:

1. Introduction

- RQ1: To what extent can an LLM accurately interpret and contextualize graph-based threat intelligence retrieved from OpenCTI?

- RQ2: How reliably can LLM-driven automation map disparate IoCs to specific MITRE ATT&CK techniques?

- RQ3: Is it feasible for an LLM to generate syntactically valid and operationally precise KQL detection rules for MDE without human semantic intervention?

- RQ4: Can such a system materially reduce analyst workload while improving the forensic visibility of silent EDR blocks?

- We conduct a systematic empirical evaluation of LLM-generated detection logic across a representative subset of the MITRE ATT&CK matrix, specifically targeting under-detected reconnaissance and lateral movement techniques.

- Unlike related work focusing on simple IoC extraction (hashes/IPs), our approach emphasizes the operationalization of behavioral TTPs to detect early-stage reconnaissance and lateral movement.

- We provide a rigorous before-and-after analysis of detection coverage, quantifying the detection delta provided by LLM-generated rules compared to native MDE heuristics.

- We introduce a custom normalization layer designed to mitigate LLM hallucinations regarding proprietary EDR schemas, addressing the technical reliability of AI-generated security code.

2. Related Work

3. Methodology

3.1. Threat Intelligence Tool Evaluation and Selection

3.2. Selection of MITRE ATT&CK Techniques and Attack Scenarios

3.3. LLM-Assisted Detection Rule Generation Process & Prompt Engineering

3.4. Post-Processing and Validation of Generated KQL
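
As an illustration of the kind of check this stage implies, the following minimal Python sketch rewrites a few column names that belong to other Microsoft log schemas (for example `TimeGenerated` and `Computer`) to their Advanced Hunting equivalents and flags table names it does not recognize. The contents of `KNOWN_TABLES` and `ALIASES`, and the function name `normalize_kql`, are assumptions made for this example; they are not taken from the authors' implementation, and a real deployment would load the complete schema rather than this excerpt.

```python
import re

# Assumption: a hand-picked excerpt of Advanced Hunting table names.
KNOWN_TABLES = {
    "DeviceProcessEvents",
    "DeviceNetworkEvents",
    "DeviceLogonEvents",
    "DeviceFileEvents",
    "DeviceRegistryEvents",
    "DeviceEvents",
}

# Assumption: illustrative column names an LLM might borrow from other
# schemas, mapped to the Advanced Hunting column that was probably meant.
ALIASES = {
    "TimeGenerated": "Timestamp",
    "Computer": "DeviceName",
    "CommandLine": "ProcessCommandLine",
}


def normalize_kql(query: str) -> tuple[str, list[str]]:
    """Return the rewritten query and a list of human-readable findings.

    Limitations of this sketch: it works on raw text, so it would also
    rewrite an alias that appears inside a string literal, and it expects
    the query to start with a table name (no leading `let` statements).
    """
    issues: list[str] = []
    normalized = query.strip()

    # Whole-word replacement, so "ProcessCommandLine" is left untouched
    # when rewriting the bare "CommandLine" alias.
    for wrong, right in ALIASES.items():
        pattern = rf"\b{re.escape(wrong)}\b"
        if re.search(pattern, normalized):
            normalized = re.sub(pattern, right, normalized)
            issues.append(f"rewrote column {wrong} -> {right}")

    # The first identifier of the query is treated as the source table.
    match = re.match(r"[A-Za-z_]\w*", normalized)
    table = match.group(0) if match else ""
    if table not in KNOWN_TABLES:
        issues.append(f"unknown table: {table or '<none>'}")

    return normalized, issues
```

A query that comes back with an "unknown table" finding would be returned to the analyst or re-prompted rather than deployed; that policy is likewise an assumption of this sketch.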

3.5. Experimental Evaluation Methodology

3.6. Reproducibility Considerations

4. System Architecture

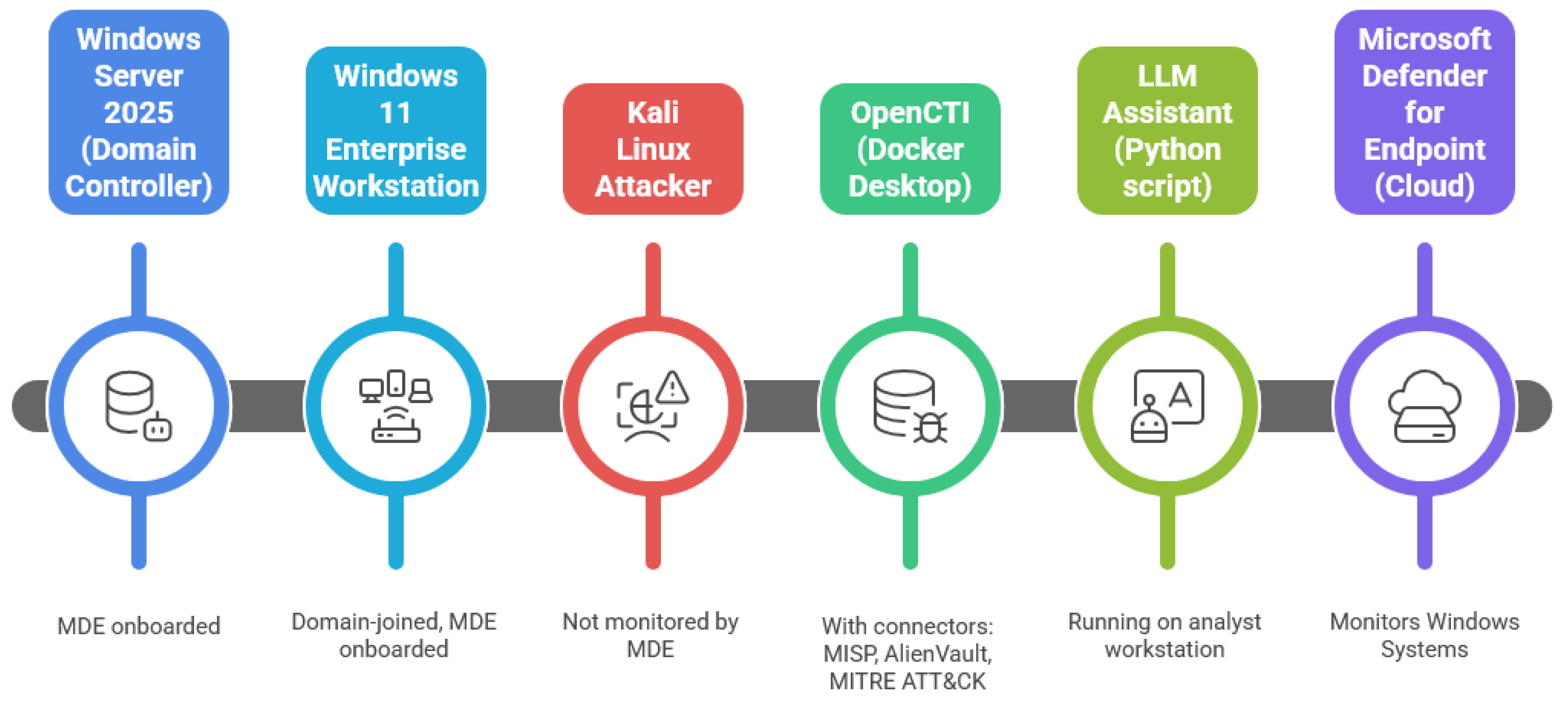

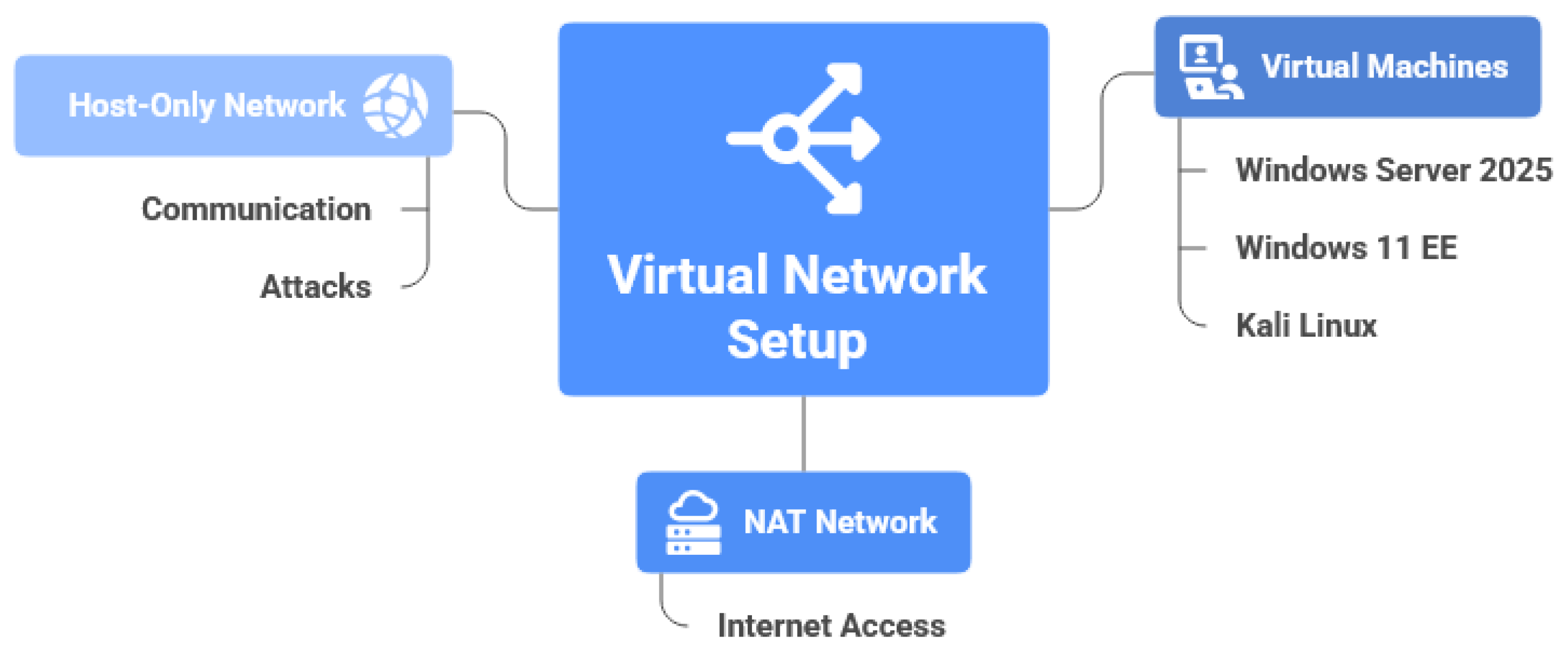

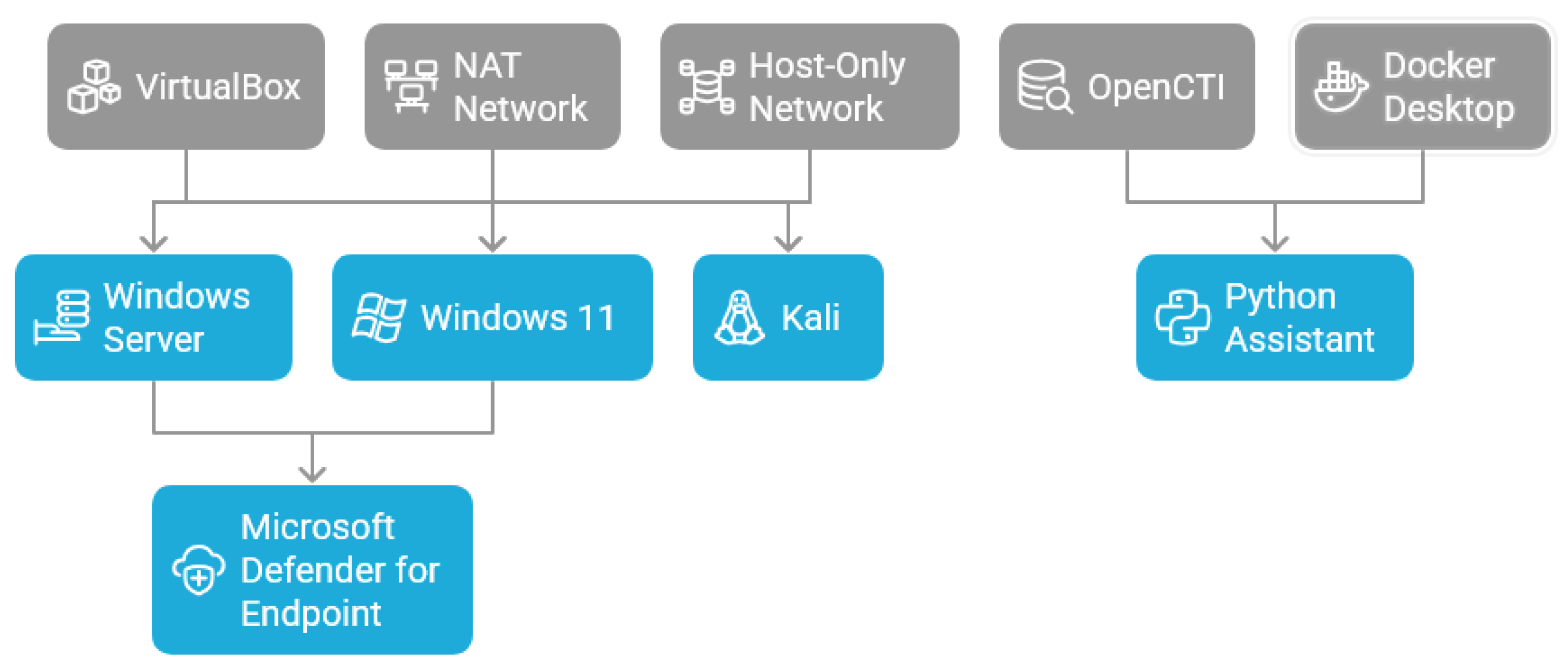

4.1. System Architecture & Environment

- Windows Server 2025 (OS build 26100.4946, August 12, 2025 update): Configured as an Active Directory Domain Services (AD DS) controller hosting the domain and authenticating enterprise users. A Global Administrator account is logged in to facilitate management operations.

- Windows 11 Enterprise (OS build 26100.4946, August 12, 2025 update): Domain-joined and used as the primary employee workstation. The domain user “Bob Worker” is logged in during experiments.

- Kali Linux (Rolling release June 13, 2025, kernel 6.12.0): Used exclusively as an attacker machine. This VM performs all offensive activities during the evaluation phase.

4.2. Deployment of MDE

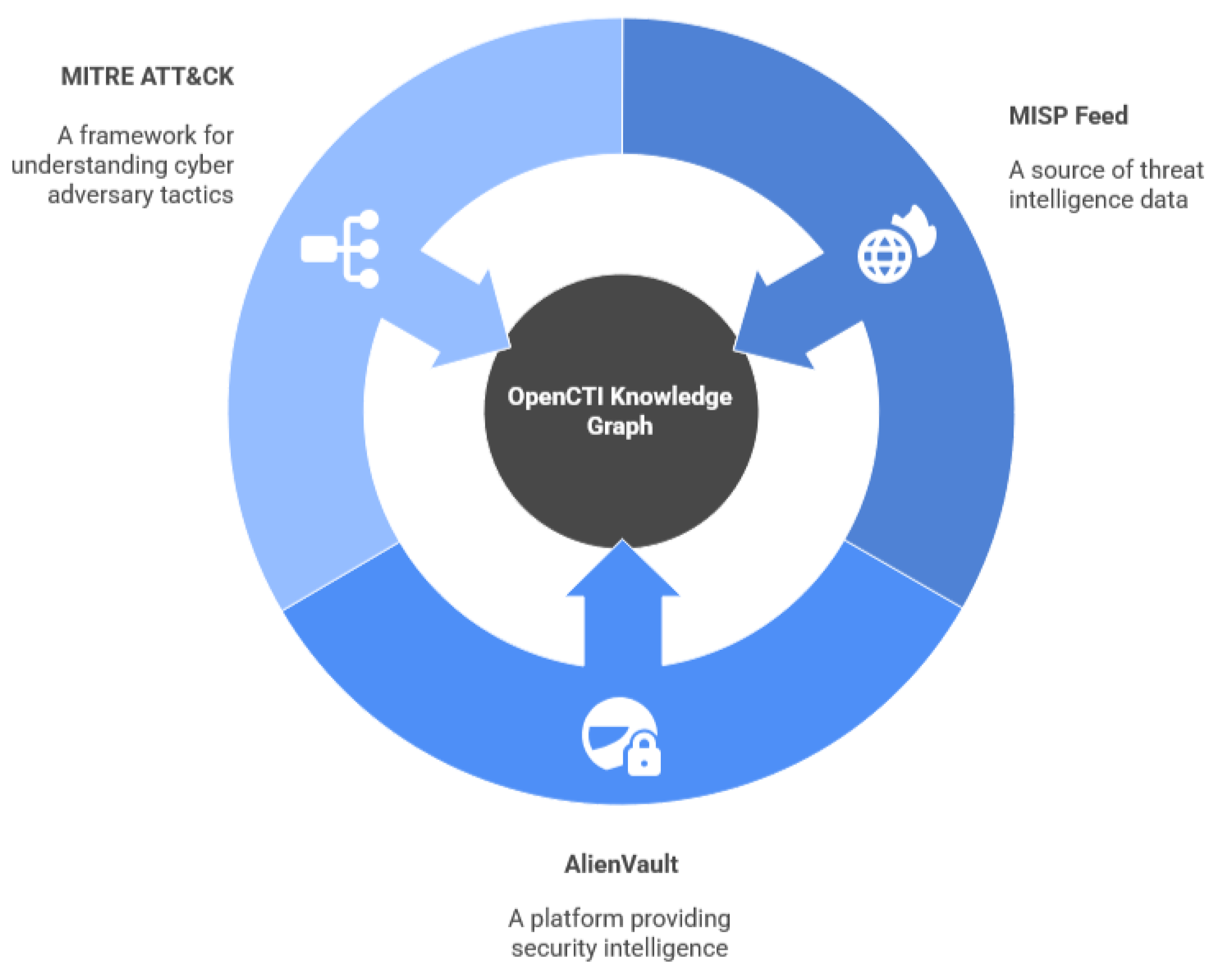

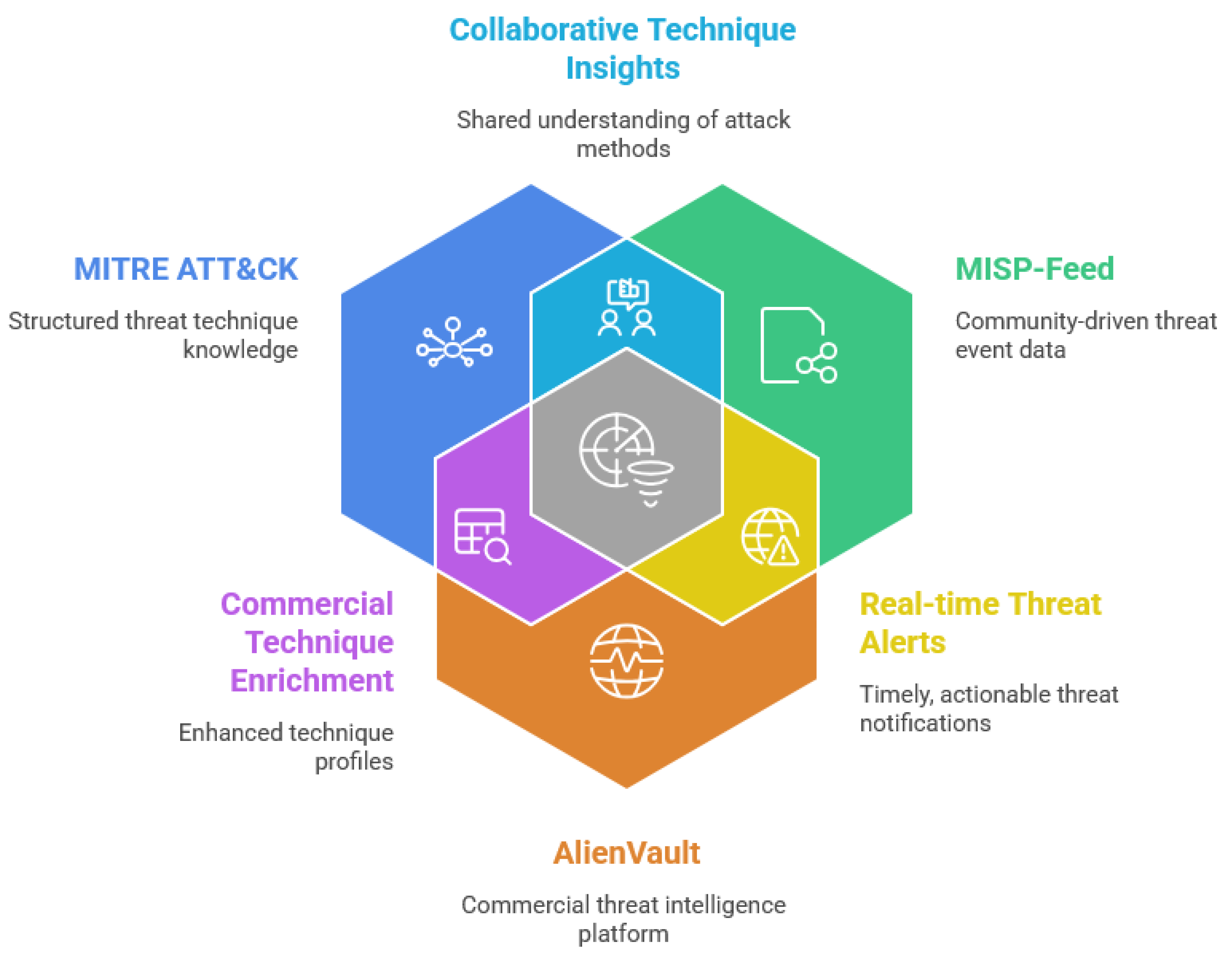

4.3. OpenCTI Threat-Intelligence Platform

1. MITRE ATT&CK: Provides the entire matrix of tactics, techniques, sub-techniques, and associated metadata.

2. MISP-feed: Delivers indicators and event collections from publicly available MISP instances.

3. AlienVault OTX: Enriches OpenCTI with community-generated indicators, threat actors, campaigns, and observable relationships.

4.4. Python-Based LLM Assistant Implementation

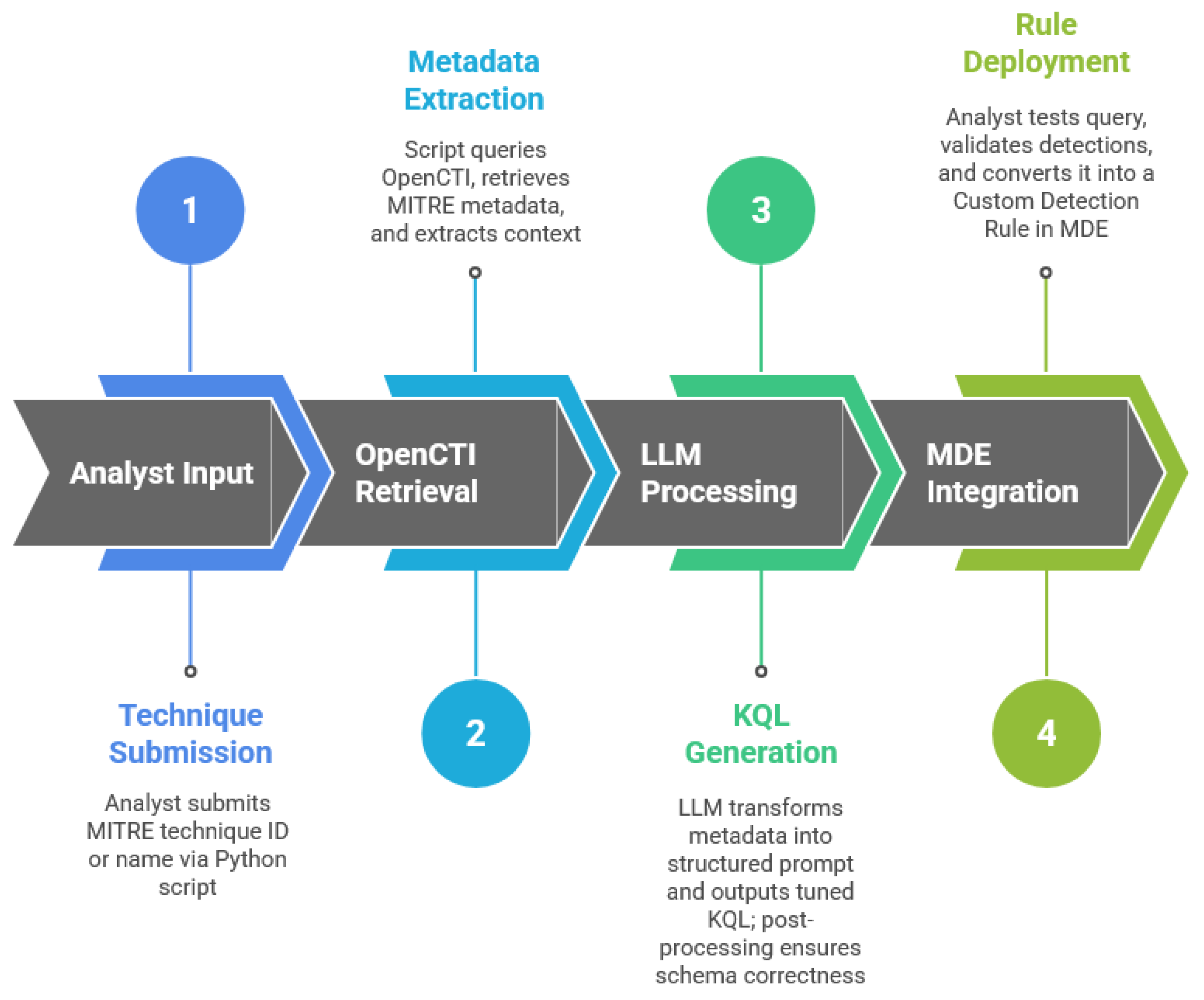

4.5. System-Level Data Flow

1. Analyst Input → MITRE technique ID or name is submitted through the Python script.

2. OpenCTI Retrieval → The script queries OpenCTI, retrieves MITRE metadata and extracts technique context.

3. LLM Processing → The extracted metadata is transformed into a structured prompt; the LLM outputs tuned KQL; post-processing ensures schema correctness.

4. MDE Integration → The analyst tests the query in Advanced Hunting, validates detections generated during simulated attacks, and converts it into a custom detection rule.
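
The first three steps of this flow can be sketched in Python as follows. This is a simplified illustration under stated assumptions, not the authors' script: the dictionary passed to `build_prompt` is a hypothetical, flattened view of the metadata retrieved from OpenCTI, the prompt wording is invented for the example, and the OpenCTI query and the LLM call themselves are deliberately left out. Step 4 remains a manual analyst task and has no code counterpart here.

```python
import re

# Matches ATT&CK technique IDs such as T1087 or T1558.003.
TECHNIQUE_ID = re.compile(r"^T\d{4}(\.\d{3})?$")

# Markdown code-fence marker, built programmatically to avoid embedding
# a literal fence inside this listing.
FENCE = "`" * 3
FENCED_BLOCK = re.compile(FENCE + r"(?:kql|kusto)?\s*\n(.*?)" + FENCE, re.DOTALL)


def build_prompt(technique: dict) -> str:
    """Steps 1-3: validate the analyst input and build a structured prompt.

    `technique` is a hypothetical dict with 'mitre_id', 'name' and
    'description' keys, standing in for the OpenCTI result of step 2.
    """
    mitre_id = technique["mitre_id"].strip().upper()
    if not TECHNIQUE_ID.match(mitre_id):
        raise ValueError(f"not a MITRE ATT&CK technique ID: {mitre_id!r}")
    return "\n".join([
        "You write KQL for Microsoft Defender for Endpoint Advanced Hunting.",
        f"Technique: {mitre_id} ({technique['name']})",
        f"Description: {technique['description']}",
        "Return one KQL query and nothing else.",
    ])


def extract_kql(llm_response: str) -> str:
    """Step 3, post-processing: keep the query, drop prose and code fences."""
    match = FENCED_BLOCK.search(llm_response)
    body = match.group(1) if match else llm_response
    return body.strip()
```

In use, the string returned by `build_prompt` would be sent to the LLM, and `extract_kql` would be applied to the reply before any schema checks and before the analyst pastes the query into Advanced Hunting.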

4.6. Challenges

5. Evaluation

5.1. Environment

5.2. Intelligence Integration and LLM Pipeline

5.3. Execution of Attacks Prior to Custom Rule Deployment

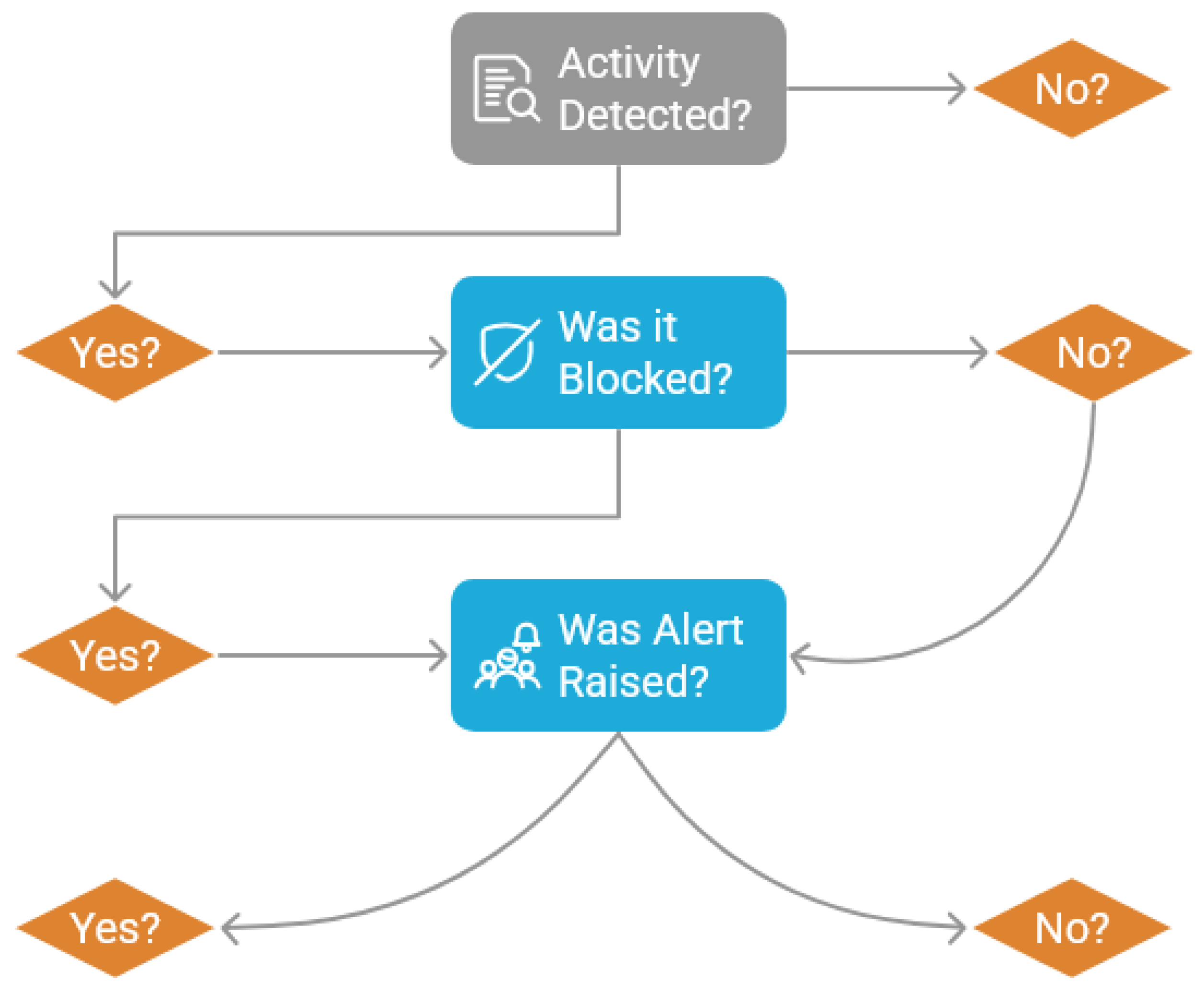

- Phishing (T1566): Implemented via HiddenEye to harvest credentials. Because the attack relied on user interaction rather than malicious process execution, MDE generated no alerts, which exposes a significant gap in endpoint-only visibility.

- Account Discovery (T1087): Executed using the netexec framework. Although the activity produced authentication logs, MDE raised no alerts, indicating that this reconnaissance remained below the detection threshold of the native heuristics.

- Kerberoasting (T1558.003): Executed via Atomic Red Team. This was reliably detected and blocked by MDE’s native engine, generating high-severity incidents.

- SMB Reconnaissance (T1135/T1021.002): MDE flagged the subsequent reverse-shell delivery, but the initial share enumeration was detected only inconsistently, indicating limited early-stage visibility.

- Credential Dumping (T1003): secretsdump.py triggered alerts, whereas newer tools such as regsecrets.py were silently blocked without generating an incident. This is a prevention-detection mismatch: the attempt is thwarted, but the analyst is never made aware of it.

5.4. Evaluation of LLM-Generated Rule Behavior

6. Discussion

6.1. Native MDE Detection Performance: The Prevention-Visibility Paradox

6.2. Operational Efficacy of LLM-Generated KQL

6.3. Comparative Assessment and the Intelligence-to-Logic Latency

6.4. Limitations and Operational Impact

7. Conclusions and Future Work

Author Contributions

Funding

Conflicts of Interest

Data Availability Statement

References

- Kampourakis, K.E.; Gkioulos, V.; Kavallieratos, G.; Lin, J.C. Digital Twin-Enabled Incident Detection and Response: A Systematic Review of Critical Infrastructures Applications. International Journal of Information Security 2025, 24, 194.

- Arfeen, A.; Ahmed, S.; Khan, M.A.; Jafri, S.F.A. Endpoint Detection & Response: A Malware Identification Solution. In Proceedings of the 2021 International Conference on Cyber Warfare and Security (ICCWS), 2021; pp. 1–8.

- Alturkistani, H.; Chuprat, S. Artificial intelligence and large language models in advancing cyber threat intelligence: A systematic literature review. 2024.

- Kampourakis, K.E.; Kampourakis, V.; Chatzoglou, E.; Kambourakis, G.; Gritzalis, S. Knowledge-to-Data: LLM-Driven Synthesis of Structured Network Traffic for Testbed-Free IDS Evaluation. arXiv 2026, arXiv:2601.05022.

- Smiliotopoulos, C.; Kambourakis, G.; Kolias, C. Detecting Lateral Movement: A Systematic Survey. Heliyon 2024, 10, e26317.

- Ho, G.; Dhiman, M.; Akhawe, D.; Paxson, V.; Savage, S.; Voelker, G.M.; Wagner, D. Hopper: Modeling and detecting lateral movement. In Proceedings of the 30th USENIX Security Symposium (USENIX Security 21), 2021; pp. 3093–3110.

- Hassan, W.U.; Bates, A.; Marino, D. Tactical provenance analysis for endpoint detection and response systems. In Proceedings of the 2020 IEEE symposium on security and privacy (SP), 2020; IEEE; pp. 1172–1189.

- Kaur, H.; Sanjaiy SL, D.; Paul, T.; Kumar Thakur, R.; Kumar Reddy, K.V.; Mahato, J.; Naveen, K. Evolution of Endpoint Detection and Response (EDR) in Cyber Security: A Comprehensive Review. E3S Web Conf. 2024, 556, 01006.

- Yusof, Z.B. Effectiveness of endpoint detection and response solutions in combating modern cyber threats. Journal of Advances in Cybersecurity Science, Threat Intelligence, and Countermeasures 2024, 8, 1–9.

- Al-Sada, B.; Sadighian, A.; Oligeri, G. MITRE ATT&CK: State of the art and way forward. ACM Computing Surveys 2024, 57, 1–37.

- Rajesh, P.; Alam, M.; Tahernezhadi, M.; Monika, A.; Chanakya, G. Analysis of cyber threat detection and emulation using mitre attack framework. In Proceedings of the 2022 International Conference on Intelligent Data Science Technologies and Applications (IDSTA), 2022; IEEE; pp. 4–12.

- Xu, H.; Wang, S.; Li, N.; Wang, K.; Zhao, Y.; Chen, K.; Yu, T.; Liu, Y.; Wang, H. Large language models for cyber security: A systematic literature review. ACM Transactions on Software Engineering and Methodology 2024.

- Zhang, J.; Bu, H.; Wen, H.; Liu, Y.; Fei, H.; Xi, R.; Li, L.; Yang, Y.; Zhu, H.; Meng, D. When llms meet cybersecurity: A systematic literature review. Cybersecurity 2025, 8, 55.

- Saura, P.F.; Jayaram, K.R.; Isahagian, V.; Bernabé, J.B.; Skarmeta, A. On Automating Security Policies with Contemporary LLMs. arXiv 2025, arXiv:2506.04838.

- Wang, H.; Xu, M.; Guo, Y.; Han, W.; Lim, H.W.; Dong, J.S. RulePilot: An LLM-Powered Agent for Security Rule Generation. arXiv 2025.

- Anvilogic.; Institute, S. State of Detection Engineering Report, Technical report; Anvilogic, 2025.

- OpenCTI Platform. OpenCTI Official Documentation. 2024. Available online: https://www.opencti.io/en/ Accessed: 2025-01.

- OpenCTI Platform. OpenCTI Platform GitHub Repository. 2024. Available online: https://github.com/OpenCTI-Platform/opencti Accessed: 2025-01.

- MISP Project. MISP Threat Sharing Platform. 2024. Available online: https://github.com/MISP/MISP Accessed: 2025-01.

- Yeti Platform. Yeti Threat Intelligence Platform. 2023. Available online: https://github.com/yeti-platform/yeti Accessed: 2025-01.

- Prelude Security. Prelude Security. 2026. Available online: https://www.preludesecurity.com/ Accessed: 2026-01.

- Silas Cutler. MalPipe: Malware/IOC ingestion and processing engine. 2024. Available online: https://github.com/silascutler/MalPipe Accessed: 2026-01.

- Tian, S.; Zhang, T.; Liu, J.; Wang, J.; Wu, X.; Zhu, X.; Zhang, R.; Zhang, W.; Yuan, Z.; Mao, S.; et al. Exploring the Role of Large Language Models in Cybersecurity: A Systematic Survey. arXiv 2025, arXiv:2504.15622.

- NIST. NIST AI Risk Management Framework. 2023. Available online: https://www.nist.gov/itl/ai-risk-management-framework.

- Johnnykonst. LLM-Assisted MITRE-Aware KQL Generation for Microsoft Defender for Endpoint. 2026. Available online: https://github.com/Johnnykonst/LLM-Assistant-for-Microsoft-Defender-for-Endpoint Accessed: 2026-02.

- Microsoft. Advanced Hunting in Microsoft Defender for Endpoint. 2024. Available online: https://learn.microsoft.com/en-us/defender-endpoint/advanced-hunting-overview Accessed: 2025-01.

- Smiliotopoulos, C.; Barmpatsalou, K.; Kambourakis, G. Revisiting the Detection of Lateral Movement through Sysmon. Applied Sciences 2022, 12.

| Attack Category | MITRE Technique | Tool Used | Target System |
|---|---|---|---|
| Credential Access (Phishing) | Phishing (T1566) | HiddenEye | Windows 11 Enterprise (user workstation) |
| Discovery (Account Discovery) | Account Discovery (T1087) | Native tools / scripted enumeration | Windows Server 2025 / Active Directory |
| Credential Access (Kerberos) | Kerberoasting (T1558.003) | Atomic Red Team / Kerberoast tooling | Windows Server 2025 / Active Directory |
| Discovery / Lateral Movement (SMB) | Network Share Discovery (T1135) / Remote Services (T1021.002) | SMB enumeration / reverse shell delivery | Windows Server 2025, Windows 11 Enterprise |
| Credential Access (Dumping) | OS Credential Dumping (T1003) | impacket-secretsdump.py, regsecrets.py, netexec (LSASSY) | Windows Server 2025 (Domain Controller) |
| Credential Access / Lateral Movement | Pass the Hash (T1550.002) | impacket / netexec | Windows Server 2025, Windows 11 Enterprise |

| Component | OS / Version | CPU cores | RAM | Role |
|---|---|---|---|---|
| Windows Server VM | Windows Server 2025 (OS build 26100.4946, Aug 12 2025 update) | 4 | 6 GB | Active Directory Domain Controller |
| Windows 11 VM | Windows 11 Enterprise (OS build 26100.4946, Aug 12 2025 update) | 4 | 6 GB | Domain-joined workstation |
| Kali VM | Kali Linux (Rolling, Jun 13 2025; kernel 6.12.0) | 4 | 4 GB | Attacker’s machine (not onboarded to MDE) |

| Software / Platform | Version / Notes |
|---|---|
| Windows Server | Windows Server 2025 (OS build 26100.4946, August 12, 2025 update) |
| Windows 11 | Windows 11 Enterprise (OS build 26100.4946, August 12, 2025 update) |
| Kali Linux | Rolling release (June 13, 2025), kernel 6.12.0 |
| OpenCTI | 6.7.5 |
| Docker Desktop | 4.54.0 |
| Python | 3.13.5 |
| Oracle VirtualBox | 7.1.10 |
| Microsoft Defender for Endpoint | P2 license |

| Attack Technique | Native MDE Detection | Native MDE Blocking | Custom Rule Detection | Rule Execution Mode (NRT / Scheduled) |
|---|---|---|---|---|
| Phishing (HiddenEye) | No | No | Yes (after tuning) | Scheduled |
| Account Discovery / User Enumeration | No | No | Yes | NRT |
| Kerberoasting | Yes | Yes | No (baseline) | Scheduled |
| SMB share enumeration / SMB-based activity | Mixed | Mixed | Yes (enumeration) | Scheduled |
| Credential dumping (secretsdump.py) | Yes | Yes | Yes | Scheduled |
| Credential dumping (regsecrets.py / LSASSY) | No (silent) | Yes (silent) | Yes | Scheduled |
| Pass-the-hash | Mixed | Mixed | Mixed (schema fixes needed) | Scheduled |
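
The detection delta implied by the table above can be tallied with a short Python sketch. The counting rule is an assumption of this example, not a metric defined by the authors: only an unqualified or qualified "Yes" counts as detected, "Mixed" counts as not detected, and parenthetical qualifiers such as "(after tuning)" or "(silent)" are dropped when transcribing the rows.

```python
# Rows transcribed from the table above:
# (technique, native MDE detection, custom rule detection)
RESULTS = [
    ("Phishing (HiddenEye)", "No", "Yes"),
    ("Account Discovery / User Enumeration", "No", "Yes"),
    ("Kerberoasting", "Yes", "No"),
    ("SMB share enumeration / SMB-based activity", "Mixed", "Yes"),
    ("Credential dumping (secretsdump.py)", "Yes", "Yes"),
    ("Credential dumping (regsecrets.py / LSASSY)", "No", "Yes"),
    ("Pass-the-hash", "Mixed", "Mixed"),
]


def count_detected(results, column):
    """Count rows whose value in `column` (1 = native, 2 = custom) is 'Yes'."""
    return sum(1 for row in results if row[column] == "Yes")


native = count_detected(RESULTS, 1)
custom = count_detected(RESULTS, 2)

# Techniques detected by at least one of the two mechanisms.
combined = sum(1 for _, n, c in RESULTS if "Yes" in (n, c))

# Techniques that only become reliably visible through a custom rule.
gained = [name for name, n, c in RESULTS if n != "Yes" and c == "Yes"]

total = len(RESULTS)
print(f"native: {native}/{total}, custom: {custom}/{total}, combined: {combined}/{total}")
print("gained via custom rules:", ", ".join(gained))
```

Under this strict rule, a different treatment of the "Mixed" rows (for example, counting them as partial detections) would shift the totals, so the figures are best read as a summary of the table rather than as an independent result.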

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).