Submitted: 21 March 2026

Posted: 24 March 2026

Abstract

Keywords:

1. Introduction

1.1. Related Works

2. Preliminaries: State Space, Action Space, and the Governance Operator

2.1. State, Observation, and Transition Model

2.2. Constraints and the Admissible Action Set

2.3. Formal Definition of the Governance Operator G

2.4. Governance-Induced Decision Drift and Minimal Intervention

2.5. Audit Trace Semantics

2.6. Replayability Conditions

2.7. Multi-Agent Controlled Agentic AI Systems

- Local, affecting individual agent actions independently.

- Coupled, constraining combinations of actions across agents.

- all proposals,

- constraint evaluations on joint action,

- the applied governance mode,

- any inter-agent correction applied.
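
As a rough illustration, the four items listed above could be grouped into one audit record per joint decision step. The sketch below is an assumption, not the paper's schema: the class name `JointAuditRecord` and all field names are hypothetical, and the mode strings are borrowed from the result tables.

```python
from dataclasses import dataclass, asdict
from typing import Dict, List, Optional
import json


@dataclass(frozen=True)
class JointAuditRecord:
    """One audit entry for a joint (multi-agent) decision step. Hypothetical schema."""
    step: int
    proposals: Dict[str, List[float]]      # agent id -> proposed action
    constraint_evals: Dict[str, bool]      # constraint id -> satisfied on the joint action?
    governance_mode: str                   # e.g. "none", "project", "gate_fallback"
    correction: Optional[Dict[str, List[float]]] = None  # agent id -> applied correction

    def to_json(self) -> str:
        # sort_keys yields a stable serialization, convenient for later hashing
        return json.dumps(asdict(self), sort_keys=True)


record = JointAuditRecord(
    step=0,
    proposals={"agent_0": [0.4], "agent_1": [0.9]},
    constraint_evals={"joint_budget": False},
    governance_mode="project",
    correction={"agent_1": [-0.3]},
)
print(record.to_json())
```

Freezing the dataclass keeps a record immutable once written, which matches the intent of an audit trail; whether the original implementation does this is not stated.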

2.8. Bounded Decision Drift Induced by Governance

- The feasible set is non-empty and compact.

- The proposal mapping is Lipschitz continuous with constant .

- The projection operator is non-expansive.
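
The three assumptions above can be checked numerically on a toy case. The sketch below assumes a box-shaped feasible set (non-empty, compact, and also convex, which is what makes Euclidean projection non-expansive) and a hypothetical proposal mapping `propose` with Lipschitz constant 2. Neither choice comes from the paper.

```python
import math
import random
from typing import List, Sequence

Vector = List[float]


def project_box(a: Sequence[float], lo: float, hi: float) -> Vector:
    """Euclidean projection onto the box [lo, hi]^n."""
    return [min(max(x, lo), hi) for x in a]


def propose(s: Sequence[float]) -> Vector:
    """Toy proposal mapping: doubles the state, so it is Lipschitz with constant 2."""
    return [2.0 * x for x in s]


def dist(u: Sequence[float], v: Sequence[float]) -> float:
    """Euclidean distance between two vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(u, v)))


LIPSCHITZ = 2.0
rng = random.Random(0)
worst_ratio = 0.0
for _ in range(1000):
    s1 = [rng.uniform(-2, 2) for _ in range(3)]
    s2 = [rng.uniform(-2, 2) for _ in range(3)]
    a1, a2 = propose(s1), propose(s2)
    g1, g2 = project_box(a1, -1.0, 1.0), project_box(a2, -1.0, 1.0)
    # Non-expansiveness: projection never increases the distance between proposals.
    assert dist(g1, g2) <= dist(a1, a2) + 1e-12
    # Chained with the Lipschitz bound: governed actions differ by at most 2 * ||s1 - s2||.
    assert dist(g1, g2) <= LIPSCHITZ * dist(s1, s2) + 1e-12
    worst_ratio = max(worst_ratio, dist(g1, g2) / dist(s1, s2))

print(f"largest observed ratio: {worst_ratio:.3f} (bound: {LIPSCHITZ})")
```

Chaining the two inequalities is what gives a bounded-drift statement of this kind: the governed action inherits the Lipschitz constant of the proposal mapping.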

2.9. Governance-Induced Drift in Federated Multi-Agent Learning

- Federated parameter deviation.

- Governance projection correction.

3. Experimental Design

3.1. Experimental Objectives and Hypotheses

3.2. Environments, State Representation, and Sensorization

3.3. Decision Models and Training Regimes

3.4. Governance Operator Configurations

3.5. Constraints and Admissibility Regimes

3.6. Metrics: Compliance, Drift, Stability, and Convergence

3.7. Adversarial and Distribution-Shift Stress Tests

3.8. Reproducibility Protocol and Trace-Based Provenance

3.9. Implementation Details and Reproducibility Configuration

3.9.1. Software Architecture and Determinism

3.9.2. Simulation Environment

3.9.3. Policy Models

3.9.4. Governance Operator Implementation

3.9.5. Federated Training Configuration

- Local training on private datasets.

- Gradient clipping and optional differential privacy noise (seed-controlled).

- Transmission of model updates.

- Global aggregation.
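
The four steps above can be sketched as a single seed-controlled round. This is a minimal illustration under stated assumptions: the quadratic toy objective, the unweighted mean aggregator, the per-client seeding scheme, and every function and parameter name are hypothetical. The Gaussian noise is an uncalibrated placeholder, not a differential privacy mechanism with a stated privacy budget.

```python
import math
import random
from typing import List, Sequence

Vector = List[float]


def clip(update: Sequence[float], max_norm: float) -> Vector:
    """Rescale the update so that its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(x * x for x in update))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [x * scale for x in update]


def local_update(global_w: Sequence[float], data_mean: Sequence[float], lr: float) -> Vector:
    """Toy local training: one gradient step on 0.5 * ||w - data_mean||^2."""
    return [-lr * (w - m) for w, m in zip(global_w, data_mean)]


def federated_round(
    global_w: Sequence[float],
    client_means: Sequence[Sequence[float]],
    lr: float = 0.5,
    max_norm: float = 1.0,
    noise_std: float = 0.0,
    seed: int = 0,
) -> Vector:
    updates = []
    for cid, mean in enumerate(client_means):
        rng = random.Random(seed * 1000 + cid)  # per-client seeded RNG, so noise is replayable
        upd = clip(local_update(global_w, mean, lr), max_norm)
        if noise_std > 0:
            upd = [u + rng.gauss(0.0, noise_std) for u in upd]
        updates.append(upd)  # stands in for transmission of the model update
    # Global aggregation: unweighted mean of client updates (an assumption).
    avg = [sum(col) / len(updates) for col in zip(*updates)]
    return [w + d for w, d in zip(global_w, avg)]


new_w = federated_round([0.0, 0.0], [[1.0, 0.0], [0.0, 1.0]])
print(new_w)
```

Because each client draws noise from its own seeded generator, two runs with the same `seed` produce the same aggregated parameters, which is the property a replayable training log relies on.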

3.9.6. Audit Trace Storage and Verification

- full decision trajectory,

- audit trace sequence,

- configuration metadata including model version, constraint version, seed registry, and solver parameters.

3.9.7. Hardware and Runtime Configuration

3.9.8. Reproducibility Guarantee

- Identical configuration tuple .

- Identical executed action sequence .

- Identical hard-constraint satisfaction outcomes.

- Consistent audit hash chain .
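
One way to test the last condition is to recompute a hash chain over the audit records of the original run and of the replay, then compare the two. The sketch below is an assumption about how such a chain might be built: SHA-256, canonical JSON serialization, and seeding the chain with a digest of the configuration are illustrative choices, and `chain_hashes` and `replay_matches` are hypothetical names.

```python
import hashlib
import json
from typing import Any, Dict, List


def chain_hashes(records: List[Dict[str, Any]], config: Dict[str, Any]) -> List[str]:
    """Return h_1..h_T with h_t = SHA-256(h_{t-1} + canonical_json(record_t)).

    The chain starts from a digest of the configuration, so a change in
    configuration alters every subsequent hash.
    """
    prev = hashlib.sha256(json.dumps(config, sort_keys=True).encode("utf-8")).hexdigest()
    chain = []
    for rec in records:
        payload = prev + json.dumps(rec, sort_keys=True)
        prev = hashlib.sha256(payload.encode("utf-8")).hexdigest()
        chain.append(prev)
    return chain


def replay_matches(
    original: List[Dict[str, Any]],
    replayed: List[Dict[str, Any]],
    config: Dict[str, Any],
) -> bool:
    """True if both runs yield the same hash chain under the same configuration."""
    return chain_hashes(original, config) == chain_hashes(replayed, config)


config = {"seed": 0, "mode": "project"}
run = [{"step": 0, "action": [0.5]}, {"step": 1, "action": [0.7]}]
tampered = [{"step": 0, "action": [0.5]}, {"step": 1, "action": [0.8]}]

print(replay_matches(run, list(run), config))
print(replay_matches(run, tampered, config))
```

Because each hash depends on its predecessor, a single altered record changes every later link, so comparing the final hash alone is enough to detect a divergent replay.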

4. Results

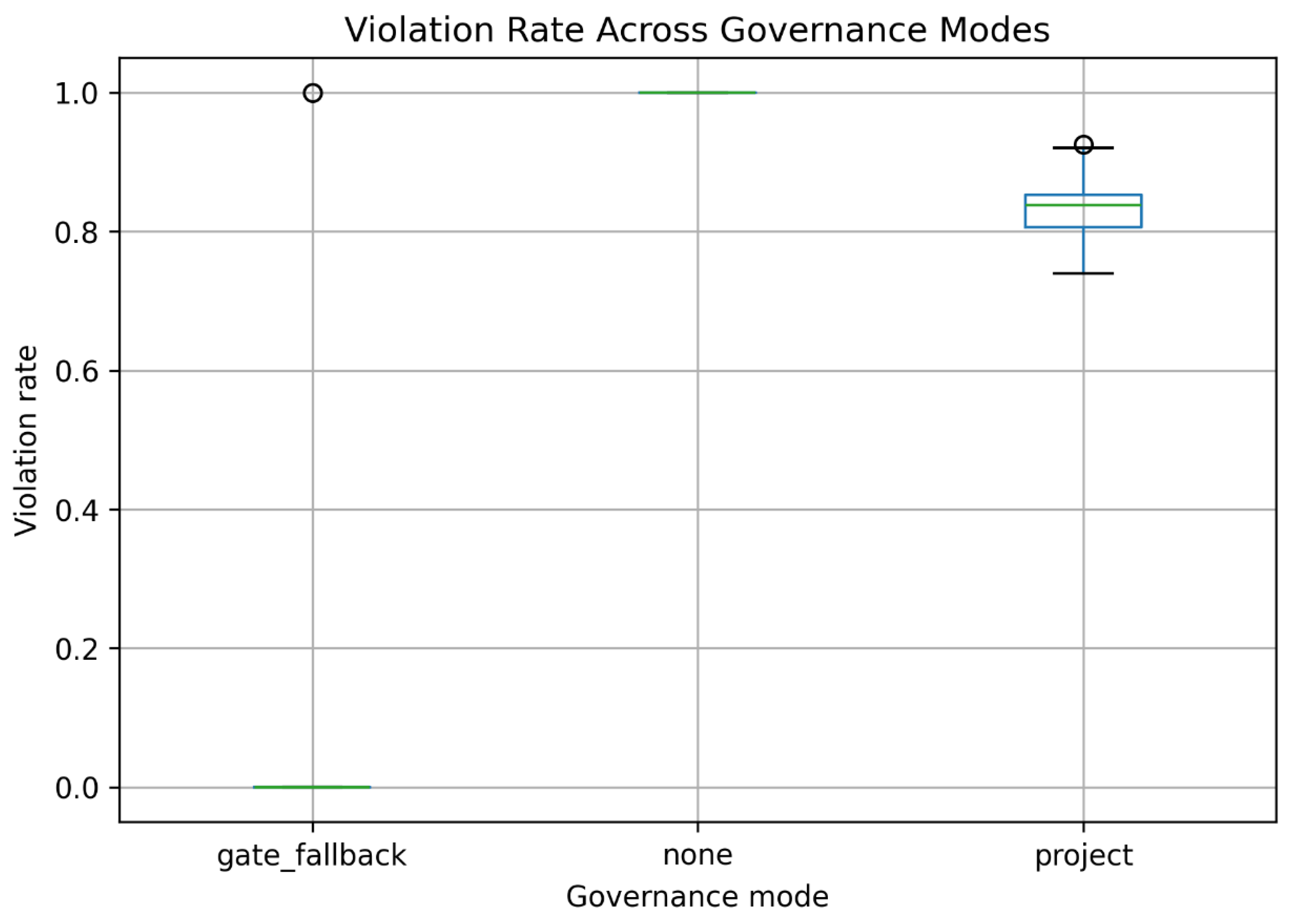

4.1. Constraint Compliance

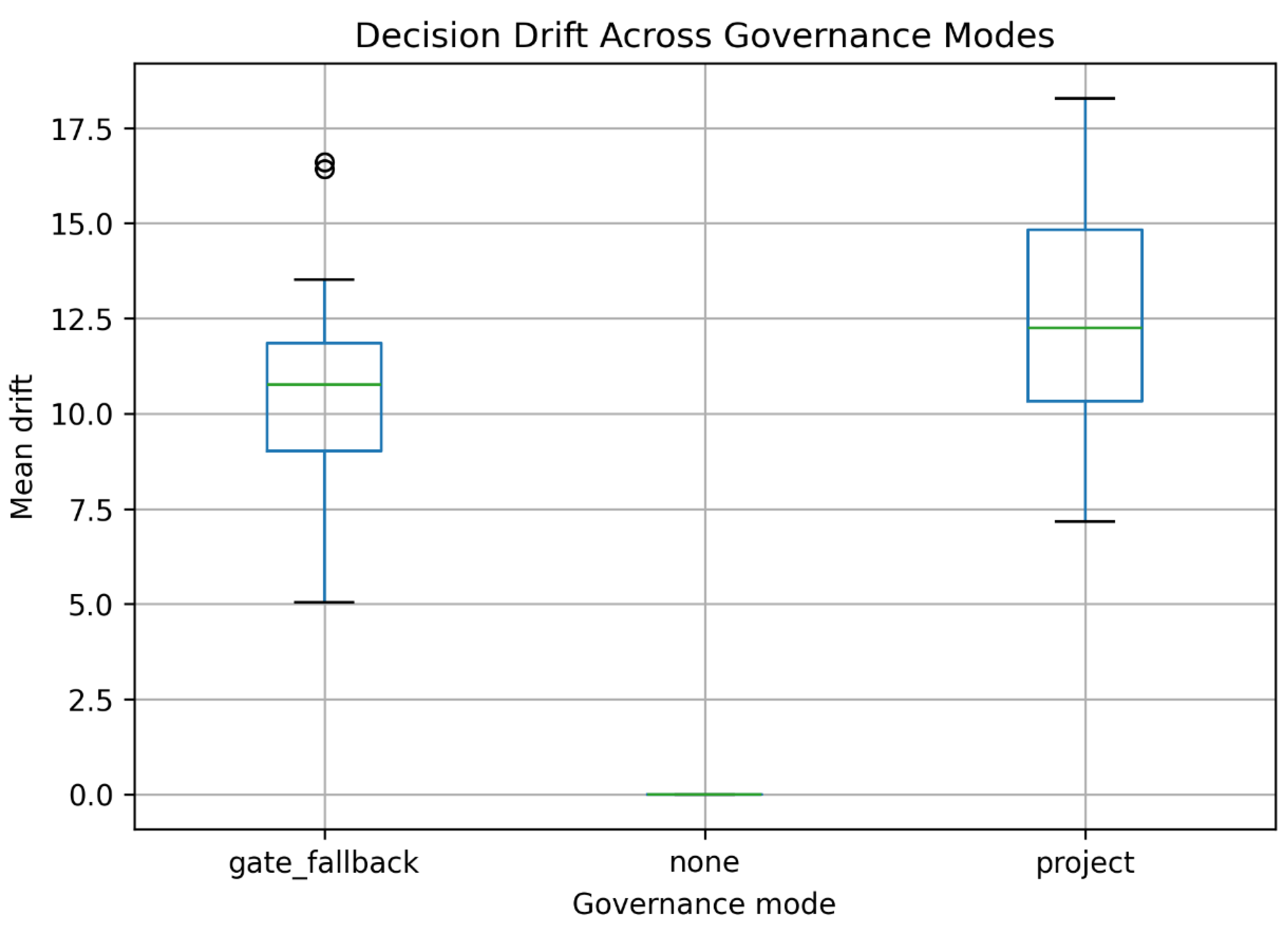

4.2. Decision Drift

4.3. Safety–Intervention Trade-off

4.4. Implications for Governance Design

4.5. Summary of H1

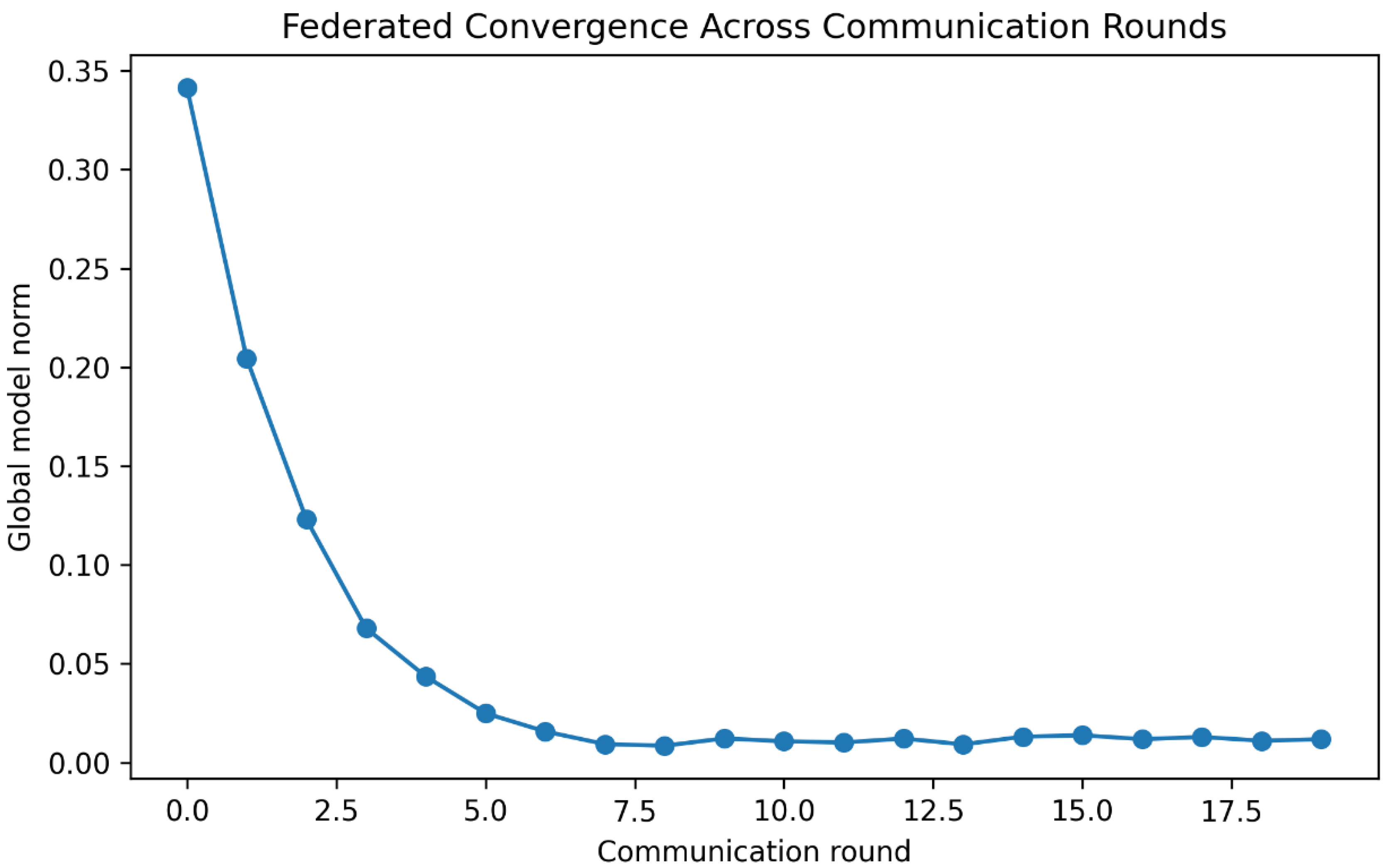

4.6. Federated Stability and Action Consistency

5. Discussion

5.1. Governance as a Structural Control Layer

5.2. Safety–Intervention Trade-off

5.3. Bounded Intervention and Stability

5.4. Federated Learning and Behavioral Stabilization

5.5. Limitations of Projection-Based Governance

5.6. Implications for Safe AI System Design

6. Conclusion

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

| mode | n | mean | std | ci95_lo | ci95_hi |
|---|---|---|---|---|---|
| gate_fallback | 30 | 0.0333 | 0.1826 | 0.0000 | 0.1000 |
| none | 30 | 1.0000 | 0.0000 | 1.0000 | 1.0000 |
| project | 30 | 0.8317 | 0.0465 | 0.8150 | 0.8472 |

| group_a | group_b | u_stat | p_value | cliffs_delta |
|---|---|---|---|---|
| gate_fallback | none | 15 | 1.165e-13 | -0.9667 |
| gate_fallback | project | 30 | 4.483e-11 | -0.9333 |
| none | project | 900 | 1.187e-12 | 1 |
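
The reported effect sizes can be cross-checked against the U statistics. Assuming `u_stat` is the Mann-Whitney U of `group_a` with ties counted as one half, and n = 30 runs per mode as in the per-mode summary, Cliff's delta follows from the identity delta = 2U / (n_a · n_b) − 1. The helper name below is hypothetical.

```python
def cliffs_delta_from_u(u_stat: float, n_a: int, n_b: int) -> float:
    """Cliff's delta from the Mann-Whitney U of group_a (ties counted as 1/2)."""
    return 2.0 * u_stat / (n_a * n_b) - 1.0


# (group_a, group_b, u_stat, reported cliffs_delta) copied from the table above
rows = [
    ("gate_fallback", "none", 15, -0.9667),
    ("gate_fallback", "project", 30, -0.9333),
    ("none", "project", 900, 1.0),
]

for group_a, group_b, u_stat, reported in rows:
    delta = cliffs_delta_from_u(u_stat, 30, 30)
    # Agreement up to the four-decimal rounding used in the table.
    assert abs(delta - reported) < 5e-5
    print(f"{group_a} vs {group_b}: delta = {delta:.4f} (reported {reported})")
```

All three rows agree to the table's four-decimal rounding, so the two columns are mutually consistent under this convention.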

| mode | n | mean | std | ci95_lo | ci95_hi |
|---|---|---|---|---|---|
| gate_fallback | 30 | 10.3659 | 2.7783 | 9.3446 | 11.3662 |
| none | 30 | 0.0000 | 0.0000 | 0.0000 | 0.0000 |
| project | 30 | 12.7434 | 3.0639 | 11.6141 | 13.7731 |

| group_a | group_b | u_stat | p_value | cliffs_delta |
|---|---|---|---|---|
| gate_fallback | none | 900 | 1.212e-12 | 1 |
| gate_fallback | project | 260 | 0.005084 | -0.4222 |
| none | project | 0 | 1.212e-12 | -1 |

| metric | value |
|---|---|
| violations_on_bench | 0.0 |
| action_var_mean | 0.012298361767100896 |
| mean_drift | 0.03323036206708486 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).