Submitted: 20 March 2026

Posted: 24 March 2026


Abstract

Keywords:

1. Introduction

1.1. Relationship to Multi-Agent Approaches

1.2. Contributions

- A resource allocation formulation of disagreement resolution under an error correlation framework, connecting graduated escalation to information-theoretic considerations about evaluation signal.

- A protocol with three escalation levels, domain-calibrated thresholds, and a steelman exchange mechanism that encourages genuine engagement with opposing reasoning structures.

- A clean-context critique strategy with concrete heuristics for reducing inheritance of error-producing reasoning traces, including state management mechanisms for long-session workflows.

- Pre-specified benchmark families targeting technical reasoning reliability, specifically seeded derivation errors and long-context constraint retention, rather than generic hallucination detection.

1.3. Status of This Paper

1.4. Scope

2. Theoretical Grounding: From Bounds to Architecture

2.1. The Correlated Error Problem

2.2. The Resource Allocation Problem

Given a fixed verification budget B, allocate decorrelated evaluation across queries to maximize the total expected information gain about correctness.
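The allocation problem stated above can be illustrated with a minimal sketch. It assumes each query comes with an estimated information gain and an evaluation cost (the `Query` fields, the example numbers, and the greedy gain-per-cost rule are all hypothetical choices made for illustration, not the paper's method). Greedy selection is a heuristic for this knapsack-style problem and is not guaranteed to be optimal.

```python
from dataclasses import dataclass


@dataclass
class Query:
    name: str
    expected_gain: float  # hypothetical estimate of information gain about correctness
    cost: float           # hypothetical cost of decorrelated evaluation, in budget units


def allocate_budget(queries, budget):
    """Greedy heuristic: fund queries by gain per unit cost until the budget runs out."""
    ranked = sorted(queries, key=lambda q: q.expected_gain / q.cost, reverse=True)
    funded, remaining = [], budget
    for q in ranked:
        if q.cost <= remaining:  # skip queries that no longer fit in the budget
            funded.append(q.name)
            remaining -= q.cost
    return funded, remaining


# Illustrative values only.
queries = [
    Query("q1", expected_gain=0.9, cost=3.0),
    Query("q2", expected_gain=0.2, cost=1.0),
    Query("q3", expected_gain=0.6, cost=1.0),
]
funded, remaining = allocate_budget(queries, budget=4.0)
```

With these illustrative numbers, the cheap high-gain query is funded first, the expensive one second, and the low-gain query is left unevaluated once the budget B is exhausted.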

2.3. Disagreement as Epistemic Signal

2.4. From Bounds to Triage

- Level 0 (Accept synthesis): The estimated information gain from decorrelated evaluation is low. Proposers agree within tolerance. Correlated self-evaluation would suffice; decorrelated evaluation would add cost without commensurate information.

- Level 1 (Flag with uncertainty): The estimated gain is moderate but below the escalation threshold. The system accepts the majority position but preserves the disagreement signal for human review.

- Level 2 (Escalate): The estimated gain exceeds the escalation threshold. The system invests in expensive decorrelated evaluation: steelman exchange, adversarial cross-examination, or external verification.
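The three levels can be read as a simple threshold rule. The sketch below assumes the estimated quantity is available as a single number (`signal`), with a noise floor and an escalation threshold supplied by domain calibration. The function name, parameter names, and the handling of values exactly on a boundary are assumptions for illustration.

```python
from enum import IntEnum


class Level(IntEnum):
    ACCEPT = 0    # Level 0: accept synthesis
    FLAG = 1      # Level 1: flag with uncertainty
    ESCALATE = 2  # Level 2: escalate to decorrelated evaluation


def triage(signal, noise_floor, escalation_threshold):
    """Map an estimated signal to an escalation level.

    Assumption: values exactly at a boundary fall into the lower level,
    since Level 2 is described as triggered only when the threshold is exceeded.
    """
    if noise_floor > escalation_threshold:
        raise ValueError("noise_floor must not exceed escalation_threshold")
    if signal <= noise_floor:
        return Level.ACCEPT
    if signal <= escalation_threshold:
        return Level.FLAG
    return Level.ESCALATE


# Hypothetical thresholds; real values would come from domain calibration.
levels = [triage(s, noise_floor=0.1, escalation_threshold=0.5) for s in (0.05, 0.3, 0.7)]
```

Keeping the rule this small makes the thresholds the only tunable part, which is where the domain-calibrated policies enter.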

3. System Design

3.1. Functional Components

3.2. The Comparator Problem

- Structured comparison templates. Domain-specific checklists that decompose comparison into mechanically answerable sub-questions (do the final expressions match? do the stated assumptions match? do the cited intermediate results agree?) rather than asking for a holistic similarity judgment.

- Comparator calibration. Estimating the comparator’s false negative rate (meaningful disagreement classified as noise) on held-out examples with known ground truth, and adjusting escalation thresholds to compensate. Conservative thresholds over-escalate, which wastes budget but preserves safety.

- Comparator decorrelation. Running the comparator in a different model family from the proposers, or using an ensemble of comparators with diversity requirements analogous to those for proposers.
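Comparator calibration, as described in the second item above, might look like the following sketch. It assumes the comparator emits a numeric disagreement score, that escalation happens when the score exceeds a threshold, and that held-out examples are labeled as meaningful disagreement or noise. The data layout, the candidate grid, and the target rate are hypothetical.

```python
def false_negative_rate(examples, threshold):
    """Fraction of truly meaningful disagreements that would NOT be escalated.

    `examples` is a list of (score, is_meaningful) pairs from held-out data.
    """
    meaningful = [score for score, is_meaningful in examples if is_meaningful]
    if not meaningful:
        raise ValueError("need at least one meaningful-disagreement example")
    missed = sum(1 for score in meaningful if score <= threshold)
    return missed / len(meaningful)


def calibrate_threshold(examples, candidates, max_fnr):
    """Pick the largest candidate threshold whose false negative rate is within max_fnr."""
    acceptable = [t for t in candidates if false_negative_rate(examples, t) <= max_fnr]
    if not acceptable:
        raise ValueError("no candidate threshold meets the target false negative rate")
    return max(acceptable)


# Illustrative held-out set: (comparator score, ground-truth "meaningful" label).
examples = [(0.9, True), (0.6, True), (0.4, True), (0.2, True), (0.3, False), (0.1, False)]
threshold = calibrate_threshold(examples, candidates=[0.1, 0.3, 0.5, 0.7], max_fnr=0.25)
```

Choosing the largest acceptable threshold limits wasted escalations, while the cap on the false negative rate keeps the choice on the conservative side.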

3.3. Clean-Context Critique

3.3.1. Practical Heuristics

Answer-Only Transfer.

Structured Summary Transfer.

Constraint Reinjection.

Role-Specific Framing.

Checkpoint Compression.

3.3.2. State Management for Long Sessions

4. Graduated Dissent Protocol

4.1. Protocol Overview

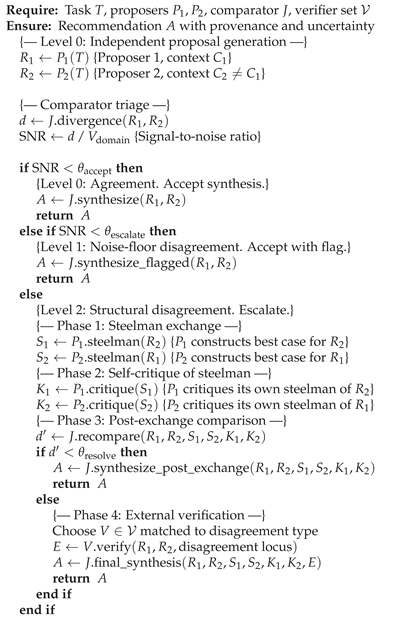

Algorithm 1. Graduated Dissent Protocol

4.2. Level 0: Agreement Check

4.3. Level 1: Noise-Floor Analysis

Convergence Monitoring.

4.4. Level 2: Adversarial Cross-Examination

4.4.1. Phase 1: Steelman Exchange

4.4.2. Phase 2: Self-Critique of Steelman

4.4.3. Phase 3: Post-Exchange Comparison

4.4.4. Phase 4: External Verification

4.5. Domain-Calibrated Thresholds

Calibration Procedure.

5. Targeted External Verification

5.1. Verification as Escalation, Not Default

5.2. Verification Modalities

Formal Verification.

Executable Verification.

Numerical Invariant Checking.

Retrieval-Grounded Source Verification.

5.3. Verification Dispatch

6. Benchmarking Strategy

6.1. Complementing Existing Evaluation Benchmarks

6.2. Benchmark Family A (Primary): Seeded Derivation Errors

Construction Protocol.

- (a) Sign error: flip a sign in an intermediate step.

- (b) Unjustified substitution: replace a variable or expression with a related but incorrect one (e.g., substitute a Taylor expansion truncated at the wrong order).

- (c) Dropped term: remove a term that contributes to the final result, preserving surface plausibility.

- (d) Domain violation: introduce a step that violates a constraint (e.g., divide by a quantity that can be zero, exchange limits without justification, apply a theorem outside its domain of validity).
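Two of the error types above, the sign error and the dropped term, are mechanical enough to sketch. The example assumes a derivation step is stored as a list of (coefficient, factor) pairs. That representation and the helper names are hypothetical, and the remaining error types would need domain knowledge that this sketch does not attempt.

```python
from typing import List, Tuple

Term = Tuple[float, str]  # (coefficient, symbolic factor), e.g. (-2.0, "x*y")


def seed_sign_error(terms: List[Term], index: int) -> List[Term]:
    """Return a copy of the step with the sign of one term flipped."""
    corrupted = list(terms)
    coeff, factor = corrupted[index]
    corrupted[index] = (-coeff, factor)
    return corrupted


def seed_dropped_term(terms: List[Term], index: int) -> List[Term]:
    """Return a copy of the step with one term removed."""
    return terms[:index] + terms[index + 1:]


def render(terms: List[Term]) -> str:
    """Render the step as a signed sum for inclusion in a benchmark prompt."""
    return " ".join(f"{coeff:+g}*{factor}" for coeff, factor in terms)


step = [(1.0, "x**2"), (-2.0, "x*y"), (0.5, "y**2")]
sign_flipped = render(seed_sign_error(step, 1))
term_dropped = render(seed_dropped_term(step, 2))
# Store the error type and position alongside each instance so detection can be scored.
ground_truth = {"error_type": "sign", "term_index": 1}
```

Both helpers leave the original step untouched, so the clean and seeded versions of a derivation can be kept side by side.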

Evaluation Conditions.

- Single-model long-context chain-of-thought (baseline)

- Same-model fresh-context critique (context separation only)

- Multi-model protocol without external verification (protocol without tools)

- Multi-model protocol with targeted external verification (full system)

Metrics:

Predictions.

6.3. Benchmark Family B (Secondary): Constraint Retention Under Long Context

6.4. Manuscript Claim Evaluation (Future Work)

6.5. Evaluation Metrics

- Reliability. Error detection rate and false acceptance rate across conditions.

- Resolution quality. Whether escalation improves outcomes on the queries that trigger it. Measured by comparing accuracy of the system’s post-escalation output on Level 2 queries against the accuracy that Level 0 forced synthesis would have produced on those same queries (e.g., majority vote of initial proposers). This directly measures the value added by the escalation investment.

- Calibration. Whether system confidence tracks actual correctness. Measured by calibration curves and Brier scores.

- Efficiency. Reliability gain per unit of compute, measured primarily relative to the single-model baseline. To support optimization of individual protocol components, benchmarks will additionally log: total token count per instance across all model calls, wall-clock latency from query to final recommendation, total compute time across all parallel and sequential processes, and per-component breakdowns (proposal generation, comparison, steelman exchange, verification). These fine-grained logs allow practitioners adopting any subset of the protocol to identify cost bottlenecks and optimize selectively.
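Two of the metrics above reduce to short computations. The sketch below shows the Brier score for calibration and the accuracy difference used for resolution quality. The function names and the sample values are illustrative assumptions, and correctness is encoded as 1 (correct) or 0 (incorrect).

```python
def brier_score(confidences, outcomes):
    """Mean squared difference between stated confidence and the 0/1 outcome."""
    if not confidences or len(confidences) != len(outcomes):
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((c - o) ** 2 for c, o in zip(confidences, outcomes)) / len(confidences)


def escalation_gain(post_escalation_correct, forced_synthesis_correct):
    """Accuracy after escalation minus forced-synthesis accuracy on the same Level 2 queries."""
    if not post_escalation_correct or len(post_escalation_correct) != len(forced_synthesis_correct):
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(post_escalation_correct)
    return sum(post_escalation_correct) / n - sum(forced_synthesis_correct) / n


# Illustrative values only.
score = brier_score([0.9, 0.8, 0.3, 0.6], [1, 1, 0, 0])
gain = escalation_gain([1, 1, 1, 0], [1, 0, 1, 0])
```

A lower Brier score indicates better calibration, and a positive gain means escalation improved accuracy on the queries that triggered it.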

7. Design Motivations

8. Failure Modes of the Architecture

Correlated Proposers.

Comparator Bias.

Verification Mismatch.

Excessive Escalation.

Human Confirmation Bias.

Steelman Collapse.

9. Related Work

LLM Self-Correction.

Self-Consistency.

Ensemble Methods.

Multi-Agent Debate.

Multi-Agent Frameworks.

Process Supervision.

Adversarial Collaboration.

Test-Time Scaling.

10. Discussion

10.1. Relation to Chain-of-Thought

10.2. Relation to Test-Time Compute Scaling

10.3. Human Adjudication

11. Conclusions

Acknowledgments

Use of AI Tools

Conflicts of Interest

References

- Brilliant, Andrew Michael. Limits of self-correction in LLMs: An information-theoretic analysis of correlated errors. Preprints.org 2026.

- Huang, Jie; Chen, Xinyun; Mishra, Swaroop; Zheng, Huaixiu Steven; Yu, Adams Wei; Song, Xinying; Zhou, Denny. Large language models cannot self-correct reasoning yet. In Proceedings of the Twelfth International Conference on Learning Representations (ICLR), 2024.

- Tsui, Ken. Self-correction bench: Uncovering and addressing the self-correction blind spot in large language models. arXiv 2025, arXiv:2507.02778.

- Kim, Elliot; Garg, Avi; Peng, Kenny; Garg, Nikhil. Correlated errors in large language models. In Proceedings of the 42nd International Conference on Machine Learning (ICML), 2025.

- Baba, Kaito; Liu, Chaoran; Kurita, Shuhei; Sannai, Akiyoshi. Prover agent: An agent-based framework for formal mathematical proofs. arXiv 2025, arXiv:2506.19923.

- Wang, Xuezhi; et al. Self-consistency improves chain of thought reasoning in language models. In Proceedings of the Eleventh International Conference on Learning Representations (ICLR), 2023.

- Du, Yilun; Li, Shuang; Torralba, Antonio; Tenenbaum, Joshua B.; Mordatch, Igor. Improving factuality and reasoning in language models through multiagent debate. In Proceedings of the 41st International Conference on Machine Learning (ICML), 2024.

- Liang, Tian; et al. Encouraging divergent thinking in large language models through multi-agent debate. arXiv 2023, arXiv:2305.19118.

- Chen, Justin Chih-Yao; Saha, Swarnadeep; Bansal, Mohit. ReConcile: Round-table conference improves reasoning via consensus among diverse LLMs. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (ACL), 2024.

- Lightman, Hunter; et al. Let’s verify step by step. arXiv 2023, arXiv:2305.20050.

- Liu, Nelson F.; Lin, Kevin; Hewitt, John; Paranjape, Ashwin; Bevilacqua, Michele; Petroni, Fabio; Liang, Percy. Lost in the middle: How language models use long contexts. Transactions of the Association for Computational Linguistics 2024, 12, 157–173.

- Mellers, Barbara; Hertwig, Ralph; Kahneman, Daniel. Do frequency representations eliminate conjunction effects? An exercise in adversarial collaboration. Psychological Science 2001, 12(4), 269–275.

- Kahneman, Daniel. A perspective on judgment and choice: Mapping bounded rationality. American Psychologist 2003, 58(9), 697–720.

- Zeng, Zhiyuan; Cheng, Qinyuan; Yin, Zhangyue; Zhou, Yunhua; Qiu, Xipeng. Revisiting the test-time scaling of o1-like models: Do they truly possess test-time scaling capabilities? In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (ACL), 2025; pp. 4651–4665.

- Wu, Qingyun; et al. AutoGen: Enabling next-gen LLM applications via multi-agent conversation. arXiv 2023, arXiv:2308.08155.

- Li, Guohao; et al. CAMEL: Communicative agents for “mind” exploration of large language model society. Advances in Neural Information Processing Systems 2023, 36.

- Hong, Sirui; et al. MetaGPT: Meta programming for a multi-agent collaborative framework. arXiv 2023, arXiv:2308.00352.

| Domain | Policy | Rationale |
|---|---|---|
| Formal mathematics/proofs | Strict accept, narrow noise floor | Small divergences can indicate proof failure; formal verification available as escalation target |
| Technical derivations | Strict accept, narrow noise floor | Sign errors, dropped terms, and hidden assumptions matter; numerical invariants available |
| Numerical/physics reasoning | Strict accept, moderate noise floor | Precision critical; strong formal constraints but some methodological variation expected |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).