Submitted: 20 March 2026

Posted: 23 March 2026


Abstract

Keywords:

1. Introduction

2. Materials and Methods

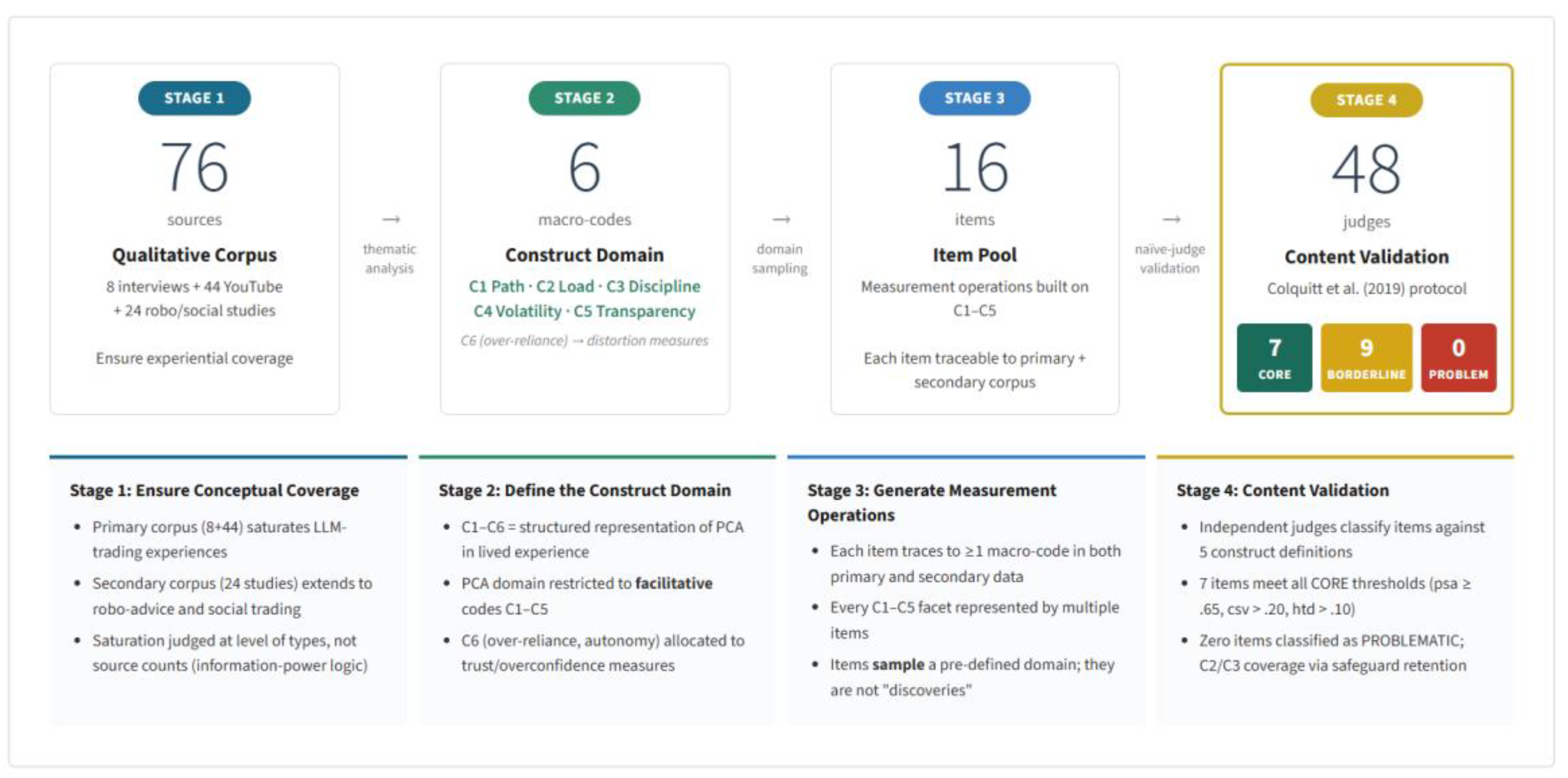

2.1. Study 1: Construct Definition and Item Generation

2.1.1. Qualitative Data Sources

2.1.2. Analytical Procedure

2.1.3. Saturation Logic
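Saturation logic can be made concrete with a code-saturation curve in the sense of Hennink et al. (2017). The curve tracks how many distinct macro-codes have appeared as sources are reviewed in order, and locates the point after which further sources add nothing new. The sketch below is a minimal illustration that assumes a presence–absence matrix like the one described for Appendix B, with one row per source and one column per code. The toy matrix, the function names and the stopping rule of three consecutive sources without a new code are all illustrative assumptions. They are not the study's data or its stated criterion.

```python
# Minimal code-saturation sketch (illustrative only).
# Assumption: rows = sources in review order, columns = macro-codes C1..C6,
# cell = 1 if the code is present in that source. Toy data, not study data.
CODES = ["C1", "C2", "C3", "C4", "C5", "C6"]


def cumulative_codes(matrix):
    """Number of distinct codes observed after each successive source."""
    seen = set()
    curve = []
    for row in matrix:
        if len(row) != len(CODES):
            raise ValueError("each row needs one cell per code")
        seen.update(code for code, present in zip(CODES, row) if present)
        curve.append(len(seen))
    return curve


def saturation_index(curve, run_length=3):
    """0-based index of the first source followed by `run_length`
    consecutive sources that add no new code; None if never reached.
    The run length is a hypothetical stopping rule."""
    for i in range(len(curve) - run_length):
        window = curve[i + 1 : i + 1 + run_length]
        if all(value == curve[i] for value in window):
            return i
    return None


toy_matrix = [
    [1, 1, 0, 0, 0, 0],
    [1, 0, 1, 0, 0, 1],
    [0, 1, 0, 1, 0, 0],
    [1, 0, 0, 0, 1, 0],
    [1, 1, 0, 1, 0, 0],
    [0, 0, 1, 0, 1, 0],
    [1, 0, 0, 0, 0, 1],
]
curve = cumulative_codes(toy_matrix)
print(curve)                    # [2, 4, 5, 6, 6, 6, 6]
print(saturation_index(curve))  # 3 (the fourth source)
```

A flat run shows only that no new code appeared in the sources examined. It does not by itself establish meaning saturation, which Hennink et al. (2017) treat as a separate question.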

2.1.4. Item-Generation Rules

2.2. Study 2: Content Validation

2.2.1. Design Rationale

2.2.2. Participants

2.2.3. Materials

2.2.4. Canonical vs PCA Items and Scale Architecture

2.2.5. Procedure

2.2.6. Decision Metrics and Thresholds

2.2.7. Computational Analysis and Verification

3. Results

3.1. Study 1: Construct Domain and Item Pool

3.1.1. PCA Macro-Codes (C1–C5) and an Adjacent Risk Code (C6)

3.1.2. Saturation Evidence

3.1.3. Item Pool (16 Candidate Items)

3.2. Study 2: Content Validation

3.2.1. Data Quality and Calibration Checks

| Indicator | Result | Interpretation benchmark |
|---|---|---|
| AC1 pass rate | 96.1% | High engagement |
| AC2 pass rate | 98.0% | High engagement |
| Joint AC pass rate | 96.1% | High engagement |
| Filler accuracy: PEOU | 91.7% | ≥ 85% target met |
| Filler accuracy: Trust | 89.6% | ≥ 85% target met |
| Filler accuracy: TSE | 95.8% | ≥ 85% target met |
| Filler accuracy: PU | 81.2% | < 85% indicates PCA–PU proximity |
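The checks in the table above reduce to simple proportions. The sketch below computes per-check and joint attention-check pass rates from per-judge flags, then applies the 85% filler-accuracy target to the reported values. It assumes one boolean per judge for each check. The function names and the four-judge flags are hypothetical, for illustration only.

```python
# Minimal data-quality sketch (illustrative only).
# Assumption: one boolean per judge for each attention check (AC1, AC2).


def attention_pass_rates(ac1, ac2):
    """Pass rates for each check and for passing both checks."""
    if not ac1 or len(ac1) != len(ac2):
        raise ValueError("need one AC1 and one AC2 flag per judge")
    n = len(ac1)
    joint = sum(1 for a, b in zip(ac1, ac2) if a and b)
    return {"AC1": sum(ac1) / n, "AC2": sum(ac2) / n, "joint": joint / n}


# Filler accuracies as reported in the table above, as proportions.
REPORTED_FILLER_ACCURACY = {"PEOU": 0.917, "Trust": 0.896, "TSE": 0.958, "PU": 0.812}


def below_target(accuracies, target=0.85):
    """Constructs whose filler accuracy falls short of the target."""
    return [construct for construct, acc in accuracies.items() if acc < target]


# Toy flags for four hypothetical judges (not study data).
print(attention_pass_rates([True, True, False, True], [True, True, True, False]))
# {'AC1': 0.75, 'AC2': 0.75, 'joint': 0.5}
print(below_target(REPORTED_FILLER_ACCURACY))  # ['PU']
```

The joint pass rate can never exceed either single-check rate. The identical AC1 and joint figures in the table therefore imply that every judge who passed AC1 also passed AC2.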

3.2.2. Item-Level Content Validation Outcomes

3.2.3. Subdimension Coverage and Safeguard Retention

| Macro-code | CORE coverage present | Safeguard action (if needed) |
|---|---|---|
| C1 | Yes | None |
| C2 | No | Retain PCA5 as a safeguard |
| C3 | No | Retain PCA8 as a safeguard |
| C4 | Yes | None |
| C5 | Yes | None |

| Item ID | Full item wording (verbatim) | Primary macro-code |
|---|---|---|
| PCA1 | Using an LLM provides a structured path from my initial trading idea to a concrete order. | C1 |
| PCA3 | LLM support enables me to translate my market view into a precise, executable trade plan. | C1 |
| PCA5 | With LLM help, I can process more information at once without feeling overloaded. | C2 (safeguard) |
| PCA8 | Using an LLM enhances my ability to spot inconsistencies or gaps in my trading plans. | C3 (safeguard) |
| PCA11 | LLM support helps me compare alternative tactics for the same view side by side. | C4 |
| PCA12 | LLM support helps me think through what-if scenarios and plan for different market paths. | C4 |
| PCA14 | LLM support helps me understand the rationale behind a strategy’s suitability for my market view. | C5 |
| PCA15 | LLM support expands the range of trading scenarios I am able to mentally simulate. | C5 |
| PCA16 | LLM support facilitates reflection on my decision-making process during trade review. | C5 |
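The safeguard rule summarised in the two tables above can be expressed as a short check. First find the macro-codes that have no CORE item, then add one designated safeguard item for each. The sketch below uses the CORE/BORDERLINE statuses and macro-codes reported in the item-status table for PCA1–PCA16. The safeguard items are passed in explicitly (PCA5 and PCA8, as reported) because the criterion used to choose them is not restated here. The function names are hypothetical.

```python
# Minimal sketch of CORE-plus-safeguard assembly (illustrative only).
# Statuses and macro-codes as reported for PCA1-PCA16.
ITEMS = {
    "PCA1": ("C1", "CORE"), "PCA2": ("C1", "BORDERLINE"),
    "PCA3": ("C1", "CORE"), "PCA4": ("C1", "BORDERLINE"),
    "PCA5": ("C2", "BORDERLINE"), "PCA6": ("C2", "BORDERLINE"),
    "PCA7": ("C2", "BORDERLINE"), "PCA8": ("C3", "BORDERLINE"),
    "PCA9": ("C3", "BORDERLINE"), "PCA10": ("C3", "BORDERLINE"),
    "PCA11": ("C4", "CORE"), "PCA12": ("C4", "CORE"),
    "PCA13": ("C4", "BORDERLINE"), "PCA14": ("C5", "CORE"),
    "PCA15": ("C5", "CORE"), "PCA16": ("C5", "CORE"),
}


def uncovered_codes(items):
    """Macro-codes that have no CORE item."""
    all_codes = {code for code, _ in items.values()}
    core_codes = {code for code, status in items.values() if status == "CORE"}
    return sorted(all_codes - core_codes)


def operational_set(items, safeguards):
    """CORE items followed by one safeguard per uncovered macro-code."""
    missing = uncovered_codes(items)
    if sorted(safeguards) != missing:
        raise ValueError(f"need exactly one safeguard for each of {missing}")
    for code, item in safeguards.items():
        if items[item][0] != code:
            raise ValueError(f"{item} does not belong to {code}")
    core = [item for item, (_, status) in items.items() if status == "CORE"]
    return core + [safeguards[code] for code in missing]


print(uncovered_codes(ITEMS))  # ['C2', 'C3']
print(operational_set(ITEMS, {"C2": "PCA5", "C3": "PCA8"}))
# ['PCA1', 'PCA3', 'PCA11', 'PCA12', 'PCA14', 'PCA15', 'PCA16', 'PCA5', 'PCA8']
```

Passing the safeguards explicitly keeps the check honest. It verifies that every macro-code ends up covered, but it does not claim to reproduce how PCA5 and PCA8 were chosen.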

3.2.4. Inter-Rater Reliability
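The reference list includes Fleiss (1971) for agreement among many raters, Landis and Koch (1977) for interpretive bands, and Byrt et al. (1993) and Feinstein and Cicchetti (1990) on kappa's prevalence paradoxes. Assuming agreement among judges was summarised with Fleiss' kappa, a minimal sketch of that statistic follows. It is a textbook implementation, not the authors' analysis code. The 10 × 5 table used to exercise it is illustrative, not study data.

```python
# Minimal Fleiss' kappa sketch (textbook formula; illustrative only).


def fleiss_kappa(counts):
    """counts[i][j] = number of raters who placed subject i in category j.
    Every subject must be rated by the same number of raters (at least 2)."""
    if not counts:
        raise ValueError("need at least one subject")
    n_subjects = len(counts)
    n_raters = sum(counts[0])
    n_categories = len(counts[0])
    if n_raters < 2 or any(
        sum(row) != n_raters or len(row) != n_categories for row in counts
    ):
        raise ValueError("each subject needs the same number (>= 2) of ratings")
    total = n_subjects * n_raters
    # Share of all assignments that fall in each category.
    p = [sum(row[j] for row in counts) / total for j in range(n_categories)]
    # Observed pairwise agreement for each subject, then averaged.
    p_i = [
        (sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ]
    p_bar = sum(p_i) / n_subjects
    # Agreement expected by chance from the category shares.
    p_e = sum(share * share for share in p)
    if p_e == 1:
        raise ValueError("kappa is undefined when all ratings fall in one category")
    return (p_bar - p_e) / (1 - p_e)


def landis_koch_label(kappa):
    """Verbal band from Landis and Koch (1977)."""
    if kappa < 0:
        return "poor"
    bands = [(0.20, "slight"), (0.40, "fair"), (0.60, "moderate"), (0.80, "substantial")]
    for upper, label in bands:
        if kappa <= upper:
            return label
    return "almost perfect"


# Illustrative table: 10 subjects, 14 raters each, 5 categories.
table = [
    [0, 0, 0, 0, 14], [0, 2, 6, 4, 2], [0, 0, 3, 5, 6], [0, 3, 9, 2, 0],
    [2, 2, 8, 1, 1], [7, 7, 0, 0, 0], [3, 2, 6, 3, 0], [2, 5, 3, 2, 2],
    [6, 5, 2, 1, 0], [0, 2, 2, 3, 7],
]
print(round(fleiss_kappa(table), 3))  # 0.21
```

Kappa depends on how prevalent each category is, so high raw agreement can coexist with a low kappa (Feinstein & Cicchetti, 1990). Reporting raw agreement alongside kappa guards against misreading such cases.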

3.2.5. Definitional Correspondence, Definitional Distinctiveness, and the Operational PCA Set

4. Discussion

5. Conclusions

| Appendix | What it contains | Role in research |
|---|---|---|
| Appendix A | Study 2 instrument pack: final PCA item wording (PCA1–PCA16) plus filler-item wording; construct definitions shown to judges (PCA, PU, PEOU, Trust, TSE); screen flow; comprehension checks; attention checks; rating scales; a priori decision rules | Study 2 materials (audit trail of what judges saw and how decisions were made) |
| Appendix B | Qualitative coding-frame support: C1–C6 definitions; presence–absence matrix; item-by-code mapping | Study 1 evidence for domain specification and item traceability |
| Appendix C | Tier A corpus evidence table (24 studies) and selection notes (including wave logic, where retained). Additional references: (Back et al., 2023; Belanche et al., 2025; Bhatia et al., 2020, 2022; Brenner & Meyll, 2019; Castillo et al., 2021; Chandani et al., 2021; Cheng et al., 2019; Costa & Henshaw, 2025; D’Acunto et al., 2019; Dietvorst et al., 2015; Gimmelberg, Glowacka, et al., 2025; Hidajat et al., 2024; Komatireddy et al., 2024; Nashold, 2020; Northey et al., 2022; Nourallah et al., 2022; Prahl & Van Swol, 2017; Senteio & Hughes, 2024; Skiera, 2021; “Sophia Sophia Tell Me More, Which Is the Most Risk-Free Plan of All?,” 2022; Verma et al., 2025; Yi et al., 2023; Zhu et al., 2023) | Study 1 triangulation and corpus construction |
| Appendix D | Post-experiment outputs without raw data or code: full item-level content-validity indices and the thresholds applied; any expanded versions of Tables 3, 4 and 5; optional additional diagnostics (for example, the full confusion table or kappa details). Additional references: (Landis & Koch, 1977) | Extended Study 2 results (supplementary tables) |
| Appendix E | Canonical vs. PCA items and scale architecture (Table E1): sources of the canonical “item universe” and retained blocks; rationale for inclusion and exclusion. Additional references: (Fornell & Larcker, 1981; Grable & Lytton, 1999; Lewis et al., 2013; Venkatesh & Davis, 2000) | Measurement architecture documentation (conceptually part of Methods) |

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Ahn, Y. The anatomy of the disposition effect: Which factors are most important? Finance Research Letters 2022, 44, 102040. [Google Scholar] [CrossRef]

- Ajzen, I. The theory of planned behavior. Organizational Behavior and Human Decision Processes 1991, 50(2), 179–211. [Google Scholar] [CrossRef]

- Ajzen, I. Constructing a TpB Questionnaire: Conceptual and Methodological Considerations. 2006. Available online: https://www.semanticscholar.org/paper/Constructing-a-TpB-Questionnaire%3A-Conceptual-and-Ajzen/0574b20bd58130dd5a961f1a2db10fd1fcbae95d.

- Ajzen, I. The theory of planned behaviour: Reactions and reflections. Psychology & Health 2011, 26, 1113–1127. [Google Scholar] [CrossRef]

- Ali, I.; Warraich, N. F.; Butt, K. Acceptance and use of artificial intelligence and AI-based applications in education: A meta-analysis and future direction. Information Development 2025, 41(3), 859–874. [Google Scholar] [CrossRef]

- Anderson, J. C.; Gerbing, D. W. Predicting the performance of measures in a confirmatory factor analysis with a pretest assessment of their substantive validities. Journal of Applied Psychology 1991, 76(5), 732–740. [Google Scholar] [CrossRef]

- Back, C.; Morana, S.; Spann, M. When do robo-advisors make us better investors? The impact of social design elements on investor behavior. Journal of Behavioral and Experimental Economics 2023, 103, 101984. [Google Scholar] [CrossRef]

- Barber, B. M.; Huang, X.; Odean, T.; Schwarz, C. Attention Induced Trading and Returns: Evidence from Robinhood Users (SSRN Scholarly Paper No. 3715077). Social Science Research Network. 2021. [Google Scholar] [CrossRef]

- Barber, B. M.; Odean, T. Trading Is Hazardous to Your Wealth: The Common Stock Investment Performance of Individual Investors. The Journal of Finance 2000, 55(2), 773–806. [Google Scholar] [CrossRef]

- Bauer, R.; Cosemans, M.; Eichholtz, P. Option trading and individual investor performance. Journal of Banking & Finance 2009, 33(4), 731–746. [Google Scholar] [CrossRef]

- Belanche, D.; Casaló, L. V.; Flavián, M.; Loureiro, S. M. C. Benefit versus risk: A behavioral model for using robo-advisors. The Service Industries Journal 2025, 45(1), 132–159. [Google Scholar] [CrossRef]

- Bhatia, A.; Chandani, A.; Chhateja, J. Robo advisory and its potential in addressing the behavioral biases of investors—A qualitative study in Indian context. Journal of Behavioral and Experimental Finance 2020, 25, 100281. [Google Scholar] [CrossRef]

- Bhatia, A.; Chandani, A.; Divekar, R.; Mehta, M.; Vijay, N. Digital innovation in wealth management landscape: The moderating role of robo advisors in behavioural biases and investment decision-making. International Journal of Innovation Science 2022, 14(3/4), 693–712. [Google Scholar] [CrossRef]

- Bikhchandani, S.; Sharma, S. Herd Behavior in Financial Markets. IMF Staff Papers 2001, 47, 1–1. [Google Scholar] [CrossRef]

- Boateng, G. O.; Neilands, T. B.; Frongillo, E. A.; Melgar-Quiñonez, H. R.; Young, S. L. Best Practices for Developing and Validating Scales for Health, Social, and Behavioral Research: A Primer. Frontiers in Public Health 2018, 6. [Google Scholar] [CrossRef]

- Braun, V.; Clarke, V. Using thematic analysis in psychology. Qualitative Research in Psychology 2006, 3(2), 77–101. [Google Scholar] [CrossRef]

- Brenner, L.; Meyll, T. Robo-Advisors: A Substitute for Human Financial Advice? (SSRN Scholarly Paper No. 3414200). Social Science Research Network; 2019. [Google Scholar] [CrossRef]

- Byrt, T.; Bishop, J.; Carlin, J. B. Bias, prevalence and kappa. Journal of Clinical Epidemiology 1993, 46(5), 423–429. [Google Scholar] [CrossRef]

- Campbell, D. T.; Fiske, D. W. Convergent and discriminant validation by the multitrait-multimethod matrix. Psychological Bulletin 1959, 56(2), 81–105. [Google Scholar] [CrossRef]

- Castillo, D.; Canhoto, A. I.; Said, E. The dark side of AI-powered service interactions: Exploring the process of co-destruction from the customer perspective. The Service Industries Journal 2021, 41(13–14), 900–925. [Google Scholar] [CrossRef]

- Chandani, A.; Sriharshitha, S.; Bhatia, A.; Atiq, R.; Mehta, M. Robo-Advisory Services in India: A Study to Analyse Awareness and Perception of Millennials. International Journal of Cloud Applications and Computing 2021, 11(4), 152–173. [Google Scholar] [CrossRef]

- Chen, J.; Liu, Y.; Liu, P.; Zhao, Y.; Zuo, Y.; Duan, H. Adoption of Large Language Model AI Tools in Everyday Tasks: Multisite Cross-Sectional Qualitative Study of Chinese Hospital Administrators. Journal of Medical Internet Research 2025, 27(1), e70789. [Google Scholar] [CrossRef] [PubMed]

- Chen, Z. Revolutionizing finance with conversational AI: A focus on ChatGPT implementation and challenges. Humanities and Social Sciences Communications 2025, 12(1), 388. [Google Scholar] [CrossRef]

- Cheng, X.; Guo, F.; Chen, J.; Li, K.; Zhang, Y.; Gao, P. Exploring the Trust Influencing Mechanism of Robo-Advisor Service: A Mixed Method Approach. Sustainability 2019, 11(18). [Google Scholar] [CrossRef]

- Clark, L. A.; Watson, D. Constructing validity: Basic issues in objective scale development. Psychological Assessment 1995, 7(3), 309–319. [Google Scholar] [CrossRef]

- Clark, L. A.; Watson, D. Constructing validity: New developments in creating objective measuring instruments. Psychological Assessment 2019, 31(12), 1412–1427. [Google Scholar] [CrossRef]

- Colquitt, J. A.; Sabey, T. B.; Rodell, J. B.; Hill, E. T. Content validation guidelines: Evaluation criteria for definitional correspondence and definitional distinctiveness. Journal of Applied Psychology 2019, 104(10), 1243–1265. [Google Scholar] [CrossRef]

- Costa, Henshaw. Advice Pays in Peace of Mind and Time: Vanguard Survey Reveals Hidden Value of Financial Advice. 2025, 28. Available online: https://corporate.vanguard.com/content/dam/corp/research/pdf/quantifying-the-investors-view-on-the-value-of-human-and-robo-advice.pdf.

- D’Acunto, F.; Prabhala, N.; Rossi, A. G. The Promises and Pitfalls of Robo-Advising. The Review of Financial Studies 2019, 32(5), 1983–2020. [Google Scholar] [CrossRef]

- Davis, F. Perceived Usefulness, Perceived Ease of Use, and User Acceptance of Information Technology. MIS Quarterly 1989, 13(3), 319. [Google Scholar] [CrossRef]

- DeVellis, R. F. Scale Development: Theory and Applications (Fourth edition); SAGE Publications, 2016; Available online: https://books.google.lv/books?id=48ACCwAAQBAJ.

- Dietvorst, B. J.; Simmons, J. P.; Massey, C. Algorithm aversion: People erroneously avoid algorithms after seeing them err. Journal of Experimental Psychology. General 2015, 144(1), 114–126. [Google Scholar] [CrossRef] [PubMed]

- Dorobăț, I.; Corbea (Florea), A. M. I. Assessing ChatGPT Adoption in Higher Education: An Empirical Analysis. Electronics 2025, 14(23). [Google Scholar] [CrossRef]

- Eliner, L.; Kobilov, B. To the Moon or Bust: Do Retail Investors Profit From Social Media-Induced Trading? Retrieved February 11, 2026. Available online: https://www.semanticscholar.org/paper/To-the-Moon-or-Bust%3A-Do-Retail-Investors-Pro%EF%AC%81t-From-Eliner-Kobilov/df50bcf89751137a5204432081a87cd25c77aa1e.

- Feinstein, A. R.; Cicchetti, D. V. High agreement but low kappa: I. The problems of two paradoxes. Journal of Clinical Epidemiology 1990, 43(6), 543–549. [Google Scholar] [CrossRef]

- Fleiss, J. L. Measuring nominal scale agreement among many raters. Psychological Bulletin 1971, 76(5), 378–382. [Google Scholar] [CrossRef]

- Fornell, C.; Larcker, D. F. Evaluating Structural Equation Models with Unobservable Variables and Measurement Error. Journal of Marketing Research 1981, 18(1), 39–50. [Google Scholar] [CrossRef]

- Gao, Y.; Xiong, Y.; Gao, X.; Jia, K.; Pan, J.; Bi, Y.; Dai, Y.; Sun, J.; Wang, M.; Wang, H. Retrieval-augmented generation for large language models: A survey. arXiv 2024, arXiv:2312.10997. [Google Scholar] [CrossRef]

- Gimmelberg, D.; Glowacka, M.; Belinskiy, A.; Korotkii, S.; Artamov, V.; Ludviga, I. Bridging Human Expertise and AI: Evaluating the Role of Large Language Models in Retail Investors’ Decision-Making. International Journal of Finance & Banking Studies (2147-4486) 2025, 14(1), 20–29. [Google Scholar] [CrossRef]

- Gimmelberg, D.; Ludviga, I. Strategic Complexity and Behavioral Distortion: Retail Investing Under Large Language Model Augmentation. International Journal of Financial Studies 2025, 13(4). [Google Scholar] [CrossRef]

- Glikson, E.; Woolley, A. W. Human Trust in Artificial Intelligence: Review of Empirical Research. Academy of Management Annals 2020, 14(2), 627–660. [Google Scholar] [CrossRef]

- Grable, J.; Lytton, R. H. Financial risk tolerance revisited: The development of a risk assessment instrument. Financial Services Review 1999, 8(3), 163–181. [Google Scholar] [CrossRef]

- Guest, G.; Bunce, A.; Johnson, L. How Many Interviews Are Enough?: An Experiment with Data Saturation and Variability. Field Methods 2006, 18(1), 59–82. [Google Scholar] [CrossRef]

- Hennink, M.; Kaiser, B.; Marconi, V. C. Code Saturation Versus Meaning Saturation: How Many Interviews Are Enough? Qualitative Health Research 2017, 27(4), 591–608. [Google Scholar] [CrossRef]

- Henseler, J.; Ringle, C.; Sarstedt, M. A New Criterion for Assessing Discriminant Validity in Variance-based Structural Equation Modeling. Journal of the Academy of Marketing Science 2015, 43(1), 115–135. [Google Scholar] [CrossRef]

- Hidajat, T.; Hamdani, M.; Putri, R. K.; Ramadhan, A. M. Behavioral Biases and Trust in Social Trading: A Mixed-Method Approach. Jurnal Manajemen Indonesia 2024, 24(2), 214–226. [Google Scholar] [CrossRef]

- Hinkin, T. R. A review of scale development practices in the study of organizations. Journal of Management 1995, 21(5), 967–988. [Google Scholar] [CrossRef]

- Hinkin, T. R. A Brief Tutorial on the Development of Measures for Use in Survey Questionnaires. Organizational Research Methods 1998, 1(1), 104–121. [Google Scholar] [CrossRef]

- Hinkin, T. R.; Tracey, J. B. An Analysis of Variance Approach to Content Validation. Organizational Research Methods 1999, 2(2), 175–186. [Google Scholar] [CrossRef]

- Hoff, K. A.; Bashir, M. Trust in Automation: Integrating Empirical Evidence on Factors That Influence Trust. Human Factors 2015, 57(3), 407–434. [Google Scholar] [CrossRef]

- Hsieh, S.-F.; Chan, C.-Y.; Wang, M.-C. Retail investor attention and herding behavior. Journal of Empirical Finance 2020, 59, 109–132. [Google Scholar] [CrossRef]

- Jian, J.-Y.; Bisantz, A. M.; Drury, C. G. Foundations for an Empirically Determined Scale of Trust in Automated Systems. International Journal of Cognitive Ergonomics 2000, 4(1), 53–71. [Google Scholar] [CrossRef]

- Kahneman, D.; Tversky, A. Prospect Theory: An Analysis of Decision under Risk. Econometrica 1979, 47(2), 263–291. [Google Scholar] [CrossRef]

- Komatireddy, K.; Mangeshikar, S.; Gada, T. Augmenting Trust in Robo Advisor Experiences Through Thoughtful UX Design. FMDB Transactions on Sustainable Computing Systems 2024, 2(2), 54–63. [Google Scholar] [CrossRef]

- Kong, Y.; Nie, Y.; Dong, X.; Mulvey, J. M.; Poor, H. V.; Wen, Q.; Zohren, S. Large Language Models for Financial and Investment Management: Models, Opportunities, and Challenges. The Journal of Portfolio Management 2024, 51(2), 211–231. [Google Scholar] [CrossRef]

- Landis, J. R.; Koch, G. G. The Measurement of Observer Agreement for Categorical Data. Biometrics 1977, 33(1), 159–174. [Google Scholar] [CrossRef]

- Lee, J. D.; See, K. A. Trust in Automation: Designing for Appropriate Reliance. Human Factors: The Journal of the Human Factors and Ergonomics Society 2004, 46(1), 50–80. [Google Scholar] [CrossRef]

- Lee, J.; Stevens, N.; Han, S. C.; Song, M. Large language models in finance (FinLLMs). Neural Computing and Applications 2025, 37, 24853–24867. [Google Scholar] [CrossRef]

- Lewis, J. R.; Utesch, B. S.; Maher, D. E. UMUX-LITE: When there’s no time for the SUS. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems CHI ’13, 2013; pp. 2099–2102. [Google Scholar] [CrossRef]

- Li, Y.; Wang, S.; Ding, H.; Chen, H. Large language models in finance: A survey. arXiv 2023, arXiv:2311.10723. [Google Scholar] [CrossRef]

- Lopez-Lira, A.; Tang, Y. Can ChatGPT Forecast Stock Price Movements? Return Predictability and Large Language Models. arXiv 2024, arXiv:2304.07619. [Google Scholar] [CrossRef]

- MacKenzie, S. B.; Podsakoff, P. M.; Podsakoff, N. P. Construct measurement and validation procedures in MIS and behavioral research: Integrating new and existing techniques. MIS Quarterly 2011, 35(2), 293–334. [Google Scholar] [CrossRef]

- Malterud, K.; Siersma, V. D.; Guassora, A. D. Sample Size in Qualitative Interview Studies: Guided by Information Power. Qualitative Health Research 2016, 26(13), 1753–1760. [Google Scholar] [CrossRef]

- McGrath, M. J.; Lack, O.; Tisch, J.; Duenser, A. Measuring trust in artificial intelligence: Validation of an established scale and its short form. Frontiers in Artificial Intelligence 2025, 8, 1582880. [Google Scholar] [CrossRef]

- Meade, A. W.; Craig, S. B. Identifying careless responses in survey data. Psychological Methods 2012, 17(3), 437–455. [Google Scholar] [CrossRef]

- Miller, G. S.; Skinner, D. J. The Evolving Disclosure Landscape: How Changes in Technology, the Media, and Capital Markets Are Affecting Disclosure. Journal of Accounting Research 2015, 53(2), 221–239. [Google Scholar] [CrossRef]

- Morgado, F. F. R.; Meireles, J. F. F.; Neves, C. M.; Amaral, A. C. S.; Ferreira, M. E. C. Scale development: Ten main limitations and recommendations to improve future research practices. Psicologia: Reflexão e Crítica 2017, 30(1), 3. [Google Scholar] [CrossRef]

- Mustofa, R.; Kuncoro, T.; Atmono, D.; Hermawan, H.; Sukirman. Extending the Technology Acceptance Model: The Role of Subjective Norms, Ethics, and Trust in AI Tool Adoption Among Students. Computers and Education: Artificial Intelligence 2025, 8, 100379. [Google Scholar] [CrossRef]

- Nashold. Trust in Consumer Adoption of Artificial Intelligence-Driven Virtual Finance Assistants: A Technology Acceptance Model Perspective. ProQuest, 2020. Available online: https://www.proquest.com/docview/2385705772?pq-origsite=gscholar.

- Northey, G.; Hunter, V.; Mulcahy, R.; Choong, K.; Mehmet, M. Man vs machine: How artificial intelligence in banking influences consumer belief in financial advice. International Journal of Bank Marketing 2022, 40(6), 1182–1199. [Google Scholar] [CrossRef]

- Nourallah, M.; Öhman, P.; Amin, M. No trust, no use: How young retail investors build initial trust in financial robo-advisors. Journal of Financial Reporting and Accounting 2022, 21(1), 60–82. [Google Scholar] [CrossRef]

- Oh, D.; Kim, T.; Jang, J.; Park, S.-H. Democratizing alpha: LLM-driven portfolio construction for retail investors using public financial media. Proceedings of the 6th ACM International Conference on AI in Finance (ICAIF ’25) 2025, 72, 326–334. [Google Scholar] [CrossRef]

- Palan, S.; Schitter, C. Prolific.ac—A subject pool for online experiments. Journal of Behavioral and Experimental Finance 2018, 17, 22–27. [Google Scholar] [CrossRef]

- Patton, M. Q. Qualitative research & evaluation methods: Integrating theory and practice, 4th ed.; SAGE Publications, 2015; ISBN 978-1412972123. [Google Scholar]

- Measurement of Trust in Automation: A Narrative Review and Reference Guide. In ResearchGate; 2021. [CrossRef]

- Peer, E.; Rothschild, D.; Gordon, A.; Evernden, Z.; Damer, E. Data quality of platforms and panels for online behavioral research. Behavior Research Methods 2022, 54(4), 1643–1662. [Google Scholar] [CrossRef] [PubMed]

- Peng, R. D. Reproducible Research in Computational Science. Science 2011, 334(6060), 1226–1227. [Google Scholar] [CrossRef]

- Podsakoff, P. M.; MacKenzie, S. B.; Podsakoff, N. P. Recommendations for Creating Better Concept Definitions in the Organizational, Behavioral, and Social Sciences. Organizational Research Methods 2016, 19(2), 159–203. [Google Scholar] [CrossRef]

- Prahl, A.; Van Swol, L. Understanding algorithm aversion: When is advice from automation discounted? Journal of Forecasting 2017, 36(6), 691–702. [Google Scholar] [CrossRef]

- Ruggeri, K.; Ashcroft-Jones, S.; Abate Romero Landini, G.; Al-Zahli, N.; Alexander, N.; Andersen, M. H.; Bibilouri, K.; Busch, K.; Cafarelli, V.; Chen, J.; Doubravová, B.; Dugué, T.; Durrani, A. A.; Dutra, N.; Garcia-Garzon, E.; Gomes, C.; Gracheva, A.; Grilc, N.; Gürol, D. M.; Stock, F. The persistence of cognitive biases in financial decisions across economic groups. Scientific Reports 2023, 13(1), 10329. [Google Scholar] [CrossRef]

- Sandve, G. K.; Nekrutenko, A.; Taylor, J.; Hovig, E. Ten Simple Rules for Reproducible Computational Research. PLOS Computational Biology 2013, 9(10), e1003285. [Google Scholar] [CrossRef] [PubMed]

- Saunders, B.; Sim, J.; Kingstone, T.; Baker, S.; Waterfield, J.; Bartlam, B.; Burroughs, H.; Jinks, C. Saturation in qualitative research: Exploring its conceptualization and operationalization. Quality & Quantity 2018, 52(4), 1893–1907. [Google Scholar] [CrossRef]

- Schlosky, M. T. T.; Raskie, S. ChatGPT as a Financial Advisor: A Re-Examination. Journal of Risk and Financial Management 2025, 18(12). [Google Scholar] [CrossRef]

- Senteio, Hughes. Customer Trust and Satisfaction with Robo-Adviser Technology. Financial Planning Association, 1 August 2024, 84. Available online: https://www.financialplanningassociation.org/learning/publications/journal/AUG24-customer-trust-and-satisfaction-robo-adviser-technology-OPEN.

- Shefrin, H.; Statman, M. The Disposition to Sell Winners Too Early and Ride Losers Too Long: Theory and Evidence. The Journal of Finance 1985, 40(3), 777–790. [Google Scholar] [CrossRef]

- Shiller, R. J. Narrative Economics. American Economic Review 2017, 107(4), 967–1004. [Google Scholar] [CrossRef]

- Shiller, R. J.; Pound, J. Survey evidence on diffusion of interest and information among investors. Journal of Economic Behavior & Organization 1989, 12(1), 47–66. [Google Scholar] [CrossRef]

- Skiera, V. The Effects of Robo-Advisers on Stock Market Participation and Household Investment Behavior. 2021, 88. Available online: https://cepr.org/system/files/2022-08/Skiera-Robo-Advisers-Final-Report.pdf.

- Sophia Sophia tell me more, which is the most risk-free plan of all? AI anthropomorphism and risk aversion in financial decision-making. International Journal of Bank Marketing 2022, 40(6), 1133–1158. [CrossRef]

- Takayanagi, T.; Suzuki, M.; Izumi, K.; Sanz-Cruzado, J.; McCreadie, R.; Ounis, I. FinPersona: An LLM-Driven Conversational Agent for Personalized Financial Advising. Advances in Information Retrieval: 47th European Conference on Information Retrieval, ECIR 2025, Lucca, Italy, April 6–10, 2025, Proceedings, Part V. pp. 13–18. [CrossRef]

- Trust and reliance on AI: An experimental study on the extent and costs of overreliance on AI. ResearchGate 2025, 160, 108352. [CrossRef]

- Venkatesh, V.; Davis, F. D. A Theoretical Extension of the Technology Acceptance Model: Four Longitudinal Field Studies. Management Science 2000, 46(2), 186–204. [Google Scholar] [CrossRef]

- Venkatesh, V.; Morris, M. G.; Davis, G. B.; Davis, F. D. User Acceptance of Information Technology: Toward a Unified View (SSRN Scholarly Paper No. 3375136). In Social Science Research Network; 2003; Available online: https://papers.ssrn.com/abstract=3375136.

- Venkatesh, V.; Thong, J. Y. L.; Xu, X. Consumer Acceptance and Use of Information Technology: Extending the Unified Theory of Acceptance and Use of Technology. MIS Quarterly 2012, 36(1), 157–178. [Google Scholar] [CrossRef]

- Verma, B.; Schulze, M.; Goswami, D.; Upreti, K. Artificial intelligence attitudes and resistance to use robo-advisors: Exploring investor reluctance toward cognitive financial systems. Frontiers in Artificial Intelligence 2025, 8, 1623534. [Google Scholar] [CrossRef] [PubMed]

- Warkulat, S.; Pelster, M. Social media attention and retail investor behavior: Evidence from r/wallstreetbets. International Review of Financial Analysis 2024, 96, 103721. [Google Scholar] [CrossRef]

- Wilson, G.; Aruliah, D. A.; Brown, C. T.; Hong, N. P. C.; Davis, M.; Guy, R. T.; Haddock, S. H. D.; Huff, K. D.; Mitchell, I. M.; Plumbley, M. D.; Waugh, B.; White, E. P.; Wilson, P. Best Practices for Scientific Computing. PLOS Biology 2014, 12(1), e1001745. [Google Scholar] [CrossRef]

- Winder, P.; Hildebrand, C.; Hartmann, J. Biased echoes: Large language models reinforce investment biases and increase portfolio risks of private investors. PLOS ONE 2025, 20(6), e0325459. [Google Scholar] [CrossRef]

- Yi, T. Z.; Rom, N. A. M.; Hassan, N. M.; Samsurijan, M. S.; Ebekozien, A. The Adoption of Robo-Advisory among Millennials in the 21st Century: Trust, Usability and Knowledge Perception. Sustainability 2023, 15(7). [Google Scholar] [CrossRef]

- Zhu, H.; Pysander, E.-L.; Soderberg, I. Not transparent and incomprehensible: A qualitative user study of an AI-empowered financial advisory system. Data and Information Management, Special Issue on Human-AI Interaction 2023, 7(3), 100041. [Google Scholar] [CrossRef]

| Item ID | Short content descriptor | Primary macro-code |
|---|---|---|
| PCA1 | Structured path from idea to order | C1 |
| PCA2 | Break complex trades into steps | C1 |
| PCA3 | Translate view into executable plan | C1 |
| PCA4 | Structure multi-leg/conditional orders | C1 |
| PCA5 | Process more information without overload | C2 |
| PCA6 | Filter to decision-relevant information | C2 |
| PCA7 | Integrate sources into coherent picture | C2 |
| PCA8 | Spot gaps and inconsistencies in plans | C3 |
| PCA9 | Detect misalignment in numbers/dates/assumptions | C3 |
| PCA10 | Scrutinise failure points before commitment | C3 |
| PCA11 | Compare tactics side by side | C4 |
| PCA12 | Think through what-if paths | C4 |
| PCA13 | Stay organised when markets move quickly | C4 |
| PCA14 | Understand rationale for strategy–view fit | C5 |
| PCA15 | Expand range of mental scenario simulation | C5 |
| PCA16 | Reflect on decisions during trade review | C5 |

| Item ID | Primary macro-code | Status |
|---|---|---|
| PCA1 | C1 | CORE |
| PCA2 | C1 | BORDERLINE |
| PCA3 | C1 | CORE |
| PCA4 | C1 | BORDERLINE |
| PCA5 | C2 | BORDERLINE |
| PCA6 | C2 | BORDERLINE |
| PCA7 | C2 | BORDERLINE |
| PCA8 | C3 | BORDERLINE |
| PCA9 | C3 | BORDERLINE |
| PCA10 | C3 | BORDERLINE |
| PCA11 | C4 | CORE |
| PCA12 | C4 | CORE |
| PCA13 | C4 | BORDERLINE |
| PCA14 | C5 | CORE |
| PCA15 | C5 | CORE |
| PCA16 | C5 | CORE |

| Construct (filler) | Definitional correspondence (psa) | Misclassification (1 − psa) | psa ≥ 0.85 target met? | Interpretation |
|---|---|---|---|---|
| PEOU | 0.917 | 0.083 | Yes | Calibration target met. |
| Trust | 0.896 | 0.104 | Yes | Calibration target met. |
| TSE | 0.958 | 0.042 | Yes | Calibration target met. |
| PU | 0.812 | 0.188 | No | Below-target calibration indicates proximity to PCA under naïve-judge interpretation. |
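The proportion of substantive agreement (psa) in the table above is the share of judges who assign an item to its intended definition (Anderson & Gerbing, 1991). Misclassification is simply 1 − psa. The sketch below computes both from a list of judge assignments. It also reports the most frequent alternative construct, which is how a PU-to-PCA drift like the one flagged above would surface. The assignment list is a toy example and the function name is hypothetical.

```python
from collections import Counter


def psa_summary(assignments, intended, target=0.85):
    """psa, misclassification and the modal alternative for one item.
    `assignments` holds the construct label each judge chose."""
    if not assignments:
        raise ValueError("need at least one judge assignment")
    counts = Counter(assignments)
    psa = counts[intended] / len(assignments)
    alternatives = {label: k for label, k in counts.items() if label != intended}
    # Ties go to the label seen first, since Counter keeps insertion order.
    modal_alternative = max(alternatives, key=alternatives.get) if alternatives else None
    return {
        "psa": psa,
        "misclassification": 1 - psa,
        "meets_target": psa >= target,
        "modal_alternative": modal_alternative,
    }


# Toy assignments from eight hypothetical judges (not study data).
toy = ["PU", "PU", "PCA", "PU", "PCA", "PU", "PEOU", "PU"]
print(psa_summary(toy, "PU"))
# {'psa': 0.625, 'misclassification': 0.375, 'meets_target': False, 'modal_alternative': 'PCA'}
```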

| Item ID | Macro-code | Retention basis | Role in PCA set |
|---|---|---|---|
| PCA1 | C1 | CORE | Core coverage. |
| PCA3 | C1 | CORE | Core coverage. |
| PCA11 | C4 | CORE | Core coverage. |
| PCA12 | C4 | CORE | Core coverage. |
| PCA14 | C5 | CORE | Core coverage. |
| PCA15 | C5 | CORE | Core coverage. |
| PCA16 | C5 | CORE | Core coverage. |
| PCA5 | C2 | Safeguard | Preserves C2 (cognitive-load relief) content. |
| PCA8 | C3 | Safeguard | Preserves C3 (error-checking) content. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).