3. Extended Set Partitioning and Covering Models

The CPD problem can be naturally modeled from a set partitioning perspective: all subsequences satisfying predefined structural properties (termed feasible runs) are generated and scored according to a segment-specific quality metric, under an additivity assumption on the cost function. The segmentation problem is then formulated as the selection of a collection of runs that partitions the time index set while optimizing a global objective based on residual loss or model fit. The resulting formulation is a 0–1 integer linear program, which can be viewed as a special case of a mixed-integer linear program (MILP), thereby allowing the use of state-of-the-art MILP solvers [19]. This framework provides considerable flexibility, as additional modeling requirements can be encoded directly via linear constraints.

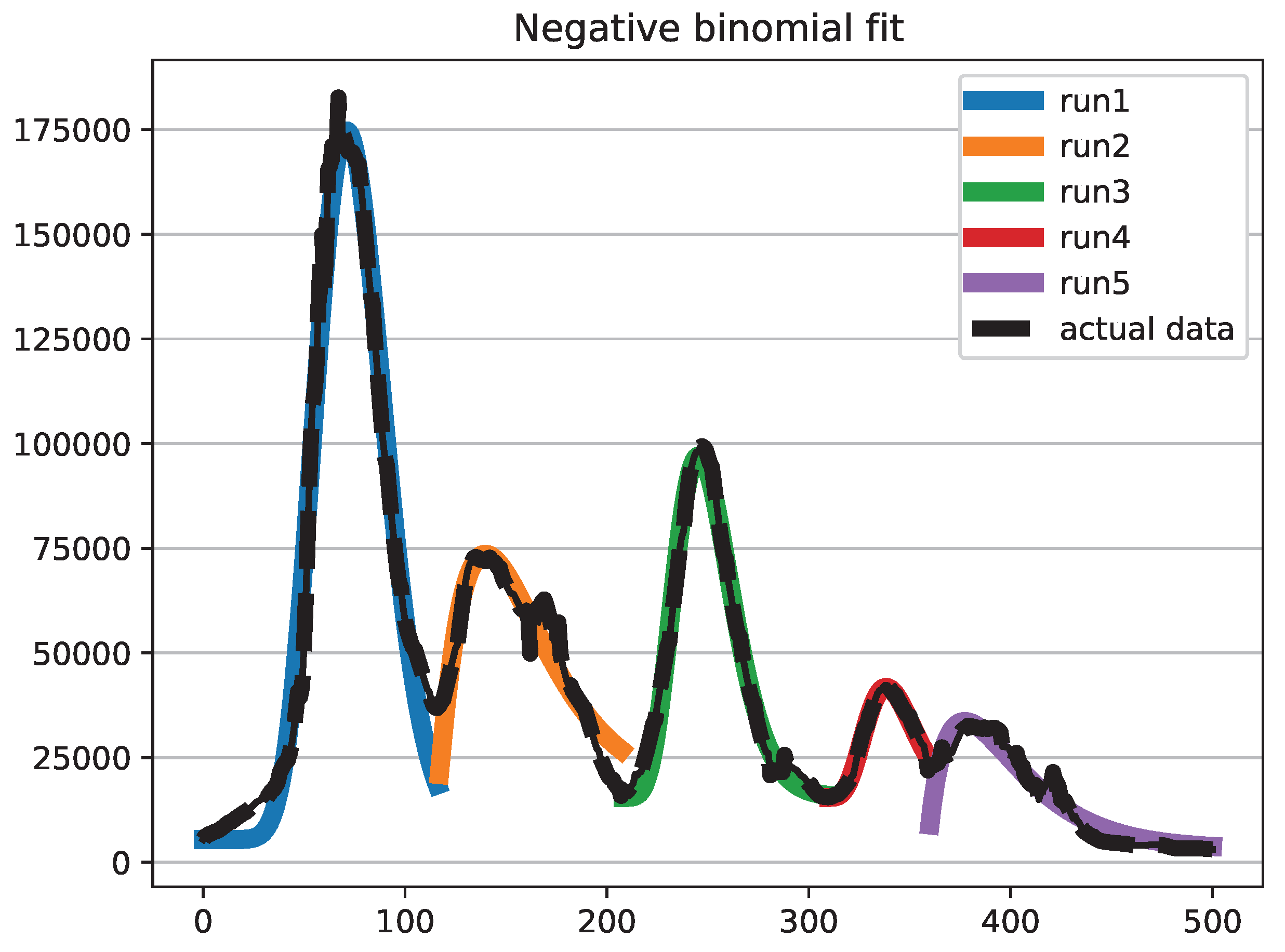

A Set Partitioning Problem (SPP) lies at the core of the formulation. The objective is to minimize an aggregate residual-based cost, while the constraints enforce that each time index of the series is covered exactly once by the selected segments. No restriction is imposed on the functional form of the segment model: it may consist of linear regression (yielding piecewise linear regression), but also of nonlinear or nonparametric specifications. Moreover, different runs may be associated with different model classes without altering the structure of the optimization problem.

Formally, let $x_j \in \{0, 1\}$, $j \in J$, denote the binary decision variables, where $J$ is the index set of feasible runs, $x_j = 1$ if run $j$ is selected, and $x_j = 0$ otherwise. Feasible runs may be generated so as to incorporate preliminary dominance rules, for instance by excluding segments that are too short or too long. Each run $j$ is assigned a cost $c_j$, computed according to any suitable goodness-of-fit or loss metric proposed in the literature, including SER, RMSE, and variance-based criteria. The partitioning (or covering) constraints ensure that every series point is included in exactly one (or at least one) selected run.
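As a hedged illustration of the run generation step, the sketch below enumerates all contiguous runs whose length lies within assumed bounds and scores each one with the residual sum of squares of a constant (mean) fit. The names `generate_runs` and `sse_of_mean_fit`, the length bounds, and the choice of a mean model are assumptions made for this example only; any other segment model or loss could be substituted.

```python
def sse_of_mean_fit(values):
    """Residual sum of squares of a constant (mean) model on one run."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values)


def generate_runs(series, min_len=2, max_len=None):
    """Enumerate feasible runs as (start, end, cost), with end inclusive.

    Runs shorter than min_len or longer than max_len are never created,
    which acts as a simple dominance rule at generation time.
    """
    n = len(series)
    max_len = n if max_len is None else max_len
    runs = []
    for start in range(n):
        for length in range(min_len, max_len + 1):
            end = start + length - 1
            if end >= n:
                break  # longer runs from this start would leave the series
            cost = sse_of_mean_fit(series[start:end + 1])
            runs.append((start, end, cost))
    return runs


# Toy series with one level shift (illustrative data, not from the paper).
series = [1.0, 1.0, 1.0, 5.0, 5.0, 5.0]
runs = generate_runs(series, min_len=2, max_len=4)
```

Each tuple corresponds to one candidate column of the partitioning model; the number of tuples grows quadratically with the series length unless the length bounds are tight.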

The resulting Time Series Set Partitioning (TSSP) model is defined as follows. Let $a_{ij}$ be a coefficient equal to 1 if and only if run $j$ covers time index $i$, for $i = 1, \dots, n$ and $j \in J$, where $n$ is the length of the series and $J$ is the index set of feasible runs. Formulation TSSP also includes a constraint on the cardinality of the solution set.
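For reference, the model described in this paragraph can be sketched as follows. The symbols are assumptions chosen for this sketch: $J$ is the index set of feasible runs, $n$ the series length, $c_j$ the cost of run $j$, $x_j$ its binary selection variable, $a_{ij}$ the coverage coefficient, and $K$ an upper bound on the number of selected runs (the cardinality constraint is assumed here to be an upper bound).

```latex
\begin{align*}
\text{(TSSP)}\quad \min\ & \sum_{j \in J} c_j x_j \\
\text{s.t.}\ & \sum_{j \in J} a_{ij} x_j = 1, && i = 1, \dots, n, \\
& \sum_{j \in J} x_j \le K, \\
& x_j \in \{0, 1\}, && j \in J.
\end{align*}
```

Under the same assumptions, a covering variant is obtained by replacing the equality in the first constraint set with $\ge 1$.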

Additional variables can be included in the model and associated with the $x$ decision variables; for example, a starting time $s_j$ and an end time $e_j$ can be associated with each variable $x_j$. While redundant in this formulation, they may become important for expressing the constraints listed in Section 3.2.

Unfortunately, in real-world applications the number of feasible runs can be very large, making the resulting SPP computationally demanding and, in some cases, intractable within acceptable time limits. From a polyhedral perspective, the set partitioning formulation is typically tighter but also harder to explore computationally, as the equality constraints induce a more restrictive feasible region and a combinatorially demanding branching structure. To mitigate this issue, we can relax the equality constraints into inequalities, thereby transforming the formulation into a Set Covering Problem (SCP). The covering polyhedron is generally easier to handle algorithmically and can be solved in significantly larger dimensions, albeit with a weaker linear programming relaxation.

The SCP relaxation allows multiple runs to cover the same series points, and therefore a postprocessing phase is required to extract a feasible segmentation from the (possibly overlapping) covering solution. The resulting set covering formulation is denoted by TSSC.

State-of-the-art MIP solvers are highly effective on SCP formulations; however, very large instances may still entail substantial computational effort. To further enhance scalability, [20] proposed a Lagrangian matheuristic [21] for the TSSC problem in which all covering constraints are relaxed by associating a Lagrangian multiplier $\lambda_i$ with each constraint $i = 1, \dots, n$, while retaining only the cardinality constraint in the formulation. The resulting Lagrangian model is solved via a subgradient optimization scheme. The corresponding Lagrangian subproblem is computationally simple: at each iteration, it amounts to selecting at most $K$ variables with the most negative reduced costs, where $K$ is the cardinality bound. A simple fixing heuristic is embedded within the procedure: the incumbent solution of the subproblem, which may be infeasible with respect to the relaxed covering constraints, is iteratively augmented by adding selected variables until feasibility is restored. This relaxation makes it easy to include constraints such as the maximum number of segments allowed. However, most other additional constraints must either be added to the subproblem or be relaxed themselves, which makes the approach impractical.
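The subproblem and the multiplier update described above can be sketched as follows. This is a generic textbook-style sketch, not the implementation of [20]: runs are assumed to be `(start, end, cost)` tuples with 0-based inclusive indices, one multiplier is kept per time index, and the function names, the fixed step size, and the toy data are all assumptions. The repair heuristic is not shown.

```python
def solve_subproblem(runs, multipliers, max_segments):
    """Pick at most max_segments runs with the most negative reduced cost.

    The reduced cost of a run is its cost minus the multipliers of the
    time indices it covers. Returns the chosen run indices and the
    Lagrangian lower bound for the current multipliers.
    """
    negative = []
    for j, (start, end, cost) in enumerate(runs):
        reduced_cost = cost - sum(multipliers[start:end + 1])
        if reduced_cost < 0:
            negative.append((reduced_cost, j))
    negative.sort()
    selected = negative[:max_segments]
    chosen = [j for _, j in selected]
    bound = sum(multipliers) + sum(rc for rc, _ in selected)
    return chosen, bound


def subgradient_step(runs, chosen, multipliers, step):
    """Update multipliers along the subgradient g_i = 1 - coverage_i."""
    coverage = [0] * len(multipliers)
    for j in chosen:
        start, end, _ = runs[j]
        for i in range(start, end + 1):
            coverage[i] += 1
    # Multipliers of covering (>=) constraints are kept nonnegative.
    return [max(0.0, lam + step * (1 - cov))
            for lam, cov in zip(multipliers, coverage)]


# Toy instance: two cheap half-series runs and one expensive full run.
runs = [(0, 2, 1.0), (3, 5, 1.0), (0, 5, 10.0)]
multipliers = [1.0] * 6
chosen, bound = solve_subproblem(runs, multipliers, max_segments=2)
multipliers = subgradient_step(runs, chosen, multipliers, step=0.5)
```

On this toy instance the subproblem solution already covers every index exactly once, so the subgradient is zero and the multipliers are left unchanged.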

In any case, after solving a set covering relaxation, postprocessing is required to transform any SCP solution into a feasible SPP segmentation. This can be accomplished in linear time by scanning each region of overlapping runs and identifying the splitting point that minimizes the total cost obtained by assigning the preceding observations to the preceding segment and the subsequent observations to the following segment.
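A minimal sketch of this postprocessing step is given below. It assumes that the covering solution is a chain of runs sorted by start index in which only consecutive runs overlap, and that the segment cost is the residual sum of squares of a mean fit, evaluated in constant time through prefix sums so that each overlap region is scanned once. The function names and the toy data are assumptions.

```python
from itertools import accumulate


def make_sse(series):
    """Return cost(start, end): SSE of a mean fit, O(1) via prefix sums."""
    sums = [0.0] + list(accumulate(series))
    squares = [0.0] + list(accumulate(v * v for v in series))

    def cost(start, end):  # end is inclusive
        count = end - start + 1
        total = sums[end + 1] - sums[start]
        return (squares[end + 1] - squares[start]) - total * total / count

    return cost


def resolve_overlaps(cover, cost):
    """Turn a chain of overlapping runs into a partition.

    cover: list of (start, end) sorted by start; only consecutive runs
    are assumed to overlap. For each overlap, the split point t is the
    one minimizing cost(previous start, t) + cost(t + 1, next end).
    """
    segments = [list(cover[0])]
    for start, end in cover[1:]:
        prev = segments[-1]
        if start <= prev[1]:  # the two runs share [start, prev[1]]
            low = max(start - 1, prev[0])
            high = min(prev[1], end - 1)
            best = min(range(low, high + 1),
                       key=lambda t: cost(prev[0], t) + cost(t + 1, end))
            prev[1] = best
            start = best + 1
        segments.append([start, end])
    return [tuple(seg) for seg in segments]


series = [1.0, 1.0, 1.0, 1.0, 5.0, 5.0, 5.0, 5.0]
partition = resolve_overlaps([(0, 5), (2, 7)], make_sse(series))
```

In the toy example the two runs overlap on indices 2 to 5, and the scan places the split at the level shift.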

3.1. Cost Function

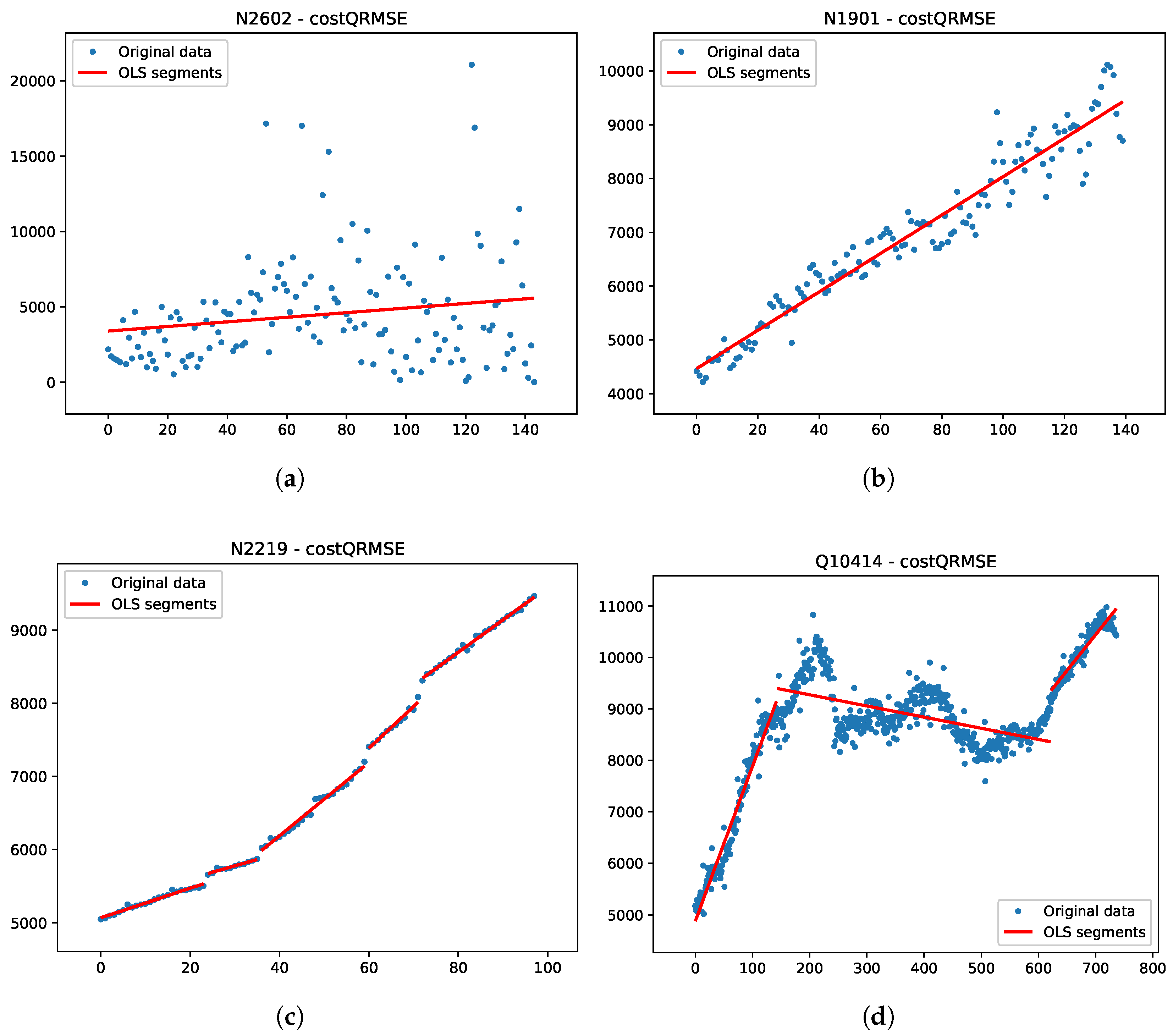

Models TSSP and TSSC are suitable for offline (or anytime) analysis assuming additive costs. Both models are independent of the specific function used to quantify the cost associated with each segment. The CPD literature proposes different cost functions, including negative log-likelihood (Horvath 1993; Chen and Gupta 2000) and quadratic loss or cumulative sums (e.g., Inclan and Tiao 1994; Rigaill 2010), but any statistical measure of fit can be used for the task. Each measure favors different characteristics of the model, and the results can differ widely.

In practical applications of CPD, model quality is often judged less by its formal optimality properties and more by the interpretability of the resulting segmentation. In many real-world settings, the most useful segmentation is not necessarily the one that minimizes a given objective function, but rather the one that aligns with human perception of structural changes or provides a coherent narrative for subsequent decision-making. Accordingly, the utility of a cost function may depend as much on the clarity and explanatory power of its segmentation as on its predictive accuracy.

To reconcile algorithmic outputs with visual or domain-based expectations, many selection procedures incorporate penalty terms that regulate the number of detected changepoints. These penalizations effectively allow practitioners to adjust the segmentation to better match subjective expectations. However, the resulting models should be regarded as practically useful approximations rather than representations of an underlying “true” model, which is unlikely to be included among the finite set of candidate models considered in practice [10].

A further limitation of user-defined penalization schemes is that they implicitly require human feedback. In heterogeneous datasets, appropriate penalty calibration often needs to be tailored on a per-series basis, which limits scalability and automation. To reduce this dependency on manual tuning, we evaluated several alternative cost functions drawn from both information-theoretic and optimization-based frameworks, with the aim of identifying a more robust and resilient formulation suitable for automated CPD analysis.

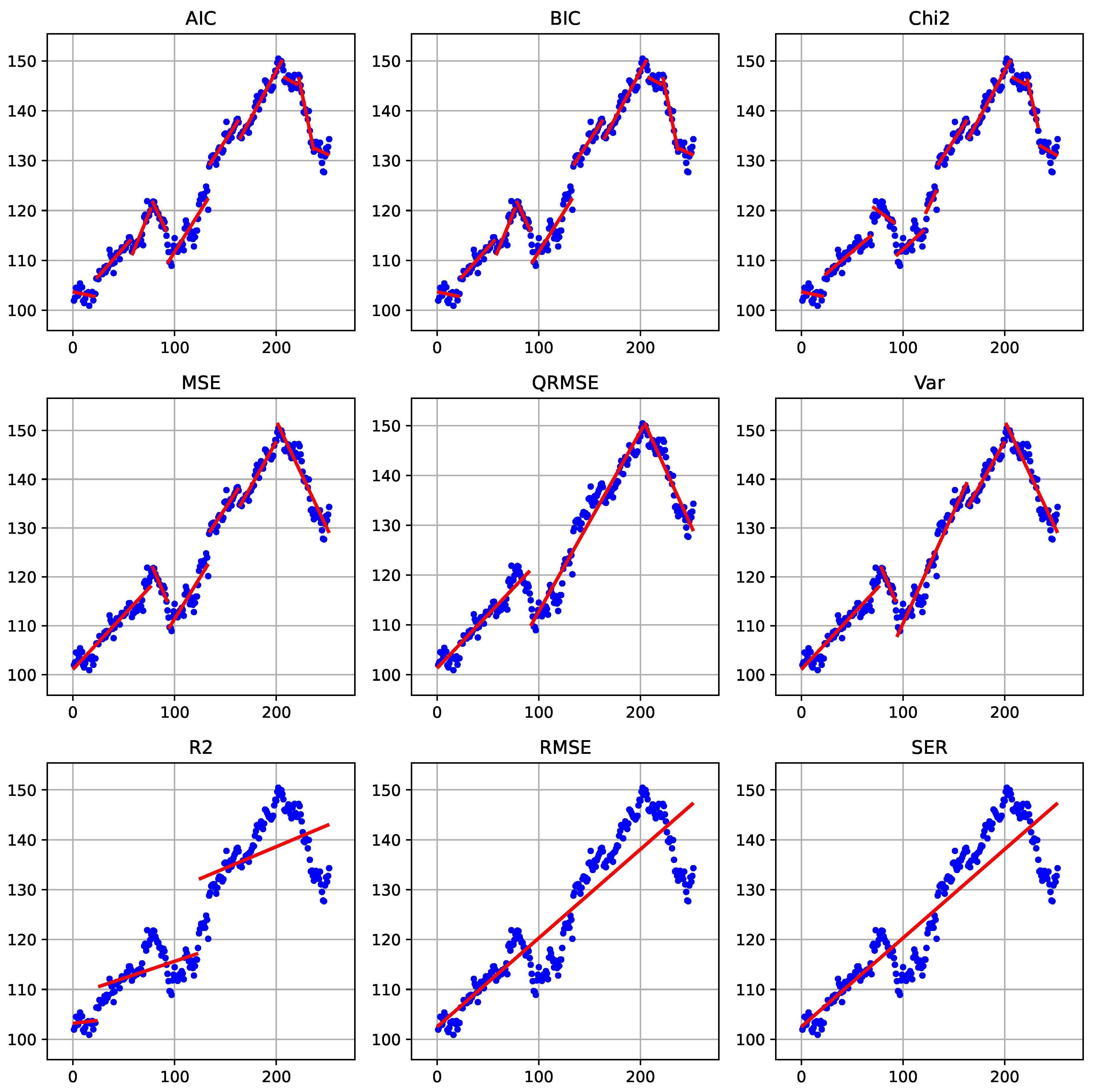

We evaluated a broad range of cost functions, including AIC, BIC, Chi-square statistics, MSE, QRMSE, $R^2$, RMSE, SER, and simple variance; comparative visual results on a standard benchmark instance are shown in Figure 1. No single criterion consistently dominates the others across all benchmark instances. Performance varies depending on the underlying signal structure, noise characteristics, and segment length distribution, supporting the claim that cost-function performance is inherently context-dependent.

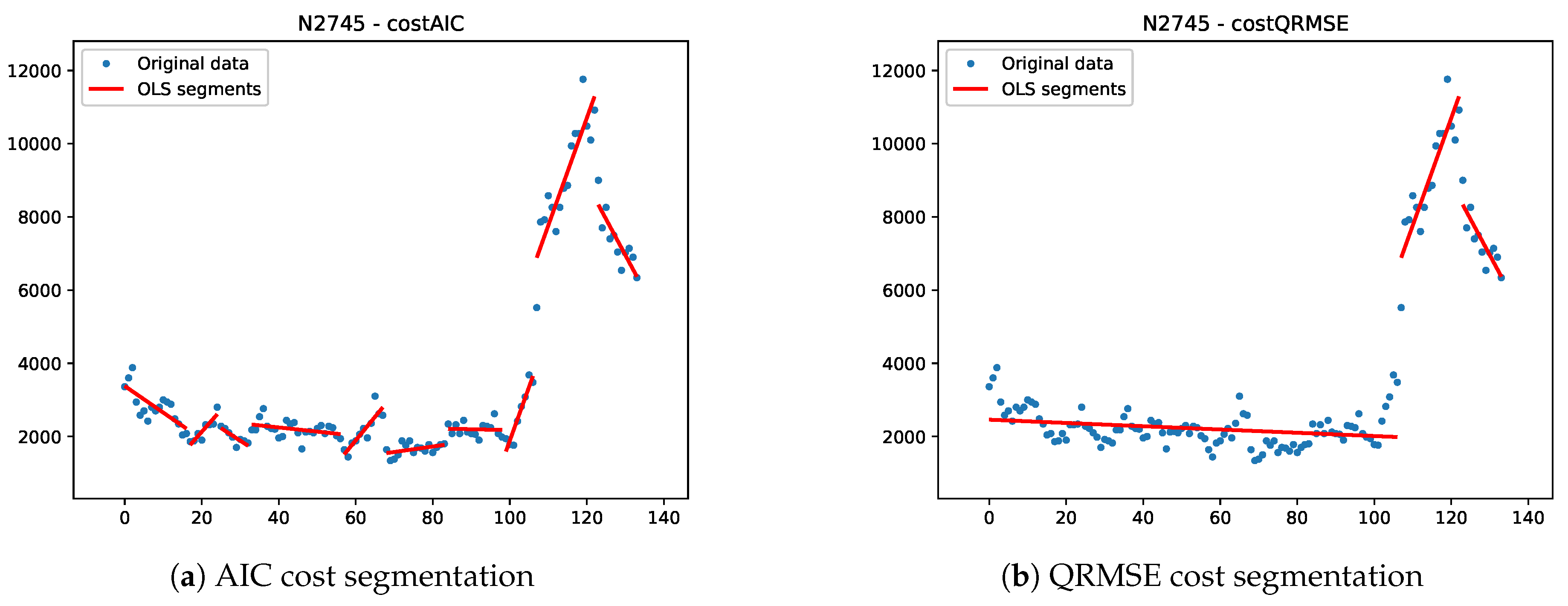

For the subsequent analysis, we focus on two representative and complementary criteria: AIC and QRMSE. AIC is well established in the CPD literature, particularly through its equivalence to penalized negative log-likelihood formulations, and provides a theoretically grounded likelihood-based benchmark.

QRMSE (Quarter-root-RMSE) [20], by contrast, lies between MSE and RMSE in terms of segment-length sensitivity. Relative to MSE, it places less emphasis on short segments, while avoiding the stronger preference for long segments induced by RMSE. This intermediate behavior makes it attractive in applications where neither aggressive segmentation nor excessive smoothing is desirable. Specifically, QRMSE is computed from the residual sum of squares of each segment [20].

This formulation reduces the implicit penalty associated with long segments compared with classical RMSE or MSE-based criteria. As a consequence, longer segments are penalized less strongly and the resulting segmentations tend to be smoother.

Importantly, although QRMSE is additive across segments and can therefore be used within optimal partitioning frameworks, it does not satisfy the inequality condition required for pruning in algorithms such as PELT. In particular, the cost of a merged segment may be lower than the sum of the costs of two adjacent subsegments. This violation prevents the use of the pruning guarantees that underpin the computational efficiency of such methods, limiting the practical applicability of QRMSE in large-scale settings.
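The inequality in question requires that, for some constant $K$, the cost of two adjacent subsegments plus $K$ never exceeds the cost of the merged segment. The sketch below checks this inequality with $K = 0$ on a toy series. Since the QRMSE formula is not reproduced here, the example uses the RMSE of a mean fit as a stand-in for a root-type cost, and the residual sum of squares as a cost that does satisfy the inequality; function names and data are assumptions.

```python
import math


def sse(values):
    """Residual sum of squares of a mean fit."""
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values)


def rmse(values):
    """Root mean squared error of a mean fit (stand-in root-type cost)."""
    return math.sqrt(sse(values) / len(values))


def satisfies_split_inequality(cost, values, split, constant=0.0):
    """Check cost(left) + cost(right) + constant <= cost(merged)."""
    left, right = values[:split], values[split:]
    # A small tolerance absorbs floating-point rounding.
    return cost(left) + cost(right) + constant <= cost(values) + 1e-12


data = [0.0, 1.0, 0.0, 1.0]
sse_ok = satisfies_split_inequality(sse, data, split=2)
rmse_ok = satisfies_split_inequality(rmse, data, split=2)
```

Here the sum-of-squares cost passes the check, whereas the root-type cost fails it: each half has RMSE 0.5, and so does the merged segment, so splitting doubles the total cost.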

3.2. Additional Constraints

In many real-world applications, the analyst is not only interested in minimizing a fitting error, but also in enforcing interpretable and domain-driven properties on the resulting segmentation. Many changepoint frameworks encode these ideas via global penalization rather than hard constraints, using penalties on the number of changepoints (L0), total variation (L1), or graph-structured transitions that enforce sign alternation or monotone regimes across the entire sequence. These approaches provide a way to control global behaviors, such as roughness or regime structure, but they are usually implemented by dynamic programming or specialized algorithms, which limits the possibility of flexibly extending the model.

Constraints of this kind, which "operate over the whole segmentation", are discussed in the global constraint catalogues and in the changepoint detection literature (Beldiceanu, Régin; O’Hearn; Gionis; Killick; etc.), as well as in application papers spanning different areas, including bioinformatics, econometrics, and signal processing, among others.

Let $J$ be the index set of feasible segments, where each segment $j \in J$ is associated with its start and end indices $s_j$ and $e_j$, its length $\ell_j$, its slope $\beta_j$ (in the case of linear regression), its fitting cost $c_j$, and its endpoint values.

For the sake of our discussion, it is important to distinguish between two classes of constraints: those that affect the feasibility of individual segments independently of the remainder of the sequence, and those that impose conditions on the sequence as a whole. Constraints in the first class can be naturally incorporated into the segment generation process and are readily accommodated within dynamic programming frameworks. In contrast, constraints in the second class require the introduction of additional state variables to track global properties, thereby increasing the computational burden of dynamic programming approaches.
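As a hedged example of such an additional state variable, the sketch below extends a standard optimal-partitioning recursion with a segment counter, so that a bound on the number of segments can be enforced. The function name, the `cost(start, end)` callback with inclusive 0-based indices, and the toy data are assumptions; this is a generic recursion rather than a specific published algorithm.

```python
def constrained_segmentation(n, cost, max_segments, min_len=1):
    """Best partition of indices 0..n-1 into at most max_segments segments.

    best[k][t] is the minimal cost of splitting the first t points into
    exactly k segments; the index k is the added state variable.
    """
    inf = float("inf")
    best = [[inf] * (n + 1) for _ in range(max_segments + 1)]
    back = [[None] * (n + 1) for _ in range(max_segments + 1)]
    best[0][0] = 0.0
    for k in range(1, max_segments + 1):
        for t in range(1, n + 1):
            for s in range(0, t - min_len + 1):
                if best[k - 1][s] == inf:
                    continue
                candidate = best[k - 1][s] + cost(s, t - 1)
                if candidate < best[k][t]:
                    best[k][t] = candidate
                    back[k][t] = s
    k_best = min(range(1, max_segments + 1), key=lambda k: best[k][n])
    if best[k_best][n] == inf:
        raise ValueError("no feasible segmentation under these bounds")
    # Walk the back-pointers to recover the segments.
    segments, t, k = [], n, k_best
    while t > 0:
        s = back[k][t]
        segments.append((s, t - 1))
        t, k = s, k - 1
    return best[k_best][n], segments[::-1]


series = [1.0, 1.0, 1.0, 5.0, 5.0, 5.0, 9.0, 9.0, 9.0]


def sse(start, end):
    """SSE of a mean fit on series[start..end]."""
    window = series[start:end + 1]
    mean = sum(window) / len(window)
    return sum((v - mean) ** 2 for v in window)


total, segments = constrained_segmentation(len(series), sse, max_segments=3)
```

The counter multiplies the size of the state space by the segment bound, which illustrates why each further global property tracked in this way increases the cost of the dynamic program.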

3.2.1. Local Segment Constraints

Segment-level constraints restrict the admissible properties of each segment independently of the rest of the segmentation. These constraints can be implemented in the segment generation phase, do not require additions to formulations TSSP or TSSC, and are easily included in DP approaches. Typical segment-level constraints include, for instance, lower and upper bounds on the segment length.

3.2.2. Global Series Constraints

In contrast to segment-level constraints, global series constraints impose conditions that couple multiple segments and operate on the segmentation as a whole. Mixed-integer programming formulations can support several types of constraints belonging to this class, typically through linear inequalities linking multiple segment-selection variables.

A list of global constraints reported in the literature is presented below. We note that several of these constraint classes generate a number of constraints that grows superlinearly with the number of feasible segments (often quadratically in practice). Explicitly including all such constraints in the model is therefore impractical. Instead, we anticipate incorporating them via a cutting-plane strategy: constraints are added on demand as violated cuts because their separation can be performed efficiently for the constraint types considered.

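- Global bound on the number of segments. A global bound on the number of selected segments controls the overall model complexity. This constraint can be written as

$$\sum_{j \in J} x_j \le K,$$

where $x_j$ is the binary selection variable of segment $j$ and $K$ is the maximum number of segments allowed. Constrained dynamic programming algorithms under this setting are discussed in [22].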

- Global budget on segmentation cost. The total cost of the segmentation can be bounded:

$$\sum_{j \in J} c_j x_j \le B,$$

where $c_j$ denotes the cost of segment $j$, $x_j$ is its binary selection variable, and $B$ is the available budget. This constraint can be seen as the constrained version of the penalized segmentation model, which can then be relaxed in a Lagrangian fashion to obtain the penalized model itself [7]. A framework for selecting the number of changepoints via information criteria is also proposed in [23].

- Global total variation. A similar global constraint places a bound on the aggregate magnitude of all changes across the series. This constraint controls the overall amount of structural variation in the segmentation by limiting the cumulative magnitude of successive jumps between chosen segments. It is closely related to total variation regularization and to the lasso formulation of [24], where a penalty is applied to successive parameter differences.

- Global shape constraints (monotonicity, convexity, symmetry). These constraints impose structural properties on the segmentation as a whole, rather than on individual segments. For example, global monotonicity or convexity can be enforced by requiring the sequence of segment parameters to satisfy a prescribed ordering, such as ensuring a nondecreasing evolution of segment means. The formulation here is more complex, as it is necessary to identify successive segments in the model. To this end, let $A$ be the set of index pairs of successive, adjacent segments and let $z_{ij}$, $(i, j) \in A$, be binary decision variables equal to 1 if and only if the two adjacent segments of indices $i$ and $j$ are both in the solution. Then a monotonic nondecrease of means can be expressed as

$$(\mu_j - \mu_i)\, z_{ij} \ge 0, \qquad (i, j) \in A,$$

where the $\mu_j$ are the mean values of the segments indexed by $j \in J$. More generally, convexity or concavity can be imposed by constraining first or second differences of the segment parameters to follow a specified sign pattern.

A related formulation limits the number of monotone episodes across the segmentation. For example, one may allow at most a prescribed number of runs of consecutive nondecreasing (or nonincreasing) segments, thereby restricting the global alternation of trends.

Symmetry constraints also fall within this class. These may require changepoint locations to be symmetric with respect to the midpoint of the series, or impose mirrored jump magnitudes, as appropriate for symmetric processes.

- Global pattern constraints on change directions. These constraints require the sequence of segment transitions to follow a prescribed qualitative pattern. For example, the model may be forced to follow a structure such as increase–plateau–decrease, to alternate increases and decreases in a regular manner, or to follow a periodic regime sequence (e.g., stable–volatile–stable). Similarly, one may regulate the occurrence of peaks, where a peak is defined as an increase in segment means immediately followed by a decrease.

Such constraints may either enforce a specific pattern or restrict its complexity (e.g., by bounding the number of direction alternations) [25]. They can also be formulated negatively as forbidden-pattern constraints, excluding certain global configurations. Examples include limiting the total number of alternations to at most a prescribed value, or forbidding recurring structures such as repeated “short–long–short” segment sequences. These formulations amount to global pattern-avoidance constraints on the segmentation.

- Global structural break frameworks (econometrics). Econometric “structural break” models can be seen as global constraints, as they formalize requirements on the solution such as a maximum number of structural breaks, with simultaneous estimation of break locations [26, 27].

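- Equal or bounded segment sizes. These constraints require near-equal segment lengths or bound the length variability (e.g., the max/min segment length ratio), affecting the global partition shape. If $\delta$ is the maximum allowed difference among segment lengths ($\delta = 0$ in the case of equal segment lengths), the constraint is

$$|\ell_i - \ell_j|\,(x_i + x_j - 1) \le \delta, \qquad i, j \in J,$$

where $\ell_j$ denotes the length of segment $j$ and $x_j$ its binary selection variable. The acceptable length difference can be given as input, or it can be optimized itself.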
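Several of the constraint classes listed above couple pairs of segments, which is why their number can grow quadratically. The sketch below illustrates, under stated assumptions, how such pairwise constraints could be separated on demand: given a (possibly fractional) solution `x`, it returns the pairs whose conflict inequality $x_i + x_j \le 1$ is violated. The conflict-pair linearization, the dictionary layout of a segment, the two example rules (nondecreasing means between adjacent segments, bounded length difference), and all names are assumptions made for illustration; they are not taken from the formulation in the text.

```python
def seg_length(seg):
    """Number of points in a segment with inclusive end index."""
    return seg["end"] - seg["start"] + 1


def breaks_monotonicity(first, second):
    """True if second directly follows first and has a lower mean."""
    return second["start"] == first["end"] + 1 and second["mean"] < first["mean"]


def is_conflict(a, b, max_len_diff):
    """True if a and b cannot both be selected under the example rules."""
    unbalanced = abs(seg_length(a) - seg_length(b)) > max_len_diff
    return unbalanced or breaks_monotonicity(a, b) or breaks_monotonicity(b, a)


def separate_pair_cuts(segments, x, max_len_diff, tol=1e-6):
    """Return pairs (i, j), i < j, whose cut x_i + x_j <= 1 is violated."""
    cuts = []
    for i in range(len(segments)):
        for j in range(i + 1, len(segments)):
            if x[i] + x[j] <= 1 + tol:
                continue  # the cut cannot be violated by this point
            if is_conflict(segments[i], segments[j], max_len_diff):
                cuts.append((i, j))
    return cuts


# Toy candidate segments and a fractional solution (illustrative values).
segments = [
    {"start": 0, "end": 2, "mean": 5.0},
    {"start": 3, "end": 5, "mean": 1.0},
    {"start": 3, "end": 7, "mean": 6.0},
    {"start": 0, "end": 6, "mean": 3.0},
]
x = [1.0, 0.6, 0.4, 0.0]
cuts = separate_pair_cuts(segments, x, max_len_diff=3)
```

Only pairs with $x_i + x_j > 1$ need to be inspected, so the routine is quadratic in the number of segments with nonzero value rather than in the total number of candidate segments when zero entries are filtered beforehand.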

The above lists are not intended to be exhaustive of all possible requirements that may be imposed on time series segmentation problems; rather, they are restricted to constraints that can be incorporated within an extension of our current modeling framework. For example, a global constraint on the number of distinct regimes (commonly used in regime-switching models and implemented in systems such as SETAR [28]) would require an explicit regime identification mechanism, which is not presently supported by our framework.

Similarly, certain global structural constraints, such as net drift constraints that enforce the signed sum of jumps to equal a specified value (e.g., zero), thereby ensuring that the process begins and ends at comparable levels, are not directly accommodated. Ensuring feasibility under such requirements would necessitate a joint search over segmentations and model parameters. In particular, restricting the search to a set of a priori fitted segments would be insufficient; instead, parameter optimization would need to be performed within the segmentation procedure itself.

Finally, we point out that the explicit modelling of such constraints can, in most cases, also be achieved effectively using constraint programming techniques [29]. Constraint programming is deeply rooted in mathematical programming itself, and in recent years the two areas have arguably moved closer to one another. In particular, for global CPD constraints, one can exploit classical constraint programming constructs such as `regular`, which enforces that a sequence of decision variables is accepted by a finite automaton, or global constraints such as `nvalues` or `count`, which bound, respectively, the number of distinct values or the occurrences of specific values across a set of variables. Nevertheless, mixed-integer programming remains a more general and flexible modelling framework, capable of accommodating a broader range of constraints than current constraint-programming systems.
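To make the automaton-based view concrete, the sketch below encodes a hypothetical increase–plateau–decrease pattern as a small deterministic finite automaton and checks whether the sequence of mean changes of a given segmentation is accepted. It only verifies a completed segmentation, whereas a `regular` constraint in a constraint programming solver would enforce the same language during search. The symbol alphabet, the state names, and the functions are assumptions made for this example.

```python
def directions(means, tol=1e-9):
    """Map consecutive segment means to 'u' (up), 'd' (down), 'p' (plateau)."""
    symbols = []
    for prev, curr in zip(means, means[1:]):
        if curr > prev + tol:
            symbols.append("u")
        elif curr < prev - tol:
            symbols.append("d")
        else:
            symbols.append("p")
    return "".join(symbols)


# Hypothetical automaton: one or more 'u', then one or more 'p',
# then one or more 'd'. Missing transitions mean rejection.
TRANSITIONS = {
    ("start", "u"): "rising",
    ("rising", "u"): "rising",
    ("rising", "p"): "flat",
    ("flat", "p"): "flat",
    ("flat", "d"): "falling",
    ("falling", "d"): "falling",
}
ACCEPTING = {"falling"}


def accepts(word, transitions=TRANSITIONS, accepting=ACCEPTING, state="start"):
    """Run the automaton on word and report whether it ends in acceptance."""
    for symbol in word:
        state = transitions.get((state, symbol))
        if state is None:
            return False
    return state in accepting


pattern = directions([1.0, 2.0, 3.0, 3.0, 2.0])
is_valid = accepts(pattern)
```

Forbidden-pattern variants can be handled in the same way by building the automaton for the complement language.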