Submitted: 17 March 2026

Posted: 18 March 2026

Abstract

Keywords:

1. Introduction

2. Materials and Methods

2.1. Study Design and Ethical Considerations

2.2. Model Selection and Anonymization

- ChatGPT-5.2 (OpenAI)

- Claude Sonnet 4.6 (Anthropic)

- Gemini 3.0 Pro (Google DeepMind)

1) Widespread clinical and academic usage

2) Advanced multimodal reasoning capabilities

3) Public accessibility and reproducibility

4) Representation of distinct AI training paradigms

2.3. Case Vignette Development

- Demographic characteristics

- Presenting symptoms and physical examination findings

- Relevant laboratory results

- Imaging findings where appropriate

- Medication history

- Key clinical decision points

- Primary aldosteronism

- Pheochromocytoma/paraganglioma

- Atherosclerotic renal artery stenosis

- Fibromuscular dysplasia

- Primary hyperparathyroidism

- Obstructive sleep apnea

- Coarctation of the aorta

- Cushing’s syndrome

- Renal parenchymal disease

- Mixed or atypical presentations requiring complex differential diagnosis

2.4. Prompt Standardization and Model Querying

- Diagnosis and Differential Diagnosis

- Diagnostic Workup Strategy

- Acute and Long-Term Management Plan

- Follow-up and Monitoring Strategy

- Patient-Oriented Education and Counseling
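One way to keep the query identical across models is to generate it from a fixed template that requests the same five output sections. The sketch below is illustrative only: the function names `build_prompt` and `missing_sections`, the role sentence, and the instruction wording are hypothetical and are not the study's actual prompt.

```python
# Hypothetical sketch: the wording and names here are assumptions,
# not the prompt used in the study.
REQUIRED_SECTIONS = [
    "Diagnosis and Differential Diagnosis",
    "Diagnostic Workup Strategy",
    "Acute and Long-Term Management Plan",
    "Follow-up and Monitoring Strategy",
    "Patient-Oriented Education and Counseling",
]


def build_prompt(vignette: str) -> str:
    """Return one standardized prompt that asks for all five sections in order."""
    numbered = "\n".join(
        f"{i}. {name}" for i, name in enumerate(REQUIRED_SECTIONS, start=1)
    )
    return (
        "You are a specialist evaluating a patient with suspected "
        "secondary hypertension.\n"
        "Answer using exactly these numbered sections:\n"
        f"{numbered}\n\n"
        f"Case vignette:\n{vignette.strip()}"
    )


def missing_sections(response: str) -> list[str]:
    """List required section titles that do not appear in a model response."""
    lowered = response.lower()
    return [name for name in REQUIRED_SECTIONS if name.lower() not in lowered]
```

Sending the output of `build_prompt` unchanged to every model, and checking each reply with `missing_sections` before scoring, would keep the comparison limited to content and not prompt wording.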

2.5. Evaluation Framework and Scoring System

2.5.1. Evaluator Panel

- Two board-certified endocrinologists

- One board-certified cardiologist

2.5.2. Scoring Domains

2.5.3. Global Preference Assessment

2.6. Statistical Analysis

- <0.50 → Poor reliability

- 0.50–0.75 → Moderate reliability

- 0.75–0.90 → Good reliability

- >0.90 → Excellent reliability
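These cut-offs match the interpretation bands commonly applied to the intraclass correlation coefficient (ICC). The lines above do not name the statistic or its form, so the sketch below assumes ICC(2,1) (two-way random effects, absolute agreement, single rater) and assumes that the shared boundaries 0.75 and 0.90 fall in the "Good" band. The ratings matrix is illustrative and is not study data.

```python
def icc_2_1(ratings):
    """ICC(2,1) from a subjects-by-raters matrix (list of equal-length rows)."""
    n = len(ratings)        # number of rated subjects (rows)
    k = len(ratings[0])     # number of raters (columns)
    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]

    # Two-way ANOVA sums of squares without replication.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


def reliability_label(icc):
    """Map an ICC value to the four bands listed above (boundary handling assumed)."""
    if icc < 0.50:
        return "Poor"
    if icc < 0.75:
        return "Moderate"
    if icc <= 0.90:
        return "Good"
    return "Excellent"


# Illustrative 6-subject x 4-rater matrix (not study data).
toy_ratings = [
    [9, 2, 5, 8],
    [6, 1, 3, 2],
    [8, 4, 6, 8],
    [7, 1, 2, 6],
    [10, 5, 6, 9],
    [6, 2, 4, 7],
]
toy_icc = icc_2_1(toy_ratings)
print(round(toy_icc, 2), reliability_label(toy_icc))
```

In this design each row would presumably be one model response and each column one of the three evaluators; that mapping is also an assumption.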

3. Results

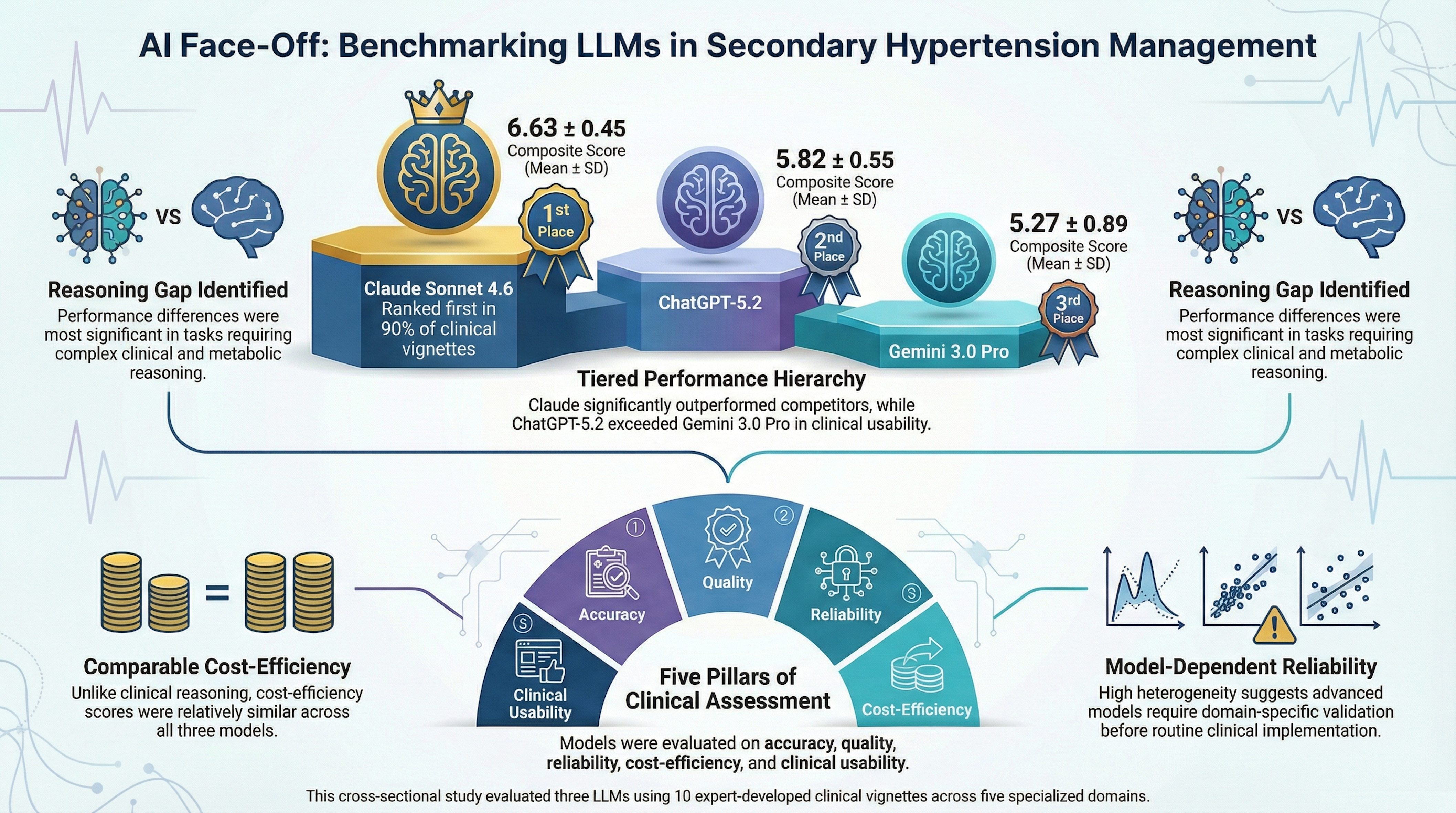

3.1. Overall Model Performance

- Claude Sonnet 4.6 significantly outperformed ChatGPT-5.2 (U = 785, p < 0.001)

- Claude Sonnet 4.6 significantly outperformed Gemini 3.0 Pro (U = 822, p < 0.001)

- ChatGPT-5.2 showed numerically higher scores than Gemini 3.0 Pro (U = 296, p = 0.023), though this difference did not remain significant after Bonferroni correction.

- Claude Sonnet 4.6 significantly outperformed Gemini 3.0 Pro (U = 623, p = 0.007)

- Claude Sonnet 4.6 and ChatGPT-5.2 did not differ significantly after Bonferroni correction (p = 0.032)

- ChatGPT-5.2 and Gemini 3.0 Pro showed no significant difference

- Claude Sonnet 4.6 vs Gemini 3.0 Pro: U = 798, p < 0.001

- Claude Sonnet 4.6 vs ChatGPT-5.2: U = 723, p < 0.001

- ChatGPT-5.2 vs Gemini 3.0 Pro: U = 272, p = 0.005
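The effect of the Bonferroni correction on the pairwise p-values reported above can be checked with a few lines of arithmetic. This is a minimal sketch that assumes each family contains three pairwise comparisons and a nominal α of 0.05; neither value is stated in the lines above. Values reported as "< 0.001" are entered as the upper bound 0.001.

```python
def bonferroni(p_values, alpha=0.05):
    """Return {comparison: (adjusted_p, significant)} for one family of tests."""
    m = len(p_values)  # number of comparisons in the family
    return {
        name: (min(1.0, p * m), p * m < alpha)
        for name, p in p_values.items()
    }


# First set of comparisons listed above; "< 0.001" entered as 0.001.
first_family = {
    "Claude Sonnet 4.6 vs ChatGPT-5.2": 0.001,
    "Claude Sonnet 4.6 vs Gemini 3.0 Pro": 0.001,
    "ChatGPT-5.2 vs Gemini 3.0 Pro": 0.023,
}

for name, (adjusted, significant) in bonferroni(first_family).items():
    print(f"{name}: adjusted p = {adjusted:.3f}, significant = {significant}")
```

Under these assumptions the corrected threshold is 0.05 / 3 ≈ 0.0167, so p = 0.023 and p = 0.032 fall short of significance while p = 0.007 and p = 0.005 remain significant.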

3.2. Inter-Rater Agreement

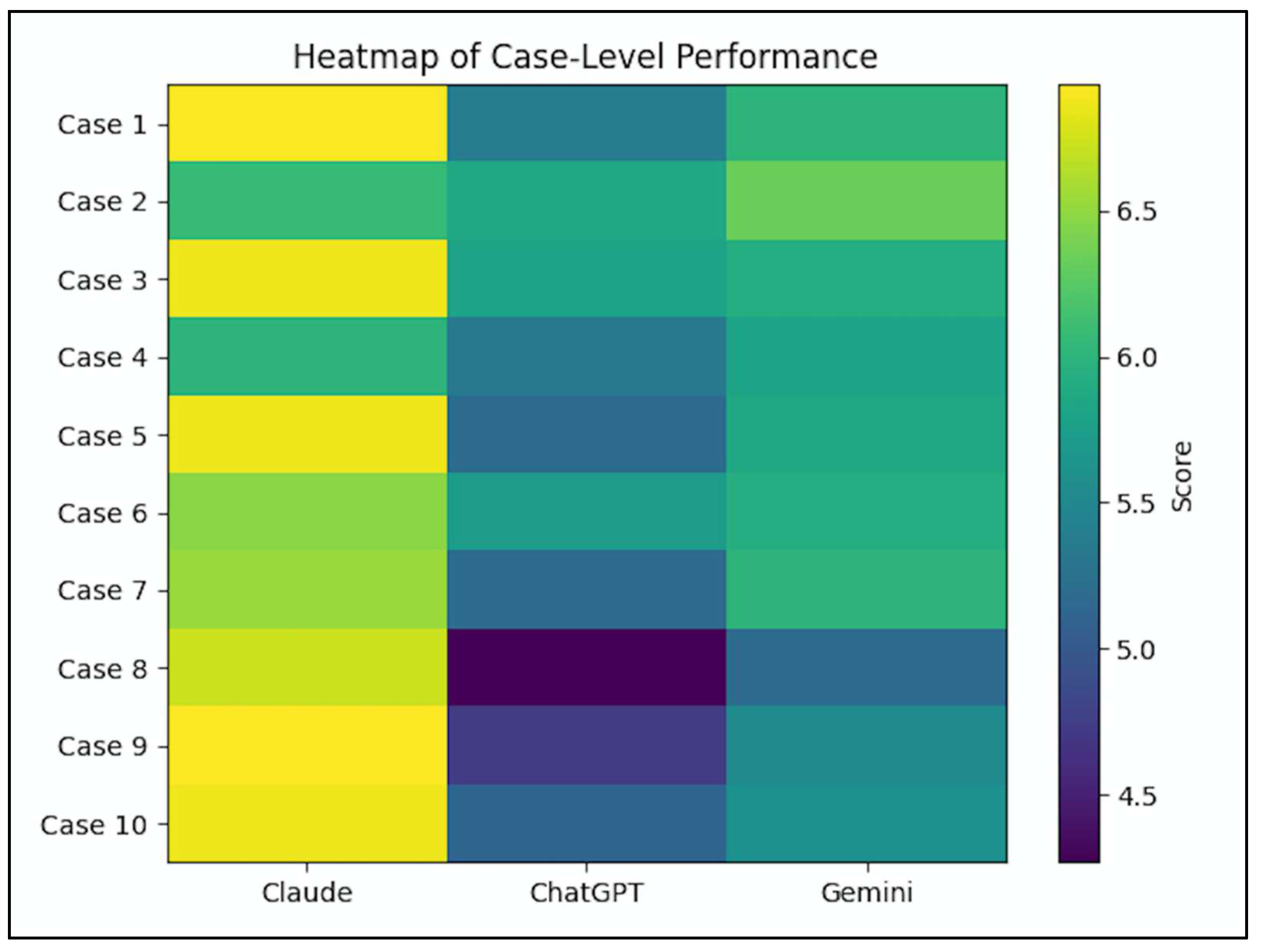

3.3. Case-Level Performance

| Case | Diagnosis | Claude Sonnet 4.6 | ChatGPT-5.2 | Gemini 3.0 Pro |
|---|---|---|---|---|
| 1 | Pheochromocytoma | 6.93 | 5.40 | 6.00 |
| 2 | Primary Hyperaldosteronism | 6.07 | 5.87 | 6.33 |
| 3 | Renal Artery Stenosis (Atherosclerotic) | 6.87 | 5.80 | 5.93 |
| 4 | Primary Hyperparathyroidism | 6.00 | 5.33 | 5.80 |
| 5 | White Coat / Early Essential Hypertension | 6.87 | 5.20 | 5.87 |
| 6 | Obstructive Sleep Apnea | 6.47 | 5.73 | 5.93 |
| 7 | Coarctation of the Aorta | 6.53 | 5.20 | 6.00 |
| 8 | Cushing Syndrome | 6.73 | 4.27 | 5.20 |
| 9 | Diabetic Nephropathy | 6.93 | 4.73 | 5.53 |
| 10 | Fibromuscular Dysplasia (Renal Artery) | 6.87 | 5.13 | 5.60 |

- Cushing’s syndrome (Case 8): Δ = 2.46 (Claude vs ChatGPT)

- Diabetic nephropathy (Case 9): Δ = 2.20 (Claude vs ChatGPT)

- Pheochromocytoma

- Renal artery stenosis

- White coat hypertension

- Fibromuscular dysplasia
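The per-case gaps quoted above follow directly from the case-level table. The sketch below recomputes the Claude Sonnet 4.6 minus ChatGPT-5.2 difference for every case and ranks the results; the scores are copied from the table, while the names `CASE_MEANS` and `ranked_gaps` are introduced here for illustration.

```python
# Case-level mean scores copied from the table above, in the order
# (Claude Sonnet 4.6, ChatGPT-5.2, Gemini 3.0 Pro).
CASE_MEANS = {
    "Pheochromocytoma": (6.93, 5.40, 6.00),
    "Primary Hyperaldosteronism": (6.07, 5.87, 6.33),
    "Renal Artery Stenosis (Atherosclerotic)": (6.87, 5.80, 5.93),
    "Primary Hyperparathyroidism": (6.00, 5.33, 5.80),
    "White Coat / Early Essential Hypertension": (6.87, 5.20, 5.87),
    "Obstructive Sleep Apnea": (6.47, 5.73, 5.93),
    "Coarctation of the Aorta": (6.53, 5.20, 6.00),
    "Cushing Syndrome": (6.73, 4.27, 5.20),
    "Diabetic Nephropathy": (6.93, 4.73, 5.53),
    "Fibromuscular Dysplasia (Renal Artery)": (6.87, 5.13, 5.60),
}


def ranked_gaps(first=0, second=1):
    """Score difference (column `first` minus column `second`), largest first."""
    gaps = [
        (round(scores[first] - scores[second], 2), diagnosis)
        for diagnosis, scores in CASE_MEANS.items()
    ]
    return sorted(gaps, reverse=True)


print(ranked_gaps()[:2])
# → [(2.46, 'Cushing Syndrome'), (2.2, 'Diabetic Nephropathy')]
```

Calling `ranked_gaps(0, 2)` gives the same ranking for Claude Sonnet 4.6 against Gemini 3.0 Pro.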

4. Discussion

Clinical Implications

5. Conclusions

- This blinded comparative study evaluated three large language models (LLMs) using clinically realistic secondary hypertension scenarios and expert-guided scoring across multiple domains of clinical reasoning.

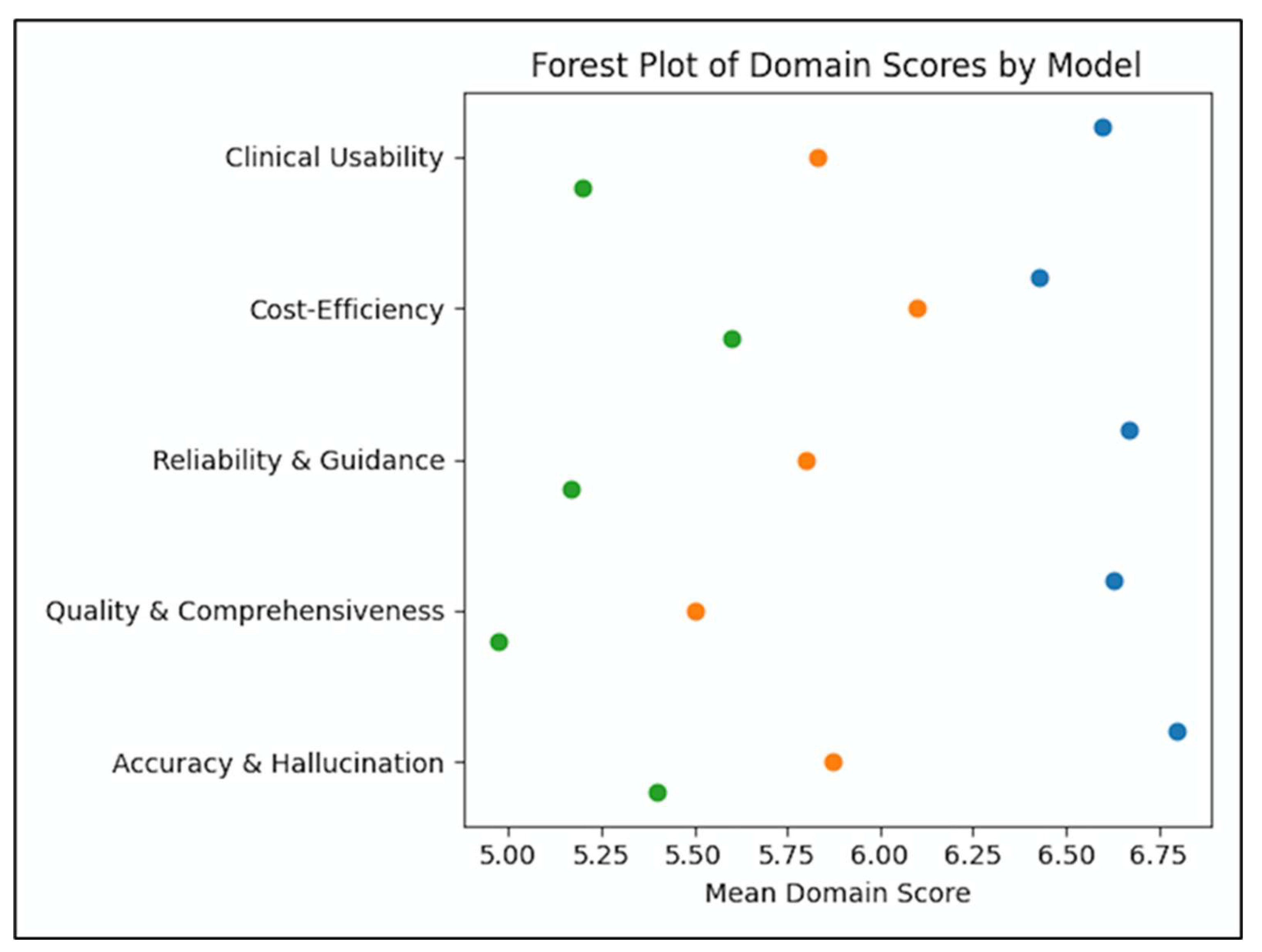

- Significant performance variability exists among LLMs, with Claude Sonnet 4.6 demonstrating superior diagnostic accuracy, reliability, clinical usability, and overall reasoning quality.

- ChatGPT-5.2 showed intermediate performance with relatively strong clinical guidance, while Gemini 3.0 Pro exhibited greater variability, particularly in complex endocrine–metabolic scenarios.

- Cost-efficiency scores were more comparable across models, suggesting that guideline-consistent test prioritization may be more uniformly learned than advanced diagnostic reasoning.

- LLMs demonstrated strong capability in patient-oriented communication, supporting their potential role in patient education and shared decision-making.

- Despite improving performance, hallucinations and variability in multistep reasoning remain key barriers to autonomous clinical use, reinforcing the need for clinician oversight.

- This study introduces a structured, guideline-aligned benchmarking framework for evaluating LLM performance in complex endocrine disorders.

- Large language models are emerging as promising decision-support tools in complex hypertension management but currently demonstrate substantial variability in clinical reasoning performance.

- Advanced LLMs can approximate structured specialist thinking when guided by role-specific prompts and standardized output frameworks.

- Diagnostic safety remains a concern, particularly in multistep endocrine reasoning where hallucinations and incomplete risk assessment may occur.

- Patient education represents one of the most immediate and lower-risk applications of LLMs in endocrine care.

- Artificial intelligence should currently augment—not replace—clinician expertise in secondary hypertension management.

- Future progress depends on domain-specific training, guideline-informed reinforcement learning, and prospective clinical validation.

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Rimoldi, S.F.; Scherrer, U.; Messerli, F.H. Secondary arterial hypertension: when, who, and how to screen? Eur Heart J. 2014, 35, 1245–1254.

- Carey, R.M.; Calhoun, D.A.; Bakris, G.L.; Brook, R.D.; Daugherty, S.L.; Dennison-Himmelfarb, C.R.; et al. Resistant Hypertension: Detection, Evaluation, and Management: A Scientific Statement From the American Heart Association. Hypertension 2018, 72, e53–e90.

- Funder, J.W.; Carey, R.M.; Mantero, F.; Murad, M.H.; Reincke, M.; Shibata, H.; et al. The Management of Primary Aldosteronism: Case Detection, Diagnosis, and Treatment: An Endocrine Society Clinical Practice Guideline. J Clin Endocrinol Metab. 2016, 101, 1889–1916.

- Williams, B.; Mancia, G.; Spiering, W.; Agabiti Rosei, E.; Azizi, M.; Burnier, M.; et al. 2018 ESC/ESH Guidelines for the management of arterial hypertension. Eur Heart J. 2018, 39, 3021–3104.

- Whelton, P.K.; Carey, R.M.; Aronow, W.S.; Casey, D.E.; Collins, K.J.; Dennison Himmelfarb, C.; et al. 2017 ACC/AHA/AAPA/ABC/ACPM/AGS/APhA/ASH/ASPC/NMA/PCNA Guideline for the Prevention, Detection, Evaluation, and Management of High Blood Pressure in Adults: A Report of the American College of Cardiology/American Heart Association Task Force on Clinical Practice Guidelines. Hypertension 2018, 71, e13–e115.

- Maity, S.; Saikia, M.J. Large Language Models in Healthcare and Medical Applications: A Review. Bioengineering (Basel) 2025, 12, 6.

- Su, H.; Sun, Y.; Li, R.; Zhang, A.; Yang, Y.; Xiao, F.; et al. Large Language Models in Medical Diagnostics: Scoping Review With Bibliometric Analysis. J Med Internet Res. 2025, 27, e72062.

- Chen, P.; Li, Y.; Zhang, X.; Feng, X.; Sun, X. The acceptability and effectiveness of artificial intelligence-based chatbot for hypertensive patients in community: protocol for a mixed-methods study. BMC Public Health 2024, 24, 2266.

- Dinc, M.T.; Bardak, A.E.; Bahar, F.; Noronha, C. Comparative analysis of large language models in clinical diagnosis: performance evaluation across common and complex medical cases. JAMIA Open. 2025, 8, ooaf055.

- Pagano, S.; Strumolo, L.; Michalk, K.; Schiegl, J.; Pulido, L.C.; Reinhard, J.; et al. Evaluating ChatGPT, Gemini and other Large Language Models (LLMs) in orthopaedic diagnostics: A prospective clinical study. Comput Struct Biotechnol J. 2025, 28, 9–15.

- Madfa, A.A.; Alshammari, A.F.; Anazi, B.A.; Alenezi, Y.E.; Alkurdi, K.A. Accuracy and reliability of Manus, ChatGPT, and Claude in case-based dental diagnosis. Front Oral Health 2025, 6, 1686090.

- Wojcik, D.; Adamiak, O.; Czerepak, G.; Tokarczuk, O.; Szalewski, L. A bi-linguistic comparative analysis of ChatGPT-4, Gemini, and Claude performance on Polish medical-dental final examinations. Sci Rep. 2025, 15, 33083.

- Idan, D.; Ben-Shitrit, I.; Volevich, M.; Binyamin, Y.; Nassar, R.; Nassar, M.; et al. Evaluating the performance of large language models versus human researchers on real world complex medical queries. Sci Rep. 2025, 15, 37824.

- Tukur Jido, J.; Al-Wizni, A.; Aung, S.L. Readability of AI-Generated Patient Information Leaflets on Alzheimer’s, Vascular Dementia, and Delirium. Cureus 2025, 17, e85463.

- Wu, L.; Huang, L.; Li, M.; Xiong, Z.; Liu, D.; Liu, Y.; et al. Differential diagnosis of secondary hypertension based on deep learning. Artif Intell Med. 2023, 141, 102554.

- Singhal, K.; Azizi, S.; Tu, T.; Mahdavi, S.S.; Wei, J.; Chung, H.W.; et al. Large language models encode clinical knowledge. Nature 2023, 620, 172–180.

- Guvel, M.C.; Kiyak, Y.S.; Varan, H.D.; Sezenoz, B.; Coskun, O.; Uluoglu, C. Generative AI vs. human expertise: a comparative analysis of case-based rational pharmacotherapy question generation. Eur J Clin Pharmacol. 2025, 81, 875–883.

- Shah, S.V. Accuracy, Consistency, and Hallucination of Large Language Models When Analyzing Unstructured Clinical Notes in Electronic Medical Records. JAMA Netw Open. 2024, 7, e2425953.

- Wang, D.; Ye, J.; Li, J.; Liang, J.; Zhang, Q.; Hu, Q.; et al. Enhancing Large Language Models for Improved Accuracy and Safety in Medical Question Answering: Comparative Study. JMIR Med Educ. 2025, 11, e70190.

- Jeblick, K.; Schachtner, B.; Dexl, J.; Mittermeier, A.; Stuber, A.T.; Topalis, J.; et al. ChatGPT makes medicine easy to swallow: an exploratory case study on simplified radiology reports. Eur Radiol. 2024, 34, 2817–2825.

- Bas Aksu, O.; Aydin, R.F.; Gokcay Canpolat, A.; Demir, O.; Sahin, M.; Emral, R.; et al. Artificial intelligence in endocrine practice: comparing ChatGPT, Gemini, and Claude for adrenal incidentaloma care. J Endocrinol Invest. 2026, 49, 69–79.

- Takita, H.; Kabata, D.; Walston, S.L.; Tatekawa, H.; Saito, K.; Tsujimoto, Y.; et al. A systematic review and meta-analysis of diagnostic performance comparison between generative AI and physicians. NPJ Digit Med. 2025, 8, 175.

- Almagazzachi, A.; Mustafa, A.; Eighaei Sedeh, A.; Vazquez Gonzalez, A.E.; Polianovskaia, A.; Abood, M.; et al. Generative Artificial Intelligence in Patient Education: ChatGPT Takes on Hypertension Questions. Cureus 2024, 16, e53441.

- Liu, D.; Long, Y.; Zuoqiu, S.; Liu, D.; Li, K.; Lin, Y.; et al. Reliability of Large Language Model Generated Clinical Reasoning in Assisted Reproductive Technology: Blinded Comparative Evaluation Study. J Med Internet Res. 2026, 28, e85206.

- Asgari, E.; Montana-Brown, N.; Dubois, M.; Khalil, S.; Balloch, J.; Yeung, J.A.; et al. A framework to assess clinical safety and hallucination rates of LLMs for medical text summarisation. NPJ Digit Med. 2025, 8, 274.

- Yu, E.; Chu, X.; Zhang, W.; Meng, X.; Yang, Y.; Ji, X.; et al. Large Language Models in Medicine: Applications, Challenges, and Future Directions. Int J Med Sci. 2025, 22, 2792–2801.

- Qiu, P.; Wu, C.; Liu, S.; Fan, Y.; Zhao, W.; Chen, Z.; et al. Quantifying the reasoning abilities of LLMs on clinical cases. Nat Commun. 2025, 16, 9799.

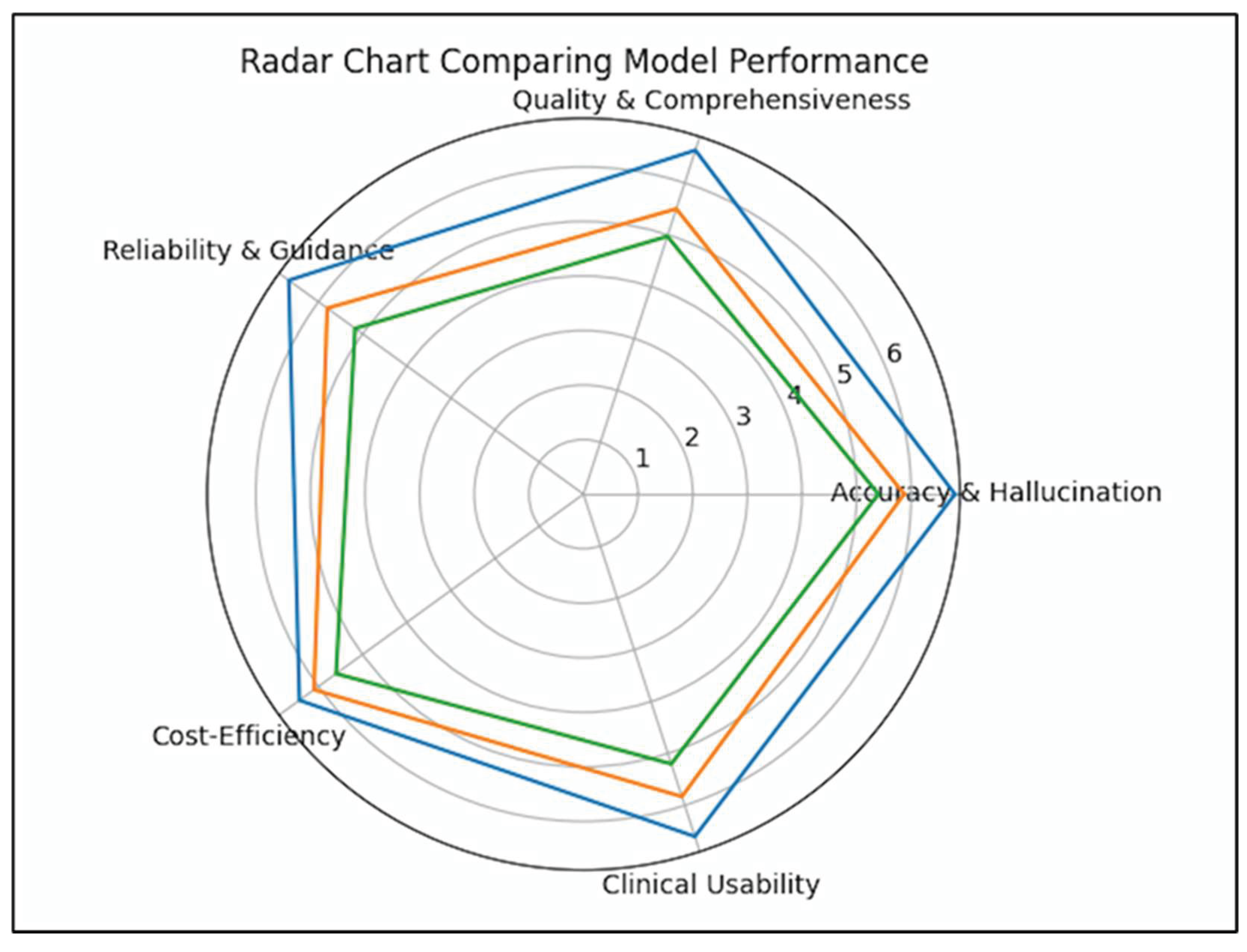

| Criterion | Claude Sonnet 4.6 | ChatGPT-5.2 | Gemini 3.0 Pro | p (ANOVA) |
|---|---|---|---|---|
| Accuracy & Hallucination Control | 6.80 ± 0.41 | 5.87 ± 0.57 | 5.40 ± 0.93 | <0.001 |
| Quality & Comprehensiveness | 6.63 ± 0.56 | 5.50 ± 0.86 | 4.97 ± 1.00 | <0.001 |
| Reliability & Clinical Guidance | 6.67 ± 0.48 | 5.80 ± 0.66 | 5.17 ± 0.91 | <0.001 |
| Cost-Efficiency | 6.43 ± 0.82 | 6.10 ± 0.66 | 5.60 ± 1.22 | 0.003 |
| Clinical Usability | 6.60 ± 0.50 | 5.83 ± 0.59 | 5.20 ± 0.93 | <0.001 |
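A one-way ANOVA p-value can be approximately recovered from the summary statistics in this table. The sketch below makes several assumptions that the table does not state: the "±" values are standard deviations, each model contributes 30 ratings per criterion (10 cases × 3 evaluators, each treated as an independent observation), and group sizes are equal. The closed-form tail probability used here holds only when the numerator degrees of freedom equal 2, that is, for exactly three groups.

```python
def anova_from_summary(means, sds, n):
    """One-way ANOVA F statistic from group means and SDs, with equal group size n."""
    k = len(means)
    grand_mean = sum(means) / k            # valid because group sizes are equal
    ms_between = n * sum((m - grand_mean) ** 2 for m in means) / (k - 1)
    ms_within = sum(sd ** 2 for sd in sds) / k   # pooled variance for equal n
    df_within = k * (n - 1)
    return ms_between / ms_within, df_within


def f_upper_tail_df1_2(f_stat, df_within):
    """P(F > f_stat) for an F(2, df_within) distribution (three groups only)."""
    return (1 + 2 * f_stat / df_within) ** (-df_within / 2)


# Cost-Efficiency row; n = 30 per model is an assumption, not a reported value.
f_stat, df_within = anova_from_summary(
    means=[6.43, 6.10, 5.60], sds=[0.82, 0.66, 1.22], n=30
)
p_value = f_upper_tail_df1_2(f_stat, df_within)
print(f"F(2, {df_within}) = {f_stat:.2f}, p = {p_value:.4f}")
```

Under these assumptions the Cost-Efficiency row gives an F statistic of about 6.05 and a p-value of about 0.0035, which agrees with the tabulated 0.003 to within rounding of the reported means and SDs.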

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).