1. Introduction

The explosive growth of e-commerce has placed unprecedented pressure on warehouse fulfillment operations, driving the widespread deployment of autonomous robot fleets [19, 20]. Modern distribution centers increasingly rely on heterogeneous fleets comprising Automated Guided Vehicles (AGVs), Autonomous Mobile Robots (AMRs), and specialized forklift units, each offering distinct trade-offs among speed, payload capacity, and energy efficiency [2]. Orchestrating such diverse fleets to execute hundreds of pickup-delivery tasks under tight time-window constraints, while respecting each robot’s physical limitations, remains a central challenge in warehouse automation [1, 3].

Formally, this Multi-Robot Task Allocation (MRTA) problem generalizes the Heterogeneous Fleet Vehicle Routing Problem with Time Windows (HF-VRPTW) [

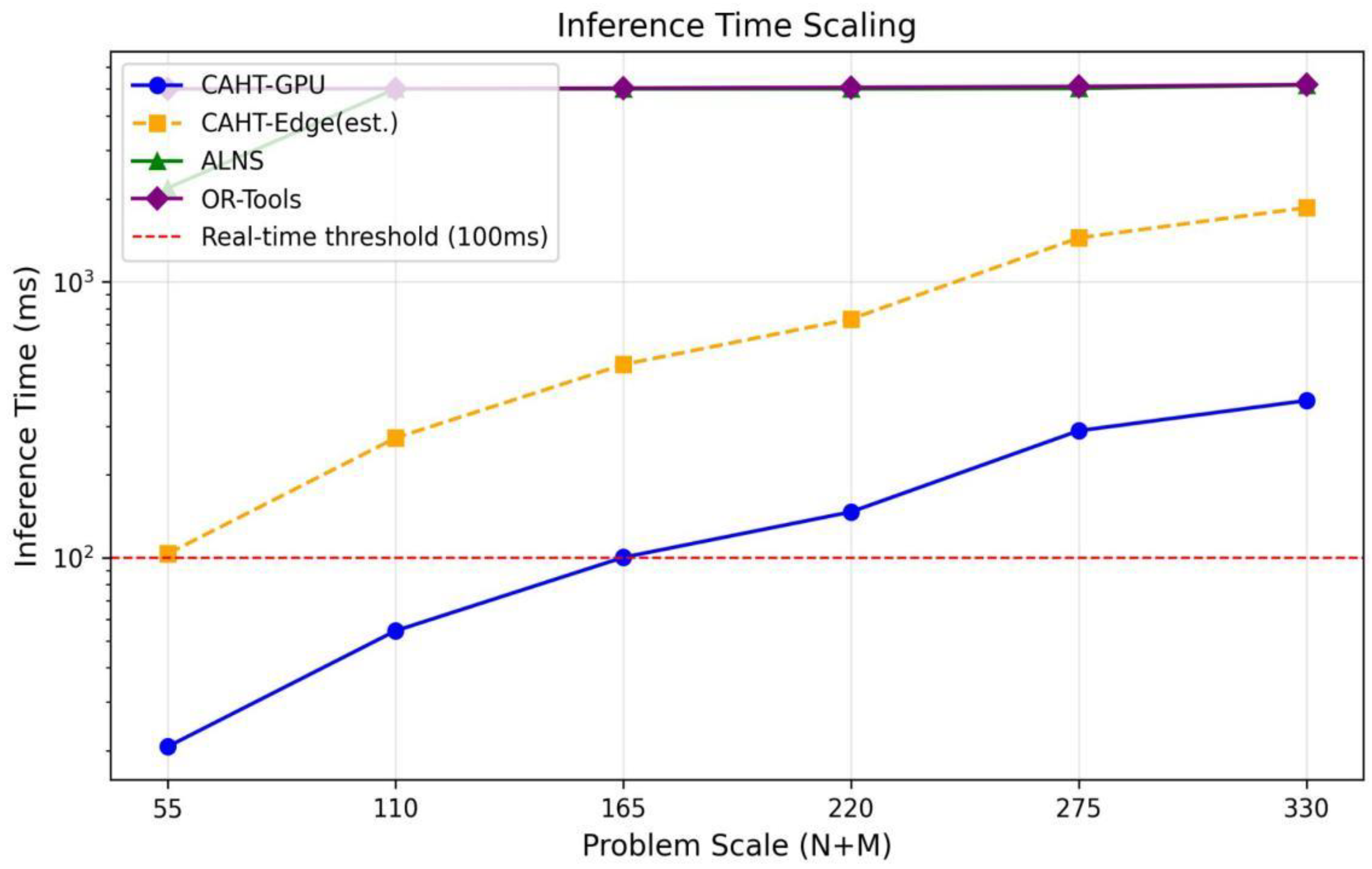

5], which is NP-hard. Existing solution approaches present a fundamental and unsatisfactory trade-off. Metaheuristic solvers such as Adaptive Large Neighborhood Search (ALNS) [

13,

14] deliver high-quality solutions but require seconds to minutes of computation, precluding deployment in real-time re-allocation cycles. Simple heuristics (e.g., nearest-first assignment) offer sub-millisecond response but sacrifice 20–30% in solution quality. The emerging field of Neural Combinatorial Optimization (NCO) [

16,

17] promises to bridge this gap by learning to produce near-optimal solutions in a single forward pass.

However, existing NCO architectures, including the Attention Model, POMO [12], and recent generalizable solvers [9, 10], are fundamentally ill-equipped for heterogeneous warehouse MRTA because they lack: (a) mechanisms for modeling the distinct interaction semantics among heterogeneous robot types and diverse tasks; (b) architectural enforcement of hard constraints such as capacity limits, energy budgets, and zone accessibility [18]; and (c) native support for the joint assignment-and-sequencing structure inherent in multi-robot allocation [4]. Our experiments confirm this diagnosis: POMO, despite retraining on warehouse MRTA data, produces solutions with constraint violation rates exceeding 98%, rendering its outputs effectively unusable.

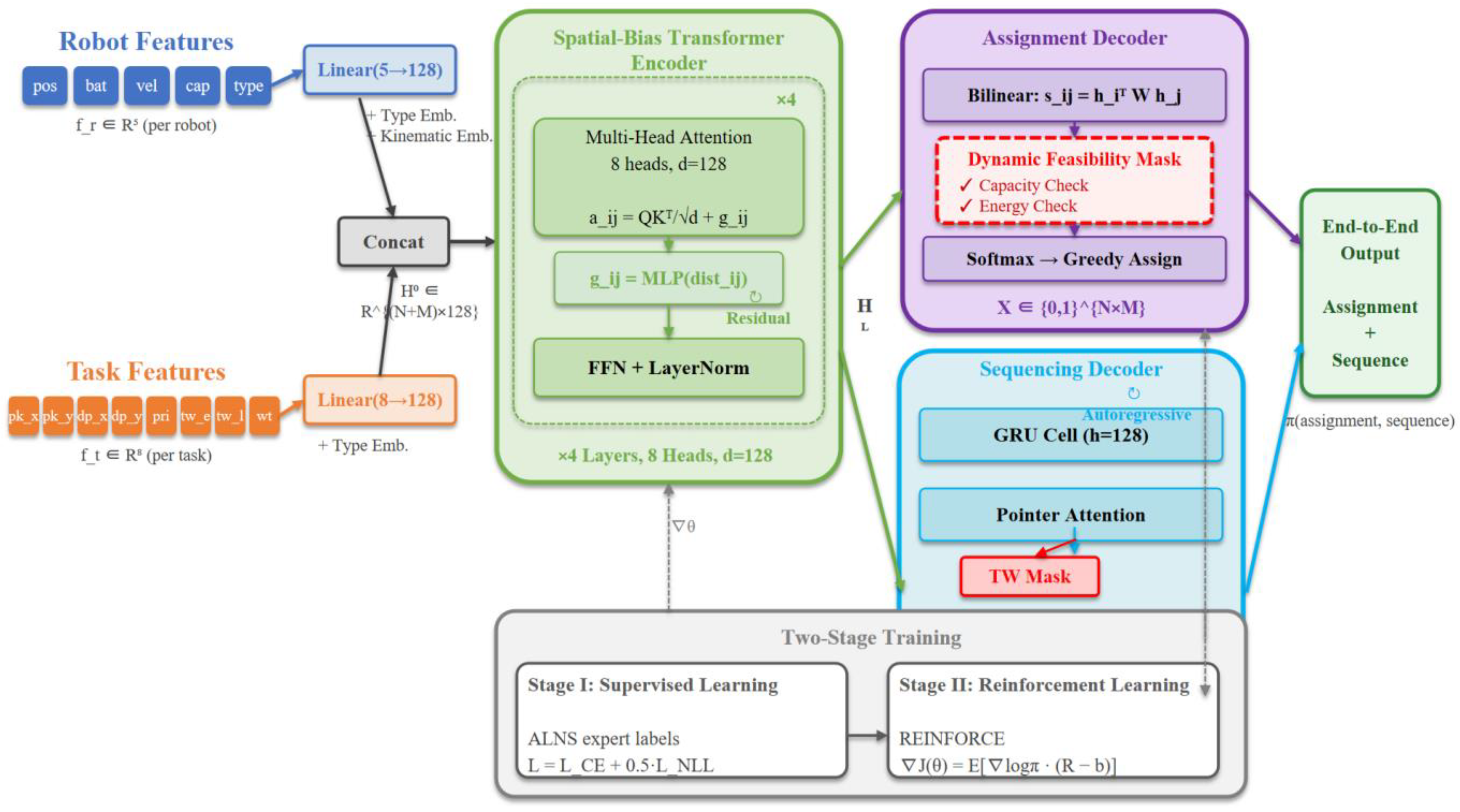

This paper bridges this gap by proposing the Constraint-Aware Heterogeneous Transformer (CAHT), a lightweight neural architecture that addresses each of the above limitations through three targeted contributions:

(1) Dynamic feasibility masking (addressing limitation b). Hard constraint enforcement is embedded directly into the assignment decoder’s probability computation by setting infeasible robot–task scores to negative infinity before softmax normalization. This architectural mechanism reduces constraint violations by over 75 percentage points and improves objective values by 213% compared to unconstrained decoding, validating that constraint satisfaction in heterogeneous MRTA cannot be learned from data alone but must be structurally enforced [18].

(2) Spatial-bias Transformer encoding for heterogeneous entities (addressing limitation a). The standard self-attention mechanism is augmented with a learned spatial proximity bias, enabling distance-dependent robot–task interaction modeling. Combined with type-specific input embeddings that distinguish robot categories, this design supports effective representation learning across heterogeneous entity types without requiring explicit graph construction.

(3) End-to-end assignment and sequencing (addressing limitation c). CAHT jointly produces task-to-robot assignments via a bilinear attention decoder and per-robot task execution orders via a GRU-based autoregressive decoder, eliminating the need for separate optimization stages.
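To make the masking idea in contribution (1) concrete, the following is a minimal, illustrative sketch in plain Python rather than the CAHT implementation. It assumes a single task scored against three robots. The helper names (`feasibility_mask`, `masked_softmax`), the dictionary fields, and the numeric values are hypothetical and chosen only for illustration. The constraint checks mirror the capacity, energy, and zone-accessibility constraints named above.

```python
import math

NEG_INF = float("-inf")


def feasibility_mask(task, robots):
    """Hypothetical hard-constraint check: capacity, energy budget, zone access."""
    return [
        task["load"] <= r["capacity_left"]
        and task["energy"] <= r["energy_left"]
        and task["zone"] in r["zones"]
        for r in robots
    ]


def masked_softmax(scores, feasible):
    """Softmax over robots for one task; infeasible robots get probability 0."""
    # Infeasible robot-task scores are set to -inf before normalization.
    masked = [s if ok else NEG_INF for s, ok in zip(scores, feasible)]
    peak = max(masked)
    if peak == NEG_INF:
        raise ValueError("no feasible robot for this task")
    # Subtracting the peak keeps exp() stable; exp(-inf) evaluates to 0.0.
    exps = [math.exp(m - peak) for m in masked]
    total = sum(exps)
    return [e / total for e in exps]


# Illustrative fleet state (all values are assumptions for this sketch).
robots = [
    {"capacity_left": 50.0, "energy_left": 10.0, "zones": {"A", "B"}},
    {"capacity_left": 5.0, "energy_left": 10.0, "zones": {"A"}},   # capacity too low
    {"capacity_left": 80.0, "energy_left": 10.0, "zones": {"B"}},  # no access to zone A
]
task = {"load": 20.0, "energy": 3.0, "zone": "A"}
scores = [0.2, 1.5, 0.9]  # raw decoder scores, one per robot

mask = feasibility_mask(task, robots)
probs = masked_softmax(scores, mask)
print(mask, probs)
```

In this toy instance the second robot has the highest raw score but cannot carry the load, so it receives zero probability. The decoder can therefore never select it, regardless of what the scores have learned.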

Extensive experiments on a synthetic benchmark with ALNS-generated training labels demonstrate that CAHT achieves objective values within 7–13% of ALNS while being 29–91× faster, with strong generalization to unseen problem scales. The model contains only 0.82M parameters, positioning it as a practical candidate for edge-deployed real-time warehouse automation.