Submitted: 16 March 2026

Posted: 17 March 2026

Abstract

Keywords:

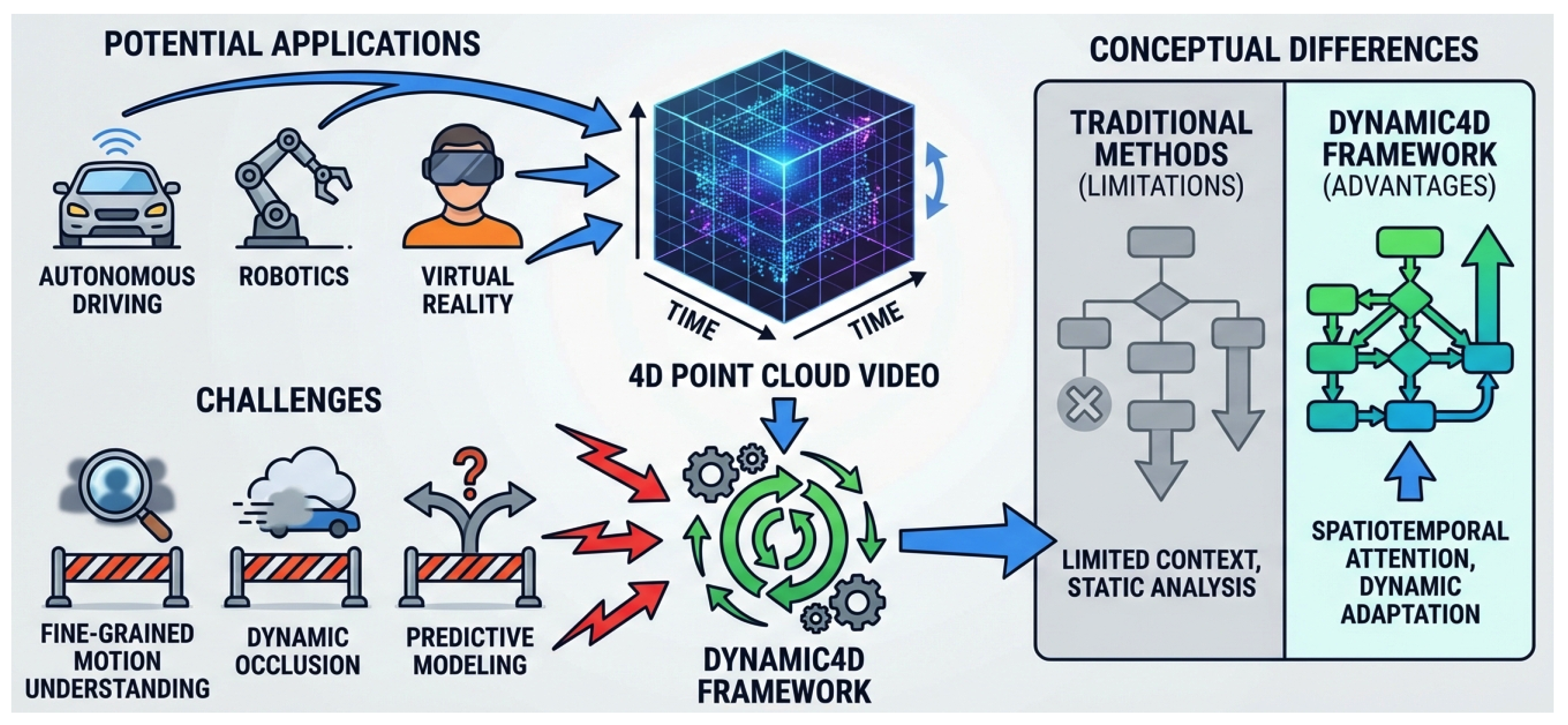

1. Introduction

1. Complex Interaction and Subtle Action Recognition: Existing methods often struggle to model subtle and rapid interactions between objects or between humans and their environments, and to capture long-range temporal dependencies effectively. Such challenges are increasingly being addressed by advanced models that bridge vision, language, and action to enable a more holistic understanding of dynamic scenarios [4].

2. Dynamic Occlusion and Sparse Point Clouds: In real-world scenarios, dynamic objects are frequently occluded or observed only as sparse point clouds due to sensor limitations. This impairs the model’s ability to perceive complete motion trajectories and extract robust features. Novel methods for point cloud completion, utilizing advanced distance metrics, help mitigate the effects of sparsity [5].

3. Predictive Modeling: The capability to predict future frames or subsequent motions is a crucial indicator of deep dynamic scene understanding. However, current self-supervised frameworks have largely underemphasized direct modeling for such predictive tasks. The development of sophisticated spatial-temporal models is vital for improving prediction capabilities in complex robotic manipulation tasks [6].

- We propose Dynamic4D, a novel self-supervised framework that significantly enhances the understanding of fine-grained motion and long-range temporal dependencies in 4D point cloud videos.

- We introduce two architectural innovations: the Adaptive Causal Temporal Attention (ACTA) mechanism for improved temporal modeling and Motion Prediction Tokens (MPT) coupled with a Dynamic Perception Loss, enabling direct motion inference in occluded regions.

- In our experiments, Dynamic4D outperforms prior self-supervised pre-training methods (MaST-Pre, VideoMAE, Uni4D) on action recognition, gesture recognition, and action segmentation benchmarks, and it shows better robustness to degraded inputs as well as better data efficiency in semi-supervised and few-shot settings.

2. Related Work

2.1. Self-Supervised Learning for 3D and 4D Point Clouds

2.2. Dynamic Modeling and Motion Prediction in Point Clouds

3. Method

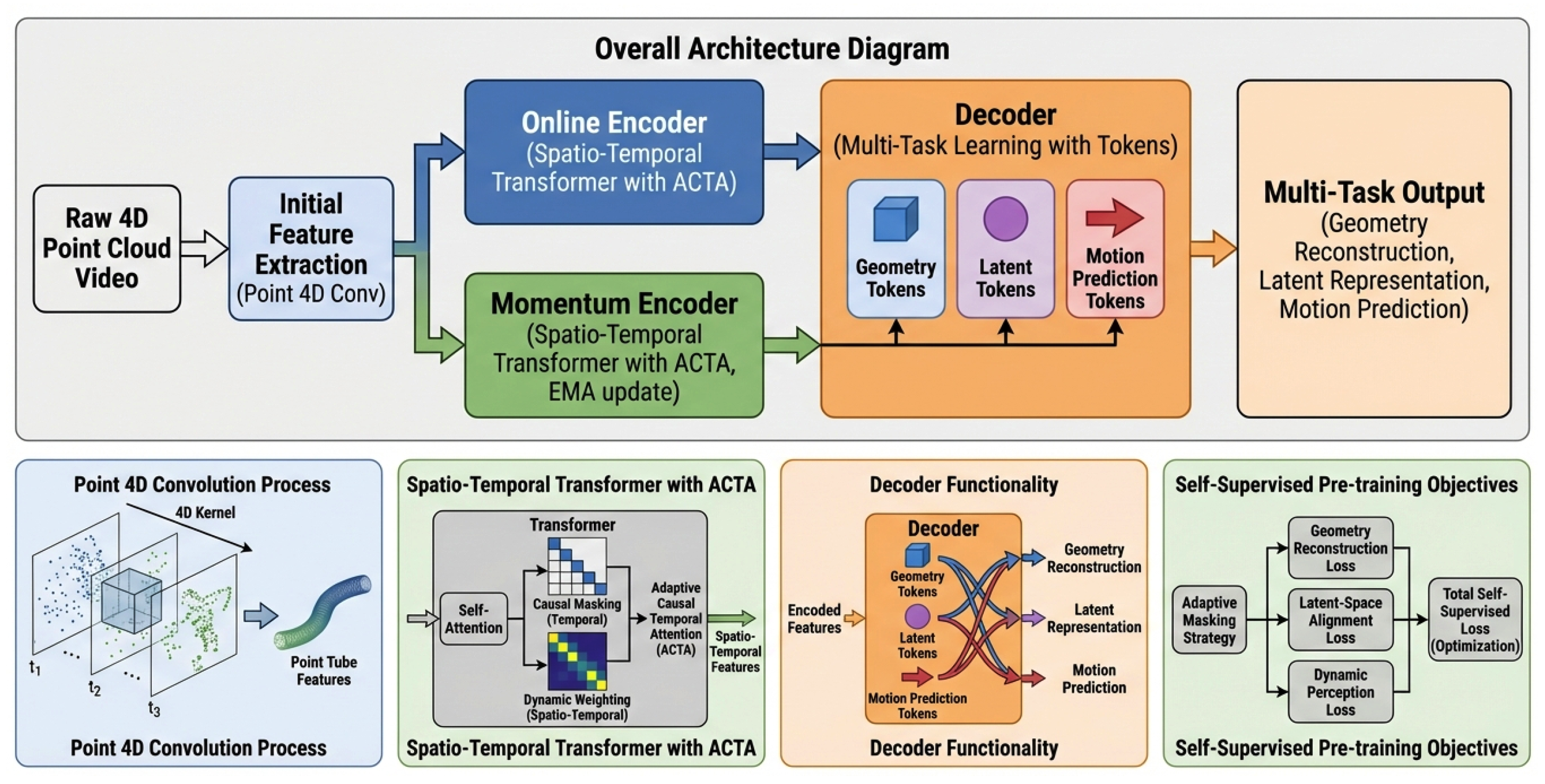

3.1. Overall Architecture

3.2. Initial Feature Extraction: Point 4D Convolution

3.3. Spatio-Temporal Encoder with Adaptive Causal Temporal Attention

1. Causal Masking: A causal mask is applied within the temporal attention module. It ensures that the representation of a point at time step t can attend only to information from previous time steps, preventing information leakage from future frames. This encourages the model to learn predictive capabilities from historical data, which is vital for anticipating future motion.

2. Dynamic Weighting: A dynamic weight matrix is integrated into the attention calculation. It adaptively adjusts temporal attention weights based on the local motion intensity of the point cloud. Points in high-dynamic regions (e.g., fast-moving objects or complex interactions) receive higher attention weights, so the model focuses on the most informative and challenging areas of motion. Specifically, this weight matrix can be learned via a small sub-network that takes local motion features (e.g., derived from sparse optical flow or point displacements) as input and maps them to attention biases.
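The two ACTA components described above can be illustrated with a small NumPy sketch. It is not the authors' implementation: it uses a single head and one tracked point, lets a time step attend to itself and to earlier steps (the exact mask boundary is an assumption), and replaces the learned sub-network with a fixed linear scaling of point displacement. The names `motion_bias_from_track`, `causal_temporal_attention`, `key_bias`, and `scale` are hypothetical.

```python
import numpy as np


def motion_bias_from_track(track, scale=1.0):
    """Map per-step displacement of one tracked point to an additive attention bias.

    track: (T, 3) positions over T frames. A fixed linear scaling stands in
    for the learned sub-network (an assumption, not the paper's design).
    """
    bias = np.zeros(track.shape[0])
    bias[1:] = scale * np.linalg.norm(track[1:] - track[:-1], axis=1)
    return bias


def causal_temporal_attention(q, k, v, key_bias=None):
    """Single-head attention over T time steps with a causal mask.

    q, k, v: (T, d) arrays. key_bias: optional (T,) additive bias per key step.
    Returns the attended features (T, d) and the attention weights (T, T).
    """
    T, d = q.shape
    logits = q @ k.T / np.sqrt(d)                       # (T, T) query-by-key scores
    if key_bias is not None:
        logits = logits + key_bias[None, :]             # favor high-motion key steps
    future = np.triu(np.ones((T, T), dtype=bool), k=1)  # True where key is later than query
    logits = np.where(future, -np.inf, logits)          # block attention to the future
    logits = logits - logits.max(axis=1, keepdims=True) # numerical stability
    weights = np.exp(logits)
    weights /= weights.sum(axis=1, keepdims=True)
    return weights @ v, weights


rng = np.random.default_rng(0)
T, d = 6, 8
feats = rng.normal(size=(T, d))
track = np.cumsum(rng.normal(scale=0.1, size=(T, 3)), axis=0)
out, attn = causal_temporal_attention(feats, feats, feats, motion_bias_from_track(track))
print(np.round(attn, 2))  # upper triangle is zero: no weight on future steps
```

Adding the bias to the logits before the softmax keeps each row a valid probability distribution while shifting weight toward high-motion steps; a multiplicative weighting would be another plausible reading of the description.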

3.4. Lightweight Decoder with Motion Prediction Tokens

3.5. Self-Supervised Pre-training Objectives

3.5.1. Adaptive Masking Strategy
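Section 4.1.2 describes this strategy as masking high-motion regions with higher probability than static ones, at a typical masking ratio of 75%. The sketch below shows one way such sampling could look. It assumes tube centers in two adjacent frames are already in row-wise correspondence and uses a softmax over normalized displacement as the sampling distribution; the function name and the `temperature` parameter are hypothetical and not taken from the paper.

```python
import numpy as np


def motion_sensitive_mask(centers_t, centers_next, mask_ratio=0.75,
                          temperature=1.0, rng=None):
    """Choose tubes to mask, favoring tubes whose centers move the most.

    centers_t, centers_next: (N, 3) tube centers in two adjacent frames,
    assumed to correspond row by row (an assumption of this sketch).
    Returns a boolean array of shape (N,), True for masked tubes.
    """
    rng = np.random.default_rng() if rng is None else rng
    n = centers_t.shape[0]
    motion = np.linalg.norm(centers_next - centers_t, axis=1)  # per-tube displacement
    logits = motion / (motion.max() + 1e-8) / temperature      # normalize to [0, 1/temperature]
    probs = np.exp(logits)
    probs /= probs.sum()                                       # sampling distribution
    n_mask = int(round(mask_ratio * n))
    masked_idx = rng.choice(n, size=n_mask, replace=False, p=probs)
    mask = np.zeros(n, dtype=bool)
    mask[masked_idx] = True
    return mask


rng = np.random.default_rng(0)
centers_a = rng.normal(size=(64, 3))
centers_b = centers_a.copy()
centers_b[:32] += 1.0  # the first 32 tubes move, the rest stay still
mask = motion_sensitive_mask(centers_a, centers_b, temperature=0.2, rng=rng)
print(mask.sum(), mask[:32].mean(), mask[32:].mean())
```

A lower `temperature` concentrates masking on moving tubes; a very high value approaches uniform random masking, which corresponds to the baseline the adaptive strategy is contrasted with.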

3.5.2. Geometry Reconstruction Loss

3.5.3. Latent-Space Alignment Loss

1. Frame-level Motion Alignment: This component aligns the features of corresponding `point tubes` or pooled frame-level features across adjacent frames between the online and momentum encoders, ensuring local temporal coherence and consistency in short-range dynamics.

2. Video-level Global Alignment: This aligns the global video representations (e.g., obtained by global pooling across all frames and points) produced by both encoders, fostering long-range consistency and capturing overall scene dynamics, thereby stabilizing the learning of macro-level temporal patterns.
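The two alignment levels can be sketched as follows. The source does not state which distance is used, so cosine distance is an assumption here, as is comparing pooled features of the same frame index from both encoders. The momentum-encoder update shown is the standard exponential moving average; the names `latent_alignment_loss`, `ema_update`, `video_weight`, and `decay` are hypothetical.

```python
import numpy as np


def cosine_distance(a, b, eps=1e-8):
    """1 - cosine similarity along the last axis."""
    a = a / (np.linalg.norm(a, axis=-1, keepdims=True) + eps)
    b = b / (np.linalg.norm(b, axis=-1, keepdims=True) + eps)
    return 1.0 - np.sum(a * b, axis=-1)


def latent_alignment_loss(online, momentum, video_weight=1.0):
    """online, momentum: (T, N, d) features (frames, points, channels).

    Frame level: pool over points, compare each frame's pooled feature.
    Video level: pool over all frames and points, compare one global vector.
    """
    frame_loss = cosine_distance(online.mean(axis=1), momentum.mean(axis=1)).mean()
    video_loss = cosine_distance(online.mean(axis=(0, 1)), momentum.mean(axis=(0, 1)))
    return frame_loss + video_weight * video_loss


def ema_update(momentum_params, online_params, decay=0.99):
    """Exponential moving average update of the momentum encoder's parameters."""
    return {name: decay * momentum_params[name] + (1.0 - decay) * online_params[name]
            for name in online_params}


rng = np.random.default_rng(0)
online_feats = rng.normal(size=(4, 16, 8))
momentum_feats = online_feats + 0.05 * rng.normal(size=online_feats.shape)
print(float(latent_alignment_loss(online_feats, momentum_feats)))
```

In training, gradients would flow only through the online branch, with the momentum features treated as fixed targets; this sketch computes the loss value only.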

3.5.4. Dynamic Perception Loss

3.5.5. Total Pre-training Loss

4. Experiments

4.1. Experimental Setup

4.1.1. Datasets

- MSR-Action3D: A dataset for human action recognition, containing sequences of skeletal data and depth maps, which are converted to 4D point clouds for our experiments.

- NTU-RGBD: A large-scale dataset for 3D action recognition, featuring diverse human actions performed by multiple subjects and camera views. We utilize the depth streams to generate 4D point cloud sequences.

- HOI4D: A dataset focused on human-object interaction action segmentation, providing rich and complex scenarios for fine-grained dynamic analysis.

- NvGesture: A dataset for hand gesture recognition in driving scenarios, offering challenges related to small, rapid movements and potential occlusions.

- SHREC’17: Another prominent dataset for 3D hand gesture recognition, further testing the model’s ability to discern subtle hand movements.

4.1.2. Pre-Training Details

- Data Preprocessing: Raw 4D point cloud data is first preprocessed into `point tubes`. This involves performing Farthest Point Sampling (FPS) on each frame (e.g., 1024 points per frame for MSR-Action3D and NTU-RGBD; 2048 points per frame for HOI4D), followed by constructing `point tube` sequences that encapsulate spatio-temporal neighborhood information. A key aspect is our adaptive motion-sensitive masking strategy: instead of purely random masking, we use frame-to-frame motion estimates (e.g., local point displacement or intensity changes) to identify high-dynamic regions. These regions, or their immediate vicinities, are then masked with higher probability than static regions (the overall masking ratio is typically 75%), simulating real-world dynamic occlusions and forcing the model to infer motion from challenging contexts.

- Model Training: The Online Encoder processes the visible `point tube embeddings`, while the Momentum Encoder, an Exponential Moving Average (EMA) copy of the online encoder, provides stable target representations for consistency learning. The Decoder, a lightweight Transformer, performs multi-task reconstruction and prediction using distinct token types: `geometry tokens` for geometric reconstruction, `latent tokens` for latent-space alignment, and the novel `Motion Prediction Tokens (MPT)` for explicit motion vector prediction.

-

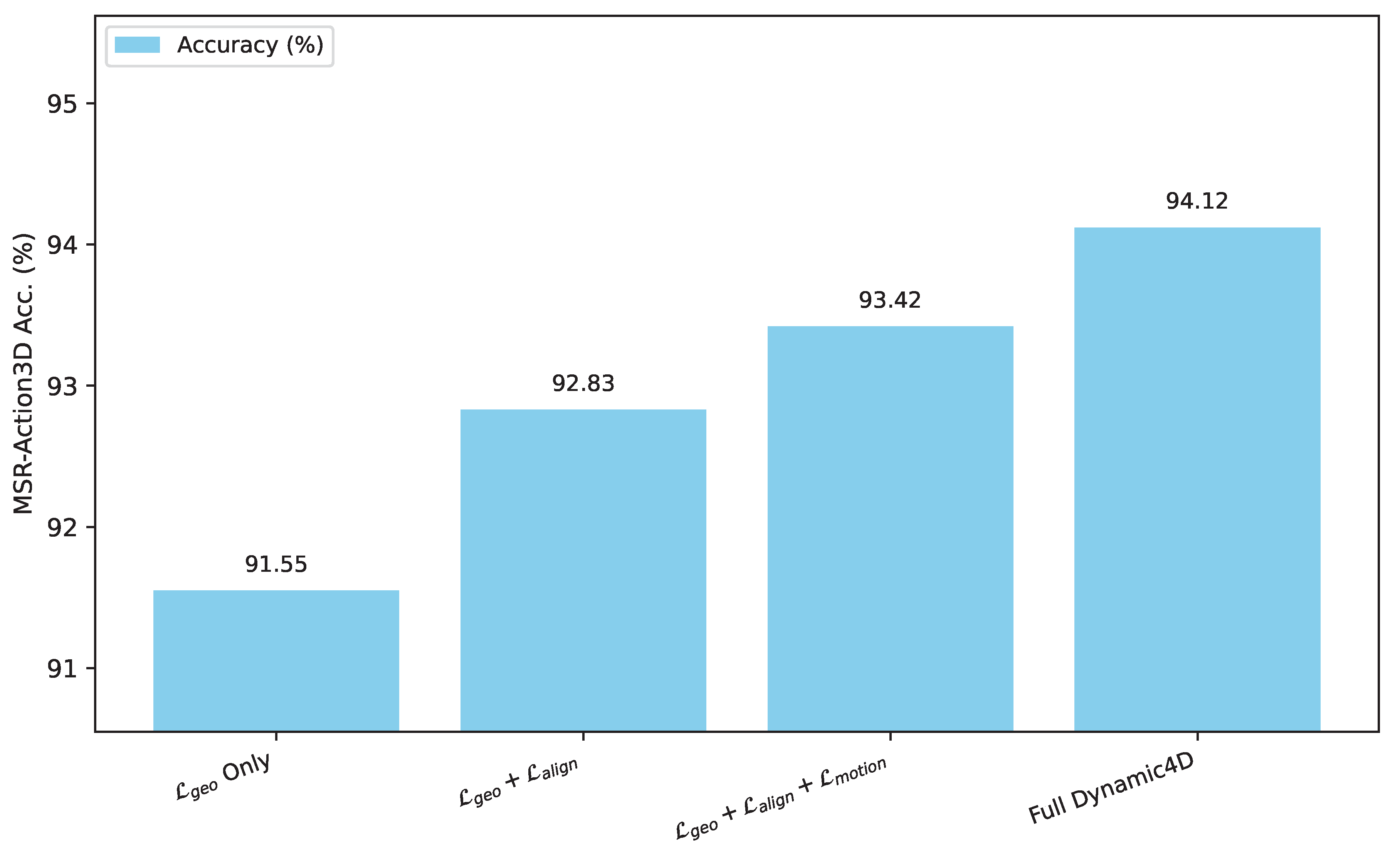

Loss Functions: The total pre-training loss is a weighted sum of three objectives:

- 1.

- Geometry Reconstruction Loss (): Uses Chamfer Distance to reconstruct masked point cloud geometries.

- 2.

- Latent-Space Alignment Loss (): Enforces consistency between online and momentum encoder features at both frame and video levels.

- 3.

- Dynamic Perception Loss (): An L1 loss on the predicted motion vectors for masked regions, specifically supervising the output of MPTs.

The weighting hyperparameters for these losses (, , ) are determined empirically. - Hyperparameters: Key configurations include point sampling rates (1024/2048 points per frame), sequence lengths (24 frames for MSR-Action3D/NTU-RGBD, 150 frames for HOI4D), and pre-training epochs (200 for MSR-Action3D, 100 for NTU-RGBD, 50 for HOI4D). The encoder backbone varies based on dataset scale and complexity, flexibly using P4Transformer (5-10 layers) or PPTr, with all backbones integrating our Adaptive Causal Temporal Attention (ACTA) module. The decoder is a 4-layer Transformer.
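The loss terms described in this subsection can be sketched in a few lines of NumPy. The Chamfer Distance below is one common variant (squared distances, averaged in both directions); the paper does not say which variant it uses. The weights `w_geo`, `w_latent`, and `w_dyn` are hypothetical names with placeholder values, since the source only states that the weights are set empirically.

```python
import numpy as np


def chamfer_distance(pred, target):
    """Symmetric Chamfer Distance between point sets pred (M, 3) and target (N, 3).

    Uses squared Euclidean distances averaged in both directions (one common variant).
    """
    d2 = np.sum((pred[:, None, :] - target[None, :, :]) ** 2, axis=-1)  # (M, N)
    return d2.min(axis=1).mean() + d2.min(axis=0).mean()


def l1_motion_loss(pred_motion, true_motion):
    """Mean absolute error between predicted and reference motion vectors, both (K, 3)."""
    return np.abs(pred_motion - true_motion).mean()


def total_pretraining_loss(l_geo, l_latent, l_dyn, w_geo=1.0, w_latent=1.0, w_dyn=1.0):
    """Weighted sum of the three objectives; the weights here are placeholders."""
    return w_geo * l_geo + w_latent * l_latent + w_dyn * l_dyn


rng = np.random.default_rng(0)
target_pts = rng.normal(size=(32, 3))
pred_pts = target_pts + 0.01 * rng.normal(size=(32, 3))
true_motion = rng.normal(size=(8, 3))
pred_motion = true_motion + 0.1

l_geo = chamfer_distance(pred_pts, target_pts)
l_dyn = l1_motion_loss(pred_motion, true_motion)
l_latent = 0.2  # placeholder value standing in for the alignment term
print(total_pretraining_loss(l_geo, l_latent, l_dyn, w_geo=1.0, w_latent=0.5, w_dyn=0.5))
```

The pairwise distance matrix costs O(MN) memory, which is fine for a sketch but is usually replaced by a nearest-neighbor kernel for full-size point clouds.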

4.1.3. Fine-Tuning and Evaluation

- End-to-end Fine-tuning: The pre-trained encoder is detached from the decoder, and a task-specific classifier or regression head is added on top. The entire network is then fine-tuned on the target dataset.

- Semi-supervised Learning: We fine-tune the pre-trained model with only a limited percentage of labeled data (e.g., 10%, 50%) to assess its data efficiency and generalization capability under scarce supervision.

- Few-shot Learning: Experiments are conducted to evaluate the model’s ability to generalize from a very small number of examples per class, often involving cross-dataset transfer (e.g., pre-training on NTU-RGBD and fine-tuning on NvGesture/SHREC’17).
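The semi-supervised and few-shot protocols above both come down to how labeled samples are selected. The sketch below shows one plausible way to draw a labeled subset at a given ratio and to build an N-way K-shot episode. Per-class (stratified) sampling and episodic evaluation are assumptions, since the source does not specify the sampling procedure; `labeled_subset`, `sample_episode`, and `n_query` are hypothetical names.

```python
import numpy as np


def labeled_subset(labels, ratio, rng):
    """Keep roughly `ratio` of the indices of each class (at least one per class)."""
    keep = []
    for c in np.unique(labels):
        idx = np.flatnonzero(labels == c)
        n_keep = max(1, int(round(ratio * len(idx))))
        keep.append(rng.choice(idx, size=n_keep, replace=False))
    return np.sort(np.concatenate(keep))


def sample_episode(labels, n_way, k_shot, n_query, rng):
    """Draw one N-way K-shot episode: support and query indices are disjoint."""
    classes = rng.choice(np.unique(labels), size=n_way, replace=False)
    support, query = [], []
    for c in classes:
        idx = rng.permutation(np.flatnonzero(labels == c))
        support.extend(idx[:k_shot])
        query.extend(idx[k_shot:k_shot + n_query])
    return classes, np.array(support), np.array(query)


rng = np.random.default_rng(0)
labels = np.repeat(np.arange(10), 20)        # toy label array: 10 classes, 20 samples each
subset = labeled_subset(labels, 0.10, rng)   # 10% labeled data
classes, support, query = sample_episode(labels, n_way=5, k_shot=1, n_query=3, rng=rng)
print(len(subset), len(support), len(query))
```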

4.2. Comparison with State-of-the-Art

4.2.1. Action Recognition and Gesture Recognition

4.2.2. Action Segmentation

4.2.3. Semi-Supervised Learning

4.2.4. Few-Shot Learning

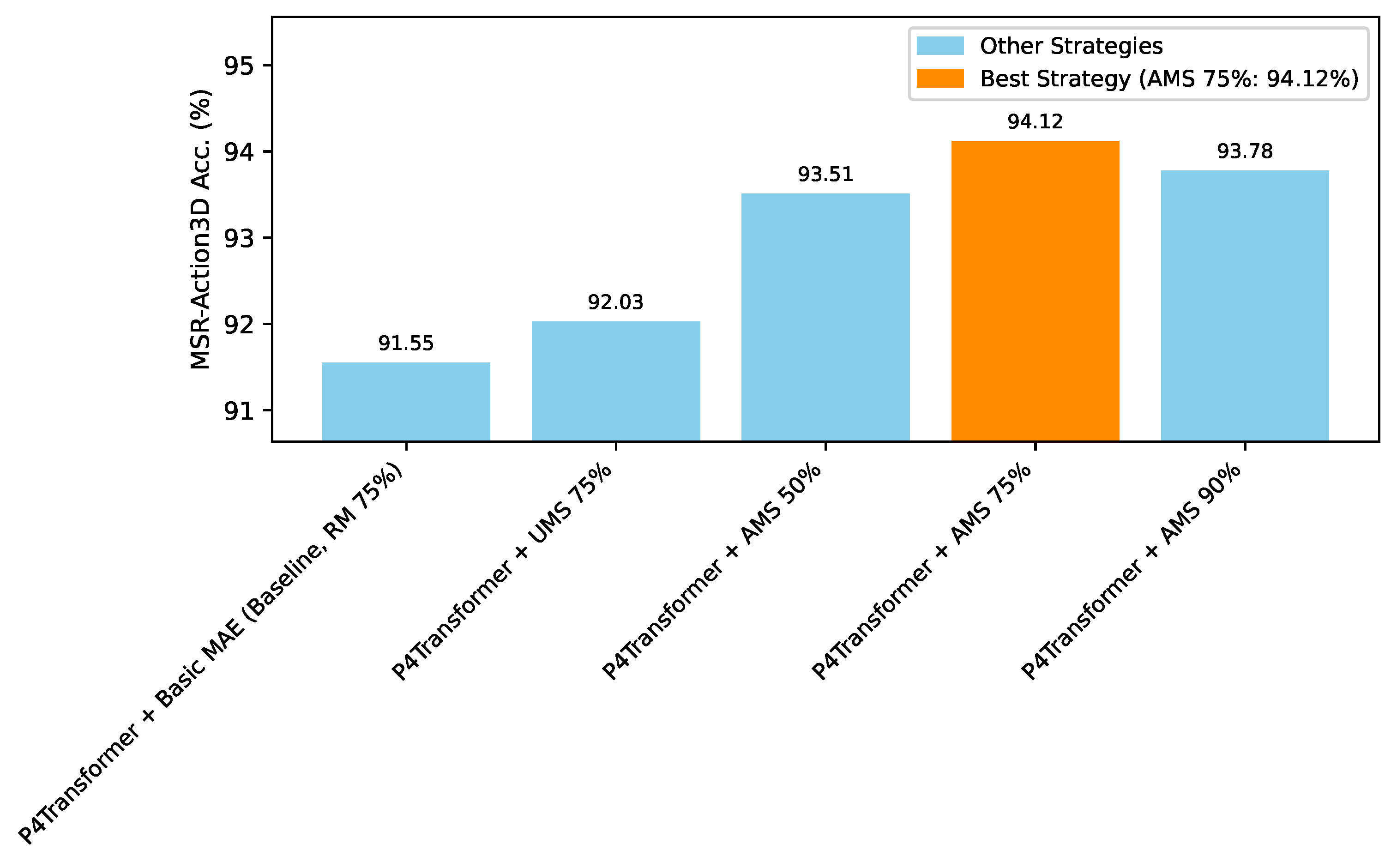

4.3. Ablation Studies

4.4. Analysis of Adaptive Masking Strategy

4.5. Impact of Loss Components

4.6. Efficiency Analysis

4.7. Robustness to Data Degradation

4.8. Qualitative Analysis

5. Conclusion

References

1. Xu, C.; Chen, Y.Y.; Nayyeri, M.; Lehmann, J. Temporal Knowledge Graph Completion using a Linear Temporal Regularizer and Multivector Embeddings. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies. Association for Computational Linguistics, 2021, pp. 2569–2578.

2. You, C.; Chen, N.; Zou, Y. Self-supervised Contrastive Cross-Modality Representation Learning for Spoken Question Answering. In Findings of the Association for Computational Linguistics: EMNLP 2021. Association for Computational Linguistics, 2021, pp. 28–39.

3. Chen, T.; Shi, H.; Tang, S.; Chen, Z.; Wu, F.; Zhuang, Y. CIL: Contrastive Instance Learning Framework for Distantly Supervised Relation Extraction. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers). Association for Computational Linguistics, 2021, pp. 6191–6200.

4. Lv, Q.; Kong, W.; Li, H.; Zeng, J.; Qiu, Z.; Qu, D.; Song, H.; Chen, Q.; Deng, X.; Pang, J. F1: A vision-language-action model bridging understanding and generation to actions. arXiv preprint arXiv:2509.06951, 2025.

5. Lin, F.; Yue, Y.; Hou, S.; Yu, X.; Xu, Y.; Yamada, K.D.; Zhang, Z. Hyperbolic chamfer distance for point cloud completion. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023, pp. 14595–14606.

6. Lv, Q.; Li, H.; Deng, X.; Shao, R.; Li, Y.; Hao, J.; Gao, L.; Wang, M.Y.; Nie, L. Spatial-temporal graph diffusion policy with kinematic modeling for bimanual robotic manipulation. In Proceedings of the Computer Vision and Pattern Recognition Conference, 2025, pp. 17394–17404.

7. Padilla-López, J.R.; Chaaraoui, A.A.; Flórez-Revuelta, F. A discussion on the validation tests employed to compare human action recognition methods using the MSR Action3D dataset. CoRR 2014.

8. Bulbul, M.F.; Islam, S.; Ali, H. 3D human action analysis and recognition through GLAC descriptor on 2D motion and static posture images. CoRR 2019.

9. Liu, W. Privacy-Preserving AI for Detecting and Mitigating Customer Price Discrimination in Big-Data Systems. Journal of Computer, Signal, and System Research 2026, 3, 37–46.

10. Liu, W. KV Cache and Inference Scheduling: Energy Modeling for High-QPS Services. Journal of Industrial Engineering and Applied Science 2026, 4, 34–41.

11. Yan, Y.; Li, R.; Wang, S.; Zhang, F.; Wu, W.; Xu, W. ConSERT: A Contrastive Framework for Self-Supervised Sentence Representation Transfer. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers). Association for Computational Linguistics, 2021, pp. 5065–5075.

12. Liu, W. Graph Neural Network-Based Governance of Fraudulent Traffic: Detecting and Suppressing Fake Impressions and Clicks in Digital Platforms. European Journal of AI, Computing & Informatics 2026, 2, 113–123.

13. Du, J.; Grave, E.; Gunel, B.; Chaudhary, V.; Celebi, O.; Auli, M.; Stoyanov, V.; Conneau, A. Self-training Improves Pre-training for Natural Language Understanding. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies. Association for Computational Linguistics, 2021, pp. 5408–5418.

14. Zuo, X.; Cao, P.; Chen, Y.; Liu, K.; Zhao, J.; Peng, W.; Chen, Y. Improving Event Causality Identification via Self-Supervised Representation Learning on External Causal Statement. In Findings of the Association for Computational Linguistics: ACL-IJCNLP 2021. Association for Computational Linguistics, 2021, pp. 2162–2172.

15. Min, S.; Lewis, M.; Zettlemoyer, L.; Hajishirzi, H. MetaICL: Learning to Learn In Context. In Proceedings of the 2022 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies. Association for Computational Linguistics, 2022, pp. 2791–2809.

16. Li, Z.; Zou, Y.; Zhang, C.; Zhang, Q.; Wei, Z. Learning Implicit Sentiment in Aspect-based Sentiment Analysis with Supervised Contrastive Pre-Training. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing. Association for Computational Linguistics, 2021, pp. 246–256.

17. Lv, Q.; Deng, X.; Chen, G.; Wang, M.Y.; Nie, L. Decision Mamba: A multi-grained state space model with self-evolution regularization for offline RL. Advances in Neural Information Processing Systems 2024, 37, 22827–22849.

18. Ding, N.; Chen, Y.; Han, X.; Xu, G.; Wang, X.; Xie, P.; Zheng, H.; Liu, Z.; Li, J.; Kim, H.G. Prompt-learning for Fine-grained Entity Typing. In Findings of the Association for Computational Linguistics: EMNLP 2022. Association for Computational Linguistics, 2022, pp. 6888–6901.

19. Meng, Y.; Zhang, Y.; Huang, J.; Wang, X.; Zhang, Y.; Ji, H.; Han, J. Distantly-Supervised Named Entity Recognition with Noise-Robust Learning and Language Model Augmented Self-Training. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing. Association for Computational Linguistics, 2021, pp. 10367–10378.

20. Luu, K.; Khashabi, D.; Gururangan, S.; Mandyam, K.; Smith, N.A. Time Waits for No One! Analysis and Challenges of Temporal Misalignment. In Proceedings of the 2022 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies. Association for Computational Linguistics, 2022, pp. 5944–5958.

21. Wang, P.; Zhu, Z. Overview of Online Parameter Identification of Permanent Magnet Synchronous Machines under Sensorless Control. IEEE Access 2026.

22. Wang, P.; Zhu, Z.; Freire, N.; Azar, Z.; Wu, X.; Liang, D. Online Simultaneous Identification of Multi-Parameters for Interior PMSMs Under Sensorless Control. CES Transactions on Electrical Machines and Systems 2025, 9, 422–433.

23. Wang, P.; Zhu, Z.; Liang, D.; Freire, N.M.; Azar, Z. Dual signal injection-based online parameter estimation of surface-mounted PMSMs under sensorless control. IEEE Transactions on Industry Applications 2025.

24. Li, B.Z.; Nye, M.; Andreas, J. Implicit Representations of Meaning in Neural Language Models. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers). Association for Computational Linguistics, 2021, pp. 1813–1827.

25. Zhang, K.; Zhang, K.; Zhang, M.; Zhao, H.; Liu, Q.; Wu, W.; Chen, E. Incorporating Dynamic Semantics into Pre-Trained Language Model for Aspect-based Sentiment Analysis. In Findings of the Association for Computational Linguistics: ACL 2022. Association for Computational Linguistics, 2022, pp. 3599–3610.

26. Li, Z.; Jin, X.; Guan, S.; Li, W.; Guo, J.; Wang, Y.; Cheng, X. Search from History and Reason for Future: Two-stage Reasoning on Temporal Knowledge Graphs. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers). Association for Computational Linguistics, 2021, pp. 4732–4743.

27. Haviv, A.; Ram, O.; Press, O.; Izsak, P.; Levy, O. Transformer Language Models without Positional Encodings Still Learn Positional Information. In Findings of the Association for Computational Linguistics: EMNLP 2022. Association for Computational Linguistics, 2022, pp. 1382–1390.

28. Yang, J.; Wang, Y.; Yi, R.; Zhu, Y.; Rehman, A.; Zadeh, A.; Poria, S.; Morency, L.P. MTAG: Modal-Temporal Attention Graph for Unaligned Human Multimodal Language Sequences. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies. Association for Computational Linguistics, 2021, pp. 1009–1021.

29. Li, Y.; Du, Y.; Zhou, K.; Wang, J.; Zhao, X.; Wen, J.R. Evaluating Object Hallucination in Large Vision-Language Models. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing. Association for Computational Linguistics, 2023, pp. 292–305.

30. Chen, D.; Chen, H.; Yang, Y.; Lin, A.; Yu, Z. Action-Based Conversations Dataset: A Corpus for Building More In-Depth Task-Oriented Dialogue Systems. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies. Association for Computational Linguistics, 2021, pp. 3002–3017.

31. Fu, J.; Huang, X.; Liu, P. SpanNER: Named Entity Re-/Recognition as Span Prediction. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing (Volume 1: Long Papers). Association for Computational Linguistics, 2021, pp. 7183–7195.

| Method (baseline / variant) | MSR-Action3D Acc. | NTU-RGBD Acc. | NvGesture Acc. | SHREC’17 Acc. |
|---|---|---|---|---|
| P4Transformer (scratch) | 90.94 | 90.2 | 87.7 | 91.2 |
| P4Transformer + MaST-Pre | 91.29 | 90.8 | 89.3 | 92.4 |
| P4Transformer + Uni4D | 93.38 | 90.7 | 89.6 | 93.8 |
| P4Transformer + Dynamic4D (Ours) | 94.12 (+0.74) | 91.4 (+0.7) | 90.3 (+0.7) | 94.5 (+0.7) |

| Method | Frames | Accuracy (Acc.) | Edit | F1@10 | F1@25 | F1@50 |
|---|---|---|---|---|---|---|
| PPTr (baseline) | 150 | 77.4 | 80.1 | 81.7 | 78.5 | 69.5 |
| PPTr + VideoMAE | 150 | 78.6 | 80.2 | 81.9 | 78.7 | 69.9 |
| PPTr + Uni4D | 150 | 81.0 | 82.6 | 84.6 | 82.2 | 74.2 |
| PPTr + Dynamic4D (Ours) | 150 | 81.9 (+0.9) | 83.5 (+0.9) | 85.3 (+0.7) | 83.1 (+0.9) | 75.5 (+1.3) |

| Method (based on P4Transformer) | Labeled Data Ratio | Accuracy (%) |
|---|---|---|
| From scratch | 50% | 81.2 |
| Uni4D Pre-trained | 50% | 86.5 |
| Dynamic4D Pre-trained (Ours) | 50% | 87.8 (+1.3) |
| From scratch | 10% | 71.5 |
| Uni4D Pre-trained | 10% | 79.8 |
| Dynamic4D Pre-trained (Ours) | 10% | 81.5 (+1.7) |

| Setting | MaST-Pre Acc. | Uni4D Acc. | Dynamic4D Acc. (Ours) | Improvement (vs Uni4D) |
|---|---|---|---|---|
| 5-way 1-shot | 70.2 | 74.5 | 75.3 | +0.8 |
| 5-way 5-shot | 95.7 | 97.8 | 98.2 | +0.4 |
| 10-way 1-shot | 71.1 | 79.4 | 81.0 | +1.6 |
| 10-way 5-shot | 92.7 | 95.8 | 96.5 | +0.7 |

| Method Variant | MSR-Action3D Acc. (%) |
|---|---|
| P4Transformer + Basic MAE (Baseline) | 91.55 |
| + Adaptive Causal Temporal Attention (ACTA) | 92.31 (+0.76) |
| + Motion Prediction Tokens (MPT) | 93.07 (+0.76) |
| + Adaptive Masking Strategy (AMS) | 94.12 (+1.05) |

| Method | Params (M) | GFLOPs | FPS (Inference) |
|---|---|---|---|
| P4Transformer (scratch) | 15.2 | 18.7 | 78.5 |
| Uni4D | 16.1 | 20.3 | 72.1 |
| Dynamic4D (Ours) | 16.3 | 21.1 | 69.8 |

| Method | Clean Acc. | Acc. (N0.01) | Acc. (N0.03) | Acc. (D20%) | Acc. (D40%) |
|---|---|---|---|---|---|
| P4Transformer (scratch) | 90.94 | 88.12 | 81.35 | 87.56 | 80.21 |
| Uni4D Pre-trained | 93.38 | 91.85 | 87.40 | 90.15 | 84.88 |
| Dynamic4D Pre-trained (Ours) | 94.12 | 92.91 (+1.06) | 89.17 (+1.77) | 91.33 (+1.18) | 87.05 (+2.17) |

| Characteristic / Scenario | Uni4D (Baseline) | Dynamic4D (Ours) | Relative Improvement |
|---|---|---|---|
| Motion Trajectory Consistency (Occlusion) | Good | Excellent | Significant |
| Fine-grained Action Boundary Precision | Good | Very Good | Moderate |
| Robustness to Point Cloud Sparsity | Moderate | Good | Noticeable |
| Anticipation of Future States | Fair | Good | Substantial |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).