Submitted: 15 March 2026

Posted: 17 March 2026

Abstract

Keywords:

1. Introduction

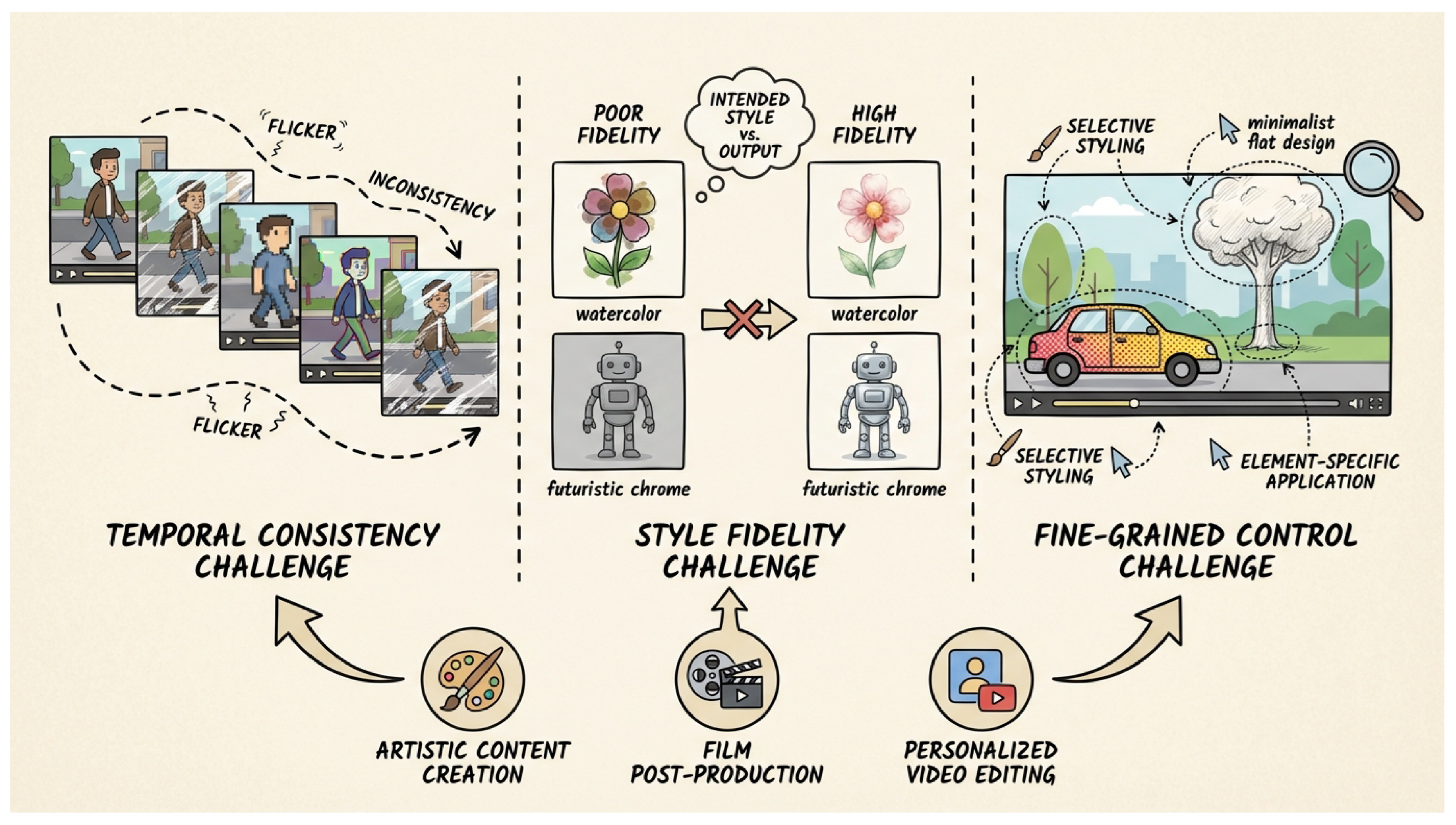

- Temporal Consistency: A naive frame-by-frame application of text-to-image (T2I) models leads to severe flickering and content discontinuity across frames [12], degrading the visual quality and coherence of the stylized video. Maintaining smooth transitions and stable object appearances is paramount.

- Style Fidelity: Accurately translating the complex artistic style described in a text prompt, such as "watercolor painting" or "futuristic chrome," onto every frame of a video while preserving the original video’s underlying content structure remains a significant hurdle. The stylized output must faithfully adhere to the textual style instruction.

- Fine-grained Control: Current T2GVS methods often lack the flexibility for users to apply different styles to specific regions of a video, particular elements, or varying time segments. Achieving such precise control over the stylization process is crucial for professional and artistic applications.

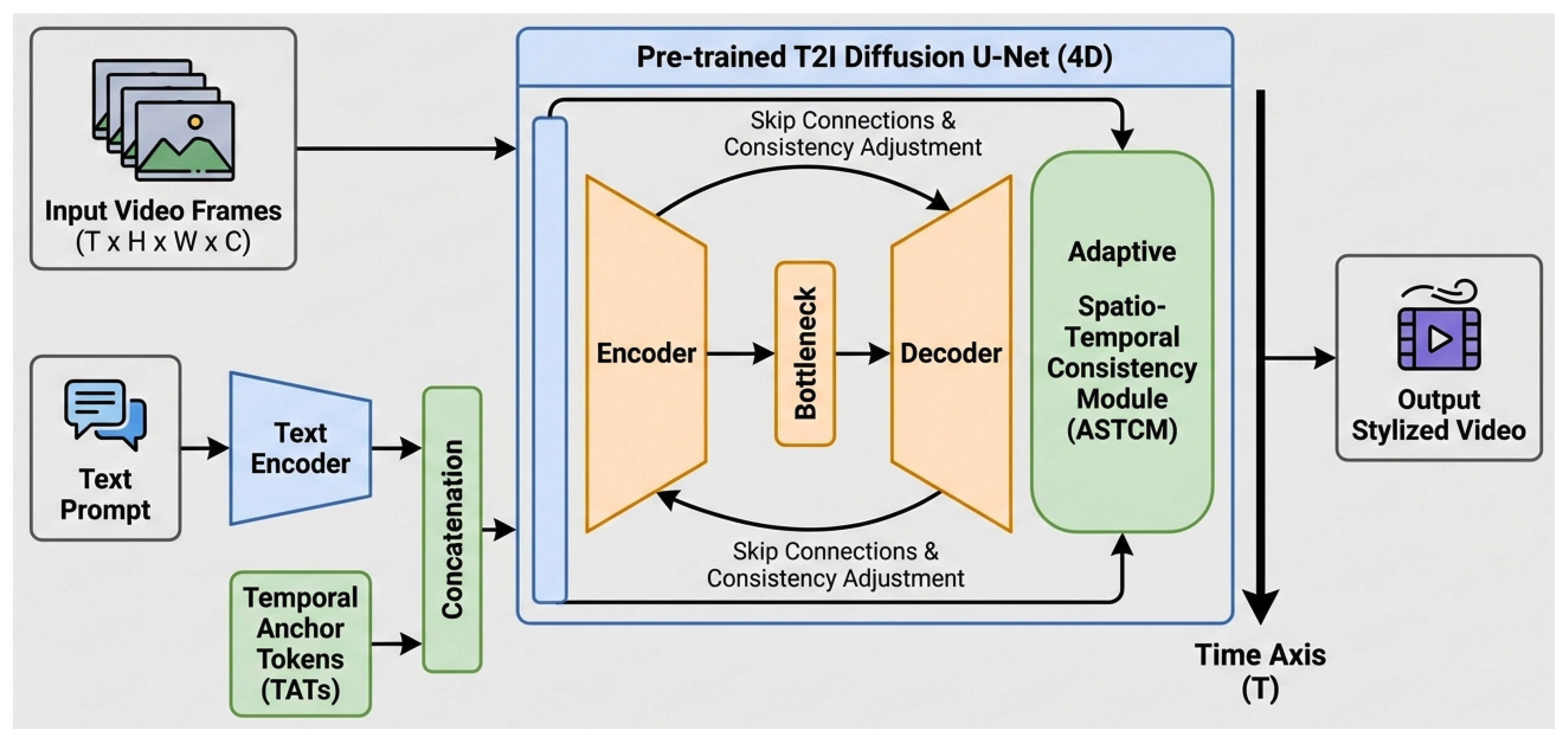

- We propose VideoStylist, a novel diffusion model framework that extends a pre-trained T2I U-Net with a 4D architecture for high-quality and consistent Text-Guided Video Stylization.

- We introduce Temporal Anchor Tokens (TATs), a novel mechanism embedded in the style embedding to consistently anchor global style semantics across video frames, significantly improving temporal consistency and style fidelity.

- We design the Adaptive Spatio-Temporal Consistency Module (ASTCM), a plug-and-play component that dynamically adjusts attention to maintain local content coherence and smooth transitions in stylized videos.
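To make the Temporal Anchor Token idea more concrete, here is a minimal NumPy sketch. It is not the authors' implementation. It assumes that TATs are a small set of learned vectors appended to the prompt's style embedding and shared by every frame, so that each frame's cross-attention reads the same anchor context. The function names (`build_context`, `cross_attention`), the tensor shapes, and the omission of learned query/key/value projections are all simplifying assumptions made for illustration.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def build_context(style_emb, anchor_tokens):
    """Append shared anchor tokens to the style embedding.

    style_emb:     (L, D) text/style tokens for the prompt
    anchor_tokens: (K, D) hypothetical Temporal Anchor Tokens
    returns:       (L + K, D) context used by every frame
    """
    return np.concatenate([style_emb, anchor_tokens], axis=0)


def cross_attention(frame_feats, context):
    """Single-head cross-attention without learned projections.

    frame_feats: (T, N, D) features for T frames with N spatial tokens each
    context:     (M, D) context shared across all T frames
    returns:     (T, N, D)
    """
    d = frame_feats.shape[-1]
    scores = frame_feats @ context.T / np.sqrt(d)  # (T, N, M)
    weights = softmax(scores, axis=-1)             # each row sums to 1
    return weights @ context                       # (T, N, D)


rng = np.random.default_rng(0)
T, N, L, K, D = 4, 16, 8, 2, 32  # K = 2 anchor tokens (illustrative choice)
style_emb = rng.normal(size=(L, D))
anchor_tokens = rng.normal(size=(K, D))  # would be learned in practice
frame_feats = rng.normal(size=(T, N, D))

context = build_context(style_emb, anchor_tokens)
out = cross_attention(frame_feats, context)
print(context.shape, out.shape)
```

The point of the sketch is only that the same `context`, including the anchor tokens, conditions every frame; how the tokens are trained and where they enter the U-Net is not specified in the text available here.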

2. Related Work

2.1. Video Generation and Stylization

2.2. Text-Conditioned Generative Models and Control

3. Method

3.1. Overall Architecture

3.2. Temporal Anchor Tokens (TATs)

3.2.1. Design and Integration

3.2.2. Functionality

3.2.3. Temporal Anchor Loss

3.3. Adaptive Spatio-Temporal Consistency Module (ASTCM)

3.3.1. Integration and Mechanism

3.3.2. Attention Weight Adjustment

3.4. Training Data Construction

3.4.1. High-Quality Video and Text Pairs

3.4.2. Expansion from Existing T2I Datasets

3.4.3. Post-processing

3.5. Training Strategy

3.5.1. Optimization Objectives

3.5.2. Training Specifics

4. Experiments

4.1. Experimental Setup

4.1.1. Dataset

4.1.2. Implementation Details

4.1.3. Evaluation Metrics

- Style Fidelity (SF) ↑: Measures how accurately the generated video reflects the artistic style described in the text prompt. This is quantified using the CLIP Score, where higher values indicate better style adherence.

- Temporal Consistency (TC) ↑: Evaluates the smoothness and coherence of content across consecutive frames, indicating a reduction in flickering. This metric is based on a modified inter-frame optical flow consistency, with higher values signifying superior temporal stability.

- Perceptual Quality (PQ) ↑: Assesses the overall visual realism and quality of the generated stylized videos. This is evaluated either through an FID-based score, transformed so that higher is better (FID, the Fréchet Inception Distance, is itself lower-is-better), or through average user ratings on a scale of 1-10.

- Mean Flicker Index (MFI) ↓: Specifically measures the degree of flickering artifacts, with lower values indicating better temporal stability.

- Style Matching Error (SME) ↓: Quantifies the deviation between the desired style (from text prompt) and the style present in the generated frames, with lower values indicating higher style fidelity.
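As a rough illustration of how metrics of this kind can be computed, the sketch below implements two simple proxies in NumPy. Both are assumptions rather than the paper's definitions: `style_fidelity` is the mean cosine similarity between per-frame image embeddings and a text embedding (CLIP-style, with the embeddings assumed to be precomputed by some encoder), and `flicker_index` is the mean absolute intensity change between consecutive frames. The optical-flow-based TC metric described above is not reproduced here.

```python
import numpy as np


def style_fidelity(frame_embs, text_emb):
    """Mean cosine similarity between frame embeddings (T, D) and a text embedding (D,)."""
    f = frame_embs / np.linalg.norm(frame_embs, axis=1, keepdims=True)
    t = text_emb / np.linalg.norm(text_emb)
    return float((f @ t).mean())


def flicker_index(frames):
    """Mean absolute change between consecutive frames.

    frames: (T, H, W) or (T, H, W, C) array with values in [0, 1].
    This is a hypothetical proxy; it also penalizes genuine motion.
    """
    diffs = np.abs(np.diff(frames.astype(np.float64), axis=0))
    return float(diffs.mean())


# Toy check: a static clip versus a clip that alternates black and white.
static = np.full((5, 4, 4), 0.5)
strobe = np.stack([np.full((4, 4), float(i % 2)) for i in range(5)])
print(flicker_index(static))  # 0.0
print(flicker_index(strobe))  # 1.0
```

Because raw frame differences also respond to legitimate motion, a flow-compensated measure, as the TC description suggests, is the more faithful choice for real videos.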

4.1.4. Baselines

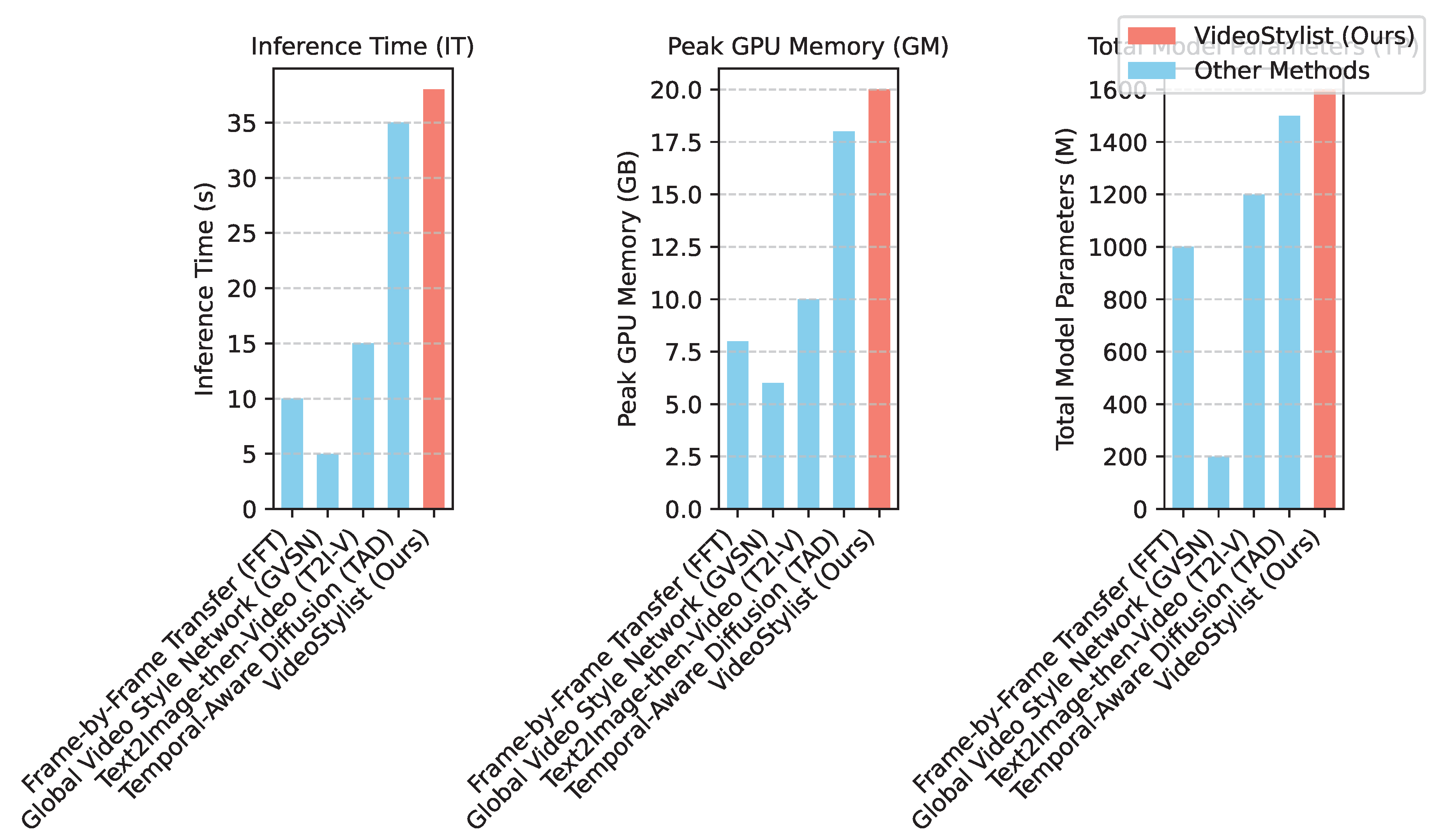

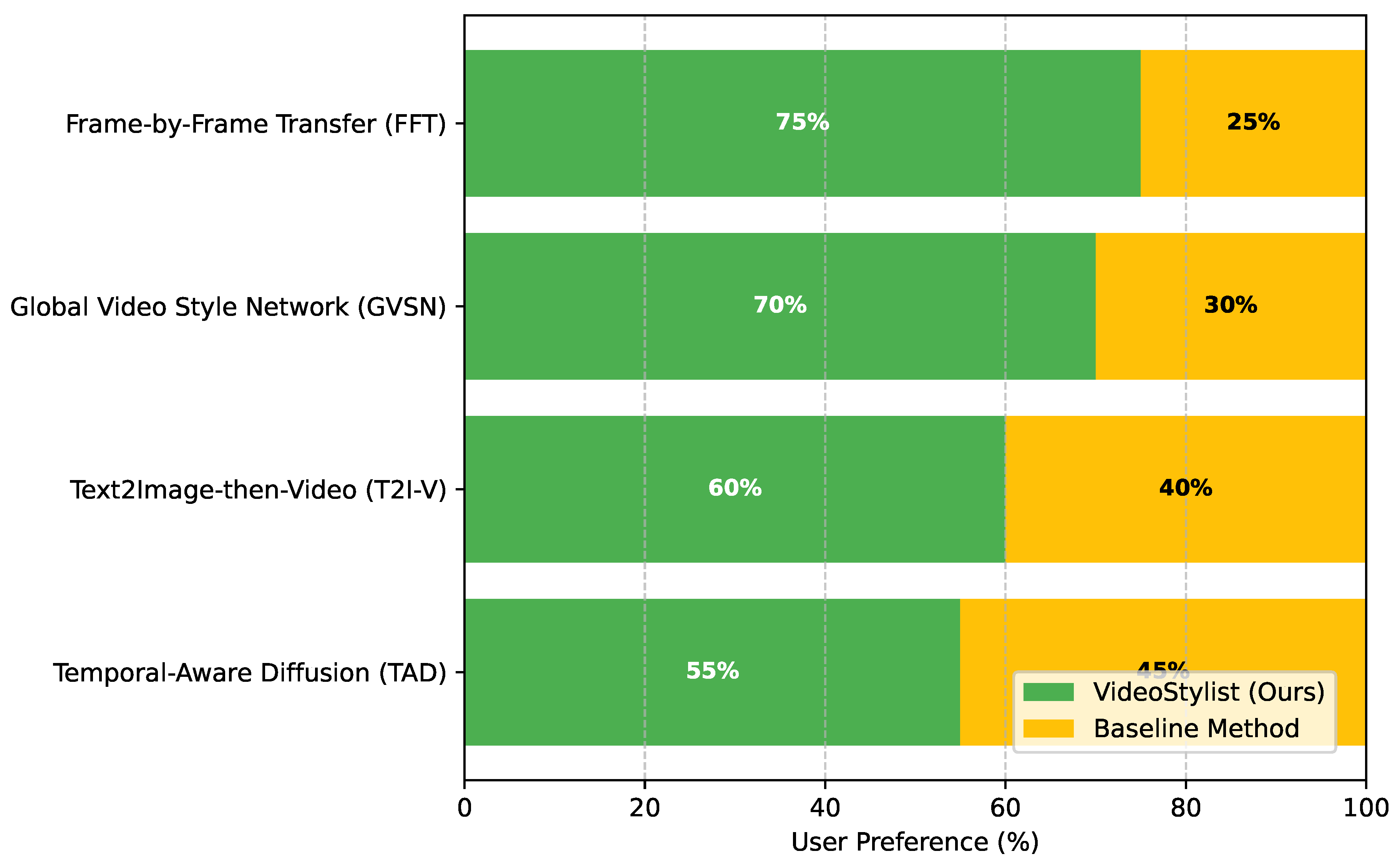

- Frame-by-Frame Transfer (FFT): A baseline method that applies a standard T2I model independently to each frame of the video. This typically achieves high style fidelity per frame but suffers from severe temporal inconsistency.

- Global Video Style Network (GVSN): Represents methods that attempt to learn and apply a global style embedding to an entire video, aiming for better consistency than FFT but often sacrificing fine-grained control or style fidelity.

- Text2Image-then-Video (T2I-V): An approach where a T2I model generates keyframes, and intermediate frames are interpolated or refined using video-specific techniques. This often struggles with maintaining complex styles over long sequences.

- Temporal-Aware Diffusion (TAD): A more recent baseline that incorporates temporal awareness into diffusion models, typically through temporal convolutions or attention, similar in spirit but with different architectural choices than ours.

4.2. Quantitative Results

4.3. Ablation Study on Temporal Anchor Tokens (TATs)

4.4. Ablation Study on Adaptive Spatio-Temporal Consistency Module (ASTCM)

4.5. Detailed Qualitative Analysis

- Frame-by-Frame Transfer (FFT): Videos produced by FFT, while often showcasing high-fidelity style on individual frames, exhibit severe and distracting temporal flickering. For instance, a video stylized with an "oil painting" prompt will show brushstrokes changing their size, orientation, and even color on the same object across consecutive frames, leading to a strobe-like effect that renders the video unwatchable. Because each frame is stylized independently, the method has no mechanism for maintaining temporal coherence.

- Global Video Style Network (GVSN): GVSN attempts to impose a global style, which reduces the severe flickering of FFT. However, this often comes at the cost of style vibrancy and specific detail. Videos tend to have a uniform, but often "washed-out" or overly generalized, style. Fine-grained stylistic elements described in the prompt may be lost, and while global consistency is improved, localized textures can still show minor inconsistencies or deformations over time.

- Text2Image-then-Video (T2I-V): This approach can generate high-quality keyframes, and the stylistic elements within these frames are generally strong. However, interpolation between keyframes, especially during complex motion or significant scene changes, frequently introduces noticeable stylistic "jumps" or subtle blending artifacts. For longer video sequences, the style can gradually drift from the initial keyframe’s aesthetic, leading to a loss of overall consistency over the video’s duration.

- Temporal-Aware Diffusion (TAD): TAD represents a significant improvement, demonstrating good overall temporal consistency and style fidelity. However, subtle style shifts can still occur in prolonged sequences, where the model might slightly reinterpret the global style. Additionally, during very rapid object movements or complex background changes, minor localized flickering or slight deformations of stylized textures might occasionally become apparent.

- VideoStylist (Ours): VideoStylist produces videos with high visual quality and vibrant style application that closely matches the textual description. The global artistic theme, such as "a watercolor landscape" or "a cyber-noir city," remains locked from the beginning to the end of the video thanks to the Temporal Anchor Tokens (TATs), which suppresses perceptible style drift over time. The Adaptive Spatio-Temporal Consistency Module (ASTCM) additionally keeps intricate local details and textures of moving objects coherent. For example, in a video of a person dancing stylized as a "comic book animation," the overall comic book aesthetic remains constant, and the line art and color fill of the person’s clothing and face also stay consistent and fluid throughout complex movements, without noticeable localized flickering or deformation.

4.6. Computational Efficiency

4.7. User Study

5. Conclusion

References

- Prabhumoye, S.; Hashimoto, K.; Zhou, Y.; Black, A.W.; Salakhutdinov, R. Focused Attention Improves Document-Grounded Generation. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, 2021; Association for Computational Linguistics; pp. 4274–4287.

- Wang, P.; Zhu, Z. Overview of Online Parameter Identification of Permanent Magnet Synchronous Machines under Sensorless Control. IEEE Access, 2026.

- Wang, P.; Zhu, Z.; Freire, N.; Azar, Z.; Wu, X.; Liang, D. Online Simultaneous Identification of Multi-Parameters for Interior PMSMs Under Sensorless Control. CES Transactions on Electrical Machines and Systems 2025, 9, 422–433.

- Wang, P.; Zhu, Z.; Liang, D.; Freire, N.M.; Azar, Z. Dual signal injection-based online parameter estimation of surface-mounted PMSMs under sensorless control. IEEE Transactions on Industry Applications, 2025.

- Liu, W. KV Cache and Inference Scheduling: Energy Modeling for High-QPS Services. Journal of Industrial Engineering and Applied Science 2026, 4, 34–41.

- Liu, W. Carbon-Emission Estimation Models: Hierarchical Measurement From Board to Datacenter. Journal of Industrial Engineering and Applied Science 2026, 4, 42–48.

- Liu, W. Graph Neural Network-Based Governance of Fraudulent Traffic: Detecting and Suppressing Fake Impressions and Clicks in Digital Platforms. European Journal of AI, Computing & Informatics 2026, 2, 113–123.

- Liu, Z.; Huang, J.; Wang, X.; Wu, Y.; Gorbatchev, N. Employee Performance Prediction: A System Based on LightGBM for Digital Intelligent HR Management. In Proceedings of the 2025 International Conference on Intelligent Computing and Next Generation Networks (ICNGN), 2025; IEEE; pp. 1–5.

- Huang, J.; Tian, Z.; Qiu, Y. Ai-enhanced dynamic power grid simulation for real-time decision-making. In Proceedings of the 2025 4th International Conference on Smart Grids and Energy Systems (SGES), 2025; IEEE; pp. 15–19.

- Pang, R.; Huang, J.; Li, Y.; Shan, Y. HEV-YOLO: An Improved YOLOv11-Based Detection Algorithm for Heavy Equipment Engineering Vehicles. In Proceedings of the 2025 5th International Conference on Electronic Information Engineering and Computer Technology (EIECT), 2025; IEEE; pp. 96–99.

- Li, X.; Ma, Y.; Ye, K.; Cao, J.; Zhou, M.; Zhou, Y. Hy-facial: Hybrid feature extraction by dimensionality reduction methods for enhanced facial expression classification. arXiv:2509.26614.

- Luu, K.; Khashabi, D.; Gururangan, S.; Mandyam, K.; Smith, N.A. Time Waits for No One! Analysis and Challenges of Temporal Misalignment. In Proceedings of the 2022 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, 2022; Association for Computational Linguistics; pp. 5944–5958.

- Krause, B.; Gotmare, A.D.; McCann, B.; Keskar, N.S.; Joty, S.; Socher, R.; Rajani, N.F. GeDi: Generative Discriminator Guided Sequence Generation. In Findings of the Association for Computational Linguistics: EMNLP 2021; Association for Computational Linguistics, 2021; pp. 4929–4952.

- Lin, B.; Ye, Y.; Zhu, B.; Cui, J.; Ning, M.; Jin, P.; Yuan, L. Video-LLaVA: Learning United Visual Representation by Alignment Before Projection. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, 2024; Association for Computational Linguistics; pp. 5971–5984.

- Dai, W.; Li, J.; Li, D.; Tiong, A.M.H.; Zhao, J.; Wang, W.; Li, B.; Fung, P.; Hoi, S.C.H. InstructBLIP: Towards General-purpose Vision-Language Models with Instruction Tuning. In Advances in Neural Information Processing Systems 36: Annual Conference on Neural Information Processing Systems 2023 (NeurIPS 2023), New Orleans, LA, USA, December 10-16, 2023.

- Lv, Q.; Kong, W.; Li, H.; Zeng, J.; Qiu, Z.; Qu, D.; Song, H.; Chen, Q.; Deng, X.; Pang, J. F1: A vision-language-action model bridging understanding and generation to actions. arXiv:2509.06951.

- Song, H.; Dong, L.; Zhang, W.; Liu, T.; Wei, F. CLIP Models are Few-Shot Learners: Empirical Studies on VQA and Visual Entailment. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics; Association for Computational Linguistics, 2022; Volume 1, pp. 6088–6100.

- Xu, H.; Ghosh, G.; Huang, P.Y.; Okhonko, D.; Aghajanyan, A.; Metze, F.; Zettlemoyer, L.; Feichtenhofer, C. VideoCLIP: Contrastive Pre-training for Zero-shot Video-Text Understanding. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, 2021; Association for Computational Linguistics; pp. 6787–6800.

- Huang, P.Y.; Patrick, M.; Hu, J.; Neubig, G.; Metze, F.; Hauptmann, A. Multilingual Multimodal Pre-training for Zero-Shot Cross-Lingual Transfer of Vision-Language Models. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, 2021; Association for Computational Linguistics; pp. 2443–2459.

- Zhong, Y.; Ji, W.; Xiao, J.; Li, Y.; Deng, W.; Chua, T.S. Video Question Answering: Datasets, Algorithms and Challenges. In Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing, 2022; Association for Computational Linguistics; pp. 6439–6455.

- Lv, Q.; Li, H.; Deng, X.; Shao, R.; Li, Y.; Hao, J.; Gao, L.; Wang, M.Y.; Nie, L. Spatial-temporal graph diffusion policy with kinematic modeling for bimanual robotic manipulation. In Proceedings of the Computer Vision and Pattern Recognition Conference, 2025; pp. 17394–17404.

- Lei, J.; Berg, T.; Bansal, M. Revealing Single Frame Bias for Video-and-Language Learning. In Proceedings of the 61st Annual Meeting of the Association for Computational Linguistics; Association for Computational Linguistics, 2023; Volume 1, pp. 487–507.

- Gao, J.; Sun, X.; Xu, M.; Zhou, X.; Ghanem, B. Relation-aware Video Reading Comprehension for Temporal Language Grounding. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, 2021; Association for Computational Linguistics; pp. 3978–3988.

- Xiao, S.; Chen, L.; Shao, J.; Zhuang, Y.; Xiao, J. Natural Language Video Localization with Learnable Moment Proposals. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, 2021; Association for Computational Linguistics; pp. 4008–4017.

- Li, B.Z.; Nye, M.; Andreas, J. Implicit Representations of Meaning in Neural Language Models. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing, 2021; Volume 1, pp. 1813–1827.

- V Ganesan, A.; Matero, M.; Ravula, A.R.; Vu, H.; Schwartz, H.A. Empirical Evaluation of Pre-trained Transformers for Human-Level NLP: The Role of Sample Size and Dimensionality. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, 2021; Association for Computational Linguistics; pp. 4515–4532.

- Lv, Q.; Deng, X.; Chen, G.; Wang, M.Y.; Nie, L. Decision mamba: A multi-grained state space model with self-evolution regularization for offline rl. Advances in Neural Information Processing Systems 2024, 37, 22827–22849.

- He, J.; Kryscinski, W.; McCann, B.; Rajani, N.; Xiong, C. CTRLsum: Towards Generic Controllable Text Summarization. In Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing, 2022; Association for Computational Linguistics; pp. 5879–5915.

- Sun, J.; Ma, X.; Peng, N. AESOP: Paraphrase Generation with Adaptive Syntactic Control. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, 2021; Association for Computational Linguistics; pp. 5176–5189.

- Kulkarni, M.; Mahata, D.; Arora, R.; Bhowmik, R. Learning Rich Representation of Keyphrases from Text. In Findings of the Association for Computational Linguistics: NAACL 2022; Association for Computational Linguistics, 2022; pp. 891–906.

- Reif, E.; Ippolito, D.; Yuan, A.; Coenen, A.; Callison-Burch, C.; Wei, J. A Recipe for Arbitrary Text Style Transfer with Large Language Models. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics; Association for Computational Linguistics, 2022; Volume 2, pp. 837–848.

- Kim, B.; Kim, H.; Lee, S.W.; Lee, G.; Kwak, D.; Dong Hyeon, J.; Park, S.; Kim, S.; Kim, S.; Seo, D.; et al. What Changes Can Large-scale Language Models Bring? Intensive Study on HyperCLOVA: Billions-scale Korean Generative Pretrained Transformers. In Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, 2021; Association for Computational Linguistics; pp. 3405–3424.

- Nukrai, D.; Mokady, R.; Globerson, A. Text-Only Training for Image Captioning using Noise-Injected CLIP. In Findings of the Association for Computational Linguistics: EMNLP 2022; Association for Computational Linguistics, 2022; pp. 4055–4063.

- Wen, H.; Lin, Y.; Lai, T.; Pan, X.; Li, S.; Lin, X.; Zhou, B.; Li, M.; Wang, H.; Zhang, H.; et al. RESIN: A Dockerized Schema-Guided Cross-document Cross-lingual Cross-media Information Extraction and Event Tracking System. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies: Demonstrations; Association for Computational Linguistics, 2021; pp. 133–143.

- Ahuja, K.; Diddee, H.; Hada, R.; Ochieng, M.; Ramesh, K.; Jain, P.; Nambi, A.; Ganu, T.; Segal, S.; Ahmed, M.; et al. MEGA: Multilingual Evaluation of Generative AI. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing, 2023; Association for Computational Linguistics; pp. 4232–4267.

| Method | SF ↑ | TC ↑ | PQ ↑ |
|---|---|---|---|
| Frame-by-Frame Transfer (FFT) | 0.72 | 0.55 | 6.8 |
| Global Video Style Network (GVSN) | 0.68 | 0.70 | 7.1 |
| Text2Image-then-Video (T2I-V) | 0.78 | 0.65 | 7.5 |
| Temporal-Aware Diffusion (TAD) | 0.81 | 0.80 | 8.0 |
| VideoStylist (Ours) | 0.83 | 0.82 | 8.2 |
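To put the comparison table above in perspective, the short script below computes the relative gain of VideoStylist over each baseline from the reported values. The numbers are copied from the table; the helper `relative_gain` and the dictionary layout are illustrative choices. Against the strongest baseline (TAD), the gain works out to roughly 2.5% on each of SF, TC, and PQ, while the gap to FFT on TC is far larger.

```python
# Scores copied from the table above, in the order (SF, TC, PQ).
results = {
    "FFT": (0.72, 0.55, 6.8),
    "GVSN": (0.68, 0.70, 7.1),
    "T2I-V": (0.78, 0.65, 7.5),
    "TAD": (0.81, 0.80, 8.0),
    "VideoStylist": (0.83, 0.82, 8.2),
}
metrics = ("SF", "TC", "PQ")


def relative_gain(ours, base):
    """Percentage improvement of `ours` over `base` (higher-is-better metrics)."""
    return 100.0 * (ours - base) / base


ours = results["VideoStylist"]
gains = {
    name: [relative_gain(o, b) for o, b in zip(ours, scores)]
    for name, scores in results.items()
    if name != "VideoStylist"
}

for name, values in gains.items():
    summary = ", ".join(f"{m} {g:+.1f}%" for m, g in zip(metrics, values))
    print(f"vs {name}: {summary}")
```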

| TATs Count | MFI ↓ | SME ↓ | SF ↑ |
|---|---|---|---|
| 0 (w/o TATs) | 0.28 | 0.15 | 0.76 |
| 1 | 0.22 | 0.11 | 0.79 |
| 2 | 0.18 | 0.08 | 0.83 |
| 3 | 0.19 | 0.09 | 0.82 |
| 4 | 0.21 | 0.10 | 0.81 |
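The TAT ablation above is non-monotonic: all three metrics are best at two tokens and degrade slightly as more are added. The snippet below reads this off the reported values and computes the MFI reduction relative to the configuration without TATs. The values are copied from the table; the variable names are illustrative.

```python
# Ablation values copied from the table above: count -> (MFI, SME, SF).
ablation = {
    0: (0.28, 0.15, 0.76),
    1: (0.22, 0.11, 0.79),
    2: (0.18, 0.08, 0.83),
    3: (0.19, 0.09, 0.82),
    4: (0.21, 0.10, 0.81),
}

# MFI and SME are lower-is-better; SF is higher-is-better.
best = {
    "MFI": min(ablation, key=lambda k: ablation[k][0]),
    "SME": min(ablation, key=lambda k: ablation[k][1]),
    "SF": max(ablation, key=lambda k: ablation[k][2]),
}
print(best)  # {'MFI': 2, 'SME': 2, 'SF': 2}

# Relative MFI reduction of the best setting versus no TATs, in percent.
baseline_mfi = ablation[0][0]
best_mfi = ablation[best["MFI"]][0]
mfi_reduction = 100.0 * (baseline_mfi - best_mfi) / baseline_mfi
print(f"MFI reduction vs. no TATs: {mfi_reduction:.1f}%")
```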

| ASTCM Configuration | TC ↑ | MFI ↓ | PQ ↑ |
|---|---|---|---|
| w/o ASTCM | 0.70 | 0.25 | 7.6 |
| ASTCM (Warping Only) | 0.75 | 0.22 | 7.9 |
| w/ ASTCM (Ours) | 0.82 | 0.18 | 8.2 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).