Submitted: 13 March 2026

Posted: 16 March 2026

Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Previous Studies

3. Adopted Methodology

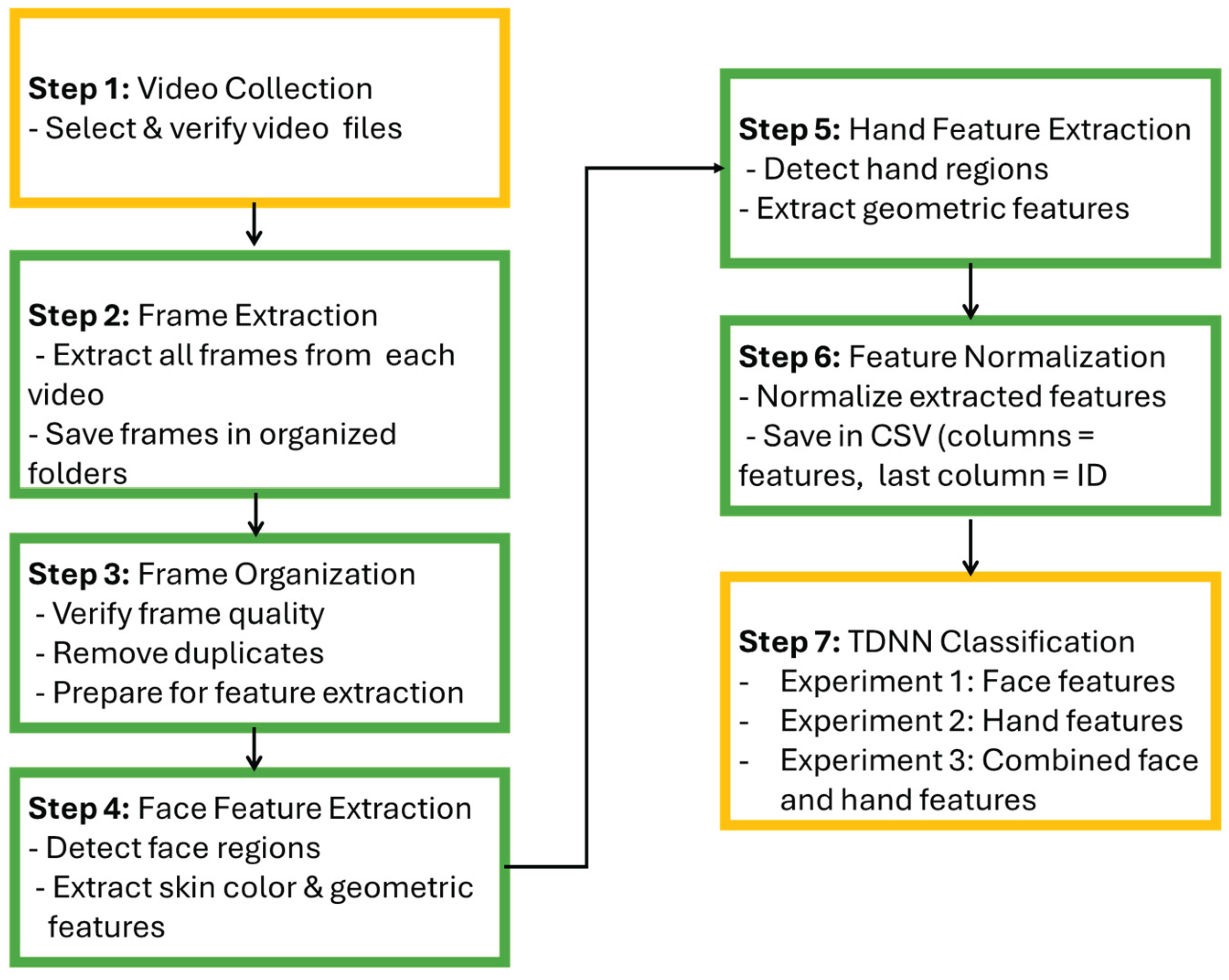

3.1. The Proposed Method

3.1.1. Step 1: Video Collection and Identification

3.1.2. Step 2: Frame Extraction from Videos

3.1.3. Step 3: Organization and Verification of Frames

3.1.4. Step 4: Face Detection and Skin Color Feature Extraction

3.1.5. Step 5: Hand Detection and Geometric Feature Extraction

3.1.6. Step 6: Feature Normalization and Dataset Preparation

3.1.7. Step 7: TDNN-Based Multimodal Classification

3.2. Performance Evaluation

3.2.1. Accuracy

3.2.2. Precision

3.2.3. Recall

3.2.4. F1-Score
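
For reference, the four measures named in Section 3.2 are conventionally defined from the counts of true positives, true negatives, false positives, and false negatives. The block below is a sketch of those standard definitions only; the symbols TP, TN, FP, and FN are notation assumed here, and the way per-class values are averaged across identities is not stated in this section.

```latex
\begin{align}
\text{Accuracy}  &= \frac{TP + TN}{TP + TN + FP + FN} \\
\text{Precision} &= \frac{TP}{TP + FP} \\
\text{Recall}    &= \frac{TP}{TP + FN} \\
\text{F1}        &= \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}
\end{align}
```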

4. Results Analysis and Discussion

4.1. Overview

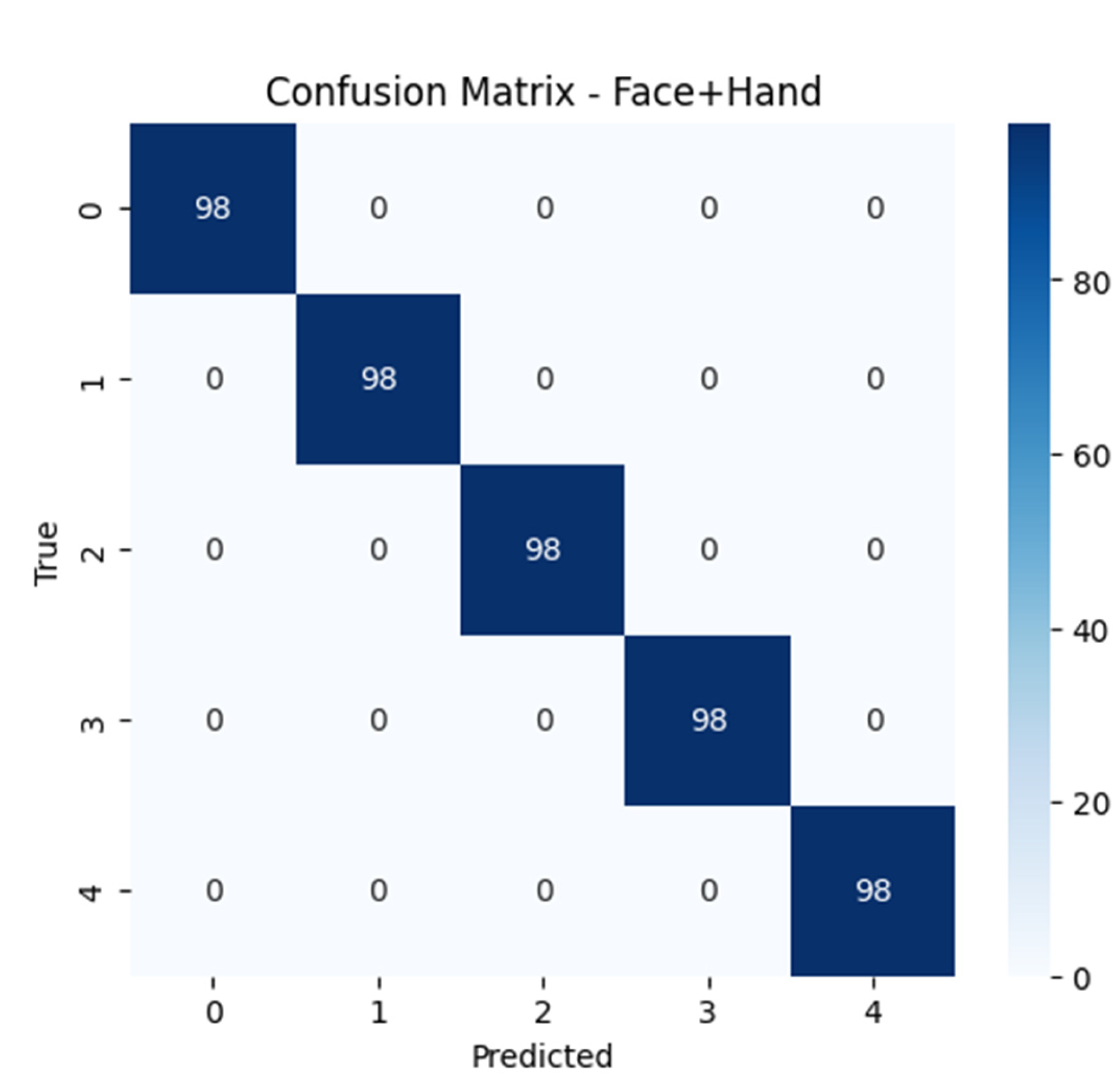

4.2. Results

4.3. Dataset Analysis Results

4.4. Limitations

4. Discussion

5. Conclusions and Future Work

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Jain, A. K.; Ross, A.; Prabhakar, S. An introduction to biometric recognition. IEEE Transactions on Circuits and Systems for Video Technology 2004, 14(1), 4–20.

- Ross, A.; Jain, A. Information fusion in biometrics. Pattern Recognition Letters 2003, 24(13), 2115–2125.

- Ross, A. A.; Jain, A. K.; Nandakumar, K. Handbook of multibiometrics; Springer US: Boston, MA, 2006.

- Bowyer, K. W.; Chang, K.; Flynn, P. A survey of approaches and challenges in 3D and multi-modal 3D+2D face recognition. Computer Vision and Image Understanding 2006, 101(1), 1–15.

- Zhao, W.; Chellappa, R.; Phillips, P. J.; Rosenfeld, A. Face recognition: A literature survey. ACM Computing Surveys (CSUR) 2003, 35(4), 399–458.

- Viola, P.; Jones, M. Rapid object detection using a boosted cascade of simple features. Proceedings of the 2001 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR 2001) 2001, Vol. 1, I–I.

- Marasco, E.; Ross, A. A survey on antispoofing schemes for fingerprint recognition systems. ACM Computing Surveys (CSUR) 2014, 47(2), 1–36.

- Jain, A. K.; Ross, A.; Prabhakar, S. An introduction to biometric recognition. IEEE Transactions on Circuits and Systems for Video Technology 2004, 14(1), 4–20.

- Bolle, R. M.; Connell, J. H.; Pankanti, S.; Ratha, N. K.; Senior, A. W. Guide to biometrics; Springer Science & Business Media, 2013.

- Shekhar, M.; Trivedi, A. K.; Patgiri, R. Enhancing security and accuracy in biometric systems through the fusion of fingerprint and gait recognition technologies. International Journal of Biometrics 2025, 17(5), 449–468.

- Borra, S.; Dey, N.; Sherratt, R. S. Biometric sensors. In Encyclopedia of Cryptography, Security and Privacy; Springer Nature Switzerland: Cham, 2025; pp. 217–220.

- Subramanian, N. Biometric Authentication. In Encyclopedia of Cryptography, Security and Privacy; Springer Nature Switzerland: Cham, 2025; pp. 192–196.

- Choudhary, P.; Pathak, P.; Gupta, P. Physiological biometric image quality assessment-a review. Multimedia Tools and Applications 2025, 84(34), 42257–42291.

- Akintunde, O. A.; Adetunji, A. B.; Fenwa, O. D.; Oguntoye, J. P.; Olayiwola, D. S.; Adeleke, A. J. Comparative analysis of score level fusion techniques in multi-biometric system. LAUTECH Journal of Engineering and Technology 2025, 19(1), 128–141.

- Abdul-Al, M.; Kyeremeh, G. K.; Qahwaji, R.; Ali, N. T.; Abd-Alhameed, R. A. Fusion-Enhanced Hybrid Multimodal Biometric System: Integrating Visible and Infrared Facial Recognition for Robust Authentication. IEEE Access 2026, 14, 6006–6028.

- Aliyu, M. G.; Jamel, S.; Danlami, M. Advances in Feature Extraction and Selection for Iris Recognition Systems: A Review. Journal of Electronic Voltage and Application 2025, 6(2), 148–165.

- Poh, N. Biometric Sample Quality. In Encyclopedia of Cryptography, Security and Privacy; Springer Nature Switzerland: Cham, 2025; pp. 213–217.

- Qaraa, S.; Elbehairy, H.; Mohamed, S.; ElRashidy, N. Human Gait Recognition for Security Systems. The Future of Inclusion: Bridging the Digital Divide with Emerging Technologies: Proceedings of ITAF 2024 2025, 117.

- Tomasz, M. A. K. A.; Smietanka, L. Analysis of Decision Fusion in Speech Detection. Archives of Acoustics 2025, 50(4), 445–454.

- Kajotra, S.; Kour, H. Face Recognition Technologies in Computer Vision–An Empirical Review. Recent Advances in Computing Sciences 2025, 255–263.

- Aboluhom, A. A. A.; Kandilli, I. Real-time facial recognition via multitask learning on Raspberry Pi. Scientific Reports 2025, 15(1), 28467.

- Schmidhuber, J. Who invented deep residual learning? arXiv 2025, arXiv:2509.24732.

- Zhou, X.; Bhanu, B. Feature fusion of side face and gait for video-based human identification. Pattern Recognition 2008, 41(3), 778–795.

- Camlikaya, E.; Kholmatov, A.; Yanikoglu, B. Multi-biometric templates using fingerprint and voice. In Biometric Technology for Human Identification V; SPIE, March 2008; Vol. 6944, pp. 145–153.

- Multibiometrics for human identification; Bhanu, B., Govindaraju, V., Eds.; Cambridge University Press, 2011.

- Rane, M. E.; Bhadade, U. S. Multimodal score level fusion for recognition using face and palmprint. International Journal of Electrical Engineering & Education 2025, 62(1), 37–55.

- Aleem, S.; Yang, P.; Masood, S.; Li, P.; Sheng, B. An accurate multi-modal biometric identification system for person identification via fusion of face and finger print. World Wide Web 2020, 23(2), 1299–1317.

- Kyeremeh, G. K.; Abdul-Al, M.; Qahwaji, R.; Ali, N. T.; Abd-Alhameed, R. A. Fusion of hand biometrics for border control involving fingerprint and finger vein. IEEE Access 2025.

- Shin, J.; Miah, A. S. M.; Egawa, R.; Hassan, N.; Hirooka, K.; Tomioka, Y. Multimodal fall detection using spatial–temporal attention and bi-lstm-based feature fusion. Future Internet 2025, 17(4), 173.

- Mishra, A. Multimodal biometrics it is: need for future systems. International Journal of Computer Applications 2010, 3(4), 28–33.

- Jadhav, S. B.; Deshmukh, N. K.; Pawar, S. B. Robust authentication system with privacy preservation for hybrid deep learning-based person identification system using multi-modal palmprint, ear, and face biometric features. International Journal of Image and Graphics 2025, 25(05), 2550049.

- Qiao, Y.; Kang, W.; Luo, D.; Huang, J. Normalized-Full-Palmar-Hand: Towards More Accurate Hand-Based Multimodal Biometrics. IEEE Transactions on Pattern Analysis and Machine Intelligence 2025.

- Li, D.; Ji, T.; Sun, Y.; Zhang, Z.; Li, A.; Qu, M.; Liu, H. A Full-Range Proximity-Tactile Sensor Based on Multimodal Perception Fusion for Minimally Invasive Surgical Robots. Advanced Science 2025, e02353.

- Zhang, Y.; Xu, Y.; Gao, J.; Zhao, Z.; Sun, J.; Mu, F. Urban Functional Zone Identification Based on Multimodal Data Fusion: A Case Study of Chongqing’s Central Urban Area. Remote Sensing 2025, 17(6), 990.

- Garg, B.; Kasar, M.; Kashyap, A.; Vats, A.; Sharma, G.; Hange, A. “SignAlphaSet”. Mendeley Data 2025, V2.

| No. | Author(s) & Year | Accuracy | Disadvantages | Advantages | ML Methodology Used | Dataset | Reference |
| --- | --- | --- | --- | --- | --- | --- | --- |
| 1 | Zhou & Bhanu (2008) | High (CMC curves show superior discrimination) | Limited to side face & gait, sensitive to clothing/facial changes | Feature-level fusion improves discriminative power over match-score fusion | PCA + MDA | Custom datasets with side face & gait variations | [23] |
| 2 | Camlikaya et al. (2008) | <2% EER | Requires both fingerprint and voice data, system complexity | Protects privacy, higher accuracy than single modality | Multibiometric template fusion | 600 utterances, 400 fingerprints | [24] |
| 3 | Bhanu & Govindaraju (2011) | N/A (review) | Theoretical limitations | Comprehensive overview of fusion strategies; highlights performance boost with multimodal systems | Review (pre/post-classification fusion) | Various multimodal datasets | [25] |
| 4 | Rane & Bhadade (2025) | GAR 99.7% @ FAR 0.1% | Requires pre-processing of face & palmprint | High accuracy; score-level fusion enhances performance | T-norm-based matching score fusion | Face 94/95/96, FERET, FRGC, IITD | [26] |
| 5 | Aleem et al. (2020) | 99.59% | Sensitive to quality of fingerprint & face images | Combines face & fingerprint for strong security; dimensionality reduction included | ELBP + alignment-based elastic + score-level fusion | FVC 2000 DB1/DB2, ORL, YALE | [27] |
| 6 | Kyeremeh et al. (2025) | High (feature-level fusion better than score-level) | Complex computation, requires multiple sensors | Robust, high security, reduces information leakage | SIFT + FLANN + CLAHE + feature-level fusion | SOCOFing, FVC, CASIA, FV-USM, PLUSVein-FV3, UTFVP | [28] |
| 7 | Shin et al. (2025) | 99.09–99.32% | Computationally intensive; requires skeleton & sensor setup | Captures long-range dependencies; highly accurate for fall detection | GSTCAN + Bi-LSTM with Channel Attention | Fall Up, UR Fall | [29] |
| 8 | Mishra (2010) | N/A | Conceptual, not tested | Shows need for multimodal systems and robustness improvement | Review | N/A | [30] |
| 9 | Jadhav et al. (2025) | 96.4% | Database size sensitive, moderate accuracy | Privacy-preserving; efficient image transformation; hybrid deep learning | HDL + DCT + Lagrange interpolation | Palmprint, ear, face images | [31] |
| 10 | Qiao et al. (2025) | High | Complex hand segmentation, large dataset needed | Extracts distinct hand features; accurate for multimodal hand biometrics | HSANet + FPHandNet | SCUT_NFPH_v1 & v2, CASIA, IITD, COEP | [32] |
| 11 | Li et al. (2025) | 91.6% | Sensor calibration required | Enhances safety in minimally invasive surgery; multimodal tactile sensing | MEMS pMUT + capacitive + triboelectric + digital twin | Custom robotic surgery dataset | [33] |
| 12 | Zhang et al. (2025) | 84.13% | Dataset-specific, moderate accuracy | Fuses multimodal urban data; effective UFZ classification | TriNet (ImgNet, POINet, TrajNet + feature fusion) | OpenStreetMap, POI, OD data | [34] |

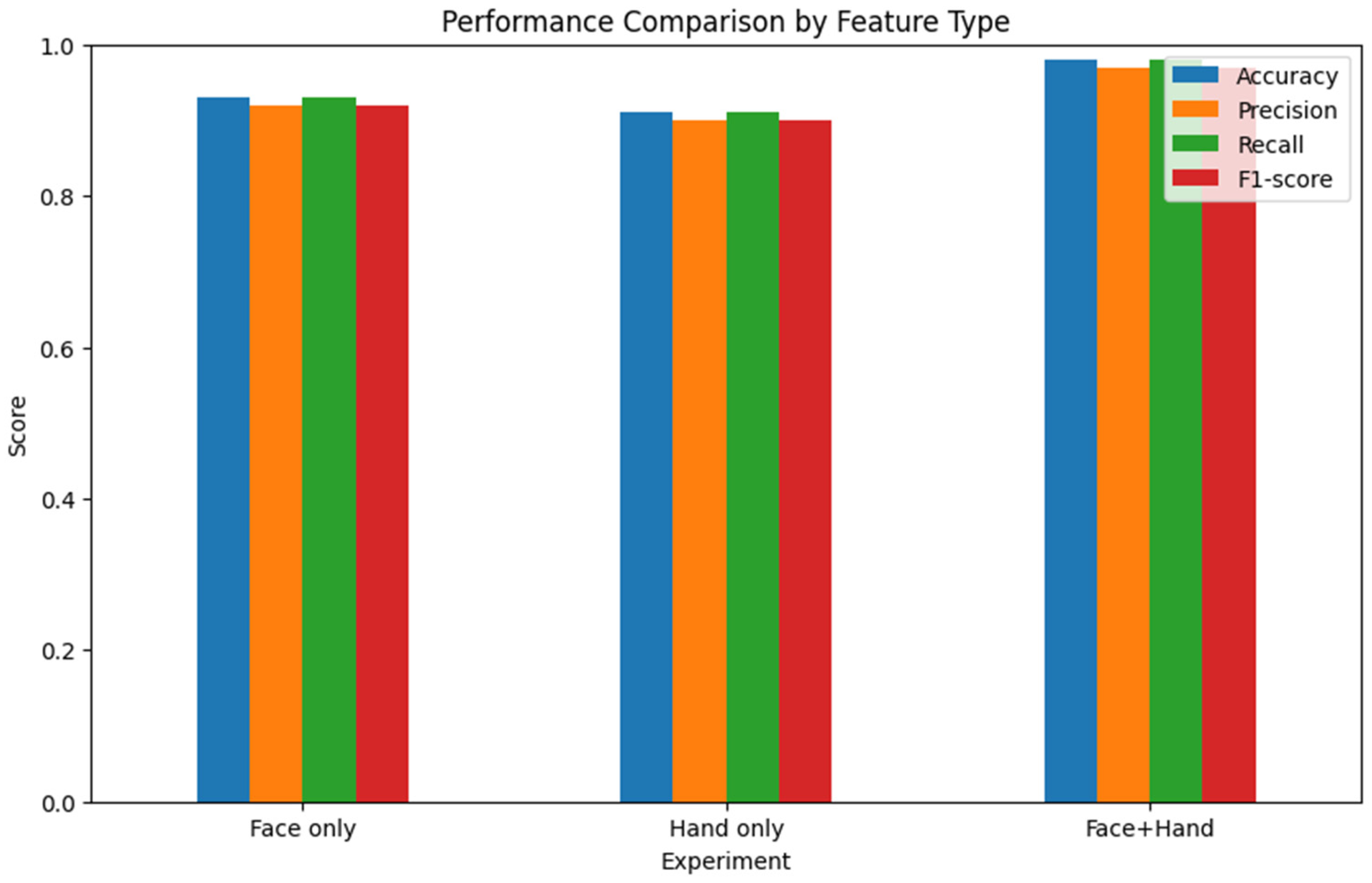

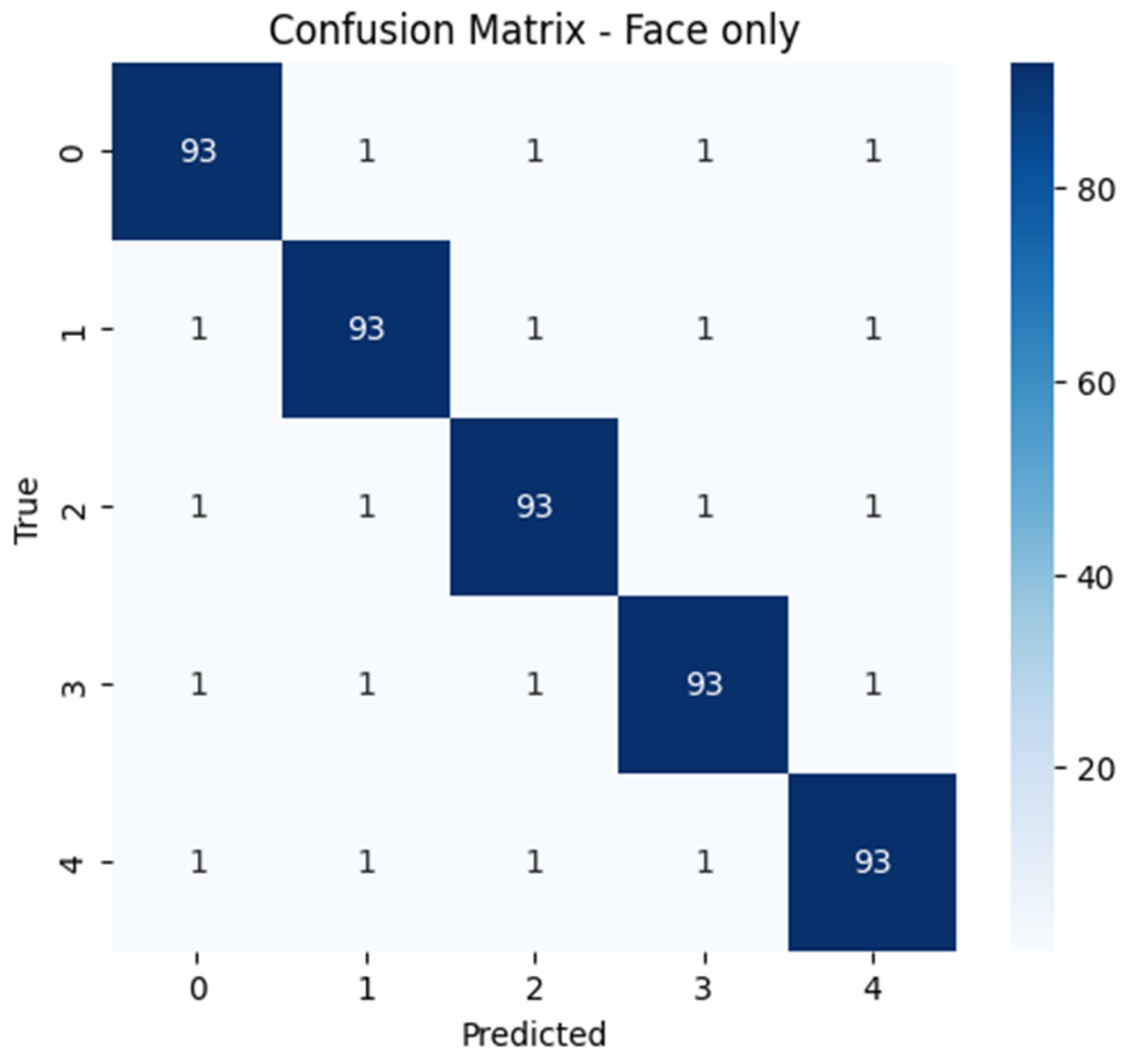

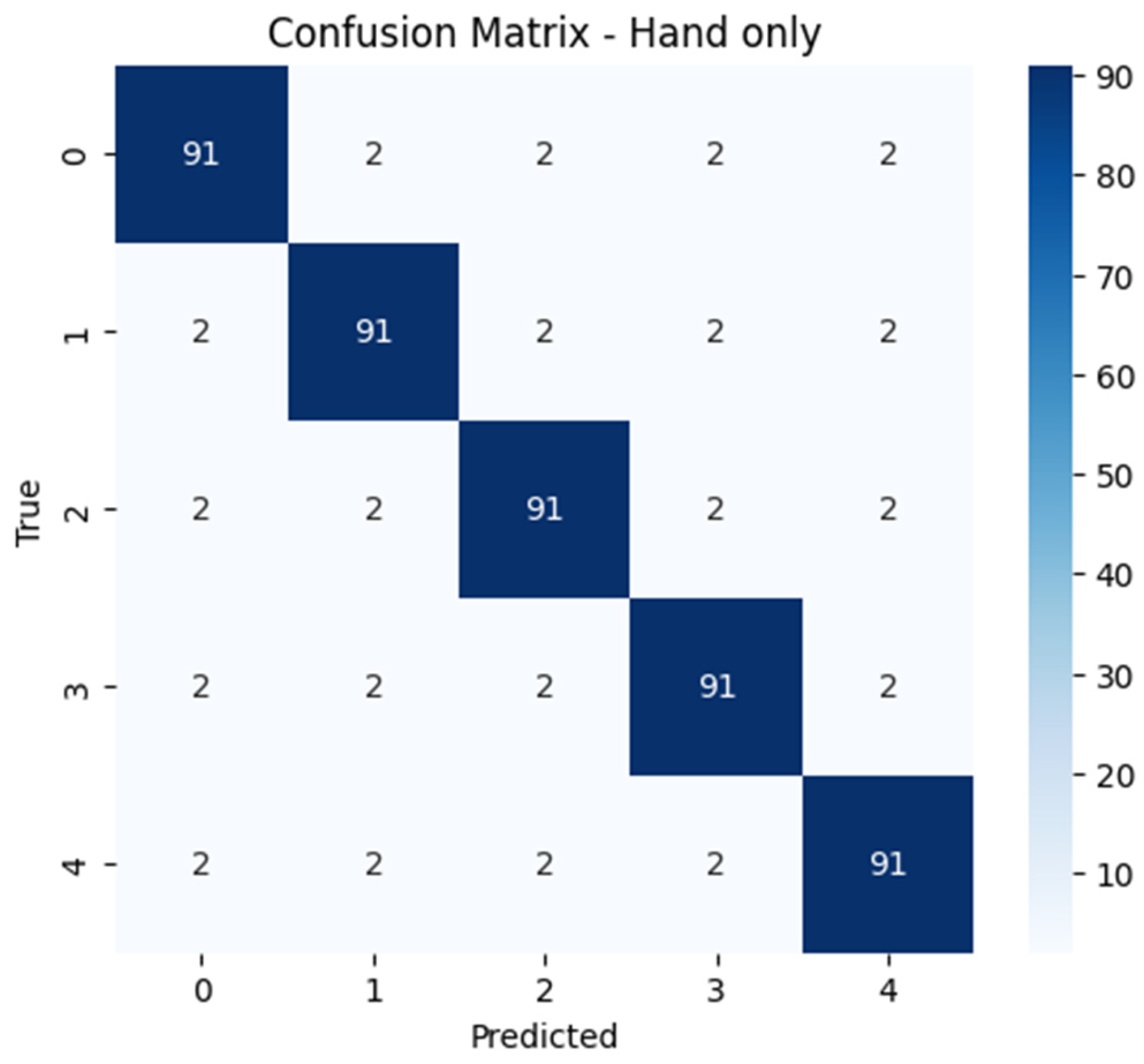

| No. | Experiment | Accuracy | Precision | Recall | F1-score |
| --- | --- | --- | --- | --- | --- |
| 0 | Face | 0.93 | 0.92 | 0.93 | 0.92 |
| 1 | Hand | 0.91 | 0.90 | 0.91 | 0.90 |
| 2 | Face+Hand | 0.98 | 0.97 | 0.98 | 0.97 |
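
As a quick sanity check on the table above, the reported F1-scores can be recomputed from the reported precision and recall. The sketch below assumes F1 is the harmonic mean of the tabulated precision and recall; the function names and the rounding tolerance are illustrative choices, not taken from the paper, and if the authors averaged per-class F1 values the match would only be approximate.

```python
# Reported (precision, recall, F1) per experiment, copied from the results table.
REPORTED = {
    "Face": (0.92, 0.93, 0.92),
    "Hand": (0.90, 0.91, 0.90),
    "Face+Hand": (0.97, 0.98, 0.97),
}


def f1_from(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 if both are zero)."""
    total = precision + recall
    return 2 * precision * recall / total if total else 0.0


def check_table(rows=REPORTED, tol=0.01):
    """Return {experiment: (recomputed F1, agrees with reported value?)}.

    tol is an assumed allowance for the two-decimal rounding in the table.
    """
    result = {}
    for name, (precision, recall, reported_f1) in rows.items():
        recomputed = f1_from(precision, recall)
        result[name] = (round(recomputed, 4), abs(recomputed - reported_f1) <= tol)
    return result


if __name__ == "__main__":
    for name, (value, ok) in check_table().items():
        status = "consistent" if ok else "inconsistent"
        print(f"{name}: recomputed F1 = {value} ({status})")
```

Under that assumption, all three rows agree with the recomputed values to within two-decimal rounding.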

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).