Submitted: 13 March 2026

Posted: 16 March 2026


Abstract

Keywords:

1. Introduction

- Building an integrated dataset of 77,620 abusive and 272,214 non-abusive text samples from three sources to create a diverse, imbalanced collection that enables comprehensive model evaluation across real-world scenarios.

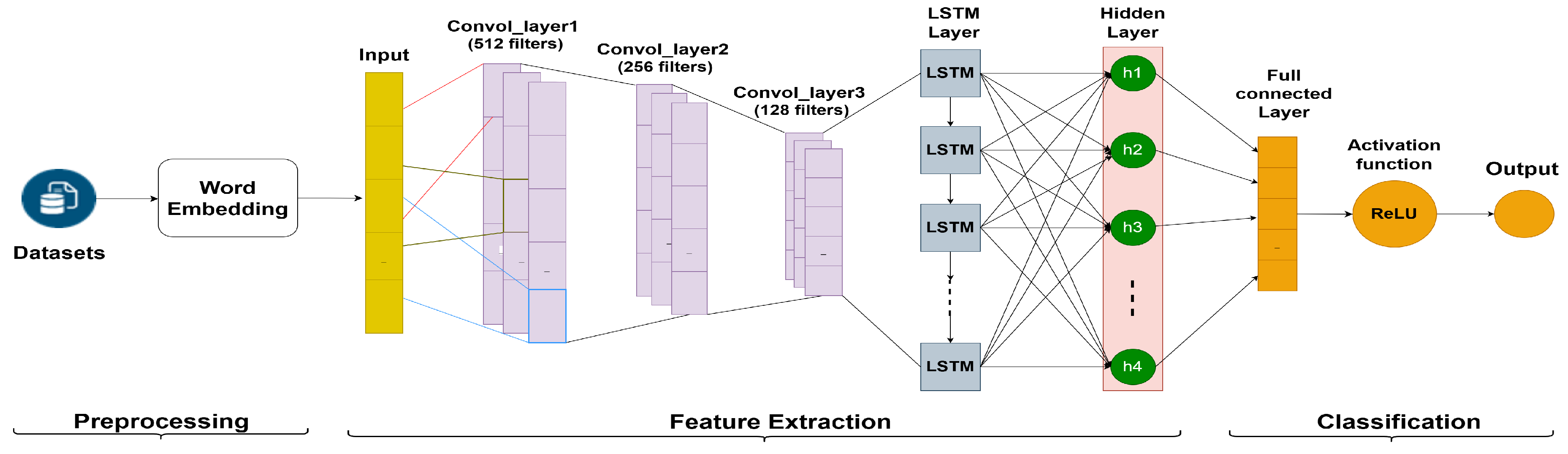

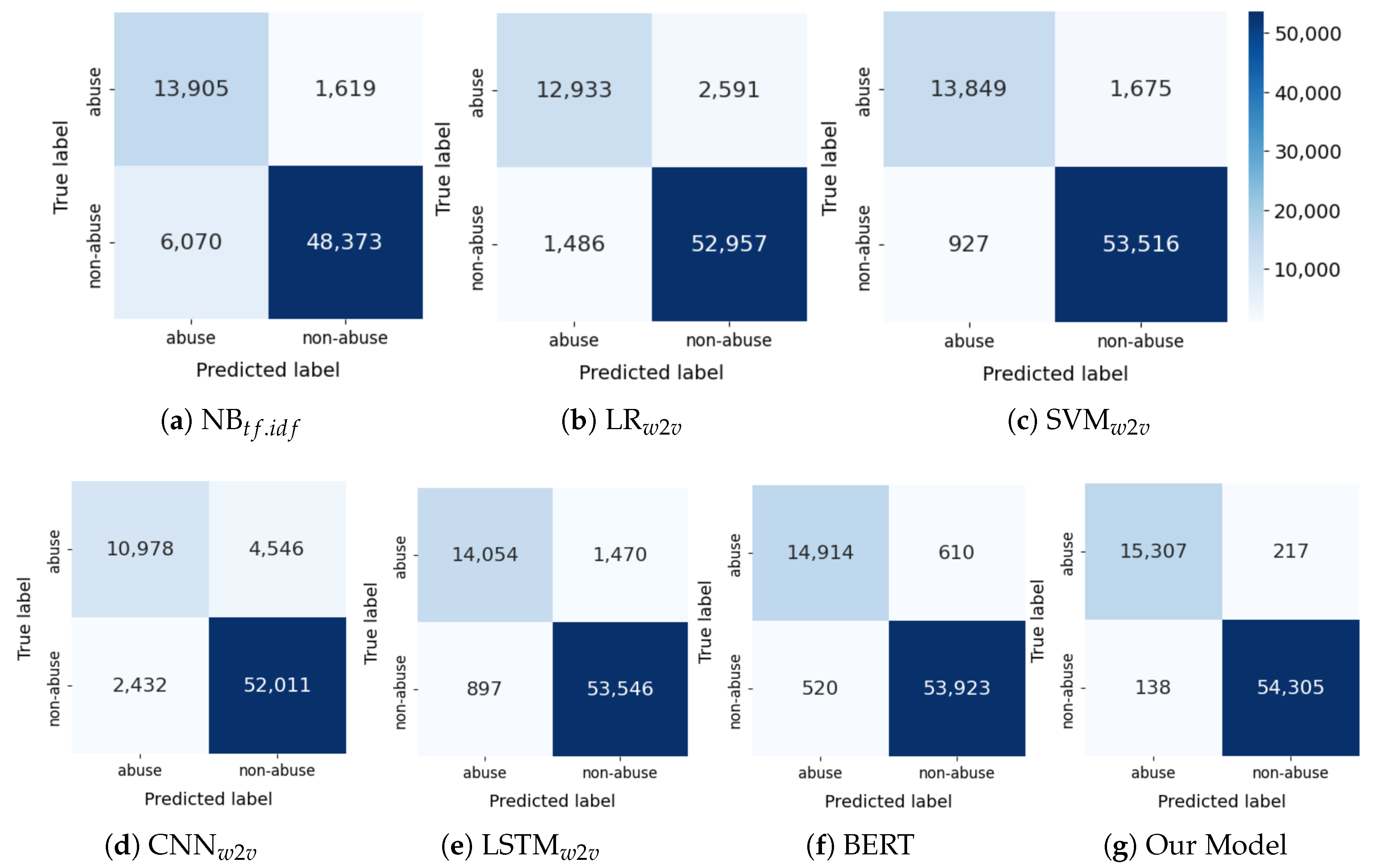

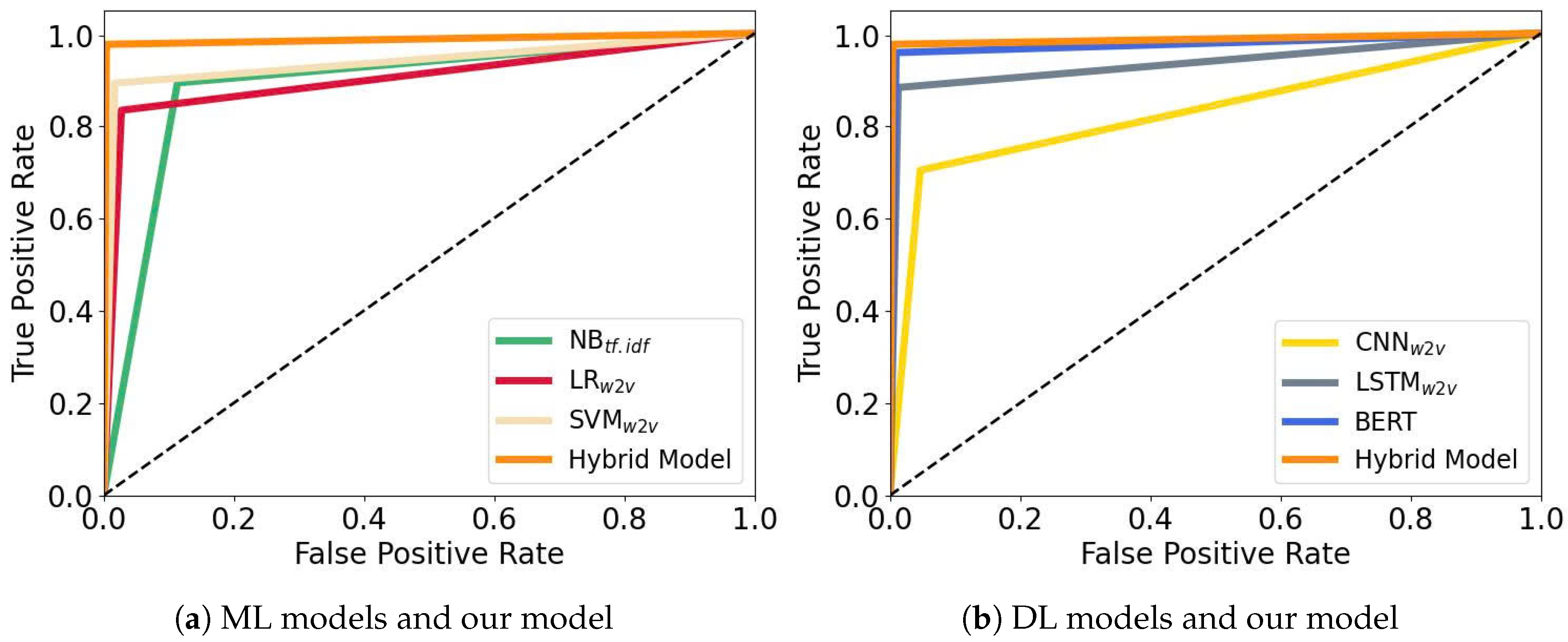

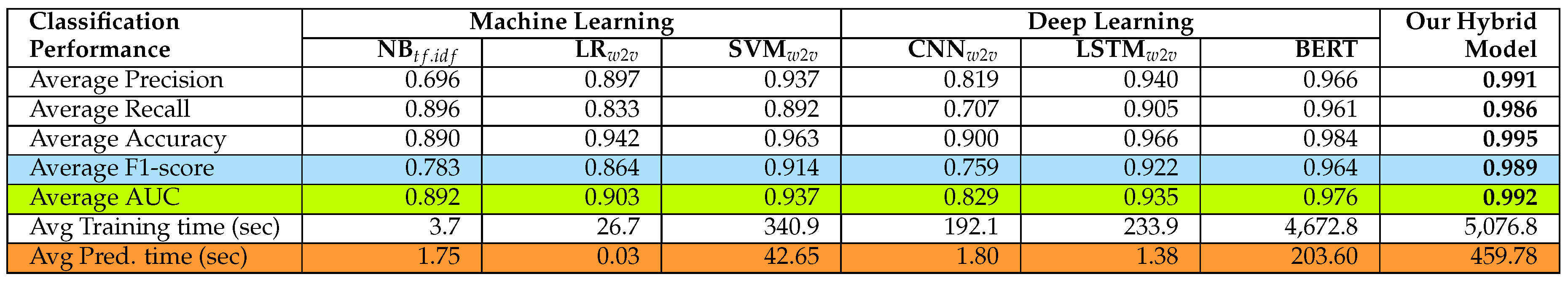

- Proposing a hybrid DL model that captures abusive text in both English and Romanized scripts by integrating BERT, CNN, LSTM, and the ReLU activation function. Under five-fold cross-validation, the model achieves a Precision of 0.991, Recall of 0.986, Accuracy of 0.995, F1-score of 0.989, and AUC of 0.992.

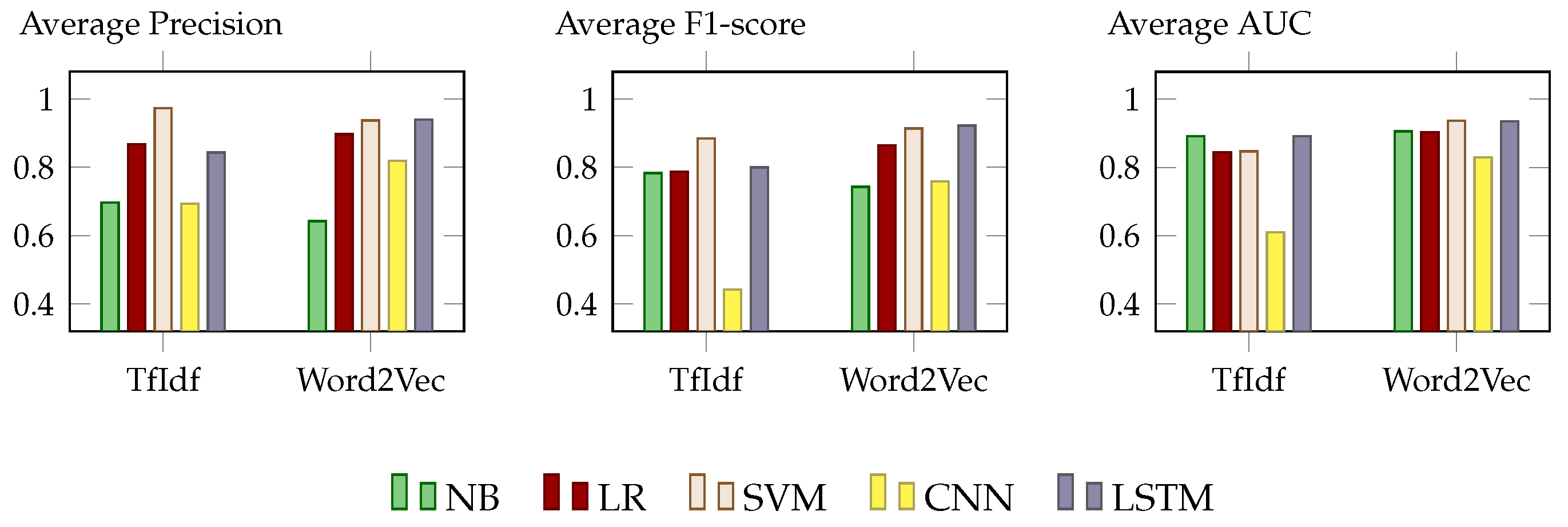

- Conducting a comprehensive comparison of the proposed model with traditional ML and standalone DL baselines on a diverse benchmark dataset comprising YouTube comments, forum discussions, and dark web posts, evaluated through five-fold cross-validation across multiple performance metrics.

2. Related Work

3. Methodology

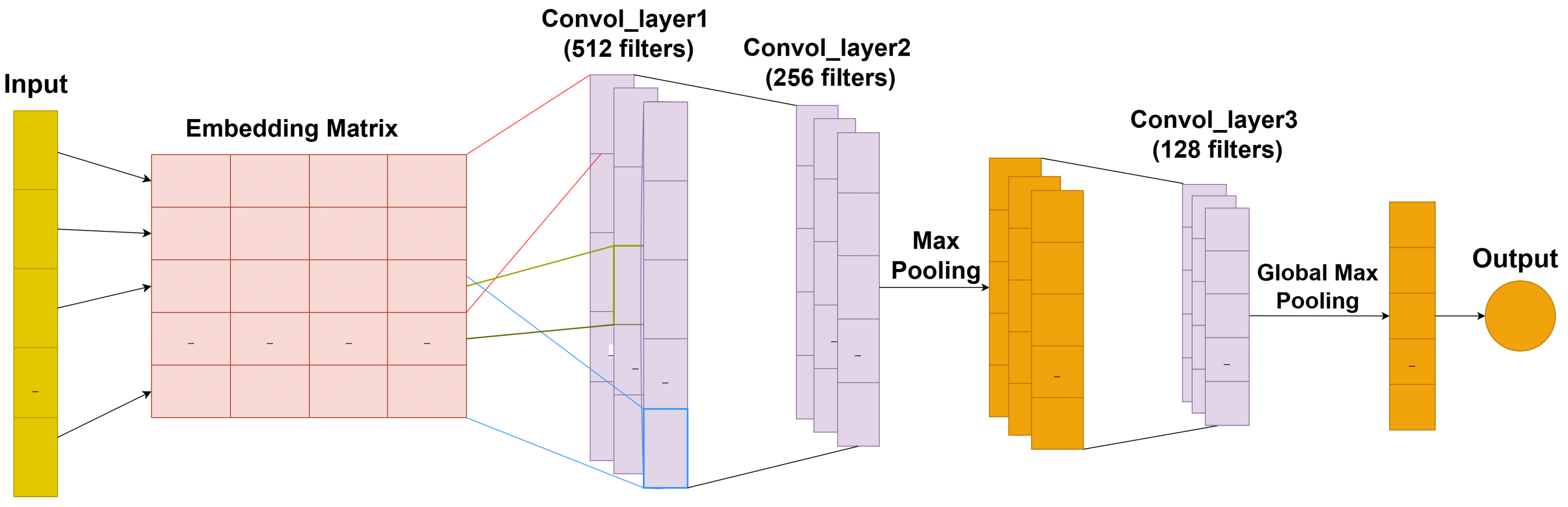

3.1. Word Embedding Layer

3.2. Convolutional Neural Networks Model

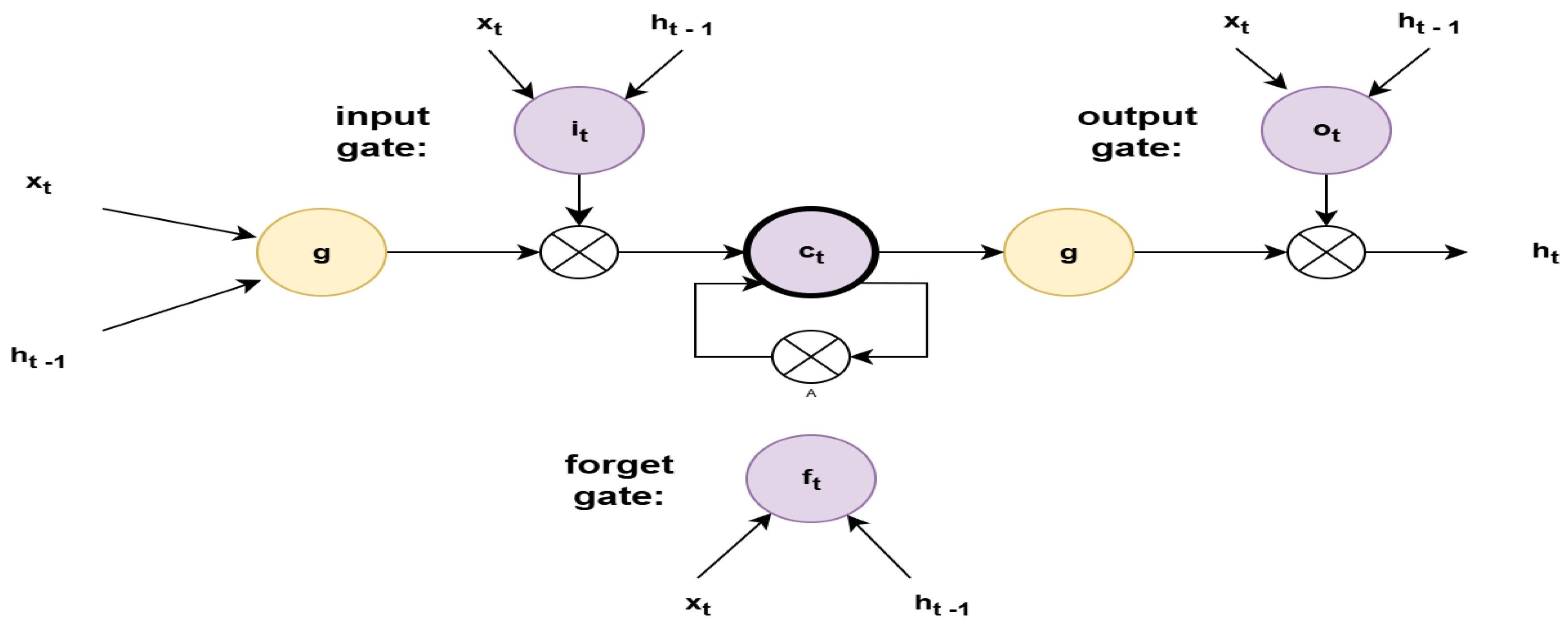

3.3. Long Short-Term Memory Model

3.4. Fully Connected Layer and Output

4. Model Evaluation

4.1. Our Dataset

- 1.

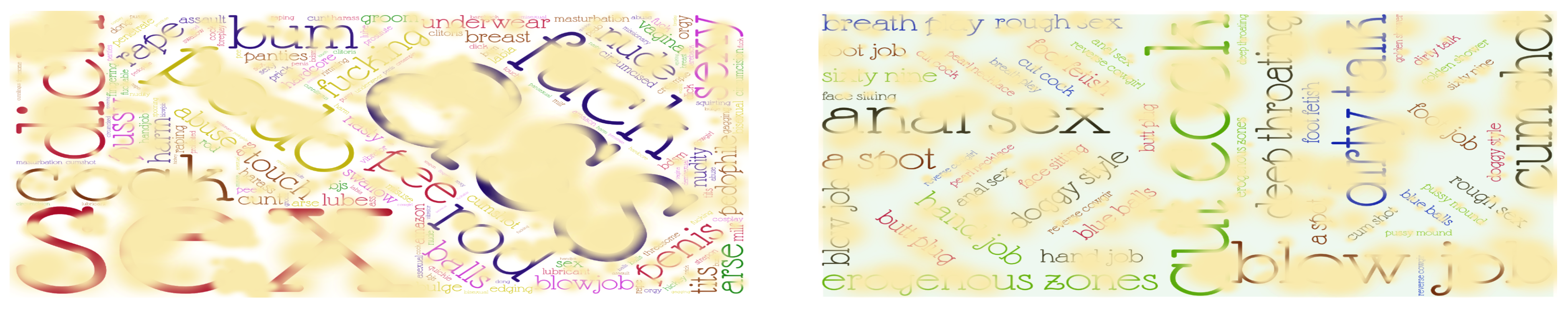

- Darkweb Dataset: We created the Darkweb dataset comprising 4,600 samples extracted from 352,000 dark web forum posts collected in 2022. It includes 2,500 child sexual abuse (CSA)-related and 2,100 non-CSA samples. Among them, 2,000 CSA and 100 non-CSA samples contain at least one sexual abuse phrase, see more details in [5]. Figure 4 presents blurred word clouds depicting single words and two-word phrases associated with sexual abuse, which were extracted from the post contents.

- 2.

- PAN12 Dataset1: Developed for the CLEF 2012 competition, this dataset aims to detect predatory behavior in online chats. It includes 198,054 conversations, consisting of 4,029 abusive and 194,025 non-abusive samples.

- 3.

- Roman Urdu Dataset: Contains 147,180 YouTube comments, evenly divided into abusive (73,590) and non-abusive (73,590) classes [31].
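The class balance of the three sources can be checked with a few lines of arithmetic. The following is a minimal sketch that uses only the counts reported above; the names `DATASETS`, `class_balance`, and `summarize` are illustrative, and treating CSA-related Darkweb samples as the "abusive" class is an assumption made here for the calculation.

```python
# Sample counts as reported in the dataset descriptions above.
# Assumption: CSA-related Darkweb samples are counted as "abusive".
DATASETS = {
    "Darkweb": {"abusive": 2_500, "non_abusive": 2_100},
    "PAN12": {"abusive": 4_029, "non_abusive": 194_025},
    "Roman Urdu": {"abusive": 73_590, "non_abusive": 73_590},
}


def class_balance(counts):
    """Return (total samples, fraction of samples labeled abusive)."""
    total = counts["abusive"] + counts["non_abusive"]
    return total, counts["abusive"] / total


def summarize(datasets):
    """Map each dataset name to its (total, abusive share) pair."""
    return {name: class_balance(counts) for name, counts in datasets.items()}


if __name__ == "__main__":
    for name, (total, share) in summarize(DATASETS).items():
        print(f"{name:<11} total={total:>7,}  abusive share={share:.1%}")
```

The per-source totals sum to 349,834 samples, which matches the size of the integrated dataset given in the Introduction (77,620 + 272,214). The class split obtained by summing the per-source labels differs from the integrated split, however, and this sketch does not attempt to model how labels were assigned during integration.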

4.2. Experimental Design and Metrics
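The five-fold cross-validation and the threshold-based metrics named in the Introduction (Precision, Recall, Accuracy, F1-score) can be illustrated as follows. This is a minimal sketch, not the authors' evaluation code: the function names, the stratified fold assignment, and the averaging of metrics across folds are assumptions, and `train_and_predict` is a hypothetical callback standing in for any classifier. AUC is left out because it requires prediction scores rather than hard labels.

```python
import random
from collections import defaultdict


def stratified_folds(labels, k=5, seed=0):
    """Assign sample indices to k folds so each fold keeps similar class ratios."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    rng = random.Random(seed)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal the shuffled indices of each class round-robin across folds.
        for pos, idx in enumerate(indices):
            folds[pos % k].append(idx)
    return folds


def binary_metrics(y_true, y_pred):
    """Precision, recall, F1 and accuracy, with 1 as the abusive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    accuracy = (tp + tn) / len(pairs) if pairs else 0.0
    return {"precision": precision, "recall": recall,
            "f1": f1, "accuracy": accuracy}


def cross_validate(samples, labels, train_and_predict, k=5, seed=0):
    """Average each metric over k held-out folds (averaging is an assumption)."""
    per_fold = []
    for held_out in stratified_folds(labels, k, seed):
        held = set(held_out)
        train_idx = [i for i in range(len(labels)) if i not in held]
        predictions = train_and_predict(
            [samples[i] for i in train_idx],
            [labels[i] for i in train_idx],
            [samples[i] for i in held_out],
        )
        per_fold.append(
            binary_metrics([labels[i] for i in held_out], predictions))
    return {name: sum(fold[name] for fold in per_fold) / k
            for name in per_fold[0]}
```

Stratifying the folds matters for imbalanced data such as PAN12, where a purely random split could leave a fold with very few abusive samples and make Precision and Recall unstable.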

4.3. Results and Discussion

5. Conclusions and Future Work

Acknowledgments

References

1. Chinivar, S.; et al. Online offensive behaviour in social media: Detection approaches, comprehensive review and future directions. Entertainment Computing 2023, 45, 100544.

2. Zuckerman, A. 60 Cyberbullying Statistics: 2020/2021 Data, Insights & Predictions. Accessed: 2025-04-02.

3. Petrosyan, A. Impact of Online Hate and Harassment in the U.S. 2020, 2025. Accessed: 2025-09-02.

4. Ngo, V.M.; Thorpe, C.; McKeever, S. Analysing Child Sexual Abuse Activities in the Dark Web based on an Efficient CSAM Detection Algorithm. In Proceedings of the 2nd Annual Trust and Safety Research Conference, Stanford, USA, September 2023.

5. Ngo, V.M.; McKeever, S.; Thorpe, C. Identifying Online Child Sexual Texts in Dark Web through Machine Learning and Deep Learning Algorithms. In Proceedings of the APWG.EU Technical Summit and Researchers Sync-Up (APWG.EU-Tech 2023). CEUR Workshop Proceedings, 2024; pp. 1–6.

6. Ngo, V.M.; Thorpe, C.; Dang, C.N.; McKeever, S. Investigation, Detection and Prevention of Online Child Sexual Abuse Materials: A Comprehensive Survey. In Proceedings of the 2022 RIVF Int. Conf. on Computing and Communication Technologies (RIVF), 2022; pp. 707–713.

7. Muneer, A.; Fati, S.M. A comparative analysis of machine learning techniques for cyberbullying detection on twitter. Future Internet 2020, 12.

8. Amrit, C.; Paauw, T.; Aly, R.; Lavric, M. Identifying child abuse through text mining and machine learning. Expert Systems with Applications 2017, 88, 402–418.

9. Talpur, B.A.; O’Sullivan, D. Cyberbullying severity detection: A machine learning approach. PLOS ONE 2020, 15, 1–19.

10. McKeever, S.; Thorpe, C.; Ngo, V.M. Determining Child Sexual Abuse Posts based on Artificial Intelligence. In Proceedings of the 2023 International Society for the Prevention of Child Abuse & Neglect Congress (ISPCAN-2023), Edinburgh, UK, 2023; pp. 24–27.

11. Wadud, M.A.H.; et al. How can we manage Offensive Text in Social Media - A Text Classification Approach using LSTM-BOOST. International Journal of Information Management Data Insights 2022, 2, 100095.

12. Chadaga, K.; et al. An Explainable Framework to Predict Child Sexual Abuse Awareness in People Using Supervised Machine Learning Models. Journal of Technology in Behavioral Science 2023, 1–17.

13. Li, T.; Zeng, Z.; Sun, S. A two-stage cyberbullying detection based on multi-view features and decision fusion strategy. Applied Intelligence 2025, 55, 294.

14. Marshan, A.; Nizar, F.N.M.; Ioannou, A.; Spanaki, K. Comparing Machine Learning and Deep Learning Techniques for Text Analytics: Detecting the Severity of Hate Comments Online. Information Systems Frontiers 2023.

15. Ngo, V.M.; Gajula, R.; Thorpe, C.; McKeever, S. Discovering child sexual abuse material creators’ behaviors and preferences on the dark web. Child Abuse & Neglect 2024, 147, 106558.

16. Dang, C.N.; Moreno-García, M.N.; De la Prieta, F. Hybrid deep learning models for sentiment analysis. Complexity 2021, 2021, 9986920.

17. Mazzarello, O.; Gagné, M.E.; Langevin, R. Risk factors for sexual revictimization and dating violence in young adults with a history of child sexual abuse. Journal of Child & Adolescent Trauma 2022, 15, 1113–1125.

18. Owusu-Addo, E.; et al. Prevalence and determinants of sexual abuse among adolescent girls during the COVID-19 lockdown and school closures in Ghana: A mixed method study. Child Abuse & Neglect 2023, 135, 105997.

19. Aguerri, J.C.; Molnar, L.; Miró-Llinares, F. Old crimes reported in new bottles: the disclosure of child sexual abuse on Twitter through the case #MeTooInceste. Social Network Analysis and Mining 2023, 13, 27.

20. Gangwar, A.; González-Castro, V.; Alegre, E.; Fidalgo, E. AttM-CNN: Attention and metric learning based CNN for pornography, age and Child Sexual Abuse (CSA) Detection in images. Neurocomputing 2021, 445, 81–104.

21. Kissos, L.; Goldner, L.; Butman, M.; Eliyahu, N.; Lev-Wiesel, R. Can artificial intelligence achieve human-level performance? A pilot study of childhood sexual abuse detection in self-figure drawings. Child Abuse & Neglect 2020, 109, 104755.

22. Laranjeira, C.; Macedo, J.; Avila, S.; Santos, J. Seeing without Looking: Analysis Pipeline for Child Sexual Abuse Datasets. In Proceedings of the 2022 ACM Conf. on Fairness, Accountability, and Transparency (FAccT’22). ACM, 2022; pp. 2189–2205.

23. Alam, I.; Basit, A.; Ziar, R.A. Utilizing Age-Adaptive Deep Learning Approaches for Detecting Inappropriate Video Content. Human Behavior and Emerging Technologies 2024, 2024, 7004031.

24. Hole, M.; Frank, R.; Logos, K.; Westlake, B.; Michalski, D.; Bright, D.; Afana, E.; Brewer, R.; Ross, A.; Swearingen, T.; et al. Developing automated methods to detect and match face and voice biometrics in child sexual abuse videos. Trends and Issues in Crime and Criminal Justice 2022, 1–15.

25. Cook, D.; Zilka, M.; DeSandre, H.; Giles, S.; Maskell, S. Protecting Children from Online Exploitation: Can a Trained Model Detect Harmful Communication Strategies? In Proceedings of the 2023 AAAI/ACM Conference on AI, Ethics, and Society (AIES ’23). ACM, 2023; pp. 5–14.

26. Puentes, J.; et al. Guarding the Guardians: Automated Analysis of Online Child Sexual Abuse. In Proceedings of the 2023 IEEE/CVF International Conference on Computer Vision Workshops (ICCVW), 2023; pp. 3730–3734.

27. Kaur, S.; Singh, S.; Kaushal, S. Deep learning-based approaches for abusive content detection and classification for multi-class online user-generated data. International Journal of Cognitive Computing in Engineering 2024, 5, 104–122.

28. Mosa, M.A. Optimizing text classification accuracy: a hybrid strategy incorporating enhanced NSGA-II and XGBoost techniques for feature selection. Progress in Artificial Intelligence 2025.

29. Yamashita, R.; Nishio, M.; Do, R.K.G.; Togashi, K. Convolutional neural networks: an overview and application in radiology. Insights into Imaging 2018, 9, 611–629.

30. Palangi, H.; et al. Deep sentence embedding using long short-term memory networks: Analysis and application to information retrieval. IEEE/ACM Transactions on Audio, Speech, and Language Processing 2016, 24, 694–707.

31. Akhter, M.P.; Jiangbin, Z.; Naqvi, I.R.; Abdelmajeed, M.; Sadiq, M.T. Automatic detection of offensive language for Urdu and Roman Urdu. IEEE Access 2020, 8, 91213–91226.

32. Ngo, V.M.; Cao, T.H. Semantic Search by Latent Ontological Features. New Generation Computing 2012, 30, 53–71.

33. Ngo, V.M.; Cao, T.H. A Generalized Vector Space Model for Ontology-Based Information Retrieval. Vietnamese Journal on Information Technologies and Communications 2009, 22, 43–53.

34. Ngo, V.M.; Cao, T.H.; Le, T. WordNet-Based Information Retrieval Using Common Hypernyms and Combined Features. In Proceedings of the 5th International Conference on Intelligent Computing and Information Systems (ICICIS 2011). ACM, 2011; pp. 1–6.

35. Ngo, V.M.; Cao, T.H. Discovering Latent Concepts and Exploiting Ontological Features for Semantic Text Search. In Proceedings of the 5th Int. Joint Conf. on Natural Language Processing (IJCNLP 2011). ACL, 2011; pp. 571–579.

36. Ngo, V.M. Discovering Latent Information by Spreading Activation Algorithm for Document Retrieval. International Journal of Artificial Intelligence & Applications 2014, 5, 23–34.

37. Dao, P.Q.; Roantree, M.; Ngo, V.M. Enhanced Dual Transformer Contrastive Network for Multimodal Sentiment Analysis. In Proceedings of the 17th International Conference on Management of Digital EcoSystems (MEDES’25). Springer, 2026, CCIS, pp. 1–14.

38. Dao, P.Q.; Ngo, V.M. Exploring, Investigating and Exploiting Sentiment Analysis Systems. Preprints.org 2025, 1–23.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).