Submitted: 13 March 2026

Posted: 17 March 2026

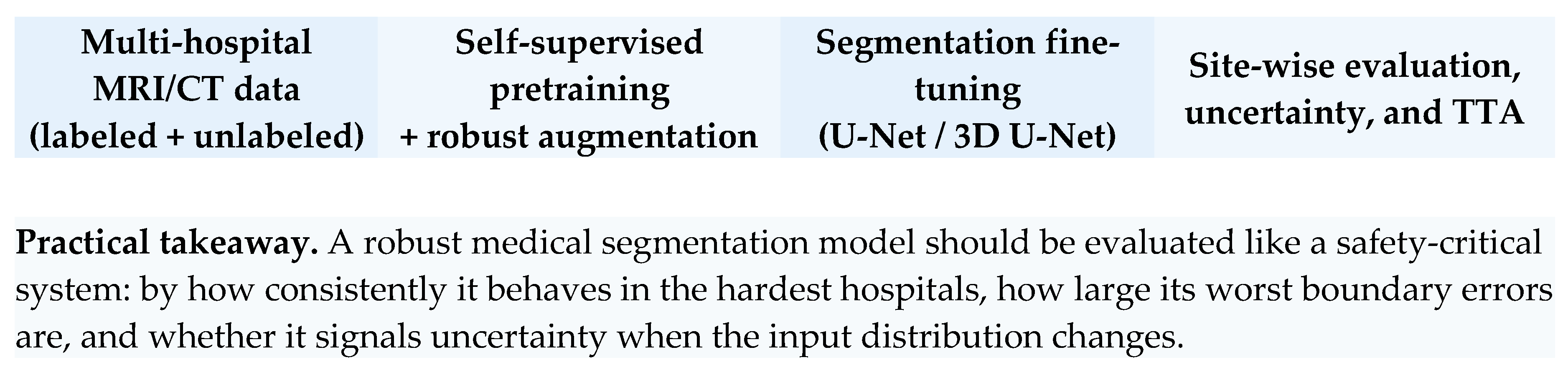

Abstract

Keywords:

1. Introduction

2. Contributions of This Preprint

3. Literature Review

4. Problem Formulation

5. Proposed Framework

6. Experimental Design

7. Evaluation Metrics and Reporting

8. Expected Findings and Interpretation

9. Failure Modes and Clinical Risk Analysis

10. Reproducibility and Deployment Considerations

11. Limitations and Future Research

12. Conclusions

| Technique | Primary target | Expected robustness benefit |
|---|---|---|
| Intensity transforms | Scanner gain and contrast shift | Reduces sensitivity to brightness and intensity variation across hospitals |
| Noise and blur | Motion, low dose, reconstruction artifacts | Improves stability under degraded image quality |
| Style / histogram perturbation | Vendor and protocol appearance | Discourages shortcut learning from site-specific style cues |
| Frequency mixing | Reconstruction kernels and texture signatures | Promotes invariance to spectral differences linked to scanners |
| Self-supervised pretraining | Limited labels and site-specific features | Learns broader anatomical representations before segmentation |
| Test-time adaptation | Residual target mismatch | Allows cautious alignment to new hospitals without target labels |
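
To make the first rows of this table concrete, the following is a minimal NumPy sketch of three of the listed augmentation families: an intensity transform, additive noise, and frequency mixing. It is an illustration only, not the preprint's implementation. The function names, the parameter ranges, the assumption that volumes are scaled to [0, 1], and the choice of low-frequency Fourier amplitude mixing as the form of "frequency mixing" are all assumptions introduced here.

```python
import numpy as np


def random_intensity_transform(volume, rng, gain_range=(0.9, 1.1), gamma_range=(0.7, 1.5)):
    """Random gain and gamma on a volume assumed to be scaled to [0, 1]."""
    gain = rng.uniform(*gain_range)    # hypothetical range, mimics scanner gain shift
    gamma = rng.uniform(*gamma_range)  # hypothetical range, mimics contrast shift
    out = np.clip(volume * gain, 0.0, 1.0) ** gamma
    return out.astype(volume.dtype)


def add_gaussian_noise(volume, rng, sigma_max=0.05):
    """Additive Gaussian noise with a randomly drawn standard deviation."""
    sigma = rng.uniform(0.0, sigma_max)
    noisy = volume + rng.normal(0.0, sigma, size=volume.shape)
    return np.clip(noisy, 0.0, 1.0).astype(volume.dtype)


def fourier_amplitude_mix(source, reference, rng, beta=0.1, max_lam=1.0):
    """Blend the low-frequency amplitude of `reference` into `source`.

    The phase of `source` is kept, so anatomy is preserved while
    appearance statistics move toward the reference scan.
    The output is not re-clipped to [0, 1].
    """
    if source.shape != reference.shape:
        raise ValueError("source and reference must share a shape")
    fs = np.fft.fftshift(np.fft.fftn(source))
    fr = np.fft.fftshift(np.fft.fftn(reference))
    amp_s, phase_s = np.abs(fs), np.angle(fs)
    amp_r = np.abs(fr)
    lam = rng.uniform(0.0, max_lam)  # mixing strength; 0 leaves the source unchanged
    # Box centred on the zero-frequency bin; beta sets its relative size.
    box = tuple(
        slice(n // 2 - int(beta * n) // 2, n // 2 + int(beta * n) // 2 + 1)
        for n in source.shape
    )
    amp_mix = amp_s.copy()
    amp_mix[box] = (1.0 - lam) * amp_s[box] + lam * amp_r[box]
    mixed = np.fft.ifftn(np.fft.ifftshift(amp_mix * np.exp(1j * phase_s)))
    return np.real(mixed).astype(source.dtype)


def augment(volume, reference, rng):
    """Compose the three perturbations in one possible order."""
    out = fourier_amplitude_mix(volume, reference, rng)
    out = random_intensity_transform(np.clip(out, 0.0, 1.0), rng)
    return add_gaussian_noise(out, rng)
```

In this sketch, `reference` would be a scan drawn from a different training site than `volume`. Segmentation labels are left untouched because none of the three operations moves voxels spatially.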

| Metric | What it measures | Why it matters for deployment |
|---|---|---|
| Dice coefficient | Region overlap | Standard segmentation quality; easy to compare across studies |
| Hausdorff distance (95%) | Extreme boundary deviation | Captures clinically risky contour failures |
| Sensitivity / recall | Missed tumor burden | Important for under-segmentation risk |
| Precision | False positive burden | Useful when over-segmentation affects treatment planning |
| Expected Calibration Error | Confidence reliability | Supports safe triage and human-in-the-loop review |
| Worst-site Dice | Minimum hospital performance | Prevents good averages from hiding one catastrophic site |
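
As a rough illustration of how several of these metrics could be computed, the sketch below implements the Dice coefficient, sensitivity and precision, worst-site Dice, and a binary Expected Calibration Error in NumPy. The function names, the convention of returning Dice 1.0 when both masks are empty, the 0.5 decision threshold, and the use of ten equal-width confidence bins are assumptions made here, not details taken from the preprint. The 95th-percentile Hausdorff distance is omitted because it needs surface-distance computations beyond a short example.

```python
import numpy as np


def dice_coefficient(pred, target):
    """Dice overlap between two binary masks."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    denom = pred.sum() + target.sum()
    if denom == 0:
        return 1.0  # assumed convention: two empty masks agree perfectly
    return float(2.0 * np.logical_and(pred, target).sum() / denom)


def sensitivity_precision(pred, target):
    """Return (sensitivity, precision); NaN where a denominator is zero."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    tp = np.logical_and(pred, target).sum()
    fn = np.logical_and(~pred, target).sum()
    fp = np.logical_and(pred, ~target).sum()
    sens = float(tp / (tp + fn)) if (tp + fn) > 0 else float("nan")
    prec = float(tp / (tp + fp)) if (tp + fp) > 0 else float("nan")
    return sens, prec


def worst_site_dice(dice_scores, site_ids):
    """Mean Dice per site, then the site with the lowest mean."""
    per_site = {}
    for score, site in zip(dice_scores, site_ids):
        per_site.setdefault(site, []).append(score)
    site_means = {site: float(np.mean(v)) for site, v in per_site.items()}
    worst = min(site_means, key=site_means.get)
    return worst, site_means[worst]


def expected_calibration_error(probs, labels, n_bins=10):
    """Binary ECE over voxels from predicted foreground probabilities."""
    probs = np.asarray(probs, dtype=float).ravel()
    labels = np.asarray(labels).ravel().astype(bool)
    pred = probs >= 0.5                        # assumed decision threshold
    conf = np.where(pred, probs, 1.0 - probs)  # confidence in the predicted class
    correct = (pred == labels).astype(float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    bin_idx = np.digitize(conf, edges[1:-1])   # bin index in 0 .. n_bins - 1
    ece = 0.0
    for b in range(n_bins):
        mask = bin_idx == b
        if mask.any():
            # Weight each bin's accuracy/confidence gap by its share of voxels.
            ece += mask.mean() * abs(correct[mask].mean() - conf[mask].mean())
    return float(ece)
```

Reporting the pair returned by `worst_site_dice` alongside the pooled mean keeps a single failing hospital visible even when the average looks strong.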

References

- Çiçek, Ö., Abdulkadir, A., Lienkamp, S. S., Brox, T., & Ronneberger, O. (2016). 3D U-Net: Learning dense volumetric segmentation from sparse annotation. In Medical Image Computing and Computer-Assisted Intervention (MICCAI) (pp. 424–432).

- Isensee, F., Jaeger, P. F., Kohl, S. A. A., Petersen, J., & Maier-Hein, K. H. (2021). nnU-Net: A self-configuring method for deep learning-based biomedical image segmentation. Nature Methods, 18(2), 203–211.

- Kar, N. K., Jana, S., Rahman, A., Ashokrao, P. R., & Mangai, R. A. (2024). Automated intracranial hemorrhage detection using deep learning in medical image analysis. In 2024 International Conference on Data Science and Network Security (ICDSNS) (pp. 1–6). IEEE.

- LeCun, Y., Bengio, Y., & Hinton, G. (2015). Deep learning. Nature, 521(7553), 436–444.

- Litjens, G., Kooi, T., Bejnordi, B. E., Setio, A. A. A., Ciompi, F., Ghafoorian, M., van der Laak, J. A. W. M., van Ginneken, B., & Sánchez, C. I. (2017). A survey on deep learning in medical image analysis. Medical Image Analysis, 42, 60–88.

- Ronneberger, O., Fischer, P., & Brox, T. (2015). U-Net: Convolutional networks for biomedical image segmentation. In Medical Image Computing and Computer-Assisted Intervention (MICCAI) (pp. 234–241).

- Shen, D., Wu, G., & Suk, H.-I. (2017). Deep learning in medical image analysis. Annual Review of Biomedical Engineering, 19, 221–248.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).