Submitted: 12 March 2026

Posted: 16 March 2026

Abstract

Keywords:

1. Introduction

- 1.

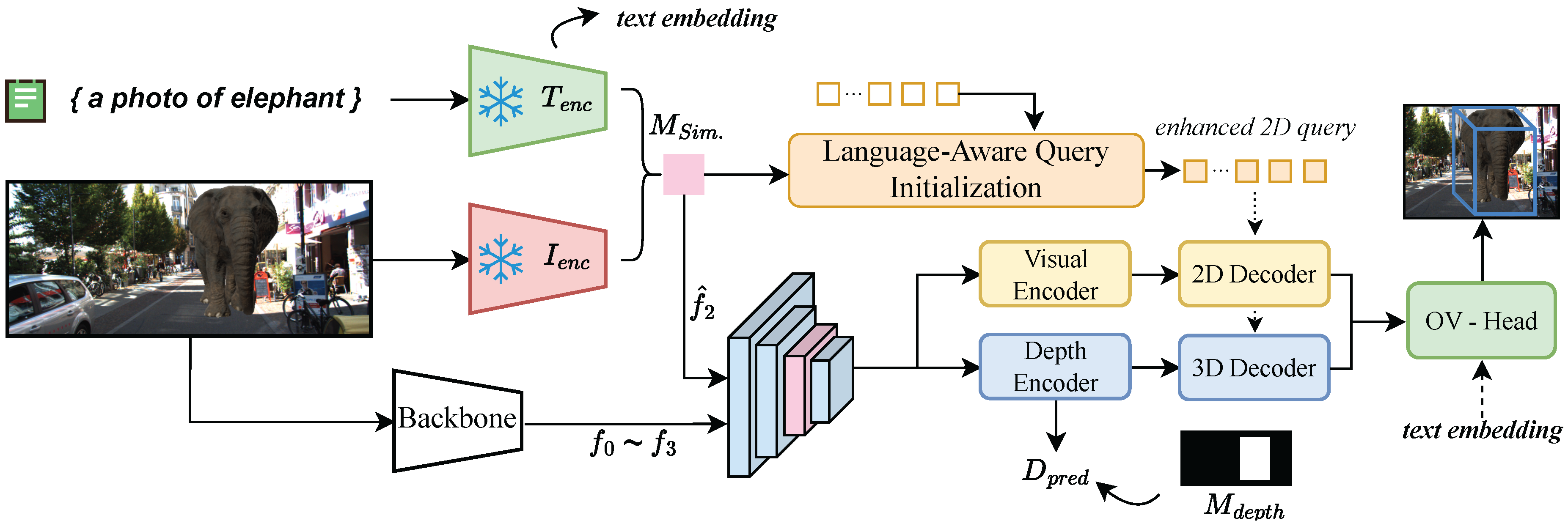

- Unified End-to-End Architecture: We propose CLIP-Mono3D, an end-to-end framework that unifies semantic and geometric reasoning. By introducing a Cross-Modal Semantic-Geometric Fusion module, we inject fine-grained semantic clues into geometric features via a lightweight residual connection, enhancing semantic awareness without disrupting pre-trained geometric cues.

- 2.

- Language-Aware Query Initialization: We design a novel query initialization strategy that converts 2D semantic heatmaps into explicit 3D query positions. This mechanism significantly improves 3D center localization and recall for open-vocabulary objects compared to standard learned queries.

- 3.

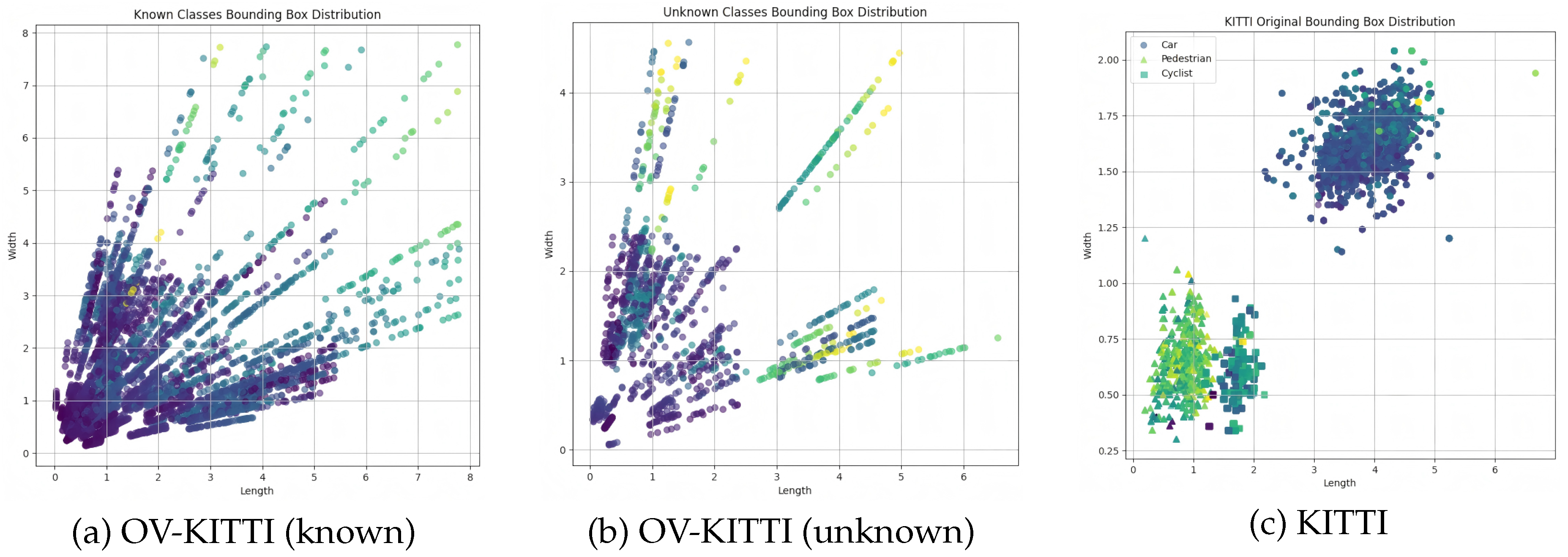

- OV-KITTI Benchmark and Evaluation: We introduce OV-KITTI, a large-scale benchmark with controlled semantic and size distributions. Extensive experiments on OV-KITTI, KITTI, and Argoverse demonstrate that CLIP-Mono3D achieves competitive performance in both closed- and open-vocabulary settings, paving the way for deployment in truly open-world scenarios.
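The first two contributions can be illustrated with a minimal sketch. It is not the authors' implementation: the function names `residual_fuse` and `heatmap_to_queries`, the scalar `scale`, the top-k peak selection, the per-pixel depth map, and the pinhole intrinsics `fx`, `fy`, `cx`, `cy` are all assumptions introduced here for illustration. The sketch only shows the general idea of adding semantic features to geometric ones in residual form and of lifting high-response heatmap pixels to 3D positions.

```python
from typing import List, Tuple

Grid = List[List[float]]


def residual_fuse(geo: Grid, sem: Grid, scale: float = 0.1) -> Grid:
    """Add a scaled semantic map onto geometric features (residual form).

    Hypothetical helper: with scale = 0 the output equals the geometric
    input, so the geometric features pass through unchanged.
    """
    return [
        [g + scale * s for g, s in zip(geo_row, sem_row)]
        for geo_row, sem_row in zip(geo, sem)
    ]


def heatmap_to_queries(
    heatmap: Grid,
    depth: Grid,
    fx: float,
    fy: float,
    cx: float,
    cy: float,
    k: int = 2,
) -> List[Tuple[float, float, float]]:
    """Back-project the k highest-scoring heatmap pixels to 3D points.

    Assumes a per-pixel depth estimate and a pinhole camera; both are
    illustrative assumptions, not details taken from the paper.
    """
    scored = [
        (score, u, v)
        for v, row in enumerate(heatmap)
        for u, score in enumerate(row)
    ]
    # Highest semantic response first.
    scored.sort(key=lambda item: item[0], reverse=True)
    queries = []
    for _, u, v in scored[:k]:
        z = depth[v][u]
        # Pinhole model: pixel (u, v) at depth z -> camera-frame (x, y, z).
        queries.append(((u - cx) * z / fx, (v - cy) * z / fy, z))
    return queries


# Toy 2x3 heatmap and depth map (made-up values).
heatmap = [[0.1, 0.9, 0.2],
           [0.3, 0.4, 0.8]]
depth = [[10.0, 20.0, 30.0],
         [12.0, 15.0, 25.0]]
queries = heatmap_to_queries(heatmap, depth, fx=2.0, fy=2.0, cx=1.0, cy=1.0, k=2)
print(queries)
```

The residual form is what lets the semantic branch be added without overwriting the geometric features: when the semantic term is zero, the fused map is identical to the input.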

2. Related Work

2.1. Monocular 3D Object Detection

2.2. Open-Vocabulary Object Detection

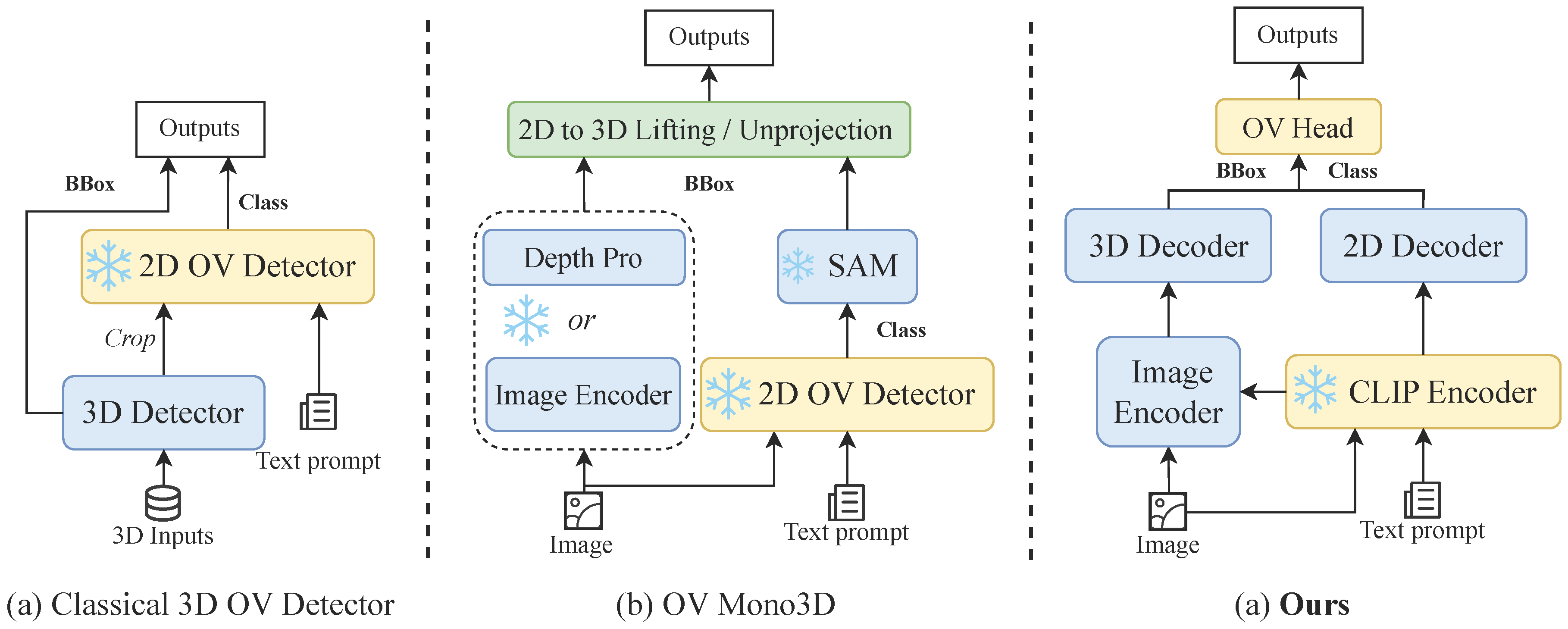

2.3. Open-Vocabulary 3D Object Detection

3. Method

3.1. Task Definition and Overall Architecture

3.2. Cross-Modal Feature Fusion

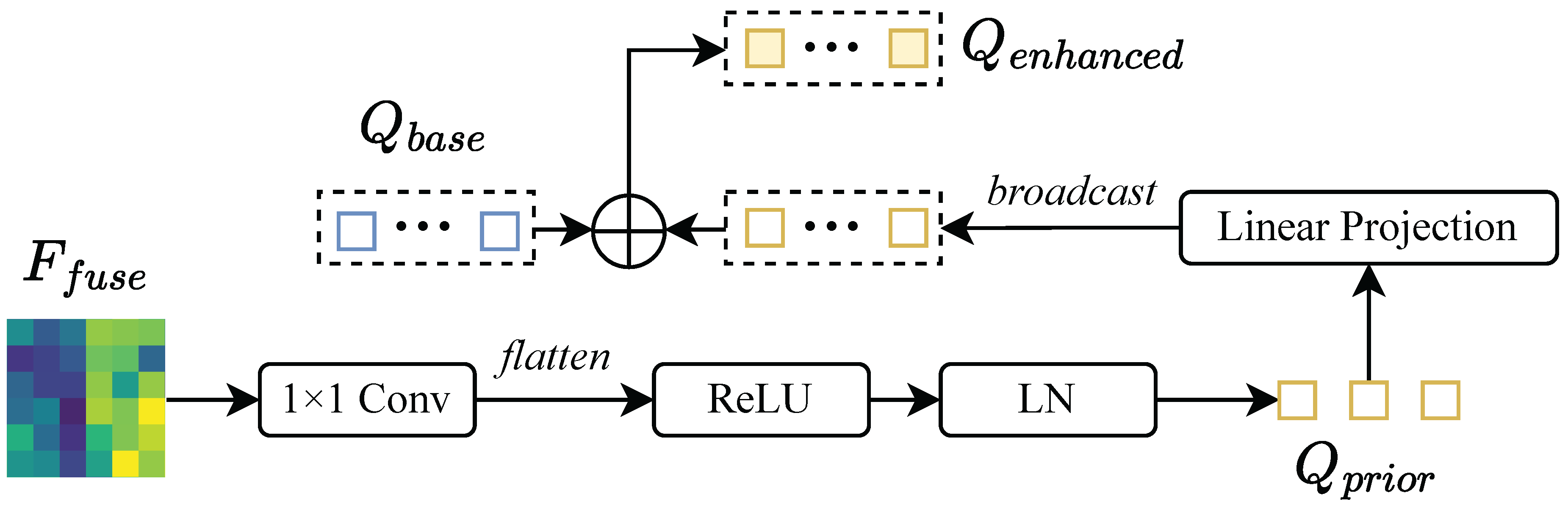

3.3. Language-Aware Query Initialization

3.4. Open-Vocabulary Detection Head and Loss

4. OV-KITTI Benchmark

4.1. Dataset Construction

4.2. Category Statistics

5. Experiments

5.1. Experimental Setup

5.1.1. Datasets and Metrics

5.1.2. Settings

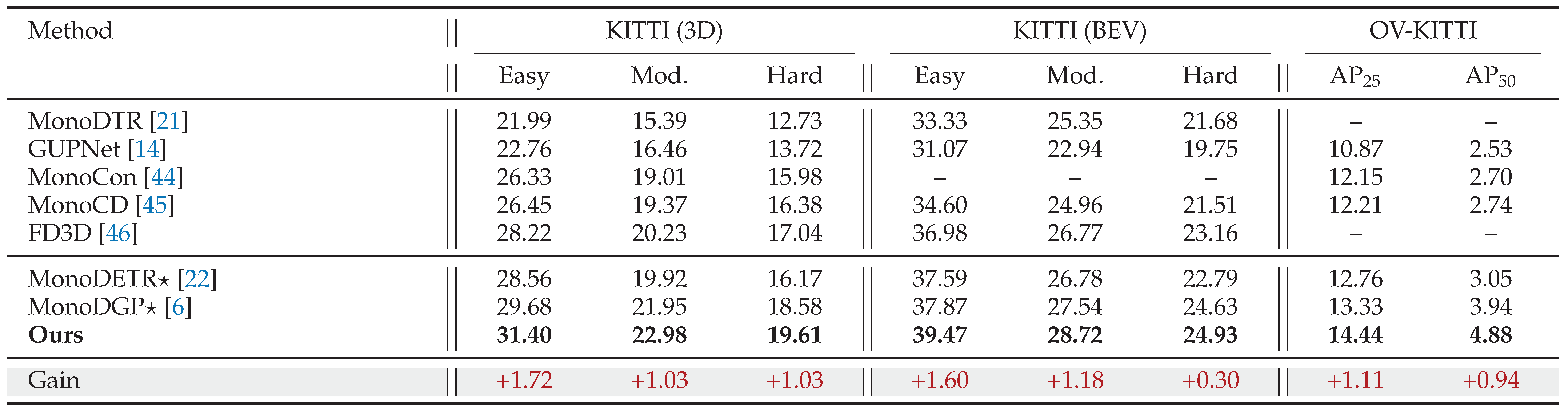

5.2. Closed-Vocabulary Results

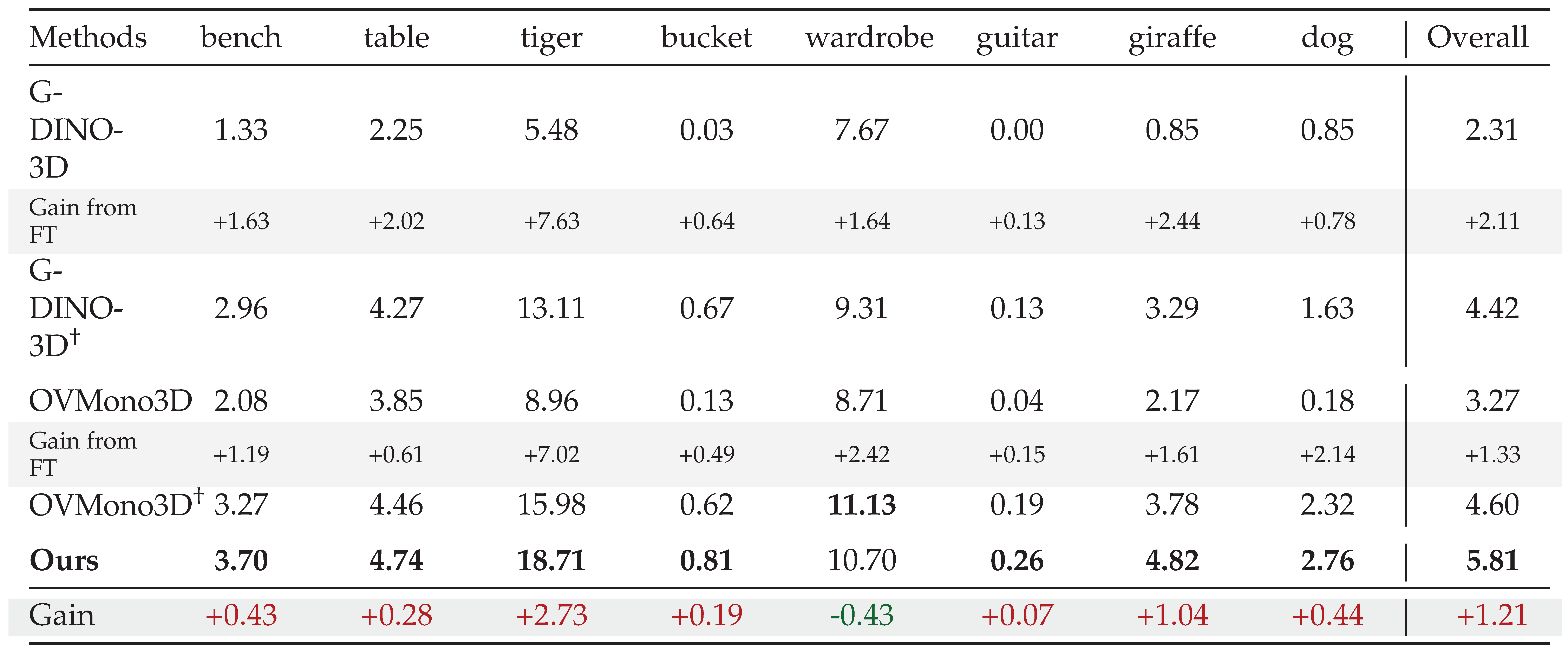

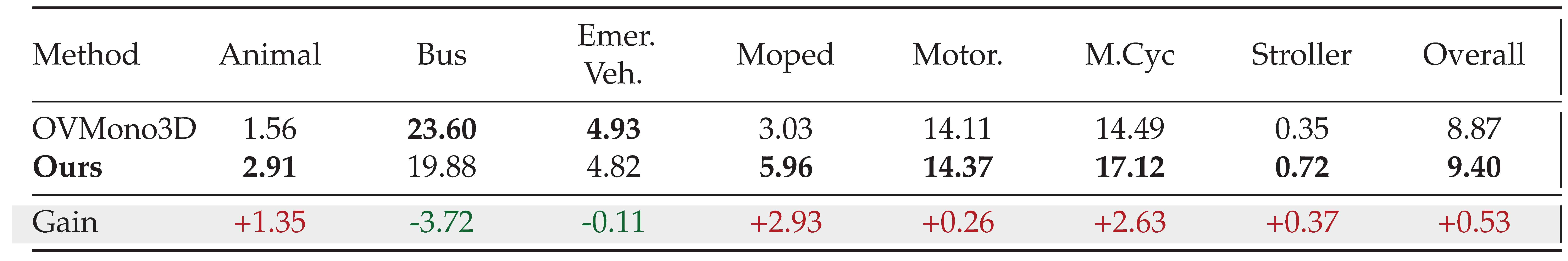

5.3. Open-Vocabulary Results

5.4. Visualization of Cross-Modal Alignment

5.5. Ablation Studies

5.6. Visualization

5.7. Real-World Scenario Verification

6. Conclusion

Funding

Data Availability Statement

Conflicts of Interest


| Dataset | Classes | Scene | Modality | Object Range |
|---|---|---|---|---|
| KITTI 3D | 9 | Outdoor | RGB, PC | traffic agents |
| SUN RGB-D | 20 | Indoor | RGB-D | item |
| ScanNet V2 | 21 | Indoor | RGB-D, PC | item |
| nuScenes | 23 | Outdoor | RGB, PC | traffic agents |
| Objectron | 9 | Object-wise | RGB | item |
| OV-KITTI (ours) | 49 | Outdoor | RGB | traffic agents, item, animal |

| Category | Class Names | Quantity |
|---|---|---|
| Train | air conditioner, alligator, fireplug, bathtub, bed, volleyball, camel, cat, chair, cow, deer, baby buggy, sofa, hippopotamus, horse, lion, oven, pillow, refrigerator, rhinoceros, sculpture, suitcase, telephone booth, television set, wheelchair, zebra, automatic washer, goat, hog, snowman, soccer ball, elephant | 5936 |
| Test | bench, dog, giraffe, guitar, table, tiger, bucket, wardrobe | 1545 |

| Method | #Param (M) | FLOPs (G) | Latency (ms) |
|---|---|---|---|
| MonoDETR★ | 39.82 | 47.2 | 33 |
| MonoDGP★ | 43.33 | 55.19 | 36 |
| Ours | 43.48 | 72.52 | 51 |
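The efficiency numbers above are easier to compare as relative overheads. The short script below is only an illustrative calculation on the tabulated values. The helper names are hypothetical, and the frames-per-second conversion assumes the latency is measured per image with one image processed at a time, which the table does not state.

```python
# (parameters in millions, FLOPs in billions, latency in milliseconds),
# copied from the efficiency table above.
EFFICIENCY = {
    "MonoDETR": (39.82, 47.2, 33.0),
    "MonoDGP": (43.33, 55.19, 36.0),
    "Ours": (43.48, 72.52, 51.0),
}


def frames_per_second(latency_ms: float) -> float:
    """Throughput implied by a per-image latency (assumes one image at a time)."""
    return 1000.0 / latency_ms


def relative_increase(new: float, old: float) -> float:
    """Percentage increase of `new` over `old`."""
    return (new - old) / old * 100.0


ours = EFFICIENCY["Ours"]
for name in ("MonoDETR", "MonoDGP"):
    params, flops, latency = EFFICIENCY[name]
    print(
        f"Ours vs {name}: "
        f"params +{relative_increase(ours[0], params):.1f}%, "
        f"FLOPs +{relative_increase(ours[1], flops):.1f}%, "
        f"latency +{relative_increase(ours[2], latency):.1f}%, "
        f"{frames_per_second(latency):.1f} -> {frames_per_second(ours[2]):.1f} FPS"
    )
```

Read this way, the parameter count is nearly unchanged relative to MonoDGP, while FLOPs rise by roughly 31% and latency by roughly 42%.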

| Method | CV | OV |
|---|---|---|
| Baseline | 13.33 | 3.94 |
| + Feature Fusion | 14.22 | 4.69 |
| + Query Init. | 14.44 | 5.81 |

| Text Encoder | CV | OV |
|---|---|---|
| CLIP (Frozen) | 14.05 | 5.32 |
| FineCLIP (Frozen) | 14.31 | 5.63 |
| FG-CLIP (Finetuned) | 14.15 | 5.45 |
| FG-CLIP (Frozen) | 14.44 | 5.81 |

| Config | NQ | Agg. | CV | OV |
|---|---|---|---|---|
| (a) Learnable | 50 | - | 13.33 | 3.94 |
| (b) Top-K | 50 | - | 13.95 | 4.80 |
| (c) + Global | 25 | Mean | 14.28 | 5.41 |
| (d) + Global | 50 | Mean | 14.44 | 5.81 |
| (e) + Global | 75 | Mean | 14.39 | 5.65 |
| (f) + Global | 50 | Max | 14.09 | 5.17 |

| Fusion | Loc. | CV | OV |
|---|---|---|---|
| (a) None | - | 13.33 | 3.94 |
| (b) Cat | res2 | 13.67 | 4.18 |
| (c) Add (1L) | res2 | 13.99 | 4.36 |
| (d) Add (2L) | res2 | 14.22 | 4.69 |
| (e) Add (3L) | res2 | 14.14 | 4.58 |
| (f) Add (2L) | res3 | 13.92 | 4.41 |

| Training Data | Seen | Unseen |
|---|---|---|
| (1) Pure OV | 15.32 | 5.81 |
| (2) + Ped./Cyc. | 15.51 | 5.76 |
| (3) + Real Car | 15.18 | 4.97 |

| Method | Cyclist (Easy) | Cyclist (Mod.) | Cyclist (Easy) | Cyclist (Mod.) |
|---|---|---|---|---|
| OVMono3D | 6.70 | 9.05 | 4.35 | 5.27 |
| Ours | 6.51 | 7.82 | 4.54 | 4.99 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).