Submitted: 12 March 2026

Posted: 16 March 2026


Abstract

Keywords:

1. Introduction

1.1. The Problem: Context as a Monolith

- 1.

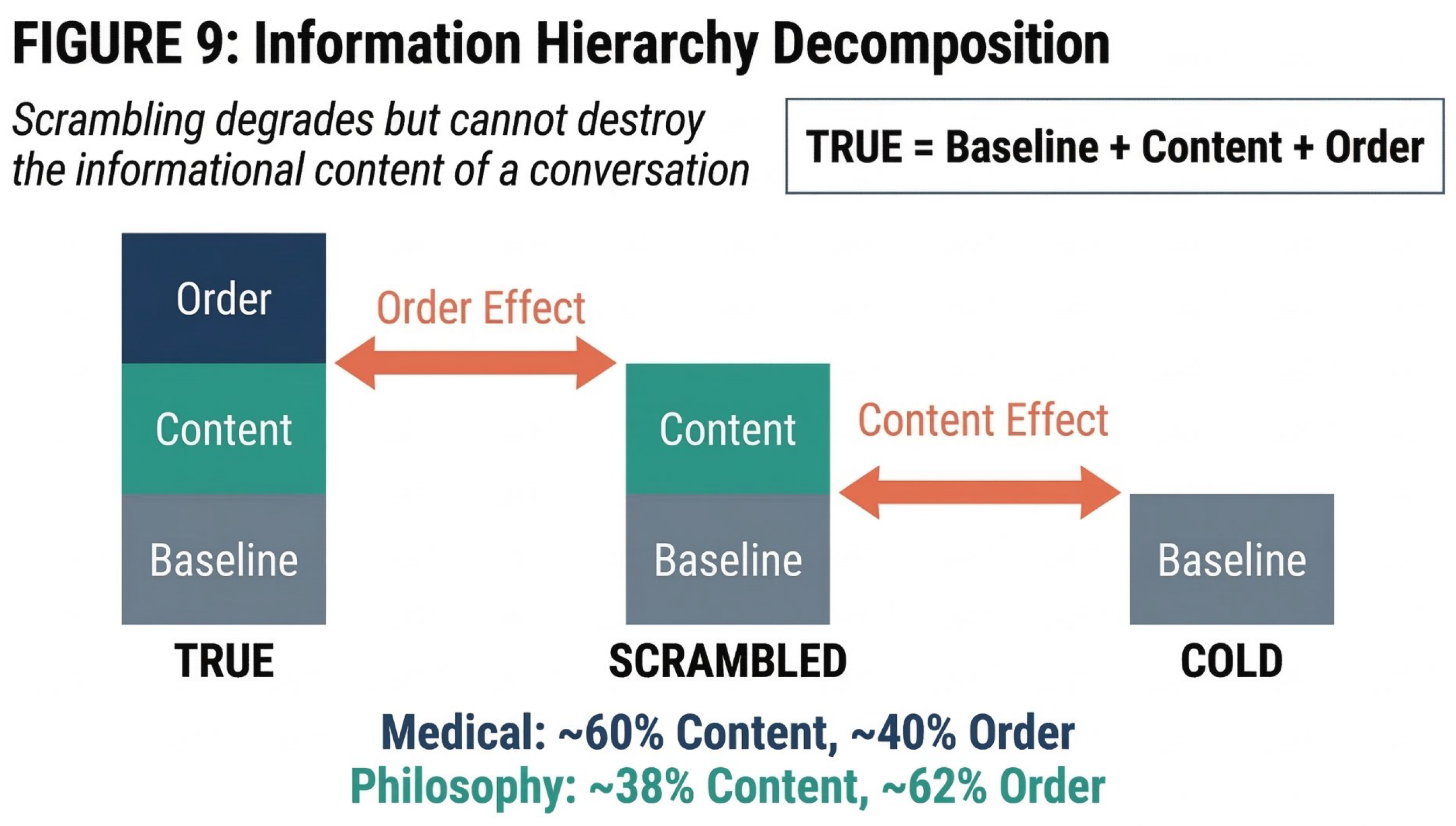

- Structure (Content-Order): Within context utilisation, how much is driven by informational content versus sequential order? And how do these components affect response stability?

- 2.

- Trajectory (Exploration Arc): Does response diversity expand, contract, or remain stable as conversation progresses?

1.2. The MCH Research Program

- TRUE: Full coherent 29-message history, followed by P30 query

- COLD: No history; single-turn P30 query only

- SCRAMBLED: Same 29 messages as TRUE, but randomised in order, followed by P30 query
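The three conditions above can be sketched in a few lines. This is a minimal illustration, not the authors' pipeline: the function name `build_conditions`, the fixed `seed`, and the placeholder message strings are all assumptions introduced here, and the actual prompts and shuffling procedure are not given in this section.

```python
import random


def build_conditions(history, query, seed=0):
    """Build the message list for each condition (hypothetical helper).

    history: the coherent message history that precedes the final query
    query:   the final (P30) query
    seed:    assumed fixed seed so the SCRAMBLED order is reproducible
    """
    rng = random.Random(seed)
    scrambled = list(history)   # same messages as TRUE ...
    rng.shuffle(scrambled)      # ... but in randomised order
    return {
        "TRUE": list(history) + [query],    # full coherent history + query
        "COLD": [query],                    # no history, single-turn query
        "SCRAMBLED": scrambled + [query],   # shuffled history + query
    }


# Placeholder 29-message history; real messages are not shown in the source.
history = [f"message {i}" for i in range(1, 30)]
conditions = build_conditions(history, "P30 query")
```

In this sketch SCRAMBLED holds the same content as TRUE and differs only in order, which is what lets the two be compared to separate content effects from order effects.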

1.3. Motivation from Prior Work

1.4. Related Work

2. Methods

2.1. Data Sources and Models

2.2. Context Utilization Depth (CUD) — Pilot

2.3. Content-Order Decomposition

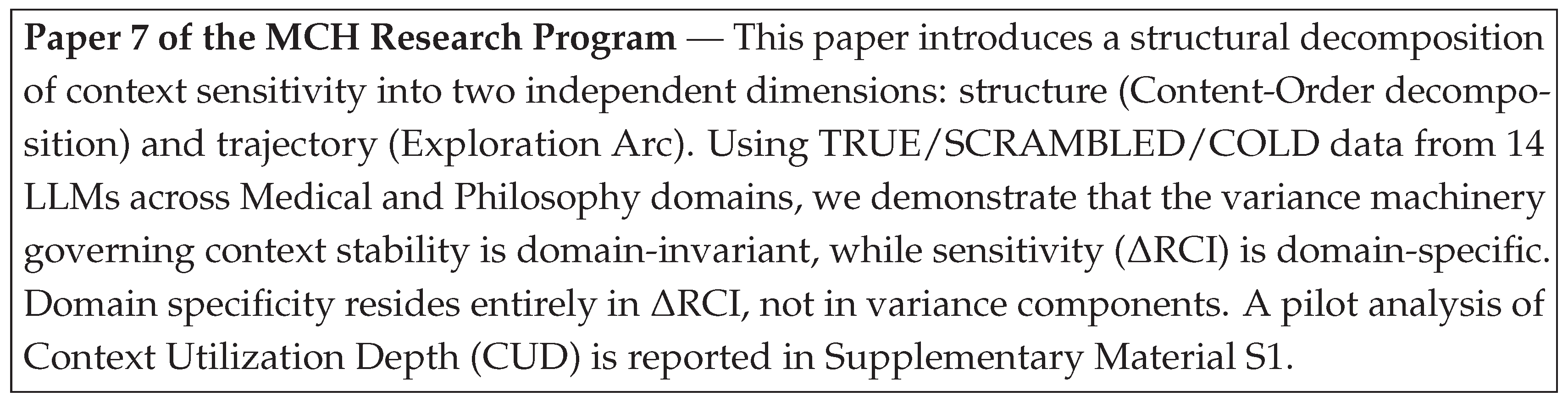

2.4. Exploration Arc

3. Results

3.1. Context Utilization Depth: Pilot Note

3.2. Content-Order Decomposition of RCI

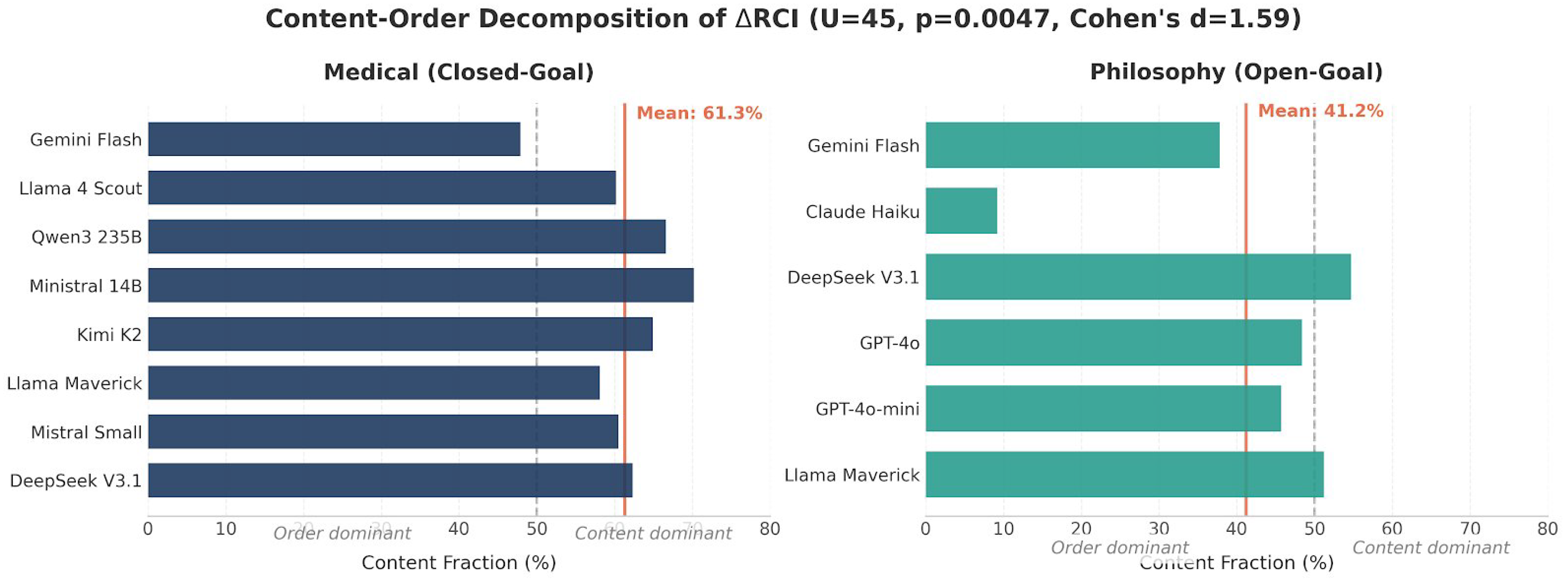

3.3. The P30 Spike: Content and Order Both Contribute

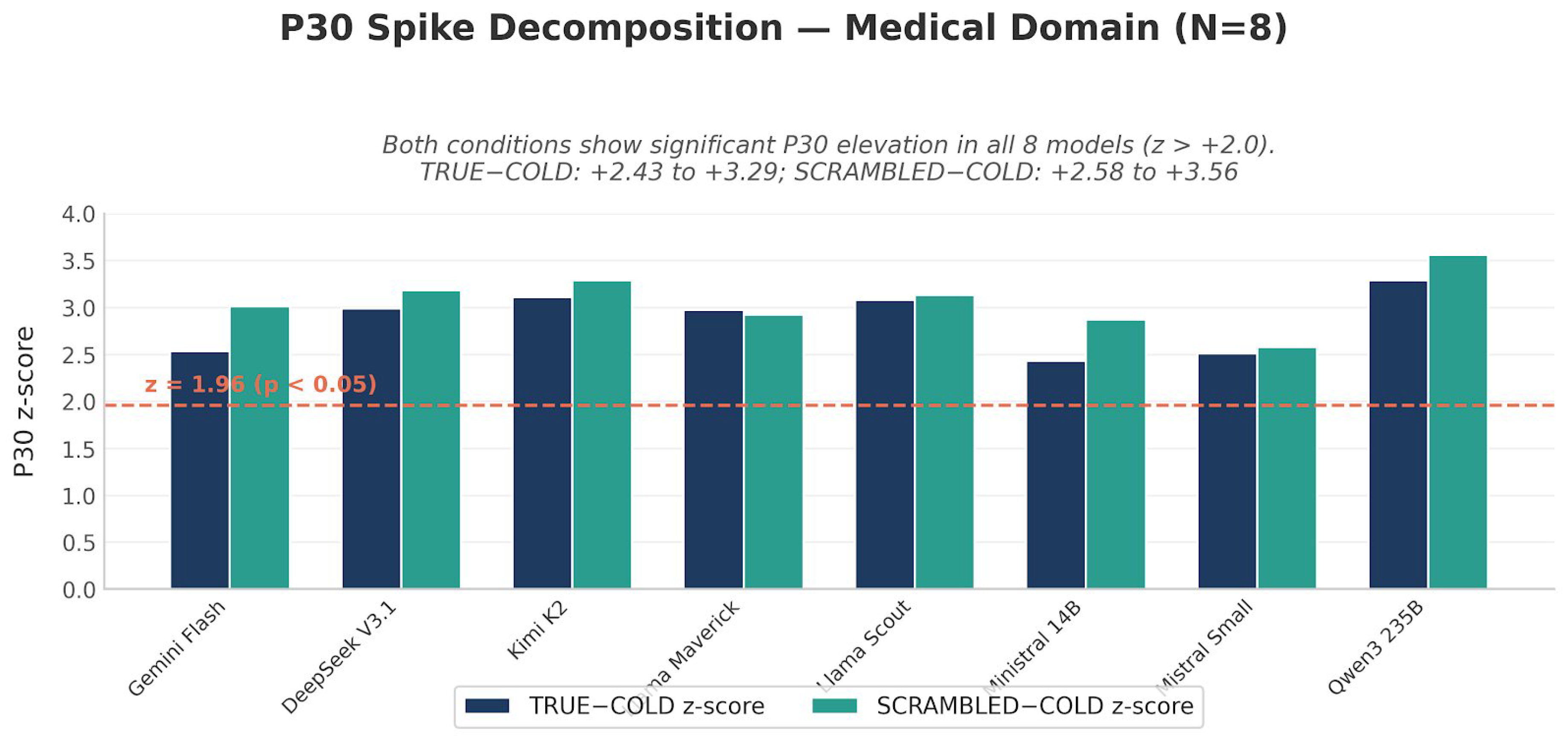

3.4. Variance Decomposition: Content Destabilizes, Order Stabilizes

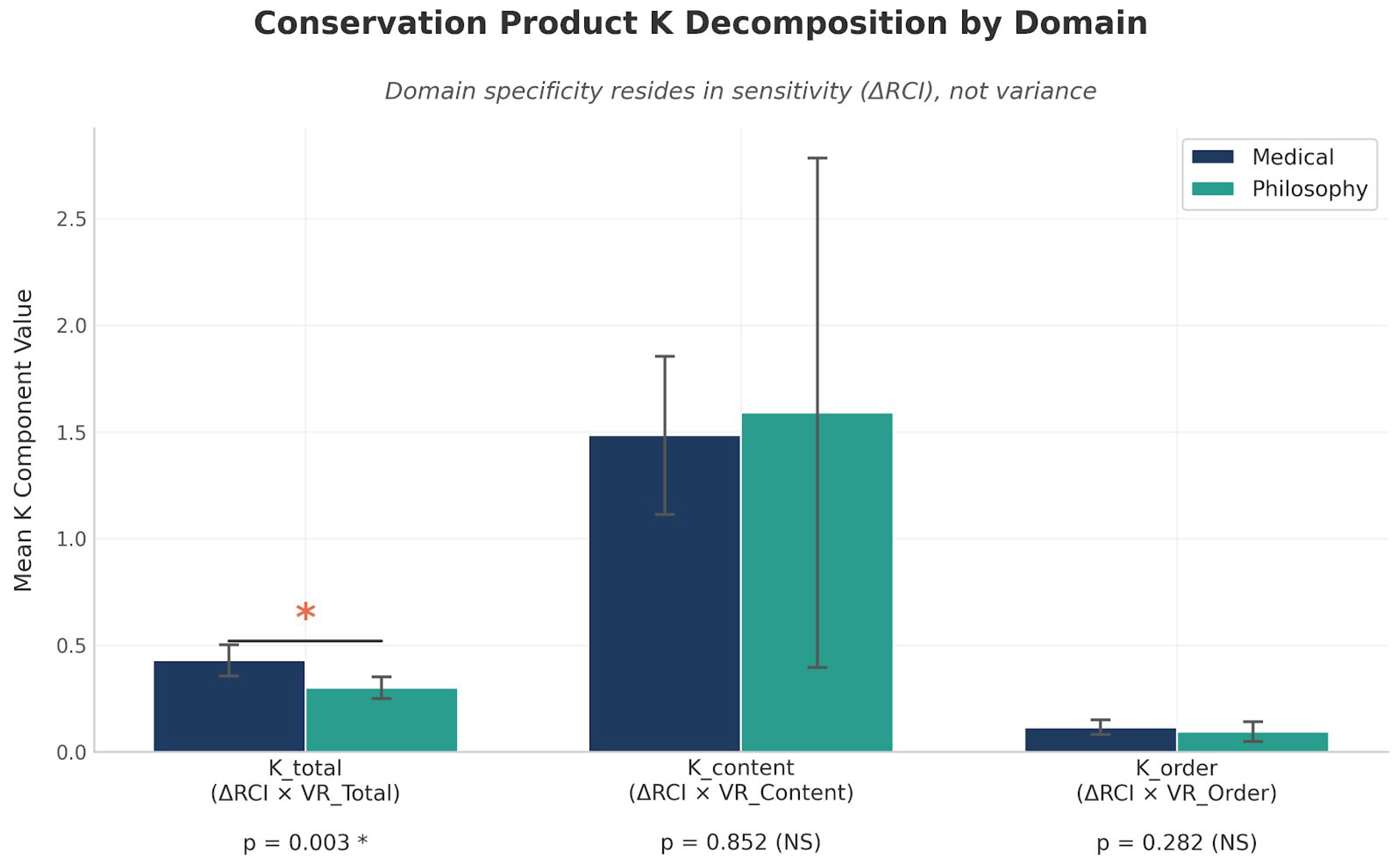

3.5. Conservation Product K Decomposition

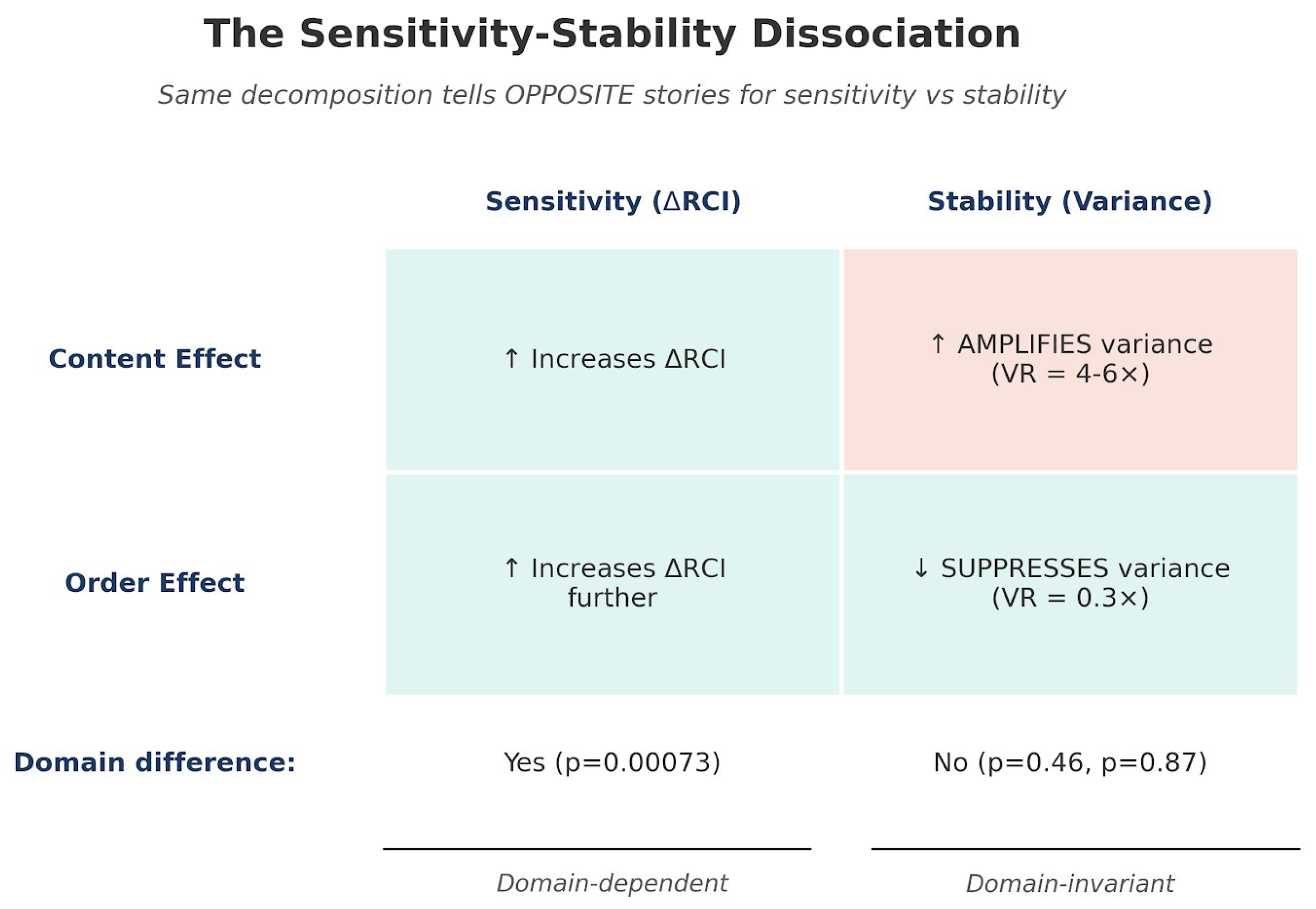

3.6. The Sensitivity-Stability Dissociation

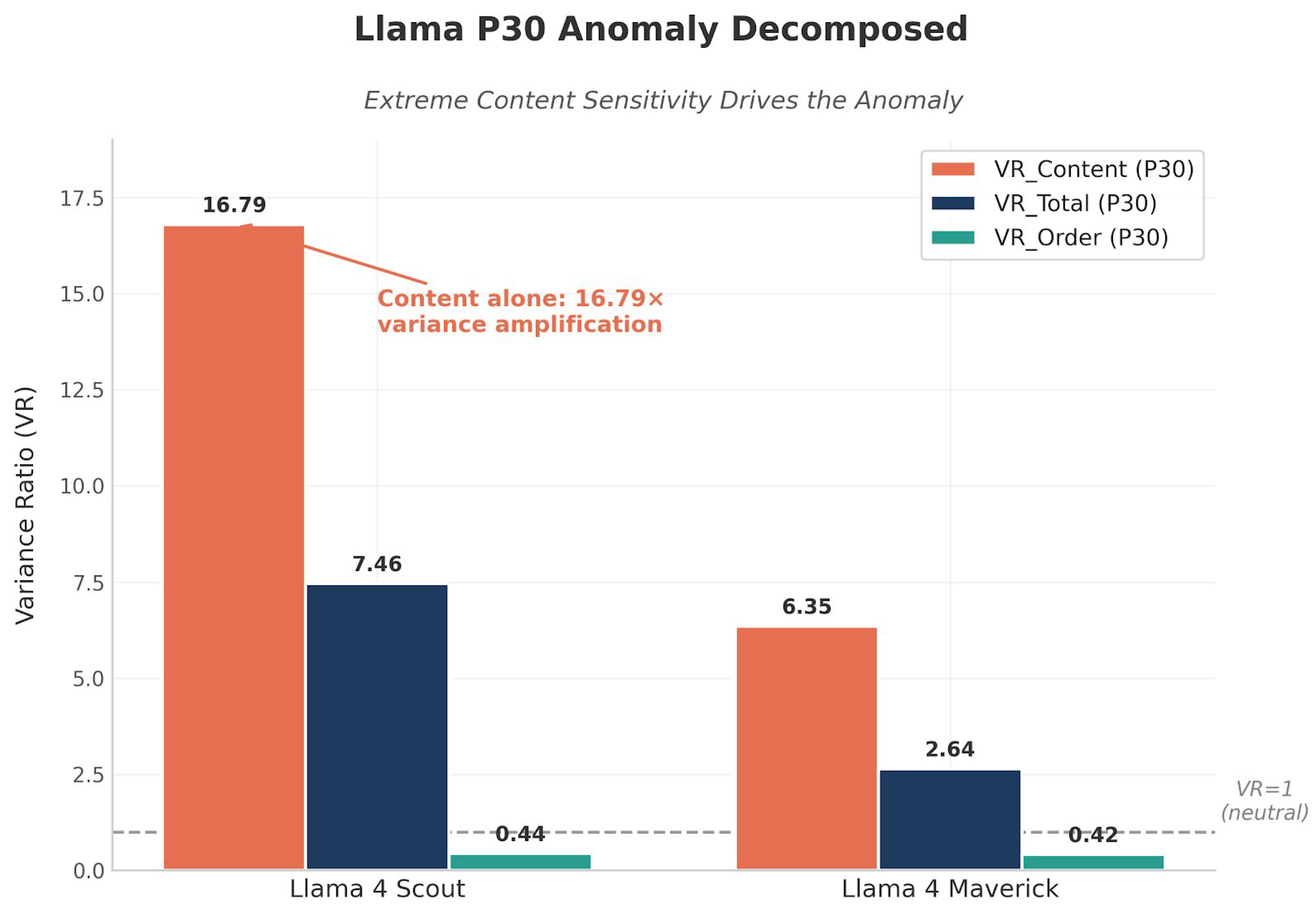

3.7. Llama P30 Anomaly Decomposed

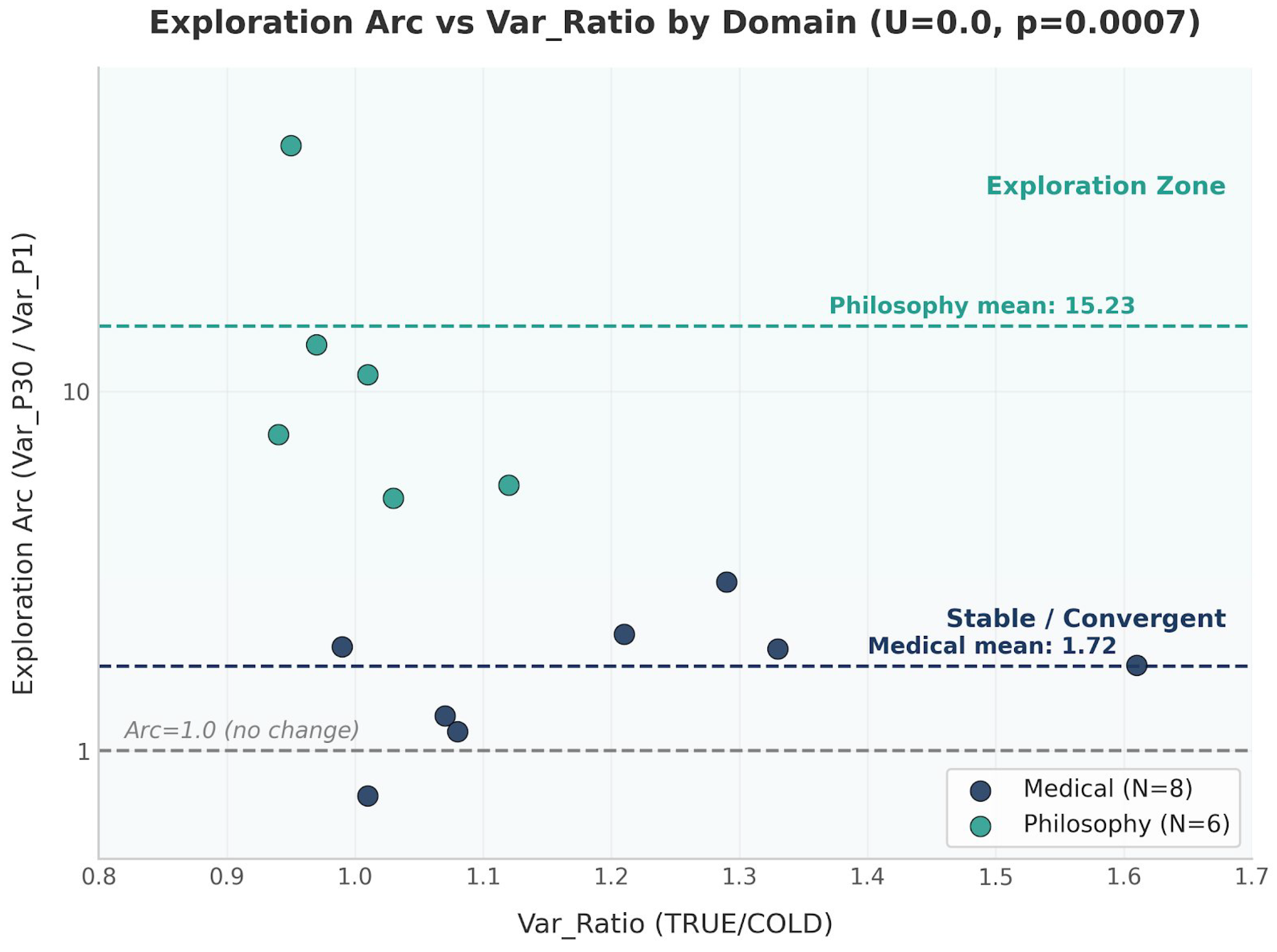

3.8. Exploration Arc

3.9. Information Hierarchy Schematic

4. Discussion

4.1. Context Is Not a Monolith

4.2. The Variance Machinery Is Universal

4.3. Implications for RAG Design

- For closed-goal tasks (clinical decision support, legal analysis, technical debugging): prioritise content completeness. Order effects contribute only ∼40% of context benefit; content arrangement matters less than factual coverage.

- For open-goal tasks (philosophical reasoning, creative generation, strategic planning): preserve narrative order. Order drives ∼62% of context benefit; shuffling retrieved chunks may destroy the primary mechanism of context utilisation.
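One way to read the percentage shares quoted above is as fractions of the total context benefit (TRUE relative to COLD). The sketch below assumes a simple additive split, with the content effect taken as SCRAMBLED minus COLD and the order effect as TRUE minus SCRAMBLED. That split is an assumption made here for illustration; the paper's own definition belongs to Section 2.3 and is not reproduced in this text. The function name and the input scores are hypothetical and are not results from the study.

```python
def decompose_context_benefit(true_score, scrambled_score, cold_score):
    """Split total context benefit into content and order shares.

    Assumed additive decomposition (not confirmed by the source):
      total   = TRUE - COLD
      content = SCRAMBLED - COLD   (history present, order destroyed)
      order   = TRUE - SCRAMBLED   (what coherent ordering adds on top)
    """
    total = true_score - cold_score
    if total == 0:
        raise ValueError("no context benefit to decompose")
    content = scrambled_score - cold_score
    order = true_score - scrambled_score
    return {
        "content_share": content / total,
        "order_share": order / total,
    }


# Illustrative, made-up scores chosen only to exercise the function.
shares = decompose_context_benefit(
    true_score=1.0, scrambled_score=0.6, cold_score=0.0
)
```

Under this assumption the two shares always sum to one, so a task where the order share is small is one where shuffling retrieved chunks should cost relatively little.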

4.4. Implications for Clinical AI Safety

4.5. Connection to Concurrent Work

4.6. Limitations

1. Two domains. Content-Order decomposition was conducted on the Medical and Philosophy domains only. Paper 6 [9] will test the decomposition across three additional domains (Legal, Technical, Applied Ethics). Preliminary Legal data (2/5 models complete) confirms the information hierarchy TRUE > SCRAMBLED > COLD; full decomposition is pending.

2. CUD pilot scope (Supplementary S1). CUD was measured in four models only, with Llama 4 Maverick as the sole model showing CUD . Whether this reflects an architecture-specific property cannot be determined from four models; full CUD analysis across all 14 models is deferred to future work.

3. Embedding model. All variance computations used all-MiniLM-L6-v2. Robustness to alternative embeddings will be reported in Paper 6’s robustness analysis.

4. Philosophy Arc sample size. The Exploration Arc was computed for philosophy models. The zero-overlap finding is strong, but replication with larger samples is warranted.

5. Single P30 synthesis prompt. CUD and Arc are measured at P30. Whether these properties hold at intermediate positions and across different prompt types is an open question.

6. Closed-source models. GPT-4o, GPT-5.2, Claude Haiku, and Gemini Flash are closed-source; architectural interpretations remain descriptive.

5. Conclusion

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Asgari, E.; et al. A framework to assess clinical safety and hallucination rates of LLMs for medical text summarisation. npj Digital Medicine 2025, 8(1), 274.

2. Brown, T.; Mann, B.; Ryder, N.; et al. Language models are few-shot learners. Advances in Neural Information Processing Systems 2020, 33. arXiv:2005.14165.

3. Goldfeder, R.; Wyder, T.; LeCun, Y.; Shwartz-Ziv, R. AI must embrace specialization via Superhuman Adaptable Intelligence (SAI). arXiv 2026, arXiv:2602.23643.

4. Laxman, M M. Context curves behavior: Measuring AI relational dynamics with ΔRCI. Preprints.org 2026a.

5. Laxman, M M. Scaling context sensitivity: Standardized benchmark across 25 LLM-domain configurations. Preprints.org 2026b.

6. Laxman, M M. Domain-specific temporal dynamics of context sensitivity in large language models. Preprints.org 2026c.

7. Laxman, M M. Engagement as entanglement: Variance signatures of bidirectional context coupling in large language models. Preprints.org 2026d.

8. Laxman, M M. Stochastic incompleteness: A predictability taxonomy for clinical AI deployment. Preprints.org 2026e.

9. Laxman, M M. Validating the conservation law across five domains: Legal, technical, and applied ethics. Preprints.org. In progress (data collection ongoing); OSF pre-registration, 2026f; Available online: https://osf.io/dp8nj/.

10. Lewis, P.; Perez, E.; Piktus, A.; et al. Retrieval-augmented generation for knowledge-intensive NLP tasks. Advances in Neural Information Processing Systems 2020, 33, 9459–9474.

11. Liu, N. F.; Lin, K.; Hewitt, J.; Paranjape, A.; Bevilacqua, M.; Petroni, F.; Liang, P. Lost in the middle: How language models use long contexts. Transactions of the Association for Computational Linguistics 2024, 12, 157–173.

12. Pezeshkpour, P.; Hruschka, E. Large language models sensitivity to the order of options in multiple-choice questions. Findings of ACL: NAACL 2024, 2024, 2975–2984.

13. Reimers, N.; Gurevych, I. Sentence-BERT: Sentence embeddings using Siamese BERT-Networks. Proceedings of EMNLP 2019, 2019.

14. Vaswani, A.; Shazeer, N.; Parmar, N.; et al. Attention is all you need. Advances in Neural Information Processing Systems 2017, 30. arXiv:1706.03762.

15. Wornow, M.; Xu, Y.; Thapa, R.; et al. The shaky foundations of large language models and foundation models for electronic health records. npj Digital Medicine 2023, 6(1), 135.

| Source | Models | Domain | Trials | Conditions |
|---|---|---|---|---|
| Paper 2 (alignment scores) | 14 LLMs, 25 runs | Medical & Philosophy | 50 | TRUE, SCRAMBLED, COLD (P30 spike confirmation in 4 additional closed-API models, Section 3.3) |
| Paper 6 (re-embedded, Medical) | 8 LLMs | Medical (P30) | 50 | TRUE, SCRAMBLED, COLD |
| Paper 6 (re-embedded, Philosophy) | 6 LLMs | Philosophy (P30) | 50 | TRUE, SCRAMBLED, COLD |
| CUD pilot (Supp. S1) | 4 LLMs | Med & Phil | 50/K-level | K ∈ {1,5,10,15,20,29} |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).