Submitted: 12 March 2026

Posted: 13 March 2026


Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Ontology Construction

2.2. Neuro-Symbolic AI

2.3. State-of-the-Art in Agents

3. Approach

3.1. Construct: Autonomous Ontology Synthesis

3.2. Align: Semantic-Structural Fusion

3.3. Reason: Logic Execution Within Self-Constructed Axioms

3.4. Model Training

4. The 10-Dimensional Map of AI Evolution

| Dimension | Spatial Concept | AI Evolutionary Stage | Representative Form |
|---|---|---|---|
| 1D-3D | Line/Plane/Volume | Statistical Learning | Rule-based Systems, Machine Learning, Deep Learning |
| 4D | Time, Causal Sequences | LLMs | Transformers |
| 5D | All Possible Timelines | Basic Agents | Planning, Hypothesis Reasoning |
| 6D | Jumping Between Timelines | Meta-Cognitive Agents | Self-Reflection, Tool/Skill Building |
| 7D | Different Physical Constants | LOM | Autonomous Logic Reconstruction |
| 8D-10D | Multiverse/Omniscience | ASI | Paradigm Discovery, Singularity |

4.1. From 1D to 4D: The Evolution of Knowledge Representation

4.2. From 5D to 6D: The Ceiling of Meta-Cognition

4.3. The 7D Breakthrough: LOM’s Logic Autonomy

4.4. Reflection: Acceleration vs. Ascension

5. Experiments

5.1. Dataset

5.2. Experimental Setup

5.3. Evaluation Metrics

5.4. Ontology Completion Performance

5.5. Graph Reasoning Performance

5.6. Analysis and Discussion

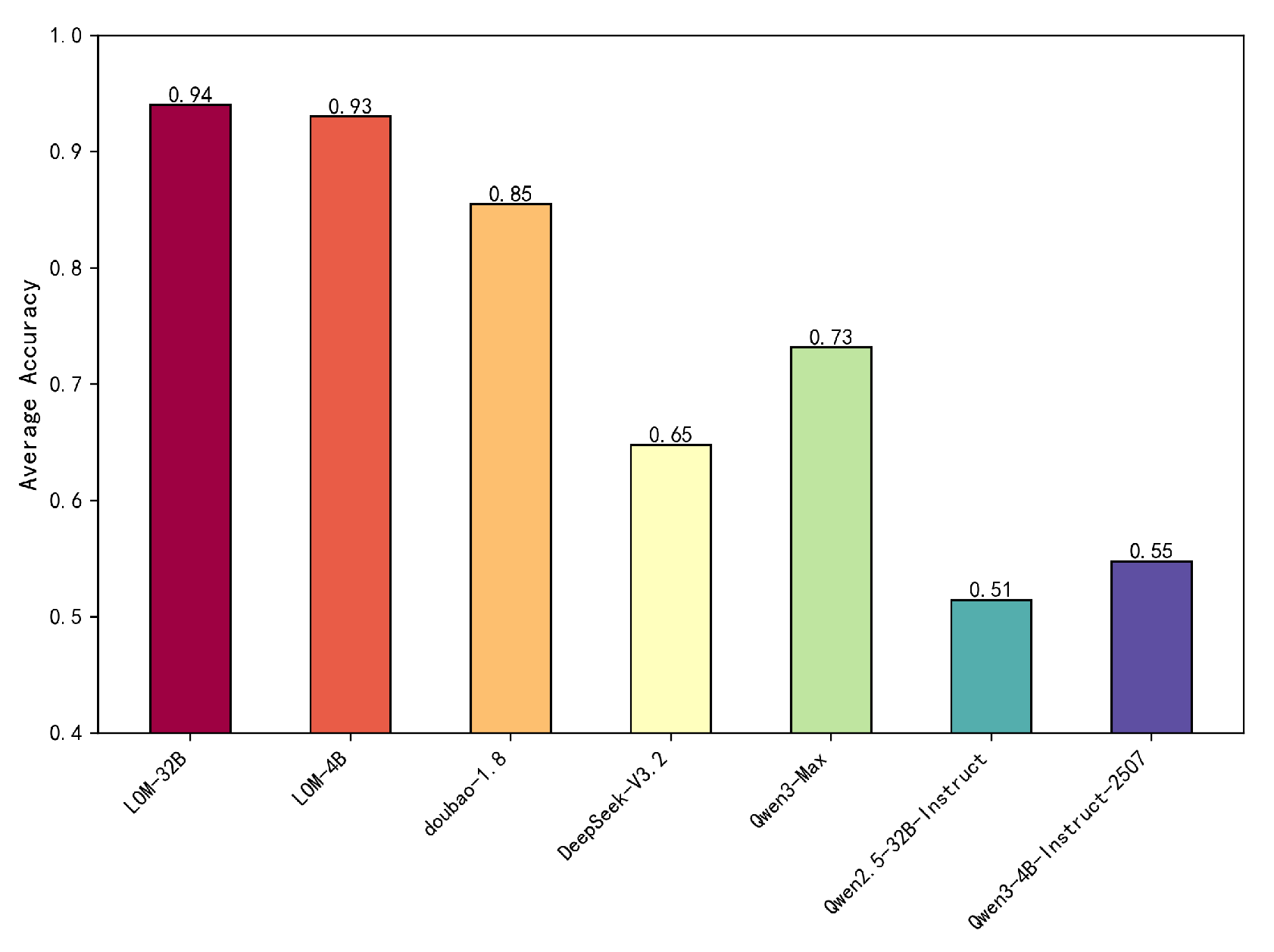

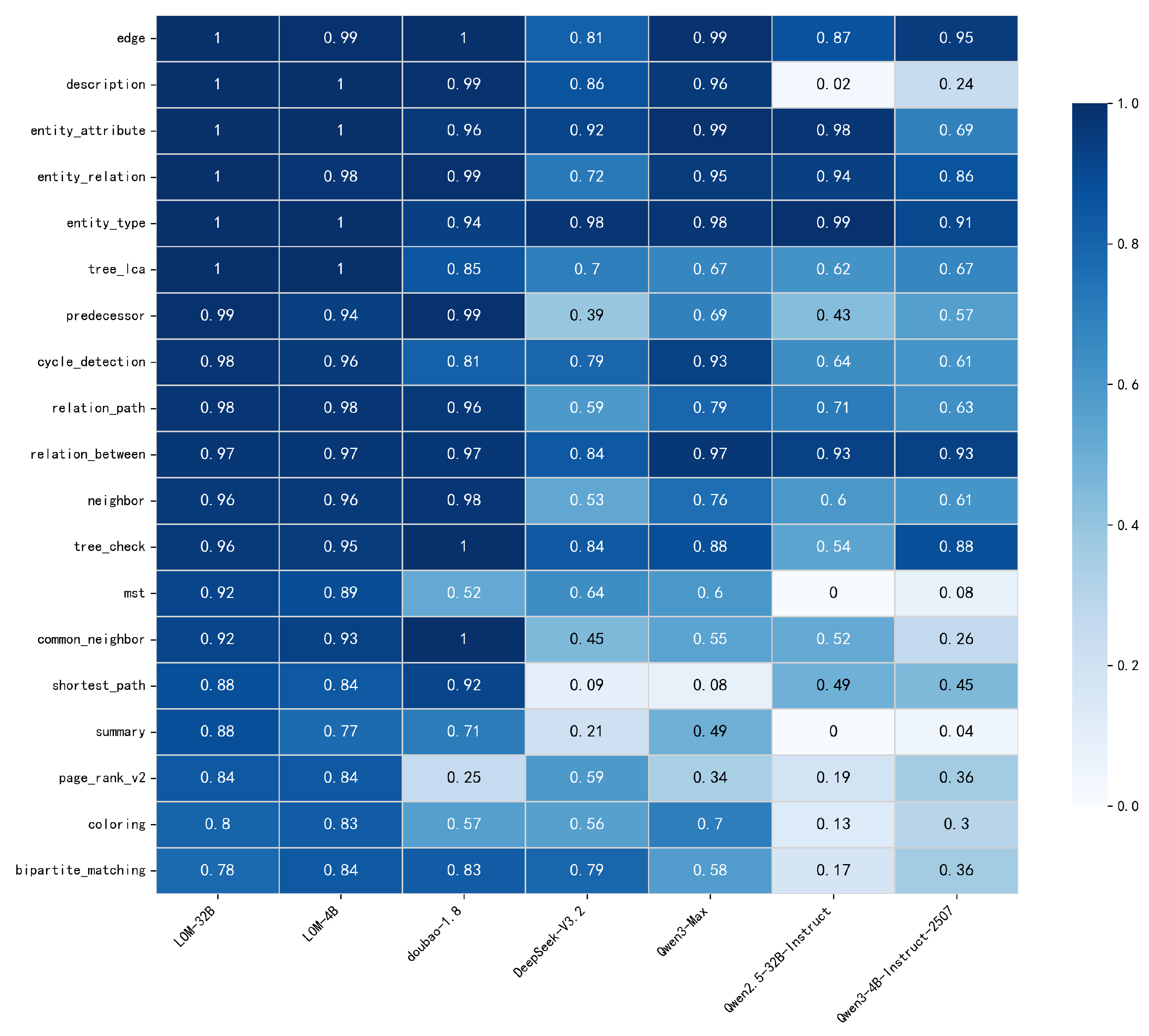

- Unified Semantics and Structure. The results support the Construct, Align, and Reason (CAR) architecture. Unlike pipeline systems that split semantic parsing and graph reasoning into separate stages, LOM handles both end-to-end. This integration lets LOM match state-of-the-art performance on semantic tasks (e.g., 1.00 on description) while also excelling at structure-sensitive tasks (e.g., 0.98 on relation path), where traditional models often falter. The Align phase grounds semantic outputs in the same ontology used for reasoning, which avoids the usual trade-off between fluency and structural accuracy.

- Overcoming the Probabilistic Wall. A clear boundary emerges on algorithmic tasks that require strict constraint satisfaction. LOM achieves high accuracy on minimum spanning tree (MST, 0.92) and shortest path (0.88), whereas general-purpose LLMs collapse (e.g., Qwen2.5-32B scores 0 on MST). This supports the 7D claim that probabilistic scaling alone cannot master deterministic logic: without an internal binding structure, even large models fail to preserve invariants across multiple reasoning steps, a probabilistic wall that parameter scaling does not breach.

- Logic Density over Parameter Scale. For complex reasoning, LOM indicates that logic density matters more than parameter count. LOM-4B (93% average accuracy) and LOM-32B (94%) significantly outperform general-purpose models of equal or far greater size: LOM-4B surpasses Qwen2.5-32B, which has eight times as many parameters, and both variants exceed models with 70B or more parameters. By constraining the model with strict ontological rules (logic laws), LOM achieves higher cognitive density, suggesting that neuro-symbolic integration is a more efficient path to reliable reasoning than unsupervised pre-training alone.
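The "Unified Semantics and Structure" point says semantic outputs are grounded in the ontology used for reasoning. For a relation-path answer, one way to read that requirement is as a hop-by-hop check: every hop must be an asserted triple, and every relation must respect the domain and range its ontology declares. The sketch below is a hypothetical illustration, not LOM's implementation; the names `is_grounded_path`, `schema`, and `entity_class` are assumptions, and the toy ontology gives each relation a single domain and range class and each entity exactly one class (no subclass reasoning).

```python
from typing import Dict, List, Set, Tuple

Triple = Tuple[str, str, str]  # (head entity, relation, tail entity)


def is_grounded_path(
    path: List[str],
    triples: Set[Triple],
    entity_class: Dict[str, str],
    schema: Dict[str, Tuple[str, str]],
) -> bool:
    """Hypothetical check of an alternating path [e0, r1, e1, r2, e2, ...].

    A hop (h, r, t) is accepted only if it is an asserted triple AND the
    toy schema declares r with domain class(h) and range class(t).
    """
    if len(path) < 3 or len(path) % 2 == 0:
        return False  # shape must be: entity, then one or more (relation, entity)
    for i in range(0, len(path) - 2, 2):
        h, r, t = path[i], path[i + 1], path[i + 2]
        if (h, r, t) not in triples:
            return False  # hop not supported by the knowledge graph
        if r not in schema:
            return False  # relation not defined in the ontology
        domain, range_ = schema[r]
        if entity_class.get(h) != domain or entity_class.get(t) != range_:
            return False  # hop violates the relation's domain/range axiom
    return True


# Toy data (hypothetical): the graph contains one triple the schema forbids.
schema = {"works_for": ("Person", "Company"), "located_in": ("Company", "City")}
entity_class = {"alice": "Person", "acme": "Company", "berlin": "City"}
triples = {
    ("alice", "works_for", "acme"),
    ("acme", "located_in", "berlin"),
    ("alice", "located_in", "berlin"),  # asserted, but located_in expects a Company
}

print(is_grounded_path(["alice", "works_for", "acme", "located_in", "berlin"],
                       triples, entity_class, schema))  # True
print(is_grounded_path(["alice", "located_in", "berlin"],
                       triples, entity_class, schema))  # False
```

The second call fails even though the triple is present in the graph, which is the distinction the point draws: a fluent answer that follows existing edges can still be structurally wrong with respect to the ontology.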
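The "Overcoming the Probabilistic Wall" point relies on tasks such as MST having answers that can be verified deterministically: an answer is either exactly right or wrong, with no credit for plausible-looking output. The sketch below is a hypothetical scorer, not the evaluation code used in these experiments. It assumes a connected, undirected, weighted graph given as `(u, v, weight)` triples over nodes `0..n-1` and a model answer given as a list of node pairs. It checks the multi-step invariants a valid answer must preserve (exactly n - 1 edges, each present in the graph, no cycles) and then compares the answer's total weight with the optimum from Kruskal's algorithm.

```python
from typing import Dict, List, Tuple

Edge = Tuple[int, int, float]  # (u, v, weight), undirected


class DisjointSet:
    """Union-find with path halving and union by size."""

    def __init__(self, n: int) -> None:
        self.parent = list(range(n))
        self.size = [1] * n

    def find(self, x: int) -> int:
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def union(self, a: int, b: int) -> bool:
        ra, rb = self.find(a), self.find(b)
        if ra == rb:
            return False  # already connected: this edge would close a cycle
        if self.size[ra] < self.size[rb]:
            ra, rb = rb, ra
        self.parent[rb] = ra
        self.size[ra] += self.size[rb]
        return True


def mst_weight(n: int, edges: List[Edge]) -> float:
    """Optimal MST weight via Kruskal's algorithm (graph assumed connected)."""
    ds = DisjointSet(n)
    total = 0.0
    for u, v, w in sorted(edges, key=lambda e: e[2]):
        if ds.union(u, v):
            total += w
    return total


def is_correct_mst(n: int, edges: List[Edge], answer: List[Tuple[int, int]]) -> bool:
    """True iff `answer` is a minimum spanning tree of the input graph."""
    weights: Dict[Tuple[int, int], float] = {}
    for u, v, w in edges:
        key = (min(u, v), max(u, v))
        weights[key] = min(w, weights.get(key, w))  # keep cheapest parallel edge
    if len(answer) != n - 1:
        return False  # a spanning tree on n nodes has exactly n - 1 edges
    ds = DisjointSet(n)
    total = 0.0
    for u, v in answer:
        key = (min(u, v), max(u, v))
        if key not in weights:
            return False  # edge does not exist in the input graph
        if not ds.union(u, v):
            return False  # cycle: the tree invariant is violated
        total += weights[key]
    # n - 1 acyclic edges on n nodes form a spanning tree; now check minimality.
    return abs(total - mst_weight(n, edges)) < 1e-9


edges = [(0, 1, 1.0), (1, 2, 2.0), (0, 2, 3.0), (2, 3, 1.0)]
print(is_correct_mst(4, edges, [(0, 1), (1, 2), (2, 3)]))  # True (weight 4.0)
print(is_correct_mst(4, edges, [(0, 1), (0, 2), (2, 3)]))  # False (weight 5.0)
```

The second answer is a valid spanning tree but not a minimum one, so it scores zero; under this kind of all-or-nothing check, a model that loses track of a single invariant mid-derivation cannot recover partial credit.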

6. Conclusions


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).