Submitted: 09 March 2026

Posted: 12 March 2026


Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Sign Language Recognition Using Specialized Hardware

2.2. Arabic Sign Language Recognition (ArSLR)

3. Background

3.1. Mediapipe [24]

- 1.

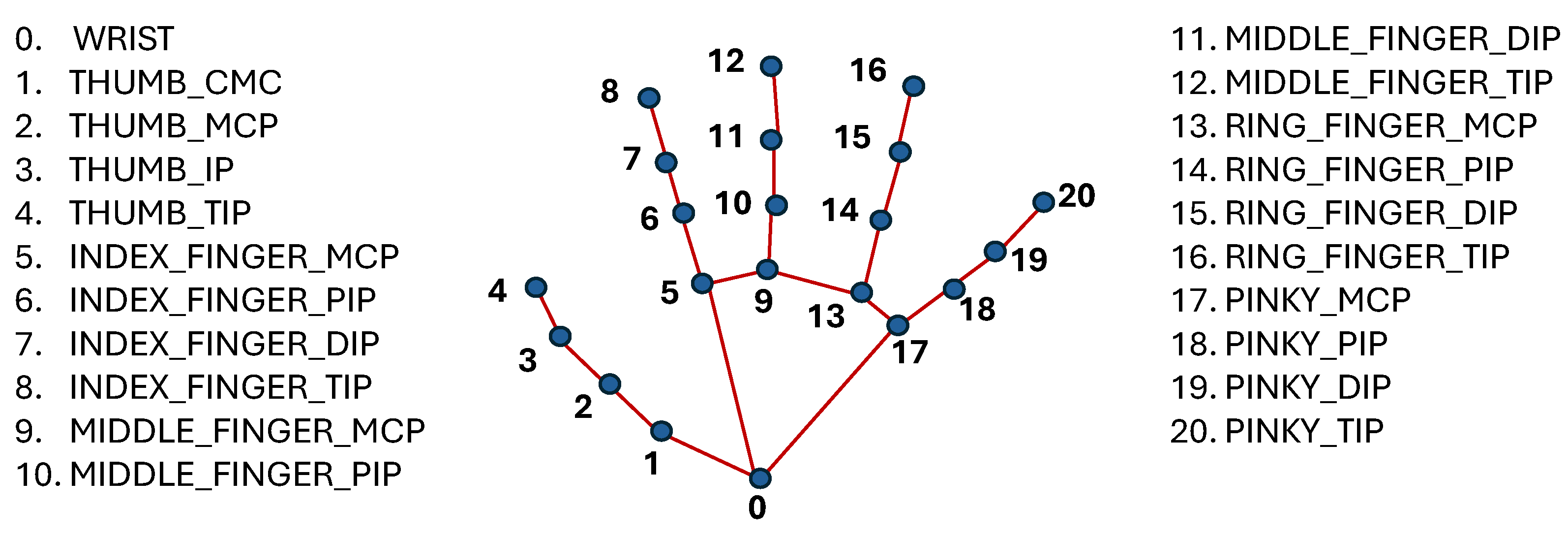

- Hand Landmarks: 21 points for each hand, detailing the position and orientation of the palm, fingers, and thumb. This high level of detail is essential for accurately interpreting handshapes and gestures. Figure 1 shows the hand landmarks and their labels.

- 2.

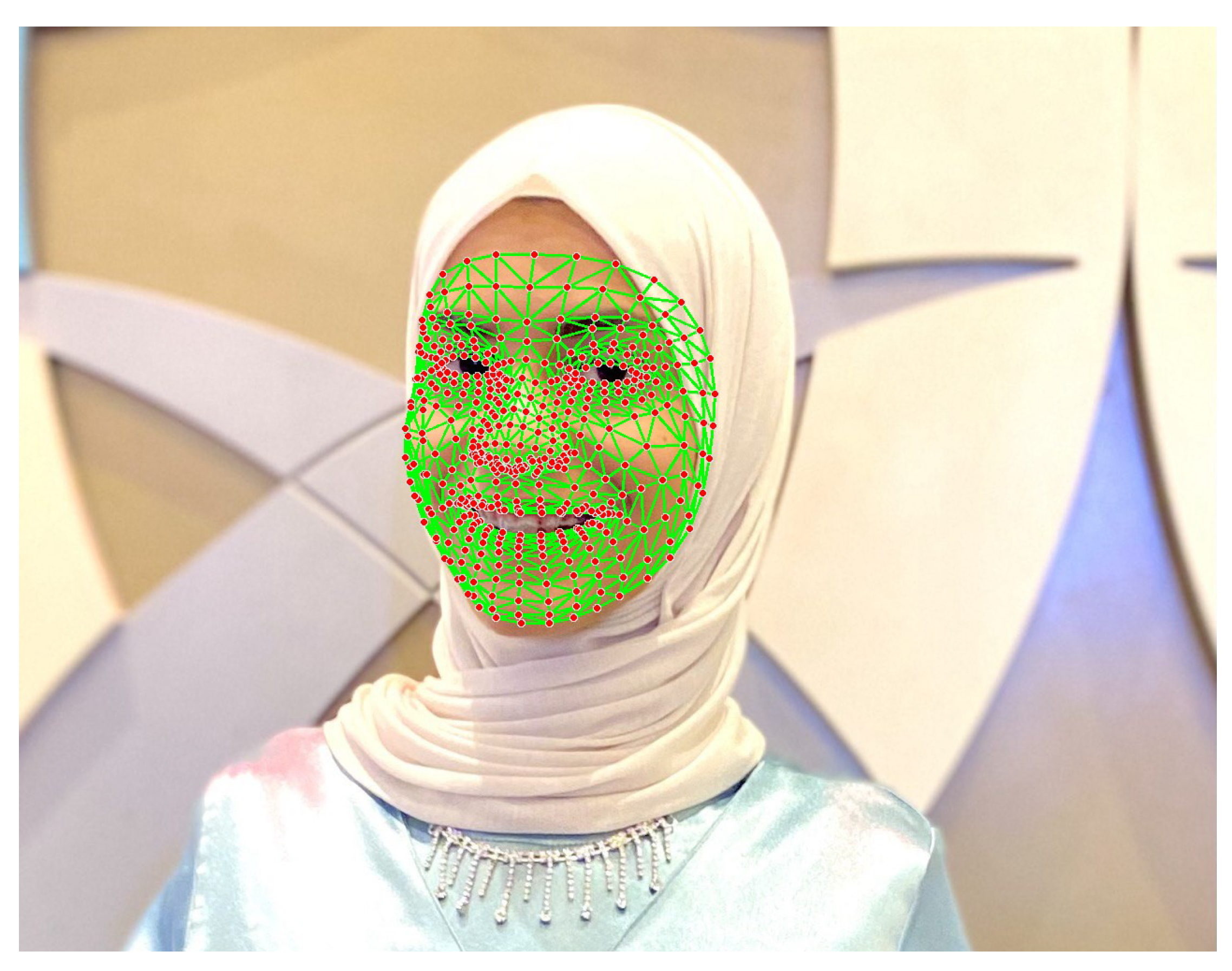

- Face Landmarks: 468 points that map key facial features, allowing for the capture of nuanced expressions that can be crucial in sign language.

- 3.

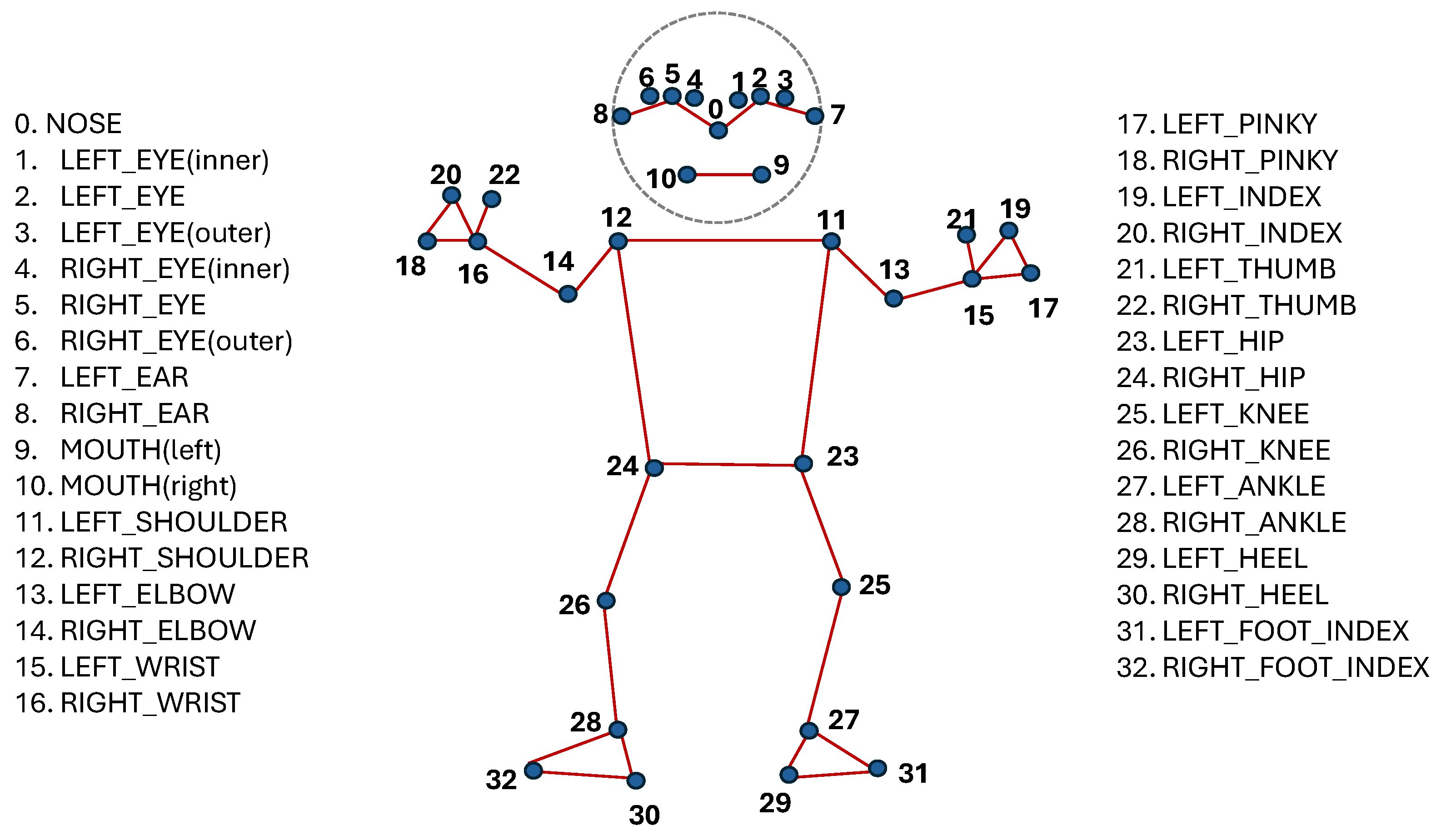

- Pose Landmarks: 33 points that track the position of the head, shoulders, hips, and limbs, capturing the broader body movements that accompany many signs. Figure 2 shows the pose output landmarks and their labels.

3.2. Deep Learning Models for Spatiotemporal Analysis

1. Convolutional Neural Network (CNN) for Spatial Feature Extraction: A CNN is a deep learning model designed to process and analyze visual data, such as images or individual video frames. Its strength lies in its ability to automatically identify spatial hierarchies of features, making CNNs highly effective for object recognition tasks. In the context of SLR, the CNN component of our model processes the landmark data from each frame to learn and recognize key spatial patterns, such as handshapes (e.g., open palm, closed fist) and their orientation.

2. Recurrent Neural Network (RNN) for Temporal Pattern Recognition: An RNN is a specialized architecture built to handle sequential data, such as a time series. Unlike a CNN, an RNN maintains an internal memory, allowing it to recognize patterns and relationships across a sequence. We use a specific type of RNN called Long Short-Term Memory (LSTM), which is highly effective at learning long-term dependencies. The LSTM component analyzes the sequence of features extracted by the CNN across multiple frames, enabling it to model the temporal dynamics of a sign, such as the trajectory of a hand gesture.

3. Hybrid CNN-RNN Architecture: Integrating a CNN with an RNN (specifically, a bidirectional LSTM in our model) yields a system that learns both spatial and temporal features. This is critical for distinguishing between signs that may look similar in a single frame but differ in their execution. For example, in Arabic Sign Language, the signs for “eat” and “drink” both involve a similar handshape moving toward the mouth. In “eat”, the hand moves in a straight, direct path toward the mouth, whereas in “drink”, the hand is brought to the mouth and then tilts as if tipping a cup. A CNN alone might struggle to differentiate them, but the LSTM can analyze the motion across frames and recognize the distinct trajectories (a straight path versus a tilted one) that define each sign. By building a combined spatiotemporal representation of each sign, this hybrid approach reduces such misclassifications and is well suited to recognizing complete words in a continuous video stream.

4. Justification of Model Choice: While other machine learning models exist, the CNN-RNN architecture was deliberately chosen for its suitability to the task.

- Traditional ML Models: Traditional ML models, such as SVM or Random Forest, typically struggle with the complex temporal patterns inherent in sign language videos.

- Standalone CNN or RNN Models: A CNN alone cannot capture temporal dynamics, while an RNN alone is less effective at learning the intricate spatial features within individual frames. The hybrid model overcomes these individual limitations.

- Transformer-Based Models: While powerful, Transformers generally require large datasets and significant computational resources, making them less practical for real-time SLR applications given the limited size of available sign language datasets.

Our chosen architecture provides a balanced and effective solution for accurate, real-time Arabic Sign Language recognition.
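The argument that frame order matters can be illustrated with a toy sketch. This is not the model described here: it assumes a hypothetical one-dimensional feature per frame (a normalized hand height) and compares an order-free summary, which averages over frames, with a simple motion summary built from frame-to-frame differences. All function and variable names are illustrative.

```python
from typing import List


def order_free_summary(frames: List[List[float]]) -> List[float]:
    """Average each feature over all frames; frame order is discarded."""
    count = len(frames)
    width = len(frames[0])
    return [sum(frame[i] for frame in frames) / count for i in range(width)]


def motion_summary(frames: List[List[float]]) -> List[float]:
    """Concatenate frame-to-frame differences; frame order is preserved."""
    deltas: List[float] = []
    for previous, current in zip(frames, frames[1:]):
        deltas.extend(c - p for p, c in zip(previous, current))
    return deltas


# Hypothetical feature: normalized hand height in each of three frames.
toward_mouth = [[0.0], [0.5], [1.0]]
away_from_mouth = [[1.0], [0.5], [0.0]]

# Both clips contain the same frames, so the order-free summaries coincide.
same_without_order = (
    order_free_summary(toward_mouth) == order_free_summary(away_from_mouth)
)
# The motion summaries differ because they depend on the frame order.
differ_with_order = motion_summary(toward_mouth) != motion_summary(away_from_mouth)
```

The two clips are indistinguishable once frame order is dropped, which is the situation a recurrent component is meant to resolve.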

3.3. Model Evaluation Metrics

- True Positives (TP): The model correctly predicts a sign.

- True Negatives (TN): The model correctly identifies that a sample is not a particular sign. This is more relevant in binary classification; in our multi-class case, it is implicitly distributed among the other correct classifications.

- False Positives (FP): The model incorrectly predicts a sign (e.g., it predicts “hello” when the sign was actually “goodbye”). This is also known as a “Type I error”.

- False Negatives (FN): The model fails to predict the correct sign (e.g., it predicts “goodbye” when the sign was actually “hello”). This is also known as a “Type II error”.
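As a minimal sketch of how these counts turn into multi-class scores, the snippet below derives per-class TP, FP, and FN from label pairs and averages precision, recall, and F1 over classes. Macro averaging is an assumption made for illustration, since the averaging mode is not stated here, and the function names and toy labels are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Tuple


def per_class_counts(
    y_true: List[str], y_pred: List[str]
) -> Tuple[Counter, Counter, Counter]:
    """Count TP, FP, and FN for every class from paired labels."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, guess in zip(y_true, y_pred):
        if truth == guess:
            tp[truth] += 1
        else:
            fp[guess] += 1  # the predicted class gains a false positive
            fn[truth] += 1  # the true class gains a false negative
    return tp, fp, fn


def macro_metrics(y_true: List[str], y_pred: List[str]) -> Dict[str, float]:
    """Accuracy plus macro-averaged precision, recall, and F1 (assumed mode)."""
    labels = sorted(set(y_true) | set(y_pred))
    tp, fp, fn = per_class_counts(y_true, y_pred)
    precisions, recalls, f1s = [], [], []
    for label in labels:
        p_den = tp[label] + fp[label]
        r_den = tp[label] + fn[label]
        precision = tp[label] / p_den if p_den else 0.0
        recall = tp[label] / r_den if r_den else 0.0
        pr_sum = precision + recall
        f1 = 2 * precision * recall / pr_sum if pr_sum else 0.0
        precisions.append(precision)
        recalls.append(recall)
        f1s.append(f1)
    n = len(labels)
    return {
        "accuracy": sum(tp.values()) / len(y_true),
        "precision": sum(precisions) / n,
        "recall": sum(recalls) / n,
        "f1": sum(f1s) / n,
    }


# Toy labels: one "hello" is misread as "goodbye".
scores = macro_metrics(
    ["hello", "hello", "goodbye", "goodbye"],
    ["hello", "goodbye", "goodbye", "goodbye"],
)
```

In the toy labels, the single error counts as a false negative for “hello” and a false positive for “goodbye”, mirroring the examples in the definitions above.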

4. The Datasets

- 1.

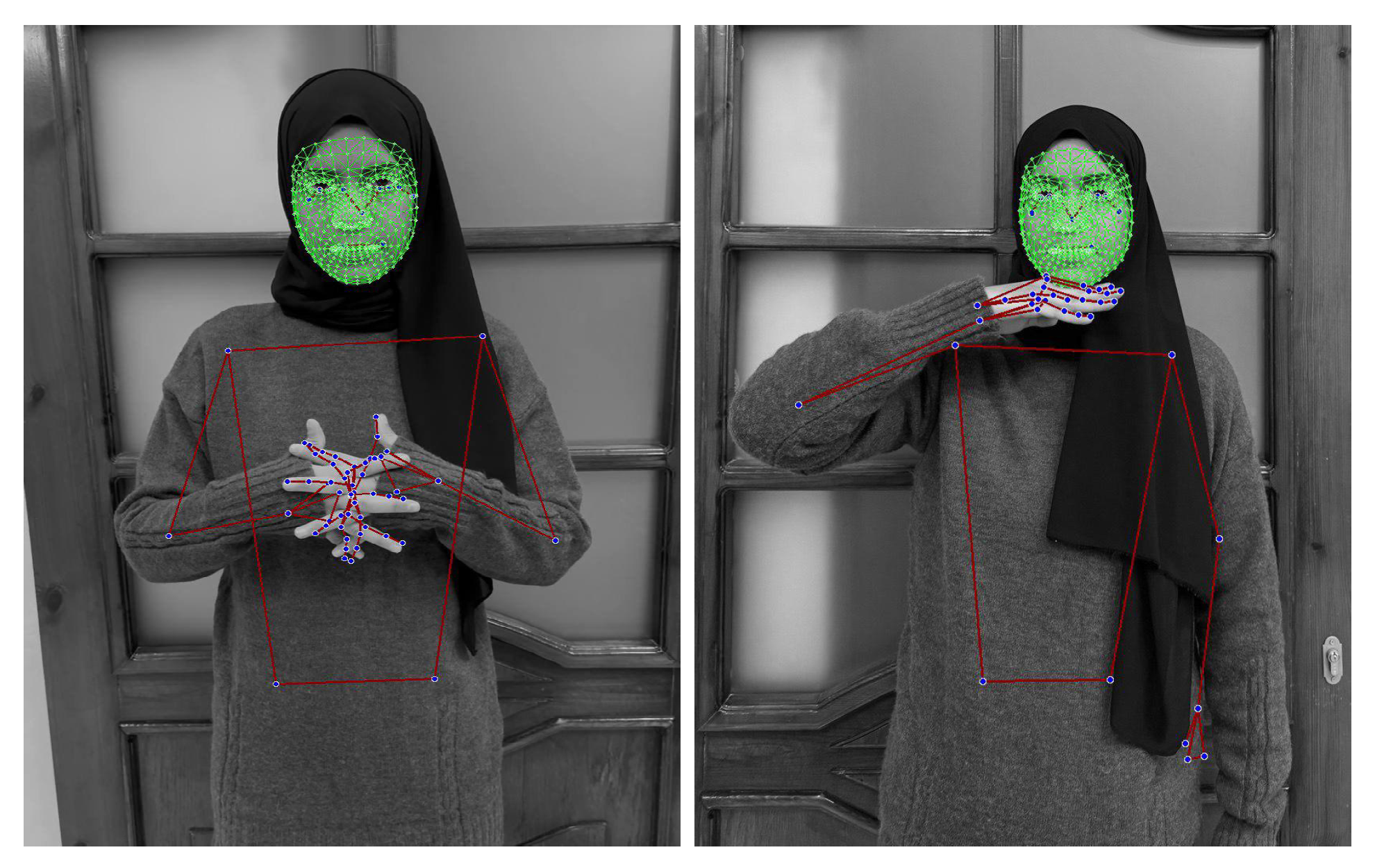

- JUST-SL dataset Created by the authors specifically for this research to simulate real-world data collection scenarios, this dataset comprises video recordings of 21 distinct Arabic words, with each word performed 30 times. The recordings feature three different signers, one of whom is a professional. The other signers learned the signs specifically for creating this experimental dataset. The dataset was recorded using an Apple iPhone 11 Pro camera with a color resolution of 828 × 1792 pixels at a frame rate of 30 frames per second (FPS). The data were partitioned using a 70% training and 30% testing split. Each recording is approximately 1–3 s long at 30 frames per second. The recordings were conducted in uncontrolled, naturalistic environments, such as workplaces, with varied and dynamic backgrounds. The signers appear in different poses (standing or sitting). The data were captured using a standard mobile phone camera; we deliberately avoided the use of specialized or high-cost equipment. Consequently, the resulting dataset is characterized by environmental noise and variability, presenting a challenging test case for evaluating the recognition system’s performance under non-ideal conditions. Figure 4 shows examples of different signers and backgrounds in the JUST-SL dataset.

2. The KArSL Dataset: The publicly available King Abdullah Arabic Sign Language (KArSL) dataset [7] was collected under highly controlled laboratory conditions. For this study, 40 Arabic sign language words were selected; each word was performed 40 times by a single professional signer. The dataset was recorded using a Microsoft Kinect V2 sensor, which provides a resolution of 1920 × 1080 pixels at a frame rate of 30 frames per second (FPS). The dataset documentation does not specify a fixed data partitioning method, allowing researchers to choose a split that suits their task. In our work, we used a 70% training and 30% testing split. Each video is about 1 s long. The recordings were conducted in a standardized setting, with the signer positioned against a uniform green background and wearing consistent attire. These stringent controls ensure that the dataset is clean and free of environmental noise, making it an ideal benchmark for evaluating the maximum potential accuracy of the model when provided with high-quality input.
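The 70%/30% partition used for both datasets can be sketched as follows. The per-class (stratified) shuffling, the fixed seed, and the sample identifiers are assumptions made for illustration; the text specifies only the split ratio. The counts assume 21 words × 30 recordings for JUST-SL and 40 words × 40 recordings for the KArSL subset.

```python
import random
from collections import defaultdict
from typing import List, Tuple

Sample = Tuple[str, int]  # (hypothetical video id, class label)


def stratified_split(
    samples: List[Sample], train_fraction: float = 0.7, seed: int = 0
) -> Tuple[List[Sample], List[Sample]]:
    """Split each class separately so both parts keep the class balance."""
    by_label = defaultdict(list)
    for sample in samples:
        by_label[sample[1]].append(sample)

    rng = random.Random(seed)  # fixed seed for a repeatable split
    train: List[Sample] = []
    test: List[Sample] = []
    for label in sorted(by_label):
        items = list(by_label[label])
        rng.shuffle(items)
        cut = round(len(items) * train_fraction)
        train.extend(items[:cut])
        test.extend(items[cut:])
    return train, test


just_sl = [(f"w{w:02d}_r{r:02d}", w) for w in range(21) for r in range(30)]
karsl = [(f"w{w:02d}_r{r:02d}", w) for w in range(40) for r in range(40)]

just_train, just_test = stratified_split(just_sl)
karsl_train, karsl_test = stratified_split(karsl)
```

Under these assumptions, each JUST-SL word contributes 21 training and 9 testing videos, and each KArSL word contributes 28 and 12.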

5. Methodology

5.1. Data Preprocessing and Feature Extraction

1. Frame Segmentation: Each video was segmented into a fixed-length sequence of frames. Based on the average duration of a sign, videos from the JUST-SL dataset were converted into 50 frames, while the more concisely performed signs in the KArSL dataset were segmented into 30 frames. The difference arises because the KArSL dataset was captured using a Kinect camera and performed by a professional signer, whereas the JUST-SL dataset was recorded with a mobile camera and performed mostly by nonprofessional signers.

2. Grayscale: For processing efficiency, we converted all images to grayscale. We found that this does not significantly impact classification results.

3. Landmark Extraction: Using the MediaPipe Holistic framework, we extracted a set of 543 landmarks from each frame: 468 points for the face to capture the intricacy of facial expressions, 21 points per hand to capture the palm and each joint of the five fingers, and 33 points for the pose to capture the overall body posture. MediaPipe outputs the landmarks as (x, y, z) coordinates.
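One way to obtain a fixed-length sequence from clips of varying duration is to pick evenly spaced frame indices. The sketch below assumes this uniform sampling strategy, which is not stated in the text, and the function name is hypothetical. Clips shorter than the target length end up repeating frames.

```python
from typing import List


def sample_frame_indices(num_frames: int, target_len: int) -> List[int]:
    """Return target_len evenly spaced indices into a clip of num_frames."""
    if num_frames <= 0 or target_len <= 0:
        raise ValueError("num_frames and target_len must be positive")
    if target_len == 1:
        return [0]
    step = (num_frames - 1) / (target_len - 1)
    # Rounding keeps the first and last frame and never moves backwards.
    return [round(i * step) for i in range(target_len)]


# A 3 s JUST-SL clip at 30 FPS (90 frames) reduced to 50 frames.
just_sl_indices = sample_frame_indices(90, 50)
# A 1 s KArSL clip at 30 FPS already has 30 frames.
karsl_indices = sample_frame_indices(30, 30)
# A 1 s clip stretched to 50 frames repeats some frames.
stretched_indices = sample_frame_indices(30, 50)
```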

5.2. Experiment Setup

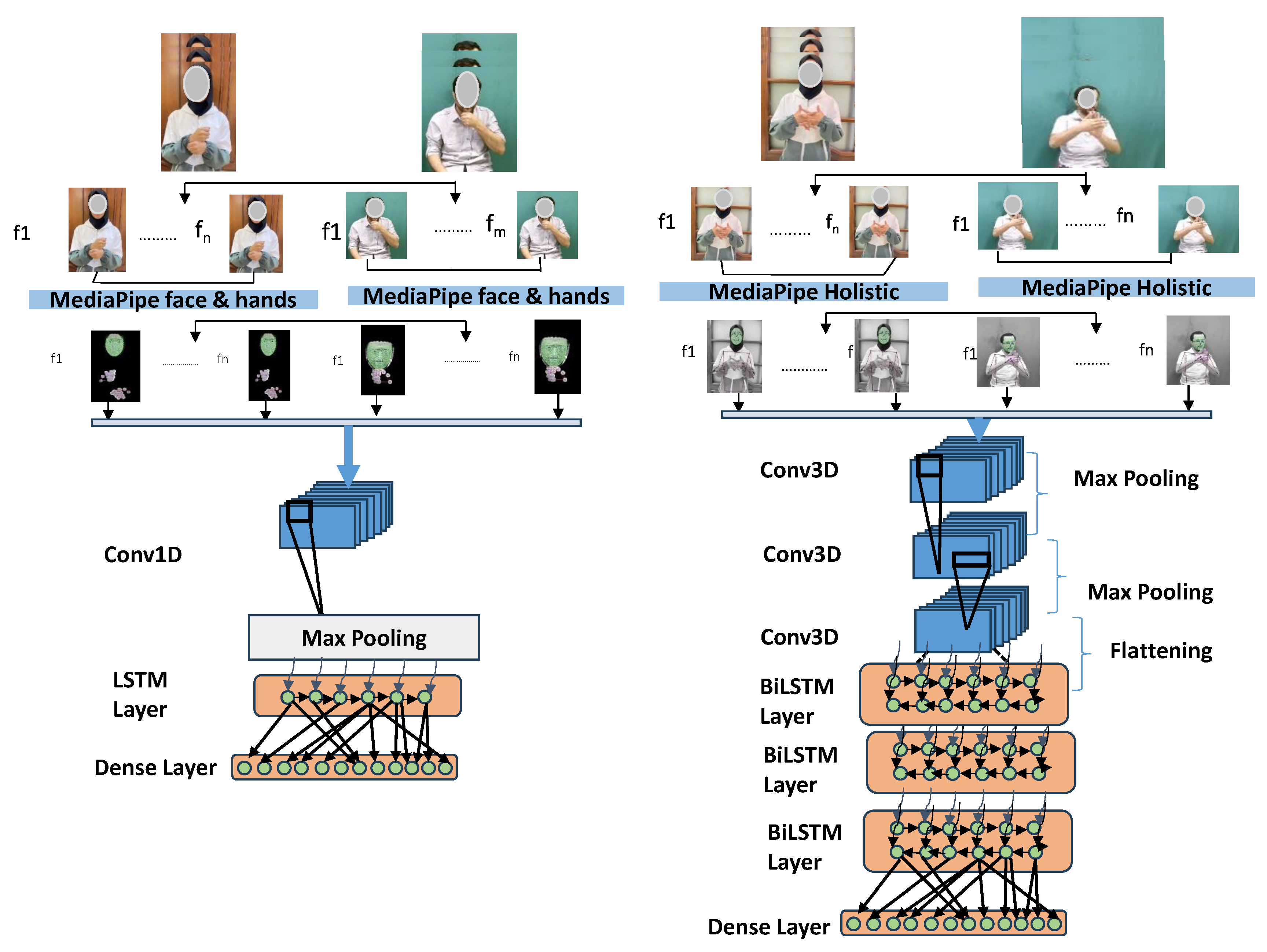

5.2.1. Experiment 1: Isolated Feature Model (Hands and Face)

5.2.2. Experiment 2: Holistic Feature Model (Full Body)

5.3. Model Architecture

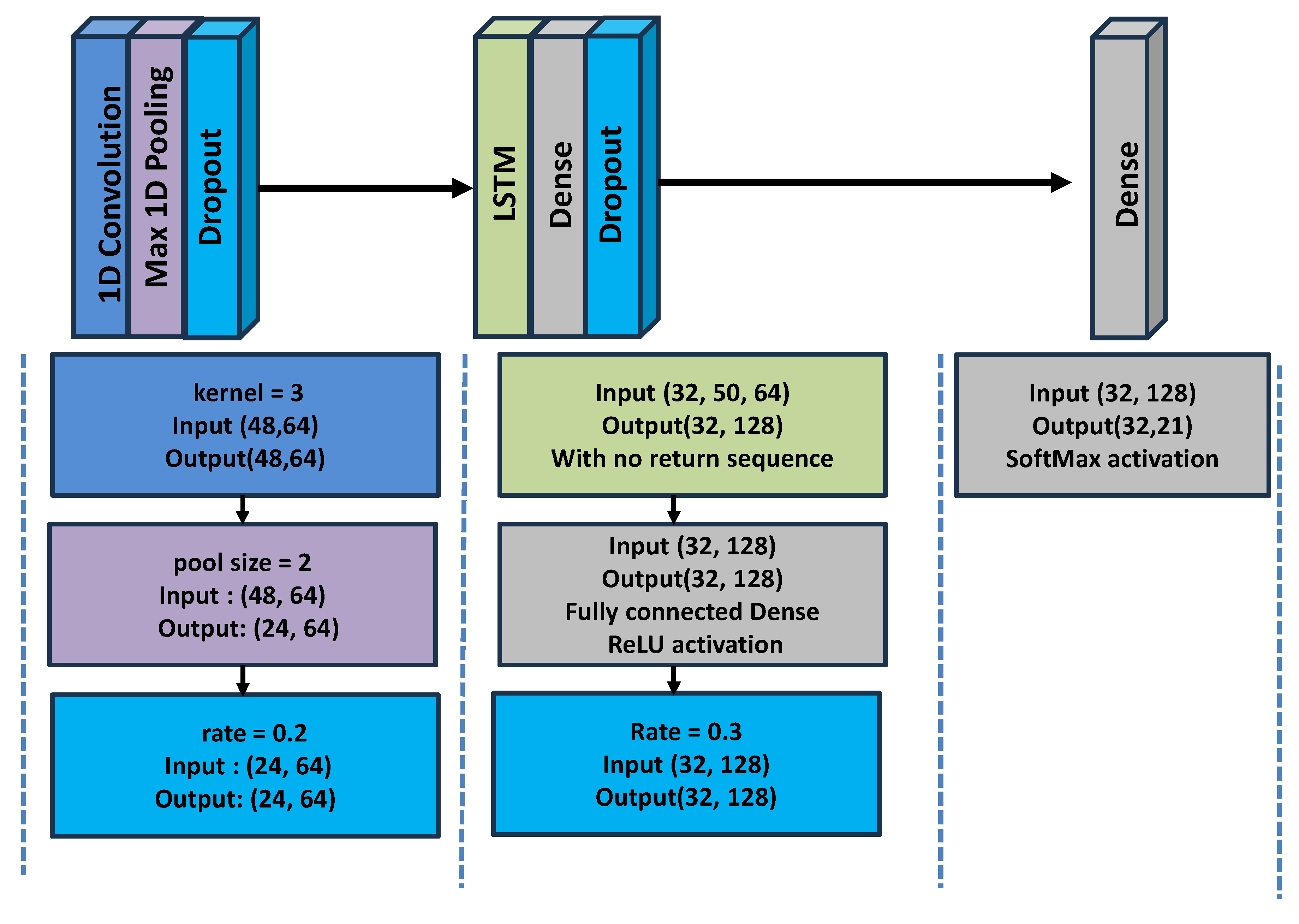

5.3.1. Neural Network Model for Experiment 1

- A 1D convolutional layer with the Rectified Linear Unit (ReLU) activation function to extract spatial features from the landmark data.

- A MaxPooling layer that reduces the spatial dimensions of the feature map by taking the maximum value in a sliding window, retaining the most important features while making the network more robust to small variations in the input.

- A reshape layer that flattens the feature map into a vector to fit the data into the next layer.

- A Long Short-Term Memory (LSTM) layer to model the temporal sequence of the sign in the forward direction.

- A final dense layer for classification, which uses the Softmax function to convert the features into a probability for each class. Dropout is applied between layers to prevent overfitting.
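The following sketch works through the sequence lengths produced by the first two layers and the Softmax step. It assumes a 50-step input, a kernel of size 3 with no padding and stride 1, and a pool size of 2; under those assumptions the lengths agree with the 48 and 24 steps reported in the architecture table for this model. The helper names are hypothetical and the logits are made-up values.

```python
import math
from typing import List


def conv1d_output_length(steps: int, kernel_size: int, stride: int = 1) -> int:
    """Output length of a 1D convolution without padding."""
    return (steps - kernel_size) // stride + 1


def pool1d_output_length(steps: int, pool_size: int) -> int:
    """Output length of non-overlapping 1D max pooling."""
    return (steps - pool_size) // pool_size + 1


def softmax(logits: List[float]) -> List[float]:
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return [value / total for value in exps]


steps_after_conv = conv1d_output_length(50, kernel_size=3)
steps_after_pool = pool1d_output_length(steps_after_conv, pool_size=2)
probabilities = softmax([2.0, 1.0, 0.1])
```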

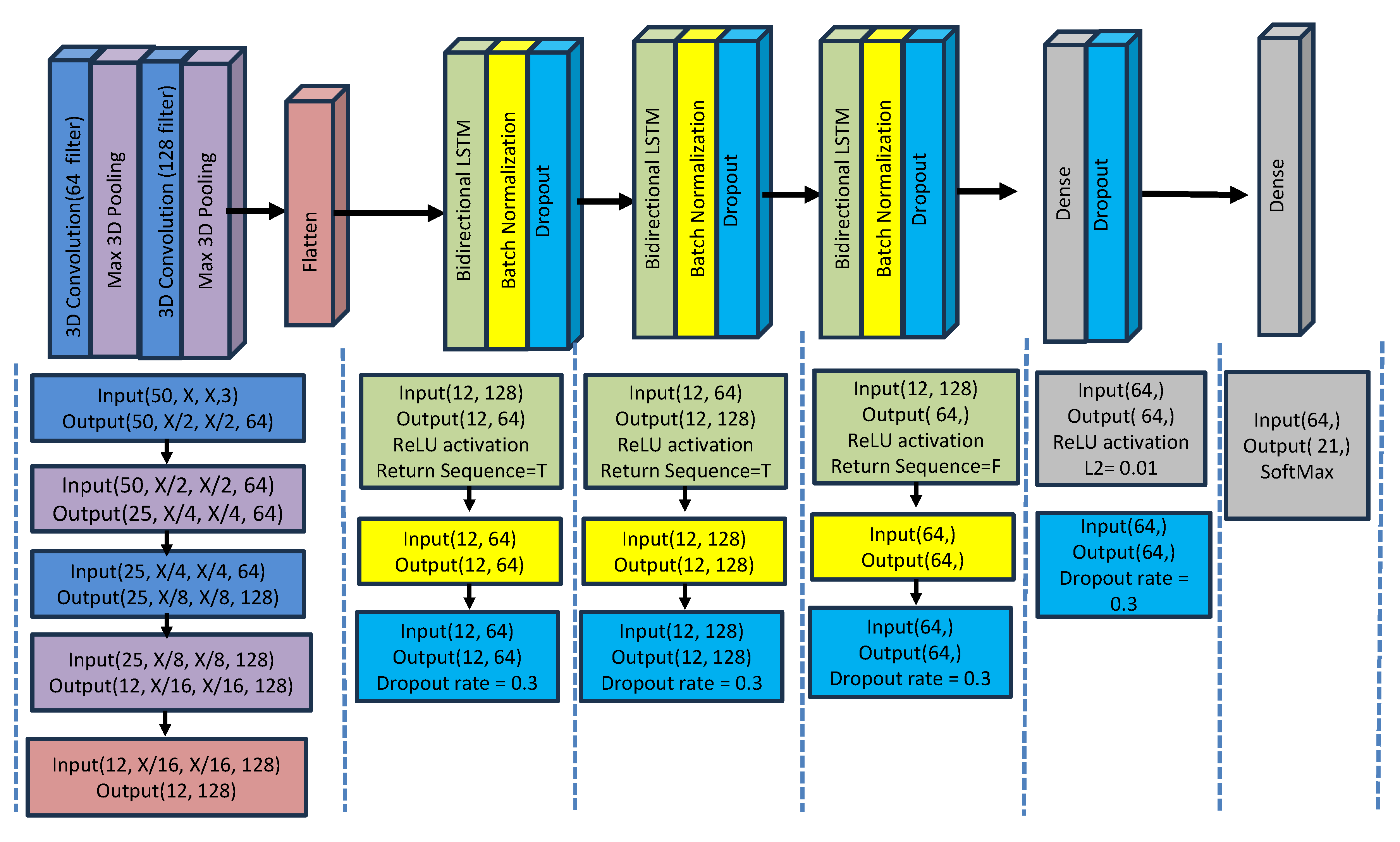

5.3.2. Neural Network Model for Experiment 2

- 3D Convolutional Layers to extract spatial and temporal characteristics from the video sequences.

- MaxPooling3D layers to reduce the spatial dimensions.

- Bidirectional LSTM Layers process the sequential data (temporal features) and capture both past and future dependencies. Batch normalization and dropout are applied to prevent overfitting.

- Dense Layers: After the BiLSTM layers, a fully connected dense layer is added, followed by a final dense layer that uses the Softmax activation function to return the class probabilities.

- A regularization step (with the L2 norm) helps reduce overfitting by penalizing large weights.

- The Optimizer (Adam) solves for the model weights during training. A low learning rate (5 ) is chosen to ensure fine-tuning.
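To make the L2 penalty concrete, the sketch below adds a weight penalty to a classification loss. The coefficient 0.01 follows the L2 value listed in the architecture table; the use of categorical cross-entropy as the base loss is an assumption, since the loss function is not named here, and the weights and probabilities are made-up values.

```python
import math
from typing import List


def l2_penalty(weights: List[float], coefficient: float = 0.01) -> float:
    """Penalty that grows with the squared magnitude of the weights."""
    return coefficient * sum(w * w for w in weights)


def cross_entropy(probabilities: List[float], true_index: int) -> float:
    """Negative log-probability assigned to the correct class."""
    return -math.log(probabilities[true_index])


def regularized_loss(
    probabilities: List[float],
    true_index: int,
    weights: List[float],
    coefficient: float = 0.01,
) -> float:
    """Base loss plus the L2 penalty on the layer weights."""
    return cross_entropy(probabilities, true_index) + l2_penalty(weights, coefficient)


penalty = l2_penalty([1.0, -2.0])
loss = regularized_loss([0.5, 0.5], true_index=0, weights=[1.0, -2.0])
```

Larger weights raise the loss even when the predictions are unchanged, which is how the penalty discourages overfitting.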

5.4. Feature Extraction into the Final Classification Vector

1. Feature Vector Construction: We concatenate the four types of landmarks (pose, face, left hand, and right hand) into a single feature vector:

- Pose landmarks: 33 points × (x, y, z, visibility) → 132 values.

- Face landmarks: 468 points × (x, y, z) → 1404 values.

- Left and right hand landmarks: 21 points × (x, y, z) × 2 → 126 values.

This results in a total of 132 + 1404 + 126 = 1662 feature values per frame.

2. Temporal Modeling (Sequence Construction): For each sign video, we select 50 frames that capture the sign. Each frame contributes a 1662-dimensional feature vector, resulting in a sequence of 50 frames × 1662 features = 83,100 feature values per video.

3. Feature Transformation via Deep Learning Layers:

- Conv3D layers: extract spatio-temporal patterns from the raw feature sequences.

- Flatten layer: compresses these patterns over each frame.

- Bidirectional LSTM layers: model the temporal dependencies across frames.

- Dense layers: reduce the representation into a compact latent space. After the BiLSTM layers, a fully connected dense layer is applied, and the last dense layer then uses the Softmax activation function to return the final classes.

- The final Dense softmax layer outputs a probability distribution over the 20 gesture classes.

4. Feature Normalization and Dimensionality Adjustment: We performed feature normalization at the landmark level and at the level of the CNN layers:

- Keypoint-level normalization: Normalization was applied at the level of landmark extraction to scale the landmark coordinates into the range [0, 1]. MediaPipe normalizes the x- and y-coordinates by the image width and height, and expresses the z-coordinate as a depth relative to a root joint (such as the wrist or the hips).

- Batch Normalization: At the level of the CNN layers, batch normalization was applied after each convolutional layer. This accelerates convergence, which is particularly important when training deep CNN-RNN hybrid models. Batch normalization ensures features are well-conditioned before passing them to BiLSTM, which models temporal dependencies. As an example, in Experiment 1, batch normalization was applied with a momentum value of . This helps normalize the outputs of convolutional layers so that extreme fraction values are reduced toward zero, preventing them from dominating the classification process. Batch normalization was also applied after the RNN layers to stabilize temporal feature learning.

5. Final Classification Output: The output is a 20-dimensional classification vector, and each value corresponds to the predicted probability for a specific gesture.
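The per-frame feature vector described in the steps above can be sketched as a plain concatenation. The concatenation order (pose, face, left hand, right hand) and the zero-filling of undetected landmark groups are assumptions made for illustration; the constants follow the landmark counts given in the text, and the function names are hypothetical.

```python
from typing import List, Optional, Sequence

Points = Optional[Sequence[Sequence[float]]]

POSE_POINTS, POSE_DIMS = 33, 4   # x, y, z, visibility
FACE_POINTS, FACE_DIMS = 468, 3  # x, y, z
HAND_POINTS, HAND_DIMS = 21, 3   # x, y, z (per hand)

FEATURES_PER_FRAME = (
    POSE_POINTS * POSE_DIMS + FACE_POINTS * FACE_DIMS + 2 * HAND_POINTS * HAND_DIMS
)


def flatten_group(points: Points, count: int, dims: int) -> List[float]:
    """Flatten one landmark group; zero-fill it when nothing was detected."""
    if points is None:
        return [0.0] * (count * dims)  # assumption: missing group becomes zeros
    if len(points) != count:
        raise ValueError(f"expected {count} landmarks, got {len(points)}")
    flat: List[float] = []
    for point in points:
        if len(point) != dims:
            raise ValueError(f"expected {dims} values per landmark")
        flat.extend(float(value) for value in point)
    return flat


def frame_vector(
    pose: Points, face: Points, left_hand: Points, right_hand: Points
) -> List[float]:
    """Concatenate all landmark groups into one per-frame feature vector."""
    return (
        flatten_group(pose, POSE_POINTS, POSE_DIMS)
        + flatten_group(face, FACE_POINTS, FACE_DIMS)
        + flatten_group(left_hand, HAND_POINTS, HAND_DIMS)
        + flatten_group(right_hand, HAND_POINTS, HAND_DIMS)
    )


# A frame in which only the right hand was detected.
right_hand_only = frame_vector(None, None, None, [(0.5, 0.5, 0.0)] * HAND_POINTS)
# A 50-frame sequence of such vectors.
values_per_video = 50 * len(right_hand_only)
```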

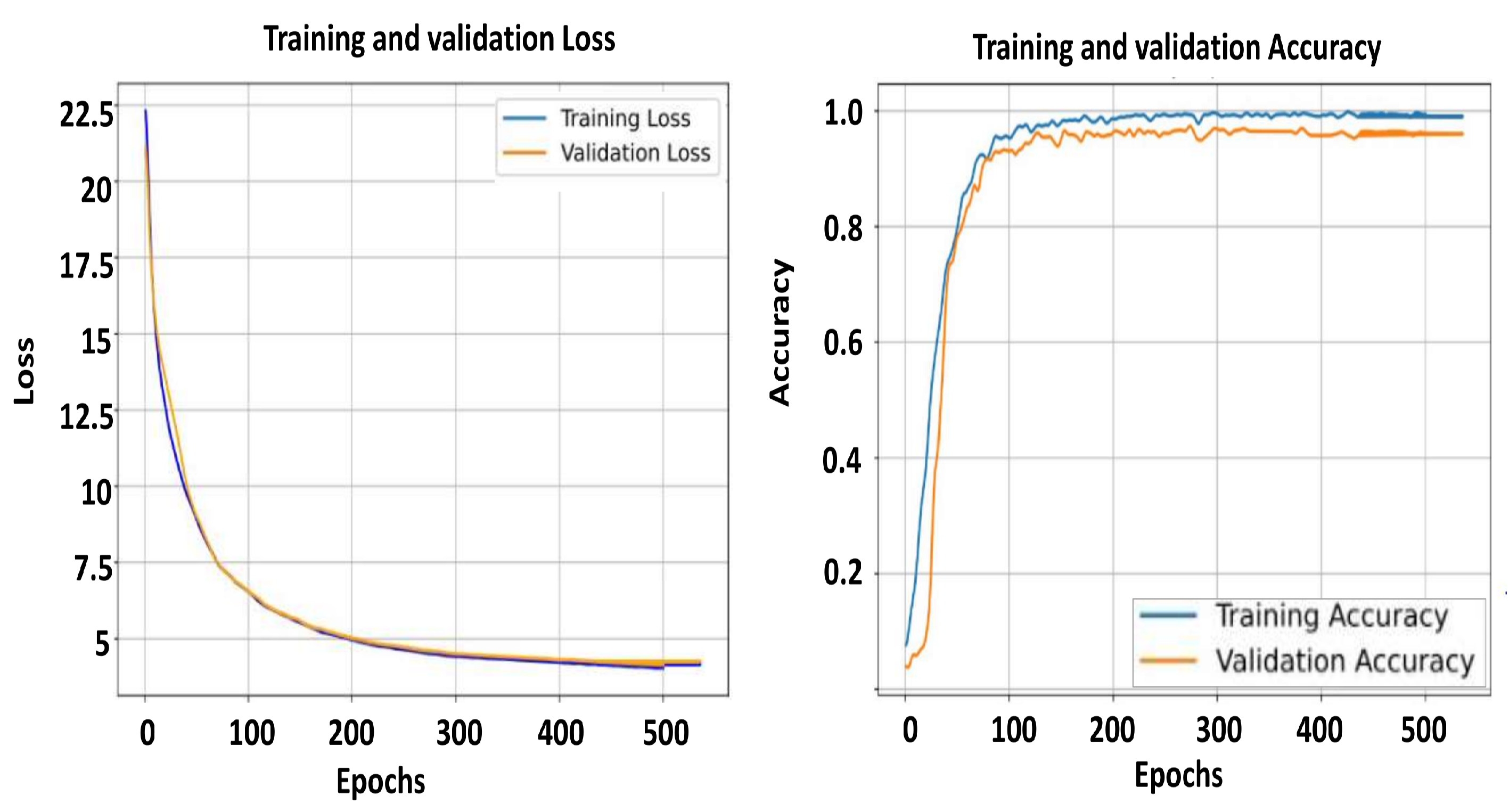

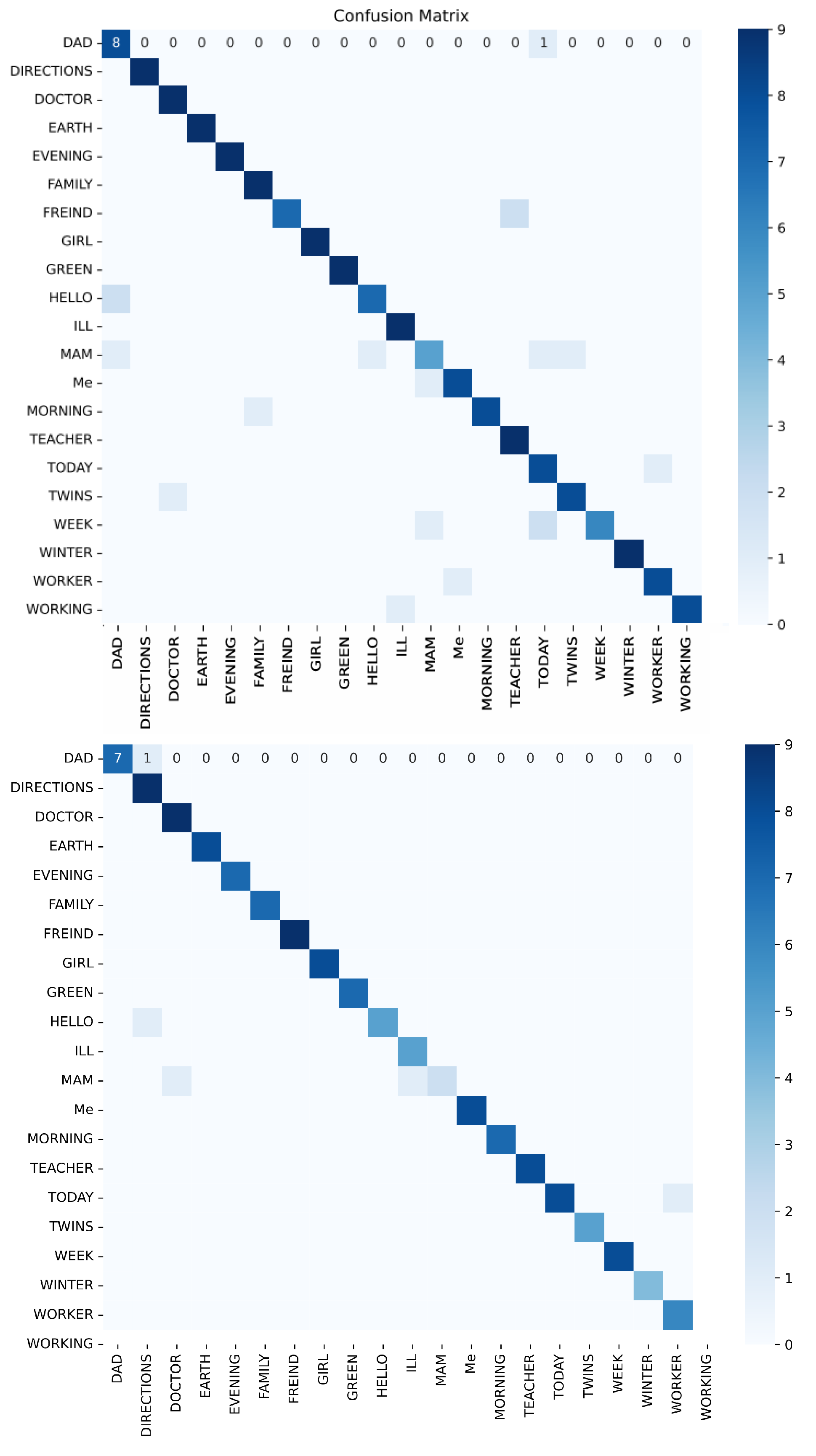

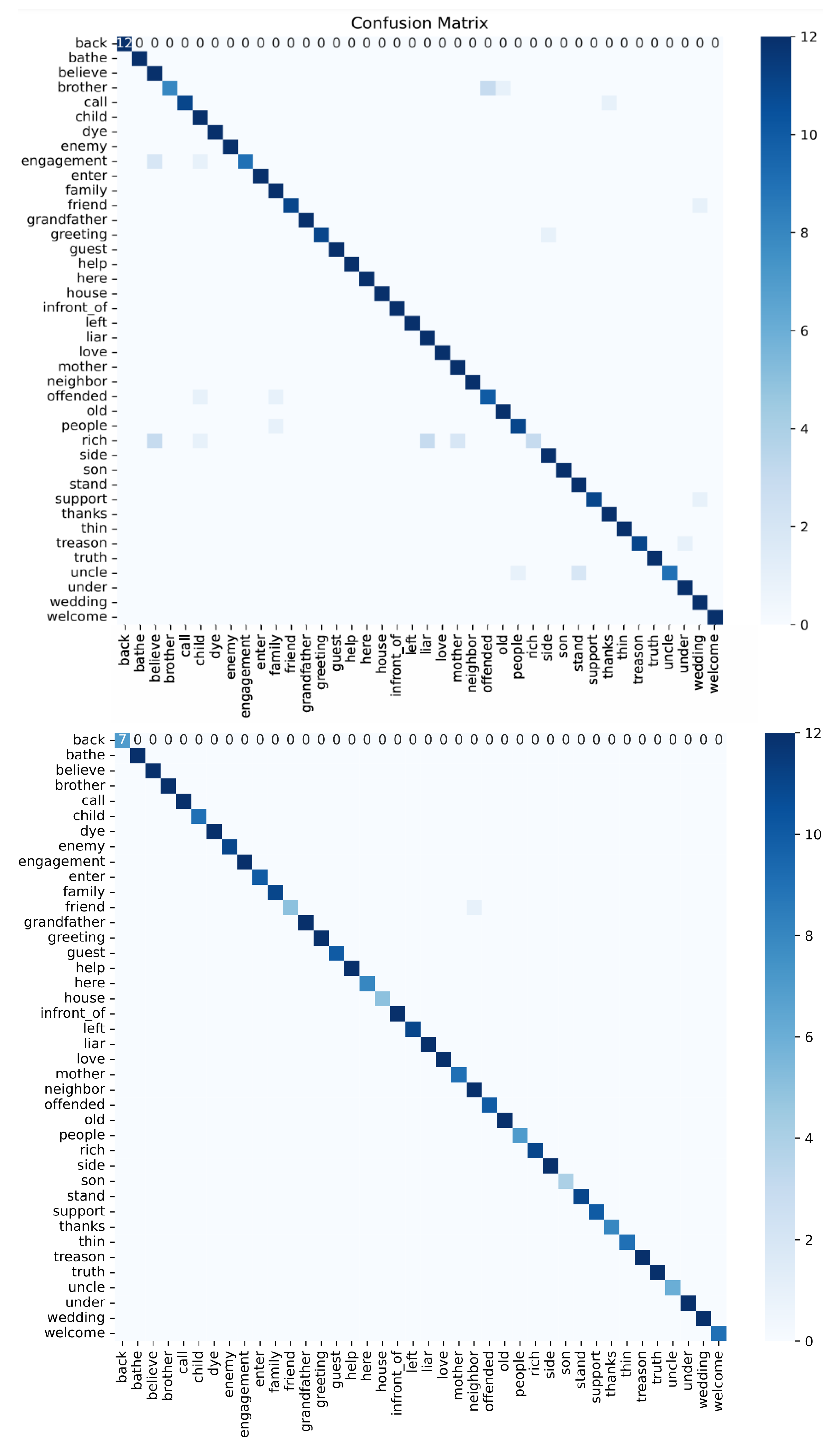

6. Experimental Results

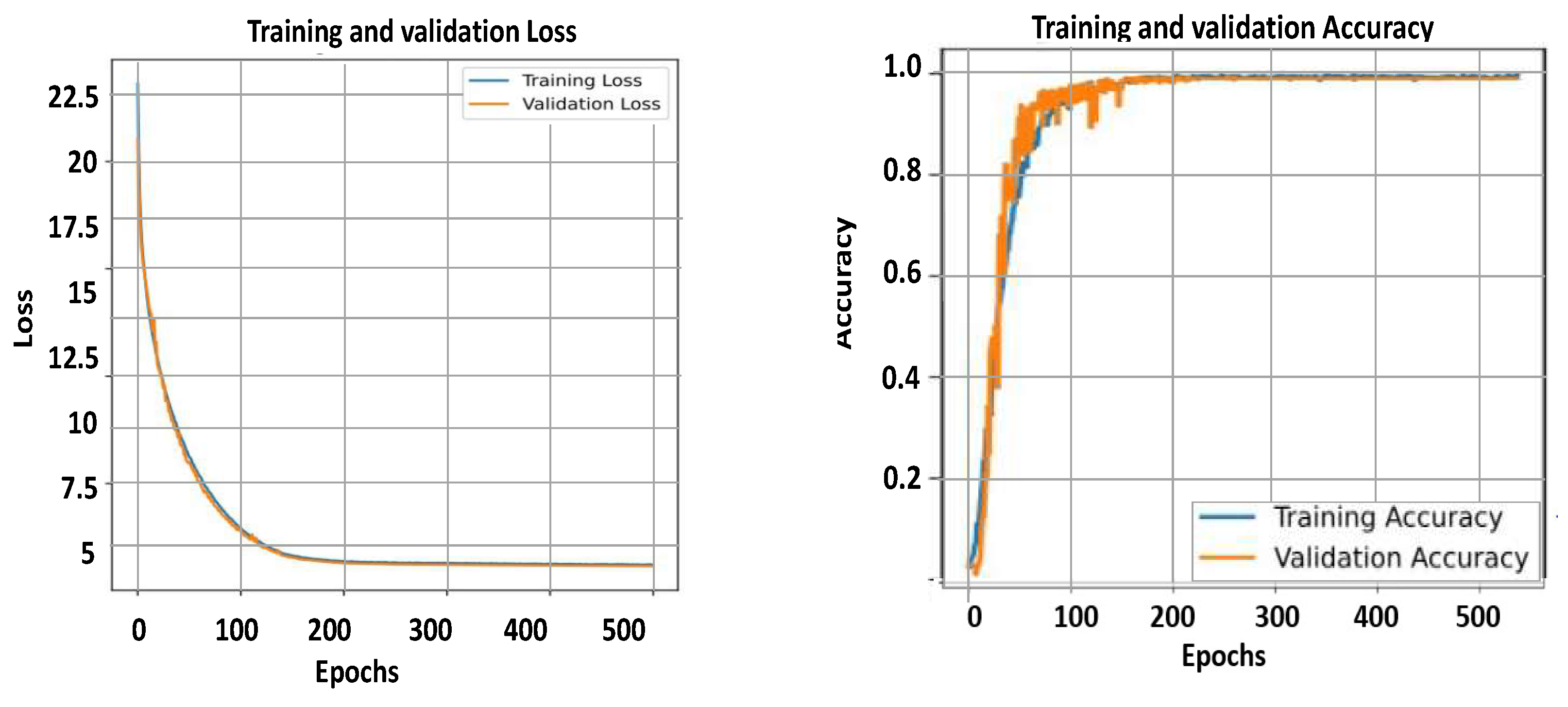

6.1. Overall Model Performance

6.2. Training Time and Convergence

6.3. Ablation Study

6.4. Limitations

7. Conclusions and Future Work

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. World Health Organization. Almost 1 in 5 People Suffer with Hearing Loss: So How Is This Impacting the Workplace? WHO: Geneva, Switzerland, 2023; Available online: https://www.zurich.com/media/magazine/2023/almost-2-5-billion-people-could-suffer-with-hearing-loss-by-2050-what-can-be-done (accessed on 20 December 2025).
2. Rastgoo, R.; Kiani, K.; Escalera, S. Sign Language Recognition: A Deep Survey. Expert Syst. Appl. 2021, 164, 113794.
3. Mehdi, S.A.; Khan, Y.N. Sign Language Recognition Using Sensor Gloves. In Proceedings of the 9th International Conference on Neural Information Processing (ICONIP 2002), Singapore, 18–22 November 2002; Volume 5, pp. 2204–2206.
4. Lokhande, P.; Prajapati, R.; Pansare, S. Data Gloves for Sign Language Recognition System. Int. J. Comput. Appl. 2015, 975, 8887.
5. Lang, S.; Block, M.; Rojas, R. Sign Language Recognition Using Kinect. In Proceedings of the Artificial Intelligence and Soft Computing, Zakopane, Poland, 29 April 2012; Rutkowski, L., Korytkowski, M., Scherer, R., Tadeusiewicz, R., Zadeh, L.A., Zurada, J.M., Eds.; Springer: Berlin/Heidelberg, Germany; pp. 394–402.
6. Google Research. MediaPipe. 2019. Available online: https://github.com/google/mediapipe (accessed on 14 September 2025).
7. Sidig, A.A.I.; Luqman, H.; Mahmoud, S.; Mohandes, M. KArSL: Arabic Sign Language Database. ACM Trans. Asian Low-Resour. Lang. Inf. Process. (TALLIP) 2021, 20, 1–19.
8. Kakoty, N.M.; Sharma, M.D. Recognition of Sign Language Alphabets and Numbers Based on Hand Kinematics Using a Data Glove. Procedia Comput. Sci. 2018, 133, 55–62.
9. Shukor, A.Z.; Miskon, M.F.; Jamaluddin, M.H.; bin Ali Ibrahim, F.; Asyraf, M.F.; bin Bahar, M.B. A New Data Glove Approach for Malaysian Sign Language Detection. Procedia Comput. Sci. 2015, 76, 60–67.
10. Sadek, M.I.; Mikhael, M.N.; Mansour, H.A. A New Approach for Designing a Smart Glove for Arabic Sign Language Recognition System Based on the Statistical Analysis of Sign Language. In Proceedings of the 2017 34th National Radio Science Conference (NRSC), Alexandria, Egypt, 13–16 March 2017; pp. 380–388.
11. Zhang, Z. Microsoft Kinect Sensor and Its Effect. IEEE Multimed. 2012, 19, 4–10.
12. Zafrulla, Z.; Brashear, H.; Starner, T.; Hamilton, H.; Presti, P. American Sign Language Recognition with the Kinect. In Proceedings of the 13th International Conference on Multimodal Interfaces, New York, NY, USA, 14–18 November 2011; pp. 279–286.
13. Agarwal, A.; Thakur, M.K. Sign Language Recognition Using Microsoft Kinect. In Proceedings of the 2013 Sixth International Conference on Contemporary Computing (IC3), Noida, India, 8–10 August 2013; pp. 181–185.
14. Al-Jarrah, O.; Halawani, A. Recognition of Gestures in Arabic Sign Language Using Neuro-Fuzzy Systems. Artif. Intell. 2001, 133, 117–138.
15. Assaleh, K.; Al-Rousan, M. Recognition of Arabic Sign Language Alphabet Using Polynomial Classifiers. EURASIP J. Adv. Signal Process. 2005, 2005, 507614.
16. Youssif, A.A.A.; Aboutabl, A.E.; Ali, H.H. Arabic Sign Language (ArSL) Recognition System Using HMM. Int. J. Adv. Comput. Sci. Appl. 2011, 2, 45–51.
17. Hayani, S.; Benaddy, M.; El Meslouhi, O.; Kardouchi, M. Arabic Sign Language Recognition with Convolutional Neural Networks. In Proceedings of the 2019 International Conference of Computer Science and Renewable Energies (ICCSRE), Cairo, Egypt, 5–7 December 2019; pp. 1–4.
18. Zakariah, M.; Alotaibi, Y.A.; Koundal, D.; Guo, Y.; Mamun Elahi, M. Sign Language Recognition for Arabic Alphabets Using Transfer Learning Techniques. Comput. Intell. Neurosci. 2022, 2022, 4567989.
19. Alawwad, R.A.; Bchir, O.; Ismail, M.M.B. Arabic Sign Language Recognition Using Faster R-CNN. Int. J. Adv. Comput. Sci. Appl. 2021, 12, 692–700.
20. Moustafa, A.M.J.A.; Mohd Rahim, M.S.; Bouallegue, B.; Khattab, M.M.; Soliman, A.M.; Tharwat, G.; Ahmed, A.M. Integrated MediaPipe with a CNN Model for Arabic Sign Language Recognition. J. Electr. Comput. Eng. 2023, 2023, 8870750.
21. Abdul Ameer, R.S.; Ahmed, M.A.; Al-Qaysi, Z.T.; Salih, M.M.; Shuwandy, M.L. Empowering Communication: A Deep Learning Framework for Arabic Sign Language Recognition with an Attention Mechanism. Computers 2024, 13, 153.
22. Noor, T.H.; Noor, A.; Alharbi, A.F.; Faisal, A.; Alrashidi, R.; Alsaedi, A.S.; Alharbi, G.; Alsanoosy, T.; Alsaeedi, A. Real-time Arabic Sign Language Recognition Using a Hybrid Deep Learning Model. Sensors 2024, 24, 3683.
23. Tharwat, G.; Ahmed, A.M.; Bouallegue, B. Arabic Sign Language Recognition System for Alphabets Using Machine Learning Techniques. J. Electr. Comput. Eng. 2021, 2021, 2995851.
24. Google Mediapipe Open-library. Holistic Landmarks Detection Task Guide. 2024. Available online: https://ai.google.dev/edge/mediapipe/solutions/vision/holistic_landmarker (accessed on 12 August 2024).
25. Google AI for Developers. Face Landmark Detection Guide. 2024. Available online: https://ai.google.dev/edge/mediapipe/solutions/vision/face_landmarker (accessed on 12 August 2024).
26. Al-Rousan, M.; Assaleh, K.; Tala’a, A. Video-Based Signer-Independent Arabic Sign Language Recognition Using Hidden Markov Models. Appl. Soft Comput. 2009, 9, 990–999.

| Paper | Methods | Dataset | Format | Accuracy |
|---|---|---|---|---|
| [14] | Features that identified the fingertips | 30 Arabic letters | Images of bare hands | 93.6% |
| [15] | Polynomial classifiers | 30 Arabic letters | Images | 98% |
| [16] | Hidden Markov Model | 20 isolated Arabic words | Images | 82% |
| [17] | CNN model | 28 Arabic letters and 11 Arabic numbers | Images | 90% |
| [18] | CNN model | 32 standard Arabic letters | Images | 95% |
| [19] | R-CNN model | 28 Arabic letters | Mobile-captured images | 93% |
| [20] | MediaPipe and CNN | 28 static hand-sign Arabic letters | Images and videos | 97% |
| [21] | MediaPipe and LSTM | 44 Arabic words and 6 Arabic digits | Videos | 85% |
| [23] | KNN classifier | 14 Arabic Quranic letters | Images | 99.5% |
| [22] | MediaPipe and CNN/RNN | 20 Arabic letters and words | Images and videos | 94% |

| Characteristic | JUST-SL Dataset (Uncontrolled) | KArSL Dataset (Controlled) |
|---|---|---|
| Number of Signers | 3 signers | 1 signer |
| Signer Professionalism | 1 professional, 2 non-professional | 1 professional |
| Background and Posture Conditions | Variation in background; variation in signer posture | Uniform green background; signer in one posture |
| Lighting Variations | Variation in lighting | Standard lighting |
| Acquisition Hardware | Commercial phone: Apple iPhone 11 Pro camera | Specialized capturing hardware: Microsoft Kinect V2 sensor |

| Layer | Type | Output Shape | Description |
|---|---|---|---|
| Conv1D | Convolution | (48, 64) | Extracts spatial features using 64 filters with a kernel of size 3. |
| Max Pooling 1D | Pooling | (24, 64) | Reduces the feature size by half using a pool size of 2. |
| Dropout | Regularization | (24, 64) | Applies dropout with a rate of 0.2 to prevent overfitting. |
| LSTM | Recurrent | (32, 128) | LSTM with 128 units, without returning sequences. |
| Dense | Fully connected | (32, 128) | Dense layer with 128 units and ReLU activation for feature learning. |
| Dropout | Regularization | (32, 128) | Applies dropout with a rate of 0.3 to prevent overfitting. |
| Dense | Fully connected | (32, 21) | Dense layer with 21 units and softmax activation for classification. |
| Optimizer | AdamW | None | Learning rate = 1 |
| Epochs | 1000 | None | None |
| Batch Size | 32 | None | None |

| Layer | Type | Output Shape | Description |
|---|---|---|---|
| Conv3D (64 filters) | Convolution | (50, X/2, X/2, 64) | 3 × 3 × 3 filters. ReLU activation. L2 = 0.01. Padding = same |
| MaxPooling3D | Pooling | (25, X/4, X/4, 64) | Reduces the feature size by half. Padding = same |
| Conv3D (128 filters) | Convolution | (25, X/8, X/8, 128) | Extracts spatial features. ReLU activation. L2 = 0.01. Padding = same |
| MaxPooling3D | Pooling | (12, X/16, X/16, 128) | 2 × 2 × 2 pool size. Padding = same |
| Time Distributed Flatten | Flatten | (12, 128) | Flattens each time step. |
| Bidirectional LSTM | Recurrent | (12, 64) | BiLSTM with 64 units. ReLU activation. return_sequences = True |
| Batch Normalization | Normalization | (12, 64) | Normalizes the output of the LSTM |
| Dropout | Regularization | (12, 64) | Prevents overfitting (dropout rate = 0.3) |
| Bidirectional LSTM | Recurrent | (12, 128) | BiLSTM with 128 units. ReLU activation. return_sequences = True |
| Batch Normalization | Normalization | (12, 128) | Normalizes the output of the LSTM |
| Dropout | Regularization | (12, 128) | Prevents overfitting (dropout rate = 0.3) |
| Bidirectional LSTM | Recurrent | (64) | BiLSTM with 64 units. ReLU activation. return_sequences = False |
| Batch Normalization | Normalization | (64) | Normalizes the output of the LSTM |
| Dropout | Regularization | (64) | Prevents overfitting (dropout rate = 0.3) |
| Dense | Fully connected | (64) | Feature learning. ReLU activation. L2 = 0.01 |
| Dropout | Regularization | (64) | Prevents overfitting (dropout rate = 0.3) |
| Dense | Fully connected | (number of actions) | Softmax output for action classification |
| Optimizer | Adam | None | Learning rate = 5 |
| Epochs | 500 | None | Training epochs |
| Batch Size | 16 | None | None |

| Metric | JUST-SL, Experiment 1 | JUST-SL, Experiment 2 | KArSL, Experiment 1 | KArSL, Experiment 2 |
|---|---|---|---|---|
| Accuracy | 90.48% | 96.48% | 94.38% | 99.03% |
| Precision | 91.27% | 97.2% | 95.28% | 99.02% |
| Recall | 90.47% | 96.3% | 94.37% | 99.01% |
| F1-score | 90.36% | 96.11% | 94.73% | 99.04% |
| Training time | 1178.24 s = 19.6 min | 464.12 min = 7.4 h | 1193.38 s = 19.8 min | 2103.72 min = 35 h |
| Avg. inference time | 16.5 s | 5 s | 12.8 s | 5 s |
| Avg. inference time/word | 240 ms | 400 ms | 234 ms | 403 ms |

| Dataset | Experiment | Accuracy (%) | Precision (%) | Recall (%) | F1-Score (%) |
|---|---|---|---|---|---|
| JUST-SL | Experiment 1 | 90.50 ± 0.26 | 90.09 ± 1.14 | 90.49 ± 0.50 | 89.45 ± 0.78 |
| JUST-SL | Experiment 2 | 95.54 ± 1.33 | 96.6 ± 0.85 | 94.65 ± 2.33 | 94.56 ± 2.19 |
| KArSL | Experiment 1 | 94.03 ± 0.34 | 93.99 ± 1.16 | 94.13 ± 1.03 | 94.69 ± 0.33 |
| KArSL | Experiment 2 | 97.8 ± 1.79 | 90.1 ± 1.43 | 97.6 ± 2.12 | 98.02 ± 1.44 |

| Method | Papers | Dataset Used | Accuracy |
|---|---|---|---|
| Models tested using Arabic letters and numbers | | | |
| Polynomial classifier | [15] | Arabic letters | 93.55% |
| R-CNN | [19] | Arabic letters | 93% |
| CNN only | [17,18] | Arabic numbers and letters | 95% |
| KNN classifier | [23] | Arabic letters | 99.5% |
| Models tested using Arabic words and letters | | | |
| HMM classifier | [26] | Arabic words | 98.4% |
| MediaPipe + CNN | [20] | Arabic words and letters | 97.1% |
| MediaPipe + RNN | [21] | Arabic words and letters | 85% |
| MediaPipe + CNN/RNN | [22] | Arabic words and letters | 85% |
| MediaPipe + CNN/RNN | Our work | Arabic words and letters | 99% |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).