Submitted: 08 March 2026

Posted: 10 March 2026


Abstract

Keywords:

1. Introduction

1.1. Feature Sets

1.1.1. Mel Frequency Cepstral Coefficients

1.1.2. Recording Studio Features

2. Previous Work

3. Method

3.1. The Dataset

3.2. Random Forest

3.3. Self-Organizing Maps

4. Results

4.1. Random Forest Classifier

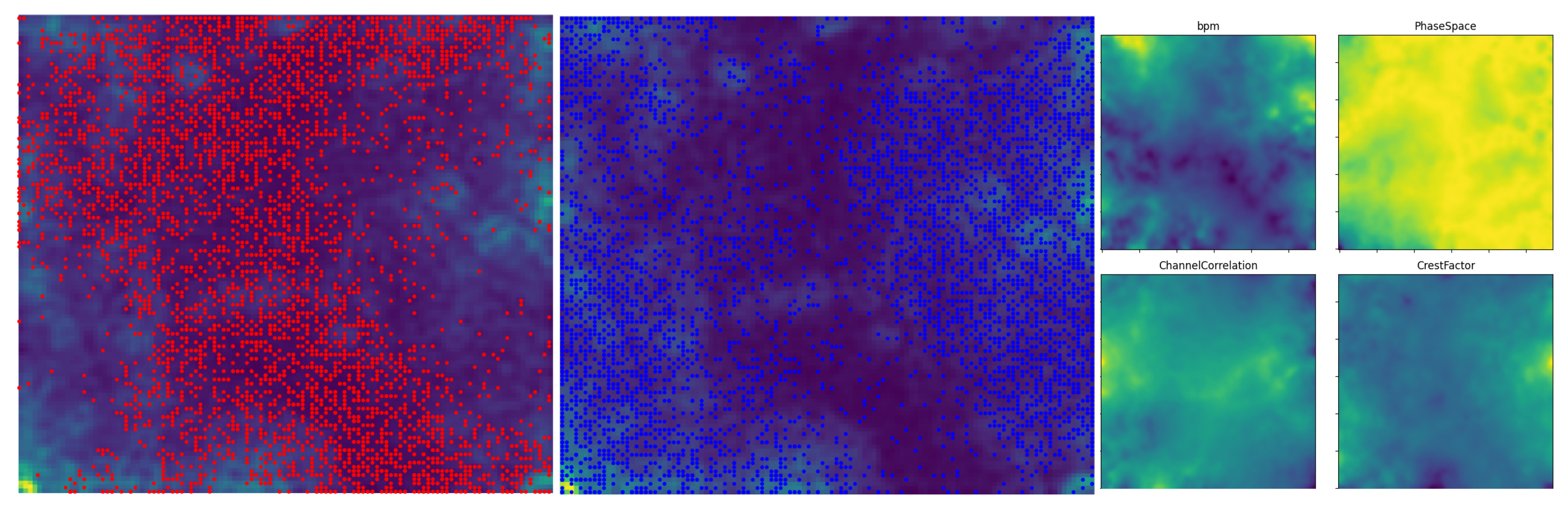

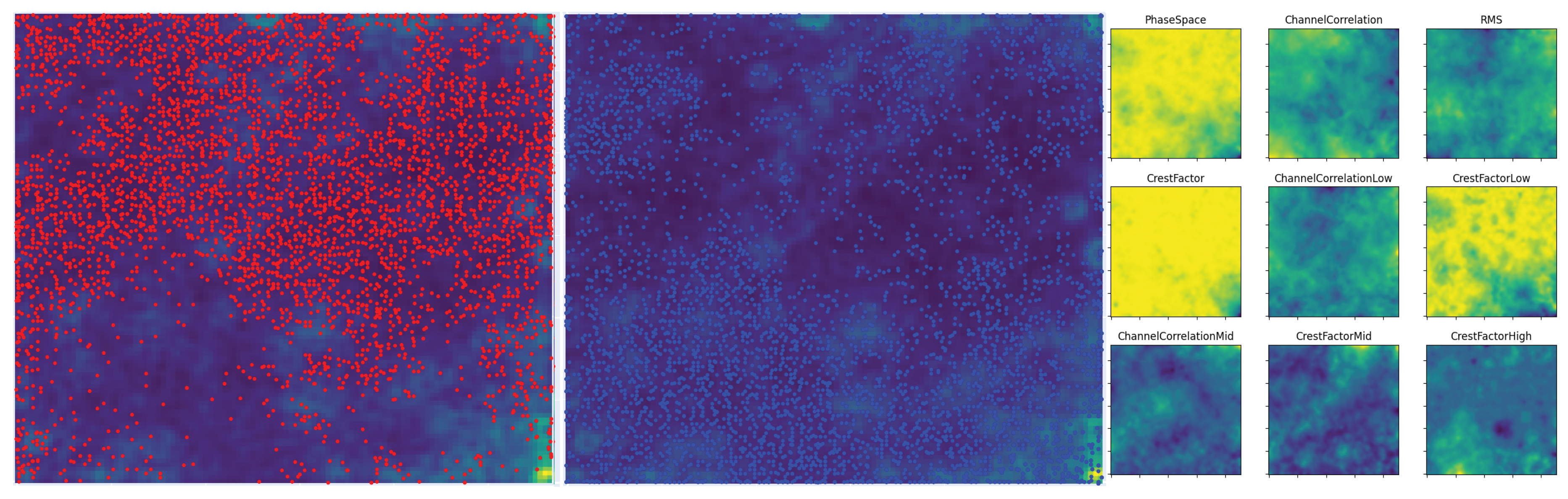

4.2. Self-Organizing Map

5. Discussion

6. Conclusion

Acknowledgments

References

- Morreale, F.; Martinez-Ramirez, M.A.; Masu, R.; Liao, W.; Mitsufuji, Y. Reductive, Exclusionary, Normalising: The Limits of Generative AI Music. Transactions of the International Society for Music Information Retrieval 2025, 8, 300–312. [Google Scholar] [CrossRef]

- Sturm, B.L. A simple method to determine if a music information retrieval system is a ‘horse’. IEEE Trans. Multimedia 2014, 16, 1636–1644. [Google Scholar] [CrossRef]

- Aucouturier, J.J.; Bigand, E. Mel Cepstrum & Ann Ova: The difficult dialog between MIR and music cognition. In Proceedings of the International Society for Music Information Retrieval Conference, Oct 2012; pp. 397–402.

- Ziemer, T.; Yu, Y.; Tang, S. Using Psychoacoustic Models for Sound Analysis in Music. In Proceedings of the 8th Annual Meeting of the Forum on Information Retrieval Evaluation, New York, NY, USA, 2016; FIRE ’16, pp. 1–7. [CrossRef]

- Farinella, D.J. Rick Rubin. In The Encyclopedia of Record Producers; Olsen, E., Verna, P., Wolff, C., Eds.; Billboard Books, 1998. [Google Scholar]

- Hawkins, S. Feel the beat come down: house music as rhetoric. In Analyzing Popular Music; Moore, A.F., Ed.; Cambridge University Press: Cambridge, 2009; chapter 5; pp. 80–102. [Google Scholar] [CrossRef]

- Wilke, T. Disco. In Handbuch Popkultur; Hecken, T., Kleiner, M.S., Eds.; J. B. Metzler: Stuttgart, 2017; pp. 67–72. [Google Scholar]

- Knees, P.; Schedl, M. Music Similarity and Retrieval. An Introduction to Audio- and Web-based Strategies; Springer, 2016. [Google Scholar]

- Ziemer, T.; Kiattipadungkul, P.; Karuchit, T. Acoustic features from the recording studio for Music Information Retrieval Tasks. Proceedings of Meetings on Acoustics 2020, 42, 035004. Available online: https://asa.scitation.org/doi/pdf/10.1121/2.0001363. [CrossRef]

- Peeters, G. The Deep Learning Revolution in MIR: The Pros and Cons, the Needs and the Challenges. In Proceedings of the Perception, Representations, Image, Sound, Music; Kronland-Martinet, R., Ystad, S., Aramaki, M., Eds.; Cham, 2021; pp. 3–30. [Google Scholar] [CrossRef]

- Terasawa, H.; Berger, J.; Makino, S. In Search of a Perceptual Metric for Timbre: Dissimilarity Judgments among Synthetic Sounds with MFCC-Derived Spectral Envelopes. J. Audio Eng. Soc. 2012, 60, 674–685.

- Ziemer, T. Sound Terminology in Sonification. J. Audio Eng. Soc. 2024, 72, 274–289. [Google Scholar] [CrossRef]

- Schneider, A. Perception of Timbre and Sound Color. In Springer Handbook of Systematic Musicology; Bader, R., Ed.; Springer: Berlin, Heidelberg, 2018; chapter 32; pp. 687–726. [Google Scholar]

- Deruty, E.; Tardieu, D. About dynamic processing in mainstream music. Journal of the Audio Engineering Society 2014, 62, 42–55. [Google Scholar] [CrossRef]

- Ziemer, T. Goniometers are a Powerful Acoustic Feature for Music Information Retrieval Tasks. In Proceedings of the DAGA 2023 – 49. Jahrestagung für Akustik, Hamburg, Germany, 2023; pp. 934–937. [Google Scholar]

- Stirnat, C.; Ziemer, T. Spaciousness in Music: The Tonmeister’s Intention and the Listener’s Perception. In Proceedings of the KLG 2017. klingt gut! 2017 – International Symposium on Sound, Hamburg, Germany, Jun 2017; pp. 42–51. [Google Scholar]

- Ziemer, T. Source Width in Music Production. Methods in Stereo, Ambisonics, and Wave Field Synthesis. In Studies in Musical Acoustics and Psychoacoustics; Schneider, A., Ed.; Springer: Cham, 2017; pp. 299–340. [Google Scholar]

- Ziemer, T.; Linke, S. From Imitation to Innovation: The Divergent Paths of Techno in Germany and the USA. arXiv 2025. [Google Scholar]

- Ziemer, T.; Kudakov, N.; Reuter, C. Producer vs. Rapper: Who Dominates the Hip Hop Sound? Journal of the Audio Engineering Society 2024, 73, 54–62. [Google Scholar] [CrossRef]

- Baniya, B.K.; Lee, J.; Li, Z.N. Audio Feature Reduction and Analysis for Automatic Music Genre Classification. In Proceedings of the IEEE International Conference on Systems, Man, and Cybernetics, San Diego, CA, USA, 2014; pp. 457–462. [Google Scholar]

- Lee, C.H.; Shih, J.L.; Yu, K.M.; Lin, H.S.; Wei, M.H. Fusion of Static and Transitional Information of Cepstral and Spectral Features for Music Genre Classification. In Proceedings of the IEEE Asia-Pacific Services Computing Conference, Washington, DC, NW, USA, 2008; pp. 751–756. [Google Scholar] [CrossRef]

- Ziemer, T. HOTGAME: A Corpus of Early House and Techno Music from Germany and America. Metrics 2025, 2, 8. [Google Scholar] [CrossRef]

- Caparrini, A.; Arroyo, J.; Pérez-Molina, L.; Sánchez-Hernández, J. Automatic subgenre classification in an electronic dance music taxonomy. Journal of New Music Research 2020, 49, 269–284. [Google Scholar] [CrossRef]

- Popli, C.; Pai, A.; Thoday, V.; Tiwari, M. Electronic Dance Music Sub-genre Classification Using Machine Learning. In Proceedings of the Artificial Intelligence and Sustainable Computing; Pandit, M., Gaur, M.K., Rana, P.S., Tiwari, A., Eds.; Singapore, 2022; pp. 321–331. [Google Scholar] [CrossRef]

- Kohonen, T. Self-Organizing Maps, 3rd ed.; Springer: Berlin, Heidelberg, 2001. [Google Scholar] [CrossRef]

- Linke, S.; Ziemer, T. SOMson – Sonification of Multidimensional Data in Kohonen Maps. In Proceedings of the International Conference on Auditory Display, Troy, NY, USA, 2024; pp. 50–57. [Google Scholar]

- Blaß, M.; Bader, R. Content-Based Music Retrieval and Visualization System for Ethnomusicological Music Archives. In Computational Phonogram Archiving; Bader, R., Ed.; Springer: Cham, 2019; pp. 145–173. [Google Scholar] [CrossRef]

| | MFCCs | MFCCs+bpm | Rec | Rec+bpm |
|---|---|---|---|---|
| G | | | | |
| U | | | | |

| | MFCCs | MFCCs+bpm | Rec | Rec+bpm |
|---|---|---|---|---|
| G | | | | |
| U | | | | |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).