Submitted: 06 March 2026

Posted: 09 March 2026


Abstract

Keywords:

1. Introduction

- To clarify the strategic domains in which AI restructures leadership practice and decision architectures;

- To identify the dynamic capabilities required to govern AI-embedded organizational systems;

- To explicate the ethical and governance tensions inherent in hybrid human–AI collaboration;

- To theorize the configurational boundary conditions under which AI enhances or destabilizes leadership effectiveness.

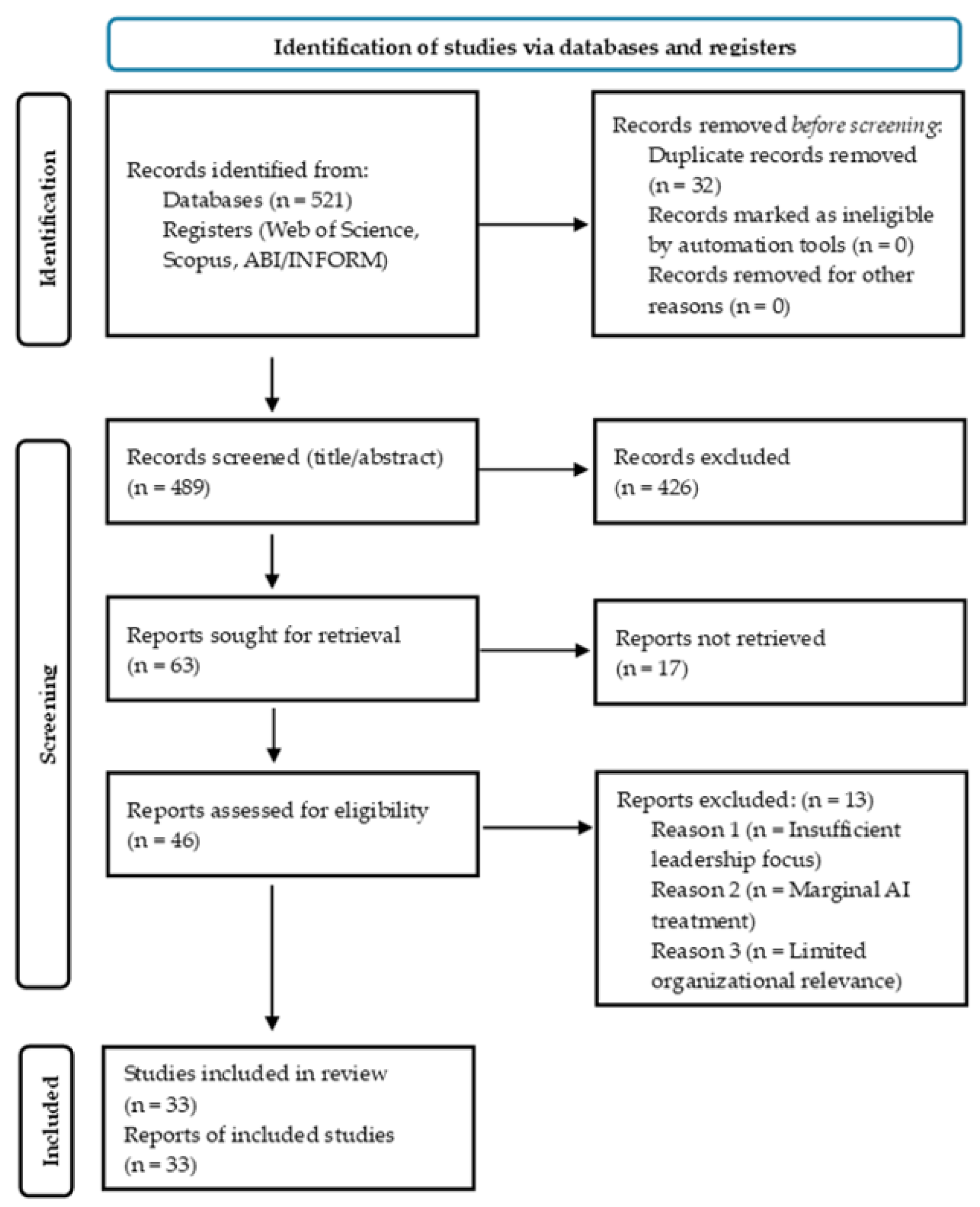

2. Materials and Methods

2.1. Research Design

2.2. Search Strategy

2.3. Eligibility Criteria

2.4. Screening and Selection Procedure

2.5. Data Extraction

2.6. Data Analysis and Thematic Synthesis

3. Results

3.1. AI as Strategic Reconfiguration of Leadership

3.2. AI and Leadership Capabilities in Digitally Embedded Organizations

3.3. Human–AI Collaboration and Hybrid Governance

3.4. Ethical Tensions and the Dark Side of AI-Embedded Leadership

3.5. Cross-Thematic Integration: Toward a Multi-Level Configuration of AI-Augmented Leadership

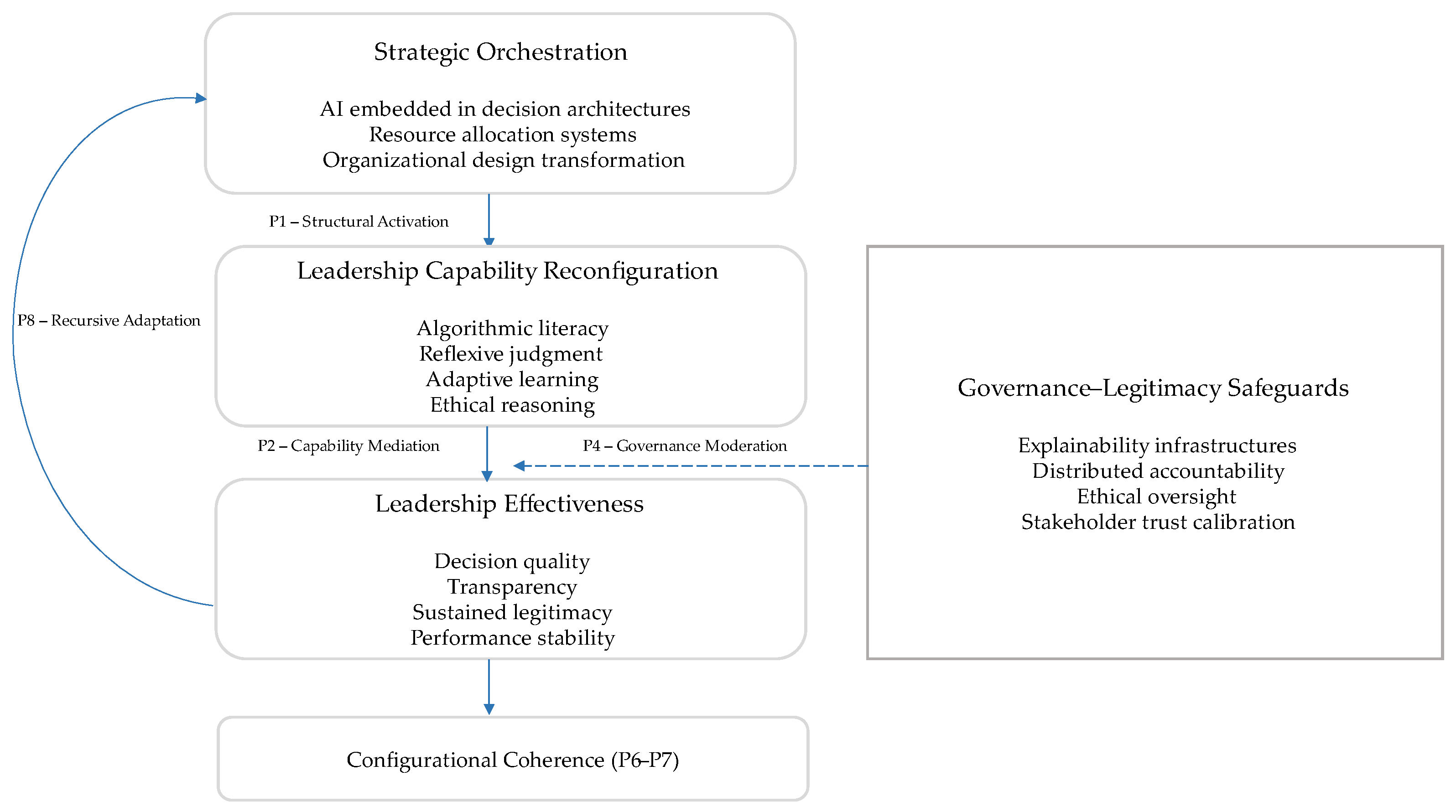

3.6. The ALCF Model: A Configurational Architecture of AI-Embedded Leadership

3.6.1. Strategic Orchestration as Foundational Structural Activation

3.6.2. Capability Reconfiguration as Operational Mediation

3.6.3. Governance–Legitimacy as System-Level Regulation

3.6.4. Configurational Alignment and Systemic Coherence

3.6.5. Recursive Feedback Dynamics

4. Discussion

4.1. Theoretical Implications

4.2. Managerial Implications

4.3. Limitations and Future Research

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
| --- | --- |
| AI | Artificial Intelligence |
| ALCF | AI-Leadership Configurational Framework |
| fsQCA | fuzzy-set Qualitative Comparative Analysis |
| HLM | Hierarchical Linear Modeling |
| HR | Human Resources |
| PRISMA | Preferred Reporting Items for Systematic Reviews and Meta-Analyses |
| SEM | Structural Equation Modeling |

Appendix A. Descriptive Overview of the Final Nuclear Corpus (n = 33)

| Authors | Year | Article Title | Source | Design | Context | AI–Leadership Focus | Core Contribution |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Smith & Green | 2018 | Artificial intelligence and the role of leadership | Journal of Leadership Studies | Conceptual | Cross-sector | AI redefining leadership role | Early conceptual framing of AI–leadership interface |
| Peifer et al. | 2022 | Artificial intelligence and its impact on leaders and leadership | Procedia Computer Science | Conceptual | Cross-sector | Leadership transformation | AI reshapes decision authority |
| Bronkhorst & Becker | 2024 | Use of artificial intelligence in leadership competency development and selection: An empirical study | Consulting Psychology Journal | Empirical (quant.) | Corporate | Competency development | AI enhances leadership assessment |
| Bock & von der Oelsnitz | 2025 | Leadership competences in the era of artificial intelligence – a structured review | Strategy & Leadership | Structured review | Corporate | Competency shift | Identifies future AI-driven leadership skills |
| Vargas Portillo | 2026 | The transformative role of artificial intelligence in leadership and management development | Development and Learning in Organizations | Conceptual | Management education | Leadership development | AI as catalyst of managerial evolution |
| Huber & Alexy | 2024 | The impact of artificial intelligence on strategic leadership | Edward Elgar Handbook | Conceptual | Strategic management | Strategic leadership | AI in high-level strategic decision-making |
| Crawford et al. | 2023 | Leadership is needed for ethical ChatGPT | JUTLP | Conceptual | Higher education | Ethical AI governance | Character-driven AI leadership |
| Florea & Croitoru | 2025 | The impact of artificial intelligence on communication dynamics and performance in organizational leadership | Administrative Sciences | Empirical | Organizational | Communication leadership | AI-mediated communication performance |
| Wijayati et al. | 2022 | A study of artificial intelligence on employee performance and work engagement: the moderating role of change leadership | Int. Journal of Manpower | Empirical | Corporate | Change leadership | Leadership moderates AI performance effects |
| Odugbesan et al. | 2023 | Green talent management... artificial intelligence and transformational leadership | Journal of Knowledge Management | Empirical | Corporate | Transformational leadership | AI-enabled innovation leadership |
| Wang | 2021 | When artificial intelligence meets educational leaders’ data-informed decision-making | Studies in Educational Evaluation | Conceptual | Education | Decision-making | AI caution in leader judgement |
| Quaquebeke & Gerpott | 2023 | The now, new, and next of digital leadership | JLOS | Conceptual | Cross-sector | AI takeover thesis | AI reshapes leadership ontology |
| Fullan et al. | 2024 | Artificial intelligence and school leadership | School Leadership & Management | Conceptual | Education | Leadership implications | Governance and opportunity framing |
| Petrat | 2021 | Attitude towards artificial intelligence in a leadership role | IEA Congress | Empirical | Experimental | AI as leader | Acceptance attitudes |
| Burnside et al. | 2025 | Artificial intelligence in radiology: a leadership survey | JACR | Empirical | Healthcare | Leadership adoption | Executive AI readiness |
| Madanchian et al. | 2024 | Transforming leadership practices through artificial intelligence | Procedia Computer Science | Conceptual | Corporate | Practice transformation | AI-enabled leadership evolution |
| Myszak & Filina-Dawidowicz | 2025 | Leaders’ competencies and skills in the era of artificial intelligence | Applied Sciences | Scoping review | Cross-sector | Competencies | Skill mapping |
| Bevilacqua et al. | 2025 | Enhancing top managers’ leadership with artificial intelligence | Review of Managerial Science | Systematic review | Corporate | Top management | AI-augmented leadership |
| Zaidi et al. | 2025 | How will artificial intelligence evolve organizational leadership? | Global Business & Organizational Excellence | Empirical | Entrepreneurship | Leadership evolution | Technopreneur perspectives |
| Divya et al. | 2025 | The mediating effect of leadership in artificial intelligence success | Management Decision | Empirical | Corporate | Leadership mediation | AI success mechanisms |
| Zárate-Torres et al. | 2025 | Influence of Leadership on Human–Artificial Intelligence Collaboration | Behavioral Sciences | Empirical | Organizational | Human–AI collaboration | Leadership facilitation role |
| Hossain et al. | 2025 | Digital leadership: AI-driven leader capabilities | JLOS | Conceptual | Corporate | Dynamic capabilities | AI managerial capability model |
| Kafa | 2025 | Exploring integration aspects of school leadership in AI context | IJEM | Empirical | Education | Integration practices | AI adoption leadership |
| Tyson & Sauers | 2021 | School leaders’ adoption and implementation of artificial intelligence | JEA | Empirical | Education | Implementation | Adoption pathways |
| Matli | 2024 | Integration of AI and leadership reflexivity to enhance decision-making | Applied Artificial Intelligence | Conceptual | Strategic | Reflexive leadership | Cognitive augmentation |
| Marrone et al. | 2025 | Perceptions of school leaders on AI integration | School Leadership & Management | Empirical | Education | Perceptions | Institutional readiness |
| Arar et al. | 2024 | Human-Machine symbiosis in educational leadership | EMAL | Conceptual | Education | Symbiosis | Co-leadership model |
| Abduljaber | 2025 | Perceived influence of AI on educational leadership decision-making | Interactive Learning Environments | Qualitative | Education | Decision impact | Phenomenological insight |
| Renta-Davids et al. | 2025 | Navigating the challenges and opportunities of AI in educational leadership | Review of Education | Scoping review | Education | Challenges/opportunities | Integrated framework |
| Berkovich & Eyal | 2025 | Support for generative AI as predictor of leadership integration | EMAL | Empirical | Education | AI self-efficacy | Predictive leadership factors |
| Di Prima et al. | 2024 | The impact of artificial intelligence on organizations and managers | Springer | Conceptual | Corporate | Skills & leadership | Competency transformation |
| Jaboob et al. | 2025 | Harnessing AI for strategic decision-making: digital leadership catalyst | APJBA | Empirical | Corporate | Strategic leadership | Digital leadership mediation |
| Shatila | 2025 | Artificial intelligence and organizational resilience | Strategy & Leadership | Conceptual | Corporate | Digital leadership | AI–re |

References

- Abduljaber, M. Perceived influence of artificial intelligence on educational leadership decision making, teaching and learning outcomes: a transcendental phenomenological study. Interactive Learning Environments 2025, 1–15.

- Abositta, A.; Adedokun, M. W.; Berberoğlu, A. Influence of Artificial Intelligence on Engineering Management Decision-Making with Mediating Role of Transformational Leadership. Systems 2024, 12, 570.

- Ali, A.; Xue, X.; Wang, N.; Yin, X.; Tariq, H. The interplay of team-level leader-member exchange and artificial intelligence on information systems development team performance: a mediated moderation perspective. International Journal of Managing Projects in Business 2025, 18, 670–687.

- Arar, K.; Tlili, A.; Salha, S. Human-Machine symbiosis in educational leadership in the era of artificial intelligence (AI): Where are we heading? Educational Management Administration & Leadership 2024, 0, 1–24.

- Arcadi, P. Nursing leadership and artificial intelligence ethics: Safeguarding relationships and values. Nursing Ethics 2025, 32, 2468–2476.

- Baran, M. AI-augmented leadership: Redefining leaders’ style and competencies. In AI and Humanistic Management; Routledge, 2025; pp. 147–169.

- Barari, M.; Casper Ferm, L. E.; Quach, S.; Thaichon, P.; Ngo, L. The dark side of artificial intelligence in marketing: meta-analytics review. Marketing Intelligence & Planning 2024, 42, 1234–1256.

- Berkovich, I.; Eyal, O. Support for generative artificial intelligence as a predictor of middle leaders’ generative artificial intelligence self-efficacy, valuing, and integration in school leadership work. Educational Management Administration & Leadership 2025, 0, 1–19.

- Bevilacqua, S.; Masárová, J.; Perotti, F. A.; Ferraris, A. Enhancing top managers’ leadership with artificial intelligence: insights from a systematic literature review. Review of Managerial Science 2025, 19, 2899–2935.

- Bock, T.; von der Oelsnitz, D. Leadership-competences in the era of artificial intelligence–a structured review. Strategy & Leadership 2025, 53, 235–255.

- Bollaert, L. Artificial Intelligence: Objective or Tool in the 21st-Century Higher Education Strategy and Leadership? Education Sciences 2025, 15, 774.

- Brock, J. K. U.; Von Wangenheim, F. Demystifying AI: What digital transformation leaders can teach you about realistic artificial intelligence. California Management Review 2019, 61, 110–134.

- Bronkhorst, P. V.; Becker, J. Use of artificial intelligence in leadership competency development and selection: An empirical study. Consulting Psychology Journal 2024, advance online publication.

- Burnside, E. S.; Grist, T. M.; Lasarev, M. R.; Garrett, J. W.; Morris, E. A. Artificial intelligence in radiology: a leadership survey. Journal of the American College of Radiology 2025, 22, 577–585.

- Chen, M.; Decary, M. Artificial intelligence in healthcare: An essential guide for health leaders. Healthcare Management Forum 2020, 33, 10–18.

- Clipper, B.; Batcheller, J.; Thomaz, A. L.; Rozga, A. Artificial intelligence and robotics: a nurse leader’s primer. Nurse Leader 2018, 16, 379–384.

- Cowling, M.; Crawford, J.; Allen, K. A.; Wehmeyer, M. Using leadership to leverage ChatGPT and artificial intelligence for undergraduate and postgraduate research supervision. Australasian Journal of Educational Technology 2023, 39, 89–103.

- Cox, A. M.; Pinfield, S.; Rutter, S. The intelligent library: Thought leaders’ views on the likely impact of artificial intelligence on academic libraries. Library Hi Tech 2019, 37, 418–435.

- Crawford, J.; Cowling, M.; Allen, K. A. Leadership is needed for ethical ChatGPT: Character, assessment, and learning using artificial intelligence (AI). Journal of University Teaching and Learning Practice 2023, 20, 1–19.

- Di Prima, C.; Bevilacqua, S.; Bresciani, S.; Ferraris, A. The impact of artificial intelligence on organizations and managers: The skills needed for an effective leadership. In Artificial Intelligence and Business Transformation: Impact in HR Management, Innovation and Technology Challenges; Springer Nature Switzerland: Cham, 2024; pp. 163–176.

- Divya, D.; Jain, R.; Chetty, P.; Siwach, V.; Mathur, A. The mediating effect of leadership in artificial intelligence success for employee-engagement. Management Decision 2025, 63, 3676–3699.

- Fernandes, S.; Sheeja, M. S.; Parivara, S. Synergizing Global Leadership Competencies with Artificial Intelligence and Expert Systems: A Multidisciplinary Approach for the Future of Management. In International Conference on Science, Engineering Management and Information Technology; Springer Nature Switzerland: Cham, 2023; pp. 306–317.

- Florea, N. V.; Croitoru, G. The impact of artificial intelligence on communication dynamics and performance in organizational leadership. Administrative Sciences 2025, 15, 33.

- Fullan, M.; Azorín, C.; Harris, A.; Jones, M. Artificial intelligence and school leadership: challenges, opportunities and implications. School Leadership & Management 2024, 44, 339–346.

- Gligor, D. M.; Pillai, K. G.; Golgeci, I. Theorizing the dark side of business-to-business relationships in the era of AI, big data, and blockchain. Journal of Business Research 2021, 133, 79–88.

- Goralski, M. A.; Tan, T. K. Artificial intelligence and sustainable development. The International Journal of Management Education 2020, 18, 100330.

- Hejres, S. The impact of artificial intelligence on instructional leadership. In Technologies, Artificial Intelligence and the future of learning Post-COVID-19: The crucial role of International Accreditation; Springer International Publishing: Cham, 2022; pp. 697–711.

- Hossain, S.; Fernando, M.; Akter, S. Digital leadership: Towards a dynamic managerial capability perspective of artificial intelligence-driven leader capabilities. Journal of Leadership & Organizational Studies 2025, 32, 189–208.

- Huber, D. M.; Alexy, O. The impact of artificial intelligence on strategic leadership. In Handbook of Research on Strategic Leadership in the Fourth Industrial Revolution; Edward Elgar Publishing, 2024; pp. 108–136.

- Islam, N. M.; Laughter, L.; Sadid-Zadeh, R.; Smith, C.; Dolan, T. A.; Crain, G.; Squarize, C. H. Adopting artificial intelligence in dental education: a model for academic leadership and innovation. Journal of Dental Education 2022, 86, 1545–1551.

- Jaboob, M.; Al-Ansi, A. M.; Al-Okaily, M.; Ferasso, M. Harnessing artificial intelligence for strategic decision-making: the catalyst impact of digital leadership. Asia-Pacific Journal of Business Administration 2025, ahead-of-print.

- Jones, W. A. Artificial intelligence and leadership: A few thoughts, a few questions. Journal of Leadership Studies 2018, 12, 60–62.

- Kafa, A. Exploring integration aspects of school leadership in the context of digitalization and artificial intelligence. International Journal of Educational Management 2025, 39, 98–115.

- Karakose, T.; Tülübas, T. School Leadership and Management in the Age of Artificial Intelligence (AI): Recent Developments and Future Prospects. Educational Process: International Journal 2024, 13, 7–14.

- Krause, W.; Balasescu, A. Engagement as leadership-practice for today’s global wicked problems: leadership learning for artificial intelligence. In International Conference on Human-Computer Interaction; Springer International Publishing: Cham, 2022; pp. 578–587.

- Madanchian, M.; Taherdoost, H.; Vincenti, M.; Mohamed, N. Transforming leadership practices through artificial intelligence. Procedia Computer Science 2024, 235, 2101–2111.

- Mahmoud, A. B.; Tehseen, S.; Fuxman, L. The dark side of artificial intelligence in retail innovation. In Retail futures; Emerald Publishing Limited, 2020; pp. 165–180.

- Marrone, R.; Fowler, S.; Bathakur, A.; Dawson, S.; Siemens, G.; Singh, C. Perceptions and perspectives of Australian school leaders on the integration of artificial intelligence in schools. School Leadership & Management 2025, 45, 30–52.

- Matli, W. Integration of warrior artificial intelligence and leadership reflexivity to enhance decision-making. Applied Artificial Intelligence 2024, 38, 2411462.

- Morandini, S.; Fraboni, F.; De Angelis, M.; Puzzo, G.; Giusino, D.; Pietrantoni, L. The impact of artificial intelligence on workers’ skills: Upskilling and reskilling in organisations. Informing Science 2023, 26, 39–68.

- Mumtaz, S.; Carmichael, J.; Weiss, M.; Nimon-Peters, A. Ethical use of artificial intelligence based tools in higher education: are future business leaders ready? Education and Information Technologies 2025, 30, 7293–7319.

- Myszak, J. M.; Filina-Dawidowicz, L. Leaders’ competencies and skills in the era of artificial intelligence: A scoping review. Applied Sciences 2025, 15, 10271.

- Odugbesan, J. A.; Aghazadeh, S.; Al Qaralleh, R. E.; Sogeke, O. S. Green talent management and employees’ innovative work behavior: the roles of artificial intelligence and transformational leadership. Journal of Knowledge Management 2023, 27, 696–716.

- Page, M. J.; McKenzie, J. E.; Bossuyt, P. M.; Boutron, I.; Hoffmann, T. C.; Mulrow, C. D.; Moher, D. The PRISMA 2020 statement: an updated guideline for reporting systematic reviews. BMJ 2021a, 372.

- Page, M. J.; McKenzie, J. E.; Bossuyt, P. M.; Boutron, I.; Hoffmann, T. C.; Mulrow, C. D.; Moher, D. Updating guidance for reporting systematic reviews: development of the PRISMA 2020 statement. Journal of Clinical Epidemiology 2021b, 134, 103–112.

- Page, M. J.; Moher, D.; Bossuyt, P. M.; Boutron, I.; Hoffmann, T. C.; Mulrow, C. D.; McKenzie, J. E. PRISMA 2020 explanation and elaboration: updated guidance and exemplars for reporting systematic reviews. BMJ 2021c, 372.

- Papagiannidis, E.; Mikalef, P.; Conboy, K.; Van de Wetering, R. Uncovering the dark side of AI-based decision-making: A case study in a B2B context. Industrial Marketing Management 2023, 115, 253–265.

- Peifer, Y.; Jeske, T.; Hille, S. Artificial intelligence and its impact on leaders and leadership. Procedia Computer Science 2022, 200, 1024–1030.

- Petersson, L.; Larsson, I.; Nygren, J. M.; Nilsen, P.; Neher, M.; Reed, J. E.; Svedberg, P. Challenges to implementing artificial intelligence in healthcare: a qualitative interview study with healthcare leaders in Sweden. BMC Health Services Research 2022, 22, 850.

- Petrat, D. Attitude towards artificial intelligence in a leadership role. In Congress of the International Ergonomics Association; Springer International Publishing: Cham, 2021; pp. 811–819.

- Plastino, E.; Purdy, M. Game changing value from Artificial Intelligence: eight strategies. Strategy & Leadership 2018, 46, 16–22.

- Quaquebeke, N. V.; Gerpott, F. H. The now, new, and next of digital leadership: How Artificial Intelligence (AI) will take over and change leadership as we know it. Journal of Leadership & Organizational Studies 2023, 30, 265–275.

- Ramasamy, A. PRISMA 2020: key changes and implementation aspects. Journal of Oral and Maxillofacial Surgery 2022, 80, 795–797.

- Rana, N. P.; Chatterjee, S.; Dwivedi, Y. K.; Akter, S. Understanding dark side of artificial intelligence (AI) integrated business analytics: assessing firm’s operational inefficiency and competitiveness. European Journal of Information Systems 2022, 31, 364–387.

- Renta-Davids, A. I.; Camarero-Figuerola, M.; Camacho, M. Navigating the challenges and opportunities of artificial intelligence in educational leadership: A scoping review. Review of Education 2025, 13, e70101.

- Ronquillo, C. E.; Peltonen, L. M.; Pruinelli, L.; Chu, C. H.; Bakken, S.; Beduschi, A.; Topaz, M. Artificial intelligence in nursing: Priorities and opportunities from an international invitational think-tank of the Nursing and Artificial Intelligence Leadership Collaborative. Journal of Advanced Nursing 2021, 77, 3707–3717.

- Sadiku-Dushi, N. Artificial Intelligence in Strategic Management: Shaping the Future of Business Leadership. In Navigating AI in Business: Strategies and Insights Across Disciplines; Springer Nature Switzerland: Cham, 2026; pp. 45–63.

- Santana, M.; Díaz-Fernández, M. Competencies for the artificial intelligence age: visualisation of the state of the art and future perspectives. Review of Managerial Science 2023, 17, 1971–2004.

- Santiago-Torner, C.; Corral-Marfil, J.-A.; Tarrats-Pons, E. Artificial Intelligence and the Reconfiguration of Emotional Well-Being (2020–2025): A Critical Reflection. Societies 2026, 16, 6.

- Santiago-Torner, C.; Corral-Marfil, J.-A.; Tarrats-Pons, E. Digital Burnout and the Future of Human Sustainability at Work: A Critical Reflection from Organizational Development (2015–2025). Organization Development Journal 2025, 43.

- Shatila, K. Artificial intelligence and organizational resilience: the mediating role of agility, innovation, and digital leadership. Strategy & Leadership 2025, ahead-of-print.

- Smith, A. M.; Green, M. Artificial intelligence and the role of leadership. Journal of Leadership Studies 2018, 12, 85–87.

- Sohrabi, C.; Franchi, T.; Mathew, G.; Kerwan, A.; Nicola, M.; Griffin, M.; Agha, R. PRISMA 2020 statement: What’s new and the importance of reporting guidelines. International Journal of Surgery 2021, 88, 105918.

- Sposato, M. Leadership training and development in the age of artificial intelligence. Development and Learning in Organizations: An International Journal 2024, 38, 4–7.

- Swartz, M. K. PRISMA 2020: an update. Journal of Pediatric Health Care 2021, 35, 351.

- Tyson, M. M.; Sauers, N. J. School leaders’ adoption and implementation of artificial intelligence. Journal of Educational Administration 2021, 59, 271–285.

- Vargas Portillo, P. The transformative role of artificial intelligence in leadership and management development: an academic insight. Development and Learning in Organizations: An International Journal 2026, 40, 1–4.

- Wang, Y. When artificial intelligence meets educational leaders’ data-informed decision-making: A cautionary tale. Studies in Educational Evaluation 2021, 69, 100872.

- Wijayati, D. T.; Rahman, Z.; Fahrullah, A. R.; Rahman, M. F. W.; Arifah, I. D. C.; Kautsar, A. A study of artificial intelligence on employee performance and work engagement: the moderating role of change leadership. International Journal of Manpower 2022, 43, 486–512.

- Yepes-Nuñez, J. J.; Urrútia, G.; Romero-García, M.; Alonso-Fernández, S. Declaración PRISMA 2020: una guía actualizada para la publicación de revisiones sistemáticas. Revista Española de Cardiología 2021, 74, 790–799.

- Zaidi, S. Y. A.; Aslam, M. F.; Mahmood, F.; Ahmad, B.; Raza, S. B. How will artificial intelligence (AI) evolve organizational leadership? Understanding the perspectives of technopreneurs. Global Business and Organizational Excellence 2025, 44, 66–83.

- Zárate-Torres, R.; Rey-Sarmiento, C. F.; Acosta-Prado, J. C.; Gómez-Cruz, N. A.; Rodríguez Castro, D. Y.; Camargo, J. Influence of Leadership on Human–Artificial Intelligence Collaboration. Behavioral Sciences 2025, 15, 873.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).