Submitted: 06 March 2026

Posted: 09 March 2026

Abstract

Keywords:

Introduction

Dataset Contributions

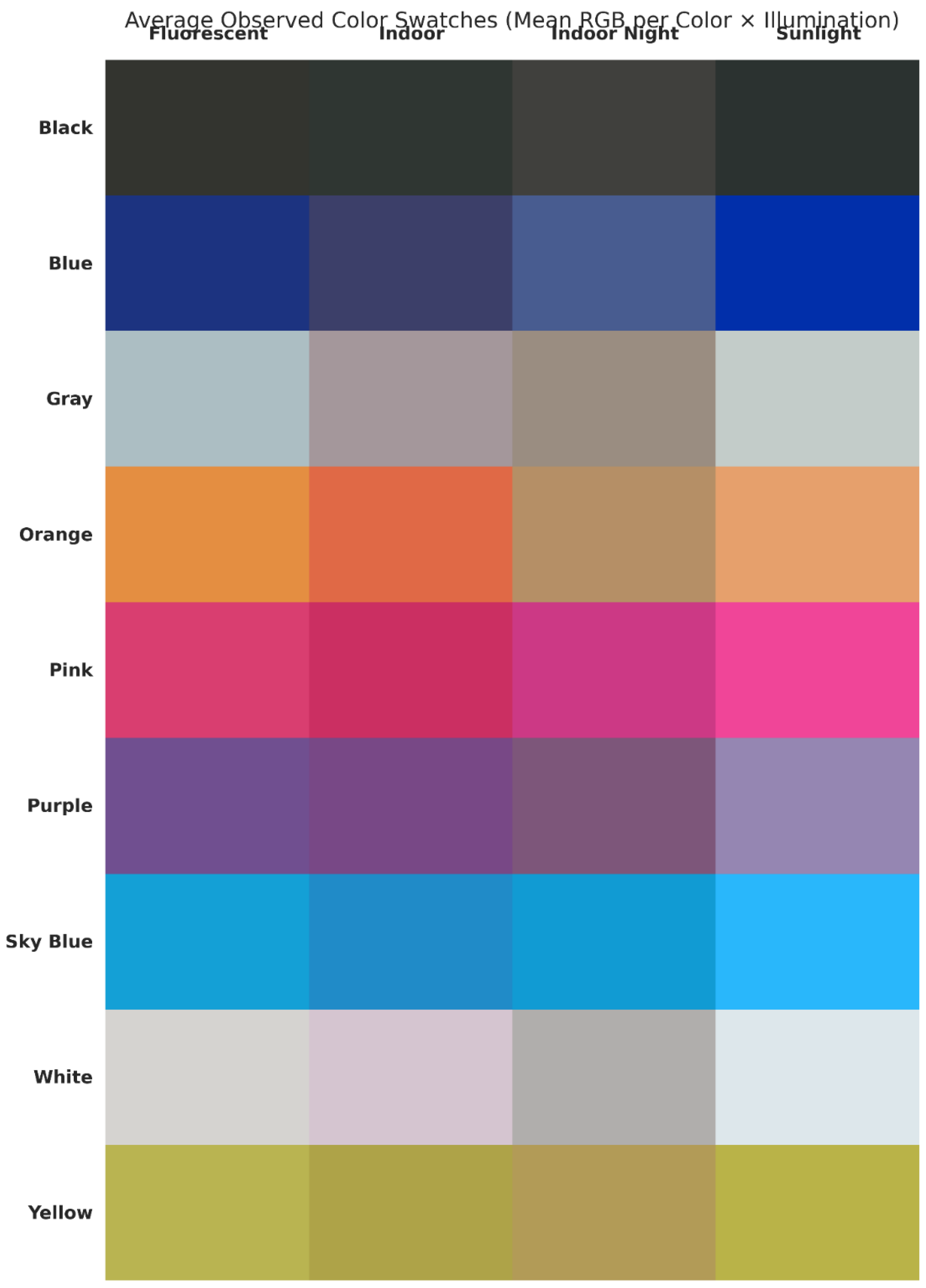

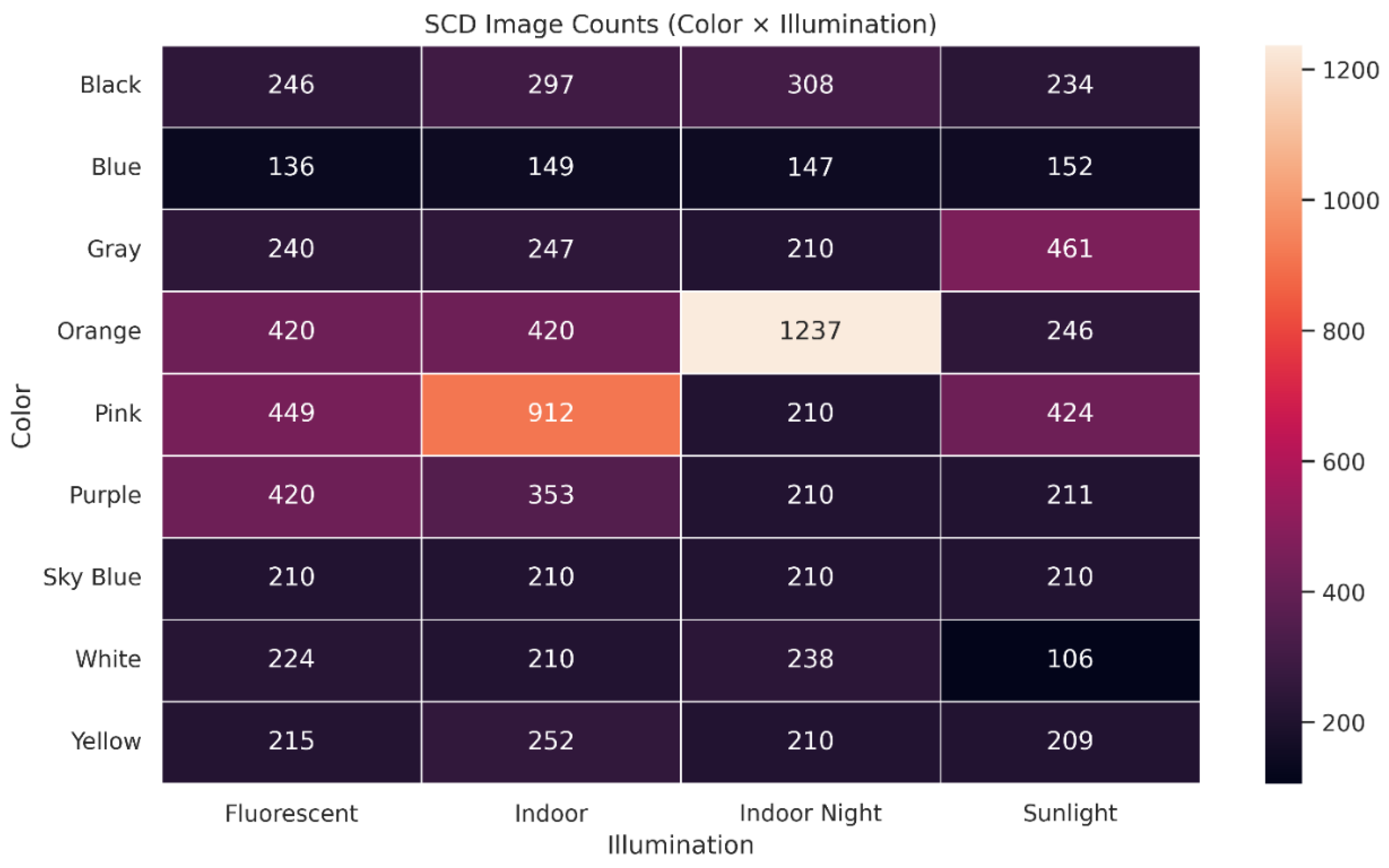

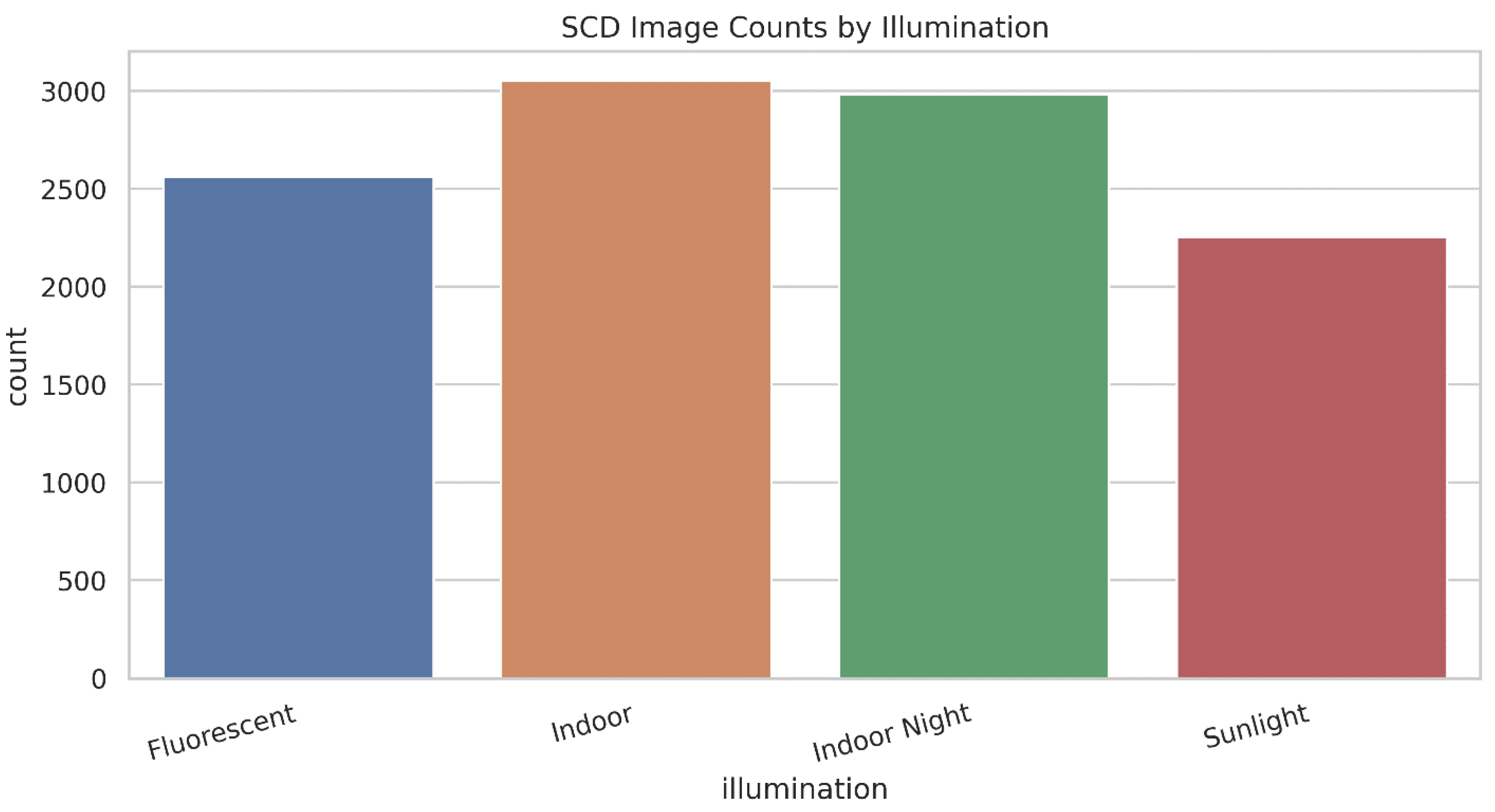

- Everyday illumination categories, real capture conditions: SCD targets common lighting environments (fluorescent, indoor, indoor night, sunlight) where color shifts naturally due to illumination and mobile camera processing.

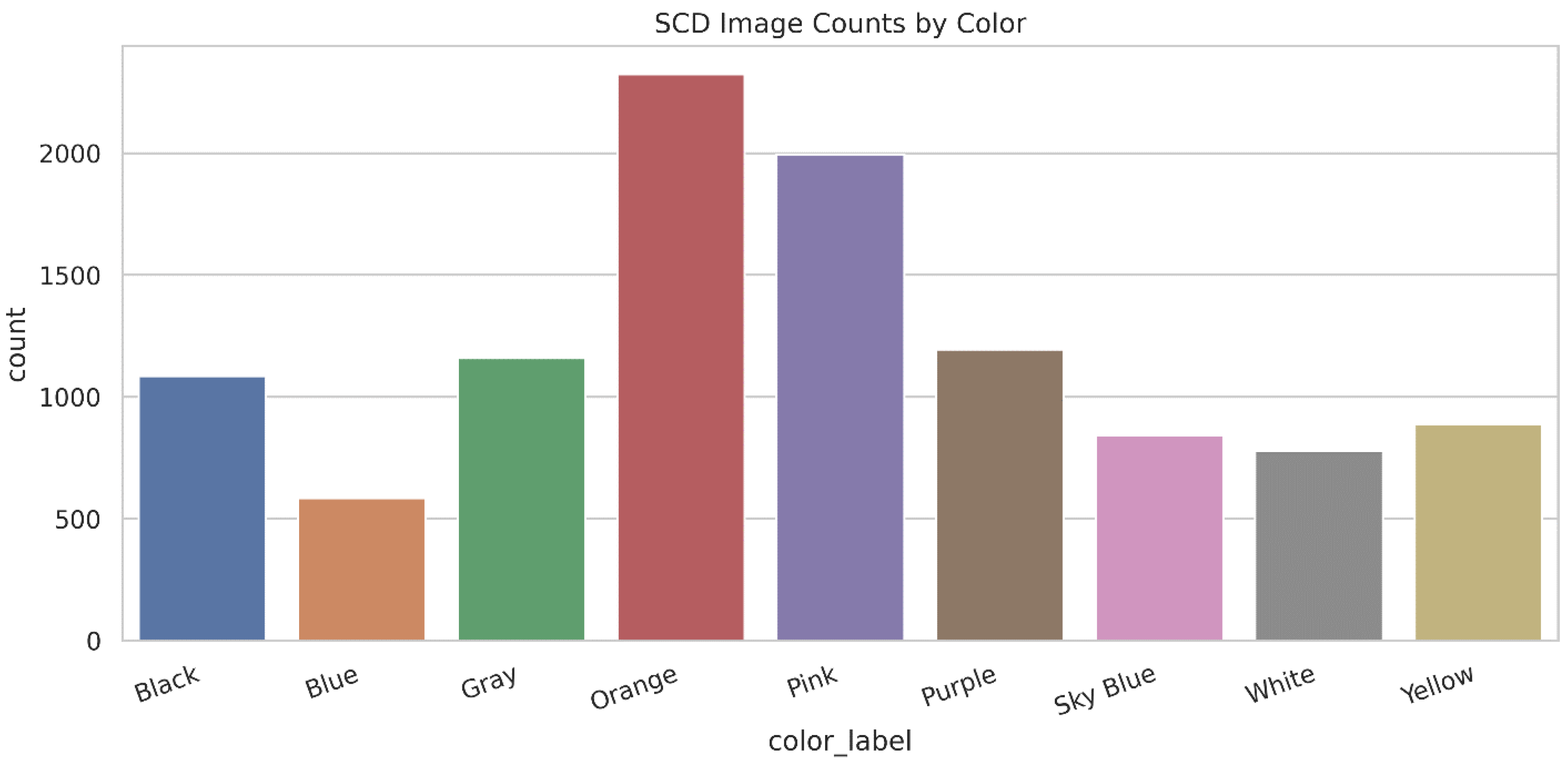

- Simple, repeatable subjects: Using nine color papers makes the visual content easy to interpret and reduces confusion from complex scene semantics, which helps when diagnosing failures under domain shift.

- Metadata + reproducible reporting assets: The release includes structured CSV metadata and automatically generated tables/figures that support transparent dataset reporting and fast reuse.

- Evaluation-friendly design for robustness studies: SCD supports clear testing scenarios such as training on one illumination and evaluating on another, making it suitable for studying illumination robustness and generalization.

- Open access and practical usability: The dataset and code are publicly available, making it straightforward for others to replicate reported statistics and build baseline pipelines.

Related Work

Color Constancy, White Balance, and Illumination Robustness

Benchmark Datasets for Illumination and Color Constancy

Multi-Illumination and “In-the-Wild” Appearance Variation Datasets

Where SCD Fits

Background & Summary

Relation to Existing Datasets

Typical Use-Cases Enabled by SCD

- Color constancy / white balance evaluation (as a stress test): Analyze whether a correction strategy reduces cross-illumination color drift.

- Illumination classification: Predict illumination category from images or derived statistics.

- Robust color recognition: Train color classifiers and evaluate under held-out illumination (domain shift).

- Dataset shift analysis: Quantify how feature distributions change across lighting conditions and how that impacts model performance.

Methods

Materials

- Fluorescent: indoor lighting dominated by fluorescent tubes (typical office/classroom light).

- Indoor: indoor ambient lighting (room light) during normal conditions.

- Indoor Night: indoor lighting at night, often warmer and dimmer with stronger shadows and noise.

- Sunlight: outdoor natural light, including bright conditions where shadows and reflections may appear.

| Setting | Value / Description |
| --- | --- |
| Capture device | Infinix NOTE 40 (rear camera) |
| Sensor / image resolution | 108 MP (native camera capability); images saved at the phone’s default output resolution for the selected mode |
| Capture mode | Standard phone camera mode (default pipeline) |
| Flash | Off |
| White balance (AWB) | Auto white balance enabled (default) |
| Exposure | Auto exposure enabled (default) |
| Stabilization | Phone mounted on a tripod to reduce motion blur |
| Target placement | Color paper placed flat on a ground surface |
| Camera–target distance | Kept approximately constant within each session; small variations occurred due to viewpoint changes (no measured range is reported) |
| Viewpoint variation | Introduced by moving/reorienting the tripod; no calibrated angles or angle IDs released |
| Illumination conditions | Fluorescent, Indoor, Indoor Night, Sunlight |
| Capture environment | Real-world settings (not laboratory-calibrated); natural variation in shadows and reflections may occur |
| File format | Images stored as standard compressed image files (e.g., JPG/PNG as released) |
| Dataset labels | Color label (9 classes) + illumination label (4 classes); viewpoint variation is unlabeled |

Capture Procedure

- Fluorescent: captured indoors under fluorescent light.

- Indoor: captured indoors under standard room lighting.

- Indoor Night: captured indoors at night under artificial light, where exposure and noise effects are stronger.

- Sunlight: captured in outdoor daylight, where brightness, shadows, and reflections can change across time.

Quality Control

Data Records

Folder Structure and Naming Conventions:

Naming Conventions

- The color label is taken from the top-level folder name (Black, Blue, Gray, Orange, Pink, Purple, Sky Blue, White, or Yellow).

- The illumination label is taken from the second-level folder name (Fluorescent, Indoor, Indoor_Night, or Sunlight).

- The file name itself may be any camera-generated name (e.g., IMG_001.jpg), because the label is derived from the folder path.

- If angle labels are added in a future version, they would be introduced as an additional folder level (e.g., Angle_1 … Angle_7) or as a mapping table; however, the current release treats viewpoint variation as unlabeled.
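The folder-based labeling rule above can be sketched in a few lines. This is a minimal illustration, not the released tooling: the function name `labels_from_path` is hypothetical, and replacing the underscore in `Indoor_Night` with a space is an assumption made only so that the parsed label matches the spelling used in the illumination descriptions.

```python
from pathlib import PurePosixPath

# Label sets copied from the naming conventions; spelling with spaces is an assumption.
COLORS = {"Black", "Blue", "Gray", "Orange", "Pink",
          "Purple", "Sky Blue", "White", "Yellow"}
ILLUMINATIONS = {"Fluorescent", "Indoor", "Indoor Night", "Sunlight"}


def labels_from_path(rel_path: str) -> tuple[str, str]:
    """Parse (color_label, illumination) from '<Color>/<Illumination>/<file>'."""
    # Accept Windows-style separators as well as forward slashes.
    parts = PurePosixPath(rel_path.replace("\\", "/")).parts
    if len(parts) < 3:
        raise ValueError(f"expected <Color>/<Illumination>/<file>, got {rel_path!r}")
    color = parts[0]
    # Assumption: folder 'Indoor_Night' and label 'Indoor Night' name the same class.
    illumination = parts[1].replace("_", " ")
    if color not in COLORS:
        raise ValueError(f"unknown color folder: {color!r}")
    if illumination not in ILLUMINATIONS:
        raise ValueError(f"unknown illumination folder: {parts[1]!r}")
    return color, illumination
```

The file name itself is never inspected, which matches the convention that any camera-generated name is acceptable.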

File Formats, Color Space, and License

- Kaggle code notebook: https://www.kaggle.com/code/basitaliharejo/sadacolordatasetscd-paper/edit

Metadata Tables and Supporting Files

Released Tables and Files

- metadata_images.csv — per-image metadata and basic color statistics.

- metadata_images_with_drift.csv — adds a color variability proxy (lab_drift).

- summary_counts_color_x_illum.csv — counts for each color–illumination combination.

- group_color_stats.csv — aggregated statistics per (color, illumination) and optional groupings.

- exif_dump.json — EXIF fields extracted when available (if present in the images).

Notes on Labels

- color_label and illumination are derived from the folder path.

- angle_id may appear in some metadata outputs, but in the current release it is not reliably available and should be treated as missing (i.e., the dataset does not publish angle labels).

Metadata Schema (Main Columns)

| Column name | Type | Description | Example |

| rel_path | string | Relative path of the image inside the dataset | Blue/Indoor/IMG_0123.jpg |

| filename | string | File name only | IMG_0123.jpg |

| filesize_bytes | integer | Size of the image file in bytes | 2543891 |

| width | integer | Image width in pixels | 3000 |

| height | integer | Image height in pixels | 4000 |

| color_label | string | Color class label (from folder name) | Blue |

| illumination | string | Illumination condition label (from folder name) | Indoor Night |

| mean_r, mean_g, mean_b | float | Mean RGB values (normalized 0–1) computed from the image | 0.12, 0.18, 0.62 |

| std_r, std_g, std_b | float | Standard deviation of RGB values (normalized 0–1) | 0.04, 0.05, 0.07 |

| mean_h, mean_s, mean_v | float | Mean HSV values (normalized) | 0.60, 0.75, 0.62 |

| mean_L, mean_a, mean_b_lab | float | Mean CIELAB values (L*, a*, b*) | 42.1, 12.0, -38.5 |

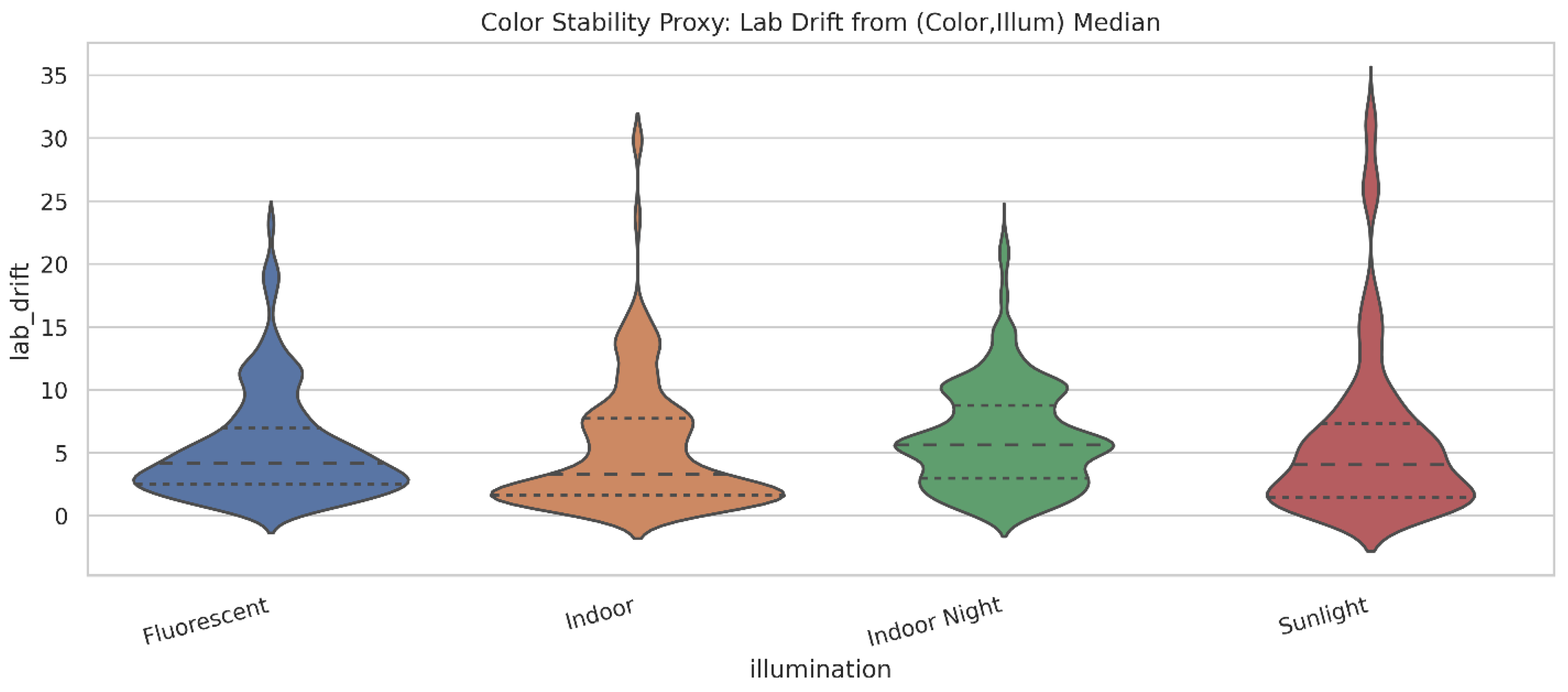

| lab_drift | float | Distance in CIELAB space from the median of its (color, illumination) group; used as a stability proxy (only in metadata_images_with_drift.csv) | 5.63 |

| error | string (optional) | Read error message if an image could not be processed | (empty) |
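As a rough illustration of how a column like `lab_drift` could be derived from the other schema columns, the sketch below takes the component-wise median of (mean_L, mean_a, mean_b_lab) within each (color, illumination) group and measures each image's Euclidean distance to it. Both choices are assumptions: the schema only says "distance in CIELAB space from the median", so the released values may use a different distance (for example CIEDE2000) or a different definition of the median. The function name `add_lab_drift` is hypothetical.

```python
import math
from collections import defaultdict
from statistics import median

LAB_KEYS = ("mean_L", "mean_a", "mean_b_lab")


def add_lab_drift(rows):
    """Add a 'lab_drift' value to each row (dicts with the schema's column names)."""
    groups = defaultdict(list)
    for row in rows:
        groups[(row["color_label"], row["illumination"])].append(row)

    for members in groups.values():
        # Assumption: component-wise median of the per-image mean Lab values.
        center = tuple(median(float(m[key]) for m in members) for key in LAB_KEYS)
        for m in members:
            point = tuple(float(m[key]) for key in LAB_KEYS)
            # Assumption: plain Euclidean distance in L*a*b* (Delta E*ab, 1976).
            m["lab_drift"] = math.dist(point, center)
    return rows
```

Rows read with `csv.DictReader` from metadata_images.csv can be passed in directly, since the values are converted with `float()` before use.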

Technical Validation

Coverage and Balance

- SCD-Balanced-106: sample 106 images per (color, illumination) cell (the minimum cell size).

- With 9 colors × 4 illuminations = 36 cells, this produces 106 × 36 = 3816 images, perfectly balanced across the full label grid.

- Sampling should be random but seeded (fixed seed in code) and ideally stored as an index file (e.g., balanced_index.csv) so that results are comparable across papers.
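The balanced-subset recipe above can be sketched as follows. This is a minimal example under stated assumptions: the function name `balanced_sample` and the default seed are hypothetical, and rows are assumed to be dictionaries carrying the `rel_path`, `color_label`, and `illumination` columns from the metadata table.

```python
import random
from collections import defaultdict


def balanced_sample(rows, seed=0):
    """Return (k, paths): k images per (color, illumination) cell, seeded."""
    cells = defaultdict(list)
    for row in rows:
        cells[(row["color_label"], row["illumination"])].append(row["rel_path"])

    # k is the size of the smallest cell (106 in the release described above).
    k = min(len(paths) for paths in cells.values())

    rng = random.Random(seed)  # local generator, so global random state is untouched
    selected = []
    # Sort cells and paths so the result depends only on the seed, not on input order.
    for key in sorted(cells):
        selected.extend(rng.sample(sorted(cells[key]), k))
    return k, selected
```

Writing the returned paths to an index file such as balanced_index.csv (one path per line) is one way to let later work reuse the exact same subset.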

Illumination-Induced Variation (Color Stability Proxy)

- Indoor Night shows the highest typical drift (median ≈ 5.63, n=2980), indicating stronger variability under low-light conditions.

- Fluorescent and Sunlight have similar medians (≈ 4.17 and ≈ 4.07), but Sunlight has the largest tail (maximum drift ≈ 32.76), consistent with harsh shadows, highlights, and changing daylight.

- Indoor has the lowest median (≈ 3.29, n=3050), suggesting relatively more stable appearance in that setting.
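Per-illumination figures of this kind (sample count, median drift, maximum drift) could be recomputed from metadata_images_with_drift.csv along the following lines. This is a sketch, not the authors' script: the function name `drift_summary` is hypothetical, and rows with an empty `lab_drift` are assumed to correspond to images that could not be processed.

```python
from collections import defaultdict
from statistics import median


def drift_summary(rows):
    """Return {illumination: (n, median_drift, max_drift)} for rows with lab_drift."""
    by_illum = defaultdict(list)
    for row in rows:
        value = row.get("lab_drift")
        if value in (None, ""):
            continue  # assumption: empty value means the image was not processed
        by_illum[row["illumination"]].append(float(value))
    return {
        illum: (len(values), median(values), max(values))
        for illum, values in sorted(by_illum.items())
    }
```

Reporting the maximum alongside the median keeps long tails, such as the one noted for Sunlight, visible in the summary.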

Color Separability

- A 2D scatter plot of a* vs b* for all images, colored by the ground-truth color label, and faceted by illumination (four small panels).

Inter-Illumination Shift per Color

- Blue shows the strongest shift (mean pairwise distance ≈ 31.53, max ≈ 50.87).

- Orange is also highly sensitive (≈ 25.61, max ≈ 37.78).

- Black is the most stable (≈ 4.74, max ≈ 7.57).

- Other colors fall in between (e.g., Purple/Gray moderate; Sky Blue and Yellow lower than Blue/Orange).
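One plausible way to obtain a per-color "mean pairwise distance" and maximum is sketched below. It assumes that each (color, illumination) cell is summarized by the centroid of its per-image mean Lab values and that distances between centroids are Euclidean; the source does not spell out either choice, so the numbers it reports may have been computed differently. The function name `inter_illumination_shift` is hypothetical.

```python
import math
from collections import defaultdict
from itertools import combinations
from statistics import fmean

LAB_KEYS = ("mean_L", "mean_a", "mean_b_lab")


def inter_illumination_shift(rows):
    """Return {color: (mean_pairwise_distance, max_pairwise_distance)}."""
    points = defaultdict(list)
    for row in rows:
        lab = tuple(float(row[key]) for key in LAB_KEYS)
        points[(row["color_label"], row["illumination"])].append(lab)

    # Assumption: one Lab centroid per (color, illumination) cell.
    centroids = defaultdict(dict)
    for (color, illum), labs in points.items():
        centroids[color][illum] = tuple(fmean(axis) for axis in zip(*labs))

    result = {}
    for color, by_illum in centroids.items():
        # All unordered illumination pairs: 6 pairs when 4 illuminations are present.
        dists = [math.dist(a, b) for a, b in combinations(by_illum.values(), 2)]
        if dists:
            result[color] = (fmean(dists), max(dists))
    return result
```

With four illuminations, each color contributes six pairwise distances, so the mean and the maximum together indicate whether the shift is broad or driven by a single pair of conditions.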

Angle-Based Validation (Only If Angles Are Labeled)

- We do not include angle coverage plots in the main validation results.

- We do not claim calibrated angles or official “Angle ID 1–7” in this version.

- Color × Angle coverage heatmap (checks completeness across viewpoints)

- Mean L* vs Angle ID line plot (shows viewpoint-dependent lightness changes, optionally separated by illumination)

Usage Notes

Recommended Preprocessing

- A center-crop that keeps the paper mostly in view, or

- A simple paper ROI (manual box, or automatic segmentation using thresholding/contours if the paper edges are clear).
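For the center-crop option, the crop box can be computed from the `width` and `height` columns alone. The sketch below is illustrative: the function name `center_crop_box` and the default `fraction` of 0.5 are hypothetical choices, not values taken from the dataset documentation.

```python
def center_crop_box(width, height, fraction=0.5):
    """Return (left, top, right, bottom) of a centered crop covering
    `fraction` of each image dimension."""
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    crop_w = max(1, round(width * fraction))
    crop_h = max(1, round(height * fraction))
    left = (width - crop_w) // 2
    top = (height - crop_h) // 2
    return left, top, left + crop_w, top + crop_h
```

The returned tuple follows the (left, upper, right, lower) order that Pillow's `Image.crop` expects, so it can be passed to that method directly if Pillow is used for image loading.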

Recommended Evaluation Protocols

- Choose one illumination as the test domain (e.g., Sunlight).

- Train the model using the other three illuminations (Fluorescent + Indoor + Indoor Night).

- Evaluate only on the held-out illumination.

- Repeat this four times (each illumination becomes the test set once).

- Let k be the minimum sample count among all (color, illumination) cells.

- Randomly sample k images from each cell using a fixed random seed.

- Train and evaluate using only this balanced subset (or use it as a standardized benchmark split).

- Train on angles 1–5, test on angles 6–7, while keeping illumination coverage consistent.

- Optionally repeat with different train/test angle partitions.
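The held-out-illumination protocol described in this section (train on three illuminations, test on the fourth, repeat four times) can be expressed as a small split generator. This is a sketch under the assumption that rows are dictionaries with the `rel_path` and `illumination` metadata columns; the function name `leave_one_illumination_out` is hypothetical.

```python
def leave_one_illumination_out(rows):
    """Yield (held_out, train_paths, test_paths), one fold per illumination."""
    illuminations = sorted({row["illumination"] for row in rows})
    for held_out in illuminations:
        train = [r["rel_path"] for r in rows if r["illumination"] != held_out]
        test = [r["rel_path"] for r in rows if r["illumination"] == held_out]
        yield held_out, train, test
```

Because each fold is keyed by the held-out illumination, per-illumination results can be reported separately before any averaging.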

Baseline Tasks Supported by SCD

- Estimate illumination chromaticity,

- Apply correction,

- Quantify improvement in cross-illumination stability (e.g., reduced Lab drift).
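As one concrete instance of the estimate-then-correct steps above, the sketch below uses the classic gray-world assumption, which is a common baseline and not necessarily the method the authors intend. It works on a flat list of normalized (r, g, b) tuples to stay dependency-free, and it applies the gains directly to the stored values, whereas a more careful pipeline would first linearize sRGB. The function name `gray_world` is hypothetical.

```python
def gray_world(pixels):
    """Estimate illuminant chromaticity and return gain-corrected pixels.

    pixels: non-empty list of (r, g, b) floats in [0, 1].
    Returns (illuminant, corrected), where illuminant sums to 1.
    """
    if not pixels:
        raise ValueError("pixels must be non-empty")
    n = len(pixels)
    means = [sum(p[c] for p in pixels) / n for c in range(3)]
    total = sum(means)
    if total == 0:
        # All-black input: nothing to estimate, return a neutral illuminant.
        return (1 / 3, 1 / 3, 1 / 3), list(pixels)

    # Gray-world estimate: the average scene color is taken as the illuminant color.
    illuminant = tuple(m / total for m in means)

    # Scale each channel so that its mean matches the overall gray level.
    gray = total / 3
    gains = [gray / m if m > 0 else 1.0 for m in means]
    corrected = [
        tuple(min(1.0, p[c] * gains[c]) for c in range(3)) for p in pixels
    ]
    return illuminant, corrected
```

To quantify the effect, the Lab statistics would be recomputed on the corrected images and the cross-illumination drift compared before and after correction. Note that single-color paper targets violate the gray-world assumption when they fill the frame, so this baseline is best read as a stress test rather than an expected solution.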

Reporting Tips (To Make Your Results Reproducible)

- Always state whether you used full images or ROI crops.

- Report whether you used balanced sampling or class weights.

- Fix random seeds and, if possible, release split files (CSV/JSON) so others can reproduce your exact protocol.

- For Protocol P1 (the held-out-illumination protocol), report results for each held-out illumination separately, not only an overall average.

Limitations

Author Contributions

Institutional Review Board Statement

Acknowledgments

Conflicts of Interest

References

- M. D. Wilkinson et al., “The FAIR Guiding Principles for scientific data management and stewardship,” Scientific Data, vol. 3, 2016.

- Scientific Data (Nature Portfolio), “Data Descriptor (article type) / author guidance,” Nature Portfolio, accessed 2026.

- FORCE11, “Joint Declaration of Data Citation Principles,” 2014.

- A. Gh. Akbarinia and C. A. Parraga, “Colour constancy: Biologically-inspired contrast-variant pooling mechanism,” arXiv preprint arXiv:1711.10968, 2017.

- S. Banić and S. Lončarić, “Unsupervised Learning for Color Constancy,” arXiv preprint arXiv:1712.00436, 2017.

- A. Laakom, V. Raitoharju, A. Iosifidis, and M. Gabbouj, “INTEL-TAU: A Color Constancy Dataset,” arXiv preprint arXiv:1910.10404, 2019.

- C. Aytekin, J. Nikkanen, M. Gabbouj, and M. A. Ghanem, “A Dataset for Camera-Invariant Color Constancy Research,” arXiv preprint arXiv:1703.09778, 2017.

- S. Banić and S. Lončarić, “Cube+ dataset for illuminant estimation (SpyderCube-based),” 2018.

- E. Ershov, D. Gusak, S. Banić, and S. Lončarić, “The Cube++ Illumination Estimation Dataset,” arXiv preprint arXiv:2011.10028, 2020.

- O. Ulucan et al., “CC-NORD: A camera-invariant global color constancy dataset,” in Proc. EUSIPCO, 2023.

- E. Shi, “Re-processing of Gehler’s ColorChecker dataset (notes and corrected data),” Simon Fraser University resource page, accessed 2026.

- G. Hemrit, R. Luque-Baena, and A. P. de la Blanca, “Rehabilitating the ColorChecker dataset for illuminant estimation,” 2018.

- J. T. Barron, “Convolutional Color Constancy,” in Proc. ICCV, 2015.

- F. Ciurea and B. Funt, “A large image database for color constancy research,” 2003.

- D. Cheng, B.V.K.V. Kumar, and M. S. Brown, “Illuminant Estimation for Color Constancy: Why Spatial-Domain Methods Work and the Role of the Color Distribution,” IEEE Trans. Image Process., 2014.

- Y.-C. Lo et al., “CLCC: Contrastive Learning for Color Constancy,” in Proc. CVPR, 2021.

- S. Bianco, C. Cusano, and R. Schettini, “Color Constancy Using CNNs,” in Proc. CVPR Workshops, 2015.

- G. Sharma, W. Wu, and E. N. Dalal, “The CIEDE2000 color-difference formula: Implementation notes, supplementary test data, and mathematical observations,” Color Research & Application, vol. 30, no. 1, pp. 21–30, 2005.

- L. Murmann, M. Gharbi, M. Aittala, and F. Durand, “A Dataset of Multi-Illumination Images in the Wild,” in Proc. ICCV, 2019.

- M. Afifi and M. S. Brown, “Deep White-Balance Editing,” in Proc. CVPR, 2020.

- I. Liu et al., “OpenIllumination: A Multi-Illumination Dataset for Inverse Rendering Evaluation on Real Objects,” in NeurIPS (Datasets and Benchmarks Track), 2023.

- A. Gijsenij, T. Gevers, and J. van de Weijer, “Computational Color Constancy: Survey and Experiments,” 2011.

- M. Buzzelli, S. Zini, S. Bianco, G. Ciocca, R. Schettini, and M. K. Tchobanou, “Analysis of biases in automatic white balance datasets and methods,” Color Research & Application, vol. 48, no. 1, pp. 40–62, 2023.

- X. Xing et al., “Point Cloud Color Constancy,” in Proc. CVPR, 2022.

- International Commission on Illumination (CIE), “Colorimetry — Part 4: CIE 1976 L*a*b* Colour space,” CIE publication, 1976 (and later standard editions).

- IEC, “IEC 61966-2-1: Multimedia systems and equipment — Colour measurement and management — Part 2-1: Default RGB colour space — sRGB,” 1999.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).