Submitted: 06 March 2026

Posted: 09 March 2026

Abstract

Keywords:

1. Introduction

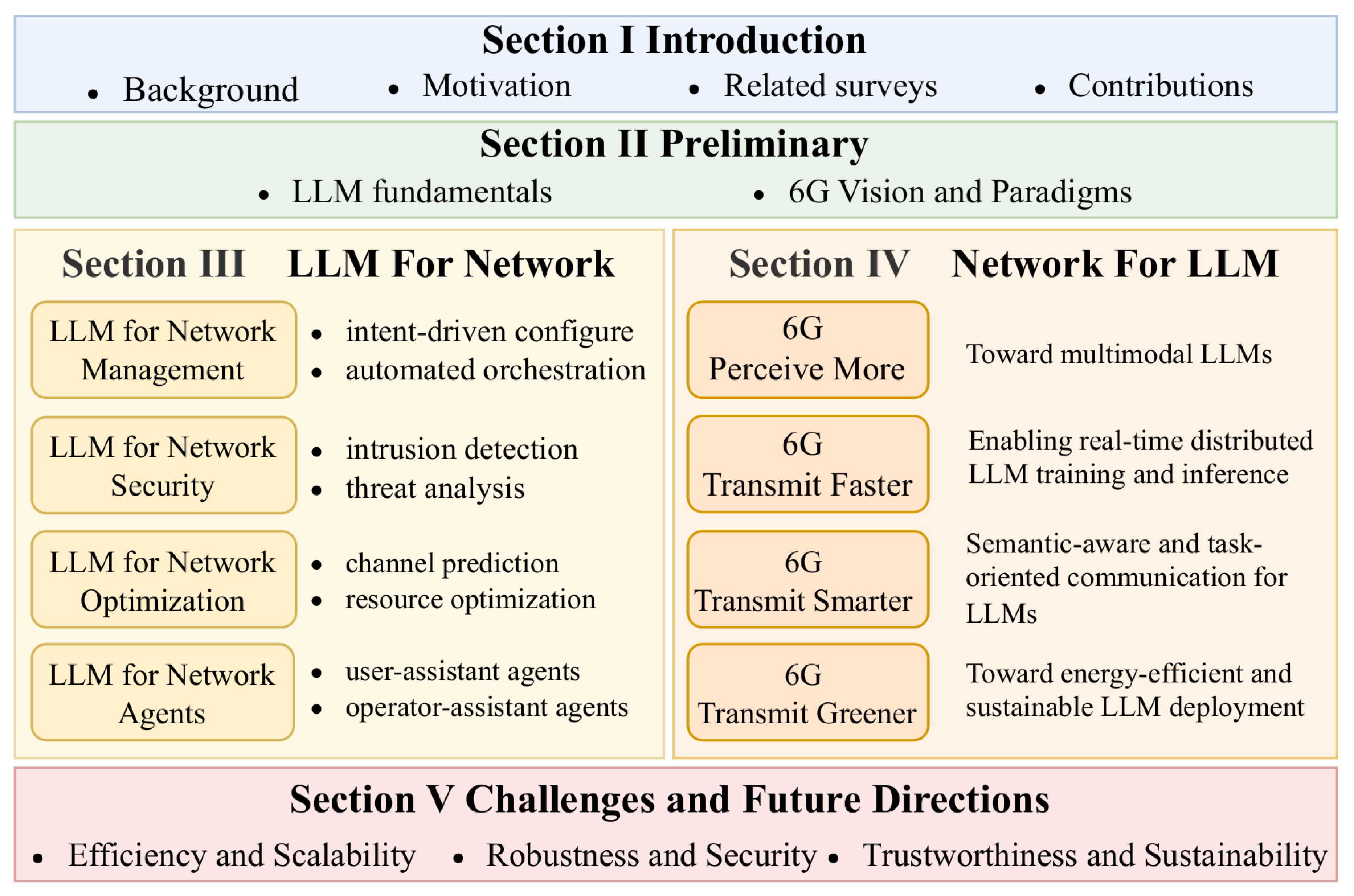

- We establish a unified two-perspective taxonomy, namely LLM for Network and Network for LLM, to systematically describe the co-evolution of LLMs and 6G systems. This framework overcomes the limitations of prior surveys that examine only a single direction.

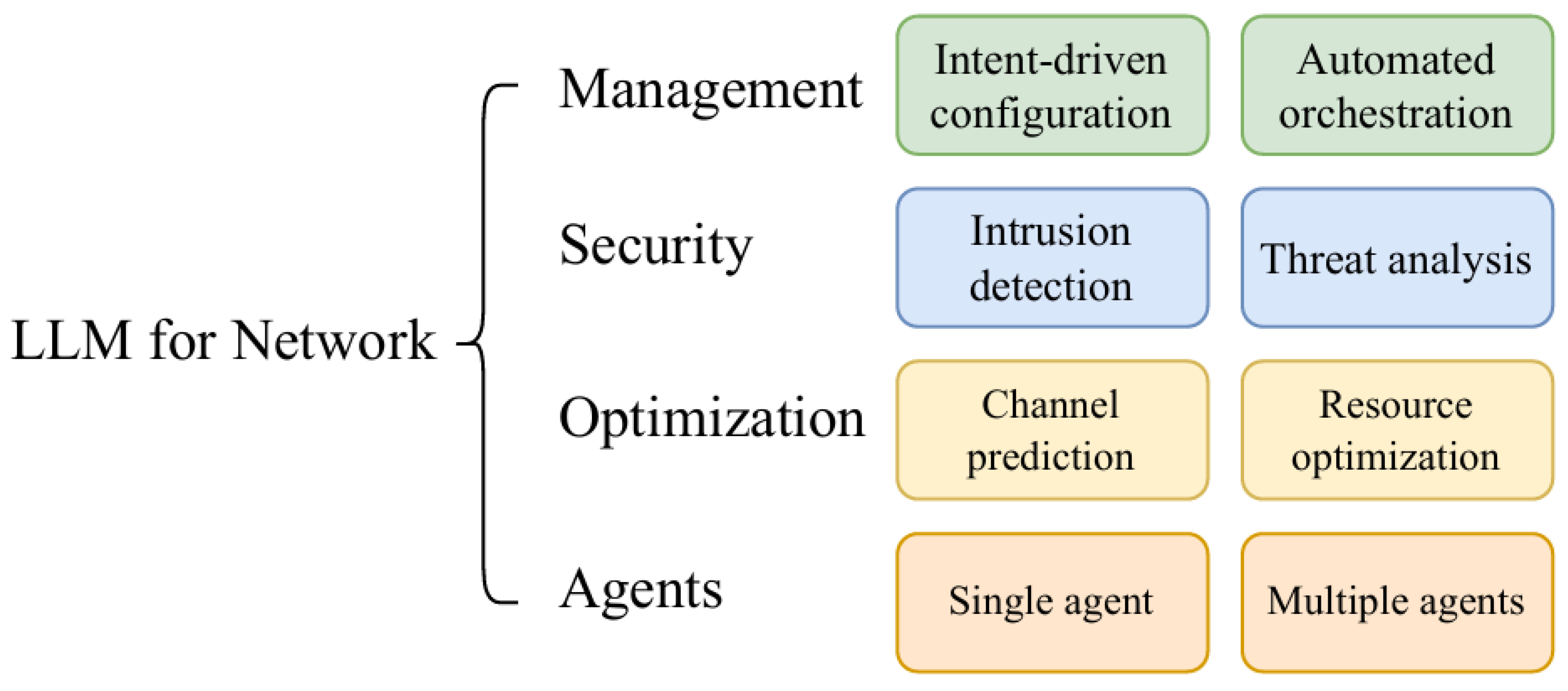

- We provide a comprehensive synthesis of how LLMs enhance 6G network intelligence in four principal domains: network management, network security, network optimization, and agent-based interaction. For each domain, we summarize representative approaches, enabling techniques, and the emerging trend toward AI-native network operation.

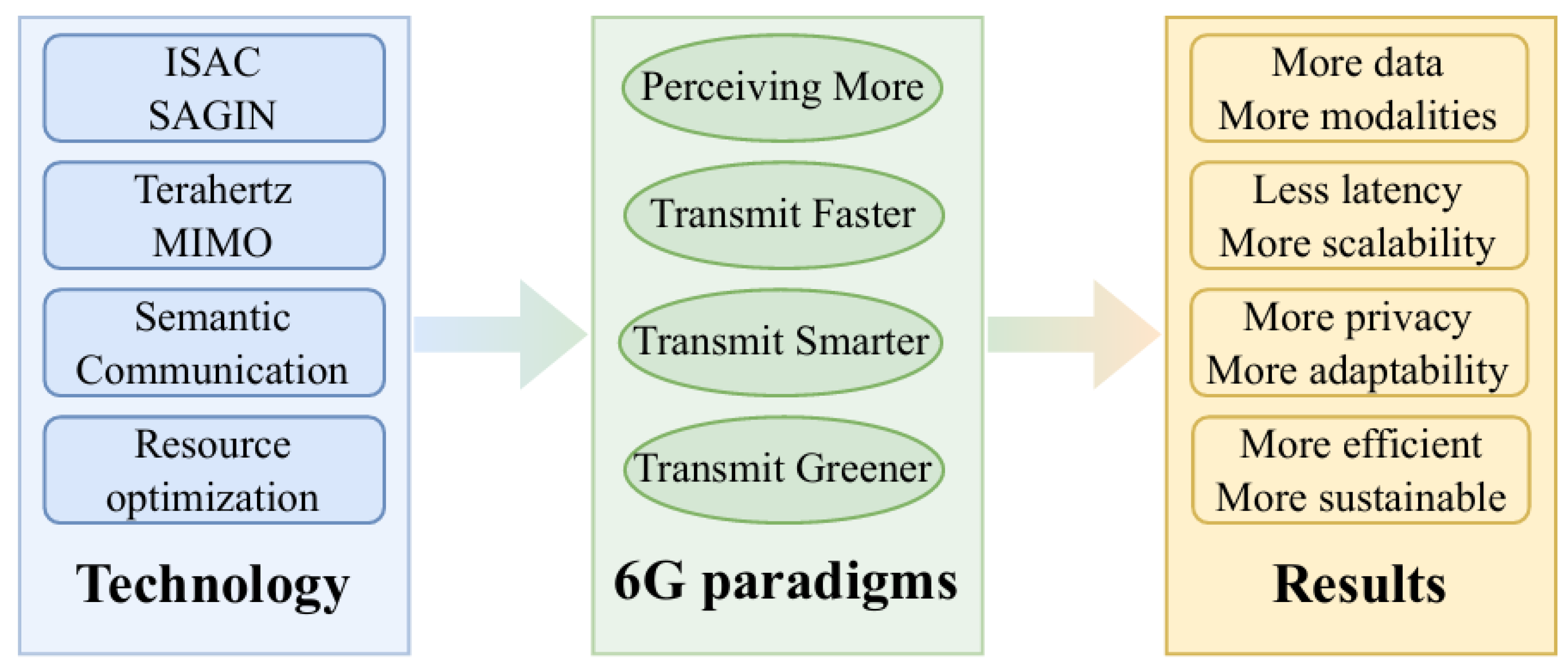

- We analyze how fundamental capabilities of 6G systems, including advanced sensing, high-capacity and low-latency transmission, semantic and task-oriented communication, and energy-efficient design, support scalable, timely, and sustainable LLM training and inference.

- We identify major challenges at the intersection of LLMs and 6G, such as scalability, robustness, trustworthiness, privacy, and sustainability. Building on these challenges, we outline promising research directions in model-network co-design, cross-layer optimization, and future AI-native architectures.

2. Preliminary

2.1. LLM Fundamentals

2.1.1. Model Architecture

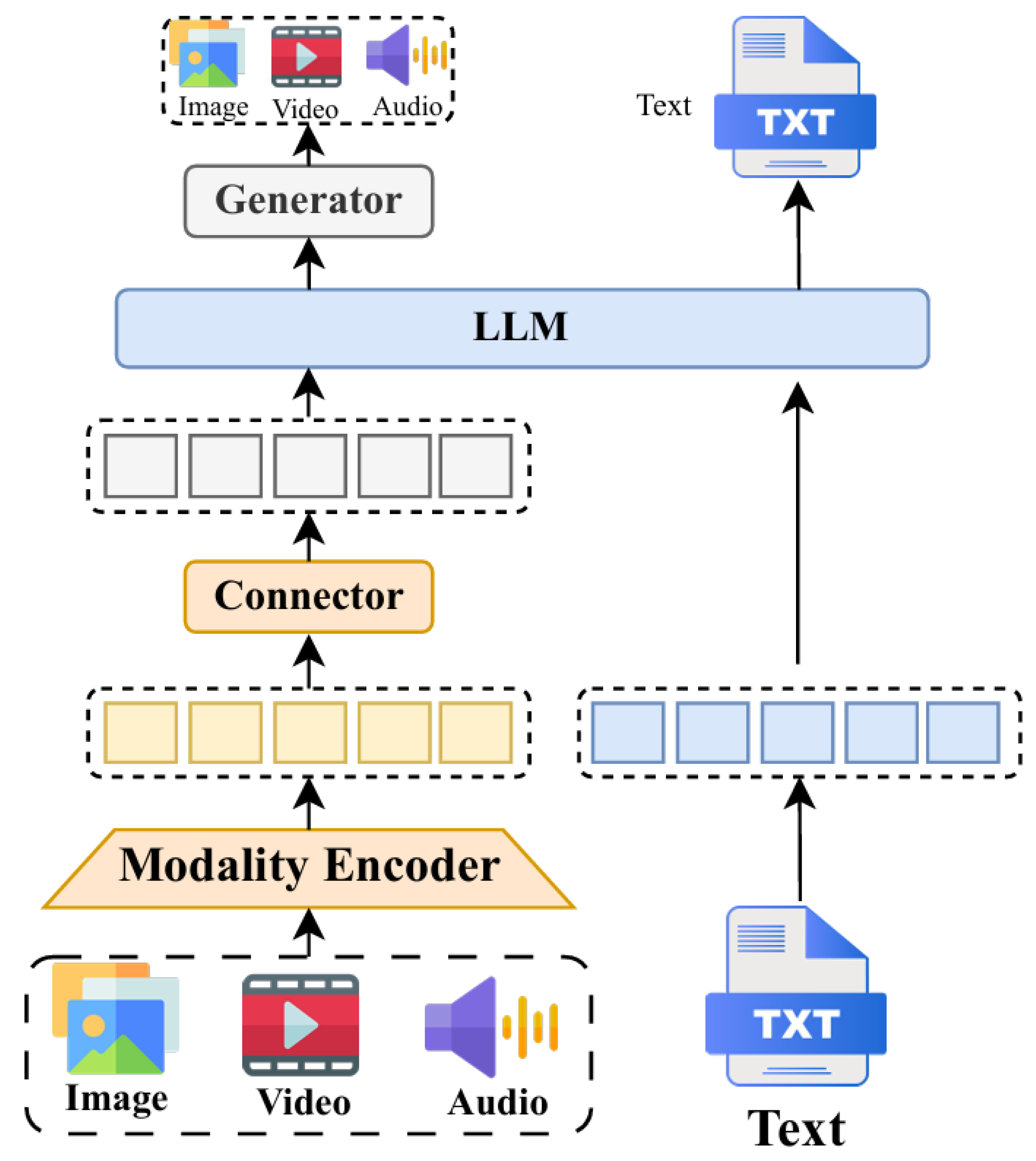

2.1.2. Unimodal and Multimodal LLMs

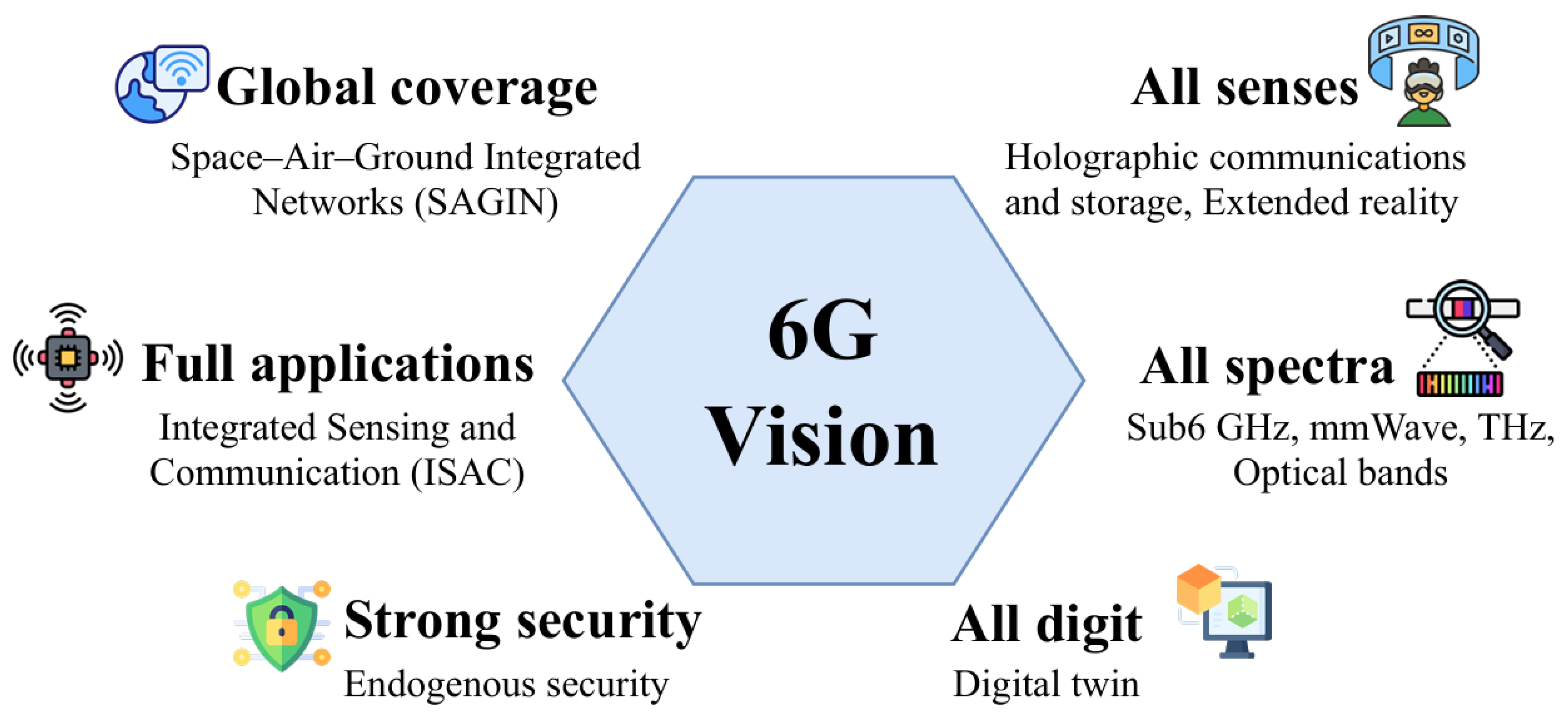

2.2. 6G Vision and Paradigms

3. LLM for Network

3.1. LLM for Network Management

3.1.1. Intent-Driven Configuration

3.1.2. Automated Orchestration

3.2. LLM for Network Security

3.2.1. Intrusion Detection

3.2.2. Threat Analysis

3.3. LLM for Network Optimization

3.3.1. Channel Prediction

3.3.2. Resource Optimization

3.4. LLM for Network Agents

4. Network for LLM

4.1. 6G Perceive More: Toward Multimodal LLMs

4.2. 6G Transmit Faster: Enabling Real-Time Distributed LLM Training and Inference

4.2.1. Model Caching

4.2.2. Model Training

4.2.3. Model Inference

4.3. 6G Transmit Smarter: Semantic-Aware and Task-Oriented Communication for LLMs

4.4. 6G Transmit Greener: Toward Sustainable Edge Intelligence

5. Challenges and Future Directions

5.1. Efficiency and Scalability

5.2. Robustness and Security

5.3. Trustworthiness and Sustainability

6. Conclusions

References

- Boateng, G.O.; Sami, H.; Alagha, A.; Elmekki, H.; Hammoud, A.; Mizouni, R.; Mourad, A.; Otrok, H.; Bentahar, J.; Muhaidat, S.; et al. A Survey on Large Language Models for Communication, Network, and Service Management: Application Insights, Challenges, and Future Directions. IEEE Commun. Surveys Tuts. 2025. [CrossRef]

- Wang, C.X.; You, X.; Gao, X.; Zhu, X.; Li, Z.; Zhang, C.; Wang, H.; Huang, Y.; Chen, Y.; Haas, H.; et al. On the Road to 6G: Visions, Requirements, Key Technologies, and Testbeds. IEEE Commun. Surveys Tuts. 2023, 25, 905–974. [CrossRef]

- Shahid, A.; Kliks, A.; Al-Tahmeesschi, A.; Elbakary, A.; Nikou, A.; Maatouk, A.; et al. Large-scale AI in telecom: Charting the roadmap for innovation, scalability, and enhanced digital experiences. arXiv preprint 2025. arXiv:2503.04184.

- HuaweiTech. ITU-R WP5D completed the recommendation framework for IMT-2030 (global 6G vision). https://www.huawei.com/en/huaweitech/future-technologies/itu-r-wp5d-completed-recommendation-framework-imt-2030, 2023. Accessed: 2025-10-10.

- Zhou, H.; Hu, C.; Yuan, Y.; Cui, Y.; Jin, Y.; Chen, C.; Wu, H.; Yuan, D.; Jiang, L.; Wu, D.; et al. Large Language Model (LLM) for Telecommunications: A Comprehensive Survey on Principles, Key Techniques, and Opportunities. IEEE Commun. Surveys Tuts. 2025, 27, 1955–2005. [CrossRef]

- Achiam, J.; Adler, S.; Agarwal, S.; Ahmad, L.; Akkaya, I.; Aleman, F.L.; et al. GPT-4 technical report. arXiv preprint 2023. arXiv:2303.08774.

- Liu, A.; Feng, B.; Xue, B.; Wang, B.; Wu, B.; Lu, C.; et al. DeepSeek-V3 technical report. arXiv preprint 2024. arXiv:2412.19437.

- Kamath, A.; Ferret, J.; Pathak, S.; Vieillard, N.; Merhej, R.; Perrin, S.; et al. Gemma 3 technical report. arXiv preprint 2025. arXiv:2503.19786.

- Touvron, H.; Lavril, T.; Izacard, G.; Martinet, X.; Lachaux, M.A.; Lacroix, T.; et al. LLaMA: Open and Efficient Foundation Language Models. arXiv preprint 2023. arXiv:2302.13971.

- Qu, G.; Chen, Q.; Wei, W.; Lin, Z.; Chen, X.; Huang, K. Mobile Edge Intelligence for Large Language Models: A Contemporary Survey. IEEE Commun. Surveys Tuts. 2025, pp. 1–1. [CrossRef]

- Qiu, J.; Lam, K.; Li, G.; Acharya, A.; Wong, T.Y.; Darzi, A.; Yuan, W.; Topol, E.J. LLM-based agentic systems in medicine and healthcare. Nat. Mach. Intell. 2024, 6, 1418–1420.

- Thirunavukarasu, A.J.; Ting, D.S.J.; Elangovan, K.; Gutierrez, L.; Tan, T.F.; Ting, D.S.W. Large language models in medicine. Nat. Med. 2023, 29, 1930–1940.

- Shi, J.; Guo, Q.; Liao, Y.; Wang, Y.; Chen, S.; Liang, S. Legal-LM: Knowledge Graph Enhanced Large Language Models for Law Consulting. In Proceedings of the Advanced Intelligent Computing Technology and Applications: 20th International Conference, ICIC 2024, Tianjin, China, August 5–8, 2024, Proceedings, Part IV, Berlin, Heidelberg, 2024; pp. 175–186. [CrossRef]

- Cheng, Y.; Zhang, W.; Zhang, Z.; Zhang, C.; Wang, S.; Mao, S. Toward Federated Large Language Models: Motivations, Methods, and Future Directions. IEEE Commun. Surveys Tuts. 2025, 27, 2733–2764. [CrossRef]

- Shao, J.; Tong, J.; Wu, Q.; Guo, W.; Li, Z.; Lin, Z.; Zhang, J. WirelessLLM: Empowering large language models towards wireless intelligence. arXiv preprint 2024. arXiv:2405.17053.

- Xu, M.; Niyato, D.; Kang, J.; Xiong, Z.; Mao, S.; Han, Z.; Kim, D.I.; Letaief, K.B. When Large Language Model Agents Meet 6G Networks: Perception, Grounding, and Alignment. IEEE Wireless Commun. 2024, 31, 63–71. [CrossRef]

- Tang, J.; Chen, J.; He, J.; Chen, F.; Lv, Z.; Han, G.; Liu, Z.; Yang, H.H.; Li, W. Towards General Industrial Intelligence: A Survey of Large Models as a Service in Industrial IoT. IEEE Commun. Surveys Tuts. 2025, pp. 1–1. [CrossRef]

- Lin, Z.; Qu, G.; Chen, Q.; Chen, X.; Chen, Z.; Huang, K. Pushing large language models to the 6G edge: Vision, challenges, and opportunities. IEEE Commun. Mag. 2025, 63, 52–59. [CrossRef]

- Mao, Y.; Yu, X.; Huang, K.; Angela Zhang, Y.J.; Zhang, J. Green Edge AI: A Contemporary Survey. Proc. IEEE 2024, 112, 880–911. [CrossRef]

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention is all you need. In Proceedings of the Adv. Neural Inf. Process. Syst., Red Hook, NY, USA, Dec. 2017; pp. 6000–6010. [CrossRef]

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; et al. An image is worth 16x16 words: Transformers for image recognition at scale. arXiv preprint 2020. arXiv:2010.11929.

- Shazeer, N. Fast transformer decoding: One write-head is all you need. arXiv preprint 2019. arXiv:1911.02150.

- Ainslie, J.; Lee-Thorp, J.; De Jong, M.; Zemlyanskiy, Y.; Lebrón, F.; Sanghai, S. GQA: Training generalized multi-query transformer models from multi-head checkpoints. arXiv preprint 2023. arXiv:2305.13245.

- Devlin, J.; Chang, M.; Lee, K.; Toutanova, K. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. In Proceedings of the Conf. North Amer. Chapter Assoc. Comput. Linguistics: Human Language Technologies, Minneapolis, MN, USA, Jun. 2019; pp. 4171–4186. [CrossRef]

- Raffel, C.; Shazeer, N.; Roberts, A.; Lee, K.; Narang, S.; Matena, M.; Zhou, Y.; Li, W.; Liu, P.J. Exploring the limits of transfer learning with a unified text-to-text transformer. J. Mach. Learn. Res. 2020, 21, 1–67.

- Lewis, M.; Liu, Y.; Goyal, N.; Ghazvininejad, M.; Mohamed, A.; Levy, O.; Stoyanov, V.; Zettlemoyer, L. BART: Denoising sequence-to-sequence pre-training for natural language generation, translation, and comprehension. arXiv preprint 2019. arXiv:1910.13461.

- Chowdhery, A.; Narang, S.; Devlin, J.; Bosma, M.; Mishra, G.; et al. PaLM: Scaling Language Modeling with Pathways. J. Mach. Learn. Res. 2023, 24, 1–113.

- Song, S.; Li, X.; Li, S.; Zhao, S.; Yu, J.; Ma, J.; Mao, X.; Zhang, W.; Wang, M. How to Bridge the Gap Between Modalities: Survey on Multimodal Large Language Model. IEEE Trans. Knowl. Data Eng. 2025, 37, 5311–5329. [CrossRef]

- Li, Y.; Jiang, S.; Hu, B.; Wang, L.; Zhong, W.; Luo, W.; Ma, L.; Zhang, M. Uni-MoE: Scaling Unified Multimodal LLMs With Mixture of Experts. IEEE Trans. Pattern Anal. Mach. Intell. 2025, 47, 3424–3439. [CrossRef]

- Yin, S.; Fu, C.; Zhao, S.; Li, K.; Sun, X.; Xu, T.; Chen, E. A Survey on Multimodal Large Language Models. Natl. Sci. Rev. 2024, 11. [CrossRef]

- Yang, X.; Wu, W.; Feng, S.; Wang, M.; Wang, D.; Li, Y.; Sun, Q.; Zhang, Y.; Fu, X.; Poria, S. MM-InstructEval: Zero-shot evaluation of (Multimodal) Large Language Models on multimodal reasoning tasks. Inf. Fusion 2025, 122, 103204. [CrossRef]

- Ding, N.; Qin, Y.; Yang, G.; Wei, F.; Yang, Z.; Su, Y.; Hu, S.; Chen, Y.; Chan, C.M.; Chen, W.; et al. Parameter-efficient fine-tuning of large-scale pre-trained language models. Nat. Mach. Intell. 2023, 5, 220–235. [CrossRef]

- Peng, B.; Li, C.; He, P.; Galley, M.; Gao, J. Instruction tuning with GPT-4. arXiv preprint 2023. arXiv:2304.03277.

- Chataut, R.; Nankya, M.; Akl, R. 6G networks and the AI revolution-Exploring technologies, applications, and emerging challenges. Sensors 2024, 24, 1888.

- Chen, X.; Guo, Z.; Wang, X.; Feng, C.; Yang, H.H.; Han, S.; Wang, X.; Quek, T.Q. Toward 6G Native-AI Network: Foundation Model-Based Cloud-Edge-End Collaboration Framework. IEEE Commun. Mag. 2025, 63, 23–30.

- Zhang, J.A.; Rahman, M.L.; Wu, K.; Huang, X.; Guo, Y.J.; Chen, S.; Yuan, J. Enabling Joint Communication and Radar Sensing in Mobile Networks—A Survey. IEEE Commun. Surveys Tuts. 2022, 24, 306–345. [CrossRef]

- Zhang, R.; He, Y.; Yuan, L.; Li, Z.; He, C.; Tan, F. Empowering 6G Ambient Intelligence with Terahertz Integrated Sensing and Communications. IEEE Wireless Commun. 2024, 31, 256–263. [CrossRef]

- Cui, M.; Wu, Z.; Lu, Y.; Wei, X.; Dai, L. Near-Field MIMO Communications for 6G: Fundamentals, Challenges, Potentials, and Future Directions. IEEE Commun. Mag. 2023, 61, 40–46. [CrossRef]

- Shi, G.; Xiao, Y.; Li, Y.; Xie, X. From Semantic Communication to Semantic-Aware Networking: Model, Architecture, and Open Problems. IEEE Commun. Mag. 2021, 59, 44–50. [CrossRef]

- Lira, O.G.; Caicedo, O.M.; da Fonseca, N.L.S. Large Language Models for Zero Touch Network Configuration Management. IEEE Commun. Mag. 2025, 63, 146–153. [CrossRef]

- Mekrache, A.; Ksentini, A.; Verikoukis, C. Intent-Based Management of Next-Generation Networks: an LLM-Centric Approach. IEEE Network 2024, 38, 29–36. [CrossRef]

- Liu, X.; Li, T.; Yang, C.; Ouyang, Y.; Han, Z.; Guizani, M. Generic Intent-Driven Networking Paradigm with Different Levels of Policy Abstraction. IEEE Commun. Mag. 2025, 63, 162–168. [CrossRef]

- Li, F.; Hei, C.; Shen, J.; Li, Q.; Wang, X. Human-Intent-Driven Cellular Configuration Generation Using Program Synthesis. IEEE J. Sel. Areas Commun. 2024, 42, 658–668. [CrossRef]

- Abdallah, A.; Albaseer, A.; Çelik, A.; Abdallah, M.; Eltawil, A.M. NetOrchLLM: Mastering Wireless Network Orchestration with Large Language Models. arXiv preprint 2024. arXiv:2412.10107.

- Shokrnezhad, M.; Taleb, T. An Autonomous Network Orchestration Framework Integrating Large Language Models with Continual Reinforcement Learning. arXiv preprint 2025. arXiv:2502.16198.

- Dandoush, A.; Kumarskandpriya, V.; Uddin, M.; Khalil, U. Large language models meet network slicing management and orchestration. arXiv preprint 2024. arXiv:2403.13721.

- Lai, H. Intrusion Detection Technology Based on Large Language Models. In Proceedings of the Int. Conf. Evol. Algorithms Soft Comput. Technol. (EASCT), Bengaluru, India, Oct. 2023; pp. 1–5. [CrossRef]

- Zhang, H.; Bin Sediq, A.; Afana, A.; Erol-Kantarci, M. Large Language Models in Wireless Application Design: In-Context Learning-enhanced Automatic Network Intrusion Detection. In Proceedings of the IEEE Global Commun. Conf. (GLOBECOM), Cape Town, South Africa, Dec. 2024; pp. 2479–2484. [CrossRef]

- Yang, T.; Huang, Z.; Wu, M.; Zhang, Y. Large Language Models for Network Intrusion Detection Systems: Foundations, Implementations, and Future Directions. arXiv preprint 2025. arXiv:2507.04752.

- Chatzimiltis, S.; Shojafar, M.; Mashhadi, M.B.; Tafazolli, R. Interpretable Anomaly-Based DDoS Detection in AI-RAN with XAI and LLMs. arXiv preprint 2025. arXiv:2507.21193.

- Zhang, J.; Cheng, K.; Xiong, X.; Dong, R.; Huang, J.; Jie, S. Construction of Cyber-attack Attribution Framework Based on LLM. In Proceedings of the IEEE Int. Conf. Trust, Secur. Privacy Comput. Commun. (TrustCom), Sanya, China, Apr. 2024; pp. 2250–2255. [CrossRef]

- Liu, X.; Liang, J.; Yan, Q.; Ye, M.; Jia, J.; Xi, Z. CyLens: Towards Reinventing Cyber Threat Intelligence in the Paradigm of Agentic Large Language Models. arXiv preprint 2025. arXiv:2502.20791.

- Bai, L.; Huang, Z.; Sun, M.; Cheng, X.; Cui, L. Multi-Modal Intelligent Channel Modeling: A New Modeling Paradigm via Synesthesia of Machines. IEEE Commun. Surveys Tuts. 2025, pp. 1–1. [CrossRef]

- Liu, B.; Liu, X.; Gao, S.; Cheng, X.; Yang, L. LLM4CP: Adapting Large Language Models for Channel Prediction. J. Commun. Inf. Networks 2024, 9, 113–125. [CrossRef]

- Fan, S.; Liu, Z.; Gu, X.; Li, H. CSI-LLM: A Novel Downlink Channel Prediction Method Aligned with LLM Pre-Training. In Proceedings of the IEEE Wireless Commun. Netw. Conf. (WCNC), Milan, Italy, Mar. 2025. [CrossRef]

- Lee, W.; Park, J. LLM-Empowered Resource Allocation in Wireless Communications Systems. arXiv preprint 2024. arXiv:2408.02944.

- Noh, H.; Shim, B.; Yang, H.J. Adaptive Resource Allocation Optimization Using Large Language Models in Dynamic Wireless Environments. IEEE Trans. Veh. Technol. 2025, 74, 16630–16635. [CrossRef]

- Zhou, H.; Hu, C.; Yuan, D.; Yuan, Y.; Wu, D.; Liu, X.; Zhang, C. Large Language Model (LLM)-enabled In-context Learning for Wireless Network Optimization: A Case Study of Power Control. arXiv preprint 2024. arXiv:2408.00214.

- Luo, H.; Liu, Y.; Zhang, R.; Wang, J.; Sun, G.; Niyato, D.; Yu, H.; Xiong, Z.; Wang, X.; Shen, X. Toward edge general intelligence with multiple-large language model (Multi-LLM): architecture, trust, and orchestration. IEEE Trans. Cognit. Commun. Networking 2025.

- Model Context Protocol. Introduction to Model Context Protocol (MCP). https://modelcontextprotocol.io/introduction, 2025.

- Google. Agent-to-Agent (A2A) Protocol. https://google.github.io/A2A/, 2024. Accessed: May 2025.

- Agent Network Protocol Contributors. Agent Network Protocol (ANP) Official Website. https://agent-network-protocol.com/, 2024. Accessed: May 2025.

- Zhao, C.; Wang, J.; Zhang, R.; Niyato, D.; Sun, G.; Du, H.; Kim, D.I.; Jamalipour, A. Generative AI-Enabled Wireless Communications for Robust Low-Altitude Economy Networking. IEEE Wireless Commun. 2025, pp. 1–9. [CrossRef]

- Wang, J.; Du, H.; Niyato, D.; Kang, J.; Cui, S.; Shen, X.; Zhang, P. Generative AI for integrated sensing and communication: Insights from the physical layer perspective. IEEE Wireless Commun. 2024, 31, 246–255.

- Cheng, X.; Zhang, H.; Zhang, J.; Gao, S.; Li, S.; Huang, Z.; Bai, L.; Yang, Z.; Zheng, X.; Yang, L. Intelligent multi-modal sensing-communication integration: Synesthesia of machines. IEEE Commun. Surveys Tuts. 2023, 26, 258–301.

- Cheng, L.; Zhang, H.; Di, B.; Niyato, D.; Song, L. Large Language Models Empower Multimodal Integrated Sensing and Communication. IEEE Commun. Mag. 2025, 63, 190–197. [CrossRef]

- 3GPP. Study on supporting edge computing in 5G networks (Release 18). Technical Report TR 23.758, 3rd Generation Partnership Project (3GPP), 2022. Available: https://www.3gpp.org/DynaReport/23758.htm.

- Houlsby, N.; Giurgiu, A.; Jastrzebski, S.; Morrone, B.; De Laroussilhe, Q.; Gesmundo, A.; Attariyan, M.; Gelly, S. Parameter-Efficient Transfer Learning for NLP. In Proceedings of the Int. Conf. Mach. Learn. (ICML), 2019; pp. 2790–2799.

- Hu, E.J.; Shen, Y.; Wallis, P.; Allen-Zhu, Z.; Li, Y.; Wang, L.; Chen, W. LoRA: Low-rank adaptation of large language models. In Proceedings of the Int. Conf. Learn. Represent. (ICLR), 2022. arXiv:2106.09685.

- Liu, H.; Pan, Y.; He, T.; Ghaffari, P.; B. Zhuang, Z. HIP: Attention-space sub-quadratic attention with hierarchical index attention pruning. arXiv preprint 2024. arXiv:2405.04962.

- Qiang, X.; Chang, Z.; Ye, C.; Hämäläinen, T.; Min, G. Split Federated Learning Empowered Vehicular Edge Intelligence: Concept, Adaptive Design, and Future Directions. IEEE Wireless Commun. 2025, 32, 90–97. [CrossRef]

- Chen, S.; Long, G.; Shen, T.; Jiang, J. Prompt federated learning for weather forecasting: Toward foundation models on meteorological data. arXiv preprint 2023. arXiv:2301.09152.

- Qiang, X.; Chang, Z.; Hu, Y.; Liu, L.; Hämäläinen, T. Adaptive and Parallel Split Federated Learning in Vehicular Edge Computing. IEEE Internet Things J. 2025, 12, 4591–4604. [CrossRef]

- Qiang, X.; Liu, H.; Zhang, X.; Chang, Z.; Liang, Y.C. Deploying Large AI Models on Resource-Limited Devices with Split Federated Learning. arXiv preprint 2025. arXiv:2504.09114.

- Yu, L.; Chang, Z.; Jia, Y.; Min, G. Model Partition and Resource Allocation for Split Learning in Vehicular Edge Networks. IEEE Trans. Intell. Transp. Syst. 2025, 26, 1–15. [CrossRef]

- Liang, Y.; Ge, C.; Tong, Z.; Song, Y.; Wang, J.; Xie, P. Not all patches are what you need: Expediting vision transformers via token reorganizations. arXiv preprint 2022. arXiv:2202.07800.

- Ding, D.; Mallick, A.; Wang, C.; Sim, R.; Mukherjee, S.; Ruhle, V.; Lakshmanan, L.V.; Awadallah, A.H. Hybrid LLM: Cost-efficient and quality-aware query routing. arXiv preprint 2024. arXiv:2404.14618.

- Lan, Q.; Zeng, Q.; Popovski, P.; Gündüz, D.; Huang, K. Progressive Feature Transmission for Split Classification at the Wireless Edge. IEEE Trans. Wireless Commun. 2023, 22, 3837–3852. [CrossRef]

- Ma, R.; Wang, J.; Qi, Q.; Yang, X.; Sun, H.; Zhuang, Z.; Liao, J. Poster: PipeLLM: Pipeline LLM inference on heterogeneous devices with sequence slicing. In Proceedings of the ACM SIGCOMM, New York, NY, USA, Sep. 2023; pp. 1126–1128.

- Ohta, S.; Nishio, T. λ-split: A privacy-preserving split computing framework for cloud-powered generative AI. arXiv preprint 2023. arXiv:2310.14651.

- Du, B.; Du, H.; Niyato, D.; Li, R. Task-Oriented Semantic Communication in Large Multimodal Models-Based Vehicle Networks. IEEE Trans. Mob. Comput. 2025, 24, 9822–9836. [CrossRef]

- Wang, Z.; Deng, Y.; Aghvami, H. Task-Oriented and Semantics-aware Communication Framework for Augmented Reality. arXiv preprint 2023. arXiv:2306.15470.

- Xiang, Z.; Yu, F.; Deng, Q.; Li, Y.; Wan, Z. Scene Understanding Enabled Semantic Communication with Open Channel Coding. arXiv preprint 2025. arXiv:2501.14520.

- Strubell, E.; Ganesh, A.; McCallum, A. Energy and policy considerations for deep learning in NLP. In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics, 2019; pp. 3645–3650.

- Wilhelm, P.; Wittkopp, T.; Kao, O. Beyond Test-Time Compute Strategies: Advocating Energy-per-Token in LLM Inference. In Proceedings of the 5th Workshop on Machine Learning and Systems (EuroMLSys ’25), New York, NY, USA, 2025; pp. 208–215. [CrossRef]

- Li, Y.; Hu, Z.; Choukse, E.; Fonseca, R.; Suh, G.E.; Gupta, U. EcoServe: Designing carbon-aware AI inference systems. arXiv preprint 2025. arXiv:2502.05043.

- Qiang, X.; Hu, Y.; Chang, Z.; Hämäläinen, T. Importance-aware data selection and resource allocation for hierarchical federated edge learning. Future Gener. Comput. Syst. 2024, 154, 35–44.

- Wang, Z.; Wang, P.; Liu, K.; Wang, P.; Fu, Y.; Lu, C.T.; Aggarwal, C.C.; Pei, J.; Zhou, Y. A Comprehensive Survey on Data Augmentation. IEEE Trans. Knowl. Data Eng. 2025, pp. 1–20. [CrossRef]

- Hu, E.J.; Shen, Y.; Wallis, P.; Allen-Zhu, Z.; Li, Y.; Wang, L.; Chen, W. LoRA: Low-Rank Adaptation of Large Language Models. arXiv 2021. arXiv:2106.09685.

- Zhang, X.; Chen, W.; Zhao, H.; Chang, Z.; Han, Z. Joint Accuracy and Latency Optimization for Quantized Federated Learning in Vehicular Networks. IEEE Internet Things J. 2024, 11, 28876–28890. [CrossRef]

- Liu, J.; Chang, Z.; Ye, C.; Mumtaz, S.; Hämäläinen, T. Game-Theoretic Power Allocation and Client Selection for Privacy-Preserving Federated Learning in IoMT. IEEE Trans. Commun. 2025, 73, 5864–5880. [CrossRef]

| Refs. | Focus |
|---|---|
| [1] | Applications of LLMs in communication, network, and service management. |
| [2] | An overview of 6G vision, technologies, architecture, and challenges. |
| [5] | Principles and enabling techniques for applying LLMs in telecommunications. |
| [10] | Mobile edge intelligence and edge–cloud collaboration for LLMs. |
| [14] | Federated learning frameworks for large-scale LLM training. |
| [17] | Foundation models for enabling general industrial intelligence (GII) in industrial IoT (IIoT), with a proposed SCCE framework. |
| [18] | Edge deployment and optimization of LLMs in 6G systems. |
| [19] | Energy-efficient design for green edge AI. |
| This work | A unified bidirectional survey bridging "LLM for Network" and "Network for LLM". |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).