Submitted: 01 March 2026

Posted: 06 March 2026


Abstract

Keywords:

I. Introduction

II. Methodology Foundation

III. Methodology

A. Problem Formulation

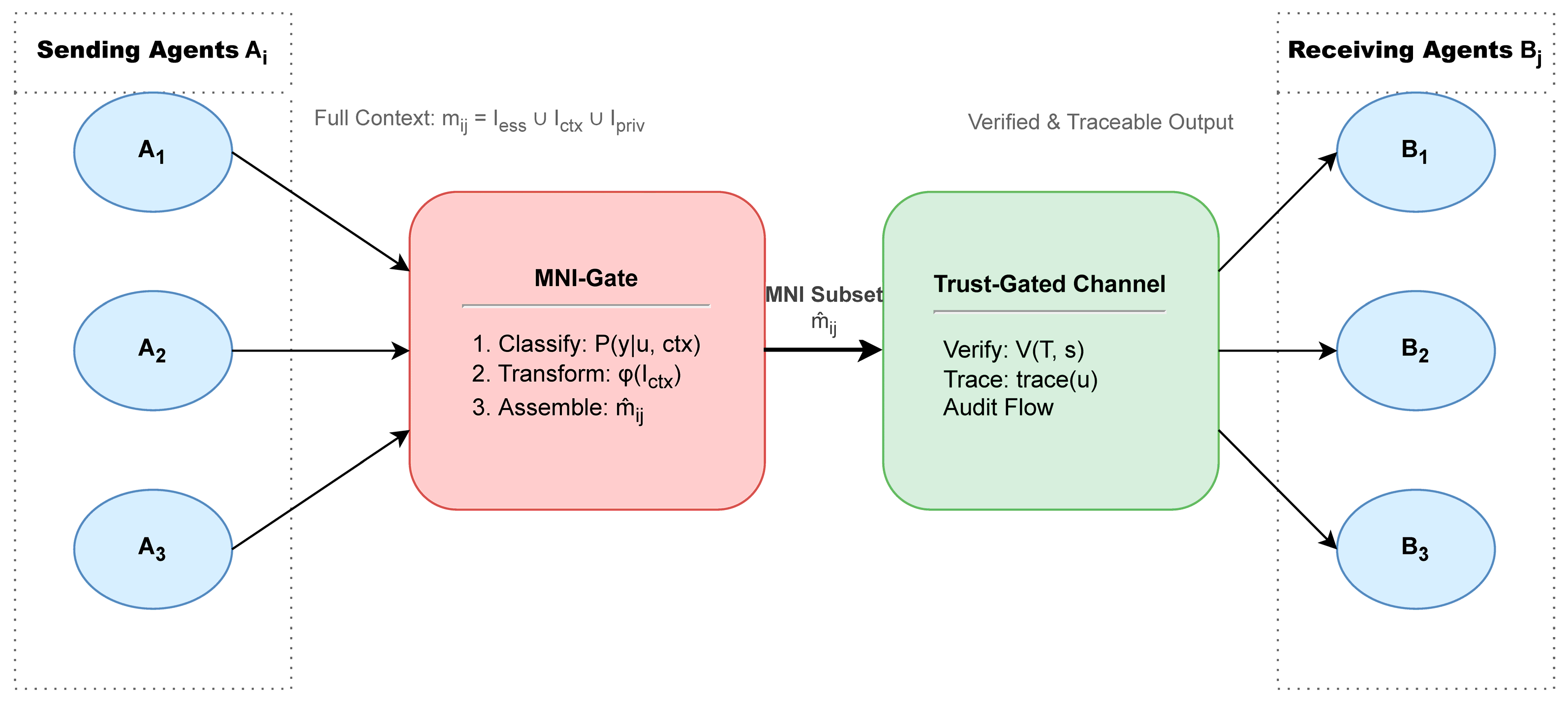

B. MNI-Gate Architecture

C. Trust-Gated Channel

D. Evidence Traceability

IV. Experimental Setup

A. Tasks and Datasets

B. Baselines and Metrics

C. Implementation Details

V. Results and Analysis

A. Overall Performance

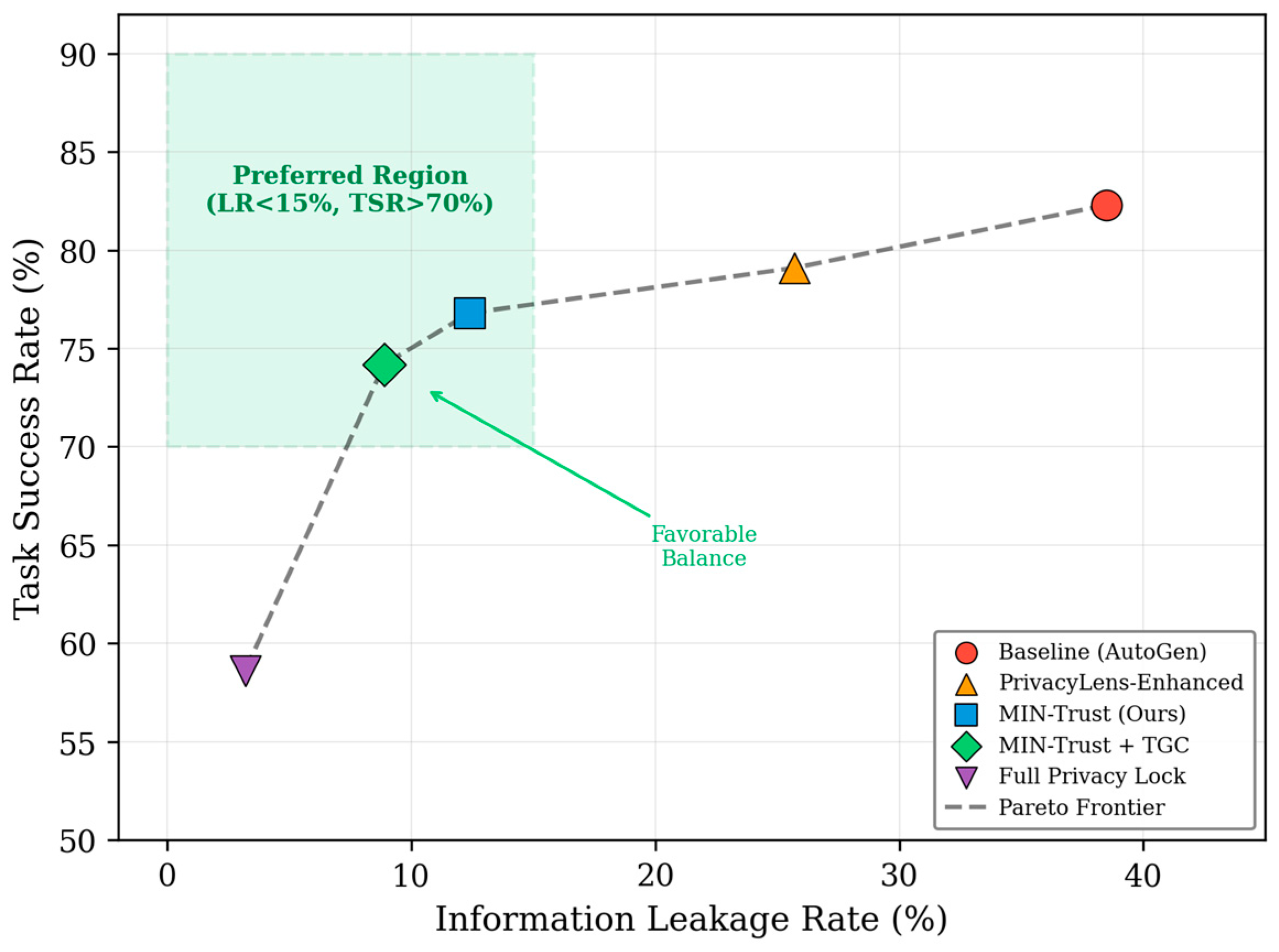

B. Privacy-Utility Trade-Off

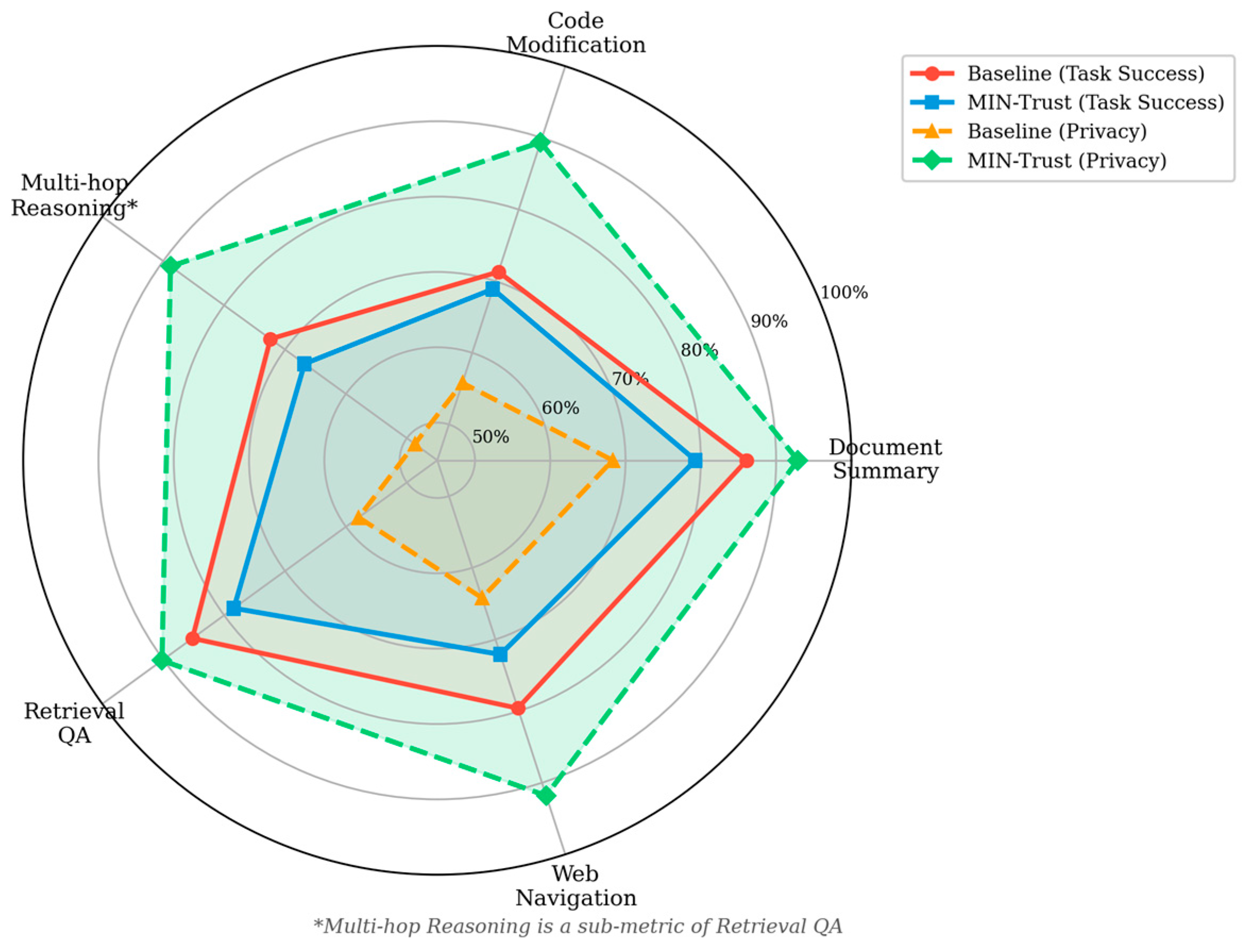

C. Task-Specific Analysis

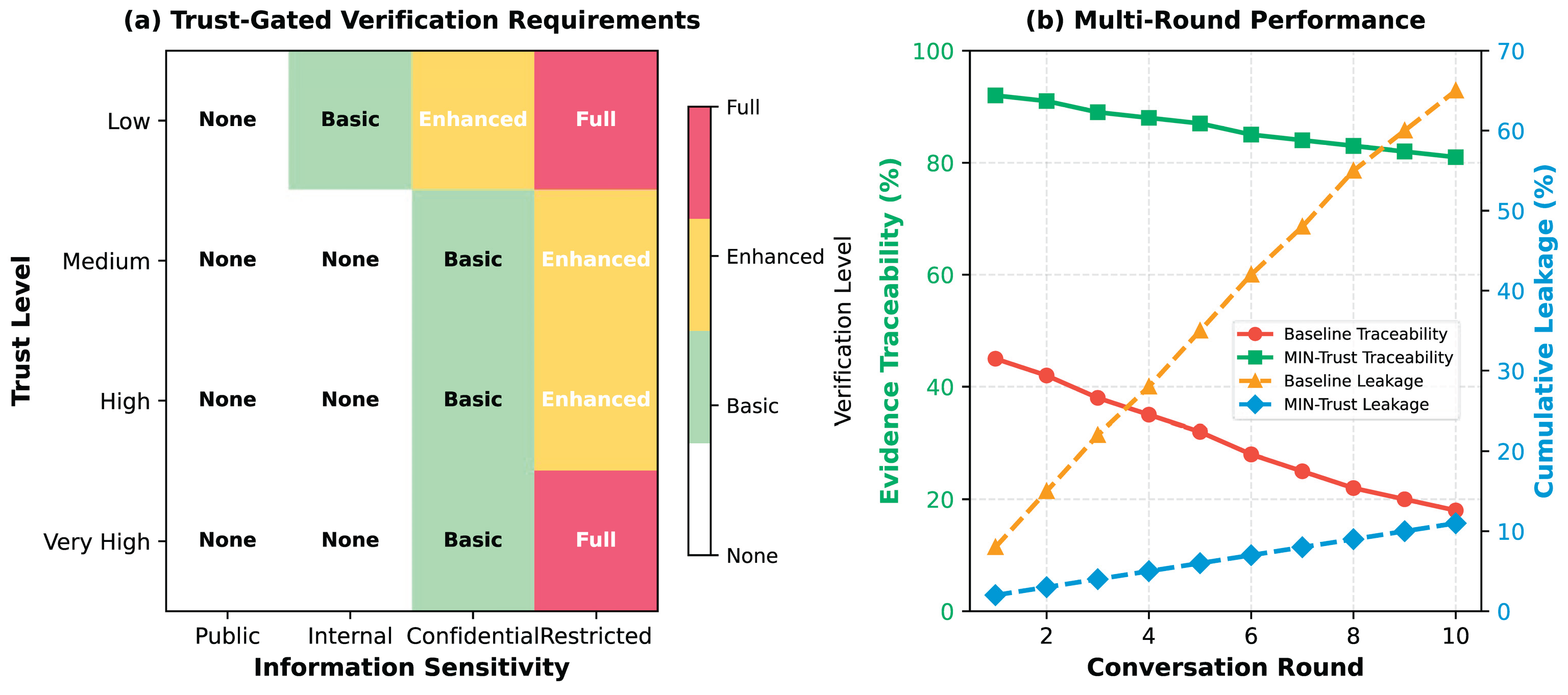

D. Trust-Gated Channel Analysis

E. Ablation Study

VI. Discussion

A. Key Insights

B. Limitations

C. Broader Implications

VII. Conclusion


| Method | LR↓ | TSR↑ | ET↑ | Tokens |
|---|---|---|---|---|
| Baseline | 38.5% | 82.3% | 41.2% | 1.00× |
| PrivacyLens-Enh. | 25.7% | 79.1% | 52.8% | 1.08× |
| MIN-Trust (Ours) | 12.4% | 76.8% | 84.2% | 1.23× |
| MIN-Trust + TGC | 8.9% | 74.2% | 87.6% | 1.31× |
| Full Privacy Lock | 3.2% | 58.6% | 91.3% | 0.72× |
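
The trade-off in the table above can be made concrete with a little arithmetic. The following is a minimal sketch, not the authors' evaluation code: it assumes LR is a leakage rate and TSR a task success rate (the abbreviations are not expanded in this excerpt), and the helper name `tradeoff` is hypothetical. It reports the relative LR reduction against the Baseline row and the absolute TSR drop in percentage points.

```python
# Values copied from the overall-performance table (all in percent).
# Assumption: LR = leakage rate (lower is better), TSR = task success rate.
results = {
    "Baseline": {"LR": 38.5, "TSR": 82.3},
    "PrivacyLens-Enh.": {"LR": 25.7, "TSR": 79.1},
    "MIN-Trust (Ours)": {"LR": 12.4, "TSR": 76.8},
    "MIN-Trust + TGC": {"LR": 8.9, "TSR": 74.2},
    "Full Privacy Lock": {"LR": 3.2, "TSR": 58.6},
}


def tradeoff(method, reference="Baseline"):
    """Return (relative LR reduction in %, TSR drop in percentage points)."""
    ref, row = results[reference], results[method]
    lr_rel_reduction = (ref["LR"] - row["LR"]) / ref["LR"] * 100
    tsr_drop = ref["TSR"] - row["TSR"]
    return round(lr_rel_reduction, 1), round(tsr_drop, 1)


for name in results:
    if name != "Baseline":
        print(name, tradeoff(name))
```

Under these assumptions, MIN-Trust + TGC removes roughly three quarters of the Baseline LR for a TSR cost of about eight points, whereas Full Privacy Lock gains a further LR reduction only at a much larger TSR cost.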

| Task | TSR↑ (Base) | TSR↑ (Ours) | LR↓ (Base) | LR↓ (Ours) |
|---|---|---|---|---|
| Retrieval-based QA | 85.2% | 78.4% | 42.1% | 9.8% |
| Web Navigation | 79.6% | 72.1% | 35.8% | 8.2% |
| Document Summary | 86.1% | 79.3% | 31.7% | 7.1% |
| Code Modification | 71.3% | 68.9% | 44.2% | 10.6% |
| Multi-hop (sub-metric)† | 72.4% | 66.8% | 51.3% | 11.2% |

| Configuration | LR↓ | TSR↑ | ET↑ |
|---|---|---|---|
| Full MIN-Trust | 8.9% | 74.2% | 87.6% |
| w/o TGC | 12.4% | 76.8% | 84.2% |
| w/o Summarization | 10.1% | 71.5% | 85.3% |
| w/o Pointer References | 11.8% | 73.9% | 71.4% |
| Classification Only | 18.2% | 78.1% | 62.7% |
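
One way to read the ablation table above is as per-configuration differences from the Full MIN-Trust row. The sketch below is illustrative only: the helper name `deltas` is hypothetical, and the code simply subtracts the full-system values from each ablated configuration, in percentage points.

```python
# Values copied from the ablation table (all in percent).
full = {"LR": 8.9, "TSR": 74.2, "ET": 87.6}
ablations = {
    "w/o TGC": {"LR": 12.4, "TSR": 76.8, "ET": 84.2},
    "w/o Summarization": {"LR": 10.1, "TSR": 71.5, "ET": 85.3},
    "w/o Pointer References": {"LR": 11.8, "TSR": 73.9, "ET": 71.4},
    "Classification Only": {"LR": 18.2, "TSR": 78.1, "ET": 62.7},
}


def deltas(name):
    """Difference (ablated minus full system) per metric, in percentage points."""
    return {m: round(ablations[name][m] - full[m], 1) for m in full}


for name in ablations:
    print(name, deltas(name))
```

Read this way, removing pointer references changes LR and TSR only modestly but lowers ET by about sixteen points, the largest ET change among the single-component removals listed.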

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).