Submitted: 03 March 2026

Posted: 04 March 2026

Abstract

Keywords:

1. Introduction

2. Research Methodology

2.1. Scope of the Review

- Q1. What is the current role and significance of AI in modern healthcare systems?

- Q2. How are different AI techniques applied across healthcare applications?

- Q3. What are the major challenges and limitations of AI-based healthcare systems?

- Q4. What strategies and solutions are emerging to overcome these challenges?

- Q5. What are the currently operational AI-based products and their applications in healthcare?

- Q6. How does this review differ from existing literature?

- Q7. What future directions can accelerate AI-driven healthcare innovations?

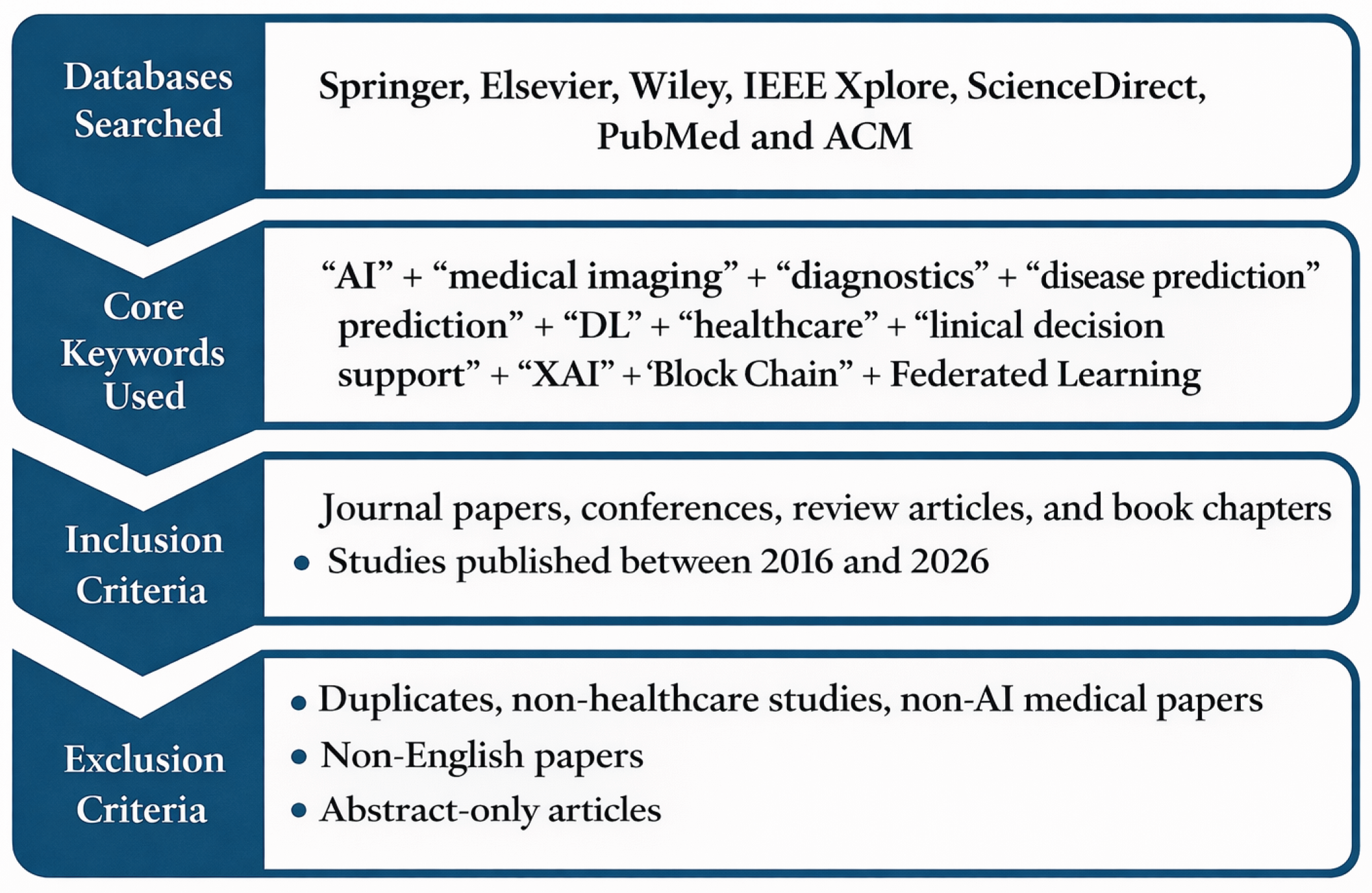

2.2. Article Selection Criteria

- “AI” + “medical imaging” + “diagnostics”

- “AI” + “disease prediction” + “deep learning”

- “AI” + “healthcare applications” + “clinical decision support”

- “AI” + “challenges” + “solutions”

- “AI” + “electronic health records” + “NLP”

- “XAI” + “blockchain” + “secure healthcare systems”

- “XAI” + “federated learning” + “privacy-preserving healthcare”

- “AI” + “emerging trends” + “future directions”

- Duplicates found across multiple databases

- Studies unrelated to healthcare or focusing purely on medical science without AI integration

- Non-English publications

- Papers with only abstracts available

- Articles discussing technologies outside the scope of AI-based healthcare systems

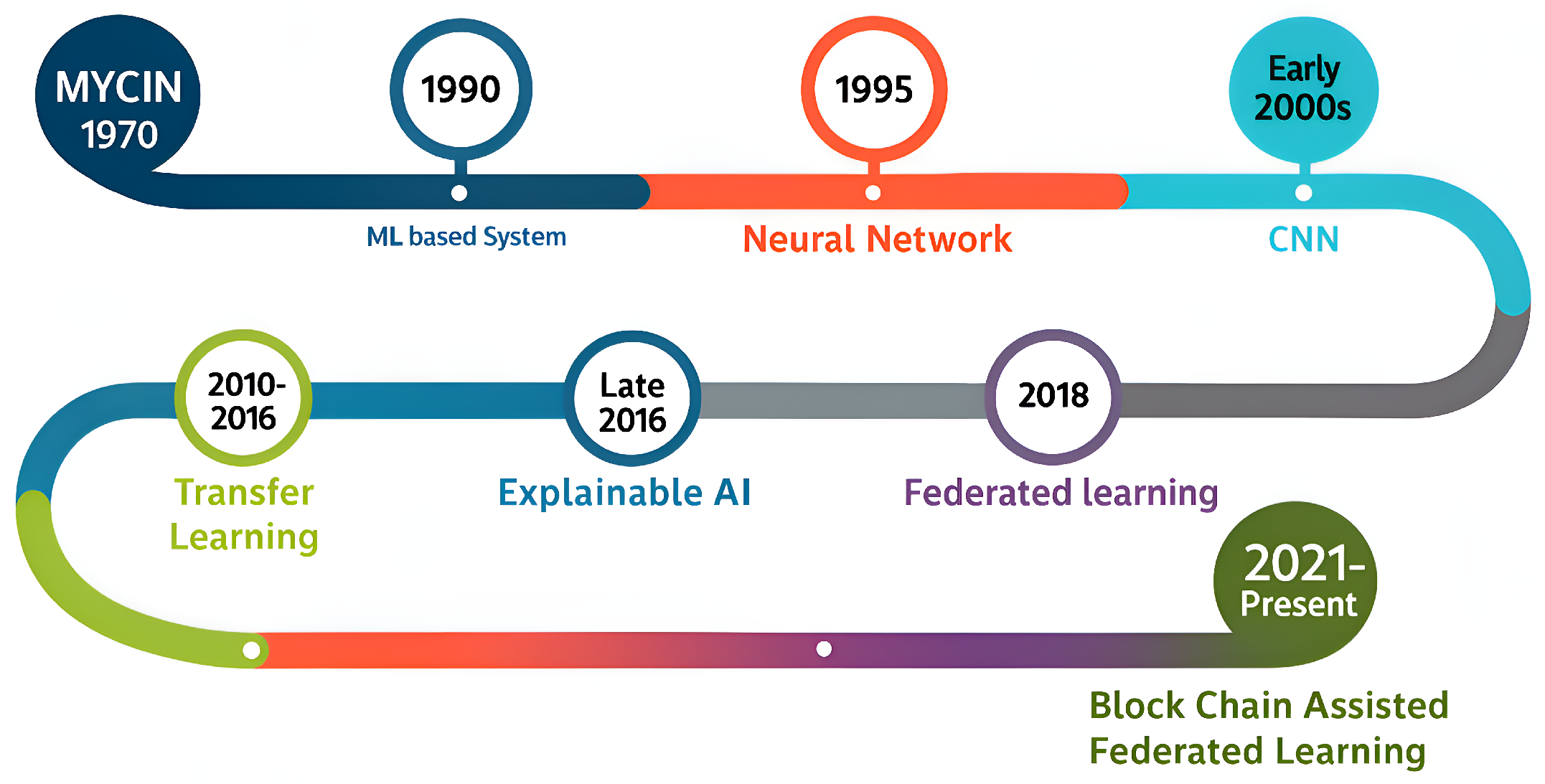

3. History of AI in Healthcare

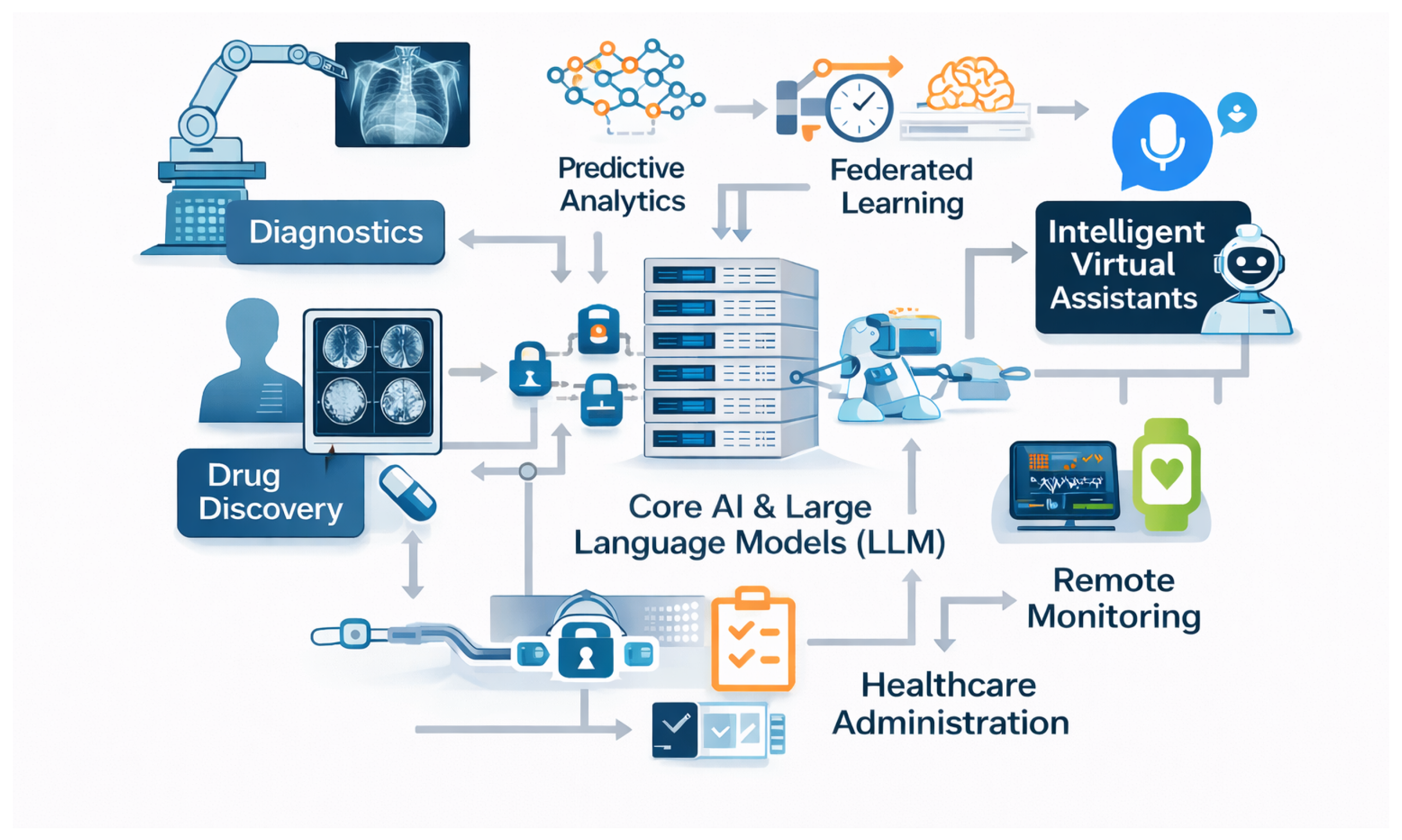

4. Role of AI in Modern Healthcare

4.1. Medical Imaging and Diagnostics

4.2. Predictive Analytics and Risk Stratification

4.3. Drug Discovery and Development

4.4. Virtual Health Assistants and Chatbots

4.5. Remote Monitoring and Wearable Devices

4.6. AI in Hospital Operations and Workflow Optimization

5. Key Challenges

5.1. Data Related Challenges

5.2. Ethical and Legal Challenges

5.3. Technical Challenges

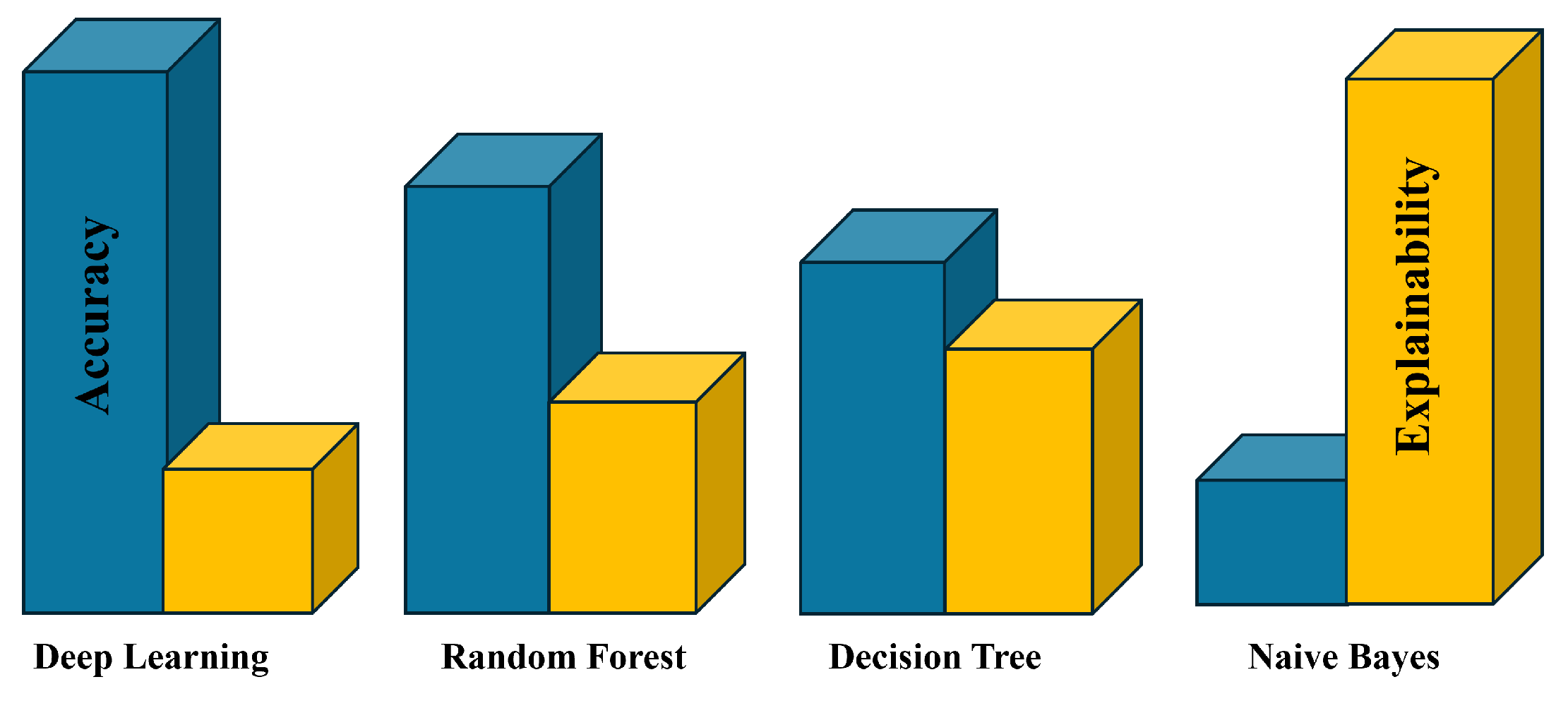

5.3.1. Model Interpretability

5.3.2. Model Bias

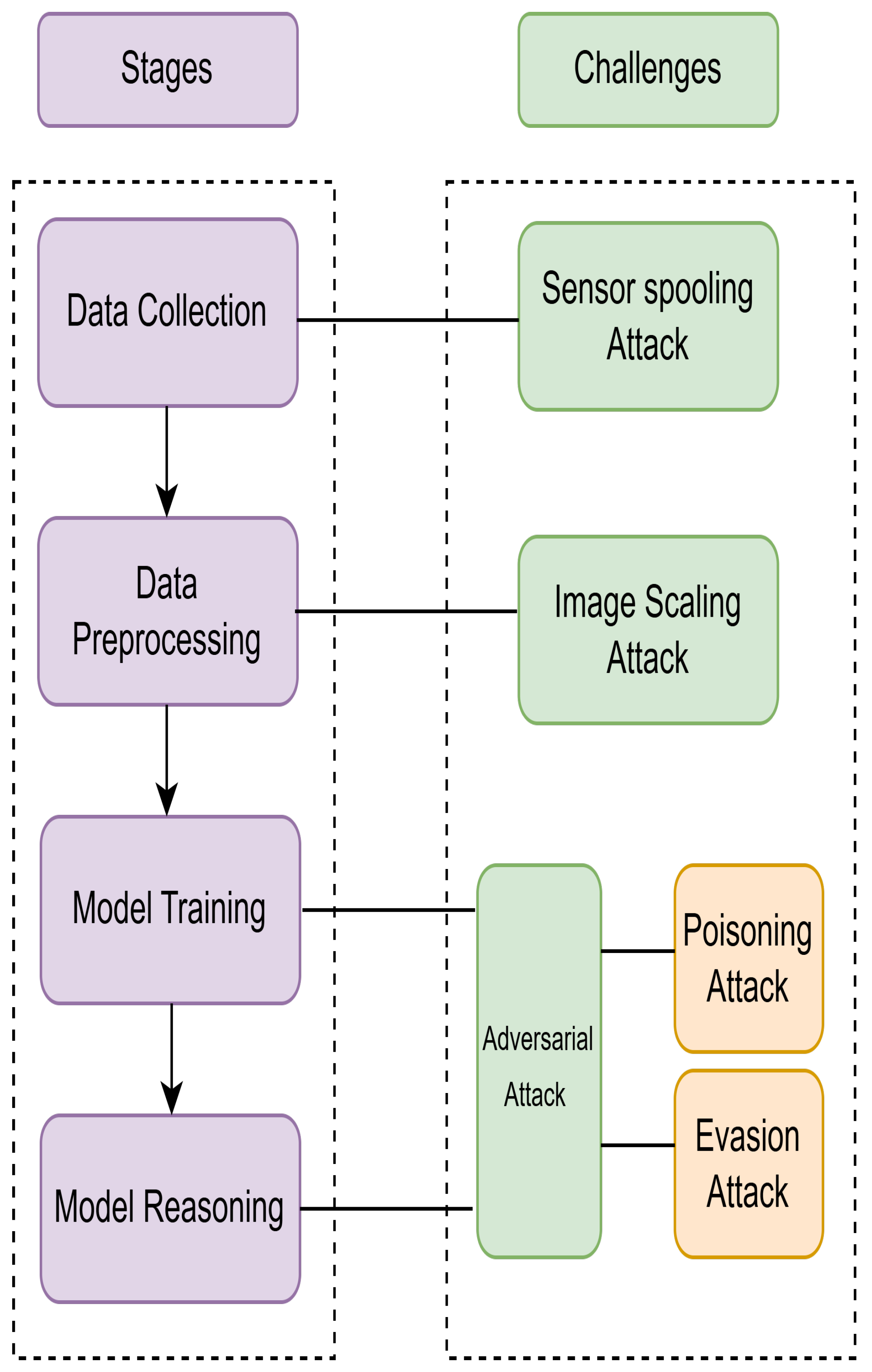

5.3.3. Security Challenges of AI models

5.3.4. Efficient and Effective AI System

5.3.5. Real-Time Processing and Scalability

6. Solution and Emerging Strategies

6.1. Solution for Data Related Challenges

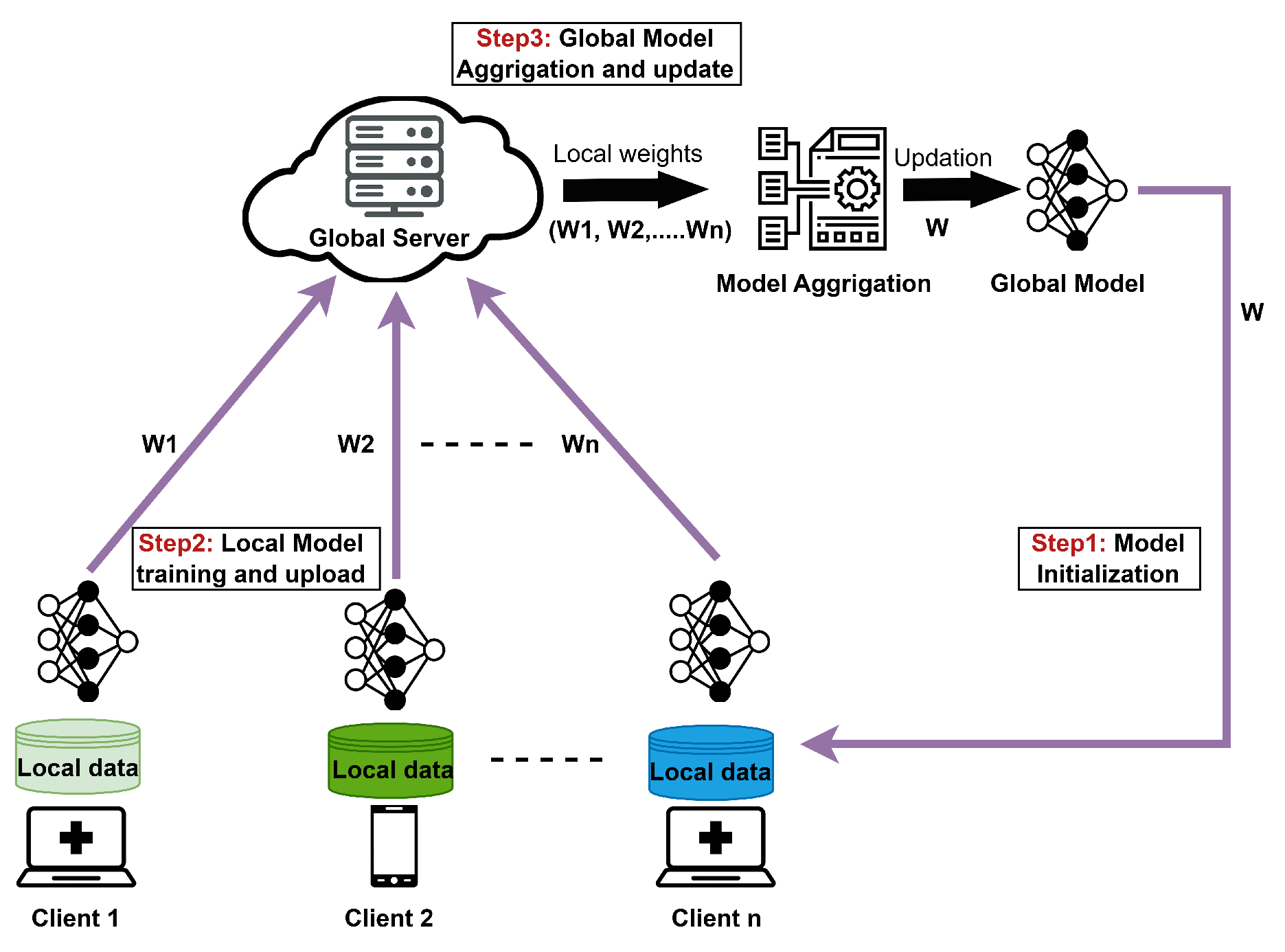

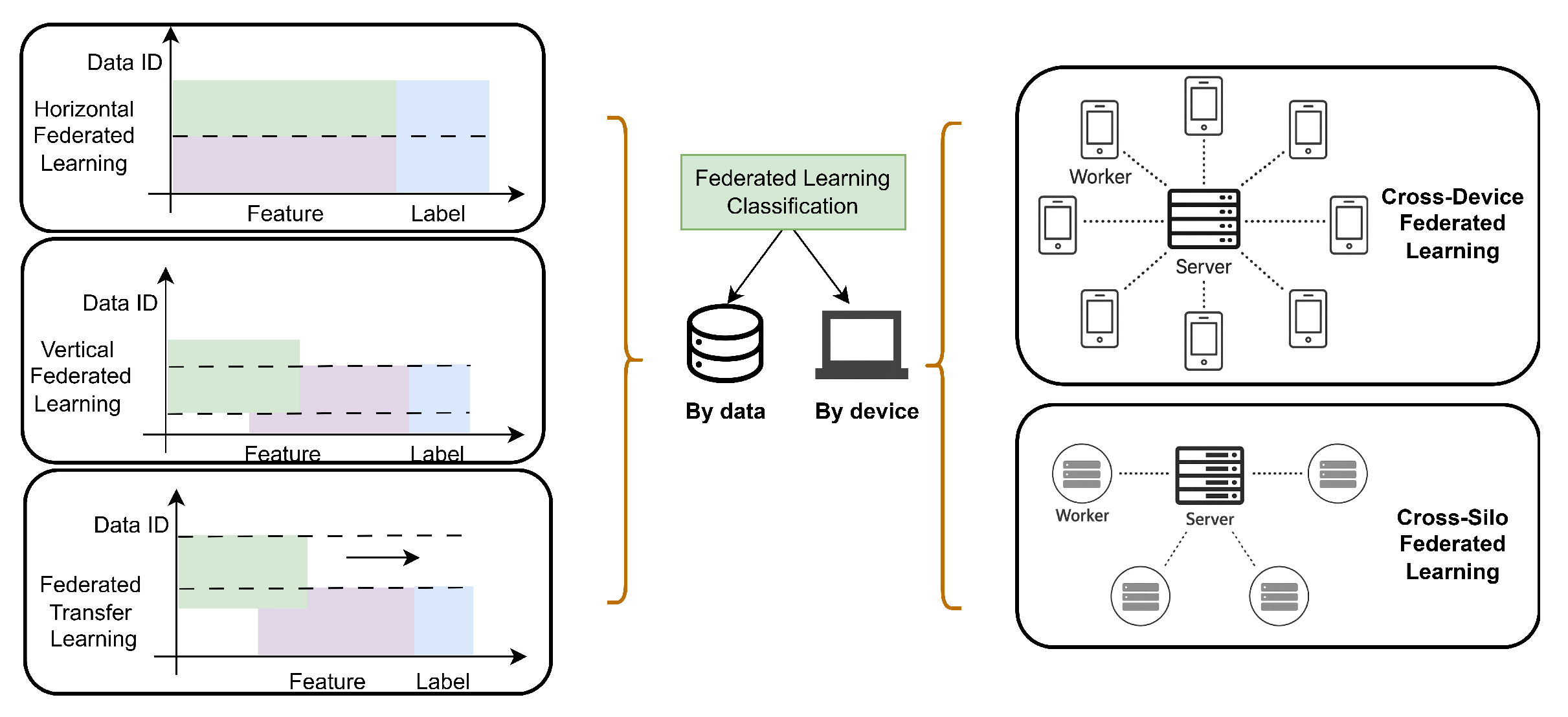

6.1.1. FL Based Solution for Data Privacy

6.1.2. Standardization Methods for Data Quality Improvement

6.1.3. Blockchain-Based Solution for Data Security

- Blockchain-Based Solutions: The authors of [115] addressed the security risks of centralized storage and unauthorized access to sensitive health data by proposing a decentralized blockchain-based authentication framework for patient verification across interconnected hospital networks. The system enhances identity integrity and reduces reliance on centralized authorities. However, the approach lacks inclusiveness and may exclude individuals without adequate digital access or technical literacy.

  The authors of [116] created a system that gives patients full control over their medical records, which are often scattered across different hospitals and hard to access or share. The framework uses blockchain-based storage to ensure the integrity, traceability, and secure access of medical records while enabling controlled data sharing across institutions. However, its reliance on off-chain cloud storage may expose the system to external risks and limit end-to-end data security.

  The authors of [117] designed a decentralized medical data sharing framework to address unauthorized exposure and inefficient synchronization of medical records. Their technique partitions a full medical record into fine-grained data views, each shared selectively with different stakeholders such as patients, doctors, and researchers. Blockchain-based smart contracts enforce attribute-level access control, so that only authorized users can update or access specific fields within the shared data. However, the system does not effectively handle concurrent updates across overlapping data views and relies instead on basic serialization mechanisms.

  The authors of [118] developed a permissioned blockchain platform to ensure secure, consistent, and patient-controlled management of Electronic Medical Records (EMRs) within hospitals. They addressed data fragmentation, lack of transparency, and weak access control by integrating smart contracts for role-based permissions and using immutable transaction logs. However, the work does not discuss interoperability with existing hospital EMR systems, which limits the feasibility of real-world integration.

  The authors of [119] developed a blockchain-based system to securely manage and verify COVID-19 digital medical passports and immunity certificates. The framework addresses challenges related to delayed, inaccurate, and unreliable health reporting. However, the system relies heavily on user-controlled private keys and advanced digital infrastructure, which may limit accessibility and introduce risks associated with key loss, particularly in resource-constrained settings.

  The authors of [120] developed an Ethereum-based blockchain solution for the resale, leasing, and auctioning of pre-owned medical equipment. The system uses smart contracts to automate equipment registration, ownership transfer, certification validation, and stakeholder reputation tracking, ensuring transparency and traceability. However, the solution requires universal blockchain adoption among participants, which may limit scalability and practical deployment in resource-constrained healthcare environments.

  The authors of [121] employed Ethereum smart contracts, timer oracles, and IPFS to manage the preventive maintenance of diagnostic medical imaging equipment, including MRI and CT machines. However, the framework requires compliance with stringent healthcare regulations governing data privacy and security.
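Two of the mechanisms described above, field-level (attribute- or role-based) access control and immutable transaction logs, can be illustrated with a minimal Python sketch. It is not the implementation of any of the cited systems: the policy table, the role and field names, and the `AuditLog` class are hypothetical, and a plain in-memory hash chain stands in for a real blockchain and its smart contracts.

```python
import hashlib
import json

# Hypothetical policy: which record fields each role may read or write.
# Role and field names are illustrative assumptions, not taken from the cited works.
POLICY = {
    "patient": {"read": {"demographics", "diagnosis", "medication"}, "write": set()},
    "doctor": {"read": {"demographics", "diagnosis", "medication"},
               "write": {"diagnosis", "medication"}},
    "researcher": {"read": {"diagnosis"}, "write": set()},
}


def is_allowed(role, action, field):
    """Return True if `role` may perform `action` ('read' or 'write') on `field`."""
    return field in POLICY.get(role, {}).get(action, set())


class AuditLog:
    """Append-only log in which every entry stores the hash of the previous entry."""

    GENESIS = "0" * 64  # placeholder hash that precedes the first entry

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body):
        # Serialize with sorted keys so the hash does not depend on key order.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, role, action, field):
        """Record an access attempt together with the access-control decision."""
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {
            "role": role,
            "action": action,
            "field": field,
            "allowed": is_allowed(role, action, field),
            "prev_hash": prev_hash,
        }
        entry = dict(body, hash=self._digest(body))
        self.entries.append(entry)
        return entry

    def verify(self):
        """Recompute every hash and link; return False if any entry was altered."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(body):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("doctor", "write", "diagnosis")      # permitted by the policy
log.append("researcher", "read", "medication")  # denied, but still logged
```

Because each entry embeds the hash of its predecessor, editing any earlier entry causes `verify()` to return `False`, which is the tamper-evidence property that an immutable transaction log is meant to provide.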

-

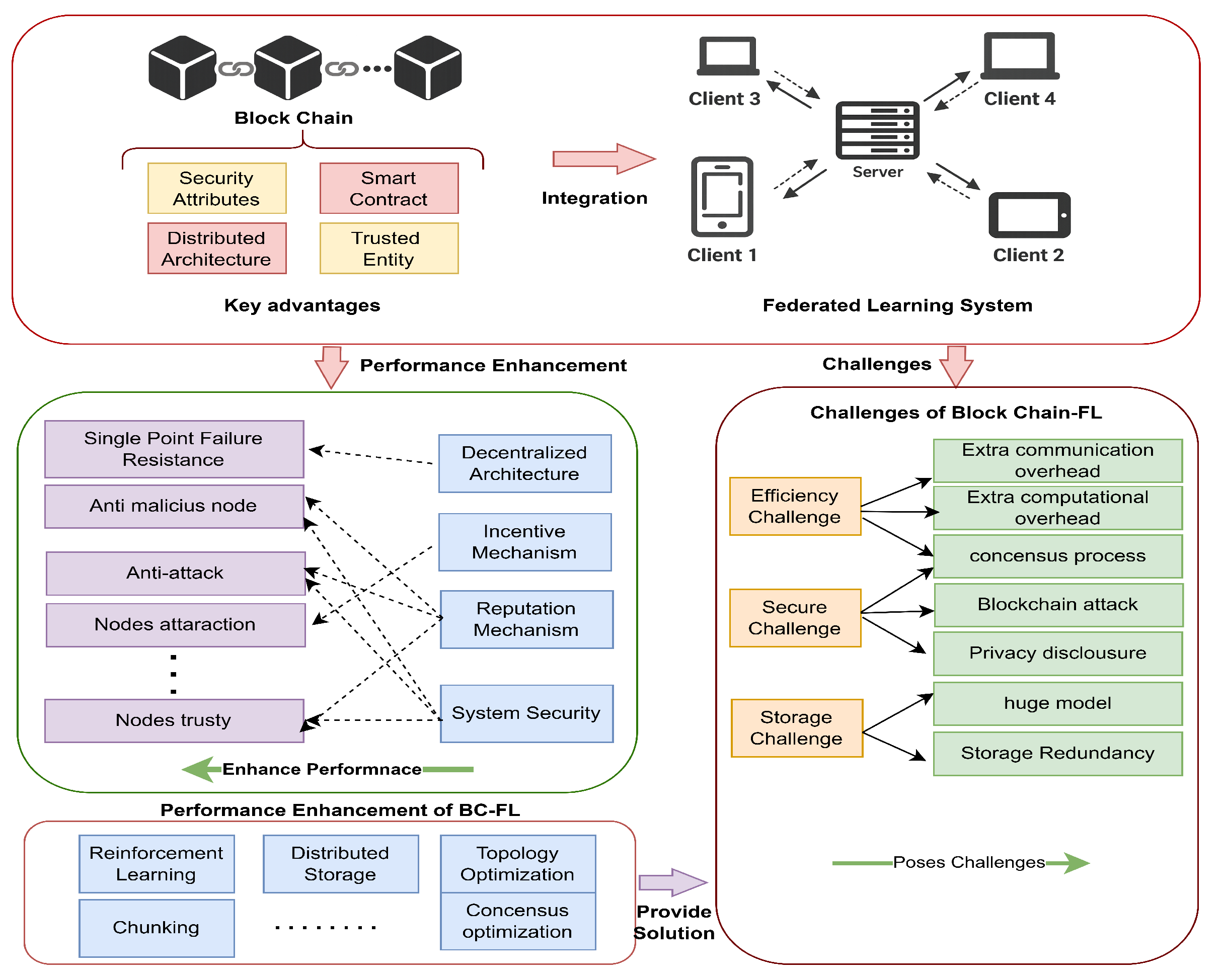

Blockchain Assisted FL SolutionsBlockchain-assisted FL solutions integrate blockchain with FL to enable secure, verifiable, and decentralized coordination of distributed model training across multiple healthcare institutions. This integration enhances privacy, data integrity, and trust during collaborative learning. However, such frameworks often depend on user-controlled private keys and advanced digital infrastructure, which may limit accessibility and scalability in resource-constrained environments.[122] The authors demonstrated a blockchain-enabled FL framework to securely train AI models for diagnosing 15 lung diseases from chest X-ray images without sharing patient data. Although the framework avoids storing raw medical data on-chain, its reliance on a permissionless and transparent blockchain architecture introduces potential privacy exposure risks.[123] The authors proposed FDBC-SKS, a blockchain-enabled federated learning framework that integrates knowledge distillation to facilitate secure and efficient knowledge sharing among medical institutions. The framework reduces communication overhead and enhances fairness in model update aggregation and verification. However, the knowledge distillation process may introduce privacy leakage risks, particularly if shared logits are not adequately protected.[124] The authors developed a privacy-preserving framework that integrates FL with a permissioned blockchain to enable collaborative brain tumor detection from MRI images across multiple hospitals. The proposed system addresses data-sharing restrictions and security vulnerabilities associated with centralized learning architectures. However, the system requires high computational resources and takes longer to train.Blockchain-driven approaches have demonstrated potential in enhancing data security, transparency, and controlled access within healthcare ecosystems. 
When integrated with FL, blockchain facilitates trusted and decentralized coordination of collaborative model training across medical institutions without exposing sensitive patient data. The blockchain-assisted FL workflow is illustrated in Figure 9.
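The coordination pattern described above can be sketched in a few lines of Python: clients submit model updates together with a hash, only updates whose hash matches are averaged, and each round is recorded in a hash-linked ledger. This is a simplified illustration under stated assumptions, not the design of [122], [123], or [124]. The function names and the submission format are hypothetical, sample-weighted averaging (FedAvg) is assumed as the aggregation rule, and an in-memory list stands in for a permissioned blockchain.

```python
import hashlib
import json


def update_hash(weights):
    """SHA-256 fingerprint of a weight vector (a list of floats)."""
    return hashlib.sha256(json.dumps(weights).encode()).hexdigest()


def fedavg(updates):
    """Sample-weighted average of client weights; `updates` is [(weights, n_samples), ...]."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(w[i] * n for w, n in updates) / total for i in range(dim)]


def run_round(ledger, submissions):
    """Aggregate one FL round and append a block describing it to `ledger`.

    Each submission is a dict with the hypothetical keys 'client', 'weights',
    'n_samples', and 'claimed_hash'. Updates whose recomputed hash differs from
    the claimed hash (for example, altered in transit) are rejected.
    """
    accepted = [s for s in submissions if update_hash(s["weights"]) == s["claimed_hash"]]
    if not accepted:
        raise ValueError("no verifiable updates in this round")
    global_weights = fedavg([(s["weights"], s["n_samples"]) for s in accepted])

    # Only hashes go on the ledger; raw data and model weights stay off-chain.
    block = {
        "round": len(ledger),
        "prev_hash": ledger[-1]["block_hash"] if ledger else "0" * 64,
        "update_hashes": [s["claimed_hash"] for s in accepted],
        "global_hash": update_hash(global_weights),
    }
    block["block_hash"] = hashlib.sha256(
        json.dumps(block, sort_keys=True).encode()
    ).hexdigest()
    ledger.append(block)
    return global_weights


ledger = []
submissions = [
    {"client": "hospital_a", "weights": [1.0, 2.0], "n_samples": 1,
     "claimed_hash": update_hash([1.0, 2.0])},
    {"client": "hospital_b", "weights": [3.0, 4.0], "n_samples": 3,
     "claimed_hash": update_hash([3.0, 4.0])},
    # This update no longer matches the hash that was originally announced.
    {"client": "hospital_c", "weights": [100.0, 100.0], "n_samples": 1,
     "claimed_hash": update_hash([0.0, 0.0])},
]
global_weights = run_round(ledger, submissions)
```

In this sketch the ledger makes each round auditable after the fact, since any participant can recompute the hashes, but it does not by itself protect the content of the updates; that concern is separate from verifiability.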

6.2. Ethical and Legal

6.3. Solution for Technical Challenges

6.3.1. Model Interpretability

6.3.2. Model Bias

6.3.3. Security Challenge

6.3.4. Scalability

6.4. Solution for Efficient and Effective AI System

7. AI-Based Existing Technologies and Their Impact on Public Health

7.1. Disease Surveillance and Outbreak Prediction

7.2. Predictive Analytics

7.3. Telemedicine and Virtual Health Assistants

7.4. Diagnostic Support

8. Comparison with Existing Works

| Author | Prospect 1 | Prospect 2 | Prospect 3 | Prospect 4 | Prospect 5 | Prospect 6 | Prospect 7 | Prospect 8 | Prospect 9 |
|---|---|---|---|---|---|---|---|---|---|
| Rong et al. [187] | ✓ | ✗ | ✓ | ✓ | ✗ | ✗ | ✗ | ✓ | ✗ |
| Shaheen [188] | ✓ | ✗ | ✓ | ✗ | ✓ | ✗ | ✗ | ✗ | ✗ |
| Aung et al. [189] | ✓ | ✗ | ✓ | ✓ | ✓ | ✗ | ✓ | ✗ | ✗ |
| Sadeghi et al. [190] | ✓ | ✗ | ✓ | ✓ | ✓ | ✓ | ✓ | ✗ | ✓ |
| Wubineh et al. [191] | ✓ | ✗ | ✓ | ✗ | ✓ | ✗ | ✓ | ✗ | ✓ |
| Aminizadeh et al. [192] | ✓ | ✗ | ✓ | ✓ | ✓ | ✓ | ✓ | ✗ | ✓ |
| Kasula [193] | ✓ | ✗ | ✓ | ✓ | ✓ | ✗ | ✓ | ✗ | ✓ |
| Proposed survey | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |

9. Future Implications

- Cloud- and Edge-Based AI Deployment: Future healthcare systems are anticipated to adopt integrated cloud-edge computing frameworks to support real-time diagnostics, low-latency data processing, and secure decision-making. Such architectures will optimize computational efficiency while maintaining data privacy at distributed clinical nodes.

- Expansion of AI-Enabled Wearable Technologies: The proliferation of AI-integrated wearable devices will enable continuous physiological monitoring, early anomaly detection, and proactive healthcare interventions. These systems are expected to play a central role in preventive medicine and personalized health analytics.

- AI-Driven Remote Patient Monitoring (RPM): Remote monitoring platforms powered by AI will become fundamental to telemedicine ecosystems. Future frameworks are expected to integrate wearable sensors, fog/cloud computing infrastructures, and blockchain-enabled audit mechanisms to ensure secure, scalable, and reliable healthcare delivery.

- Personalized FL for Non-IID Medical Data: A significant research direction involves the development of personalized FL models capable of handling non-IID medical datasets. Client-specific fine-tuning and adaptive aggregation strategies will improve generalization across heterogeneous hospitals, imaging modalities, and demographic populations while preserving patient privacy.

- Secure Gradient Transmission Mechanisms: Although federated learning eliminates raw data sharing, exchanged gradients may still expose sensitive patient information through inference attacks. Future research should therefore prioritize robust and computationally efficient gradient protection mechanisms. Promising directions include differential privacy techniques tailored for medical imaging, gradient pruning and sparsification to minimize leakage risk, homomorphic encryption optimized for low-latency clinical environments, and secure multi-party computation with reduced overhead. Strengthening gradient security will be essential for ensuring trustworthy and regulation-compliant deployment of federated healthcare systems.

- Lightweight Blockchain Architectures for Secure FL Coordination: Another promising avenue lies in designing lightweight permissioned blockchain architectures for FL that reduce computational overhead and communication latency while ensuring secure and verifiable model update exchange among multiple healthcare institutions.

- Robustness Against Adversarial and Poisoning Attacks: Robustness against adversarial and poisoning attacks remains underexplored in federated healthcare systems. Future research should simulate realistic attack scenarios, develop anomaly detection for malicious client updates, and implement trust-aware and Byzantine-resilient aggregation mechanisms. Security validation must become a standard requirement to ensure safe and reliable deployment of federated AI in clinical environments.

- Digital Twins and Immersive Healthcare Technologies: Emerging innovations, including digital twin technologies, augmented reality (AR), and virtual reality (VR), will enable highly personalized patient care, advanced medical simulations, and immersive diagnostic experiences.

- AI Scribes and Clinical Automation: To reduce the administrative burden on clinicians, AI-powered medical scribes are anticipated to become an integral part of healthcare systems, allowing practitioners to focus more on patient-centric care and reducing burnout.

- Personalized and Preventive Medicine: The future of healthcare is shifting toward precision medicine, where AI-based models will analyze multimodal data, including medical imaging, genomics, electronic health records, and sensor data, to enable patient-specific diagnosis, risk prediction, and treatment planning.
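Two of the directions listed above, gradient protection and Byzantine-resilient aggregation, can be made concrete with a small Python sketch. It assumes that client updates are plain lists of floats. The function names are hypothetical, and the noise level `sigma` is an illustrative parameter that is not calibrated to any formal differential privacy budget. Coordinate-wise median is used here only as one simple example of a robust aggregation rule.

```python
import math
import random
import statistics


def clip_update(update, max_norm):
    """Scale `update` down so that its L2 norm does not exceed `max_norm`."""
    norm = math.sqrt(sum(x * x for x in update))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [x * scale for x in update]


def add_gaussian_noise(update, sigma, rng):
    """Add zero-mean Gaussian noise with standard deviation `sigma` to each coordinate."""
    return [x + rng.gauss(0.0, sigma) for x in update]


def median_aggregate(updates):
    """Coordinate-wise median of client updates, which limits the pull of outliers."""
    return [statistics.median(column) for column in zip(*updates)]


rng = random.Random(0)  # fixed seed so that the sketch is reproducible

# Client side: bound the influence of one update, then perturb it before sending.
raw_update = [3.0, 4.0]
protected = add_gaussian_noise(clip_update(raw_update, max_norm=1.0), sigma=0.1, rng=rng)

# Server side: two plausible updates and one extreme (possibly poisoned) update.
client_updates = [[1.0, 1.0], [1.2, 0.9], [100.0, -100.0]]
robust = median_aggregate(client_updates)
```

With a plain mean, the third update would dominate both coordinates of the aggregate; with the coordinate-wise median, the result stays within the range of the two plausible updates. Clipping and noise address a different risk, namely how much a single transmitted update can reveal, so the two techniques are complementary rather than interchangeable.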

10. Conclusion

Data Availability Statement

Conflicts of Interest

References

- Malik, P.; Pathania, M.; Rathaur, V.K.; et al. Overview of artificial intelligence in medicine. Journal of family medicine and primary care 2019, 8, 2328–2331. [Google Scholar]

- Mintz, Y.; Brodie, R. Introduction to artificial intelligence in medicine. Minimally Invasive Therapy & Allied Technologies 2019, 28, 73–81. [Google Scholar] [CrossRef] [PubMed]

- Kaul, V.; Enslin, S.; Gross, S.A. History of artificial intelligence in medicine. Gastrointestinal endoscopy 2020, 92, 807–812. [Google Scholar] [CrossRef] [PubMed]

- Abdelwanis, M.; Simsekler, M.C.E.; Gabor, A.F.; Sleptchenko, A.; Omar, M. Artificial intelligence adoption challenges from healthcare providers’ perspectives: A comprehensive review and future directions. Safety Science 2026, 193, 107028. [Google Scholar] [CrossRef]

- Sarun, H.; Rotana, S.; Chhunla, C. The Role and Significance of Artificial Intelligence in Transforming Modern Society: Opportunities, Challenges, and Future Directions. Journal of Agriculture and Environment 2026, 3, 133–141. [Google Scholar]

- Çapuk, H.; Yiğit, M.F.; Uçar, M. Exploring the relationship between health professionals’ artificial intelligence literacy and their attitudes toward artificial intelligence. Informatics for Health and Social Care 2026, 1–12. [Google Scholar] [CrossRef]

- Haileamlak, A. The impact of COVID-19 on health and health systems. Ethiopian journal of health sciences 2021, 31, 1073. [Google Scholar]

- Khetrapal, S.; Bhatia, R. Impact of COVID-19 pandemic on health system & Sustainable Development Goal 3. Indian Journal of Medical Research 2020, 151, 395–399. [Google Scholar] [CrossRef]

- Xsolis. The Evolution of AI in Healthcare, 2023. Accessed: 12 06 2025.

- Buchanan, B.G. Research on expert systems. Technical report. 1981. [Google Scholar]

- Jones, L.; Golan, D.; Hanna, S.; Ramachandran, M. Artificial intelligence, machine learning and the evolution of healthcare: A bright future or cause for concern? Bone & joint research 2018, 7, 223–225. [Google Scholar]

- Hirani, R.; Noruzi, K.; Khuram, H.; Hussaini, A.S.; Aifuwa, E.I.; Ely, K.E.; Lewis, J.M.; Gabr, A.E.; Smiley, A.; Tiwari, R.K.; et al. Artificial intelligence and healthcare: a journey through history, present innovations, and future possibilities. Life 2024, 14, 557. [Google Scholar] [CrossRef]

- Miotto, R.; Wang, F.; Wang, S.; Jiang, X.; Dudley, J.T. Deep learning for healthcare: review, opportunities and challenges. Briefings in bioinformatics 2018, 19, 1236–1246. [Google Scholar] [CrossRef] [PubMed]

- Kim, H.E.; Cosa-Linan, A.; Santhanam, N.; Jannesari, M.; Maros, M.E.; Ganslandt, T. Transfer learning for medical image classification: a literature review. BMC medical imaging 2022, 22, 69. [Google Scholar] [CrossRef] [PubMed]

- Tjoa, E.; Guan, C. A survey on explainable artificial intelligence (xai): Toward medical xai. IEEE transactions on neural networks and learning systems 2020, 32, 4793–4813. [Google Scholar] [CrossRef] [PubMed]

- Repetto, S.; Maljkovic, I.; Lotto, M.; Cinà, A.E.; Vascon, S.; Roli, F. Evaluating the robustness of explainable AI in medical image recognition under natural and adversarial data corruption. Machine Learning 2026, 115, 4. [Google Scholar] [CrossRef]

- Sandhu, S.S.; Gorji, H.T.; Tavakolian, P.; Tavakolian, K.; Akhbardeh, A. Medical imaging applications of federated learning. Diagnostics 2023, 13, 3140. [Google Scholar] [CrossRef]

- Mir, B.A.; Abbas, S.R.; Lee, S.W. Federated Learning in Healthcare Ethics: A Systematic Review of Privacy-Preserving and Equitable Medical AI. Healthcare 2026, 14, 306. [Google Scholar] [CrossRef]

- Nasar, M.; Kausar, M.A.; Al Musalhi, N. Collaborative health intelligence: Federated learning as the foundation of future medical care. In Applied AI and Computational Intelligence in Diagnostics and Decision-Making; IGI Global Scientific Publishing, 2026; pp. 163–190. [Google Scholar]

- Ghosh, D.; Mehjabin, M.; Rayed, M.E.; Mridha, M.; Kabir, M.M. Advancements and challenges of federated learning in medical imaging: a systematic literature review. Artificial Intelligence Review 2026. [Google Scholar] [CrossRef]

- Shen, R.; Zhang, H.; Chai, B.; Wang, W.; Wang, G.; Yan, B.; Yu, J. BAFL-SVM: A blockchain-assisted federated learning-driven SVM framework for smart agriculture. High-Confidence Computing 2025, 5, 100243. [Google Scholar] [CrossRef]

- Reddy, G.R.; Kollu, V.N.; Elshafie, H.; Qamar, S.; et al. A novel blockchain-federated learning framework with quantum neural networks and wavelet transforms for secure IoT healthcare monitoring. Biomedical Signal Processing and Control 2026, 113, 108759. [Google Scholar] [CrossRef]

- Vhatkar, K.N.; Sontakke, P.V.; Pawar, A.S.; Shirode, U.R.; Salunke, D. Federated Deep Learning Model for Secured Data Transmission in the Healthcare Sector Using Adaptive and Attention-Based Residual Capsnet With Blockchain. Computational Intelligence 2026, 42, e70196. [Google Scholar] [CrossRef]

- Sukanya, M.; Jude Nirmal, V.; Richard Roy, G. FLPPBC: A Federated Learning-Driven Privacy-Preserving Model Using Private Permissioned Blockchain for Healthcare Systems. Indian Journal of Science and Technology 2026, 19, 59–70. [Google Scholar] [CrossRef]

- Abhisheka, B.; Biswas, S.K.; Purkayastha, B. Infusing Weighted Average Ensemble Diversity for Advanced Breast Cancer Detection. International Journal of Imaging Systems and Technology 2024, 34, e23146. [Google Scholar] [CrossRef]

- Abhisheka, B.; Biswas, S.K.; Purkayastha, B. HBNet: An integrated approach for resolving class imbalance and global local feature fusion for accurate breast cancer classification. Neural Computing and Applications 2024, 36, 8455–8472. [Google Scholar] [CrossRef]

- Wang, D.; Xu, J.; Zhang, Z.; Li, S.; Zhang, X.; Zhou, Y.; Zhang, X.; Lu, Y. Evaluation of rectal cancer circumferential resection margin using faster region-based convolutional neural network in high-resolution magnetic resonance images. Diseases of the Colon & Rectum 2020, 63, 143–151. [Google Scholar]

- Hendrix, W.; Hendrix, N.; Scholten, E.T.; van Ginneken, B.; Prokop, M.; Rutten, M.; Jacobs, C. Artificial intelligence for the detection of airway nodules in chest CT scans. European Radiology 2025, 1–11. [Google Scholar] [CrossRef]

- Zhang, P.; Gao, C.; Huang, Y.; Chen, X.; Pan, Z.; Wang, L.; Dong, D.; Li, S.; Qi, X. Artificial intelligence in liver imaging: methods and applications. Hepatology International 2024, 18, 422–434. [Google Scholar] [CrossRef]

- Vought, R.; Vought, V.; Shah, M.; Szirth, B.; Bhagat, N. EyeArt artificial intelligence analysis of diabetic retinopathy in retinal screening events. International Ophthalmology 2023, 43, 4851–4859. [Google Scholar] [CrossRef]

- Chen, J.; Wang, G.; Xia, K.; Wang, Z.; Liu, L.; Xu, X. Constructing an artificial intelligence-assisted system for the assessment of gastroesophageal valve function based on the hill classification (with video). BMC Medical Informatics and Decision Making 2025, 25, 144. [Google Scholar] [CrossRef]

- Liang, X.; Wang, X.; Chen, Y.; He, D.; Li, L.; Chen, G.; Li, J.; Li, J.; Liu, S.; Xu, Z. Predictive value of intraoperative contrast-enhanced ultrasound in functional recovery of non-traumatic cervical spinal cord injury. European Radiology 2024, 34, 2297–2309. [Google Scholar] [CrossRef]

- Xie, P.; Li, Y.; Deng, B.; Du, C.; Rui, S.; Deng, W.; Wang, M.; Boey, J.; Armstrong, D.G.; Ma, Y.; et al. An explainable machine learning model for predicting in-hospital amputation rate of patients with diabetic foot ulcer. International wound journal 2022, 19, 910–918. [Google Scholar] [CrossRef]

- Rajkomar, A.; Oren, E.; Chen, K.; Dai, A.M.; Hajaj, N.; Hardt, M.; Liu, P.J.; Liu, X.; Marcus, J.; Sun, M.; et al. Scalable and accurate deep learning with electronic health records. NPJ digital medicine 2018, 1, 18. [Google Scholar] [CrossRef]

- Cruz, J.A.; Wishart, D.S. Applications of machine learning in cancer prediction and prognosis. Cancer informatics 2006, 2, 117693510600200030. [Google Scholar] [CrossRef]

- Moor, M.; Rieck, B.; Horn, M.; Jutzeler, C.R.; Borgwardt, K. Early prediction of sepsis in the ICU using machine learning: a systematic review. Frontiers in medicine 2021, 8, 607952. [Google Scholar] [CrossRef] [PubMed]

- Tomašev, N.; Glorot, X.; Rae, J.W.; Zielinski, M.; Askham, H.; Saraiva, A.; Mottram, A.; Meyer, C.; Ravuri, S.; Protsyuk, I.; et al. A clinically applicable approach to continuous prediction of future acute kidney injury. Nature 2019, 572, 116–119. [Google Scholar] [CrossRef] [PubMed]

- Gaudelet, T.; Day, B.; Jamasb, A.R.; Soman, J.; Regep, C.; Liu, G.; Hayter, J.B.; Vickers, R.; Roberts, C.; Tang, J.; et al. Utilizing graph machine learning within drug discovery and development. Briefings in bioinformatics 2021, 22. [Google Scholar] [CrossRef] [PubMed]

- Tang, X.; Dai, H.; Knight, E.; Wu, F.; Li, Y.; Li, T.; Gerstein, M. A survey of generative AI for de novo drug design: new frontiers in molecule and protein generation. Briefings in Bioinformatics 2024, 25. [Google Scholar] [CrossRef]

- Alizadehsani, R.; Oyelere, S.S.; Hussain, S.; Jagatheesaperumal, S.K.; Calixto, R.R.; Rahouti, M.; Roshanzamir, M.; De Albuquerque, V.H.C. Explainable artificial intelligence for drug discovery and development: a comprehensive survey. IEEE Access 2024, 12, 35796–35812. [Google Scholar] [CrossRef]

- Mak, K.K.; Wong, Y.H.; Pichika, M.R. Artificial intelligence in drug discovery and development. Drug discovery and evaluation: safety and pharmacokinetic assays 2024, 1461–1498. [Google Scholar]

- Ocana, A.; Pandiella, A.; Privat, C.; Bravo, I.; Luengo-Oroz, M.; Amir, E.; Gyorffy, B. Integrating artificial intelligence in drug discovery and early drug development: a transformative approach. Biomarker Research 2025, 13, 45. [Google Scholar] [CrossRef]

- Kavitha, M.; Roobini, S.; Prasanth, A.; Sujaritha, M. Systematic view and impact of artificial intelligence in smart healthcare systems, principles, challenges and applications. Machine learning and artificial intelligence in healthcare systems 2023, 25–56. [Google Scholar]

- Kim, H.K. The effects of artificial intelligence chatbots on women’s health: A systematic review and meta-analysis. Healthcare 2024, 12, 534. [Google Scholar] [CrossRef]

- Singhal, K.; Tu, T.; Gottweis, J.; Sayres, R.; Wulczyn, E.; Amin, M.; Hou, L.; Clark, K.; Pfohl, S.R.; Cole-Lewis, H.; et al. Toward expert-level medical question answering with large language models. Nature Medicine 2025, 31, 943–950. [Google Scholar] [CrossRef]

- García-Méndez, S.; de Arriba-Pérez, F. Detecting and Explaining Postpartum Depression in Real-Time with Generative Artificial Intelligence. Applied Artificial Intelligence 2025, 39, 2515063. [Google Scholar] [CrossRef]

- Vahdati, M.; Laamarti, F.; El Saddik, A. Meta-review of wearable devices for healthcare in the Metaverse. ACM Transactions on Multimedia Computing, Communications and Applications 2025, 21, 1–36. [Google Scholar] [CrossRef]

- Ghaffour, J.e.; Ahajjam, S.; Taqafi, I.; Ezzati, A. Artificial Intelligence in Remote Healthcare. In Proceedings of the International Conference on intelligent systems and digital applications, 2025; Springer; pp. 230–240. [Google Scholar]

- Nigar, N. AI in remote patient monitoring. In Transformation in health care: Game-changers in digitalization, technology, AI and longevity; Springer, 2025; pp. 245–259. [Google Scholar]

- Nurmi, J.; Lohan, E.S. Systematic review on machine-learning algorithms used in wearable-based eHealth data analysis. IEEE Access 2021, 9, 112221–112235. [Google Scholar]

- Gautam, N.; Ghanta, S.N.; Mueller, J.; Mansour, M.; Chen, Z.; Puente, C.; Ha, Y.M.; Tarun, T.; Dhar, G.; Sivakumar, K.; et al. Artificial intelligence, wearables and remote monitoring for heart failure: current and future applications. Diagnostics 2022, 12, 2964. [Google Scholar] [CrossRef] [PubMed]

- Gagnon, M.P.; Ouellet, S.; Attisso, E.; Supper, W.; Amil, S.; Rhéaume, C.; Paquette, J.S.; Chabot, C.; Laferrière, M.C.; Sasseville, M. Wearable devices for supporting chronic disease self-management: scoping review. Interactive journal of medical research 2024, 13, e55925. [Google Scholar] [CrossRef]

- Suryawanshi, V.; Bhoyar, V.; et al. The role of AI in enhancing hospital operational efficiency and patient care. Multidisciplinary Reviews 2025, 8, 2025153–2025153. [Google Scholar] [CrossRef]

- Hong, W.S.; Haimovich, A.D.; Taylor, R.A. Predicting hospital admission at emergency department triage using machine learning. PloS one 2018, 13, e0201016. [Google Scholar] [CrossRef]

- Wu, Q.; Han, J.; Yan, Y.; Kuo, Y.H.; Shen, Z.J.M. Reinforcement learning for healthcare operations management: methodological framework, recent developments, and future research directions. Health Care Management Science 2025, 1–36. [Google Scholar] [CrossRef]

- Saria, S.; Butte, A.; Sheikh, A. Better medicine through machine learning: what’s real, and what’s artificial? 2018. [Google Scholar]

- Letourneau-Guillon, L.; Camirand, D.; Guilbert, F.; Forghani, R. Artificial intelligence applications for workflow, process optimization and predictive analytics. Neuroimaging Clinics of North America 2020, 30, e1–e15. [Google Scholar] [CrossRef]

- Suresh, N.; Selvakumar, A.; Sridhar, G.; et al. Operational efficiency and cost reduction: the role of AI in healthcare administration. In Revolutionizing the Healthcare Sector with AI; IGI Global, 2024; pp. 262–272. [Google Scholar]

- Virmani, N.; Singh, R.K.; Agarwal, V.; Aktas, E. Artificial intelligence applications for responsive healthcare supply chains: A decision-making framework. IEEE Transactions on Engineering Management 2024, 71, 8591–8605. [Google Scholar] [CrossRef]

- Shokri, R.; Stronati, M.; Song, C.; Shmatikov, V. Membership inference attacks against machine learning models. In Proceedings of the 2017 IEEE symposium on security and privacy (SP), 2017; IEEE; pp. 3–18. [Google Scholar]

- Kaushik, A.; Barcellona, C.; Mandyam, N.K.; Tan, S.Y.; Tromp, J. Challenges and opportunities for data sharing related to artificial intelligence tools in health Care in low-and Middle-Income Countries: systematic review and case study from Thailand. Journal of Medical Internet Research 2025, 27, e58338. [Google Scholar] [CrossRef]

- Kaissis, G.A.; Makowski, M.R.; Rückert, D.; Braren, R.F. Secure, privacy-preserving and federated machine learning in medical imaging. Nature Machine Intelligence 2020, 2, 305–311. [Google Scholar] [CrossRef]

- O’Herrin, J.K.; Fost, N.; Kudsk, K.A. Health Insurance Portability Accountability Act (HIPAA) regulations: effect on medical record research. Annals of surgery 2004, 239, 772–778. [Google Scholar] [CrossRef] [PubMed]

- Botsis, T.; Hartvigsen, G.; Chen, F.; Weng, C. Secondary use of EHR: data quality issues and informatics opportunities. Summit on translational bioinformatics 2010, 2010, 1. [Google Scholar]

- Zhu, C.; Xin, J.; Trinh, T.K. Data quality challenges and governance frameworks for AI implementation in supply chain management. Pinnacle Academic Press Proceedings Series 2025, 2, 28–43. [Google Scholar]

- Myakala, P.K. Scaling AI with Limited Labeled Data: A Self-Supervised Learning Approach. ICCK Transactions on Emerging Topics in Artificial Intelligence 2025, 2, 26–35. [Google Scholar] [CrossRef]

- Jeon, Y.; Hwang, C.; Chen, X. Empowering Medical Data Labeling for Non-Experts with DANNY: Enhancing Accuracy and Mitigating Over-Reliance on AI. In Proceedings of the 30th International Conference on Intelligent User Interfaces, 2025; pp. 624–640. [Google Scholar]

- Chinta, S.V.; Wang, Z.; Palikhe, A.; Zhang, X.; Kashif, A.; Smith, M.A.; Liu, J.; Zhang, W. AI-Driven Healthcare: A Review on Ensuring Fairness and Mitigating Bias. [CrossRef]

- Seyyed-Kalantari, L.; Zhang, H.; McDermott, M.B.; Chen, I.Y.; Ghassemi, M. Underdiagnosis bias of artificial intelligence algorithms applied to chest radiographs in under-served patient populations. Nature medicine 2021, 27, 2176–2182. [Google Scholar] [CrossRef]

- Van der Velden, B.H.; Kuijf, H.J.; Gilhuijs, K.G.; Viergever, M.A. Explainable artificial intelligence (XAI) in deep learning-based medical image analysis. Medical Image Analysis 2022, 79, 102470. [Google Scholar] [CrossRef]

- Habiba, U.e.; Habib, M.K.; Bogner, J.; Fritzsch, J.; Wagner, S. How do ML practitioners perceive explainability? an interview study of practices and challenges. Empirical Software Engineering 2025, 30, 18. [Google Scholar] [CrossRef]

- Ahangar, M.N.; Farhat, Z.; Sivanathan, A. AI trustworthiness in manufacturing: challenges, toolkits, and the path to industry 5.0. Sensors 2025, 25, 4357. [Google Scholar] [CrossRef]

- Gerke, S.; Minssen, T.; Cohen, G. Ethical and legal challenges of artificial intelligence-driven healthcare. In Artificial Intelligence in Healthcare; Elsevier: Amsterdam, 2020; pp. 295–336. [Google Scholar]

- Holzinger, A.; Langs, G.; Denk, H.; Zatloukal, K.; Müller, H. Causability and explainability of artificial intelligence in medicine. Wiley Interdisciplinary Reviews: Data Mining and Knowledge Discovery 2019, 9, e1312. [Google Scholar] [CrossRef] [PubMed]

- Samek, W.; Wiegand, T.; Müller, K.R. Explainable Artificial Intelligence: Understanding, Visualizing and Interpreting Deep Learning Models. arXiv 2017, arXiv:1708.08296. [CrossRef]

- Guidotti, R.; Monreale, A.; Ruggieri, S.; Turini, F.; Giannotti, F.; Pedreschi, D. A Survey of Methods for Explaining Black Box Models. ACM Computing Surveys (CSUR) 2018, 51, 1–42. [Google Scholar] [CrossRef]

- Crawford, K.; Calo, R. There is a blind spot in AI research. Nature 2016, 538, 311–313. [Google Scholar] [CrossRef]

- Barocas, S.; Selbst, A.D. Big Data’s Disparate Impact. California Law Review 2016, 104, 671–732. [Google Scholar] [CrossRef]

- Chen, I.Y.; Johansson, F.D.; Sontag, D. Why is my classifier discriminatory? In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), 2018; pp. 3539–3550. [Google Scholar]

- Esteva, A.; Kuprel, B.; Novoa, R.A.; Ko, J.; Swetter, S.M.; Blau, H.M.; Thrun, S. Dermatologist-level classification of skin cancer with deep neural networks. Nature 2017, 542, 115–118. [Google Scholar] [CrossRef]

- Haenssle, H.A.; et al. Reply to ’Man against machine: diagnostic performance of a deep learning convolutional neural network for dermoscopic melanoma recognition in comparison to 58 dermatologists’. Annals of Oncology 2019. [Google Scholar] [CrossRef]

- Ward-Peterson, M.; Hill, S.; Hurley, D.; Harlan, S. Association between race/ethnicity and survival of melanoma patients in the United States over 3 decades. Medicine 2016, 95, e3315. [Google Scholar] [CrossRef]

- Al-Otaibi, S.; Ayouni, S.; Sarwar, N.; Irshad, A.; Ullah, F. AI-driven security framework for medical sensor networks: enhancing privacy and trust in smart healthcare systems. Cluster Computing 2025, 28, 408. [Google Scholar] [CrossRef]

- Gupta, S.; Kapoor, M.; Debnath, S.K. Challenges and Risks of AI-Enabled Healthcare Security. In Artificial Intelligence-Enabled Security for Healthcare Systems: Safeguarding Patient Data and Improving Services; Springer, 2025; pp. 101–112. [Google Scholar]

- Tschandl, P.; Ružička, E.; Akay, B.N.; Argenziano, G.; Babilon, S.; Braun, R.P.; Cabo, H.; Cannizzaro, G.; Carrera, C.; Dalle, S.; et al. Clinically relevant vulnerabilities of deep learning systems for skin cancer diagnosis. Nature Medicine 2021, 27, 848–854. [Google Scholar] [CrossRef]

- El-Saleh, A.A.; Sheikh, A.M.; Albreem, M.A.; Honnurvali, M.S. The internet of medical things (IoMT): opportunities and challenges. Wireless Networks 2025, 31, 327–344. [Google Scholar] [CrossRef]

- Kioskli, K.; Grigoriou, E.; Islam, S.; Yiorkas, A.M.; Christofi, L.; Mouratidis, H. A risk and conformity assessment framework to ensure security and resilience of healthcare systems and medical supply chain. International Journal of Information Security 2025, 24, 1–28. [Google Scholar] [CrossRef]

- Hu, Y.; Kuang, W.; Qin, Z.; Li, K.; Zhang, J.; Gao, Y.; Li, W.; Li, K. Artificial intelligence security: Threats and countermeasures. ACM Computing Surveys (CSUR) 2021, 55, 1–36. [Google Scholar] [CrossRef]

- Rajpurkar, P.; Irvin, J.; Zhu, K. AI in healthcare: The challenges of developing models that work. The Lancet Digital Health 2022, 4, e425–e435. [Google Scholar]

- Han, S.; Mao, H.; Dally, W.J. Deep compression: Compressing deep neural networks with pruning, trained quantization and Huffman coding. arXiv 2015, arXiv:1510.00149. [CrossRef]

- Zech, J.R.; Badgeley, M.A.; Liu, M.; Costa, A.B.; Titano, J.J.; Oermann, E.K. Variable generalization performance of a deep learning model to detect pneumonia in chest radiographs. PLOS Medicine 2018, 15, e1002683. [Google Scholar] [CrossRef]

- Li, T.; Sahu, A.K.; Talwalkar, A.; Smith, V. Federated learning: Challenges, methods, and future directions. IEEE Signal Processing Magazine 2020, 37, 50–60. [Google Scholar] [CrossRef]

- Holzinger, A.; Biemann, C.; Pattichis, C.S.; Kell, D.B. What do we need to build explainable AI systems for the medical domain? arXiv 2017, arXiv:1712.09923. [CrossRef]

- He, J.; Baxter, S.L.; Xu, J.; Xu, J.; Zhou, X.; Zhang, K. The practical implementation of artificial intelligence technologies in medicine. Nature Medicine 2019, 25, 30–36. [Google Scholar] [CrossRef]

- Esteva, A.; Robicquet, A.; Ramsundar, B. A guide to deep learning in healthcare. Nature Medicine 2019, 25, 24–29. [Google Scholar] [CrossRef] [PubMed]

- Xu, Y.; Liu, Q.; Zhang, Y. Edge computing for deep learning in real-time health monitoring. IEEE Network 2021, 35, 156–162. [Google Scholar]

- Schwartz, R.; Dodge, J.; Smith, N.A.; Etzioni, O. Green AI. Communications of the ACM 2020, 63, 54–63. [Google Scholar] [CrossRef]

- Choi, E.; Bahadori, M.; Schuetz, A.; Stewart, W.; Sun, J. RETAIN: An interpretable predictive model for healthcare using reverse time attention mechanism. Advances in Neural Information Processing Systems (NeurIPS) 2016, 29. [Google Scholar]

- Chen, I.Y.; Szolovits, P.; Ghassemi, M. Scalable and accurate deep learning with electronic health records. npj Digital Medicine 2021, 4, 1–10. [Google Scholar]

- Li, Y.; Deng, C.; Hu, C. Edge AI: On-demand deep learning model co-inference with device-edge synergy. In Proceedings of the ACM/IEEE Symposium on Edge Computing, 2019; pp. 31–44. [Google Scholar]

- Wang, Z.; Ye, M.; Kumar, A. Reliable real-time AI for healthcare monitoring and prediction. IEEE Transactions on Network and Service Management 2020, 17, 1456–1469. [Google Scholar]

- Li, J.; Meng, Y.; Ma, L.; Du, S.; Zhu, H.; Pei, Q.; Shen, X. A federated learning based privacy-preserving smart healthcare system. IEEE Transactions on Industrial Informatics 2021, 18. [Google Scholar] [CrossRef]

- Wang, H.; Wang, Q.; Ding, Y.; Tang, S.; Wang, Y. Privacy-preserving federated learning based on partial low-quality data. Journal of Cloud Computing 2024, 13, 62. [Google Scholar] [CrossRef]

- Liu, B.; Yan, B.; Zhou, Y.; Yang, Y.; Zhang, Y. Experiments of federated learning for COVID-19 chest X-ray images. arXiv 2020, arXiv:2007.05592. [CrossRef]

- Feki, I.; Ammar, S.; Kessentini, Y.; Muhammad, K. Federated learning for COVID-19 screening from Chest X-ray images. Applied Soft Computing 2021, 106, 107330. [Google Scholar] [CrossRef] [PubMed]

- Yan, Z.; Wicaksana, J.; Wang, Z.; Yang, X.; Cheng, K.T. Variation-aware federated learning with multi-source decentralized medical image data. IEEE Journal of Biomedical and Health Informatics 2020, 25, 2615–2628. [Google Scholar] [CrossRef] [PubMed]

- Wicaksana, J.; Yan, Z.; Yang, X.; Liu, Y.; Fan, L.; Cheng, K.T. Customized federated learning for multi-source decentralized medical image classification. IEEE Journal of Biomedical and Health Informatics 2022, 26, 5596–5607. [Google Scholar] [CrossRef] [PubMed]

- Zhang, M.; Qu, L.; Singh, P.; Kalpathy-Cramer, J.; Rubin, D.L. Splitavg: A heterogeneity-aware federated deep learning method for medical imaging. IEEE Journal of Biomedical and Health Informatics 2022, 26, 4635–4644. [Google Scholar] [CrossRef]

- Johnson, A.E.W.; Pollard, T.J.; Shen, L. MIMIC-III, a freely accessible critical care database. Scientific Data 2016, 3, 160035. [Google Scholar] [CrossRef]

- Kondrateva, E.; Druzhinina, P.; Dalechina, A. Negligible effect of brain MRI data preprocessing for tumor segmentation. arXiv 2022, arXiv:2204.07954. [Google Scholar] [CrossRef]

- Reinhold, J.C.; Dewey, B.E.; Carass, A.; Prince, J.L. Multi-site harmonization for lung CT radiomics. Radiology: Artificial Intelligence 2021, 3, e210070. [Google Scholar]

- Hripcsak, G.; Duke, J.D.; Shah, N.H.; Reich, C.G.; Huser, V.; Schuemie, M.J.; Suchard, M.A.; Park, R.W.; Wong, I.C.K.; Rijnbeek, P.R.; et al. Observational Health Data Sciences and Informatics (OHDSI): opportunities for observational researchers. In MEDINFO 2015: eHealth-enabled Health; IOS Press, 2015; pp. 574–578. [Google Scholar]

- Banerjee, I.; Chen, M.C.; Lungren, M.P. Comparison of NLP Preprocessing Approaches for Clinical Radiology Reports. Journal of Biomedical Informatics 2019, 100, 103327. [Google Scholar] [CrossRef]

- Sheller, M.J.; Edwards, B.; Reina, G.A. Federated learning in medicine: facilitating multi-institutional collaborations without sharing patient data. Scientific Reports 2020, 10, 12598. [Google Scholar] [CrossRef]

- Yazdinejad, A.; Srivastava, G.; Parizi, R.M.; Dehghantanha, A.; Choo, K.K.R.; Aledhari, M. Decentralized authentication of distributed patients in hospital networks using blockchain. IEEE Journal of Biomedical and Health Informatics 2020, 24, 2146–2156. [Google Scholar] [CrossRef]

- Chen, Y.; Ding, S.; Xu, Z.; Zheng, H.; Yang, S. Blockchain-based medical records secure storage and medical service framework. Journal of Medical Systems 2019, 43, 1–9. [Google Scholar] [CrossRef]

- Li, C.; Cao, Y.; Hu, Z.; Yoshikawa, M. Blockchain-based bidirectional updates on fine-grained medical data. In Proceedings of the 2019 IEEE 35th International Conference on Data Engineering Workshops (ICDEW), 2019; IEEE; pp. 22–27. [Google Scholar]

- Hang, L.; Choi, E.; Kim, D.H. A novel EMR integrity management based on a medical blockchain platform in hospital. Electronics 2019, 8, 467. [Google Scholar] [CrossRef]

- Hasan, H.R.; Salah, K.; Jayaraman, R.; Arshad, J.; Yaqoob, I.; Omar, M.; Ellahham, S. Blockchain-based solution for COVID-19 digital medical passports and immunity certificates. IEEE Access 2020, 8, 222093–222108. [Google Scholar] [CrossRef] [PubMed]

- Alshamsi, H.; Alteneiji, S.; Madine, M.; Musamih, A.; Nemer, M.; Salah, K.; Jayaraman, R.; Antony, J.; Omar, M. Blockchain-based resale and leasing of pre-owned medical equipment. Technology in Society 2024, 77, 102549. [Google Scholar] [CrossRef]

- Omar, I.A.; Hasan, H.R.; AlKhader, W.; Jayaraman, R.; Salah, K.; Omar, M. Blockchain-based trusted accountability in the maintenance of medical imaging equipment. Expert Systems with Applications 2024, 241, 122718. [Google Scholar] [CrossRef]

- Myrzashova, R.; Alsamhi, S.H.; Hawbani, A.; Curry, E.; Guizani, M.; Wei, X. Safeguarding patient data-sharing: Blockchain-enabled federated learning in medical diagnostics. IEEE Transactions on Sustainable Computing, 2024. [Google Scholar]

- Zhou, X.; Huang, W.; Liang, W.; Yan, Z.; Ma, J.; Pan, Y.; Wang, K.I.K. Federated distillation and blockchain empowered secure knowledge sharing for internet of medical things. Information Sciences 2024, 662, 120217. [Google Scholar] [CrossRef]

- Kumar, R.; Bernard, C.M.; Ullah, A.; Khan, R.U.; Kumar, J.; Kulevome, D.K.; Yunbo, R.; Zeng, S. Privacy-preserving blockchain-based federated learning for brain tumor segmentation. Computers in Biology and Medicine 2024, 177, 108646. [Google Scholar] [CrossRef]

- Chikhaoui, E.; Alajmi, A.; Larabi-Marie-sainte, S. Artificial intelligence applications in healthcare sector: Ethical and legal challenges. Emerging Science Journal 2022, 6, 717–738. [Google Scholar] [CrossRef]

- Stogiannos, N.; Georgiadou, E.; Rarri, N.; Malamateniou, C. Ethical AI: a qualitative study exploring ethical challenges and solutions on the use of AI in medical imaging. European Journal of Radiology Artificial Intelligence 2025, 1, 100006. [Google Scholar] [CrossRef]

- Naik, N.; Hameed, B.; Shetty, D.K.; Swain, D.; Shah, M.; Paul, R.; Aggarwal, K.; Ibrahim, S.; Patil, V.; Smriti, K.; et al. Legal and ethical consideration in artificial intelligence in healthcare: who takes responsibility? Frontiers in Surgery 2022, 9, 862322. [Google Scholar] [CrossRef]

- Pham, T. Ethical and legal considerations in healthcare AI: innovation and policy for safe and fair use. Royal Society Open Science 2025, 12, 241873. [Google Scholar] [CrossRef] [PubMed]

- Bataineh, A.Q.; Mushtaha, A.S.; Abu-AlSondos, I.A.; Aldulaimi, S.H.; Abdeldayem, M. Ethical & legal concerns of artificial intelligence in the healthcare sector. In Proceedings of the 2024 ASU International Conference in Emerging Technologies for Sustainability and Intelligent Systems (ICETSIS), 2024; IEEE; pp. 491–495. [Google Scholar]

- Tamhane, P. Ethical and Legal Implications of AI in Healthcare: A Global Perspective. Journal Publication of International Research for Engineering and Management (JOIREM) 2025, 5. [Google Scholar]

- Hossain, M.I.; Zamzmi, G.; Mouton, P.R.; Salekin, M.S.; Sun, Y.; Goldgof, D. Explainable AI for medical data: Current methods, limitations, and future directions. ACM Computing Surveys 2025, 57, 1–46. [Google Scholar] [CrossRef]

- Sun, Q.; Akman, A.; Schuller, B.W. Explainable artificial intelligence for medical applications: A review. ACM Transactions on Computing for Healthcare 2025, 6, 1–31. [Google Scholar] [CrossRef]

- Ribeiro, M.T.; Singh, S.; Guestrin, C. “Why should I trust you?” Explaining the predictions of any classifier. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, 2016; pp. 1135–1144. [Google Scholar]

- Lundberg, S.M.; Lee, S.I. A unified approach to interpreting model predictions. Advances in Neural Information Processing Systems (NeurIPS) 2017, 30. [Google Scholar]

- Nahiduzzaman, M.; Abdulrazak, L.F.; Ayari, M.A.; Khandakar, A.; Islam, S.R. A novel framework for lung cancer classification using lightweight convolutional neural networks and ridge extreme learning machine model with SHapley Additive exPlanations (SHAP). Expert Systems with Applications 2024, 248, 123392. [Google Scholar] [CrossRef]

- Moujahid, H.; Cherradi, B.; Al-Sarem, M.; Bahatti, L.; Eljialy, A.B.A.M.Y.; Alsaeedi, A.; Saeed, F. Combining CNN and Grad-Cam for COVID-19 Disease Prediction and Visual Explanation. Intelligent Automation & Soft Computing 2022, 32. [Google Scholar]

- Mir, A.N.; Rizvi, D.R.; Ahmad, M.R. Enhancing histopathological image analysis: An explainable vision transformer approach with comprehensive interpretation methods and evaluation of explanation quality. Engineering Applications of Artificial Intelligence 2025, 149, 110519. [Google Scholar] [CrossRef]

- Agnihotri, A.; Kohli, N. (XAI-AGUWEM) Explainable Artificial Intelligence-based Attention Guided Uncertainty Weighting Ensemble Model for the Classification of COVID-19 and Pneumonia in X-ray Medical Images. Recent Patents on Electrical Engineering, 2025. [Google Scholar]

- Chauhan, K.; Tiwari, R.; Freyberg, J.; Shenoy, P.; Dvijotham, K. Interactive concept bottleneck models. In Proceedings of the AAAI Conference on Artificial Intelligence, 2023; Vol. 37, pp. 5948–5955. [Google Scholar] [CrossRef]

- Lou, X.; Yan, D.; Shen, W.; Yan, Y.; Xie, J.; Zhang, J. Uncertainty-aware reward model: Teaching reward models to know what is unknown. arXiv 2024, arXiv:2410.00847. [CrossRef]

- Feng, Q.; Du, M.; Zou, N.; Hu, X. Fair machine learning in healthcare: A survey. IEEE Transactions on Artificial Intelligence, 2024. [Google Scholar]

- Yang, Y.; Lin, M.; Zhao, H.; Peng, Y.; Huang, F.; Lu, Z. A survey of recent methods for addressing AI fairness and bias in biomedicine. Journal of Biomedical Informatics 2024, 154, 104646. [Google Scholar] [CrossRef] [PubMed]

- Chinta, S.V.; Wang, Z.; Palikhe, A.; Zhang, X.; Kashif, A.; Smith, M.A.; Liu, J.; Zhang, W. AI-Driven Healthcare: A Review on Ensuring Fairness and Mitigating Bias. arXiv 2024, arXiv:2407.19655. [CrossRef] [PubMed]

- Chen, R.J.; Chen, T.Y.; Lipkova, J.; Wang, J.J.; Williamson, D.F.; Lu, M.Y.; Sahai, S.; Mahmood, F. Algorithm fairness in AI for medicine and healthcare. arXiv 2021, arXiv:2110.00603. [CrossRef]

- Rauniyar, A.; Hagos, D.H.; Jha, D.; Håkegård, J.E.; Bagci, U.; Rawat, D.B.; Vlassov, V. Federated learning for medical applications: A taxonomy, current trends, challenges, and future research directions. IEEE Internet of Things Journal 2023, 11, 7374–7398. [Google Scholar] [CrossRef]

- Xu, J.; Xiao, Y.; Wang, W.H.; Ning, Y.; Shenkman, E.A.; Bian, J.; Wang, F. Algorithmic fairness in computational medicine. EBioMedicine 2022, 84. [Google Scholar] [CrossRef]

- Lund, B.; Orhan, Z.; Mannuru, N.R.; Bevara, R.V.K.; Porter, B.; Vinaih, M.K.; Bhaskara, P. Standards, frameworks, and legislation for artificial intelligence (AI) transparency. AI and Ethics 2025, 1–17. [Google Scholar] [CrossRef]

- Kennedy-Mayo, D.; Gord, J. “Model Cards for Model Reporting” in 2024: Reclassifying Category of Ethical Considerations in Terms of Trustworthiness and Risk Management. In Proceedings of the Future of Information and Communication Conference, 2025; Springer; pp. 179–196. [Google Scholar]

- Jain, A.; Brooks, J.R.; Alford, C.C.; Chang, C.S.; Mueller, N.M.; Umscheid, C.A.; Bierman, A.S. Awareness of racial and ethnic bias and potential solutions to address bias with use of health care algorithms. JAMA Health Forum 2023, 4, e231197. [Google Scholar] [CrossRef]

- Shayea, G.G.; Zabil, M.H.M.; Habeeb, M.A.; Khaleel, Y.L.; Albahri, A. Strategies for protection against adversarial attacks in AI models: An in-depth review. Journal of Intelligent Systems 2025, 34, 20240277. [Google Scholar] [CrossRef]

- Vatian, A.; Gusarova, N.; Dobrenko, N.; Dudorov, S.; Nigmatullin, N.; Shalyto, A.; Lobantsev, A. Impact of adversarial examples on the efficiency of interpretation and use of information from high-tech medical images. In Proceedings of the 2019 24th Conference of Open Innovations Association (FRUCT), 2019; IEEE; pp. 472–478. [Google Scholar]

- Rao, C.; Cao, J.; Zeng, R.; Chen, Q.; Fu, H.; Xu, Y.; Tan, M. A thorough comparison study on adversarial attacks and defenses for common thorax disease classification in chest X-rays. arXiv 2020, arXiv:2003.13969. [CrossRef]

- He, K.; Zhang, X.; Ren, S.; Sun, J. Delving deep into rectifiers: Surpassing human-level performance on ImageNet classification. In Proceedings of the IEEE International Conference on Computer Vision, 2015; pp. 1026–1034. [Google Scholar]

- Ma, X.; Niu, Y.; Gu, L.; Wang, Y.; Zhao, Y.; Bailey, J.; Lu, F. Understanding adversarial attacks on deep learning based medical image analysis systems. Pattern Recognition 2021, 110, 107332. [Google Scholar] [CrossRef]

- Li, X.; Pan, D.; Zhu, D. Defending against adversarial attacks on medical imaging AI system, classification or detection? In Proceedings of the 2021 IEEE 18th International Symposium on Biomedical Imaging (ISBI), 2021; IEEE; pp. 1677–1681. [Google Scholar]

- Li, X.; Zhu, D. Robust detection of adversarial attacks on medical images. In Proceedings of the 2020 IEEE 17th International Symposium on Biomedical Imaging (ISBI), 2020; IEEE; pp. 1154–1158. [Google Scholar]

- Gowda, D.; Chaithra, S.; Gujar, S.S.; Shaikh, S.F.; Ingole, B.S.; Reddy, N.S. Scalable ai solutions for iot-based healthcare systems using cloud platforms. In Proceedings of the 2024 8th International Conference on I-SMAC (IoT in Social, Mobile, Analytics and Cloud)(I-SMAC), 2024; IEEE; pp. 156–162. [Google Scholar]

- D.G., V; S M, C.; Gujar, S.S.; Firoz Shaikh, S.; Ingole, B.S.; Sudhakar Reddy, N. Scalable AI Solutions for IoT-based Healthcare Systems using Cloud Platforms. In Proceedings of the 2024 8th International Conference on I-SMAC (IoT in Social, Mobile, Analytics and Cloud) (I-SMAC), 2024; pp. 156–162. [Google Scholar] [CrossRef]

- Sai, S.; Chamola, V.; Choo, K.K.R.; Sikdar, B.; Rodrigues, J.J.P.C. Confluence of Blockchain and Artificial Intelligence Technologies for Secure and Scalable Healthcare Solutions: A Review. IEEE Internet of Things Journal 2023, 10, 5873–5896. [Google Scholar] [CrossRef]

- Ali, A.; Ali, H.; Saeed, A.; Khan, A.A.; Tin, T.T.; Assam, M.; Ghadi, Y.Y.; Mohamed, H.G. Blockchain-Powered Healthcare Systems: Enhancing Scalability and Security with Hybrid Deep Learning. Sensors 2023, 23, 7740. [Google Scholar] [CrossRef] [PubMed]

- Ali, A.; Ali, H.; Saeed, A.; Ahmed Khan, A.; Tin, T.T.; Assam, M.; Ghadi, Y.Y.; Mohamed, H.G. Blockchain-powered healthcare systems: enhancing scalability and security with hybrid deep learning. Sensors 2023, 23, 7740. [Google Scholar] [CrossRef] [PubMed]

- Dhanka, S.; Sharma, A.; Kumar, A.; Maini, S.; Vundavilli, H. Advancements in Hybrid Machine Learning Models for Biomedical Disease Classification Using Integration of Hyperparameter-Tuning and Feature Selection Methodologies: A Comprehensive Review. Archives of Computational Methods in Engineering 2025. [Google Scholar] [CrossRef]

- Yang, S.; Li, Y.; Nie, J.; Ercisli, S.; Gadekallu, T.R. Enhanced Brain Tumor Detection Using DCGAN Augmentation and Optimized EfficientDet in IoT-Based Healthcare Industry 5.0. IEEE Internet of Things Journal 2025. [Google Scholar] [CrossRef]

- not extracted, A. Cloud Computing Framework for Healthcare: Architecture, Applications, and Challenges. Healthcare 2023, 11, 4944. [Google Scholar]

- Waqar, M.; et al. Optimized Explainable ConvNeXt Framework for Monkeypox Diagnosis: Balancing Accuracy and Interpretability. SLAS Technology 2025, 33, 100336. [Google Scholar] [CrossRef]

- Wu, X.; Fu, Y.; Bian, L.; Feng, M.; Lu, X.; He, D.; Han, Y.; Dong, S. Single Drive Multi-Training Mode Adaptive Wrist Rehabilitation Device. In Proceedings of the IEEE International Conference on Mechatronics and Automation (ICMA), 2025; IEEE. [Google Scholar] [CrossRef]

- Esmaeilzadeh, P. Challenges and strategies for wide-scale artificial intelligence (AI) deployment in healthcare practices: A perspective for healthcare organizations. Artificial Intelligence in Medicine 2024, 151, 102861. [Google Scholar] [CrossRef]

- Khan, S.; Naz, N.S.; Mazhar, T.; Tariq, M.U.; Shahzad, T.; Guizani, S.; Hamam, H. Green AI techniques for reducing energy consumption in AI systems. Array 2025, 100652. [Google Scholar] [CrossRef]

- Bogoch, I.I.; Watts, A.; Thomas-Bachli, A.; Huber, C.; Kraemer, M.U.; Khan, K. Pneumonia of unknown etiology in Wuhan, China: Potential for international spread via commercial air travel. Journal of Travel Medicine 2020, 27, taaa008. [Google Scholar] [CrossRef]

- Khan, K.; McNabb, S.J.; Memish, Z.A.; Eckhardt, R.; Hu, W.; Kossowsky, D.; et al. Infectious disease surveillance and modeling across geographic frontiers and scientific disciplines. Journal of Travel Medicine 2020, 27, taz020. [Google Scholar] [CrossRef]

- MacIntyre, C.R.; Chen, X.; Kunasekaran, M.; Quigley, A.; Lim, S.; Stone, H.; et al. Artificial intelligence in public health: The potential of epidemic early warning systems. Journal of International Medical Research 2023, 51, 3000605231159335. [Google Scholar] [CrossRef] [PubMed]

- Qin, X.; Jiang, X.; Wang, J.; Zhao, Y. Exploring big data in the early detection of infectious diseases. Frontiers in Public Health 2024, 12, 1371852. [Google Scholar] [CrossRef]

- Freifeld, C.C.; Mandl, K.; Reis, B.Y.; Brownstein, J.S. HealthMap: Global infectious disease monitoring through automated classification and visualization of internet media reports. Journal of the American Medical Informatics Association 2008, 15, 150–157. [Google Scholar] [CrossRef] [PubMed]

- Brownstein, J.S.; Freifeld, C.C.; Madoff, L.C. Digital disease detection — harnessing the web for public health surveillance. New England Journal of Medicine 2009, 360, 2153–2157. [Google Scholar] [CrossRef]

- Milinovich, G.; Williams, S.; Clements, A.; Hu, W. Role of big data in the early detection of Ebola and other emerging infectious diseases. The Lancet Global Health 2015, 3, e20–e21. [Google Scholar] [CrossRef]

- Bhatia, S.; Lassmann, B.; Cohn, E.; Desai, A.N.; Carrion, M.; Kraemer, M.U.; et al. Using digital surveillance tools for near real-time mapping of the risk of infectious disease spread. NPJ Digital Medicine 2021, 4, 73. [Google Scholar] [CrossRef]

- Hadfield, J.; Megill, C.; Bell, S.M.; Huddleston, J.; Potter, B.; Callender, C.; Sagulenko, P.; Bedford, T.; Neher, R.A. Nextstrain: real-time tracking of pathogen evolution. Bioinformatics 2018, 34, 4121–4123. [Google Scholar] [CrossRef]

- Sagulenko, P.; Puller, V.; Neher, R.A. TreeTime: Maximum-likelihood phylodynamic analysis. Virus Evolution 2018, 4, vex042. [Google Scholar] [CrossRef]

- Schmidt, M.; Arshad, M.; Bernhart, S.H.; Hakobyan, S.; Arakelyan, A.; Löffler-Wirth, H.; et al. The evolving faces of the SARS-CoV-2 genome. Viruses 2021, 13, 1764. [Google Scholar] [CrossRef]

- Whaley, C.M.; Taylor, E.A.; Koenig, L.; White, C. Health Care Cost and Utilization Report: 2013. Health Care Cost Institute. Accessed. 2014.

- Health Care Cost Institute. 2020 Health Care Cost and Utilization Report. Accessed. 2021.

- Farid, F.; Bello, A.; Ahamed, F.; Hossain, F. The Roles of AI Technologies in Reducing Hospital Readmission for Chronic Diseases: A Comprehensive Analysis. Preprints 2023. [CrossRef]

- Kumar, Y.; Koul, A.; Singla, R.; Ijaz, M.F. Artificial intelligence in disease diagnosis: A systematic literature review, synthesizing framework, and future research agenda. Journal of Ambient Intelligence and Humanized Computing 2023, 14, 8459–8486. [Google Scholar] [CrossRef]

- Fitzpatrick, K.K.; Darcy, A.; Vierhile, M. Delivering cognitive behavior therapy to young adults with symptoms of depression and anxiety using a fully automated conversational agent (Woebot): A randomized controlled trial. JMIR Mental Health 2017, 4, e19. [Google Scholar] [CrossRef]

- Angelis, L.D.; Baglivo, F.; Arzilli, G.; Privitera, G.P.; Ferragina, P.; Tozzi, A.E.; et al. ChatGPT and the rise of large language models: The new AI-driven infodemic threat in public health. Frontiers in Public Health 2023, 11, 1166120. [Google Scholar] [CrossRef]

- Topol, E.J. High-performance medicine: the convergence of human and artificial intelligence. Nature Medicine 2019, 25, 44–56. [Google Scholar] [CrossRef]

- Rong, G.; Mendez, A.; Assi, E.B.; Zhao, B.; Sawan, M. Artificial intelligence in healthcare: review and prediction case studies. Engineering 2020, 6, 291–301. [Google Scholar] [CrossRef]

- Shaheen, M.Y. Applications of Artificial Intelligence (AI) in healthcare: A review. ScienceOpen Preprints 2021. [Google Scholar]

- Aung, Y.Y.; Wong, D.C.; Ting, D.S. The promise of artificial intelligence: a review of the opportunities and challenges of artificial intelligence in healthcare. British Medical Bulletin 2021, 139, 4–15. [Google Scholar] [CrossRef] [PubMed]

- Sadeghi, Z.; Alizadehsani, R.; Cifci, M.A.; Kausar, S.; Rehman, R.; Mahanta, P.; Bora, P.K.; Almasri, A.; Alkhawaldeh, R.S.; Hussain, S.; et al. A review of Explainable Artificial Intelligence in healthcare. Computers and Electrical Engineering 2024, 118, 109370. [Google Scholar] [CrossRef]

- Wubineh, B.Z.; Deriba, F.G.; Woldeyohannis, M.M. Exploring the opportunities and challenges of implementing artificial intelligence in healthcare: A systematic literature review. Urologic Oncology: Seminars and Original Investigations 2024, 42, 48–56. [Google Scholar]

- Aminizadeh, S.; Heidari, A.; Dehghan, M.; Toumaj, S.; Rezaei, M.; Navimipour, N.J.; Stroppa, F.; Unal, M. Opportunities and challenges of artificial intelligence and distributed systems to improve the quality of healthcare service. Artificial Intelligence in Medicine 2024, 149, 102779. [Google Scholar] [CrossRef] [PubMed]

- Kasula, B.Y. AI applications in healthcare a comprehensive review of advancements and challenges. International Journal of Managment Education for Sustainable Development 2023, 6. [Google Scholar]

| Major Domains within AI | Description |
|---|---|
| ML | Learns from data to improve decisions over time. |
| DL | Multi-layered neural networks that automatically learn complex data features. |
| CNN | Deep learning model for extracting spatial features from image data. |
| CV | Technique to interpret and analyze visual information from images or videos. |

| Ref | Method | Attack | Dataset | Architecture | Task |
|---|---|---|---|---|---|
| [151] | Adversarial Training | FGSM, JSMA | CT, MRI | UNet + RPN | Classification |
| [152] | PDT + adv_train | FGSM, PGD, MIFGSM, DAA | X-ray | DenseNet, ResNet, VGG, Inception V3 | Classification |
| [153] | NLCE | FGSM | Lung, Skin Lesion | SLSDeep, WNCN, UNet, InvertNet | Segmentation |
| [154] | KD & LID | FGSM, PGD, BIM | DR, X-ray | ResNet | Classification |
| [155] | Unsupervised Anomaly Detection | FGSM, BIM, MIM, PGD | X-ray | DenseNet, ResNet | Classification |
| [156] | SSAT & UAD | FGSM, PGD, C&W | DR (OCT) | ResNet | Classification |

| Challenges | Study | Core Issue | Solution | Drawback |
|---|---|---|---|---|
| Data Privacy | [102] | Secure data sharing | Federated learning | Synchronization |
| Data Privacy | [103] | Low data quality | Privacy-preserving FL | Cryptographic complexity |
| Data Privacy | [104] | Non-IID data distribution | Collaborative model training | Reduced speed |
| Data Privacy | [105] | Unbalanced data distribution | Federated learning | IID assumption |
| Data Privacy | [106] | Heterogeneity | VAFL framework | Transformation complexity |
| Data Privacy | [107] | Client heterogeneity | CusFL | Training complexity |
| Data Privacy | [108] | Data heterogeneity | SplitAVG | Architectural complexity |
| Data Security | [115] | Re-authentication | Decentralized authentication | Inaccessibility |
| Data Security | [116] | Data silos | Blockchain-enabled sharing | Off-chain vulnerability |
| Data Security | [117] | Synchronization | Fine-grained data sharing | Lack of concurrency support |
| Data Security | [118] | EMR integrity | Permissioned blockchain | Lack of interoperability |
| Data Security | [119] | Trusted verification | Secure health passports | Key management risk |
| Data Security | [120] | Improper disposal of functional devices | Decentralized trading | Adoption barrier |
| Data Security | [121] | Risk of equipment failure | Blockchain maintenance | Regulatory challenge |
| Data Security | [122] | Trust and privacy | Blockchain FL | Metadata exposure |
| Data Security | [123] | Consensus inefficiency | Blockchain distillation | Privacy leakage risk |
| Data Security | [124] | Trust and privacy | Blockchain aggregation | High overhead |
| Ethical & Legal | [125] | Ethical oversight | Ethical governance | Implementation gap |
| Ethical & Legal | [127] | Accountability | Ethical validation framework | Algorithmic opacity |
| Ethical & Legal | [128] | Legal uncertainty | Policy reform | Blurred responsibility |
| Ethical & Legal | [129] | Regulatory gaps | Governance reform | Overgeneralized |
| Ethical & Legal | [130] | Fragmentation | Harmonization | Theoretical |
| Technical | [133] | Interpretability | LIME | Local approximation |
| Technical | [134] | Explainability | SHAP | Computationally expensive |
| Technical | [135] | Multiclass efficiency | LPDCNN, Ridge-ELM | Limited generalization |
| Technical | [144] | Dataset bias | Federated learning | Heterogeneity |
| Technical | [146] | Algorithmic bias | Fairness-aware learning | Tradeoffs |
| Technical | [157] | Latency, scalability | CNN–LSTM | Network dependency |
| Technical | [161] | Decentralized | Blockchain + IoT | Interoperability |
| Deployment | [162] | High cost | Pruning, quantization | Accuracy drop risk |
| Deployment | [164] | Latency | Cloud–Edge allocation | Network limits |
| Deployment | [165] | Clinical trust | Grad-CAM | Computation cost |
| Deployment | [166] | Safe deploy | Rehab AI | Hardware cost |
| Deployment | [168] | Hardware cost | Green AI | Precision loss |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).