Submitted: 27 February 2026

Posted: 03 March 2026

Abstract

Keywords:

1. Introduction

1.1. The Convergence of Two Revolutions

1.2. The Authentication Gap

- Existing credentialing systems break down because agents are ephemeral: they operate in short-lived sessions that end when their tasks finish (Sumsub, 2026).

- Agents act as representatives of humans, but their autonomous decision-making creates uncertainty about who owns the agent and what the agent actually does (Rasmussen, 2026).

- Agents follow automated behavior patterns that resemble those of malicious bots, yet blocking all automated activity would also block legitimate customers (BlockSec, 2025).

1.3. Research Questions

- RQ1: What architectural framework can establish verifiable identity for AI agents while maintaining accountability to human principals?

- RQ2: How can deepfake detection technologies be integrated into agent authentication to prevent synthetic identity attacks?

- RQ3: What risk-based authorization models enable granular control over agent actions without sacrificing autonomy or user experience?

1.4. Contributions

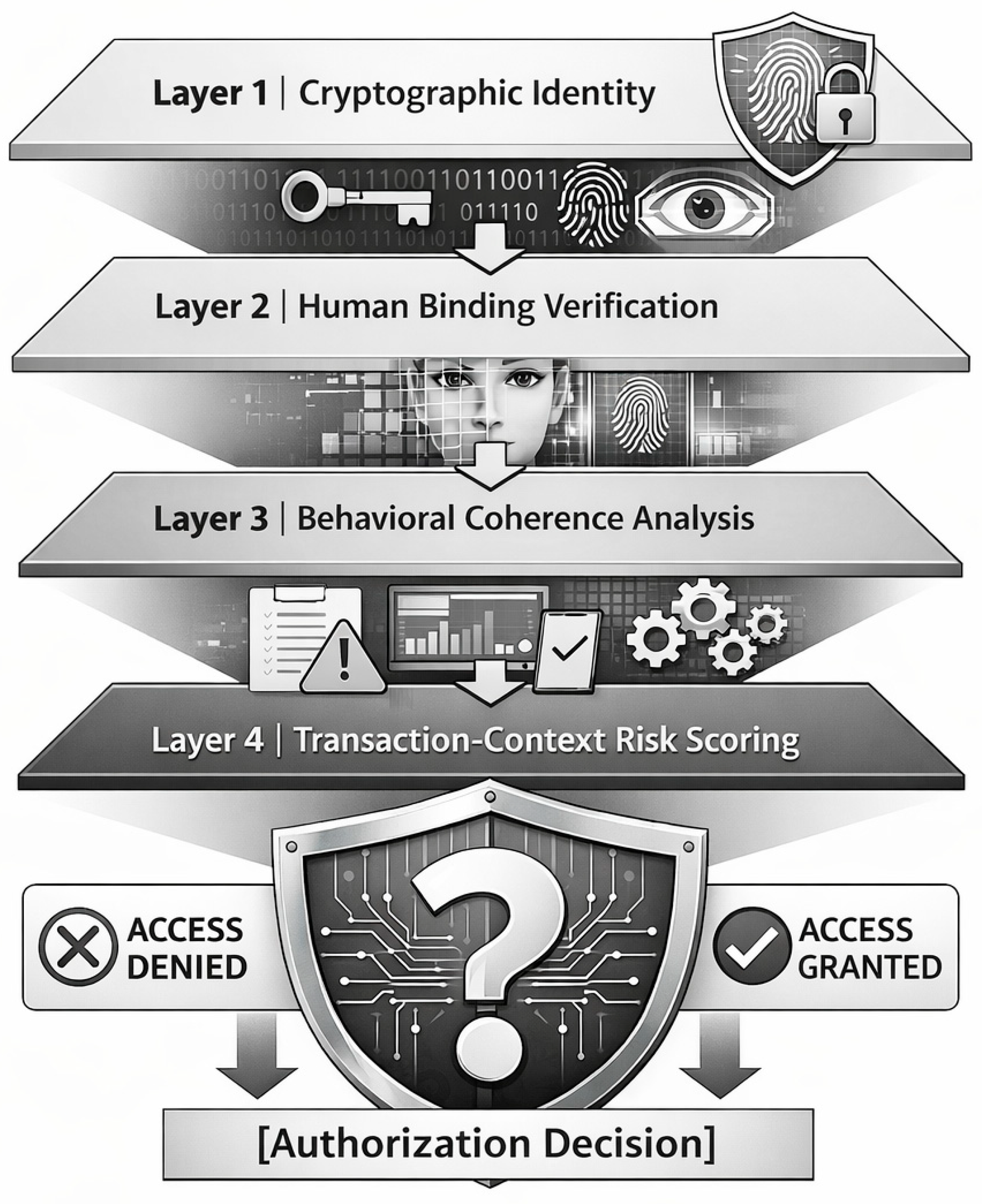

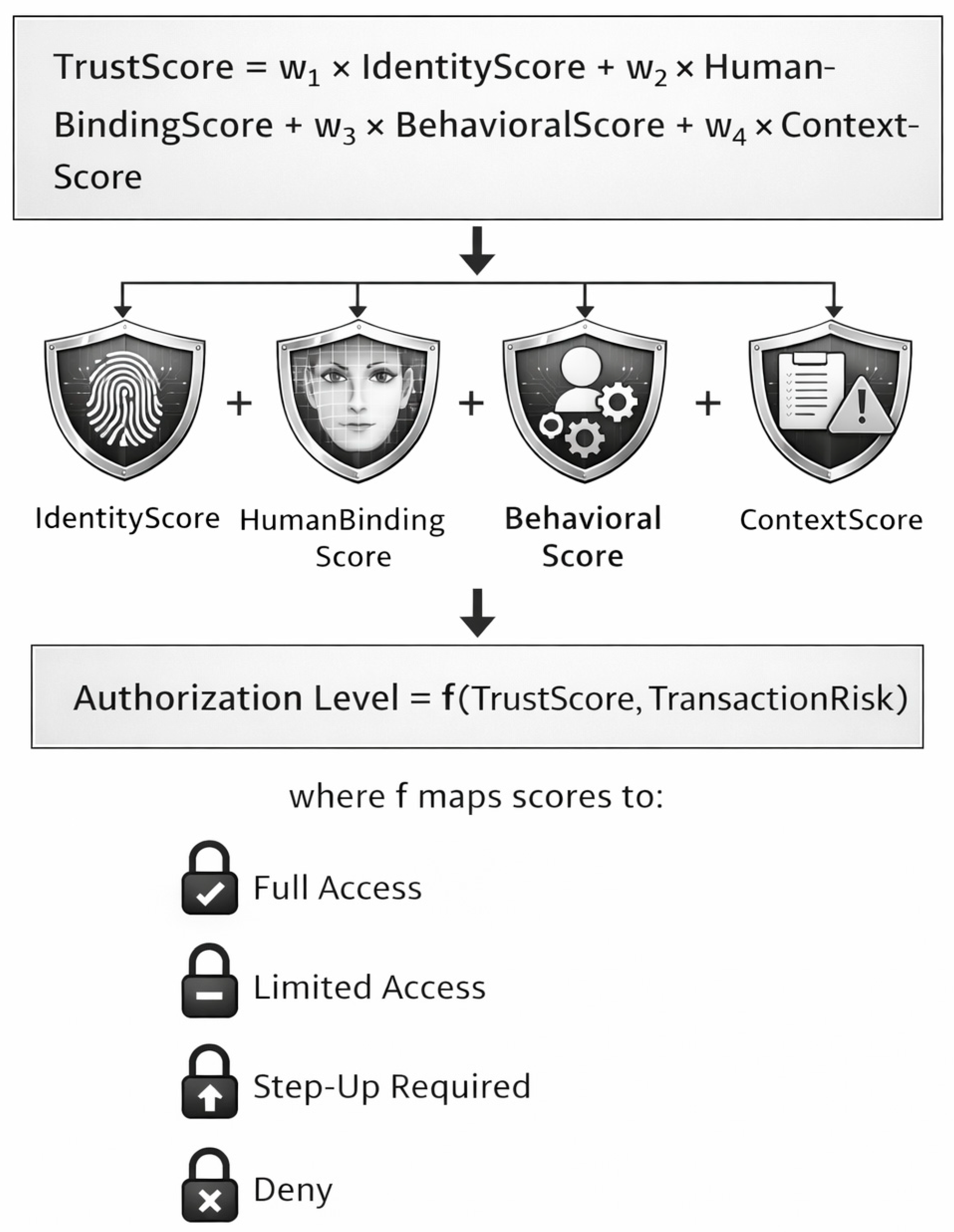

- We develop the first unified framework for agent authentication that combines cryptographic machine identity with human-binding verification and behavioral coherence analysis.

- We develop a multi-layer verification model that distinguishes “good” agents, which work for verified humans, from “bad” agents, which operate deepfakes or conduct malicious activities, through identity coherence analysis rather than surface behavior assessment.

- We propose a risk-scoring architecture that applies authentication rigor dynamically based on transaction value, data sensitivity, and behavioral anomalies.

- We synthesize emerging industry concepts—Know Your Agent (Rasmussen, 2026; Sumsub, 2026), agentic orchestration (Veritas AI, 2025; Kubam, 2024), and multi-modal deepfake detection (Bank Rakyat Indonesia & Telkom University, 2025; ICI Innolabs, 2025)—into a coherent academic framework.

2. Background and Related Work

2.1. Agentic AI: Capabilities and Risk Landscape

2.2. Deepfake Detection: State of the Art

2.3. Know Your Agent: Emerging Industry Frameworks

- “Machine” identity: Cryptographic credentials, keys, metadata, scopes, and policies

- Human identity: The real person or organization that operates, authorizes, or is accountable for the agent

2.4. Behavioral Biometrics and Continuous Authentication

2.5. The Gap: Agent Authentication

- Deepfake detection for human-produced content

- Human identity verification through biometric systems and KYC processes

- Machine identity management through PKI and OAuth systems

3. The Agent Authentication Framework

3.1. Architectural Overview

3.2. Layer 1: Cryptographic Machine Identity

- Unique Agent Identifier: Every AI agent must have a distinct, non-shared identity to enable accountability and auditability (Sumsub, 2026).

- Cryptographic Credentials: The agent authenticates with private keys, signed tokens, and certificates. OAuth client credentials and mutual TLS provide proven mechanisms (Internet Engineering Task Force, 2021).

- Agent Metadata: Verifiable claims describe the agent’s purpose, its capabilities, the system it originates from, and the scopes within which it is authorized to operate.

- Credential Lifecycle Management: Short-lived tokens, rotation policies, and revocation mechanisms protect against unauthorized credential usage (Microsoft, 2024).
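
The credential lifecycle described above can be illustrated with a minimal sketch. It is not the framework's implementation: the shared `SECRET`, the in-memory `REVOKED` set, the claim names, and the function names `issue_token` and `verify_token` are all hypothetical, and an HMAC stands in for the asymmetric signatures or mutual TLS a real deployment would more likely use.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

SECRET = b"demo-signing-key"  # hypothetical shared key, for illustration only
REVOKED = set()               # hypothetical in-memory revocation list of token IDs


def _b64(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).decode()


def _sign(payload: str) -> str:
    return _b64(hmac.new(SECRET, payload.encode(), hashlib.sha256).digest())


def issue_token(agent_id: str, scopes: list, ttl_s: int = 300,
                now: Optional[float] = None) -> str:
    """Issue a signed token carrying agent metadata and a short expiry."""
    now = time.time() if now is None else now
    claims = {
        "sub": agent_id,                   # unique agent identifier
        "scopes": scopes,                  # what the agent may do
        "exp": now + ttl_s,                # short lifetime forces rotation
        "jti": f"{agent_id}:{int(now)}",   # token ID used for revocation
    }
    payload = _b64(json.dumps(claims, sort_keys=True).encode())
    return f"{payload}.{_sign(payload)}"


def verify_token(token: str, now: Optional[float] = None) -> Optional[dict]:
    """Return the claims if the token is authentic, unexpired, and not revoked."""
    now = time.time() if now is None else now
    try:
        payload, sig = token.split(".")
    except ValueError:
        return None
    if not hmac.compare_digest(sig, _sign(payload)):
        return None
    claims = json.loads(base64.urlsafe_b64decode(payload))
    if claims["exp"] <= now or claims["jti"] in REVOKED:
        return None
    return claims
```

Passing `now` explicitly keeps the sketch deterministic for testing; adding a token's `jti` to `REVOKED` invalidates it before its expiry.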

3.3. Layer 2: Human Binding Verification

- Explicit Delegation: The human principal explicitly authorizes the agent through a secure enrollment process, which resembles OAuth permissioning but additionally requires identity verification.

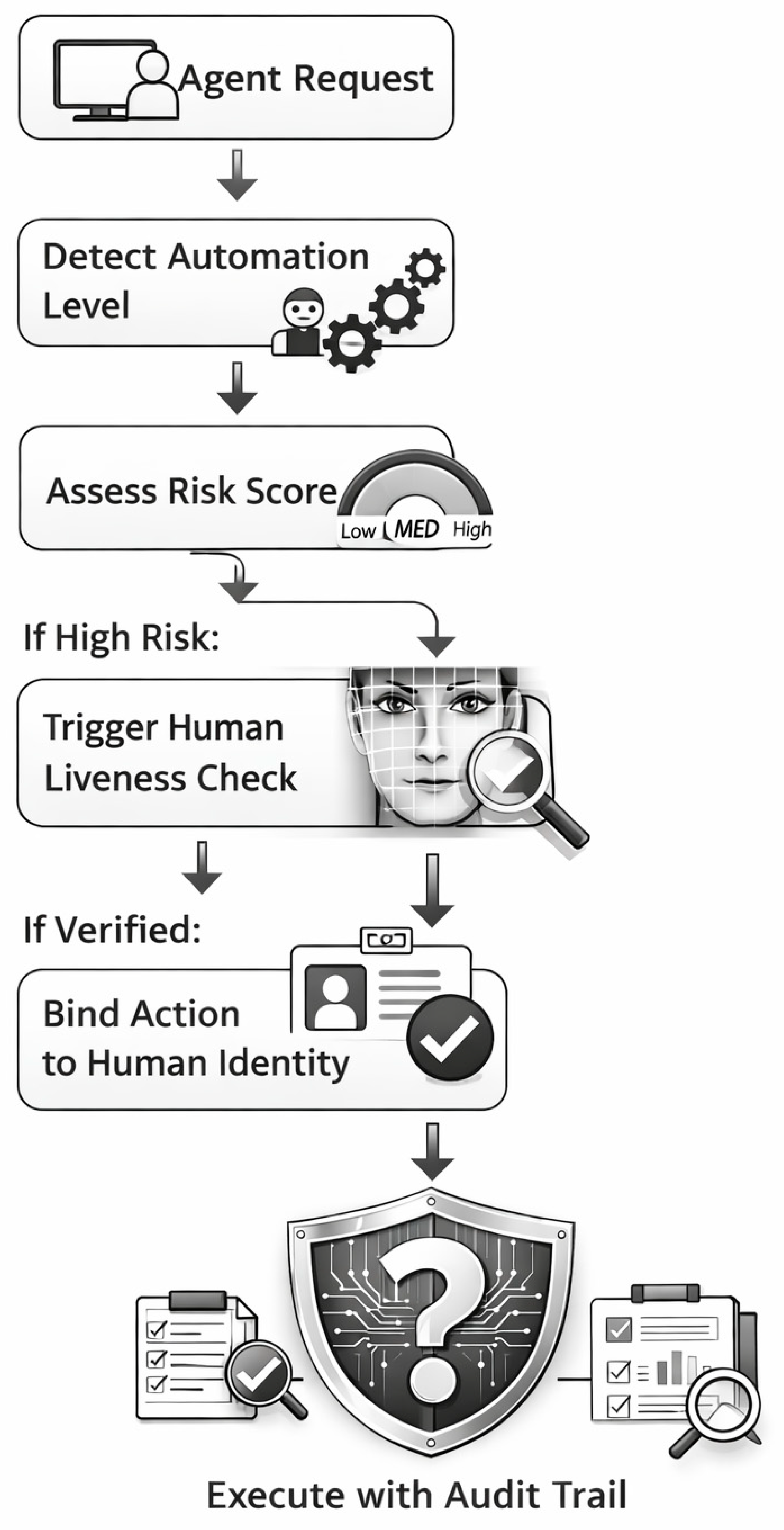

- Step-Up Authentication: When the risk assessment flags an operation as high-risk, the system requires real-time human validation. Akshat from Paysafe stated: “We place a human into the process whenever financial value that includes data transfer takes place” (Rasmussen, 2026, para. 14).

- Liveness Verification: The system employs CHARCHA protocols (ICI Innolabs, 2025) to verify live human presence during critical authorizations, which prevents deepfake agents from taking control.

- Authorization Binding: The agent’s actions are cryptographically tied to the human’s authorization and permanently recorded (BlockSec, 2025).
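
As a rough illustration of how explicit delegation and step-up authentication could interact, the sketch below checks an agent action against a delegation record. The `Delegation` fields, the `step_up_above` limit, and the three outcome strings are assumptions made for this example; the source does not specify them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Delegation:
    principal_id: str     # verified human principal who granted the delegation
    agent_id: str         # agent the delegation was issued to
    scopes: frozenset     # actions the principal explicitly delegated
    step_up_above: float  # hypothetical value limit that triggers a live human check


def authorize(d: Delegation, agent_id: str, action: str, amount: float,
              human_verified_live: bool = False) -> str:
    """Return 'allow', 'step_up', or 'deny' for one requested action."""
    # The action must come from the delegated agent and fall inside its scopes.
    if agent_id != d.agent_id or action not in d.scopes:
        return "deny"
    # High-value actions need a fresh liveness-verified human confirmation.
    if amount > d.step_up_above and not human_verified_live:
        return "step_up"
    return "allow"
```

A caller receiving `"step_up"` would run the liveness check and retry with `human_verified_live=True`; how that check is performed is outside this sketch.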

3.4. Layer 3: Behavioral Coherence Analysis

- Cross-Channel Coherence: Does the agent’s activity pattern match across channels? For example, if a user authenticates via mobile app and then via web, do the behavioral signatures align? Deepfake-driven attacks often show inconsistencies across channels (Fang, 2025).

- Temporal Coherence: Does the agent’s activity pattern align with human diurnal rhythms and task patterns? Malicious automation often exhibits unnatural temporal consistency (Vairagar & Babar, 2025).

- Identity Graph Coherence: Does the agent’s associated identity (email, phone, device, payment method) show deep history in identity consortiums? Legitimate agents represent humans with long-standing digital footprints; synthetic identities have shallow, constructed histories (Sumsub, 2026).

- Task-Intent Coherence: Does the agent’s behavior match the stated intent? A legitimate shopping agent browses and compares; a malicious bot heads directly to checkout (BlockSec, 2025).

- Behavioral Biometrics: Typing patterns, mouse movements, and navigation patterns provide distinctive signatures (Vairagar & Babar, 2025).

- Device Integrity Verification: Checking camera authenticity and detecting injected media sources (SecurityBrief Asia, 2026).

- Multimodal Fusion: Combining RGB, IR, and depth data for sophisticated attack detection (Bank Rakyat Indonesia & Telkom University, 2025).
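
One of these signals, temporal coherence, lends itself to a small worked example. The sketch below flags event streams whose inter-arrival gaps are almost perfectly regular, using the coefficient of variation as a simple proxy. The function names and the `cv_threshold` of 0.1 are illustrative assumptions, not values from the cited work, and a single signal like this would only ever be one input among the others listed above.

```python
from statistics import mean, pstdev


def interarrival_cv(timestamps):
    """Coefficient of variation of the gaps between consecutive events."""
    ts = sorted(timestamps)
    gaps = [b - a for a, b in zip(ts, ts[1:])]
    if len(gaps) < 2 or mean(gaps) == 0:
        return None  # too little data to say anything
    return pstdev(gaps) / mean(gaps)


def looks_machine_regular(timestamps, cv_threshold=0.1):
    """True when the event timing is suspiciously uniform."""
    cv = interarrival_cv(timestamps)
    return cv is not None and cv < cv_threshold
```

For example, events at exactly 10-second intervals give a coefficient of variation of zero and are flagged, while irregular gaps of a few seconds to over a minute are not.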

3.5. Layer 4: Transaction-Context Risk Scoring

| Factor | Low Risk | Medium Risk | High Risk |
|---|---|---|---|
| Transaction Value | < $100 | $100-$1,000 | > $1,000 |
| Data Sensitivity | Public | Personal | Financial/Medical |
| Action Type | Read-only | Update | Transfer/Delete |
| Agent History | Established | New | First-time |
| Human Binding | Direct | Delegated | None |
| Behavioral Anomaly | None | Minor | Significant |
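
The table can be read as a lookup from each factor to a risk level. The sketch below encodes it that way and, as an assumption of this example only, takes the overall risk to be the highest level of any single factor; the source does not state how factors are aggregated, and a weighted score would be an equally plausible choice. The key names are hypothetical.

```python
# Levels: 0 = low, 1 = medium, 2 = high, following the table above.
LEVELS = {
    "data_sensitivity": {"public": 0, "personal": 1, "financial": 2, "medical": 2},
    "action_type": {"read_only": 0, "update": 1, "transfer": 2, "delete": 2},
    "agent_history": {"established": 0, "new": 1, "first_time": 2},
    "human_binding": {"direct": 0, "delegated": 1, "none": 2},
    "behavioral_anomaly": {"none": 0, "minor": 1, "significant": 2},
}


def value_level(amount_usd: float) -> int:
    """Map a transaction value to a level using the table's thresholds."""
    if amount_usd < 100:
        return 0
    if amount_usd <= 1000:
        return 1
    return 2


def risk_levels(amount_usd: float, **factors: str) -> dict:
    """Per-factor levels for one transaction; unknown factor names raise KeyError."""
    levels = {"transaction_value": value_level(amount_usd)}
    for name, value in factors.items():
        levels[name] = LEVELS[name][value]
    return levels


def overall_risk(levels: dict) -> str:
    """Assumed aggregation: the worst single factor decides the tier."""
    return ("low", "medium", "high")[max(levels.values())]
```

Under this aggregation, a $50 transfer is still rated high because of its action type, which matches the intent of applying stricter authentication to sensitive actions regardless of value.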

4. Implementation Architecture

4.1. System Components

- Agent Identity Service: Manages cryptographic identities, credentials, and metadata for all registered agents.

- Human Binding Service: Verifies human identities through KYC integration, liveness detection, and delegation management.

- Behavioral Analytics Engine: Analyzes agent activity for normal patterns and irregularities, using machine learning models trained on both legitimate and malicious agent behavior.

- Deepfake Detection Service: Combines multiple detectors covering image, video, and audio content to detect injection attacks (Fang, 2025; SecurityBrief Asia, 2026).

- Risk Assessment Engine: Calculates trust scores and uses them to set authorization levels according to the transaction details.

- Audit & Compliance Service: Keeps immutable records of agent activities and human authorizations to meet regulatory requirements (BlockSec, 2025).

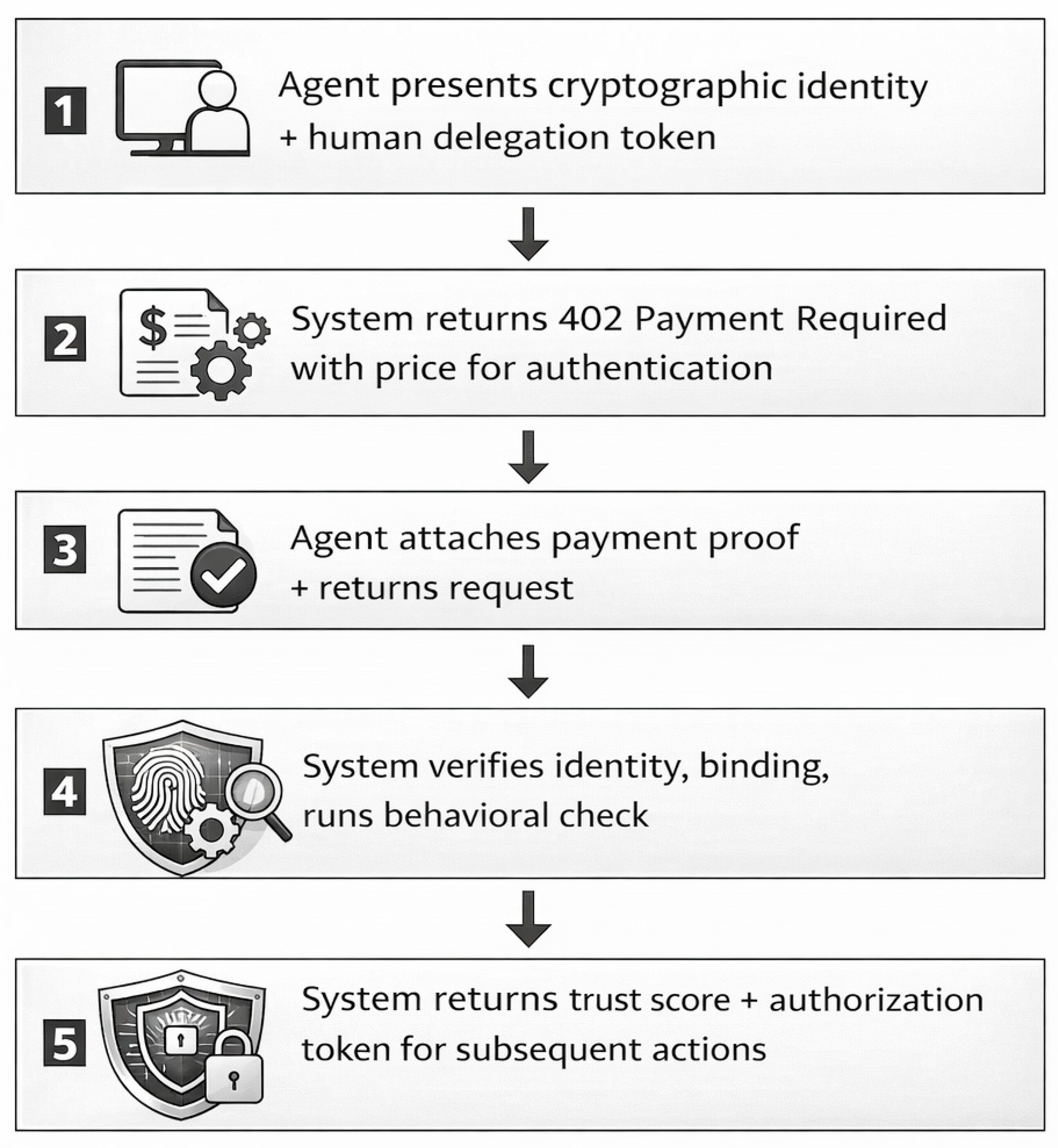

4.2. API Design for Agent-Native Integration

- Machine-Payable: Agents pay for individual requests through microtransactions, which removes the need for account creation and subscriptions.

- Stateless: Each request is an independent unit that carries cryptographic evidence of authorization.

- Permissionless: Agents can access services without pre-registration by using on-demand verification.

- Low-Latency: Responses are delivered within 100 ms, which enables real-time agent operations (BlockSec, 2025).
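
The stateless property can be sketched as a per-request signature that the server checks without any session lookup. The canonical string layout, the `MAX_SKEW_S` freshness window, and the function names below are assumptions for illustration; the source does not define a wire format, and an HMAC is used here only to keep the example within the standard library.

```python
import hashlib
import hmac

MAX_SKEW_S = 30  # hypothetical freshness window that limits replay


def sign_request(key: bytes, method: str, path: str, body: bytes, ts: int) -> str:
    """Sign the parts of a request that must not change in transit."""
    body_hash = hashlib.sha256(body).hexdigest()
    msg = "\n".join([method.upper(), path, body_hash, str(ts)]).encode()
    return hmac.new(key, msg, hashlib.sha256).hexdigest()


def verify_request(key: bytes, method: str, path: str, body: bytes,
                   ts: int, signature: str, now: int) -> bool:
    """Verify one request on its own: fresh timestamp and matching signature."""
    if abs(now - ts) > MAX_SKEW_S:
        return False
    expected = sign_request(key, method, path, body, ts)
    return hmac.compare_digest(expected, signature)
```

Because every input to the check travels with the request, any server replica holding the key can verify it, which is what makes the design stateless.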

4.3. Integration with Existing Identity Infrastructure

- KYC Systems: Human binding reuses existing KYC verification methods (Kubam, 2024).

- IAM Platforms: Organizations can manage agent identities within their existing enterprise identity frameworks (Microsoft, 2024).

- PKI/CA: Existing certificate authorities can issue the agents’ cryptographic credentials through established PKI (Internet Engineering Task Force, 2021).

- Blockchain: A blockchain ledger enables secure logging of suspicious transactions, which can then be audited transparently (BlockSec, 2025).
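
The auditable-logging idea can be approximated, without any particular blockchain, by a hash chain in which each entry commits to the one before it. The sketch below is such a simplification under stated assumptions: the entry layout, `GENESIS` value, and function names are hypothetical, and a real ledger would add signatures and distributed consensus.

```python
import hashlib
import json

GENESIS = "0" * 64  # hypothetical hash used before the first entry


def _digest(prev_hash: str, record: dict) -> str:
    data = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(data.encode()).hexdigest()


def append_entry(log: list, record: dict) -> None:
    """Append a record whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash,
                "hash": _digest(prev_hash, record)})


def verify_log(log: list) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Changing any stored record after the fact makes `verify_log` return `False`, which is the tamper-evidence property an auditor relies on.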

5. Evaluation and Validation

5.1. Experimental Design

- Security Effectiveness: Can the framework detect and block malicious agents while allowing legitimate ones? We measure the true positive rate, the true negative rate, and the time to detection, using legitimate agent activity datasets from deployed agent platforms and malicious activity datasets containing deepfake attacks and synthetic identities.

- Performance Overhead: What is the latency impact of multi-layer verification? We measure end-to-end authentication time across different trust score thresholds.

- User Experience Impact: What impact does step-up authentication have on legitimate users? We evaluate abandonment rates and completion times for high-risk transactions that require human liveness verification.
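
The security-effectiveness metrics follow from a confusion matrix over labeled agent sessions. The sketch below computes them from boolean labels, with `True` meaning malicious; the function name and the dictionary keys are choices made for this example, and the false rejection rate is taken to be the share of legitimate agents that are wrongly blocked.

```python
def detection_rates(y_true, y_pred):
    """Compute TPR, TNR, and false rejection rate; True means 'malicious'."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t and p)          # malicious, blocked
    fn = sum(1 for t, p in pairs if t and not p)      # malicious, missed
    tn = sum(1 for t, p in pairs if not t and not p)  # legitimate, allowed
    fp = sum(1 for t, p in pairs if not t and p)      # legitimate, blocked

    def ratio(num, den):
        return num / den if den else None  # undefined when a class is absent

    return {
        "tpr": ratio(tp, tp + fn),
        "tnr": ratio(tn, tn + fp),
        "false_rejection_rate": ratio(fp, tn + fp),
    }
```

With three malicious sessions of which two are caught, and five legitimate sessions of which one is blocked, this yields a true positive rate of 2/3, a true negative rate of 0.8, and a false rejection rate of 0.2.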

5.2. Expected Results

- Detection Accuracy: The system is expected to achieve 95% accuracy in distinguishing legitimate agents from fraudulent ones, based on Incode’s reported results (SecurityBrief Asia, 2026) and on Kubam’s (2024) deepfake detection performance, which reached 91.3% recall.

- Latency: Authentication is expected to complete in under 500 ms, with cached identities bringing authentication under 100 ms in subsequent sessions (BlockSec, 2025).

- False Positives: The system is expected to keep the false rejection rate for legitimate agents below 1%, an essential requirement for e-commerce platforms.

5.3. Comparison with Baseline

- Accountability: Every agent activity can be traced back to an authenticated human operator.

- Deepfake Resilience: Detection of both synthetic identities and deepfakes protects the system against synthetic identity attacks.

- Adaptive Security: Risk-based scoring determines how much verification is applied to each action.

- Regulatory Compliance: The system’s audit trails are designed to support the KYC and AML requirements set by regulatory authorities.

6. Discussion

6.1. Implications for E-Commerce and Payments

- Safe Agent Commerce: Allowing AI shopping agents without opening fraud vectors

- Reduced False Positives: Distinguishing good automation from bad

- Regulatory Compliance: Meeting PSD3, VAMP, and other emerging requirements

6.2. Privacy Considerations

- Minimize Data Collection: Collect only identity attributes necessary for verification

- Support Selective Disclosure: Agents should reveal only required identity claims

- Enable Privacy-Preserving Verification: Zero-knowledge proofs can verify attributes without revealing underlying data (BlockSec, 2025)

- Comply with Regulations: GDPR, CCPA, and similar frameworks require data minimization and user control
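
Selective disclosure can be illustrated with salted hash commitments, a simpler technique than the zero-knowledge proofs mentioned above and not a substitute for them. In this sketch an issuer would sign the digests (signing is omitted), the agent keeps the salts, and it reveals only the claims a verifier needs. All names and the claim format are assumptions for illustration.

```python
import hashlib
import secrets


def _h(salt: str, name: str, value: str) -> str:
    return hashlib.sha256(f"{salt}|{name}|{value}".encode()).hexdigest()


def commit_claims(claims: dict):
    """Return (digests, salts): digests can be published, salts stay with the holder."""
    salts = {name: secrets.token_hex(16) for name in claims}
    digests = {name: _h(salts[name], name, value) for name, value in claims.items()}
    return digests, salts


def disclose(claims: dict, salts: dict, names: list) -> dict:
    """Reveal only the selected claims, each with its salt."""
    return {name: (salts[name], claims[name]) for name in names}


def verify_disclosure(digests: dict, disclosed: dict) -> bool:
    """Check that every revealed claim matches its committed digest."""
    return all(digests.get(name) == _h(salt, name, value)
               for name, (salt, value) in disclosed.items())
```

The random salt prevents a verifier from guessing undisclosed values by hashing candidates, so claims that are not revealed stay hidden behind their digests.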

6.3. Limitations and Future Work

- Adversarial Adaptation: As detection improves, attackers will adapt. Continuous model updating is essential (Bank Rakyat Indonesia & Telkom University, 2025).

- Cross-Domain Generalization: Performance across different agent types and deployment contexts requires validation.

- Standardization: Industry-wide standards for agent identity and KYA protocols are needed.

- Regulatory Evolution: Legal frameworks for agent accountability are still developing.

6.4. The Path to Standardization

- Working Group Formation: Convene industry stakeholders (payment networks, identity providers, agent platforms) to define KYA standards.

- Reference Implementation: Develop an open-source implementation of the framework for community testing.

- Certification Program: Establish certification for compliant agents and verification services.

- Regulatory Engagement: Work with regulators to align the framework with emerging requirements.

7. Conclusions

Appendix A: Glossary of Terms

| Term | Definition |
|---|---|
| Agentic AI | Autonomous AI systems that act on behalf of humans to achieve goals |
| Deepfake | AI-generated synthetic media impersonating real people |
| Know Your Agent | Framework for verifying AI agent identity and accountability |
| Human Binding | Linking an AI agent to a verified human principal |
| Behavioral Coherence | Consistency across multiple dimensions of identity and activity |
| Machine Identity | Cryptographic credentials establishing an agent as a technical entity |
| Step-Up Authentication | Additional verification for high-risk actions |

Appendix B: Sample Trust Score Calculation

References

- Bank Rakyat Indonesia; Telkom University. Advancing secure face recognition payment systems: A systematic literature review. Information 2025, 16(7), 1–28.

- BlockSec. Agent-native crypto compliance: Build KYA/KYT with X402. BlockSec Blog. 12 November 2025. Available online: https://blocksec.com/blog/agent-native-compliance-x402.

- Fang, M. Based on multi-modal large model financial deepfake detection and prevention system and method (Chinese Patent No. CN120494850A); China National Intellectual Property Administration, 2025.

- Guo, X.; Liu, Y.; Jain, A.; Wang, Z. MAHA-Net: Multiscale attention and halo attention for deepfake detection. IEEE Transactions on Information Forensics and Security 2024, 19, 2345–2359.

- ICI Innolabs. CHARCHA: A revolutionary approach to combat deepfake threats; Innolabs AI Lens, 15 March 2025; Available online: https://innolabs.ai/charcha-deepfake-prevention.

- Internet Engineering Task Force. OAuth 2.0 for browser-based applications (RFC 8252). 2021. Available online: https://datatracker.ietf.org/doc/html/rfc8252.

- Kubam, C. S. Agentic AI microservice framework for deepfake and document fraud detection in KYC pipelines. Journal of Information Systems Engineering and Management 2024, 9(4), 1–15.

- Li, H.; Wang, Y.; Chen, X.; Zhang, L. Middle-shallow feature aggregation for multi-modal face anti-spoofing. Pattern Recognition 2023, 135, 109–123.

- Lu, J.; Zhang, W.; Liu, T. Heterogeneous Kernel-CNN for face anti-spoofing with reduced training complexity. Neural Computing and Applications 2024, 36, 8921–8935.

- MarketsandMarkets. AI orchestration market by component, deployment mode, organization size, vertical, and region - Global forecast to 2030; MarketsandMarkets Research Private Ltd, 2024.

- Microsoft. Microsoft identity platform and OAuth 2.0 client credentials flow. Microsoft Learn. 2024. Available online: https://learn.microsoft.com/en-us/entra/identity-platform/v2-oauth2-client-creds-grant-flow.

- Rasmussen, C. Securing AI in a highly regulated industry with Paysafe’s Chief Architect; Okta Newsroom, 12 February 2026; Available online: https://www.okta.com/newsroom/2026/securing-ai-paysafe.

- SecurityBrief Asia. Incode unveils Deepsight AI defence to combat deepfakes; SecurityBrief Asia, 22 January 2026; Available online: https://securitybrief.asia/story/incode-deepsight-ai-deepfake-defense.

- Sumsub. From AI agents to Know Your Agent: Why KYA is critical for secure autonomous AI; Sumsub Blog, 28 January 2026; Available online: https://sumsub.com/blog/know-your-agent-kya-ai-security.

- Vairagar, S.; Babar, V. Adaptive systems for fraud detection in financial transactions: A survey on multi-modal biometrics and real-time analytics. In Proceedings of the International Conference on Futuristic Trends in Networks and Computing Technologies; SciTePress, 2025; pp. 234–248.

- Veritas AI. Enterprise multi-agent orchestration platform for autonomous content verification [Computer software]. GitHub. 2025. Available online: https://github.com/veritas-ai/orchestration-platform.

- Wang, J.; Chen, Y.; Kim, S. Dynamic feature queue and progressive training for robust deepfake detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; 2024; pp. 4567–4576.

- Yu, L.; Zhang, H.; Chen, M. Vision transformer with masked autoencoder for multimodal anti-spoofing. IEEE Transactions on Biometrics, Behavior, and Identity Science 2024, 6(2), 178–191.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).