Submitted: 01 March 2026

Posted: 03 March 2026

Abstract

Keywords:

1. Introduction

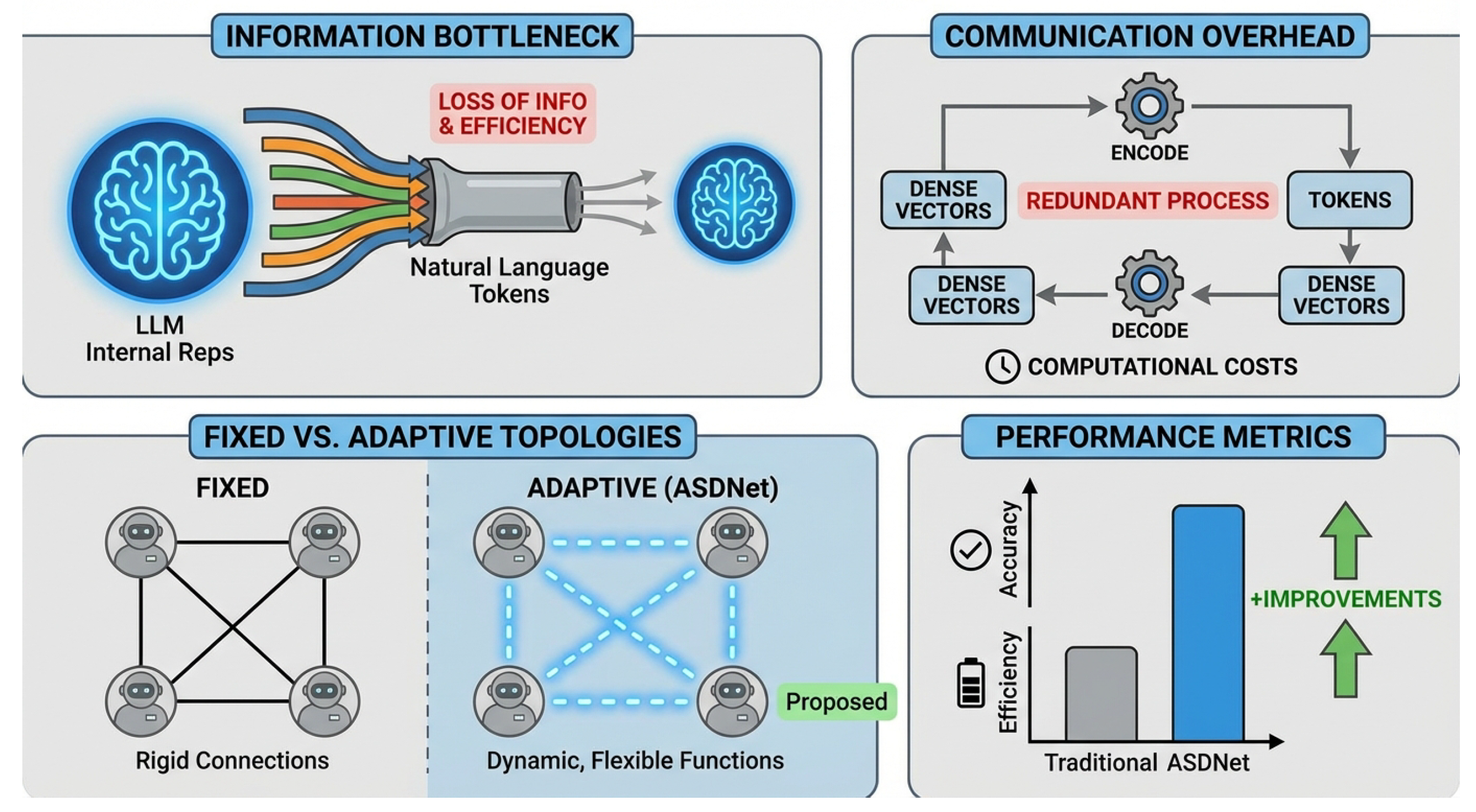

- We propose the Adaptive Sparse Dense Communication Network (ASDNet), a framework for multi-agent LLMs in which dense vector communication is both adaptive and sparse, addressing the limitations of fixed topologies and fixed transformations.

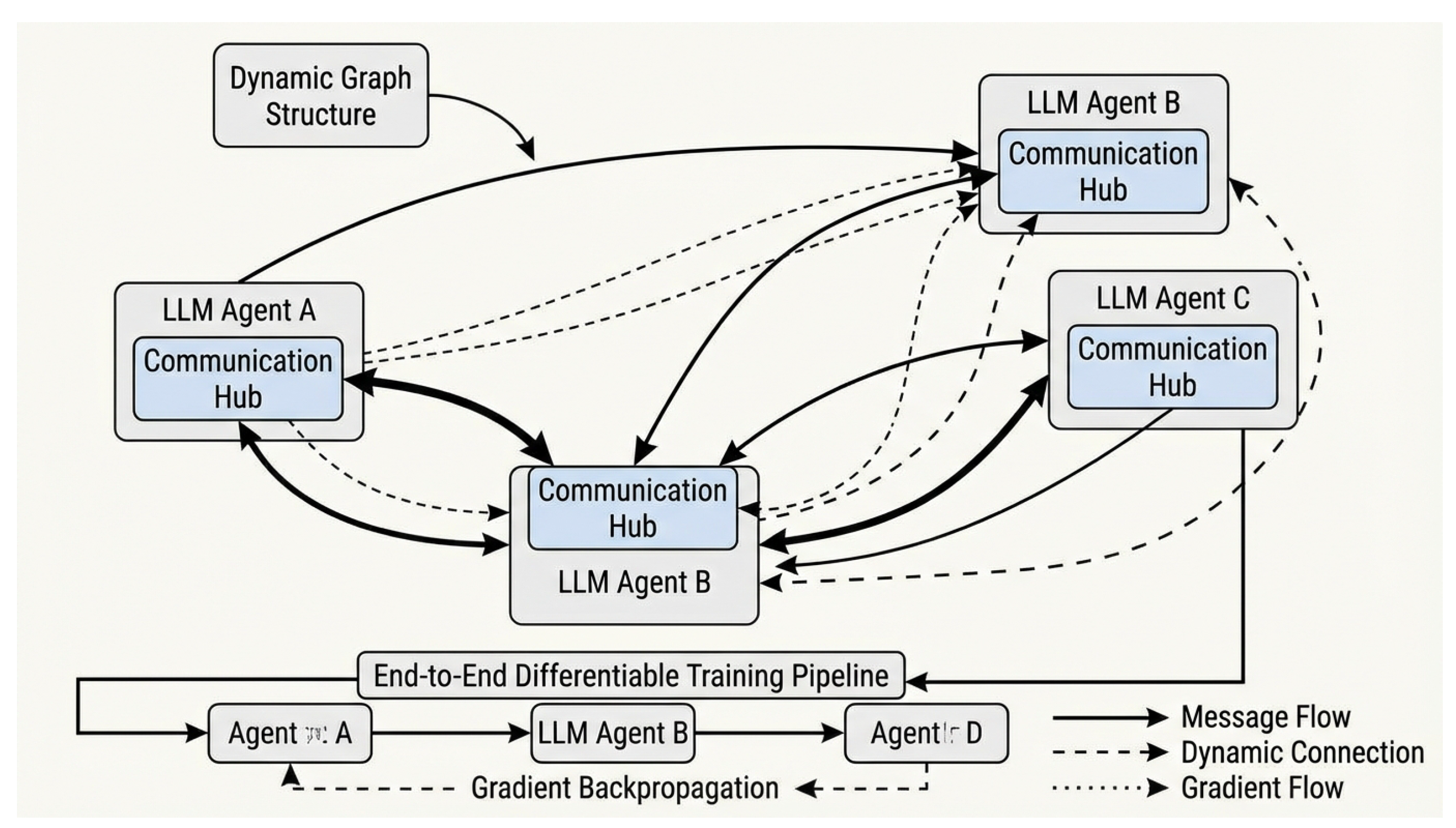

- We introduce the Communication Hub, a dynamic module within each agent that decides which agents to communicate with and selects or generates context-specific dense vector transformation functions, while keeping the entire communication process end-to-end differentiable.

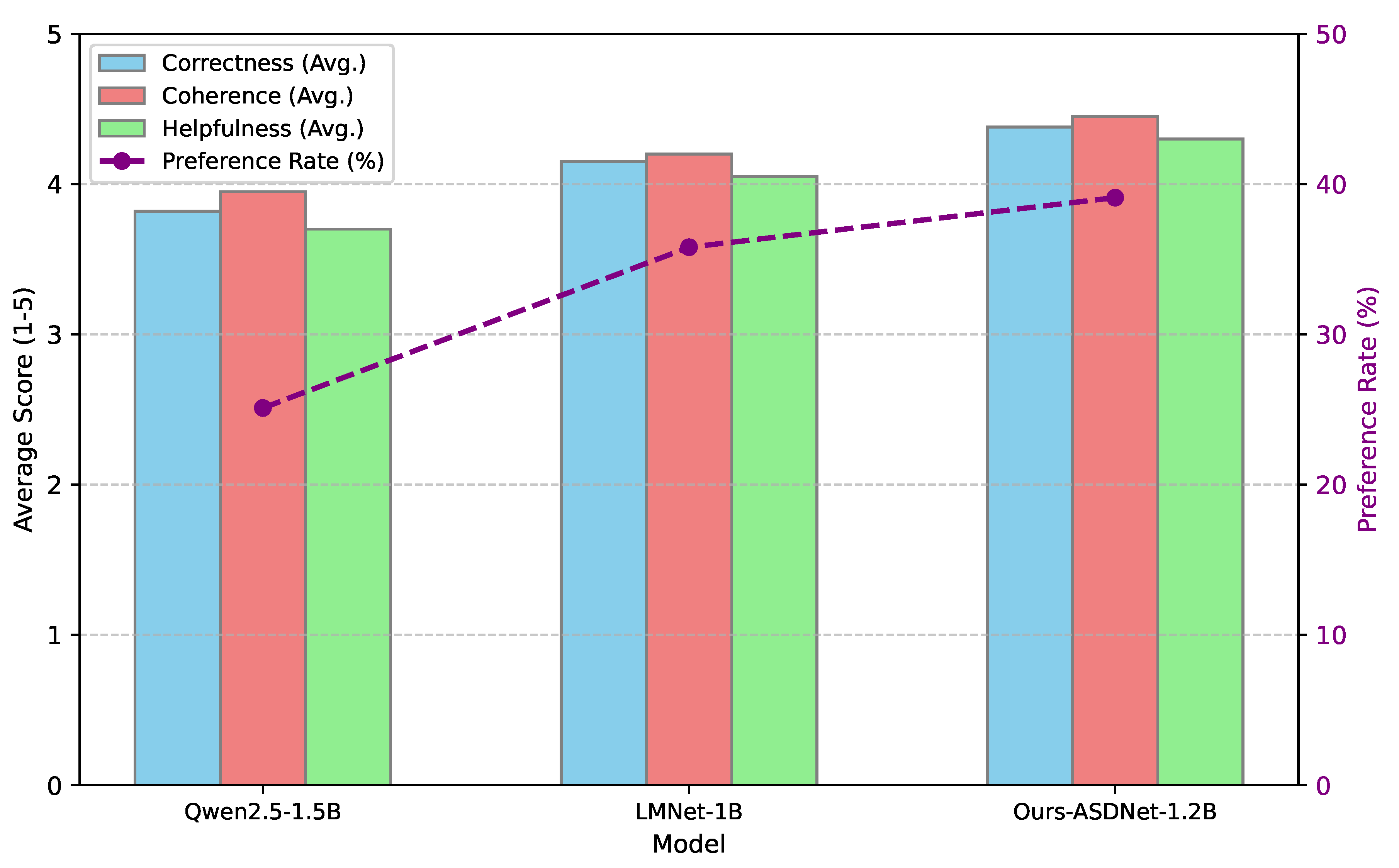

- We empirically show that ASDNet-1.2B outperforms the dense communication baseline LMNet-1B on all eleven reported benchmarks and is competitive with prominent open-source LLMs in the 1B to 3B range, while training on roughly 0.012T tokens.

2. Related Work

2.1. Multi-Agent LLM Systems and Communication Paradigms

2.2. Dense and Adaptive Communication in Neural Networks

3. Method

3.1. Overall Architecture

3.2. LLM Agents (Vertices)

3.3. The Communication Hub

3.3.1. Context Analysis and Communication Target Decision

3.3.2. Adaptive Transformation Function Selection/Generation

3.4. Sparse and Adaptive Dense Communication

3.5. End-to-End Differentiable Training

4. Experiments

4.1. Experimental Setup

4.1.1. ASDNet-1.2B Model Configuration

- Vertices (LLM Agents): We utilize shared-parameter Qwen2.5-0.5B Transformer backbones as the foundational LLM agents. These backbones are stripped of their embedding and de-embedding layers to enable direct dense vector communication, consistent with the approach outlined in [18].

- Communication Hub: Each vertex is augmented with a lightweight Communication Hub module comprising multi-layer perceptrons (MLPs) and a soft attention mechanism. The MLPs dynamically compute communication scores and parameterize the selection or generation of transformation functions. The soft attention mechanism assists in context analysis and dynamically weights messages.

- Transformation Function Library: Our library includes diverse dense vector transformation modules, such as linear projection layers of varying complexity, simple gated mechanisms, and compact Transformer layers. ASDNet-1.2B adopts a hybrid strategy: a soft attention mechanism first selects from a small, predefined set of general transformation types, and hypernetworks then generate lightweight linear projection parameters tailored to the chosen types.

- Communication Topology: The underlying potential communication graph has at most 5 layers with full intra-layer connectivity. The Communication Hub prunes this graph to a sparse subset at runtime, dynamically adapting the inter-agent connections.

- Total Parameters: ASDNet-1.2B has approximately 1.2 billion parameters in total, including the shared Qwen2.5-0.5B backbones, the communication hubs, and the transformation function library and hypernetwork parameters. This makes it slightly larger than LMNet-1B while remaining at the scale of other 1B to 3B LLMs.
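The routing and transformation steps described in the configuration above can be illustrated with a small, dependency-free sketch. It is not the authors' implementation: the scaled dot-product scorer stands in for the hub's MLPs, the three entries in `TRANSFORMS` are hypothetical placeholders for the transformation library, the value of `k` is arbitrary, and the hypernetwork step is omitted entirely.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def select_targets(sender_state, candidate_states, k):
    """Score every candidate recipient and keep only the top-k edges."""
    # Assumption: a scaled dot product replaces the hub's learned MLP scorer.
    scale = math.sqrt(len(sender_state))
    scores = [dot(sender_state, c) / scale for c in candidate_states]
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    weights = softmax([scores[i] for i in top])  # renormalise over kept edges
    return dict(zip(top, weights))

# Hypothetical transformation library: three simple vector-to-vector maps.
TRANSFORMS = {
    "identity": lambda v: list(v),
    "half_scale": lambda v: [0.5 * x for x in v],
    "tanh_gate": lambda v: [x * math.tanh(x) for x in v],
}

def transform_message(message, type_logits):
    """Soft attention over transformation types: a convex mix of their outputs."""
    names = list(TRANSFORMS)
    attn = softmax(type_logits)
    outputs = [TRANSFORMS[n](message) for n in names]
    mixed = [
        sum(a * out[d] for a, out in zip(attn, outputs))
        for d in range(len(message))
    ]
    return mixed, dict(zip(names, attn))

# Toy example: one sender, four potential recipients, two edges kept.
sender = [1.0, 0.0, -1.0, 0.5]
candidates = [
    [1.0, 0.0, -1.0, 0.5],
    [-1.0, 0.0, 1.0, -0.5],
    [0.5, 0.5, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
]
routes = select_targets(sender, candidates, k=2)
message, attn = transform_message(sender, type_logits=[2.0, 0.0, 0.0])
```

Here `routes` maps the indices of the two highest-scoring recipients to normalised edge weights, and `attn` holds the soft selection weights over transformation types. The hard top-k cut is used only for readability; because it is not differentiable with respect to the selection itself, an end-to-end trainable version would presumably need a soft or relaxed selection.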

4.1.2. Training Details

- Training Data: We employ a comprehensive mixture of publicly available datasets, mirroring the successful strategy of LMNet-1B [18]. This includes C4, Alpaca, ProsocialDialog, LaMini-instruction, as well as the training splits of MMLU, MATH, and GSM8K. This diverse dataset ensures robust general-purpose language understanding and reasoning capabilities.

- Training Strategy: Our training proceeds in two distinct phases to optimize both the communication strategy and overall performance:

  - Phase 1 (Communication Strategy Learning): The parameters of all LLM agent backbones (vertices) are frozen. Only the Communication Hub modules and the parameters of the transformation function library and hypernetworks are trained. This phase focuses on learning effective dynamic communication targets and adaptive transformation functions.

  - Phase 2 (End-to-End Joint Fine-tuning): All parameters of the ASDNet framework, including the LLM agent backbones, communication hubs, and transformation functions, are jointly fine-tuned with a standard autoregressive loss. This end-to-end differentiable optimization ensures that the communication protocols are optimized together with the agents' core inference processes.

- Optimization: We use the AdamW optimizer with a cosine learning rate scheduler, consistent with common practices in LLM training.
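The two-phase schedule and the cosine learning rate decay can be sketched as follows. This is a minimal illustration under stated assumptions: the group names in `GROUPS`, the peak learning rate, the step count, and the absence of warmup are all hypothetical, since the source reports none of these hyperparameters.

```python
import math

# Hypothetical parameter-group names; the source only names the three components.
GROUPS = ("backbone", "communication_hub", "transform_library")

def trainable_groups(phase):
    """Return which parameter groups receive gradient updates in each phase."""
    if phase == 1:
        # Phase 1: backbones frozen; hub and transformation parameters train.
        return {"backbone": False, "communication_hub": True, "transform_library": True}
    if phase == 2:
        # Phase 2: everything is fine-tuned jointly.
        return {name: True for name in GROUPS}
    raise ValueError(f"unknown phase: {phase}")

def cosine_lr(step, total_steps, peak_lr, min_lr=0.0):
    """Cosine decay from peak_lr at step 0 to min_lr at total_steps (no warmup)."""
    progress = min(max(step / total_steps, 0.0), 1.0)
    return min_lr + 0.5 * (peak_lr - min_lr) * (1.0 + math.cos(math.pi * progress))

phase1 = trainable_groups(1)
phase2 = trainable_groups(2)
# Assumed values for illustration only: 100 steps, peak learning rate 1e-4.
schedule = [cosine_lr(s, total_steps=100, peak_lr=1e-4) for s in (0, 50, 100)]
```

In a PyTorch setup, the phase 1 mask would typically be applied by setting `requires_grad = False` on the backbone parameters before constructing the optimizer, and the decay would be handled by a built-in cosine scheduler rather than a hand-written function.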

4.1.3. Evaluation Benchmarks and Baselines

- General Knowledge & Reasoning: MMLU, MMLU-Pro, Big-Bench Hard (BBH), ARC-Challenge (ARC-C), TruthfulQA, GPQA.

- Mathematical Reasoning: GSM8K, MATH.

- Code Generation: HumanEval, MBPP.

- STEM Knowledge: MMLU-STEM.

- Single LLMs: Qwen2.5-0.5B, Llama3.2-1B, Qwen2.5-1.5B, Gemma2-2B, Llama3.2-3B. These models represent strong standalone baselines that do not employ multi-agent communication.

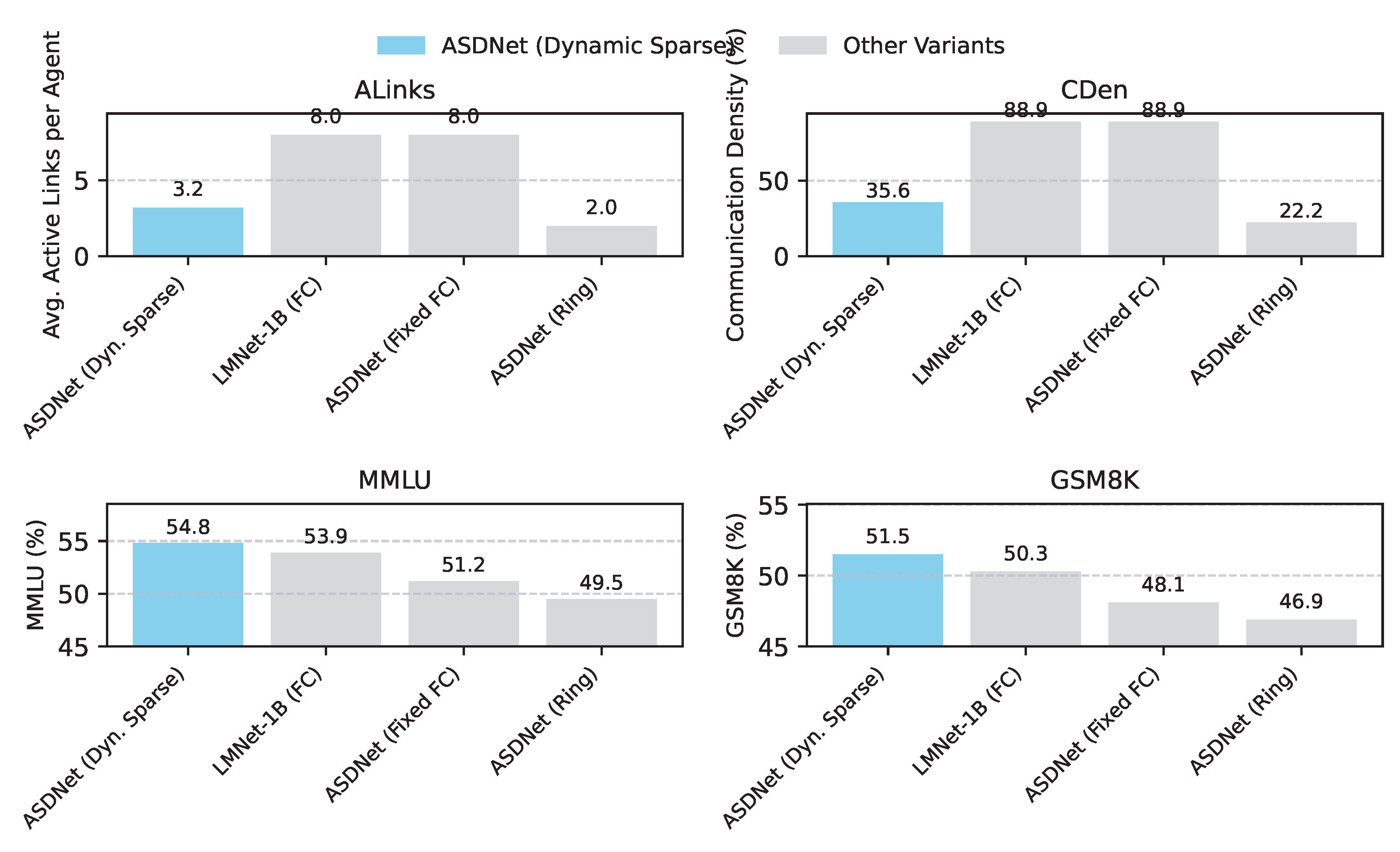

- Dense Communication Baseline: LMNet-1B [18], which is a direct predecessor exploring fixed-topology dense communication between LLMs. This serves as our primary comparative baseline for validating the improvements of adaptive and sparse communication.

4.2. Main Results: General Capability Extension

4.3. Ablation Study: Validating Adaptive Sparse Communication

- ASDNet w/o Dynamic Target (Fixed Topology): In this variant, the Communication Hub still performs adaptive transformations but is constrained to a predefined, fixed communication topology (e.g., a fully connected graph or a static ring structure). The ability to dynamically select communication targets is removed. As shown in Table 2, this leads to a performance drop across all benchmarks (e.g., 51.2% on MMLU compared to 54.8% for the full model). This confirms the importance of dynamic target decision-making for efficient and relevant information exchange.

- ASDNet w/o Adaptive Transform (Fixed Transform): Here, the Communication Hub maintains dynamic target selection but uses a single, fixed transformation function (e.g., a simple linear projection) for all messages, regardless of context or recipient. This variant achieves 52.5% on MMLU, higher than the fixed-topology variant (51.2%) but still below the full model (54.8%). This indicates that adapting the message transformation to context and recipient contributes to information utility and efficiency.

- ASDNet w/o Comm. Hub (Direct Dense): This setup simplifies the communication to a direct dense vector exchange between agents using a fixed topology and fixed transformation, essentially mimicking a more basic LMNet-like approach without the intelligence of the Communication Hub. This variant shows the lowest performance among all ablations (e.g., 50.5% on MMLU), underscoring the critical role of the Communication Hub in orchestrating effective multi-agent collaboration.
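To make the size of each ablation effect explicit, the drops relative to the full model can be computed directly from the Table 2 scores. The snippet below only restates that arithmetic; the dictionary keys are shortened variant names chosen for this illustration.

```python
# Scores copied from Table 2 (MMLU, GSM8K, BBH).
FULL = {"MMLU": 54.8, "GSM8K": 51.5, "BBH": 48.5}
ABLATIONS = {
    "w/o Dynamic Target": {"MMLU": 51.2, "GSM8K": 48.1, "BBH": 45.5},
    "w/o Adaptive Transform": {"MMLU": 52.5, "GSM8K": 49.3, "BBH": 46.8},
    "w/o Comm. Hub": {"MMLU": 50.5, "GSM8K": 47.6, "BBH": 44.9},
}

def drops(variant_scores):
    """Points lost relative to the full model, rounded to one decimal."""
    return {bench: round(FULL[bench] - score, 1) for bench, score in variant_scores.items()}

table = {name: drops(scores) for name, scores in ABLATIONS.items()}
for name, d in table.items():
    print(f"{name:24s}", d)
```

On these numbers, removing dynamic target selection costs more than removing adaptive transformation on all three benchmarks (3.6 vs. 2.3 points on MMLU). The two individual drops also sum to more than the drop from removing the hub entirely (5.9 vs. 4.3 points on MMLU), which suggests the two components are not simply additive.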

4.4. Case Study: Small Data Customization and Parameter Efficiency

4.5. Human Evaluation

4.6. Analysis of Communication Sparsity

4.7. Effectiveness of Adaptive Transformations

4.8. Computational Efficiency and Resource Usage

5. Conclusion

References

1. Zhou, Zixuan; de Melo, Maycon Leone; Rios, Tatiane Araújo. Toward multimodal agent intelligence: Perception, reasoning, generation and interaction. 2025.

2. Qian, Wenhan; Shang, Ziqu; Wen, Detang; Fu, Tongran. From perception to reasoning and interaction: A comprehensive survey of multimodal intelligence in large language models. Authorea Preprints 2025.

3. Jian, Pu; Yu, Donglei; Zhang, Jiajun. Large language models know what is key visual entity: An LLM-assisted multimodal retrieval for VQA. In Proceedings of the 2024 Conference on Empirical Methods in Natural Language Processing, 2024; pp. 10939–10956.

4. Jian, Pu; Yu, Donglei; Yang, Wen; Ren, Shuo; Zhang, Jiajun. Teaching vision-language models to ask: Resolving ambiguity in visual questions. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics, Volume 1, 2025; pp. 3619–3638.

5. Jian, Pu; Wu, Junhong; Sun, Wei; Wang, Chen; Ren, Shuo; Zhang, Jiajun. Look again, think slowly: Enhancing visual reflection in vision-language models. In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing, 2025; pp. 9262–9281.

6. Hoxha, Ardit; Shehu, Besnik; Kola, Erion; Koklukaya, Etem. A survey of generative video models as visual reasoners. 2026.

7. Wang, Tongxi; Xia, Zhuoyang. Stability of in-context learning: A spectral coverage perspective. 2026.

8. Yang, Ning; Lin, Hai; Liu, Yibo; Tian, Baoliang; Liu, Guoqing; Zhang, Haijun. Token-importance guided direct preference optimization. arXiv 2025, arXiv:2505.19653.

9. Huang, Sichong. Reinforcement learning with reward shaping for last-mile delivery dispatch efficiency. European Journal of Business, Economics & Management 2025, 1(4), 122–130.

10. Huang, Sichong. Prophet with exogenous variables for procurement demand prediction under market volatility. Journal of Computer Technology and Applied Mathematics 2025, 2(6), 15–20.

11. Liu, Wenwen. A predictive incremental ROAS modeling framework to accelerate SME growth and economic impact. Journal of Economic Theory and Business Management 2025, 2(6), 25–30.

12. Xu, Shuo; Cao, Yuchen; Wang, Zhongyan; Tian, Yexin. Fraud detection in online transactions: Toward hybrid supervised–unsupervised learning pipelines. In Proceedings of the 2025 6th International Conference on Electronic Communication and Artificial Intelligence (ICECAI 2025), Chengdu, China, 2025; pp. 20–22.

13. Wang, Tongxi. Fbs: Modeling native parallel reading inside a transformer. 2026.

14. Zhu, Peijun; Yang, Ning; Wei, Jiayu; Wu, Jinghang; Zhang, Haijun. Breaking the MoE LLM trilemma: Dynamic expert clustering with structured compression. arXiv 2025, arXiv:2510.02345.

15. Wang, Tongxi; Xia, Zhuoyang; Chen, Xinran; Liu, Shan. Tracking drift: Variation-aware entropy scheduling for non-stationary reinforcement learning. 2026.

16. Yang, Ning; Wang, Pengyu; Liu, Guoqing; Zhang, Haifeng; Lv, Pin; Wang, Jun. Proactive constrained policy optimization with preemptive penalty. arXiv 2025, arXiv:2508.01883.

17. Chen, Zhuo; Zhao, Haimei; Hao, Xiaoshuai; Yuan, Bo; Li, Xiu. Stvit+: improving self-supervised multi-camera depth estimation with spatial-temporal context and adversarial geometry regularization. Applied Intelligence 2025, 55(5), 328.

18. Wu, Shiguang; Wang, Yaqing; Yao, Quanming. Dense communication between language models. CoRR, 2025.

| Model | LMNet-1B | ASDNet-1.2B | Llama3.2-1B | Qwen2.5-1.5B | Gemma2-2B | Llama3.2-3B |
|---|---|---|---|---|---|---|
| # Tokens | 0.01T | 0.012T | 15T | 18T | 15T | 15T |
| MMLU | 53.9 | 54.8 | 32.2 | 60.9 | 52.2 | 58.0 |
| MMLU-Pro | 26.2 | 27.5 | 12.0 | 28.5 | 23.0 | 22.2 |
| BBH | 47.3 | 48.5 | 31.6 | 45.1 | 41.9 | 46.8 |
| ARC-C | 38.0 | 39.2 | 32.8 | 54.7 | 55.7 | 69.1 |
| TruthfulQA | 47.9 | 48.8 | 37.7 | 46.6 | 36.2 | 39.3 |
| GSM8K | 50.3 | 51.5 | 9.2 | 68.5 | 30.3 | 12.6 |
| MATH | 38.8 | 39.9 | - | 35.0 | 18.3 | - |
| GPQA | 25.6 | 26.1 | 7.6 | 24.2 | 25.3 | 6.9 |
| MMLU-STEM | 46.0 | 47.3 | 28.5 | 54.8 | 45.8 | 47.7 |
| HumanEval | 39.0 | 40.1 | - | 37.2 | 19.5 | - |
| MBPP | 45.8 | 46.7 | - | 60.2 | 42.1 | - |

| Model Variant | MMLU | GSM8K | BBH |
|---|---|---|---|
| ASDNet w/o Dynamic Target (Fixed Topology) | 51.2 | 48.1 | 45.5 |
| ASDNet w/o Adaptive Transform (Fixed Transform) | 52.5 | 49.3 | 46.8 |
| ASDNet w/o Comm. Hub (Direct Dense) | 50.5 | 47.6 | 44.9 |
| Ours-ASDNet (Full Model) | 54.8 | 51.5 | 48.5 |

| Model Variant (Transform Strategy) | MMLU | GSM8K | BBH | MMLU-STEM |
|---|---|---|---|---|
| ASDNet w/ FL | 52.5 | 49.3 | 46.8 | 45.1 |
| ASDNet w/ SO | 53.4 | 50.1 | 47.5 | 46.0 |
| ASDNet w/ GO | 54.1 | 50.8 | 48.0 | 46.7 |
| Ours-ASDNet (HS) | 54.8 | 51.5 | 48.5 | 47.3 |

| Model | P/T | Lat | Mem | FLOPs/Token (Relative) |
|---|---|---|---|---|
| Qwen2.5-1.5B | 1.5 | 45.2 | 8.1 | 1.00 |
| LMNet-1B | 1.0 | 58.7 | 10.5 | 1.35 |
| Ours-ASDNet-1.2B | 1.2 | 52.1 | 9.8 | 1.20 |
| Llama3.2-3B | 3.0 | 85.5 | 15.2 | 2.00 |
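The efficiency figures in the table above are easier to compare as relative changes. The sketch below computes percentage differences for ASDNet-1.2B against two reference rows. The source does not state units for the `Lat` and `Mem` columns, so only unit-free ratios are computed; the key names are shortened column labels chosen for this illustration.

```python
# Rows copied from the efficiency table; units are not stated in the source,
# so only unit-free ratios are computed.
ROWS = {
    "Qwen2.5-1.5B": {"Lat": 45.2, "Mem": 8.1, "FLOPs": 1.00},
    "LMNet-1B": {"Lat": 58.7, "Mem": 10.5, "FLOPs": 1.35},
    "ASDNet-1.2B": {"Lat": 52.1, "Mem": 9.8, "FLOPs": 1.20},
    "Llama3.2-3B": {"Lat": 85.5, "Mem": 15.2, "FLOPs": 2.00},
}

def percent_change(model, reference):
    """Percentage change of each column for `model` relative to `reference`."""
    return {
        col: round(100.0 * (ROWS[model][col] / ROWS[reference][col] - 1.0), 1)
        for col in ROWS[model]
    }

vs_lmnet = percent_change("ASDNet-1.2B", "LMNet-1B")
vs_qwen = percent_change("ASDNet-1.2B", "Qwen2.5-1.5B")
print("vs LMNet-1B:    ", vs_lmnet)
print("vs Qwen2.5-1.5B:", vs_qwen)
```

On the reported values, ASDNet-1.2B is roughly 11% lower than LMNet-1B in both `Lat` and relative FLOPs per token and about 7% lower in `Mem`, while remaining about 15% to 21% above the single-model Qwen2.5-1.5B on all three columns.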

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).