Submitted: 01 March 2026

Posted: 03 March 2026

Abstract

Keywords:

1. Introduction

2. Related Work

2.1. LLM-Based Multi-Agent Systems

2.2. Reasoning and Reflection in LLM Agents

2.3. Memory and Learning in Agent Systems

3. Methodology

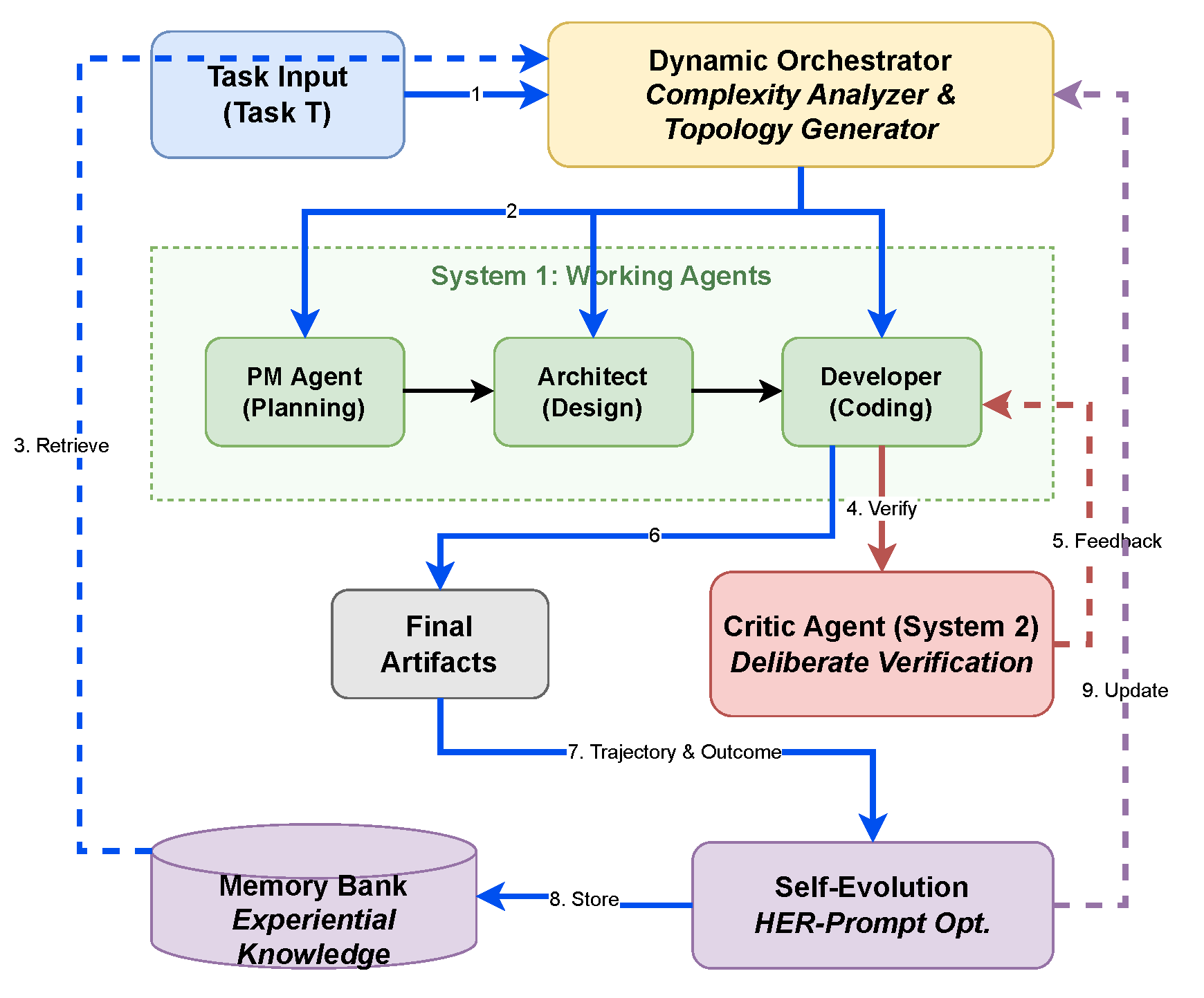

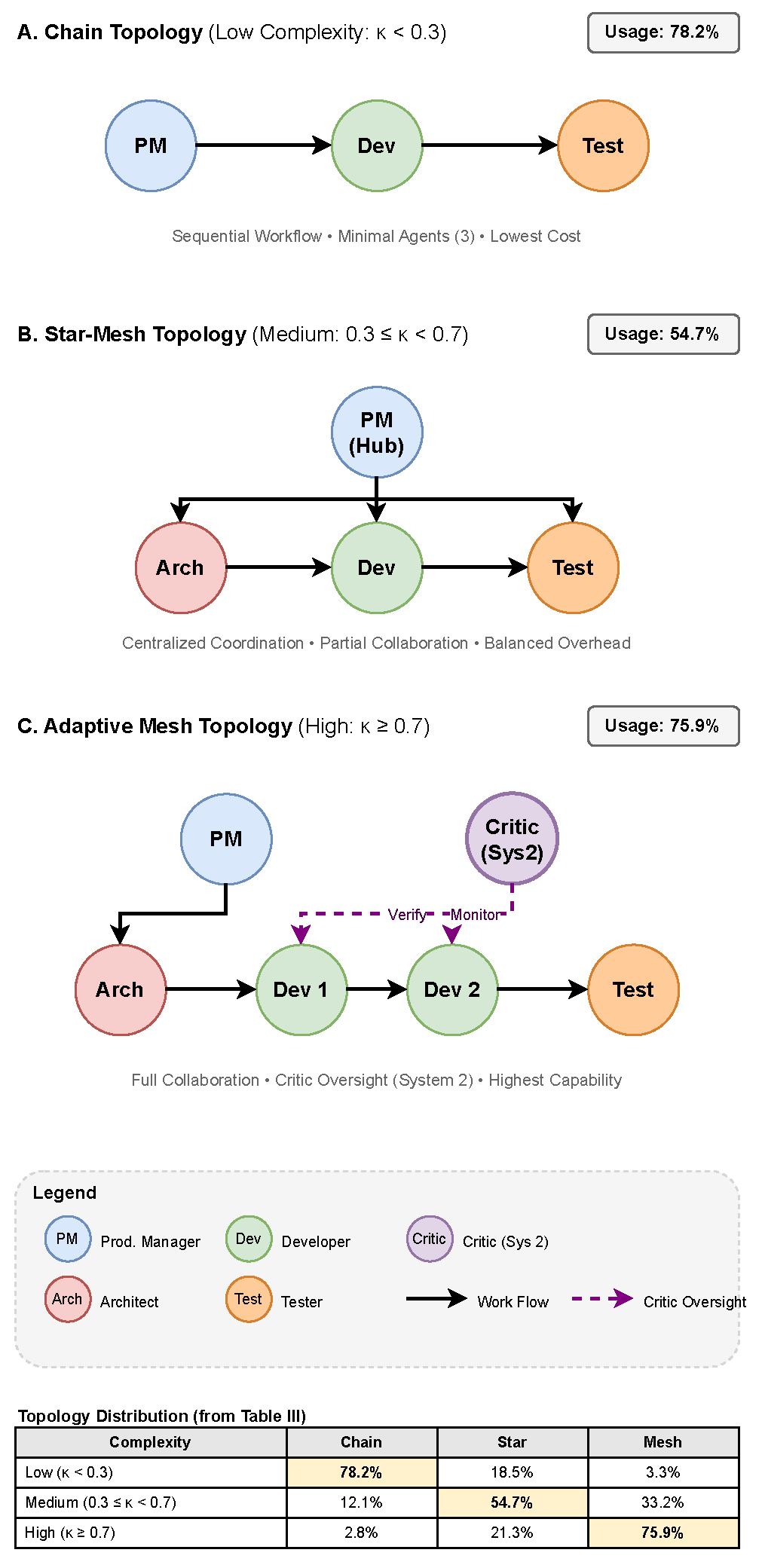

3.1. Dynamic Topology Generation

3.2. System 2 Reflection Module

3.3. Error-Driven Self-Evolution

3.4. Integration and Workflow

Algorithm 1 Eco-Evolve Framework

Require: Task T, Memory Bank, Base Prompts P

4. Experiments

4.1. Experimental Setup

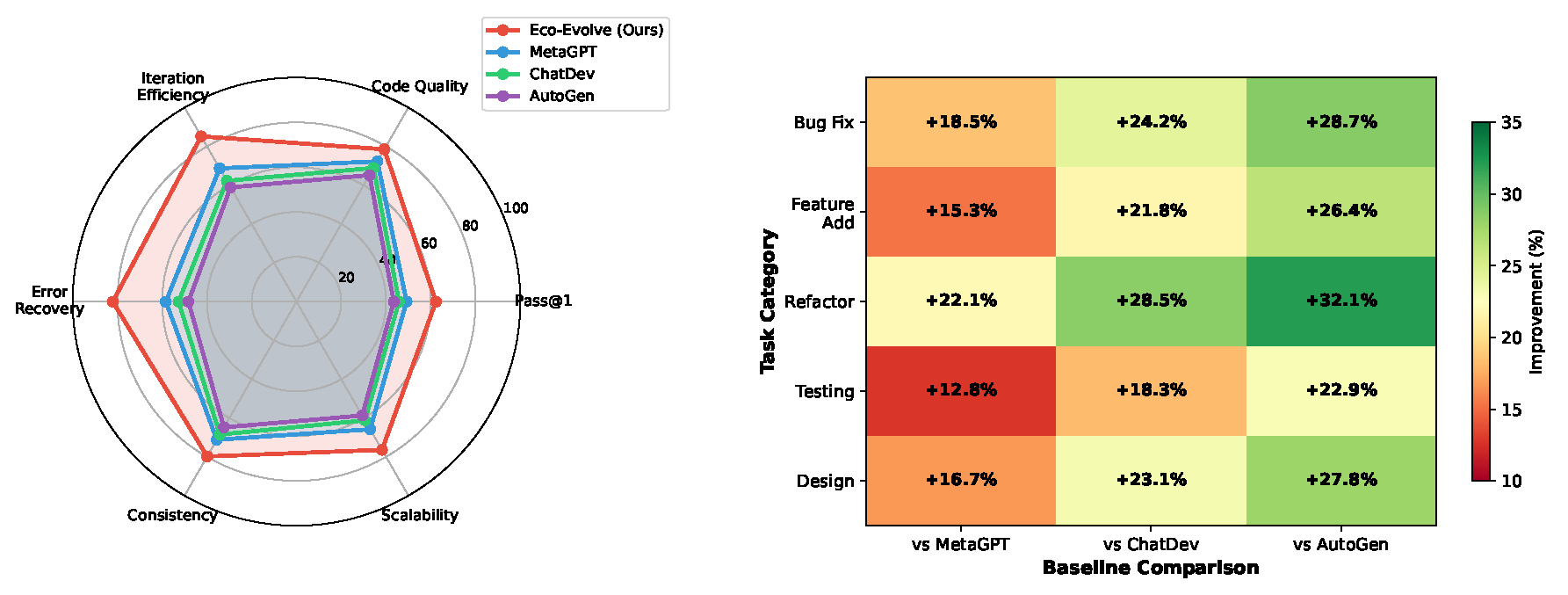

4.2. Evaluation Metrics

- Pass@1: The primary success rate metric indicating the percentage of tasks solved correctly on the first attempt.

- Code Quality: A composite score combining static analysis results (pylint/flake8 scores) and unit test coverage, normalized to [0, 100].

- Iteration Efficiency: The success rate achieved per 10k tokens consumed.

- Error Recovery: The fraction of initially failed cases that are successfully fixed after additional Critic-triggered iterations.

- Consistency: The standard deviation of success rates across three runs, inverted and normalized so that higher values indicate more stable results.

- Scalability: The performance retention ratio when task complexity increases from low to high.
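The metric descriptions above can be made concrete with a minimal sketch. The exact formulas are not given in the text, so every function below is an assumption that follows the prose definition as literally as possible: the function names, the `100 - std` normalization used for Consistency, and the example numbers are all hypothetical and are not the authors' definitions.

```python
from statistics import pstdev


def pass_at_1(first_attempt_passed: list[bool]) -> float:
    """Percentage of tasks solved correctly on the first attempt."""
    return 100.0 * sum(first_attempt_passed) / len(first_attempt_passed)


def iteration_efficiency(pass_rate_pct: float, avg_tokens_per_task: float) -> float:
    """Success rate per 10k tokens (assumed form: rate / (tokens / 10_000))."""
    return pass_rate_pct / (avg_tokens_per_task / 10_000)


def error_recovery(initially_failed: int, fixed_after_critic: int) -> float:
    """Fraction of initially failed cases fixed by later Critic-triggered iterations."""
    if initially_failed == 0:
        return 0.0  # nothing to recover; returning 0.0 here is an assumed convention
    return fixed_after_critic / initially_failed


def consistency(run_rates_pct: list[float]) -> float:
    """Assumed normalization: 100 minus the population std. dev. across runs."""
    return 100.0 - pstdev(run_rates_pct)


def scalability(high_complexity_rate_pct: float, low_complexity_rate_pct: float) -> float:
    """Performance retained when moving from low- to high-complexity tasks."""
    return high_complexity_rate_pct / low_complexity_rate_pct


if __name__ == "__main__":
    # Hypothetical inputs, chosen only to illustrate the arithmetic.
    print(pass_at_1([True, False, True, True]))
    print(iteration_efficiency(50.0, 20_000))
    print(error_recovery(initially_failed=20, fixed_after_critic=5))
    print(consistency([60.0, 60.0, 60.0]))
    print(scalability(30.0, 60.0))
```

Under these assumptions, a framework that solves 50% of tasks at 20k tokens per task scores 25 on Iteration Efficiency, and three identical runs give the maximum Consistency of 100.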

4.3. Main Results

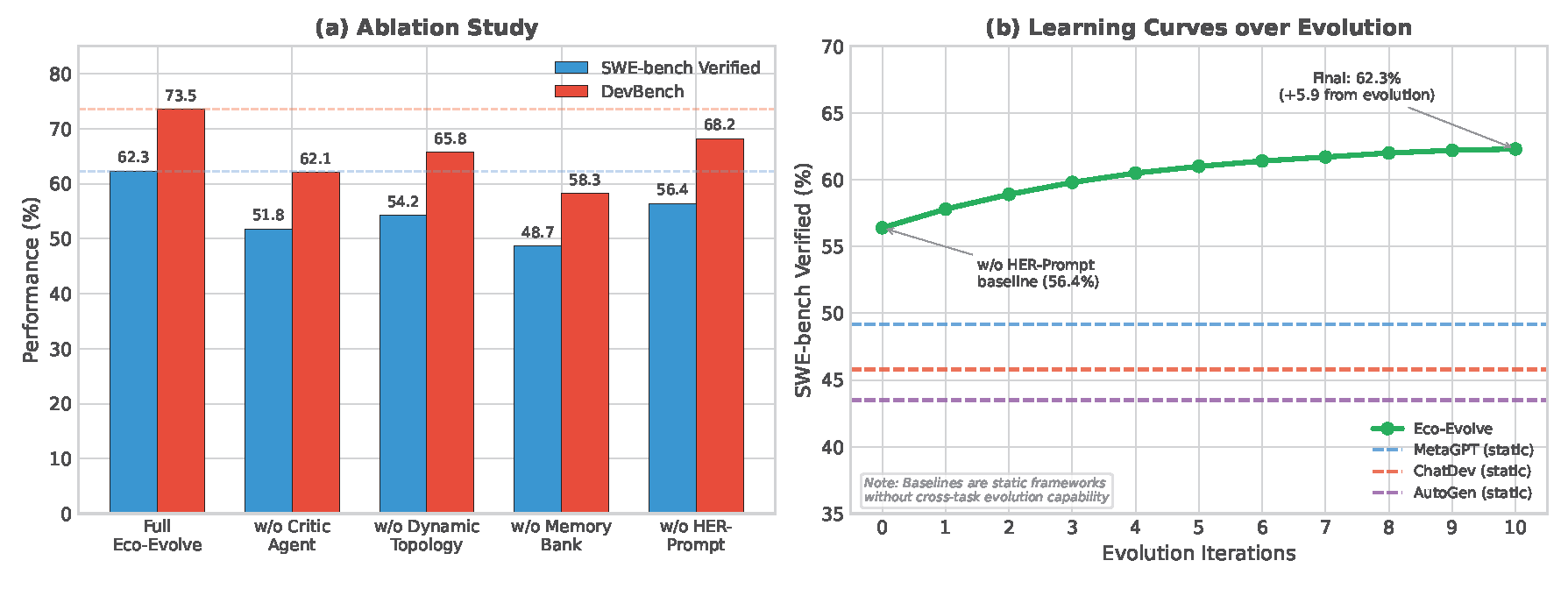

4.4. Ablation Study

4.5. Analysis of Dynamic Topology

4.6. Computational Overhead Analysis

4.7. Case Study: Error Recovery

4.8. Case Study: HER-Prompt Achievement Extraction

- Successfully implemented robust JSON parsing with proper exception handling

- Correctly validated all top-level and second-level schema fields

- Generated informative error messages following project conventions

5. Discussion

6. Conclusions

References

- Hong, S.; Zhuge, M.; Chen, J.; Zheng, X.; Cheng, Y.; Wang, J.; Zhang, C.; Wang, Z.; Yau, S.K.S.; Lin, Z.; et al. MetaGPT: Meta Programming for A Multi-Agent Collaborative Framework. In Proceedings of the International Conference on Learning Representations (ICLR), 2024.

- Qian, C.; Liu, W.; Liu, H.; Chen, N.; Dang, Y.; Li, J.; Yang, C.; Chen, W.; Su, Y.; Cong, X.; et al. ChatDev: Communicative Agents for Software Development. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (ACL), 2024, pp. 15174–15186.

- Wu, Q.; Bansal, G.; Zhang, J.; Wu, Y.; Zhang, S.; Zhu, E.; Li, B.; Jiang, L.; Zhang, X.; Wang, C. AutoGen: Enabling Next-Gen LLM Applications via Multi-Agent Conversation. arXiv preprint arXiv:2308.08155, 2023.

- Zhang, G.; Yue, Y.; Sun, X.; Wan, G.; Yu, M.; Fang, J.; Wang, K.; Chen, T.; Cheng, D. G-Designer: Architecting Multi-agent Communication Topologies via Graph Neural Networks. arXiv preprint arXiv:2410.11782, 2024.

- Kahneman, D. Thinking, Fast and Slow; Farrar, Straus and Giroux: New York, NY, USA, 2011.

- Andrychowicz, M.; Wolski, F.; Ray, A.; Schneider, J.; Fong, R.; Welinder, P.; McGrew, B.; Tobin, J.; Abbeel, P.; Zaremba, W. Hindsight Experience Replay. In Advances in Neural Information Processing Systems (NeurIPS), 2017, Vol. 30.

- Jimenez, C.E.; Yang, J.; Wettig, A.; Yao, S.; Pei, K.; Press, O.; Narasimhan, K.R. SWE-bench: Can Language Models Resolve Real-world GitHub Issues? In Proceedings of the International Conference on Learning Representations (ICLR), 2024.

- Li, B.; Wu, W.; Tang, Z.; Shi, L.; Yang, J.; Li, J.; Yao, S.; Qian, C.; Hui, B.; Zhang, Q.; et al. DevBench: A Comprehensive Benchmark for Software Development. arXiv preprint arXiv:2403.08604, 2024.

- Wei, J.; Wang, X.; Schuurmans, D.; Bosma, M.; Ichter, B.; Xia, F.; Chi, E.; Le, Q.; Zhou, D. Chain-of-Thought Prompting Elicits Reasoning in Large Language Models. In Advances in Neural Information Processing Systems (NeurIPS), 2022, Vol. 35, pp. 24824–24837.

- Yao, S.; Zhao, J.; Yu, D.; Du, N.; Shafran, I.; Narasimhan, K.; Cao, Y. ReAct: Synergizing Reasoning and Acting in Language Models. In Proceedings of the International Conference on Learning Representations (ICLR), 2023.

- Shinn, N.; Cassano, F.; Gopinath, A.; Narasimhan, K.; Yao, S. Reflexion: Language Agents with Verbal Reinforcement Learning. In Advances in Neural Information Processing Systems (NeurIPS), 2023, Vol. 36.

- Lewis, P.; Perez, E.; Piktus, A.; Petroni, F.; Karpukhin, V.; Goyal, N.; Küttler, H.; Lewis, M.; Yih, W.-t.; Rocktäschel, T.; et al. Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. In Advances in Neural Information Processing Systems (NeurIPS), 2020, Vol. 33, pp. 9459–9474.

Footnote 1: SWE-bench Verified is a curated subset of the original SWE-bench dataset, filtered by human annotators to ensure task clarity and solvability. We use this subset following recent evaluation practices in the field.

| Framework | SWE-bench (%) | DevBench (%) |
|---|---|---|
| AutoGen | 43.5 | 58.7 |
| ChatDev | 45.8 | 61.2 |
| MetaGPT | 49.2 | 64.1 |
| Eco-Evolve (Ours) | 62.3 | 73.5 |

| Configuration | SWE-bench (%) | DevBench (%) |
|---|---|---|
| Full Eco-Evolve | 62.3 | 73.5 |
| w/o Critic Agent | 51.8 (−10.5) | 62.1 (−11.4) |
| w/o Dynamic Topology | 54.2 (−8.1) | 65.8 (−7.7) |
| w/o Memory Bank | 48.7 (−13.6) | 58.3 (−15.2) |
| w/o HER-Prompt | 56.4 (−5.9) | 68.2 (−5.3) |
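The parenthesized deltas in the ablation table are differences from the full configuration, in percentage points. As a quick check, the short sketch below recomputes them from the table values and ranks the components by how much their removal hurts; the dictionary layout and function names are illustrative choices, not part of the framework.

```python
FULL = {"SWE-bench": 62.3, "DevBench": 73.5}

ABLATIONS = {
    "w/o Critic Agent": {"SWE-bench": 51.8, "DevBench": 62.1},
    "w/o Dynamic Topology": {"SWE-bench": 54.2, "DevBench": 65.8},
    "w/o Memory Bank": {"SWE-bench": 48.7, "DevBench": 58.3},
    "w/o HER-Prompt": {"SWE-bench": 56.4, "DevBench": 68.2},
}


def deltas(full: dict[str, float], ablated: dict[str, float]) -> dict[str, float]:
    """Change in success rate (percentage points) relative to the full system."""
    return {bench: round(ablated[bench] - full[bench], 1) for bench in full}


def rank_by_drop(benchmark: str) -> list[str]:
    """Ablated configurations ordered from largest to smallest drop."""
    return sorted(ABLATIONS, key=lambda name: deltas(FULL, ABLATIONS[name])[benchmark])


if __name__ == "__main__":
    for name, scores in ABLATIONS.items():
        print(name, deltas(FULL, scores))
    print(rank_by_drop("SWE-bench"))
```

On both benchmarks the ordering is the same: removing the Memory Bank costs the most, followed by the Critic Agent, Dynamic Topology, and HER-Prompt.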

| Complexity | Chain (%) | Star (%) | Mesh (%) |
|---|---|---|---|
| Low | 78.2 | 18.5 | 3.3 |
| Medium | 12.1 | 54.7 | 33.2 |
| High | 2.8 | 21.3 | 75.9 |

| Framework | Avg. Tokens/Task | Relative Cost |
|---|---|---|
| AutoGen | 18.2k | 0.92× |
| ChatDev | 21.5k | 1.09× |
| MetaGPT | 17.6k | 0.89× |
| Eco-Evolve | 19.7k | 1.00× |
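The Relative Cost column appears to be each framework's average token count divided by that of Eco-Evolve. The sketch below reproduces the column under that assumption; the variable and function names are illustrative.

```python
# Average tokens per task, in thousands, taken from the table above.
AVG_TOKENS_K = {
    "AutoGen": 18.2,
    "ChatDev": 21.5,
    "MetaGPT": 17.6,
    "Eco-Evolve": 19.7,
}


def relative_cost(tokens: dict[str, float], baseline: str = "Eco-Evolve") -> dict[str, float]:
    """Token usage of each framework as a multiple of the baseline's usage."""
    base = tokens[baseline]
    return {name: round(value / base, 2) for name, value in tokens.items()}


if __name__ == "__main__":
    for name, ratio in relative_cost(AVG_TOKENS_K).items():
        print(f"{name}: {ratio:.2f}x")
```

Rounded to two decimals, the ratios match the table: Eco-Evolve uses about 8% more tokens than AutoGen and about 12% more than MetaGPT (1/0.92 and 1/0.89), and about 8% fewer than ChatDev (1/1.09).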

© 2026 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).