Submitted: 01 March 2026

Posted: 03 March 2026

Abstract

Keywords:

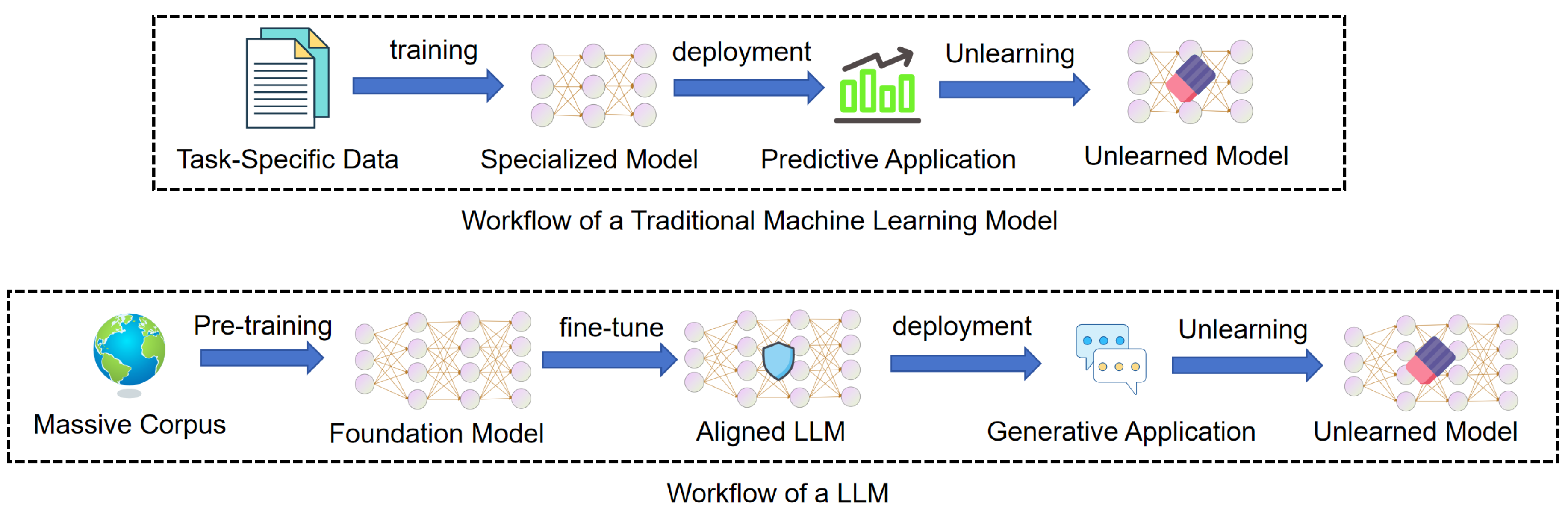

1. Introduction

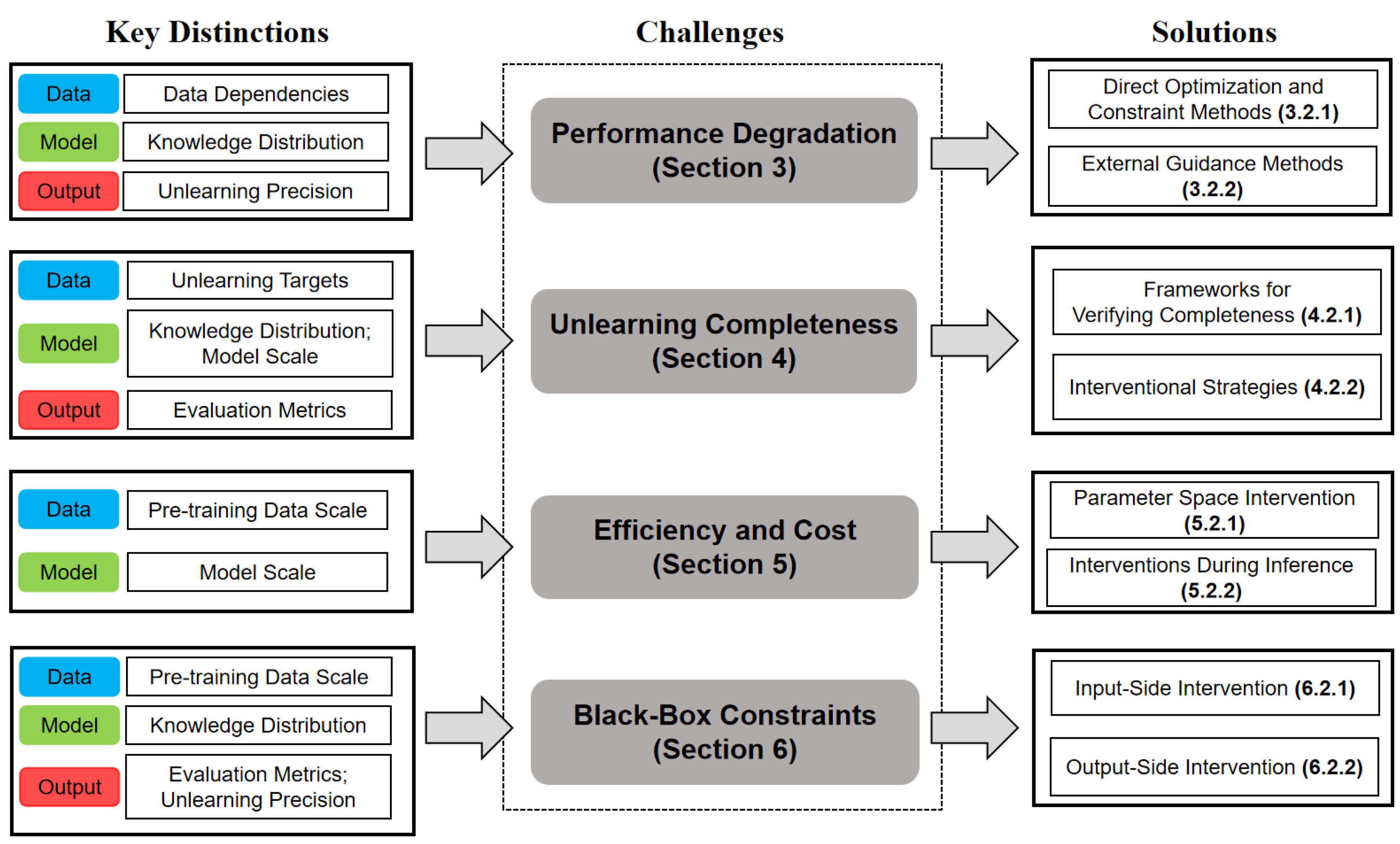

- We conduct a comprehensive comparison between machine unlearning in traditional models and LLMs across data, model, and output dimensions to highlight the unique challenges specific to LLMs.

- Based on this comparative analysis, we propose a taxonomy organized around four core implementation challenges: performance degradation, unlearning completeness, efficiency and cost, and black-box constraints.

- We review existing methodologies using this taxonomy and evaluate how current techniques address these constraints.

- Finally, we use this analysis to identify critical research gaps within each challenge and outline a clear direction for future investigation.

2. Machine Unlearning in LLMs and Traditional Models

2.1. Foundations of Machine Unlearning

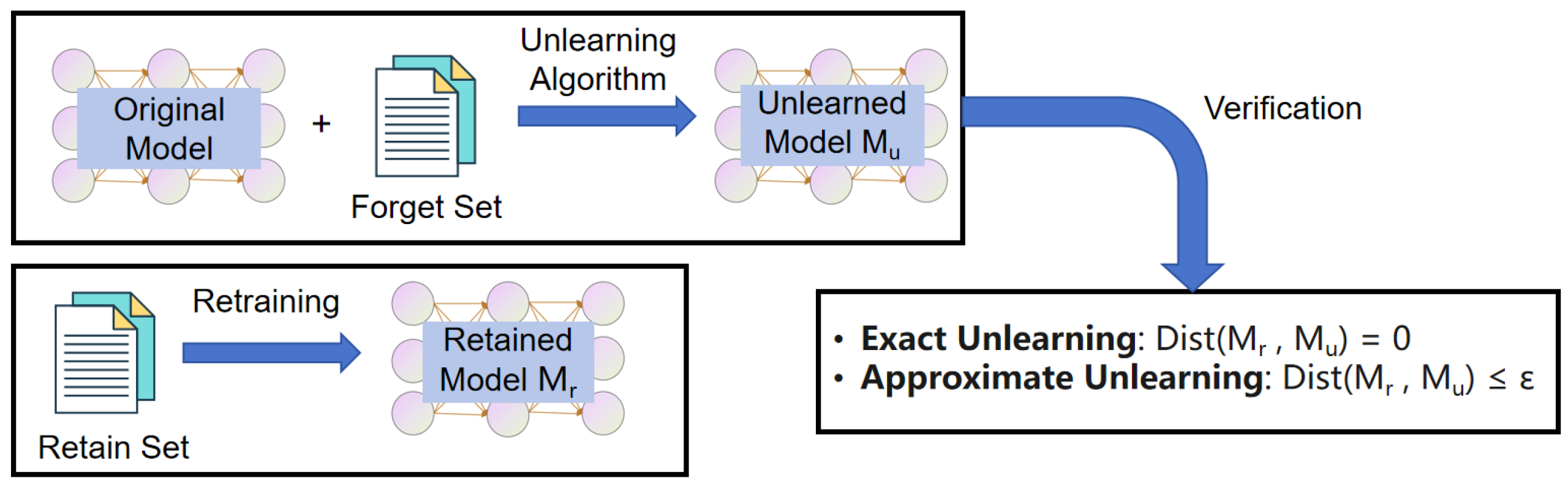

2.1.1. Machine Unlearning

2.1.2. Exact and Approximate Unlearning

2.1.3. Machine Unlearning Methods for Traditional Models

2.1.4. Machine Unlearning Methods for LLMs

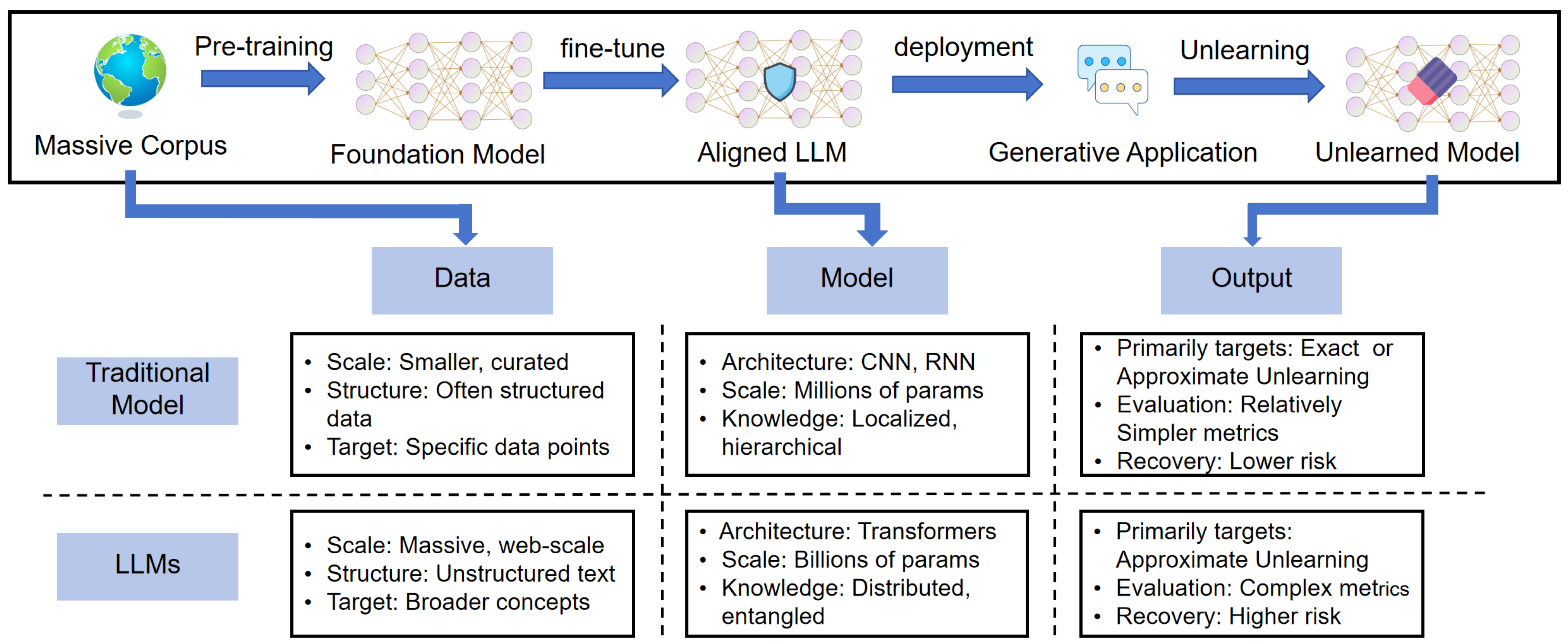

2.2. Key Differences Between LLM Unlearning and Traditional Machine Unlearning

2.2.1. Data

2.2.2. Model

2.2.3. Output

2.3. Core Challenges in LLM Unlearning

2.3.1. Performance Degradation

2.3.2. Unlearning Completeness

2.3.3. Efficiency and Cost

2.3.4. Black-Box Constraints

2.4. Summary

3. Challenge of Performance Degradation

3.1. Methodologies for Mitigating Performance Degradation

3.1.1. Direct Optimization and Constraint Methods

3.1.2. External Guidance Methods

3.2. Summary

4. Challenge of Unlearning Completeness

4.1. Methodologies for Verifying and Enhancing Completeness

4.1.1. Frameworks for Verifying the Completeness of Unlearning

4.1.2. Interventional Strategies

4.2. Summary

5. Challenge of Efficiency and Cost

5.1. Methodologies for Improving Efficiency and Cost

5.1.1. Parameter Space Intervention

5.1.2. Interventions During Inference

5.2. Summary

6. Challenge of Black-Box Constraints

6.1. Methodologies for Black-Box Intervention and Auditing

6.1.1. Input-Side Intervention

6.1.2. Output-Side Intervention

6.2. Summary

7. Future Directions

7.1. Performance Degradation

7.2. Unlearning Completeness

7.3. Efficiency and Cost

7.4. Black-Box Constraints

8. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- OpenAI. GPT-4 Technical Report. arXiv 2023, arXiv:2303.08774. [Google Scholar] [CrossRef]

- Devlin, J.; Chang, M.; Lee, K.; Toutanova, K. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. In Proceedings of the Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (2019), 2019; pp. 4171–4186. [Google Scholar] [CrossRef]

- Anil, R.; et al. PaLM 2 Technical Report. arXiv 2023. arXiv:2305.10403. [CrossRef]

- DeepSeek-AI. DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning. arXiv 2025. arXiv:2501.12948.

- Feldman, V. Does Learning Require Memorization? A Short Tale About a Long Tail. In Proceedings of the ACM SIGACT Symposium on Theory of Computing (2020), 2020; pp. 954–959. [Google Scholar] [CrossRef]

- Carlini, N.; Liu, C.; Erlingsson, Ú.; Kos, J.; Song, D. The Secret Sharer: Evaluating and Testing Unintended Memorization in Neural Networks. In Proceedings of the USENIX Security Symposium (2019), 2019; pp. 267–284. [Google Scholar]

- General Data Protection Regulation (GDPR), 2018. Online.

- California Consumer Privacy Act (CCPA), 2018. Online.

- Japan - Data Protection Overview (JDPO), 2019. Online.

- Consumer Privacy Protection Act (CPPA), 2022. Online.

- Cao, Y.; Yang, J. Towards Making Systems Forget with Machine Unlearning. In Proceedings of the IEEE Symposium on Security and Privacy (2015), 2015; pp. 463–480. [Google Scholar] [CrossRef]

- Liu, S.; Yao, Y.; Jia, J.; Casper, S.; Baracaldo, N.; Hase, P.; Yao, Y.; Liu, C.Y.; Xu, X.; Li, H.; et al. Rethinking Machine Unlearning for Large Language Models. Nature Machine Intelligence 2025, 7, 181–194. [Google Scholar] [CrossRef]

- Xu, H.; Zhu, T.; Zhang, L.; Zhou, W.; Yu, P.S. Machine Unlearning: A Survey. ACM Computing Surveys 2024, 56, 9:1–9:36. [Google Scholar] [CrossRef]

- Liu, H.; Xiong, P.; Zhu, T.; Yu, P.S. A Survey on Machine Unlearning: Techniques and New Emerged Privacy Risks. Journal of Information Security and Applications 2025, 90, 104010. [Google Scholar] [CrossRef]

- Wang, S.; Zhu, T.; Liu, B.; Ding, M.; Ye, D.; Zhou, W.; Yu, P.S. Unique Security and Privacy Threats of Large Language Models: A Comprehensive Survey. ACM Computing Surveys 2026, 58, 83:1–83:36. [Google Scholar] [CrossRef]

- Eldan, R.; Russinovich, M. Who’s Harry Potter? Approximate Unlearning in LLMs. arXiv 2023. arXiv:2310.02238.

- Doshi, J.; Stickland, A.C. Does Unlearning Truly Unlearn? A Black Box Evaluation of LLM Unlearning Methods. arXiv 2024. arXiv:2411.12103. [CrossRef]

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention Is All You Need. In Proceedings of the Advances in Neural Information Processing Systems (2017), 2017; pp. 5998–6008. [Google Scholar]

- Tian, C.; Qin, X.; Tam, K.; Li, L.; Wang, Z.; Zhao, Y.; Zhang, M.; Xu, C. CLONE: Customizing LLMs for Efficient Latency-Aware Inference at the Edge. Proceedings of the USENIX Annual Technical Conference (2025) 2025, 563–585. [Google Scholar]

- Bai, Y.; Mei, J.; Yuille, A.L.; Xie, C. Are Transformers More Robust Than CNNs? Proceedings of the Advances in Neural Information Processing Systems 2021, 34, 26831–26843. [Google Scholar]

- Brown, T.B.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; et al. Language Models Are Few-Shot Learners. In Proceedings of the Advances in Neural Information Processing Systems (2020), 2020. [Google Scholar]

- Gundla, K.; Martha, S.; Panigrahy, A.K. Toward Explainable AI in Satellite Imagery: A ResNet-50-Based Study on EuroSAT Classification. IEEE Access 2025, 13, 165900–165908. [Google Scholar] [CrossRef]

- Zeiler, M.D.; Fergus, R. Visualizing and Understanding Convolutional Networks. In Proceedings of the European Conference on Computer Vision (2014), 2014; pp. 818–833. [Google Scholar] [CrossRef]

- Liu, M.; Shi, J.; Li, Z.; Li, C.; Zhu, J.; Liu, S. Towards Better Analysis of Deep Convolutional Neural Networks. IEEE Transactions on Visualization and Computer Graphics 2017, 23, 91–100. [Google Scholar] [CrossRef]

- Clark, K.; Khandelwal, U.; Levy, O.; Manning, C.D. What Does BERT Look at? An Analysis of BERT’s Attention. In Proceedings of the ACL Workshop BlackboxNLP (2019), 2019; pp. 276–286. [Google Scholar] [CrossRef]

- Bourtoule, L.; Chandrasekaran, V.; Choquette-Choo, C.A.; Jia, H.; Travers, A.; Zhang, B.; Lie, D.; Papernot, N. Machine Unlearning. In Proceedings of the IEEE Symposium on Security and Privacy (2021), 2021; pp. 141–159. [Google Scholar] [CrossRef]

- Chen, K.; Huang, Y.; Wang, Y. Machine Unlearning via GAN. arXiv 2021. arXiv:2111.11869. [CrossRef]

- Warnecke, A.; Pirch, L.; Wressnegger, C.; Rieck, K. Machine Unlearning of Features and Labels. In Proceedings of the Network and Distributed System Security Symposium (2023), 2023. [Google Scholar] [CrossRef]

- Maini, P.; Feng, Z.; Schwarzschild, A.; Lipton, Z.C.; Kolter, J.Z. TOFU: A Task of Fictitious Unlearning for LLMs. In Proceedings of the Advances in Neural Information Processing Systems (2024), 2024. [Google Scholar]

- Shi, W.; Lee, J.; Huang, Y.; Malladi, S.; Zhao, J.; Holtzman, A.; Liu, D.; Zettlemoyer, L.; Smith, N.A.; Zhang, C. MUSE: Machine Unlearning Six-Way Evaluation for Language Models. In Proceedings of the International Conference on Learning Representations (2025), 2025. [Google Scholar]

- Blanco-Justicia, A.; Jebreel, N.; Manzanares-Salor, B.; Sánchez, D.; Domingo-Ferrer, J.; Collell, G.; Tan, K.E. Digital Forgetting in Large Language Models: A Survey of Unlearning Methods. Artificial Intelligence Review 2025, 58, 90. [Google Scholar] [CrossRef]

- Łucki, J.; Wei, B.; Huang, Y.; Henderson, P.; Tramèr, F.; Rando, J. An Adversarial Perspective on Machine Unlearning for AI Safety. In Proceedings of the NeurIPS 2024 Workshop on Red Teaming GenAI: What Can We Learn from Adversaries? 2024. [Google Scholar]

- Zhang, J.; Sun, J.; Yeats, E.; Ouyang, Y.; Kuo, M.; Zhang, J.; Yang, H.F.; Li, H. Min-K%++: Improved Baseline for Pre-Training Data Detection from Large Language Models. In Proceedings of the Thirteenth International Conference on Learning Representations (2025), 2025. [Google Scholar]

- Rezaei, K.; Chandu, K.R.; Feizi, S.; Choi, Y.; Brahman, F.; Ravichander, A. RESTOR: Knowledge Recovery in Machine Unlearning. Transactions on Machine Learning Research, 2025. [Google Scholar]

- Cooper, A.F.; Choquette-Choo, C.A.; Bogen, M.; Jagielski, M.; Filippova, K.; Liu, K.Z.; Chouldechova, A.; Hayes, J.; Huang, Y.; Mireshghallah, N.; et al. Machine Unlearning Doesn’t Do What You Think: Lessons for Generative AI Policy, Research, and Practice. In Proceedings of the Advances in Neural Information Processing Systems (2025), 2025. [Google Scholar]

- Ji, J.; Liu, Y.; Zhang, Y.; Liu, G.; Kompella, R.; Liu, S.; Chang, S. Reversing the Forget-Retain Objectives: An Efficient LLM Unlearning Framework from Logit Difference. In Proceedings of the Advances in Neural Information Processing Systems (2024), 2024. [Google Scholar]

- Liu, C.; Wang, Y.; Flanigan, J.; Liu, Y. Large Language Model Unlearning via Embedding-Corrupted Prompts. In Proceedings of the Advances in Neural Information Processing Systems (2024), 2024. [Google Scholar]

- Liu, H.; Zhu, T.; Zhang, L.; Xiong, P. Game-Theoretic Machine Unlearning: Mitigating Extra Privacy Leakage. IEEE Transactions on Information Forensics and Security 2025, 20, 11591–11606. [Google Scholar] [CrossRef]

- Yuan, H.; Jin, Z.; Cao, P.; Chen, Y.; Liu, K.; Zhao, J. Towards Robust Knowledge Unlearning: An Adversarial Framework for Assessing and Improving Unlearning Robustness in Large Language Models. In Proceedings of the AAAI Conference on Artificial Intelligence (2025); 2025; pp. 25769–25777. [Google Scholar] [CrossRef]

- Ren, J.; Xing, Y.; Cui, Y.; Aggarwal, C.C.; Liu, H. SoK: Machine Unlearning for Large Language Models. arXiv 2025. arXiv:2506.09227. [CrossRef]

- Gu, T.; Huang, K.; Luo, R.; Yao, Y.; Yang, Y.; Teng, Y.; Wang, Y. MEOW: MEMOry Supervised LLM Unlearning Via Inverted Facts. arXiv 2024. arXiv:2409.11844. [CrossRef]

- Cha, S.; Cho, S.; Hwang, D.; Lee, M. Towards Robust and Parameter-Efficient Knowledge Unlearning for LLMs. In Proceedings of the Thirteenth International Conference on Learning Representations (ICLR 2025), 2025. [Google Scholar]

- Ding, C.; Wu, J.; Yuan, Y.; Lu, J.; Zhang, K.; Su, A.; Wang, X.; He, X. Unified Parameter-Efficient Unlearning for LLMs. In Proceedings of the International Conference on Learning Representations (2025), 2025. [Google Scholar]

- Li, N.; Pan, A.; Gopal, A.; Yue, S.; Berrios, D.; Gatti, A.; Li, J.D.; Dombrowski, A.; Goel, S.; Mukobi, G.; et al. The WMDP Benchmark: Measuring and Reducing Malicious Use with Unlearning. In Proceedings of the International Conference on Machine Learning (2024), 2024. [Google Scholar]

- Dang, H.; Pham, T.; Thanh-Tung, H.; Inoue, N. On Effects of Steering Latent Representation for Large Language Model Unlearning. In Proceedings of the AAAI Conference on Artificial Intelligence (2025); 2025; pp. 23733–23742. [Google Scholar] [CrossRef]

- Dai, D.; Dong, L.; Hao, Y.; Sui, Z.; Chang, B.; Wei, F. Knowledge Neurons in Pretrained Transformers. In Proceedings of the Annual Meeting of the Association for Computational Linguistics (2022), 2022; pp. 8493–8502. [Google Scholar] [CrossRef]

- Yang, N.; Kim, M.; Yoon, S.; Shin, J.; Jung, K. FaithUn: Toward Faithful Forgetting in Language Models by Investigating the Interconnectedness of Knowledge. In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing, 2025; pp. 12999–13014. [Google Scholar] [CrossRef]

- Jia, J.; Liu, J.; Zhang, Y.; Ram, P.; Baracaldo, N.; Liu, S. WAGLE: Strategic Weight Attribution for Effective and Modular Unlearning in Large Language Models. In Proceedings of the Advances in Neural Information Processing Systems (2024), 2024. [Google Scholar]

- Hou, L.; Wang, Z.; Liu, G.; Wang, C.; Liu, W.; Peng, K. Decoupling Memories, Muting Neurons: Towards Practical Machine Unlearning for Large Language Models. In Proceedings of the Findings of the Association for Computational Linguistics (2025); 2025; pp. 13978–13999. [Google Scholar] [CrossRef]

- Yu, C.; Jeoung, S.; Kasi, A.; Yu, P.; Ji, H. Unlearning Bias in Language Models by Partitioning Gradients. In Proceedings of the Findings of the Association for Computational Linguistics: ACL (2023); 2023; pp. 6032–6048. [Google Scholar] [CrossRef]

- Shen, W.F.; Qiu, X.; Kurmanji, M.; Iacob, A.; Sani, L.; Chen, Y.; Cancedda, N.; Lane, N.D. LLM Unlearning via Neural Activation Redirection. In Proceedings of the Advances in Neural Information Processing Systems (2025), 2025. [Google Scholar]

- Hu, J.; Huang, Z.; Yin, X.; Ruan, W.; Cheng, G.; Dong, Y.; Huang, X. FALCON: Fine-grained Activation Manipulation by Contrastive Orthogonal Unalignment for Large Language Model. In Proceedings of the Advances in Neural Information Processing Systems (2025), 2025. [Google Scholar]

- Jang, J.; Yoon, D.; Yang, S.; Cha, S.; Lee, M.; Logeswaran, L.; Seo, M. Knowledge Unlearning for Mitigating Privacy Risks in Language Models. In Proceedings of the Annual Meeting of the Association for Computational Linguistics (2023), 2023; pp. 14389–14408. [Google Scholar] [CrossRef]

- Fan, C.; Liu, J.; Zhang, Y.; Wong, E.; Wei, D.; Liu, S. SalUn: Empowering Machine Unlearning via Gradient-Based Weight Saliency in Both Image Classification and Generation. In Proceedings of the International Conference on Learning Representations (2024), 2024. [Google Scholar]

- Jia, J.; Liu, J.; Ram, P.; Yao, Y.; Liu, G.; Liu, Y.; Sharma, P.; Liu, S. Model Sparsity Can Simplify Machine Unlearning. In Proceedings of the Advances in Neural Information Processing Systems (2023), 2023. [Google Scholar]

- Rafailov, R.; Sharma, A.; Mitchell, E.; Manning, C.D.; Ermon, S.; Finn, C. Direct Preference Optimization: Your Language Model is Secretly a Reward Model. In Proceedings of the Advances in Neural Information Processing Systems (2023), 2023. [Google Scholar]

- Zhang, R.; Lin, L.; Bai, Y.; Mei, S. Negative Preference Optimization: From Catastrophic Collapse to Effective Unlearning. In Proceedings of the First Conference on Language Modeling, 2024. [Google Scholar]

- Ouyang, L.; Wu, J.; Jiang, X.; Almeida, D.; Wainwright, C.L.; Mishkin, P.; Zhang, C.; Agarwal, S.; Slama, K.; Ray, A.; et al. Training Language Models to Follow Instructions with Human Feedback. In Proceedings of the Advances in Neural Information Processing Systems (2022), 2022. [Google Scholar]

- Lee, H.; Phatale, S.; Mansoor, H.; Mesnard, T.; Ferret, J.; Lu, K.; Bishop, C.; Hall, E.; Carbune, V.; Rastogi, A.; et al. RLAIF vs. RLHF: Scaling Reinforcement Learning from Human Feedback with AI Feedback. In Proceedings of the International Conference on Machine Learning (2024), 2024. [Google Scholar]

- Yuan, H.; Yuan, Z.; Tan, C.; Wang, W.; Huang, S.; Huang, F. RRHF: Rank Responses to Align Language Models with Human Feedback. In Proceedings of the Advances in Neural Information Processing Systems (2023), 2023. [Google Scholar]

- Lu, X.; Welleck, S.; Hessel, J.; Jiang, L.; Qin, L.; West, P.; Ammanabrolu, P.; Choi, Y. QUARK: Controllable Text Generation with Reinforced Unlearning. In Proceedings of the Advances in Neural Information Processing Systems (2022), 2022. [Google Scholar]

- Dong, Y.R.; Lin, H.; Belkin, M.; Huerta, R.; Vulic, I. UNDIAL: Self-Distillation with Adjusted Logits for Robust Unlearning in Large Language Models. Proceedings of the Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (2025) 2025, 8827–8840. [Google Scholar] [CrossRef]

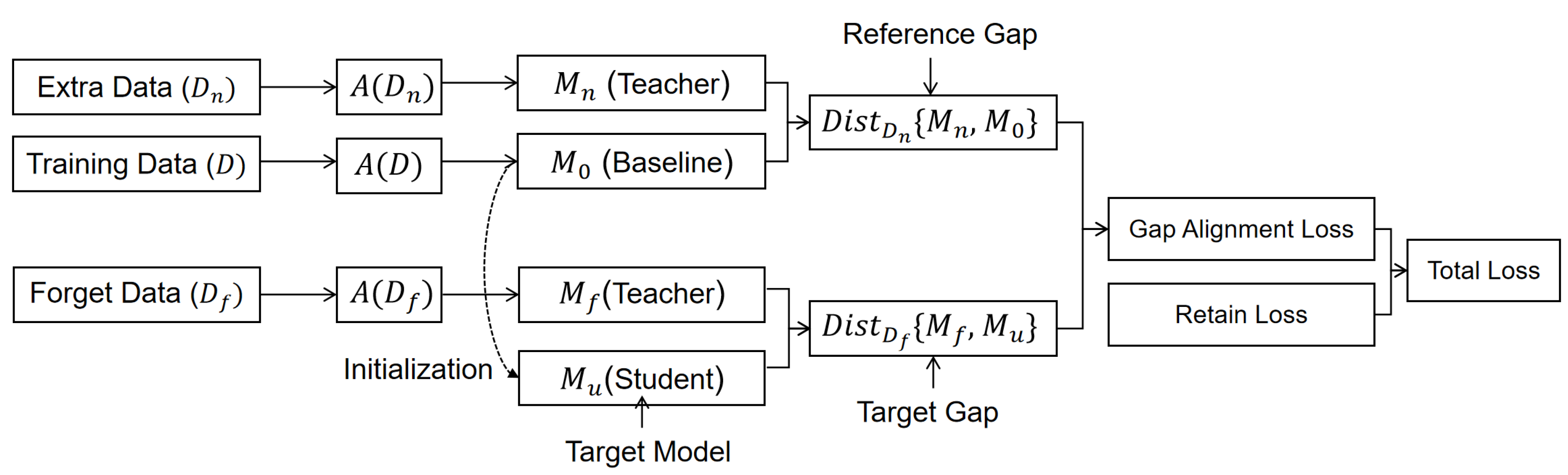

- Wang, L.; Chen, T.; Yuan, W.; Zeng, X.; Wong, K.; Yin, H. KGA: A General Machine Unlearning Framework Based on Knowledge Gap Alignment. In Proceedings of the Annual Meeting of the Association for Computational Linguistics (2023), 2023; pp. 13264–13276. [Google Scholar] [CrossRef]

- Chen, H.; Szyller, S.; Xu, W.; Himayat, N. Soft Token Attacks Cannot Reliably Audit Unlearning in Large Language Models. In Proceedings of the Findings of the Association for Computational Linguistics: EMNLP (2025); 2025; pp. 2183–2192. [Google Scholar] [CrossRef]

- Jeung, W.; Yoon, S.; No, A. SEPS: A Separability Measure for Robust Unlearning in LLMs. Proceedings of the Conference on Empirical Methods in Natural Language Processing (2025) 2025, 5556–5587. [Google Scholar] [CrossRef]

- Qiu, X.; Shen, W.F.; Chen, Y.; Cancedda, N.; Stenetorp, P.; Lane, N.D. PISTOL: Dataset Compilation Pipeline for Structural Unlearning of LLMs. arXiv 2024. arXiv:2406.16810. [CrossRef]

- Hsu, H.; Niroula, P.; He, Z.; Brugere, I.; Lecue, F.; Chen, C.F. The Unseen Threat: Residual Knowledge in Machine Unlearning under Perturbed Samples. In Proceedings of the Advances in Neural Information Processing Systems (2025), 2025. [Google Scholar]

- Lang, Y.; Guo, K.; Huang, Y.; Zhou, Y.; Zhuang, H.; Yang, T.; Su, Y.; Zhang, X. Beyond Single-Value Metrics: Evaluating and Enhancing LLM Unlearning with Cognitive Diagnosis. In Proceedings of the Findings of the Association for Computational Linguistics (2025); 2025; pp. 21397–21420. [Google Scholar] [CrossRef]

- Wichert, L.; Sikdar, S. Rethinking Evaluation Methods for Machine Unlearning. In Proceedings of the Findings of the Association for Computational Linguistics: EMNLP (2024); 2024; pp. 4727–4739. [Google Scholar] [CrossRef]

- Cohen, L.; Nemcovesky, Y.; Mendelson, A. REMIND: Input Loss Landscapes Reveal Residual Memorization in Post-Unlearning LLMs. arXiv 2025. arXiv:2511.04228. [CrossRef]

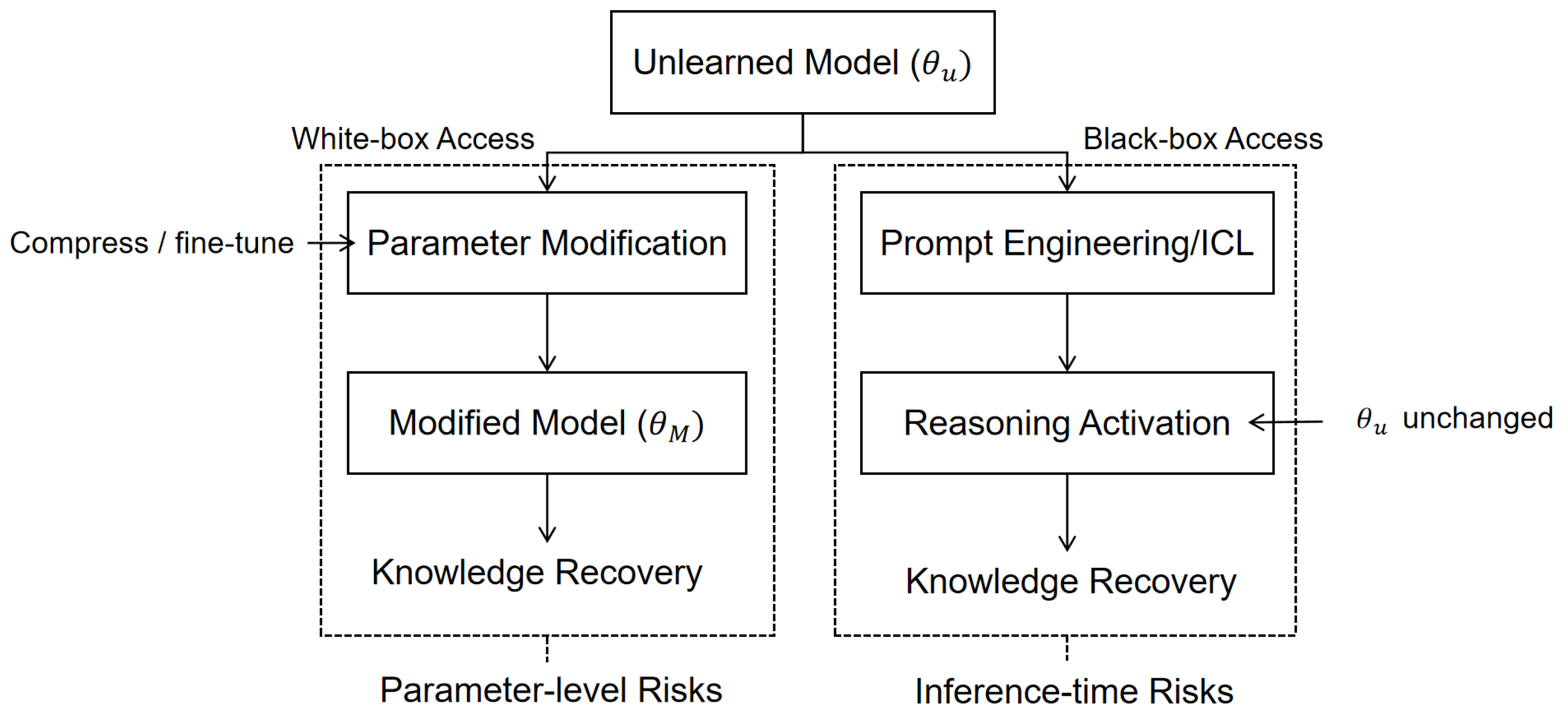

- Che, Z.; Casper, S.; Satheesh, A.; Gandikota, R.; Rosati, D.; Slocum, S.; McKinney, L.E.; Wu, Z.; Cai, Z.; Chughtai, B.; et al. Model Manipulation Attacks Enable More Rigorous Evaluations of LLM Unlearning. In Proceedings of the NeurIPS Safe Generative AI Workshop (2024), 2024. [Google Scholar]

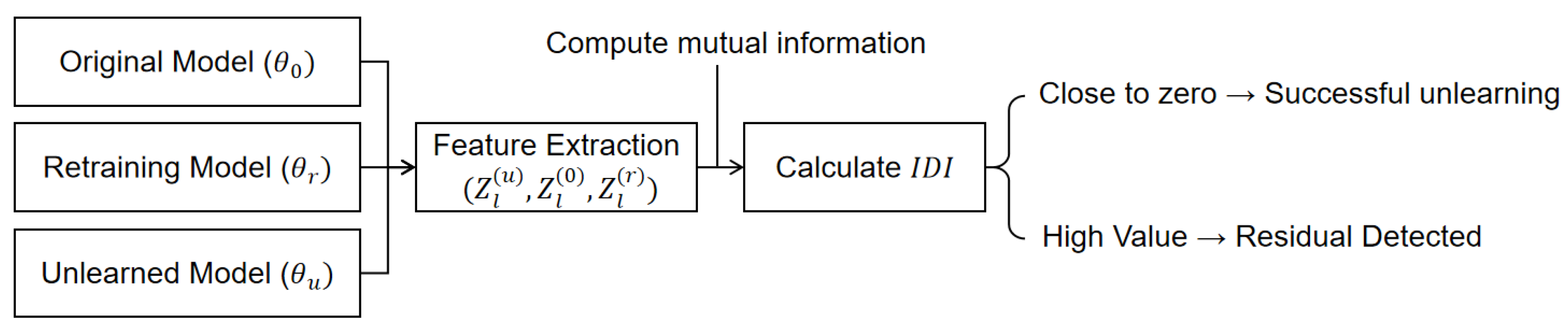

- Jeon, D.; Jeung, W.; Kim, T.; No, A.; Choi, J. An Information Theoretic Metric for Evaluating Unlearning Models. In Proceedings of the AAAI Conference on Artificial Intelligence (2026); 2026.

- Hu, S.; Fu, Y.; Wu, S.; Smith, V. Jogging the Memory of Unlearned Models Through Targeted Relearning Attacks. In Proceedings of the International Conference on Machine Learning (ICML) Workshop on Foundation Models in the Wild (2024), 2024. [Google Scholar]

- Shumailov, I.; Hayes, J.; Triantafillou, E.; Ortiz-Jiménez, G.; Papernot, N.; Jagielski, M.; Yona, I.; Howard, H.; Bagdasaryan, E. UnUnlearning: Unlearning is not sufficient for content regulation in advanced generative AI. arXiv 2024. arXiv:2407.00106.

- Lynch, A.; Guo, P.; Ewart, A.; Casper, S.; Hadfield-Menell, D. Eight Methods to Evaluate Robust Unlearning in LLMs. arXiv 2024. arXiv:2402.16835. [CrossRef]

- Fan, C.; Liu, J.; Lin, L.; Jia, J.; Zhang, R.; Mei, S.; Liu, S. Simplicity Prevails: Rethinking Negative Preference Optimization for LLM Unlearning. In Proceedings of the Advances in Neural Information Processing Systems (2025), 2025. [Google Scholar]

- Fan, C.; Jia, J.; Zhang, Y.; Ramakrishna, A.; Hong, M.; Liu, S. Towards LLM Unlearning Resilient to Relearning Attacks: A Sharpness-Aware Minimization Perspective and Beyond. In Proceedings of the International Conference on Machine Learning (2025), 2025. [Google Scholar]

- Zhang, Z.; Wang, F.; Li, X.; Wu, Z.; Tang, X.; Liu, H.; He, Q.; Yin, W.; Wang, S. Catastrophic Failure of LLM Unlearning via Quantization. In Proceedings of the International Conference on Learning Representations (2025), 2025. [Google Scholar]

- Cheng, J.; Amiri, H. Tool Unlearning for Tool-Augmented LLMs. In Proceedings of the International Conference on Machine Learning (2025), 2025. [Google Scholar]

- Geng, R.; Geng, M.; Wang, S.; Wang, H.; Lin, Z.; Dong, D. Mitigating Sensitive Information Leakage in LLMs4Code through Machine Unlearning. arXiv 2025. arXiv:2502.05739. [CrossRef]

- Jiang, X.; Dong, Y.; Fang, Z.; Ma, Y.; Wang, T.; Cao, R.; Li, B.; Jin, Z.; Jiao, W.; Li, Y.; et al. Large Language Model Unlearning for Source Code. In Proceedings of the AAAI Conference on Artificial Intelligence (2026); 2026.

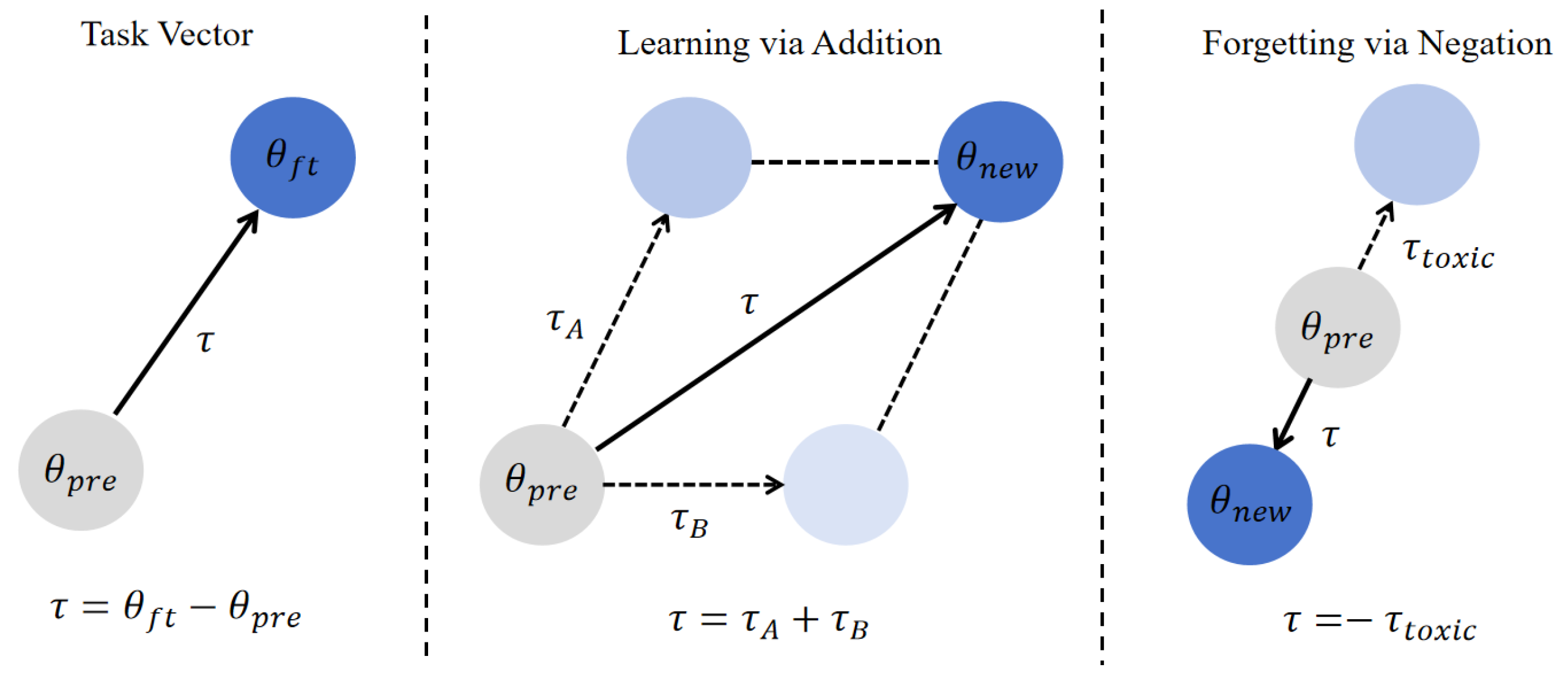

- Ilharco, G.; Ribeiro, M.T.; Wortsman, M.; Schmidt, L.; Hajishirzi, H.; Farhadi, A. Editing Models with Task Arithmetic. In Proceedings of the International Conference on Learning Representations (2023), 2023. [Google Scholar]

- Wang, W.; Zhang, M.; Ye, X.; Ren, Z.; Ren, P.; Chen, Z. UIPE: Enhancing LLM Unlearning by Removing Knowledge Related to Forgetting Targets. In Proceedings of the Findings of the Association for Computational Linguistics: EMNLP (2025); 2025; pp. 25212–25227. [Google Scholar] [CrossRef]

- Liu, Z.; Dou, G.; Tan, Z.; Tian, Y.; Jiang, M. Towards Safer Large Language Models Through Machine Unlearning. In Proceedings of the Findings of the Association for Computational Linguistics (2024); 2024; pp. 1817–1829. [CrossRef]

- Ni, S.; Chen, D.; Li, C.; Hu, X.; Xu, R.; Yang, M. Forgetting Before Learning: Utilizing Parametric Arithmetic for Knowledge Updating in Large Language Models. Proceedings of the Annual Meeting of the Association for Computational Linguistics (2024) 2024, 5716–5731. [Google Scholar] [CrossRef]

- Jung, D.; Seo, J.; Lee, J.; Park, C.; Lim, H. CoME: An Unlearning-Based Approach to Conflict-Free Model Editing. Proceedings of the Conference of the North American Chapter of the Association for Computational Linguistics (2025) 2025, 6410–6422. [Google Scholar] [CrossRef]

- Belrose, N.; Schneider-Joseph, D.; Ravfogel, S.; Cotterell, R.; Raff, E.; Biderman, S. LEACE: Perfect linear concept erasure in closed form. In Proceedings of the Advances in Neural Information Processing Systems (2023), 2023. [Google Scholar]

- Ren, J.; Dai, Z.; Tang, X.; Liu, H.; Zeng, J.; Li, Z.; Goutam, R.; Wang, S.; Xing, Y.; He, Q. A General Framework to Enhance Fine-Tuning-Based LLM Unlearning. In Proceedings of the Findings of the Association for Computational Linguistics (2025); 2025; pp. 18464–18476. [Google Scholar] [CrossRef]

- He, E.; Sarwar, T.; Khalil, I.; Yi, X.; Wang, K. Deep Contrastive Unlearning for Language Models. arXiv 2025. arXiv:2503.14900. [CrossRef]

- Wu, X.; Li, J.; Xu, M.; Dong, W.; Wu, S.; Bian, C.; Xiong, D. DEPN: Detecting and Editing Privacy Neurons in Pretrained Language Models. In Proceedings of the Conference on Empirical Methods in Natural Language Processing (2023), 2023; pp. 2875–2886. [Google Scholar] [CrossRef]

- Pochinkov, N.; Schoots, N. Dissecting Language Models: Machine Unlearning via Selective Pruning. arXiv 2024. arXiv:2403.01267. [CrossRef]

- Liu, Z.; Dou, G.; Yuan, X.; Zhang, C.; Tan, Z.; Jiang, M. Modality-Aware Neuron Pruning for Unlearning in Multimodal Large Language Models. Proceedings of the Annual Meeting of the Association for Computational Linguistics (2025) 2025, 5913–5933. [Google Scholar] [CrossRef]

- Li, Y.; Sun, C.; Weng, T. Effective Skill Unlearning Through Intervention and Abstention. Proceedings of the Conference of the North American Chapter of the Association for Computational Linguistics (2025) 2025, 6358–6371. [Google Scholar] [CrossRef]

- Farrell, E.; Lau, Y.T.; Conmy, A. Applying Sparse Autoencoders to Unlearn Knowledge in Language Models. arXiv 2024. arXiv:2410.19278. [CrossRef]

- Khoriaty, M.; Shportko, A.; Mercier, G.; Wood-Doughty, Z. Don’t Forget It! Conditional Sparse Autoencoder Clamping Works for Unlearning. arXiv 2025. arXiv:2503.11127.

- Karvonen, A.; Rager, C.; Lin, J.; Tigges, C.; Bloom, J.; Chanin, D.; Lau, Y.T.; Farrell, E.; McDougall, C.; Ayonrinde, K.; et al. SAEBench: A Comprehensive Benchmark for Sparse Autoencoders in Language Model Interpretability. In Proceedings of the International Conference on Machine Learning (2025), 2025. [Google Scholar]

- Suriyakumar, V.M.; Sekhari, A.; Wilson, A. UCD: Unlearning in LLMs via Contrastive Decoding. arXiv 2025. arXiv:2506.12097. [CrossRef]

- Wu, J.; Sun, H.; Cai, H.; Su, L.; Wang, S.; Yin, D.; Li, X.; Gao, M. Cross-Model Control: Improving Multiple Large Language Models in One-Time Training. In Proceedings of the Advances in Neural Information Processing Systems (2024), 2024. [Google Scholar]

- Huang, J.Y.; Zhou, W.; Wang, F.; Morstatter, F.; Zhang, S.; Poon, H.; Chen, M. Offset Unlearning for Large Language Models. Transactions on Machine Learning Research 2025, 2025. [Google Scholar]

- Deng, Z.; Liu, C.Y.; Pang, Z.; He, X.; Feng, L.; Xuan, Q.; Zhu, Z.; Wei, J. GUARD: Generation-time LLM Unlearning via Adaptive Restriction and Detection. In Proceedings of the International Conference on Machine Learning (2025), 2025. [Google Scholar]

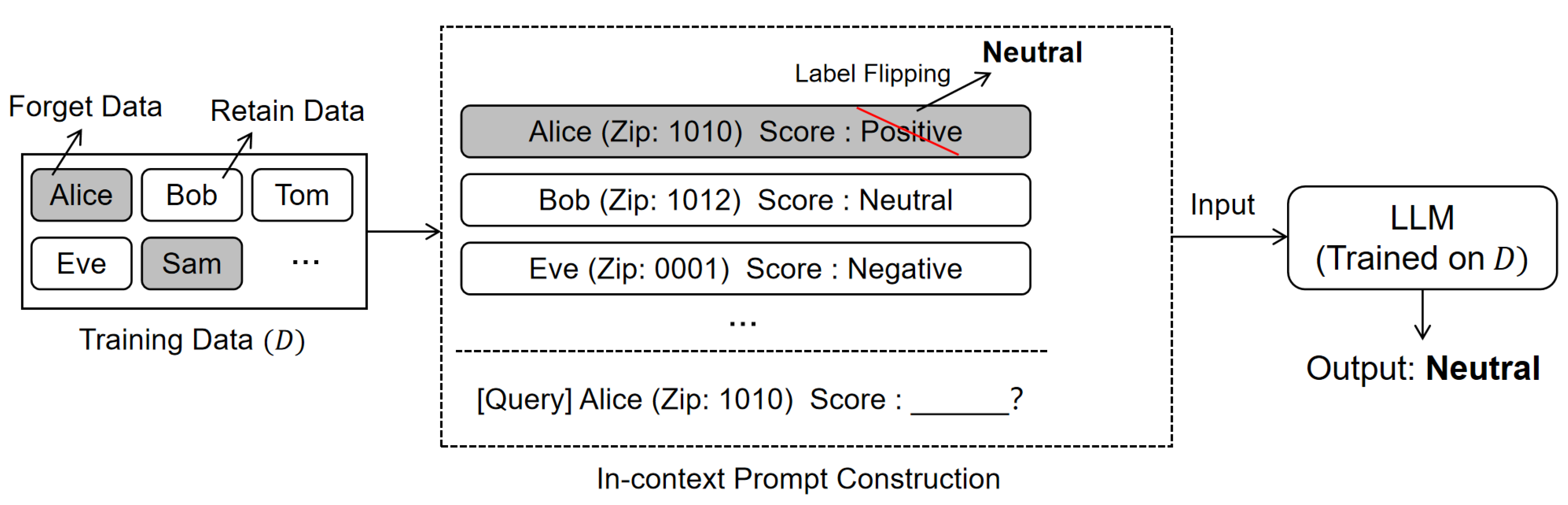

- Pawelczyk, M.; Neel, S.; Lakkaraju, H. In-Context Unlearning: Language Models as Few-Shot Unlearners. In Proceedings of the International Conference on Machine Learning (2024), 2024. [Google Scholar]

- Gallegos, I.O.; Aponte, R.; Rossi, R.A.; Barrow, J.; Tanjim, M.M.; Yu, T.; Deilamsalehy, H.; Zhang, R.; Kim, S.; Dernoncourt, F.; et al. Self-Debiasing Large Language Models: Zero-Shot Recognition and Reduction of Stereotypes. Proceedings of the Conference of the North American Chapter of the Association for Computational Linguistics (2025) 2025, 873–888. [Google Scholar] [CrossRef]

- Sanyal, D.; Mandal, M. Agents are all you need for LLM unlearning. In Proceedings of the Second Conference on Language Modeling (2025), 2025. [Google Scholar]

- Muresanu, A.I.; Thudi, A.; Zhang, M.R.; Papernot, N. Fast Exact Unlearning for In-Context Learning Data for LLMs. In Proceedings of the International Conference on Machine Learning (2025), 2025. [Google Scholar]

- Thaker, P.; Maurya, Y.; Hu, S.; Wu, Z.S.; Smith, V. Guardrail Baselines for Unlearning in LLMs. In Proceedings of the ICLR 2024 Workshop on Secure and Trustworthy Large Language Models (2024), 2024. [Google Scholar]

- Mitchell, E.; Lin, C.; Bosselut, A.; Manning, C.D.; Finn, C. Memory-Based Model Editing at Scale. In Proceedings of the International Conference on Machine Learning (2022), 2022; pp. 15817–15831. [Google Scholar]

- Wang, S.; Zhu, T.; Ye, D.; Zhou, W. When Machine Unlearning Meets Retrieval-Augmented Generation (RAG): Keep Secret or Forget Knowledge? IEEE Transactions on Dependable and Secure Computing 2025, 1–16. [Google Scholar] [CrossRef]

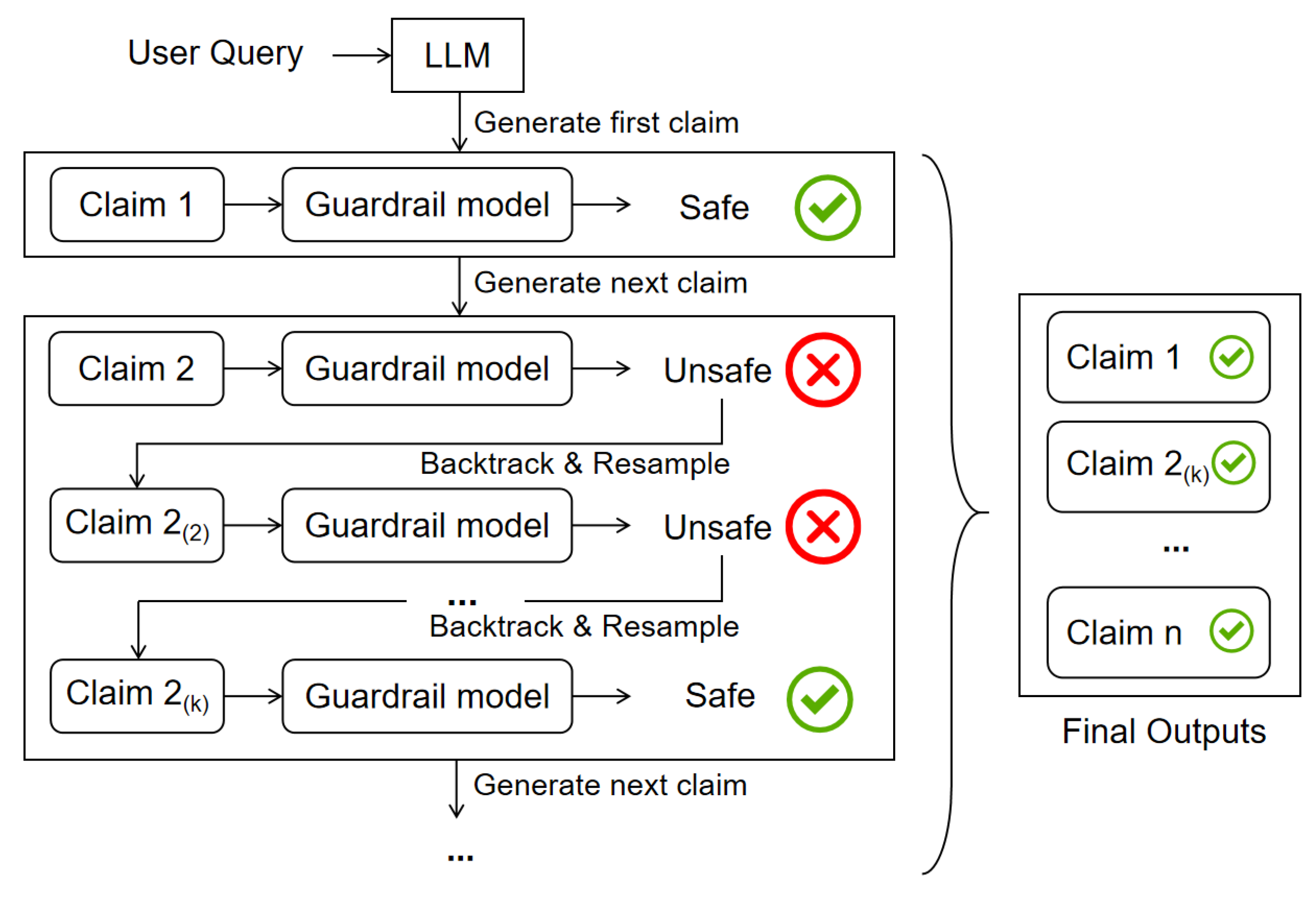

- Kang, M.; Chen, Z.; Li, B. C-SafeGen: Certified Safe LLM Generation with Claim-Based Streaming Guardrails. In Proceedings of the Advances in Neural Information Processing Systems (2025), 2025. [Google Scholar]

- Wu, Y.; Guo, J.; Li, D.; Zou, H.P.; Huang, W.C.; Chen, Y.; Wang, Z.; Zhang, W.; Li, Y.; Zhang, M.; et al. PSG-Agent: Personality-Aware Safety Guardrail for LLM-based Agents. arXiv 2025. arXiv:2509.23614.

- Ji, J.; Wang, K.; Qiu, T.A.; Chen, B.; Zhou, J.; Li, C.; Lou, H.; Dai, J.; Liu, Y.; Yang, Y. Language Models Resist Alignment: Evidence From Data Compression. Proceedings of the Annual Meeting of the Association for Computational Linguistics (2025) 2025, 23411–23432. [Google Scholar] [CrossRef]

- Hu, J.; Lian, Z.; Wen, Z.; Li, C.; Chen, G.; Wen, X.; Xiao, B.; Tan, M. Continual Knowledge Adaptation for Reinforcement Learning. In Proceedings of the Advances in Neural Information Processing Systems (2025), 2025. [Google Scholar]

- Chen, Y.; Zhang, S. Intrinsic Preservation of Plasticity in Continual Quantum Learning. arXiv 2025. arXiv:2511.17228. [CrossRef]

- Xu, S.; Strohmer, T. Machine Unlearning via Information Theoretic Regularization. arXiv 2025. arXiv:2502.05684. [CrossRef]

- Gur-Arieh, Y.; Suslik, C.; Hong, Y.; Barez, F.; Geva, M. Precise In-Parameter Concept Erasure in Large Language Models. In Proceedings of the Findings of the Association for Computational Linguistics: EMNLP (2025); 2025.

- Srivastava, A. The Fundamental Limits of LLM Unlearning: Complexity-Theoretic Barriers and Provably Optimal Protocols. In Proceedings of the ICLR Workshop on Building Trust in Language Models and Applications (2025), 2025. [Google Scholar]

- Zou, A.; Phan, L.; Wang, J.; Duenas, D.; Lin, M.; Andriushchenko, M.; Kolter, J.Z.; Fredrikson, M.; Hendrycks, D. Improving Alignment and Robustness with Circuit Breakers. In Proceedings of the Advances in Neural Information Processing Systems (2024), 2024. [Google Scholar]

- Foster, J.; Fogarty, K.; Schoepf, S.; Dugue, Z.; Öztireli, C.; Brintrup, A. An Information Theoretic Approach to Machine Unlearning. Transactions on Machine Learning Research 2025, 2025. [Google Scholar]

- Di, Z.; Yu, S.; Vorobeychik, Y.; Liu, Y. Adversarial Machine Unlearning. In Proceedings of the International Conference on Learning Representations (2025), 2025. [Google Scholar]

- Entesari, T.; Hatami, A.; Khaziev, R.; Ramakrishna, A.; Fazlyab, M. Constrained Entropic Unlearning: A Primal-Dual Framework for Large Language Models. In Proceedings of the Advances in Neural Information Processing Systems (2025), 2025; 39. [Google Scholar]

- Zhai, N.; Shao, P.; Zheng, B.; Yang, Y.; Shen, F.; Bai, L.; Yang, X. Maximizing Local Entropy Where It Matters: Prefix-Aware Localized LLM Unlearning. arXiv 2026. arXiv:2601.03190. [CrossRef]

- Cui, Y.; Cheung, M.H. The Price of Forgetting: Incentive Mechanism Design for Machine Unlearning. IEEE Transactions on Mobile Computing 2025, 24, 11852–11864. [Google Scholar] [CrossRef]

- Wang, Q.; Xu, R.; He, S.; Berry, R.; Zhang, M. Unlearning Incentivizes Learning Under Privacy Risk. Proceedings of the ACM Web Conference (2025) 2025, 1456–1467. [Google Scholar] [CrossRef]

- Ding, N.; Sun, Z.; Wei, E.; Berry, R. Incentivized Federated Learning and Unlearning. IEEE Transactions on Mobile Computing 2025, 24, 8794–8810. [Google Scholar] [CrossRef]

- Hsu, H.; Niroula, P.; He, Z.; Chen, C.F. Are We Really Unlearning? The Presence of Residual Knowledge in Machine Unlearning. In Proceedings of the International Conference on Learning Representations (ICLR) Workshop on I Can’t Believe It’s Not Better: Challenges in Applied Deep Learning (2025), 2025. [Google Scholar]

- Jenko, S.; Mündler, N.; He, J.; Vero, M.; Vechev, M.T. Black-Box Adversarial Attacks on LLM-Based Code Completion. In Proceedings of the International Conference on Machine Learning (2025), 2025. [Google Scholar]

- Zhang, B.; Chen, Z.; Shen, C.; Li, J. Verification of Machine Unlearning is Fragile. In Proceedings of the International Conference on Machine Learning (2024), 2024. [Google Scholar]

- Xu, H.; Zhu, T.; Zhang, L.; Zhou, W. Really Unlearned? Verifying Machine Unlearning via Influential Sample Pairs. IEEE Transactions on Dependable and Secure Computing 2026, 23, 1671–1686. [Google Scholar] [CrossRef]

- Wang, N.; Wu, N.; Hui, X.; Wang, J.; Yuan, X. zkUnlearner: A Zero-Knowledge Framework for Verifiable Unlearning with Multi-Granularity and Forgery-Resistance. arXiv 2025. arXiv:2509.07290.

- Maheri, M.M.; Cotterill, S.; Davidson, A.; Haddadi, H. ZK-APEX: Zero-Knowledge Approximate Personalized Unlearning with Executable Proofs. arXiv 2025. arXiv:2512.09953.

- Eisenhofer, T.; Riepel, D.; Chandrasekaran, V.; Ghosh, E.; Ohrimenko, O.; Papernot, N. Verifiable and Provably Secure Machine Unlearning. In Proceedings of the IEEE Conference on Secure and Trustworthy Machine Learning (2025), 2025; pp. 479–496.

- Gao, X.; Ma, X.; Wang, J.; Sun, Y.; Li, B.; Ji, S.; Cheng, P.; Chen, J. VeriFi: Towards Verifiable Federated Unlearning. IEEE Transactions on Dependable and Secure Computing 2024, 21, 5720–5736.

- Nguyen, T.L.; de Oliveira, M.T.; Braeken, A.; Ding, A.Y.; Pham, Q.V. Towards Verifiable Federated Unlearning: Framework, Challenges, and The Road Ahead. IEEE Internet Computing 2026.

| Reference | Year | Primary Taxonomy | Key Focus / Coverage |
|---|---|---|---|
| Liu et al. [12] | 2025 | Model and Input Optimization | Focus: Examines optimization algorithms and in-context learning techniques for LLMs. Limitation: Categorizes methods by algorithmic steps rather than the practical challenges of deployment such as cost or black-box access. |
| Xu et al. [13] | 2024 | Data Reorganization and Model Manipulation | Focus: Emphasizes verification mechanisms and data handling strategies in general machine learning. Limitation: Targets traditional models and lacks detail on LLM issues such as catastrophic forgetting in generative tasks. |
| Liu et al. [14] | 2024 | Data and Model Modification Techniques | Focus: Analyzes unlearning in the context of privacy risks such as inference attacks and defense mechanisms. Limitation: Views unlearning mainly as a security defense with less discussion on utility preservation or computational efficiency. |
| Wang et al. [15] | 2024 | LLM Lifecycle Stages | Focus: Reviews security threats across pre-training, fine-tuning, and deployment. Limitation: Discusses unlearning briefly as a remediation strategy and lacks detailed algorithmic comparison. |
| Ours | 2026 | Practical Deployment Challenges | Focus: Systematically organizes methods by four core challenges: performance degradation, completeness, efficiency, and black-box constraints. Coverage: Bridges the gap between theoretical algorithms and practical application requirements. |

| Notation | Description | Notation | Description |
|---|---|---|---|
| D | Full dataset | | Parameters of original model |
| | Forgotten set | | Parameters of unlearned model |
| | Retained set | | Parameters of retrained model |
| | Sample pair | | Output distribution of original model |
| | Input space | | Output distribution of unlearned model |
| | Label space | | Output distribution of retrained model |
| | Original model | | Learning process |
| | Unlearned model | | Unlearning process |
| | Retrained model | | Behavioral divergence measure |

| Category | Distinctions | Performance | Completeness | Efficiency | Black-Box |
|---|---|---|---|---|---|
| Data | Pre-training Data Scale | **** | **** | ***** | **** |
| Data | Data Dependencies | **** | **** | ** | *** |
| Data | Unlearning Targets | **** | ***** | *** | **** |
| Model | Model Architecture | **** | **** | *** | **** |
| Model | Model Scale | **** | ***** | ***** | ** |
| Model | Knowledge Distribution | ***** | ***** | ** | ***** |
| Output | Unlearning Precision | *** | *** | ** | ***** |
| Output | Evaluation Metrics | *** | ***** | ** | ***** |
| Output | Knowledge Recovery | ** | **** | * | ***** |

| Sub-category | Basic Ideas | Advantages | Limitations |

|---|---|---|---|

| Direct Optimization and Constraint Methods | |||

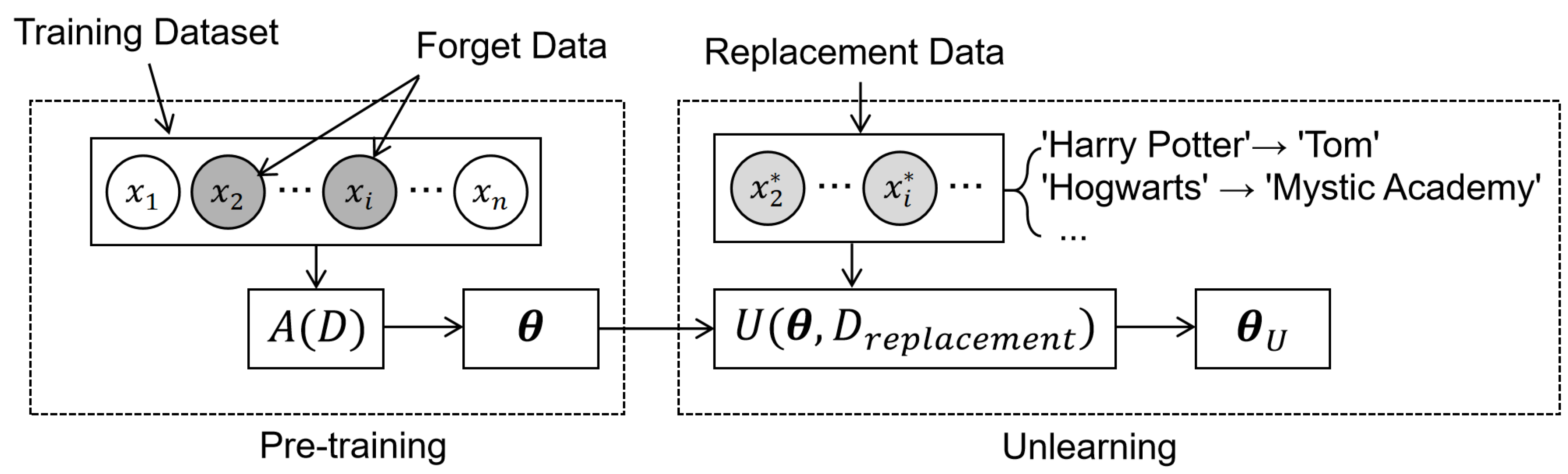

| Replacement Fine-tuning [16,41] | Replaces sensitive information with neutral or inverted alternatives and briefly fine-tunes the model | Simple to implement; effectively maintains the linguistic fluency of the model | Difficult to fully erase deep knowledge |

| Regularized Optimization [42,43] | Identifies important parameters and restricts their updates during unlearning | Theoretically guaranteed to preserve general capabilities; precise control over weight changes | High computational overhead (e.g., calculating second-order derivatives) |

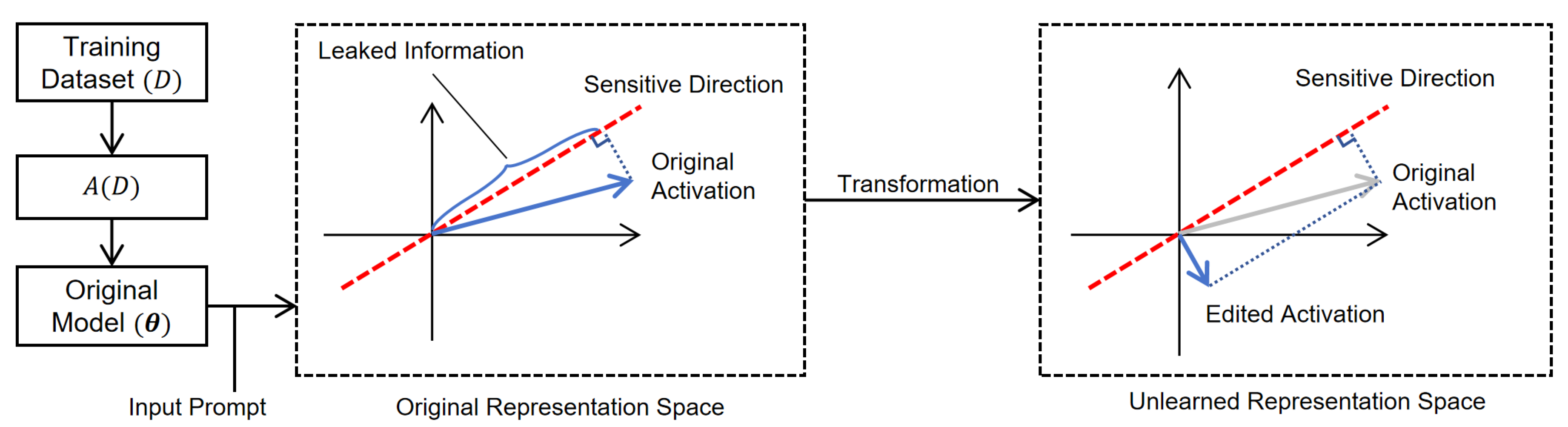

| Representation Misalignment [44,45] | Optimizes model parameters to steer the latent representations of sensitive inputs towards random directions | Effectively reduces token confidence for forgotten concepts; high robustness against adversarial jailbreak attacks | Effectiveness declines in deeper layers; sensitive to the settings of steering layers and coefficients. |

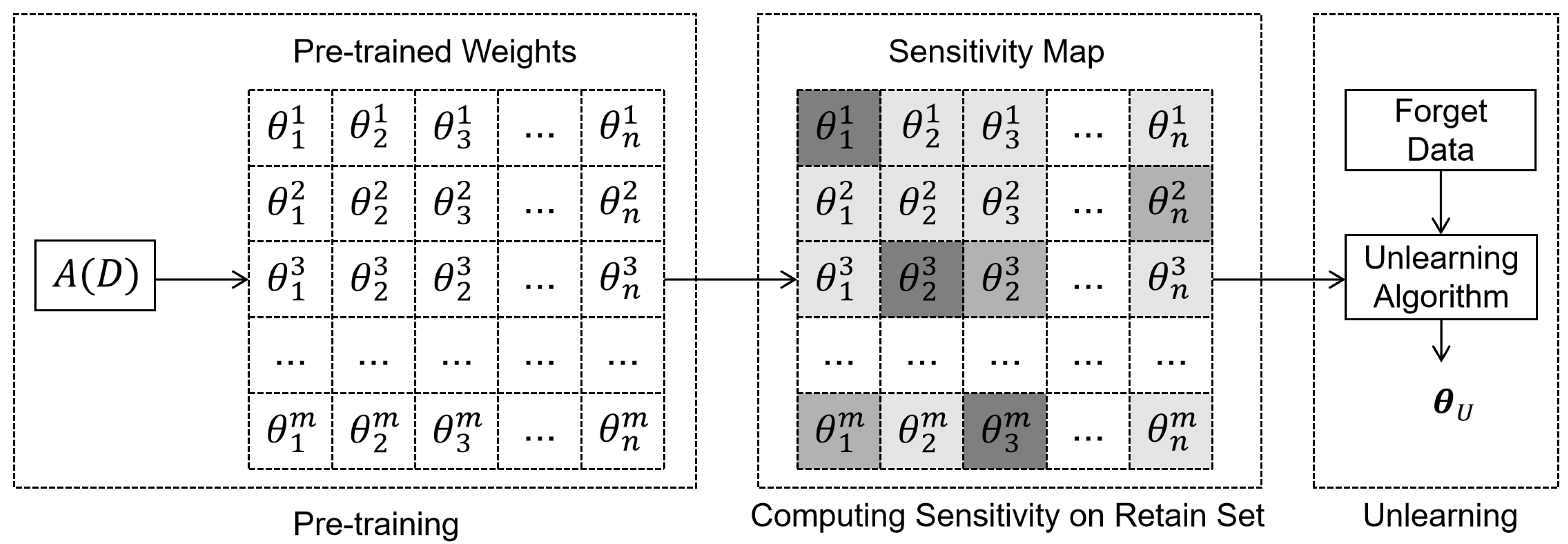

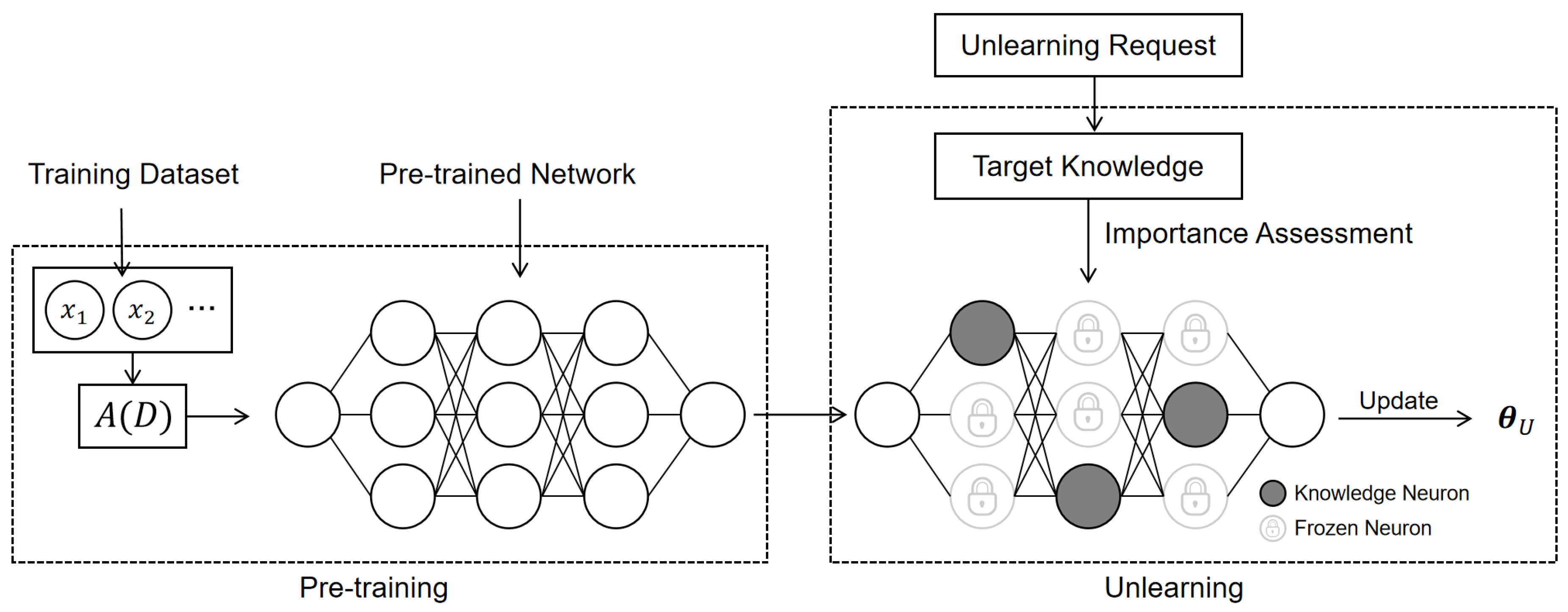

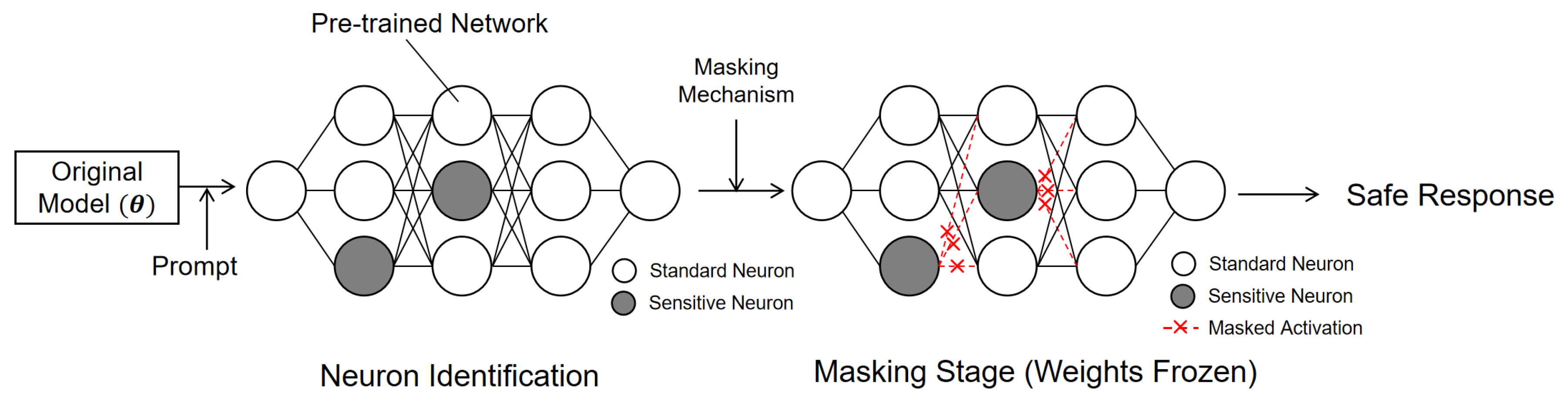

| Partial Parameter Modification [46,50,51] | Identifies and updates only the specific neurons or blocks responsible for target knowledge | Minimizes impact on the rest of the model; low risk of overall performance decline | Difficult to locate distributed knowledge; Risk of residual knowledge |

| Preference Optimization [56,57] | Uses preference pairs (benign vs. forget) to guide the model, often anchored to a reference model | Training is relatively stable; effectively prevents model collapse | Relies heavily on high-quality preference data; requires maintaining a reference model |

| External Guidance Methods | |||

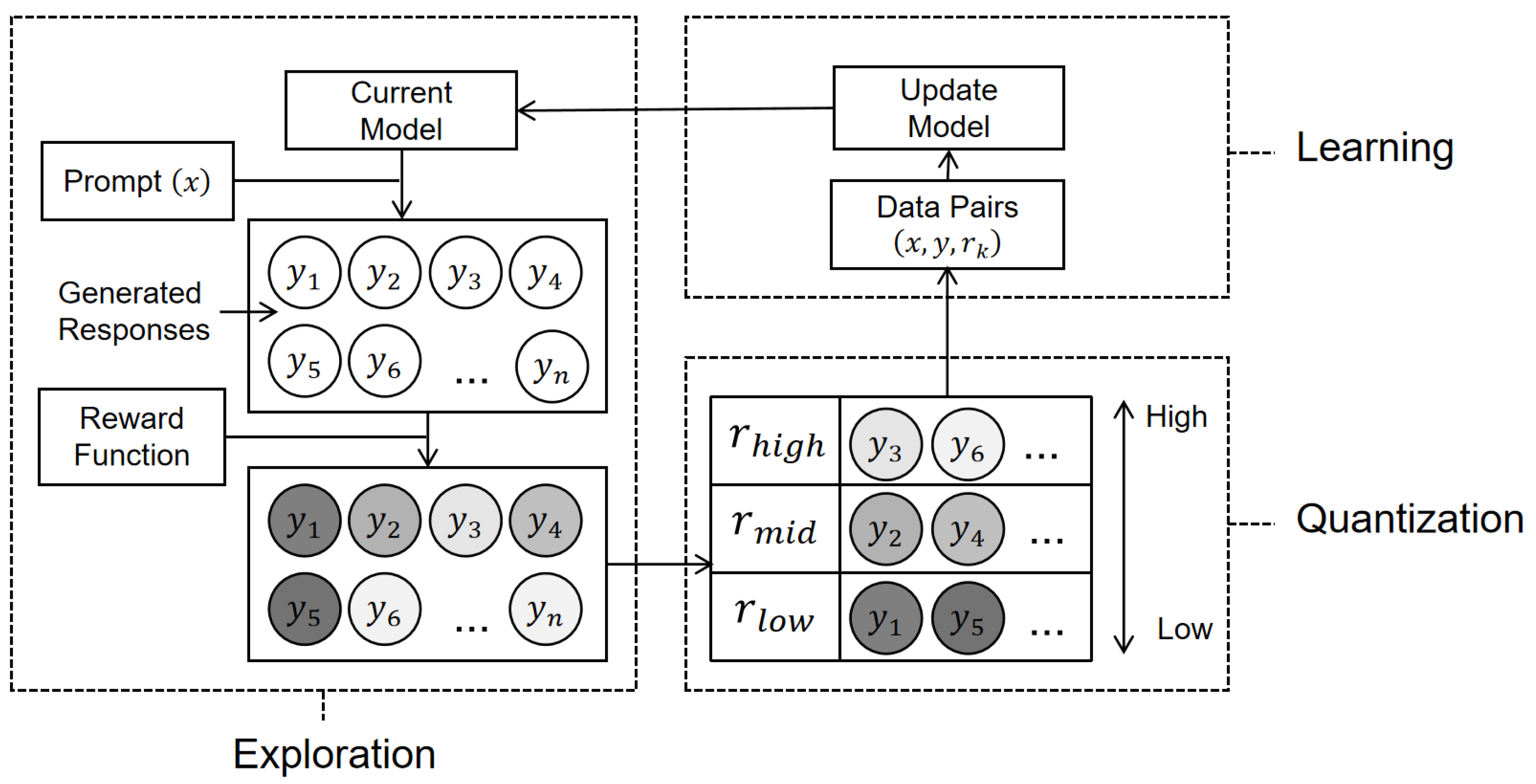

| Reinforcement Learning [61] | Uses dynamic reward signals to penalize unwanted outputs and guide the unlearning process | Effectively maintains linguistic fluency and diversity; provides precise control over generation | Complex pipeline due to iterative generation and scoring; higher computational cost than simple fine-tuning |

| KD-Based Unlearning [62,63] | Leverages teacher model outputs to constrain the unlearning process and preserve general capabilities | Can be applied to diverse NLP tasks; effectively maintains performance on retained data | Requires storage and maintenance of auxiliary models |
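
To make the "Regularized Optimization" row concrete, the following is a minimal sketch of one update step under an assumed EWC-style objective, J = -L_forget + lam * sum_i F_i * (theta_i - theta_ref_i)^2. The objective form, the function name `regularized_unlearning_step`, and the parameters `importance`, `lam`, and `lr` are illustrative assumptions, not the formulation used in [42,43]. Parameters are plain Python lists so the example stays self-contained.

```python
def regularized_unlearning_step(theta, theta_ref, forget_grad, importance,
                                lr=0.1, lam=1.0):
    """One gradient-descent step on an assumed EWC-style unlearning objective.

    theta       : current parameters
    theta_ref   : parameters of the original model (anchor point)
    forget_grad : gradient of the forget-set loss w.r.t. theta
    importance  : per-parameter importance scores (e.g. a Fisher diagonal)
    """
    new_theta = []
    for t, t0, g, f in zip(theta, theta_ref, forget_grad, importance):
        # -g ascends the forget loss; the second term pulls important
        # parameters back toward their original values.
        grad_j = -g + 2.0 * lam * f * (t - t0)
        new_theta.append(t - lr * grad_j)
    return new_theta


if __name__ == "__main__":
    # Hypothetical two-parameter model: the second weight is marked important
    # and has already drifted away from its original value.
    updated = regularized_unlearning_step(
        theta=[1.0, 2.0],
        theta_ref=[1.0, 1.0],
        forget_grad=[0.5, 0.5],
        importance=[0.0, 10.0],
    )
    print(updated)
```

In this toy setting the unimportant weight moves freely along the forget gradient, while the important weight is pulled back toward its reference value. Estimating the importance scores is the expensive part in practice, which matches the overhead noted in the table.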

| Sub-category | Basic Ideas | Advantages | Limitations |
|---|---|---|---|
| **Frameworks for Verifying Completeness** | | | |
| Standard Benchmarks [29,30,44] | Uses standardized synthetic datasets and multi-dimensional metrics to rigorously certify data removal | Offers reproducible benchmarks for different models; covers diverse evaluation dimensions instead of simple accuracy | Synthetic data may lack real-world complexity; insufficient to detect deep or latent knowledge traces |
| Advanced Probing and Deep Verification [64,71,72] | Employs aggressive adversarial attacks or internal state analysis to detect hidden residual knowledge under extreme conditions | Highly sensitive to latent residuals; finds hidden risks missed by standard evaluation | White-box metrics require full parameter access; high computational costs for extensive adversarial probing |
| **Interventional Strategies** | | | |
| Mitigation of Relearning Risks [39,76,78] | Proactively modifies model parameters to prevent the recovery of forgotten information | Greatly improves the permanence of unlearning; prevents knowledge recovery triggered by prompts or model modifications | Aggressive optimization may degrade general model utility; increases training complexity |
| Machine Unlearning in External Tools [79,80,81] | Filters outputs from external tools and RAG systems to prevent displaying sensitive information retrieved from outside sources | Addresses privacy risks across the entire system, not just within the model’s parameters; prevents data leakage that occurs when the model accesses external databases | May increase response time due to extra processing steps; keeping the model aligned with external data sources can be challenging |

| Sub-category | Basic Ideas | Advantages | Limitations |

|---|---|---|---|

| Parameter Space Intervention | |||

| Task Vector Arithmetic [82,83,84] | Encodes task knowledge into vectors and performs arithmetic operations directly on model weights to remove information | Updates models instantly via simple subtraction; Allows users to combine multiple unlearning tasks easily | Requires a fine-tuned reference model to calculate the vector; risk of degrading unrelated skills when knowledge is intertwined |

| Interventions During Inference | |||

| Representation Space-based Unlearning [87,88,89] | Projecting activations onto a subspace orthogonal to the target concept | Preserves the original model weights; Theoretical guarantee for linear erasure | Effectiveness decreases when data associations are non-linear |

| Neuron-level Activation Editing [90,91,93] | Identifies specific neurons responsible for sensitive knowledge and suppresses these neurons based on importance scores | Minimal computational cost during inference; Enables precise control at the individual neuron level | Risk of damaging polysemantic neurons; Incomplete removal occurs if knowledge is distributed across many neurons |

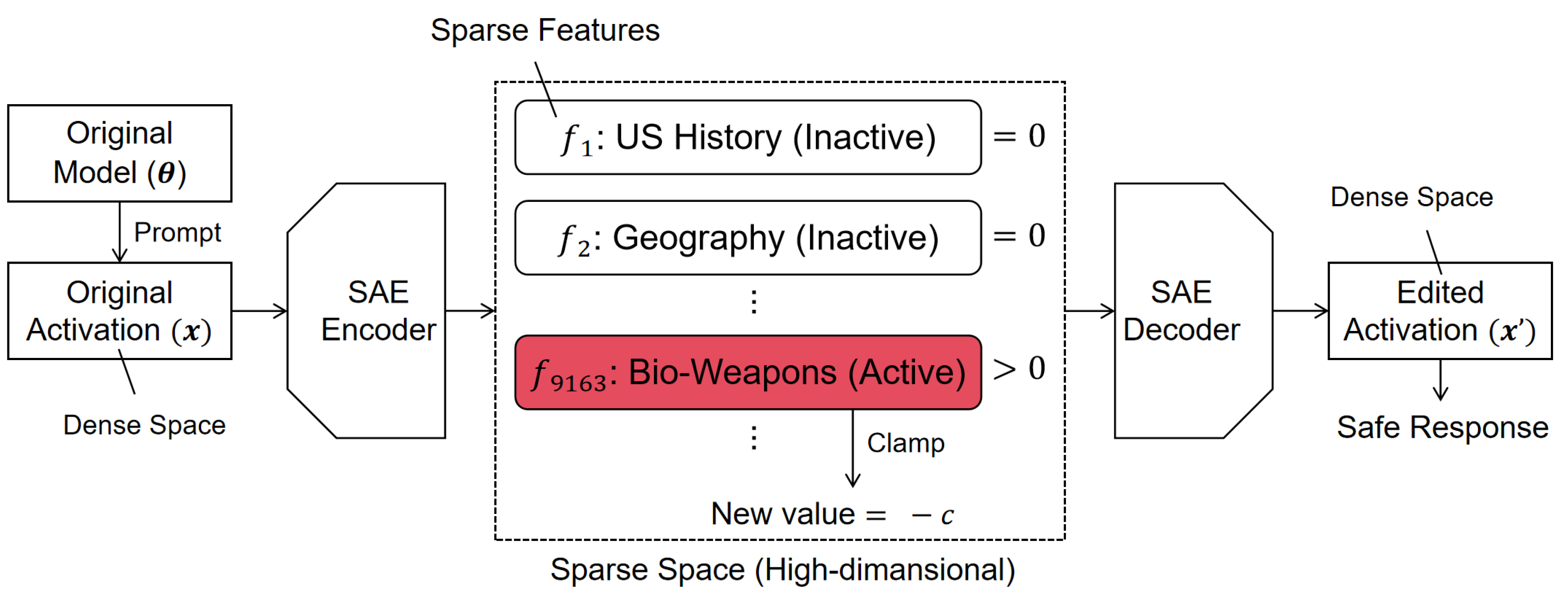

| Sparse Autoencoder Feature Editing [94,95,96] | Decomposes dense activations into interpretable sparse features; Blocks features related to sensitive concepts | High interpretability of internal behaviors; Effectively steers generation away from specific concepts | Additional inference cost due to encoder-decoder steps; relies on the quality of feature decomposition |

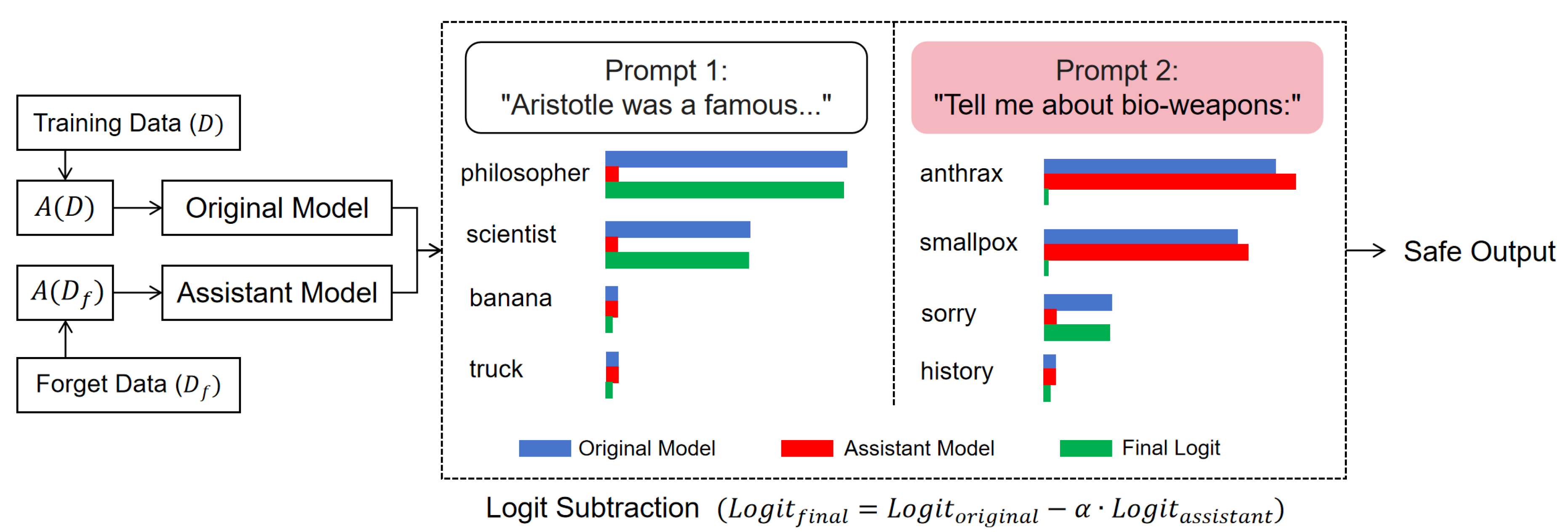

| Logit-Level Strategies [36,97,100] | Modifies final output probabilities; Subtracts logits from an assistant model or applies specific penalties | Applicable to diverse models; Effectively blocks the verbatim generation of sensitive tokens | Running assistant models causes significant inference latency; Performance depends on the alignment of the assistant model |
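
Two rows of the table above reduce to short vector operations, sketched here under simplifying assumptions. Task vector arithmetic is written as theta_unlearned = theta_base - alpha * (theta_ft - theta_base), where theta_ft is a hypothetical model fine-tuned on the forget data. Representation-space unlearning is written as removal of the component of an activation along a single concept direction. The function names, the scaling factor `alpha`, and the flat-list representation of weights and activations are illustrative choices, not the interfaces of the cited methods.

```python
def negate_task_vector(base, finetuned, alpha=1.0):
    """Subtract the scaled task vector (finetuned - base) from the base weights."""
    return [b - alpha * (f - b) for b, f in zip(base, finetuned)]


def project_out(activation, concept_direction):
    """Project an activation onto the subspace orthogonal to one direction."""
    norm_sq = sum(c * c for c in concept_direction)
    if norm_sq == 0.0:
        # A zero direction defines no concept; return the activation unchanged.
        return list(activation)
    coeff = sum(a * c for a, c in zip(activation, concept_direction)) / norm_sq
    return [a - coeff * c for a, c in zip(activation, concept_direction)]


if __name__ == "__main__":
    # Hypothetical two-weight model and a two-dimensional activation.
    print(negate_task_vector(base=[1.0, 2.0], finetuned=[1.5, 1.0]))
    print(project_out(activation=[3.0, 4.0], concept_direction=[1.0, 0.0]))
```

The projection only removes linearly encoded information along the chosen direction, which is consistent with the limitation listed for non-linear associations.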

| Sub-category | Basic Ideas | Advantages | Limitations |
|---|---|---|---|
| Input-Side Intervention [37,101,105,106] | Sanitizes inputs using defensive prompts, external rules, or corrective agents | Deploys rapidly without architectural changes; minimizes impact on response style | Remains vulnerable to prompt injection; consumes context window capacity; incurs extra costs for processing defensive prompts |
| Output-Side Intervention [108,109] | Monitors decoding streams to backtrack and regenerate unsafe claims. Verifies final outputs using auxiliary classifiers or guard agents to block harmful content | Operates independently from the main model; achieves high precision on specific safety categories; acts as a backup safety net for bypassed inputs | Causes false alarms due to missing context; wastes computation on blocked outputs; adds post-processing latency |
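
The two black-box interventions in the table can be combined in a thin wrapper around a model that is only reachable through a text-in, text-out call. The sketch below is hypothetical throughout: `guarded_generate`, `REFUSAL`, `blocked_terms`, and `defensive_prefix` are names invented for illustration, and a simple keyword match stands in for the external rules, auxiliary classifiers, or guard agents that the cited methods use.

```python
from typing import Callable, Iterable

REFUSAL = "I can't help with that."


def guarded_generate(model: Callable[[str], str],
                     prompt: str,
                     blocked_terms: Iterable[str],
                     defensive_prefix: str = "") -> str:
    """Wrap a black-box model with input-side and output-side checks."""
    terms = [t.lower() for t in blocked_terms]

    # Input-side rule: refuse prompts that mention a forgotten term.
    if any(t in prompt.lower() for t in terms):
        return REFUSAL

    # Input-side defensive prompt: prepend instructions before calling the model.
    output = model(defensive_prefix + prompt)

    # Output-side check: block responses that still leak a forgotten term.
    if any(t in output.lower() for t in terms):
        return REFUSAL
    return output


if __name__ == "__main__":
    # Stand-in for a remote LLM API; it always leaks the same fact.
    def leaky_model(text: str) -> str:
        return "Alice lives in Paris."

    print(guarded_generate(leaky_model, "Where does she live?", ["alice"]))
```

The example also illustrates two limitations from the table: the model call is still paid for when the output is blocked, and the keyword rule has no context, so it can raise false alarms.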

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).