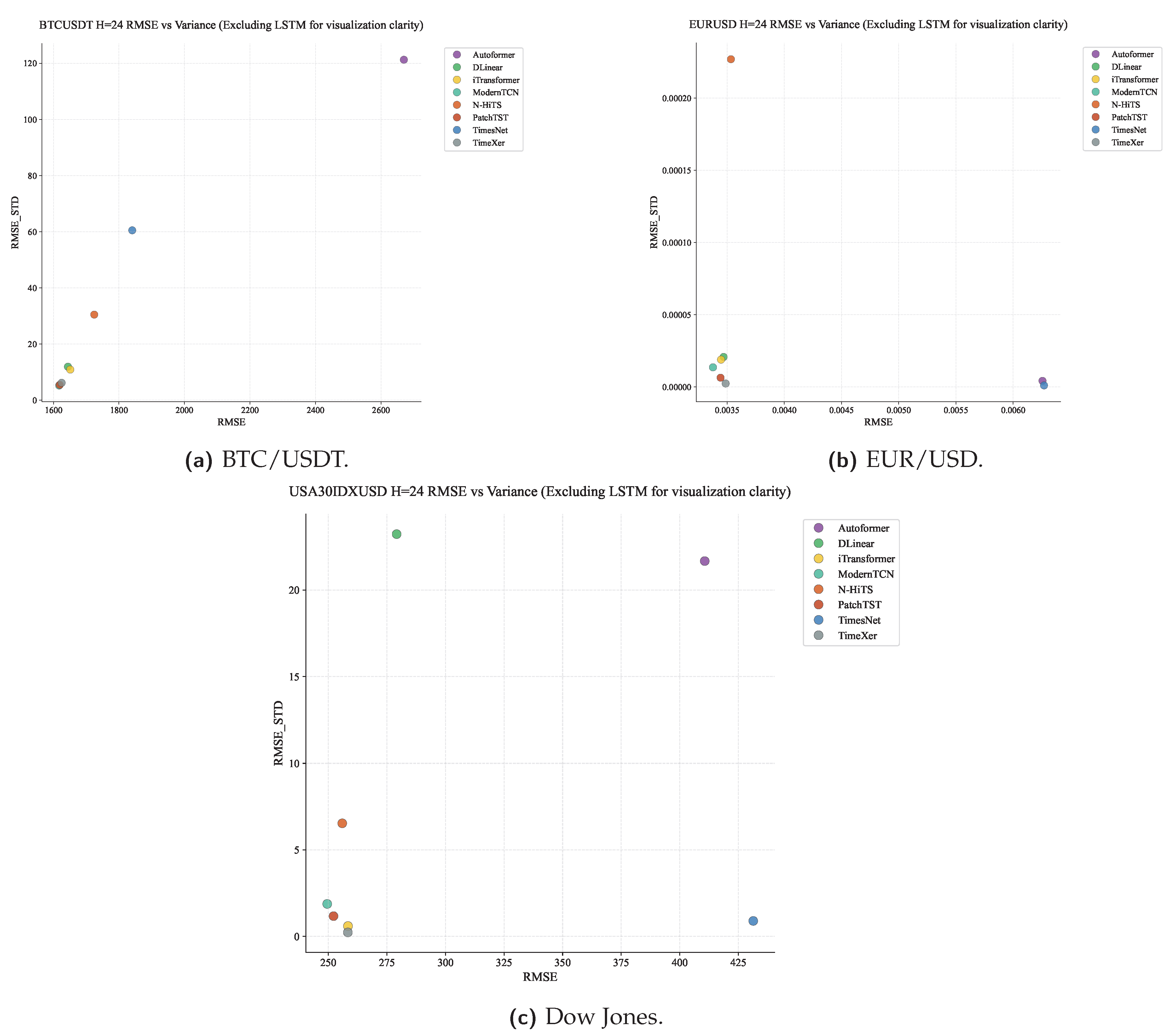

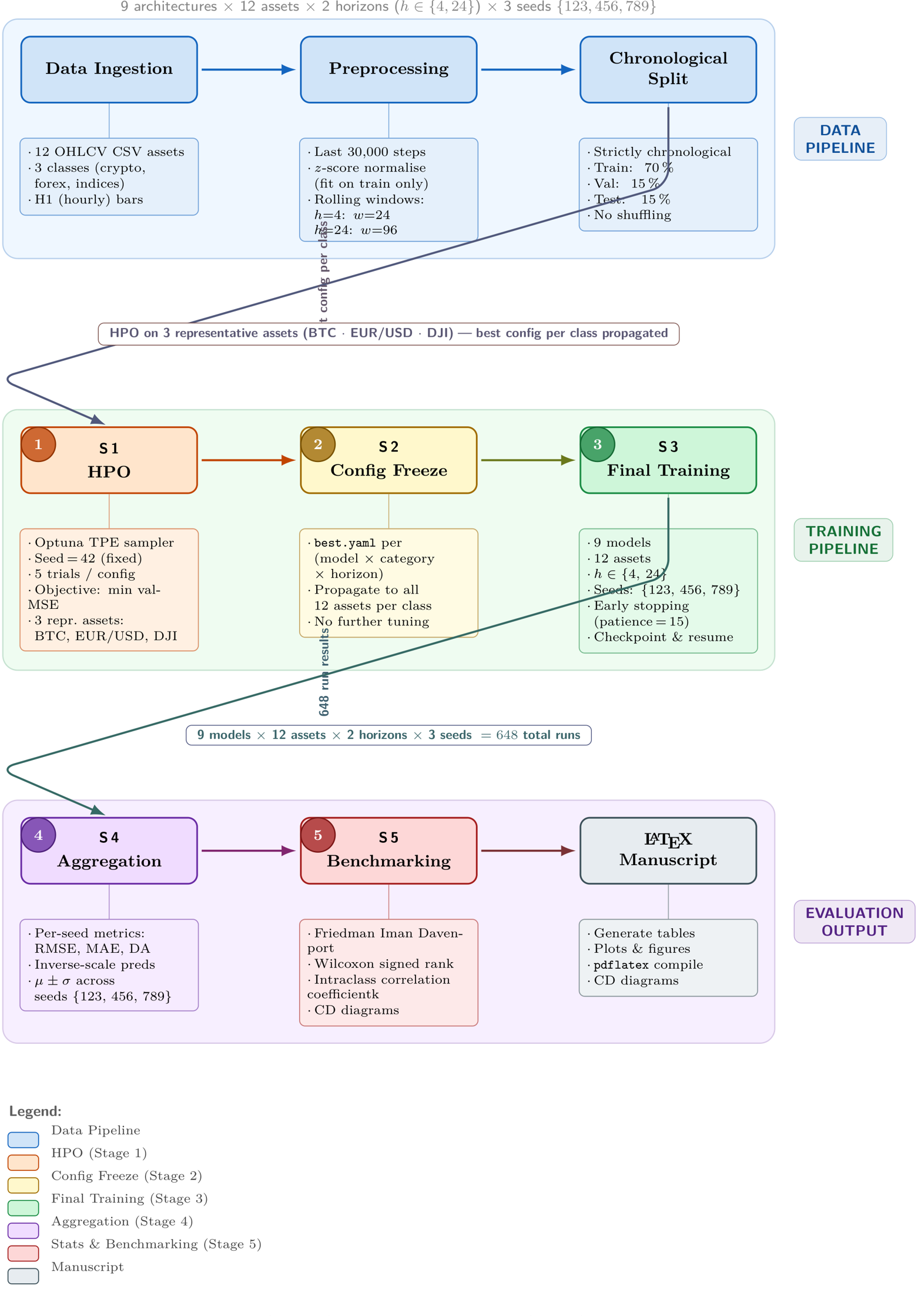

Figure 2.

Five-stage experimental pipeline. Stage 1: Fixed-seed Bayesian HPO on representative assets (BTC/USDT, EUR/USD, Dow Jones; seed 42; 5 Optuna TPE trials; 50 epochs per trial). Stage 2: Best configuration frozen per (model, category, horizon) triple. Stage 3: Multi-seed final training (seeds 123, 456, 789; 100 epochs maximum; early stopping with patience 15). Stage 4: Test-set metric aggregation with inverse scaling (mean ± std across seeds). Stage 5: Benchmarking with rank-based leaderboard analysis, visualisation, and variance decomposition. All 918 experimental runs—270 HPO trials plus 648 final training runs—are conducted under identical conditions.
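The run counts quoted in the caption can be checked by enumerating the experimental grid. The sketch below is illustrative only: the horizon values 4 and 24 are taken from Tables 6 and 19, the asset grouping from Table 2, and all variable names are hypothetical rather than taken from the study's code.

```python
from itertools import product

# Grid dimensions as described in the Figure 2 caption and Tables 2, 4, 6.
MODELS = ["Autoformer", "DLinear", "iTransformer", "LSTM", "ModernTCN",
          "N-HiTS", "PatchTST", "TimesNet", "TimeXer"]
CATEGORIES = {
    "crypto": ["BTC/USDT", "ETH/USDT", "BNB/USDT", "ADA/USDT"],
    "forex": ["EUR/USD", "USD/JPY", "GBP/USD", "AUD/USD"],
    "indices": ["Dow Jones", "S&P 500", "NASDAQ 100", "DAX"],
}
HORIZONS = [4, 24]             # assumed from the h column of Table 6
HPO_TRIALS = 5                 # Optuna TPE trials per study (Stage 1)
FINAL_SEEDS = [123, 456, 789]  # Stage 3 seeds

# Stage 1: one HPO study per (model, category, horizon) triple.
hpo_studies = list(product(MODELS, CATEGORIES, HORIZONS))
n_hpo_trials = len(hpo_studies) * HPO_TRIALS

# Stage 3: one final training run per (model, asset, horizon, seed).
assets = [asset for group in CATEGORIES.values() for asset in group]
final_runs = list(product(MODELS, assets, HORIZONS, FINAL_SEEDS))

total_runs = n_hpo_trials + len(final_runs)
print(n_hpo_trials, len(final_runs), total_runs)  # 270 648 918
```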

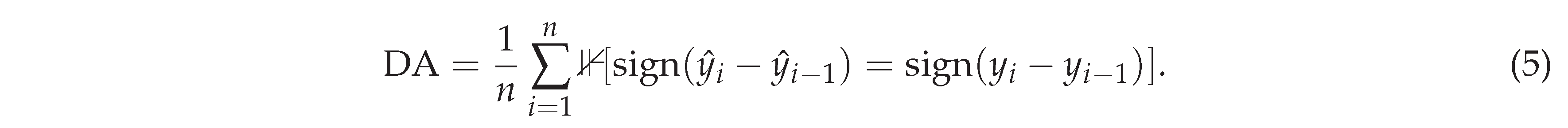

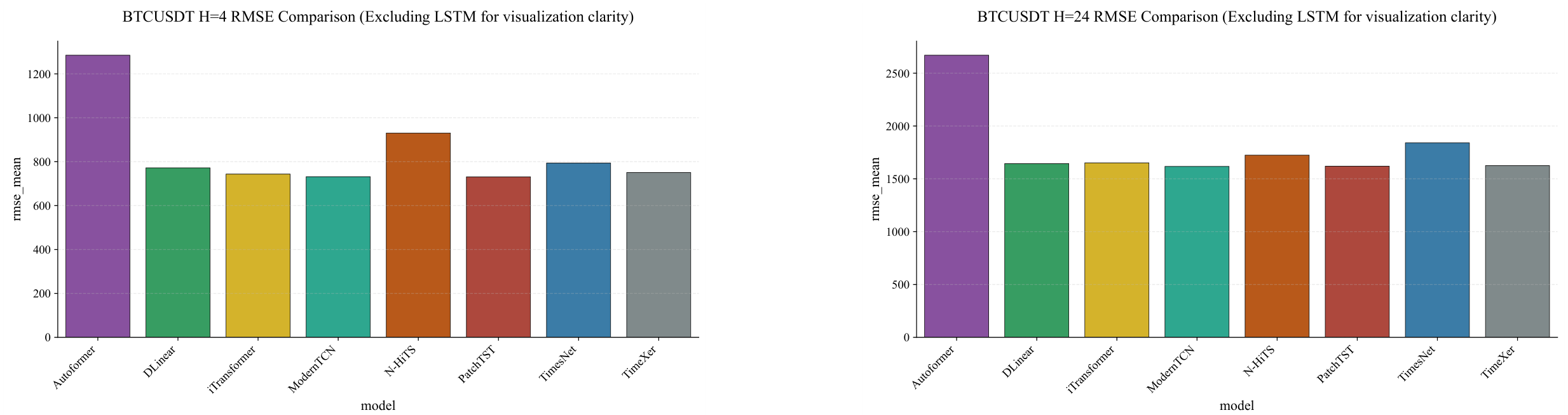

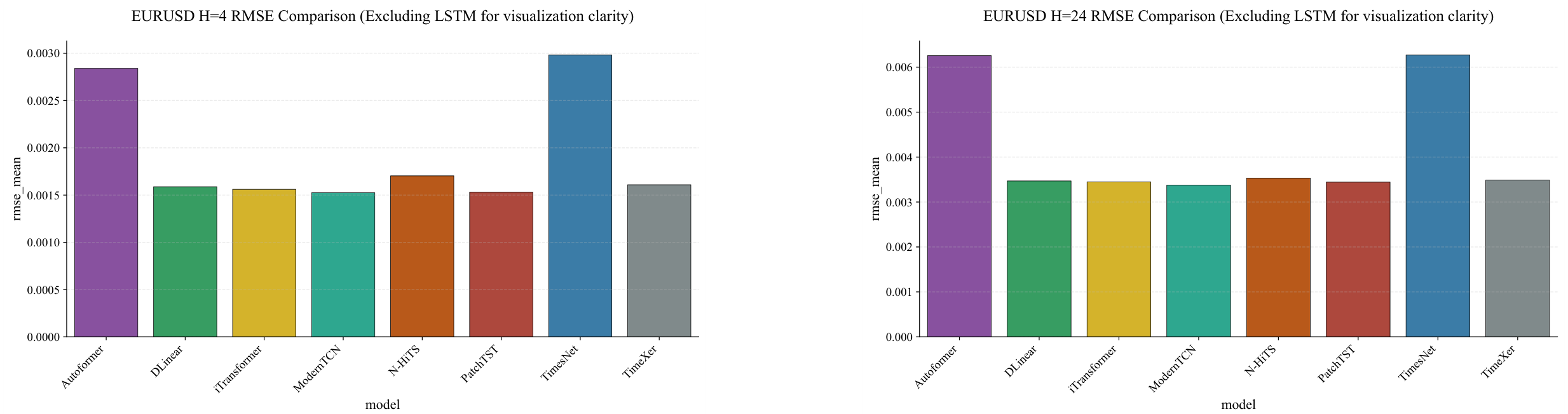

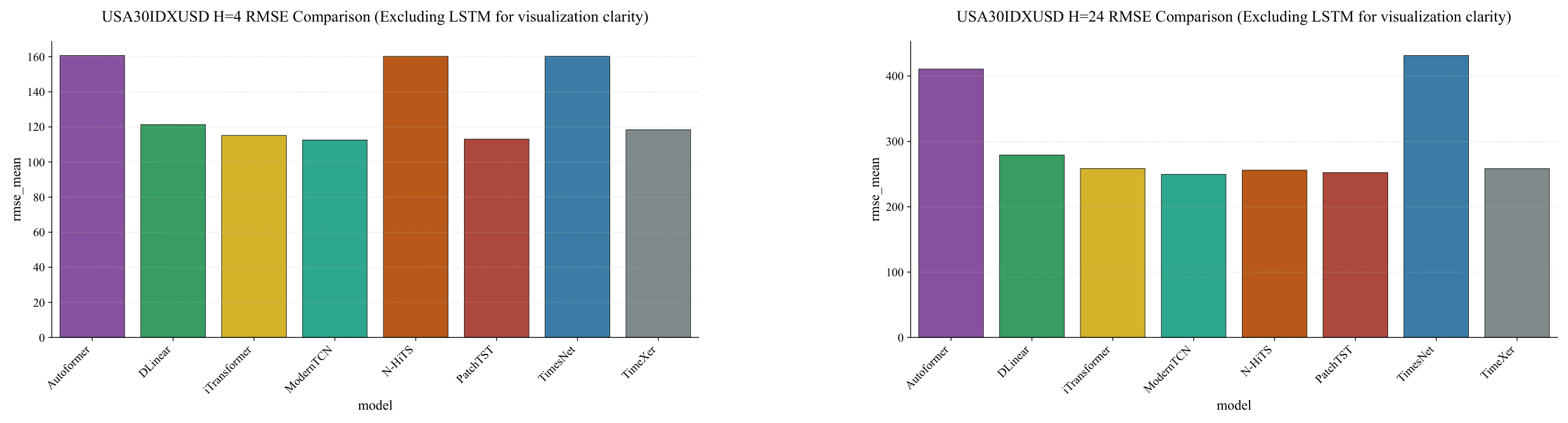

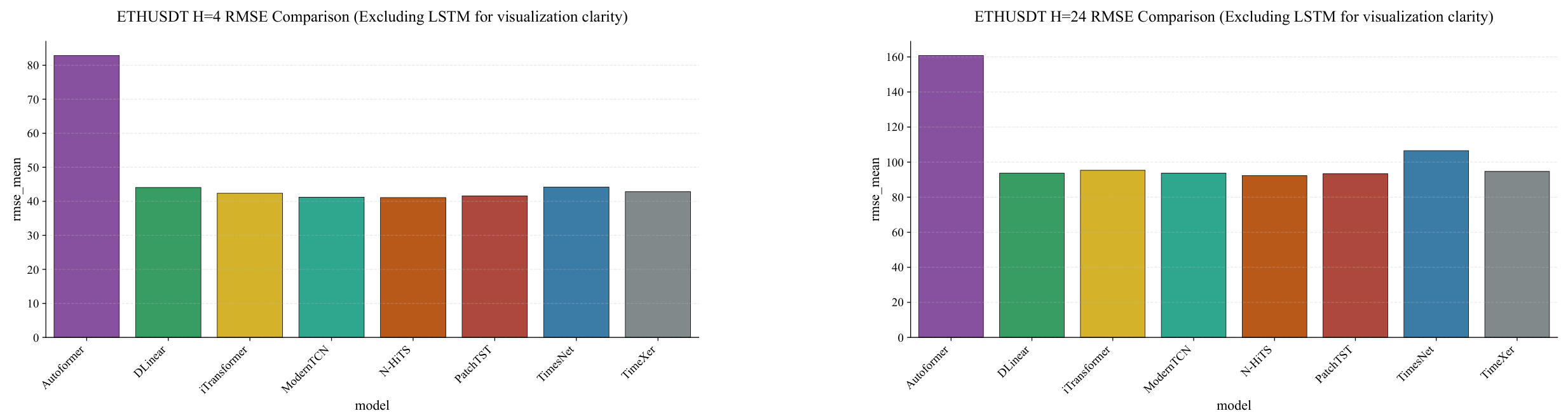

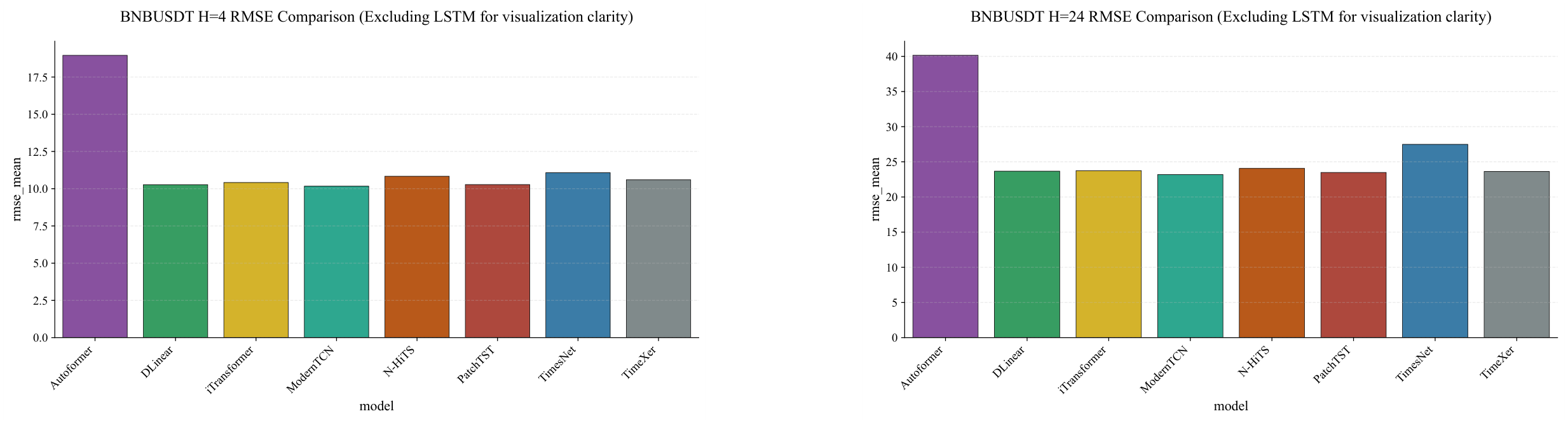

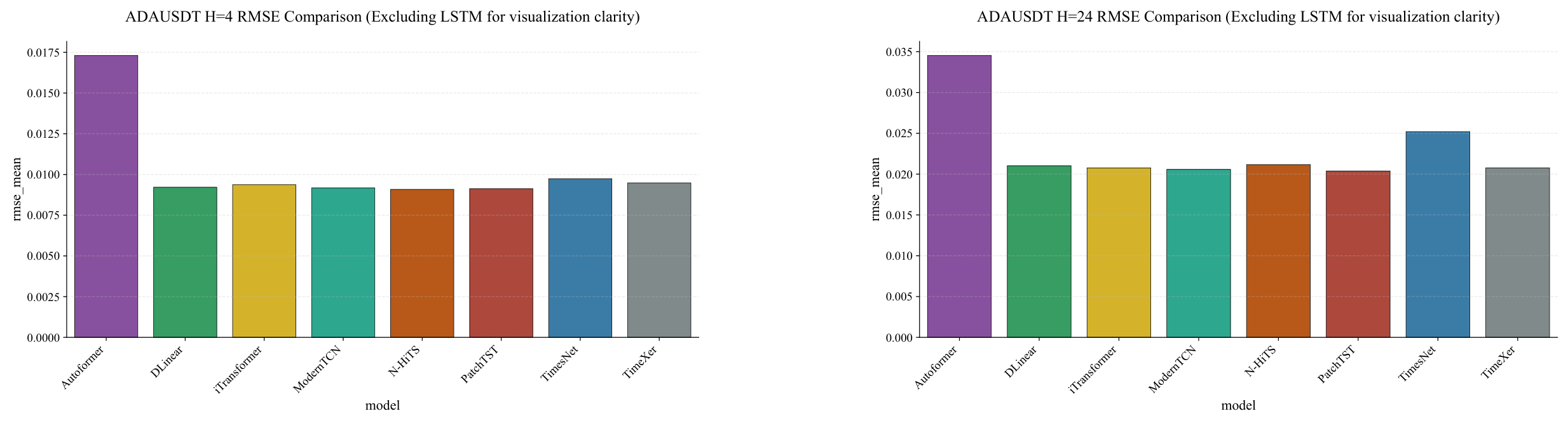

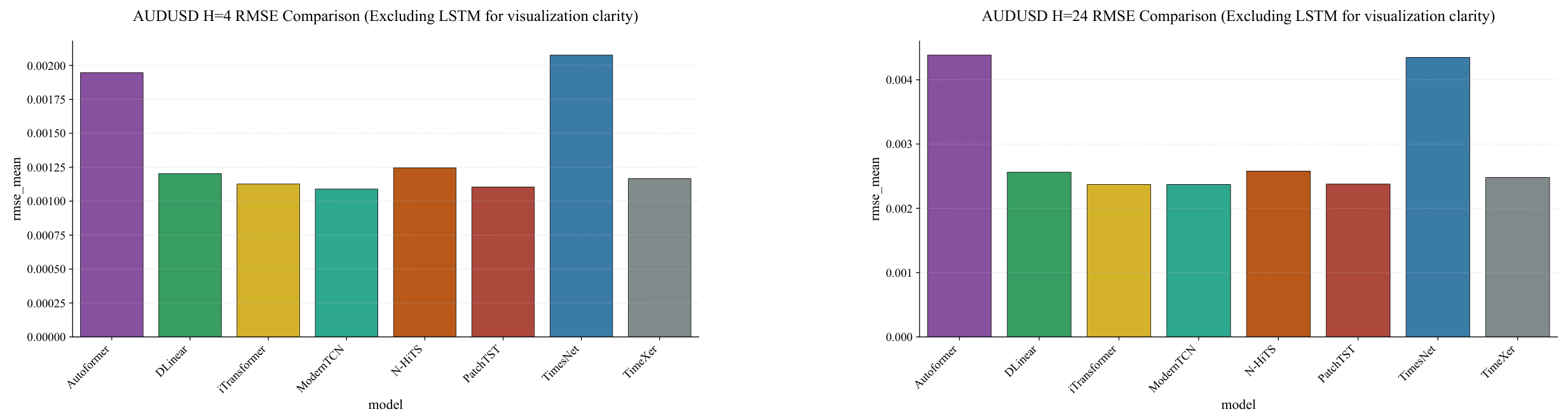

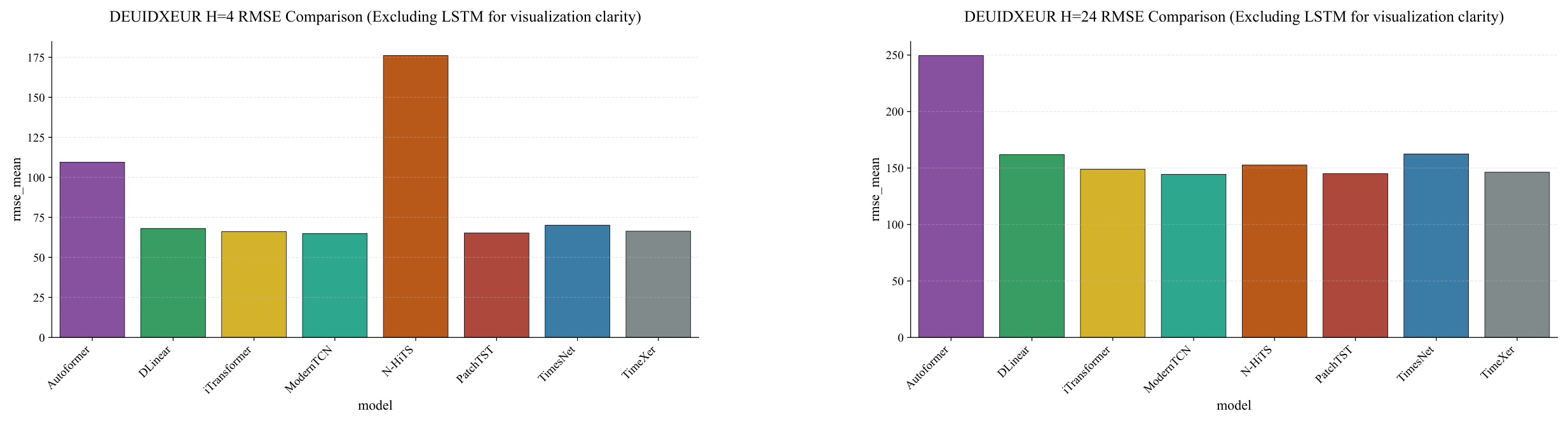

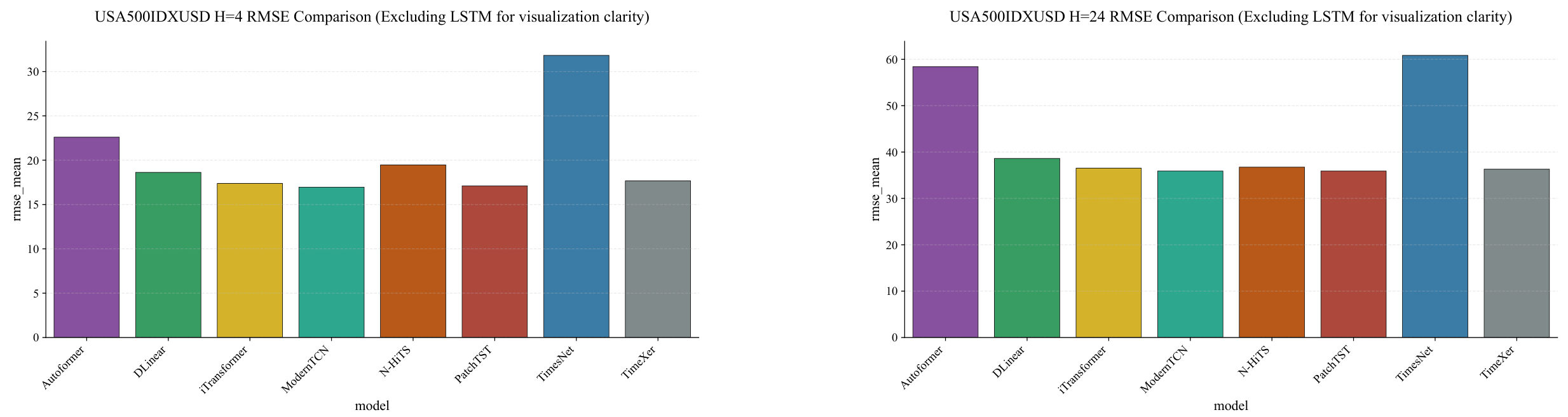

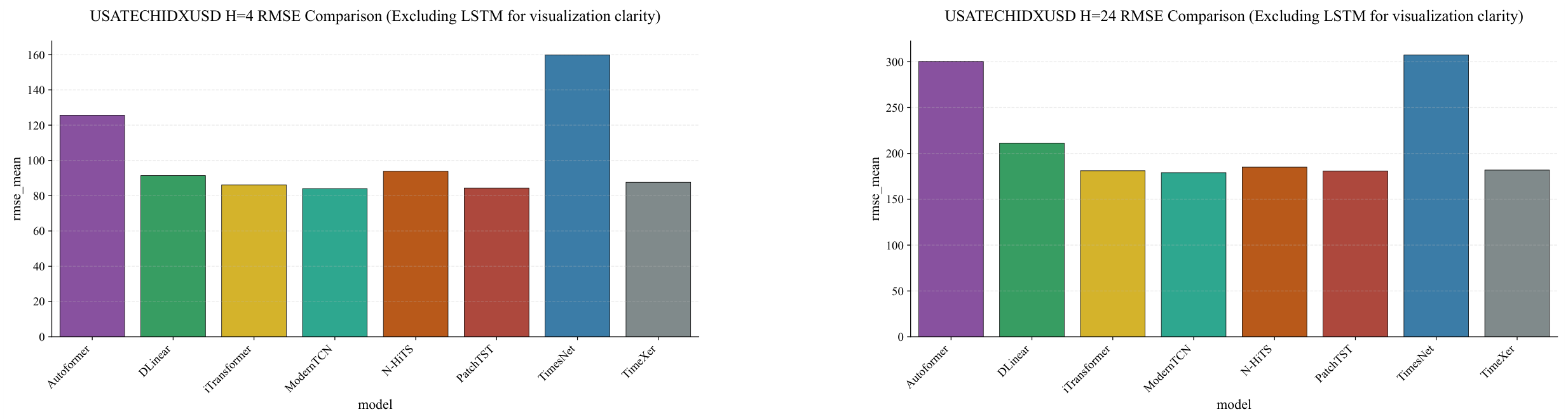

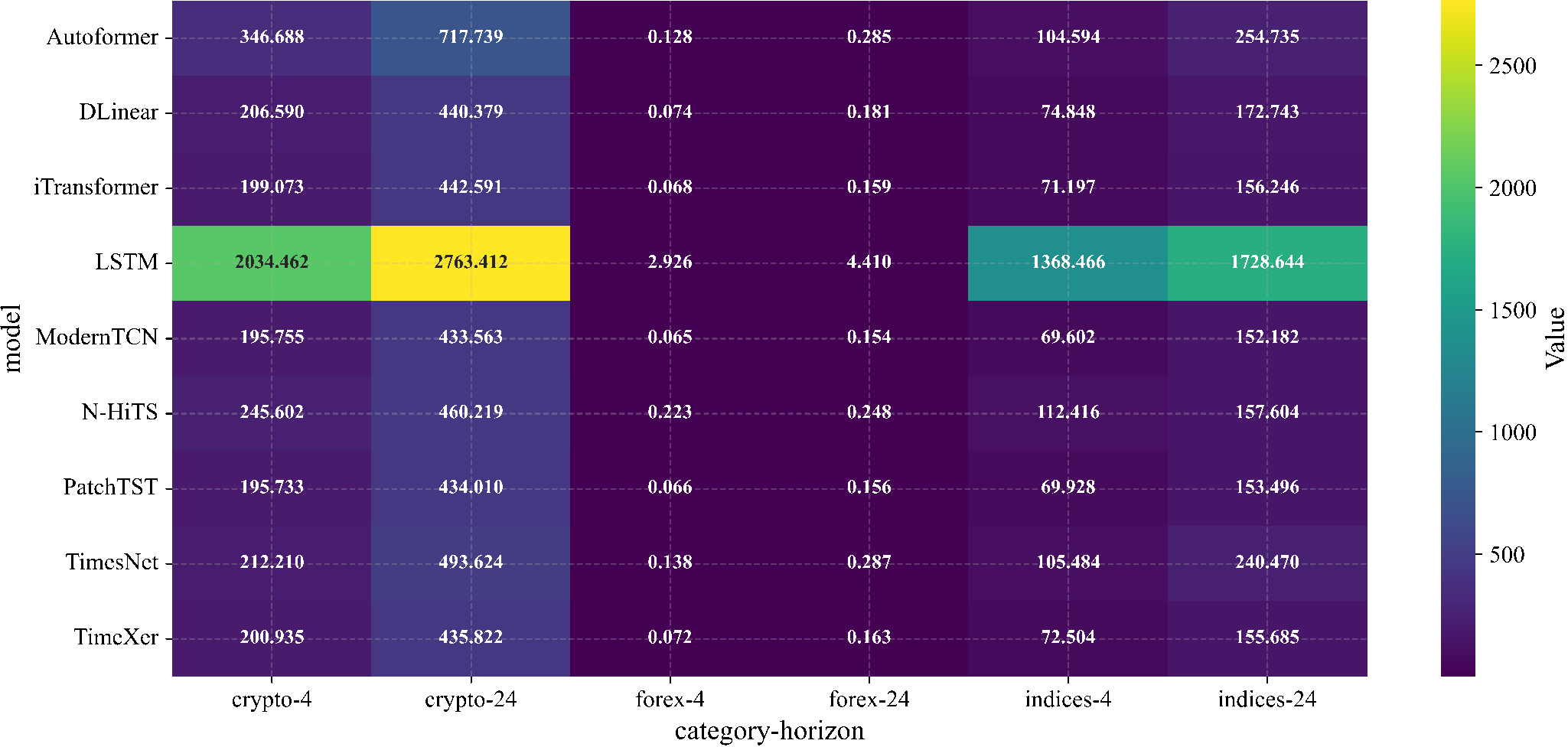

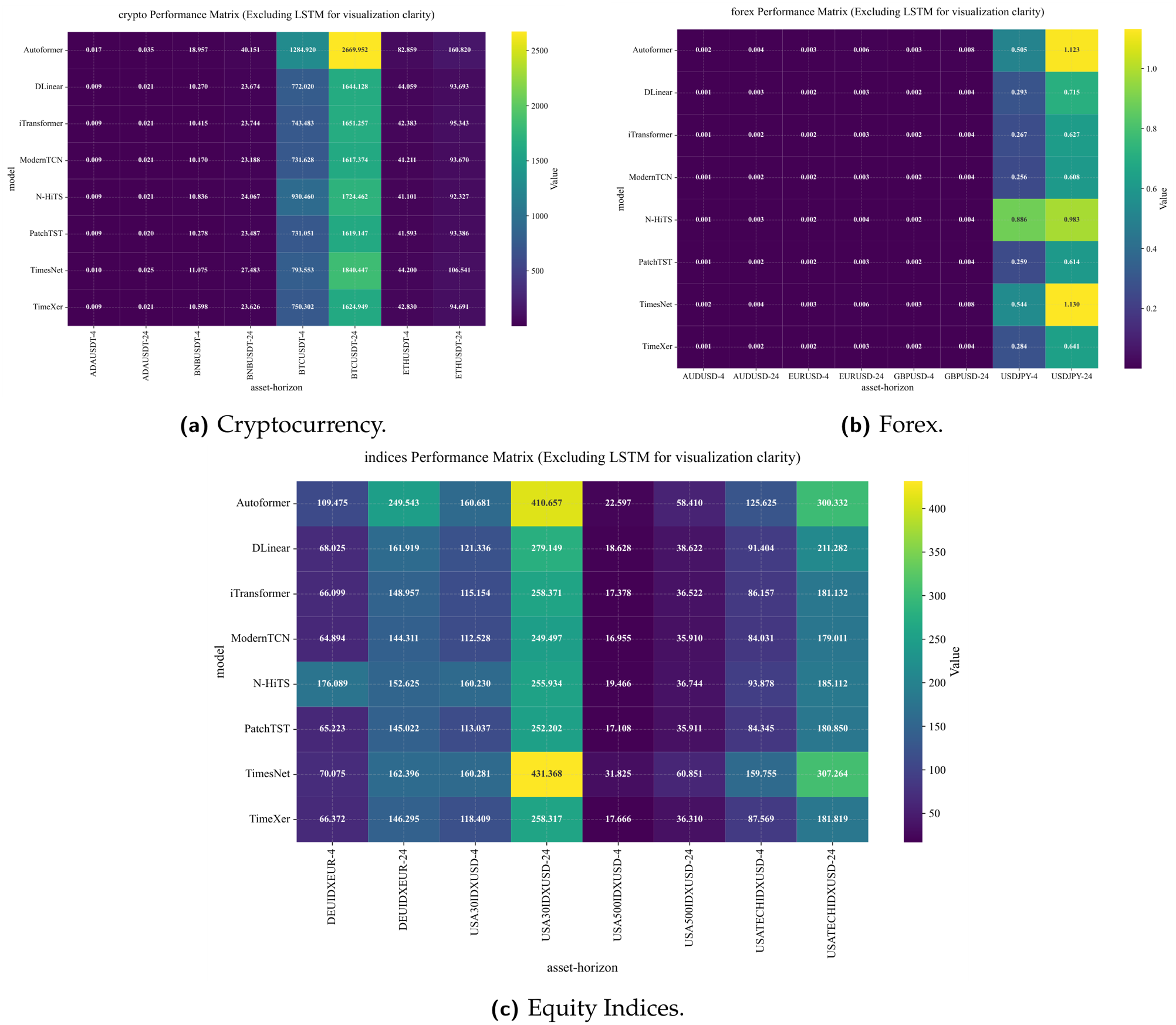

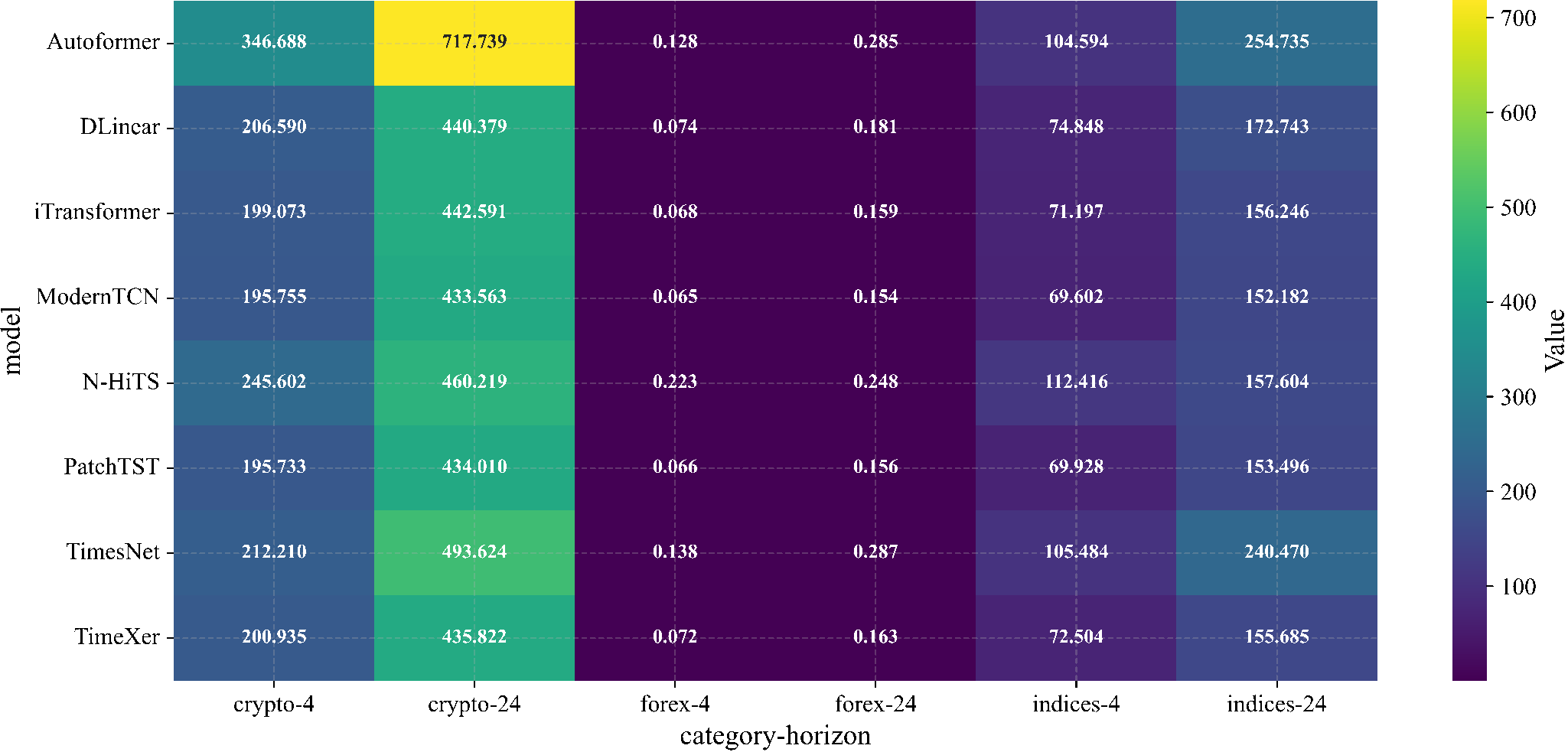

Figure 3.

Global rmse heatmap across eight modern architectures and 24 evaluation points (12 assets × 2 horizons). Lighter cells indicate lower error. ModernTCN and PatchTST consistently achieve the lowest rmse values across all asset–horizon combinations. LSTM is excluded for visual clarity; the full nine-model variant is provided in Appendix A.5, Figure A15. Values represent mean rmse across three seeds.

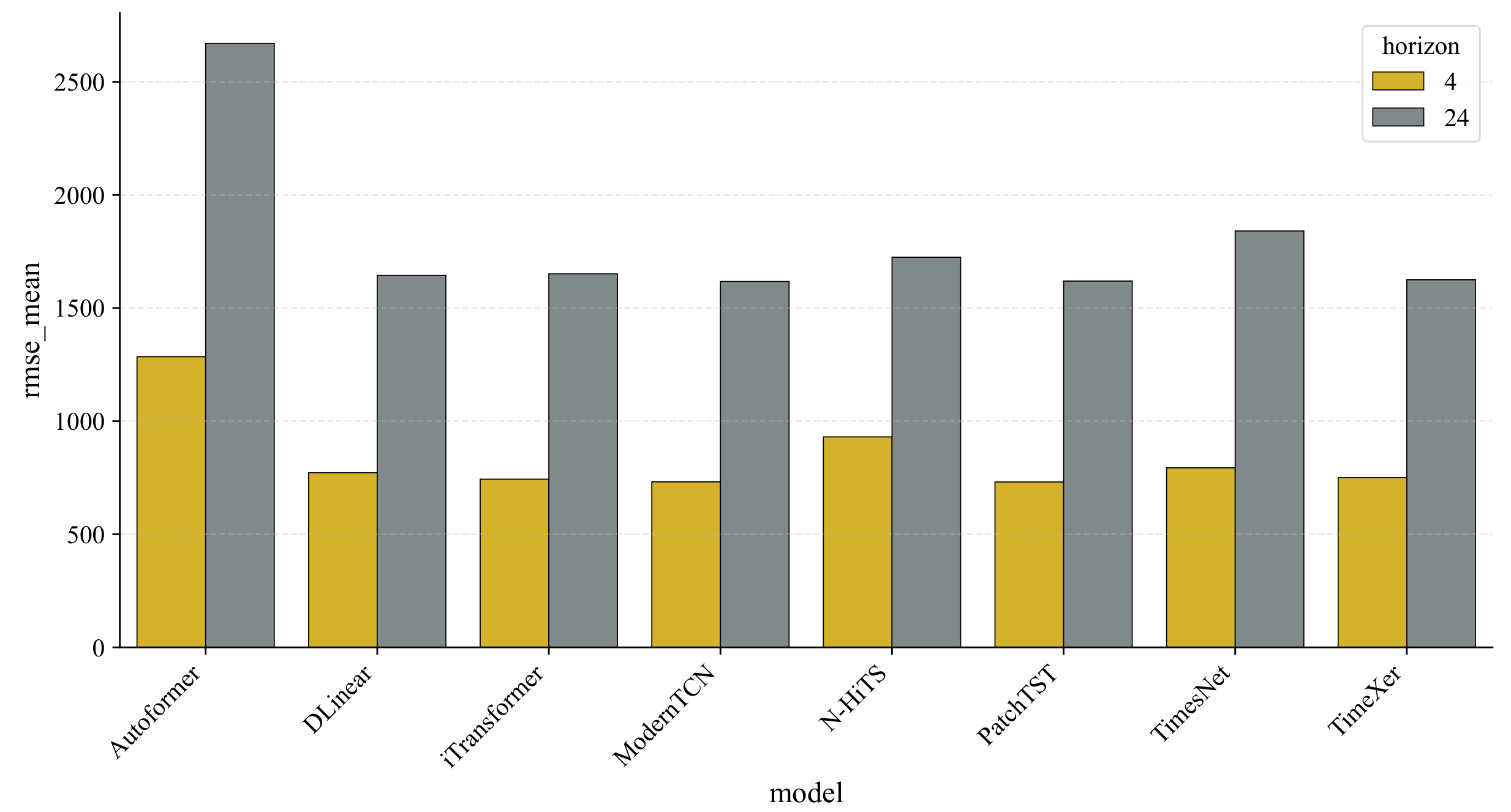

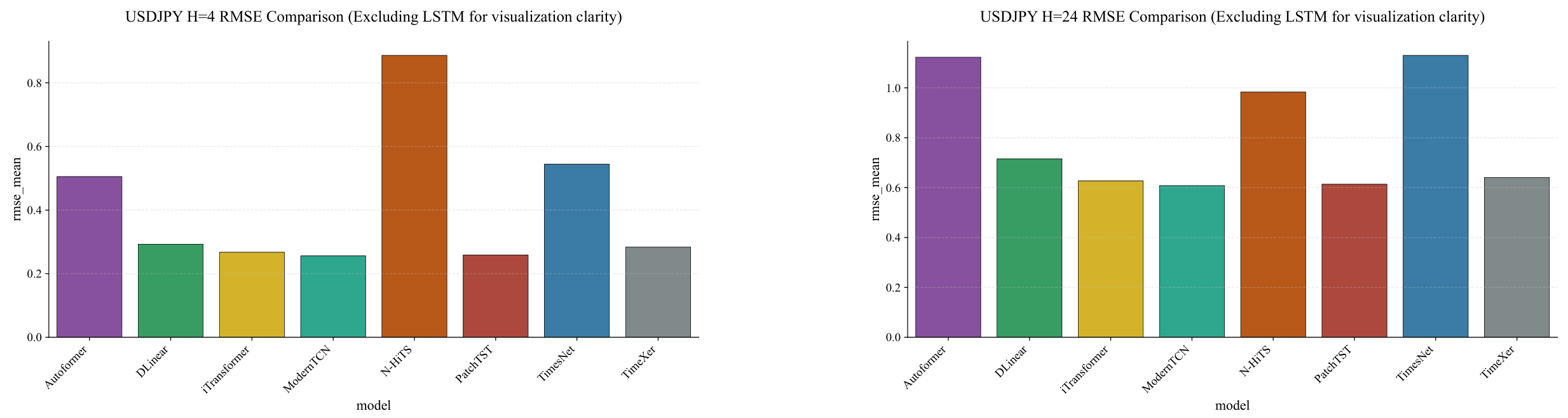

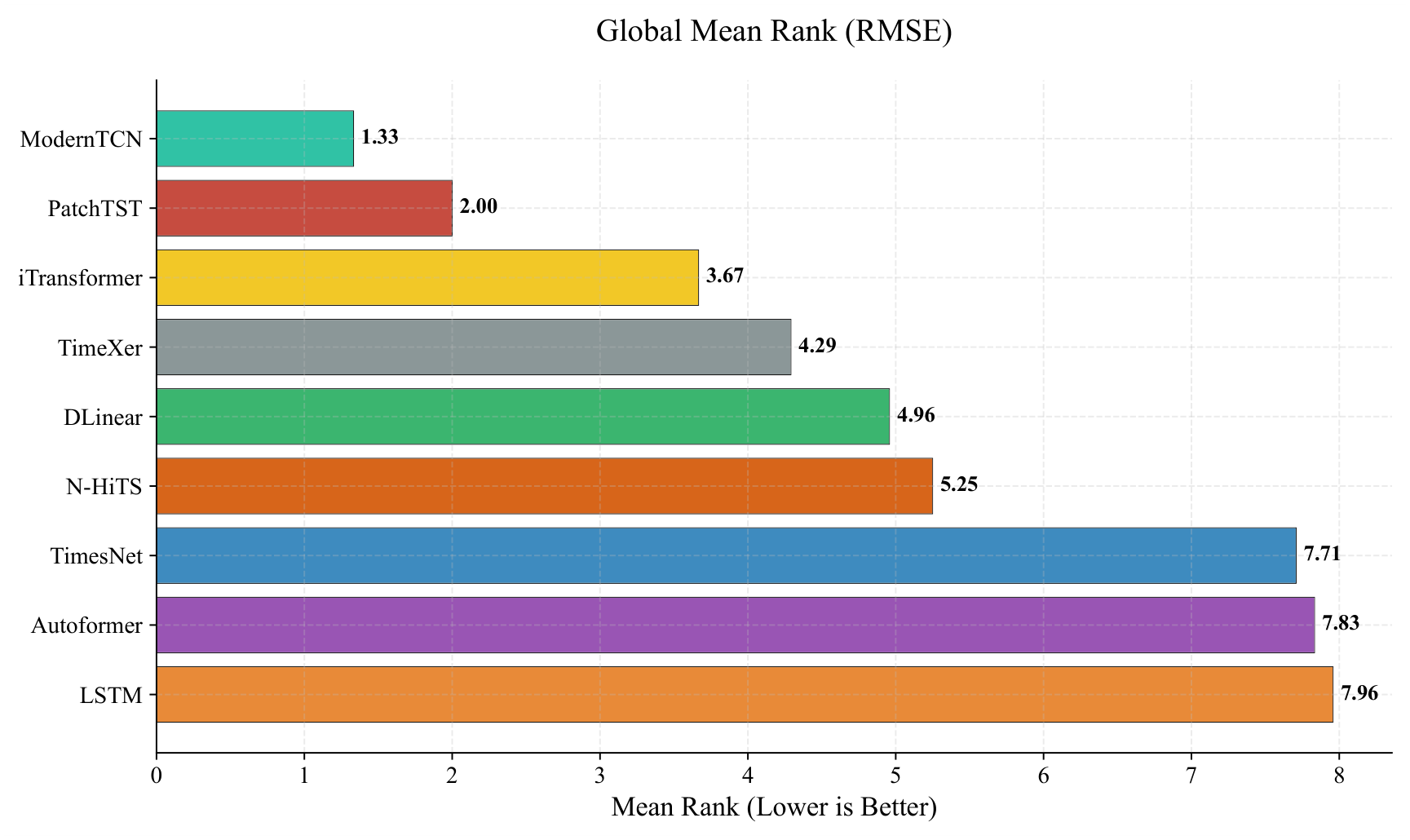

Figure 4.

Global mean rank comparison across 24 evaluation points (12 assets × 2 horizons). Lower values indicate better performance. Three distinct tiers are visible: ModernTCN and PatchTST (ranks 1–2), a middle group of four models (ranks 3–6), and a bottom group comprising TimesNet, Autoformer, and LSTM (ranks 7–9). Error bars represent rank standard deviation across evaluation points.

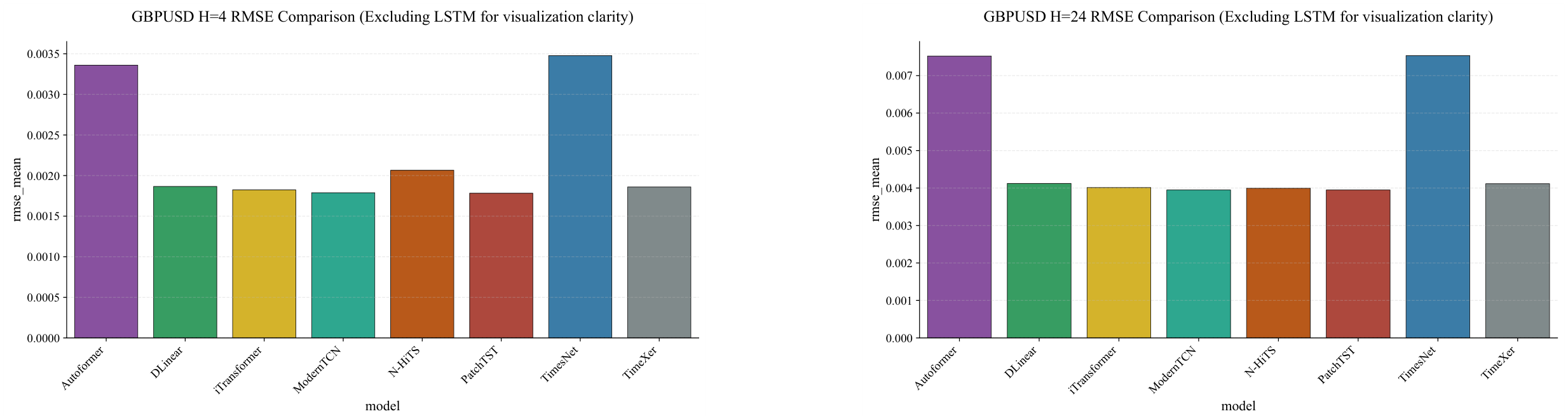

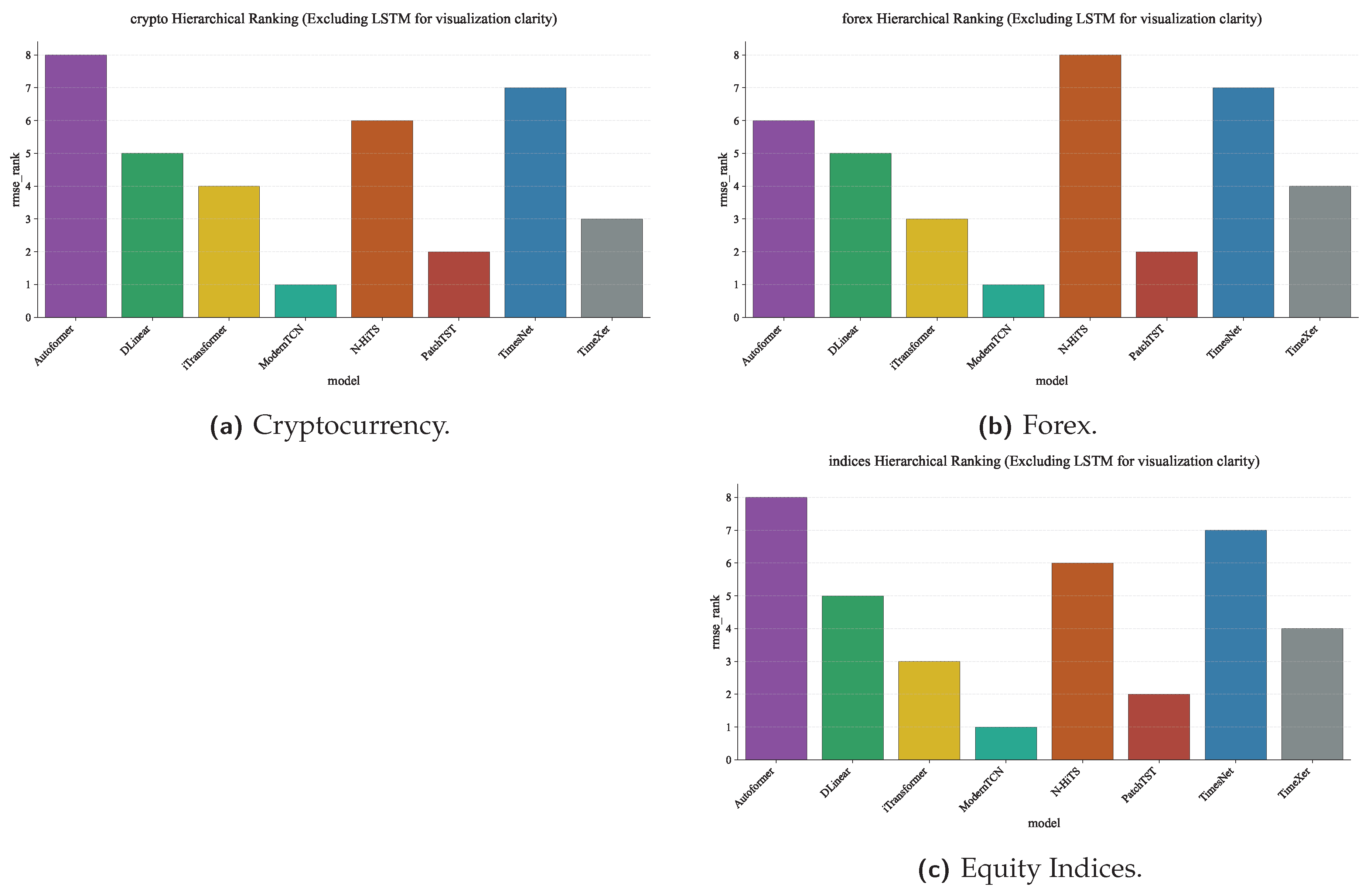

Figure 5.

Category-level rank distributions across assets within each category, excluding LSTM for visual clarity. ModernTCN exhibits the tightest rank distribution (consistently rank 1 across all categories), indicating stable cross-asset performance. The full nine-model variant is provided in Appendix A.5. Boxes show interquartile range; whiskers extend to the most extreme rank observed.

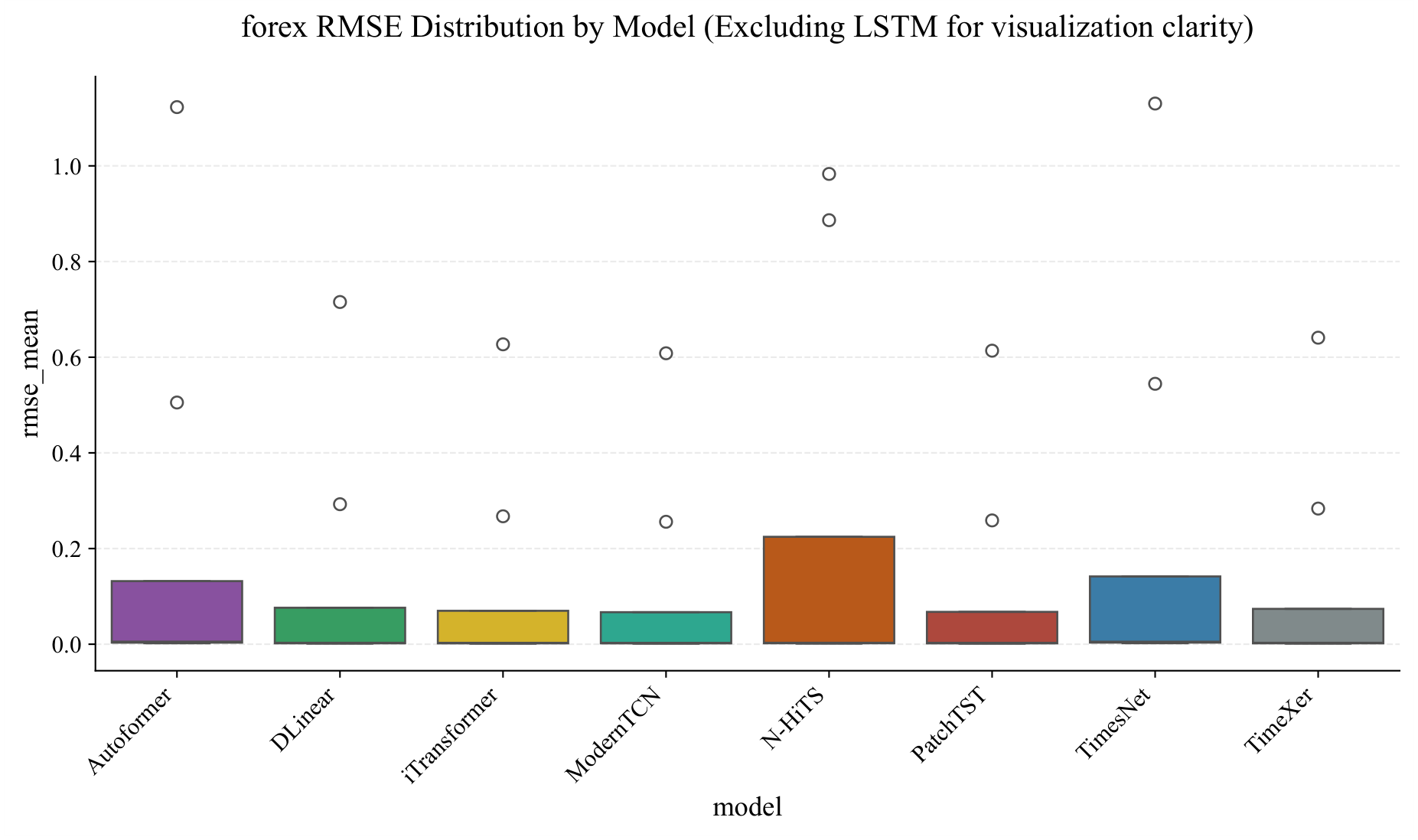

Figure 6.

Category-level rmse vs. mae scatter plot for eight modern architectures. Each point represents one model’s mean error within a category. The near-perfect linear correlation confirms that model rankings are consistent across the two error metrics, indicating that findings based on rmse generalise to mae. The full nine-model variant is provided in Appendix A.5.
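As a minimal illustration of the rmse–mae relationship described above, the following sketch computes a Pearson correlation from the crypto columns of Table 9 with LSTM excluded. It covers a single category only and does not attempt to reproduce the coefficient reported for the figure; the helper name `pearson_r` is an assumption of this sketch.

```python
import math

# Crypto-category (rmse, mae) pairs copied from Table 9, LSTM excluded.
crypto_errors = {
    "Autoformer": (532.21, 393.83),
    "DLinear": (323.48, 220.87),
    "iTransformer": (320.83, 217.99),
    "ModernTCN": (314.66, 211.14),
    "N-HiTS": (352.91, 255.77),
    "PatchTST": (314.87, 211.70),
    "TimesNet": (352.92, 249.41),
    "TimeXer": (318.38, 215.24),
}

def pearson_r(xs, ys):
    """Plain Pearson correlation coefficient for two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

rmse_values = [pair[0] for pair in crypto_errors.values()]
mae_values = [pair[1] for pair in crypto_errors.values()]
r_crypto = pearson_r(rmse_values, mae_values)
print(f"Pearson r (crypto, 8 models): {r_crypto:.4f}")
```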

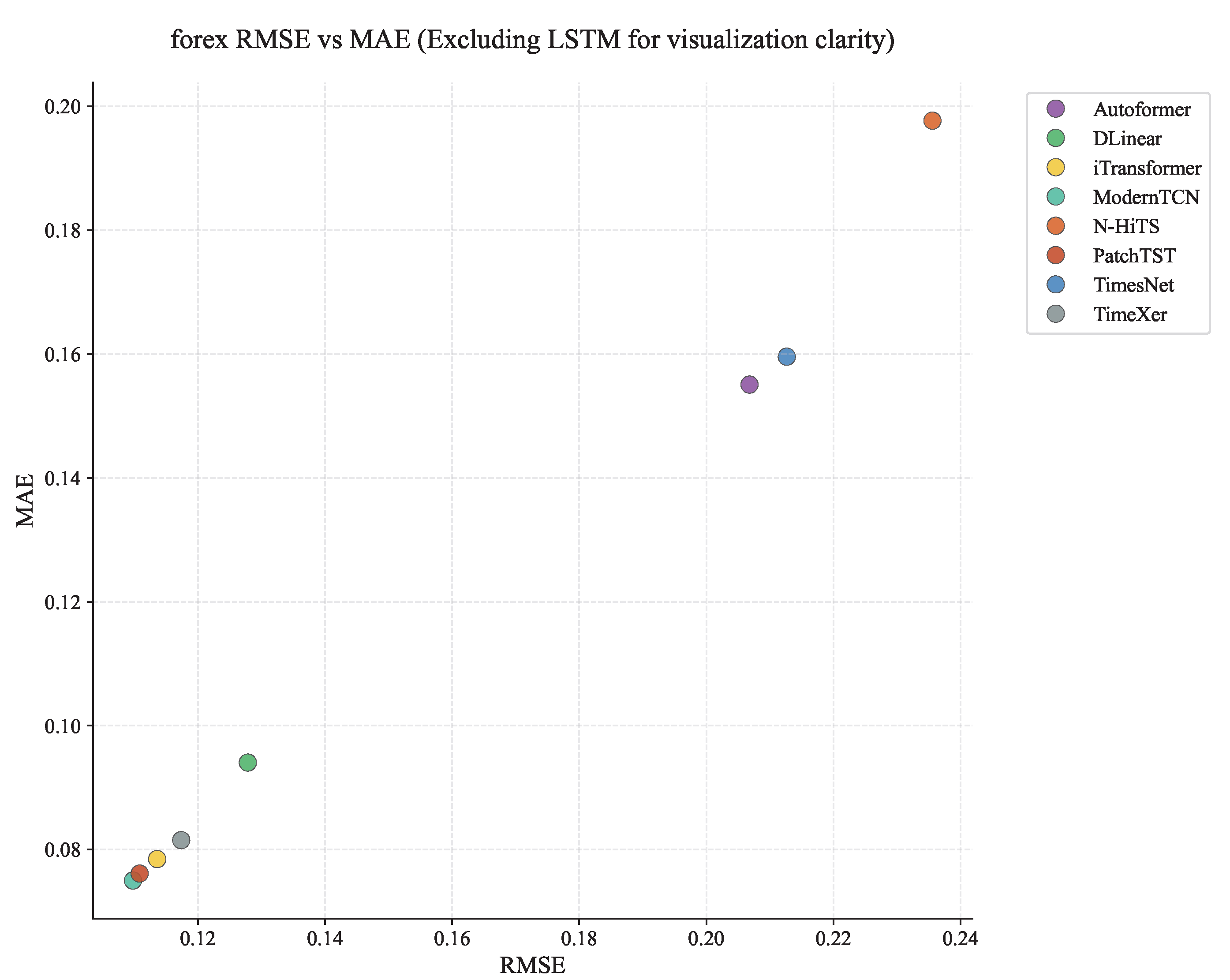

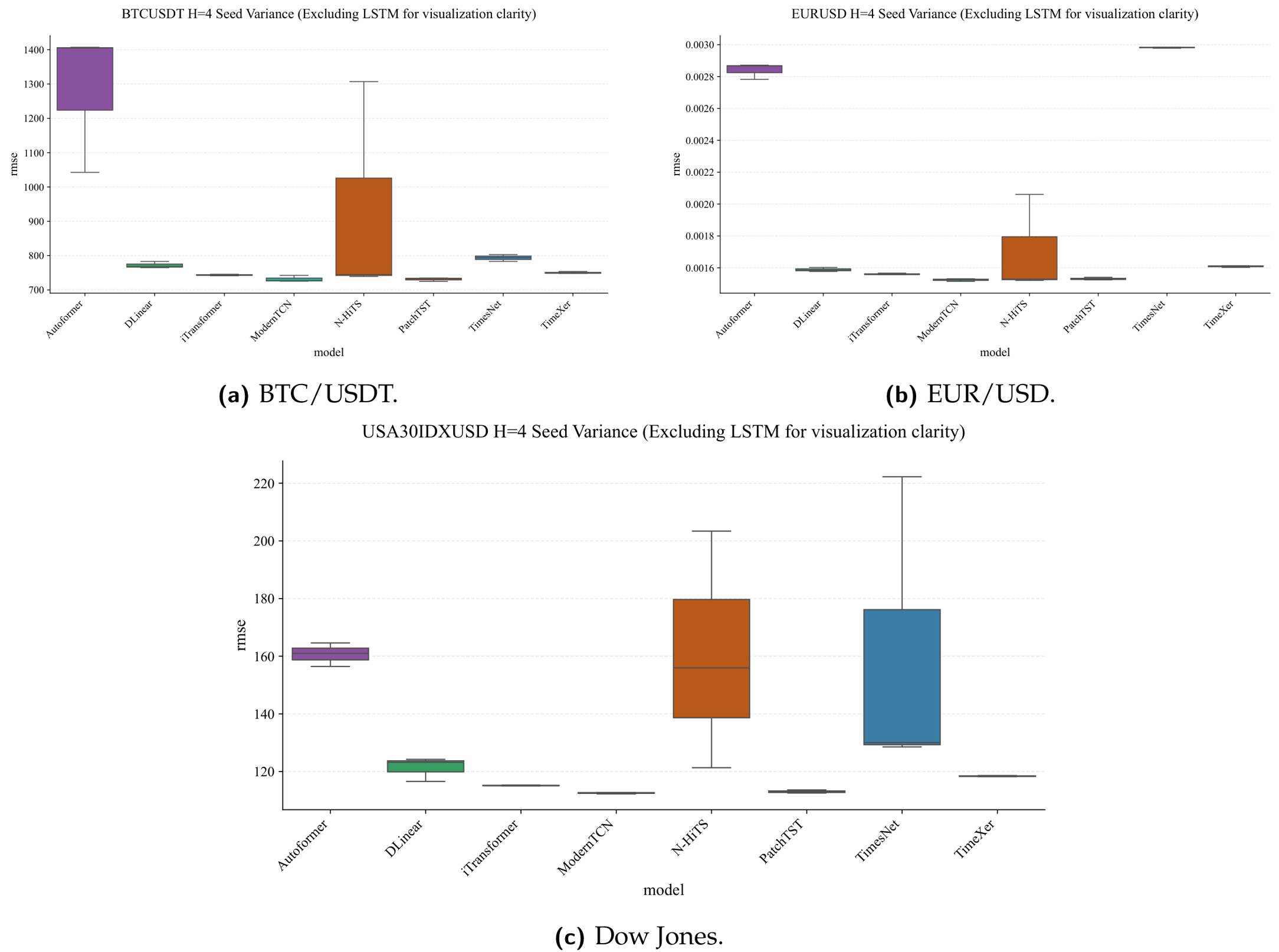

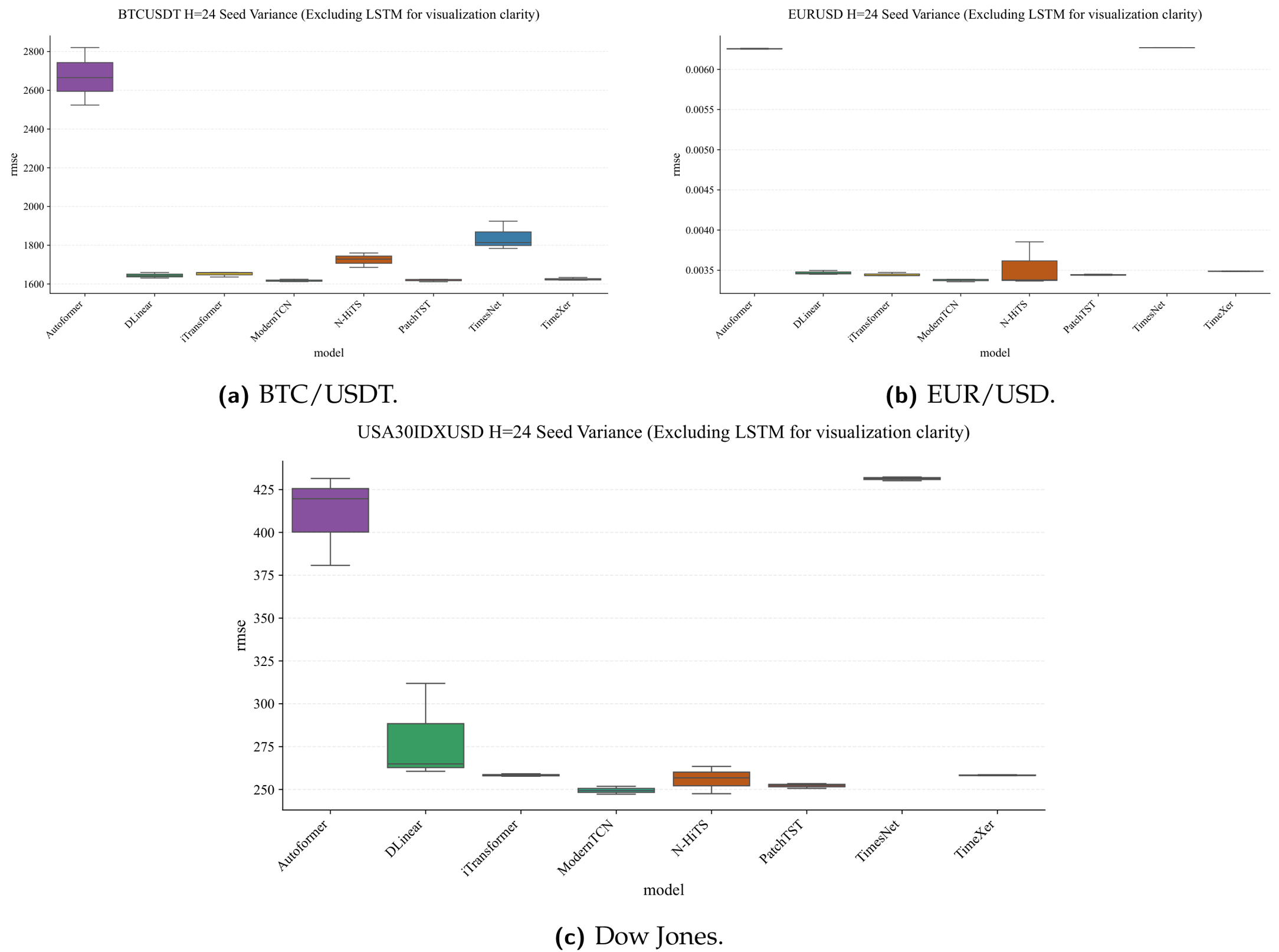

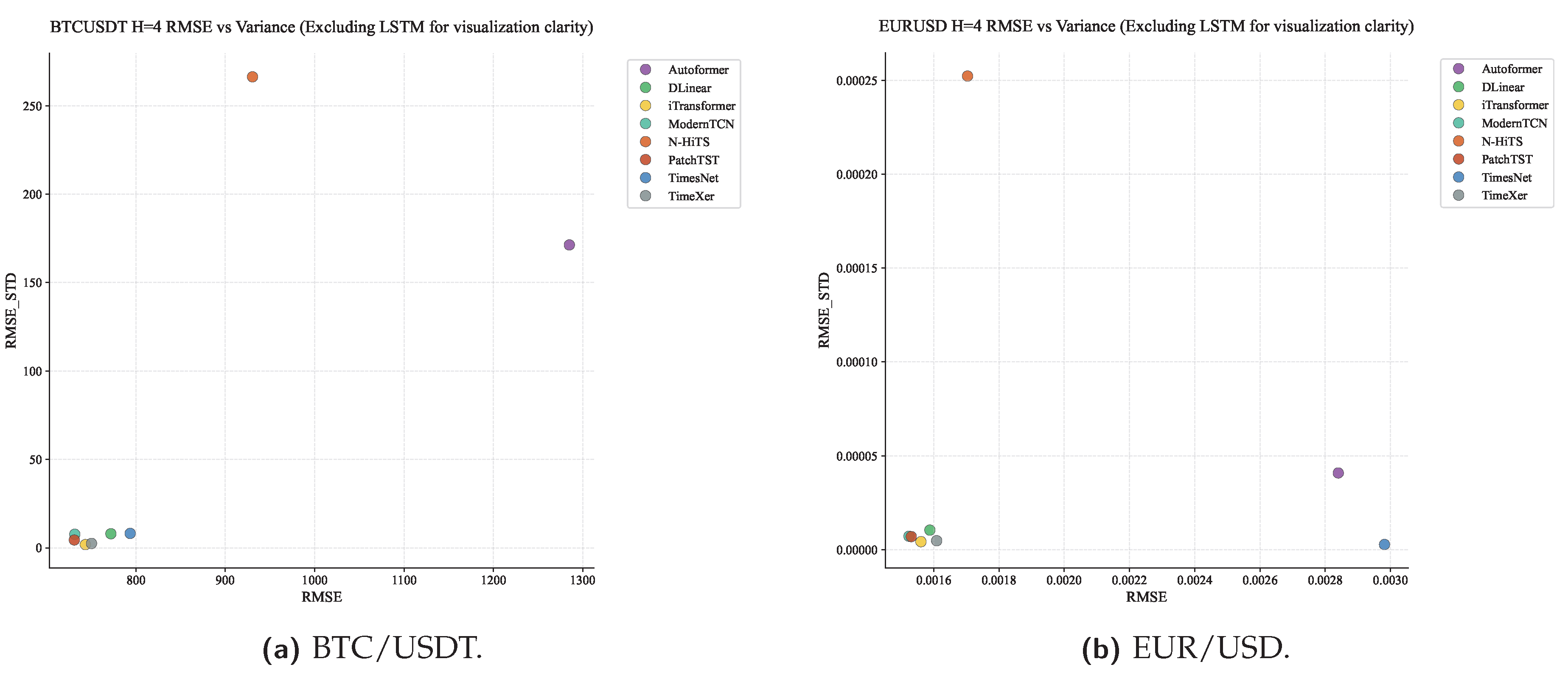

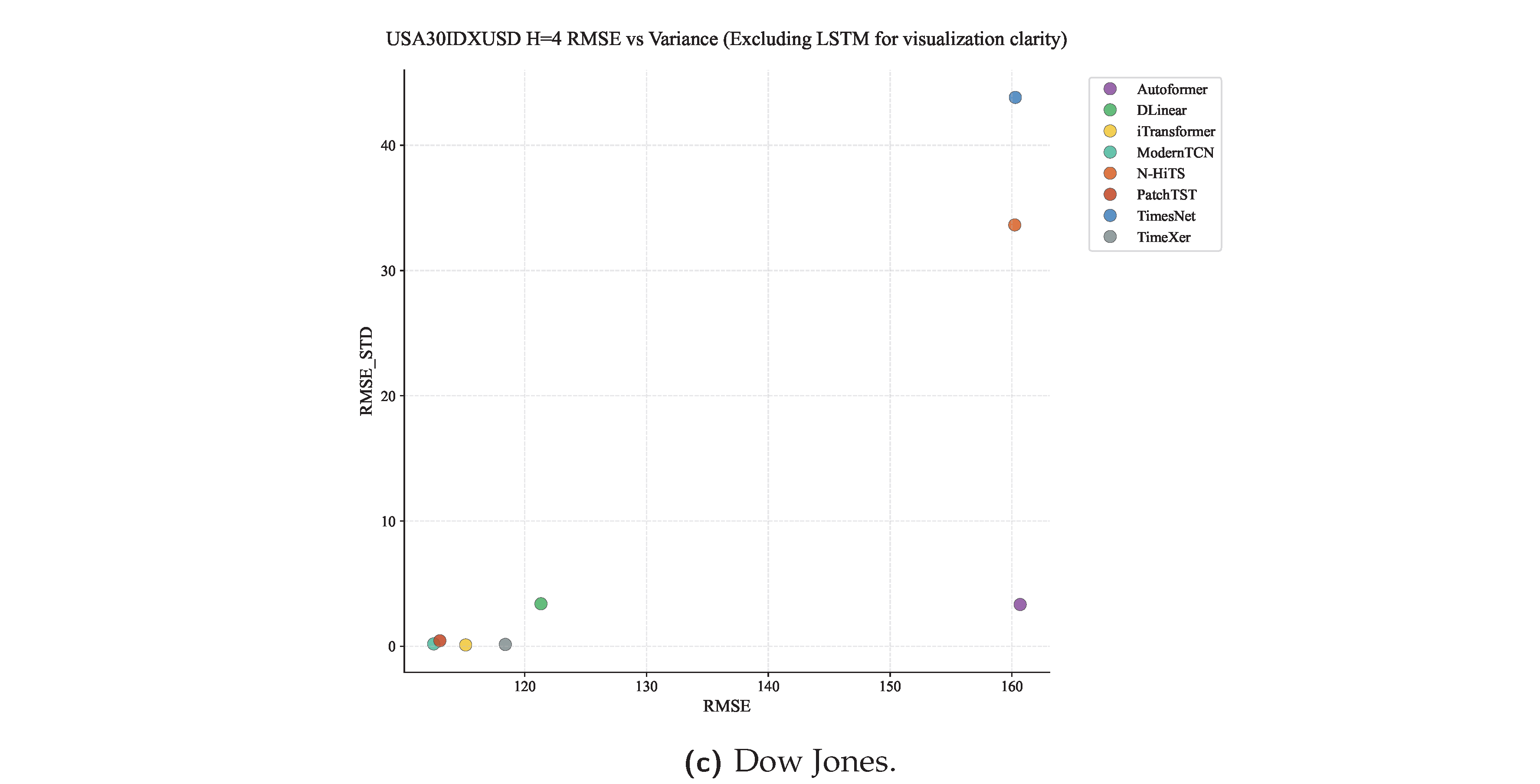

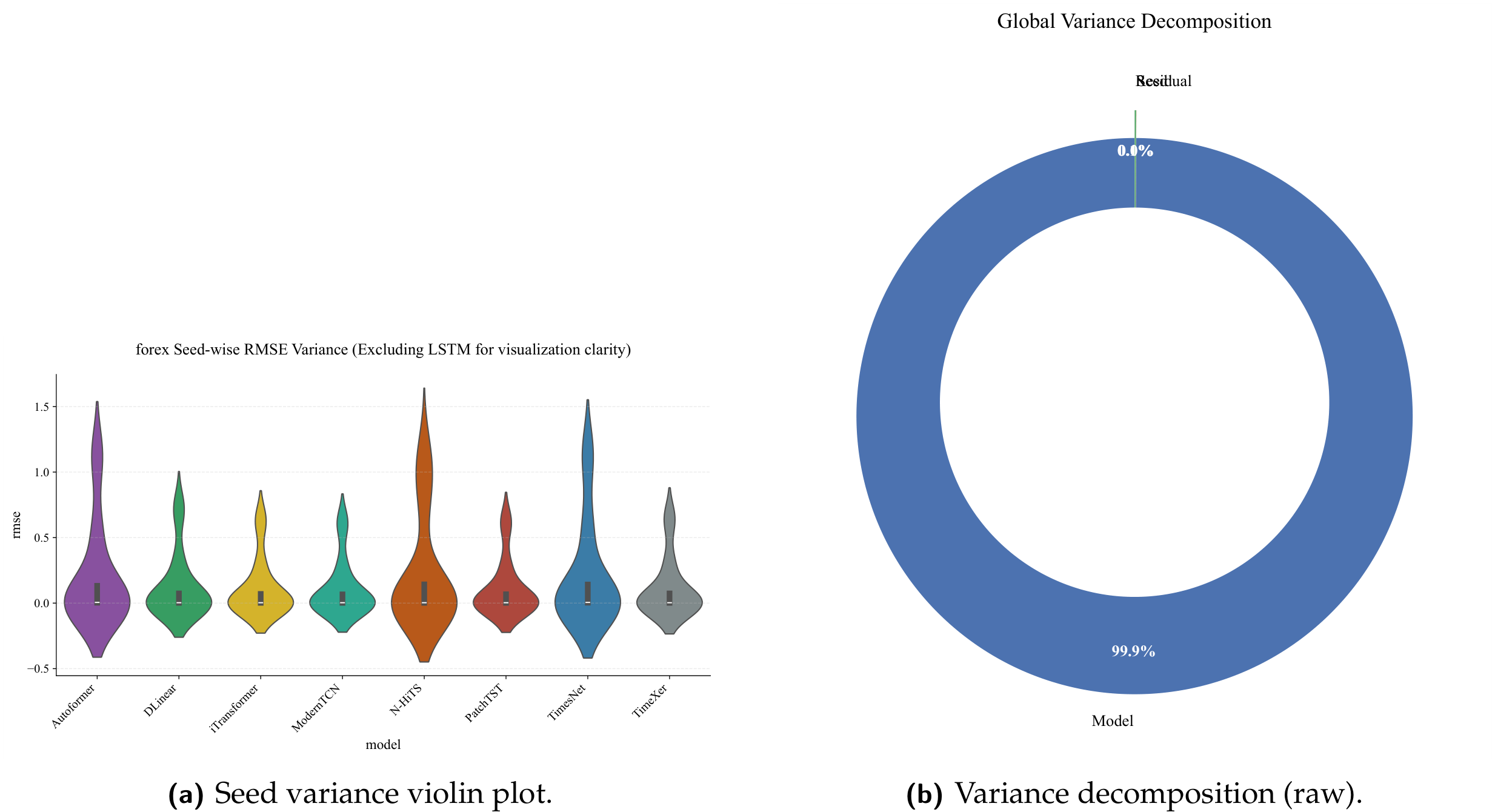

Figure 9.

Seed robustness analysis. (a) Violin plot of seed-to-seed rmse variation. Inter-seed variation is negligible relative to inter-model differences across all nine architectures. (b) Two-factor variance decomposition on raw price-scale rmse: architecture explains 99.90%, seed 0.01%, residual 0.09%. After z-score normalisation within each (asset, horizon) slot, the architecture share falls to 48.3% (68.3% excluding LSTM); see Table 14 for the full dual-panel breakdown.

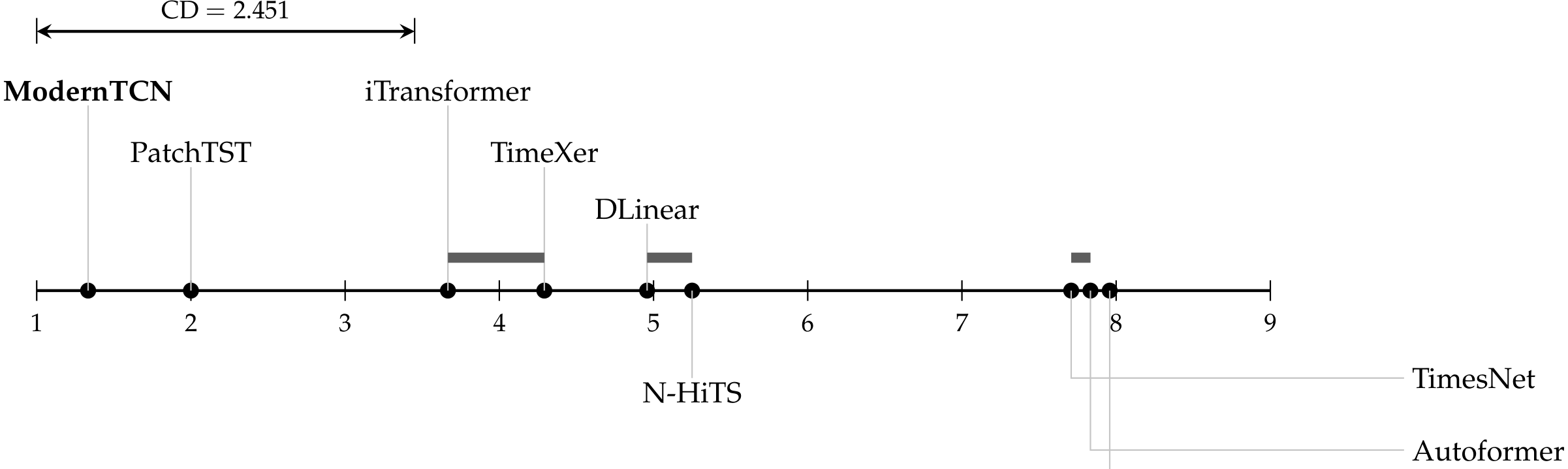

Figure 10.

Critical difference diagram for nine forecasting architectures across evaluation blocks (12 assets × 2 horizons). Models are placed at their exact mean rmse rank; lower rank is better. Thick horizontal bars connect model pairs that are not statistically distinguishable under Holm-corrected Wilcoxon tests (holm_wilcoxon.csv): iTransformer–TimeXer, DLinear–N-HiTS, and TimesNet–Autoformer. The other 33 of the 36 pairwise comparisons are significant. The bracketed interval (upper left) shows the Nemenyi critical difference for reference only.
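The Holm step-down adjustment behind the pairwise comparisons can be sketched as follows. This is a generic implementation of the standard procedure, not the study's own script; the p-values in `toy_p` are invented purely for illustration, and `holm_adjust` is a hypothetical helper name.

```python
from math import comb

def holm_adjust(p_values):
    """Holm step-down adjusted p-values, returned in the input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for position, idx in enumerate(order):
        # The i-th smallest p-value is multiplied by (m - i), capped at 1.
        candidate = min(1.0, (m - position) * p_values[idx])
        # Enforce monotonicity: adjusted values never decrease along the order.
        running_max = max(running_max, candidate)
        adjusted[idx] = running_max
    return adjusted

# Nine architectures give C(9, 2) pairwise comparisons.
n_pairs = comb(9, 2)
print(n_pairs)  # 36

# Invented raw p-values, for illustration only.
toy_p = [0.001, 0.04, 0.03, 0.20]
toy_adjusted = holm_adjust(toy_p)
print([round(p, 3) for p in toy_adjusted])
```

A pair is declared significantly different when its adjusted p-value falls below the chosen significance level.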

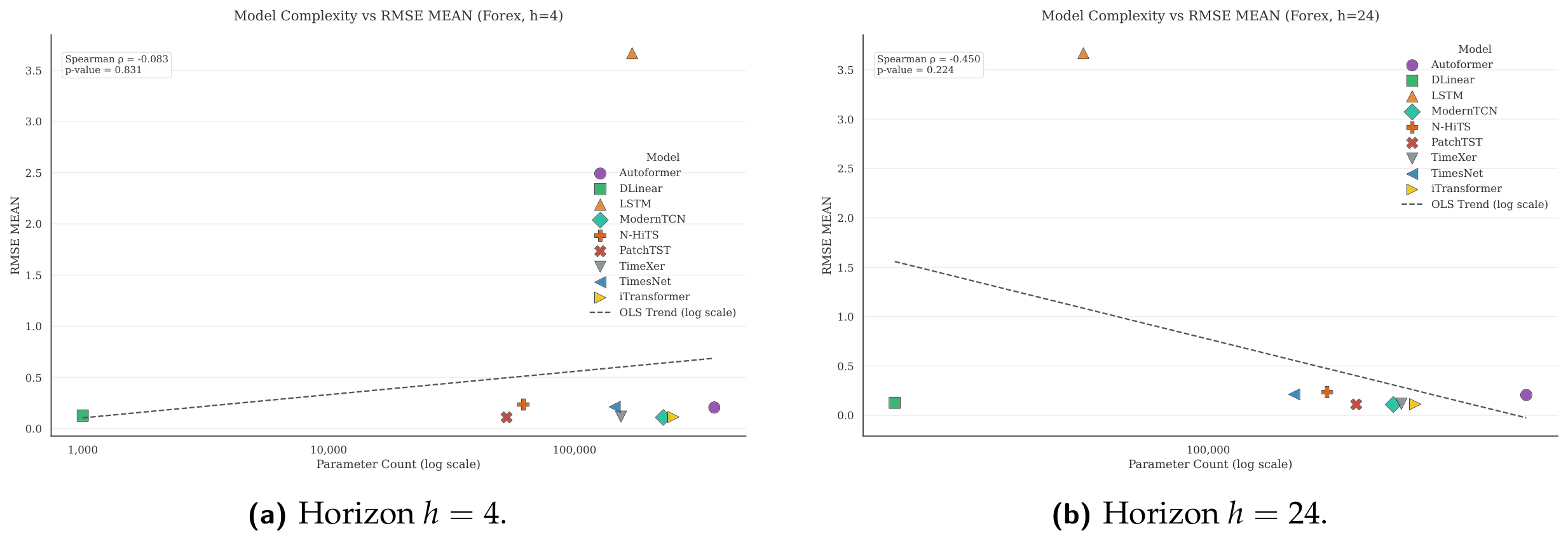

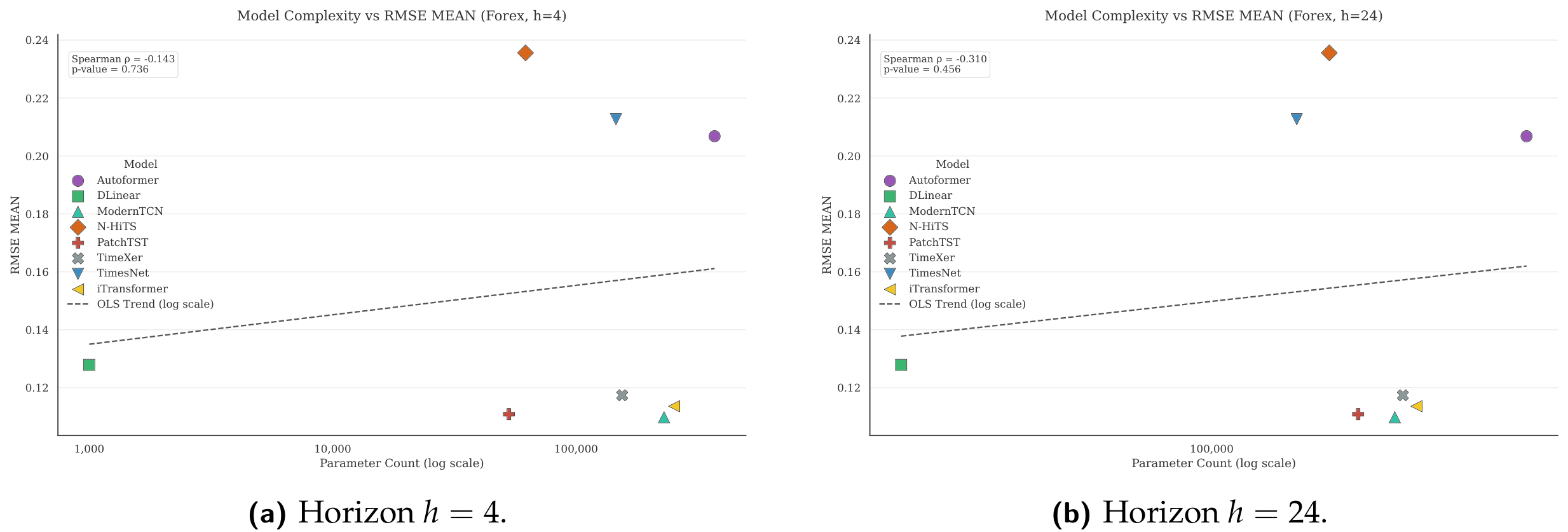

Figure 11.

Complexity–performance trade-off (excluding LSTM). The horizontal axis represents the number of trainable parameters (log scale); the vertical axis represents the mean rmse rank across all assets and seeds. The Pareto frontier is clearly defined by DLinear, PatchTST, and ModernTCN.

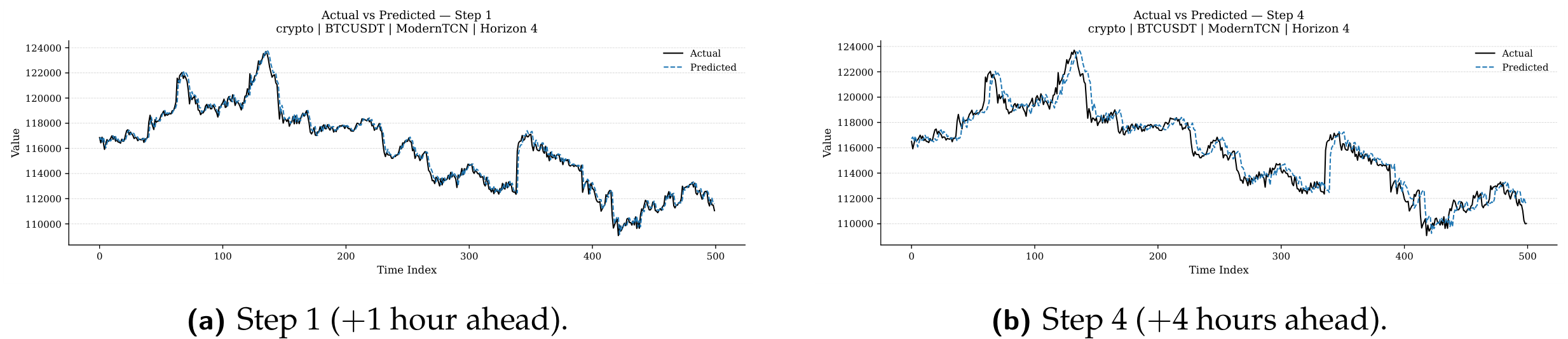

Figure 12.

Actual versus predicted close price for ModernTCN on BTC/USDT, h = 4 (seed 123). (a) At step 1, the model tracks the actual price with high fidelity, capturing directional turns and amplitude fluctuations. (b) At step 4, trend structure is preserved but short-lived volatility spikes are modestly attenuated, consistent with MSE-induced shrinkage. No systematic phase shift is observed.

Figure 13.

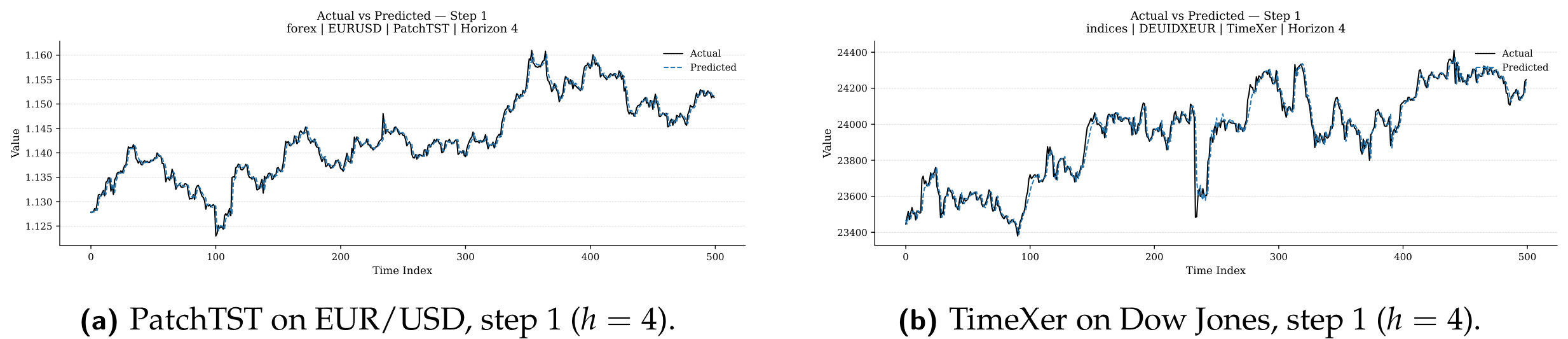

Cross-architecture, cross-asset contrast at , step 1 (seed 123). (a) PatchTST on EUR/USD (rank 2) tracks the low-amplitude, mean-reverting forex dynamics with high accuracy. (b) TimeXer on Dow Jones (rank 4) captures the directional structure but with a wider deviation band, illustrating the qualitative signature of the rank gap between top and middle tiers.

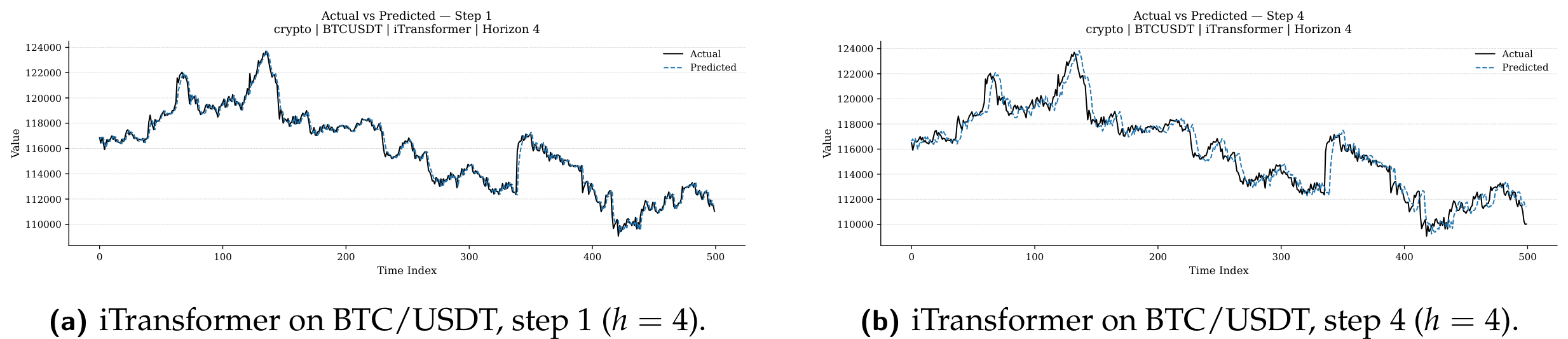

Figure 14.

iTransformer (rank 3) on BTC/USDT at h = 4 (seed 123). (a) At step 1, tracking fidelity is comparable to ModernTCN (Figure 12a). (b) At step 4, slightly more amplitude attenuation is visible relative to ModernTCN (Figure 12b), consistent with the 1.6% rmse gap. The inverted attention mechanism produces qualitatively similar but measurably weaker temporal representations for short-horizon cryptocurrency forecasting.

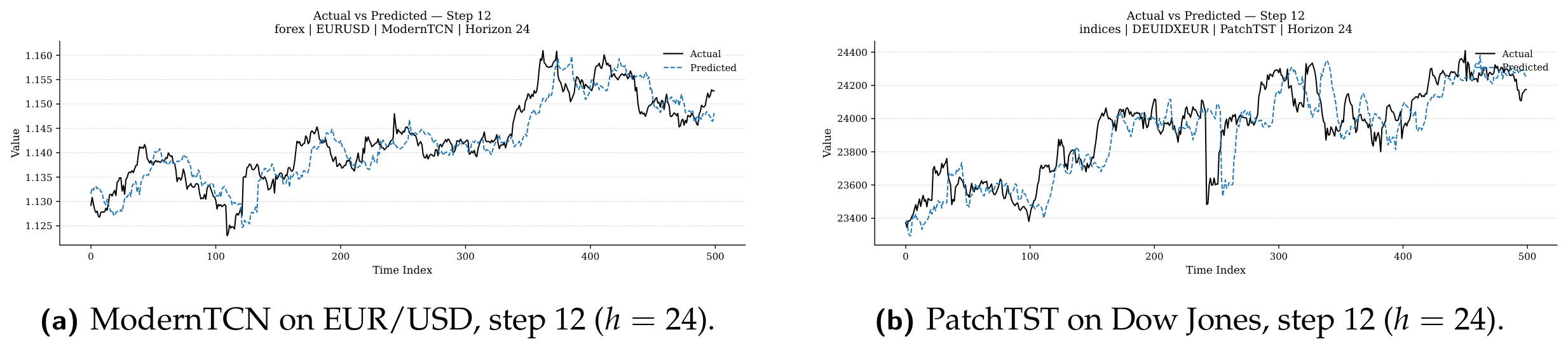

Figure 15.

Medium-horizon actual-versus-predicted overlays at step 12 of the h = 24 forecast vector (seed 123). (a) ModernTCN on EUR/USD: directional content is preserved 12 hours ahead while high-frequency amplitude is dampened. (b) PatchTST on Dow Jones: directional integrity is maintained but the forecast envelope is wider than at h = 4, corroborating the 2– rmse amplification (Table 10). Both top architectures exhibit amplitude attenuation as the primary degradation mode.

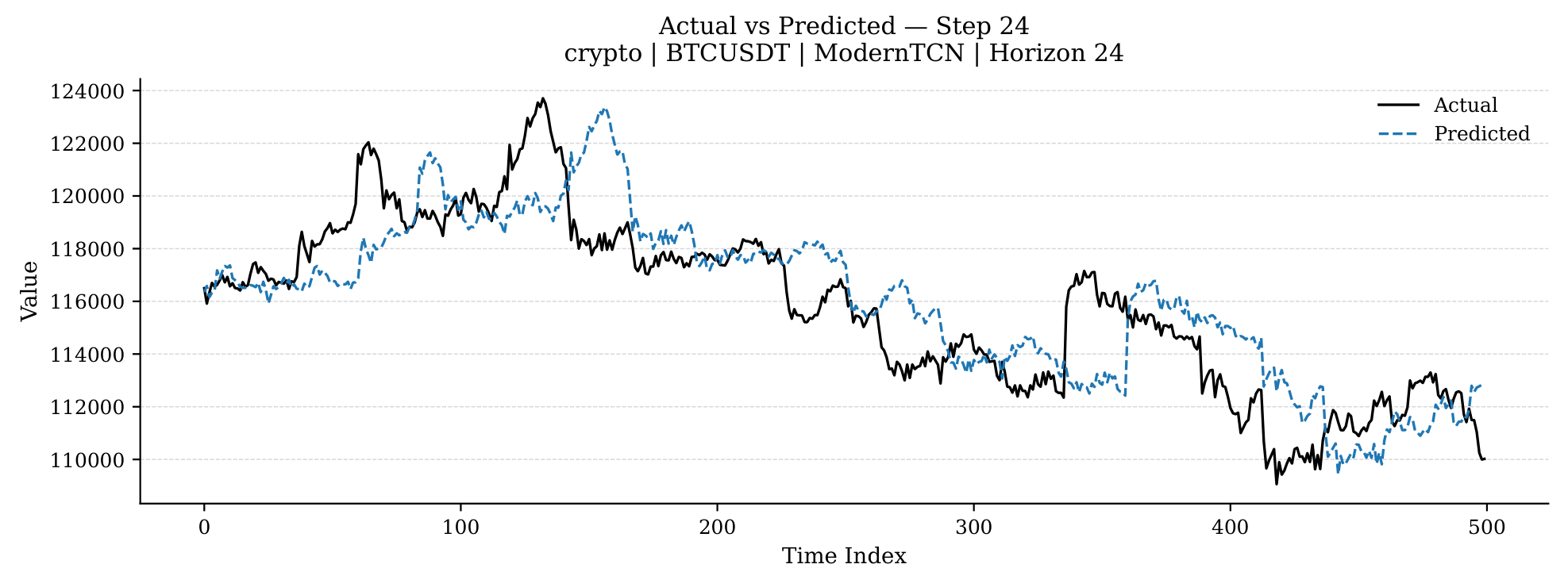

Figure 16.

Long-horizon overlay: ModernTCN on BTC/USDT, h = 24, step 24 (seed 123). The model retains directional integrity and captures low-frequency trend components, but high-amplitude intra-day reversals are under-predicted. This pattern (directional fidelity without amplitude precision) is the signature of MSE-optimised direct multi-step forecasting at the maximum horizon. The rmse at h = 24 (1,617.4) represents a 121.1% degradation relative to h = 4 (731.6; Table 10).
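The degradation figure quoted above follows directly from the two rmse values in the caption. A short check, assuming the 731.6 value belongs to the shorter horizon and the 1,617.4 value to the maximum horizon (the function name is hypothetical):

```python
def pct_degradation(rmse_short, rmse_long):
    """Relative rmse increase from the short to the long horizon, in percent."""
    return 100.0 * (rmse_long - rmse_short) / rmse_short

rmse_short_horizon = 731.6   # BTC/USDT, ModernTCN, shorter horizon (caption)
rmse_long_horizon = 1617.4   # BTC/USDT, ModernTCN, maximum horizon (caption)

degradation = pct_degradation(rmse_short_horizon, rmse_long_horizon)
print(round(degradation, 1))  # 121.1
```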

Table 1.

Summary of prior comparative studies in time-series forecasting. Columns indicate the number of models evaluated, number of datasets or asset classes, horizons tested, whether multi-seed evaluation was performed, whether post-hoc pairwise statistical tests were applied, and whether code was openly released.

| Study | Models | Datasets | Horizons | Multi-Seed | Pairwise Tests | Open Code |
|---|---|---|---|---|---|---|
| [14] (M4) | Many | 100K series | Multiple | No | No | Partial |
| [13] | RNN only | 6 | Multiple | No | No | Partial |
| [7] | 6 | 9 | 4 | No | No | Yes |
| [9] | 8 | 8 | 4 | No | No | Yes |
| [4] | 7 | 8 | 4 | No | No | Yes |
| [5] | 8 | 7 | 4 | No | No | Yes |
| Present study | 9 | 12 (3 classes) | 2 | Yes (3) | Yes | Yes |

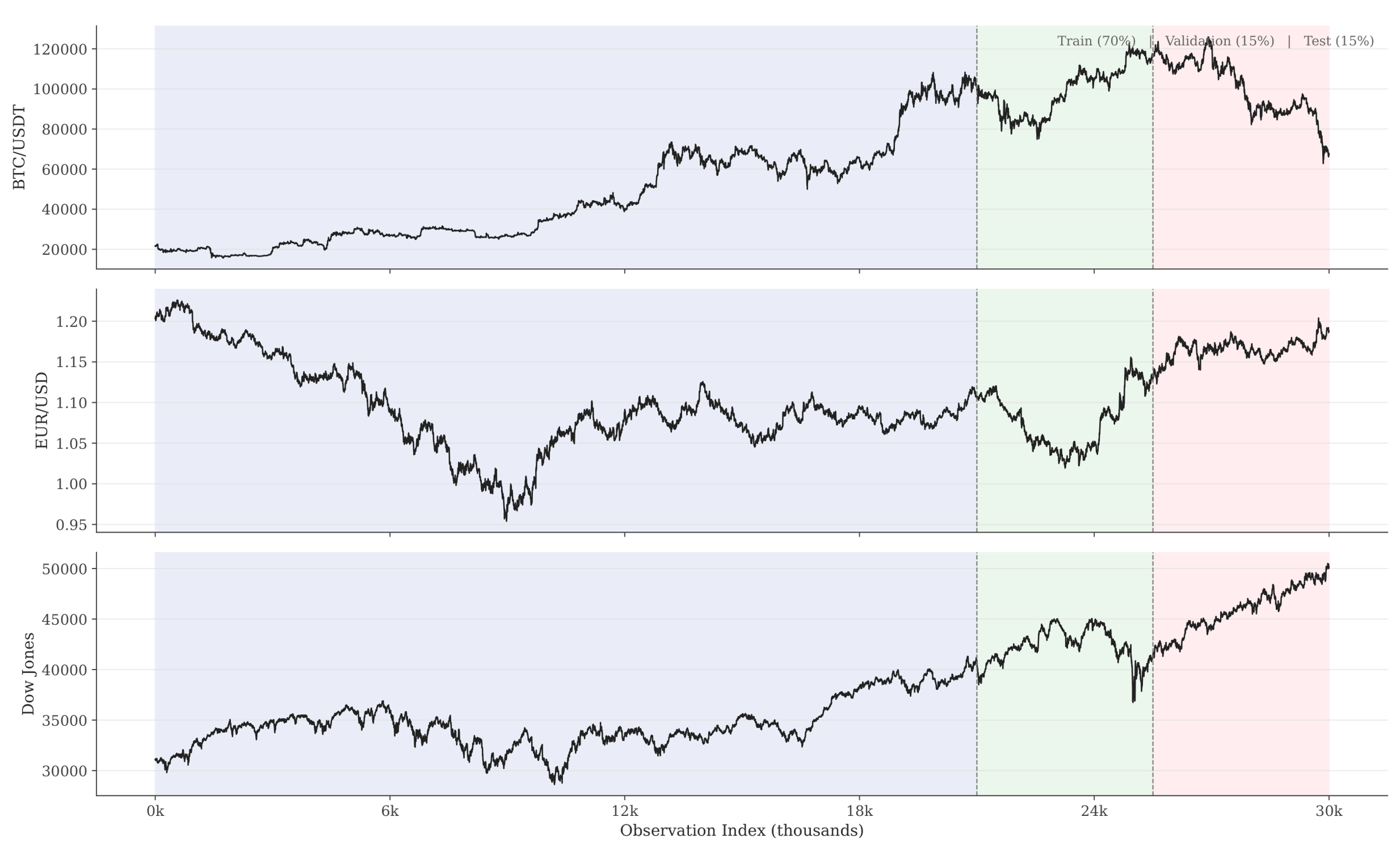

Table 2.

Dataset summary. All assets use H1 (hourly) frequency. The most recent 30,000 windowed samples are retained per (asset, horizon) pair, split chronologically into 70%/15%/15% train/val/test partitions. Window lengths: for and for . Features: OHLCV (5 channels); target: close price.

| Category | Asset | Date Range | Train | Val | Test | Total |
|---|---|---|---|---|---|---|
| Crypto | BTC/USDT | 2021-03 – 2026-02 | 21,000 | 4,500 | 4,500 | 30,000 |
| | ETH/USDT | 2021-03 – 2026-02 | 21,000 | 4,500 | 4,500 | 30,000 |
| | BNB/USDT | 2021-03 – 2026-02 | 21,000 | 4,500 | 4,500 | 30,000 |
| | ADA/USDT | 2021-05 – 2026-02 | 21,000 | 4,500 | 4,500 | 30,000 |
| Forex | EUR/USD | 2017-12 – 2026-02 | 21,000 | 4,500 | 4,500 | 30,000 |
| | USD/JPY | 2017-12 – 2026-02 | 21,000 | 4,500 | 4,500 | 30,000 |
| | GBP/USD | 2017-12 – 2026-02 | 21,000 | 4,500 | 4,500 | 30,000 |
| | AUD/USD | 2017-12 – 2026-02 | 21,000 | 4,500 | 4,500 | 30,000 |
| Indices | Dow Jones | 2019-01 – 2026-02 | 21,000 | 4,500 | 4,500 | 30,000 |
| | S&P 500 | 2019-01 – 2026-02 | 21,000 | 4,500 | 4,500 | 30,000 |
| | NASDAQ 100 | 2019-01 – 2026-02 | 21,000 | 4,500 | 4,500 | 30,000 |
| | DAX | 2019-02 – 2026-02 | 21,000 | 4,500 | 4,500 | 30,000 |
| Total per horizon | | n/a | 252,000 | 54,000 | 54,000 | 360,000 |
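The chronological 70%/15%/15% partition of the most recent 30,000 windows can be sketched as below. This is a minimal index-based illustration, not the study's data loader: the function name, its parameters, and the series length of 43,000 windows are assumptions made for the example.

```python
N_KEEP = 30_000  # most recent windowed samples retained per (asset, horizon)

def chronological_split(n_windows, n_keep=N_KEEP, train_frac=0.70, val_frac=0.15):
    """Return (train, val, test) index ranges over the most recent n_keep windows.

    Windows are assumed to be ordered oldest to newest, so slicing by index
    preserves temporal order and avoids look-ahead leakage.
    """
    start = max(0, n_windows - n_keep)
    n_used = n_windows - start
    n_train = round(n_used * train_frac)
    n_val = round(n_used * val_frac)
    train = range(start, start + n_train)
    val = range(start + n_train, start + n_train + n_val)
    test = range(start + n_train + n_val, n_windows)
    return train, val, test

# 43,000 is a made-up number of available windows for one asset.
train, val, test = chronological_split(n_windows=43_000)
print(len(train), len(val), len(test))  # 21000 4500 4500

# Twelve assets per horizon reproduce the totals in the last table row.
print(12 * len(train), 12 * len(val), 12 * len(test))  # 252000 54000 54000
```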

Table 3.

Distributional statistics of hourly log returns for all twelve assets. All series reject the Augmented Dickey–Fuller unit-root null at , confirming return stationarity.

| Cat. | Asset | () | (%) | Skew | Kurt | ACF(1) | ADF p |
|---|---|---|---|---|---|---|---|
| Crypto | BTC/USDT | | 0.783 | | | | <0.001 |
| | ETH/USDT | | 0.973 | | | | <0.001 |
| | BNB/USDT | | 1.107 | | | | <0.001 |
| | ADA/USDT | | 1.187 | | | | <0.001 |
| Forex | EUR/USD | | 0.109 | | | | <0.001 |
| | USD/JPY | | 0.119 | | | | <0.001 |
| | GBP/USD | | 0.115 | | | | <0.001 |
| | AUD/USD | | 0.141 | | | | <0.001 |
| Indices | Dow Jones | | 0.225 | | | | <0.001 |
| | S&P 500 | | 0.235 | | | | <0.001 |
| | NASDAQ 100 | | 0.286 | | | | <0.001 |
| | DAX | | 0.262 | | | | <0.001 |

Table 4.

Summary of the nine deep learning architectures evaluated. All models receive OHLCV input of shape and produce direct multi-step forecasts of shape . Family abbreviations: RNN = recurrent, MLP = multi-layer perceptron, TCN = temporal convolutional network, TF = Transformer.

| Model | Family | Key Mechanism | Reference |
|---|---|---|---|
| Autoformer | TF | Auto-correlation & series decomposition | [3] |
| DLinear | MLP | Linear layers with trend–seasonal decomposition | [7] |
| iTransformer | TF | Inverted attention on variate tokens | [5] |
| LSTM | RNN | Gated recurrent cells + dense MLP projection head | [21] |
| ModernTCN | TCN | Large-kernel depthwise convolutions with patching | [8] |
| N-HiTS | MLP | Hierarchical interpolation with multi-rate pooling | [10] |
| PatchTST | TF | Channel-independent patch-based Transformer | [4] |
| TimesNet | TCN | 2D temporal variation via FFT + Inception blocks | [9] |
| TimeXer | TF | Exogenous-variable-aware cross-attention | [6] |

Table 5.

Hyperparameter search spaces per model. All models share learning rate (log-uniform) and batch size . Only architecture-specific parameters are shown. HPO uses Optuna TPE with 5 trials per (model × horizon × asset class) on representative assets (BTC/USDT, EUR/USD, Dow Jones) only.

| Model | Hyperparameter | Range / Choices |
|---|---|---|
| Autoformer | Model dimension | |
| | Attention heads | |
| | Enc./dec. layers | each |
| | Feedforward dimension | |
| | Dropout rate | , step |
| DLinear | Moving-average kernel | , step 2 |
| | Per-channel mapping | |
| iTransformer | Model dimension | |
| | Feedforward dimension | |
| | Encoder layers | |
| | Attention heads | |
| LSTM | Hidden state size | |
| | Recurrent layers | |
| | Projection head width | |
| | Bidirectional | |
| ModernTCN | Patch size | |
| | Channel dimensions | |
| | RevIN normalisation | |
| | Dropout / head dropout | , step |
| N-HiTS | Number of blocks | |
| | Hidden layer width | |
| | Layers per block | |
| | Pooling kernel sizes | |
| PatchTST | Model dimension | |
| | Encoder layers | |
| | Patch length | |
| | Stride | |
| TimesNet | Model dimension | |
| | Feedforward dimension | |
| | Encoder layers | |
| TimeXer | Model dimension | |
| | Feedforward dimension | |
| | Encoder layers | |
| | Attention heads | |

Table 6.

Frozen best hyperparameters selected via Optuna TPE (5 trials, seed 42, objective: minimum validation mse). Only key architecture-specific parameters are shown; all configurations also include learning rate and batch size. Shared entries across categories indicate that the representative-asset optimum was identical.

| Model | Category | h | Key Parameters |
|---|---|---|---|
| Autoformer | Crypto/Forex | 4/24 | , heads, enc, dec, , drop |
| | Indices | 4/24 | , heads, enc, dec, , drop |
| DLinear | All | 4 | kernel, individual |
| | Crypto/Forex | 24 | kernel, individual |
| iTransformer | Crypto | 24 | , , layers, heads, drop |
| | Other | 4 | , , layers, heads, drop |
| LSTM | All | 4 | hidden, layers, mlp, bidir, drop |
| | All | 24 | hidden, layers, mlp, bidir, drop |
| ModernTCN | Crypto | 24 | patch, dims, RevIN, drop, head-drop |
| | Other | 4/24 | patch, dims, RevIN+affine, drop, head-drop |
| N-HiTS | Crypto | 4 | blocks, hidden, layers, pool, drop |
| | Crypto | 24 | blocks, hidden, layers, pool, drop |
| PatchTST | Crypto | 4 | , layers, patch, stride, drop |
| | Other | 24 | , layers, patch, stride, drop |
| TimesNet | All | 4/24 | , , layers, top-, drop |
| TimeXer | Crypto | 4 | , , layers, heads, drop |
| | Other | 4/24 | , , layers, heads, drop |

Table 7.

Global model ranking aggregated across all 12 assets and both horizons (h = 4 and h = 24), categorised by architectural family. Each (asset, horizon) pair contributes one rank based on mean rmse over three seeds. Mean and median ranks are computed over 24 evaluation slots. Win Count indicates the number of slots in which a model achieved rank 1. Bold marks the best value per column.

| Model | Family | Mean Rank | Median Rank | Wins (of 24) |
|---|---|---|---|---|
| ModernTCN | CNN | **1.3330** | **1.0000** | **18 (75.0%)** |
| PatchTST | Transformer | 2.0000 | 2.0000 | 3 (12.5%) |
| iTransformer | Transformer | 3.6670 | 3.0000 | 0 |
| TimeXer | Transformer | 4.2920 | 4.0000 | 0 |
| DLinear | MLP / Linear | 4.9580 | 5.0000 | 0 |
| N-HiTS | MLP / Linear | 5.2500 | 6.0000 | 3 (12.5%) |
| TimesNet | CNN | 7.7080 | 8.0000 | 0 |
| Autoformer | Transformer | 7.8330 | 8.0000 | 0 |
| LSTM | RNN | 7.9580 | 9.0000 | 0 |
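The rank aggregation summarised in Table 7 can be sketched as follows. The rmse values in `toy_slots` are invented for three placeholder models, and the helper names are hypothetical; ties are not handled, on the assumption that mean rmse values rarely coincide exactly. The final check uses the mean ranks reported in the table: with nine models and no ties, they should sum to 1 + 2 + ... + 9 = 45.

```python
from statistics import mean, median

def rank_slot(rmse_by_model):
    """Rank models within one (asset, horizon) slot; rank 1 = lowest rmse."""
    ordered = sorted(rmse_by_model, key=rmse_by_model.get)
    return {model: position for position, model in enumerate(ordered, start=1)}

def leaderboard(slots):
    """Aggregate per-slot ranks into mean rank, median rank, and win count."""
    ranks_per_model = {}
    for rmse_by_model in slots.values():
        for model, rank in rank_slot(rmse_by_model).items():
            ranks_per_model.setdefault(model, []).append(rank)
    return {
        model: {"mean_rank": mean(r), "median_rank": median(r), "wins": r.count(1)}
        for model, r in ranks_per_model.items()
    }

# Invented mean-rmse values for three placeholder models over four slots.
toy_slots = {
    ("asset_1", 4): {"A": 1.0, "B": 1.2, "C": 2.0},
    ("asset_1", 24): {"A": 2.5, "B": 2.1, "C": 3.0},
    ("asset_2", 4): {"A": 0.5, "B": 0.7, "C": 0.6},
    ("asset_2", 24): {"A": 0.9, "B": 1.1, "C": 1.0},
}
board = leaderboard(toy_slots)
print(board["A"])  # {'mean_rank': 1.25, 'median_rank': 1.0, 'wins': 3}

# Consistency check on the mean ranks reported in Table 7.
table7_mean_ranks = [1.333, 2.000, 3.667, 4.292, 4.958, 5.250, 7.708, 7.833, 7.958]
rank_sum = sum(table7_mean_ranks)
print(round(rank_sum, 3))  # 44.999, i.e. 45 up to rounding
```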

Table 8.

Best-performing model per asset and horizon, determined by lowest mean rmse across three seeds. ModernTCN achieves the lowest rmse on 18 of 24 evaluation points (75%); N-HiTS wins on 3 points (all in cryptocurrency); PatchTST wins on 3 points (two crypto, one forex). Bold highlights non-ModernTCN winners, revealing niche asset-specific advantages.

| Category | Asset | Best at h = 4 | Best at h = 24 |
|---|---|---|---|
| Crypto | BTC/USDT | **PatchTST** | ModernTCN |
| | ETH/USDT | **N-HiTS** | **N-HiTS** |
| | BNB/USDT | ModernTCN | ModernTCN |
| | ADA/USDT | **N-HiTS** | **PatchTST** |
| Forex | EUR/USD | ModernTCN | ModernTCN |
| | USD/JPY | ModernTCN | ModernTCN |
| | GBP/USD | **PatchTST** | ModernTCN |
| | AUD/USD | ModernTCN | ModernTCN |
| Indices | DAX | ModernTCN | ModernTCN |
| | Dow Jones | ModernTCN | ModernTCN |
| | S&P 500 | ModernTCN | ModernTCN |
| | NASDAQ 100 | ModernTCN | ModernTCN |
| Win count summary | | ModernTCN: 18, N-HiTS: 3, PatchTST: 3 | |

Table 9.

Category-level mean rmse and mae averaged across all assets within each category and across both horizons (h = 4 and h = 24). Values are aggregated over 4 assets × 2 horizons × 3 seeds. Bold marks the best (lowest) value per category and metric. LSTM is included for completeness but excluded from ranking discussions due to convergence failures.

| Model | Crypto rmse | Crypto mae | Forex rmse | Forex mae | Indices rmse | Indices mae |
|---|---|---|---|---|---|---|
| Autoformer | 532.21 | 393.83 | 0.2068 | 0.1551 | 179.66 | 136.24 |
| DLinear | 323.48 | 220.87 | 0.1279 | 0.0940 | 123.80 | 90.67 |
| iTransformer | 320.83 | 217.99 | 0.1136 | 0.0785 | 113.72 | 79.13 |
| LSTM | 2398.94 | 2041.58 | 3.6679 | 3.5858 | 1548.56 | 1478.21 |
| ModernTCN | **314.66** | **211.14** | **0.1098** | **0.0750** | **110.89** | **76.09** |
| N-HiTS | 352.91 | 255.77 | 0.2356 | 0.1977 | 135.01 | 101.04 |
| PatchTST | 314.87 | 211.70 | 0.1108 | 0.0761 | 111.71 | 77.04 |
| TimesNet | 352.92 | 249.41 | 0.2127 | 0.1596 | 172.98 | 131.61 |
| TimeXer | 318.38 | 215.24 | 0.1174 | 0.0815 | 114.09 | 79.19 |

Table 14.

Two-factor variance decomposition of forecast rmse. Raw (untransformed) and z-normalised (within each asset–horizon slot) panels are shown with and without LSTM. Seed variance is negligible () in all cases.

| Factor | Raw (%) | z-norm, all (%) | z-norm, no LSTM (%) |
|---|---|---|---|
| Model (architecture) | 99.9000 | 48.3200 | 68.3300 |
| Seed (initialisation) | 0.0100 | 0.0400 | 0.0200 |
| Residual (model×slot) | 0.0900 | 51.6400 | 31.6600 |
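One way to obtain shares of this kind is a sum-of-squares decomposition over a balanced (model, seed, slot) layout. The sketch below is an assumption about the general approach rather than the study's exact estimator: the function name, the key layout, and the four toy rmse values are all invented for illustration.

```python
from collections import defaultdict
from statistics import fmean

def variance_shares(values):
    """Percentage of total sum of squares attributed to model, seed, residual.

    `values` maps (model, seed, slot) -> rmse; a balanced design is assumed,
    so the main-effect sums of squares can be computed from group means.
    """
    grand_mean = fmean(values.values())
    ss_total = sum((v - grand_mean) ** 2 for v in values.values())

    def main_effect_ss(key_position):
        groups = defaultdict(list)
        for key, v in values.items():
            groups[key[key_position]].append(v)
        return sum(len(g) * (fmean(g) - grand_mean) ** 2 for g in groups.values())

    ss_model = main_effect_ss(0)
    ss_seed = main_effect_ss(1)
    ss_residual = ss_total - ss_model - ss_seed
    return {
        "model": 100.0 * ss_model / ss_total,
        "seed": 100.0 * ss_seed / ss_total,
        "residual": 100.0 * ss_residual / ss_total,
    }

# Invented values: a large gap between models, a tiny seed effect.
toy_values = {
    ("A", 123, "slot_1"): 0.99, ("A", 456, "slot_1"): 1.01,
    ("B", 123, "slot_1"): 2.99, ("B", 456, "slot_1"): 3.01,
}
shares = variance_shares(toy_values)
print({name: round(pct, 2) for name, pct in shares.items()})
```

For the z-normalised panels, each (asset, horizon) slot would first be standardised across models and seeds before the same function is applied, which removes price-scale differences between assets.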

Table 15.

Mean directional accuracy (da, %) per model, asset class, and horizon, averaged across assets within each category and over three seeds. Values close to 50% indicate no systematic directional bias.

| Model | Crypto h = 4 | Crypto h = 24 | Forex h = 4 | Forex h = 24 | Indices h = 4 | Indices h = 24 |
|---|---|---|---|---|---|---|
| Autoformer | 50.41 | 49.96 | 50.06 | 50.11 | 50.01 | 49.98 |
| DLinear | 49.70 | 49.94 | 50.13 | 50.12 | 49.97 | 49.96 |
| iTransformer | 49.58 | 50.22 | 50.04 | 49.99 | 49.90 | 50.18 |
| LSTM | 49.73 | 50.07 | 50.08 | 49.95 | 49.88 | 49.95 |
| ModernTCN | 50.04 | 49.96 | 49.96 | 50.03 | 50.34 | 50.03 |
| N-HiTS | 50.05 | 49.87 | 50.02 | 50.03 | 50.78 | 49.92 |
| PatchTST | 50.07 | 49.85 | 50.10 | 49.98 | 50.42 | 49.95 |
| TimesNet | 50.57 | 50.34 | 50.05 | 49.88 | 50.27 | 50.18 |
| TimeXer | 49.86 | 49.94 | 49.98 | 50.08 | 50.92 | 50.20 |

Table 16.

Statistical significance tests — Panel A: Friedman-Iman-Davenport omnibus tests. : Friedman statistic (8 df); F: Iman-Davenport F-statistic; n: number of blocks (evaluation points). All tests reject at .

| Scope | Friedman statistic (8 df) | F | df | p | n |
|---|---|---|---|---|---|
| Global (all 12 assets, both horizons) | 156.49 | 101.36 | (8,184) | | 24 |
| Crypto (4 assets, both horizons) | 49.23 | 23.34 | (8,56) | | 8 |
| Forex (4 assets, both horizons) | 56.00 | 49.00 | (8,56) | | 8 |
| Indices (4 assets, both horizons) | 60.40 | 117.44 | (8,56) | | 8 |
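The F column can be recovered from the Friedman statistic with the standard Iman-Davenport correction, F = (n − 1)χ² / (n(k − 1) − χ²), with (k − 1, (k − 1)(n − 1)) degrees of freedom for k models and n blocks. The sketch below applies that textbook formula to the tabulated statistics; the function name is hypothetical, and small discrepancies in the second decimal are expected because the inputs are already rounded.

```python
def iman_davenport(chi2_friedman, n_blocks, k_models):
    """Iman-Davenport F statistic and its degrees of freedom."""
    denominator = n_blocks * (k_models - 1) - chi2_friedman
    f_stat = (n_blocks - 1) * chi2_friedman / denominator
    dof = (k_models - 1, (k_models - 1) * (n_blocks - 1))
    return f_stat, dof

K_MODELS = 9
# (Friedman statistic, number of blocks) per scope, copied from Table 16.
scopes = {
    "Global": (156.49, 24),
    "Crypto": (49.23, 8),
    "Forex": (56.00, 8),
    "Indices": (60.40, 8),
}
results = {
    scope: iman_davenport(chi2, n, K_MODELS) for scope, (chi2, n) in scopes.items()
}
for scope, (f_stat, dof) in results.items():
    print(f"{scope}: F = {f_stat:.2f}, df = {dof}")
```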

Table 17.

Statistical significance tests, Panel B: Spearman rank correlations between model rankings at h = 4 and h = 24, plus Stouffer combined test. Rankings are based on mean rmse across three seeds for each of the nine architectures (n = 9 per asset). All 12 per-asset correlations are significant at the 5% level (largest p = 0.0424).

| Category | Asset | Spearman correlation | p-value |
|---|---|---|---|
| Crypto | ADA/USDT | 0.68 | 0.0424 |
| Crypto | BNB/USDT | 0.92 | 0.0005 |
| Crypto | BTC/USDT | 0.92 | 0.0005 |
| Crypto | ETH/USDT | 0.78 | 0.0125 |
| Forex | AUD/USD | 0.87 | 0.0025 |
| Forex | EUR/USD | 1.00 | |
| Forex | GBP/USD | 0.80 | 0.0096 |
| Forex | USD/JPY | 0.95 | 0.0001 |
| Indices | DAX | 0.88 | 0.0016 |
| Indices | Dow Jones | 0.87 | 0.0025 |
| Indices | S&P 500 | 0.97 | |
| Indices | NASDAQ 100 | 0.98 | |
| | Stouffer combined (12 assets) | 6.17 | |
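A minimal sketch of the two ingredients in this panel is given below: the Spearman coefficient for untied rank vectors, and the unweighted Stouffer combination of one-sided p-values. Whether the study used one-sided or two-sided p-values, or weights, is not stated here, so the sketch does not attempt to reproduce the combined statistic in the table; the rank vectors and p-values are invented.

```python
from math import sqrt
from statistics import NormalDist

def spearman_rho(ranks_a, ranks_b):
    """Spearman correlation for two rank vectors without ties."""
    n = len(ranks_a)
    d_squared = sum((a - b) ** 2 for a, b in zip(ranks_a, ranks_b))
    return 1.0 - 6.0 * d_squared / (n * (n * n - 1))

def stouffer_z(p_values):
    """Unweighted Stouffer combination: Z = sum(z_i) / sqrt(k),
    where z_i is the upper-tail normal quantile of the one-sided p_i."""
    inverse_cdf = NormalDist().inv_cdf
    z_scores = [inverse_cdf(1.0 - p) for p in p_values]
    return sum(z_scores) / sqrt(len(z_scores))

# Invented rankings of nine models at two horizons (two adjacent swaps).
ranks_short = [1, 2, 3, 4, 5, 6, 7, 8, 9]
ranks_long = [2, 1, 3, 4, 5, 6, 7, 9, 8]
rho = spearman_rho(ranks_short, ranks_long)
print(round(rho, 4))

# Invented one-sided p-values for four hypothetical assets.
combined_z = stouffer_z([0.025, 0.025, 0.025, 0.025])
print(round(combined_z, 2))
```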

Table 18.

Statistical significance tests — Panel C: Intraclass Correlation Coefficient (icc; two-way mixed, absolute agreement) for three representative assets at . High icc values confirm negligible seed-to-seed variation relative to inter-model differences.

| Asset | icc | F-statistic | p-value |
|---|---|---|---|
| BTC/USDT | 1.00 | 1650.20 | |
| EUR/USD | 0.99 | 309.60 | |
| S&P 500 | 1.00 | 2255.60 | |

Table 19.

Statistical significance tests — Panel D: Jonckheere-Terpstra (jt) test for a monotonic relationship between model complexity (parameter count) and rmse rank. A significant positive result would indicate that more parameters reliably yield better ranks. Three complexity groups: , –, parameters.

| Category | Horizon | jt z | p-value | Monotonic? |
|---|---|---|---|---|
| Crypto | 4 | 0.35 | 0.36 | No |
| Crypto | 24 | -0.35 | 0.64 | No |
| Forex | 4 | 0.38 | 0.35 | No |
| Forex | 24 | -0.29 | 0.61 | No |
| Indices | 4 | -1.25 | 0.89 | No |
| Indices | 24 | -1.48 | 0.93 | No |
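The tabulated p-values are consistent with a one-sided (upper-tail) normal approximation applied to the standardised statistic, p = 1 − Φ(z). The check below illustrates that reading of the table; it is an observation about the reported numbers, not a statement of how the study computed them, and the function name is hypothetical.

```python
from statistics import NormalDist

def upper_tail_p(z):
    """One-sided p-value for a standard normal test statistic."""
    return 1.0 - NormalDist().cdf(z)

# (category, horizon, z, reported p) copied from Table 19.
table19 = [
    ("Crypto", 4, 0.35, 0.36),
    ("Crypto", 24, -0.35, 0.64),
    ("Forex", 4, 0.38, 0.35),
    ("Forex", 24, -0.29, 0.61),
    ("Indices", 4, -1.25, 0.89),
    ("Indices", 24, -1.48, 0.93),
]
recomputed = [round(upper_tail_p(z), 2) for _, _, z, _ in table19]
print(recomputed)  # [0.36, 0.64, 0.35, 0.61, 0.89, 0.93]
```

None of the six values falls below conventional significance thresholds, which matches the "No" entries in the final column.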