Submitted: 22 February 2026

Posted: 03 March 2026

Abstract

Keywords:

1. Introduction

1.1. The Problem: LLMs Get Lost in Conversation

1.2. Our Contribution

2. Methods

2.1. Data

2.2. Model Set

2.3. Metrics

2.4. Statistical Analysis

3. Results

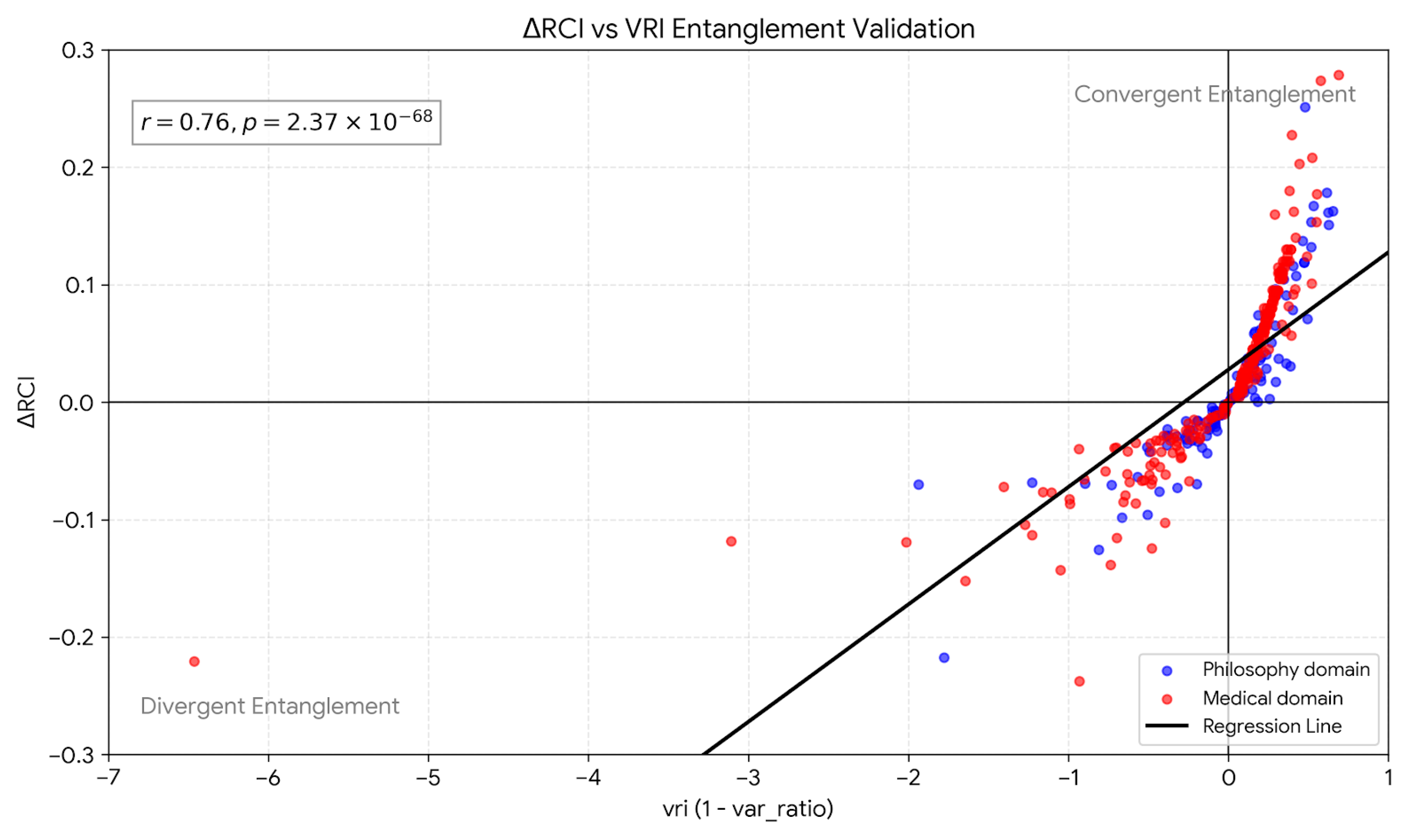

3.1. Finding 1: RCI tracks VRI (Entanglement Signal)

- Pooled correlation computed over all model-position points

- Data: 12 model-domain runs × 30 positions = 360 points

- Each point aggregates 50 independent trials per condition
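As a rough illustration of what "pooled" means here, the sketch below flattens a 12 × 30 grid of per-position values into 360 points and computes a single Pearson correlation over them. The grid values are synthetic placeholders chosen only so the example runs; they are not results from this paper. The names `rci_grid`, `vri_grid`, `pool`, and `pearson_r` are hypothetical, and the use of Pearson's r is an assumption, since the correlation statistic is not stated in this section.

```python
from math import sqrt

N_RUNS = 12        # model-domain runs (from the text)
N_POSITIONS = 30   # conversation positions per run (from the text)


def pearson_r(xs, ys):
    """Plain Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / sqrt(sxx * syy)


def pool(grid):
    """Flatten a runs-by-positions grid into one list of points."""
    return [value for row in grid for value in row]


# Synthetic placeholder grids (NOT data from the paper): one value per
# (run, position) cell, where each value would in practice be an aggregate
# over the 50 trials for that condition.
rci_grid = [[0.01 * (run + 1) + 0.002 * pos for pos in range(N_POSITIONS)]
            for run in range(N_RUNS)]
vri_grid = [[2.0 * value + 0.1 for value in row] for row in rci_grid]

rci_points = pool(rci_grid)
vri_points = pool(vri_grid)
pooled_r = pearson_r(rci_points, vri_points)

print(len(rci_points), round(pooled_r, 6))
```

Pooling treats every model-position cell as one observation, so the correlation reflects both within-run and between-run variation.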

3.2. Finding 2: Bidirectional Entanglement (Convergent vs. Divergent)

- Convergent entanglement: context narrows the response distribution, making outputs more predictable.

- Divergent entanglement: context widens the response distribution, making outputs less predictable.
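The two regimes can be sketched as a simple labelling rule on the variance ratio. This is a minimal illustration, assuming that Var_Ratio below 1 corresponds to a narrowed response distribution and above 1 to a widened one, which is consistent with the Var_Ratio and Assessment columns of the table in Section 3.5. The function name `classify_entanglement` and the `tolerance` band around 1 are hypothetical and are not thresholds taken from the paper.

```python
def classify_entanglement(var_ratio, tolerance=0.05):
    """Label a variance ratio as convergent, divergent, or neutral.

    var_ratio: variance ratio for one model at one position (must be > 0).
    tolerance: hypothetical half-width of the band around 1.0 that is
               treated as variance-neutral (an assumption, not from the paper).
    """
    if var_ratio <= 0:
        raise ValueError("var_ratio must be positive")
    if abs(var_ratio - 1.0) <= tolerance:
        return "neutral"
    return "convergent" if var_ratio < 1.0 else "divergent"


# Var_Ratio (P30) values as listed in the Section 3.5 table.
for model, ratio in [("GPT-4o", 0.58), ("Qwen3 235B", 1.02), ("Llama 4 Scout", 7.46)]:
    print(model, classify_entanglement(ratio))
```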

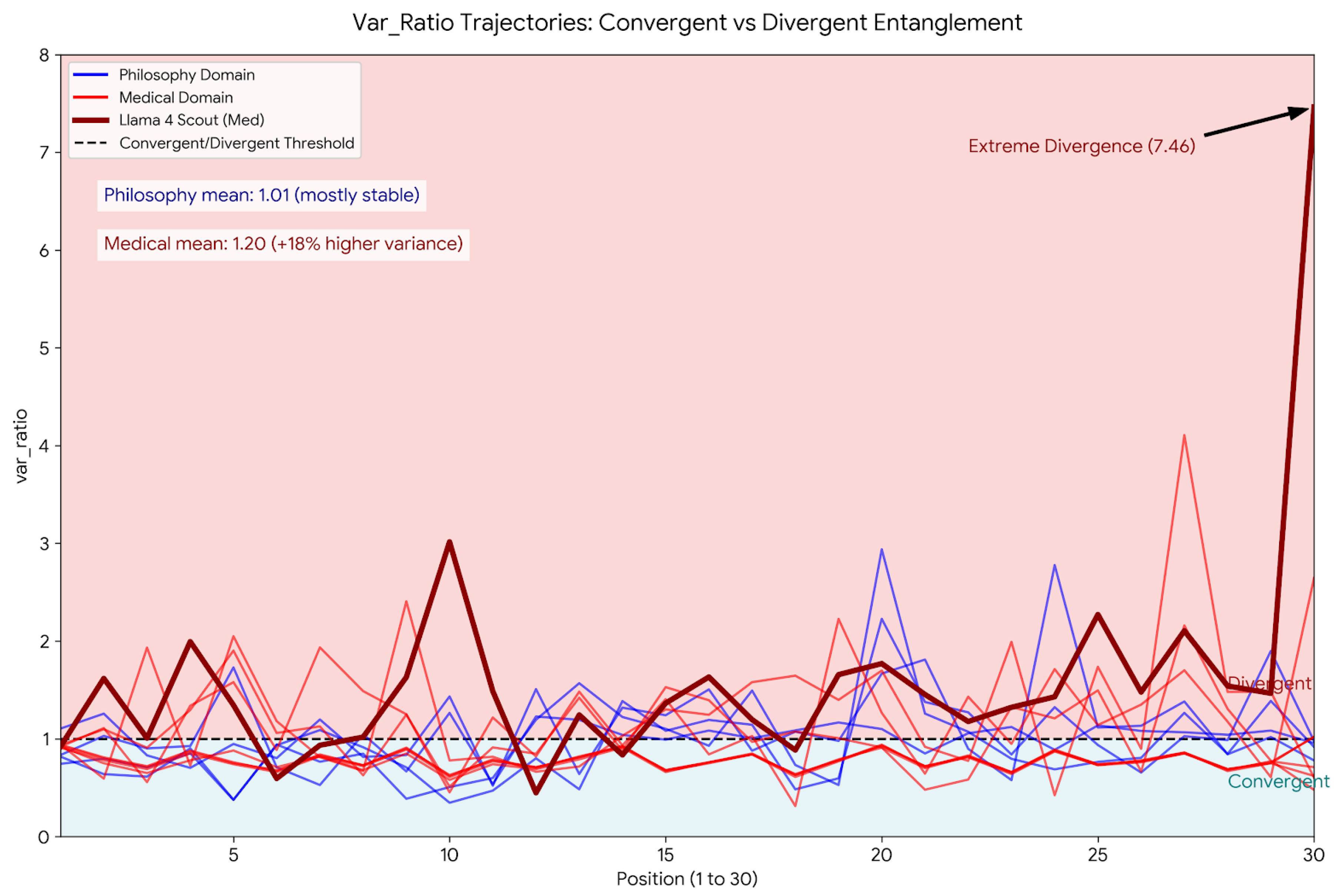

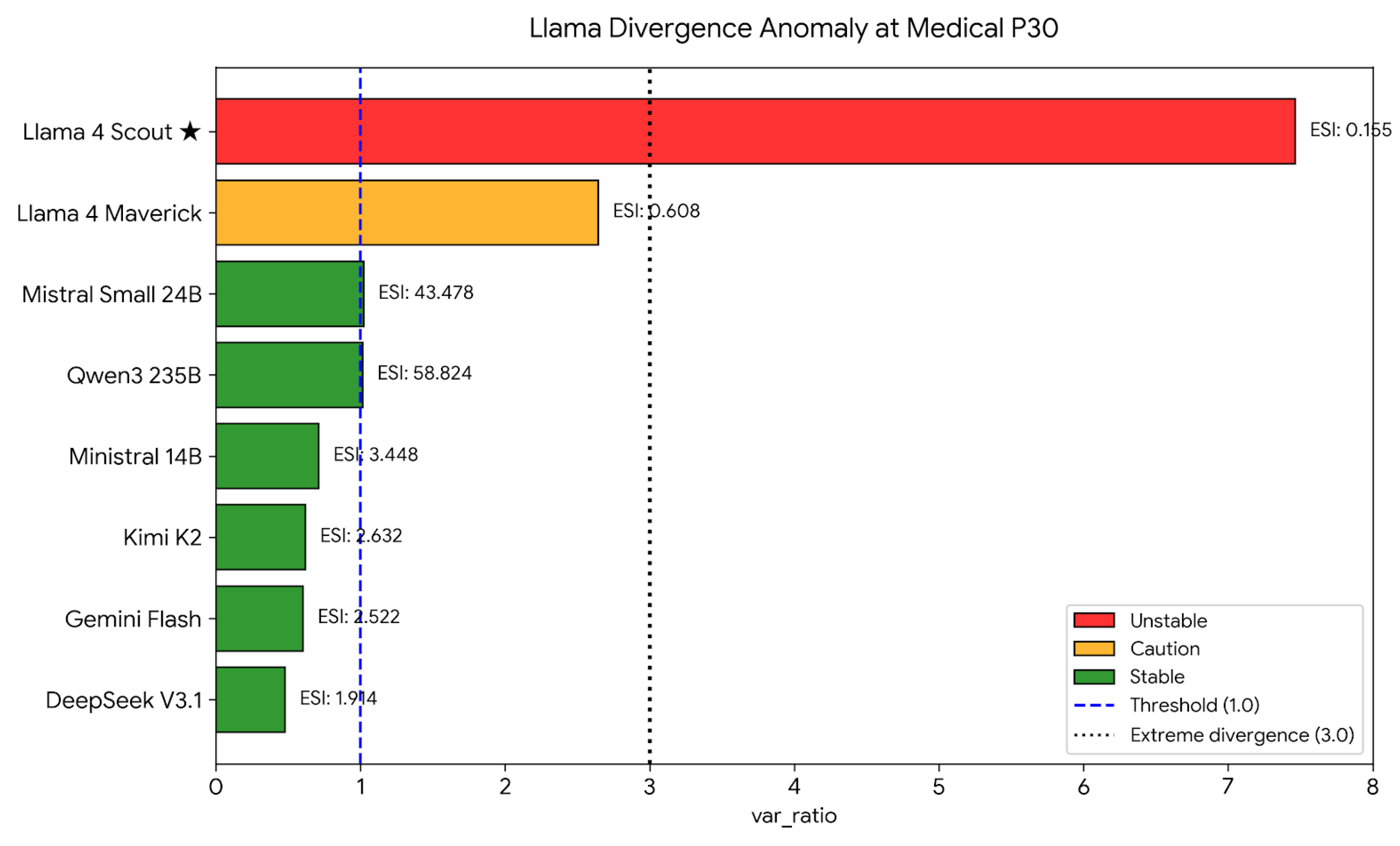

3.3. Finding 3: Llama Divergence Anomaly at Medical P30

- Llama 4 Maverick: Var_Ratio = 2.64, ESI = 0.61

- Llama 4 Scout: Var_Ratio = 7.46, ESI = 0.15

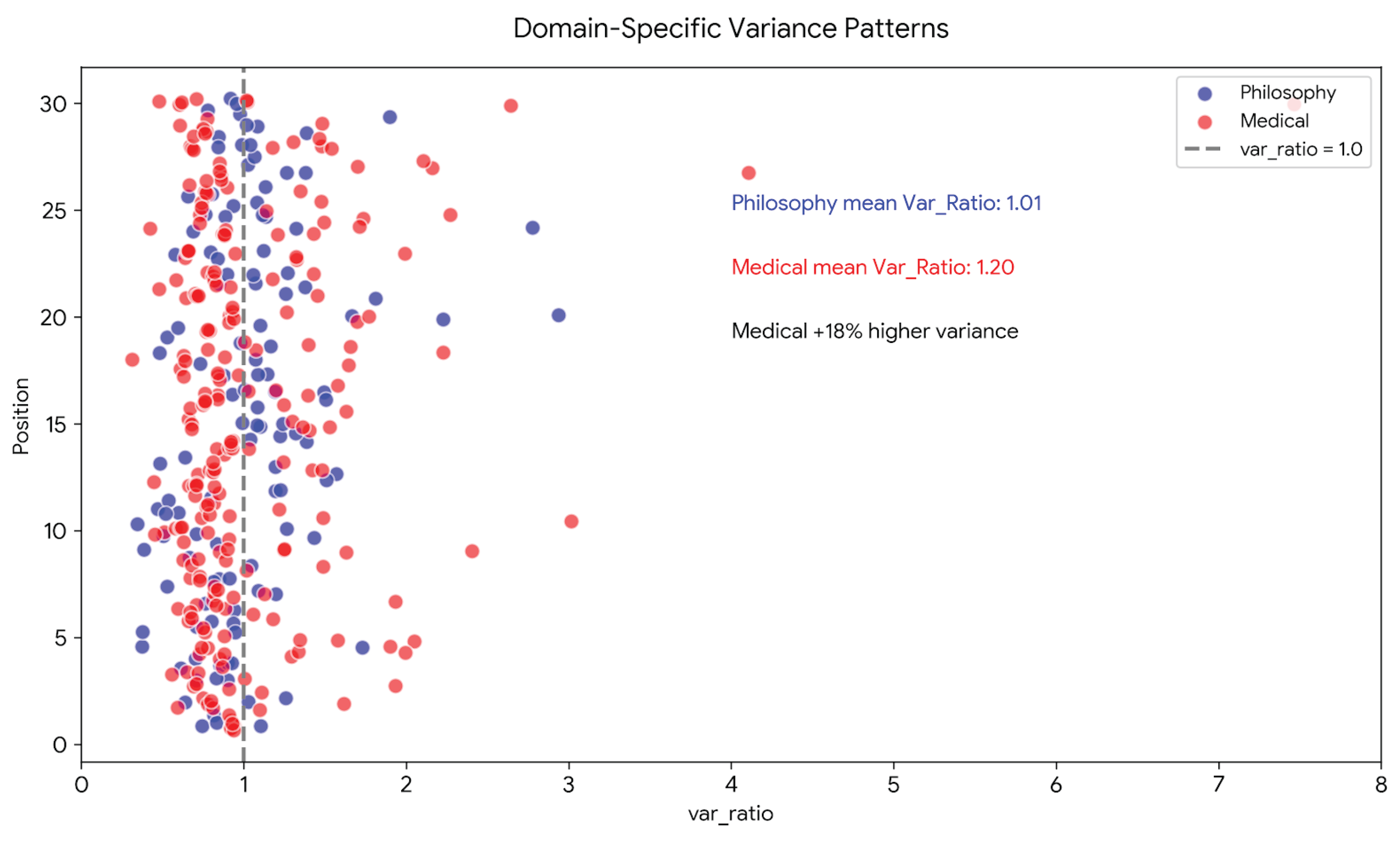

3.4. Finding 4: Domain-Specific Variance Patterns

- Philosophy: variance-neutral on average

- Medical: variance-increasing on average

3.5. Finding 5: ESI Predicts Multi-Turn Instability

| Model | Var_Ratio (P30) | ESI | Assessment |
|---|---|---|---|
| Llama 4 Scout | 7.46 | 0.15 | Unstable |
| Llama 4 Maverick | 2.64 | 0.61 | Caution |
| Qwen3 235B | 1.02 | 50.0 | Stable |
| GPT-4o | 0.58 | 2.38 | Stable |
| Claude Haiku | 0.65 | 2.86 | Stable |
| Gemini Flash | 0.60 | 2.50 | Stable |
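The tabulated values are numerically consistent with ESI = 1 / |1 − Var_Ratio|. This section does not state the formula, so the relationship below is an inference from the six rows rather than the paper's definition. The sketch recomputes ESI from each Var_Ratio and compares it with the reported value; `esi` and `TABLE_3_5` are hypothetical names.

```python
def esi(var_ratio):
    """Inferred relationship: ESI = 1 / |1 - Var_Ratio| (an assumption)."""
    gap = abs(1.0 - var_ratio)
    if gap == 0.0:
        return float("inf")  # a ratio of exactly 1 leaves the value unbounded
    return 1.0 / gap


# (Var_Ratio at P30, reported ESI) as listed in the table above.
TABLE_3_5 = {
    "Llama 4 Scout": (7.46, 0.15),
    "Llama 4 Maverick": (2.64, 0.61),
    "Qwen3 235B": (1.02, 50.0),
    "GPT-4o": (0.58, 2.38),
    "Claude Haiku": (0.65, 2.86),
    "Gemini Flash": (0.60, 2.50),
}

# Largest absolute gap between recomputed and reported ESI across all rows.
max_gap = max(abs(esi(ratio) - reported) for ratio, reported in TABLE_3_5.values())

for model, (ratio, reported) in TABLE_3_5.items():
    print(f"{model}: recomputed {esi(ratio):.2f}, reported {reported}")
```

Under this reading, ESI grows without bound as Var_Ratio approaches 1, which is why Qwen3 235B (Var_Ratio 1.02) shows a far larger ESI than the other stable models.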

3.6. Finding 6: Variance Ratio as a Practical Entanglement Surrogate

4. Discussion

4.1. Explaining the “Lost in Conversation” Phenomenon

4.2. Entanglement Reframes RCI as Predictability Modulation

4.3. Bidirectional Entanglement Resolves the Sovereign Category

4.4. Safety Implications: Predictability Is Task-Dependent

4.5. Implications for AI Evaluation

- Var_Ratio trajectories across conversation lengths

- ESI values at task-critical positions

- Domain-specific entanglement signatures

4.6. Architectural Interpretation

4.7. Limitations

4.8. Future Directions

5. Conclusions

Supplementary Materials

Data Availability Statement

Acknowledgments

References

- Laban, P.; Hayashi, H.; Zhou, Y.; Neville, J. LLMs get lost in multi-turn conversation. arXiv 2025, arXiv:2505.06120.

- Laxman, M. M. Context curves behavior: Measuring AI relational dynamics with ΔRCI. Preprints.org 2026a, 202601.1881.

- Laxman, M. M. Standardized context sensitivity benchmark across 25 LLM-domain configurations. Preprints.org 2026b, 202602.1114.

- Laxman, M. M. Domain-specific temporal dynamics of context sensitivity in large language models. Preprints.org 2026c, ID: 199272.

- Laxman, M. M. Stochastic incompleteness in LLM summarization: A predictability taxonomy for clinical AI deployment. 2026e, in preparation.

- Laxman, M. M. An empirical conservation constraint on context sensitivity and output variance: Evidence across LLM architectures. 2026f, in preparation.

- Brown, T.; Mann, B.; Ryder, N.; Subbiah, M.; et al. Language models are few-shot learners. Advances in Neural Information Processing Systems 2020, 33.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A. N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. Advances in Neural Information Processing Systems 2017, 30.

- Reimers, N.; Gurevych, I. Sentence-BERT: Sentence embeddings using Siamese BERT-networks. Proceedings of EMNLP 2019, 2019.

- Asgari, E.; et al. A framework to assess clinical safety and hallucination rates of LLMs for medical text summarisation. npj Digital Medicine 2025, 8(1), 274.

- Liu, N. F.; Lin, K.; Hewitt, J.; Paranjape, A.; Bevilacqua, M.; Petroni, F.; Liang, P. Lost in the middle: How language models use long contexts. Transactions of the Association for Computational Linguistics 2024, 12, 104–123.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).