Submitted: 22 February 2026

Posted: 03 March 2026

Abstract

Keywords:

1. Introduction

1.1. Motivation

- Log-Factorial: an additive accumulation process in log space (error-accumulating dynamics). We also include a controlled variant where the per-step increment is provided to isolate long-horizon stability from increment discovery.

- Log-Fibonacci: a recurrence whose long-run behavior becomes dominated by a stable growth mode (attractor-aligned dynamics).

- Euclidean GCD: a norm-reducing iteration with highly variable step counts and well-known worst-case chains (contracting dynamics).
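As a point of reference, the three target quantities above can be computed exactly with a few lines of Python. This is a minimal sketch for generating ground-truth values, not the authors' data pipeline. The function names, the indexing convention F_1 = F_2 = 1, and the use of natural logarithms are assumptions made for illustration.

```python
import math


def log_factorial(n: int) -> float:
    """log(n!) built by additive accumulation: sum of log(k) for k = 2..n."""
    total = 0.0
    for k in range(2, n + 1):
        total += math.log(k)  # per-step increment added in log space
    return total


def log_fibonacci(n: int) -> float:
    """log(F_n), assuming F_1 = F_2 = 1 (hypothetical indexing convention)."""
    a, b = 1, 1
    for _ in range(n - 2):
        a, b = b, a + b  # exact integer recurrence, no rounding
    return math.log(b)


def euclid_steps(a: int, b: int) -> tuple:
    """Return (gcd, number of remainder steps) for the Euclidean algorithm."""
    steps = 0
    while b:
        a, b = b, a % b  # each step shrinks the pair
        steps += 1
    return a, steps


if __name__ == "__main__":
    print(log_factorial(5))     # log(120)
    print(log_fibonacci(50))
    print(euclid_steps(48, 18))  # (6, 3)
    print(euclid_steps(89, 55))  # consecutive Fibonacci numbers: long chain
```

Two well-known properties are visible in this sketch. The successive differences `log_fibonacci(n + 1) - log_fibonacci(n)` approach the logarithm of the golden ratio, which is the stable growth mode mentioned above. Consecutive Fibonacci inputs such as (89, 55) produce the longest Euclidean chains for their size, which is the classical worst case.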

1.2. Related Work

2. Methods

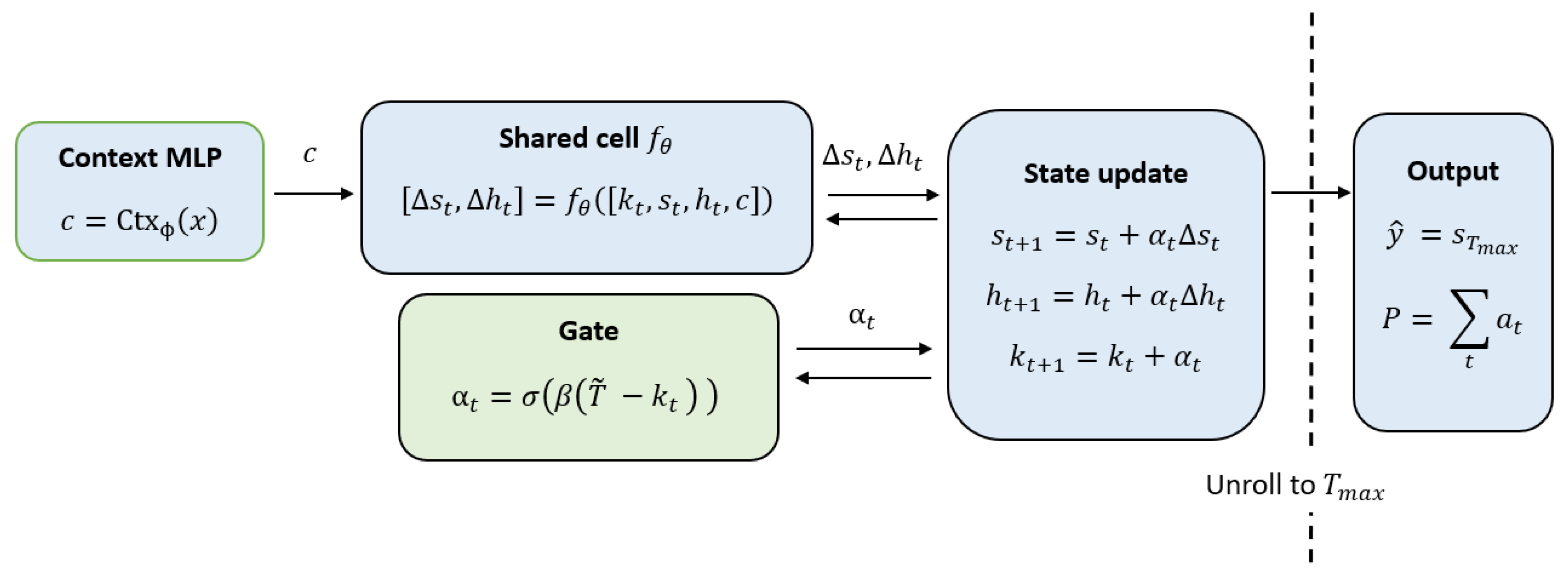

2.1. Iterative Cell Architecture

Default architecture parameters.

- Context embedding dimension:

- Recurrent hidden (scratchpad) dimension:

- Cell MLP width:

- Cell MLP depth:

2.2. Operator-Specific Stabilization

2.3. Step-Consistency Objective

2.4. Target Step Schedules

3. Dynamical Regimes of Iterative Computation

3.1. Contracting Dynamics: Euclidean GCD

3.2. Attractor-Aligned Dynamics: Log-Fibonacci

3.3. Expanding Additive Dynamics: Log-Factorial

4. Experiments

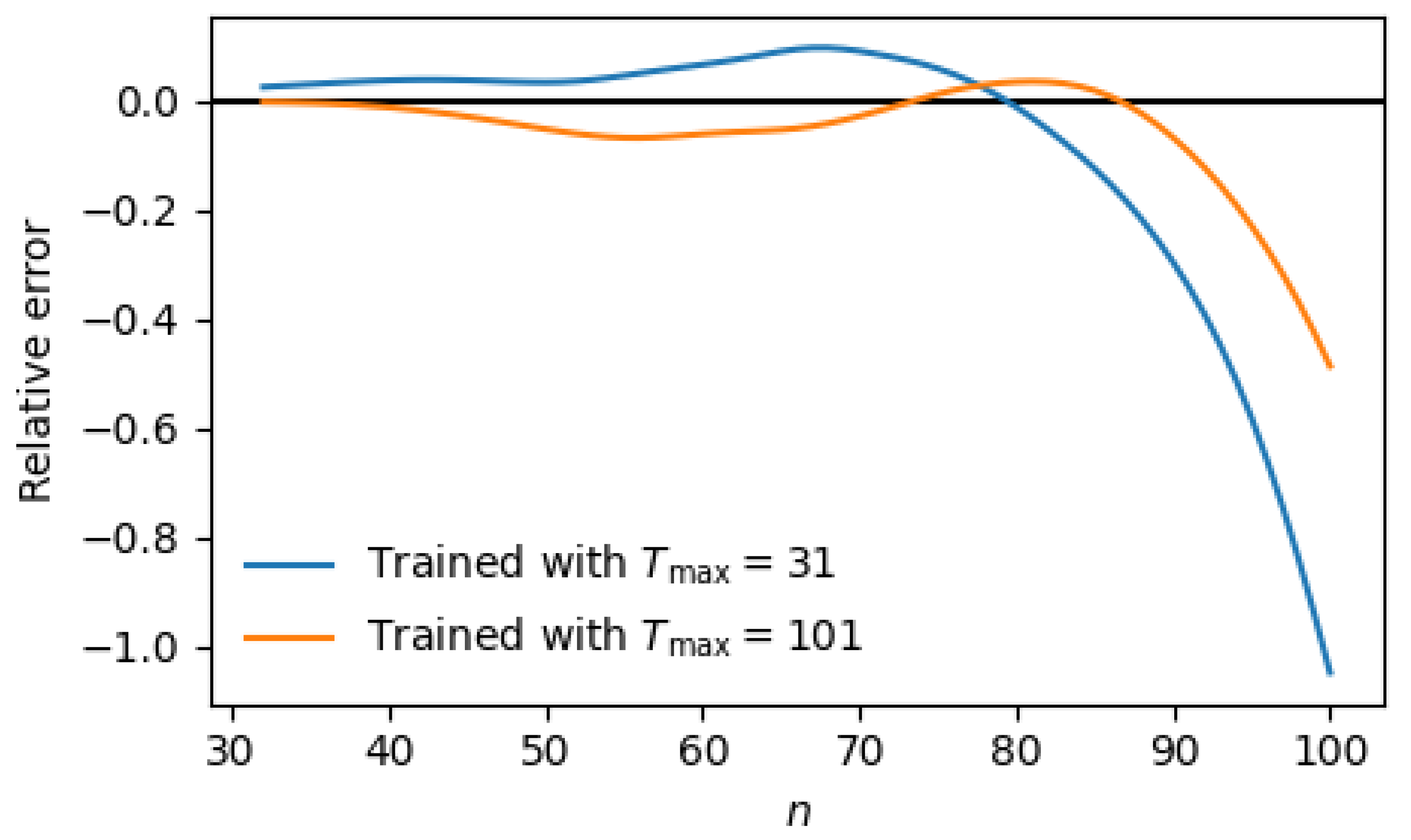

4.1. Log-Fibonacci: Attractor Generalization and Drift

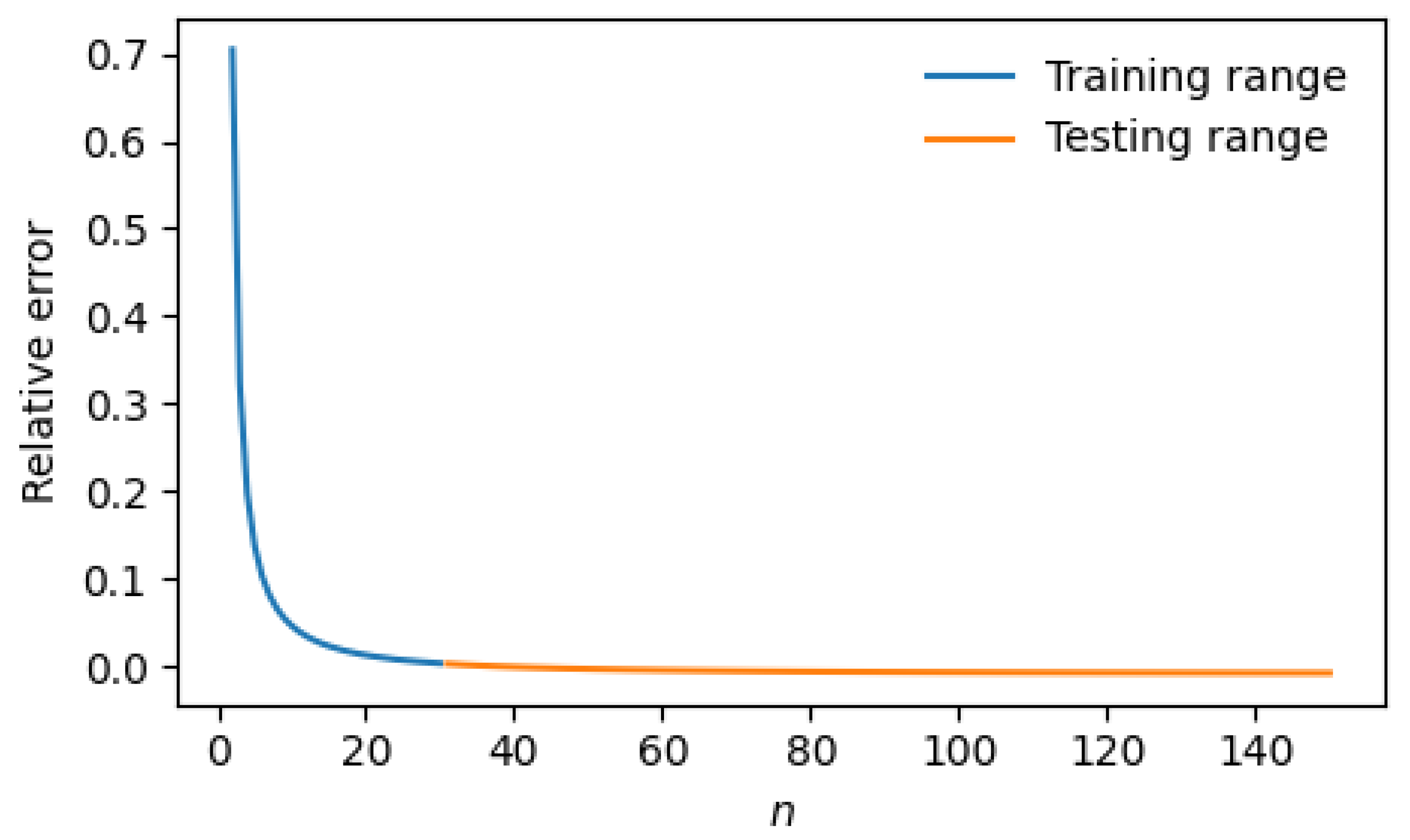

- Full-horizon training: (matches test unroll).

- Short-horizon training: (matches the training n-range).

- Full-horizon training (). Predictions remain largely stable across n, with mild oscillations and a gradual negative drift emerging late in the range (). Median: ; Mean: ; Absolute P90: .

- Short-horizon training (). Drift is stronger and appears earlier. The small-n regime exhibits larger positive oscillations, while the extreme tail () develops a more pronounced negative deviation. Median: ; Mean: ; Absolute P90: .
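The summary statistics named in the two items above (median, mean, and absolute P90) can be computed as in the following sketch. The text does not preserve which quantity is being summarised, so this assumes it is the signed error (prediction minus target) over the evaluated range of n, and that the absolute P90 is the nearest-rank 90th percentile of the absolute error. The function name `drift_summary` is hypothetical.

```python
import math
import statistics


def drift_summary(pred, target):
    """Summarise signed error (pred - target) and the P90 of its magnitude.

    Assumption: errors are signed differences; P90 uses the nearest-rank rule.
    """
    errors = [p - t for p, t in zip(pred, target)]
    if not errors:
        raise ValueError("need at least one prediction")
    abs_sorted = sorted(abs(e) for e in errors)
    # Nearest-rank percentile: smallest value with at least 90% of data at or below it.
    rank = max(1, math.ceil(0.9 * len(abs_sorted)))
    return {
        "median": statistics.median(errors),
        "mean": statistics.fmean(errors),
        "abs_p90": abs_sorted[rank - 1],
    }


if __name__ == "__main__":
    # Toy values for illustration only; these are not results from the paper.
    print(drift_summary([1.0, 2.0, 3.0, 4.0], [1.0, 2.5, 2.0, 4.0]))
```

Reporting the signed median and mean alongside the absolute P90 separates systematic drift (a nonzero signed average) from the spread of the error magnitude.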

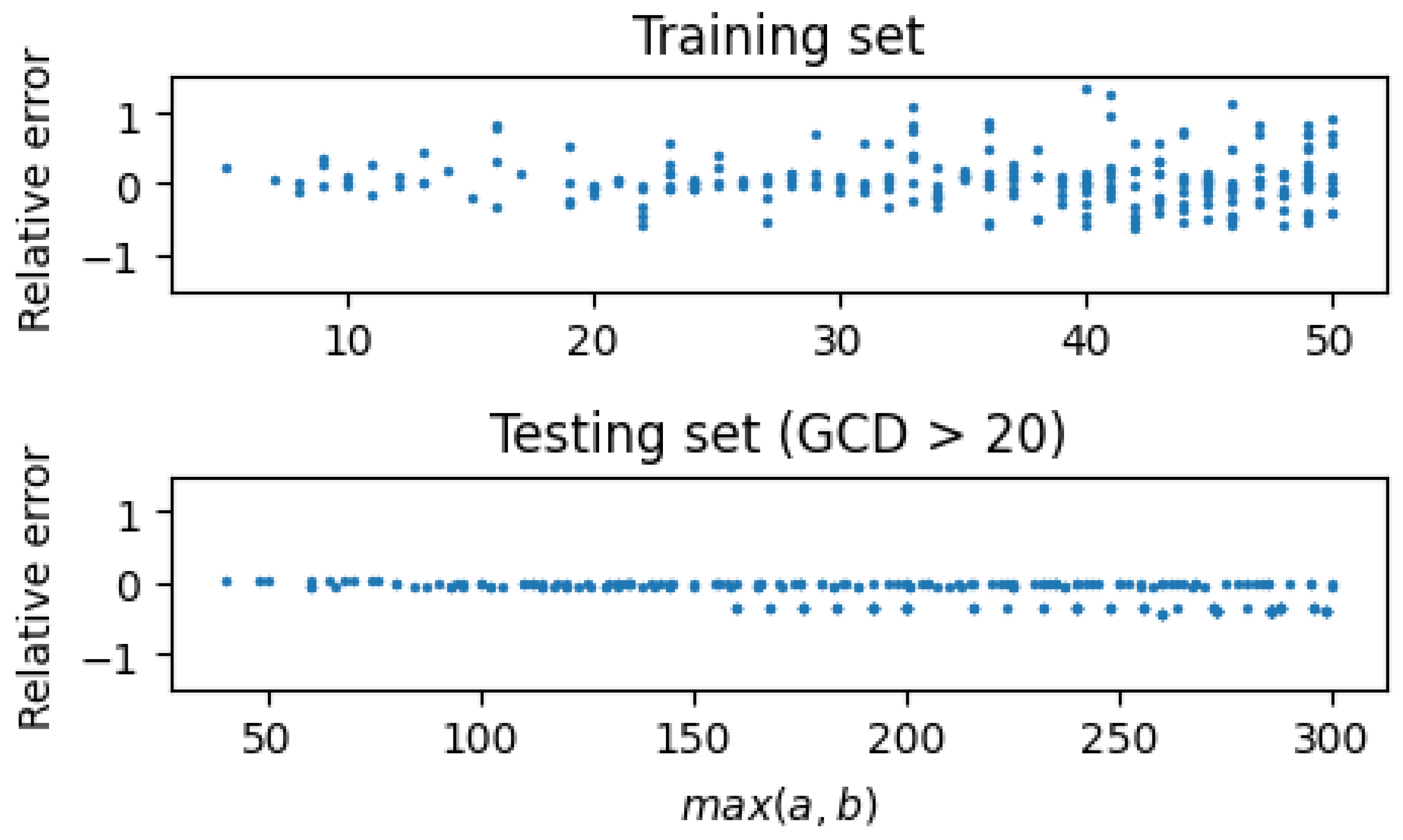

4.2. GCD: Input Magnitude vs. Step Extrapolation

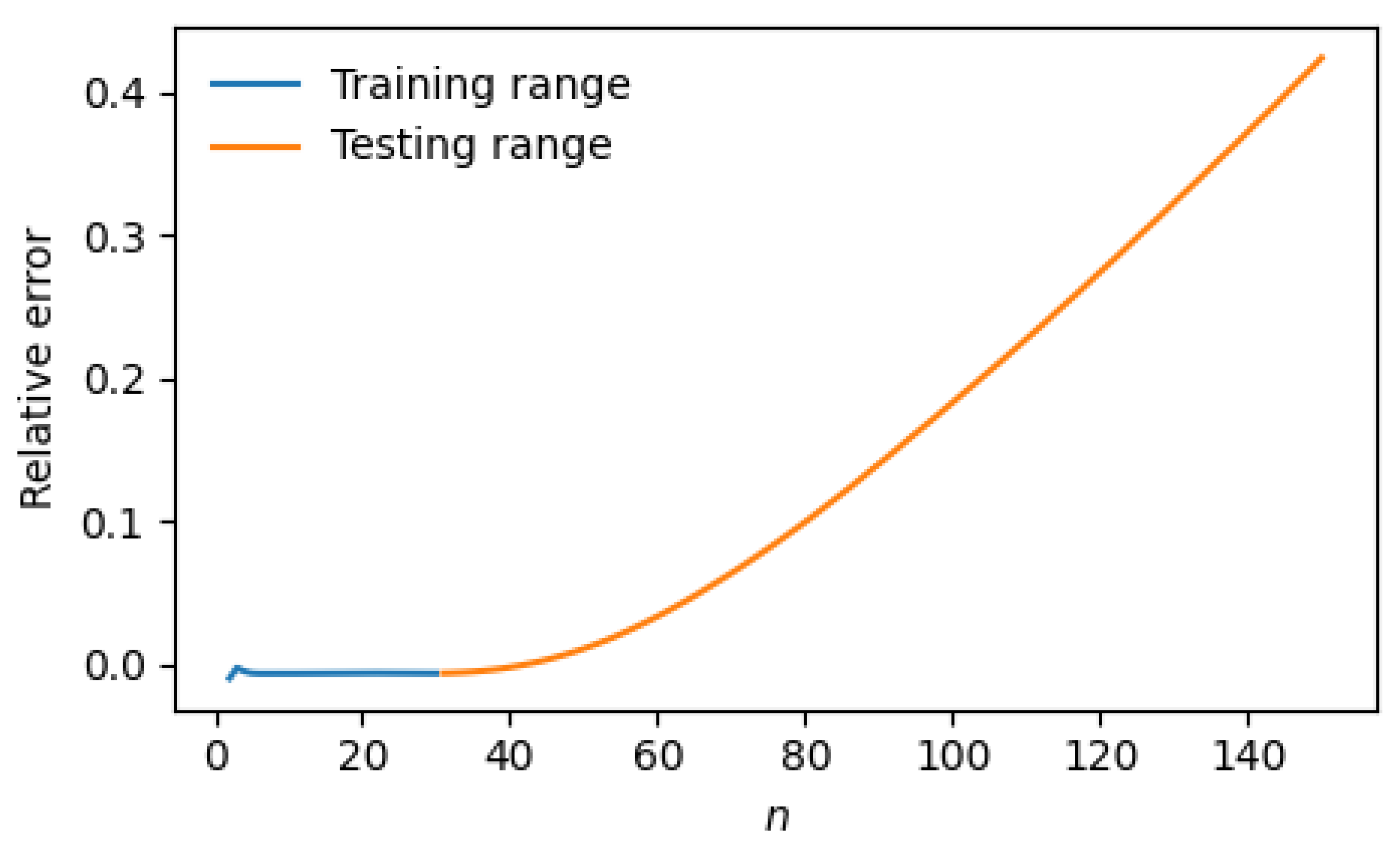

4.3. Log-Factorial: Default vs Dedicated Accumulation

5. Discussion

5.1. Code Availability

Acknowledgments

References

- Graves, A. Adaptive computation time for recurrent neural networks. arXiv preprint arXiv:1603.08983 2016.

- Dehghani, M.; Gouws, S.; Vinyals, O.; Uszkoreit, J.; Kaiser, Ł. Universal transformers. arXiv preprint arXiv:1807.03819 2018.

- Chen, R.T.; Rubanova, Y.; Bettencourt, J.; Duvenaud, D.K. Neural ordinary differential equations. Advances in neural information processing systems 2018, 31.

- Bai, S.; Kolter, J.Z.; Koltun, V. Deep equilibrium models. Advances in neural information processing systems 2019, 32.

- Lake, B.M.; Ullman, T.D.; Tenenbaum, J.B.; Gershman, S.J. Building machines that learn and think like people. Behavioral and brain sciences 2017, 40, e253.

- Nye, M.; Solar-Lezama, A.; Tenenbaum, J.; Lake, B.M. Learning compositional rules via neural program synthesis. Advances in Neural Information Processing Systems 2020, 33, 10832–10842.

- Saxton, D.; Grefenstette, E.; Hill, F.; Kohli, P. Analysing mathematical reasoning abilities of neural models. arXiv preprint arXiv:1904.01557 2019.

- Trask, A.; Hill, F.; Reed, S.E.; Rae, J.; Dyer, C.; Blunsom, P. Neural arithmetic logic units. Advances in neural information processing systems 2018, 31.

| Task | Dynamical Type | Error Propagation | Generalization Behavior |
|---|---|---|---|
| GCD (Euclid) | Contracting | Shrinking / damped | Input-magnitude extrapolation; step-count fragile |
| Log-Fibonacci | Attractor-aligned | Bounded / aligned | Large-n with fixed depth; drift beyond horizon |
| Log-Factorial | Expanding additive | Accumulating | Horizon-sensitive; needs tight bias control |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).