1. Introduction

Digital recruitment platforms and labor markets increasingly employ automated or semi-automated systems to support job-candidate matching. The growth of unstructured data in job advertisements and curricula vitae (CVs), which are written in heterogeneous styles and multiple languages, has further fueled research into decision support systems. As a result, a broad spectrum of techniques has been investigated, ranging from rule-based approaches to machine learning and embedding-based semantic similarity models (Kurek et al., 2024; Frazzetto et al., 2025). Although these techniques scale well to large sets of documents, they also involve trade-offs among semantic flexibility, interpretability, and usability. For example, embedding-based techniques provide powerful tools for measuring semantic similarity between job advertisements and candidate CVs, which addresses the limitations of keyword-based matching. Their potential has been further enhanced by graph-based and inductive learning techniques, among others (Frazzetto et al., 2025). However, recruitment is a complex multi-criteria decision problem in which technical needs, skill gaps, soft skills, organizational culture, and logistics must be considered simultaneously. Prior research has shown that hiring involves complex trade-offs, so the decision process cannot be reduced to a single measure of semantic similarity. Nevertheless, most research has concentrated on a single best measure of semantic similarity or skills match. As a consequence, the rankings produced by most decision support systems are difficult to interpret or audit, and they do not match real-world decision-making constraints.
Although novel techniques such as knowledge graph-based semantic relatedness in job title matching have been proposed (Zadykian et al., 2025), a gap exists between high-performance black-box-based techniques and decision support systems where recruitment decision-making needs to be explained, governed, and controlled in addition to being scalable and having high performance (Aleisa et al., 2023). The research question that is being addressed in the paper is: “How can job-candidate matching be operationalized as a multi-dimensional, interpretable, and scalable decision-support process that combines semantic similarity with explicit skill-based, behavioral, and contextual constraints?” To achieve this, we propose a novel multi-KPI algorithmic framework that is intended to help decision-makers make better choices with the help of a number of structured “fit” indicators, including technical requirement coverage, skills gaps, semantic similarity of technical profiles, soft skills compatibility, evidence density, cultural team fit, and contractual/contextual compatibility. The novelty of the work resides not in the introduction of an additional score, but in the integration of heterogeneous “fit” indicators within a single unifying framework. Although zero-shot recommendation models like Kurek et al. (2024) and graph neural networks like Frazzetto et al. (2025) achieve outstanding results in automated matching, they are mostly optimized towards a single modeling paradigm. The novelty of our work resides in the combination of discrete rule-based KPIs with continuous embedding-based semantic measures and evidence-based “fit” indicators, allowing the system to operate both as a high-recall semantic retrieval system and as a constraint-based multi-criteria evaluation system, with incremental refinement capabilities without compromising interpretability. 
Empirically, the study is situated within a publicly funded industrial project that aims to develop data support systems for labor market matching and workforce policymaking. The proposed system is evaluated on job postings and CVs that are more likely to represent real-world operational contexts than artificially generated data sets. The wider use of AI recruitment tools in national labor markets, as discussed by Aleisa et al. (2023), further underlines the relevance of the study. The study contributes to the existing literature on matching systems along three dimensions. First, it proposes a structured methodological framework that integrates semantic matching with constraint handling in a single architecture. Second, it proposes a set of KPIs that support decision-making in matching systems while preserving interpretability. Third, it provides an empirical analysis grounded in a publicly funded industrial project.

The article continues as follows: in

Section 2, the literature review is provided, while

Section 3 defines the overall methodological framework, from scores to the multi-KPI approach for recruitment matching.

Section 4 defines the embedding-based semantic approach for job-CV matching, while

Section 5 evaluates the Hard Skill Coverage Ratio (HSCR) approach, especially the requirement-based ranking approach.

Section 6 defines the Hard Skill Proficiency Similarity (HSPS) approach, especially the semantic approach for high-recall matching.

Section 7 defines the Skill Gap Index (SGI) approach for providing additional information for hard skills-based matching.

Section 8 defines the Soft Skill Semantic Alignment (SSSA) approach for continuous soft skills-based matching, while

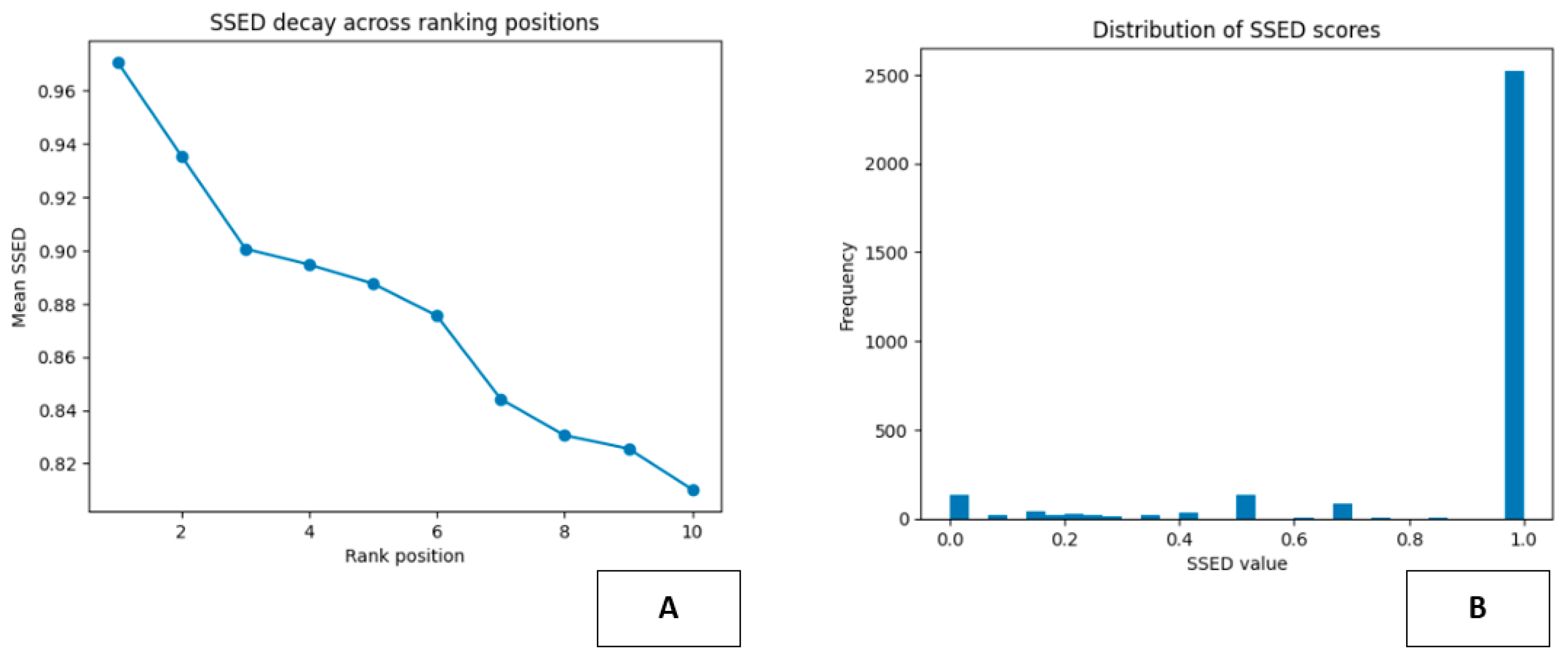

Section 9 defines the Soft Skill Evidence Density (SSED) approach for providing evidence-based support for soft skills-based matching.

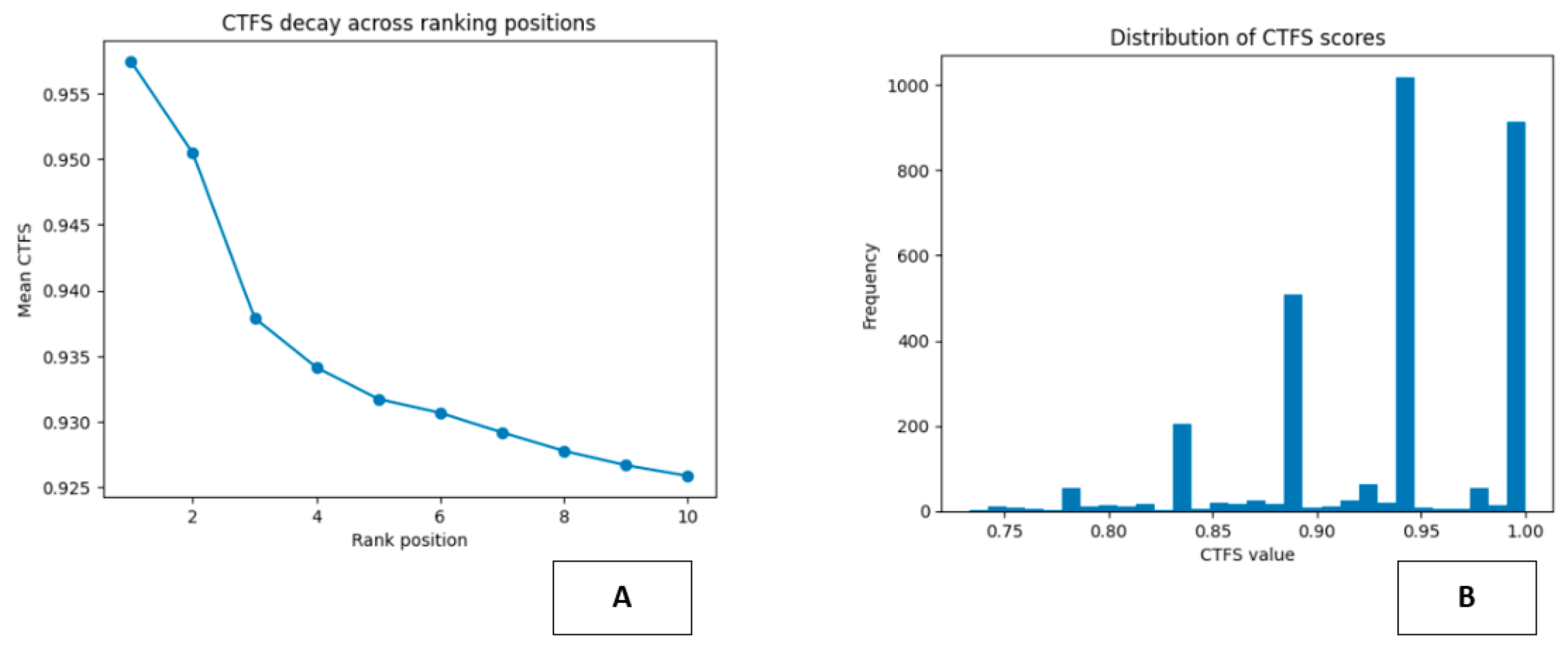

Section 10 defines the Cultural and Team Fit Score (CTFS) approach for providing additional information for organizational compatibility-based matching, while

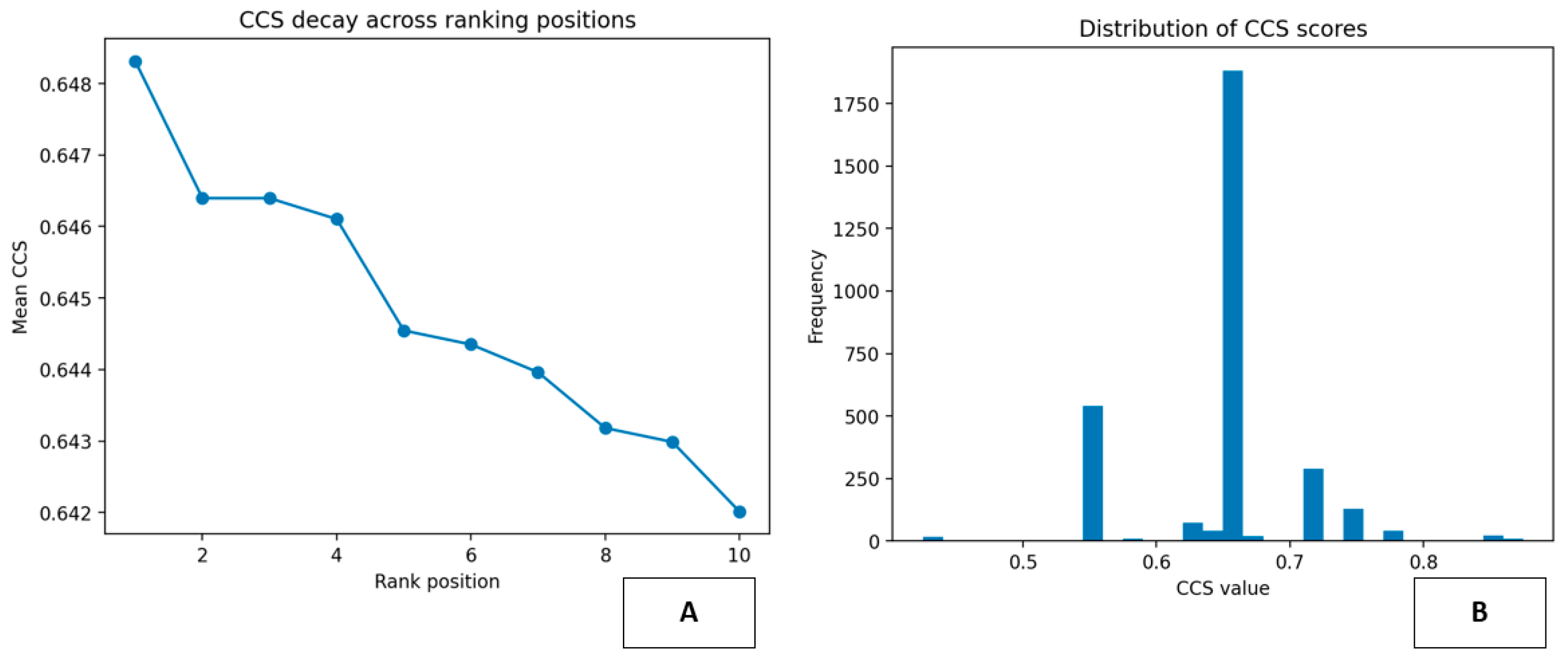

Section 11 defines the Contract Compatibility Score (CCS) approach for providing evidence-based support for multi-stage recruitment pipeline-based matching.

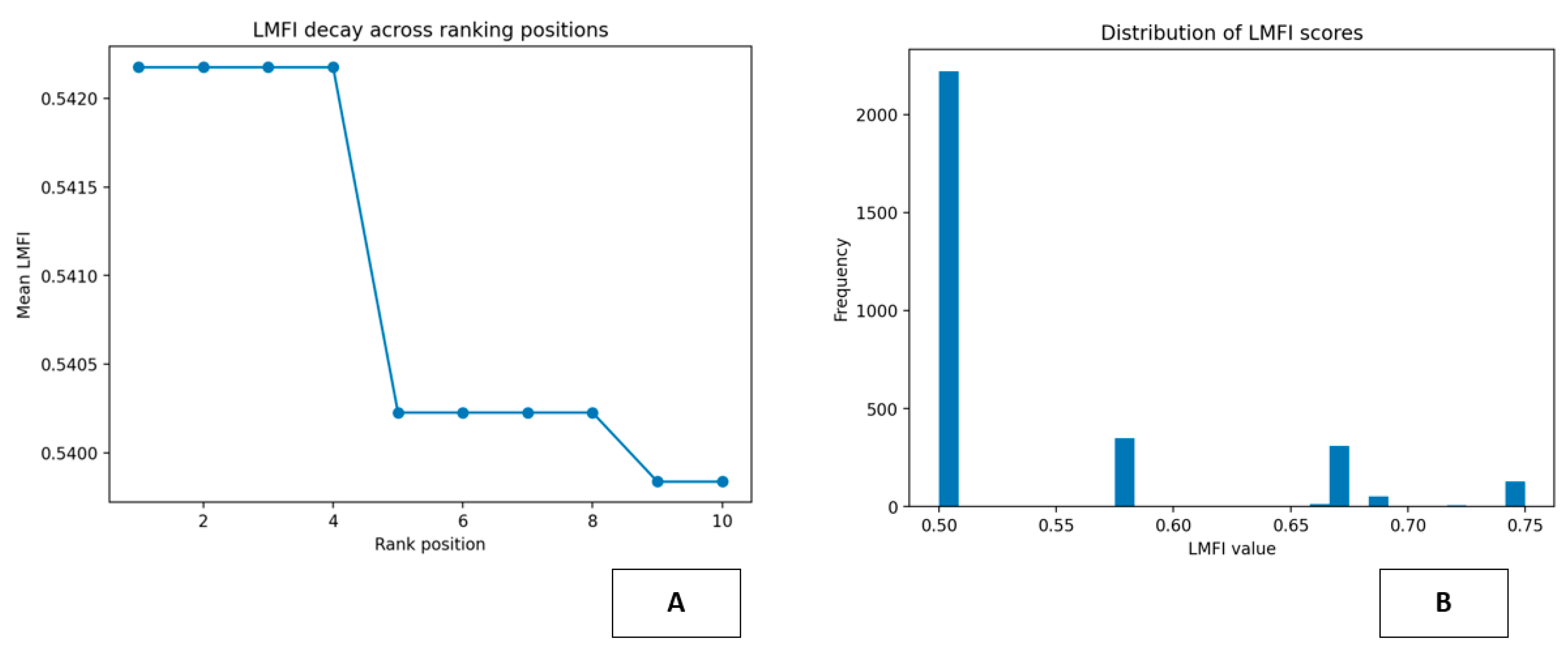

Section 12 defines the Location and Mobility Fit Index (LMFI) approach for constraint-based matching, while

Section 13 evaluates the Seniority and Compensation Alignment (SCA) approach for providing a high-resolution measure of structural compatibility-based matching.

Section 14 evaluates the overall performance of the multi-KPI approach for providing evidence-based support for multi-stage recruitment pipeline-based matching, while

Section 15 evaluates the overall approach of integrating hard skills, soft skills, and other factors for recruitment matching purposes. Finally,

Section 16 defines the overall limitations of the article, while

Section 17 defines the conclusion of the article.

2. Literature Review

Recent studies on AI-based recruitment processes indicate a strong trend toward semantic similarity, deep learning, and end-to-end automation. Ajjam and Al-Raweshidy (2026) propose an embedding-based semantic matching framework that is similar to our work but still centered on one dominant matching criterion. Kim (2026) shifts the focus by examining the implications and risks of AI-based recruitment processes at the macro-level of society. Although this aligns with our emphasis on awareness and understanding at the macro-level, it does not offer an operational framework. The majority of recent contributions address resume screening and scoring from an engineering and workflow optimization point of view. These include Liu et al. (2026), Dangeti et al. (2026), Sawant et al. (2026), Chihab et al. (2025), Barath et al. (2025), Hepzibah et al. (2025), Yadav et al. (2025), Ammupriya et al. (2025), Nabila et al. (2025), Singla et al. (2025), Dhobale et al. (2025), Tilve et al. (2025), Gangoda et al. (2024), and Waghmare et al. (2024). Although these systems improve the efficiency and effectiveness of the recruitment process, they still rely on a monolithic view of matching as an optimization problem, in which different dimensions of matching are fused into one score. From the recommender systems and methodology point of view, Çelik Ertuğrul and Bitirim (2025) highlight the problems of explainability, hybridization, and evaluation in matching, while Kaya and Bogers (2025) propose the concept of multi-sided fairness in algorithmic hiring. Likewise, hyper-personalization-based approaches (Alqudah et al., 2025) and personality-based approaches (Khan et al., 2025) broaden the notion of "fit" but integrate these dimensions into unified, largely uninterpretable scoring systems.
A second group of papers extends the technical scope of matching using multimodal data or advanced learning techniques (Wu et al., 2025; Yazici et al., 2024; Dilli Ganesh et al., 2025; Kurek et al., 2024; Zhang, 2024), or predictive/zero-shot techniques (Waghmare et al., 2024; Kurek et al., 2024). Again, while technically sophisticated, these systems continue to view matching as an end-to-end prediction or ranking problem rather than a decomposable multi-criteria decision problem. Other papers take a broader approach, proposing infrastructure, platform, or strategic-level AI-driven recruitment systems or models (Badouch & Boutaounte, 2025; Wahyuningrum et al., 2025; Es-Said et al., 2025; Saouabe et al., 2025; Kumar et al., 2025; Pandit et al., 2024; Dilusha et al., 2024; Jamil et al., 2024; Bhalke et al., 2024; Choudhuri et al., 2024). Other related papers address adjacent components in the recruitment pipeline, including resume generation, job portals, or user experience (Jha et al., 2025; Kulkarni et al., 2025; Haneef et al., 2025; Babalola et al., 2024), or organizational/performance outcomes in particular contexts, including social media recruitment (Al-Dmour et al., 2025) or career development (Sathish et al., 2024; Noel & Sharma, 2024). While these papers provide further evidence of the increasing ubiquity of AI in HR systems, they do not address the question of how to formally treat matching as a controllable, multi-dimensional problem.

From methodological and historical perspectives, Mat Saad et al. (2022) and Rojas-Galeano et al. (2022) provide reviews and bibliometric analyses that reinforce the prevalence of model-centric, performance-oriented approaches. Previous systems based on classical machine learning and deep learning paradigms, as in Najjar et al. (2021), Mridha et al. (2021), and Vasilescu et al. (2019), demonstrate the progress of automated screening and ranking systems, while Martínez and Fernández (2019) introduce rule-based and ontology-driven approaches specifically targeting ethical and legal auditing. Sharma and Garg (2024) further explore optimization-based HR analytics, while Mohamed et al. (2024) propose a precision CV matching system, again based on unified optimization/scoring paradigms. Together, these works attest to the continuous expansion of technical, data, and application domains, while also exemplifying the conceptual limitation of unified approaches: job-candidate matching is still treated as a prediction, ranking, or process-automation problem. In contrast, the current article proposes a new methodological direction by defining matching as a multi-criteria, interpretable, and auditable decision support process, based on a unified set of complementary KPIs covering semantic similarity, skill sets, behavioral evidence, and contextual/contractual feasibility. This direction addresses a gap that the literature has treated only implicitly or in unstructured form. See Table 1.

2.1. From Matching Pipelines to Decision Support: Structural Patterns in the Recruitment AI Literature

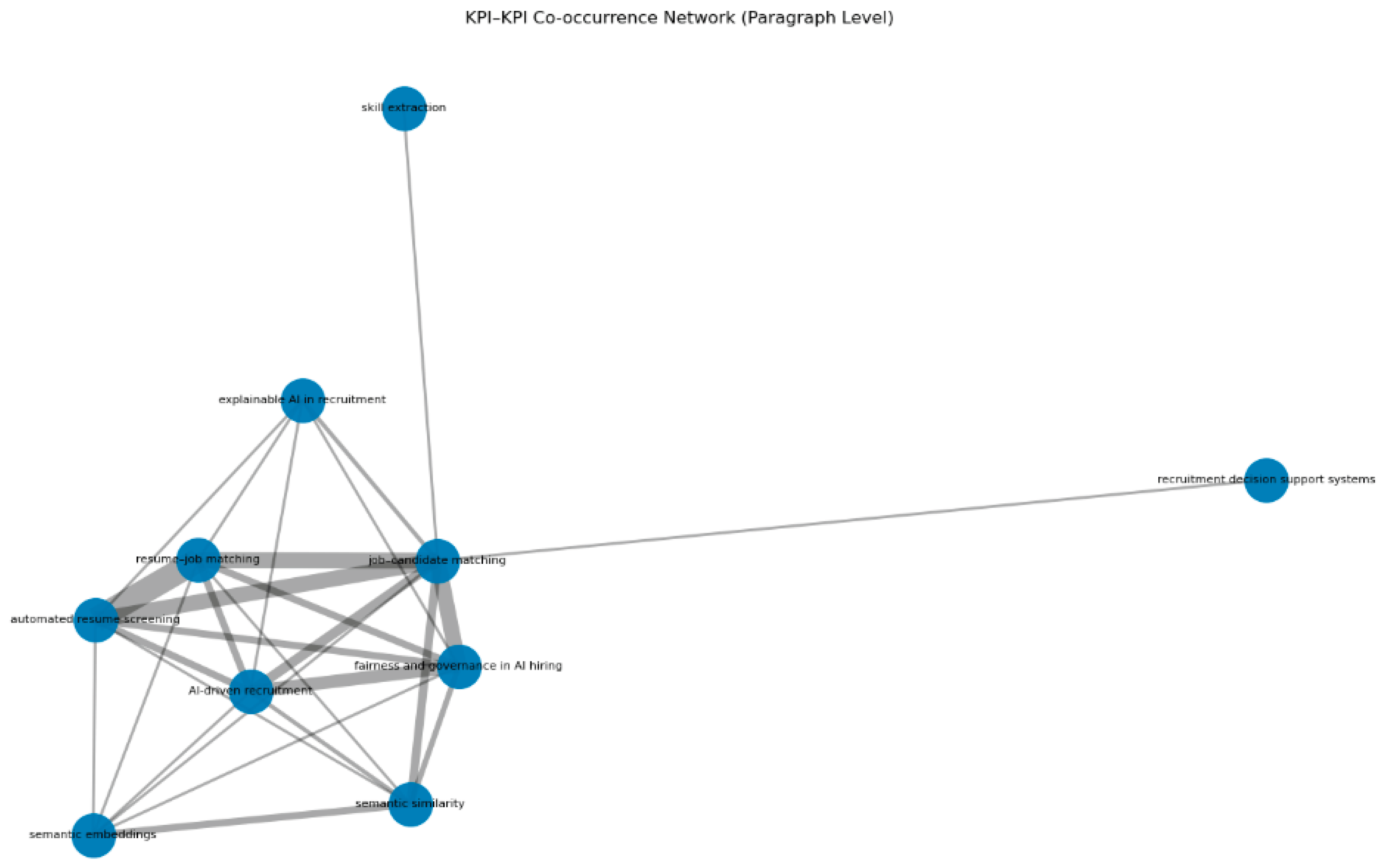

The network analysis offers a quantitative and structural understanding of the conceptual organization of the literature on AI-driven recruitment and job-candidate matching processes (Potočnik et al., 2024; Van Esch et al., 2019). Table X displays the most important centrality measures of the core KPIs, including degree centrality, weighted degree centrality, and betweenness centrality. The structural configuration of the network reveals that it is highly polarized around a few core nodes: a small number of concepts controls most of the network’s connectivity and intermediation. As shown in Table X, job-candidate matching is the most important node in the network. It has the highest degree centrality score (9), the highest weighted degree centrality score (55), and a very high betweenness centrality score (0.4167). The last score reveals that job-candidate matching not only co-occurs most often with other concepts but also acts as an important intermediary, appearing on a considerable percentage of the shortest paths connecting different parts of the network. The structural configuration of the network thus makes evident that the literature centers on the general issue of matching labor demand and supply. Job-candidate matching acts as the most important concept connecting different research streams. These streams comprise resume-job matching, automated resume screening, semantic similarity, AI-driven recruitment, explainable AI in recruitment, and AI hiring in government (Sajjadiani et al., 2019; Liem et al., 2018; Raghavan et al., 2020; Bogen & Rieke, 2018). This finding quantitatively supports the interpretation that, regardless of their technical or methodological specializations, the majority of the contributions frame their objectives with regard to the more general problem of job-candidate matching. A structural finding of particular interest is the role of semantic embeddings.
Although the semantic embeddings node has a lower degree (6) and a lower weighted degree (15) than job-candidate matching, it has the highest betweenness centrality in the network (0.4676). This finding suggests that, more than any other concept, semantic embeddings serve as a bridge between otherwise weakly connected subdomains. It can be concluded that embedding-based representations and text embeddings in the context of HR constitute the primary methodological infrastructure that unifies different approaches to the problem of recruitment matching (Ajjam & Al-Raweshidy, 2025). The table thus provides quantitative evidence that, far from being one technical option among many, semantic embeddings constitute the primary connective mechanism linking semantic similarity models, resume-job matching, AI-based recruitment, automated resume screening, and work on explainability and interpretability (Liem et al., 2018). The application-oriented nature of the field is reflected in the metrics for resume-job matching and automated resume screening: each has a relatively high degree (7) and a very high weighted degree (44), indicating strong co-occurrence with many other concepts in the corpus. At the same time, their betweenness centrality remains relatively low (0.0625 for both nodes), which implies that they are not important conceptual bridges between research areas. Rather, they form groups of densely interconnected nodes focused on operational pipelines for filtering, ranking, and screening CVs (Sajjadiani et al., 2019; Van Esch et al., 2019).
This is also in line with the prevalence of engineering-related contributions, which focus on optimization, efficiency, and scalability and often view matching as a matter of workflow automation rather than multi-criteria decision making. The same can be said of semantic similarity and AI-based recruitment, which have moderate to high degree values of 6 and 7, respectively, with corresponding weighted degree scores of 22 and 33, indicating strong usage and diffusion of these concepts within the literature (Ajjam & Al-Raweshidy, 2025; Potočnik et al., 2024). However, both concepts have very low betweenness centrality scores (0.0093 and 0.0069), suggesting that they do not act as structuring agents within the overall network but are instead used within existing approaches, as technical tools or as part of their broader context. In terms of structural interpretation, this again supports the view that these concepts are not used as part of an explicit multi-KPI evaluation framework. Instead, they are incorporated into monolithic models in which different aspects of fit, such as hard skill coverage, skill gap analysis, and soft skill assessment, are implicitly combined within a single ranking or scoring function (Sajjadiani et al., 2019). The table also helps to clarify the position of explainable AI in recruitment and of fairness and governance in AI hiring. These nodes have non-negligible degree values (5 and 7, respectively) and weighted degree values (11 and 38, respectively), which indicate that they are present and discussed in conjunction with core technical issues (Raghavan et al., 2020; Mehrabi et al., 2021). Nevertheless, their betweenness centrality remains low, at 0.0787 and 0.0069, respectively, which indicates their peripheral nature.
Quantitatively, this also indicates that issues of transparency, accountability, and governance tend to be attached to existing AI-based recruitment and automated resume screening approaches rather than to the core architecture of the matching system itself (Bogen & Rieke, 2018; Liem et al., 2018). Lastly, the least central nodes in the network are recruitment decision support systems and skill extraction. Their degree, weighted degree, and betweenness centrality values are minimal, at 1, 2, and 0, respectively. This provides strong quantitative evidence that the literature rarely discusses matching in the context of a decision support system and rarely treats skill extraction as a separate, relevant dimension for decision-making. Instead, skill extraction tends to be treated as a component of a broader, end-to-end prediction system rather than as a component of a multi-criteria decision-making system (Potočnik et al., 2024). Overall, the metrics reported in the table, along with their structural implications, provide a coherent quantitative portrait of the literature. The literature is dominated by approaches related to semantic matching, with semantic embeddings as the primary integrative methodology (Ajjam & Al-Raweshidy, 2025). It is also dominated by application-focused research on resume-job matching and automated resume screening (Sajjadiani et al., 2019; Van Esch et al., 2019).
At the same time, the notion of a multi-KPI, interpretable, and governance-aware recruitment process as a decision support process is structurally weak and underdeveloped (Raghavan et al., 2020; Mehrabi et al., 2021). This quantitative structure is consistent with the qualitative findings of the literature review. It supports the positioning of the proposed multi-KPI framework in an underexplored area of the field, shifting the focus away from monolithic matching scores toward a more modular, interpretable, and auditable decision support architecture. See Table 2.
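To make the three centrality measures discussed above concrete, the following minimal sketch computes degree, weighted degree, and normalised betweenness centrality on a small undirected co-occurrence graph. The graph, its node names, and its edge weights are invented for illustration and are not the data behind Table 2; betweenness is computed with Brandes' algorithm on unweighted shortest paths, which is an assumption about how the reported values were obtained.

```python
from collections import deque

# Toy undirected co-occurrence graph (hypothetical values, not the paper's data).
# graph[a][b] is the number of co-occurrences between concepts a and b.
graph = {
    "matching": {"screening": 5, "resume_matching": 4, "embeddings": 2},
    "screening": {"matching": 5, "resume_matching": 3},
    "resume_matching": {"matching": 4, "screening": 3},
    "embeddings": {"matching": 2, "similarity": 1},
    "similarity": {"embeddings": 1},
}

def degree(g, node):
    """Number of distinct neighbours of a node."""
    return len(g[node])

def weighted_degree(g, node):
    """Sum of the edge weights incident to a node."""
    return sum(g[node].values())

def betweenness(g):
    """Normalised betweenness centrality (Brandes' algorithm, unweighted paths)."""
    bc = {v: 0.0 for v in g}
    for s in g:
        stack = []
        preds = {v: [] for v in g}
        sigma = {v: 0 for v in g}   # number of shortest paths from s
        dist = {v: -1 for v in g}
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:                # breadth-first search from s
            v = queue.popleft()
            stack.append(v)
            for w in g[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = {v: 0.0 for v in g}
        while stack:                # accumulate dependencies in reverse BFS order
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    n = len(g)
    # Each unordered pair is visited twice, so dividing by (n-1)(n-2)
    # yields the usual normalisation for undirected graphs.
    scale = 1.0 / ((n - 1) * (n - 2)) if n > 2 else 1.0
    return {v: value * scale for v, value in bc.items()}

scores = betweenness(graph)
for node in graph:
    print(node, degree(graph, node), weighted_degree(graph, node), round(scores[node], 4))
```

In this toy graph the `embeddings` node has a lower degree and weighted degree than `screening`, yet a much higher betweenness, because it is the only path to `similarity`. This is the same kind of pattern the text describes for semantic embeddings: a concept can be a bridge without being the most frequently co-occurring one.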

Figure 1 above depicts the KPI-KPI co-occurrence network at the paragraph level in the literature review section. It provides a visual representation of the conceptual structure of the field. At the center of this structure, job-candidate matching appears as the main hub. This suggests that the literature has centered on the overarching problem of matching labor demand with labor supply and that most concepts are discussed in direct relation to this objective (Potočnik et al., 2024). A cluster of concepts surrounds the main hub: semantic embeddings, semantic similarity, resume-job matching, automated resume screening, AI-based recruitment, fairness and governance in AI hiring, and explainable AI in recruitment. The thickness of the lines connecting these concepts indicates that they are discussed in close proximity to one another in the paragraphs. This suggests that the field has followed a research direction in which semantic concepts are combined with automated resume screening processes, along with concerns related to fairness and explainability (Ajjam & Al-Raweshidy, 2025; Raghavan et al., 2020). In practice, the figure corroborates that the dominant literature is organized around semantic matching and automated screening pipelines, with ethical and transparency issues integrated into the same technical framework rather than changing it (Mehrabi et al., 2021; Raghavan et al., 2020). Semantic embeddings and semantic similarity appear as well-integrated nodes within the dominant cluster, which highlights the importance of distributed text representations as a unifying methodological framework across many of the reviewed works.
These concepts are not independent of one another: they are strongly intertwined with application aspects such as screening and matching, which reflects the recent trend of incorporating embedding-based methodologies and semantic similarity approaches in the literature (Ajjam & Al-Raweshidy, 2025). In contrast, two nodes are clearly on the periphery of the network, namely decision support systems in recruitment and skill extraction. The former is connected to the rest of the network only through job-candidate matching, which shows that the decision support perspective is still peripheral in the literature and does not function as an important structuring dimension (Potočnik et al., 2024). The same can be said of skill extraction, which is isolated, reflecting its treatment as an auxiliary technical step rather than an independent, conceptually central dimension of the decision process. The figure thus provides a visual synthesis that is in line with the theoretical analysis. The dominant literature is centered on a technical, application-oriented core that revolves around semantic matching and automated screening, while the decision support perspective on recruitment as a multi-criteria, interpretable, and governance-aware process remains on the periphery of the conceptual network (Raghavan et al., 2020; Mehrabi et al., 2021). From this vantage point, the multi-KPI framework targets a structurally underexplored domain and aims to transform matching from an exclusive ranking/prediction task into a structured, decomposed, and controllable decision-making process. See Figure 1.
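A paragraph-level co-occurrence network of the kind shown in Figure 1 can be built by counting, for every pair of concepts, the number of paragraphs in which both appear. The sketch below illustrates this under simplifying assumptions: concepts are detected by case-insensitive substring matching, and the paragraphs and concept list are invented examples rather than the corpus analysed in the article.

```python
from collections import Counter
from itertools import combinations

def cooccurrence_edges(paragraphs, concepts):
    """Count how many paragraphs mention each unordered pair of concepts."""
    edges = Counter()
    for text in paragraphs:
        lowered = text.lower()
        # Naive detection: a concept is present if its label occurs in the paragraph.
        present = sorted(c for c in concepts if c in lowered)
        for a, b in combinations(present, 2):
            edges[(a, b)] += 1
    return edges

# Hypothetical inputs for illustration only.
concepts = ["job-candidate matching", "semantic embeddings", "skill extraction"]
paragraphs = [
    "Semantic embeddings improve job-candidate matching.",
    "Job-candidate matching relies on semantic embeddings and skill extraction.",
    "Fairness is discussed separately.",
]

edges = cooccurrence_edges(paragraphs, concepts)
for (a, b), weight in sorted(edges.items()):
    print(f"{a} -- {b}: {weight}")
```

The resulting counts play the role of edge weights, which is what the line thickness in such a figure would encode. A real analysis would likely need synonym handling and tokenisation rather than plain substring checks.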

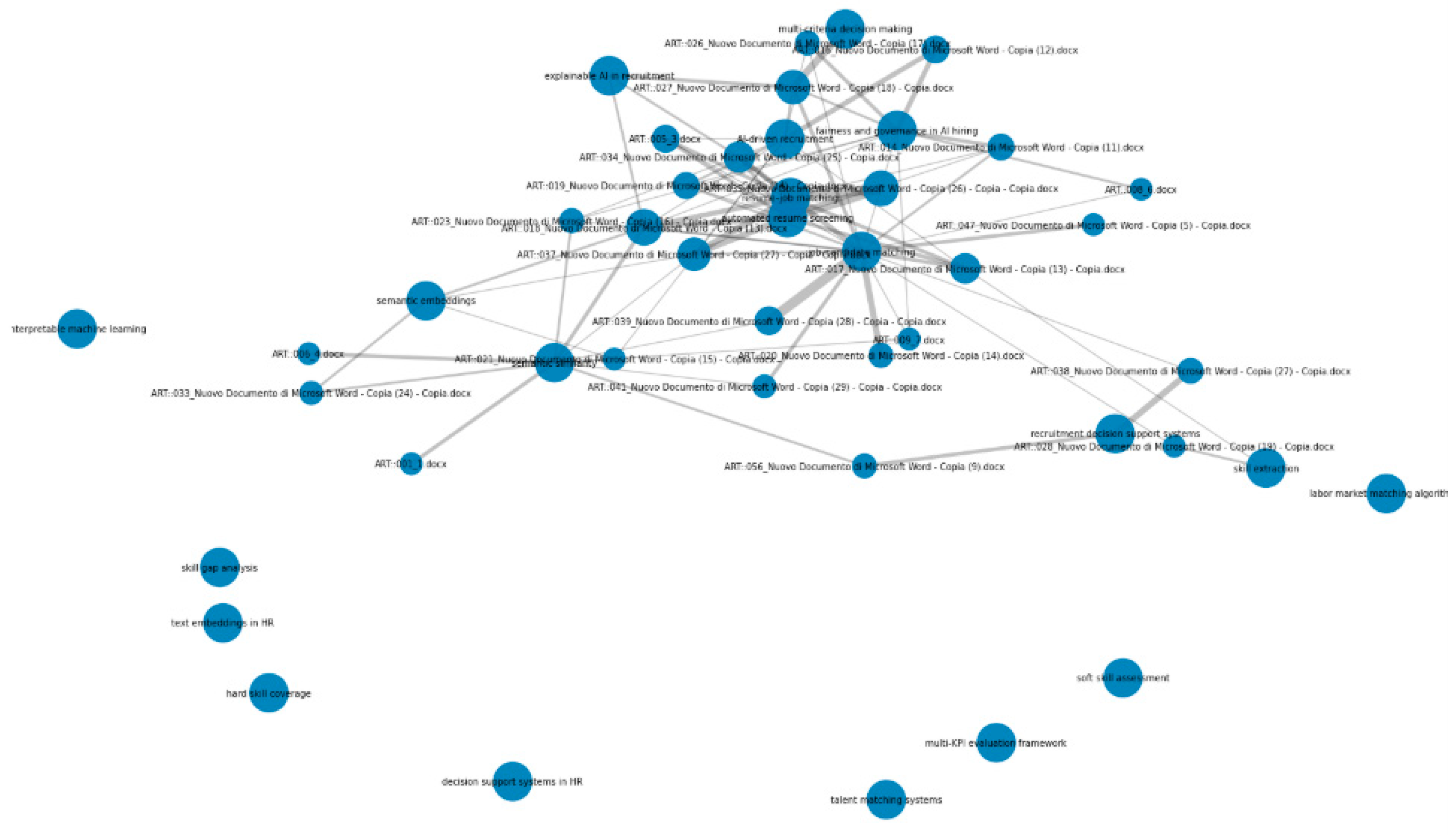

Figure 2 represents the co-occurrence network of articles, keywords, and key performance indicators (KPIs), providing a more comprehensive and detailed overview of the conceptual space of the literature under examination. In contrast to the KPI network, it clearly distinguishes between document nodes and conceptual nodes, making it easier to see how individual contributions group around specific keywords (Potočnik et al., 2024). At the center of the network is a dense group of highly interconnected nodes covering a significant number of keywords, such as job-candidate matching, resume-job matching, automated resume screening, semantic embeddings, semantic similarity, AI-based recruitment, explainable AI in recruitment, and fairness and governance in AI hiring. The importance of this group of keywords is shown by the significant number of related articles, which implies that the literature under examination is highly focused on the application of semantic matching and automated screening, with a strong emphasis on the technical-operational aspects of these tasks (Ajjam & Al-Raweshidy, 2025). A small portion of more recent literature also addresses explainability and fairness in the context of semantic matching and automated screening, as suggested by Raghavan et al. (2020) and Mehrabi et al. (2021), but still within the technical-operational context. The presence of the keyword “semantic embeddings” among the highly connected nodes of the network further supports the finding that it provides a unifying, shared infrastructure for a significant portion of the literature under examination (Ajjam & Al-Raweshidy, 2025).
A significant number of articles are related to more than one keyword of the group, implying the existence of approaches that combine aspects of semantic similarity, automated screening, and, to some extent, explainability. Apart from this core, several peripheral concepts are only weakly interconnected: multi-KPI evaluation framework, talent matching, soft skills evaluation, decision support system in HR management, hard skills coverage, skills gaps evaluation, and text embeddings in HR management. The weak connectivity of these concepts with the core concepts, as well as with each other, may be due to their lower representation as distinct entities in the literature. The concepts associated with an explicitly multi-criteria decision-support approach, including multi-KPI evaluation, are located at the periphery of the network, where they are connected to only a few articles. The figure indicates that the literature of this domain is mostly focused on the technical application of semantic matching and screening, which forms the core. The dimensions that specify matching criteria, namely hard skills evaluation, soft skills evaluation, and decision support, are located at the periphery of the network. The weak connectivity of these concepts with each other and with the core of the network supports the idea that the field is mostly focused on model-centric and pipeline-centric approaches (Ajjam & Al-Raweshidy, 2025). The figure also emphasizes the significance of the current manuscript, which covers an under-explored part of the network with a multi-KPI-based decision-support framework, as opposed to the monolithic approaches to matching emphasized in the literature (Raghavan et al., 2020; Mehrabi et al., 2021). See Figure 2.

3. From Monolithic Scores to Modular Evidence: A Multi-KPI Methodology for Recruitment Matching

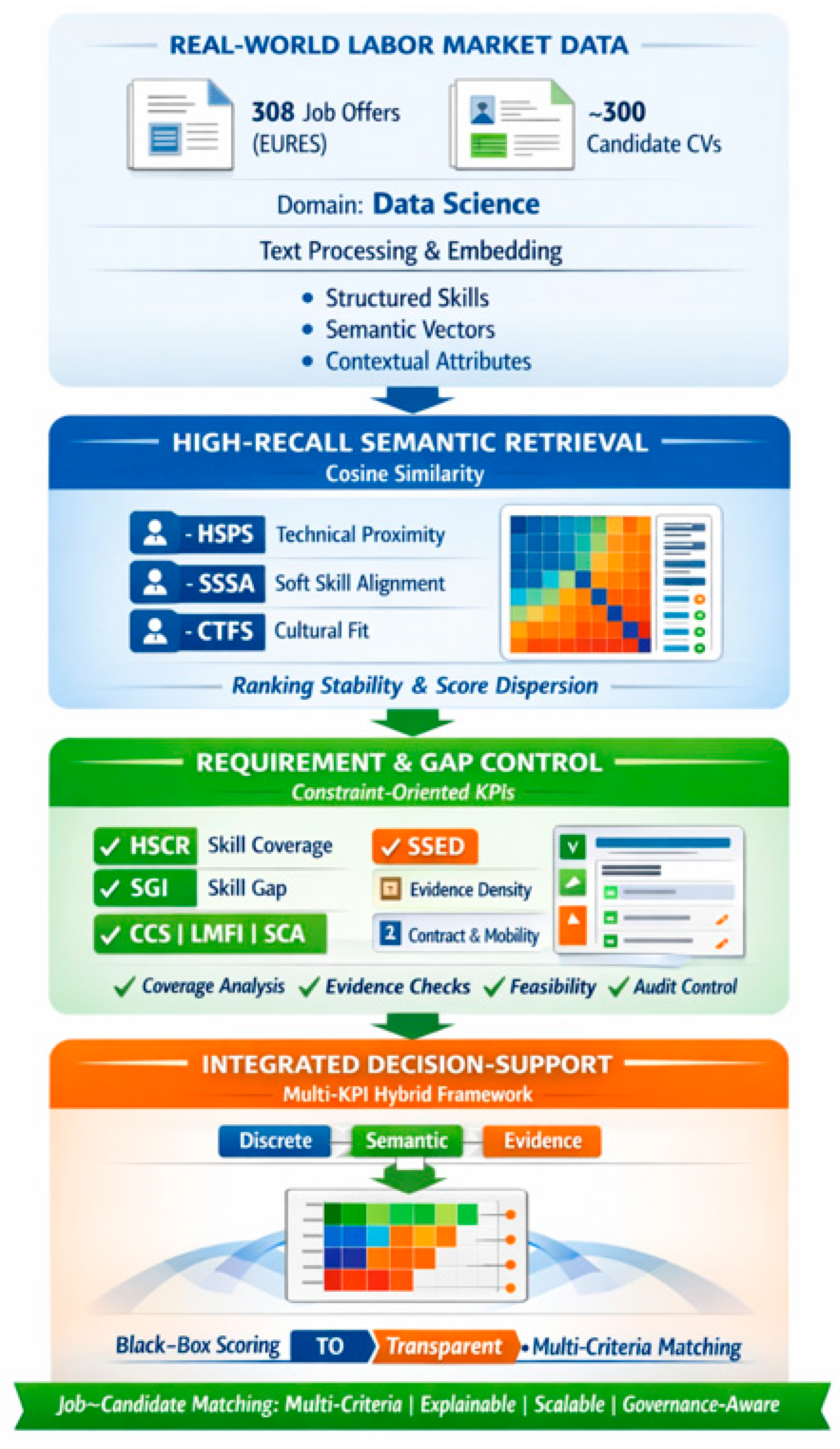

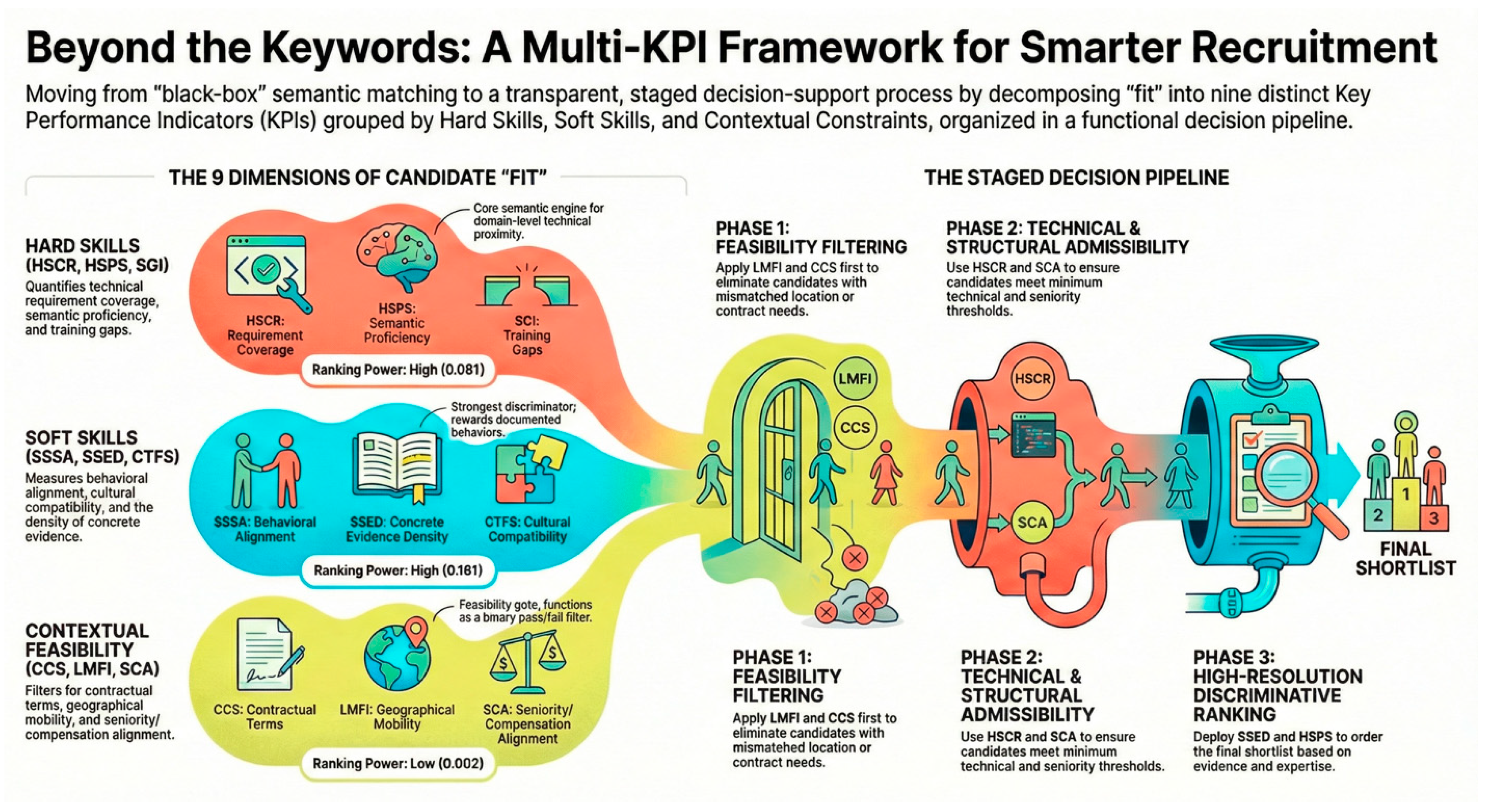

The methodological choice underlying this framework is to move beyond monolithic matching strategies, whether purely semantic or purely rule-based, and to decompose the notion of “fit” into a structured family of complementary, interpretable, and modular KPIs. See Figure 4.

Each of these measures is associated with a particular, theoretically grounded dimension of compatibility: explicit satisfaction of technical requirements (HSCR, SGI), semantic proximity of technical profiles (HSPS), behavioral and interpersonal compatibility (SSSA, SSED, CTFS), and operational feasibility constraints (CCS, LMFI, SCA). What is original in our proposal is not any particular measure taken in isolation, but their systematic integration into a single framework that combines different types of evidence: discrete measures defined on sets (HSCR, SGI, CCS), continuous measures of semantic similarity between embeddings (HSPS, SSSA, CTFS), and density-based evidence measures (SSED). This mixed approach is consistent with current research efforts to move beyond traditional, monolithic matching scores in favor of structured, evidence-based job matching models (Martínez-Manzanares et al., 2024) and with research that calls for integrating semantic textual relatedness with explicit knowledge representations to improve interpretability (Zadykian et al., 2025). In particular, by integrating different types of evidence, our approach avoids the limitations of purely embedding-based recruitment pipelines, as recently recognized in related research (Aleisa et al., 2023). From a methodological point of view, the framework explicitly differentiates between a semantic core layer (HSPS, SSSA, CTFS), which allows for high-recall matching that is robust to natural-language variation, and other layers focused on constraints and evidence (HSCR, SGI, SSED, CCS, LMFI, SCA), which reintroduce auditability, feasibility, and decision support.
This is in line with recent trends in explainable matching, which combine semantic similarity models with structured knowledge to ensure interpretability, controllability, and explainability of matching outcomes (Zadykian et al., 2025), as well as with empirically validated models of recruitment that combine expert criteria with automated scoring approaches (Martínez-Manzanares et al., 2024). In addition, recent system-level implementations of AI-powered recruitment highlight the importance of modularity, which allows constraints to be implemented without a full redesign of the matching pipeline (Aleisa et al., 2023). In this regard, the differentiation between layers supports systems that can accommodate different organizational priorities without modifications to the core matching process. The set of KPIs outlined in Table X therefore supports job-candidate matching as a multi-criteria, explainable, and governance-aware decision support process. This represents a significant methodological change relative to simpler approaches that support only single-criterion matching outcomes or that are entirely opaque (Martínez-Manzanares et al., 2024; Zadykian et al., 2025; Aleisa et al., 2023). See Table 3.
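The distinction between discrete set-based measures and continuous embedding-based measures can be illustrated with a short sketch. The formulas below are assumptions made for illustration only: a coverage ratio in the spirit of HSCR (share of required skills found in the CV), a list of missing skills in the spirit of SGI, and cosine similarity as a stand-in for an embedding-based measure such as HSPS. The article's actual definitions are given in its later sections and may differ.

```python
import math

def coverage_ratio(required, candidate):
    """Share of required skills present in the candidate profile (HSCR-like, assumed form)."""
    required = set(required)
    if not required:
        return 1.0  # nothing is required, so coverage is trivially complete
    return len(required & set(candidate)) / len(required)

def missing_skills(required, candidate):
    """Required skills absent from the candidate profile (SGI-like, assumed form)."""
    return sorted(set(required) - set(candidate))

def cosine_similarity(u, v):
    """Cosine similarity between two embedding vectors (HSPS-like, assumed form)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

# Hypothetical job and CV data; the vectors stand in for model-produced embeddings.
job_skills = {"python", "sql", "docker"}
cv_skills = {"python", "sql", "java"}
job_vector = [0.2, 0.7, 0.1]
cv_vector = [0.25, 0.6, 0.3]

print("coverage:", round(coverage_ratio(job_skills, cv_skills), 3))
print("missing:", missing_skills(job_skills, cv_skills))
print("similarity:", round(cosine_similarity(job_vector, cv_vector), 3))
```

Keeping the three outputs separate, rather than folding them into one number, is what lets a reviewer see that a profile can be semantically close while still lacking a named requirement.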

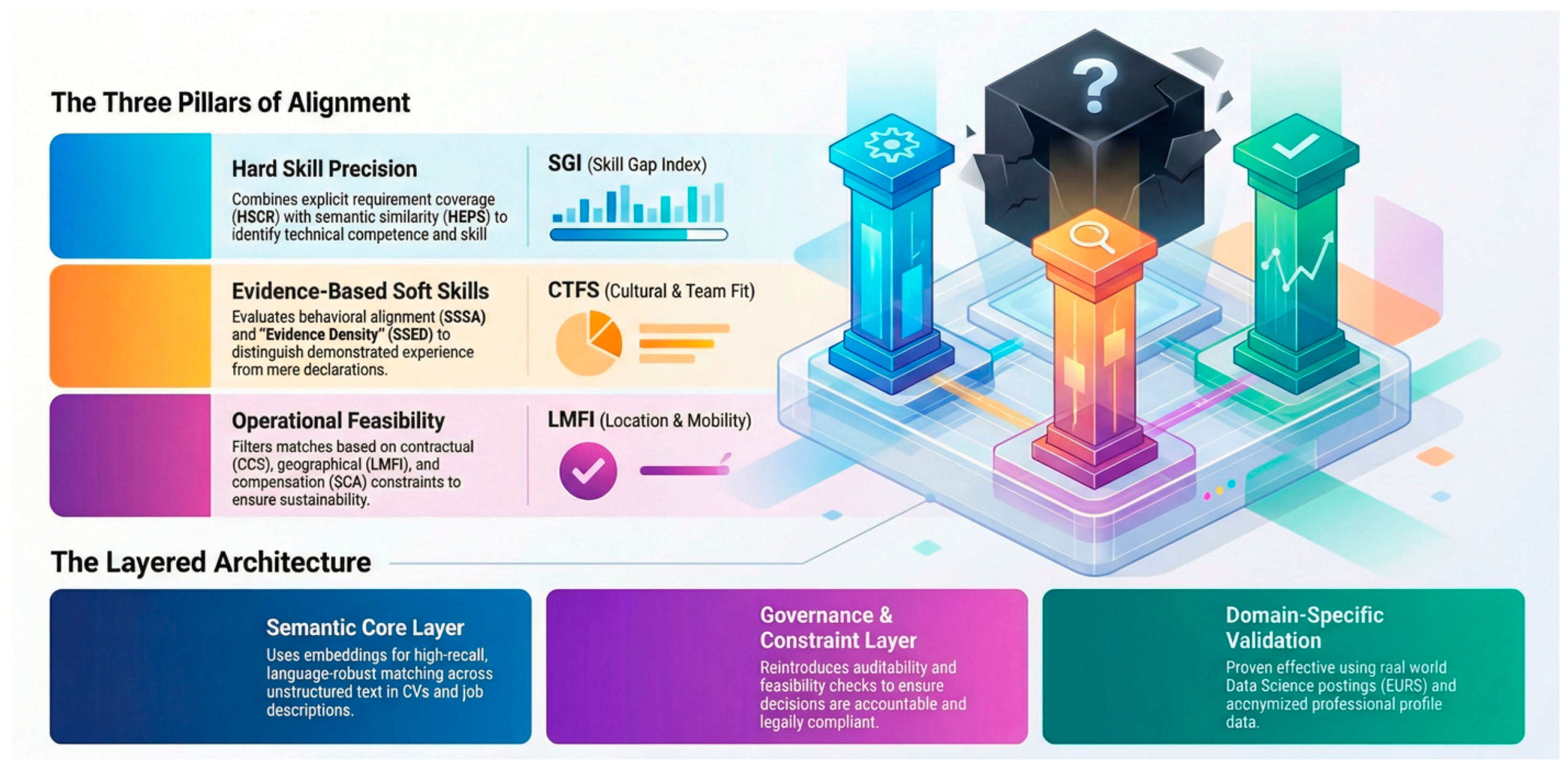

Figure 5 shows a visual representation of the proposed methodological framework, outlining both the three pillars of alignment and the multi-layered structure of the multi-KPI method. See Figure 5.

The three pillars represent the three dimensions of compatibility that the framework addresses explicitly: hard skill precision, evidence-based soft skills, and operational feasibility. They reinforce the view that “fit” is not a single latent construct but an emergent property of the interplay between different yet complementary dimensions of compatibility, in keeping with recent recruitment research that advocates multi-dimensional models of assessment (Potočnik et al., 2024). For the hard skill dimension, Figure X illustrates the interplay between explicit requirement coverage and semantic similarity, showing that HSCR, SGI, and HSPS form an integrated framework of technical competence assessment. This is consistent with recent AI-based job matching methodologies that combine semantic similarity with structured skill alignment (Ajjam & Al-Raweshidy, 2025). For the behavioral dimension, the figure emphasizes the interplay between declared and demonstrated soft skills, as captured by SSSA, SSED, and CTFS, and shows that evidence density and contextual alignment are critical dimensions of compatibility. This is consistent with recent work on algorithmic decision-making, in which explainability and traceability of evidence are critical design dimensions (Mehrabi et al., 2021; Raghavan et al., 2020). The pillar of operational feasibility communicates that matching is subject not only to competence constraints but also to real-world constraints, as expressed by CCS, LMFI, and SCA, which filter and qualify plausible matches on the basis of contractual, geographical, and seniority-related constraints.
The inclusion of contextual and governance-aware constraints reflects the increasing awareness of the importance of incorporating accountability and decision justification systems in recruitment systems, going beyond predictive accuracy (Raghavan et al., 2020). The lower section of the figure completes the conceptual breakdown of the system by depicting the multi-layered architecture of the system. The semantic core layer refers to the embedding-based components HSPS, SSSA, CTFS, which are intended to offer high recall rates and language robustness in matching unstructured text in CVs and job descriptions. This layer reflects the “semantic first” principle of the framework, which explains why embedding-based similarity is used as the primary matching function in the system (Ajjam & Al-Raweshidy, 2025). Above it, the governance and constraint layer reinstates the importance of auditability, feasibility, and accountability by means of discrete, rule-like KPIs such as HSCR, SGI, SSED, CCS, LMFI, and SCA, which ensure that matching recommendations are not only semantically plausible but also operationally feasible. The distinction between the predictive core and the governance layer reflects recent calls for fairness-aware and explainable AI systems in high-stakes applications such as hiring (Mehrabi et al., 2021; Raghavan et al., 2020). The domain validation layer finally emphasizes that the entire system is grounded in real-world data and applications, facilitating validation without affecting the core decision-making process, which reflects recent views on evidence-based recruitment systems and selection systems (Potočnik et al., 2024). The cumulative evidence substantiates the methodological argument that the framework represents an advancement over monolithic matching approaches by assembling diverse evidence types into a modular and understandable decision architecture. 
The figure illustrates how continuous semantic similarity signals, coverage and constraint checks, and evidence-based indicators function together as components of a single integrated system rather than as mutually exclusive approaches. It thus presents the set of KPIs in Table X as the functional core of a multi-criteria decision support process that is both explainable and governance-conscious, and it shows how semantic robustness, scalability, and auditability are achieved simultaneously through architectural separation and controlled integration of the constituent layers (Ajjam & Al-Raweshidy, 2025; Mehrabi et al., 2021; Raghavan et al., 2020).

Data Sources and Dataset Construction. The empirical analysis relies on two data sources: job offers retrieved from the EURES platform and publicly available candidate profiles retrieved from a prominent professional social networking platform. These sources were chosen because they make the analysis more ecologically valid: it relies on real-world data and mirrors current data-driven recruitment pipelines, which operate on authentic job offers and candidate profiles (Frazzetto et al., 2025; Mashayekhi et al., 2022). Both job offers and candidate profiles were retrieved with a keyword-based search query using the keyword “Data Science.” This keeps the analysis focused on a well-defined professional domain while preserving the heterogeneity of job offers and candidate profiles found in the data science job market (Khaouja et al., 2021). The EURES platform provides structured as well as semi-structured job offers that include the required skills, the responsibilities, the contractual conditions, and other relevant information about the job. These data are used to build the job representation layer of the proposed system. Pre-processing includes text normalization, tokenization, and the removal of boilerplate content from the job offers (Khaouja et al., 2021; Mashayekhi et al., 2022). The candidate profiles were obtained from publicly accessible professional profiles, with an emphasis on information that is relevant to professional matching. This information was then encoded using information extraction and embedding-based encoding.
These techniques are in line with recent pipelines that integrate information extraction and semantic analysis for recruitment optimization. Importantly, the dataset was created strictly for research purposes and in accordance with relevant ethical and legal standards. The encoded representation does not enable any form of re-identification of individuals and is used strictly for the evaluation of the proposed matching framework. From a methodological point of view, the dataset supports the multi-dimensional character of the proposed framework. It does not treat job postings and candidate profiles as monolithic entities; rather, both are represented as compositions of several semantic and functional elements. This enables the computation of different KPIs related to semantic similarity, skill coverage and gaps, behavioral evidence, and contextual and contractual compatibility. The use of these elements aligns with challenge-based evaluations of e-recruitment recommendation systems (Mashayekhi et al., 2022). In conclusion, the dataset offers a realistic and diverse testbed for evaluating the proposed multi-KPI decision support architecture while ensuring alignment with reproducibility, transparency, and governance requirements in data-driven recruitment research (Frazzetto et al., 2025; Khaouja et al., 2021; Ifakir et al., 2025).

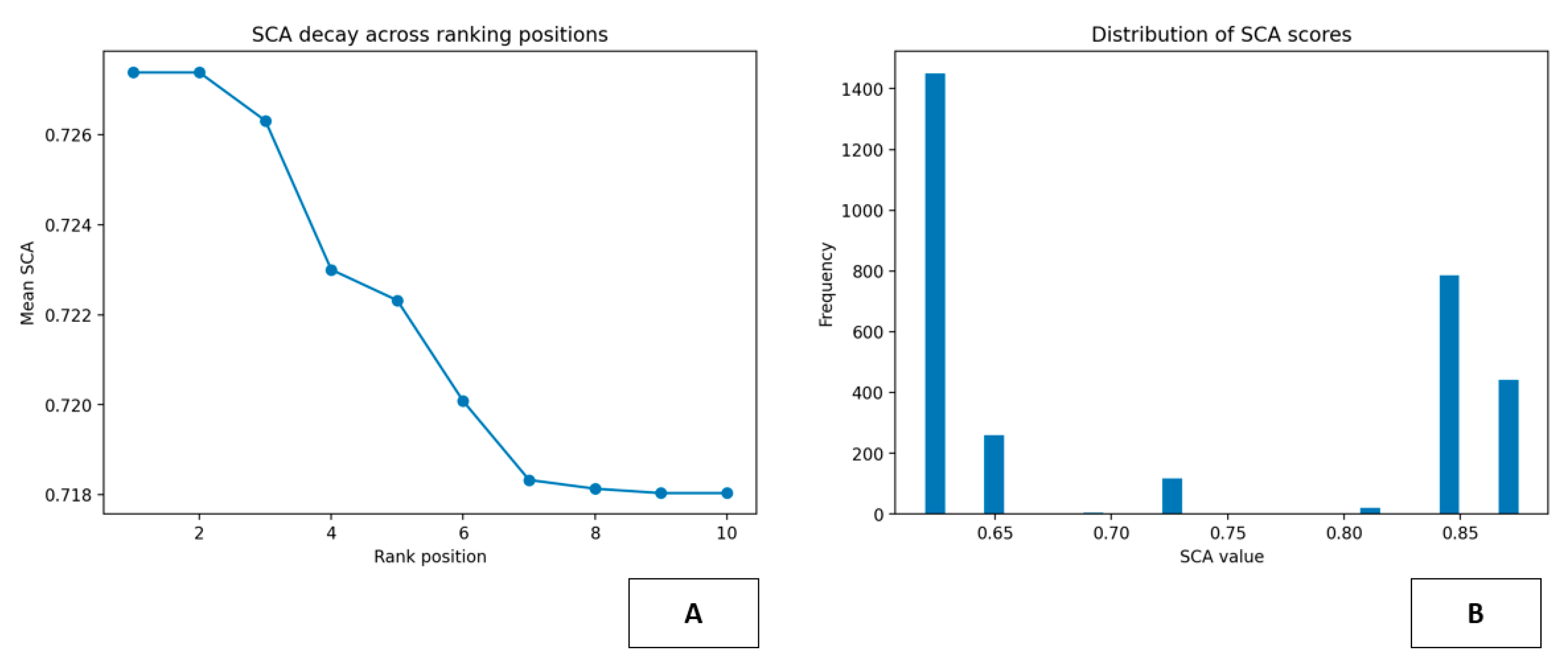

4. Evaluating an Embedding-Based Semantic Pipeline for Job–CV Matching

The study designs and tests a semantic pipeline for matching job descriptions and CVs. It seeks to address a limitation of traditional recruitment systems, which often rely on exact keyword matching, and is in line with recent embedding-based and transformer-based recruitment systems (Kurek et al., 2024; Li et al., 2025). The focus is on evaluating the similarity between job descriptions and CVs at the level of meaning by using distributional representations of text, following recent work on semantic textual relatedness in recruitment (Zadykian et al., 2025). The experimental dataset comprises approximately 300 job descriptions from EURES and 300 CVs in different formats (PDF, DOCX, and TXT), processed through an end-to-end pipeline of the kind used to extract information from candidate profiles (Frazzetto et al., 2025). Each text is converted into a dense vector representation by a pretrained multilingual sentence embedding model, and the semantic similarity between a job description and a CV is computed as the cosine similarity of their vectors, consistent with established e-recruitment recommendation paradigms that use latent semantic representations (Mashayekhi et al., 2022; Sukri et al., 2024). For each job posting, a ranked list of candidates is generated, and the top 10 candidates are retained in the output. This transforms a complex, unstructured search space into a compact, semantically coherent shortlist that is easier for human decision-makers to process (Kurek et al., 2024). Quantitatively, the results offer strong evidence of the approach’s efficacy and stability.
As can be seen in Table X, the experiment covers 308 job offers, resulting in 3,080 job-candidate associations from the top-10 rankings, which corresponds to a medium-sized rather than a small-scale real-world scenario (Mashayekhi et al., 2022). Furthermore, 149 unique candidates appear at least once in the top-10 rankings, which indicates that the system does not settle into a trivial solution built on a limited number of candidate profiles but offers a relatively diverse set of recommendations. The similarity scores have a global mean of 0.446 and a standard deviation of 0.051, indicating a moderate level of semantic alignment between jobs and recommended candidates, with enough variability to discriminate between candidates. The minimum and maximum scores are 0.294 and 0.715, respectively, so the range captures both weak and strong semantic alignments, as is typical in transformer-based recruitment systems (Li et al., 2025). The ranking structure provides further quantitative validation: the average score decreases from 0.491 at position one to approximately 0.420 at position ten, which indicates a coherent, well-ordered structure rather than a flat distribution, as is typical in zero-shot, deep-learning-based job-candidate matching systems (Kurek et al., 2024). The analysis of ranking separation supports this finding. The average gap between the first and tenth positions is 0.071, and the standard deviation of these gaps is 0.027. Thus, in most cases, the top-ranked profile is measurably more similar to the job than the tenth-ranked one.
The range of the gaps between the first and tenth positions also indicates that both dense rankings and less dense rankings with a dominant top-ranked profile occur. Such scenarios are common and align with the real-world recruitment scenarios discussed in challenge-based evaluations of recommendation systems (Mashayekhi et al., 2022). The candidate recurrence statistics support these findings. The median number of appearances per candidate is 7, and the maximum is 234. Thus, some semantically central candidates match a considerable number of job postings. This quantitative evidence supports the qualitative assumption that semantic similarity can serve as an effective high-recall retrieval mechanism but should not be used as a standalone decision criterion. This limitation can be addressed by using the semantic pipeline as one part of a decision support system that combines several indicators and constraints (Frazzetto et al., 2025; Ifakir et al., 2025; Zadykian et al., 2025). The numerical analysis therefore supports the assumption that embedding-based semantic matching provides a strong quantitative baseline for the job–candidate matching problem and a good starting point for a multidimensional decision support system that incorporates various indicators and constraints. Such decision support systems may also be developed using deep learning and graph-based recruitment systems (Li et al., 2025). See Table 4.
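The ranking statistics discussed in this section (mean score per rank position, gap between the first and last shortlisted candidate, and candidate recurrence) can be computed along the following lines. This is a minimal sketch on toy data, not the pipeline used in the study: the `rankings` structure, the function names, and the choice of the population standard deviation are all assumptions made for illustration.

```python
from collections import Counter
from statistics import mean, median, pstdev

# Hypothetical top-k output: job_id -> list of (candidate_id, score), best first.
rankings = {
    "job_1": [("cv_a", 0.52), ("cv_b", 0.47), ("cv_c", 0.41)],
    "job_2": [("cv_a", 0.49), ("cv_d", 0.48), ("cv_b", 0.44)],
}

def mean_score_by_rank(rankings):
    """Average score at each rank position across all jobs."""
    depth = min(len(r) for r in rankings.values())
    return [mean(r[i][1] for r in rankings.values()) for i in range(depth)]

def rank_gaps(rankings):
    """Per job: score of the first candidate minus score of the last one."""
    return [r[0][1] - r[-1][1] for r in rankings.values()]

def recurrence(rankings):
    """Number of shortlists in which each candidate appears."""
    return Counter(cid for r in rankings.values() for cid, _ in r)

gaps = rank_gaps(rankings)
counts = recurrence(rankings)
print("mean score by rank:", mean_score_by_rank(rankings))
# Assumption: population standard deviation; the study may use the sample version.
print("gap mean / std:", mean(gaps), pstdev(gaps))
print("unique candidates:", len(counts))
print("recurrence median / max:", median(counts.values()), max(counts.values()))
```

Because the mean of the per-job gaps equals the difference between the mean score at the first and last rank, the two reported quantities can be cross-checked against each other.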

5. Interpretable, Requirement-First Ranking in Recruitment: The Role of HSCR

The research suggests an approach to job-candidate matching based on KPIs. The HSCR plays a key role in this approach as a requirement-based KPI. It offers a direct measure of how well the information given in a CV matches the explicit technical needs set forth in a job offer, which directly addresses the call for more transparent and manageable recruitment technologies (Nikolaou, 2021; Potočnik et al., 2024). Unlike semantic approaches, which estimate textual proximity, the HSCR expresses “technical fitness” as a simple numerical value, in contrast to embedding-based recruitment pipelines that rely heavily on distributional similarity (Ajjam & Al-Raweshidy, 2025). The HSCR is defined as follows. Let \(S_R\) be the set of skills required by a job offer and \(S_C\) the set of skills contained in a CV. Then \(HSCR = |S_R \cap S_C| / |S_R|\), where \(|S_R|\) denotes the number of skills required by the job offer. The HSCR ranges over [0, 1], where 0 means no coverage at all and 1 means full coverage. Because it is a ratio, it remains comparable across job offers that differ in the number of required skills. Within the broader multi-KPI framework described in the article, which incorporates semantic signals, coverage indicators, and contextual dimensions, HSCR introduces explicit requirement control that counterbalances a system that could otherwise be dominated by distributional similarity measures (Ajjam & Al-Raweshidy, 2025). While embedding-based approaches achieve high recall, HSCR ties the ranking to verifiable technical constraints, which supports transparency, governance, and explainability, all of which have become central concerns in research on AI-based hiring (Raghavan et al., 2020; Mehrabi et al., 2021). In terms of data, the article uses real-world collections of job offers and CVs in heterogeneous formats, such as PDF, DOCX, and TXT, a realistic setting that matches the conditions in which modern AI hiring systems are applied (Van Esch et al., 2019). A key component of the system is a controlled vocabulary of hard skills, which includes programming languages, frameworks, platforms, and methodological terms. It serves as a lightweight ontology and follows the structured approach to translating applicant work histories into a measurable space described in Sajjadiani et al. (2019).
By using a combination of direct substring matching and lightweight fuzzy matching, the system is able to identify a range of skills for each document, which can be seen as a key component of translating unstructured text into a measurable space, which can be contrasted with the use of dense vectors, which can be seen as a key component of embedding-based approaches (Ajjam & Al-Raweshidy, 2025). Once the skill sets are extracted, HSCR is computed for each job-candidate pair. Candidates are ranked based on their ability to cover the required skills for a given job, and a top-10 list is created for each job offer, thus fulfilling the requirement-first selection criteria. The output is recorded in a structured table with job IDs, candidate IDs, ranking, and HSCR score. A score close to 1 signifies that all required skills for a particular job have been met, while lower scores indicate that some skills have been met, along with specific technical gaps. Since the HSCR score for each job-candidate pair can be broken down into the skills met and those that have not been met, this measure is not only quantitative but also explainable, thus fulfilling the need for human-in-the-loop decision-making while also aligning with the need for interdisciplinary research that calls for explainable and accountable screening mechanisms (Raghavan et al., 2020; Liem et al., 2018). Table X presents the aggregate statistics for the HSCR-based matching experiment. The data consists of 308 job offers, each with a top-10 list, thus creating 3,080 job-CV matches. Out of the rankings, 133 unique candidates exist, thus demonstrating that the system does not reduce to a trivial solution with a small number of profiles dominating the recommendation space. 
In fact, globally, HSCR values have a mean of 0.81 with a standard deviation of 0.36, covering the entire range from 0 to 1, thus demonstrating that this measure successfully captures the different levels of technical coverage, from those who do not meet even the explicit requirements to those who meet all the required skills. This relatively high mean value is consistent with the ranking strategy, which gives priority to higher coverage ratios within the top-10 lists. The ranking structure also provides additional evidence of the consistency of the HSCR-based ordering, with the mean HSCR value of rank 1 being close to 0.84, gradually decreasing to 0.79 at rank 10. This continuous decline in HSCR value with rank position confirms that the ranking system generates a meaningful ordering of the candidates, as opposed to generating flat scores. The small differences between consecutive rank positions suggest that, technically, most of the candidates are equivalent with regard to skill coverage, which is consistent with the labor market reality, where several profiles are expected to satisfy most of the essential demands of a given job position (Potočnik et al., 2024). Finally, additional interesting results are provided by the analysis of ranking separation, with the average gap between the first and tenth candidate being close to 0.042, accompanied by a standard deviation of 0.09. The minimum value of the gap is 0, indicating that, for some job offers, the ranking system identifies the top-10 lists where the coverage ratio of the top-ranked candidate is equal to that of the last-ranked candidate, suggesting the existence of technically equivalent profiles. 
The maximum gap, equal to 0.4, corresponds to job offers where the coverage ratio of the top-ranked candidate is significantly higher than that of the lowest-ranked shortlisted candidate. The system can therefore identify clear winners as well as situations where several technically equivalent profiles need to be evaluated using additional decision-making criteria, thereby supporting structured decision-making processes in an organizational context (Nikolaou, 2021; Raghavan et al., 2020). Lastly, the candidate recurrence statistics are heavily skewed, with a long tail. The average candidate appears in the top-10 lists approximately 23 times, with a median of 8. Additionally, 25% of all candidates appear no more than 3 times, whereas a small set appears extremely frequently, with a maximum of 188. This suggests a few generally compatible, technically “central” candidates alongside a larger set of more specialized candidates. The quantitative evidence indicates that HSCR-based matching yields structured, interpretable, and technically meaningful rankings, and thus supports its use as a solid, requirement-oriented component of a transparent multi-KPI recruitment decision support process (Ajjam & Al-Raweshidy, 2025; Mehrabi et al., 2021). See Table 5.
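The HSCR computation described in this section, including the breakdown into covered and missing skills, can be sketched as follows. This is an illustrative sketch under stated assumptions rather than the implementation used in the study: the vocabulary, the function names, the boundary-guarded substring match (a stricter variant of plain substring matching), the `difflib`-based fuzzy step, its 0.9 threshold, and the convention of returning 0.0 when no required skills are extracted are all hypothetical choices.

```python
import re
from difflib import SequenceMatcher

# Hypothetical controlled vocabulary; the study's actual skill list is not reproduced here.
VOCABULARY = {"python", "sql", "tensorflow", "docker", "machine learning"}

def extract_skills(text, vocabulary=VOCABULARY, fuzzy_threshold=0.9):
    """Return the vocabulary skills found in `text`.

    A skill is found by a boundary-guarded substring match or, for
    single-word skills, by a fuzzy match against individual tokens.
    """
    normalized = re.sub(r"\s+", " ", text.lower())
    tokens = re.findall(r"[a-z0-9+#]+", normalized)
    found = set()
    for skill in vocabulary:
        pattern = r"(?<![a-z0-9])" + re.escape(skill) + r"(?![a-z0-9])"
        if re.search(pattern, normalized):
            found.add(skill)
        elif " " not in skill and any(
            SequenceMatcher(None, skill, tok).ratio() >= fuzzy_threshold
            for tok in tokens
        ):
            found.add(skill)
    return found

def hscr(required, candidate):
    """Return (HSCR, covered skills, missing skills) for one job-candidate pair."""
    covered = required & candidate
    missing = required - candidate
    # Assumption: a job with no extracted requirements is scored 0.0.
    score = len(covered) / len(required) if required else 0.0
    return score, covered, missing

job = "We need Python, SQL and Docker experience."
cv = "Data scientist with 5 years of Python and SQL; some TensorFlw projects."
score, covered, missing = hscr(extract_skills(job), extract_skills(cv))
print(round(score, 3), sorted(covered), sorted(missing))
```

Returning the covered and missing sets alongside the score is what makes each HSCR value explainable to a recruiter. How jobs with an empty requirement set are handled is a design decision that the sketch resolves arbitrarily.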


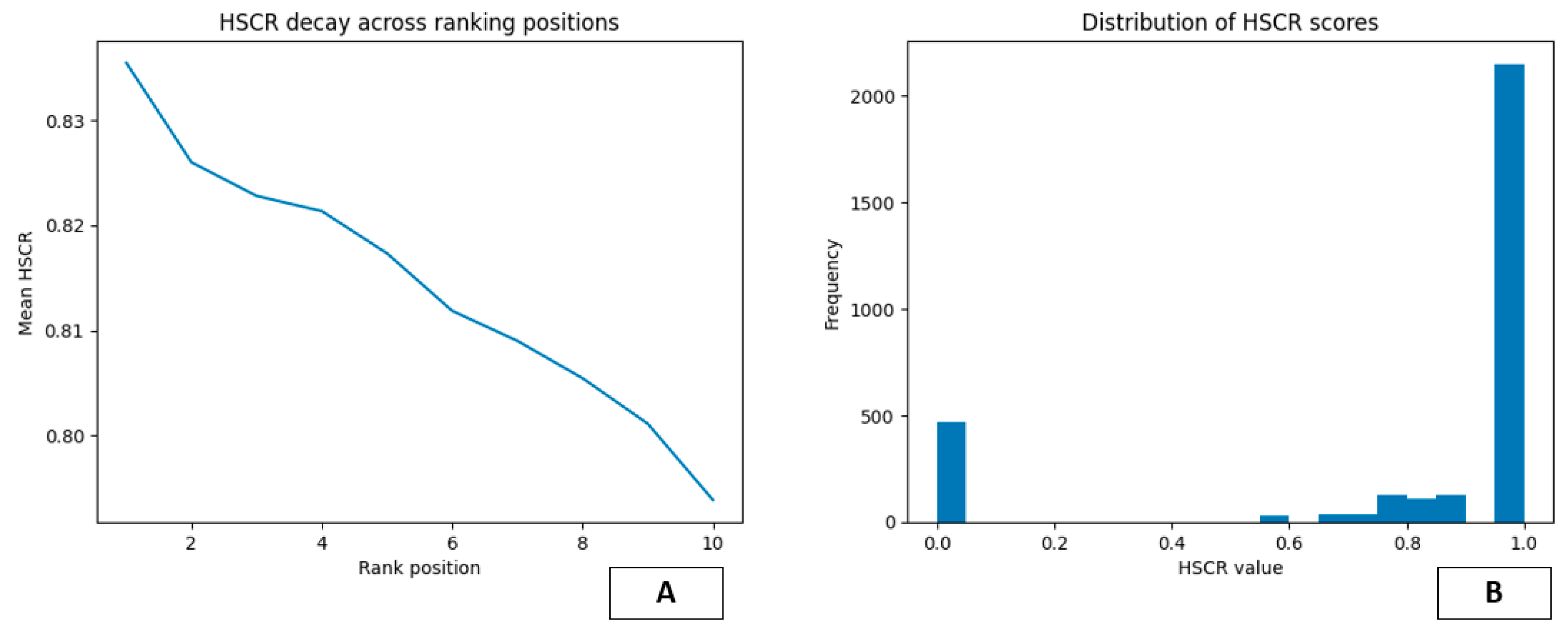

Figure 6 (A and B) presents a different view of the behavior of the HSCR-based ranking system, describing the characteristics of the resulting ranking lists as well as the overall distribution of skill-coverage scores for all job-candidate pairs within the top-10 lists. See Figure 6.

Figures A and B confirm the numerical data presented in Table 5 and help to clarify the HSCR measure from a practical perspective, in line with modern criteria for evaluating rankings produced by e-recruitment recommendation systems (Mashayekhi et al., 2024). Figure A shows the average HSCR as a function of ranking position. The curve decays monotonically from rank 1 to rank 10, with the maximum average HSCR at rank 1 gradually decreasing for lower ranks. This behavior supports the correctness of the implementation of the ranking model, since higher-ranked candidates cover a larger fraction of the required skills, as expected for a structured ranking model of job candidates (Kurek et al., 2024). The smoothness of the curve also indicates that the average HSCR values for different ranks vary only slightly, suggesting a large number of technically equivalent top-10 candidates. This is plausible in a professional recruitment context, where many candidates may meet the basic requirements (Potočnik et al., 2024). It is also desirable from a decision-support perspective, because the shortlist typically contains several near-equivalent alternatives rather than a single isolated best candidate. Figure B displays a histogram of the HSCR scores for all job-candidate pairs included in the top-10 lists. The distribution is strongly bimodal, with two peaks at scores of 1.0 and 0.0. The first peak indicates that the top candidates within the top-10 lists have full or near-full coverage of the required skills, which further validates the effectiveness of the proposed HSCR-based ranking system. The second peak indicates that some shortlisted candidates cover none of the explicitly required skills, which often happens for jobs whose descriptions define very sparse skill sets.
This is a known limitation with coverage- or extraction-based systems, which often depend on entity recognition and preprocessing steps, which, in turn, depend on the quality of entity recognition itself, as indicated by Ifakir et al. (2025). Figures A and B, therefore, indicate that the proposed coverage-based system, while being effective, also presents some limitations that make further selection criteria important, especially for cases with candidates having similar levels of coverage or with jobs having poorly defined descriptions, as indicated by Mashayekhi et al. (2024).

6. HSPS as a High-Recall Semantic Signal for Job–Candidate Matching

The present study proposes Hard Skill Proficiency Similarity (HSPS) as a supplementary measure to Hard Skill Coverage Ratio (HSCR). The proposed HSPS aims at capturing a specific aspect of technical fit that cannot be measured by the presence or absence of a list of skills, which is what HSCR does. While HSCR offers a specific, requirement-based measure of how well a set of required skills is represented within a CV, HSPS offers a continuous, quantitative perspective on technical fit, which is consistent with recent embedding-based models of job-candidate matching (Kurek et al., 2024; Li et al., 2025). More specifically, HSPS is proposed as a function of cosine similarity between dense representations of technical information contained within a set of job offers and a CV, which is derived by a multilingual pretrained transformer model, thereby allowing for semantically meaningful comparison across languages and writing styles, as recent semantic textual similarity models for recruitment propose (Zadykian et al., 2025; Sukri et al., 2024). From a computational perspective, we normalize the dense representations by L2 norm, which means that cosine similarity is computationally equivalent to a dot product, allowing for efficient computation of the entire job-CV similarity matrix by means of a matrix multiplication, which is essential for scalability to datasets containing hundreds of job postings and CVs, as recent e-recruitment models propose (Mashayekhi et al., 2024). In the experiment that is reported, the system processes 308 job offers and produces 3,080 job-candidate associations by choosing the top 10 candidates for each job. This is the scale at which the HSPS can be applied realistically, as shown in the experiment, aligning with the application of the deep learning recruitment pipeline (Li et al., 2025; Frazzetto et al., 2025). The quantitative distribution of the HSPS scores offers valuable insights into the performance of the HSPS metric. 
The scores range globally from a minimum of 0.164 to a maximum of 0.734, with an average of 0.528 and a standard deviation of 0.075. This reflects a moderate-to-high level of semantic similarity between the shortlisted candidates. The range of the scores reflects the ability of the HSPS to distinguish between poor and good technical alignments of the candidates, a characteristic that is common in semantic ranking using embeddings (Kurek et al., 2024). Lower scores indicate that the candidate’s technical narrative is only loosely related to the technical aspect of the job posting, while higher scores indicate substantial overlap between the domains, tools, and conceptual stacks used. The lack of scores closer to 1.0 is also expected because the job description and the candidate’s CV are unlikely to match entirely (Sukri et al., 2024). The ranking of the HSPS scores also supports the internal consistency of the HSPS-based candidate matching process. The mean HSPS at the first rank is 0.578, while the scores decrease monotonically to 0.497 at the 10th rank. This indicates that the HSPS consistently ranks the semantically more aligned candidates at the top of the ranked candidate pool for each job posting, a characteristic that is common in the transformer-based recruitment pipeline (Li et al., 2025). Although the slope of the HSPS scores is declining as the ranks decrease from 1 to 10, the slope is not steeply declining. This indicates that semantically similar candidates are more common at the top of the ranked pool of candidates for the job posting, aligning with the evaluation of the recruitment recommendation system (Mashayekhi et al., 2024). Additional evidence is provided from the analysis of ranking separation, in which it is found that on average, there is a gap of approximately 0.081 between the first-ranked candidate and the tenth-ranked candidate, with a standard deviation of 0.039. 
Furthermore, it is found that there is a minimum gap of approximately 0.020, in which all the top-10 candidates are semantically similar, forming tight clusters of candidate profiles. On the other hand, it is found that there is a maximum gap of approximately 0.293, in which the top-ranked candidate is significantly more aligned with the job requirements compared to all the remaining candidates in the top-10 list, thus indicating that HSPS can both identify strong leaders and reveal scenarios in which all candidates in the top-10 list are almost equivalent, thus warranting further evaluation with additional criteria, including components related to extraction or explainability (Ifakir et al., 2025; Zadykian et al., 2025). The recurrence statistics of the candidates provide further insight into how the semantic model distributes the matches among the candidates. The average recurrence count among the 208 distinct candidates appearing in the top-10 lists is 14.8, with a median recurrence count of 5. The recurrence count at the 25th percentile is only 2. This again suggests a highly skewed distribution where a small set of broadly relevant candidates are seen to recur frequently. The maximum recurrence count of 122 further supports the presence of a small set of “hub” profiles semantically close to a large range of technical roles. This long-tail distribution is expected in embedding-based retrieval systems. The above statistics support our view of HSPS as a high-recall meaning-based filtering system rather than a constraint-based one (Kurek et al., 2024; Frazzetto et al., 2025). The quantitative statistics support our view of HSPS as an effective ranking criterion. The numerical properties of the HSPS metric are well-structured score distributions with coherent rankings showing smooth decay across ranks. The HSPS also provides meaningful differentiation among candidates in both clustered and polarized scenarios. 
The numerical properties of HSPS further support our view of HSPS as an effective measure of technical domain proximity with sufficient variability among jobs and candidates. The HSPS acts as a robust semantic component of a multi-KPI-based data-driven recruitment decision support system (Mashayekhi et al., 2024; Li et al., 2025). See Table 6.
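The computational step described for HSPS (L2 normalization, a single matrix product for the full job-CV similarity matrix, and a top-10 selection per job) can be sketched with NumPy as follows. The toy vectors stand in for sentence embeddings, and the function name `top_k_by_cosine` is hypothetical; the sketch also assumes that no embedding is the zero vector, since normalization would otherwise divide by zero.

```python
import numpy as np

def top_k_by_cosine(job_vecs, cv_vecs, k=10):
    """Return (indices, scores) of the k most similar CVs for each job."""
    # L2-normalize rows so that a dot product equals cosine similarity.
    jobs = job_vecs / np.linalg.norm(job_vecs, axis=1, keepdims=True)
    cvs = cv_vecs / np.linalg.norm(cv_vecs, axis=1, keepdims=True)
    sims = jobs @ cvs.T  # full similarity matrix, shape (n_jobs, n_cvs)
    k = min(k, sims.shape[1])
    order = np.argsort(-sims, axis=1)[:, :k]  # best match first
    return order, np.take_along_axis(sims, order, axis=1)

# Toy 2-D vectors standing in for embeddings (hypothetical data).
job_vecs = np.array([[1.0, 0.0], [0.0, 2.0]])
cv_vecs = np.array([[3.0, 0.0], [1.0, 1.0], [0.0, 0.5]])
idx, scores = top_k_by_cosine(job_vecs, cv_vecs, k=2)
print(idx)
print(scores)
```

For a few hundred jobs and CVs the full matrix fits easily in memory; for much larger collections, an approximate nearest-neighbor index would be a common alternative, although the text does not state that one is used.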

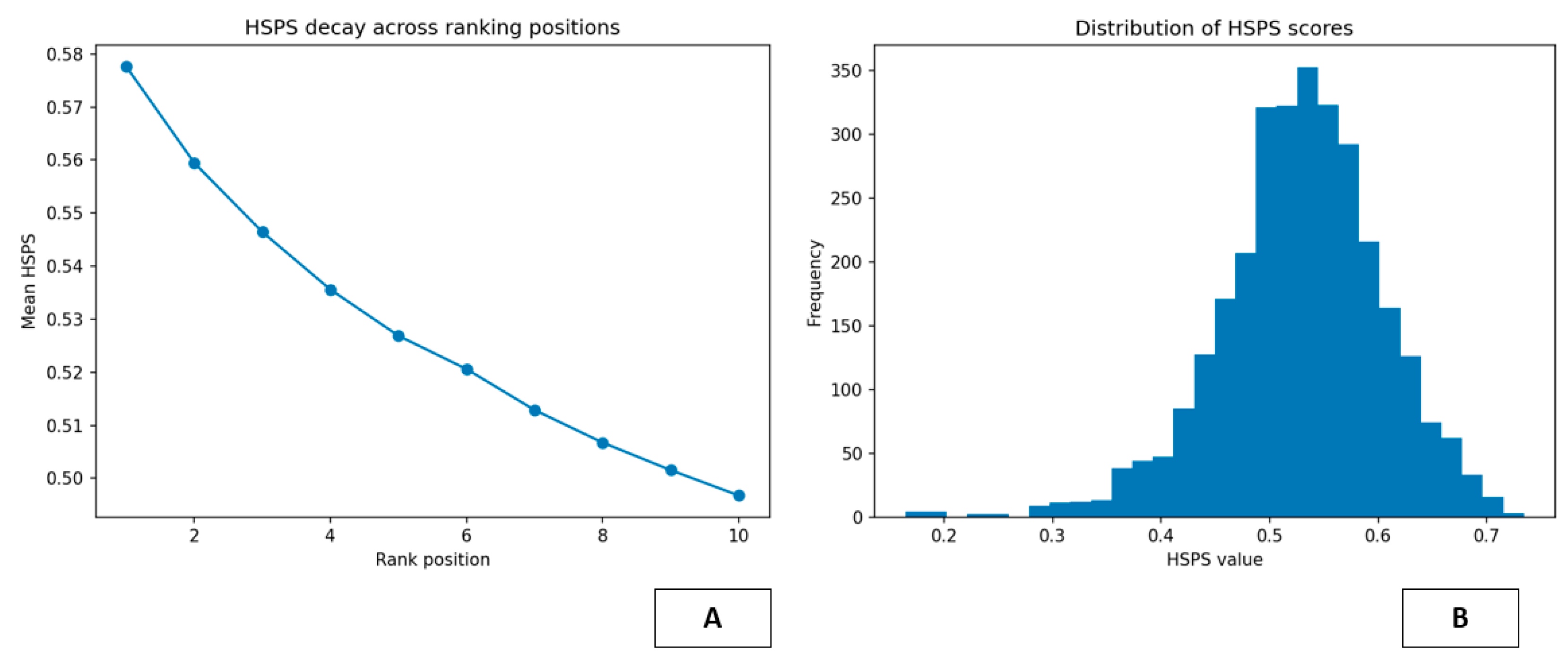

Figure 7 offers an alternative view of the behavior of the Hard Skill Proficiency Similarity (HSPS) key performance indicator used in the candidate ranking process.

Figure A shows the plot of the mean HSPS value as a function of the candidate’s position in the ranking process. The HSPS value monotonically decreases from the highest position down to the tenth position. This means that the candidates placed at the top of the ranking tend to have a higher semantic similarity to the content of the technical offer, while the candidates placed at the bottom of the ranking tend to have a reduced semantic similarity. This behavior is consistent with the internal consistency of the ranking process. Moreover, the behavior of the HSPS value is consistent with the evaluation behavior of the state-of-the-art e-recruitment recommendation systems (Mashayekhi et al., 2024). The behavior of the HSPS value is not random; instead, the algorithm defines the ranking along a continuous range of technical proximity. This behavior has also been observed in zero-shot or embedding-based job matching algorithms (Kurek et al., 2024). The smooth slope of the curve also implies that the top candidates tend to be technically comparable. This behavior has also been observed in the recruitment algorithms based on the transformer architecture, where semantic similarity plays the role of a graded measure of the ranking (Li et al., 2025). The behavior of the HSPS value from the point of view of the decision-making process is also satisfactory because the algorithm does not propose a single winner; instead, it proposes a set of top candidates that are technically proximal, thus allowing the decision-making process to play its role in the final evaluation of the candidates. This behavior is also consistent with the paradigm of the explainable semantic matching (Zadykian et al., 2025). Figure B illustrates the range of HSPS score value distribution among all job-candidate pairs contained within the top-10 lists. 
The histogram shows an approximately bell-shaped distribution, with most scores concentrated in the mid-to-high similarity range and tapering off toward higher values. This supports the assertion that HSPS measures a continuum of technical similarity and does not fall into the binary ‘good’ and ‘bad’ categories that might be expected from legacy recruitment systems. The clustering of scores within a specific region of the score axis supports the assumption that most shortlisted profiles share similar domain knowledge, tooling, and project descriptions, even if they are not identical. This is similar to what is observed in large-scale semantic ranking systems (Kurek et al., 2024). The left-hand side of the histogram shows that some candidates, although included in the top-10 lists, have lower similarity to the job’s technical focus. This is more common for job descriptions that are less detailed. Figures A and B collectively show that HSPS provides a soft but useful semantic signal: it yields a stable ordering of candidates, groups similar candidates together, and produces a natural, continuous score distribution. The latter property is particularly important for decision support systems, which must support human decision-making with a logical and understandable listing of candidates based on their semantic technical similarity (Zadykian et al., 2025; Mashayekhi et al., 2024).

7. From Skill Coverage to Skill Gaps: Introducing SGI as a Complement to HSCR

The study also introduces a new concept, Skill Gap Index (SGI), which is a natural and necessary extension of HSCR. The shift in focus changes from "how well an applicant already meets the defined requirements" to "how much still needs to be addressed with respect to the clearly defined criteria outlined in a job offer." The quantification of what is still missing is a direct result of HSCR, and SGI is defined as one minus HSCR. Therefore, SGI also inherits the positive numerical properties of HSCR, including that its range will be between 0 and 1, that comparisons across different job offers with different numbers of requirements will be possible, and that it will still be easy to interpret. An SGI of 0 means that an applicant is fully meeting the requirements outlined, while an SGI close to 1 means that there is a growing gap between the applicant's skills and the required skills. This reformulation of HSCR into SGI also has important implications because, with this approach, the skills mismatch is quantified and can be related to training initiatives that aim at reducing this mismatch. This approach is also related to other numerical approaches to quantify skills shortages and mismatch in the labor market (Bachmann et al., 2020; Chinn et al., 2020). Instead of applying a binary suitable/unsuitable test criterion on an applicant's profile, recruiters might now apply SGI to quantify how dissimilar an applicant's profile is with respect to a desired profile. The quantification approach is based on a dataset containing 308 job offers, with a corresponding top-10 shortlist, resulting in a total number of 3,080 job–CV pairs and 157 distinct candidates. This setting represents a realistic medium-scale recruitment scenario and is comparable with datasets that have been used for evaluations of state-of-the-art e-recruitment recommendation systems (Mashayekhi et al., 2024; Li et al., 2025). 
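The relationship between SGI and HSCR can be written out explicitly. Using the sets \(S_R\) and \(S_C\) from the HSCR definition, and assuming \(|S_R| > 0\), the following short derivation shows why SGI counts the share of missing requirements and why the two indicators have means that sum to one and identical variances over any set of job-candidate pairs:

\[
SGI = 1 - HSCR = \frac{|S_R| - |S_R \cap S_C|}{|S_R|} = \frac{|S_R \setminus S_C|}{|S_R|},
\qquad
\mathbb{E}[SGI] = 1 - \mathbb{E}[HSCR],
\qquad
\operatorname{Var}(SGI) = \operatorname{Var}(HSCR).
\]

As a hypothetical example, a job offer with six required skills of which a CV covers four gives \(HSCR = 4/6 \approx 0.667\) and \(SGI = 2/6 \approx 0.333\), that is, two skills remain to be acquired.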
From a global statistical point of view, the results indicate that HSCR has a mean of 0.675 and a standard deviation of 0.408. The identification of skills used in the evaluation of HSCR follows contemporary methods of skill identification from job offers and CVs (Khaouja et al., 2021; Ifakir et al., 2025; Frazzetto et al., 2025). SGI, as the complement of HSCR, mirrors this distribution, with a mean of 0.325 and the same standard deviation of 0.408. The results cover the entire range from 0 to 1, and these extremal values indicate that the measure does not collapse to a small range of scores: some matches have an SGI close to 0 while others have an SGI close to 1. From an operational point of view, these results indicate that, on average, candidates in the top-10 shortlists lack roughly one-third of the explicitly required skills. SGI therefore not only indicates the suitability of candidates but also estimates the effort required to align a candidate with the job by means of training or re-skilling. The results resonate with the importance of skill-gap measurement at the organizational and policy levels (Bachmann et al., 2020; Chinn et al., 2020). The ranking structure further supports the validity of the HSCR ordering, with the mean HSCR for rank 1 being approximately 0.724, which gradually decreases to approximately 0.675 for rank 5. This gradual decrease supports the premise that the system places candidates with higher HSCR at the top of the list, which is in line with the evaluation framework of the recruitment recommendation system (Mashayekhi et al., 2024).
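The mirrored statistics reported above follow directly from the complement definition: subtracting every score from one flips the mean around 1 and leaves the spread unchanged. The short Python sketch below checks this on toy values; the scores are illustrative and are not the study's data, and the use of the population standard deviation is an assumption, since the text does not state which estimator was used.

```python
from statistics import mean, pstdev


def summarize(scores):
    """Return mean, population standard deviation, minimum and maximum."""
    return {
        "mean": mean(scores),
        "std": pstdev(scores),
        "min": min(scores),
        "max": max(scores),
    }


# Toy HSCR values (illustrative only, not taken from the dataset).
hscr_scores = [1.0, 1.0, 0.75, 0.5, 0.0]
sgi_scores = [1.0 - h for h in hscr_scores]

hscr_stats = summarize(hscr_scores)
sgi_stats = summarize(sgi_scores)

# The complement flips the mean around 1 and keeps the spread identical.
mean_is_complement = abs(sgi_stats["mean"] - (1.0 - hscr_stats["mean"])) < 1e-12
std_is_equal = abs(sgi_stats["std"] - hscr_stats["std"]) < 1e-12
```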
The relatively small slope also supports the premise that there are many technically equivalent candidates at the top of the list, which is in line with real-world labor markets where many candidates meet the majority of the core requirements of the job (Li et al., 2025). Further insight can be gained by examining the ranking separation: the average distance in HSCR between the first-ranked and the tenth-ranked candidate is approximately 0.094, with a standard deviation of approximately 0.144. The minimum distance of 0 corresponds to cases where the top-10 candidates are technically equivalent, with no difference in terms of covered skills, while the maximum distance of approximately 0.667 corresponds to cases where the first-ranked candidate covers substantially more of the required skills than the tenth-ranked one. The numerical evidence supports the premise that HSCR, in combination with SGI, provides a clear and quantitatively actionable representation of technical fit. SGI can support both strict selection strategies and training-oriented strategies, complementing the coverage-based and semantic representations in supporting the recruitment process, as outlined by Mashayekhi et al. (2024) and Khaouja et al. (2021). See Table 7.

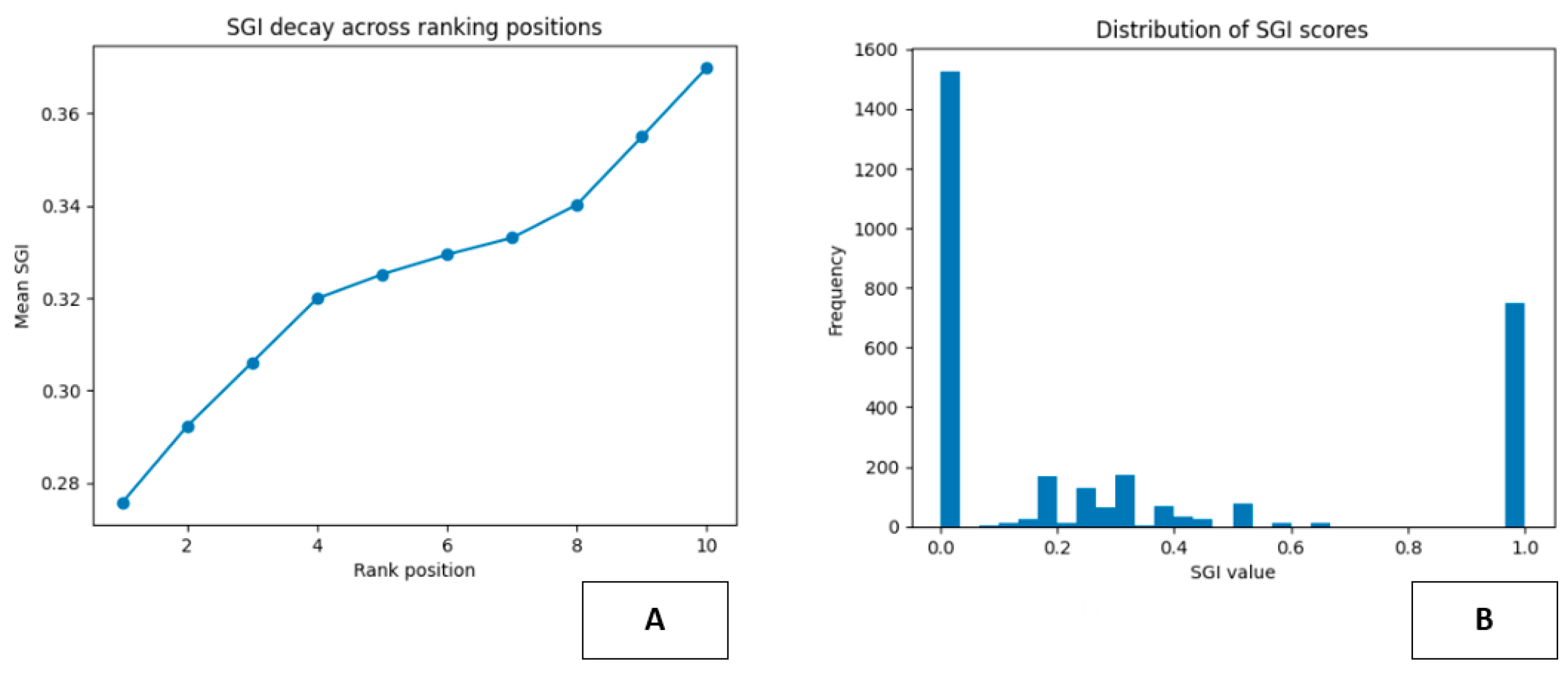
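The rank-level quantities discussed above (mean HSCR per ranking position and the distance between the first and last shortlisted candidate) can be sketched in Python as follows. The row layout `(job_id, rank, hscr)`, the function names, and the toy values are assumptions made for illustration; the example uses a top-3 shortlist for brevity, whereas the study uses top-10 lists.

```python
from collections import defaultdict
from statistics import mean


def mean_hscr_by_rank(rows):
    """Average HSCR at each ranking position across all job offers."""
    by_rank = defaultdict(list)
    for _job_id, rank, score in rows:
        by_rank[rank].append(score)
    return {rank: mean(scores) for rank, scores in sorted(by_rank.items())}


def rank_separation(rows, top=1, bottom=10):
    """Per-job HSCR distance between the top- and bottom-ranked candidate."""
    per_job = defaultdict(dict)
    for job_id, rank, score in rows:
        per_job[job_id][rank] = score
    return {
        job_id: ranks[top] - ranks[bottom]
        for job_id, ranks in per_job.items()
        if top in ranks and bottom in ranks
    }


# Toy data (hypothetical): two job offers, each with a top-3 shortlist.
rows = [
    ("job_a", 1, 1.0), ("job_a", 2, 0.75), ("job_a", 3, 0.5),
    ("job_b", 1, 0.5), ("job_b", 2, 0.5), ("job_b", 3, 0.5),
]
rank_means = mean_hscr_by_rank(rows)
separations = rank_separation(rows, top=1, bottom=3)
avg_separation = mean(separations.values())
```

In this toy case, `job_b` has a separation of 0, which corresponds to the situation described in the text where all shortlisted candidates are technically equivalent.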

Figure 8 provides complementary insights into the behavior of the Skill Gap Index (SGI) within the ranking produced by the HSCR-based matching pipeline.

Figure A reports the average SGI value as a function of the ranking position, while Figure B shows the distribution of SGI scores across all job–candidate pairs included in the top-10 shortlists. Together, these visualizations illustrate both the internal coherence of the ranking mechanism and the overall structure of skill gaps observed in the recommended matches, in line with evaluation practices discussed in contemporary e-recruitment recommendation systems (Mashayekhi et al., 2024). The curve in Figure A exhibits a clear and monotonic increase of mean SGI values from rank 1 to rank 10. This pattern is the expected mirror image of the HSCR decay: candidates placed at the top of the ranking are characterized by smaller skill gaps, whereas lower-ranked candidates progressively exhibit larger gaps with respect to the explicit technical requirements of the job offers. The smoothness of the curve suggests that the ranking is not driven by abrupt threshold effects, but rather reflects a gradual degradation of technical completeness as one moves down the shortlist. Similar gradient-based ranking behaviors have been observed in deep learning–based resume-job matching systems (Li et al., 2025). This behavior is particularly desirable in decision-support contexts, as it indicates that the system orders candidates along a meaningful continuum of residual training needs rather than producing unstable or noisy rankings. Figure B complements this view by showing the empirical distribution of SGI scores. The histogram reveals a strongly polarized structure, with a large concentration of values near zero and another prominent mass close to one, together with a smaller but non-negligible density of intermediate values. The peak near zero corresponds to candidates who fully, or almost fully, satisfy the explicitly detected skill requirements, and therefore exhibit negligible or very small gaps. 
Conversely, the peak near one represents cases in which candidates lack most of the required skills, despite being included in the top-10 lists due to the relative scarcity or ambiguity of requirements in some job descriptions. The identification and quantification of such gaps depend on structured skill extraction from job ads and CVs, as highlighted in recent surveys on skill identification (Khaouja et al., 2021) and NER-based recruitment optimization pipelines (Ifakir et al., 2025). The intermediate region reflects partial matches, where candidates cover a substantial subset of the required competencies but still require non-trivial upskilling. Taken together, these figures highlight the dual role of SGI in the proposed framework. On the one hand, its monotonic increase across ranks confirms that it provides a consistent ordering signal aligned with the notion of technical distance from the target role, consistent with structured evaluation approaches in e-recruitment systems (Mashayekhi et al., 2024). On the other hand, its bimodal and dispersed distribution underscores the heterogeneity of real-world matching scenarios, where both fully aligned and strongly misaligned profiles can coexist, reinforcing the need for combining SGI with semantic and contextual KPIs in a multi-criteria decision process (Li et al., 2025).

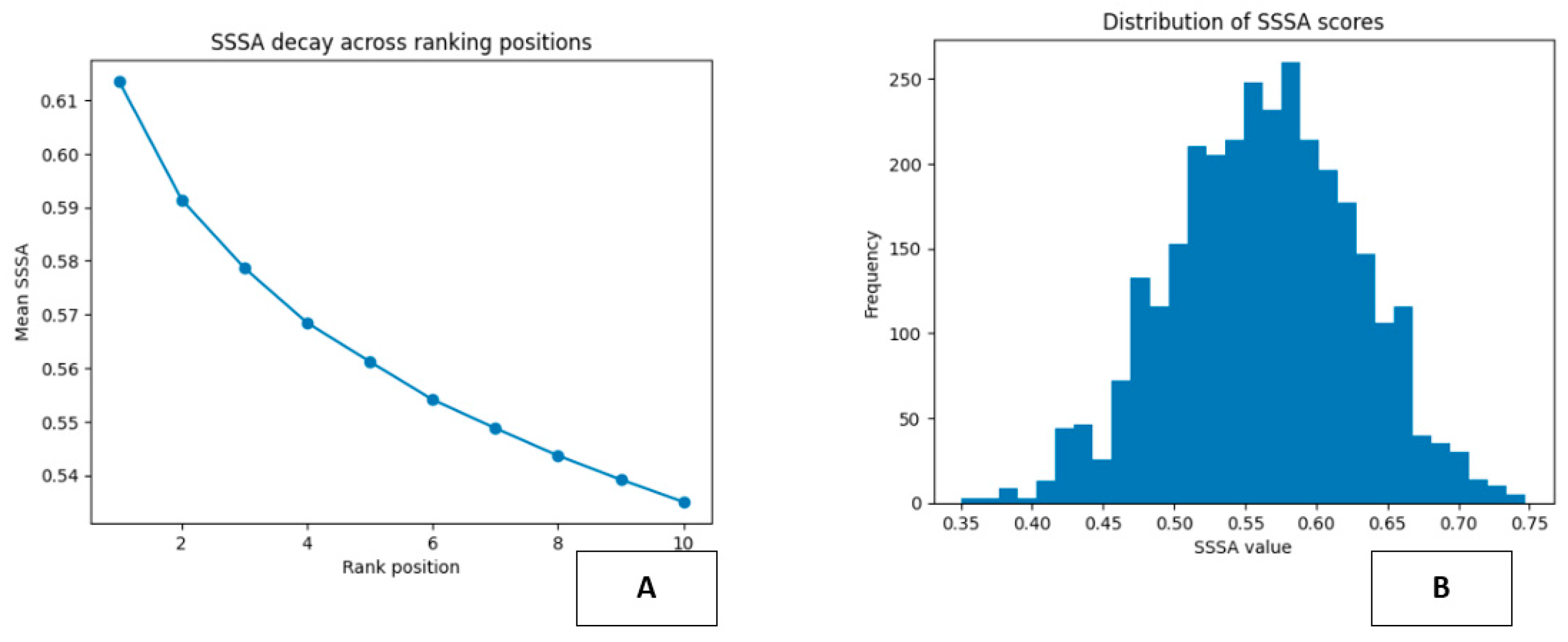
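The two properties read from the figure, a non-decreasing mean SGI across ranks and a polarized score distribution, can be checked numerically with a sketch such as the one below. The cut-offs `low=0.1` and `high=0.9` used to delimit the "near zero" and "near one" regions are hypothetical choices for illustration, as are the function names and toy values; the text does not specify thresholds.

```python
def sgi_region_shares(scores, low=0.1, high=0.9):
    """Share of SGI scores near zero, near one, and in between.

    The thresholds are illustrative assumptions, not values from the study.
    """
    if not scores:
        raise ValueError("scores must not be empty")
    if not low < high:
        raise ValueError("low must be smaller than high")
    n = len(scores)
    near_zero = sum(1 for s in scores if s <= low)
    near_one = sum(1 for s in scores if s >= high)
    return {
        "near_zero": near_zero / n,
        "intermediate": (n - near_zero - near_one) / n,
        "near_one": near_one / n,
    }


def is_non_decreasing(values):
    """True if each value is at least as large as the previous one."""
    return all(a <= b for a, b in zip(values, values[1:]))


# Toy SGI scores (hypothetical) with mass at both ends of the range.
scores = [0.0, 0.0, 0.0, 0.0, 0.25, 0.5, 1.0, 1.0, 1.0, 1.0]
shares = sgi_region_shares(scores)

# Toy mean SGI per rank (hypothetical), ordered from rank 1 downward.
monotone = is_non_decreasing([0.25, 0.3, 0.3, 0.4])
```

A large share in both outer regions together with a small intermediate share is one simple way to express the bimodal structure described above without fitting a distribution.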

8. SSSA as a Continuous Measure of Behavioral Compatibility in Job Matching