Submitted: 28 February 2026

Posted: 02 March 2026


Abstract

Keywords:

1. Introduction

2. Background and Related Work

2.1. In-the-Wild Mobile AR: Reliability, Interruptions, Recovery

2.2. Requirements Engineering for Socio-Technical Mobile Services

2.3. Traceability: Evidence-to-Requirement-to-Artefact

2.4. Reproducibility and Transfer Kits in Applied Systems

2.5. Domain Constraints (Heritage, Curriculum, Governance) as Constraints

3. Materials and Methods

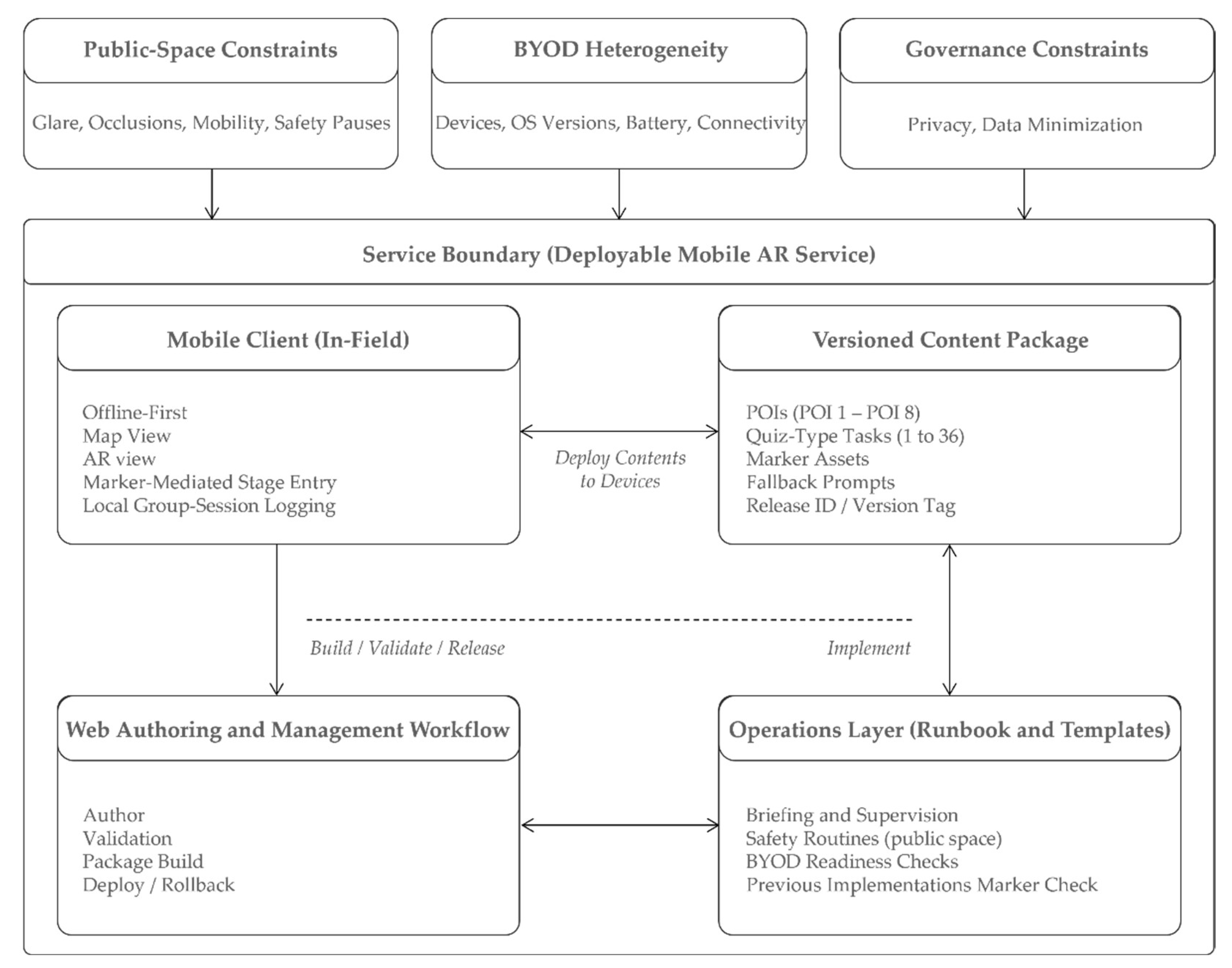

3.1. System Overview and Boundary of the Service

3.2. Architecture and Instrumentation (Client, Backend, Content Pipeline, Logging)

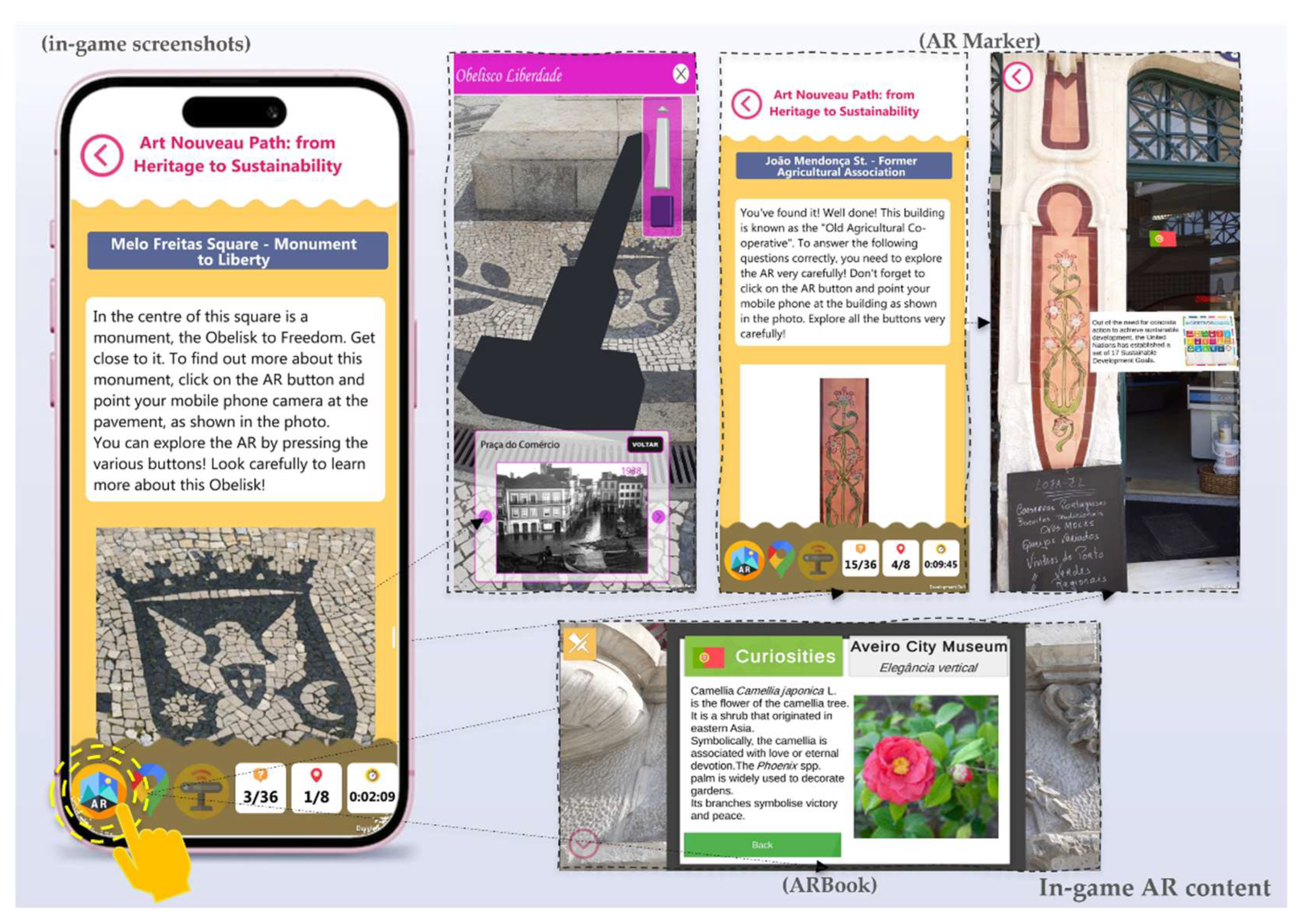

3.3. Task Model and POI Profiling Procedure (36 Tasks, 8 POIs)

3.4. Evidence Streams and Study Design

3.5. Determinant Taxonomy D1–D8 (Operational Definitions)

3.6. Qualitative Coding Protocol and Reliability

3.7. Quantitative Feasibility and Acceptability Descriptors from Logs and S2-POST

3.8. From Determinants to Implementation Requirements

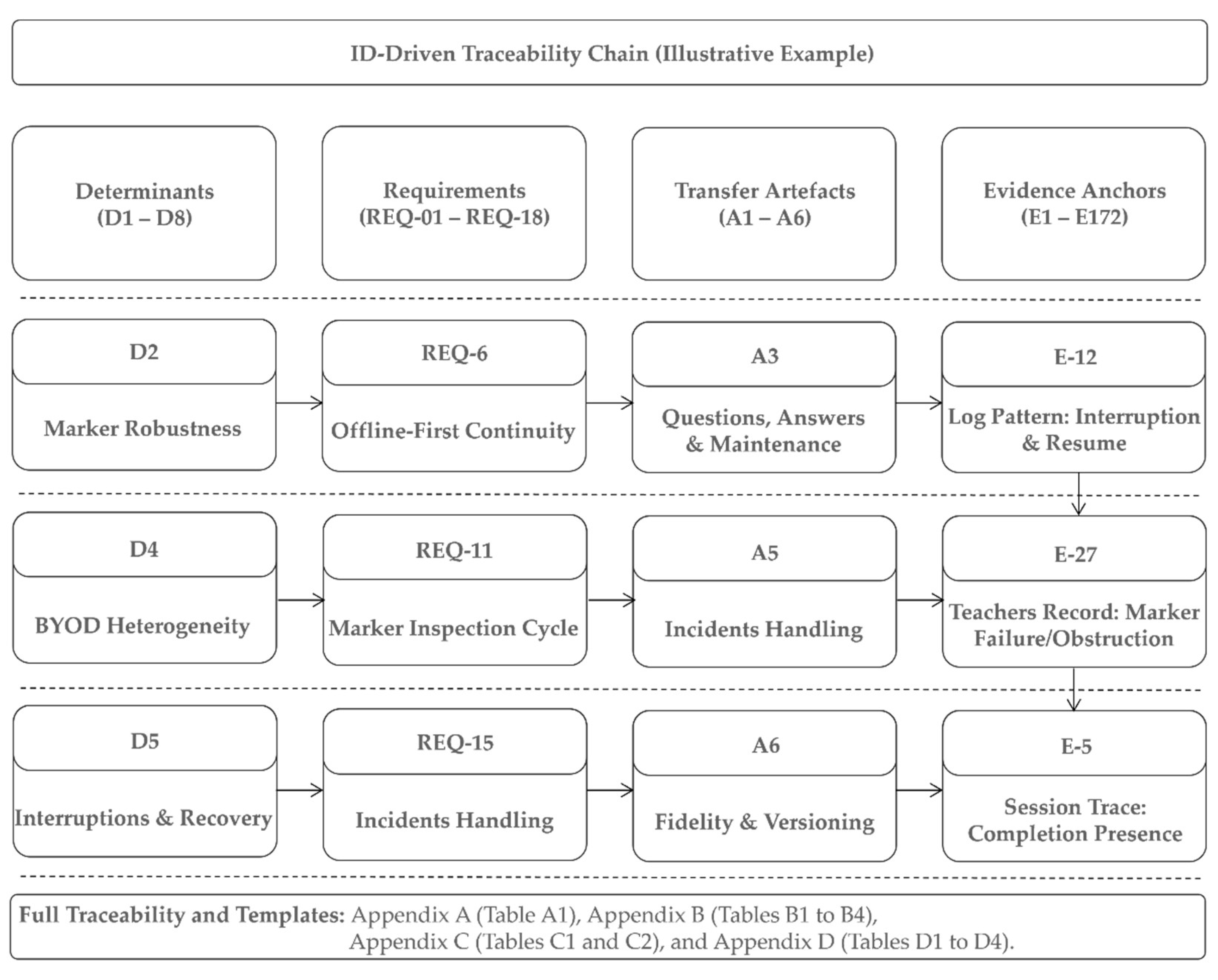

3.9. Traceability Model and Matrix Construction (Determinant-Requirement-Artefact-Evidence)

3.10. Ethics, Privacy-Aware Implementation, and Data Minimization

4. Results

4.1. Task and POI Profiling Results

4.2. Log-Derived Feasibility Envelope (N = 118 Group Sessions) and Post-Path Feasibility Checks

4.3. Teacher-Facing Implementation Signals and Determinant Concentrations (T1-VAL and T2-OBS; Teacher Records N = 54)

4.4. Derived Requirements Set (REQ-01 to REQ-18), Grouped by Determinant

4.5. Requirements-to-Artefact Traceability and Determinant-to-Transfer Mapping

4.6. Minimal Operations Stack and Transfer Kit Outputs

5. Discussion

5.1. Determinants as Non-Functional and Operability Drivers in City-Scale AR Services

5.2. Positioning Against Related Work in In-the-Wild AR, Requirements Engineering, and Traceability

5.3. Generalization Logic and Boundary Conditions

5.4. Practical Implications for Adoption, Replication, and Responsible Operations

6. Conclusions, Limitations, and Future Paths

6.1. Conclusions and Summary of Contributions (C1–C4)

6.2. Limitations

6.3. Future Paths

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| AR | Augmented Reality |

| BYOD | Bring Your Own Device |

| POI | Point of Interest |

| T1-VAL | Teachers’ Validation Questionnaire (Workshop) |

| T2-OBS | Teachers’ Observation Questionnaire |

| S2-POST | Students’ Post-Path Questionnaire |

| HCI | Human-Computer Interaction |

| OS | Operating System |

| ISO | International Organization for Standardization |

| ML | Mobile Learning |

| NFR | Non-Functional Requirement |

| MARG | Mobile Augmented Reality Game |

| C1–C4 | Contributions |

| RQ | Research Question |

| NIST | National Institute of Standards and Technology |

| ACM | Association for Computing Machinery |

| GDPR | General Data Protection Regulation |

| D1–D8 | Determinants |

| REQ-01–REQ-18 | Requirements |

| A1–A6 | Artefacts |

| E_ID | Evidence |

| E_LOG | Logs (Gameplay) |

| GPS | Global Positioning System |

| FAIR | Findable, Accessible, Interoperable, Reusable |

| GCQuest | GreenComp-Based Questionnaire |

| α | Alpha |

| OBS | Observation |

| KNOW | Knowledge |

| MDN | Median |

| M | Mean |

| IQR | Interquartile Range |

| SD | Standard Deviation |

| MU | Meaning Unit |

| FR | Functional Requirement |

| OP | Operational Requirement |

| DTLE | Digital Teaching and Learning Ecosystem |

| RACI | Responsible, Accountable, Consulted, Informed |

Appendix A. Full Determinant-to-Transfer Traceability Matrix

| Determinant | Evidence (absolute) | A1 Preparation and legibility | A2 Curriculum framing | A3 Path orchestration and safety | A4 Technical robustness and fallback | A5 Consolidation and follow-up | A6 Operations runbook pack (including differentiation and accessibility) |
|---|---|---|---|---|---|---|---|
| D1 Curriculum alignment and framing | MU n = 24; Teachers’ records n = 21/54 | n/a | Curriculum mapping matrix; facilitation and framing script | n/a | n/a | n/a | n/a |
| D2 Marker robustness and recovery | MU n = 25; Teachers’ records n = 22/54 | n/a | n/a | n/a | Marker deployment guidance; recovery steps; alternative triggers | n/a | n/a |
| D3 Usability and design clarity | MU n = 29; Teachers’ records n = 25/54 | Teacher-facing quick start; onboarding notes; in-app legibility supports | n/a | n/a | n/a | n/a | n/a |
| D4 Post-activity consolidation and follow-up | MU n = 14; Teachers’ records n = 13/54 | n/a | n/a | n/a | n/a | Structured debrief template; classroom follow-up prompts | n/a |
| D5 Differentiation and accessibility | MU n = 14; Teachers’ records n = 12/54 | n/a | n/a | n/a | n/a | n/a | Age variants; accessibility notes |
| D6 Safety and supervision | MU n = 8; Teachers’ records n = 7/54 | n/a | n/a | Safety briefing; supervision cues; regroup scripts | n/a | n/a | n/a |
| D7 Collaboration and accountability routines | MU n = 7; Teachers’ records n = 6/54 | n/a | n/a | Role cards; device-sharing protocol | n/a | n/a | n/a |
| D8 BYOD heterogeneity and low-tech fallback | MU n = 10; Teachers’ records n = 9/54 | n/a | n/a | n/a | Compatibility checks; device prep; low-tech fallback | n/a | n/a |
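
The evidence counts in the matrix can be read as shares of the 54 teacher records. The following is a minimal Python sketch of that arithmetic; the dictionary layout and the names `EVIDENCE`, `record_share`, and `ranked` are illustrative assumptions rather than part of the study tooling, and only the counts are taken from the matrix.

```python
# Counts copied from the Appendix A matrix: (meaning units, teacher records).
# The data layout and helper names are hypothetical, for illustration only.
TEACHER_RECORDS_TOTAL = 54

EVIDENCE = {
    "D1": (24, 21),
    "D2": (25, 22),
    "D3": (29, 25),
    "D4": (14, 13),
    "D5": (14, 12),
    "D6": (8, 7),
    "D7": (7, 6),
    "D8": (10, 9),
}


def record_share(determinant: str) -> float:
    """Percentage of the 54 teacher records coded with the determinant."""
    _, records = EVIDENCE[determinant]
    return round(100 * records / TEACHER_RECORDS_TOTAL, 2)


# Determinants ordered from most to least meaning units (ties keep table order).
ranked = sorted(EVIDENCE, key=lambda d: EVIDENCE[d][0], reverse=True)

if __name__ == "__main__":
    for d in ranked:
        mu, records = EVIDENCE[d]
        print(f"{d}: MU n = {mu}; records {records}/{TEACHER_RECORDS_TOTAL} "
              f"({record_share(d)}%)")
```

Because a single teacher record can be coded with more than one determinant, the per-determinant record counts are not expected to sum to 54.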

Appendix B. Operational Templates and Runbook (Transfer-Ready)

| Checklist area | Purpose | Operational cue |
|---|---|---|
| Pacing buffers | Allocate time buffers for interruptions | Use a buffer of 5 to 10 minutes for crossings and regrouping |
| Technical contingencies | Ensure recovery paths if AR or connectivity fails | Provide fallback prompts and non-AR progression cues |
| Group management | Maintain visibility and accountability in public space | Use headcounts and role rotation at each POI |
| Start and end routines | Reduce friction at launch and closure | Standardize a start script and debrief closure questions |

| Artefact | Name | Scope (what it contains) |

|---|---|---|

| A1 | Preparation and legibility pack | Quick-start; onboarding notes; outdoor legibility checklist |

| A2 | Curriculum framing pack | Curriculum-to-task mapping matrix; facilitation and framing script |

| A3 | Path orchestration and safety pack | Safety briefing; crossing and regroup routines; role cards; device-sharing guidance |

| A4 | Technical robustness and fallback pack | Marker production and placement guidance; recovery protocol; alternative triggers; BYOD checklist; offline or no-phone alternative |

| A5 | Consolidation and follow-up pack | Debrief template; classroom follow-up prompts |

| A6 | Operations runbook pack | Roles, routines, maintenance cycle, incident response, data handling and minimization guidance; adaptation variants by age and ability; accessibility notes |

| Activity | Instructional lead | Technical steward | Content maintainer |

|---|---|---|---|

| Curriculum framing and teacher briefing | R/A | C | C |

| BYOD readiness and device triage | C | R/A | C |

| Marker deployment and inspection | C | C | R/A |

| In-session recovery and fallback activation | R | C | C |

| Post-session log retrieval and upload | R | C | C |

| Incident logging and corrective actions | R | C | A |

| Periodic marker maintenance cycle | C | C | R/A |

| Routine | Objective | Primary role(s) | Inputs | Outputs | Frequency |
|---|---|---|---|---|---|
| Pre-Session Preparation | Reduce first-use friction; manage BYOD heterogeneity | Instructional lead; Technical steward | A1; A4 | Devices checked; markers inspected; groups formed | Per session |
| Session Launch | Standardize onboarding and safety briefing | Instructional lead | A2; A3 | Roles assigned; pacing buffers announced; session started | Per session |
| POI Enactment Loop | Maintain pacing and accountability at POIs | Instructional lead | A3; app tasks | POI blocks completed; regroup checks executed | Per POI |
| Interruption Recovery | Sustain continuity under recognition or connectivity failure | Instructional lead; Technical steward (if present) | A4 | Session continues via recovery or fallback | As needed |
| Path Closure and Debrief | Consolidate and close activity | Instructional lead | A5 | Debrief captured; follow-up prompts assigned | Per session |
| Post-session log transfer | Preserve feasibility evidence; support audit | Instructional lead; Content maintainer | A6 | Logs uploaded; integrity checks performed | Per session |
| Marker maintenance cycle | Sustain marker health in city environment | Content maintainer | A4; A6 | Markers replaced or re-mounted; issues logged | Weekly or monthly |

Appendix C. Logging Schema and Redacted Example Record

| Field | Type | Description | Notes (privacy/usage in this paper) |

|---|---|---|---|

| session_id | string | Anonymous group-session identifier | No direct personal identifiers; used to aggregate events per session. |

| timestamp | datetime | Event timestamp (device-local or normalized) | Resolution sufficient for duration envelopes; do not infer individual behavior. |

| group_size | integer | Approximate number of students in the group | Optional; if unavailable, store null. |

| poi_id | string | Point-of-interest identifier (P1 to P8) | Maps events to POI-level completion traces. |

| task_id | string | Task identifier within POI (T01 to T36) | Supports task-level completion presence only. |

| stage_id | string | Stage identifier in the path | Used for progression and resumption analysis. |

| access_mode | categorical | Stage entry trigger used (e.g., ARBook, AR marker) | Represents marker-mediated access modality. |

| event_type | categorical | Event type (e.g., stage_start, item_presented, response_submitted, stage_end, interruption, resume) | Recommended to extend with explicit recovery events in future work. |

| response_present | boolean | Whether a response was recorded for the presented item | Used as feasibility indicator; correctness not used in this paper. |

| duration_s | number | Elapsed time for task or stage (seconds) | Used to compute session duration envelopes only. |

| device_os | categorical | Client OS (Android/iOS) and version | Used to characterize BYOD heterogeneity. |

| app_version | string | Mobile client version | Supports replication and version control. |

| Field | Value (redacted example) |

|---|---|

| session_id | S_2025_05_17_013 |

| timestamp | 2025-05-17T10:42:31Z |

| group_size | 4 |

| poi_id | P3 |

| task_id | T14 |

| stage_id | S3 |

| access_mode | AR marker |

| event_type | response_submitted |

| response_present | TRUE |

| duration_s | 47 |

| device_os | Android 14 |

| app_version | 1.3 |
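
As an illustration of how the schema could be enforced at ingestion, the following minimal Python sketch maps the redacted example onto a typed record. The class name `LogEvent`, the function `parse_record`, and the string-typed input dictionary are assumptions made for this sketch; the paper does not specify the parsing code used by the EduCITY pipeline. Only the field names, the P1 to P8 and T01 to T36 identifier ranges, and the example values come from the tables above.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional

# Identifier ranges stated in the schema table (P1 to P8, T01 to T36).
VALID_POIS = {f"P{i}" for i in range(1, 9)}
VALID_TASKS = {f"T{i:02d}" for i in range(1, 37)}


@dataclass(frozen=True)
class LogEvent:
    """Hypothetical typed view of one log row; field names follow the schema."""
    session_id: str
    timestamp: datetime
    group_size: Optional[int]  # optional field: stored as None when unavailable
    poi_id: str
    task_id: str
    stage_id: str
    access_mode: str
    event_type: str
    response_present: bool
    duration_s: float
    device_os: str
    app_version: str


def parse_record(raw: dict) -> LogEvent:
    """Validate identifiers and convert string fields to typed values."""
    if raw["poi_id"] not in VALID_POIS:
        raise ValueError(f"unknown poi_id: {raw['poi_id']}")
    if raw["task_id"] not in VALID_TASKS:
        raise ValueError(f"unknown task_id: {raw['task_id']}")
    size = raw.get("group_size")
    return LogEvent(
        session_id=raw["session_id"],
        # Replace the trailing 'Z' so older Python versions accept the offset.
        timestamp=datetime.fromisoformat(raw["timestamp"].replace("Z", "+00:00")),
        group_size=int(size) if size not in (None, "") else None,
        poi_id=raw["poi_id"],
        task_id=raw["task_id"],
        stage_id=raw["stage_id"],
        access_mode=raw["access_mode"],
        event_type=raw["event_type"],
        response_present=str(raw["response_present"]).upper() == "TRUE",
        duration_s=float(raw["duration_s"]),
        device_os=raw["device_os"],
        app_version=raw["app_version"],
    )


# Values copied from the redacted example record above.
EXAMPLE = {
    "session_id": "S_2025_05_17_013",
    "timestamp": "2025-05-17T10:42:31Z",
    "group_size": "4",
    "poi_id": "P3",
    "task_id": "T14",
    "stage_id": "S3",
    "access_mode": "AR marker",
    "event_type": "response_submitted",
    "response_present": "TRUE",
    "duration_s": "47",
    "device_os": "Android 14",
    "app_version": "1.3",
}

if __name__ == "__main__":
    print(parse_record(EXAMPLE))
```

Keeping `group_size` optional mirrors the schema note that the field may be null, and the record carries no field that identifies an individual student.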

Appendix D

| E_ID | Teacher | Subject |

|---|---|---|

| E-T1VAL-R001 | Teacher_1 | Arts |

| E-T1VAL-R002 | Teacher_2 | Geography |

| E-T1VAL-R003 | Teacher_3 | Multidisciplinary |

| E-T1VAL-R004 | Teacher_4 | Mathematics |

| E-T1VAL-R005 | Teacher_5 | Geography |

| E-T1VAL-R006 | Teacher_6 | Science |

| E-T1VAL-R007 | Teacher_7 | Mathematics |

| E-T1VAL-R008 | Teacher_8 | Civic Education |

| E-T1VAL-R009 | Teacher_9 | Multidisciplinary |

| E-T1VAL-R010 | Teacher_10 | Arts |

| E-T1VAL-R011 | Teacher_11 | Civic Education |

| E-T1VAL-R012 | Teacher_12 | Mathematics |

| E-T1VAL-R013 | Teacher_13 | History |

| E-T1VAL-R014 | Teacher_14 | History |

| E-T1VAL-R015 | Teacher_15 | Arts |

| E-T1VAL-R016 | Teacher_16 | Arts |

| E-T1VAL-R017 | Teacher_17 | Civic Education |

| E-T1VAL-R018 | Teacher_18 | Science |

| E-T1VAL-R019 | Teacher_19 | Civic Education |

| E-T1VAL-R020 | Teacher_20 | Multidisciplinary |

| E-T1VAL-R021 | Teacher_21 | Civic Education |

| E-T1VAL-R022 | Teacher_22 | Arts |

| E-T1VAL-R023 | Teacher_23 | Geography |

| E-T1VAL-R024 | Teacher_24 | Multidisciplinary |

| E-T1VAL-R025 | Teacher_25 | Geography |

| E-T1VAL-R026 | Teacher_26 | History |

| E-T1VAL-R027 | Teacher_27 | Geography |

| E-T1VAL-R028 | Teacher_28 | Civic Education |

| E-T1VAL-R029 | Teacher_29 | Mathematics |

| E-T1VAL-R030 | Teacher_30 | Mathematics |

| E_ID | Teacher |

|---|---|

| E-T2OBS-R001 | Teacher_1 |

| E-T2OBS-R002 | Teacher_2 |

| … | … |

| E-T2OBS-R023 | Teacher_23 |

| E-T2OBS-R024 | Teacher_24 |

| E_ID | SheetRow |

|---|---|

| E-LOG-R001 | 1 |

| E-LOG-R002 | 2 |

| E-LOG-R003 | 3 |

| … | … |

| E-LOG-R117 | 117 |

| E-LOG-R118 | 118 |

| REQ | Evidence anchors (E) | D | A |

|---|---|---|---|

| REQ-01 | T1-VAL: E-T1VAL-R001 to E-T1VAL-R030; T2-OBS: E-T2OBS-R001 to E-T2OBS-R024 | D1 | A2 |

| REQ-02 | T1-VAL: E-T1VAL-R001 to E-T1VAL-R030; T2-OBS: E-T2OBS-R001 to E-T2OBS-R024 | D1 | A2 |

| REQ-03 | T1-VAL: E-T1VAL-R001 to E-T1VAL-R030; T2-OBS: E-T2OBS-R001 to E-T2OBS-R024 | D3 | A1 |

| REQ-04 | T1-VAL: E-T1VAL-R001 to E-T1VAL-R030; T2-OBS: E-T2OBS-R001 to E-T2OBS-R024 | D3 | A1 |

| REQ-05 | T1-VAL: E-T1VAL-R001 to E-T1VAL-R030; T2-OBS: E-T2OBS-R001 to E-T2OBS-R024 | D3 | A1 |

| REQ-06 | T2-OBS: E-T2OBS-R001 to E-T2OBS-R024; LOGS: E-LOG-R001 to E-LOG-R118 | D2 | A4 |

| REQ-07 | T2-OBS: E-T2OBS-R001 to E-T2OBS-R024; LOGS: E-LOG-R001 to E-LOG-R118 | D2 | A4 |

| REQ-08 | T2-OBS: E-T2OBS-R001 to E-T2OBS-R024; LOGS: E-LOG-R001 to E-LOG-R118 | D2 | A4 |

| REQ-09 | T2-OBS: E-T2OBS-R001 to E-T2OBS-R024; LOGS: E-LOG-R001 to E-LOG-R118 | D8 | A4 |

| REQ-10 | T2-OBS: E-T2OBS-R001 to E-T2OBS-R024; LOGS: E-LOG-R001 to E-LOG-R118 | D8 | A4 |

| REQ-11 | T1-VAL: E-T1VAL-R001 to E-T1VAL-R030; T2-OBS: E-T2OBS-R001 to E-T2OBS-R024 | D6 | A3 |

| REQ-12 | T1-VAL: E-T1VAL-R001 to E-T1VAL-R030; T2-OBS: E-T2OBS-R001 to E-T2OBS-R024 | D6 | A3 |

| REQ-13 | T1-VAL: E-T1VAL-R001 to E-T1VAL-R030; T2-OBS: E-T2OBS-R001 to E-T2OBS-R024 | D7 | A3 |

| REQ-14 | T1-VAL: E-T1VAL-R001 to E-T1VAL-R030; T2-OBS: E-T2OBS-R001 to E-T2OBS-R024 | D7 | A3 |

| REQ-15 | T1-VAL: E-T1VAL-R001 to E-T1VAL-R030; T2-OBS: E-T2OBS-R001 to E-T2OBS-R024 | D4 | A5 |

| REQ-16 | T1-VAL: E-T1VAL-R001 to E-T1VAL-R030; T2-OBS: E-T2OBS-R001 to E-T2OBS-R024 | D4 | A5 |

| REQ-17 | T1-VAL: E-T1VAL-R001 to E-T1VAL-R030; T2-OBS: E-T2OBS-R001 to E-T2OBS-R024 | D5 | A6 |

| REQ-18 | T1-VAL: E-T1VAL-R001 to E-T1VAL-R030; T2-OBS: E-T2OBS-R001 to E-T2OBS-R024 | D5 | A6 |
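
The requirement-to-determinant-to-artefact links in the table lend themselves to simple mechanical checks. The sketch below, in Python, stores the links as a dictionary and queries them in both directions; the names `LINKS`, `requirements_for`, `by_artefact`, and `uncovered_determinants` are hypothetical helpers introduced for illustration, and only the link values are copied from the table.

```python
from collections import defaultdict

# REQ -> (determinant, artefact), copied from the traceability table above.
LINKS = {
    "REQ-01": ("D1", "A2"), "REQ-02": ("D1", "A2"),
    "REQ-03": ("D3", "A1"), "REQ-04": ("D3", "A1"), "REQ-05": ("D3", "A1"),
    "REQ-06": ("D2", "A4"), "REQ-07": ("D2", "A4"), "REQ-08": ("D2", "A4"),
    "REQ-09": ("D8", "A4"), "REQ-10": ("D8", "A4"),
    "REQ-11": ("D6", "A3"), "REQ-12": ("D6", "A3"),
    "REQ-13": ("D7", "A3"), "REQ-14": ("D7", "A3"),
    "REQ-15": ("D4", "A5"), "REQ-16": ("D4", "A5"),
    "REQ-17": ("D5", "A6"), "REQ-18": ("D5", "A6"),
}


def requirements_for(determinant: str) -> list:
    """Forward trace: requirements derived from one determinant."""
    return sorted(req for req, (d, _) in LINKS.items() if d == determinant)


def by_artefact() -> dict:
    """Backward trace: requirements grouped by the artefact that realizes them."""
    grouped = defaultdict(list)
    for req, (_, artefact) in sorted(LINKS.items()):
        grouped[artefact].append(req)
    return dict(grouped)


def uncovered_determinants() -> list:
    """Determinants D1 to D8 that have no requirement linked to them."""
    covered = {d for d, _ in LINKS.values()}
    return [f"D{i}" for i in range(1, 9) if f"D{i}" not in covered]


if __name__ == "__main__":
    print(requirements_for("D2"))
    print(by_artefact()["A4"])
    print(uncovered_determinants())
```

A check such as `uncovered_determinants` is one way to confirm that every determinant reaches at least one requirement before the matrix is shared as part of a transfer kit.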


| Data Stream | Instrument/Source | N (records) | Analytical Unit | Main Use in This Study |
|---|---|---|---|---|
| Teachers’ validation workshop questionnaire | T1-VAL (open and closed fields) | 30 | Teachers’ records; meaning units (open fields) | Adoption constraints; transferability criteria; determinant quantification |
| Specialist curriculum review | T1-R (expert narratives and heuristics) | 3 | Specialists’ records; meaning units | Contextual triangulation only; not included in determinant coding or traceability matrix |
| In situ teacher observation | T2-OBS (structured observation and open fields) | 24 | Teachers’ records; meaning units (open fields) | Public-space orchestration constraints; determinant quantification |
| POI and task profiling | Design documentation and content inventory | 8 POIs; 36 tasks | POI-level dependency descriptors | Marker-dependence profiling; contingency-relevant structure |
| Group-session logs | EduCITY app logs (group sessions) | 118 sessions | Session-level event traces | Feasibility envelopes: completion traces and duration descriptors only |
| Post-path students’ questionnaire | S2-POST (binary feasibility items and GCQuest block) | 439 | Binary feasibility indicators; questionnaire integrity descriptors | Post-path acceptability constraints and administration integrity only; no outcome inference |

| Code | Determinant (Primary Focus) | Operational Coding Cues (Inclusion Criteria) |

|---|---|---|

| D1 | Curriculum alignment and framing | Curricular fit, disciplinary linkage, lesson framing, learning aims, legitimacy for school practice, integration in class |

| D2 | Marker robustness and recovery | Marker recognition failures, AR trigger reliability, scanning issues, glare, positioning, recovery steps, alternative triggers |

| D3 | Usability, legibility, and onboarding | Interface clarity, instructions, onboarding, task legibility, map/AR switching friction, confusion points, first-use support |

| D4 | Post-activity consolidation and follow-up | Debrief needs, reflection prompts, consolidation packs, classroom follow-up, assessment logistics after the path |

| D5 | Differentiation and accessibility | Accessibility requirements, inclusion, varied difficulty, support for diverse learners, readability and usability accommodations |

| D6 | Safety and supervision in public space | Risk cues, supervision needs, safe stopping points, mobility constraints, attention switching, group control under movement |

| D7 | Collaboration and accountability routines | Group roles, collaboration issues, coordination breakdowns, accountability, one-device-per-group dynamics and fairness |

| D8 | BYOD heterogeneity and low-tech fallback | Device variability, compatibility issues, battery/network constraints, fallback routines, alternative access when devices fail |

| POI | Location | Total tasks (n) | AR overlay tasks (n) | AR overlay (%) | AR Marker-triggered question tasks (n) | Marker-triggered question (%) | Low-tech tasks (OBS and KNOW) (n) | Low-tech (OBS and KNOW) (%) |

|---|---|---|---|---|---|---|---|---|

| 1 | Joaquim de Melo Freitas Square: ‘Obelisk to Liberty’ | 5 | 1 | 20.00 | 1 | 20.00 | 0 | 0.00 |

| 2 | Joaquim de Melo Freitas Square: ‘Ala Pharmacy (old)’ | 4 | 2 | 50.00 | 1 | 25.00 | 2 | 50.00 |

| 3 | João Mendonça Street | 5 | 0 | 0.00 | 4 | 80.00 | 5 | 100.00 |

| 4 | João Mendonça Street: ‘Old Agricultural Cooperative’ | 5 | 1 | 20.00 | 4 | 80.00 | 4 | 80.00 |

| 5 | João Mendonça Street: ‘Aveiro City Museum’ | 6 | 3 | 50.00 | 6 | 100.00 | 4 | 66.67 |

| 6 | ‘Art Nouveau Museum’ | 6 | 3 | 50.00 | 5 | 83.33 | 1 | 16.67 |

| 7 | ‘José Estêvão Market’ (Fish Market) | 3 | 1 | 33.33 | 3 | 100.00 | 3 | 100.00 |

| 8 | ‘Ferro Guesthouse’ | 2 | 0 | 0.00 | 2 | 100.00 | 2 | 100.00 |

| Totals | | 36 | 11 | 30.56 | 26 | 72.22 | 21 | 58.33 |
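
The percentage columns and the totals row of the POI profile can be recomputed from the task counts. The following is a minimal Python sketch, assuming each percentage is the category count divided by the POI's total tasks and rounded to two decimals. The names `POI_COUNTS`, `pct`, and `totals` are illustrative and are not part of the EduCITY tooling.

```python
# Task counts per POI, transcribed from the table above:
# POI -> (total tasks, AR overlay tasks, marker-triggered question tasks, low-tech tasks)
POI_COUNTS = {
    1: (5, 1, 1, 0),
    2: (4, 2, 1, 2),
    3: (5, 0, 4, 5),
    4: (5, 1, 4, 4),
    5: (6, 3, 6, 4),
    6: (6, 3, 5, 1),
    7: (3, 1, 3, 3),
    8: (2, 0, 2, 2),
}


def pct(count, total):
    """Share of `count` in `total`, as a percentage rounded to two decimals."""
    return round(100.0 * count / total, 2)


def totals(counts):
    """Column-wise sums over all POIs."""
    return tuple(sum(column) for column in zip(*counts.values()))


total_tasks, ar_overlay, marker, low_tech = totals(POI_COUNTS)
print(total_tasks, ar_overlay, marker, low_tech)
print(pct(ar_overlay, total_tasks), pct(marker, total_tasks), pct(low_tech, total_tasks))
```

The three task categories are not mutually exclusive (POI 3, for example, has 4 marker-triggered and 5 low-tech tasks out of 5), so the percentages in a row are not expected to sum to 100.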

| Stage | AR Marker (n) | AR Marker (%) | Text (n) | Text (%) | Image (n) | Image (%) | ARBook (n) | ARBook (%) | Audio (n) | Audio (%) | Video (n) | Video (%) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Intro cue | 1 | 2.78 | 5 | 13.89 | 7 | 19.44 | 19 | 52.78 | 1 | 2.78 | 3 | 8.33 |

| Question | 1 | 2.78 | 9 | 25.00 | 1 | 2.78 | 25 | 69.44 | 0 | 0.00 | 0 | 0.00 |

| Correct feedback | 1 | 2.78 | 23 | 63.89 | 10 | 27.78 | 0 | 0.00 | 1 | 2.78 | 1 | 2.78 |

| Incorrect feedback | 1 | 2.78 | 21 | 58.33 | 12 | 33.33 | 0 | 0.00 | 1 | 2.78 | 1 | 2.78 |

| Descriptor | Value |

|---|---|

| Valid logged group sessions | 118 |

| Full path completion (sessions) | 118 (100.00%) |

| Duration range (minutes) | 26.00 to 55.00 |

| Duration mean (minutes) | 42.38 |

| Duration median (minutes) | 42.00 |

| Duration IQR (minutes) | 38.00 to 45.80 |

| Learners per logged group session (proxy) | 3.72 (439 students / 118 sessions) |

| Indicator | Yes (n) | Yes (%) | No (n) | No (%) |

|---|---|---|---|---|

| Interest in learning about sustainability through Art Nouveau heritage | 432 | 98.41 | 7 | 1.59 |

| Interest in learning more about Aveiro’s Art Nouveau heritage | 414 | 94.31 | 25 | 5.69 |

| Self-reported ability to name sustainability competences | 265 | 60.36 | 174 | 39.64 |

| Perception that the game addresses sustainability competences | 434 | 98.86 | 5 | 1.14 |

| Perceived importance of sustainability competences | 427 | 97.27 | 12 | 2.73 |

| Interest in learning more about sustainability competences | 369 | 84.05 | 70 | 15.95 |

| Indicator | Value (N/n and %) |

|---|---|

| Complete-case records (all binary acceptability and feasibility items) | 439/439 (100.00) |

| Complete-case records (all Q1 to Q25 present) | 438/439 (99.77) |

| Total missing item cells (Q1 to Q25) | 7/10,975 (0.06) |

| Indicator | Yes (n) | Yes (%) | No (n) |

|---|---|---|---|

| Would recommend to other teachers | 28 | 93.33 | 2 |

| Consider it feasible to integrate in curricular practice | 27 | 90.00 | 3 |

| Consider the tasks understandable without prior AR training | 27 | 90.00 | 3 |

| Intend to use the resource in future activities | 28 | 93.33 | 2 |

| Curricular Area/Subject | n | % |

|---|---|---|

| Civic Education | 6 | 20.00 |

| Arts | 5 | 16.67 |

| Geography | 5 | 16.67 |

| Mathematics | 5 | 16.67 |

| Multidisciplinary | 4 | 13.33 |

| History | 3 | 10.00 |

| Science | 2 | 6.67 |

| Item | Mean (M) | Standard Deviation (SD) | Median (MDN) | Min. | Max. |

|---|---|---|---|---|---|

| Instructions were clear | 4.67 | 0.96 | 5 | 3 | 6 |

| Would participate again | 5.75 | 0.44 | 6 | 5 | 6 |

| Feasible to integrate in school practice | 5.08 | 0.58 | 5 | 4 | 6 |

| Appropriate across multiple grade levels | 4.88 | 0.61 | 5 | 4 | 6 |

| Perceived innovativeness of the resource | 5.62 | 0.49 | 6 | 5 | 6 |

| Observation indicator | Yes (n) | Yes (%) | No (n) |

|---|---|---|---|

| Activity supports exploring other places or paths | 15 | 62.50 | 9 |

| Activity supported discussion about sustainability | 16 | 66.67 | 8 |

| Activity supported care for public space | 20 | 83.33 | 4 |

| Activity supported relation to classroom content | 17 | 70.83 | 7 |

| Activity supported problem solving | 20 | 83.33 | 4 |

| Activity supported group collaboration | 18 | 75.00 | 6 |

| Category | Example improvement focus | Count (n/N) |

|---|---|---|

| Technical robustness and device constraints | BYOD preparation, connectivity planning, low-tech alternative | 14/24 |

| Orchestration and group management | Cooperative inter-group challenges, time and pacing guidance | 5/24 |

| Instruction legibility and teacher-facing scripts | Teacher’s guide, scripts for assessment and follow-up | 4/24 |

| Differentiation and accessibility | Adaptations by age, scaffolding | 3/24 |

| Content enrichment | More contextual information and additional heritage facts | 3/24 |

| Implementation determinant | Total MU (N) | T1-VAL MU (n) | T2-OBS MU (n) | Teacher records mentioning (n/N) | Teachers mention (%) | MU (%) | Transfer kit component |

|---|---|---|---|---|---|---|---|

| D3: Usability, legibility, onboarding | 29 | 22 | 7 | 25/54 | 46.30 | 22.14 | Teacher-facing quick start; in-app legibility supports; onboarding notes |

| D2: Marker robustness and recovery | 25 | 14 | 11 | 22/54 | 40.74 | 19.08 | Marker deployment guidance; recovery steps; alternative triggers |

| D1: Curriculum alignment and framing | 24 | 19 | 5 | 21/54 | 38.89 | 18.32 | Curriculum mapping matrix; facilitation and framing script |

| D4: Post-activity consolidation | 14 | 9 | 5 | 13/54 | 24.07 | 10.69 | Structured debrief template; classroom follow-up prompts |

| D5: Differentiation and accessibility | 14 | 9 | 5 | 12/54 | 22.22 | 10.69 | Adaptation variants by age; accessibility notes |

| D8: BYOD heterogeneity and fallback | 10 | 4 | 6 | 9/54 | 16.67 | 7.63 | Device preparation and compatibility checks; low-tech fallback options |

| D6: Safety and supervision | 8 | 4 | 4 | 7/54 | 12.96 | 6.11 | Safety briefing; supervision and public-space cues |

| D7: Collaboration and accountability routines | 7 | 3 | 4 | 6/54 | 11.11 | 5.34 | Role cards; device-sharing protocol; regrouping scripts |
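
The derived columns of the determinant table can be checked against the raw counts. The sketch below is illustrative only. It assumes that Total MU is the sum of the T1-VAL and T2-OBS meaning units, that MU (%) uses the sum of meaning units across the eight determinants as its denominator (131 by this transcription), and that Teachers mention (%) uses the 54 teacher records. The names `DETERMINANTS` and `summarize` are hypothetical.

```python
# Counts per determinant, transcribed from the table above:
# code -> (T1-VAL MU, T2-OBS MU, teacher records mentioning the determinant)
DETERMINANTS = {
    "D3": (22, 7, 25),
    "D2": (14, 11, 22),
    "D1": (19, 5, 21),
    "D4": (9, 5, 13),
    "D5": (9, 5, 12),
    "D8": (4, 6, 9),
    "D6": (4, 4, 7),
    "D7": (3, 4, 6),
}
TEACHER_RECORDS = 54  # T1-VAL (N = 30) plus T2-OBS (N = 24)


def summarize(determinants, n_records):
    """Return the grand MU total and the derived columns per determinant."""
    grand_total = sum(t1 + t2 for t1, t2, _ in determinants.values())
    rows = {}
    for code, (t1, t2, records) in determinants.items():
        total_mu = t1 + t2
        rows[code] = {
            "total_mu": total_mu,
            # Assumed denominator: all meaning units across determinants.
            "mu_pct": round(100.0 * total_mu / grand_total, 2),
            # Assumed denominator: all teacher records.
            "teacher_pct": round(100.0 * records / n_records, 2),
        }
    return grand_total, rows


grand_total, rows = summarize(DETERMINANTS, TEACHER_RECORDS)
for code, row in rows.items():
    print(code, row["total_mu"], row["mu_pct"], row["teacher_pct"])
```

Under these assumptions the recomputed values agree with the tabulated MU (%) and Teachers mention (%) columns, which supports reading the two percentages as shares of different denominators.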

| Source | Indicator Type | Key Result (Descriptive) |

|---|---|---|

| T1-VAL (N = 30) | Recommendation and intent | High endorsement for recommending and future use |

| T1-VAL (N = 30) | Instruction clarity | Lower dispersion than technical concerns, but variability remains at first use |

| T2-OBS (N = 24) | Enactment constraints | Recurrent needs in safety routines, pacing buffers, and group orchestration |

| T2-OBS (N = 24) | Improvement requests | Concentration in robustness, BYOD constraints, and teacher-facing scripts |

| REQ ID | Determinant | Type 2 | Requirement statement (shall) | Acceptance criteria (verification) | Transfer artefact(s) |

|---|---|---|---|---|---|

| REQ-01 | D1 | OP | Provide a curriculum-to-task mapping matrix covering all POIs and tasks. | Matrix includes all POIs and tasks with explicit curriculum descriptors and intended learner outputs. | A2 |

| REQ-02 | D1 | OP | Provide a teacher-facing facilitation and framing script for enactment. | Script specifies learning aims, time budget, group roles, expected outputs, pacing guidance, and closure prompts (1 to 2 pages). | A2 |

| REQ-03 | D3 | OP | Provide a teacher-facing quick-start guide for first-time use. | One-page start routine plus core navigation cues; includes a minimal troubleshooting checklist. | A1 |

| REQ-04 | D3 | OP | Provide onboarding notes that reduce first-use confusion. | Onboarding notes address scanning posture, path flow, and the distinction between question access and AR overlays. | A1 |

| REQ-05 | D3 | NFR | Provide in-app legibility supports suitable for outdoor conditions. | Field check confirms instruction clarity under mobility and glare; font sizing and contrast cues are explicitly addressed. | A1 |

| REQ-06 | D2 | NFR | Provide marker production and deployment guidance suitable for outdoor use. | Deployment guide specifies print spec, size, mounting, inspection points, and glare mitigation steps; replacement criteria are defined. | A4 |

| REQ-07 | D2 | FR | Provide explicit recovery steps for recognition failure and interrupted progression. | Recovery protocol includes rescan strategy, repositioning, restart, rejoin, and teacher override steps; recovery is executable on-site. | A4 |

| REQ-08 | D2 | FR | Provide alternative triggers or progression cues to reduce brittle marker dependence. | At least one alternative access path is defined per POI block (for example, teacher override, skip mechanism, or offline prompt). | A4 |

| REQ-09 | D8 | OP | Provide BYOD readiness and compatibility checks. | Pre-session checklist covers device readiness, camera permissions, storage, battery, and connectivity; common failure states are enumerated. | A4 |

| REQ-10 | D8 | OP | Provide low-tech fallback options to sustain continuity. | Fallback includes non-AR progression cues and an offline or no-phone alternative for restricted contexts; materials are printable. | A4 |

| REQ-11 | D6 | OP | Provide a safety briefing and public-space supervision cues. | Safety script includes supervision rules, crossing routines, and stop criteria; responsibilities are assigned before launch. | A3 |

| REQ-12 | D6 | OP | Provide regrouping scripts and pacing buffers. | Routines include headcounts and buffer time guidance (for example, 5 to 10 minutes for crossings and regrouping). | A3 |

| REQ-13 | D7 | OP | Provide role cards supporting accountability in group use. | Role cards specify responsibilities (navigator, scanner, recorder, timekeeper) and rotation rules. | A3 |

| REQ-14 | D7 | OP | Provide a device-sharing protocol for equitable participation. | Protocol defines rotation frequency and ensures each learner accesses core interaction moments; accountability checks are included. | A3 |

| REQ-15 | D4 | OP | Provide a structured debrief template for immediate consolidation. | Template includes prompts for reflection, evidence use, and sustainability framing; outputs are defined (oral, worksheet, or digital). | A5 |

| REQ-16 | D4 | OP | Provide classroom follow-up prompts for post-path use. | Follow-up prompts include extension tasks aligned with curriculum descriptors and sustainability competences. | A5 |

| REQ-17 | D5 | OP | Provide adaptation variants by age and ability. | Variants include simplified and extended pathways, timing adjustments, and scaffolding suggestions. | A6 |

| REQ-18 | D5 | OP | Provide accessibility notes addressing inclusion constraints. | Notes address mobility, sensory constraints, and alternative participation roles; inclusive design cues are provided. | A6 |

| Determinant | Primary REQs | Transfer Artefact(s) |

|---|---|---|

| D1 | REQ-01, REQ-02 | A2 |

| D2 | REQ-06, REQ-07, REQ-08 | A4 |

| D3 | REQ-03, REQ-04, REQ-05 | A1 |

| D4 | REQ-15, REQ-16 | A5 |

| D5 | REQ-17, REQ-18 | A6 |

| D6 | REQ-11, REQ-12 | A3 |

| D7 | REQ-13, REQ-14 | A3 |

| D8 | REQ-09, REQ-10 | A4 |
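
The determinant-to-requirement-to-artefact mapping above can be held as a small data structure and checked for gaps. The following Python sketch is one possible representation, not the authors' tooling. The requirement, determinant, and artefact identifiers are transcribed from the requirements table; the names `REQUIREMENTS`, `trace_by_determinant`, and `untraced` are hypothetical.

```python
from collections import defaultdict

# Requirement ID -> (determinant, transfer artefact), transcribed from the requirements table.
REQUIREMENTS = {
    "REQ-01": ("D1", "A2"), "REQ-02": ("D1", "A2"),
    "REQ-03": ("D3", "A1"), "REQ-04": ("D3", "A1"), "REQ-05": ("D3", "A1"),
    "REQ-06": ("D2", "A4"), "REQ-07": ("D2", "A4"), "REQ-08": ("D2", "A4"),
    "REQ-09": ("D8", "A4"), "REQ-10": ("D8", "A4"),
    "REQ-11": ("D6", "A3"), "REQ-12": ("D6", "A3"),
    "REQ-13": ("D7", "A3"), "REQ-14": ("D7", "A3"),
    "REQ-15": ("D4", "A5"), "REQ-16": ("D4", "A5"),
    "REQ-17": ("D5", "A6"), "REQ-18": ("D5", "A6"),
}


def trace_by_determinant(requirements):
    """Group requirement IDs and artefacts under each determinant."""
    trace = defaultdict(lambda: {"reqs": [], "artefacts": set()})
    for req_id, (determinant, artefact) in sorted(requirements.items()):
        trace[determinant]["reqs"].append(req_id)
        trace[determinant]["artefacts"].add(artefact)
    return dict(trace)


def untraced(requirements, determinants):
    """Determinants with no requirement attached, i.e. gaps in the matrix."""
    covered = {determinant for determinant, _ in requirements.values()}
    return sorted(set(determinants) - covered)


trace = trace_by_determinant(REQUIREMENTS)
for determinant in sorted(trace):
    print(determinant, trace[determinant]["reqs"], sorted(trace[determinant]["artefacts"]))
print("untraced:", untraced(REQUIREMENTS, [f"D{i}" for i in range(1, 9)]))
```

Keeping the requirement row as the single source and deriving the per-determinant view from it avoids the two tables drifting apart when a requirement is added or reassigned.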

| Determinant | Dominant quality driver (illustrative) | Operability implication | Trace output in this study |

|---|---|---|---|

| D1 Curriculum alignment and framing | Appropriateness and relevance | Framing as boundary condition, not learning effect claim | REQ-01 to REQ-02; Table 9 to Table 10 |

| D2 Marker robustness and recovery | Reliability and recoverability | Recovery runbooks, alternative triggers, maintenance cycle | REQ-06 to REQ-08; Table 14 to Table 15 |

| D3 Usability and onboarding | Usability and learnability | Quick-start, field legibility checks, facilitation scripts | REQ-03 to REQ-05; Table 8 to Table 10 |

| D4 Post-activity consolidation | Continuity across contexts | Debrief templates and follow-up prompts | REQ-15 to REQ-16; Table 14 |

| D5 Differentiation and accessibility | Accessibility and inclusiveness | Alternative enactment variants | REQ-17 to REQ-18; Table 14 |

| D6 Safety and supervision | Quality in use (risk reduction) | Crossing routines, regrouping, pacing buffers | REQ-11 to REQ-12; Table 8 and Table 14 to Table 15 |

| D7 Collaboration and roles | Operability in group enactment | Role cards, turn-taking, accountability protocol | REQ-13 to REQ-14; Table 6 to Table 8 |

| D8 BYOD heterogeneity and fallback | Portability and compatibility | Device triage, offline or no-phone fallback | REQ-09 to REQ-10; Table 7 and Table 14 to Table 15 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.