Submitted: 27 February 2026

Posted: 28 February 2026


Abstract

Keywords:

1. Introduction

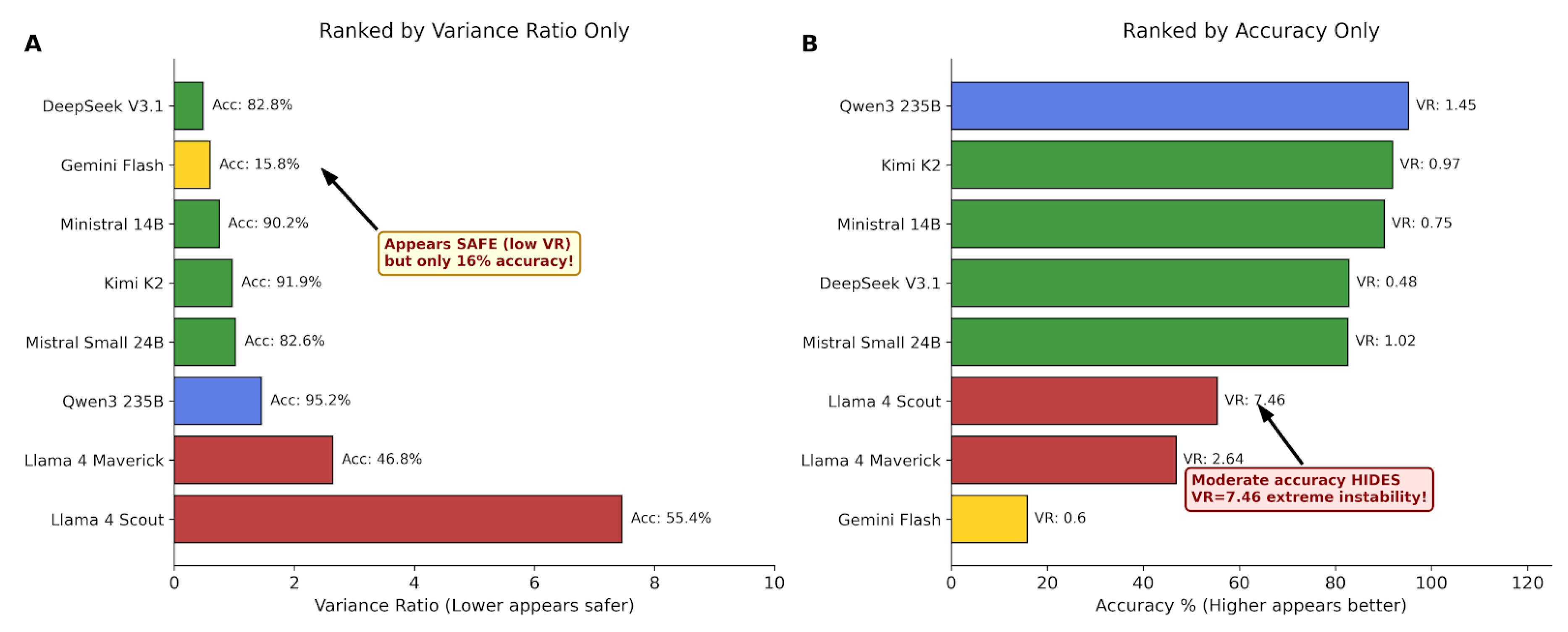

- Demonstration that accuracy and predictability are statistically independent

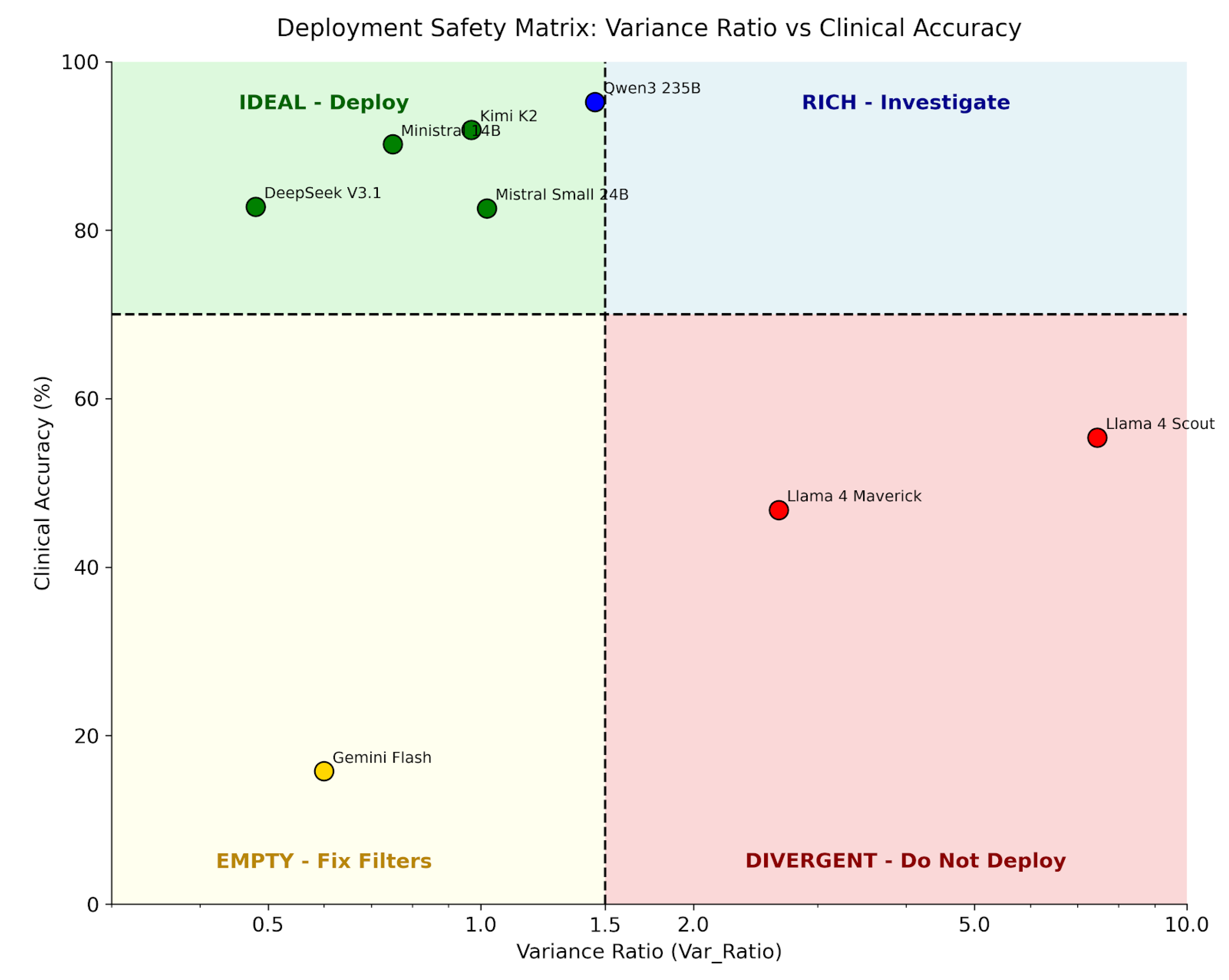

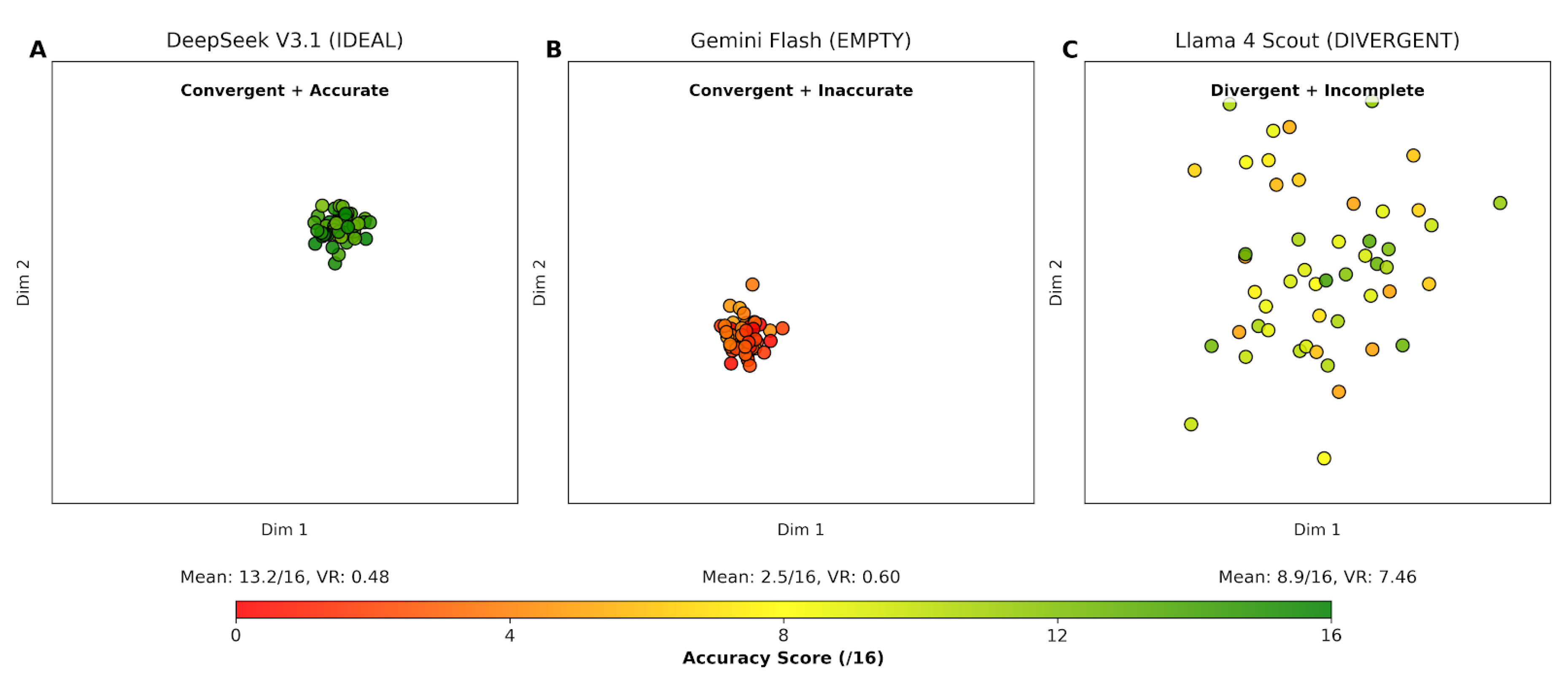

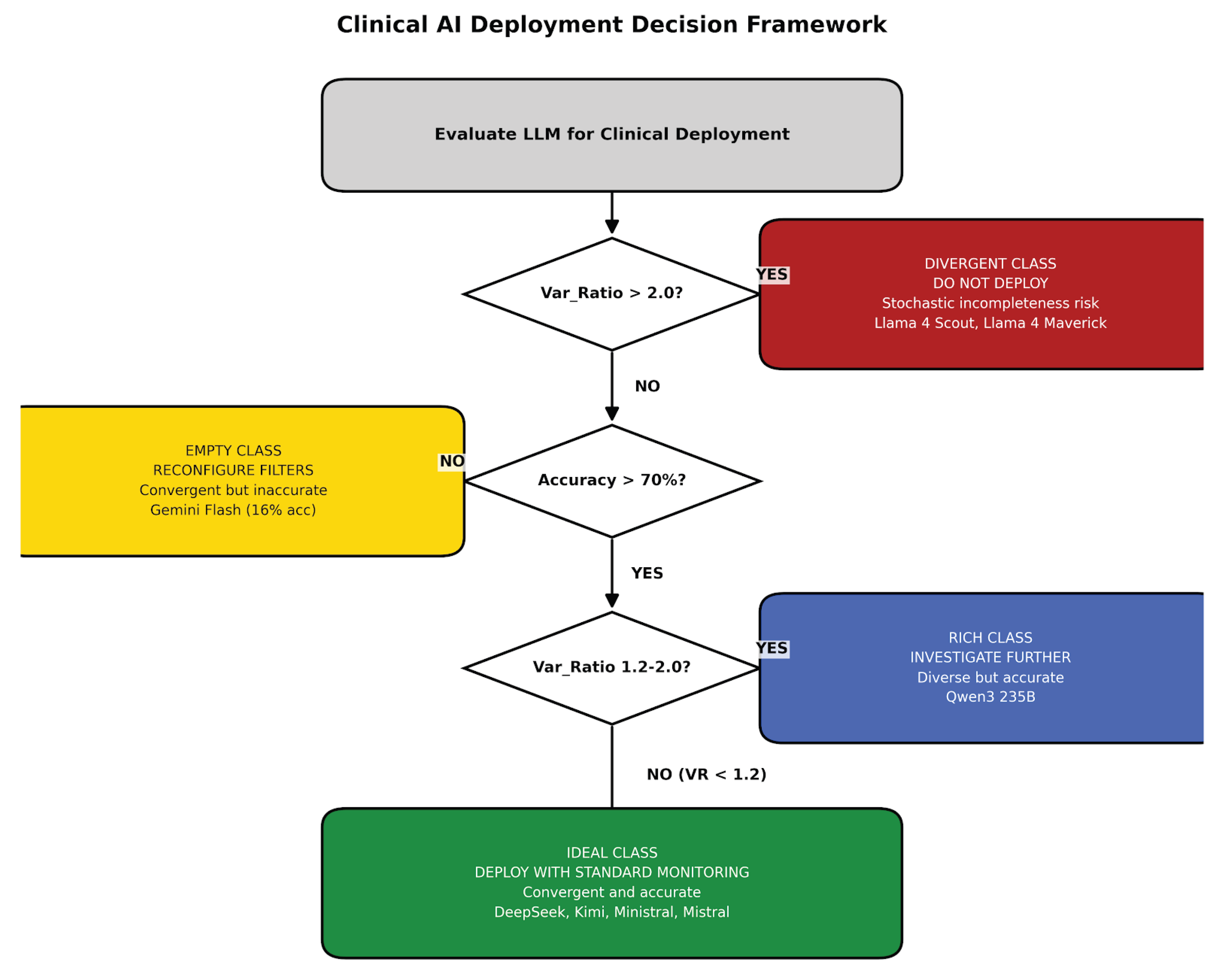

- A four-class behavioral taxonomy (IDEAL, EMPTY, DIVERGENT, RICH) with distinct clinical implications

- Identification of stochastic incompleteness as a novel failure mode invisible to standard benchmarks

- A deployment decision framework based on two-dimensional evaluation

2. Related Work

2.1. Clinical LLM Evaluation

2.2. Output Consistency and Reproducibility

2.3. Position Effects in Multi-Turn Conversations

3. Methods

3.1. Experimental Design

3.2. Metrics

3.3. Statistical Analysis

4. Results

4.1. Independence of Accuracy and Predictability

4.2. Four-Class Behavioral Taxonomy

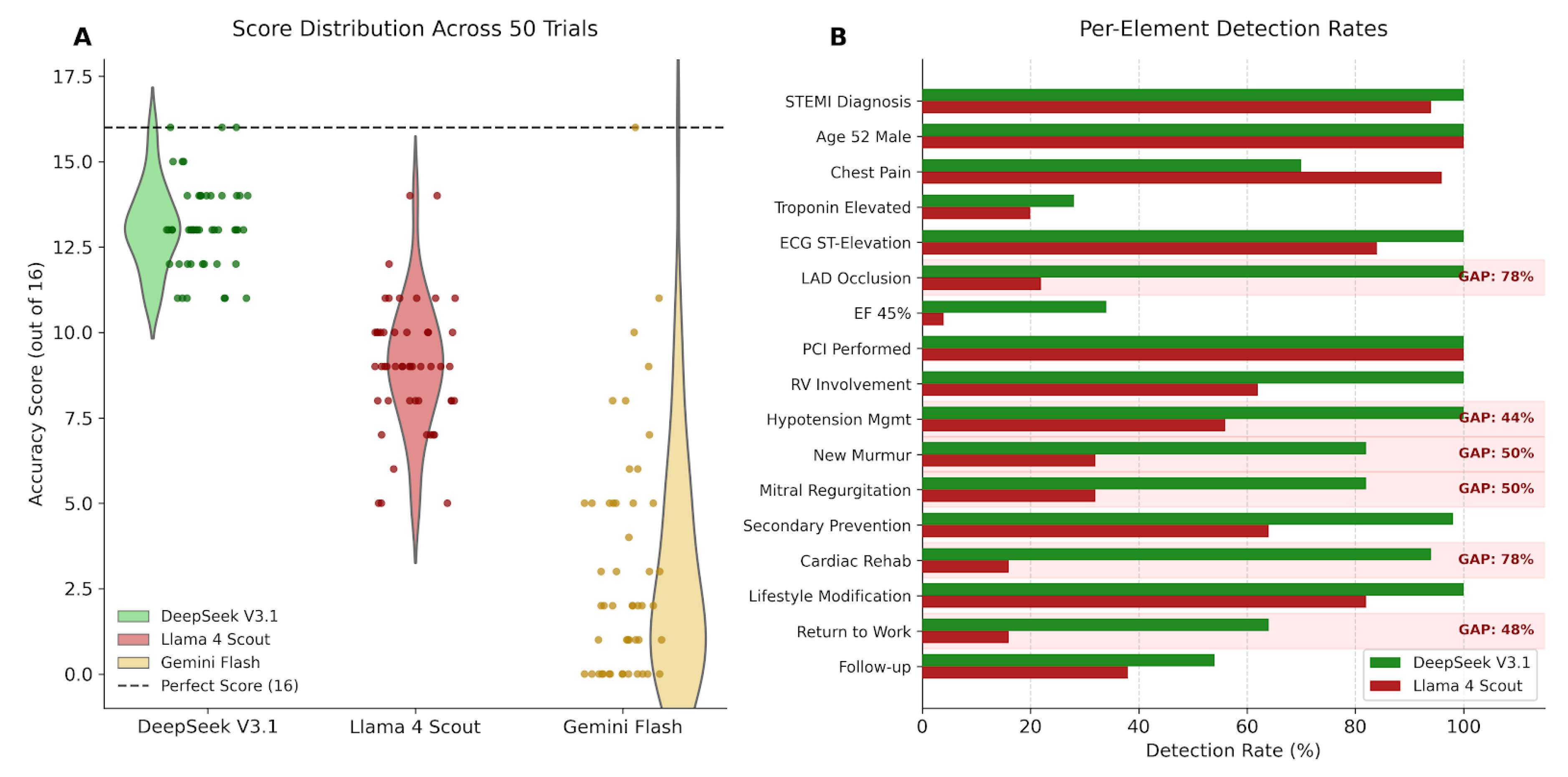

4.3. Stochastic Incompleteness

4.4. Embedding Space Visualization

4.5. Single-Metric Evaluation Failures

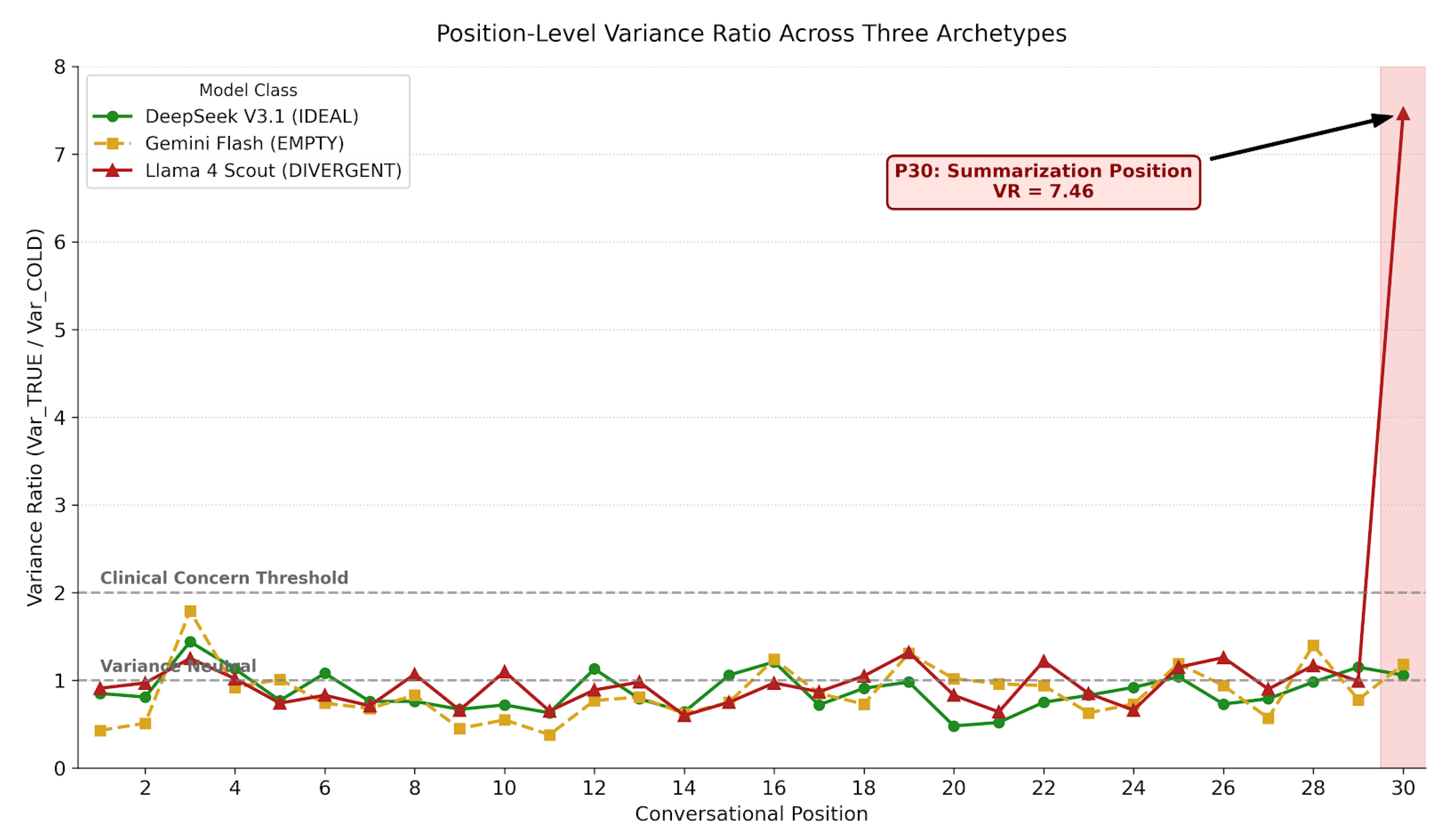

4.6. Position-Dependent Variance

4.7. Deployment Decision Framework

5. Discussion

5.1. Clinical Implications of Stochastic Incompleteness

- It is invisible to accuracy-only benchmarks that average across trials

- It produces no hallucinations, passing factual verification

- The omitted information varies across trials, defeating systematic checks

- Critical clinical findings (LAD occlusion, ejection fraction) are affected
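The first and third points can be made concrete with a small per-trial completeness check. The following is a minimal sketch, not the method used in this study: the function names, the substring-matching rule, and the four toy trial strings are all assumptions introduced purely for illustration.

```python
from typing import Dict, List


def _mentions(text: str, phrases: List[str]) -> bool:
    """True if any phrase occurs in the text (case-insensitive substring match)."""
    lowered = text.lower()
    return any(p.lower() in lowered for p in phrases)


def inclusion_rates(trials: List[str], findings: Dict[str, List[str]]) -> Dict[str, float]:
    """Fraction of trials that mention each finding, averaged across trials."""
    if not trials:
        raise ValueError("at least one trial is required")
    return {
        name: sum(_mentions(t, phrases) for t in trials) / len(trials)
        for name, phrases in findings.items()
    }


def complete_trial_rate(trials: List[str], findings: Dict[str, List[str]]) -> float:
    """Fraction of trials that mention every finding at once."""
    if not trials:
        raise ValueError("at least one trial is required")
    complete = sum(
        all(_mentions(t, phrases) for phrases in findings.values()) for t in trials
    )
    return complete / len(trials)


# Invented toy outputs (NOT data from the study): each trial is factually
# correct, but two of the four omit one critical finding.
TOY_TRIALS = [
    "LAD occlusion noted; ejection fraction reduced.",
    "LAD occlusion noted.",
    "Ejection fraction reduced.",
    "LAD occlusion noted; ejection fraction reduced.",
]
# Hypothetical finding list with the phrases accepted as a mention.
TOY_FINDINGS = {
    "LAD occlusion": ["LAD occlusion"],
    "ejection fraction": ["ejection fraction"],
}

if __name__ == "__main__":
    print(inclusion_rates(TOY_TRIALS, TOY_FINDINGS))
    print(complete_trial_rate(TOY_TRIALS, TOY_FINDINGS))
```

In this toy case each finding appears in three of four trials, yet only half of the trials contain both, and the omitted finding differs between the incomplete trials. A score averaged per finding therefore looks better than the share of individually complete outputs.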

5.2. Independence of Accuracy and Predictability

5.3. Limitations

- Single clinical case (STEMI) may not generalize to other conditions

- Eight models may not represent the full LLM landscape

- 50 trials per model may underestimate rare failure modes

- Position 30 analysis may miss other critical positions

6. Conclusion

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Class | Models | Accuracy | VR | Recommendation |
|---|---|---|---|---|
| IDEAL | DeepSeek, Kimi, Ministral, Mistral | 83–92% | 0.48–1.02 | Deploy |
| EMPTY | Gemini Flash | 16% | 0.60 | Fix Filters |
| DIVERGENT | Llama Scout, Llama Maverick | 47–55% | 2.64–7.46 | Do Not Deploy |
| RICH | Qwen3 235B | 95% | 1.45 | Investigate |
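The table above can be read as a two-threshold rule on accuracy and VR. The sketch below illustrates that reading only. The cut-off values `ACCURACY_CUTOFF` and `VR_CUTOFF` are hypothetical: they were picked so that they fall in the gaps between the reported ranges, and they are not thresholds stated in the paper.

```python
# Hypothetical cut-offs (assumptions, not values from the paper). They are
# chosen only so that they separate the ranges reported in the table.
ACCURACY_CUTOFF = 0.70  # lies between 55% (DIVERGENT max) and 83% (IDEAL min)
VR_CUTOFF = 1.20        # lies between 1.02 (IDEAL max) and 1.45 (RICH)

# Recommendations copied from the table.
RECOMMENDATION = {
    "IDEAL": "Deploy",
    "EMPTY": "Fix Filters",
    "DIVERGENT": "Do Not Deploy",
    "RICH": "Investigate",
}


def classify(accuracy: float, vr: float) -> str:
    """Assign one of the four classes from accuracy (0 to 1) and VR."""
    accurate = accuracy >= ACCURACY_CUTOFF
    predictable = vr <= VR_CUTOFF
    if accurate and predictable:
        return "IDEAL"
    if accurate:
        return "RICH"
    if predictable:
        return "EMPTY"
    return "DIVERGENT"


def recommend(accuracy: float, vr: float) -> str:
    """Look up the deployment recommendation for an (accuracy, VR) pair."""
    return RECOMMENDATION[classify(accuracy, vr)]


if __name__ == "__main__":
    # Values taken from the table rows (range endpoints where a range is given).
    for label, acc, vr in [
        ("IDEAL row", 0.83, 1.02),
        ("EMPTY row", 0.16, 0.60),
        ("DIVERGENT row", 0.47, 2.64),
        ("RICH row", 0.95, 1.45),
    ]:
        print(label, classify(acc, vr), recommend(acc, vr))
```

With these assumed cut-offs every row of the table maps back to its own class. Values that fall between the reported ranges would be assigned by the cut-offs alone, so that part of the sketch carries no support from the source.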

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).