Submitted:

27 February 2026

Posted:

28 February 2026


Abstract

Keywords:

1. Introduction

2. Related Works

2.1. Agricultural Micro-UAVs

2.2. Potential of Autonomous Pollinator Drones

2.3. Simultaneous Navigation and Flower Recognition

3. Experimental Setup

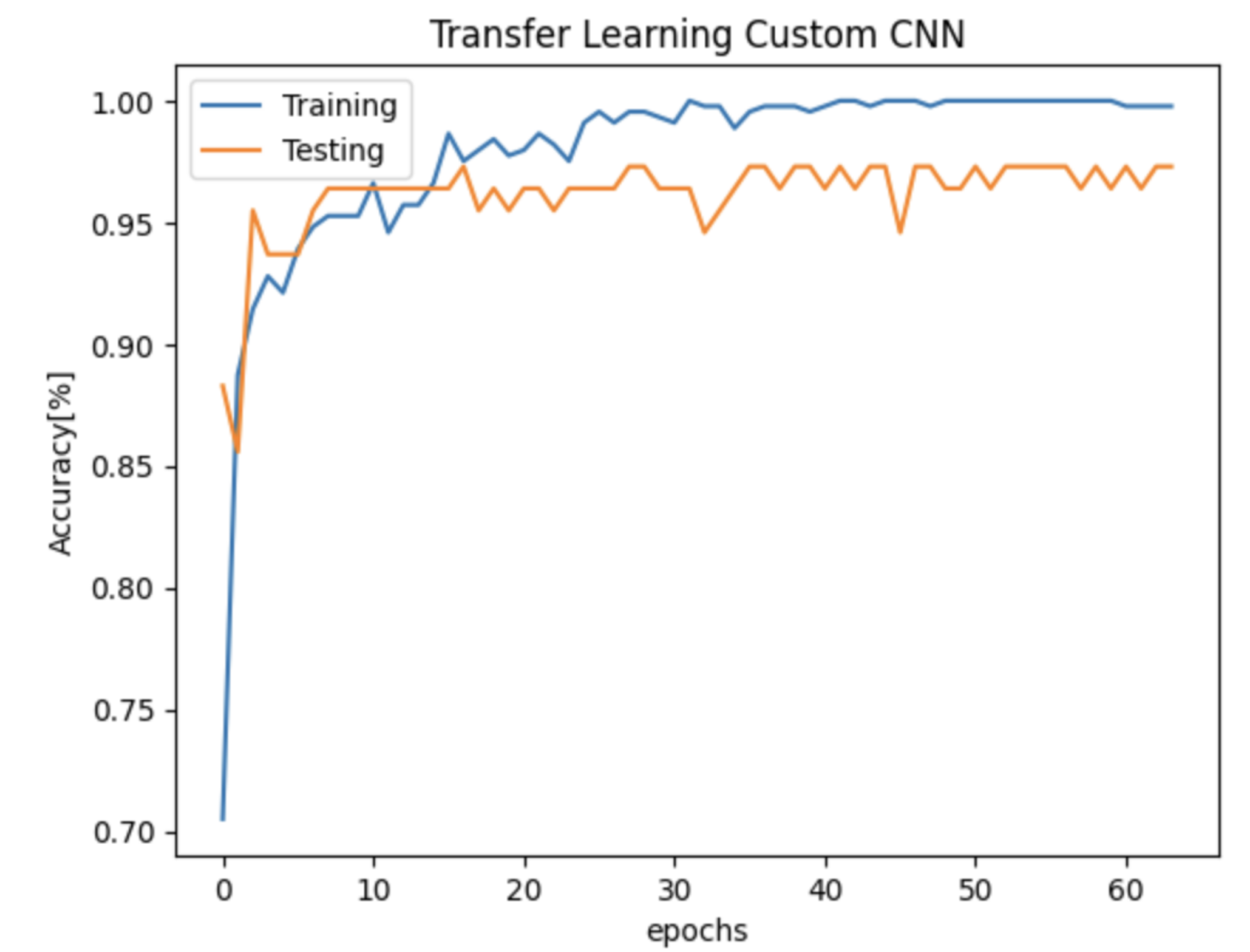

3.1. AI Binary Classification for Flower Recognition

3.2. Micro-UAV Specification

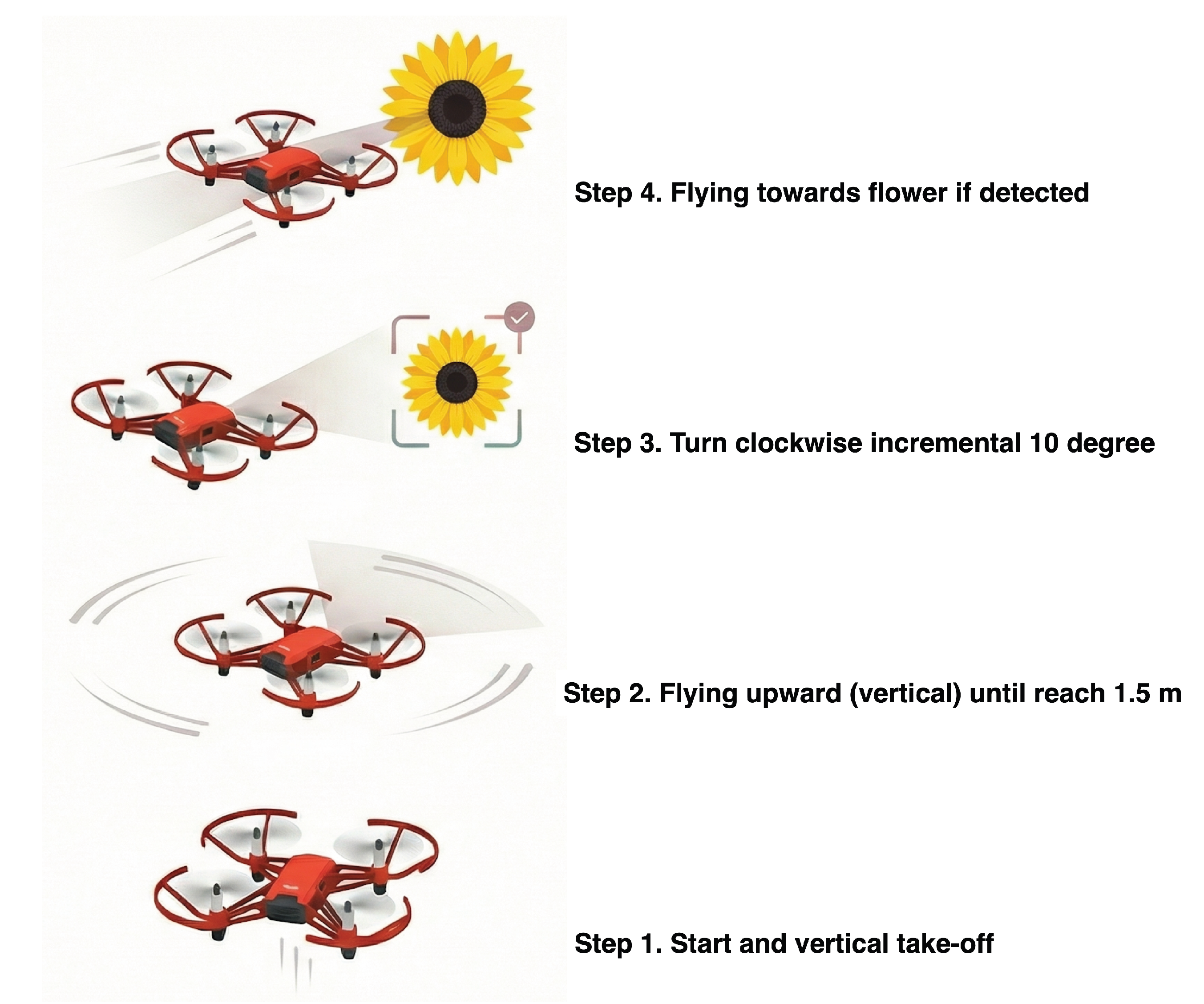

3.3. Integration of AI Detection Capability on the Micro-UAV

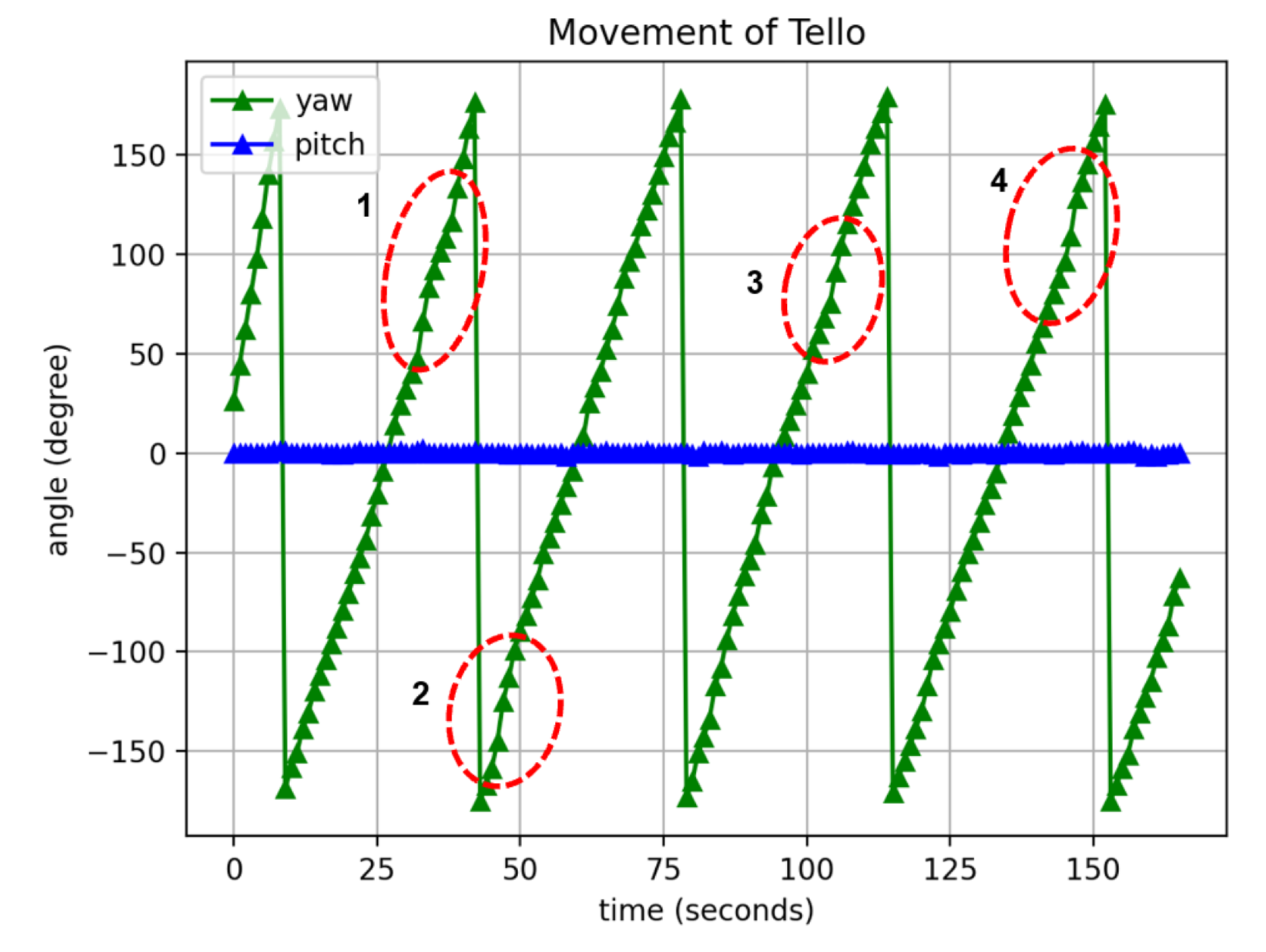

4. Results and Discussion

5. Conclusion

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| UAV | Unmanned Aerial Vehicle |
| CNN | Convolutional Neural Network |
| SDG | Sustainable Development Goals |
| IoT | Internet of Things |
| AI | Artificial Intelligence |


| Item | Parameter | Specification |
|---|---|---|
| Drone | Take-off weight | 87 g (including propeller blades, propeller blade protector, and batteries) |
| | Dimensions (mm) | |
| | Propeller blade | 3 in |
| | Built-in functions | Infrared height determination, barometer, LED indicator, downward vision sensor, Wi-Fi, HD 720P image transmission |
| | Interface | Micro USB charging port |
| | Removable battery | 1.1 Ah / 3.8 V |
| Flight performance | Maximum flight distance | 100 m |
| | Maximum flight speed | 8 m/s |
| | Maximum flight time | 8 min |
| | Maximum flight height | 30 m |
| Camera | Image | 5 MP |
| | Field of view (FoV) | |
| | Video | HD 720P, 30 fps |
| | Format | JPG (images), MP4 (videos) |
| | Electronic image stabilization | Supported |
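The battery and endurance figures in the specification table allow a quick consistency check. Assuming the full rated capacity is usable and is drawn evenly over the 8 min maximum flight time (an idealization, since batteries are rarely run to empty), the stored energy and the implied average electrical power are:

$$
\begin{aligned}
E &= Q \times V = 1.1\,\mathrm{Ah} \times 3.8\,\mathrm{V} = 4.18\,\mathrm{Wh},\\
\bar{P} &\approx \frac{E}{t_{\max}} = \frac{4.18\,\mathrm{Wh}}{(8/60)\,\mathrm{h}} = 31.35\,\mathrm{W}.
\end{aligned}
$$

This is only an order-of-magnitude estimate. It ignores the reserve capacity left at landing, so the true average draw is likely somewhat lower.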

| Number of detections | Webcam time (s) | Drone camera time (s) |
|---|---|---|
| 10 | 28.01 | 11.34 |
| 20 | 44.20 | 12.73 |
| 30 | 61.65 | 13.04 |
| 40 | 90.07 | 13.43 |
| 50 | 102.55 | 14.44 |
| 60 | 129.66 | 14.86 |
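The timing table can be summarized by fitting a straight line to each column. This separates a fixed start-up cost (the intercept) from the marginal cost per detection (the slope). The sketch below assumes each entry is the total elapsed time needed to complete that many detections. The constant names and the `fit_line` helper are illustrative and are not taken from the authors' code.

```python
# Timing data copied from the table above. Each time is assumed to be the
# total elapsed time (in seconds) needed to complete that many detections.
DETECTIONS = [10, 20, 30, 40, 50, 60]
WEBCAM_S = [28.01, 44.20, 61.65, 90.07, 102.55, 129.66]
DRONE_S = [11.34, 12.73, 13.04, 13.43, 14.44, 14.86]


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


webcam_slope, webcam_intercept = fit_line(DETECTIONS, WEBCAM_S)
drone_slope, drone_intercept = fit_line(DETECTIONS, DRONE_S)

# Ratio of total times at the largest tested batch (60 detections).
speedup_at_60 = WEBCAM_S[-1] / DRONE_S[-1]

if __name__ == "__main__":
    print(f"Webcam: {webcam_slope:.3f} s/detection, intercept {webcam_intercept:.2f} s")
    print(f"Drone camera: {drone_slope:.3f} s/detection, intercept {drone_intercept:.2f} s")
    print(f"Webcam/drone time ratio at 60 detections: {speedup_at_60:.2f}x")
```

On these six points the fit gives roughly 2.03 s per additional detection for the webcam and about 0.07 s for the drone camera. The drone-camera times are dominated by an intercept of about 11 s. Least squares is used here only as a simple descriptive summary. With six samples per column, the fit should not be read as a performance model.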

| Distance (cm) | Detected (1 = yes, 0 = no) |
|---|---|
| 15.5 | 0 |
| 30.5 | 1 |
| 60.5 | 1 |
| 91.5 | 1 |
| 116.5 | 0 |
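The range test brackets the usable detection distance. The minimal sketch below extracts the nearest and farthest distances at which detection succeeded, together with the failed distances on either side. It assumes detection succeeds over a single contiguous interval. The helper names are hypothetical.

```python
# Range test copied from the table above: (distance in cm, detected as 1 or 0).
RANGE_TEST = [(15.5, 0), (30.5, 1), (60.5, 1), (91.5, 1), (116.5, 0)]


def detection_window(samples):
    """Return (nearest, farthest) tested distances where detection succeeded."""
    hits = [d for d, detected in samples if detected]
    if not hits:
        return None
    return min(hits), max(hits)


def bracketing_failures(samples, window):
    """Return the closest failed distances just below and just above the window."""
    near, far = window
    below = [d for d, detected in samples if not detected and d < near]
    above = [d for d, detected in samples if not detected and d > far]
    return (max(below) if below else None, min(above) if above else None)


window = detection_window(RANGE_TEST)
brackets = bracketing_failures(RANGE_TEST, window)

if __name__ == "__main__":
    print(f"Detected between {window[0]} cm and {window[1]} cm")
    print(f"Failed at {brackets[0]} cm (closer) and {brackets[1]} cm (farther)")
```

With the tabulated values, this yields a detection window of 30.5 to 91.5 cm, bounded by failures at 15.5 cm and 116.5 cm. The true limits therefore lie somewhere within the 15.5 to 30.5 cm and 91.5 to 116.5 cm gaps. Finer distance steps would be needed to locate them more precisely.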

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).