Submitted: 18 February 2026

Posted: 27 February 2026

Abstract

Keywords:

1. Introduction

- To develop a lightweight, spatial-agnostic vision architecture that operates directly on raw pixel intensities, removing expensive convolutional and attention-based spatial feature extraction.

- To bridge deep representation learning and prototype-based classification by combining small MLP embeddings with a local prototype-based decision mechanism.

- To introduce class-specific basis representations that allow fine-grained, interpretable, learnable, and robust local decision boundaries through a Local Prototype Classifier (LPC).

- To minimize computation time, memory footprint, and inference cost while maintaining competitive discriminative performance.

- To improve robustness in data-limited, imbalanced, and noisy learning scenarios through local prototype adaptation.

- To evaluate the proposed SABA-Net and empirically compare it with a state-of-the-art transformer-based vision model in terms of accuracy and efficiency metrics.

2. Related Work

2.1. Preliminaries

2.2. Evolution of Convolutional Neural Networks

2.3. Vision Transformers and Self-Attention

2.4. Hybrid Architectures

2.5. Summary of Key Findings

1. Data Incompatibility: Most SOTA models (ViT, Swin, ConvNeXt) need at least 1,000 samples per class to generalize, whereas industrial settings typically offer only 100-150 images.

2. Hardware and Cost Barriers: Deployment and training are still GPU-based. A cloud GPU instance (e.g., AWS) can cost $375/month, whereas a CPU instance can cost around $30/month. Current models are not optimized to exploit this 92% cost reduction without substantially compromising performance.

3. Spatial Bottleneck: Existing models assume heavy hierarchies built on spatial information. Nonetheless, many industrial problems (e.g., defect detection) can be addressed successfully by studying the pixel-level distribution, which motivates a spatial-agnostic module such as SABA-Net.
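As a quick check of the cost figure quoted under Hardware and Cost Barriers, the 92% reduction follows directly from the two monthly prices given there:

$$
\frac{375 - 30}{375} = \frac{345}{375} = 0.92
$$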

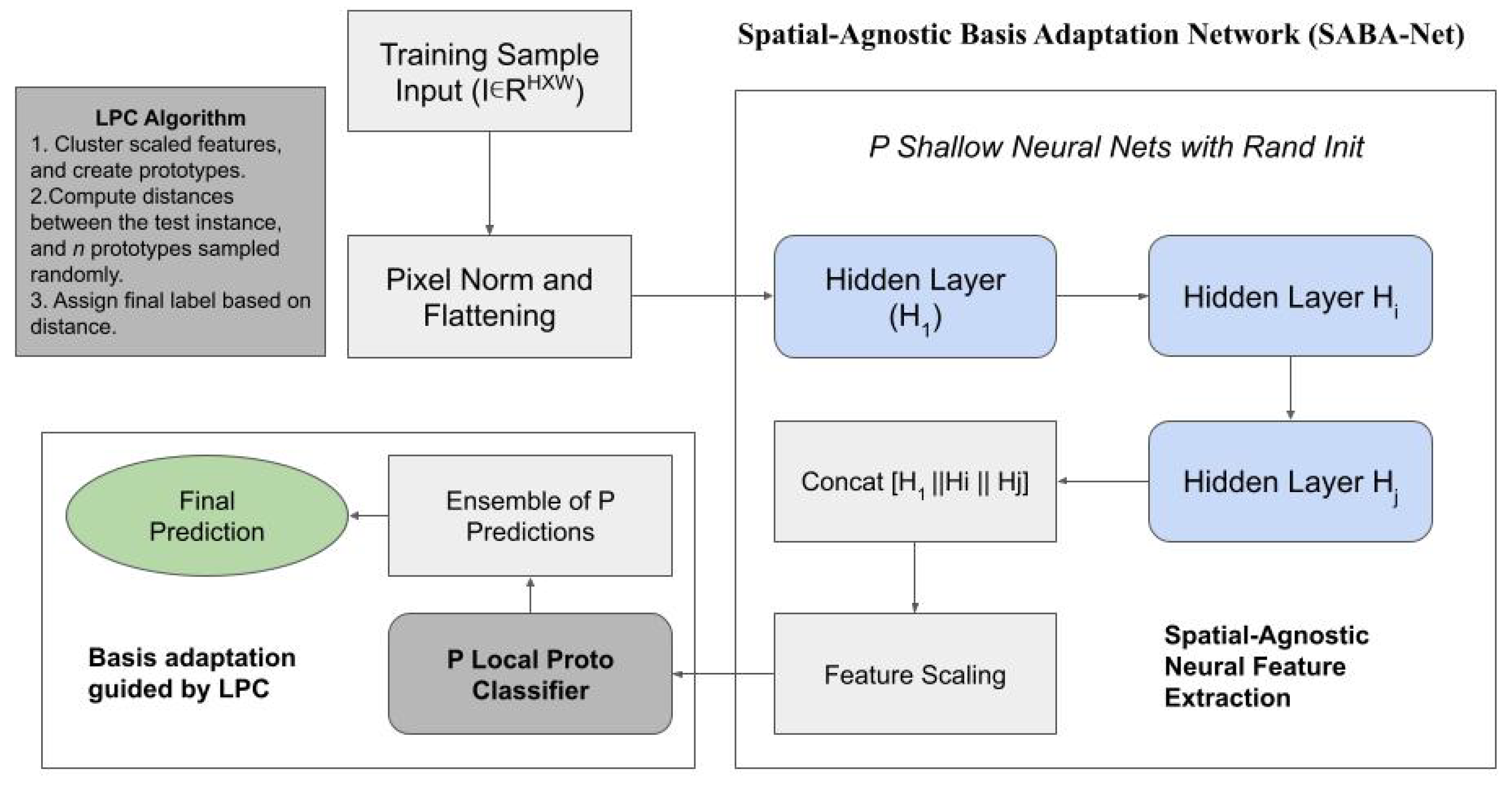

3. Methodology

1. Data Preprocessing
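A minimal sketch of spatial-agnostic preprocessing, assuming images arrive as 2-D grids of 8-bit intensities. The function name `flatten_image` and the division by 255 are illustrative assumptions, not details taken from the paper.

```python
def flatten_image(image, max_intensity=255.0):
    """Flatten a 2-D grid of pixel intensities into a 1-D list scaled to [0, 1].

    Assumption: 8-bit grayscale input, so max_intensity defaults to 255.
    """
    return [pixel / max_intensity for row in image for pixel in row]


# Toy 2x3 "image"; a 28x28 MNIST-style input would flatten to 784 values.
image = [[0, 128, 255],
         [64, 32, 16]]
vector = flatten_image(image)
print(len(vector))  # 6
```

Flattening discards the row and column position of each pixel, which is what makes the representation spatial-agnostic.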

2. Shallow Neural Feature Extraction
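The sketch below illustrates one way a shallow MLP could produce concatenated embeddings from a flattened image. The layer sizes (784, 32, 16), the ReLU activation, and the random initialization are hypothetical choices for illustration only; the helper names are not from the paper.

```python
import random


def dense(x, weights, biases):
    """Affine layer: one output per row of `weights`."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, biases)]


def relu(v):
    return [max(0.0, a) for a in v]


def init_layer(n_in, n_out, rng):
    """Small random weights and zero biases (illustrative initialization)."""
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)]
               for _ in range(n_out)]
    return weights, [0.0] * n_out


def embed(x, layers):
    """Pass x through each hidden layer and concatenate all hidden activations."""
    embedding, h = [], x
    for weights, biases in layers:
        h = relu(dense(h, weights, biases))
        embedding.extend(h)
    return embedding


rng = random.Random(0)
layers = [init_layer(784, 32, rng), init_layer(32, 16, rng)]  # hypothetical sizes
x = [rng.random() for _ in range(784)]  # stand-in for a flattened image
z = embed(x, layers)
print(len(z))  # 48 = 32 + 16
```

Concatenating the activations of every hidden layer keeps both the early and the later representation available to the downstream classifier.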

Training Objective
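The objective itself is not stated in this extract. As an assumption only, the sketch below shows softmax cross-entropy, a common choice for training an MLP classifier; it should not be read as the loss the authors used.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of class scores."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits, label):
    """Negative log-probability assigned to the true class index `label`."""
    return -math.log(softmax(logits)[label])


# Uniform scores over K classes give a loss of log(K).
loss_uniform = cross_entropy([0.0, 0.0, 0.0, 0.0], label=2)
# A confident, correct prediction gives a loss close to zero.
loss_confident = cross_entropy([0.0, 10.0, 0.0], label=1)
print(loss_uniform, loss_confident)
```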

3. Local Prototype Classifier (LPC)

3.1 Feature Scaling

- Standardization:

- Min-max scaling:
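The two scaling options named above have standard definitions: standardization maps a feature to (x - mean) / std, and min-max scaling maps it to (x - min) / (max - min). A minimal sketch for a single feature column follows; the handling of constant columns is an assumption added for safety.

```python
import statistics


def standardize(column):
    """Zero-mean, unit-variance scaling of one feature column."""
    mean = statistics.fmean(column)
    std = statistics.pstdev(column)
    if std == 0:  # constant feature: assumption, map everything to 0
        return [0.0 for _ in column]
    return [(v - mean) / std for v in column]


def min_max_scale(column):
    """Rescale one feature column linearly to the range [0, 1]."""
    lo, hi = min(column), max(column)
    if hi == lo:  # constant feature: assumption, map everything to 0
        return [0.0 for _ in column]
    return [(v - lo) / (hi - lo) for v in column]


feature = [2.0, 4.0, 6.0, 8.0]
z_scores = standardize(feature)
unit_range = min_max_scale(feature)
print(z_scores)
print(unit_range)
```

In practice the statistics (mean, std, min, max) would be estimated on the training embeddings and reused unchanged at inference time.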

3.2 Prototype Learning
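The document names k-means clustering as the prototype learning step and describes the prototypes as class-wise. A minimal sketch of that idea, running plain Lloyd's k-means separately on the embeddings of each class, is given below. The number of prototypes per class, the iteration count, and the seeding scheme are hypothetical.

```python
import random


def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def mean_point(points):
    return [sum(col) / len(points) for col in zip(*points)]


def kmeans(points, k, iterations=20, seed=0):
    """Plain Lloyd's algorithm; initial centroids are k randomly chosen points."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k),
                          key=lambda j: squared_distance(p, centroids[j]))
            clusters[nearest].append(p)
        # Keep the previous centroid if a cluster ends up empty.
        centroids = [mean_point(c) if c else centroids[j]
                     for j, c in enumerate(clusters)]
    return centroids


def learn_prototypes(features, labels, k):
    """Run k-means independently per class and return {label: [prototype, ...]}."""
    prototypes = {}
    for label in sorted(set(labels)):
        class_points = [f for f, y in zip(features, labels) if y == label]
        prototypes[label] = kmeans(class_points, min(k, len(class_points)))
    return prototypes


# Toy 2-D embeddings: each class has two well-separated local modes.
features = [[0, 0], [0, 1], [0, 10], [0, 11],
            [10, 0], [10, 1], [10, 10], [10, 11]]
labels = [0, 0, 0, 0, 1, 1, 1, 1]
prototypes = learn_prototypes(features, labels, k=2)
print(prototypes)
```

Clustering each class on its own lets a class with several distinct modes keep one prototype per mode instead of a single averaged centroid.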

3.3 Distance-Based Classification
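A minimal sketch of a distance-based decision rule, assuming Euclidean distance and a nearest-prototype assignment. The metric is an assumption, since the extract only says the classification is distance-based, and the prototype values are toy numbers.

```python
import math


def classify(x, prototypes):
    """Return (label, distance) of the nearest prototype under Euclidean distance."""
    best_label, best_dist = None, math.inf
    for label, protos in prototypes.items():
        for p in protos:
            d = math.dist(x, p)
            if d < best_dist:
                best_label, best_dist = label, d
    return best_label, best_dist


# Hypothetical class-wise prototypes in a 2-D embedding space.
prototypes = {
    0: [[0.0, 0.5], [0.0, 10.5]],
    1: [[10.0, 0.5], [10.0, 10.5]],
}
label, distance = classify([8.0, 9.0], prototypes)
print(label, distance)  # 1 2.5
```

Returning the winning distance alongside the label supports interpretability: a prediction can be traced back to the specific prototype that produced it.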

4. Ensemble Aggregation

- Majority Voting:

- Probability Averaging:
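The two aggregation rules listed above can be sketched as follows, assuming each ensemble member outputs either a hard label (for voting) or a probability vector over classes (for averaging). Tie-breaking behavior is an implementation detail of this sketch, not something specified in the source.

```python
from collections import Counter


def majority_vote(predictions):
    """Most frequent label; ties go to the label that was seen first."""
    return Counter(predictions).most_common(1)[0][0]


def average_probabilities(prob_vectors):
    """Average per-class probabilities across members, then take the argmax."""
    n = len(prob_vectors)
    mean = [sum(col) / n for col in zip(*prob_vectors)]
    return max(range(len(mean)), key=mean.__getitem__), mean


votes = [2, 0, 2]                                # hard labels from three members
probs = [[0.6, 0.4], [0.2, 0.8], [0.3, 0.7]]     # soft outputs from three members
voted_label = majority_vote(votes)
averaged_label, mean_probs = average_probabilities(probs)
print(voted_label, averaged_label)  # 2 1
```

Probability averaging uses each member's confidence, whereas majority voting counts every member equally regardless of how certain it is.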

5. Computational Characteristics

- Matrix multiplications in shallow fully connected layers.

- k-means clustering for prototype learning.

- Distance computations during inference.
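To make the three cost sources above concrete, the sketch below gives rough operation counts for each. The formulas are standard back-of-the-envelope estimates, and every size used in the example (layer widths, prototype counts, iterations) is hypothetical rather than taken from the paper.

```python
def mlp_multiply_adds(layer_sizes):
    """Multiply-adds for one forward pass through fully connected layers."""
    return sum(a * b for a, b in zip(layer_sizes, layer_sizes[1:]))


def kmeans_distance_ops(n_samples, k, dim, iterations):
    """Coordinate-level operations for assignment steps of Lloyd's k-means."""
    return n_samples * k * dim * iterations


def inference_distance_ops(n_classes, prototypes_per_class, dim):
    """Coordinate-level operations to compare one sample with every prototype."""
    return n_classes * prototypes_per_class * dim


# Hypothetical configuration: 784 -> 32 -> 16 MLP, 48-D concatenated embedding,
# 10 classes with 5 prototypes each, 100 samples per class, 20 k-means iterations.
print(mlp_multiply_adds([784, 32, 16]))         # 25600
print(kmeans_distance_ops(100, 5, 48, 20))      # 480000
print(inference_distance_ops(10, 5, 48))        # 2400
```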

6. Summary

1. Spatial-agnostic flattened image representations,

2. Shallow multi-layer perceptron feature extractors,

3. Concatenated hierarchical embeddings,

4. Class-wise local prototype modeling,

5. Distance-based interpretable decisions,

6. Ensemble aggregation for robustness.

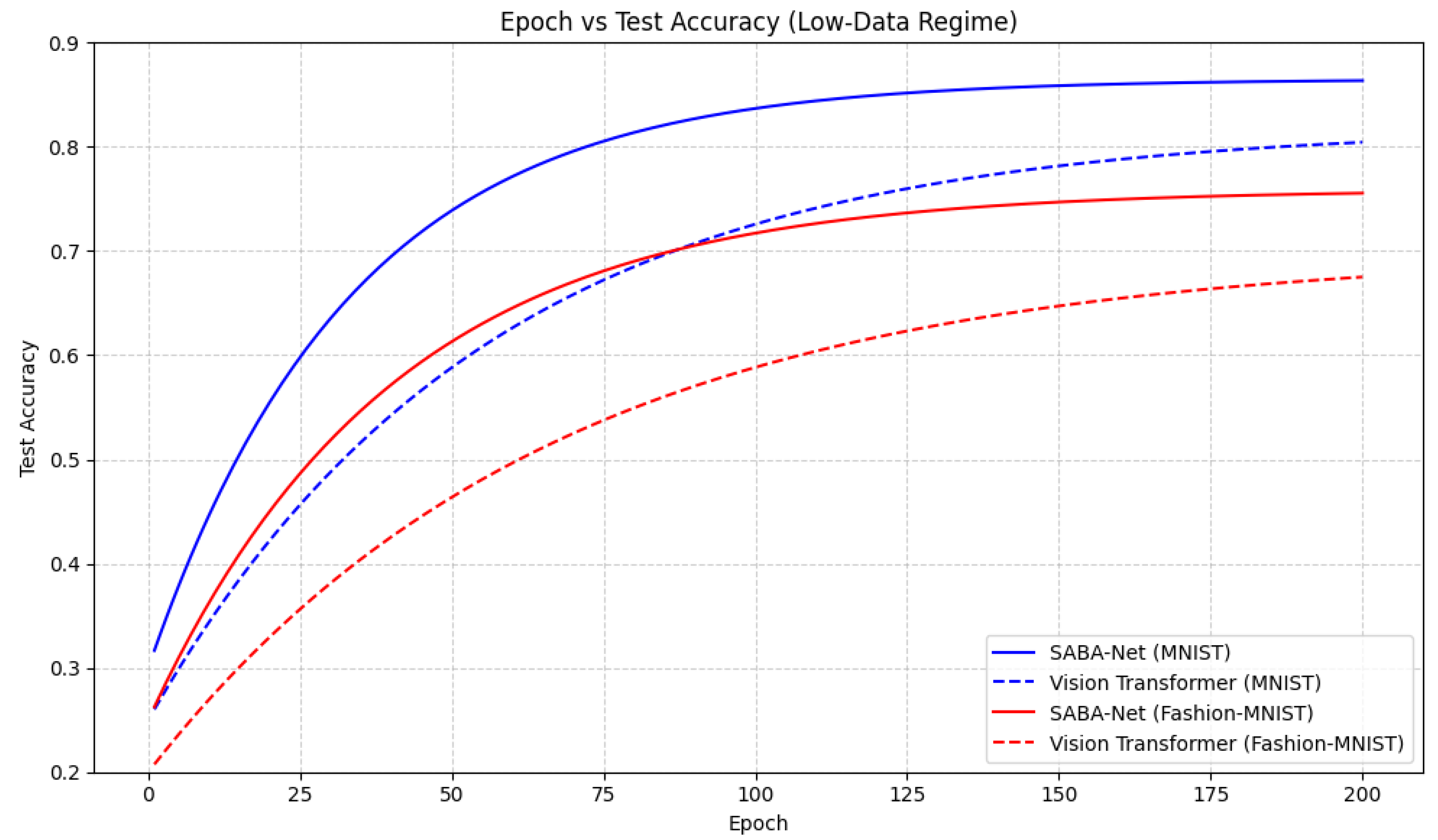

4. Results and Discussion

5. Conclusions

References

- He, K.; et al. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016, pp. 770–778.

- Szegedy, C.; et al. Rethinking the Inception architecture for computer vision. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016, pp. 2818–2826.

- Tan, M.; Le, Q. EfficientNet: Rethinking model scaling for convolutional neural networks. In Proceedings of the International Conference on Machine Learning. PMLR, 2019, pp. 6105–6114.

- Howard, A.; et al. Searching for MobileNetV3. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2019, pp. 1314–1324.

- Woo, S.; et al. ConvNeXt V2: Co-designing and Scaling ConvNets with Masked Autoencoders. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023, pp. 16133–16144.

- Dosovitskiy, A.; et al. An Image is Worth 16x16 Words: Transformers for Image Recognition at Scale. In Proceedings of the International Conference on Learning Representations (ICLR), 2021.

- Liu, Z.; et al. Swin Transformer: Hierarchical Vision Transformer using Shifted Windows. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2021.

- Chen, C.F.R.; et al. CrossViT: Cross-Attention Multi-Scale Vision Transformer for Image Classification. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2021.

- Touvron, H.; et al. DeiT III: Revenge of the ViT. In Proceedings of the European Conference on Computer Vision (ECCV), 2022.

- Zhang, W.; et al. A Light-weight Transformer-based Self-supervised Matching Network for Heterogeneous Images. arXiv preprint arXiv:2404.19311, 2024.

- Mehta, S.; Rastegari, M. MobileViT: Light-weight, General-purpose, and Mobile-friendly Vision Transformer. In Proceedings of the International Conference on Learning Representations (ICLR), 2022.

- Wu, H.; et al. CvT: Introducing convolutions to vision transformers. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2021, pp. 22–31.

- Dai, Z.; et al. CoAtNet: Marrying convolution and attention for all data sizes. In Advances in Neural Information Processing Systems (NeurIPS), 2021, Vol. 34, pp. 3965–3977.

- Tu, Z.; et al. MaxViT: Multi-axis vision transformer. In Proceedings of the European Conference on Computer Vision (ECCV), 2022, pp. 459–479.

- Graham, B.; et al. LeViT: A vision transformer in ConvNet’s clothing for faster inference. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2021, pp. 12259–12269.

| Dataset | Training Time (s) | Inference Time per Sample (ms) | Macro F1 Score |
|---|---|---|---|
| MNIST | 10.30 | 0.5003 | 0.8651 |
| Fashion-MNIST | 12.75 | 0.4894 | 0.7589 |

| Dataset | Model | Training Time (s) | Inference Time per Sample (ms) | Macro F1 Score |
|---|---|---|---|---|
| MNIST | SABA-Net | 10.30 | 0.5003 | 0.8651 |
| MNIST | ViT | 75.00 | 1.20 | 0.8100 |
| Fashion-MNIST | SABA-Net | 12.75 | 0.4894 | 0.7589 |
| Fashion-MNIST | ViT | 80.00 | 1.25 | 0.7311 |
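The relative differences implied by the SABA-Net and ViT rows in the comparison table can be derived with a few lines of arithmetic. The sketch below uses only the numbers reported in that table; the dictionary layout and the `compare` helper are illustrative.

```python
# (training time in s, inference time per sample in ms, macro F1), as reported.
results = {
    "MNIST": {"SABA-Net": (10.30, 0.5003, 0.8651), "ViT": (75.00, 1.20, 0.8100)},
    "Fashion-MNIST": {"SABA-Net": (12.75, 0.4894, 0.7589), "ViT": (80.00, 1.25, 0.7311)},
}


def compare(dataset):
    """Ratios of ViT cost to SABA-Net cost, and the macro F1 difference."""
    saba_train, saba_infer, saba_f1 = results[dataset]["SABA-Net"]
    vit_train, vit_infer, vit_f1 = results[dataset]["ViT"]
    return {
        "train_speedup": vit_train / saba_train,
        "inference_speedup": vit_infer / saba_infer,
        "f1_gain": saba_f1 - vit_f1,
    }


for name in results:
    summary = compare(name)
    print(name, {key: round(value, 4) for key, value in summary.items()})
```

On the reported figures, training is roughly 7.3 times faster on MNIST and 6.3 times faster on Fashion-MNIST, with macro F1 higher by about 0.055 and 0.028 respectively.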

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).