Submitted: 17 February 2026

Posted: 27 February 2026


Abstract

Keywords:

I. Introduction

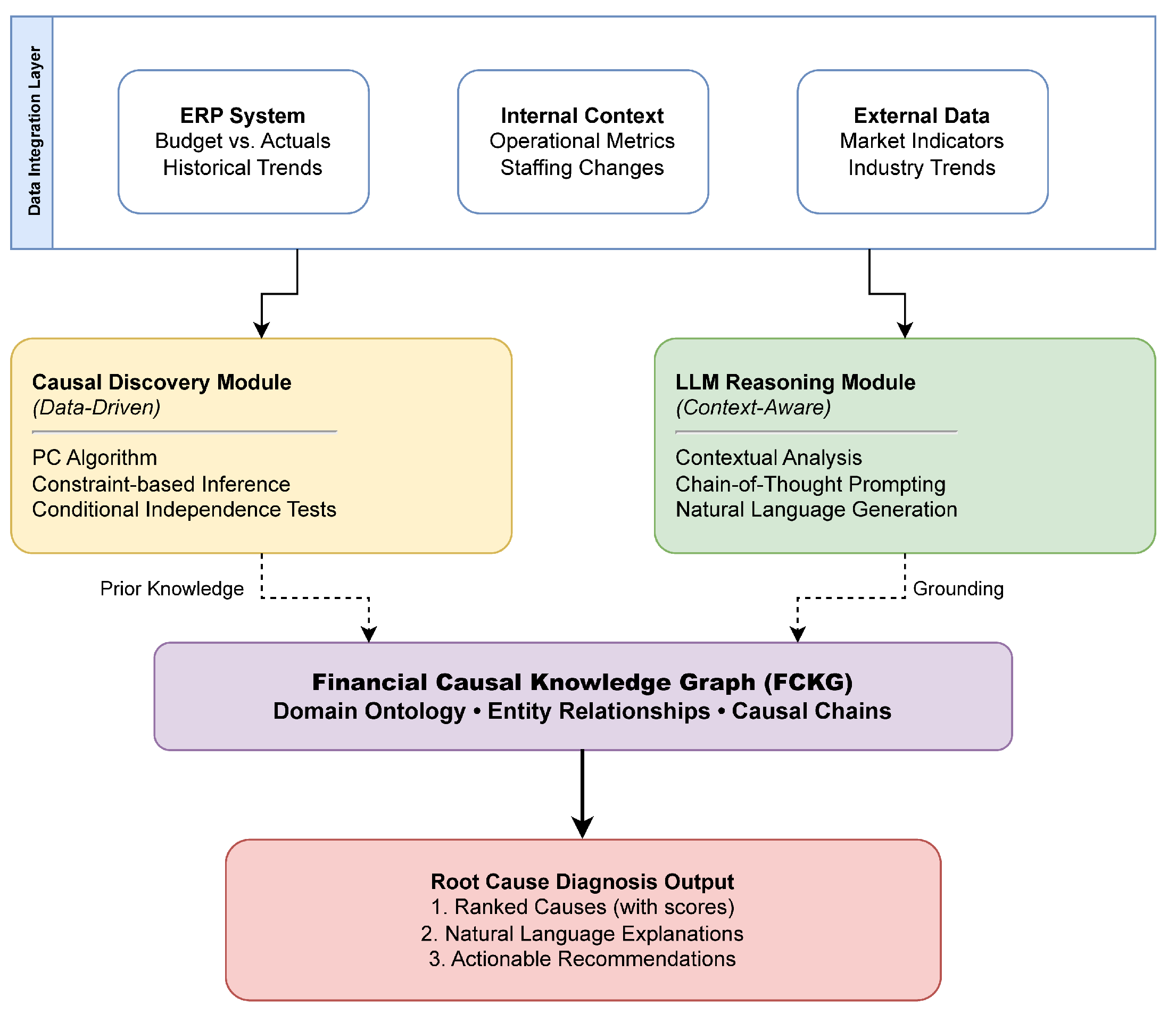

- A hybrid architecture integrating constraint-based causal discovery with LLM contextual reasoning for automated root cause diagnosis

- A Financial Causal Knowledge Graph that captures domain-specific causal relationships and serves as prior knowledge to address small-sample challenges

- Empirical evaluation on enterprise data demonstrating improved accuracy and explainability over baseline methods

- Ablation studies validating the necessity of each framework component

II. Related Work

A. Causal Inference and Discovery

B. LLMs for Causal Reasoning

C. Knowledge Graphs in Finance

D. AI in Financial Analysis

III. Methodology

A. Framework Overview

B. Data Integration Layer

C. Causal Discovery Module

D. LLM Reasoning Module

- Input: Variance, Causal Graph, Context

- Prompt Construction:

  - “Analyze the following budget variance...”

  - “Causal relationships: ” +

  - “Contextual factors: ” +

  - “Identify root causes following these causal paths...”

- LLM Query:

- Output: Structured explanation
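
The prompt construction step above can be sketched in Python as follows. This is a minimal illustration, not the authors' implementation: the names `CausalEdge` and `build_prompt`, the `cause -> effect` edge serialization, the example values, and any wording beyond the prompt fragments quoted in the algorithm are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CausalEdge:
    """One directed edge of the causal graph (hypothetical representation)."""
    cause: str
    effect: str


def build_prompt(variance: str, edges: list[CausalEdge], context: list[str]) -> str:
    """Assemble the four prompt parts named in the algorithm.

    The text after each quoted prefix is an assumption; the source elides
    the exact wording and the variables that are concatenated.
    """
    causal_text = "; ".join(f"{e.cause} -> {e.effect}" for e in edges)
    context_text = "; ".join(context)
    return "\n".join([
        f"Analyze the following budget variance: {variance}",
        f"Causal relationships: {causal_text}",
        f"Contextual factors: {context_text}",
        "Identify root causes following these causal paths.",
    ])


# Made-up inputs, for illustration only.
example_prompt = build_prompt(
    variance="marketing spend above budget",
    edges=[CausalEdge("ad_rates", "marketing_spend")],
    context=["seasonal campaign launch"],
)
print(example_prompt)
```

Keeping the causal relationships on their own line lets the final instruction point the model at explicit paths rather than leaving it to infer structure from the raw variance description.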

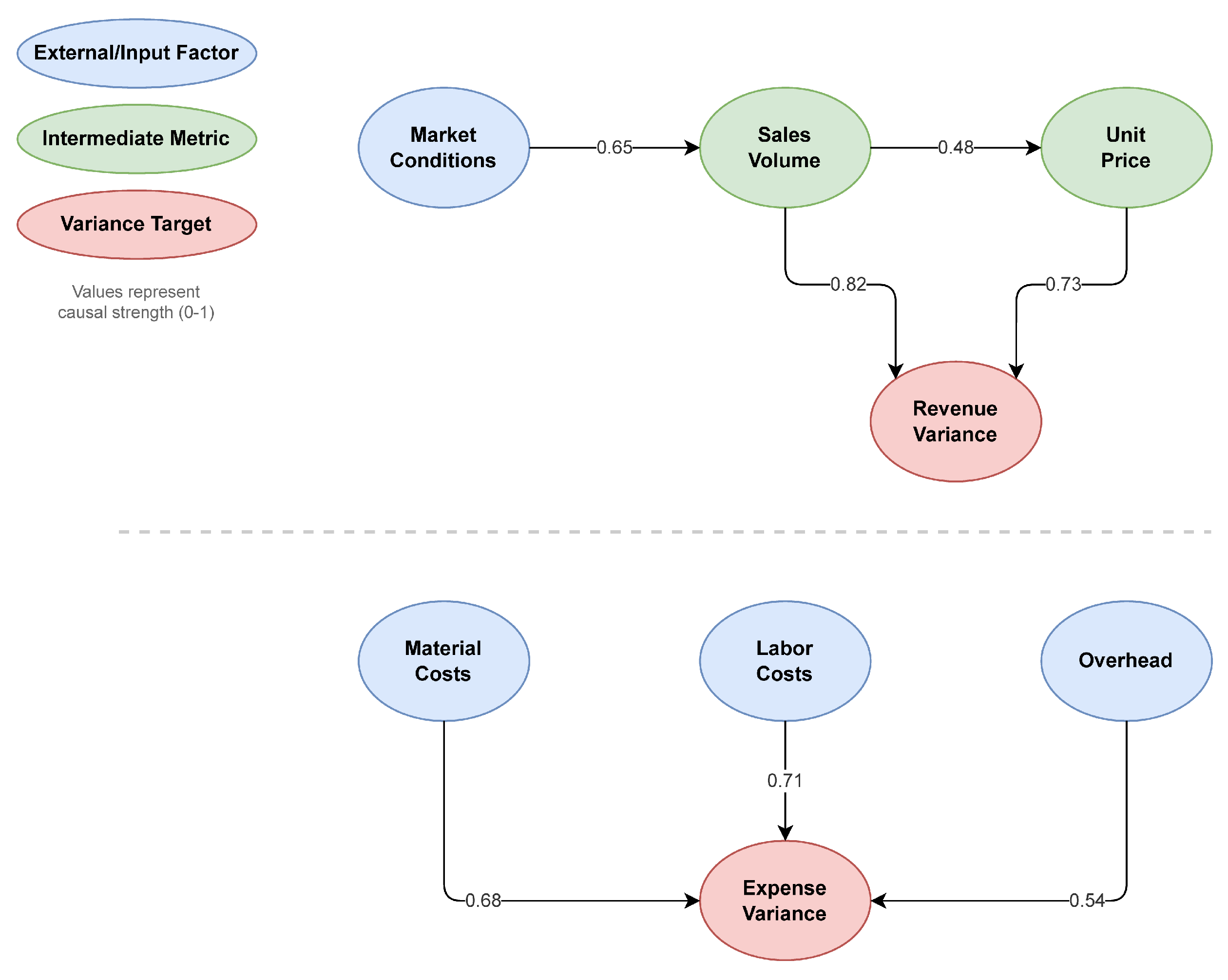

E. Financial Causal Knowledge Graph

F. Root Cause Ranking and Explanation

IV. Experiments and Results

A. Dataset and Experimental Setup

- Causal Discovery: causal-learn library [25], implementing the PC algorithm with background knowledge support

- LLM: GPT-4 API (gpt-4-0613) with temperature 0.3 to limit sampling variability and aid reproducibility

- Knowledge Graph: Neo4j graph database for FCKG storage
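
To make the idea of background knowledge concrete, the sketch below shows one way prior knowledge could constrain a set of candidate directed edges. It is a plain-Python illustration and deliberately does not use the causal-learn API. The function name `apply_background_knowledge`, the forbidden/required edge representation, and the variable names in the example are all assumptions; the paper does not specify how FCKG content is translated into constraints.

```python
Edge = tuple[str, str]  # (cause, effect)


def apply_background_knowledge(
    candidate_edges: set[Edge],
    forbidden: set[Edge],
    required: set[Edge],
) -> list[Edge]:
    """Drop edges the prior knowledge forbids and add edges it requires.

    Hypothetical helper: a stand-in for the constraint handling that a
    causal discovery library performs internally.
    """
    kept = {edge for edge in candidate_edges if edge not in forbidden}
    return sorted(kept | required)


# Made-up variables, for illustration only.
candidates = {
    ("headcount", "payroll_cost"),
    ("payroll_cost", "headcount"),  # implausible direction
    ("fx_rate", "material_cost"),
}
constrained = apply_background_knowledge(
    candidates,
    forbidden={("payroll_cost", "headcount")},
    required={("sales_volume", "revenue")},
)
print(constrained)
```

With few observations, independence tests are often unable to orient or even retain edges reliably, which is where constraints of this kind are most useful.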

B. Evaluation Metrics and Definitions

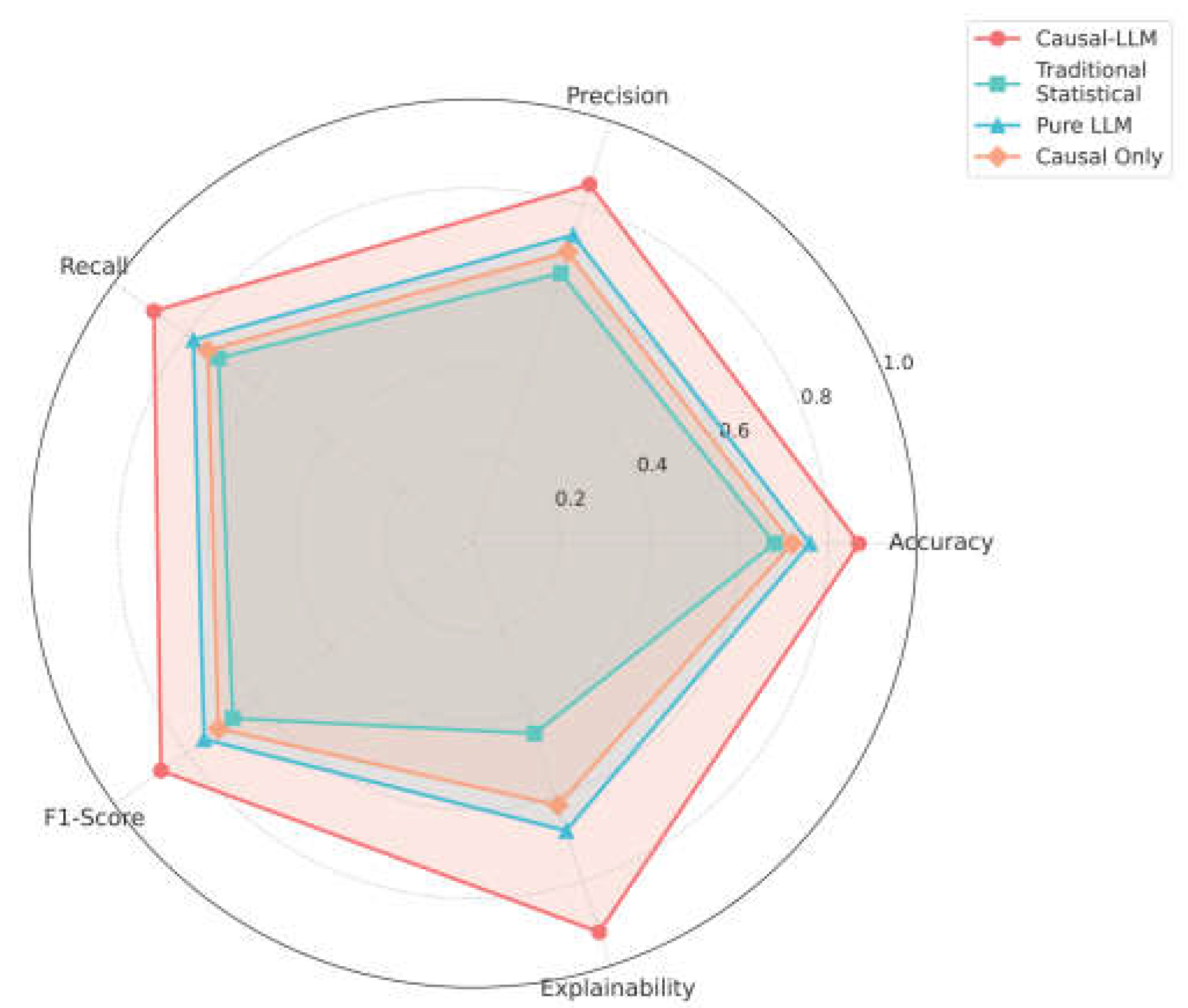

C. Baseline Comparisons

1) Traditional Statistical Analysis: Correlation analysis with manual expert interpretation

2) Pure LLM: GPT-4 with variance data and context but no causal graph

3) Causal-Only: PC algorithm output without LLM reasoning

4) Rule-Based System: Hand-crafted rules encoding common variance patterns

D. Main Results

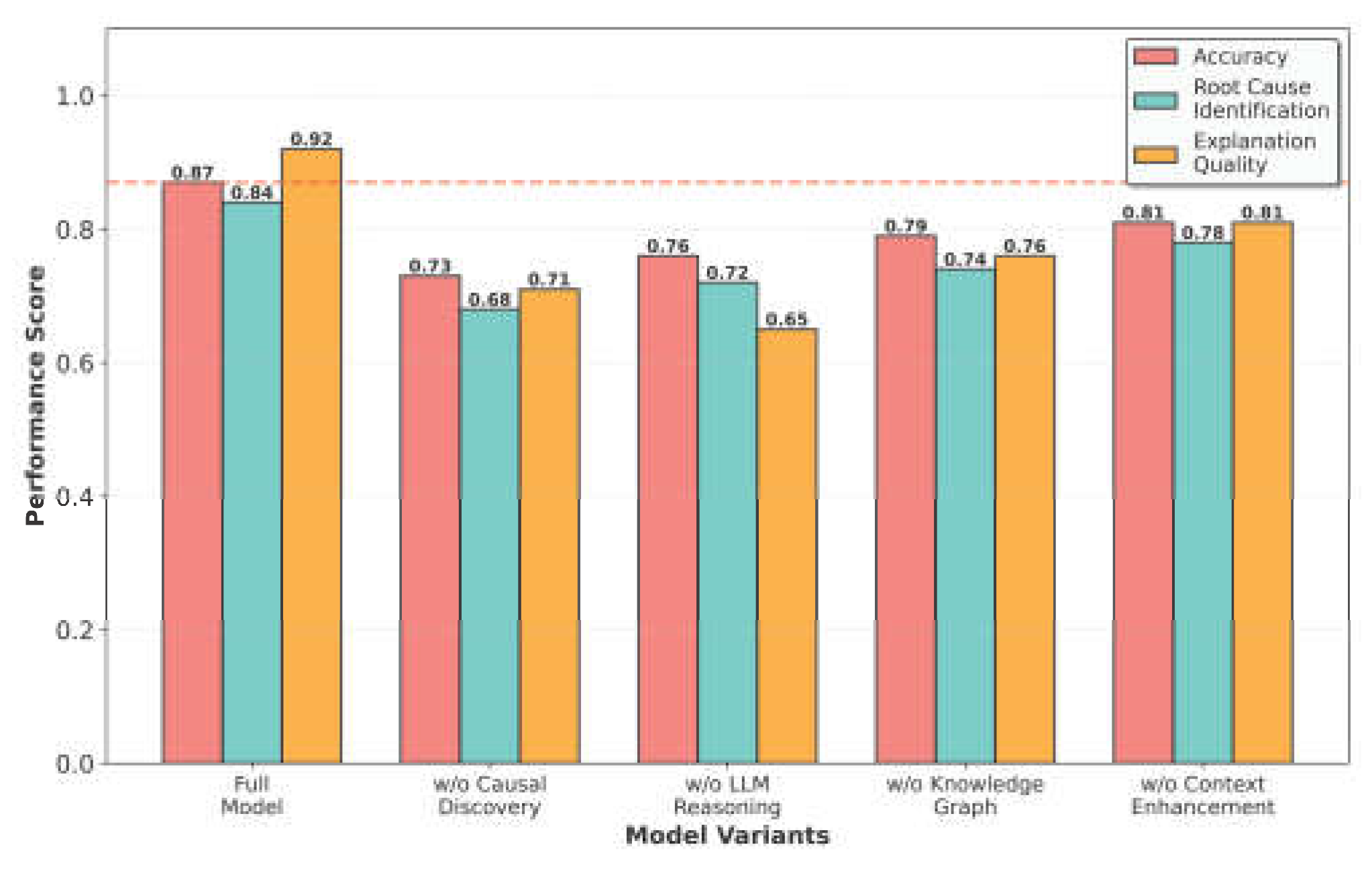

E. Ablation Study

- w/o Causal Discovery (0.73): Removing causal discovery reduces accuracy substantially because the system loses principled causal structure. This variant performs slightly worse than Pure LLM (0.76): integrating the knowledge graph without data-driven causal discovery can introduce noise, since the KG provides generic domain knowledge that may not align with the specific causal relationships in the current data distribution.

- w/o LLM Reasoning (0.76): Removing LLM reasoning reduces accuracy to 0.76 and explanation quality to 0.65, due to the loss of contextual understanding and natural language interpretation.

- w/o Knowledge Graph (0.79): Removing the knowledge graph reduces accuracy because domain constraints and prior knowledge are lost, which particularly affects small-sample scenarios.

- w/o Context Enhancement (0.81): Removing external contextual information reduces accuracy, demonstrating the value of incorporating market and operational context.
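
The size of each component's contribution can be read off by subtracting the ablated accuracy from the full Causal-LLM accuracy of 0.87 reported in the results table. The short script below does that arithmetic; the accuracy values come from the text, while the function and variable names are illustrative.

```python
FULL_ACCURACY = 0.87  # full Causal-LLM accuracy from the results table

ablation_accuracy = {
    "w/o Causal Discovery": 0.73,
    "w/o LLM Reasoning": 0.76,
    "w/o Knowledge Graph": 0.79,
    "w/o Context Enhancement": 0.81,
}


def accuracy_drops(full: float, ablated: dict[str, float]) -> dict[str, float]:
    """Return the absolute accuracy drop for each ablated variant."""
    return {name: round(full - acc, 2) for name, acc in ablated.items()}


drops = accuracy_drops(FULL_ACCURACY, ablation_accuracy)

# List the variants from largest to smallest drop.
for name, drop in sorted(drops.items(), key=lambda item: item[1], reverse=True):
    print(f"{name}: -{drop:.2f}")
```

The drops are 0.14, 0.11, 0.08, and 0.06 respectively, so causal discovery accounts for the largest single loss and context enhancement for the smallest.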

F. Qualitative Analysis

V. Discussion

A. Advantages of the Hybrid Approach

B. Limitations and Future Work

C. Broader Implications

VI. Conclusion

References

[1] P. Spirtes, C. N. Glymour and R. Scheines, Causation, Prediction, and Search, MIT Press, 2000.

[2] E. Kiciman, R. Ness, A. Sharma and C. Tan, “Causal reasoning and large language models: Opening a new frontier for causality,” Transactions on Machine Learning Research, 2023.

[3] N. Joshi, A. Saparov, Y. Wang and H. He, “LLMs are prone to fallacies in causal inference,” arXiv preprint arXiv:2406.12158, 2024.

[4] J. Pearl, Causality, Cambridge University Press, 2009.

[5] N. Park, F. Liu, P. Mehta, D. Cristofor, C. Faloutsos and Y. Dong, “EvoKG: Jointly modeling event time and network structure for reasoning over temporal knowledge graphs,” Proceedings of the Fifteenth ACM International Conference on Web Search and Data Mining, pp. 794-803, 2022.

[6] X. Zheng, B. Aragam, P. K. Ravikumar and E. P. Xing, “DAGs with no tears: Continuous optimization for structure learning,” Advances in Neural Information Processing Systems, vol. 31, 2018.

[7] M. Zečević, M. Willig, D. S. Dhami and K. Kersting, “Causal parrots: Large language models may talk causality but are not causal,” arXiv preprint arXiv:2308.13067, 2023.

[8] F. Feng, X. He, X. Wang, C. Luo, Y. Liu and T.-S. Chua, “Temporal relational ranking for stock prediction,” ACM Transactions on Information Systems, vol. 37, no. 2, pp. 1-30, 2019.

[9] Z. Liu, Z. Zhang and X. Zeng, “Risk identification and management through knowledge association: A financial event evolution knowledge graph approach,” Expert Systems with Applications, vol. 252, p. 123999, 2024.

[10] X. V. Li and F. Sanna Passino, “FinDKG: Dynamic knowledge graphs with large language models for detecting global trends in financial markets,” Proceedings of the 5th ACM International Conference on AI in Finance, pp. 573-581, 2024.

[11] Z. Xu and R. Ichise, “FinCaKG-Onto: the financial expertise depiction via causality knowledge graph and domain ontology,” Applied Intelligence, vol. 55, no. 6, pp. 1-17, 2025.

[12] H. Yang, B. Zhang, N. Wang, C. Guo, X. Zhang, L. Lin, J. Wang, T. Zhou, M. Guan and R. Zhang, “FinRobot: An open-source AI agent platform for financial applications using large language models,” arXiv preprint arXiv:2405.14767, 2024.

[13] W. J. Yeo, W. Van Der Heever, R. Mao, E. Cambria, R. Satapathy and G. Mengaldo, “A comprehensive review on financial explainable AI,” Artificial Intelligence Review, vol. 58, no. 6, pp. 1-49, 2025.

[14] Xie and W. C. Chang, “Deep Learning Approach for Clinical Risk Identification Using Transformer Modeling of Heterogeneous EHR Data,” arXiv preprint arXiv:2511.04158, 2025.

[15] K. Gao, Y. Hu, C. Nie and W. Li, “Deep Q-Learning-Based Intelligent Scheduling for ETL Optimization in Heterogeneous Data Environments,” arXiv preprint arXiv:2512.13060, 2025.

[16] H. Wang, C. Nie and C. Chiang, “Attention-Driven Deep Learning Framework for Intelligent Anomaly Detection in ETL Processes,” 2025.

[17] Z. Xu, K. Cao, Y. Zheng, M. Chang, X. Liang and J. Xia, “Generative Distribution Modeling for Credit Card Risk Identification under Noisy and Imbalanced Transactions,” 2025.

[18] R. Ying, J. Lyu, J. Li, C. Nie and C. Chiang, “Dynamic Portfolio Optimization with Data-Aware Multi-Agent Reinforcement Learning and Adaptive Risk Control,” 2025.

[19] Y. Ou, S. Huang, F. Wang, K. Zhou and Y. Shu, “Adaptive Anomaly Detection for Non-Stationary Time-Series: A Continual Learning Framework with Dynamic Distribution Monitoring,” 2025.

[20] Y. Wang, R. Fang, A. Xie, H. Feng and J. Lai, “Dynamic Anomaly Identification in Accounting Transactions via Multi-Head Self-Attention Networks,” arXiv preprint arXiv:2511.12122, 2025.

[21] Q. Gan, R. Ying, D. Li, Y. Wang, Q. Liu and J. Li, “Dynamic Spatiotemporal Causal Graph Neural Networks for Corporate Revenue Forecasting,” 2025.

[22] Y. Wu, Y. Qin, X. Su and Y. Lin, “Transformer-based risk monitoring for anti-money laundering with transaction graph integration,” Proceedings of the 2025 2nd International Conference on Digital Economy, Blockchain and Artificial Intelligence, pp. 388-393, 2025.

[23] R. Ying, Q. Liu, Y. Wang and Y. Xiao, “AI-Based Causal Reasoning over Knowledge Graphs for Data-Driven and Intervention-Oriented Enterprise Performance Analysis,” 2025.

[24] S. Li, Y. Wang, Y. Xing and M. Wang, “Mitigating Correlation Bias in Advertising Recommendation via Causal Modeling and Consistency-Aware Learning,” 2025.

[25] Y. Zheng, B. Huang, W. Chen, J. Ramsey, M. Gong, R. Cai, S. Shimizu, P. Spirtes and K. Zhang, “Causal-learn: Causal discovery in Python,” Journal of Machine Learning Research, vol. 25, no. 60, pp. 1-8, 2024.

| Method | Accuracy | Precision | Recall | F1 | Explanation Quality |
|---|---|---|---|---|---|
| Traditional Statistical Analysis | 0.68 (±0.03) | 0.64 (±0.04) | 0.71 (±0.03) | 0.67 (±0.03) | 0.45 (±0.05) |
| Pure LLM | 0.76 (±0.03) | 0.73 (±0.03) | 0.78 (±0.03) | 0.75 (±0.03) | 0.68 (±0.04) |
| Causal-Only | 0.72 (±0.03) | 0.69 (±0.04) | 0.74 (±0.03) | 0.71 (±0.03) | 0.62 (±0.04) |
| Rule-Based System | 0.65 (±0.03) | 0.61 (±0.04) | 0.68 (±0.03) | 0.64 (±0.03) | 0.51 (±0.05) |
| Causal-LLM | 0.87 (±0.02) | 0.85 (±0.02) | 0.89 (±0.02) | 0.87 (±0.02) | 0.92 (±0.03) |
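
As a quick consistency check on the table, F1 can be recomputed from the reported precision and recall using the standard harmonic-mean definition, F1 = 2PR / (P + R). The sketch below assumes that definition applies here. A reported F1 averaged over several runs need not equal the F1 of the averaged precision and recall exactly, so small differences would not indicate an error.

```python
# (precision, recall, reported F1) copied from the results table
results = {
    "Traditional Stat.": (0.64, 0.71, 0.67),
    "Pure LLM": (0.73, 0.78, 0.75),
    "Causal-Only": (0.69, 0.74, 0.71),
    "Rule-Based": (0.61, 0.68, 0.64),
    "Causal-LLM": (0.85, 0.89, 0.87),
}


def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


for method, (precision, recall, reported) in results.items():
    computed = f1_score(precision, recall)
    print(f"{method}: computed F1 = {computed:.2f}, reported F1 = {reported:.2f}")
```

For all five methods the recomputed value rounds to the reported F1 at two decimal places.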

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).