A. Dataset

The dataset used in this study is derived from the Google Cloud ETL Benchmark Dataset (GC-ETL-Bench), which is a public benchmark specifically designed to evaluate distributed data processing and resource scheduling optimization. The dataset contains heterogeneous ETL task samples that cover the extraction, transformation, and loading processes of structured, semi-structured, and unstructured data. The task scenarios include typical ETL workflows such as data cleaning, log parsing, file aggregation, and metric computation. These tasks accurately reflect the complex resource competition and task dependencies in cloud and edge computing environments. The dataset integrates distributed execution logs with node monitoring records, providing multi-dimensional features such as task execution time, CPU utilization, IO bandwidth usage, task priority, and resource status. This offers a high-quality environmental representation for training reinforcement learning models.

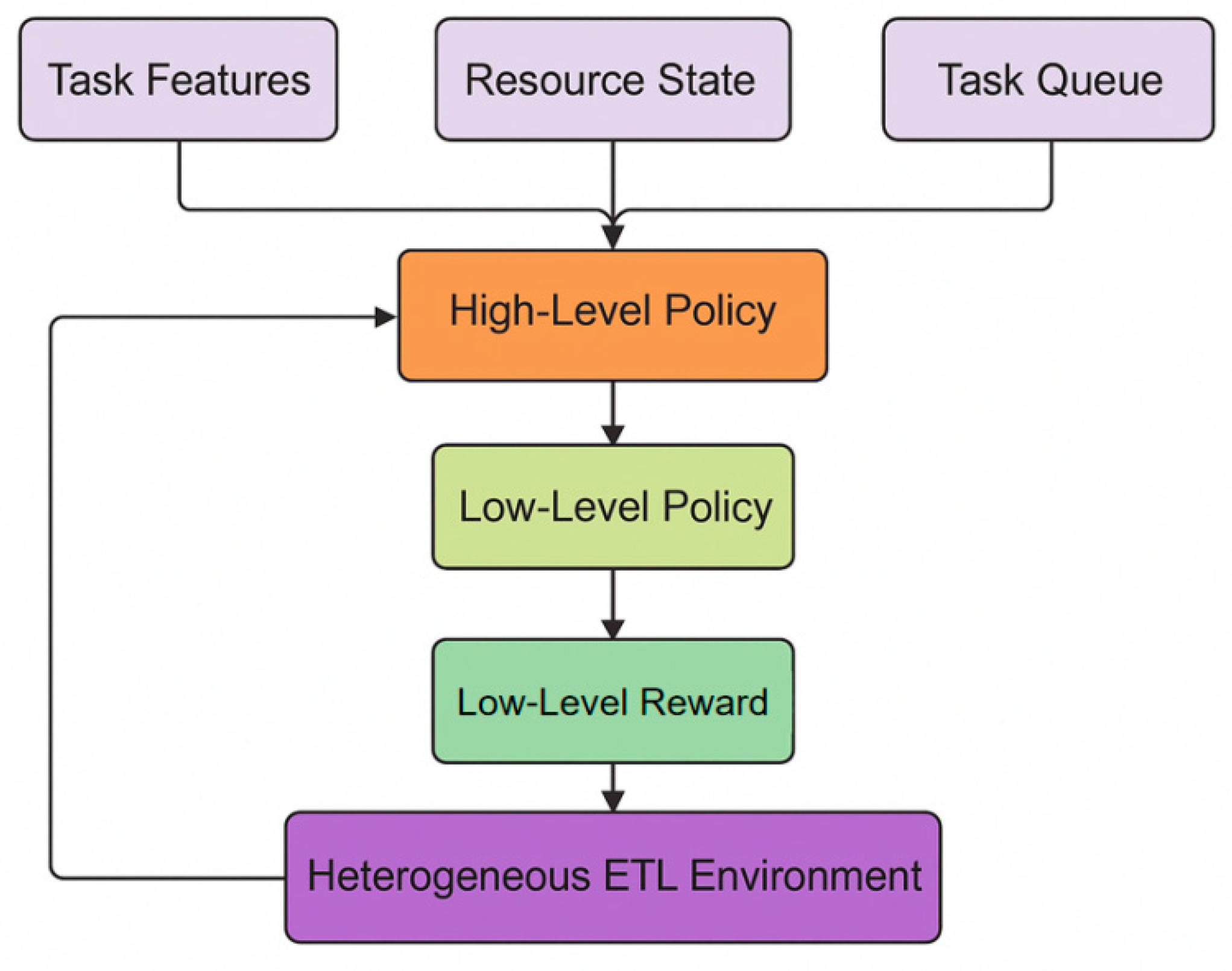

In terms of data organization, GC-ETL-Bench includes more than 50,000 task samples distributed across five categories of computing nodes. The node types include high-performance CPU instances, memory-optimized instances, IO-intensive nodes, GPU computing nodes, and low-power edge nodes. Each sample records the task input size, dependency structure, runtime state, resource allocation ratio, and result feedback metrics. Task dependencies are stored in the form of Directed Acyclic Graphs (DAGs), allowing the simulation of both parallel and sequential executions. This structured design facilitates hierarchical decision modeling and enables high- and low-level policies to learn and decompose different task stages, ensuring scalability and robustness of the model in complex scheduling scenarios.
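To illustrate how a DAG of task dependencies exposes both parallel and sequential execution, the following minimal sketch groups tasks into levels so that tasks in the same level have no unmet dependencies and can run concurrently. The task names and the dictionary layout are hypothetical and are not taken from GC-ETL-Bench.

```python
from collections import defaultdict


def execution_levels(dependencies):
    """Group tasks into levels; tasks within one level can run in parallel.

    `dependencies` maps every task name to the list of tasks it depends on.
    Every task, including those without dependencies, must appear as a key.
    """
    indegree = {task: len(parents) for task, parents in dependencies.items()}
    children = defaultdict(list)
    for task, parents in dependencies.items():
        for parent in parents:
            children[parent].append(task)

    levels = []
    ready = sorted(task for task, degree in indegree.items() if degree == 0)
    while ready:
        levels.append(ready)
        next_ready = []
        for task in ready:
            for child in children[task]:
                indegree[child] -= 1
                if indegree[child] == 0:
                    next_ready.append(child)
        ready = sorted(next_ready)

    # Tasks that never became ready are part of a cycle.
    if sum(len(level) for level in levels) != len(dependencies):
        raise ValueError("dependency graph contains a cycle")
    return levels


# Hypothetical workflow: two extraction tasks feed a cleaning step,
# which in turn feeds an aggregation step.
example = {
    "extract_logs": [],
    "extract_files": [],
    "clean": ["extract_logs", "extract_files"],
    "aggregate": ["clean"],
}
levels = execution_levels(example)
```

In this example the two extraction tasks land in the first level and can run in parallel, while cleaning and aggregation must follow sequentially.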

In addition, the dataset includes multi-dimensional resource monitoring data such as CPU utilization curves, memory usage variations, IO latency distributions, and network traffic statistics. These features are used to build a dynamic feedback mechanism for the reinforcement learning environment. By using them as state inputs, the model can effectively capture system load fluctuations, task coupling effects, and performance differences across heterogeneous nodes. This enhances the environmental awareness of the hierarchical reinforcement learning model. The diversity and realism of the GC-ETL-Bench dataset make it an ideal benchmark for evaluating ETL resource scheduling algorithms under dynamic and heterogeneous conditions. It provides a solid data foundation for model design and policy optimization in this study.
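As a rough illustration of how such monitoring features could be turned into a state input, the sketch below flattens per-node snapshots and the queue length into one bounded vector. The field names, scaling caps, and vector layout are assumptions made for this example; the study does not specify its exact state encoding.

```python
from dataclasses import dataclass


@dataclass
class NodeSnapshot:
    # Hypothetical per-node monitoring record.
    cpu_util: float       # fraction in [0, 1]
    mem_util: float       # fraction in [0, 1]
    io_latency_ms: float  # raw latency in milliseconds
    net_mbps: float       # raw network throughput


def build_state(nodes, pending_tasks,
                latency_cap_ms=100.0, net_cap_mbps=1000.0, queue_cap=50):
    """Flatten node snapshots and queue length into a vector with entries in [0, 1].

    The caps are illustrative constants used to bound raw measurements.
    """
    state = []
    for node in nodes:
        state.extend([
            node.cpu_util,
            node.mem_util,
            min(node.io_latency_ms / latency_cap_ms, 1.0),
            min(node.net_mbps / net_cap_mbps, 1.0),
        ])
    state.append(min(pending_tasks / queue_cap, 1.0))
    return state


nodes = [
    NodeSnapshot(cpu_util=0.80, mem_util=0.55, io_latency_ms=12.0, net_mbps=240.0),
    NodeSnapshot(cpu_util=0.35, mem_util=0.20, io_latency_ms=150.0, net_mbps=90.0),
]
state = build_state(nodes, pending_tasks=10)
```

Bounding every entry keeps features with very different raw scales, such as latency and utilization, comparable when they are fed to a policy network.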

C. Experimental Results

This paper first conducts a comparative experiment; the results are shown in Table 1.

As shown in Table 1, traditional algorithms and reinforcement learning algorithms differ significantly on several performance metrics for dynamic resource scheduling of heterogeneous ETL tasks. Traditional methods such as AWR and MC rely on fixed rules and static allocation strategies. They perform poorly in terms of Average Task Completion Time (ATCT) and Makespan, reaching 124.7 and 321.5, respectively. This indicates that in complex and dynamic resource environments, static methods cannot respond promptly to fluctuations in task load and resource availability, resulting in low overall system efficiency. In addition, these two methods show a high Average Waiting Time (AWT), which suggests that resources sit idle while tasks remain blocked during scheduling. Their Load Balancing Index (LBI) values are only 0.72 and 0.76, reflecting a lack of global coordination in their scheduling strategies.
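For readers who want to reproduce metrics of this kind, the following sketch computes ATCT, AWT, Makespan, and a load balancing score from a small schedule. The text above does not give formal definitions, so the formulas here are assumptions: ATCT is the mean of finish time minus arrival time, AWT is the mean of start time minus arrival time, Makespan is the latest finish minus the earliest arrival, and LBI is approximated by Jain's fairness index over per-node busy time (1.0 means perfectly balanced). The sample data is invented.

```python
def scheduling_metrics(tasks, node_busy_time):
    """Compute illustrative scheduling metrics.

    tasks: list of (arrival, start, finish) tuples, one per task.
    node_busy_time: total busy time per node.
    """
    n = len(tasks)
    atct = sum(finish - arrival for arrival, _, finish in tasks) / n
    awt = sum(start - arrival for arrival, start, _ in tasks) / n
    makespan = max(f for _, _, f in tasks) - min(a for a, _, _ in tasks)

    # Jain's fairness index as an assumed stand-in for the LBI.
    total = sum(node_busy_time)
    squares = sum(b * b for b in node_busy_time)
    lbi = 1.0 if squares == 0 else total ** 2 / (len(node_busy_time) * squares)

    return {"ATCT": atct, "AWT": awt, "Makespan": makespan, "LBI": lbi}


# Invented schedule: three tasks on two nodes.
tasks = [(0, 0, 4), (0, 2, 6), (1, 4, 9)]
metrics = scheduling_metrics(tasks, node_busy_time=[8, 4])
```

Under these assumed definitions, lower ATCT, AWT, and Makespan are better, while a higher LBI indicates a more even load across nodes, which matches the direction of the comparisons in Table 1.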

With the introduction of reinforcement learning methods, system performance improves significantly. Algorithms such as Q-learning, DQN, and DDQN continuously update their policies through interaction with the environment, effectively reducing task waiting and execution times. Specifically, DDQN reduces ATCT and AWT by about 25% to 30% compared with traditional methods, and shortens the Makespan to 262.8. This demonstrates better adaptability and stability in decision-making. However, these single-layer reinforcement learning models still face limitations in coordinating global and local decisions. They struggle to balance multi-node resource competition and multi-stage task dependencies. As a result, although LBI improves, it does not reach the optimal level, with the highest value at 0.86.

Furthermore, the A3C algorithm introduces a parallel learning mechanism that allows multiple agents to update policies collaboratively in a shared environment, leading to further improvement in overall efficiency. The ATCT of A3C decreases to 84.3, AWT drops to 26.7, and LBI increases to 0.88. This indicates that asynchronous policy updates enhance model stability and generalization to some extent. However, its decision-making structure remains single-layered and lacks sufficient ability to capture hierarchical dependencies across multi-stage tasks. As a result, it may still encounter resource contention and local optimality issues when handling dynamic scheduling of diverse ETL tasks.

In contrast, the hierarchical reinforcement learning model proposed in this study (Ours) achieves the best performance across all metrics. ATCT decreases to 72.5, Makespan falls to 231.2, and AWT drops to 21.4. Meanwhile, the LBI increases to 0.93, indicating efficient hierarchical coordination between global scheduling and local control. The high-level policy captures global features of task queues and resource states, while the low-level policy performs fine-grained control over node resources. Together they improve both task execution time and load balancing. Overall, the results support the effectiveness and generality of the hierarchical decision structure in heterogeneous ETL environments and indicate the practical potential of the proposed method in complex data scheduling scenarios.
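The size of the gain over the strongest baseline can be checked with simple arithmetic. The sketch below uses only the A3C and proposed-model values quoted in the text; the helper name is chosen for this example.

```python
def relative_reduction(baseline, proposed):
    """Percentage reduction of `proposed` relative to `baseline`."""
    return (baseline - proposed) / baseline * 100.0


# Values quoted in the text for A3C and the proposed model.
a3c = {"ATCT": 84.3, "AWT": 26.7}
ours = {"ATCT": 72.5, "AWT": 21.4}

reductions = {
    metric: round(relative_reduction(a3c[metric], ours[metric]), 1)
    for metric in a3c
}
```

Relative to A3C, the proposed model reduces ATCT by about 14.0% and AWT by about 19.9%, while the LBI rises by 0.05 in absolute terms (0.88 to 0.93).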

The hyperparameter sensitivity results are shown in Table 2.

As shown in Table 2, the learning rate has a significant impact on the performance of the hierarchical reinforcement learning model in heterogeneous ETL task scheduling. When the learning rate is high, such as 0.004, the model tends to produce unstable policy updates during training, resulting in large fluctuations in scheduling outcomes. ATCT and Makespan are both relatively high, reaching 94.2 and 267.8, respectively. This indicates that a high learning rate causes over-exploration and insufficient convergence, making it difficult for the model to form stable scheduling strategies. In addition, the higher AWT and lower LBI suggest imbalanced resource utilization, showing that task competition has not been effectively coordinated.

As the learning rate gradually decreases, the model shows steady improvement across all metrics. When the learning rate is set to 0.002, ATCT decreases to 76.9, AWT decreases to 22.9, and LBI increases to 0.91. This demonstrates that the collaborative effect between task allocation and resource balancing becomes significantly stronger. These results indicate that moderately reducing the learning rate enhances the model's convergence stability in complex environments, allowing both high-level and low-level policies to capture task dependencies and resource states more accurately. As a result, the model achieves more efficient task scheduling and dynamic resource allocation.

When the learning rate is further reduced to 0.001, the model reaches its best performance. ATCT and AWT decrease to 72.5 and 21.4, and the LBI increases to 0.93. This shows that the model achieves its best balance between global planning and local control at this setting. A lower learning rate helps the hierarchical policies perform fine-grained optimization in complex heterogeneous environments and avoids instability caused by overly rapid policy updates. Overall, these results show that the hierarchical reinforcement learning model is sensitive to the learning rate yet performs stably once it is set appropriately. They also demonstrate that properly setting the learning rate is crucial for achieving stable and efficient ETL task scheduling.

The optimizer comparison results are shown in Table 3.

As shown in Table 3, different optimizers have a significant impact on the convergence behavior and scheduling performance of the hierarchical reinforcement learning model in heterogeneous ETL task scheduling. AdaGrad achieves a rapid loss reduction during early iterations, but its effective learning rate decreases over time, causing the model to fall into local optima in later stages. This results in relatively high ATCT and Makespan values, indicating that the optimizer lacks sustained exploration ability in complex and multi-stage tasks. In contrast, SGD shows better stability in global optimization but converges more slowly. Its improvement in task response efficiency is limited, and the LBI value of 0.88 reflects a slight imbalance in resource utilization across nodes. Overall, these two optimizers struggle to balance learning stability and decision flexibility in dynamic task environments.
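The shrinking step size of AdaGrad follows directly from its update rule, which divides the base learning rate by the square root of the accumulated squared gradients. The sketch below traces that effective step size for a single scalar parameter; the base learning rate and the constant gradient sequence are illustrative assumptions, not settings from the experiments.

```python
import math


def adagrad_effective_lr(base_lr, gradients, eps=1e-8):
    """Return the effective per-step learning rate AdaGrad applies to one parameter."""
    accumulated = 0.0
    rates = []
    for g in gradients:
        accumulated += g * g  # squared gradients are summed and never decay
        rates.append(base_lr / (math.sqrt(accumulated) + eps))
    return rates


# Illustrative run: 100 steps with a constant gradient of 1.0.
rates = adagrad_effective_lr(base_lr=0.1, gradients=[1.0] * 100)
```

Because the accumulator only grows, the effective rate falls from roughly 0.1 at the first step to roughly 0.01 at step 100, which is consistent with the loss of late-stage progress described above.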

With Adam-family optimizers, model performance improves significantly. The Adam optimizer achieves smoother gradient updates by combining adaptive learning rates with momentum. It outperforms AdaGrad and SGD in terms of ATCT, AWT, and LBI, demonstrating its ability to capture high-dimensional task dependencies and resource variation patterns more effectively. Furthermore, AdamW adds decoupled weight decay on top of Adam, which reduces overfitting and enhances policy generalization in complex environments. Its ATCT and AWT decrease to 72.5 and 21.4, while LBI increases to 0.93, indicating that the model achieves a better balance between global scheduling and local resource control. These results show that AdamW facilitates stable updates in the hierarchical policy network, allowing the model to maintain efficient task execution and strong load balancing in heterogeneous resource allocation.
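The difference between Adam and AdamW can be seen in a single update step. The following sketch implements one AdamW step for a scalar parameter in plain Python, with the weight decay applied directly to the parameter rather than being added to the gradient. The hyperparameter defaults are common illustrative values and are not claimed to be those used in the experiments.

```python
import math


def adamw_step(param, grad, m, v, t,
               lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8, weight_decay=1e-2):
    """Apply one AdamW update to a scalar parameter.

    t is the 1-based step count. Returns the updated (param, m, v).
    """
    # Decoupled weight decay: shrink the parameter directly.
    param = param * (1.0 - lr * weight_decay)

    # Standard Adam moment estimates with bias correction.
    m = beta1 * m + (1.0 - beta1) * grad
    v = beta2 * v + (1.0 - beta2) * grad * grad
    m_hat = m / (1.0 - beta1 ** t)
    v_hat = v / (1.0 - beta2 ** t)

    param = param - lr * m_hat / (math.sqrt(v_hat) + eps)
    return param, m, v


# With a zero gradient, only the weight decay term changes the parameter.
decayed, _, _ = adamw_step(param=1.0, grad=0.0, m=0.0, v=0.0, t=1)

# With weight decay disabled, the step reduces to plain Adam.
plain, _, _ = adamw_step(param=1.0, grad=1.0, m=0.0, v=0.0, t=1, weight_decay=0.0)
```

Keeping the decay outside the adaptive scaling means every weight is shrunk at the same relative rate regardless of its gradient history, which is the regularization effect the paragraph above attributes to AdamW.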

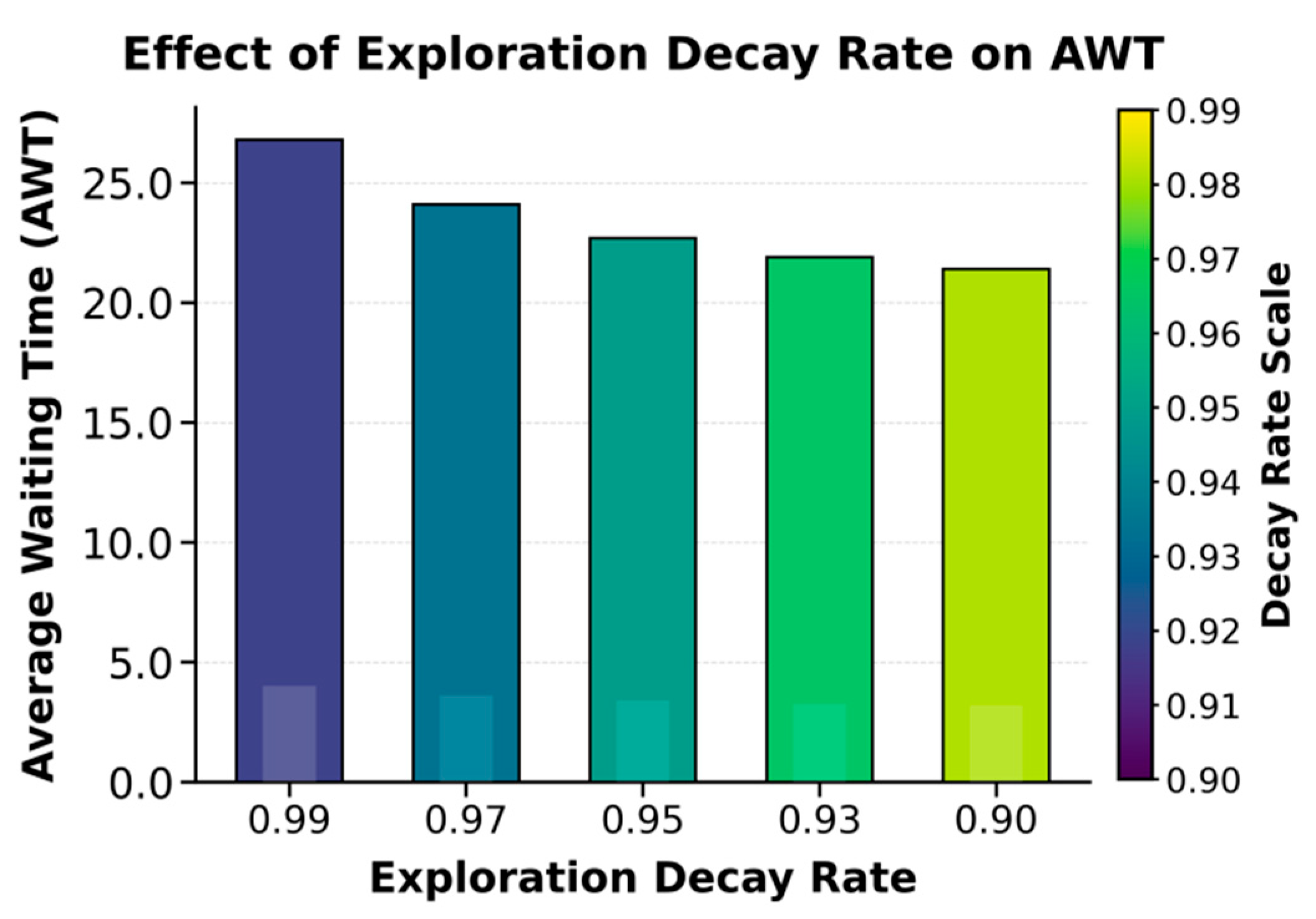

This paper also examines the impact of the exploration rate decay factor on the Average Waiting Time (AWT); the results are shown in Figure 2.

The experimental results show that the exploration rate decay factor has a significant impact on the performance of the hierarchical reinforcement learning model in heterogeneous ETL task scheduling. When the decay factor is large, such as 0.99, the model maintains a high level of exploration during training. This leads to frequent but unstable policy updates, resulting in a higher Average Waiting Time (AWT) of 26.8. This indicates that excessive exploration introduces randomness in task allocation and resource selection, making it difficult for the model to effectively use historical experience to achieve efficient scheduling. As the decay factor decreases, the model gradually learns more stable strategies, and AWT drops significantly to 21.4. This shows that an appropriate balance between exploration and exploitation helps improve scheduling determinism and execution efficiency.
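To make the role of the decay factor concrete, the sketch below generates a multiplicative exploration schedule of the kind commonly used with epsilon-greedy policies. The study does not state its exact exploration mechanism, so the schedule form, the starting value, the floor, and the number of steps are all assumptions.

```python
def epsilon_schedule(decay, steps, eps_start=1.0, eps_min=0.01):
    """Return the exploration rate used at each step under multiplicative decay."""
    eps = eps_start
    schedule = []
    for _ in range(steps):
        schedule.append(eps)
        eps = max(eps_min, eps * decay)  # never drop below the floor
    return schedule


# Compare a slow decay (0.99) with a faster one (0.90) over 50 steps.
slow = epsilon_schedule(decay=0.99, steps=50)
fast = epsilon_schedule(decay=0.90, steps=50)
```

After 50 steps the schedule with a factor of 0.99 still explores more than 60% of the time, whereas the schedule with a factor of 0.90 has already reached the floor. This is one way to see why a large decay factor keeps scheduling decisions noisy for longer.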

Further analysis shows that when the exploration rate decay factor is set between 0.90 and 0.93, the model achieves a good balance between global search and local optimization. In this case, the high-level policy in the hierarchical structure can better identify task priorities and resource dependencies, while the low-level policy can quickly adjust specific resource allocation strategies in a stable environment. This effectively reduces task queueing time. The results demonstrate that moderate exploration decay helps the model adapt to dynamic resource states and converge faster in complex heterogeneous environments. It also improves response time and system stability in multi-task parallel scenarios.

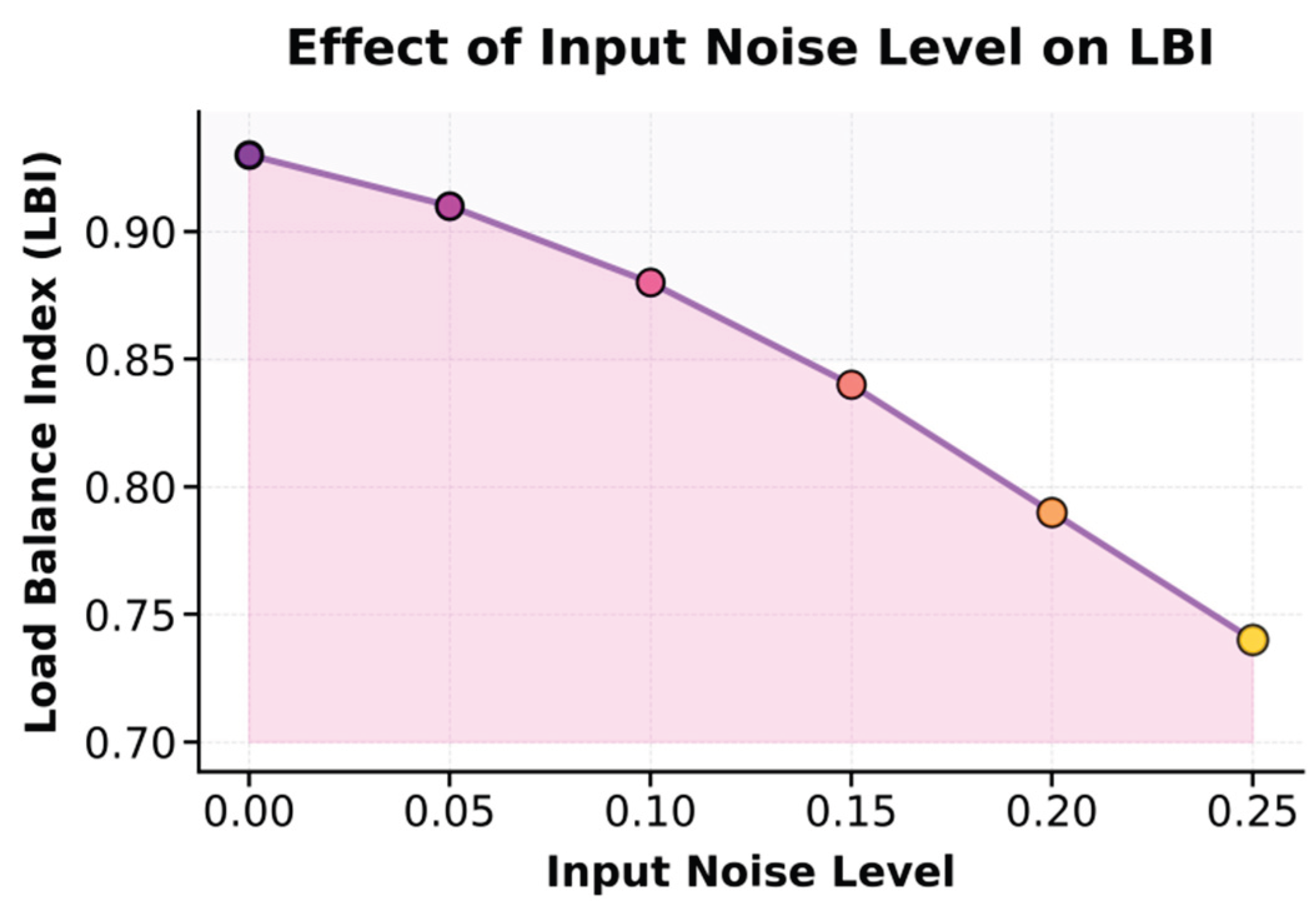

This paper also examines the sensitivity of the Load Balancing Index (LBI) to the input data noise level; the results are shown in Figure 3.

The experimental results show that the level of input data noise has a significant impact on the load balancing performance (LBI) of the hierarchical reinforcement learning model in heterogeneous ETL task scheduling. As the input noise increases, the model's LBI gradually decreases, dropping from 0.93 with no noise to 0.74 at a noise level of 0.25. This indicates that when input features are disturbed, the stability of the model's resource allocation strategy is disrupted. Task scheduling tends to favor certain nodes, leading to overall system load imbalance. Higher noise interferes with the high-level policy's perception of task priorities and abstraction of resource features, causing unstable decisions during global scheduling and affecting fine-grained resource allocation in lower-level execution modules.
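A noise sensitivity test of this kind can be sketched by perturbing the state features before they reach the policy. The text does not specify the noise model, so the example below assumes zero-mean Gaussian noise whose standard deviation equals the noise level, applied to features already scaled to [0, 1]. The feature values are invented.

```python
import random


def add_feature_noise(state, noise_level, rng):
    """Perturb each feature with zero-mean Gaussian noise and clip to [0, 1]."""
    return [
        min(1.0, max(0.0, x + rng.gauss(0.0, noise_level)))
        for x in state
    ]


rng = random.Random(0)  # fixed seed so the perturbation is repeatable
clean = [0.80, 0.55, 0.12, 0.24]

noisy = add_feature_noise(clean, noise_level=0.25, rng=rng)
unchanged = add_feature_noise(clean, noise_level=0.0, rng=rng)
```

Sweeping `noise_level` over a grid such as 0.00 to 0.25 and recording the LBI at each setting would yield a curve comparable in form to the one discussed here.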

Further analysis shows that when the noise level is low, between 0.00 and 0.10, the model maintains an LBI above 0.88. This suggests that the model retains some robustness and adaptive scheduling capability under mild perturbations. However, when the noise level exceeds 0.15, LBI drops sharply, indicating that accumulated noise disrupts the consistency between task state estimation and resource feedback. This weakens the ability of hierarchical reinforcement learning to transfer effective information across multi-stage tasks. These findings confirm the model's sensitivity to input quality in complex data environments. They also indicate that practical ETL systems need input noise suppression, feature normalization, or steady-state encoding mechanisms to enhance the robustness of the policy network and ensure sustained, efficient, and balanced scheduling decisions in dynamic heterogeneous resource environments.
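As one possible form of the feature normalization mentioned above, the sketch below keeps running statistics for a single feature with Welford's online algorithm and converts new observations to z-scores. It is an illustrative technique rather than the mechanism used in this study, and the latency values are invented.

```python
import math


class RunningNormalizer:
    """Online z-score normalization for one feature using Welford's algorithm."""

    def __init__(self, eps=1e-8):
        self.count = 0
        self.mean = 0.0
        self.m2 = 0.0  # running sum of squared deviations from the mean
        self.eps = eps

    def update(self, x):
        self.count += 1
        delta = x - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (x - self.mean)

    def normalize(self, x):
        # With fewer than two samples the variance is undefined.
        if self.count < 2:
            return 0.0
        std = math.sqrt(self.m2 / self.count)
        return (x - self.mean) / (std + self.eps)


# Invented IO latency readings in milliseconds.
normalizer = RunningNormalizer()
for latency in [10.0, 12.0, 14.0]:
    normalizer.update(latency)
z = normalizer.normalize(14.0)
```

Normalizing against running statistics keeps the scale of each input stable as load patterns drift, which limits how far a noisy measurement can push the policy's inputs.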