Submitted:

24 February 2026

Posted:

27 February 2026


Abstract

Keywords:

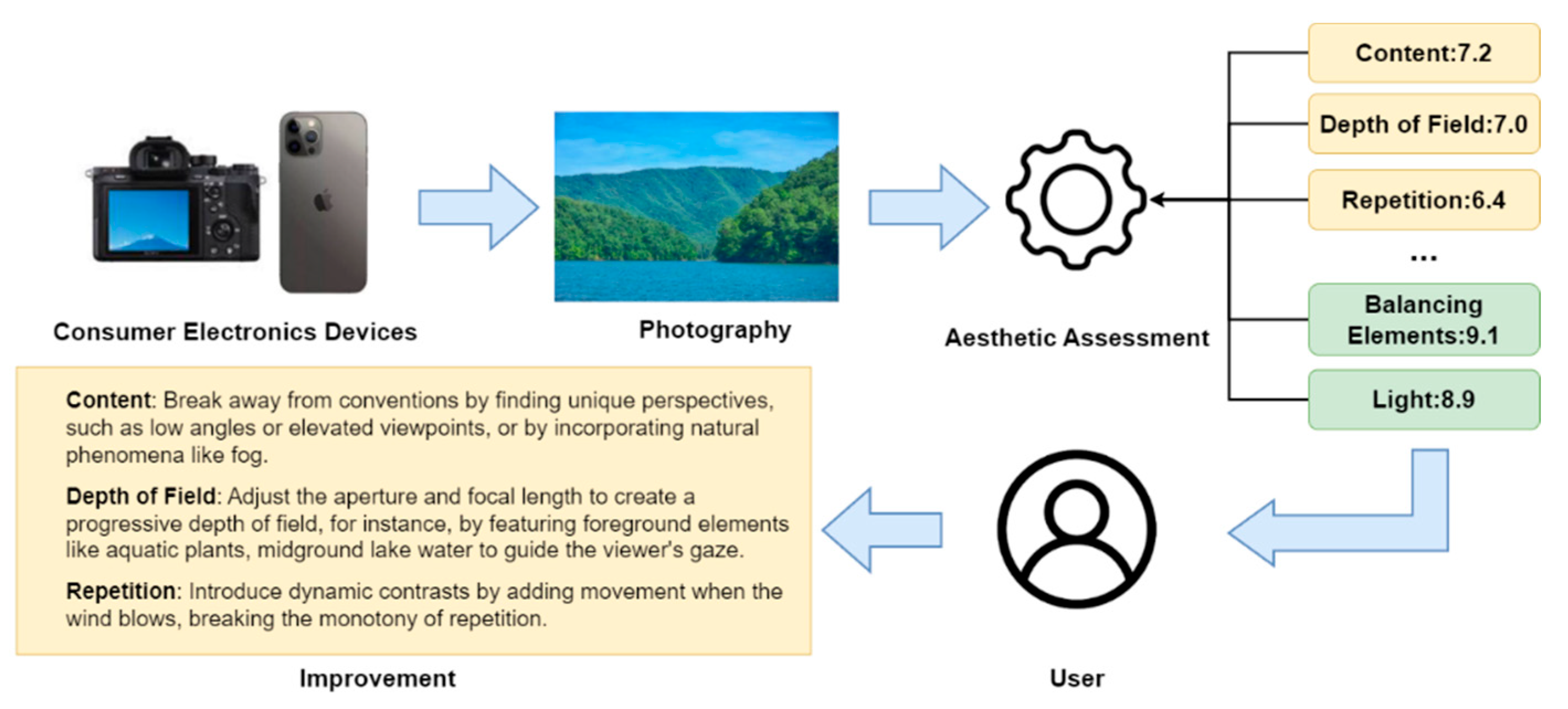

1. Introduction

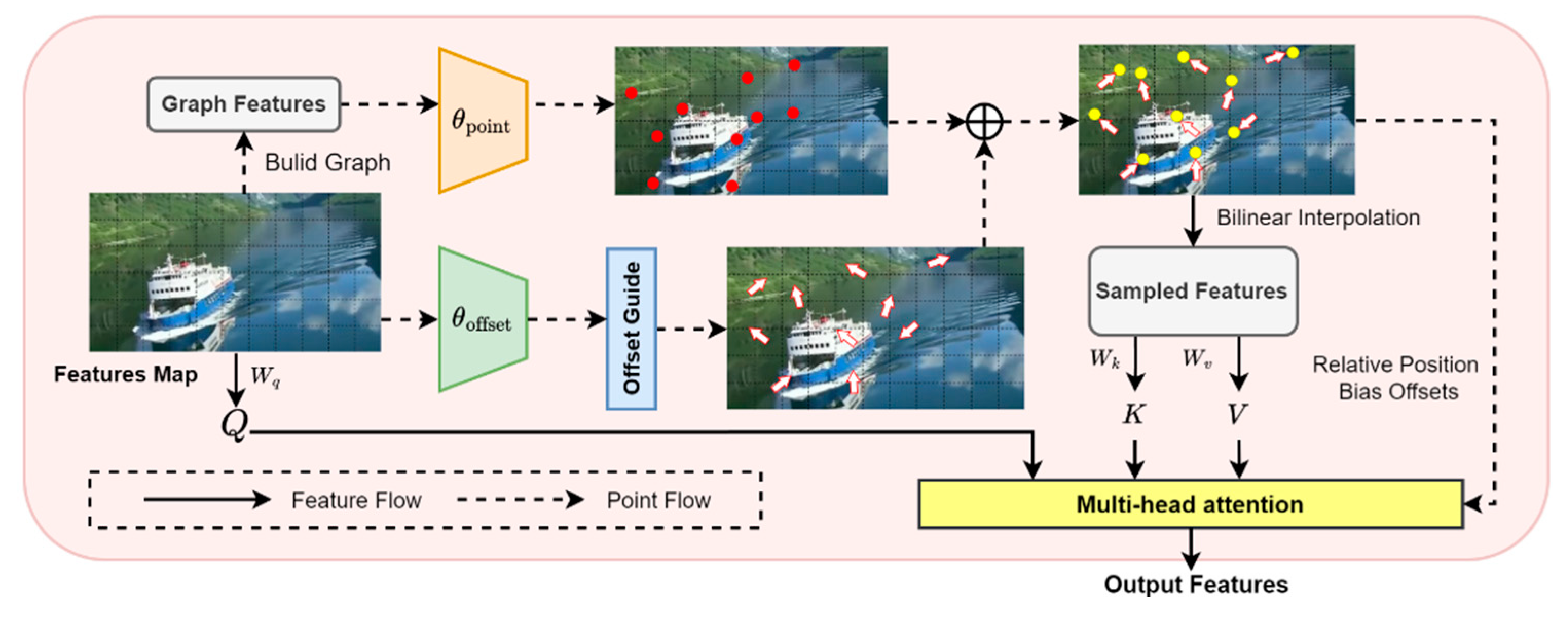

- We propose a GNN-guided deformable attention module that introduces a GNN into the interest point generation process. The GNN captures the relationships between different regions of an image, so that the displacement of interest points aligns better with aesthetic structures. This design addresses the lack of guidance in traditional deformable attention.

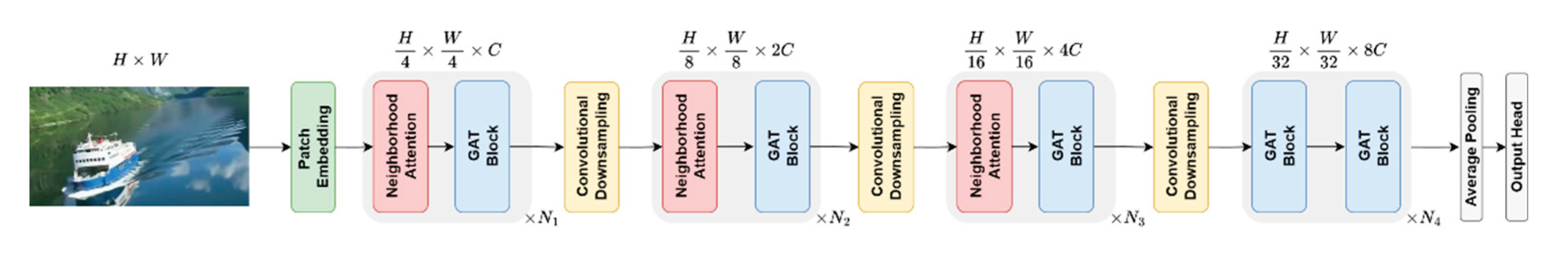

- We propose an improved deformable attention model that uses neighborhood attention as a feature extractor, enhancing the model's ability to extract aesthetic features. Compared with the sliding-window attention mechanism, it achieves higher accuracy.

- Extensive experiments on public aesthetic datasets confirm the effectiveness of our model compared with recent Transformer-based and composition-aware aesthetic assessment models.
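The first contribution, a GNN that guides where deformable attention samples, can be illustrated with a minimal sketch. It is not the authors' implementation: the k-nearest-neighbour graph, the mean aggregation, the tanh-bounded linear offset head, and every name and value below (`knn_graph`, `message_pass`, `max_shift`, the toy features and weights) are assumptions chosen only for illustration.

```python
import math

def uniform_grid(rows, cols):
    """Reference points at cell centres, in normalized [-1, 1] coordinates."""
    return [(-1 + (2 * c + 1) / cols, -1 + (2 * r + 1) / rows)
            for r in range(rows) for c in range(cols)]

def knn_graph(feats, k):
    """For each node, indices of its k nearest nodes in feature space (assumed graph)."""
    neighbors = []
    for i, fi in enumerate(feats):
        dists = sorted((math.dist(fi, fj), j)
                       for j, fj in enumerate(feats) if j != i)
        neighbors.append([j for _, j in dists[:k]])
    return neighbors

def message_pass(feats, neighbors):
    """One aggregation step: concatenate each feature with the mean of its neighbors."""
    out = []
    for fi, nbrs in zip(feats, neighbors):
        mean = [sum(feats[j][d] for j in nbrs) / len(nbrs)
                for d in range(len(fi))]
        out.append(list(fi) + mean)
    return out

def predict_offsets(node_feats, weight, max_shift):
    """Linear map to 2D, squashed by tanh so each axis shift stays within max_shift."""
    result = []
    for f in node_feats:
        dx = sum(w * x for w, x in zip(weight[0], f))
        dy = sum(w * x for w, x in zip(weight[1], f))
        result.append((max_shift * math.tanh(dx), max_shift * math.tanh(dy)))
    return result

def deform(ref, offs):
    """Shift reference points by their offsets and clamp to the image extent."""
    clamp = lambda v: max(-1.0, min(1.0, v))
    return [(clamp(x + dx), clamp(y + dy))
            for (x, y), (dx, dy) in zip(ref, offs)]

# Toy example: a 2x2 grid of regions with hand-picked 2-D features.
ref = uniform_grid(2, 2)
feats = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
graph = knn_graph(feats, k=1)
node_feats = message_pass(feats, graph)
weight = [[0.5, -0.5, 0.5, -0.5], [0.2, 0.2, 0.2, 0.2]]  # hypothetical, untrained
offs = predict_offsets(node_feats, weight, max_shift=0.25)
points = deform(ref, offs)
```

In this sketch, regions with similar features are linked in the graph, so their offsets are computed from shared context rather than independently. The deformed `points` would then serve as sampling locations for keys and values in the attention step.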

2. Related Work

2.1. Image Aesthetics Assessment

2.1.1. Handcrafted Feature-Based Methods

2.1.2. Deep Learning-Based Methods

2.2. Vision Transformer (ViT)

2.3. Graph Neural Network

3. Method

3.1. GAT Module

3.1.1. Interest Points Generation

3.1.2. Interest Offsets Generation

3.1.3. Feature Extraction

3.2. Method Architecture

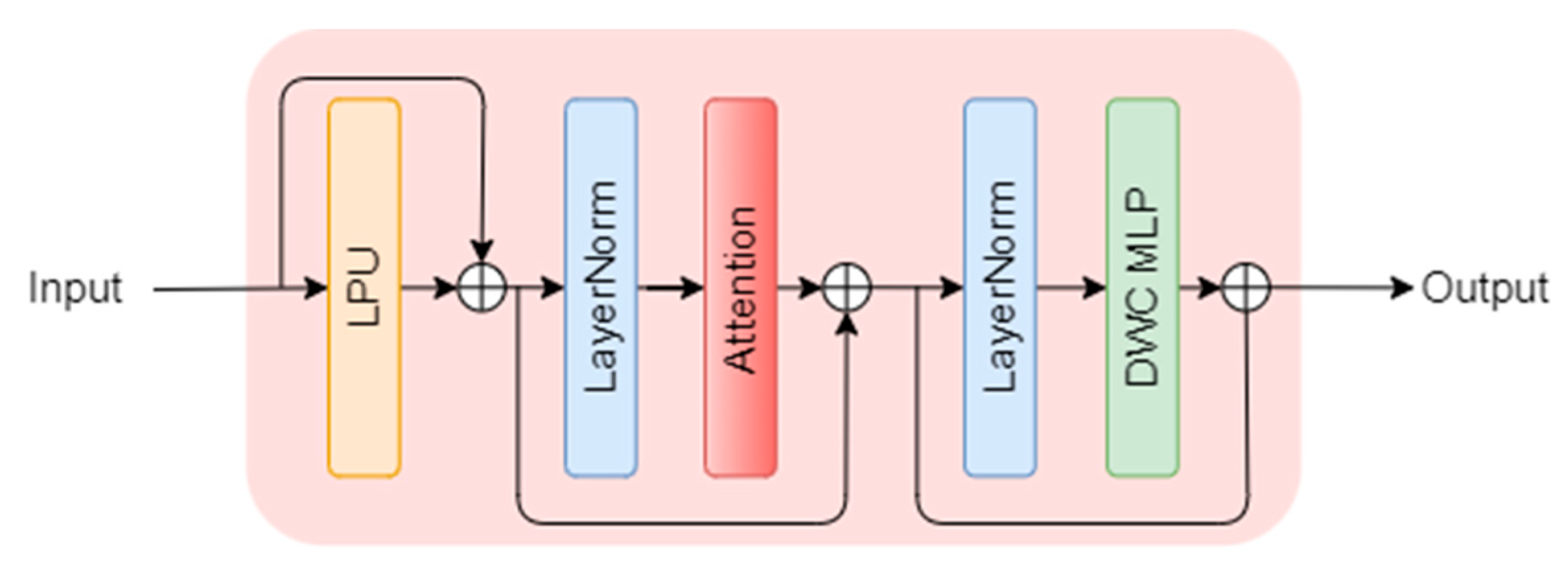

3.2.1. Architecture of Each Transformer Block

3.2.2. Neighborhood Attention

3.2.3. Overall Architecture

4. Experiments

4.1. Dataset and Evaluation Metrics

4.2. Experimental Results

| Method | ACC↑ | PLCC↑ | SRCC↑ |
|---|---|---|---|
| A-Lamp[48] | - | 0.422 | 0.411 |
| MUSIQ[51] | - | 0.517 | 0.489 |
| Maxvit[53] | - | 0.513 | 0.484 |
| DAT++[55] | 67.5% | 0.514 | 0.486 |
| AesMamba[57] | 72.0% | 0.511 | 0.483 |
| [25] | 65.0% | 0.475 | 0.452 |
| Ours | 68.7% | 0.521 | 0.490 |
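The PLCC and SRCC columns above are correlation scores between predicted and ground-truth aesthetic scores. As a minimal sketch, assuming the scores are available as plain Python lists (the sample values below are made up for illustration and do not come from any dataset), the two metrics can be computed as follows:

```python
import math

def pearson(x, y):
    """Pearson linear correlation coefficient (PLCC) of two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def average_ranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values equal to the one at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks

def spearman(x, y):
    """Spearman rank correlation (SRCC): Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))

# Illustrative values only.
predicted = [4.2, 5.1, 6.3, 5.8]
ground_truth = [4.0, 5.5, 6.0, 6.5]
plcc = pearson(predicted, ground_truth)
srcc = spearman(predicted, ground_truth)
```

PLCC measures how linearly the predictions track the ground truth, while SRCC depends only on the ordering of the scores, which is why the two columns can differ for the same model.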


4.3. Ablation Study

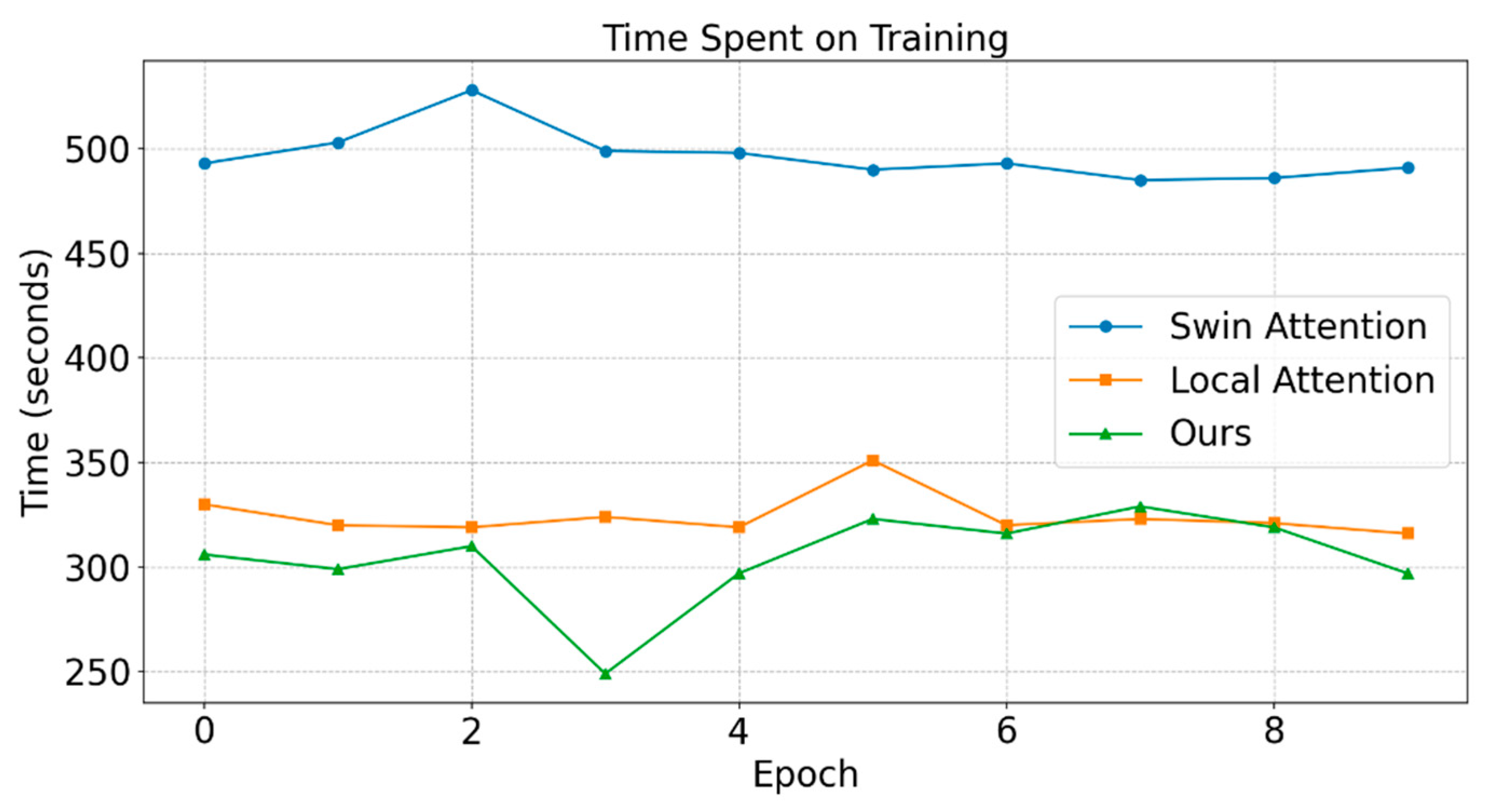

4.3.1. Neighborhood Attention

4.3.2. LPU and DWConv MLP

4.3.3. GAT Block

4.3.4. GAT Block

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| MDPI | Multidisciplinary Digital Publishing Institute |
| DOAJ | Directory of open access journals |
| IAA | Image Aesthetic Assessment |
| GNN | Graph Neural Network |
| CNN | Convolutional Neural Network |
| ViT | Vision Transformer |
| DAT | Deformable Attention Transformer |
| GAT | GNN-based Deformable Attention Module |
| ACC | Accuracy |
| PLCC | Pearson Linear Correlation Coefficient |
| SRCC | Spearman's Rank Correlation Coefficient |
| GT | Ground Truth |
| MSE | Mean Squared Error |
| DWConv | Depth-wise Convolution |
| GradCAM | Gradient-weighted Class Activation Mapping |

References

- Jiang, M.; Shen, L.; Zheng, L.; Zhao, M.; Jiang, X. Tone-Mapped Image Quality Assessment for Electronics Displays by Combining Luminance Partition and Colorfulness Index. IEEE Trans. Consum. Electron. 2020, 66, 153–162.

- Jiang, M.; Shen, L.; Hu, M.; An, P.; Ren, F. Blind Quality Evaluator of Tone-Mapped HDR and Multi-Exposure Fused Images for Electronic Display. IEEE Trans. Consum. Electron. 2021, 67, 350–362.

- Biswas, S.; Barma, S. A Low-Cost Vegetable Quality Assessment System Based on Microscopy Images in Deep Learning Edge Computing: A Pilot Study on Potato Tuber. IEEE Trans. Consum. Electron. 2024, 70, 6343–6353.

- Mirzaei, S.; Tohidypour, H.R.; Nasiopoulos, P.; Vora, S.R.; Mirabbasi, S. An Advanced Denoising Technique for Low-Dose CBCT Imaging: Enhancing Image Quality and Consumer Safety in Dental Diagnostics. IEEE Trans. Consum. Electron. 2025.

- Hong, R.; Zhang, L.; Tao, D. Unified Photo Enhancement by Discovering Aesthetic Communities from Flickr. IEEE Trans. Image Process. 2016, 25, 1124–1135.

- Wang, W.; Shen, J.; Ling, H. A Deep Network Solution for Attention and Aesthetics Aware Photo Cropping. IEEE Trans. Pattern Anal. Mach. Intell. 2018, 41, 1531–1544.

- Liu, D.; Puri, R.; Kamath, N.; Bhattacharya, S. Composition-Aware Image Aesthetics Assessment. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, 2020; pp. 3569–3578.

- She, D.; Lai, Y.-K.; Yi, G.; Xu, K. Hierarchical Layout-Aware Graph Convolutional Network for Unified Aesthetics Assessment. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021; pp. 8475–8484.

- Ghosal, K.; Smolic, A. Image Aesthetics Assessment Using Graph Attention Network. In Proceedings of the 2022 26th International Conference on Pattern Recognition (ICPR), 2022; pp. 3160–3167.

- Zhou, Z.; Wang, Q.; Lin, B.; Su, Y.; Chen, R.; Tao, X.; Zheng, A.; Yuan, L.; Wan, P.; Zhang, D. UNIAA: A Unified Multi-Modal Image Aesthetic Assessment Baseline and Benchmark. arXiv 2024, arXiv:2404.09619.

- He, S.; Ming, A.; Zheng, S.; Zhong, H.; Ma, H. EAT: An Enhancer for Aesthetics-Oriented Transformers. In Proceedings of the 31st ACM International Conference on Multimedia, 2023; pp. 1023–1032.

- Ke, Y.; Wang, Y.; Wang, K.; Qin, F.; Guo, J.; Yang, S. Image Aesthetics Assessment Using Composite Features from Transformer and CNN. Multimed. Syst. 2023, 29, 2483–2494.

- Lan, G.; Xiao, S.; Yang, J.; Zhou, Y.; Wen, J.; Lu, W.; Gao, X. Image Aesthetics Assessment Based on Hypernetwork of Emotion Fusion. IEEE Trans. Multimed. 2023, 26, 3640–3650.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention Is All You Need. Adv. Neural Inf. Process. Syst. 2017, 30.

- Murray, N.; Marchesotti, L.; Perronnin, F. AVA: A Large-Scale Database for Aesthetic Visual Analysis. In Proceedings of the 2012 IEEE Conference on Computer Vision and Pattern Recognition; IEEE, 2012; pp. 2408–2415.

- Kong, S.; Shen, X.; Lin, Z.; Mech, R.; Fowlkes, C. Photo Aesthetics Ranking Network with Attributes and Content Adaptation. In Proceedings of the Computer Vision–ECCV 2016, 2016; pp. 662–679.

- Chen, Z.; Zhu, Y.; Zhao, C.; Hu, G.; Zeng, W.; Wang, J.; Tang, M. DPT: Deformable Patch-Based Transformer for Visual Recognition. In Proceedings of the 29th ACM International Conference on Multimedia, 2021; pp. 2899–2907.

- Wei, X.; Yin, L.; Zhang, L.; Wu, F. DV-DETR: Improved UAV Aerial Small Target Detection Algorithm Based on RT-DETR. Sensors 2024, 24, 73–76.

- Hassani, A.; Walton, S.; Li, J.; Li, S.; Shi, H. Neighborhood Attention Transformer. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023; pp. 6185–6194.

- Datta, R.; Joshi, D.; Li, J.; Wang, J.Z. Studying Aesthetics in Photographic Images Using a Computational Approach. In Proceedings of the Computer Vision–ECCV 2006; Springer, 2006; pp. 288–301.

- Obrador, P.; Saad, M.A.; Suryanarayan, P.; Oliver, N. Towards Category-Based Aesthetic Models of Photographs. In Proceedings of Advances in Multimedia Modeling: 18th International Conference, MMM 2012, Klagenfurt, Austria, 4–6 January 2012; Springer, 2012; pp. 63–76.

- Tang, X.; Luo, W.; Wang, X. Content-Based Photo Quality Assessment. IEEE Trans. Multimed. 2013, 15, 1930–1943.

- Duan, J.; Chen, P.; Li, L.; Wu, J.; Shi, G. Semantic Attribute Guided Image Aesthetics Assessment. In Proceedings of the 2022 IEEE International Conference on Visual Communications and Image Processing (VCIP); IEEE, 2022; pp. 1–5.

- Hou, J.; Ding, H.; Lin, W.; Liu, W.; Fang, Y. Distilling Knowledge from Object Classification to Aesthetics Assessment. IEEE Trans. Circuits Syst. Video Technol. 2022, 32, 7386–7402.

- Shi, T.; Chen, C.; Li, X.; Hao, A. Semantic and Style Based Multiple Reference Learning for Artistic and General Image Aesthetic Assessment. Neurocomputing 2024, 582, 127434.

- Zhou, H.; Tang, L.; Yang, R.; Qin, G.; Zhang, Y.; Hu, R.; Li, X. UniQA: Unified Vision-Language Pre-Training for Image Quality and Aesthetic Assessment. arXiv 2024, arXiv:2406.01069.

- Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An Image Is Worth 16 × 16 Words: Transformers for Image Recognition at Scale. arXiv 2020, arXiv:2010.11929.

- Chang, H.; Zhang, H.; Jiang, L.; Liu, C.; Freeman, W.T. MaskGIT: Masked Generative Image Transformer. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022; pp. 11315–11325.

- Cheng, B.; Misra, I.; Schwing, A.G.; Kirillov, A.; Girdhar, R. Masked-Attention Mask Transformer for Universal Image Segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022; pp. 1290–1299.

- Wu, H.; Xiao, B.; Codella, N.; Liu, M.; Dai, X.; Yuan, L.; Zhang, L. CvT: Introducing Convolutions to Vision Transformers. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021; pp. 22–31.

- Zhu, X.; Su, W.; Lu, L.; Li, B.; Wang, X.; Dai, J. Deformable DETR: Deformable Transformers for End-to-End Object Detection. arXiv 2020, arXiv:2010.04159.

- Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin Transformer: Hierarchical Vision Transformer Using Shifted Windows. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021; pp. 10012–10022.

- Xia, Z.; Pan, X.; Song, S.; Li, L.E.; Huang, G. Vision Transformer with Deformable Attention. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022; pp. 4794–4803.

- Zhang, Q.; Zhang, J.; Xu, Y.; Tao, D. Vision Transformer with Quadrangle Attention. IEEE Trans. Pattern Anal. Mach. Intell. 2024, 46, 3608–3624.

- Wu, Z.; Pan, S.; Chen, F.; Long, G.; Zhang, C.; Yu, P.S. A Comprehensive Survey on Graph Neural Networks. IEEE Trans. Neural Netw. Learn. Syst. 2020, 32, 4–24.

- Micheli, A. Neural Network for Graphs: A Contextual Constructive Approach. IEEE Trans. Neural Netw. 2009, 20, 498–511.

- Bruna, J.; Zaremba, W.; Szlam, A.; LeCun, Y. Spectral Networks and Locally Connected Networks on Graphs. arXiv 2013, arXiv:1312.6203.

- Hao, J.; Liu, J.; Pereira, E.; Liu, R.; Zhang, J.; Zhang, Y.; Yan, K.; Gong, Y.; Zheng, J.; Zhang, J.; et al. Uncertainty-Guided Graph Attention Network for Parapneumonic Effusion Diagnosis. Med. Image Anal. 2022, 75, 102217.

- Zheng, Q.; Qi, Y.; Wang, C.; Zhang, C.; Sun, J. PointViG: A Lightweight GNN-Based Model for Efficient Point Cloud Analysis. arXiv 2024, arXiv:2407.00921.

- Li, G.; Muller, M.; Thabet, A.; Ghanem, B. DeepGCNs: Can GCNs Go as Deep as CNNs? In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2019; pp. 9267–9276.

- Cao, J.; Liang, J.; Zhang, K.; Li, Y.; Zhang, Y.; Wang, W.; Van Gool, L. Reference-Based Image Super-Resolution with Deformable Attention Transformer. In Proceedings of the European Conference on Computer Vision; Springer, 2022; pp. 325–342.

- Chu, X.; Tian, Z.; Zhang, B.; Wang, X.; Shen, C. Conditional Positional Encodings for Vision Transformers. arXiv 2021, arXiv:2102.10882.

- Guo, J.; Han, K.; Wu, H.; Tang, Y.; Chen, X.; Wang, Y.; Xu, C. CMT: Convolutional Neural Networks Meet Vision Transformers. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022; pp. 12175–12185.

- Shi, P.; Chen, X.; Qi, H.; Zhang, C.; Liu, Z. Object Detection Based on Swin Deformable Transformer-BiPAFPN-YOLOX. Comput. Intell. Neurosci. 2023, 2023, 4228610.

- He, S.; Zhang, Y.; Xie, R.; Jiang, D.; Ming, A. Rethinking Image Aesthetics Assessment: Models, Datasets and Benchmarks. In Proceedings of the International Joint Conferences on Artificial Intelligence, 2022; pp. 942–948.

- Deng, J.; Dong, W.; Socher, R.; Li, L.-J.; Li, K.; Fei-Fei, L. ImageNet: A Large-Scale Hierarchical Image Database. In Proceedings of the 2009 IEEE Conference on Computer Vision and Pattern Recognition; IEEE, 2009; pp. 248–255.

- Daryanavard Chounchenani, M.; Shahbahrami, A.; Hassanpour, R.; Gaydadjiev, G. Deep Learning Based Image Aesthetic Quality Assessment-A Review. ACM Comput. Surv. 2025, 57, 1–36.

- Ma, S.; Liu, J.; Chen, C.W. A-Lamp: Adaptive Layout-Aware Multi-Patch Deep Convolutional Neural Network for Photo Aesthetic Assessment. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2017; pp. 4535–4544.

- Talebi, H.; Milanfar, P. NIMA: Neural Image Assessment. IEEE Trans. Image Process. 2018, 27, 3998–4011.

- Chen, Q.; Zhang, W.; Zhou, N.; Lei, P.; Xu, Y.; Zheng, Y.; Fan, J. Adaptive Fractional Dilated Convolution Network for Image Aesthetics Assessment. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020; pp. 14114–14123.

- Ke, J.; Wang, Q.; Wang, Y.; Milanfar, P.; Yang, F. MUSIQ: Multi-Scale Image Quality Transformer. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021; pp. 5148–5157.

- Celona, L.; Leonardi, M.; Napoletano, P.; Rozza, A. Composition and Style Attributes Guided Image Aesthetic Assessment. IEEE Trans. Image Process. 2022, 31, 5009–5024.

- Tu, Z.; Talebi, H.; Zhang, H.; Yang, F.; Milanfar, P.; Bovik, A.; Li, Y. MaxViT: Multi-Axis Vision Transformer. In Proceedings of the Computer Vision – ECCV 2022, 2022; pp. 459–479.

- Li, L.; Huang, Y.; Wu, J.; Yang, Y.; Li, Y.; Guo, Y.; Shi, G. Theme-Aware Visual Attribute Reasoning for Image Aesthetics Assessment. IEEE Trans. Circuits Syst. Video Technol. 2023, 33, 4798–4811.

- Xia, Z.; Pan, X.; Song, S.; Li, L.E.; Huang, G. DAT++: Spatially Dynamic Vision Transformer with Deformable Attention. arXiv 2023, arXiv:2309.01430.

- Huang, Y.; Li, L.; Chen, P.; Wu, J.; Yang, Y.; Li, Y.; Shi, G. Coarse-to-Fine Image Aesthetics Assessment with Dynamic Attribute Selection. IEEE Trans. Multimed. 2024.

- Gao, F.; Lin, Y.; Shi, J.; Qiao, M.; Wang, N. AesMamba: Universal Image Aesthetic Assessment with State Space Models. In Proceedings of the 32nd ACM International Conference on Multimedia, 2024; pp. 7444–7453.

- Selvaraju, R.R.; Cogswell, M.; Das, A.; Vedantam, R.; Parikh, D.; Batra, D. Grad-CAM: Visual Explanations from Deep Networks via Gradient-Based Localization. In Proceedings of the IEEE International Conference on Computer Vision, 2017; pp. 618–626.

| Method | Year | ACC↑ | PLCC↑ | SRCC↑ | EMD1↓ | EMD2↓ | MSE↓ |
|---|---|---|---|---|---|---|---|
| A-Lamp[48] | 2017 | 82.5% | 0.671 | 0.666 | - | - | - |
| NIMA[49] | 2018 | 81.5% | 0.636 | 0.612 | 0.050 | - | - |
| AFDC[50] | 2020 | 83.2% | 0.671 | 0.649 | 0.00447 | - | 0.270 |
| HLAGCN[8] | 2021 | 84.1% | 0.678 | 0.656 | 0.045 | 0.065 | 0.264 |
| MUSIQ[51] | 2021 | 81.5% | 0.738 | 0.726 | - | - | 0.242 |
| SA-IAA[23] | 2022 | - | - | 0.740 | - | - | - |
| GATP[9] | 2022 | 76.4% | 0.762 | - | - | - | - |
| [52] | 2022 | - | 0.707 | 0.685 | - | - | - |
| Maxvit[53] | 2022 | 80.8% | 0.733 | 0.732 | 0.0439 | - | 0.263 |
| EAT[11] | 2023 | 81.7% | 0.770 | 0.759 | - | - | - |
| Tavar[54] | 2023 | 85.1% | 0.736 | 0.725 | - | - | - |
| SPTF-CNN[12] | 2023 | 84.5% | 0.709 | 0.687 | 0.043 | 0.064 | 0.264 |
| DAT++[55] | 2023 | 81.8% | 0.742 | 0.733 | - | 0.074 | - |
| CADAS[56] | 2024 | 85.0% | 0.702 | 0.687 | 0.042 | 0.061 | - |
| UNIAA[10] | 2024 | - | 0.704 | 0.713 | - | - | - |
| Ours | - | 82.3% | 0.750 | 0.741 | 0.042 | 0.072 | 0.243 |

| Block Type | ACC↑ | PLCC↑ | SRCC↑ |
|---|---|---|---|
| local attention | 67.0% | 0.503 | 0.474 |
| Swin attention | 67.3% | 0.510 | 0.482 |
| neighborhood attention | 68.7% | 0.521 | 0.490 |

| LPU | DWConv MLP | ACC↑ | PLCC↑ | SRCC↑ |
|---|---|---|---|---|
| ✓ | - | 64.9% | 0.417 | 0.393 |
| - | ✓ | 61.2% | 0.467 | 0.441 |
| - | - | 60.5% | 0.376 | 0.354 |
| ✓ | ✓ | 68.7% | 0.521 | 0.490 |

| Generator | ACC↑ | PLCC↑ | SRCC↑ |
|---|---|---|---|
| Conv | 67.7% | 0.517 | 0.488 |
| Uniform | 67.5% | 0.512 | 0.482 |
| GNN | 68.7% | 0.521 | 0.490 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.