Submitted: 24 February 2026

Posted: 25 February 2026


Abstract

Keywords:

1. Introduction

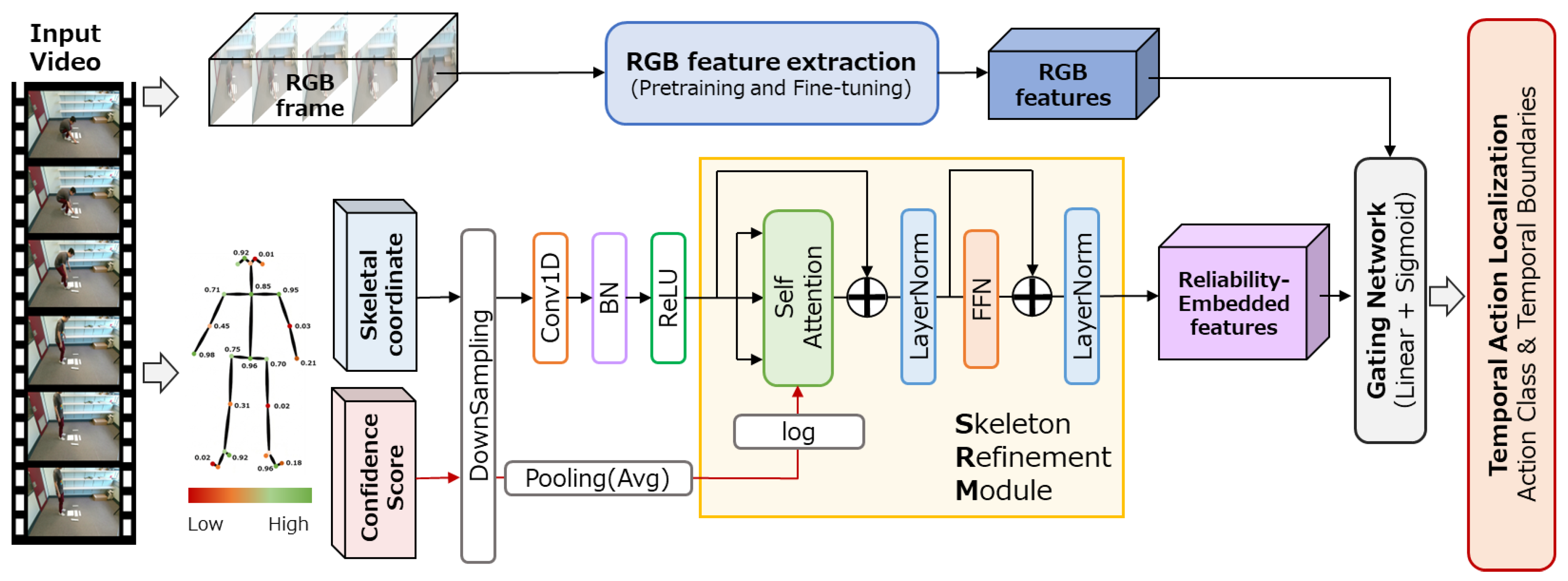

- We propose a confidence-biased self-attention mechanism that incorporates log-transformed pose estimation confidence scores as bias terms in the attention weight computation, achieving continuous soft suppression of unreliable skeletal features.

- We develop a Gated Skeleton Refinement Module (Gated SRM) that purifies skeletal information prior to feature fusion via a learnable gating network, designed to prevent the fused representation from degrading below the RGB-only baseline even under heavy occlusion.

- We validate the proposed method on both the standard THUMOS14 benchmark and the heavily occluded IKEA ASM dataset, demonstrating consistent improvements over RGB-only baselines and conventional fusion approaches.

2. Related Work

2.1. Video-Based Temporal Action Localization

2.1.1. Evolution from Video Classification to Temporal Action Localization

2.1.2. Introduction of the Transformer Architecture and the Development of ActionFormer

2.1.3. Next-Generation Foundational Technology: State Space Models (SSM) and Mamba

2.2. Action Recognition Using Skeleton Data

2.2.1. Characteristics and Advantages of Skeleton Data

2.2.2. Evolution of Graph Convolutional Networks (GCN) and the “Reliability Gap” in Pose Estimation

2.2.3. Existing Approaches and Their Limitations

2.3. Multimodal Sensor Fusion and Reliability

2.3.1. Fusion Strategies and the Manifestation of the Reliability Gap

2.3.2. Reliability-Aware Learning

2.4. Research Positioning and Contributions

- Limitations of RGB Dependence: While SOTA models such as ActionFormer [5] offer powerful feature extraction capabilities, they remain susceptible to visual noise and occlusion and often incur high computational costs.

- Vulnerability of Skeleton Features: Skeleton-based models like ST-GCN [29] offer a lightweight alternative; however, they are highly fragile to missing data and occlusion-induced noise, making them unreliable when used in isolation.

- Neglect of Dynamic Reliability: Existing multimodal fusion approaches do not explicitly account for dynamic quality fluctuations (i.e., confidence gaps) across sensors and therefore cannot fully suppress the adverse effects of low-quality data.

- Confidence-Driven Data Selection vs. Data Restoration: Restoration approaches [11,13] attempt to reconstruct missing joint coordinates, risking hallucinated predictions under severe occlusion. In contrast, our method bypasses reconstruction entirely by utilizing confidence scores as continuous gating signals, representing a fundamental paradigm shift from “data repair” to “data selection.”

- Explicit Confidence Integration: While conventional attention-based fusion learns “what to look at,” our approach explicitly guides the model on “what to trust” based on sensor metadata. This discourages the network from learning from noisy inputs.

- Validation under Realistic Occlusion Conditions: Unlike prior TAL studies that primarily evaluate on cleanly captured benchmarks [5,23,24], we validate on the IKEA ASM dataset [8] whose heavy occlusion characteristics (detailed in Section 4.2) closely approximate industrial deployment conditions.

3. Proposed Methods

3.1. System Overview

3.2. Skeleton Refinement Module (SRM)

3.2.1. Temporal CNN Projection

3.2.2. Confidence-Biased Multi-Head Self-Attention

3.3. Gated Fusion Mechanism

- Preservation of Pre-trained Representations: It prevents the destruction of the pre-trained RGB backbone’s representations during the early phases of training.

- Progressive Learning: It enables a progressive learning process where the contribution of skeletal information increases gradually over time.

- Robustness as a Safety Valve: It serves as a safety valve that automatically “closes” the gate when the quality of skeletal features is compromised, such as in environments with heavy occlusion.
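A small numerical sketch of the initial gate state, assuming the gate is a sigmoid of a pre-activation that, at initialization, stays close to its bias (i.e., the learned gate weights are assumed to contribute little at first). With the gate bias initialized to −2.0 (Section 4.5), the gate starts near σ(−2) ≈ 0.12, so skeletal features enter with a small weight; training can then raise it (progressive learning) or push it toward zero (the safety-valve behavior). The pre-activation values other than −2.0 are illustrative only.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic function that maps a gate pre-activation into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


# Gate bias initialization reported in Section 4.5.
GATE_BIAS_INIT = -2.0

# Illustrative pre-activations: the initial state (weights assumed to add
# roughly nothing yet) followed by progressively more "open" gates.
for pre_activation in (GATE_BIAS_INIT, -1.0, 0.0, 2.0):
    print(f"pre-activation {pre_activation:+.1f} -> gate {sigmoid(pre_activation):.3f}")
```

A gate value near 0 leaves the RGB features essentially untouched, which is what protects the pre-trained representations early in training.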

3.4. Integration with Base Model

4. Implementation of the Prototype System

4.1. Development Environment

4.2. Datasets

- THUMOS14 dataset [38]: This is a large-scale dataset comprising untrimmed videos of sports activities collected from YouTube. It contains 20 action classes, including “Long Jump” and “Cricket Bowling.” While the dataset exhibits significant camera motion and diverse backgrounds, the subjects are typically large and clearly visible within the frames, with a relatively low frequency of occlusions. To ensure a fair comparison with existing studies [5,38,39,40,41,42], we utilize the standard subset consisting of the validation set (200 videos) and the test set (213 videos).

- IKEA ASM dataset [8]: This dataset consists of 371 video recordings capturing the assembly of various furniture items, such as tables and shelves. It contains a total of approximately 35 h of footage. The average duration per video is about six minutes. The dataset is densely annotated, yielding approximately 31,000 action instances (clips) across the entire dataset.
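As a quick consistency check on the dataset figures quoted above (all approximate, as reported): 35 h spread over 371 videos is about 5.7 min per video, in line with "about six minutes", and 31,000 instances over 371 videos is roughly 84 instances per video, or about 15 per minute of footage, which quantifies how densely IKEA ASM is annotated.

```python
# Approximate figures quoted in Section 4.2.
IKEA_VIDEOS = 371
IKEA_HOURS = 35.0
IKEA_INSTANCES = 31_000
THUMOS_VAL_VIDEOS = 200
THUMOS_TEST_VIDEOS = 213

ikea_minutes = IKEA_HOURS * 60
avg_minutes_per_video = ikea_minutes / IKEA_VIDEOS       # about 5.7 min
instances_per_video = IKEA_INSTANCES / IKEA_VIDEOS       # about 84
instances_per_minute = IKEA_INSTANCES / ikea_minutes     # about 15
thumos_videos = THUMOS_VAL_VIDEOS + THUMOS_TEST_VIDEOS   # validation + test subset

print(f"IKEA ASM: {avg_minutes_per_video:.1f} min/video, "
      f"{instances_per_video:.0f} instances/video, "
      f"{instances_per_minute:.1f} instances/min")
print(f"THUMOS14 subset: {thumos_videos} videos")
```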

4.3. Base Model: ActionFormer

4.4. Implementation of the SRM

- Temporal CNN Projection Layer: We map the 50-dimensional input skeleton coordinates (25 joints × 2 coordinates) into a 512-dimensional feature space using a 1D Convolutional Neural Network (1D-CNN) with a kernel size of 3 and a padding of 1, followed by Batch Normalization and a ReLU activation function.

- Confidence-Biased Multi-Head Self-Attention: We use 8 heads, each with a dimension of 64. A confidence bias term is applied on the key side during the attention score calculation, so the bias assigned to each time step affects how strongly every query attends to that step.

- Feed-Forward Network (FFN): This component consists of two 1D convolutional layers (512→2048→512) accompanied by ReLU and dropout (with a rate of 0.1).
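The confidence-biased attention can be sketched for a single head as softmax(QK^T / √d + log(c + ε)), where the log-confidence term is broadcast along the key axis. This is a minimal pure-Python sketch under stated assumptions, not the authors' implementation: the constant ε and the per-frame confidence vector c (for example, some aggregate of the 25 joint confidences) are hypothetical, and in the 8-head configuration the same key-side bias would presumably be added in every head. Because exp(log(c_j + ε)) = c_j + ε, the bias multiplies each unnormalized attention weight by roughly c_j, which is the continuous soft suppression described in Section 1.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(row: List[float]) -> List[float]:
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def confidence_biased_weights(q: Matrix, k: Matrix, conf: List[float],
                              eps: float = 1e-6) -> Matrix:
    """Weights softmax(q k^T / sqrt(d) + log(conf + eps)) for one head.

    conf[j] is a per-frame pose confidence in [0, 1]; eps is a hypothetical
    constant that keeps the logarithm finite when a frame has zero confidence.
    """
    d = len(q[0])
    # The bias depends only on the key index j, so every query is steered away
    # from frames whose skeleton the pose estimator does not trust.
    bias = [math.log(c + eps) for c in conf]
    weights = []
    for qi in q:
        scores = [sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d) + bias[j]
                  for j, kj in enumerate(k)]
        weights.append(softmax(scores))
    return weights


def confidence_biased_attention(q: Matrix, k: Matrix, v: Matrix,
                                conf: List[float]) -> Matrix:
    """Weighted sum of the value rows using the confidence-biased weights."""
    w = confidence_biased_weights(q, k, conf)
    return [[sum(w_ij * v[j][c] for j, w_ij in enumerate(row)) for c in range(len(v[0]))]
            for row in w]


if __name__ == "__main__":
    x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # toy 3-frame, 2-dim features
    v = [[1.0], [2.0], [3.0]]
    print(confidence_biased_weights(x, x, [1.0, 1.0, 0.0]))   # third column near 0
    print(confidence_biased_attention(x, x, v, [1.0, 1.0, 0.0]))
```

Note that a uniform confidence across all frames adds the same constant to every score and cancels in the softmax, so the mechanism only changes behavior when reliability varies over time.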

4.5. Fusion Strategies

- Naive Concatenation: We simply concatenate the 512-dimensional output of the SRM and the RGB features (1024 or 2048 dimensions) along the channel dimension, feeding the result directly into the backbone. Consequently, this approach requires modifying the input dimension of the backbone (e.g., from 1024 to 1536 for the IKEA ASM dataset).

- Gated Fusion (Proposed Method): This approach keeps the original input dimension of the backbone, so pre-trained weights can be used directly. It operates in the following three steps:

- We map the SRM output to the same dimension as the RGB features via a projection layer (e.g., a 1 × 1 convolution).

- We concatenate the RGB features with the projected skeletal features and pass the result through a 1 × 1 convolution followed by a sigmoid function to generate gate values in the range [0, 1].

- We compute the element-wise sum of the RGB features and the gate-modulated skeletal features as the final fused representation.
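The three steps can be sketched for a single time step in pure Python, since a 1 × 1 convolution evaluated at one time step is a linear map over channels. This is an illustrative sketch rather than the authors' code: the function names, the per-channel (rather than scalar) gate, and the toy dimensions and weights are assumptions.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def linear(w: Matrix, b: Vector, x: Vector) -> Vector:
    """A 1 x 1 convolution evaluated at one time step: y = W x + b."""
    return [sum(wi * xi for wi, xi in zip(row, x)) + bi for row, bi in zip(w, b)]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def gated_fusion_step(rgb: Vector, srm_out: Vector,
                      w_proj: Matrix, b_proj: Vector,
                      w_gate: Matrix, b_gate: Vector) -> Vector:
    """Fuse one time step of RGB and SRM features (hypothetical helper)."""
    # Step 1: project the SRM output to the RGB feature dimension.
    skel = linear(w_proj, b_proj, srm_out)
    # Step 2: per-channel gate from the concatenation [rgb; skel], squashed to (0, 1).
    gate = [sigmoid(z) for z in linear(w_gate, b_gate, rgb + skel)]
    # Step 3: element-wise sum; a closed gate (g -> 0) returns the RGB features
    # unchanged, i.e., exactly what the pre-trained backbone expects.
    return [r + g * s for r, g, s in zip(rgb, gate, skel)]


if __name__ == "__main__":
    rgb = [1.0, 2.0]               # toy RGB feature (real: 1024 or 2048 dims)
    srm_out = [0.5, -0.5, 1.0]     # toy SRM output (real: 512 dims)
    w_proj = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
    b_proj = [0.0, 0.0]
    w_gate = [[0.0] * 4 for _ in range(2)]
    print(gated_fusion_step(rgb, srm_out, w_proj, b_proj, w_gate, [-2.0, -2.0]))
```

The residual form also shows why the backbone input width is unchanged: the fused output always has the RGB dimension, whatever the gate value.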

| Parameter | Value |
|---|---|
| Skeleton Input Dimension | 50 (25 joints × 2 coordinates) |
| SRM Embedding Dimension | 512 |
| Number of Self-Attention Heads | 8 |
| Dimension per Head | 64 |
| FFN Expansion Ratio | 4 |
| Dropout Rate | 0.1 |
| Projection Output Dimension | 1024 (IKEA ASM)/2048 (THUMOS14) |
| Gate Bias Initialization | −2.0 |
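The hyperparameters in the table above can be captured in a small configuration object that also checks their internal consistency. This is a sketch: the class and field names are hypothetical, and a convolution stride of 1 is assumed for the temporal CNN projection (only the kernel size of 3 and padding of 1 are stated in Section 4.4). With those settings the projection preserves the sequence length, and naive concatenation widens the backbone input from 1024 to 1536 channels on IKEA ASM, as described in Section 4.5.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SRMConfig:
    """SRM hyperparameters from the table above; field names are hypothetical."""
    num_joints: int = 25
    coords_per_joint: int = 2
    embed_dim: int = 512
    num_heads: int = 8
    head_dim: int = 64
    ffn_ratio: int = 4
    dropout: float = 0.1
    proj_out_dim: int = 1024      # 1024 for IKEA ASM, 2048 for THUMOS14
    gate_bias_init: float = -2.0
    kernel_size: int = 3          # temporal CNN projection (Section 4.4)
    padding: int = 1

    @property
    def input_dim(self) -> int:
        return self.num_joints * self.coords_per_joint

    @property
    def ffn_hidden_dim(self) -> int:
        return self.embed_dim * self.ffn_ratio

    def projected_length(self, t: int, stride: int = 1) -> int:
        """Standard 1D convolution output length (stride 1 is an assumption)."""
        return (t + 2 * self.padding - self.kernel_size) // stride + 1

    def naive_concat_dim(self, rgb_dim: int) -> int:
        """Backbone input width required by naive concatenation."""
        return rgb_dim + self.embed_dim

    def validate(self) -> None:
        if self.num_heads * self.head_dim != self.embed_dim:
            raise ValueError("num_heads * head_dim must equal embed_dim")


ikea = SRMConfig()
thumos = SRMConfig(proj_out_dim=2048)
ikea.validate()
thumos.validate()
print(ikea.input_dim, ikea.ffn_hidden_dim, ikea.projected_length(2304), ikea.naive_concat_dim(1024))
```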

4.6. Training Protocol

5. Verification Test

5.1. Experimental Setup

5.1.1. Baselines

5.1.2. Evaluation Metrics

- mean Average Precision (mAP)

- Set t-IoU Thresholds: We establish five levels of temporal Intersection over Union (t-IoU) thresholds: 0.3, 0.4, 0.5, 0.6, and 0.7.

- Determine True Positives (TP): For each detected action instance, the prediction is classified as a True Positive (TP) if its t-IoU with a ground truth segment is equal to or greater than the specified threshold and its action class matches that of the ground truth.

- Rank Predictions: For each action class, all predictions are ranked in descending order of their confidence scores to compute Precision and Recall.

- Calculate Average Precision (AP): The Average Precision (AP) is determined by calculating the area under the Precision-Recall curve for each class.

- Compute mAP: Finally, the mAP is obtained by averaging the AP values across all action classes.

- Boundary-F1 score

- A predicted boundary point is classified as a True Positive (TP) if it falls within ±τ seconds of a ground truth (GT) boundary point and its action class matches.

- A prediction is classified as a False Positive (FP) if no ground truth exists within the tolerance window or if the prediction is redundant.

- A ground truth boundary is classified as a False Negative (FN) if no predicted boundary point exists within the specified tolerance.

- Precision and Recall are calculated using Equation (10) and Equation (11), respectively.

- The F1 score is then calculated using Equation (12).
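Both metrics can be sketched in pure Python as follows. This is a hedged illustration, not the evaluation code used in this study: the one-to-one matching rule, the greedy matching order (by confidence for mAP, by list order for boundaries), and the interpolated area under the precision-recall curve (in the style of common THUMOS/ActivityNet evaluation scripts) are assumptions. Equations (10) to (12) are assumed to be the standard definitions P = TP/(TP+FP), R = TP/(TP+FN), and F1 = 2PR/(P+R). The mAP at one threshold would then be the mean of `average_precision` over the action classes.

```python
from typing import List, Tuple

Segment = Tuple[float, float]    # (start, end) in seconds
Boundary = Tuple[float, str]     # (time in seconds, action class)


def t_iou(a: Segment, b: Segment) -> float:
    """Temporal IoU of two segments."""
    inter = max(0.0, min(a[1], b[1]) - max(a[0], b[0]))
    union = (a[1] - a[0]) + (b[1] - b[0]) - inter
    return inter / union if union > 0 else 0.0


def average_precision(preds: List[Tuple[Segment, float]], gts: List[Segment],
                      thr: float) -> float:
    """AP for a single class at a single t-IoU threshold.

    preds are (segment, confidence) pairs of this class; each ground truth
    segment may be matched by at most one prediction (assumed protocol).
    """
    if not gts:
        return 0.0
    matched = [False] * len(gts)
    precisions, recalls, tp = [], [], 0
    for rank, (seg, _) in enumerate(sorted(preds, key=lambda p: p[1], reverse=True), 1):
        cands = [(t_iou(seg, g), j) for j, g in enumerate(gts) if not matched[j]]
        best_iou, best_j = max(cands, default=(0.0, -1))
        if best_j >= 0 and best_iou >= thr:
            matched[best_j] = True
            tp += 1
        precisions.append(tp / rank)
        recalls.append(tp / len(gts))
    # Interpolate: precision at each rank becomes the max precision at any later rank.
    for i in range(len(precisions) - 2, -1, -1):
        precisions[i] = max(precisions[i], precisions[i + 1])
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p
        prev_recall = r
    return ap


def boundary_f1(preds: List[Boundary], gts: List[Boundary],
                tau: float) -> Tuple[float, float, float]:
    """Precision, recall, and F1 of boundary points within +/- tau seconds."""
    matched = [False] * len(gts)
    tp = fp = 0
    for t, cls in preds:
        cands = [(abs(t - tg), j) for j, (tg, cg) in enumerate(gts)
                 if not matched[j] and cg == cls and abs(t - tg) <= tau]
        if cands:
            matched[min(cands)[1]] = True   # nearest unmatched GT of the same class
            tp += 1
        else:
            fp += 1                         # no GT in the window, or redundant
    fn = matched.count(False)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


print(boundary_f1([(1.3, "a"), (1.4, "a"), (9.1, "b")], [(1.0, "a"), (9.0, "b")], 0.5))
```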

5.2. Quantitative Results

5.2.1. Comparison on THUMOS14

5.2.2. Comparison on IKEA ASM

5.2.3. Statistical Significance Analysis

5.3. Qualitative Analysis

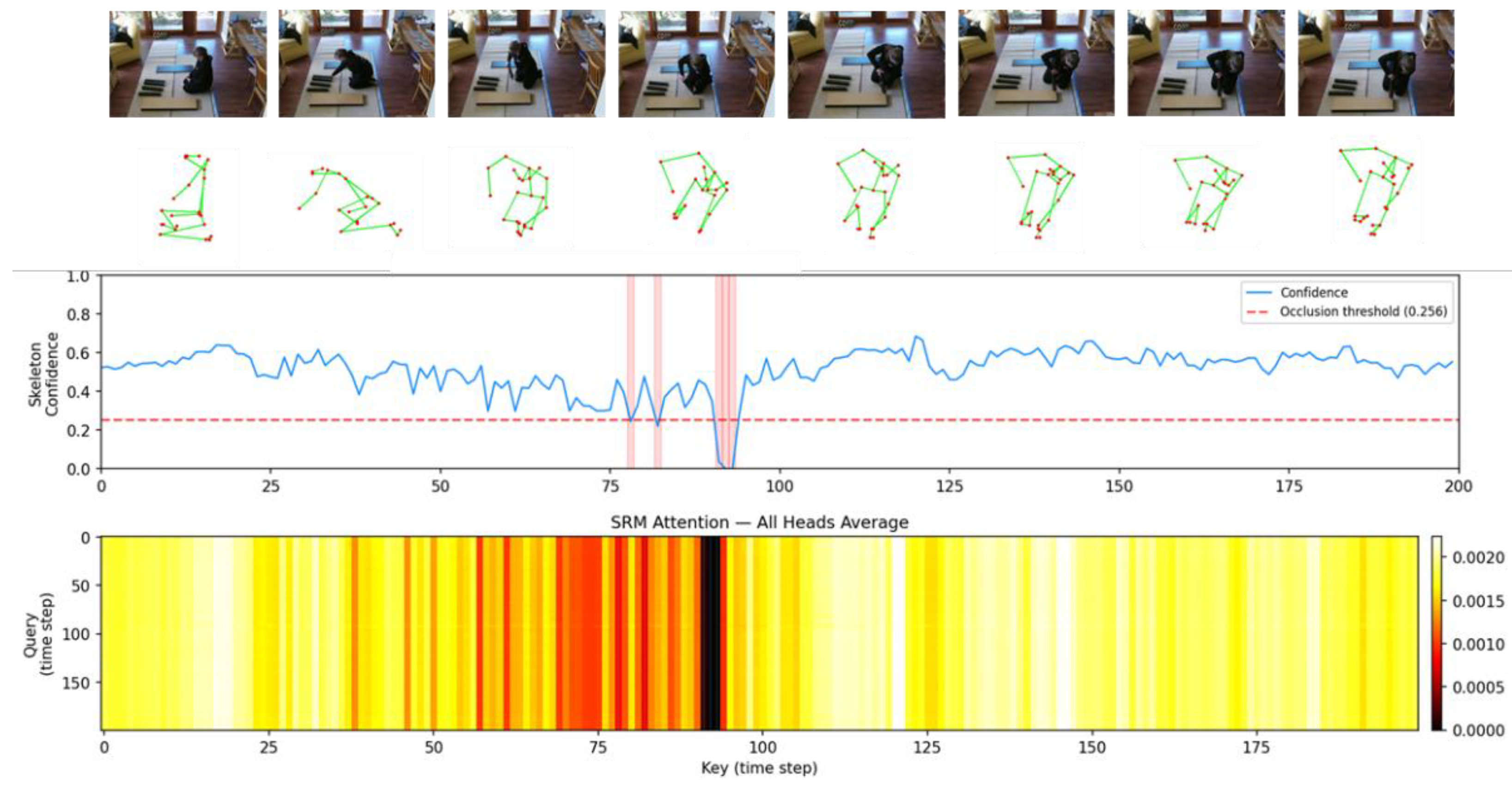

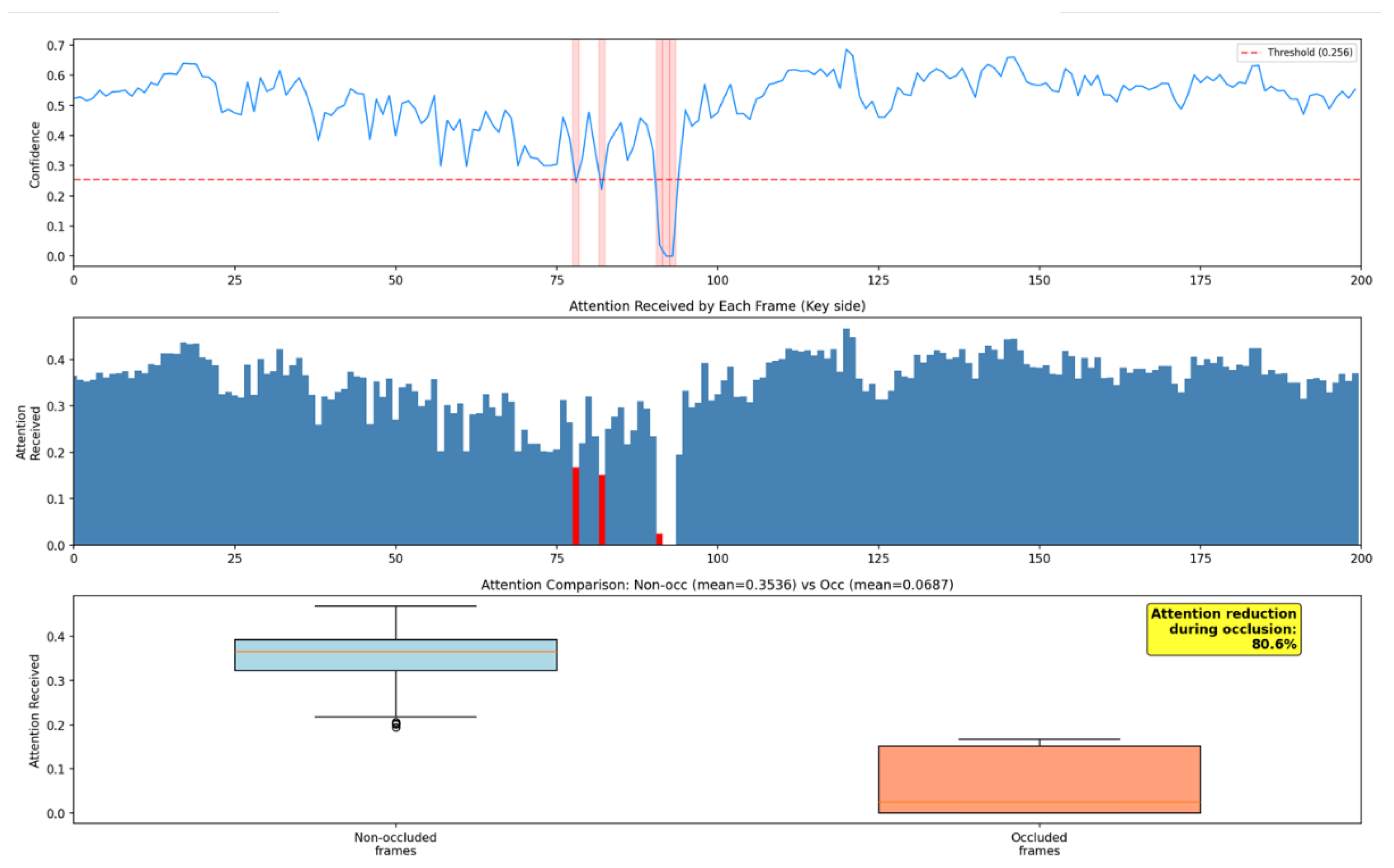

5.3.1. Attention Map Visualization on IKEA ASM

5.3.2. Qualitative Evaluation of Robustness to Occlusions

5.4. Computational Efficiency

6. Discussion

6.1. Quantitative Avoidance of Negative Transfer

6.2. Confidence Bias in Logarithmic Space and Soft Suppression Behavior

6.3. Practical Implications of the Computational Overhead

6.4. Limitations: Confidence Overreliance Risk and Industrial Deployment Challenges

7. Conclusions

- By integrating log-transformed pose-estimation confidence scores as a bias term into the multi-head self-attention layer, the system continuously and softly suppresses the influence of highly uncertain skeleton features. Although this attention mechanism adds computational overhead, the system still runs at approximately 16 FPS, which meets the temporal resolution required for industrial surveillance applications.

- In evaluations on the heavily occluded IKEA ASM dataset, the proposed framework avoided the performance drop caused by naive fusion (where mAP decreased from 21.49% to 19.29%) and instead improved the overall mAP to 21.77%. A Wilcoxon signed-rank test showed that this gain is statistically significant against naive fusion (#2, p = 0.003) and against configuration #3 (p < 0.001), while the difference from the RGB-only baseline is not statistically significant (p = 0.325), supporting the framework’s role as a safeguard against negative transfer.

- Furthermore, on the THUMOS14 dataset, where occlusion is less frequent, the method effectively leveraged high-quality skeletal data, improving the mAP to 66.31% compared with 65.87% for the RGB-only baseline and 63.22% for naive fusion. The improvement over naive fusion is statistically significant (p = 0.001), whereas the improvement over the RGB-only baseline is not (p = 0.231).

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| TAL | Temporal Action Localization |
| HAR | Human Action Recognition |
| RGB | Red, Green, Blue |
| mAP | mean Average Precision |
| IoU | Intersection over Union |
| CNN | Convolutional Neural Network |
| GCN | Graph Convolutional Network |
| ST-GCN | Spatial-Temporal Graph Convolutional Network |
| MLP | Multilayer Perceptron |
| FPS | Frames Per Second |
| GT | Ground Truth |
| SOTA | State-of-the-Art |
| ASM | Assembly (in IKEA ASM dataset) |

References

1. Rehman, S.U.; Yasin, A.U.; Ul Haq, E.; Ali, M.; Kim, J.; Mehmood, A. Enhancing Human Activity Recognition through Integrated Multimodal Analysis: A Focus on RGB Imaging, Skeletal Tracking, and Pose Estimation. Sensors 2024, 24, 4646.

2. Tian, Y.; Liang, Y.; Yang, H.; Chen, J. Multi-Stream Fusion Network for Skeleton-Based Construction Worker Action Recognition. Sensors 2023, 23, 9350.

3. Luo, X.; Li, H.; Yang, X.; Yu, Y.; Cao, D. Capturing and understanding workers’ activities in far-field surveillance videos with deep action recognition and Bayesian nonparametric learning. Comput.-Aided Civ. Infrastruct. Eng. 2019, 34, 333–351.

4. Hu, K.; Shen, C.; Wang, T.; Xu, K.; Xia, Q.; Xia, M. Overview of temporal action detection based on deep learning. Artif. Intell. Rev. 2024, 57, 26.

5. Zhang, C.L.; Wu, J.; Li, Y. ActionFormer: Localizing Moments of Actions with Transformers. In Proceedings of the European Conference on Computer Vision (ECCV), Tel Aviv, Israel, 23–27 October 2022; pp. 492–510.

6. Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention is all you need. In Advances in Neural Information Processing Systems; Guyon, I., Luxburg, U.V., Bengio, S., Wallach, H., Fergus, R., Vishwanathan, S., Garnett, R., Eds.; Curran Associates, Inc.: Long Beach, CA, USA, 2017; Volume 30.

7. Sun, J.; Zhang, Y.; Xu, W.; Wang, B.; Hu, D. A novel two-stream Transformer-based framework for multi-modality human action recognition. Appl. Sci. 2023, 13, 2058.

8. Ben-Shabat, Y.; Yu, X.; Saleh, F.; Campbell, D.; Rodriguez-Opazo, C.; Li, H.; Gould, S. The IKEA ASM dataset: Understanding people assembling furniture through actions, objects and pose. In Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), Waikoloa, HI, USA, 5–9 January 2021; pp. 847–859.

9. Cao, Z.; Hidalgo, G.; Simon, T.; Wei, S.E.; Sheikh, Y. OpenPose: Realtime multi-person 2D pose estimation using Part Affinity Fields. IEEE Trans. Pattern Anal. Mach. Intell. 2019, 43, 172–186.

10. Liu, T.; Zhu, P.; Zhang, J.; Liu, H.; Yuan, J. A systematic review of skeleton-based action recognition: Methods, challenges, and future directions. IEEE Trans. Neural Netw. Learn. Syst. 2025.

11. Yoon, Y.; Yu, J.; Jeon, M. Predictively Encoded Graph Convolutional Network for Noise-Robust Skeleton-Based Action Recognition. Appl. Intell. 2022, 52, 2317–2331.

12. Shafizadegan, F.; Naghsh-Nilchi, A.R.; Shabaninia, E. Multimodal vision-based human action recognition using deep learning: A review. Artif. Intell. Rev. 2024, 57, 178.

13. Song, Y.F.; Zhang, Z.; Shan, C.; Wang, L. Richly activated graph convolutional network for robust skeleton-based action recognition. IEEE Trans. Circuits Syst. Video Technol. 2021, 31, 1915–1925.

14. Wen, H.; Lu, Z.M.; Shen, F.; Lu, Z.; Zheng, Y.; Cui, J. Enhancing skeleton-based action recognition with feature maps from pose estimation networks. IEICE Trans. Fundam. Electron. Commun. Comput. Sci. 2025, E108-A, 1677–1686.

15. Wang, H.; Schmid, C. Action Recognition with Improved Trajectories. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Sydney, Australia, 1–8 December 2013; pp. 3551–3558.

16. Wang, H.; Kläser, A.; Schmid, C.; Liu, C.L. Dense Trajectories and Motion Boundary Descriptors for Action Recognition. Int. J. Comput. Vis. 2013, 103, 60–87.

17. Simonyan, K.; Zisserman, A. Two-stream convolutional networks for action recognition in videos. Adv. Neural Inf. Process. Syst. 2014, 27, 568–576.

18. Carreira, J.; Zisserman, A. Quo vadis, action recognition? a new model and the kinetics dataset. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 6299–6308.

19. Gong, P.; Luo, X. A survey of video action recognition based on deep learning. Knowl.-Based Syst. 2025, 309, 113594.

20. Chen, H.; Gouin-Vallerand, C.; Bouchard, K.; Gaboury, S.; Couture, M.; Bier, N.; Giroux, S. Enhancing Human Activity Recognition in Smart Homes with Self-Supervised Learning and Self-Attention. Sensors 2024, 24, 884.

21. Lin, T.; Liu, X.; Li, X.; Ding, E.; Wen, S. BMN: Boundary-matching network for temporal action proposal generation. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 3889–3898.

22. Xu, M.; Chen, C.; Mei, J.; Zhang, Y.; Wu, Y. G-TAD: Sub-graph localization for temporal action detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 10156–10165.

23. Shi, D.; Zhong, Y.; Cao, Q.; Ma, L.; Li, J.; Yan, Y. TriDet: Temporal Action Detection with Trident-head. In Proceedings of the 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada, 17–24 June 2023; pp. 18853–18862.

24. Zhang, H.; Zhou, F.; Wang, D.; Zhan, Q. LGAFormer: Transformer with Local and Global Attention for Action Detection. J. Supercomput. 2024, 80, 17952–17979.

25. Gu, A.; Goel, K.; Ré, C. Efficiently Modeling Long Sequences with Structured State Spaces. In Proceedings of the International Conference on Learning Representations (ICLR), Virtual, 25–29 April 2022.

26. Wen, J.; Liu, D.; Zheng, B. ActionMamba: Action Spatial–Temporal Aggregation Network Based on Mamba and GCN for Skeleton-Based Action Recognition. Electronics 2025, 14, 3610.

27. Huang, Q.; Cui, J.; Li, C. A Review of Skeleton-Based Human Action Recognition. J. Comput.-Aided Des. Comput. Graph. 2024, 36, 173–194.

28. Feng, M.; Meunier, J. Skeleton Graph-Neural-Network-Based Human Action Recognition: A Survey. Sensors 2022, 22, 2091.

29. Yan, S.; Xiong, Y.; Lin, D. Spatial temporal graph convolutional networks for skeleton-based action recognition. In Proceedings of the AAAI Conference on Artificial Intelligence, New Orleans, LA, USA, 2–7 February 2018; Volume 32.

30. Shi, L.; Zhang, Y.; Cheng, J.; Lu, H. Two-stream adaptive graph convolutional networks for skeleton-based action recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 15–20 June 2019; pp. 12026–12035.

31. Liu, Z.; Zhang, H.; Chen, Z.; Wang, Z.; Ouyang, W. Disentangling and unifying graph convolutions for skeleton-based action recognition. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 143–152.

32. Du, Y.; Wang, W.; Wang, L. Hierarchical recurrent neural network for skeleton based action recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; pp. 1110–1118.

33. Sensoy, M.; Kaplan, L.; Kandemir, M. Evidential deep learning to quantify classification uncertainty. In Advances in Neural Information Processing Systems; Bengio, S., Wallach, H., Larochelle, H., Grauman, K., Cesa-Bianchi, N., Garnett, R., Eds.; Curran Associates, Inc.: Montreal, QC, Canada, 2018; Volume 31.

34. Neverova, N.; Wolf, C.; Taylor, G.; Nebout, F. Moddrop: Adaptive multi-modal gesture recognition. IEEE Trans. Pattern Anal. Mach. Intell. 2015, 38, 1692–1706.

35. Ghimire, A.; Kakani, V.; Kim, H. SSRT: A sequential skeleton RGB transformer to recognize fine-grained human-object interactions and action recognition. IEEE Access 2023, 11, 51930–51948.

36. Liu, X.; Yang, X.; Wang, D.; Zhou, J.; Yang, Q. TadTR: End-to-end temporal action detection with transformer. In Proceedings of the IEEE International Conference on Image Processing (ICIP), Anchorage, AK, USA, 19–22 September 2021; pp. 541–545.

37. Xing, Y. Deep learning-based action recognition with 3D skeleton: A comprehensive study. IET Commun. 2021, 15, 2369–2378.

38. Idrees, H.; Zamir, A.R.; Jiang, Y.G.; Gorban, A.; Laptev, I.; Sukthankar, R.; Shah, M. The THUMOS challenge on action recognition for videos “in the wild”. Comput. Vis. Image Underst. 2017, 155, 1–23.

39. Shou, Z.; Wang, D.; Chang, S.F. Temporal action localization in untrimmed videos via multi-stage CNNs. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 1049–1058.

40. Zeng, R.; Huang, W.; Tan, M.; Rong, Y.; Zhao, P.; Huang, J.; Gan, C. Graph convolutional networks for temporal action localization. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 7094–7103.

41. Liu, X.; Bai, S.; Bai, X. An empirical study of end-to-end temporal action detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 19–24 June 2022; pp. 20010–20019.

42. Yang, L.; Han, H.; Zhao, H.; Tian, Q.; Zhang, M. Background-click supervision for temporal action localization. IEEE Trans. Pattern Anal. Mach. Intell. 2023, 45, 15159–15175.

43. Everingham, M.; Van Gool, L.; Williams, C.K.I.; Winn, J.; Zisserman, A. The Pascal Visual Object Classes (VOC) Challenge. Int. J. Comput. Vis. 2010, 88, 303–338.

44. Caba Heilbron, F.; Escorcia, V.; Ghanem, B.; Niebles, J.C. ActivityNet: A large-scale video benchmark for human activity understanding. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; pp. 961–970.

45. Lin, T.; Zhao, X.; Su, H.; Wang, C.; Yang, M. BSN: Boundary sensitive network for temporal action proposal generation. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 3–19.

46. Tao, K.; Wang, F.; Liu, Z.; Huang, Y. A lightweight spatiotemporal skeleton network for abnormal train driver action detection. Appl. Sci. 2025, 15, 13152.

47. Liu, Z.; Zhang, Z.; Cao, Z.; Kan, H.; Zhu, G.; Tan, M. 3DInAction: Understanding Human Actions in 3D Point Clouds. In Proceedings of the 2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada, 17–24 June 2023; pp. 10636–10645.

48. Gao, Y.; Lohmann, C.S.; Schiefer, J.; Capanni, F.; Drayss, T.; Schleyer, C.; Selle, S.; Bauerschmidt, S.J.; Dickhaus, H. How Fast Is Your Body Motion? Determining a Sufficient Frame Rate for an Optical Motion Tracking System Using Passive Markers. PLoS ONE 2016, 11, e0150993.

| Parameter | Value |
|---|---|
| Backbone Type | convTransformer |
| Backbone Architecture | (2, 2, 5) |
| Scale Factor | 2 |
| Input Dimension | 1024 (IKEA ASM)/2048 (THUMOS14) |
| Embedding Dimension | 512 |
| FPN Dimension | 512 |
| FPN Type | identity |
| Number of Attention Heads | 4 |
| Attention Window Size | 19 |
| Detection Head Dimension | 512 |
| Number of Detection Head Layers | 3 |
| Regression Ranges | [0, 4], [4, 8], [8, 16], [16, 32], [32, 64], [64, 10000] |
| Maximum Sequence Length | 2304 |
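Under our reading of ActionFormer's configuration convention, the backbone architecture (2, 2, 5) denotes two embedding convolutions, two stem Transformer blocks, and five downsampling blocks, which yields six pyramid levels and matches the six regression ranges listed above. The sketch below computes the per-level sequence lengths, assuming each level halves the previous one (scale factor 2) starting from the maximum sequence length of 2304; it is illustrative and not taken from the ActionFormer code base.

```python
# Values from the ActionFormer configuration table above.
MAX_SEQ_LEN = 2304
SCALE_FACTOR = 2
REGRESSION_RANGES = [(0, 4), (4, 8), (8, 16), (16, 32), (32, 64), (64, 10000)]

# Assumption: one pyramid level per regression range, each level downsampled
# by SCALE_FACTOR relative to the previous one.
num_levels = len(REGRESSION_RANGES)
level_lengths = [MAX_SEQ_LEN // SCALE_FACTOR ** i for i in range(num_levels)]

# The maximum length must divide evenly down to the coarsest level.
assert MAX_SEQ_LEN % SCALE_FACTOR ** (num_levels - 1) == 0

for level, (length, (lo, hi)) in enumerate(zip(level_lengths, REGRESSION_RANGES)):
    print(f"level {level}: {length:4d} time steps, regression range [{lo}, {hi}]")
```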

| ID | Skeleton | SRM | Gated Fusion | mAP@tIoU=0.3 (%)↑ | mAP@tIoU=0.4 (%)↑ | mAP@tIoU=0.5 (%)↑ | mAP@tIoU=0.6 (%)↑ | mAP@tIoU=0.7 (%)↑ | Avg. (%)↑ | Best Epoch |
|---|---|---|---|---|---|---|---|---|---|---|
| #1 | | | | 81.17 | 77.36 | 70.16 | 57.62 | 43.04 | 65.87 | 35 |
| #2 | ✓ | | | 79.09 | 74.96 | 66.91 | 54.87 | 40.24 | 63.22 | 35 |
| #3 | ✓ | ✓ | | 78.81 | 74.99 | 67.47 | 54.82 | 41.12 | 63.44 | 40 |
| #4 | ✓ | | ✓ | 80.92 | 76.47 | 68.46 | 56.28 | 42.32 | 64.89 | 35 |
| Ours | ✓ | ✓ | ✓ | 81.52 | 77.74 | 70.32 | 58.82 | 43.16 | 66.31 | 35 |

| ID | Skeleton | SRM | Gated Fusion | Boundary-F1 (τ = ±0.5 s) | Boundary-F1 (τ = ±1.0 s) | Boundary-F1 (τ = ±2.0 s) |
|---|---|---|---|---|---|---|
| #1 | | | | 0.5659 | 0.7282 | 0.8158 |
| #2 | ✓ | | | 0.5256 | 0.6891 | 0.7906 |
| #3 | ✓ | ✓ | | 0.5607 | 0.7147 | 0.8001 |
| #4 | ✓ | | ✓ | 0.5664 | 0.7220 | 0.8105 |
| Ours | ✓ | ✓ | ✓ | 0.5665 | 0.7281 | 0.8153 |

| ID | Skeleton | SRM | Gated Fusion | mAP@tIoU=0.3 (%)↑ | mAP@tIoU=0.4 (%)↑ | mAP@tIoU=0.5 (%)↑ | mAP@tIoU=0.6 (%)↑ | mAP@tIoU=0.7 (%)↑ | Avg. (%)↑ | Best Epoch |
|---|---|---|---|---|---|---|---|---|---|---|
| #1 | | | | 30.61 | 27.23 | 21.77 | 17.06 | 10.79 | 21.49 | 25 |
| #2 | ✓ | | | 28.16 | 24.58 | 19.74 | 14.59 | 9.37 | 19.29 | 30 |
| #3 | ✓ | ✓ | | 22.32 | 19.27 | 16.02 | 11.65 | 6.89 | 15.23 | 35 |
| #4 | ✓ | | ✓ | 31.21 | 27.94 | 22.11 | 16.27 | 10.30 | 21.57 | 25 |
| Ours | ✓ | ✓ | ✓ | 31.01 | 27.85 | 22.24 | 17.08 | 10.49 | 21.77 | 25 |

| ID | Skeleton | SRM | Gated Fusion | Boundary-F1 (τ = ±0.5 s) | Boundary-F1 (τ = ±1.0 s) | Boundary-F1 (τ = ±2.0 s) |
|---|---|---|---|---|---|---|
| #1 | | | | 0.3593 | 0.5354 | 0.6596 |
| #2 | ✓ | | | 0.3193 | 0.4842 | 0.6324 |
| #3 | ✓ | ✓ | | 0.2907 | 0.4399 | 0.5735 |
| #4 | ✓ | | ✓ | 0.3643 | 0.5430 | 0.6754 |
| Ours | ✓ | ✓ | ✓ | 0.3624 | 0.5464 | 0.6851 |

| Comparison (Ours vs.) | IKEA ASM p-Value | Sig. | THUMOS14 p-Value | Sig. |
|---|---|---|---|---|
| #1 | 0.325 | n.s. | 0.231 | n.s. |
| #2 | 0.003 | ** | 0.001 | *** |
| #3 | < 0.001 | *** | 0.005 | ** |
| #4 | 0.665 | n.s. | 0.019 | * |
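The p-values above come from a Wilcoxon signed-rank test on paired results; the pairing unit (for example, per-class AP or per-video scores) is not stated in this section and is an assumption here. In practice a library routine such as `scipy.stats.wilcoxon` would be used; the pure-Python sketch below computes the exact two-sided test and, for simplicity, rejects zero or tied absolute differences, which library implementations handle with dedicated options. A non-significant result, such as p = 0.325 against #1, indicates no detectable difference rather than demonstrated equivalence.

```python
from typing import List


def wilcoxon_exact_two_sided(x: List[float], y: List[float]) -> float:
    """Exact two-sided Wilcoxon signed-rank p-value for paired samples x, y.

    Simplification: zero differences and tied |differences| are rejected,
    so the ranks are exactly 1..n.
    """
    diffs = [a - b for a, b in zip(x, y)]
    if any(d == 0 for d in diffs):
        raise ValueError("zero differences are not handled in this sketch")
    ranked = sorted(diffs, key=abs)
    if len({abs(d) for d in ranked}) != len(ranked):
        raise ValueError("tied |differences| are not handled in this sketch")
    n = len(ranked)
    # W+ is the sum of the ranks of the positive differences.
    w_plus = sum(rank for rank, d in enumerate(ranked, start=1) if d > 0)
    # counts[s]: number of the 2**n equally likely sign patterns under H0
    # whose positive-rank sum equals s (0/1 knapsack over ranks 1..n).
    max_sum = n * (n + 1) // 2
    counts = [1] + [0] * max_sum
    for r in range(1, n + 1):
        for s in range(max_sum, r - 1, -1):
            counts[s] += counts[s - r]
    total = 2 ** n
    p_low = sum(counts[: w_plus + 1]) / total
    p_high = sum(counts[w_plus:]) / total
    return min(1.0, 2 * min(p_low, p_high))


# All five differences positive: the most extreme outcome for n = 5.
print(wilcoxon_exact_two_sided([1.1, 2.2, 3.3, 4.4, 5.5], [1.0, 2.0, 3.0, 4.0, 5.0]))  # 0.0625
```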

| Method | Params (M) | GFLOPs | Params Increase | GFLOPs Increase | IKEA ASM Latency (ms) | THUMOS14 Latency (ms) | Inference Speed (FPS) |
|---|---|---|---|---|---|---|---|
| ActionFormer (RGB-only Baseline) | 27.70 | 83.28 | - | - | 47.6 | 46.5 | ~21 |
| Concat (No SRM Naive Fusion) | 28.56 | 87.25 | +3.1% | +4.8% | 47.8 (+0.3%) | 46.9 (+1.0%) | ~21 |
| Gated SRM (Proposed Method) | 33.55 | 121.09 | +21.1% | +45.4% | 63.6 (+33.6%) | 63.5 (+36.5%) | ~16 |
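A short arithmetic check on the efficiency figures: assuming the FPS column is the reciprocal of the per-inference latency, 1000/47.6 ≈ 21 and 1000/63.6 ≈ 15.7, matching the ~21 and ~16 FPS entries. Percentages recomputed from the rounded latencies can differ from the tabulated ones by about 0.1 percentage point, presumably because the table was computed from unrounded measurements.

```python
# Latencies (ms) from the computational-efficiency table.
latency_ms = {
    "ActionFormer (RGB-only)": {"IKEA ASM": 47.6, "THUMOS14": 46.5},
    "Concat (naive fusion)": {"IKEA ASM": 47.8, "THUMOS14": 46.9},
    "Gated SRM (proposed)": {"IKEA ASM": 63.6, "THUMOS14": 63.5},
}


def fps(latency: float) -> float:
    """Throughput if inference passes run one at a time (assumption)."""
    return 1000.0 / latency


baseline = latency_ms["ActionFormer (RGB-only)"]
for method, per_dataset in latency_ms.items():
    for dataset, ms in per_dataset.items():
        overhead = 100.0 * (ms - baseline[dataset]) / baseline[dataset]
        print(f"{method:26s} {dataset:9s} {ms:5.1f} ms  {fps(ms):5.1f} FPS  {overhead:+5.1f}%")
```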

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).